Abstract

In this contribution we will show that research in the field of toxicology, pharmacology and physiology is by and large characterised by a pendulum swing of which the amplitudes represent

INTRODUCTION

One of the focal points of toxicology is the improvement of public health through the implementation of results-based policies. The growing knowledgebase of toxicology helps to define and direct research and concomitant public policies. This is the case for chemical- and food-safety regulations but also environmental policies, to name just three.

Now, with the advent of REACH (the European Community Regulation on

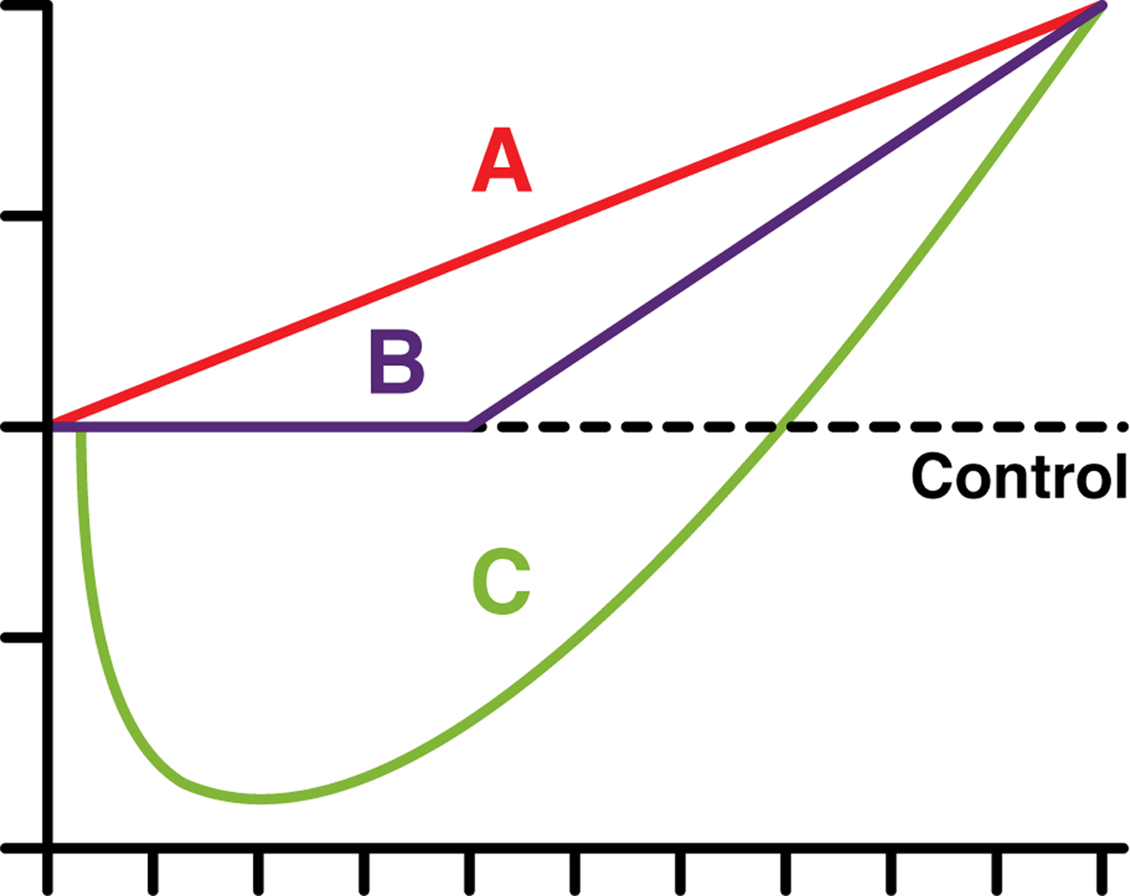

Dose response curves.

Obviously, the reductionist conundrum is as old as science itself. Reductionism is a widely accepted and highly successful practice in science, by which wholes are studied through breaking them up into their constituent parts. However, the success of the strategy does not straightforwardly imply that everything relevant to the whole can be studied

In toxicology reductionism would approximately reason as follows: a complex system (whole organism) is nothing but the sum of its parts (e.g. metabolic pathways) whereby a toxicological effect, induced by some compound at a certain concentration over a certain amount of time experimentally expressed

The laudable aim of REACH is to improve upon the protection of human health and the environment through the better and earlier identification of the intrinsic (dangerous or not) properties of chemical substances. At the same time, REACH aims to enhance innovation and competitiveness of the EU chemicals industry. Moreover, the benefits of REACH will come gradually, as more and more substances are phased into REACH. Whether these benefits –protection of human health and the environment and the enhancement of innovation and competitiveness of the EU chemicals industry- will measurably bear out in the future, of course, remains to be seen.

REACH is considered to be one of the biggest efforts in regulatory history (Hartung and Rovinda 2009). Within the developing framework of REACH, it has become imperative to robustly extrapolate results from toxicological models to real-life circumstances in terms of exposure-levels, -duration, and, most importantly, -effects. Real-life exposures are usually the result of acute or routine exposures that are either unavoidable because of a natural (ecological) presence of chemicals or the result of industrial activities. The extrapolative strength of existing toxicological data is assumed without much discussion despite the fact that there are ‘too many rodent carcinogens’ (Ames and Gold 1990), not to mention the current developments in the field of epigenetics we cannot discuss here in any detail (See e.g. Trosko and Upham 2005), or indeed the increasingly important finding of the concept of hormesis (C; Fig. 1; Calabrese and Baldwin 2003).

Hormesis is usually described as a dose-response relationship that is characterised as biphasic (an inverse U- or J-shaped curve) with low-dose stimulation and high-dose inhibition. A famous example of a biphasic dose-response is found in the detailed analysis of the US FDA/2-AAF study by an expert panel of the US Society of Toxicology. It revealed a hormetic dose response for bladder cancer with risks decreasing below the control group at low doses. In their own words: ‘If we insist that it is possible to construct low-dose extrapolations for carcinogens in terms of increases in overall probability of tumor, then we are forced to use additive models. In such a case, the ED01 study provides more than evidence of a “threshold”. It provides statistically significant evidence that low doses of a carcinogen are beneficial.’ (Bruce et al. 1981)
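To make the shape of such a biphasic curve concrete, the following minimal sketch contrasts a linear no-threshold (LNT) response with a J-shaped hormetic one. The functional form and all parameter values (`slope`, `benefit`, `scale`) are illustrative assumptions; they are not fitted to the ED01 data or taken from any of the cited studies.

```python
import math


def lnt_excess_risk(dose, slope=1.0):
    """Linear no-threshold: excess risk rises in proportion to dose."""
    return slope * dose


def hormetic_excess_risk(dose, slope=1.0, benefit=3.0, scale=1.0):
    """J-shaped curve: a linear term minus a low-dose protective term that fades with dose.

    All parameters are hypothetical and chosen only to display the shape.
    A dip below control requires benefit > slope.
    """
    return slope * dose - benefit * dose * math.exp(-dose / scale)


def zero_equivalent_point(slope=1.0, benefit=3.0, scale=1.0):
    """Non-zero dose at which the hormetic curve crosses the control level."""
    return scale * math.log(benefit / slope)


if __name__ == "__main__":
    print(" dose      LNT  hormetic")
    for dose in (0.0, 0.25, 0.5, 1.0, 2.0, 4.0):
        print(f"{dose:5.2f}  {lnt_excess_risk(dose):7.3f}  {hormetic_excess_risk(dose):8.3f}")
```

Under these assumed parameters the hormetic curve lies below the control level at low doses and above it at high doses, whereas the LNT line predicts added risk at every dose above zero.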

In this article we probe toxicological modelling and its results in the understanding of human health at exposure levels that, as a rule, are substantially lower than experimental results and the fitted models normally allow for. This problem is far from new, and has been debated almost

The issue of extrapolation will be our focus in this contribution. With the aid of two examples we will outline the challenging relationship between current toxicological models and human health impacts of chemical exposures in the light of the regulatory drive to create some sort of a ‘toxic free society,’ 2 a society that is to all intents and purposes free from the burden of man-made chemicals. We will analyse these examples specifically with the aim to propose a way forward, that is to further the attempts to robustly connect toxicological research and the potential to improve upon human health with the aid of its results.

What will be shown is that research in toxicology, pharmacology and physiology is by and large characterised by a pendulum swing of which the amplitudes represent

‘The word pharmacology is derived from the Greek pharmakon, equivalent to ‘drug’, ‘medicine’ or ‘poison’, and logia, meaning ‘study’. […] The ideal drug would produce its desired effect –its therapeutically useful effect- in all biologic systems without inducing any side-effect, i.e. an effect other than that for which the drug is administered. But there is no ideal drug. Most drugs produce many effects, and all drugs produce at least two effects. And it is also a truism that no chemical agent can be considered entirely safe. For every drug, there is some dose that will produce a toxic effect—one deleterious to the subject or even life-threatening. Given the fact that any chemical can be expected to produce toxic effects, the risks entailed in its use must be weighed against its effectiveness before it can be considered a salutary agent.

Also, the examples show that despite the fact that toxicological models are incrementally refined in terms of understanding human health and chemicals exposure, new and innovative insights will always remain part of the scientific equation, by default excluding the potential to create the utopian ‘toxic free society’.

EXAMPLE 1 – NITRATE

Exposure to nitrate and its potential effects on human physiology are interesting on multiple levels. Firstly, exposure to the nitrate molecule (NO3 −) is a life-long experience that cannot be evaded, as nitrate is ubiquitous in our food and water. Nitrate is thereby a good ‘model compound’ in the food matrix with an extended scientific knowledgebase developed over the course of the 20th and 21st century. Secondly, nitrate is studied not only in experimental models but also at the population level. This gives insight into how scientists understand the developing knowledgebase at both ends of the research scale –pharmacology/toxicology and epidemiology- and how both ends do or do not link up. Thirdly, the developing knowledgebase of nitrate in human physiology and toxicology has its rendition in rules and regulations. It is interesting to see whether this regulatory rendition approximates the breadth and depth of the available and developing knowledgebase.

Obviously, nitrate itself is not the toxicological agent, both in connection to infant methaemoglobinaemia (also known as blue-baby syndrome; Comly 1945; Walton 1951) 3 and cancer (Mirvish 1995; Vermeer et al. 1998; Vermeer 2000), the two main risk factors of nitrate exposure that have been scrutinised the most. In the former, nitrite (NO2 −) does the damage by oxidising the Fe2+ in haemoglobin to Fe3+ whereby the capacity to transport oxygen is nullified; in the latter nitrite reacts under acidic conditions with amines to form the rodent-carcinogens

Nitrate pharmacology, toxicology and physiology are best described as a pendulum swing. Towards one end of the amplitude, nitrate is shown to have a long-standing medical track record dating back to the 12th century and reaching its zenith in the 19th century (L'hirondel and L'hirondel 2002). However, in the 20th century the nitrate pendulum swung in the opposite direction when the toxicological profile of nitrate was pushed on the scene. The risk factors described above have been the focus of much research for roughly the past half century.

The nitrate limit in water is 50 mg of NO3 −/L in the EU and 44 mg/L in the USA. These limits are in conformity with WHO recommendations established in 1970 and recently reviewed and reconfirmed in 2008 (WHO 2008). The limits were originally set, and remain so, on the basis of acute human health concerns: ‘50 mg/litre to protect against methaemoglobinaemia in bottle-fed infants (short-term exposure).’ (WHO 2008) Additionally, in two recent Dutch reports on ‘acid rain’, with reference to two publications only, nitrate is described as carcinogenic through the formation of N-nitroso compounds (De Vries 2008; Buijsman et al. 2010). This insubstantial rendering of nitrate toxicology in both reports makes for biased conclusions at least, exacerbated by the absence of a discussion of nitrate physiology we will touch on below.

Indeed, despite persistent regulatory and scientific focus on the risks of exposure to nitrate, the pendulum swung back as a result of new scientific perspectives once NO was discovered to be a major physiological chemical component (Furchgott and Zawadzki 1980; Radomski et al. 1987; Marletta et al. 1988; Knowles et al. 1989; Moncada et al. 1991; Schmidt and Walter 1994; Richardson et al. 2002; Weseler and Bast 2010). This discovery created and creates a multifaceted image of the role of nitrate, but also nitrite, in human physiology. NO production has been shown to be vital to maintain normal blood circulation and defence against infection (See e.g.: McKnight et al. 1997; McKnight et al. 1999). Nitric oxide is subsequently oxidised via nitrite to nitrate, which is conserved by the kidneys and concentrated in the saliva.

The discovery of NO as a vital physiological chemical explains the common knowledge that mammals produce nitrate

Despite these developing physiological insights, the entero-salivary circulation of nitrate has long been puzzling –the risk of gastro-intestinal cancers seemed to be the consequence of this mammalian nitrate circulation. After ingestion, nitrate is rapidly and effectively absorbed from the gastrointestinal tract into the bloodstream, where it mixes with the endogenously synthesised nitrate. Anaerobic bacteria in the oral cavity reduce salivary nitrate to nitrite, enhancing the gastric production of N-nitrosamines, a class of rodent carcinogens that might be detrimental to human health.

However, despite extensive research over the past 50 years, the link, if any, between commensal bacteria, nitrate and human gastric cancer is still up in the air. Indeed, despite the reality of NOC-formation, cohort studies of fertiliser workers (several hundreds or several thousands) professionally exposed to nitrate concentrations higher than the general public showed no increased risk of gastric cancer (See e.g.: Al-Dabbagh et al. 1986; Hagmar et al. 1991; Fandrem et al. 1993). In fact, there is increasing evidence that the nitrite that is formed in the mouth from nitrate can be used by the host to form biologically functional nitrogen oxides (including NO) that are important for gastro-intestinal host-defence against pathogenic micro-organisms (Duncan et al. 1997; Larauche et al. 2003; Gladwin et al. 2005) and for the maintenance of normal physiological homeostasis in the stomach and elsewhere (Lundberg et al. 2004). This pathway for the generation of reactive nitrogen intermediates in mammals complements the endogenous production of these intermediates by nitric oxide synthase.

Moreover, the pendulum swing does not seem to be at its full amplitude. Sodium nitrate supplementation has been reported to reduce the O2-cost of sub-maximal exercise in humans, for instance (Bailey et al. 2009; see further: Larsen et al. 2011). Also, the dietary provision of nitrates and nitrites from vegetables and fruit may contribute to the lowering of blood pressure (See e.g.: Hord et al. 2009; Lundberg et al. 2011; Carlström et al. 2011). The nitrate–nitrite–NO pathway is boosted by exogenous administration of nitrate/nitrite and this seems to have important therapeutic as well as nutritional implications: cytoprotection in ischemia–reperfusion injury, including myocardial infarction, stroke, liver and kidney ischemia as well as chronic limb ischemia, has been observed in animal models (Lundberg and Weitzberg 2010; see further: Lundberg et al. 2009). If shown to be on target by additional research, these effects will continue to alter the image of nitrate.

The nitrate example gives ample insight into the conundrum we try to address here. First, the knowledge that a certain compound by itself, or through biotransformation, is shown to be a rodent-carcinogen is as such an ineffectual statement (Ames and Gold 1990; Gold et al. 2001). Indeed, the knowledge gathered in experimental settings was offset by epidemiological knowledge that showed no increased risk for gastrointestinal cancers at exposure levels higher than normal. To be sure, gathering epidemiological data is not always easy or possible. Nitrate perhaps is the exception to the rule considering its ubiquitous nature and the opportunity to research exposure levels in humans beyond the normal levels found amongst the average populace (Al-Dabbagh et al. 1986; Hagmar et al. 1991; Fandrem et al. 1993).

Secondly, the scrutinised compound might be part of a system that is not solely toxicological. Nitrate is part of a larger physiological whole that we are now uncovering. This has led to startling new insights, whereby infant methaemoglobinaemia is to be understood as a runaway physiological response to infections that Hegesh and Shiloah already reported in 1982 (Hegesh and Shiloah 1982). Then again, in toxicology many compounds are researched that are not regarded as part of the human physiology as nitrate and nitrite are –dioxins, PCBs, pesticides- whereby any exposure is regarded as only detrimental to the organism.

However, hormesis expands on the physiological notion we briefly developed for nitrate: any chemical-exposure is characterised within the boundaries of risks and benefits depending on dose, dose-spacing, and duration of exposure. This is an important notion on multiple levels we will discuss further at the end of this contribution. First, we will go to the next example that discusses the multifaceted history of chloramphenicol.

EXAMPLE 2 – CHLORAMPHENICOL

With the discovery of penicillin in 1928 by Alexander Fleming, the human potential to tackle bacterial infections in both humans and animals grew immeasurably. Penicillin is made by a fungus (

The

While secondary metabolites must in all likelihood confer an adaptive advantage, the roles of most are not fully understood. Antibiotics give a competitive advantage compared to other ground-dwelling micro-organisms that need to tap into the same biomass as nutrition. To give a very limited indication of the different antibiotics produced by Streptomycetes and their close relatives, we give some examples of medically important antibiotics in Table 1 (Chater 2006).

Antibiotic-producing organisms, antibiotics, and targeted diseases.

Chloramphenicol (or chloromycetin as it was called initially) was first isolated for therapeutic purposes by Ehrlich et al. in 1947 (Ehrlich et al. 1947; see in the same edition: Smadel and Jackson 1947). A year after its isolation it proved to be quite effective against typhoid fever (Patel et al. 1949). Apart from being used as human medication, chloramphenicol also has an extensive track record in animal food production. Chloramphenicol is an efficacious therapeutic agent that has been widely used in fish farms (Baticados et al. 1990; Morii et al. 2003). Despite its successful medical and veterinary history, chloramphenicol fell out of favour in the medical field because aplastic anaemia, a form of anaemia in which the bone marrow ceases to produce sufficient red and white blood cells, was observed as a side-effect during therapy. This side-effect is extremely rare but fatal (Benestad 1979).

The minimum dose of chloramphenicol associated with the development of aplastic anaemia is unknown. The aplastic anaemia incidence estimated by the JECFA (Joint FAO/WHO Expert Committee on Food Additives) is in the order of 1.5 cases per million people per year (IPCS-INCHEM, 1994). Only about 15 per cent of the total number of cases was associated with drug treatment, and among those chloramphenicol was not a major contributor. These data roughly give an overall incidence of therapeutic chloramphenicol-associated aplastic anaemia in humans of less than one case per 10 million per year. The ophthalmic use of chloramphenicol represents the lowest
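The incidence estimate given in this paragraph can be retraced with a few lines of arithmetic. The sketch below uses only the two figures quoted in the text (1.5 cases per million per year, about 15 per cent drug-associated); the function names are illustrative, and the "share" it computes is an implied bound, not a reported value.

```python
# Figures quoted in the text (JECFA estimate).
APLASTIC_ANAEMIA_PER_MILLION_PER_YEAR = 1.5
DRUG_ASSOCIATED_FRACTION = 0.15


def drug_associated_per_10_million():
    """Yearly drug-associated aplastic anaemia cases per 10 million people."""
    return APLASTIC_ANAEMIA_PER_MILLION_PER_YEAR * DRUG_ASSOCIATED_FRACTION * 10


def max_chloramphenicol_share(limit_per_10_million=1.0):
    """Largest share of drug-associated cases compatible with the stated upper bound."""
    return limit_per_10_million / drug_associated_per_10_million()


if __name__ == "__main__":
    print(f"drug-associated cases per 10 million per year: {drug_associated_per_10_million():.2f}")
    print(f"implied maximum chloramphenicol share: {max_chloramphenicol_share():.0%}")
```

All drug-associated cases together amount to roughly 2.25 per 10 million per year, so the bound of less than one case per 10 million holds as long as chloramphenicol accounts for less than about 44 per cent of them, which fits the statement that it was not a major contributor.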

Apart from this serious medical side effect, chloramphenicol is regarded as genotoxic and carcinogenic (Shu et al. 1987), thereby receiving an unfavourable appraisal in the veterinary field. Even so, the available data on the genotoxicity show mainly negative results in bacterial systems and mixed results in mammalian systems. It was concluded that chloramphenicol must be considered genotoxic, but only at concentrations about 25 times higher than those occurring in patients treated with the highest therapeutic dosages (Martelli et al. 1991). Chloramphenicol is categorised by the IARC (the International Agency for Research on Cancer) as probably carcinogenic in humans; group 2A (IARC 1997).

No tolerable daily intake (TDI) could be established for chloramphenicol due to the lack of scientific information to assess its carcinogenicity and effects on reproduction, and genotoxic activity (IPCS-INCHEM, 1994). As a result, no maximum residue level (MRL) could be established for chloramphenicol. For that reason it is not allowed in food-producing animals, including aquaculture. In Europe, zero tolerance levels were in force for compounds without an MRL, meaning that banned chemicals should not be detected in food products at all, regardless of concentrations. This is to all intents and purposes the regulatory use of the linear no-threshold model (LNT): when dealing with genotoxic carcinogens the ‘no-dose no-disease’ approach is regarded as the safest regulatory route (Calabrese and Baldwin 2003; despite the fact that it depicts a non-existing physico-chemical reality barred by the Second Law of Thermodynamics). Concisely, the explicit goal of zero tolerance is not

Zero tolerance, as a result of increasing analytical capabilities of detection, has created immense problems especially for imported aquaculture products, the epitome of which was the trade-dispute between the European Union and some Asian countries over the parts-per-billion-presence of chloramphenicol in shrimp during the first half of the 2000s (Hanekamp et al. 2003; Hanekamp and Wijnands 2004; Annex IV of Council Regulation (EEC) No. 2377/90). The zero-tolerance policy was replaced only in 2009 by MRPLs (Minimum Required Performance Limits) as targets for regulatory action levels of concern for banned antibiotics (Regulation (EC) No 470/2009). The fallacy of zero-tolerance that, however, is

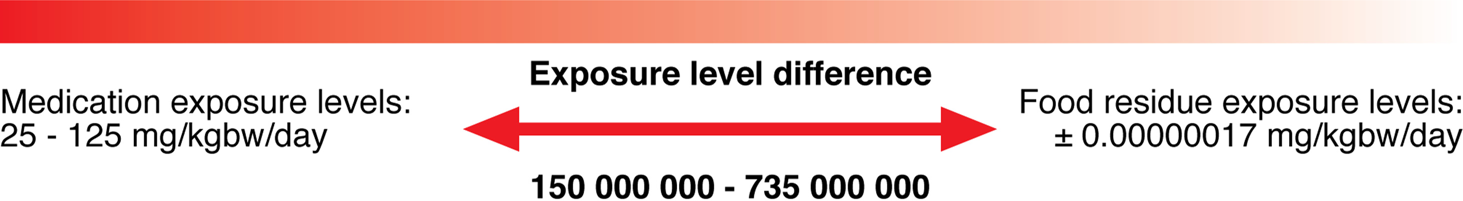

When considering the difference between therapeutic exposure –as a result of which aplastic anaemia has been observed- and the much lower exposure to food residues, it seems clear that chloramphenicol is unlikely to present a substantial risk, if at all, even assuming a linear dose-response correlation. The food residue exposure levels shown in Figure 2 are taken from the RIVM study (Dutch National Institute for Public Health and Environment) on chloramphenicol in shrimp (Janssen et al. 2001).

Exposure level-differences between medication and food.

The food exposure was calculated from the ‘reasonable worst-case’ scenario of a weekly shrimp consumption of 8.4 g contaminated with 10 μg chloramphenicol per kg shrimp. The RIVM, using the LNT model, estimated the cancer risk as a result of the consumption of shrimp containing chloramphenicol as negligible. The concentrations in imported shrimp varied roughly between 1 and 10 ppb (1 to 10 μg/kg product). The estimated ‘reasonable worst-case risk’ as a result of eating shrimp containing chloramphenicol is lower than the MTR-level (being an added risk of disease or death of 1 individual in a population of 1 million, usually put forward as 1:10^6) by at least a factor of 5000. Despite this auspicious risk-based analysis, chloramphenicol nonetheless is a suspect compound for which a ban is kept in place in the veterinary field.
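The worst-case scenario above reduces to simple arithmetic, sketched below. The input figures are those quoted in the text; the constant and function names are illustrative, and the risk bound is merely the MTR-level divided by the stated factor of 5000, not an independent risk calculation.

```python
# Inputs quoted in the text (RIVM 'reasonable worst-case' scenario).
WEEKLY_SHRIMP_G = 8.4        # shrimp consumption, g per week
RESIDUE_UG_PER_KG = 10.0     # chloramphenicol residue, ug per kg shrimp
MTR_RISK = 1e-6              # added risk of 1 individual in 1 million
MARGIN_FACTOR = 5000         # "at least a factor of 5000" below the MTR-level


def weekly_intake_ug(weekly_g=WEEKLY_SHRIMP_G, residue_ug_per_kg=RESIDUE_UG_PER_KG):
    """Chloramphenicol ingested per week, in micrograms."""
    return weekly_g / 1000.0 * residue_ug_per_kg


def daily_intake_ng(weekly_g=WEEKLY_SHRIMP_G, residue_ug_per_kg=RESIDUE_UG_PER_KG):
    """Average chloramphenicol ingested per day, in nanograms."""
    return weekly_intake_ug(weekly_g, residue_ug_per_kg) / 7.0 * 1000.0


def risk_upper_bound(mtr_risk=MTR_RISK, margin=MARGIN_FACTOR):
    """Upper bound on the estimated added risk implied by the stated margin."""
    return mtr_risk / margin


if __name__ == "__main__":
    print(f"weekly intake: {weekly_intake_ug():.3f} ug")
    print(f"daily intake:  {daily_intake_ng():.1f} ng")
    print(f"risk bound:    {risk_upper_bound():.1e}")
```

The scenario thus corresponds to an intake of about 0.084 μg per week, or roughly 12 ng per day, and to an estimated added risk of at most 2 in 10 billion.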

The pendulum swing has seemingly frozen. What once began as a successful antibiotic in the late 1940s curing diseases such as typhoid fever and being effective in animal rearing and aquaculture, has now been discarded and banned for fear of its carcinogenic and genotoxic potential at any exposure level. However, apart from the continued efficacy of chloramphenicol in human medicine, the potential of chloramphenicol in aquaculture makes for an interesting conundrum in view of the rising food-requirements in the world (Selvin and Lipton 2003). In terms of the consumption of animal protein, aquaculture clearly seems a way forward. Indeed, ‘future increases in per capita fish consumption will depend on the availability of fishery products. With capture fisheries production stagnating, major increases in fish food production are forecast to come from aquaculture. Taking into account the population forecast, an additional 27 million tonnes of production will be needed to maintain the present level of per capita consumption in 2030.’ (FAO 2010, p. 69).

The UK Government Office for Science, in their final report ‘Foresight The Future of Food and Farming’, observes that the demand for fish ‘is expected to increase substantially, at least in line with other protein foods, and particularly in parts of east and south Asia. The majority of this extra demand will need to be met by further expansion of aquaculture, which will have significant consequences for the management of aquatic habitats and for the supply of feed resources’. Clearly, ‘[a]quaculture, which will have a major role to play in meeting the supply and resource challenges ahead, will need to produce more with increased sustainability.’ (The Government Office for Science 2011, p. 14 and 35).

In light of this point, it is ironic to note that chloramphenicol seems a very efficacious pharmacon against

But that is only part of the story. With this renewed interest in chloramphenicol in food production, the genotoxic and carcinogenic conundrum has not gone away and cannot be resolved within the old context of the LNT model, which assumes toxicity at any dose other than zero. In contrast, hormesis accounts for both sides of the pendulum swing, which the LNT model cannot. With hormesis at the basis for analyzing the risks from low-level exposures, the pendulum swing can be understood as more or less artificially created by our regulatory efforts to reduce chemicals exposure. In order to assess the risks and benefits of chloramphenicol –in terms of its use in food production such as aquaculture and the resulting exposure to humans through the produced foods- hormesis seems a way forward. This we will look into subsequently.

DISCUSSION AND CONCLUSION

The two examples we have discussed above demonstrate that risks and benefits are an integral part of chemical exposures. This holds for both nitrate and chloramphenicol. Interestingly, despite the enormous advances in research, regulatory efforts to diminish nitrate exposures from food are still in place. Nitrate serves as a model of real-life experimentation in which idiosyncratic impetuses –e.g. the work of Comly and on nitrosamines- were understood as capturing the full toxicological profile. As the WHO states in its

In sharp contrast to nitrate, chloramphenicol and many other chemicals are not an inherent part of our physiology. Those and many other chemicals are regarded as xenobiotic, that is either as compounds foreign to human physiology or, more often, regarded as wholly artificial and thereby as alien. Yet, we are constantly exposed to a myriad of chemical compounds, primarily as part of the chemical food-matrix. Dividing this bulk of different chemicals between ‘innate’ and ‘foreign’, a proxy to ‘natural’ and ‘man-made’ or ‘good’ and ‘bad’, seems not only an impossible but also a fruitless task. The biochemistry of animals, plants or even entire biogeochemical cycles is immeasurably more intricate than the mere allocation of marker molecules for the legal control of certain chemicals (antibiotics such as chloramphenicol, pesticides, pollutants such as dioxins) suggests. Put differently, the set of man-made chemicals partly or wholly overlaps with the much bigger set of natural chemicals produced in the biogeosphere.

It is questionable that our immensely complex biogeochemical reality –animals, plants, bacteria, fungi but also geochemical processes- can be forced into a reductionist scientific and legal construct put in place to protect the public against ‘toxic’ chemicals of man-made origin in food by ‘simply’ lessening their presence in the environment and our foods. It is not an exaggeration to state that most, if not all, (bio)organic molecules that can be identified as artificial will have their natural counterparts. A famous example is the group of chemicals known as halogenated hydrocarbons, of which the chlorinated chemical compounds are the most ‘notorious.’ 5

Chlorine is one of the most abundant elements on the surface of the earth. It was –and still is by most- widely believed that all chlorinated organic molecules are xenobiotic (that is man-made chemicals), that chlorine does not participate in biological processes at all and that it is present in the environment only as the relatively benign chloride anion Cl– (the anion of table salt NaCl). In the 1990s a controversy arose in the United States regarding an environmentalist call to ban or phase out the production and use of chlorinated organic chemicals, inorganic chlorine bleaches, and elemental chlorine (Thornton 1991). The argument for a chlorine ban was carried by the notion that the organochlorine compounds are foreign to nature (Cap 1996; Lewis and Chepesiuk 1995).

However, it has become increasingly clear that organohalogens are ubiquitously produced in nature. Some of these compounds are produced in amounts that dwarf human production. Other compounds are produced in small quantities. The sum total of different organohalogens is staggering –more than 5000 different natural organic halogen compounds, from the very simple to the very complex, have been identified so far- and they come from widely diverging sources: marine, terrestrial biogenic, terrestrial abiotic, biomass combustion (natural and anthropogenic), and volcanoes (Öberg 2002; Gribble 2010). Paradoxically, analytical chemistry with its increasing detection capabilities uncovered the naturalness of so many organohalogens and other chemicals regarded and regulated as man-made. Thus, the regulatory dependence on analytical chemistry has greatly confounded the regulatory power to control food-safety solely on the presence of this or that chemical, instead of focussing on toxicological relevance.

Taking the above facts together, we should go beyond the artificial pendulum swing that characterises current research into the mechanism of organisms (humans) interacting with their chemical environment. Hormesis redefines the concept of ‘pollution’ or ‘contamination’ (Cross 2001). Chemical substances –innate or foreign- are not ‘bad’ or ‘good’, ‘natural’ or ‘artificial’. They are or can be both, depending on sources, exposure levels, exposure duration, and adaptive responses from the exposed organisms (Wiener 2001). Hormesis, the moderate overcompensation to a chemical (or radiological) perturbation of the homeostasis of an organism in order to re-establish the homeostasis, explicates the adaptive nature, the biological plasticity, of the overall process (Calabrese and Baldwin 2001). That should be on the scientific-toxicological agenda when considering chemical food-safety and the health-consequences of low-level chemical exposure, in line with definitions of human health that stress adaptiveness, rather than utopian definitions such as the current WHO characterization of health (Garner 1979; Larson 1999): 6 (i) ‘states of health or disease are the expressions of the success or failure experienced by the organism in its efforts to respond adaptively to environmental challenges’ (Dubos 1979, 1965); (ii) ‘Health consists in the capacity of the organism to maintain a balance in which it may be reasonably free of undue pain, discomfort, disability or limitation of action including social capacity’ (Goldsmith 1972; see further Selye, 1975).

Hormesis requires us to look at toxicological research in a different manner. Toxicity testing should be functional towards real-life scenarios of exposure to chemicals through the food-matrix as opposed to the standard reductionist endpoints that usually are remote proxies for human health. First, in order to close the gap between models and real-life scenarios the use of fully functional human primary cell-lines seems a way forward. In particular, lung epithelial cells, intestinal epithelial cells and blood vessel endothelial cells are of interest as these cells are part of human organs of first contact prone to major toxic risks (i.e. highest concentration of exposure). Also, biotransformation and elimination are processes found in hepatocytes and kidney cells, which makes these cells sensitive to toxicity. Secondly, markers of toxicity should reflect

These are just a few examples that can and will be added to in view of the increasing knowledgebase in toxicology, pharmacology and physiology (NRC 2007). What is important to realise is that a search for a ‘toxic free society’ is not only pointless, but is also derived from a myopic view of human toxicology. The biological plasticity of human physiology as described by hormesis, the complexity of food chemistry, and the over-estimation of our capacity to add, in terms of diversity, to the already gargantuan set of natural chemicals in the biogeosphere, underscore this myopia.

In order to bridge the chasm between toxicology and human health with respect to low-level chemical exposures, firstly, the proxy of detection (e.g. in food) should be abandoned in favour of toxicological relevance of (low-level) exposure. Secondly, organ specific functionalities should be part of toxicological research next to traditional endpoint research. Thirdly, and perhaps most importantly, the axiomatic linear extrapolative models in toxicology should be abandoned for the empirical hormetic dose-response model. Hormesis is focussed on the low-level exposure effects particularly important in food chemistry, captures both the risks and benefits of chemicals, and removes the counterproductive and artificial distinction between ‘natural’ and ‘man-made’ chemicals ironically exposed by state-of-the-art analytical chemistry. Fourthly, in view of these developments, it seems highly likely that REACH will be outdated before it even gets underway, making it a very costly and ultimately an unwarranted effort (Hartung and Rovinda 2009).

In the final analysis, the words of Thomas Nagel seem to capture a pointed reflection on the reductionist potential and its limits: ‘… for objectivity is both underrated and overrated, sometimes by the same persons. It is underrated by those who don't regard it as a method of understanding the world as it is in itself. It is overrated by those who believe it can provide a complete view of the world on its own, replacing the subjective views from which it has developed. These errors are connected: they both stem from an insufficiently robust sense of reality and of its independence of any particular form of human understanding.’ (Nagel 1986, p. 4).

Footnotes

1

Apart from methodological reductionism there is a second type of reductionism called conceptual reductionism, in which the concepts applicable to the whole are entirely articulated in terms of concepts applying to the parts. An example of a successful reduction of this kind is the kinetic theory of gases, which accurately reduces the concept of temperature, originating in the thermodynamics of bulk matter, to the average kinetic energy of the molecules of the gas.

Nevertheless, there are many examples that clearly suggest that reductions of this kind will not always be successful. Individual water molecules, for instance, do not possess the property that we as humans experience as ‘wetness’. This is a conceptually irreducible aspect of the behaviour of a multitude of water molecules, which together generate the inter-molecular forces that are the source of the bulk property called surface tension, a phenomenon best illustrated by water striders (predatory insects that make use of the water's surface tension to move about on water).

A third kind is called ontological reductionism. It implies that the whole is nothing but the sum of its parts: study the parts and they will reveal the whole. This type of reductionism is the most troublesome; it is quite possible to hold to methodological reductionism yet to deny ontological reductionism as, in fact, many scientists do. The overarching conundrum of ontological reductionism is that it breaks down the distinction between logical and scientific possibilities. Put differently, methodological reduction, which is a normal and even required routine in any scientific procedure in order to make research feasible, is expanded beyond its original research limits, so that the evidence retrieved from scientific research is erroneously regarded as all-encompassing.

2

With this remark we refer to the Swedish environmental goals. Since 1968, Sweden has realised that most of the country's environmental problems originate from outside its borders (Sweden is not a major player within the chemical industrial field), and hence to improve the country's environment it has to be internationally active. Domestic environmental policies ‘are increasingly designed with a deliberative view to the possible impact on EU policymaking.’

In 2000, the Swedish environmental objectives were defined as follows (Summary of Gov. Bill 2000/01:130): ‘The outcomes within a generation for the environmental quality objective A Non-Toxic Environment should include the following:

The concentrations of substances that naturally occur in the environment are close to the background concentrations. The levels of foreign substances in the environment are close to zero. Overall exposure in the work environment, the external environment and the indoor environment to particularly dangerous substances is close to zero and, as regards other chemical substances, to levels that are not harmful to human health. Polluted areas have been investigated and cleaned up where necessary.’

Löfstedt sees Sweden's environmental ambition reflected in the EU's regulation: ‘In the work building up to the 1992 Rio Conference on sustainable development, Sweden was one of the most active participants. Because of a Swedish initiative, chemicals received a separate chapter, largely based on Swedish chemical control policy (the substitution and precautionary principles). In 1994, the Swedish government organized a conference on chemicals, which in turn led to the establishment of an Intergovernmental Forum for Chemical Safety. When Sweden was negotiating its membership in the EU in 1994, Sweden was granted a four-year transition period during which the EU pledged to review its own legislation …. In fact, ever since Sweden joined the European Union it has been highly active in pushing for an international review of chemical policy. Following a Commission meeting in Chester in 1998 and an informal meeting of the Environmental Ministers in Weimar in May 1999, this objective became a reality.

In the development of the European Commission's White Paper “Strategy for a Future Chemicals Policy” (CWP) Swedish regulators took a lead role, promoting, among other things, the reversed burden of proof requirement (European Commission. 2001. White paper: strategy for a future chemicals policy. European Commission, Brussels). As such, the differences between this White Paper and the recent Swedish document Non Hazardous Products: Proposals for Implementation of New Guidelines on Chemicals Policy are hardly discernable. One of the most important elements of the CWP is that future regulation of chemicals should be based on hazard, not risk – a prominent feature of Swedish chemical control policy that argues that chemicals are persistent, bioaccumulative, and should be phased out regardless of what actual risk they may pose ….’

3

Haemoglobin, the oxygen-carrying protein that is present in large amounts in red blood cells, contains iron in its second oxidation state: Fe²⁺. This haemoglobin is also called ferro-oxy-haemoglobin. With iron in this oxidation state, haemoglobin is capable of binding and releasing oxygen. When the iron is oxidised further to Fe³⁺, the resulting (ferri) methaemoglobin is incapable of binding oxygen and liberating it in organ tissue. Above a certain concentration level the organism may be harmed by oxygen deficiency. In the most serious cases this leads to death, which occurs at methaemoglobin formation levels of between 45% and 60%.

4

Sattelmacher, in a famous world-wide survey, and Simon et al., in a survey of German hospitals, have reviewed the relation between nitrate concentrations in drinking water and the relative number of infantile methaemoglobinaemia cases. Both reviews show that the majority of cases are reported above a nitrate concentration of 100 mg NO₃⁻/l (Sattelmacher 1962).

5

An argument against this proposition has been made based on the notion that chemicals specifically designed for a human pharmacological/physiological purpose cannot be found in nature, that is, as compounds produced by non-human organisms (or as a result of geochemical processes). Nevertheless, this argument seems flawed, which can be shown as follows:

The argument starts from the premise that humans make particular chemicals with a specific human pharmacological/physiological purpose. It excludes the possibility that those rationally designed chemicals will be found as part of a biochemical process that differs essentially from human physiology. This suggests that science is capable of completely elucidating the functionalities of such a rationally designed chemical, so that the chemical cannot be part of other, non-human processes in the biogeosphere. That in turn implies that scientific knowledge is complete, or can be so, and will not be prone to revision or extension. This is a scientistic, not a scientific, perspective. Therefore, rationally designed chemicals can be part of a biogeochemical process unrelated to human physiology.

6

‘Health is a state of complete physical, mental and social well-being and not merely the absence of disease or infirmity.’ (WHO 1946). Garner remarks that the WHO's ‘utopian definition of health makes invalids of us all.’ Since, by this definition, ‘99% of the world's population must be in need of care and attention, the World Health Organization has simply written itself into existence for eternity.’ (Garner 1979, p. 14).