Abstract

A method for counterfactual explanation of machine learning survival models is proposed. One of the difficulties of solving the counterfactual explanation problem is that the classes of examples are implicitly defined through outcomes of a machine learning survival model in the form of survival functions. A condition that establishes the difference between survival functions of the original example and the counterfactual is introduced. This condition is based on using a distance between mean times to event. It is shown that the counterfactual explanation problem can be reduced to a standard convex optimization problem with linear constraints when the explained black-box model is the Cox model. For other black-box models, it is proposed to apply the well-known Particle Swarm Optimization algorithm. Numerical experiments with real and synthetic data demonstrate the proposed method.

Keywords

Introduction

Explanation of machine learning models is an important problem in many applications. For instance, medicine machine learning applications meet a requirement of understanding results provided by the models. A typical example is when a doctor has to have an explanation of a stated diagnosis in order to have an opportunity to choose a preferable treatment (Holzinger et al., 2019). This implies that decisions provided by the machine learning models should be explainable to help machine learning users to understand the obtained results, for example, doctors need to understand obtained diagnoses. One of the obstacles to obtain explainable decisions is the black-box nature of many models, especially, of deep learning models, i.e. inputs and outcomes of these models may be known, but there is no information what features impact on corresponding decisions provided by the models. Many explanation methods have been developed in order to overcome this obstacle and to explain the model outcomes. The explanation methods can be divided into two groups: local and global methods. Methods from the first group derive explanation locally around a test example, whereas methods from the second group try to explain the black-box model on the whole dataset or a part of the datasets. The global explanation methods are of great interest, but many application areas, especially, medicine, require to understand decisions concerning a specific patient, i.e. it is important to know what features of an example are responsible for a black-box model prediction. Therefore, this paper focuses on local explanations.

It is important to note that users of a black-box model are often not interested why a certain prediction was obtained and what features of an example led to a decision. They usually aim to understand what could be changed to get a preferable result by using the black-box model. Such explanations can be referred to the so-called counterfactual explanations or counterfactuals (Wachter et al., 2017), which may be more desirable, intuitive and useful than “direct” explanations (methods based on attributing a prediction to input features). According to Molnar (2019), a counterfactual explanation of a prediction can be defined as the smallest change to the feature values of an input original example that changes the prediction to a predefined outcome. There is a classic example of the loan application rejection (Molnar, 2019; Wachter et al., 2017), which explicitly explains counterfactuals. According to this example, a bank rejects an application of a user for a credit. A counterfactual explanation could be that “the credit would have been approved if the user would earn $500 more per month and have the credit score 30 points higher” (Molnar, 2019; Wachter et al., 2017).

So far, methods of counterfactual explanations have been applied to classification and regression problems where a black-box model produces a point-valued outcome for every input example. However, there are many models where the outcome is a function. Some of these models solve problems of survival analysis (Hosmer et al., 2008), where the outcome is a survival function (SF) or a cumulative hazard function (CHF). In contrast to the standard classification and regression machine learning models, the survival models deal with datasets containing a lot of censored data. This fact complicates the models.

This paper presents a method for finding counterfactual explanations for predictions of survival machine learning black-box models, which is based on analysis of SFs. The method allows us to find a counterfactual whose SF is connected with the SF of the original example by means of some conditions. One of the difficulties of solving the counterfactual explanation problem is that the classes of examples are implicitly defined through outcomes of a machine learning survival model in the form of SFs or CHFs. Therefore, a condition establishing the difference between mean times to event of the original example and the counterfactual is proposed. For example, the mean time to event of the counterfactual should be larger than the mean time to event of the original example by value r. The meaning of counterfactuals in survival analysis under the above condition can be represented by the statement: “Your treatment was not successful because of a small dose of the medicine (one tablet). If your dose had been three tablets, the mean time of recession would have been increased to a required value”. Here the difference between the required value of the mean time to recession and the recent mean time to recession of the patient is r. It depends on the black-box model used in a certain study. In particular, when the Cox model is considered as a black-box model, the exact solution can be obtained because the optimization problem for computing counterfactuals is reduced to a standard convex programming problem. In a general case of the black-box model, the optimization problem for computing counterfactuals is non-convex. Therefore, one of the ways for solving the optimization problem is to use some heuristic global optimization method. An optimization method called Particle Swarm Optimization (PSO), proposed by Kennedy and Eberhart (1995), can be applied to solving the counterfactual explanation problem. The method is a population-based stochastic optimization technique based on swarms and motivated by the intelligent collective behavior of some animals (Wang et al., 2018).

In summary, the following contributions are made in this paper:

A statement of the counterfactual explanation problem in the framework of survival analysis is proposed, and a criterion for defining counterfactuals is introduced.

It is shown that the counterfactual explanation problem can be reduced to a standard convex optimization problem with linear constraints when the black-box model is the Cox model. This fact leads to an exact solution of the counterfactual explanation problem.

The PSO algorithm is applied to solving the counterfactual explanation problem in a general case when the black-box model may be arbitrary.

The proposed approaches are illustrated by means of numerical experiments with synthetic and real data, which show the accuracy of the method.

The paper is organized as follows. Related work is briefly discussed in Section 2. Basic concepts of survival analysis and the Cox model are given in Section 3. Section 4 contains the standard counterfactual explanation problem statement and its extension to the case of survival analysis. In Section 5, it is shown that the counterfactual explanation problem for the black-box Cox model is a convex programming problem and therefore can simply be solved. Its application to the counterfactual explanation problem is considered in Section 7. Numerical experiments with synthetic data and real data are given in Section 8. Discussion of some peculiarities of the proposed method and concluding remarks can be found in Section 9.

In order to get intuitive and human-friendly explanations, several counterfactual explanation methods (Wachter et al., 2017) were developed by several authors (Buhrmester et al., 2019; Dandl et al., 2020; Fernandez et al., 2020; Goyal et al., 2019; Guidotti et al., 2019a; Hendricks et al., 2018b; Looveren and Klaise, 2019; Lucic et al., 2019; Poyiadzi et al., 2020; Russel, 2019; Vermeire and Martens, 2020; van der Waa et al., 2018; White and Garcez, 2019). The counterfactual explanation tells us what to do to achieve a desired outcome.

Some counterfactual explanation methods use combinations with other methods like LIME and SHAP. For example, (Ramon et al., 2019) proposed the so-called LIME-C and SHAP-C methods as counterfactual extensions of LIME and SHAP. White and Garcez (2019) proposed the CLEAR methods which can also be viewed as a combination of LIME and counterfactual explanations.

Many counterfactual explanation methods apply perturbation techniques to examine feature perturbations that lead to a different outcome of a black-box model. In fact, this is an approach to generate counterfactuals to alter an input of the black-box model and to observe how the output changes. One of the methods using perturbations is the Growing Spheres method (Laugel et al., 2018), which can be referred to counterfactual explanations to some extent. The method determines the minimal changes needed to alter a prediction. Perturbations are usually performed towards interpretable counterfactuals in a lot of methods (Dhurandhar et al., 2018, 2019; Looveren and Klaise, 2019).

Another direction for applying counterfactuals concerns with counterfactual visual explanations which can be generated to explain the decisions of a deep learning system by identifying what and how regions of an input image would need to change in order for the system to produce a specified output (Goyal et al., 2019). Hendricks et al. (2018a) proposed counterfactual explanations in natural language by generating counterfactual textual evidence. Counterfactual “feature-highlighting explanations” were proposed by Barocas et al. (2020) by highlighting a set of features deemed most relevant and withholding others. A counterfactual impact analysis of medical images was considered by Lenis et al. (2020), and by Bhatt et al. (2019).

Many other approaches have been proposed in the last few years (Verma et al., 2020), but there are no counterfactual explanations of predictions provided by the survival machine learning systems. Therefore, our aim is to propose a new method for counterfactual survival explanations.

Most of the above models dealing with survival data can be regarded as black-box models and should be explained. However, only the Cox model has a simple explanation due to its linear relationship between covariates. Therefore, it can be used to approximate more powerful models, including survival deep neural networks and random survival forests, for explaining predictions of these models.

Elements of Survival Analysis

Basic Concepts

In survival analysis, an example (patient) i is represented by a triplet

The survival and hazard functions are key concepts in survival analysis for describing the distribution of event times. The SF, denoted by

Another important concept is the CHF

The SF can be expressed through the CHF as

Let us consider main concepts of the Cox proportional hazards model (Hosmer et al., 2008). According to the model, the hazard function at time t given predictor values

In the framework of the Cox model, the SF

The Cox model is one of the models establishing an explicit relationship between the covariates and the distribution of survival times. It assumes a linear combination of the example covariates. On the one hand, this is a strong assumption that is not valid in many cases. It restricts the wide use of the model. On the other hand, this assumption allows us to apply the Cox model to solving the explanation problems as a linear approximation of some unknown function of covariates by considering coefficients of the covariates as quantitative impacts on the prediction.

The partial likelihood in this case is defined as follows:

We consider a definition of the counterfactual explanation proposed by Wachter et al. (2017) and rewrite it in terms of survival models.

(Wachter et al., 2017).

Assume a prediction function f is given. Computing a counterfactual

The function

It is important to note that the above optimization problem can be extended by including additional terms. In particular, many algorithms of the counterfactual explanation use a term which makes counterfactuals close to the observed data. It can be done, for example, by minimizing the distance between the counterfactual

Let us consider an analogy of survival models with the standard classification models where all points are divided into classes. We also have to divide all patients into classes by means of an implicit relationship between the black-box survival model predictions. It is important to note that predictions are the CHFs or the SFs. Therefore, the introduced loss function

Another way for separating patients is to consider the difference between the corresponding mean times to events for counterfactual

Then the optimization problem (7) can be rewritten as follows:

We suppose that a condition for a boundary of “classes” of patients can be defined by a predefined smallest distance between mean values, which is equal to r. In other words, a counterfactual

The condition for “classes” of patients can be also written as

Let us unite the above conditions by means of the function

It should be noted that several conditions of counterfactuals taking into account mean values can be proposed here. We take the difference between the mean time to events of explainable point

Let us consider the so-called hinge-loss function

Its minimization encourages to increase the difference

Taking into account (8) and (13), the entire loss function can be rewritten and the following optimization problem is formulated:

In summary, the optimization problem (15) has to be solved in order to find counterfactuals

It should be noted that counterfactuals can also be found by solving the following constrained optimization problem:

It is equivalent to problem (15) or to problem (7), which are simply derived from (16)–(17).

Generally, the function

This problem can be explained as follows. If point

We prove below that problem (18) can be reduced to a standard convex optimization problem with linear constraints and can be exactly solved if the black-box model is the Cox model. In other words, the counterfactual example

Let

Hence, the mean value is

Denote

Compute the following limits:

The derivative of

Note that

The above means that the function

It is obvious that there holds

Let

It follows from the above that

Let

It follows from the above that

The above conditions for r and the sets of solutions can be united

Constraints (17) to the problem (16)–(17) become (29) which are linear with

The problem (16) with constraints (29) can also be written in the form of the unconstrained problem (18), as follows:

The PSO algorithm proposed by Kennedy and Eberhart (1995) can be viewed as a stochastic optimization technique based on a swarm. There are several survey papers devoted to the PSO algorithms, for example, Wang et al. (2018, 2015). We briefly introduce this algorithm below.

The PSO performs searching via a swarm of particles that updates from iteration to iteration. In order to reach the optimal or suboptimal solution to the optimization problem, each particle moves in the direction to its previously best position (denoted as “pbest”) and the global best position (denoted as “gbest”) in the swarm. Suppose that the function

Initialization (zero iteration):

N particles

the best position

the best solution

Iteration t (

velocities are adjusted:

positions of particles are adjusted:

the best positions of particles are adjusted:

the best solution is adjusted:

The problem has five parameters: the number of particles N; the number of iterations

It is clear that parameters N and

Other parameters are selected by using some heuristics (Bai, 2010; Clerc and Kennedy, 2002), namely,

The following values of the above introduced parameters are often taken:

Particles are generated by means of the uniform distributions U with the following parameters:

Velocities are similarly generated as:

It should be noted that PSO is similar to the Genetic Algorithm (GA) in the sense that they are both population-based search approaches and that they both depend on information sharing among their population members to enhance their search processes using a combination of deterministic and probabilistic rules. However, many authors (Duan et al., 2009; Panda and Padhy, 2008; Sooda and Nair, 2011; Wang et al., 2008) claim that PSO has a better performance in terms of average and standard deviation from multiple runs of algorithms. PSO converges to arrive at the optimal values in fewer generations than GA. Moreover, PSO outperforms GA, when a smaller population size is available, and has higher robustness.

Let us return to the counterfactual explanation problem in the framework of survival analysis. Suppose that there exists a dataset D with triplets

Let us calculate bounds of the domain

According to the PSO algorithm, the initial positions of particles are generated as

So, the optimal solution can be found in the hyper parallelepiped

If there exists at least one point

Let us introduce a sphere

Loop: over all features of

The initial positions of particles are generated as follows:

Initial values of velocities are taken as

Let us point out properties of the above approach:

The optimization solutions are always located in the set

The “worst” optimal solution is

Another important question arising with respect to the above approach on the basis of the PSO is how to take into account categorical features. We have to note that the proposed method can potentially deal with categorical features. A direct way for taking into account these features is to consider the optimization problem (18) for different combinations of values of categorical features. Let us represent the feature vector

To perform numerical experiments, we use the following general scheme.

1. The Cox model and the RSF are considered as black-box models that are trained on synthetic or real survival data. Outputs of the trained models in the testing phase are SFs.

2. To study the proposed explanation algorithm by means of synthetic data, we generate random survival times to events by using the Cox model estimates.

In order to analyse the numerical results, the following schemes are proposed for verification. When the Cox model is used as a black-box model, we can get the exact solution. This implies that we can exactly compute the counterfactual

The next question is how to verify results of the RSF as a black-box model. The problem is that the RSF does not allow us to obtain exact results by means of formal methods, for example, by solving the optimization problem (16). However, the counterfactual can be found with arbitrary accuracy by considering all points or many points in accordance with a grid. Then the minimal distance between the original point

The code of the proposed algorithm in Python is available at

Numerical Experiments with Synthetic Data

Initial Parameters of Numerical Experiments with Synthetic Data

Random survival times to events are generated by using the Cox model estimates. An algorithm proposed by Bender et al. (2005) for survival time data for the Cox model with the Weibull distributed survival times is applied to generate the random times. The Weibull distribution for generation has the scale parameter

The event indicator

For testing, two points are randomly selected from the hyper parallelepiped

Original and counterfactual points by the parameter

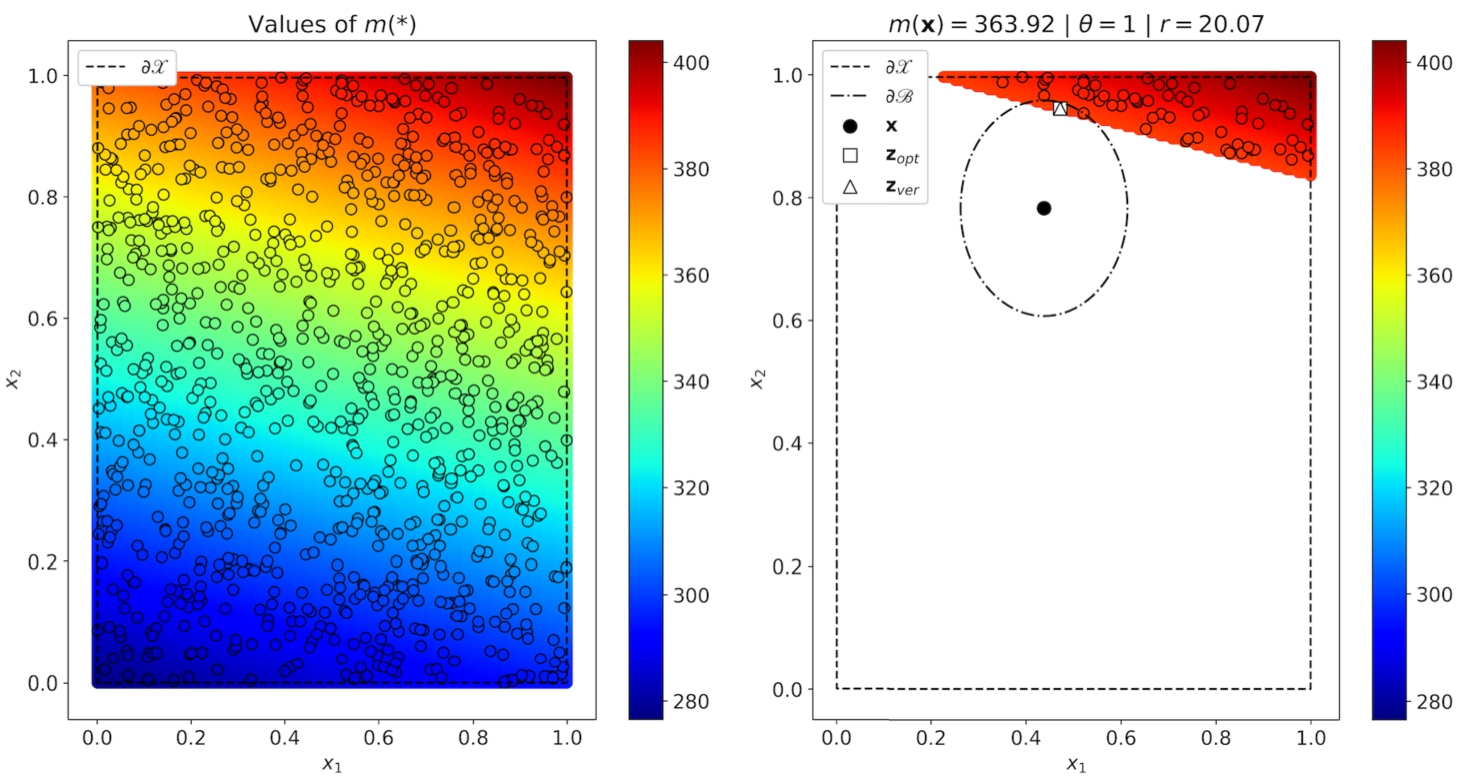

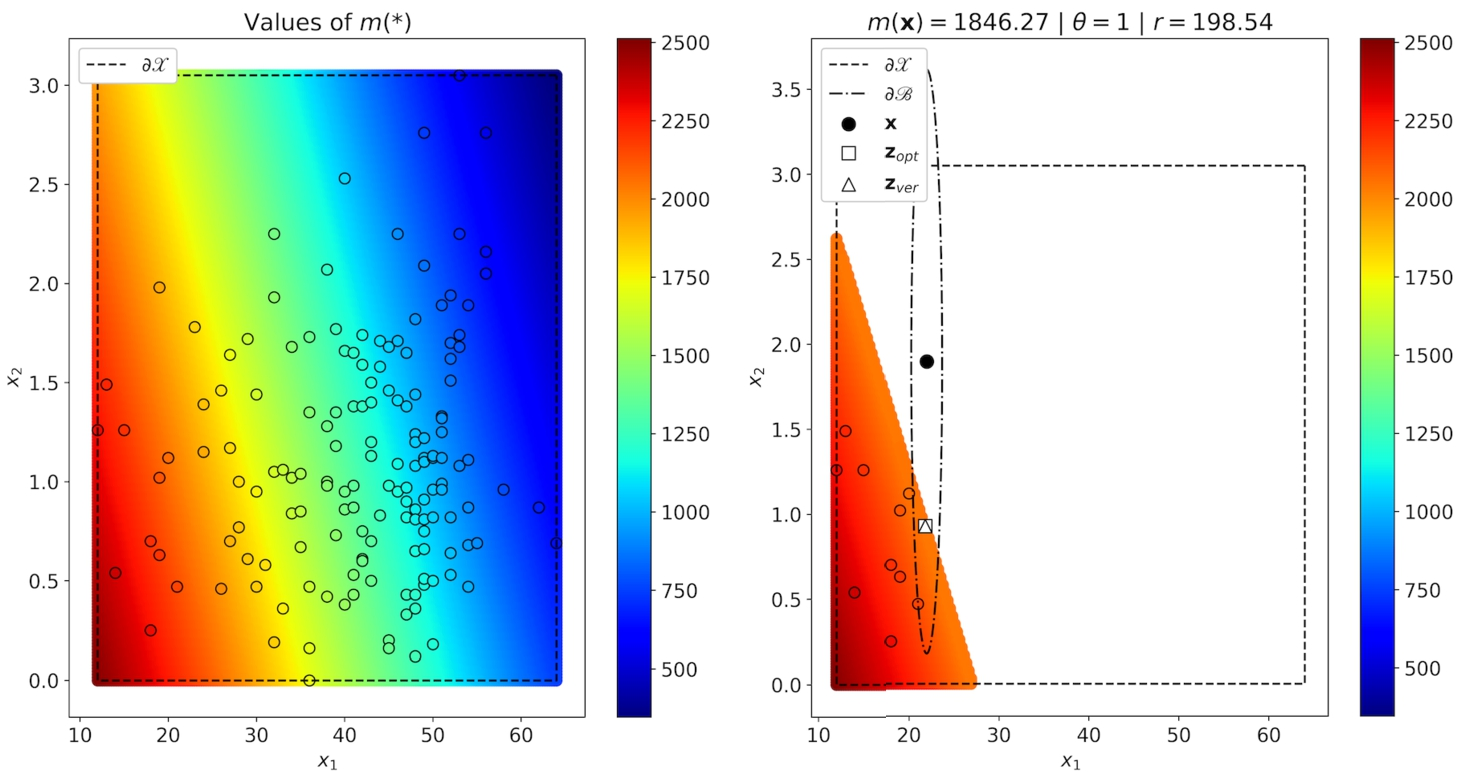

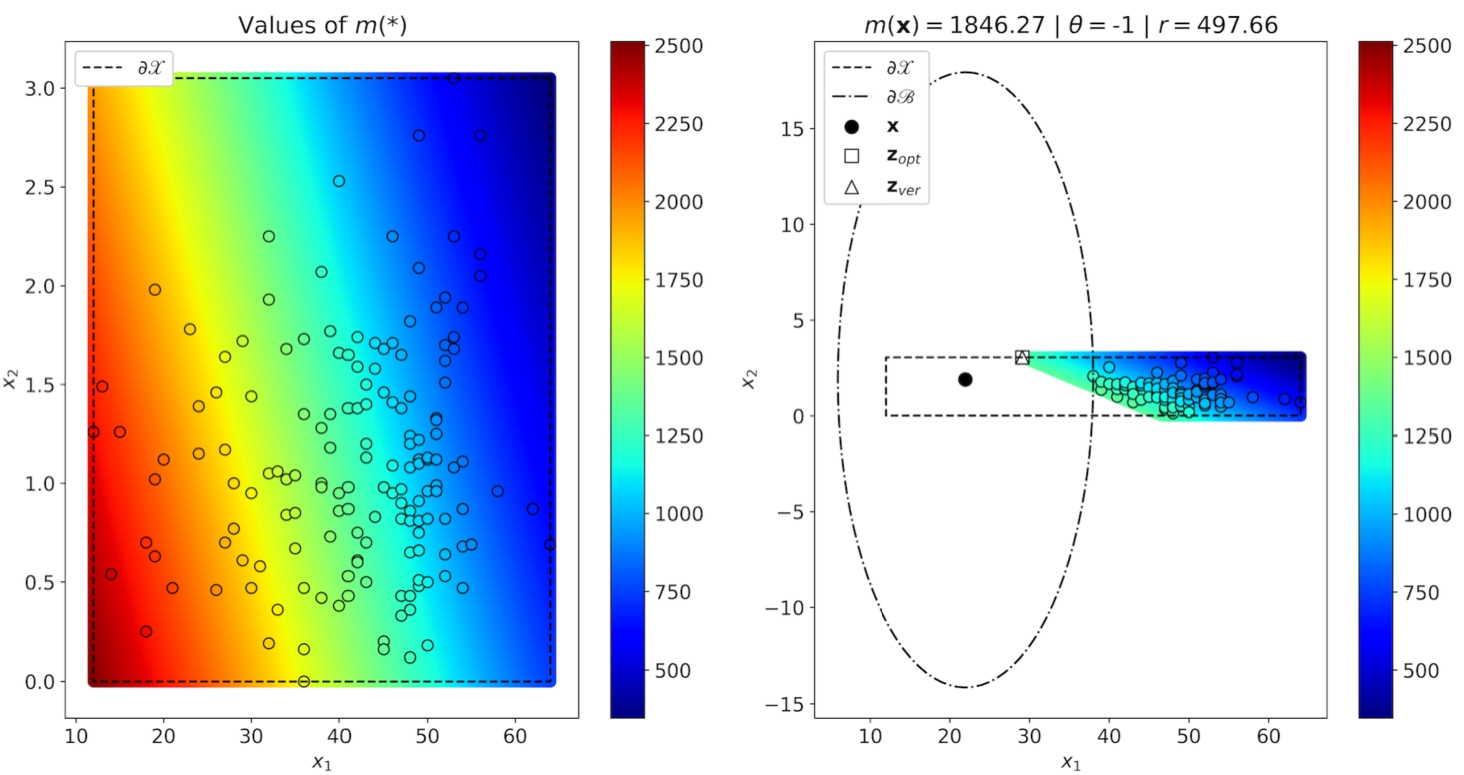

The first part of numerical experiments is performed with the black-box Cox model and aims to show how results obtained by means of the PSO approximate the verified results obtained as the solution of the convex optimization problem with objective function (16) and constraints (29). These results are illustrated in Figs. 1–2. The left figure in Fig. 1 shows how

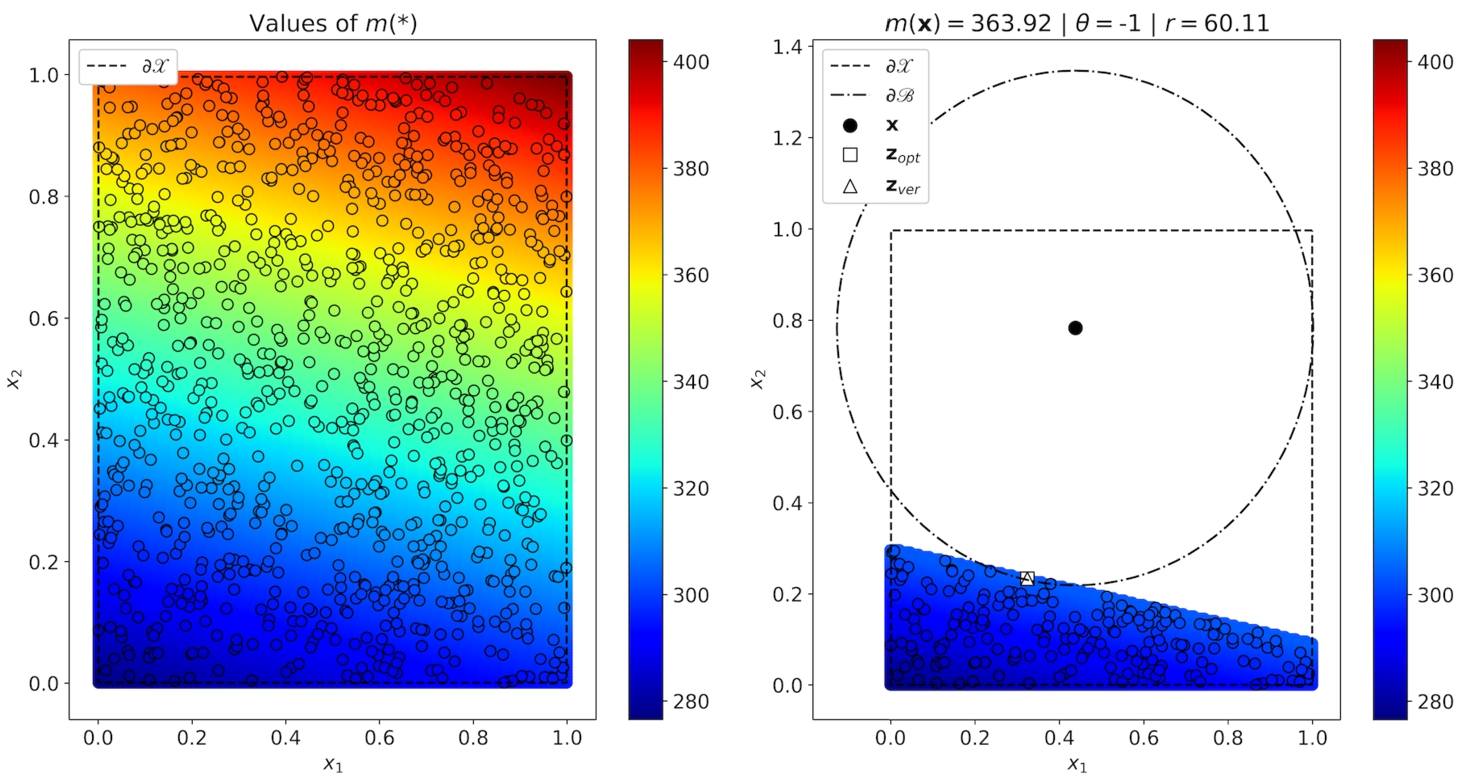

Original and counterfactual points by the parameter

Results of numerical experiments for the black-box Cox model trained on synthetic data.

Similar results cannot be visualized for the second type of synthetic data when feature vectors have the dimensionality 20. Therefore, we present them in Table 1 jointly with numerical results for the two-dimensional data. Parameters

The last three columns also display the relationship between

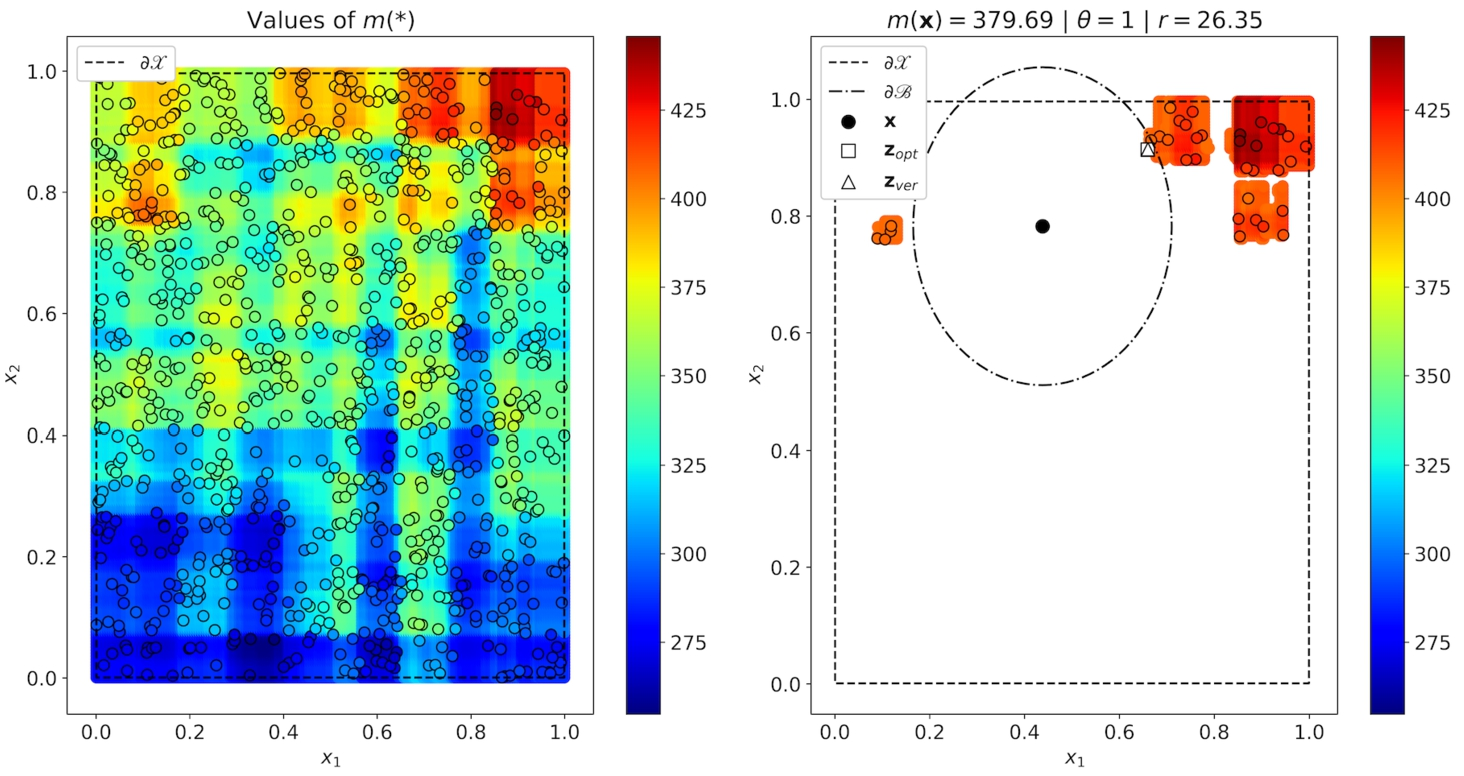

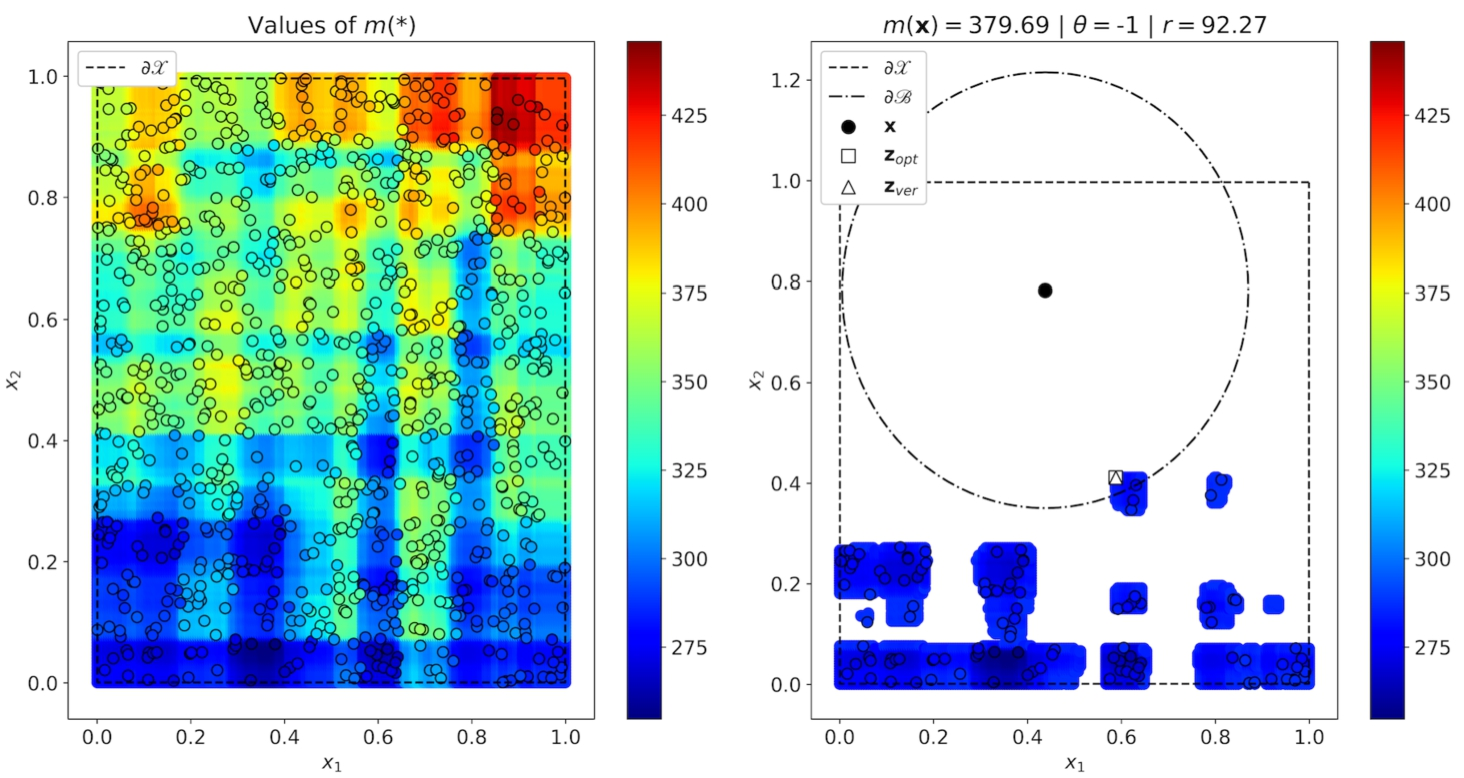

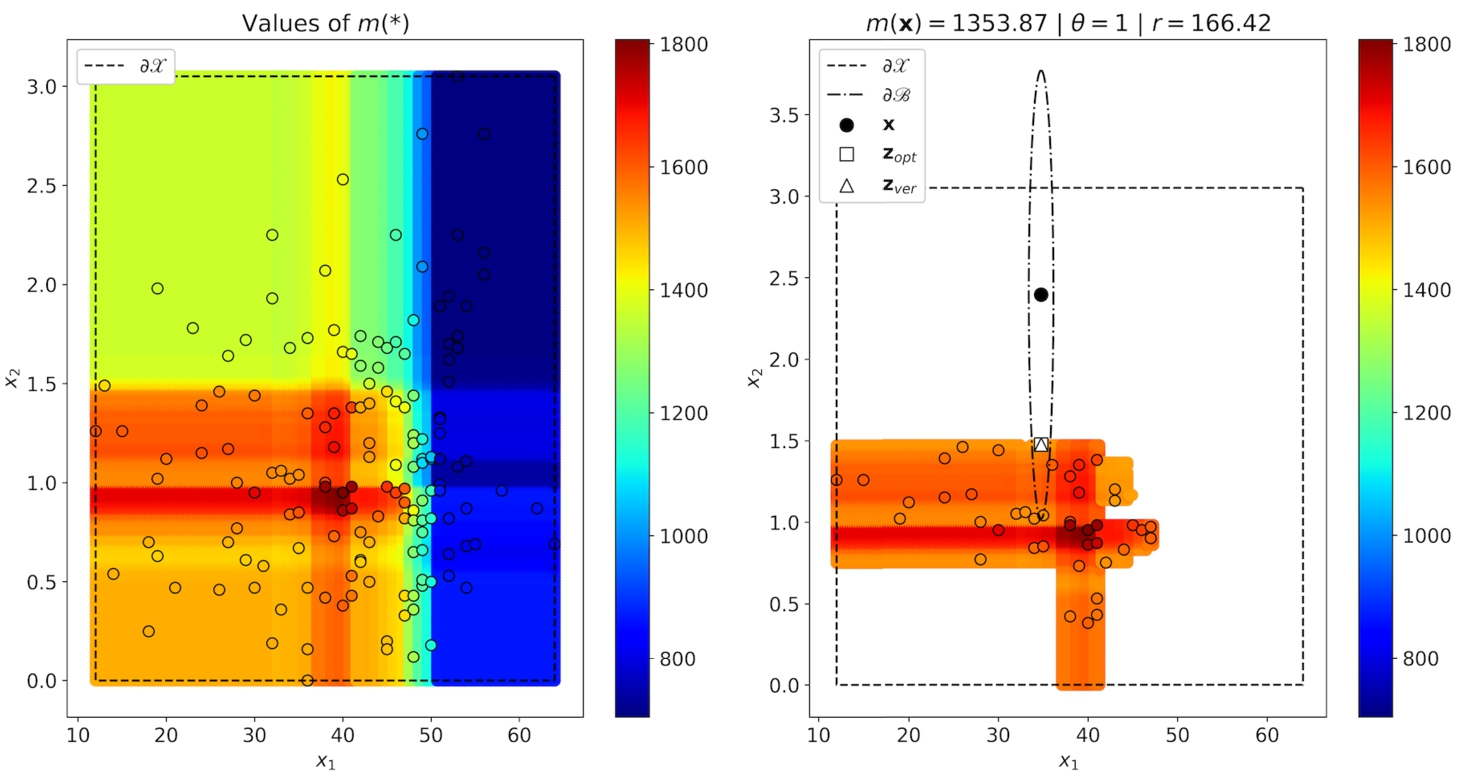

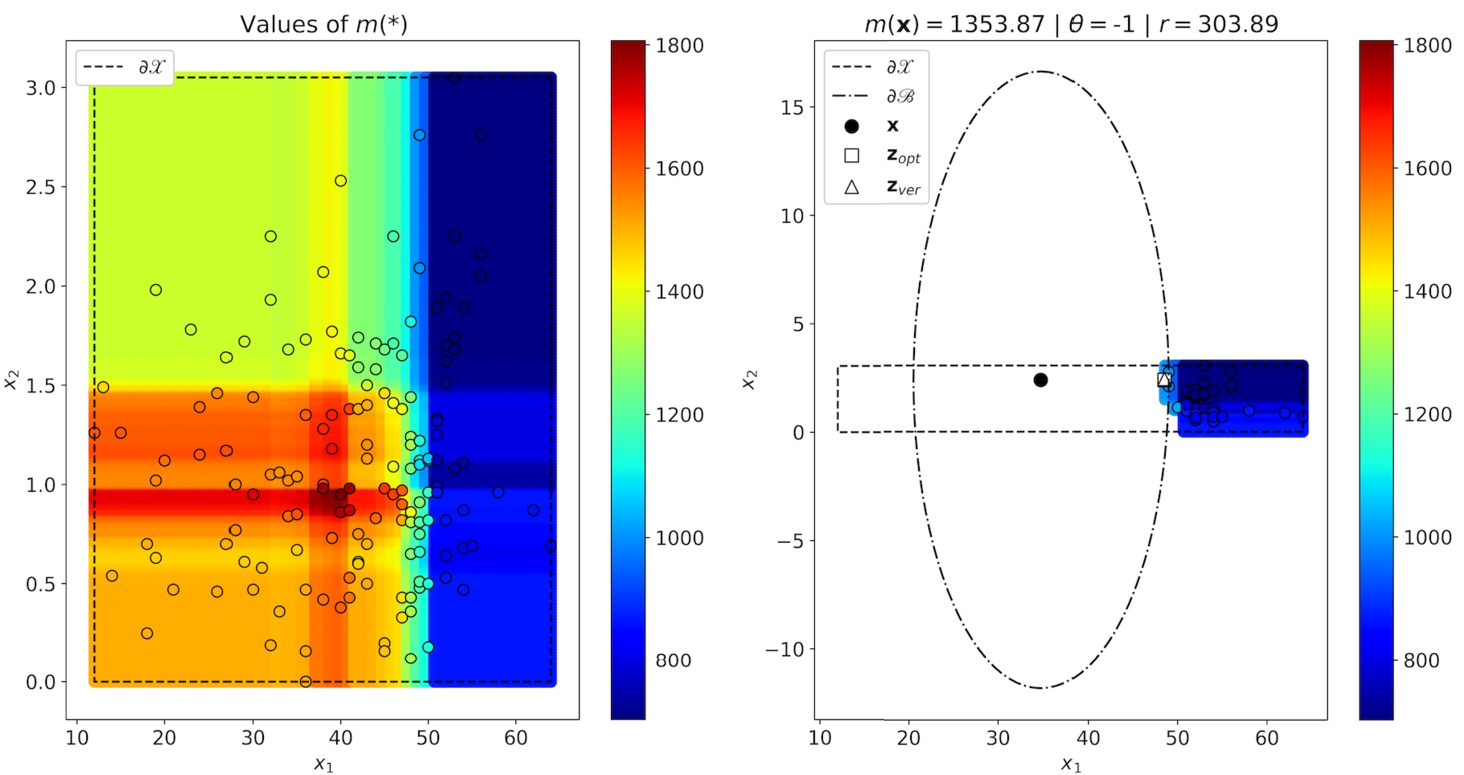

The second part of numerical experiments is performed with the RSF as a black-box model. The RSF consists of 250 decision survival trees. The results are shown in Figs. 3–4. In these cases,

Original and counterfactual points by the parameter

Original and counterfactual points by the parameter

Results of experiments with training data having two- and twenty-dimensional feature vectors are presented in Table 2. It can be seen from Table 2 that

Results of numerical experiments for the black-box RSF trained on synthetic data.

To study the accuracy of the proposed method, we perform testing using

Values of r and θ are randomly selected. Table 3 shows the above accuracy measures for

Accuracymeasures

We consider the following real datasets to study the proposed approach: Stanford2 and Myeloid. The datasets can be downloaded via R package “survival” and their brief descriptions can be also found in

The dataset Stanford2 consists of survival data of patients on the waiting list for the Stanford heart transplant program (Escobar and Meeker, 1992). It contains 184 patients. The number of features is 2 plus 3 variables: time to death, the event indicator, the subject identifier.

The dataset Myeloid is based on a trial in acute myeloid leukemia (Le-Rademacher et al., 2018). It contains 646 patients. The number of features is 5 plus 3 variables: time to death, the event indicator, the subject identifier. In this dataset, we do not consider the feature “sex” because it cannot be changed. Moreover, we consider two cases for the feature “trt” (treatment arm), when it takes values “A” and “B”. In other words, we divide all patients into two groups depending on the treatment arm. As a result, we have three datasets: Stanford2 and Myeloid-A and Myeloid-B.

The Black-Box Cox Model

Original and counterfactual points by the parameter

Since examples from the dataset Stanford2 have two features which can be changed (age

Original and counterfactual points by the parameter

Results of experiments with the Cox model trained on datasets Stanford2, Myeloid-A and Myeloid-B are shown in Table 4. One can see that the proposed method also provides exact results for datasets Myeloid-A and Myeloid-B.

Results of numerical experiments for the black-box Cox model trained on real data.

Results for the black-box RSF trained on the dataset Stanford2 are presented in Figs. 7–8. We again see that

Original and counterfactual points by the parameter

Original and counterfactual points by the parameter

Results of numerical experiments for the black-box RSF trained on real data.

To study the accuracy of the proposed method on real data, we perform testing using

Accuracymeasures

On the one hand, the proposed method and its illustration by means of numerical examples extend the class of explanation methods and algorithms dealing with survival data, which include methods like SurvLIME (Kovalev et al., 2020), SurvLIME-KS (Kovalev and Utkin, 2020), SurvLIME-Inf (Utkin et al., 2020). On the other hand, the method also extends the class of counterfactual explanation models which are becoming increasingly important for interpreting and explaining predictions of many machine learning diagnostic systems. To the best of our knowledge, none of the available counterfactual explanation methods explain the survival analysis functional predictions, for example, the SF. Moreover, in spite of importance of the counterfactual explanation, there are only a few papers discussing its meaning and its real applications in medicine, and there are no papers which discuss the counterfactual explanation in terms of survival analysis.

At the same time, a choice of a correct personalized treatment for a patient is the most actual problem. Petrocelli (2013) pointed out that counterfactual thinking as cognitively available representations of undesirable outcomes impact on decision making in medicine. A former undesirable experience of a doctor with a patient can change the doctor’s decisions in the next similar case.

The counterfactual problem can be met in the framework of the heterogeneous treatment effect analysis (Athey and Imbens, 2016; Kallus, 2016; Kunzel et al., 2019; Wager and Athey, 2015). The combination of this counterfactual problem with survival analysis was investigated by Zhang et al. (2017), where the authors try to answer the counterfactual questions: what would the survival outcome of a treated patient be, if he had not accepted the treatment; what would the outcome of an untreated patient be, if he had been treated? Answers on these questions add up to survival analysis of two groups of patients: treated and untreated.

The counterfactual explanation aims to implicitly identify many patient subgroups taking into account all their characteristics and to find an optimal treatment which can be regarded as the personalized treatment. At that, the outcome of every patient is the SF or the CHF depending on the corresponding subgroup. This identification is carried out under condition that the explained black-box survival model is perfect.

The most important question arising with respect to the proposed method is what the counterfactual explanations, taking into account SFs or CHFs, mean. It can be seen from the results that predictions of survival machine learning models differ from the standard classification or regression predictions which are mainly point-valued and have the well-known meanings. Even if we have a probability distribution defined on classes as a prediction in classification, we choose a class with the largest probability. Survival models provide predictions which are not familiar to a doctor or a user. Moreover, it is difficult to expect that a doctor is thinking in terms of basic concepts from survival analysis, and their decisions are represented in the form of SFs or CHFs. We have discussed in Kovalev et al. (2020) that a doctor can consider some point-valued measures, for instance, the mean time to event, the probability of event before some time. The same can be applied to counterfactual explanations. For instance, a doctor knows that a certain mean time to recession of a patient, which is attributable to patients from a subgroup, can be achieved by applying some treatment. The proposed method allows us to find an “optimal” treatment to some extent, which can move the patient to the required subgroup. Counterfactuals can also help to test whether the survival characteristics of a patient would have occurred had some precondition been different. Moreover, counterfactuals help a doctor to decide which intervention will move a patient out of an at-risk group under condition that the at-risk group is defined by the mean time to a certain event.

Another important question for discussion is why the term “machine learning survival models” is used in the paper instead of the term “survival models”. The point is that the paper aims to explain survival models which are black boxes that is only their inputs and the corresponding outputs are known. Many machine learning survival models can be regarded as black-box models, for example, RSFs, deep survival models, the survival SVM, etc. (Wang et al., 2019). In contrast to these black-box models, there are many survival models which are not black boxes, i.e. they are self-explainable and do not need to be explained. For example, the Cox model is self-explainable because its coefficients characterize impacts of covariates. We used the Cox model in numerical examples as the black-box one in order to compare its results with results of the proposed explanation method. At the same time, we also used the RSF which is a black-box machine learning survival model. This model is machine learning because it is an survival extension of the well-known ensemble-based machine learning model, Random Forest.

We have mentioned that one of the important difficulties of using the proposed method is to take into account categorical features. The difficulty is that the optimization problem cannot handle categorical data and becomes a mixed integer convex optimization problem whose solving is a difficult task in general. Sharma et al. (2019) proposed a genetic algorithm called CERTIFAI to partially cope with the problem and for computing counterfactuals. The same problem was studied by Russel (2019). Nevertheless, an efficient solver for this problem is a direction for further research. There are also some modifications of the original PSO taking into account categorical and integer features (Chowdhury et al., 2013; Laskari et al., 2002; Strasser et al., 2016). However, their application to the considered explanation problem is another direction for further research. We would like to point out an interesting and simple method for taking into account categorical features proposed by Kitayama and Yasuda (2006). According to this method, penalty functions of a specific form are introduced, and discrete conditions on the variables can be treated in terms of the penalty functions. Then the augmented objective function becomes multimodal and extrema (minima) are generated near discrete values. This simple way can be a candidate for the extension of this method on the case of computing optimal counterfactuals.

We have studied only one criterion for comparison of SFs of the original example and the counterfactual. This criterion is the difference between mean values. In fact, this criterion implicitly defines different classes of examples. However, other criteria can be applied to the problem and to separating the classes, for example, difference between values of SFs at some time moment. The median is also useful to consider, as mean is often very hard to interpret due to the influence of the tail, and that is beyond knowledge for most practitioners. The study of other criteria is also an important direction for further research.

Another interesting problem is when the feature vector is an image, for example, a computer tomography image of an organ. In this case, we have a high-dimensional explanation problem whose efficient solution is also a direction for further research.

Footnotes

Acknowledgements

The authors would like to express their appreciation to the anonymous referees whose very valuable comments have improved the paper.