Abstract

The information provided to the public by the NHS Breast Screening Programme has been criticized for lack of balance, omission of information on harms and substantially exaggerated estimates of benefit. These shortcomings have been particularly evident in the various invitation leaflets for breast screening and in the Programme's own 2008 Annual Review, which celebrated 20 years of screening. The debate on screening has been heated after new data published in the last two years questioned the benefit and documented substantial harm. We therefore analysed whether the recent debate and new pivotal data about breast screening have had any impact on the contents of the new 2010 leaflet and on the 2010 Annual Review. We conclude that spokespeople for the Programme have stuck to the beliefs about benefit that prevailed 25 years ago. Concerns about over-diagnosis have not been addressed either and official documents still downplay this most important harm of breast cancer screening.

Calls for more honesty from the NHS Breast Screening Programme

It has been pointed out repeatedly over many years that the information offered to women by the NHS Breast Screening Programme (NHS BSP) lacks balance, omits information on harms and substantially exaggerates the benefit. 1–4 These shortcomings were particularly evident in the various invitation leaflets for breast screening and in the Programme's own 2008 Annual Review, which celebrated 20 years of screening. 2–4

Our criticism of the revised leaflet in February 2009 3 led to a public call for improvements from a broad range of researchers and others. 5 In response, Professor Sir Mike Richards, the National Director for Cancer and End of Life Care, promised that an improved version of the leaflet would be available by the end of 2009, 6 but it did not appear until December 2010, shortly before Christmas. 7

The debate on screening has been heated after new data questioned the benefit and documented substantial harm. 8–17 An exception was a 2010 study by Stephen Duffy et al. 18 Duffy, a central figure in the NHS BSP, was quoted in the 2008 Annual Review as saying that, ‘The 10-year fatality of screen-detected tumours is 50% lower than that of symptomatic tumours’. 4 That statement is seriously misleading, as screen-detected tumours have an excellent prognosis because of length bias and over-diagnosis of many harmless cases. Further, it is a basic premise for screening evaluation that one cannot compare breast cancer mortality in attenders and non-attenders, because of selection bias. 4

Duffy's new study is also seriously misleading. 19 He claimed a 28% reduction in breast cancer mortality in the UK due to screening, although there is none 12 (see below), and that few women are over-diagnosed, which is also wrong. 8,19 This resulted in a benefit:harm ratio of 2.5 women avoiding a breast cancer death for every 1 woman over-diagnosed, which is 25 times greater than the 1:10 ratio reported in the Cochrane review of the randomized trials. 20 The discrepancy was so huge that BMJ's editor, Fiona Godlee, asked an independent epidemiologist, Klim McPherson, to ‘take a look and come to a view’. 21 McPherson presented a strong criticism of Duffy's study and also criticized the NHS BSP for not having communicated the uncertainties about the benefit and the evidence of over-diagnosis to women. 22 He concluded that the service perhaps existed on its face validity plus concomitant scientific polarization in the face of uncertainty, which he would consider irresponsible. 22 Godlee repeated McPherson's call for ‘much more honesty from the NHS screening programme’ in an accompanying editorial. 21
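The factor of 25 follows directly from the two ratios quoted above. A minimal sketch of the arithmetic, with variable names chosen here purely for illustration:

```python
# Breast cancer deaths avoided per over-diagnosed woman, as quoted in the text.
duffy_ratio = 2.5 / 1     # Duffy et al.: 2.5 deaths avoided per 1 over-diagnosis
cochrane_ratio = 1 / 10   # Cochrane review: 1 death avoided per 10 over-diagnoses

# How many times more favourable Duffy's ratio is than the Cochrane ratio.
discrepancy = duffy_ratio / cochrane_ratio
print(f"Duffy's ratio is about {discrepancy:.0f} times more favourable")
```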

We report here whether the recent debate and new pivotal data about breast screening have had any impact on the contents of the December 2010 leaflet 7 and on the 2010 Annual Review that was published in January 2011. 23

Breast cancer mortality – the facts

In the last two years, several studies in major medical journals have raised doubt about whether breast cancer screening is effective in today's setting. 11–14,17

Breast cancer mortality in the UK has decreased more in age groups that have never been screened than in age groups that could benefit. 19 Similarly, a study of 30 European countries found that breast cancer mortality decreased by 37% among women younger than 50 years, few of whom are invited to screening, and by 21% among women between 50–69 years of age. 14

Denmark has a unique control group, since only 20% of the population was screened during a 17-year period. In women who could benefit (between 55–74 years of age), breast cancer mortality decreased by 1% per year in screened areas and by 2% in the areas without screening. 12 In women too young to benefit (between 35–55 years), the decrease was 5% per year in the screened areas and 6% in the areas without screening. These findings were supported by a study from Norway, which also had a concomitant control group. 13

These negative results agree with the fact that screening has not led to a reduction in late-stage breast cancers. This was shown in a study of 14 countries and regions with at least 7 years of mammography screening where late-stage tumours were those over 20 mm in diameter at the time of diagnosis. 17 If screening does not reduce the incidence of late-stage cancer, it cannot have an effect on mortality.

Clearly, causes other than screening have been responsible for the large decline in breast cancer mortality observed in Europe over the past 20 years. One reason is that we have much better treatments, and they work whether the cancer is localized or has metastasized. 24 The other is increased breast cancer awareness. In Denmark, the average size of the tumours decreased from 33 mm in 1978–1979 to 24 mm only 10 years later. 25 This change occurred before screening started, and it did not lead to over-diagnosis. 9

How, then, could Duffy et al. report that breast cancer mortality dropped more in the screened age group than in non-screened age groups, 18 when it isn't true? They cherry-picked the Two-County trial, which is notoriously unreliable, 20 and used a 38% reduction in breast cancer mortality, which is 2.5 times higher than the estimate based on all the trials. 10,20 They also used data from the NHS BSP but misrepresented them and even contradicted themselves, as they wrote that they considered the mortality stable in the unscreened age groups, but also reported a significant 18% decline in breast cancer mortality in women below 50 years of age. The decline in the age group 50–69 was only slightly larger, 27%, but by some obscure method described in a footnote to a table, they nevertheless concluded that screening had reduced breast cancer mortality by 28% compared with other age groups. Duffy's lack of transparency in his research methods is startling, and his result disagrees starkly with official data. Cancer Research UK writes: ‘Between 1989 and 2008 the breast cancer mortality rate fell by 44% in women aged 40–49 years; by 44% in women aged 50–64; by 37% in women aged 65–69; by 39% in women aged 15–39; and by 19% in women over 70’. 26 Thus, there is no sign of a screening effect.

In a figure, Duffy et al. lumped together data for women below 50 years. As deaths from breast cancer before the age of 30 years are extremely rare, 26 and as there are many women and girls in this age group, the graph is close to the x axis at the bottom of the figure, which conceals the inconvenient truth that there was a huge decline in mortality in young women.

Duffy et al. also combined data from the age group 50–64 years with 65–69 years, although screening of the latter group did not start before 2001. This conceals another inconvenient truth, namely that breast cancer mortality in the age group 50–64 years began to decline before the UK Programme started in 1988. 12

Breast cancer mortality – the propaganda

The recent findings constitute a serious challenge to the justification of screening, but they have been largely ignored by those responsible. The NHS Programme's Director, Professor Julietta Patnick, acknowledged in the 2010 Annual Review that the debate had ‘brought the Programme under intense scrutiny’. 23 But she also noted that ‘There is an abundance of evidence to show that breast screening helps save lives’.

The 2010 Annual Review 23 and the 2010 leaflet 7 repeat previous mortality estimates, although we and others have pointed out that they are wrong. 4,22 The public is told that screening reduces breast cancer mortality by 35% among participants; that one breast cancer death is prevented for every 400 women screened regularly over 10 years; and that 1400 lives are saved each year in the UK, which is the Programme's mantra whenever screening is questioned. 7,23

In the Annual Review 2010, the estimates are provided in a chapter written by Valerie Beral, Head of the Cancer Epidemiology Unit at the University of Oxford and Cancer Research UK. These estimates originate from a 2006 report described by Beral as the ‘UK's independent Advisory Committee on Breast Cancer Screening … focusing on actual experience’. 27 The report was not independent, as it was chaired by Beral, and it did not ‘focus on actual experience’. The 1400 lives were back-calculated based on breast cancer mortality rates in 2003 and the assumption that if there had not been screening, there would have been 54% (100%/65%) more breast cancer fatalities among the participants. 27
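The 54% figure is simply the reciprocal of the assumed relative risk. The sketch below reproduces that step only; the function name is ours, and the report's actual back-calculation of 1400 lives is not shown in the source, so it is not reconstructed here.

```python
def excess_deaths_without_screening(relative_reduction: float) -> float:
    """Fractional increase in deaths if screening were removed,
    given an assumed relative mortality reduction among participants."""
    relative_risk = 1.0 - relative_reduction   # e.g. 0.65 for a 35% reduction
    return 1.0 / relative_risk - 1.0           # 100%/65% = 154% of observed deaths

# The Programme's assumed 35% reduction among participants.
excess = excess_deaths_without_screening(0.35)
print(f"Assumed extra deaths without screening: {excess:.0%}")
```

Because the result depends entirely on the assumed 35%, any overestimate of that reduction carries straight through to the number of lives said to be saved.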

Ironically, there are data ‘focusing on actual experience’. In 2000, Blanks et al. provided such data in the BMJ. 28 They credited screening for only a 6% reduction in breast cancer mortality in England and Wales and estimated that 320 lives were saved annually. In 2002, the Programme's website noted that at least 300 lives are saved, with reference to Blanks' study, but the next sentence read: ‘That figure is set to rise to 1,250 by 2010’. 29 Set to rise? By whom? There was no explanation how this could be achieved.

It was hoped that the breast cancer death rate in women aged 55–69 years would be halved by 2010 compared to that seen in 1990, and the reference to this statement was ‘Dr Roger Blanks, The Institute of Cancer Research’. 29 However, as explained above, there has not been a visible effect of screening in the UK. 26 Beral ignored this, although the data are available on the website of Cancer Research UK, an organization Beral heads. She noted in the 2010 Annual Review that ‘Death rates from breast cancer in the UK have fallen by some 40% since 1990 – in part from earlier diagnosis associated with screening and in part from improvements in treatment’. This is astonishingly misleading, considering that a similar fall was seen in those not invited to screening.

Elsewhere in the material we downloaded from the Programme's website in 2002, the prophecy of 1250 lives saved in 2010 had already come true in 2002, overnight it seemed, up from just 300. Julietta Patnick declared that ‘We estimate that screening is now saving on average 1,250 lives a year’.

The 35% reduction in breast cancer mortality that is the foundation for the estimated screening benefit in the 2010 Annual Review is also misleading. Independent systematic reviews of the randomized trials reported reductions of only 15–16%. 20,30

The Programme's third estimate, preventing one breast cancer death for every 400 women screened regularly over 10 years, is wrong by a factor of five. 20 We have been unable to find any evidence for this estimate in reports from the Programme or elsewhere. The 1993 meta-analysis of the Swedish trials reported that one breast cancer death was avoided for every 1000 invited women after 10 years. 31 The number is 2000 if we use the more realistic estimates of a 15% reduction in breast cancer mortality.
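As a rough check of the 'factor of five', the two numbers needed to prevent one breast cancer death can be compared directly, as the text does. This is only an illustrative sketch: the variable names are ours, and it ignores the distinction between women screened and women invited.

```python
programme_number_needed = 400    # Programme: women screened over 10 years per death prevented
realistic_number_needed = 2000   # text above: figure based on a 15% mortality reduction

# How far the Programme's estimate is from the more realistic one.
factor = realistic_number_needed / programme_number_needed

# The same comparison expressed as deaths prevented per 10,000 women over 10 years.
per_10000_programme = 10_000 / programme_number_needed
per_10000_realistic = 10_000 / realistic_number_needed

print(f"Factor: {factor:.0f}")
print(f"Deaths prevented per 10,000 women: {per_10000_programme:.0f} vs {per_10000_realistic:.0f}")
```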

Beral mentions that ‘In the 1970s and 1980s, when the randomized trials of screening were done in Scandinavia and the USA, the treatment of breast cancer is not really relevant for women in England today’. 23 We agree, but not on the implications. It means that the effect of screening is much smaller today than when the trials were carried out when not even tamoxifen was commonly used. When fewer die of breast cancer, screening will benefit fewer women. Surprisingly, however, the Review does not acknowledge this obvious implication for screening, but merely notes that the previous estimate of one saved for every 400 screened ‘still applies today’. 7,23 This comes with no explanation.

The Review describes that the Forrest Committee, in its 1986 report that was the foundation of the UK Programme, ‘analysed the available data, which largely came from trials in the US and Sweden in the 1970s’. 23 This wording suggests there were several trials. As a matter of fact, the report mentions only two trials, the New York Trial performed in the 1960s and incomplete data from the Two-County trial. The Forrest report promised unrealistically, ‘that deaths from breast cancer in women aged 50–65 years who are offered screening by mammography can be reduced by one-third or more’, 32 which key people in the NHS Programme still think they can obtain today, undaunted by new evidence.

Over-diagnosis – the propaganda and the facts

Major publications from the Programme and public announcements have provided contradictory information about over-diagnosis.

The 2006 Advisory Committee report acknowledged the existence of over-diagnosis and estimated there would be 15% more diagnoses after 10 years of screening. 27 Two years later, in the 26-page 2008 Annual Review, over-diagnosis was not mentioned at all, as if it did not exist. 4 In July and August 2010, the UK press covered McPherson's paper extensively, and his devastating criticism of Duffy's methods and results. He noted, for example, that Duffy's ratio of benefit versus harm was 10 times more positive than that offered by the Malmö trial investigators 22 (which was already 2.5 times too positive). 33

Beral stated in an interview in August 2010 that ‘There are claims that cancer is over-diagnosed – but it's a misnomer. We don't know there are some cancers that won't progress - it is an unanswerable question.’ 34 This remark disagrees with the description of over-diagnosis in her own 2006 Advisory Committee report: ‘It is inevitable that women who are screened regularly will be somewhat more likely than unscreened women to be diagnosed with breast cancer at a given age … For certain individuals, bringing forward the diagnosis of breast cancer would be of little benefit, as they would die from another cause before their breast cancer presented clinically. Such individuals would, in retrospect, have been subjected to over-diagnosis and hence overtreatment of their cancer.’ 27 Furthermore, the question of over-diagnosis is no more ‘unanswerable’ than that regarding the mortality reduction. Both have been quantified in randomized trials and in observational studies with a concomitant control group, similar to the screened group. 9,12,13,20

In the 2010 Annual Review, after four years where over-diagnosis apparently did not exist in the minds of NHS BSP executives, it was allowed back in again. The Review repeated the estimate from the 2006 report: 27 ‘1 in 8 women diagnosed with breast cancer through the NHSBSP would not have had the cancer diagnosed if she had not gone to screening’. It is obscure where this low estimate comes from. Beral asserts that it derives from an ‘all-encompassing review of all the evidence from the studies’. 23 This is definitely not correct. The 2006 report mentions that the number of breast cancers diagnosed in 1000 women over a 10-year period is calculated to be 30 and 26 for women with and without screening, respectively, based on the year 2002–2003. 27 While the numbers for women with screening appear to be observed numbers, the calculations and assumptions behind the hypothetical group of non-screened women are not shown. 27
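The two figures in the 2006 report appear to be enough to reproduce both the '15% more diagnoses' and the '1 in 8' statements. The sketch below assumes the 30 and 26 per 1000 are directly comparable, which, as noted, cannot be verified from the report; the variable names are ours.

```python
# Breast cancers diagnosed per 1000 women over 10 years (2006 report).
with_screening = 30
without_screening = 26      # hypothetical group; derivation not shown in the report

extra_diagnoses = with_screening - without_screening

# Extra diagnoses relative to the number expected without screening.
relative_increase = extra_diagnoses / without_screening
# Extra diagnoses as a share of all diagnoses in screened women.
share_of_screened = extra_diagnoses / with_screening
one_in = with_screening / extra_diagnoses

print(f"Increase over no screening: {relative_increase:.0%}")
print(f"Share of diagnoses in screened women: {share_of_screened:.0%} (1 in {one_in:.1f})")
```

The same four extra diagnoses thus give about 15% when divided by the unscreened figure and about 13%, or roughly 1 in 8, when divided by the screened figure.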

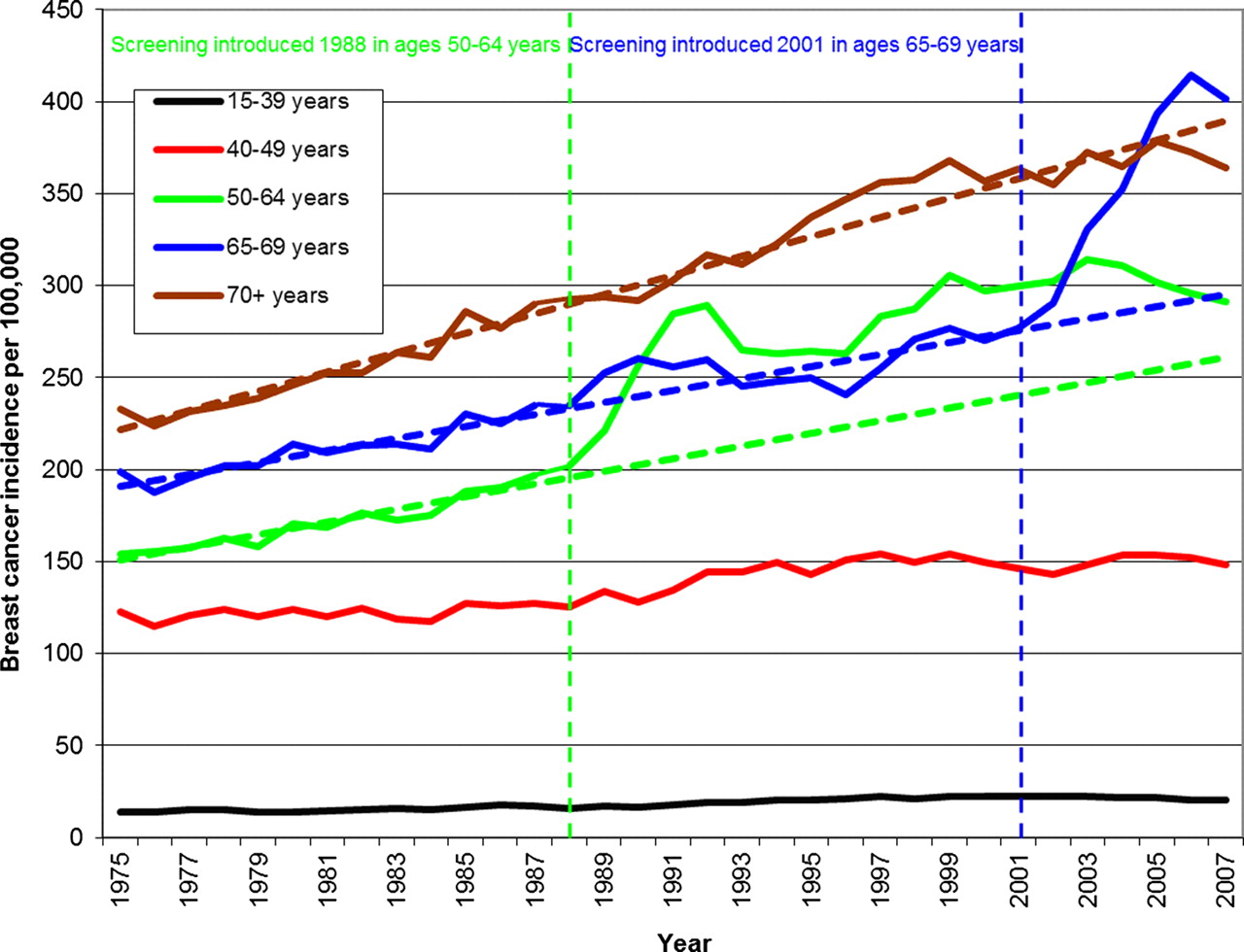

We showed in 2009 that the NHS Programme has resulted in 57% over-diagnosis in the age group 50–64 years. 8 When screening was extended to the age group 65–70 years in 2001, a sharp rise in incidence occurred in these women, very similar to that observed in unscreened women 20 years before (Figure 1), 35 although these women had been offered screening many times when they were younger and had already contributed to a massive increase in the incidence of carcinoma in situ and invasive cancers. This is difficult to explain unless we accept that many screen-detected cancers would have regressed spontaneously if left alone. 36

Figure 1. Incidence rates of invasive breast cancer (DCIS not included) in the UK 1975–2007. Background incidence projected from the pre-screening trends (dotted lines) (see

Other recent studies have shown that screening leads to so much over-diagnosis that one in three cancers identified in a screened population appears to have been over-diagnosed. 8,9,15,16

Again, the 2010 study by Duffy et al. 18 is telling, as it seriously underestimated over-diagnosis by a variety of doubtful methods. First, the authors excluded carcinoma in situ, which Duffy did not state in the paper but admitted when asked on national British radio. 19 Carcinoma in situ constitutes 21% of the diagnoses made through the NHS BSP, and these lesions are more often treated by mastectomy than invasive breast cancer. 23 Second, the authors used an unverified model with incorrect assumptions. It is clearly wrong to assume that essentially all the increase in incidence with screening represents earlier diagnosis, which they appear to have done. Third, they adjusted for a compensatory decline in breast cancer incidence in UK women who had passed the age limit for screening, which does not exist (Figure 1). 8 With these methods, Duffy et al. estimated that only 12% of cancers are over-diagnosed. 18

Duffy et al. were also selective in their choice of references. They claimed that only 37% of breast cancers are screen-detected, but this was based on a 200-word conference abstract. 19 The abstract mentions an unexplained algorithm to evaluate the West Midlands screening programme. Based on this, Duffy et al. concluded that our estimate of over-diagnosis implies that virtually all screen-detected cancers are over-diagnosed and called it an ‘absurd and frankly incredible conclusion’. What is absurd is the degree of their cherry-picking and misleading presentation of data. It is well known that between half and two-thirds of cancers are detected through screening. For example, 68% of cancers were screen-detected in the UK in 2006, 19 not 37% as Duffy et al. write. Our estimate of over-diagnosis therefore implies that about half the screen-detected breast cancers in the UK are over-diagnosed.
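The dependence of this conclusion on the screen-detected fraction can be sketched as follows. The calculation rests on assumptions that are ours, not stated in the source: that the 57% over-diagnosis is an excess over the incidence expected without screening, that it applies to the same population as the screen-detected fractions, and that every over-diagnosed cancer is screen-detected.

```python
# Assumption: 57% more cancers than expected without screening (our 2009 estimate).
overdiagnosis_excess = 0.57

# Over-diagnosed cancers as a share of all cancers diagnosed with screening.
share_of_all_cancers = overdiagnosis_excess / (1 + overdiagnosis_excess)

def overdiagnosed_share_of_screen_detected(screen_detected_fraction: float) -> float:
    """Share of screen-detected cancers that are over-diagnosed, assuming
    all over-diagnosed cancers are found by screening (hypothetical helper)."""
    return share_of_all_cancers / screen_detected_fraction

for fraction in (0.37, 0.68):   # Duffy et al.'s figure vs the 2006 UK figure
    share = overdiagnosed_share_of_screen_detected(fraction)
    print(f"Screen-detected {fraction:.0%}: {share:.0%} of these over-diagnosed")
```

Under these assumptions, a screen-detected fraction of 37% implies that nearly all screen-detected cancers are over-diagnosed, whereas 68% implies about half.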

Is over-diagnosis mentioned in the 2010 leaflet?

UK women invited to screening are still being kept in the dark about over-diagnosis. The new leaflet does not use the term over-diagnosis, and although it speaks about ‘benefits’ it does not use the equivalent term ‘harms’ but the less negative ‘downsides’.

The only hint at over-diagnosis is this ambiguous sentence: ‘Screening can find cancers which are treated but which may not otherwise have been found during your lifetime’. 7 Some women could interpret this as ‘Great! Screening finds cancers that are hard to find – that's why I go for screening’.

We subjected the ambiguous sentence to a test. One of us gave a lecture to fourth-year medical students about research methods three days after the leaflet came out and asked them to tick one of three boxes on a piece of paper to indicate whether they perceived this as ‘good for women who are screened’, ‘bad for women who are screened’, or ‘I can't tell’. They were unprepared, as they had not been lectured about screening. Thirty-one of the 41 students (76%) handed in their replies. Two felt it was good for women to have these cancers detected, 10 felt it was bad, 15 couldn't tell, and four gave no reply. They were also asked what they thought laypersons would make of it. Only seven felt laypersons would think about over-diagnosis whereas 19 felt they would perceive the message as positive, e.g. a chance of cure.

This was a simple exercise that can be criticized but it provides food for thought. It is difficult to get into medical school and it requires high grades. We therefore suspect that many women invited to screening would also understand little of the ambiguous sentence. This agrees poorly with assertions that the leaflet was rigorously tested in a series of focus groups comprising women at appropriate ages, and that its authors consulted with parties interested in the content, ‘before the leaflet was finally reviewed by clinical and language experts to ensure accuracy and clarity’. 37

There is no quantification of over-diagnosis in the leaflet and no estimate of the balance between benefit and harm.

Increase in mastectomies is denied

As the mortality benefit from screening is increasingly being questioned, screening proponents have shifted their focus to promising fewer mastectomies due to early detection. 38 The 2010 Annual Review mentions, with reference to the 2006 report, that ‘1 in 8 women in England with breast cancer diagnosed through the Programme would be spared a mastectomy’, and the leaflet promises that ‘If a breast cancer is found early, you are less likely to have a mastectomy’.

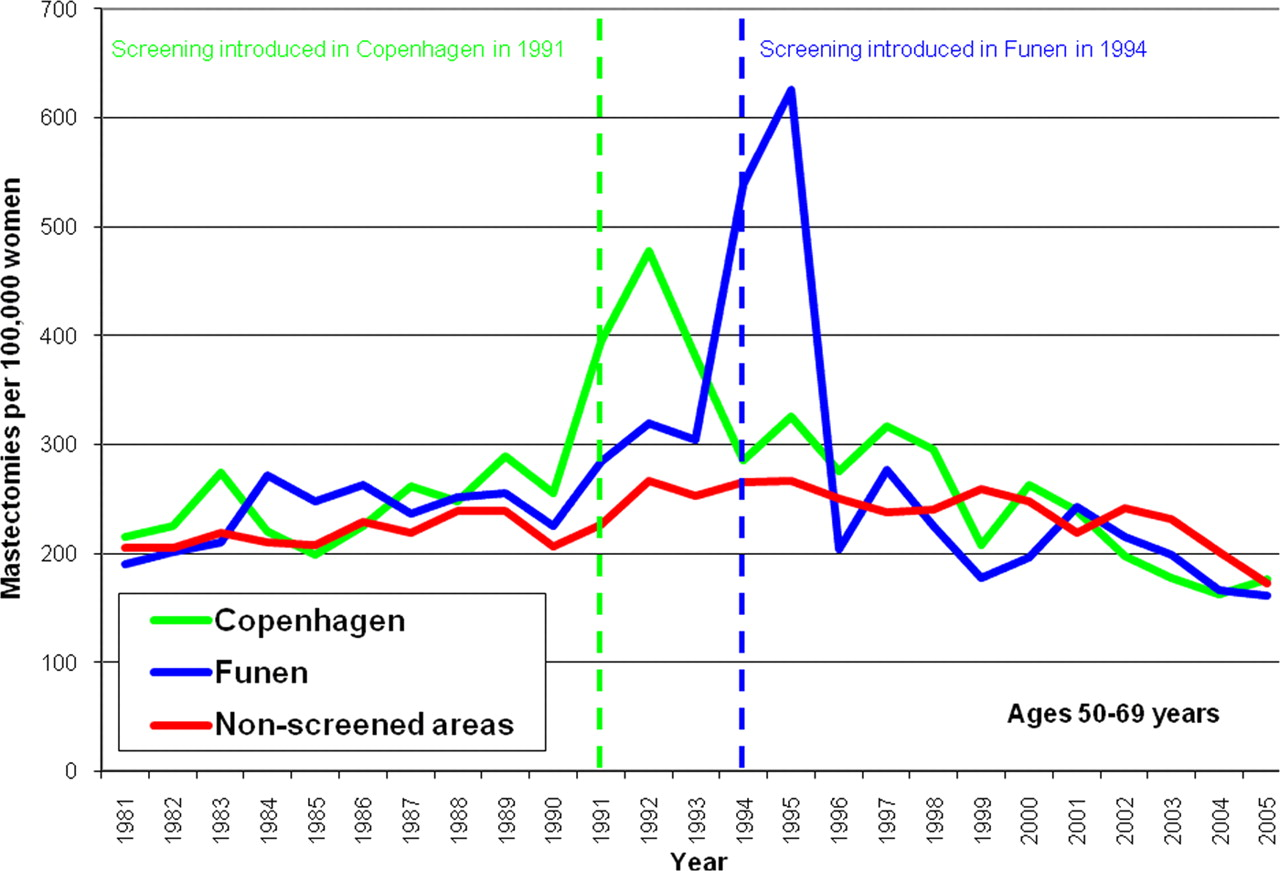

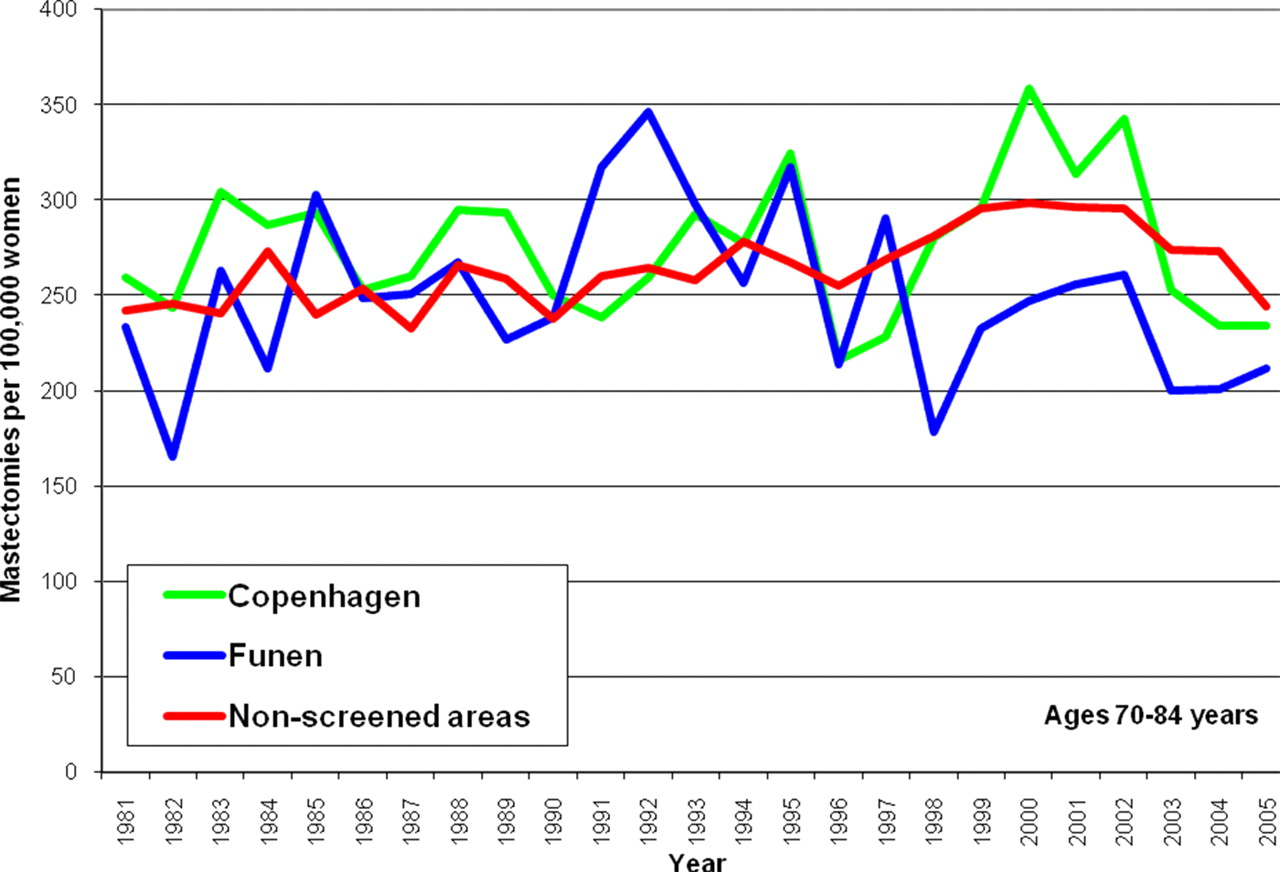

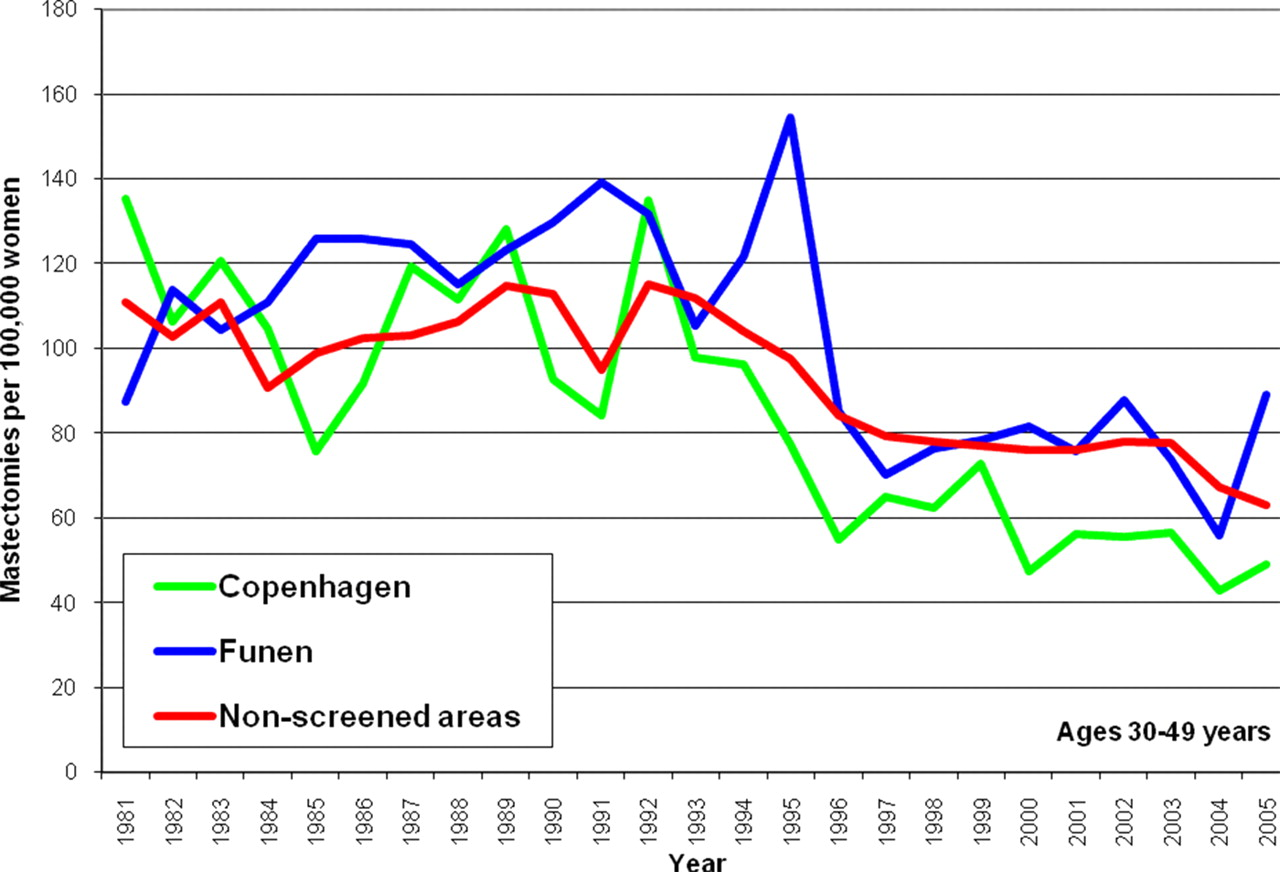

These statements are seriously misleading, as they suggest that by going to screening, the risk of losing a breast is reduced. It is not. Because of over-diagnosis, screening increases the use of mastectomies. 20,35 The many extra mastectomies when screening started in Denmark (Figure 2) have not been compensated by a later decline in screened areas compared to non-screened areas (Figure 2), or by a decline in mastectomy use in previously screened women (Figure 3). It is also clear that recent declines in mastectomies are due to a general policy change, both because they occur to a similar extent in screened and non-screened areas within the screened age range, and also because a decline has occurred in young women who were never invited to screening (Figure 4).

Figure 2. Mastectomy rates in women aged 50–69 years in Denmark. StatBank Denmark (see

Figure 3. Mastectomy rates in women aged 70–85 years in Denmark. StatBank Denmark (see

Figure 4. Mastectomy rates in women aged 30–49 years in Denmark. StatBank Denmark (see

Conclusions

Information provided to the public by the NHS Breast Screening Programme is seriously misleading, downplays the most important harm, and has remained largely unaffected by repeated criticism and pivotal research questioning the benefits of screening and documenting substantial over-diagnosis. This is unacceptable, and it was recently underlined in the Salzburg Statement that involvement in decision-making and high quality information are requirements in modern healthcare. 39 Spokespeople for the Programme have stuck to the beliefs about benefit that prevailed 25 years ago and continue to question the issue of over-diagnosis. Women therefore cannot make an informed choice whether to participate in screening based on the information the Programme provides. This must be changed.

DECLARATIONS

Competing interests

None declared

Funding

None

Ethical approval

Not applicable

Guarantor

PCG

Contributorship

PCG wrote the first draft of the sections regarding the 2010 NHS mammography screening invitation; KJJ drafted the sections regarding the NHS Breast Screening Programme Annual Review 2010, revised the sections on the invitation, and produced the graphs; PCG conducted the student survey and revised the manuscript

Acknowledgements

None