Abstract

Employing classical and behavioral decision theories, this article explores, based on decision cases, how decisions by AI and humans might differ in the context of top management decision-making in projects. Based on a phenomenological analysis of 37 top managers’ responses and corresponding answers provided by artificial intelligence (AI), findings indicate that, as project decision-making becomes hybrid, systematic differences in important dimensions have the potential to steer projects into different directions. Aimed at provoking thought, this pioneering article proposes a conceptual framework for project decision-making in the age of generative AI (GenAI) and highlights theoretical and practical implications of carelessly delegating big decisions to AI.

Keywords

Introduction

Since 1956 man has been working on something called artificial intelligence (AI) (Xu et al., 2021); whether it really qualifies as intelligence is a matter for philosophical discussion (Bringsjord & Govindarajulu, 2022). Due to stellar advances in technology, AI has now become extremely powerful. Most recently, according to an article published in Neuroscience News, AI outperformed humans in the Torrance Creativity Test (Shimek, 2023). This finding was interpreted as indicating that AI may be developing creative ability on par with, or even surpassing, that of humans. Intelligent or not, AI is considered by many to be more than just another generation of IT tools (Benbya et al., 2021). From a sociological or even philosophical perspective, it challenges traditional notions of human exceptionalism (Spivey et al., 2022; Golinowski, 2024; Eagleman & Brandt, 2017) in that, with creativity and decision-making, activities thought of as uniquely human are being taken over by AI (Bridewell et al., 2024). And now, with the breakthrough of ChatGPT, AI has become ubiquitous (Euchner, 2023).

AI has also found its way into project management. As AI is becoming a transformative force (Müller et al., 2024), particularly in the context of Industry 5.0, here, as in other areas, traditional notions are being challenged (Holzmann et al., 2022). And here, equally, AI is being (increasingly) used for more creative or strategic tasks, which, apart from project planning (Barcaui & Monat, 2023), includes decision-making (Ahmed et al., 2024; Zheng et al., 2023; Jha & Iyer, 2021). As AI enters both project management and decision-making, and as project scholars increasingly engage with the topic of AI (Geraldi et al., 2024; Wolf & Stock-Homburg, 2023), two trends are emerging: (1) AI, which has previously been used predominantly for simple, repetitive tasks (Edwards et al., 2000), is entering strategic decision-making (Kiron & Schrage, 2019); and (2) as discussed in Mariani (2024) and Barcaui and Monat (2023), it is becoming increasingly clear that AI will not replace the project manager anytime soon. Rather, there seems to be a tendency toward hybrid use (Zhang et al., 2022), meaning that the contributions of humans and AI will merge into each other. This hybrid use is not least a topic in Industry 5.0, where the vision is for AI and humans to jointly act as decision agents, and where the impacts of making decisions based on AI and ways of ensuring a balance on human aspects are still far from clear (Chandel & Sharma, 2021; Abdel-Basset et al., 2025).

As AI is now finding its way into all walks of life, this raises important questions: How does AI actually make decisions, and where? Is it possible that AI is applied without those affected by a decision, or even the decision-maker, knowing? Does man control the machine, or does the machine control man? Does the use of AI influence the results in certain directions? And does that even matter? And if, over time, human aspects of decision-making become so intertwined with those of machines (AI) that they become inseparable, we might ask: Who’s steering whom, and in which way? A further question appears even more important: Do we know enough about how human and AI decisions may actually differ to responsibly give away such an important chunk of responsibility, knowing that decisions have consequences?

This article takes a first explorative step toward addressing this issue. We do this by analyzing the similarities and differences among human, hybrid, and AI decisions in the context of top management decisions on projects. We use experiments to uncover in which dimensions decisions made by AI may differ from those made by humans (in this case, human top managers in the context of projects), and whether hybrid decisions may tilt in directions not previously exhibited by human behavior. The AI answers were generated with ChatGPT3.5.

For our theoretical framework, we build on the classical theory of rational decision-making (notably in its operationalization in the form of decision quality), as well as Stingl and Geraldi's work on behavioral decision-making in projects (Stingl & Geraldi, 2017), Zwikael’s theory of areas of top management support (Zwikael, 2008; Zwikael & Meredith, 2019), and the project decision chain (Rolstadås et al., 2015).

This article addresses the following questions:

In what dimensions might, based on decision theory, top managers’ decisions in projects differ from decisions made by AI? What could be the likely effect on projects? In our experiments, do we find any indications of that? And do we find any indication that hybrid decisions (i.e., decisions made both by humans and AI together or humans with the help of AI) would deviate from those made exclusively by humans and tilt toward the characteristics of AI decisions, meaning that human top managers allow themselves to be steered by AI?

This article contributes to the research in (1) decision-making in projects, (2) top management and projects, and (3) the use of AI or digital transformation in projects and Industry 5.0. We also provide a conceptual framework by which decisions in projects may be analyzed.

Literature Review

Decision-Making

Decision-making is crucial for projects (Stingl & Geraldi, 2017; Shi et al., 2020; Romero-Torres & Morin, 2023). This is due to the nature of projects, which includes uniqueness (Miterev et al., 2017) and temporary organization (Lundin & Söderholm, 1995). Both imply several possibilities of what to do, how to do it, and for whose benefit, which inevitably leads to the need to decide. Decision-making has been identified as a key activity in projects (Pinto et al., 2015) and as being crucial for project success (Pinto et al., 2015; Turner, 2014; Shi et al., 2020).

Classical Decision Theory

Classical decision theory is a theory of rational decision-making grounded in economics and mathematics. It is a normative, prescriptive model for decision-making, based on Bayesian theory, subjective expected utility maximization, and the axioms of rational decision-making (von Neumann & Morgenstern, 1947; Raiffa, 1968). A decision is then rational when (1) the problem and (2) the objectives to be reached have been clearly defined; (3) following an information search, a number of alternative options are created; (4) each option is evaluated, and additional money and effort are spent on gathering more information and creating additional options where this is reasonable, based on the expected value of information (Ponssard, 1976); and (5) the option is chosen that best achieves the defined objective(s), based on (marginal, subjective; Menger, 1871; Savage, 1954) expected utility, while accepting the economic principle (which includes making the decision on the basis of cost-benefit and opportunity cost) and adhering to the axioms of rational decision-making. These axioms (von Neumann & Morgenstern, 1947; Luce & Raiffa, 1957) are the criteria for a decision so made to be rational: order, transitivity, continuity, independence, reduction, and monotonicity.
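The choice rule in step (5) and the value-of-information reasoning in step (4) can be illustrated with a minimal sketch. The options, states, probabilities, and utilities below are invented purely for illustration and are not taken from the study; the function names are likewise hypothetical.

```python
from typing import Dict

Payoffs = Dict[str, float]  # utility of one option in each state of the world


def expected_utility(payoffs: Payoffs, probs: Dict[str, float]) -> float:
    """Probability-weighted utility of a single option."""
    return sum(probs[state] * payoffs[state] for state in probs)


def best_option(options: Dict[str, Payoffs], probs: Dict[str, float]) -> str:
    """Step (5): pick the option with the highest expected utility."""
    return max(options, key=lambda name: expected_utility(options[name], probs))


def value_of_perfect_information(options: Dict[str, Payoffs],
                                 probs: Dict[str, float]) -> float:
    """Step (4): upper bound on what further information is worth.

    Compares deciding after the state is revealed with deciding now.
    """
    decide_now = max(expected_utility(p, probs) for p in options.values())
    decide_informed = sum(
        probs[state] * max(p[state] for p in options.values())
        for state in probs
    )
    return decide_informed - decide_now


# Hypothetical premature-termination case (all numbers are assumptions).
probs = {"strong_demand": 0.6, "weak_demand": 0.4}
options = {
    "continue_project": {"strong_demand": 100.0, "weak_demand": -50.0},
    "terminate_project": {"strong_demand": 0.0, "weak_demand": 0.0},
}

choice = best_option(options, probs)
evpi = value_of_perfect_information(options, probs)
print(choice, round(evpi, 2))
```

In this toy case, spending more than the computed value on further information search would not be rational under the model, which is the sense in which the search for information is bounded by its expected value.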

Contributions from the project management literature that build on normative decision theory include those from Gemünden and Hauschildt (1985), who analyze efficiency in complex strategic decisions, and the impact of alternative designing on decision quality. Bourgault et al. (2008), Marques et al. (2011), Karim (2011), and Caniels and Bakens (2012) all argue in favor of a rational decision-making process. Steffens et al. (2007) explore the use of decision criteria. Fischer and Adams (2011) cover the aspect of creative thought, which, in classical decision theory, is required for coming up with a range of creative alternatives from which to pick. Other work (e.g., Pinto et al., 2015) concentrates on decision-making tools and processes that might improve competence in decision-making and, consequently, project success. And, most recently, Romero-Torres and Morin (2023) used such a three-step decision-making process (IPO-model) for their analysis of building information modeling (BIM) in project decision-making.

Behavioral Decision-Making

Behavioral decision theory (Edwards, 1954) focuses on how decisions are actually made. Based on a classification by Powell et al. (2011), Stingl and Geraldi (2017) have sorted work in behavioral theory into three schools of thought/categories: reductionist, pluralist, and contextualist.

Following this structure, the reductionist school of behavioral decision-making (Kahneman & Tversky, 1979; Simon, 1982) sees human decisions as deviating from rationality due to a range of heuristics and cognitive biases. This includes phenomena such as bounded rationality (Simon, 1982) or different utility functions for gains and losses (prospect theory; Kahneman & Tversky, 1979). A number of topics have been identified, including the availability heuristic (Tversky & Kahneman, 1974), which lies behind hindsight bias; the anchor effect, optimism bias, and planning fallacy; overconfidence bias (Lichtenstein et al., 1982), which plays a large role in projects (e.g., Flyvbjerg, 2013); belief or confirmation bias (Nisbett & Ross, 1980; Kahneman et al., 1982); representativeness (Tversky & Kahneman, 1974); sunk cost fallacy; and illusion of control (Slovic, 1987).

The pluralist school of behavioral decision-making (Chapman et al., 2006; Kujala et al., 2007) would consider deviations from prima facie rationality as actually very rational behavior, as soon as personal interests are taken into account. Thus, decisions would be influenced by moral hazard (Akerlof, 1970) and questions of power. Examples of the pluralist school include work by Pinto (2014) and Yang et al. (2014), who study the impact of deviating interests; Chapman et al. (2006) on opportunistic behavior; and Flyvbjerg’s (2007) and Pinto’s (2014) articles on strategic misrepresentation, in other words, a conscious and purposeful underestimation of project costs or time required with the aim of getting a project proposal approved.

Finally, the contextualist school, represented by Pitsis et al. (2003), Musca et al. (2014), and Alderman and Ivory (2011), emphasizes the aspect of sensemaking. According to this line of thought, the question of how something is decided would depend heavily on how the problem is defined in the first place; in other words, how a decision problem is derived from a real-world situation and how it is framed (Minsky, 1974). This school would see decisions as the result of a sensemaking process (Weick, 1995). Decisions are judged as right or successful as the result of the convergence of sense and meaning (Alderman & Ivory, 2011; Musca et al., 2014). It focuses on narratives and might be considered constructivist. Past experience as a factor in decision-making features in the work by Beach and Lipshitz (1993).

Project Decision-Making and Top Management

Decision-making has been called “one of the core activities of a project manager” (Pinto et al., 2015, p. 6). Decision-making in project management has been analyzed by Rolstadås et al. (2014) and is considered of crucial importance for project success (Pinto et al., 2015; Turner, 2014). Good (presumably efficient) decision-making has been found to lead to increased project performance (Turner, 2014).

Decision-Making and Projects

The literature on decision-making in projects has focused on issues such as decision quality (Gemünden & Hauschildt, 1985), the decision-making process (Bourgault et al., 2008; Pinto et al., 2015; Rolstadås et al., 2014), multicriteria performance analysis and decision-making (Marques et al., 2011), risk and information (Gemünden & Hauschildt, 1985; Rolstadås et al., 2014), complexity (van Marrewijk et al., 2018; Shi et al., 2020), the role of biases (Meyer, 2014), decision-making in megaprojects (Flyvbjerg et al., 2007), and many others. Arroyo’s study (2014) focused on the practice of decision-making in projects. Pinto et al. (2015) introduced the concept of a project decision chain, linking the key decisions in projects.

Top Management and Decision-Making

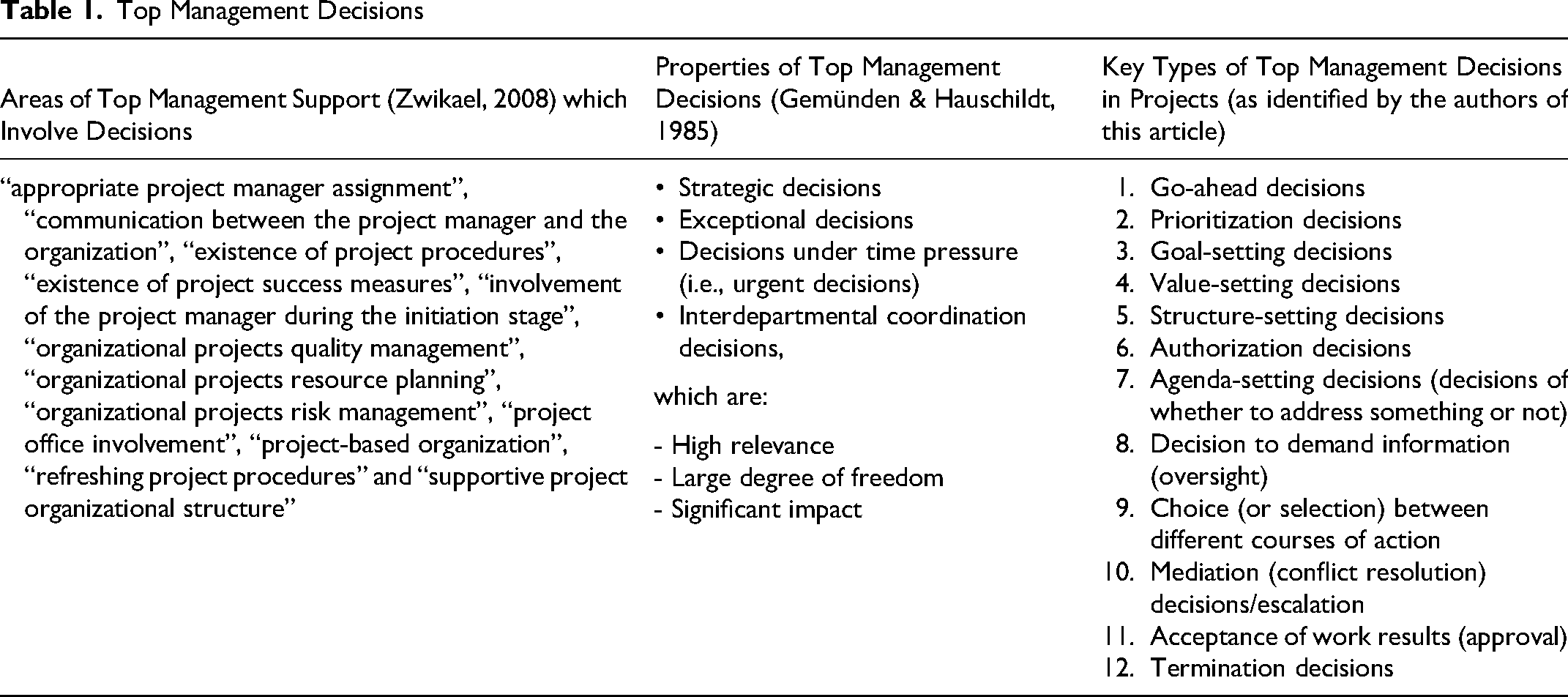

The role of top management has been discussed extensively in the project organizing literature, in part directly (Zwikael, 2008; Zwikael & Meredith, 2019) and as part of the literature on project governance (Müller et al., 2017; Turner, 2020). Top management has been studied by Zwikael (2008), who has empirically identified “areas of top management support,” which is, according to Fortune and White (2006), the most cited critical success factor. Senior executives support projects and their managers by providing a facilitative and supportive working environment, the provision of resources and feedback, and leadership (Berssaneti & Carvalho, 2015; Amoako-Gyampah et al., 2018). Zwikael (2008) counts 17 areas of top management support in projects (Table 1), which summarize the activities of top management. Top management has been found to play a major role in the early phases of projects (Zwikael & Meredith, 2019; Martinsuo & Lehtonen, 2007; Flyvbjerg, 2013), the setting of governance structures (Joslin & Müller, 2015), critical stages, and in premature termination (Meyer, 2014). One of the key roles of top management is, of course, to decide (Turner, 2020). Based on the work of Toivonen and Toivonen (2014), senior management makes the big decisions in projects.

Top Management Decisions

There is general literature on top management decisions, which dates all the way back to the work of Mintzberg et al. (1976). Gemünden and Hauschildt (1985) have defined top management decisions as strategic decisions, exceptional decisions, decisions under time pressure (i.e., urgent decisions), and interdepartmental coordination decisions.

We hypothesize that the main types of top management decisions in projects are (1) go-ahead decisions, (2) prioritization decisions, (3) goal-setting decisions, (4) value-setting decisions, (5) structure-setting decisions, (6) authorization decisions, (7) agenda-setting decisions (decisions on whether to address something or not), (8) decisions to demand information (oversight), (9) choice (or selection) among different courses of action, (10) mediation (conflict resolution) decisions/escalation, (11) approval/acceptance of work results, and (12) termination decisions.

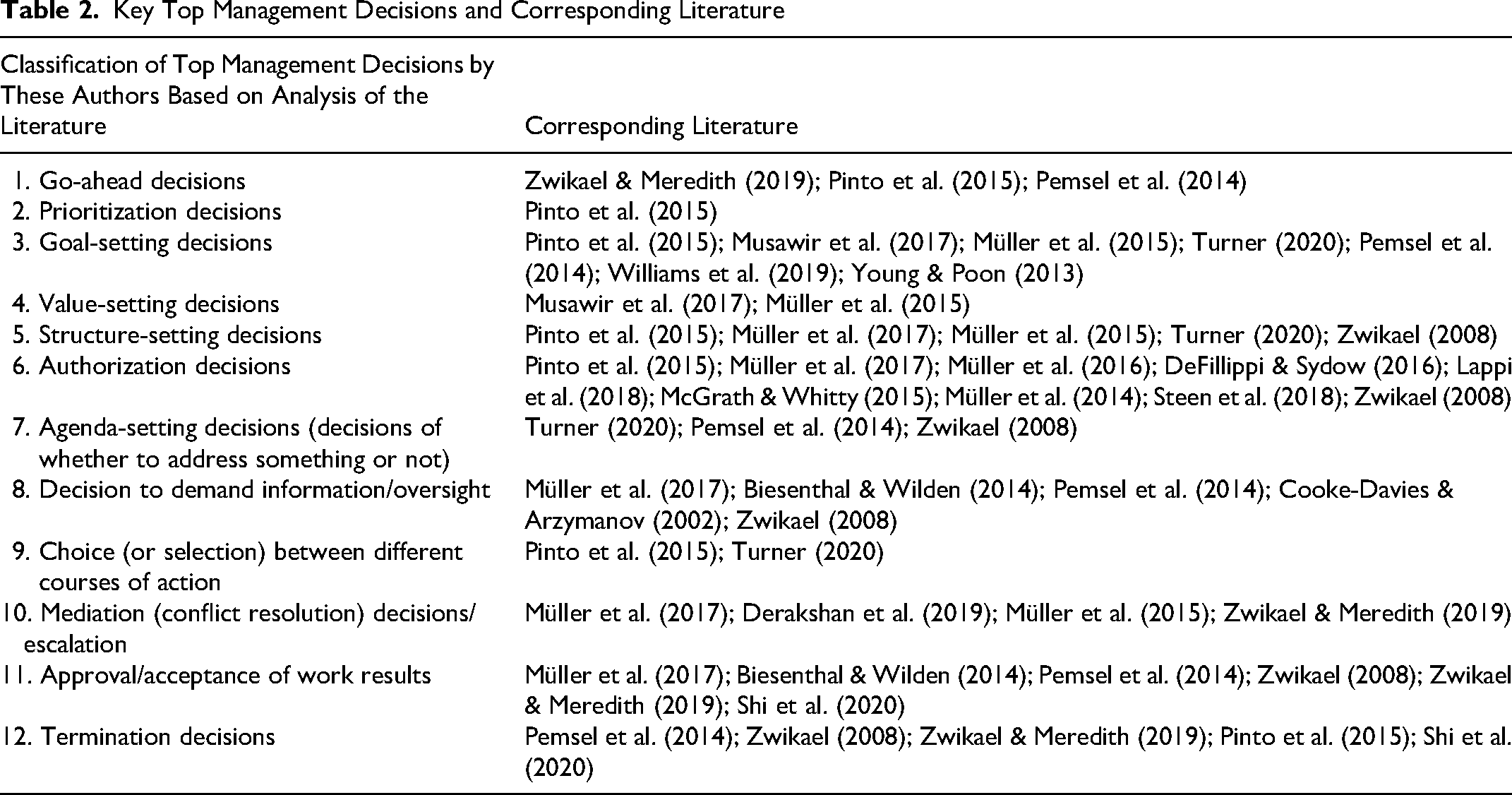

We base this on a general reading of the project management literature where, for example, Pinto et al. (2015), in their introduction of the concept of the project decision chain, differentiate among three types of decisions: authorization, selection, and plan decisions. When Müller et al. (2017) identify three streams of governance, they talk about controls, defining responsibilities, defining how people should work, how stakeholders should work together, and so forth. Tables 1 and 2 list these types of top management decisions in projects.

Table 2. Key Top Management Decisions and Corresponding Literature

AI in Decisions and Redefining (Project) Management

According to Müller et al. (2024), AI has already become a transformative force in project management. Research shows that AI is gaining prominence at a rapid pace (Ahmed et al., 2024), and there is currently a heightened academic interest among project scholars in the subject (Holzmann et al., 2022; Mariani et al., 2024; Yang et al., 2025). There are a range of applications for AI in project management, namely the automation of repetitive tasks, optimization of resource allocation, better risk management (Taboada et al., 2023; Prifti, 2022; Auth et al., 2021; Polonevych et al., 2020; Gil et al., 2020), and many uses have recently been investigated, including project planning, execution and monitoring, risk management, and mitigation (Love, 2021). Gyory et al. (2021) and Ahmed et al. (2024) show that decision-making is one of those uses, as do Zheng et al. (2023) and Jha and Iyer (2021). However, thus far, research has not shown in which way exactly project decision outcomes may differ between AI and project managers—and certainly not top managers in project situations. This research addresses this gap. In a similar setting to ours, Barcaui and Monat (2023) directly compared ChatGPT and the human approach to project planning, arriving at similar conclusions. Overall, research indicates that, rather than replacing the project manager (Mariani, 2024), AI is likely to develop into something that is used in a hybrid way (Mariani, 2024; Barcaui & Monat, 2023; Zhang et al., 2022). Thus, research essentially confirms that (1) the use of AI is going hybrid and (2) AI is increasingly being used in project decision-making.

As it is entering decision-making (Jarrahi, 2018; Duan et al., 2019; Raisch & Krakowski, 2021), AI, traditionally used to replace human decision-makers for structured or semistructured decisions (Edwards et al., 2000) and decisions in stable and familiar conditions (Duan et al., 2019), is gradually making its move from operational applications toward complex areas, such as entrepreneurial decision-making (Lee et al., 2019; Valter et al., 2018), all the way up to strategic decisions (Kiron & Schrage, 2019; Stone et al., 2020) and the board level (Merendino et al., 2018). Those who think that top managers, who define themselves as deciders, would never outsource relevant strategic decisions to AI may want to think again! Shresta et al. (2019) report one example, in which a leading firm in Hong Kong formally appointed an algorithm to its board, giving it a vote as sixth board member on investment decisions. Our own research reveals that 68% of top managers surveyed in this study admit to having used ChatGPT in decision-making before.

AI Decision-Making is Hybrid

As AI finds its way into management—at a rapid pace—it has become more and more clear that AI will neither replace human managers nor fully replace management decisions (Raisch & Krakowski, 2020). The role that materializes rather is that AI will be augmenting human capabilities, and that decisions become more and more hybrid (Raisch & Krakowski, 2020; Stone et al., 2020; Joy, 2000). If this is where things go, then what will happen is that we will soon enter an era where human and AI decisions will become so intertwined (hybrid decisions) that they will become inseparable. And as that happens (Joy, 2000) we must be more and more inclined to accept what AI decides for us—at least partially. This, inevitably, leads to the question: Who’s steering whom?

Research Configuration

Method and Hypotheses

Asking ChatGPT, as we did as a starting point to this research, yields the following answer: “This question raises interesting philosophical and ethical questions, and there is no clear answer, as it depends strongly on the application and use of the Artificial Intelligence (AI). Let's go through some of these considerations: 1) **control by humans**: AI systems are programmed and constructed by humans to perform certain tasks. In this sense, man controls the machine, as he defines her goals, limitations, and functions. Even generative AI systems, which can create their own contents, do this within parameters defined by humans. 2) **automation and autonomy**: On the other side, advances in AI technology allow systems to learn and to adapt to new situations. These systems can take decisions, which have not been exactly foreseen by their original developers. In this sense, machines can act independently, which can lead to a kind of control, in particular, when these systems are employed in critical areas such as autonomous driving and financial systems. 3) **mutual influence**: In reality, the relationship between humans and AI is probably best described as reciprocal influence. Humans control and shape the AI, and the AI can, in turn, influence human behavior. For example, the algorithms of social media, which are based on AI, can influence which information users can see and how they see the world. In relation to ethics and responsibility, it is important that we stick to the idea that humans are ultimately responsible for AI systems. Even when an AI acts “independently”, these decisions are based on human programming and on humanly determined goals. Therefore, it is up to us to ensure that these systems are employed responsibly and for the benefit of all. ” (16/07/2023, see acknowledgements)

The consequences of this seem rather remarkable: When and as AI influences human decision-making (which it inevitably will as human and AI actions become more and more blurred), will AI influence human decisions into going in certain directions? Which directions could and would those be?

We aim to obtain insights into whether decisions made by AI would tend to differ from those made by human top management decision-makers in certain relevant dimensions. If they do, then this would indicate that humans would be steered into directions that would not previously have been the result of human-only decision-making. Based on the dictum of Söderlund and Geraldi (2012) that decision-making in projects is complex, and so research on decision-making must be multifaceted, we would thus need a model that incorporates all the most important aspects of the different theories of decision-making.

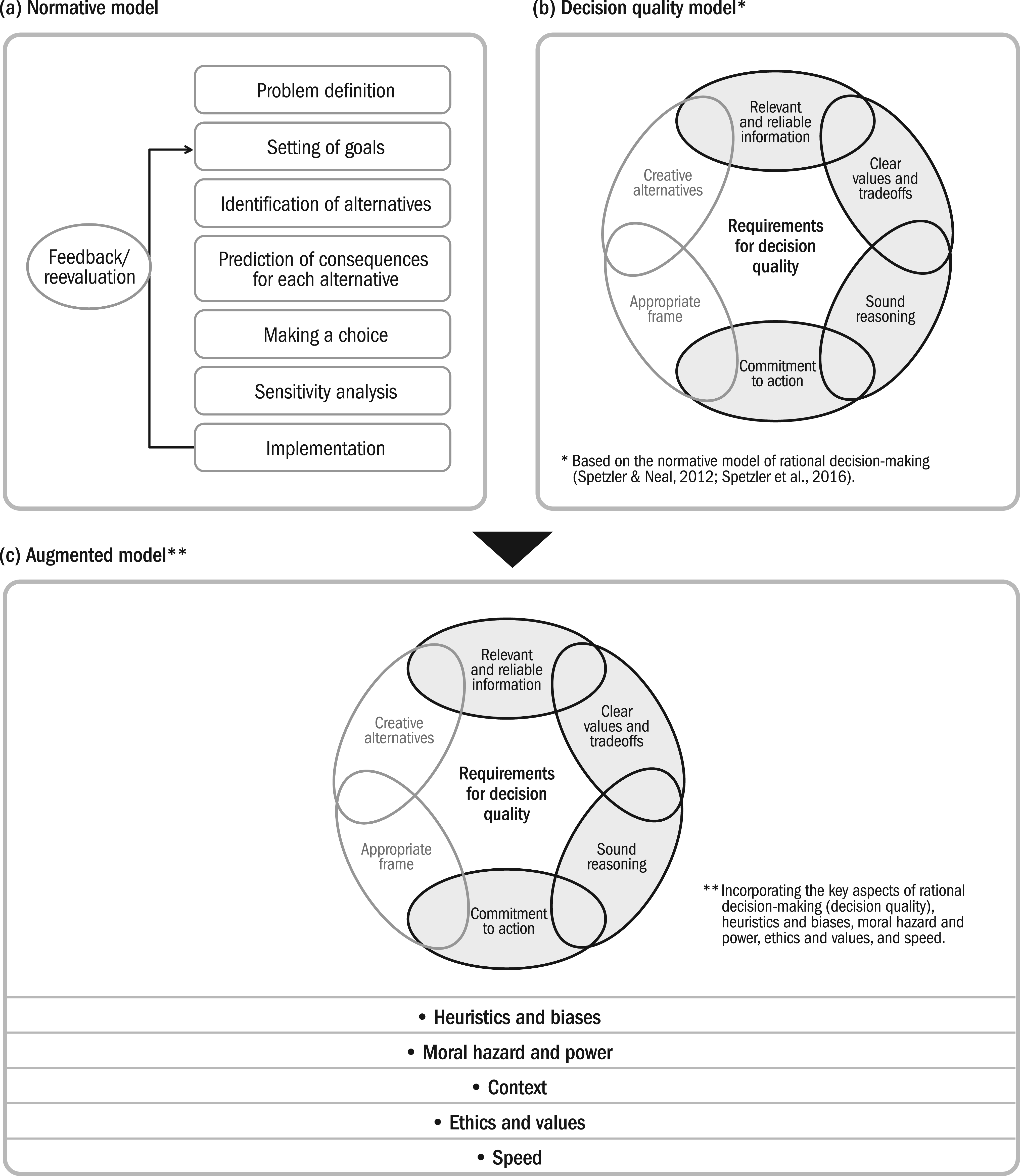

First, we need to acknowledge that there is a purely rational, objective way of decision-making, which has been put forward by classical decision theory. Keys to making a rational decision are a logical decision process, the creation of alternatives to choose from, expanding on alternatives based on information, and making a rational choice among them.

A decision model based on classical decision theory is the decision quality model from Spetzler (Spetzler & Neal, 2015; Spetzler et al., 2016), which incorporates and operationalizes the key aspects of rational decision-making. According to this model, the requirements of decision quality are an appropriate frame (which includes a clear understanding of the problem and what needs to be achieved), creative alternatives, meaningful information, clarity of desired outcomes, solid reasoning and sound logic, and a commitment to action.

We need to expand on this model, taking into account that human decisions only rarely fully conform to the rules of rational decision-making. As has been demonstrated by behavioral decision theory (Edwards, 1954; Tversky & Kahneman, 1974), humans tend to deviate from prescriptive theory. According to Stingl and Geraldi (2017), humans deviate from rational decisions in three dimensions, as described by the reductionist, pluralist, and contextualist schools. We thus add heuristics and biases, moral hazard and power, and context to our model to more fully cover all relevant aspects of decision-making. Finally, we take the questions of value and ethics as well as speed from the discussion about AI, as the two main factors where AI decisions, for better or worse, are expected to differ from current decision-making by humans. Taken together, we arrive at a model that should include the key factors that characterize decision-making. Therefore, this study proposes the model shown in Figure 1.

Figure 1. Normative model, decision quality model, and extended model.

Based on this model, we can now test the following hypothesis:

Decisions made by AI deviate, at least in some relevant dimensions, from decisions made by human top managers.

Experiments and Data Analysis

Based on a range of experiments, we compare the decision-making of AI and top managers on projects. We created a number of decision cases, which were presented to top managers and AI. We used phenomenological research (Creswell, 2013; Moustakas, 1994; Gioia et al., 2013) to evaluate the answers. Transcripts of the answers were used to count markers such as quotes, which, via second-order themes, could be attributed to the various aspects of decision-making identified in the model (as shown in Figure 1), thereby creating a score for each dimension. We conducted t-tests for equality of means to establish whether the groups of human and AI decisions differed significantly in any of these scores. In a parallel mode of analysis, two experts, independently of each other and not knowing whether the answers came from humans or AI, assessed all answers in every dimension using a five-point Likert scale.

Conduct of the Experiments

Experiments were conducted between mid-November 2023 and mid-December 2023. Our decision cases were presented to participants in the form of online interviews, conducted via videoconferencing. Interviews were recorded using the recording function of our videoconferencing tool, and transcripts were created directly thereafter, which were then used for evaluation. The interviews followed an interview protocol, and the questions were open ended to allow the interviewees to elaborate on important aspects and choose their own focus of emphasis. The exact purpose of our interviews (comparison of human versus AI answers) was not disclosed to our interviewees until after our experiment to avoid any biased answers. Before the experiment, several tests were conducted with test persons both familiar and unfamiliar with project management, as well as with AI, to streamline our questions. Care was taken to ensure AI-generated and human answers would be of approximately the same length. In keeping with the nationality of our sample, interviews were conducted in the local language and relevant parts translated ex post into English.

Selection of Groups

We sampled our human top managers via the GPM Deutsche Gesellschaft für Projektmanagement e. V. (German Association for Project Management [GPM]) automotive group. The auto industry is one where we can expect a high degree of project experience (Wagner & Hab, 2017; Wagner & Erasmus, 2023). As the country's lead industry, it is highly attractive for management talent (Häberle, 2023). Therefore, we can expect professional managers well-versed in projects. To keep variance to a minimum, we only sampled managers from this industry.

Only one person per company was allowed to participate to ensure observations were independent. To qualify as a top manager, participants had to be either from the first two hierarchical levels; be accountable for a (near) three-digit number of employees; hold a comparably senior role (e.g., director of the R&D department); or regularly perform the role of a project owner/sponsor or member of a steering committee in projects. In the end, 37 top managers participated in our experiment.

For the group of AI-generated answers, we asked a group of trained project professionals to, independently from each other, submit our questions to AI and record the answers, thus creating a pool of comparable size (28) to the group of participating top managers. Answers were standardized according to length and were to include a decision (for each case) and a justification. We consciously gave no instructions to our volunteers on prompting, hoping instead for a natural distribution of prompting techniques as might be expected in real life.

The AI we used was ChatGPT3.5, as it is the original breakthrough technology (Euchner, 2023), in what might be considered a Schumpeterian technological revolution (Bresnahan & Trajtenberg, 1995). ChatGPT is also highly accessible and thus likely to be used, confirmed by the finding that many of our top managers had previously used it. Alternative AIaaS tools, such as Grok, Bard, PMI Infinity™ (Project Management Institute [PMI], 2023), or even company-specific AI decision tools such as Einstein (Meissner & Keding, 2021), would have been justifiable alternatives from a technological point of view.

Selection of Questions

Our questions were designed to cover all the main steps in Pinto’s (2020) project decision chain, Zwikael’s (2008) areas of top management support, and our own previously defined key decisions of top management in projects (see Tables 1 and 2). They were characterized by the properties of top management decisions as described by Gemünden and Hauschildt (1985) and covered typical decisions such as selection and prioritization of projects, common decisions that might be transferred up the hierarchy and addressed at a steering committee, and a case of premature termination. The decisions were designed to allow a logical decision process to be pursued and explained; they permitted full rationality of outcome and offered a range of creative options to include and discuss in the decision-making process (rational decision-making). They also allowed biases, in particular optimism bias, as well as decision rationales based on moral hazard and power, to come through (behavioral decision-making: reductionist, pluralist). Finally, they allowed contextualization and personal experience to play a role (behavioral decision-making: contextualist) and, in one case in particular, allowed for not-so-ethical decision-making behavior (the main fear connected with AI).

Data Analysis

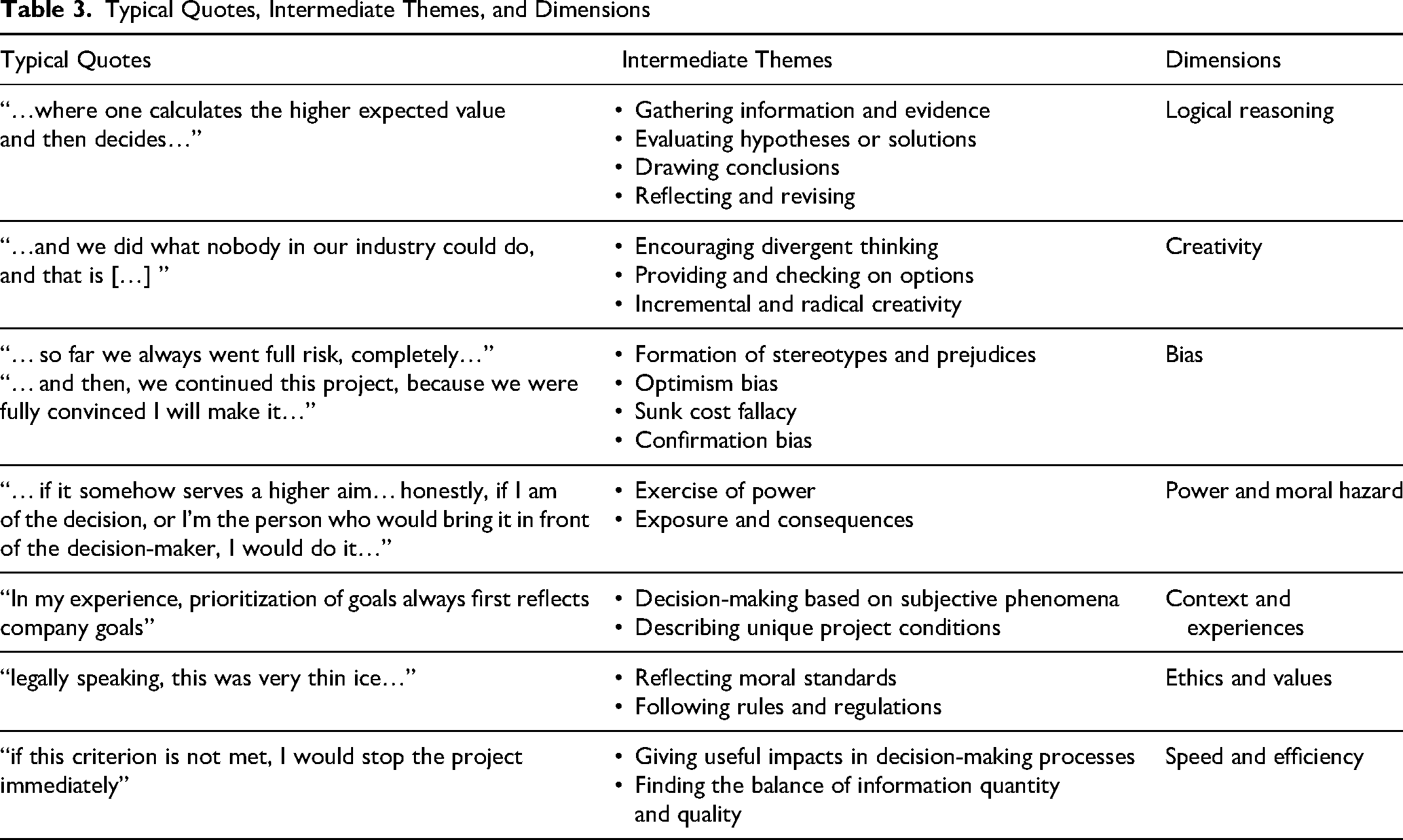

We used phenomenological research, which focuses on describing the lived experience of a phenomenon for various individuals (Creswell, 2013). Significant statements were identified and, via medium-level themes, were attributed to our previously defined dimensions (Unterhitzenberger & Moeller, 2021). Note that this is not the Gioia method in the pure sense, as the Gioia method is purely inductive. We do this via a systematic analysis of transcripts, where we use quotes to search for indicators (markers) of different categories, which we then attribute to dimensions.
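The step from coded statements to a per-dimension marker count can be sketched as follows. This is only an illustration of the counting logic: the theme labels and the theme-to-dimension mapping are hypothetical and do not reproduce the coding scheme actually used in the study.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical mapping from intermediate themes to model dimensions.
THEME_TO_DIMENSION: Dict[str, str] = {
    "expected value calculation": "logical reasoning",
    "stepwise plan": "logical reasoning",
    "unusual option": "creativity",
    "sunk cost": "bias",
    "optimism": "bias",
    "own department first": "moral hazard/power",
}


def dimension_scores(coded_themes: List[str]) -> Dict[str, int]:
    """Count the markers of one respondent per dimension.

    `coded_themes` holds one theme label per significant statement;
    labels without a mapping are ignored rather than guessed.
    """
    counts: Counter = Counter()
    for theme in coded_themes:
        dimension = THEME_TO_DIMENSION.get(theme)
        if dimension is not None:
            counts[dimension] += 1
    return dict(counts)


# One hypothetical transcript after coding.
example_themes = ["sunk cost", "optimism", "stepwise plan", "uncoded remark"]
scores = dimension_scores(example_themes)
print(scores)
```

Each respondent then contributes one score per dimension, which is the unit of observation that a group comparison of human and AI answers would work on.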

In a second mode of assessment, expert judgment was used to mark decision-makers’ answers on the different dimensions, using a five-point (1-5) Likert scale. To do so, each questionnaire was assessed independently by two experts, and the average of the two ratings was taken.
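As a minimal sketch of this second mode, the two independent ratings can be combined per dimension as shown below. The dimension names and ratings are invented for illustration, and the range check is an added assumption rather than a documented part of the procedure.

```python
from typing import Dict


def combine_expert_ratings(expert_a: Dict[str, int],
                           expert_b: Dict[str, int]) -> Dict[str, float]:
    """Average two experts' 1-5 Likert ratings for each dimension."""
    if expert_a.keys() != expert_b.keys():
        raise ValueError("both experts must rate the same dimensions")
    combined: Dict[str, float] = {}
    for dimension in expert_a:
        for rating in (expert_a[dimension], expert_b[dimension]):
            if not 1 <= rating <= 5:
                raise ValueError(f"rating {rating} is outside the 1-5 scale")
        combined[dimension] = (expert_a[dimension] + expert_b[dimension]) / 2
    return combined


# Hypothetical ratings of one answer by two blinded experts.
ratings_a = {"logical reasoning": 4, "creativity": 2, "ethics": 5}
ratings_b = {"logical reasoning": 5, "creativity": 2, "ethics": 4}
combined = combine_expert_ratings(ratings_a, ratings_b)
print(combined)
```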

t-Test

We conducted t-tests for equality of means to find out whether there were any statistically significant differences between AI and human decisions on any of these dimensions.

Results

Statements that could be interpreted as signs of a rational decision process were: “Where the highest expected value is calculated—this would be the decision at the end of the day” or “First, we would need a specification; second, a test plan; and third, intermediate goals. I would then check against the goals in the specification and, if needed, we would adjust the direction.” Creative ideas out of the ordinary and a wide option space were examples of what we interpreted as markers for creativity. We counted several biases, including confirmation bias, sunk cost fallacy, and optimism bias (“I would accept because I'm thinking positively, and I'm more looking in the direction of opportunities”; “I would continue the project, because I already invested time and money. And I think we should finish it.”). Moral hazard came through in several different manifestations, so did considerations of power and the need for negotiation, for example: “If it leads to some strategic value, and I'm the one who is the main decision-maker, I would [put my own department's project] ahead of the company project. If it is the board who decides, I would make that proposal.”

Interestingly, we found our AI, on one occasion, advocating an AI-related project despite objective criteria speaking clearly against it: “In this situation, I would probably tend to continue the current project on the development of artificial intelligence. The long-term strategic advantages and potential technological progress could lead to a sustainable competitive advantage” [obs. 41]. This led us to say that “our AI shows moral hazard when it comes to AI!” Other examples are included in Table 3.

Typical Quotes, Intermediate Themes, and Dimensions

t-Test for Equality of Means

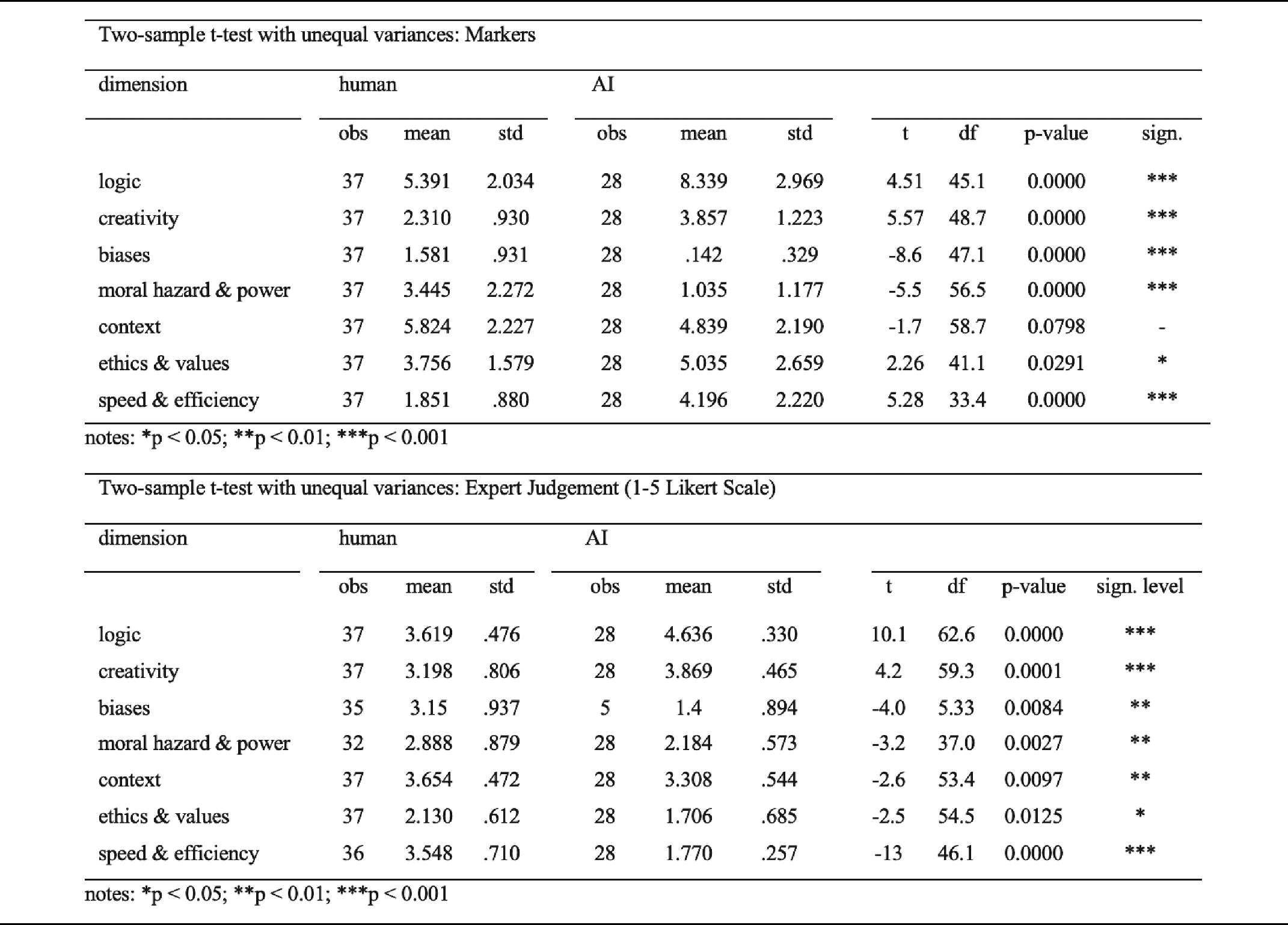

We used Welch's t-test for unequal variances instead of Student's t-test, as it has been shown to perform better under most circumstances (Delacre et al., 2017) and some of our Levene’s tests indicated unequal variances. We conducted the analysis in Stata SE. The results are shown in Table 4 for (1) counts of markers and (2) expert judgment on 1-5 Likert scales.
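For readers unfamiliar with Welch's test, the following Python sketch shows how the test statistic and the Welch-Satterthwaite degrees of freedom are computed. It is not the Stata procedure used in this study; the function name `welch_t` and the sample scores are assumptions made purely for illustration.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(x, y):
    """Return Welch's t statistic and the Welch-Satterthwaite degrees of freedom."""
    nx, ny = len(x), len(y)
    if nx < 2 or ny < 2:
        raise ValueError("each group needs at least two observations")
    vx, vy = variance(x), variance(y)  # sample variances (n - 1 denominator)
    se2 = vx / nx + vy / ny            # squared standard error of the mean difference
    if se2 == 0:
        raise ValueError("both groups have zero variance")
    t = (mean(x) - mean(y)) / sqrt(se2)
    df = se2 ** 2 / ((vx / nx) ** 2 / (nx - 1) + (vy / ny) ** 2 / (ny - 1))
    return t, df


# Illustrative Likert scores only; these are NOT the study's data.
ai_scores = [4, 5, 4, 5, 4]
human_scores = [3, 2, 4, 3, 3, 2]

t_stat, dof = welch_t(ai_scores, human_scores)
print(f"t = {t_stat:.3f}, df = {dof:.2f}")
```

Unlike Student's t-test, the two group variances are not pooled, so the degrees of freedom are generally non-integer and never exceed those of the pooled test. In Python, the same test, including a p-value, is available as `scipy.stats.ttest_ind(x, y, equal_var=False)`.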

Results of t-Tests, Using Markers and Expert Judgment

According to our results, AI answers differed from those of experienced, trained top managers in the automotive industry in that they scored significantly higher on logical reasoning, based on both markers and expert judgment. They also scored significantly higher on creativity and significantly lower on both bias and moral hazard/power/negotiation. Humans scored about two standard deviations higher than AI on context, but about one standard deviation lower on ethics. Finally, on the speed and efficiency of decision-making, our results proved inconclusive, given that expert judgment and the marker-based analysis came to two completely different conclusions.
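Differences expressed in standard deviations, as above, can be read as standardized mean differences. The sketch below shows one common way to compute such a figure, using the pooled sample standard deviation (Cohen's d). Which standard deviation underlies the figures reported here is not specified, so the pooled variant, the function name `standardized_difference`, and the sample scores are all assumptions for illustration.

```python
from math import sqrt
from statistics import mean, variance


def standardized_difference(x, y):
    """Mean difference (x minus y) in units of the pooled sample standard deviation."""
    nx, ny = len(x), len(y)
    if nx < 2 or ny < 2:
        raise ValueError("each group needs at least two observations")
    pooled_var = ((nx - 1) * variance(x) + (ny - 1) * variance(y)) / (nx + ny - 2)
    if pooled_var == 0:
        raise ValueError("pooled variance is zero")
    return (mean(x) - mean(y)) / sqrt(pooled_var)


# Illustrative "context" scores only; these are NOT the study's data.
human_context = [3, 4, 5, 4]
ai_context = [2, 3, 1, 2]

d = standardized_difference(human_context, ai_context)
print(f"standardized difference = {d:.2f}")
```

A positive value means the first group scores higher; swapping the arguments flips the sign but not the magnitude.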

Limitations

As one of the first inroads into the issue of AI in top management project decisions, our research naturally has limitations. Our experiments were conducted with a moderately sized sample of 37 top managers in a laboratory setting and are thus clearly exploratory in nature. They were based on a specific set of questions and the use of a specific AI. The AI used, ChatGPT, was not created explicitly for decision-making, even though it is clear that people, including the top managers we sampled, use it for that purpose. Whether our findings can be extrapolated to real-world scenarios, and whether the characteristics we found can be extrapolated to AI in general rather than just the particular AI used in our experiment, remains to be investigated in the future.

Discussion

Our results nevertheless appear plausible in light of decision theory, where rational decisions are in many ways the opposite of heuristic decisions. In light of behavioral decision theory (Edwards, 1954; Tversky & Kahneman, 1974), it appears credible that if human decisions are less rational than those of AI, they are also more prone to heuristics and more biased (Kahneman & Tversky, 1979); this may even include less ethical behavior on the side of humans (see Table 4). That AI would score higher on logic and creativity appears to make sense in light of AI's machine nature and its access to a vast base of learning data (Xu et al., 2021).

The human ability to take context into account, including social context (i.e., power structures), and to navigate personal interests has, far from having only negative implications, been found in the project management literature to play an important role when it comes to letting projects happen (Flyvbjerg, 2007; Gil, 2023), navigating stakeholder groups (Yang & Fu, 2014; Kujala, 2007; Yang et al., 2021), and rallying people behind project benefits (Keeys & Huemann, 2017). Our findings seem consistent with this. Why then is our AI not purely rational? Large language models (LLMs) have previously been found to deviate from pure rationality (Spirling, 2023) and from facts (Hoes et al., 2023) because they are based on learning data, which reflects all kinds of human behavior, and on probabilistic models. This may also explain why heuristics, biases, and moral hazard are present, though at a lower rate, in AI’s decision approaches. It may also explain, perhaps along with a dose of relevant programming, its ethics. The fact that AI, despite being programmed to mimic human behavior (Bringsjord & Govindarajulu, 2022), is at the same time not a perfect copy of it makes systematic deviations, and thereby “steering,” plausible.

Theoretical Implications and Contributions

Should our findings hold (and we are very aware that at this time we must still classify this research as exploratory), then human and AI decisions in projects differ in some important dimensions. As more decisions are made by or with the help of AI, these deviations are bound to multiply, and projects may be selected in slightly different, more rational ways (Flyvbjerg, 2007; Pinto, 2014, on strategic misrepresentation) and be run for the benefit of different people (Gil, 2023; Aubry et al., 2021; Keeys & Huemann, 2017) with other forms of benefits (Marnewick & Marnewick, 2022) in mind. Less emphasis might be put on balancing the interests of various stakeholders and accounting for individual interests and power structures (Yang & Fu, 2014; Kujala, 2007), and perhaps also on the human aspects of leadership (Naumann et al., 2023; Kristensen & Shafiee, 2024), with probably negative corresponding impacts on trust and collaboration (Kadefors, 2004; Galvin et al., 2021; Yang et al., 2014). More projects might be subject to premature termination (Meyer, 2014), and the Sydney Opera House might never have been built (Gaim et al., 2022). Besides that, our study makes the following theoretical contributions:

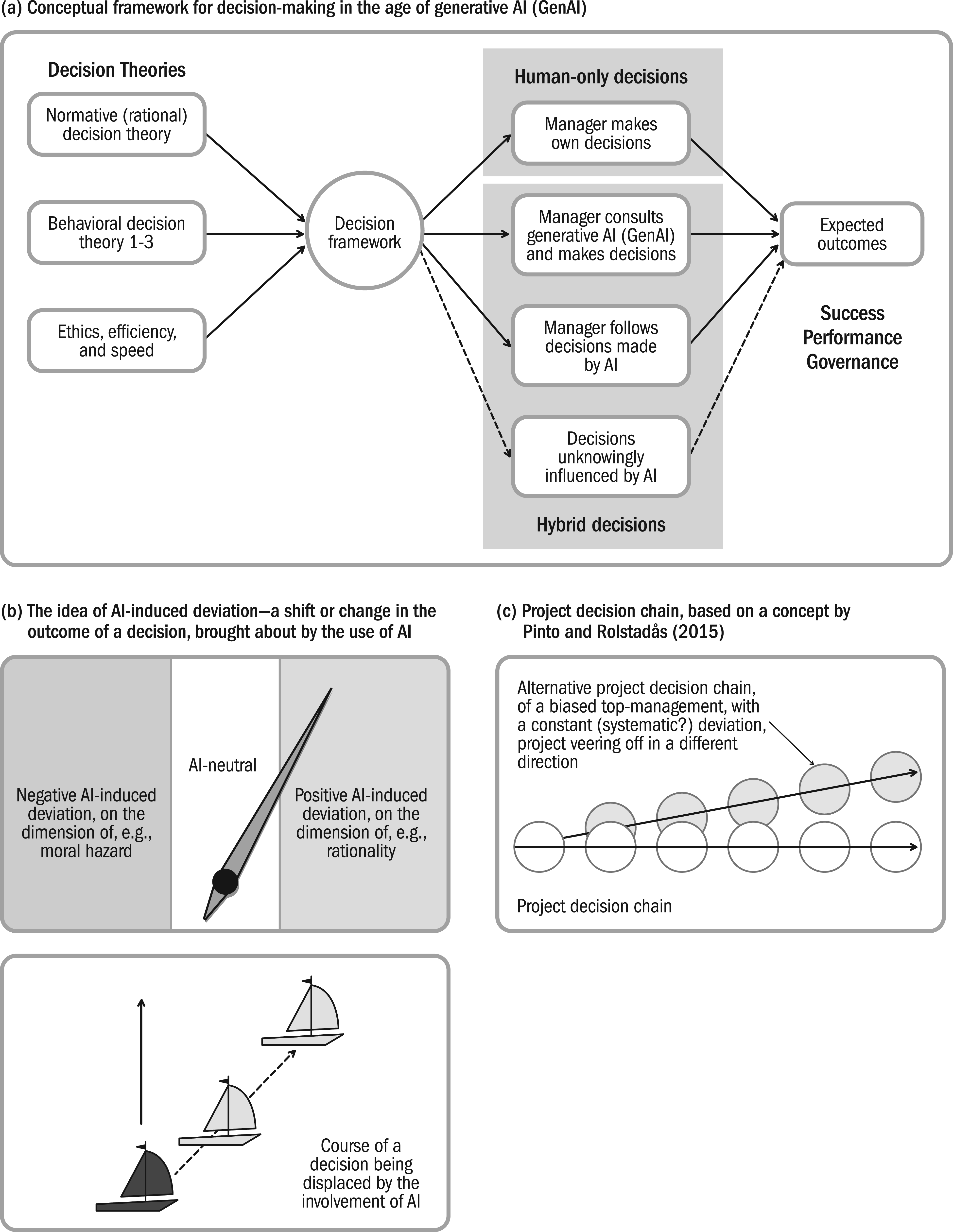

First, we propose a conceptual framework (Figure 2) for (project) decision-making in the age of AI and hybrid decision-making, which is based on our analysis. In this framework, a decision can be human-only, AI-only, or hybrid, where hybrid can mean that (1) the manager consults GenAI and then makes the decision; (2) the manager follows decisions made by AI; or (3) the manager's decisions are unknowingly influenced by AI, for example, because AI was used in the preparation of a decision or the preselection of options. The properties of these decisions, made according to the various decision theories, vary along the dimensions identified in this research (see Figure 1) and thus lead to different expected outcomes. The framework still needs to be tested in real-world scenarios.

Theoretical concepts.

Note that, for the purpose of this study, we created a model that brings together the key aspects of both rational and behavioral decision-making theories (see Figure 1). In creating this model, we built on research by Stingl and Geraldi (2017), who identified three schools of behavioral decision-making, as well as on the decision quality model by Spetzler (Spetzler & Neal, 2015; Spetzler et al., 2016). In previous studies in project management research, the focus has been predominantly either on classical (e.g., Gemünden & Hauschildt, 1985) or behavioral decision theory (e.g., Flyvbjerg, 2007, 2013; Stingl & Geraldi, 2017). It has become clear, however, that the two cannot be considered in isolation from each other.

Second, we would like to introduce the concept of AI-induced deviation in decision-making. Similar to maritime navigation, where a compass needle deviates from true north due to the magnetic field of the Earth, or to sailing, where a sailboat is displaced by wind and sea (which is why AI-induced displacement might be a synonymous or even better term), the use of AI in decision-making has the potential to set a decision on a slightly different course (“Who's Steering Whom?”). This deviation, which concerns one or all of the previously discussed dimensions (rationality, creativity, bias, and so on), can be positive or negative, strong or weak, and, most likely, any deviation has both beneficial and less beneficial impacts. It is a logical, expected consequence of any fundamental, systematic difference between an AI and a human decision. As AI decisions replace or complement human decisions, we would expect to find more and more of it. We recommend that such oversteering or deviation be addressed and adjusted for when AI is used in decision software. Alternatively, it should be made transparent, in the sense that each decision software should be required to state it in what could be, just as in sailing, some form of deviation table.

Third, we would like to build on the project decision chain (Rolstadås et al., 2015). If we take the project decision chain seriously (i.e., that each project can be viewed as a series of decisions) and we also believe that the big decisions in a project are made by top management (Toivonen & Toivonen, 2014), then (even partially) delegating such decisions to an AI whose decisions systematically differ in certain dimensions must set a project on a different course as such decisions add up. Equally, far beyond the question of whether such decisions are made by AI or by humans, this must apply to any decision-makers with systematically different characteristics. According to upper echelons theory (Cyert & March, 1963; Hambrick & Mason, 1984), a project must be a reflection of its top management team (Krause et al., 2022). Thus, it can be concluded that any systematic tendency of a project's key decision-makers on the dimensions of rationality, bias, moral hazard, context, ethics and values, and efficiency and speed (whether caused by team composition, team culture, or the characteristics of individuals) would equally affect the path a project takes and thereby its outcome.

Fourth, although our focus was on AI, we have also reached a deeper understanding of the role top managers play in projects and of top management decisions in general. Comparing the answers of human top managers to decision cases with those of AI, it quickly becomes clear that the strength of the human top manager lies in their ability to quickly make sense of a situation and bring it down to the one or two facts that really matter. This ability is perhaps best reflected in the structure of one top manager’s answer: “I will base my decision on [this criterion]. [There are many arguments,] but what it comes down to is: […] Therefore, I have to do it.” [obs. 7]. It is this heuristic decision-making, based on many years of experience and training and a high sensitivity for context and the sociopolitical environment and one's place in it (equals: moral hazard, power), rather than superior rationality or creativity, that sets the top manager apart.

Managerial Implications

Our study provides several managerial implications that managers can use to improve decision quality. Foremost, top managers should be aware that there may be significant systematic differences in outcomes between decisions made by humans, in particular human top managers, and decisions made by AI. Should the findings of our decision experiments be validated in real-world scenarios, decisions delegated to or made with AI would tend to be more rational, less biased, less prone to moral hazard, and possibly more ethical than those made by human top managers (even though that may depend on the type of software), while at the same time lacking in context and being potentially speedier though less efficient. The implications are manifold. We hypothesize that, although more rationality and less bias may lead to a more objective selection of projects (Arroyo, 2014; Flyvbjerg, 2021), and strict rationality of outcome may have a significant effect on business cases and benefits management (Zwikael & Meredith, 2019; Marnewick & Marnewick, 2022), it may also lead to projects with less quantifiable benefits being undertaken less often (Gil, 2023; van Marrewijk, 2024; Gaim et al., 2022). It may also change for whose benefit projects are run (Aubry et al., 2021) and lead to a loss of political and human sensitivity in decision-making.

Consequently, the introduction of AI in top management decision-making must be done consciously and must be scrutinized. Managers should watch for systematic deviations whenever decisions are outsourced to AI and actively decide whether such deviations are desirable.

Furthermore, managers should be aware of Joy's dictum (Joy, 2000) that, by outsourcing decisions to AI, we implicitly agree to be bound by such decisions. Thus, as a matter of accountability, whoever decides that a decision, or a particular kind of decision, should be outsourced to AI should be held accountable for any outcome. Care must be taken that this potentially far-reaching decision is made at the right management level.

Conclusions, Limitations, and Future Research Directions

Employing classical and behavioral decision theories, our study adds to the project management literature by investigating whether and how AI and humans might differ in the context of top management decisions in projects. Our findings suggest systematic differences, which, when extrapolated over a large number of decisions and projects, have the potential to “steer” projects into different directions. As one of the first studies in this field, ours has, of course, important limitations, and there is ample room for future research. One open question is whether our findings hold across a spectrum of different AIs and versions and different AI-supported decision-making software programs and are therefore generalizable. The same applies to different groups of human (project) decision-makers. Our sample was restricted to managers in the German automotive industry, potentially limiting cultural and sectoral generalizability, and experiments were conducted in a static (laboratory) situation. Hypothetical decisions may lack the depth, urgency, and complexity of real-life project environments. Digital literacy levels may vary among participants, which could have influenced the results. We were not able to fully investigate whether hybrid decisions deviate in the same directions as pure AI decisions, albeit to a lesser degree (although logically they should), because our experimental setup did not allow human top managers to genuinely take AI-generated proposals into account in their own decision-making. Finally, our proposed systematic deviations between human and AI decisions and the concept of AI-induced deviation in decision outcomes must be investigated in the real world. The concepts developed here, notably our conceptual framework for AI and hybrid decision-making and the concept of AI-induced deviation, may prove useful in Industry 5.0 contexts even beyond project management.

Acknowledgments

We thank our dear colleague, Professor Doris Weßels, who put the original question, “Who is steering whom?”, to ChatGPT, thereby inspiring this piece of research.