Abstract

In numerous real-world contexts, abnormal instances are far less prevalent than normal instances. This imbalanced data distribution increases the likelihood that the minority class is misclassified as the majority class. The publicly accessible collection of coronavirus disease-2019 (COVID-19) chest X-ray images has become significantly imbalanced as a consequence of the pandemic. To address this challenge, the proposed methodology integrates the Histogram of Oriented Gradients (HOG), the Synthetic Minority Over-sampling Technique (SMOTE), and the Kernel-based Extreme Learning Machine (KELM). The method proceeds in three phases. First, the images are preprocessed and features are extracted with the HOG algorithm. Second, these features are used to generate synthetic minority-class samples, yielding a more balanced dataset. Third, the SMOTE-augmented dataset is used to train the KELM, which demonstrates superior performance relative to existing state-of-the-art models. A comprehensive experimental analysis was conducted on four datasets comprising chest X-ray images of COVID-19, pneumonia, tuberculosis, and healthy lungs. The classification accuracies obtained are 96.72%, 96.38%, 97.15%, and 98.66% on Dataset-1, Dataset-2, Dataset-3, and Dataset-4, respectively.


Introduction

The World Health Organization declared coronavirus disease-2019 (COVID-19) a pandemic due to its rapid spread and severe symptoms, significantly impacting human life and causing major economic challenges globally (Carter et al., 2020). The diagnostic methods utilized for the identification of COVID-19 can be classified into two primary categories: diagnostic tests and antibody tests. Polymerase chain reaction (PCR) tests and nucleic acid amplification tests (NAATs) are both advanced diagnostic techniques employed for the early detection of COVID-19 infection. In contrast, antibody tests are designed to detect antibodies in the bloodstream that the immune system produces in response to the SARS-CoV-2 virus, yet these tests are not recommended as diagnostic tools for COVID-19. Throughout the pandemic, the demand for COVID-19 testing kits surged; however, supply shortages were prevalent. Both reverse transcription-PCR and NAAT processes are intricate and time-intensive, necessitating specialized equipment and trained personnel. Furthermore, there exists a risk of false-positive results arising from potential contamination during these testing procedures (Maleki & Hojati, 2022). Moreover, COVID-19 and other forms of pneumonia cannot be distinguished by these tests. To diagnose COVID-19, imaging methods such as computed tomography (CT) scans, X-rays, and ultrasound have been employed (Chung et al., 2020).

Recent developments in computer vision and machine learning have significantly advanced automated computer-aided detection and diagnosis systems for various medical conditions (Ali & Yadav, 2021). Such systems, designed to be efficient and less error-prone, can be built from datasets containing chest scans of COVID-19 patients together with data on other pulmonary disorders, such as tuberculosis (TB) and pneumonia. Given today’s global healthcare challenges, X-ray images can offer a viable basis for rapid, straightforward, and scalable diagnosis during epidemics such as COVID-19 (Amin et al., 2024). Manual analysis and interpretation of radiological images are time-consuming and carry an increased risk of misdiagnosis. To reduce misclassification, deep learning (DL) models are developed to identify and extract relevant features from data automatically. This capability reduces dependence on human judgment and helps mitigate the negative influence that human factors can have on the accuracy and reliability of diagnostic results. Using these models, healthcare organizations can improve the precision of their assessments and, in turn, overall diagnostic outcomes (Ali & Yadav, 2023). Consequently, DL models combined with image processing methods have been extensively explored in recent years to automate disease detection from radiological images.

The quality and reliability of machine learning techniques are substantially influenced by class imbalance, a phenomenon that is prevalent in various real-world applications. A salient example of class-imbalanced datasets is found within the biomedical domain, where it poses significant challenges in disease identification. The primary contributor to the imbalance in medical datasets is the disparity between data originating from patients with medical disorders and those without such disorders. Typically, the majority class comprises data from healthy individuals, while data pertaining to rare disorders constitutes the minority class. For example, the COVID-19 dataset (Chowdhury et al., 2020; Rahman et al., 2021) of chest radiographs consists of 3,616 images of COVID-19-infected lungs, whereas 10,192 images are of healthy lungs. Learning from these unbalanced datasets can be challenging, and the application of nontraditional machine learning techniques may sometimes be necessary to achieve acceptable results, especially when addressing clinical illnesses or diseases with low prevalence.
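The risk described here can be made concrete with the two class counts just quoted. Considering only these two classes (a simplification made for this illustration), a trivial classifier that labels every image as healthy already reaches about 74% accuracy while detecting no COVID-19 case at all:

```python
# Class counts quoted above for the COVID-19 Radiography Database
n_covid, n_normal = 3_616, 10_192
n_total = n_covid + n_normal

# Degenerate classifier: always predict the majority ("normal") class
accuracy = n_normal / n_total      # every normal image counts as correct
covid_recall = 0 / n_covid         # no COVID-19 image is ever detected

print(f"accuracy = {accuracy:.3f}, COVID-19 recall = {covid_recall:.3f}")
# accuracy = 0.738, COVID-19 recall = 0.000
```

This is why minority-class recall (sensitivity), rather than accuracy alone, is the more informative measure on imbalanced data.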

Motivation: The precise diagnosis of thoracic diseases using chest X-ray (CXR) images remains a vital challenge in the realm of medical image analysis. A principal obstacle to the development of dependable machine learning models for this purpose is the disproportionate class distribution—specific conditions, such as pneumonia, TB, or COVID-19, are underrepresented relative to normal cases in publicly accessible datasets. This disparity predisposes classifiers to favor the majority class, resulting in diminished sensitivity and low recall for rare yet clinically critical conditions. Furthermore, medical images frequently display intricate structures, noisy artifacts, and variations in intensity arising from differences in acquisition parameters, patient anatomy, and pathological conditions. Conventional pixel-level features are susceptible to such variations and often lack the robustness required to capture invariant structural attributes essential for reliable classification.

To address these challenges, this work proposes a hybrid approach that combines Histogram of Oriented Gradients (HOG) for extracting structural features with the Synthetic Minority Over-sampling Technique (SMOTE) to balance class distributions within the feature space. HOG provides a concise and noise-resistant representation of anatomical and pathological patterns by encoding local edge orientations, making it effective for capturing abnormalities such as lung infiltrates, nodules, or lesions. SMOTE enhances the model’s ability to generalize across all classes by generating synthetic samples for minority classes based on existing feature vectors, thereby mitigating bias caused by class imbalance. By combining these two techniques, the proposed method aims to enhance the discriminative power and generalization ability of machine learning models for CXR classification, especially in environments with limited or unevenly distributed data.

This article introduces a cost-efficient methodology that integrates statistical feature extraction techniques with advanced computational modeling approaches. Its main contribution can be summarized as follows. To address the dual challenge of feature noise and class imbalance in CXR datasets, a novel hybrid classification framework is proposed that integrates structural feature extraction, synthetic data augmentation, and kernel-based nonlinear learning. The key contribution lies in the synergistic use of HOG, SMOTE-based oversampling, and the Kernel-based Extreme Learning Machine (KELM) to build a robust, balanced, and efficient classifier for thoracic disease detection. First, HOG descriptors are employed to extract local structural features from CXR images. These descriptors effectively capture edge patterns corresponding to anatomical boundaries and pathological regions such as nodules, infiltrates, or lesions, while being relatively invariant to illumination changes and noise. Compared with raw pixel-based features, HOG provides a more compact and noise-resilient representation that is particularly suited to the heterogeneous appearance of medical images. Next, to mitigate the adverse effects of class imbalance, which is prevalent in real-world medical datasets, SMOTE is applied to the HOG feature vectors. This technique generates new synthetic samples for minority classes by interpolating between existing samples and their nearest neighbors, effectively balancing the class distribution. Applying SMOTE in the feature domain rather than the image domain helps ensure that the generated samples preserve essential geometric properties, while also reducing computational cost. Finally, the balanced HOG feature set is fed into a KELM classifier.
An extreme learning machine (ELM) is known for its high-speed learning and good generalization, but conventional ELMs often fail to handle nonlinear decision boundaries adequately. The incorporation of a kernel function enables the model to project the features into a higher-dimensional space, allowing for effective separation of classes with overlapping distributions. The proposed KELM model combines the speed advantage of ELMs with the representational power of kernel methods, making it particularly effective in handling the complexity and diversity inherent in CXR images. A comparative analysis has been performed between the proposed system and some of the latest advanced DL models available. The experimental results show that the proposed model is more accurate and efficient than most recent advanced DL models in terms of processing time, precision, recall,

Related Work

Researchers have made significant strides in developing diagnostic systems that analyze and categorize COVID-19 alongside various other lung conditions. These systems leverage Convolutional Neural Networks (CNNs) and other DL methodologies, which represent the state of the art in artificial intelligence (AI). Some researchers have also used transfer learning to fine-tune DL models for more accurate classification. Despite these advances, efforts continue to improve the efficiency and reliability of such models, including tackling challenges such as imbalanced data distributions, which can bias results, and preparing the models for future diagnostic needs.

Ozturk et al. (2020) employed DarkNet, the classifier underlying the You Only Look Once (YOLO) real-time object detection system, as a classifier that does not require any handcrafted feature extraction. Minaee et al. (2020) compared four pretrained DL networks trained on CXR images (ResNet18, ResNet50, SqueezeNet, and DenseNet-121); among them, SqueezeNet performed comparatively better. Lakshmi et al. (2024) proposed a Bayesian-optimized support vector machine combined with CNNs for distinguishing COVID-19 from other disorders using CXR images. The performance of machine learning models in this context is often hindered by the limited availability of annotated CXR images, owing to privacy concerns and the complex phenotypes associated with COVID-19. Models of this type also face challenges such as computational overhead, the lack of guarantees of reaching a global optimum, parameter sensitivity, and limited scalability. Kernel-based support vector machines, in particular, may struggle in very high-dimensional feature spaces without proper dimensionality reduction or feature engineering. Khalif et al. (2024) proposed a novel approach that combines Generative Adversarial Networks (GANs) with Deep Convolutional Neural Networks (DCNNs). This method improves the model’s ability to differentiate between COVID-19 patterns and healthy lung images and addresses the shortage of labeled COVID-19 data: by generating artificial images, GANs help alleviate the issues caused by imbalanced datasets. Loey et al. (2025) proposed a data augmentation technique that uses a Conditional GAN (CGAN) with DL models for COVID-19 detection in chest CT scan images. They experimented with five DL networks, and ResNet50 with CGAN-based data augmentation outperformed the other models.

Jie et al. (2024) proposed a model that employs a pyramid Graph Neural Network (GNN) to tackle key challenges in the field. The approach involves dividing CXR images into multiple patches, which are subsequently processed through a CNN-based feature extractor. Specifically, the first five layers of ResNet50 are utilized for feature extraction. These patch-derived features function as nodes within the pyramid GNN framework. However, pyramid GNNs encounter limitations primarily associated with maintaining an appropriate balance between information retention and noise mitigation during the pooling process, scalability concerns for large graphs, and the potential loss of information arising from nonadjacent scale interactions.

Shazia et al. (2021) conducted a comparative analysis of contemporary DL models (VGG-16, VGG-19, DenseNet121, Inception-ResNet-V2, InceptionV3, ResNet50, and Xception) for identifying coronavirus pneumonia and distinguishing it from other pneumonia cases. DenseNet121 outperformed the other models, with an accuracy of 99.48%. Albataineh et al. (2024) employed a simple machine learning model based on segmentation and three feature extraction methods: the ratio of white lesion regions to lung regions (ratio of infection), global statistical texture features (mean, standard deviation, skewness, and kurtosis), and texture features from the gray-level co-occurrence matrix (GLCM) and gray-level run length matrix (GLRLM). To categorize COVID-19 CXR images, Elaraby et al. (2024) developed a hybrid DCNN model in which features are extracted using five distinct techniques (speeded up robust features, GLCM, HOG, segmentation-based fractal texture analysis, and local binary patterns [LBPs]) and then integrated into a single feature vector.

Zhang et al. (2022) designed a deep ensemble dynamic learning network that links a pretrained convolutional feature extractor and a two-stage bagging dynamic learning network classifier in sequence. The model proposed by Liu et al. (2024) combines bidirectional long short-term memory modules with parallel deformable multilayer perceptrons. It faces issues of computational complexity, overfitting risk, and scalability; moreover, the dataset used to implement the model is small, making it difficult to assess its effectiveness on larger datasets. Fan and Gong (2023) proposed an automated COVID-19 detection approach based on a dual-ended multiple attention learning model with ResNet50 as the backbone network. Naz et al. (2024) developed a federated learning (FL) model using ResNet50 for training local and global models. FL enables training on decentralized datasets without central data integration, enhancing privacy. However, although FL handles larger datasets better than traditional DL, it may compromise accuracy to protect privacy. Because raw medical data remain local to each institution and only model updates are shared, issues such as non-independent and identically distributed (non-IID) data, limited communication, and privacy-preserving mechanisms (e.g., differential privacy, secure aggregation) can slow convergence and reduce performance. Nonetheless, FL remains a promising framework for privacy-preserving collaboration in medical imaging tasks.

Hossain et al. (2024) adopted an optimized ensemble model for COVID-19 classification in which VGG-19 and ResNet50 networks are used for feature extraction. The fused feature vector is optimized using principal component analysis and then used to train an ensemble model that categorizes COVID-19. Mezina and Burget (2024) implemented a vision transformer-based neural network for COVID-19 classification. Global features are extracted using an Inception network, and local features using a combination of three Inception modules and a vision transformer network; the concatenated features are then used for classification. Tan et al. (2024) presented a Self-Supervised Learning with Self-Distillation model. Its pretraining stage comprises two auxiliary tasks, image reconstruction and self-distillation modeling. After pretraining, the trained encoder weights are transferred to the network being optimized, which performs COVID-19 classification.

Chatterjee et al. (2023) adopted a variational autoencoder-based model that uses the SMOTE–ENN (Edited Nearest Neighbor) approach to mitigate class imbalance. SMOTE–ENN is a hybrid approach that combines oversampling of the minority examples with undersampling of the majority examples. Because imbalanced class distribution is one of the most significant challenges faced by classification models, several other works target it directly. Schaudt et al. (2023) employ a combination of random oversampling and several augmentation strategies to generate a balanced dataset synthetically; during training, specialized augmentation procedures enhance the image diversity of the minority classes. Calderon-Ramirez et al. (2021) suggest an efficient method for correcting data imbalance using the semi-supervised MixMatch deep learning architecture. The technique applies extra weight to the underrepresented classes in the labeled dataset through loss-based imbalance correction, while MixMatch exploits unlabeled data by generating pseudo-labels and augmented versions of unlabeled instances. Chamseddine et al. (2022) experimented with several popular state-of-the-art CNN models (DenseNet201, chest X-ray network (CheXNet), MobileNetV2, ResNet152, VGG-19, and Xception), combined first with a Weighted Categorical Loss (WCL) and then with SMOTE, to tackle the imbalanced data distribution. Among the configurations tested, the combination of CheXNet and WCL achieved the best performance. Javidi et al. (2021) propose a regularized cost-sensitive CapsNet combined with a DenseNet model for balanced classification of COVID-19 CT scan images. After preprocessing, the CT image is fed into a deep neural network in which DenseNet is integrated with CapsNet to extract essential features. In the capsule network, a cost-sensitive regularized loss function is used to counter the imbalanced data.

Amin et al. (2024) employed an ensemble-based machine learning approach that integrates Light Gradient Boosting, Random Forest, and XGBoost for classification. They extracted features from CXR images using HOG and LBP descriptors, which effectively captured salient image features. However, because HOG and LBP both describe spatial and structural patterns, combining them can produce overlapping or redundant information and thus inefficiencies in classification. The combination also results in a high-dimensional feature space, increasing computational cost and the risk of overfitting, especially given the small dataset size, which raises questions about the approach's effectiveness on larger datasets. Al-Shourbaji et al. (2022) employed a batch-normalized CNN model inspired by the VGG architecture. The model consists of 18 layers: 12 dedicated to feature extraction (four repetitions of convolution, batch normalization, and max pooling) and six dedicated to classification (two repetitions of dense, batch normalization, and dropout layers). Despite its improved performance, the model faces several limitations, including high computational complexity, lack of feature reuse, potential overfitting, limited architectural innovation, and reduced robustness. Nayak et al. (2022) introduced LW-CORONet, a streamlined CNN consisting of a series of convolutional, rectified linear unit (ReLU) activation, and pooling layers, followed by two fully connected layers. This architecture captures significant features from CXR images with only five trainable layers. Lightweight CNNs are valuable for their efficiency in resource-constrained environments, but they often struggle with accuracy, generalization, feature extraction, and complex tasks. These limitations highlight the inherent compromises in designing compact neural networks; selecting an appropriate lightweight CNN therefore requires careful consideration of the specific task requirements and dataset characteristics.

Shankar et al. (2022) applied Wiener filtering at the preprocessing stage and, for feature extraction, fused GLCM, GLRLM, and LBP features. The Salp Swarm Algorithm (SSA) was employed to select the most relevant feature subset, and an artificial neural network (ANN) was trained to differentiate between infected and healthy patients. The combined use of SSA and ANN offers an innovative approach that leverages swarm intelligence for optimization. Nonetheless, this method faces challenges such as inefficient training, sensitivity to initial conditions, and difficulty handling complex or high-dimensional data.

Bhattacharyya et al. (2022) proposed a comprehensive three-step methodology for analyzing lung conditions through image processing and machine learning. First, raw X-ray images are segmented using a CGAN to precisely delineate the lung regions. Next, the segmented images are processed through a novel pipeline that combines key point extraction methods with trained deep neural networks to identify features relevant for classification. Finally, various machine learning models are applied to differentiate among COVID-19, pneumonia, and normal lung images. Their approach achieved a maximum testing accuracy of 96.6% with the VGG-19 model integrated with the Binary Robust Invariant Scalable Keypoints (BRISK) algorithm. However, CGANs can produce overly smooth segmentations due to the adversarial loss, potentially impairing the preservation of the fine details and boundaries needed for high-quality segmentation. Additionally, training CGANs on high-resolution images is computationally demanding, which poses challenges for large-scale datasets. Alshahrni et al. (2023) proposed a novel system that integrates two-step-AS (Analytic Server) clustering, ensemble bootstrap aggregation, and multiple neural networks, incorporating fractal-based and statistical texture feature extraction. This hybrid approach was designed to enhance discrimination among COVID-19, pneumonia, and normal cases, thereby improving robustness against dataset imbalance. The model reportedly achieved a classification accuracy of 98.06%, surpassing conventional CNN classifiers and illustrating the advantages of combining unsupervised clustering with supervised DL in medical image analysis. However, the selection of variables, the scaling method, and the distance metric all significantly affect the results of a two-step cluster analysis. Determining the optimal number of clusters is also challenging, and an inaccurate cluster count can adversely affect neural network training. Limited computational efficiency and scalability, together with the dependence on clustering quality, reduce the method's practicality in many real-world scenarios.

Proposed Methodology

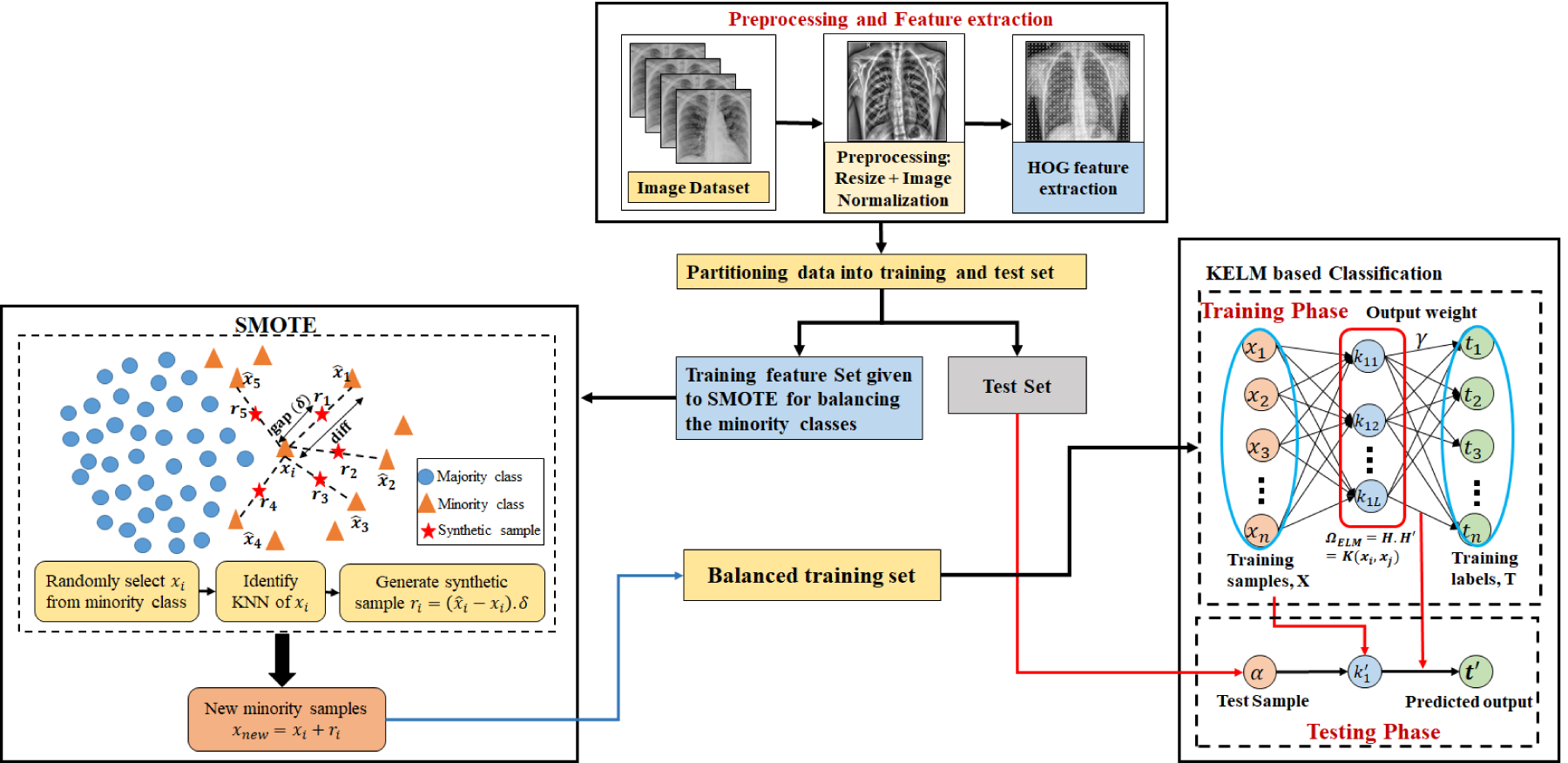

To address class imbalance in COVID-19 CXR datasets, this study proposes a hybrid framework that integrates the HOG feature descriptor (Dalal & Triggs, 2005), SMOTE (Chawla et al., 2002) for data balancing, and KELM (Huang et al., 2011) for classification. The primary objective of this integration is to enhance classification performance by mitigating the bias induced by class imbalance while preserving computational efficiency.

The proposed pipeline begins by preprocessing CXR images, after which HOG is employed to extract distinctive texture and structural features. HOG effectively captures the distribution of gradient orientations within localized regions of the image, emphasizing structural features—such as edges, contours, and shape information—and reducing the influence of pixel-level noise, texture inconsistencies, and illumination artifacts. This transformation yields a more compact and semantically meaningful feature space, in which samples of the same class tend to exhibit greater intraclass similarity and form more cohesive clusters. The application of SMOTE within this HOG-transformed feature space significantly enhances the probability of generating valid and class-consistent synthetic samples. The assumption of local linearity underlying SMOTE, that interpolated samples should lie within the true class distribution, is more reliable in the HOG space due to its denoising and structure-preserving properties. Consequently, even when the minority class exhibits a complex or sparse distribution in the original image space, the corresponding HOG feature space is smoother and more regular, rendering synthetic oversampling more effective and stable. Therefore, the integration of HOG and SMOTE enhances the oversampling process by ensuring that synthetic data are generated within a feature domain that is both resilient to noise and structurally consistent. The balanced feature set obtained after SMOTE is then used to train the KELM classifier. KELM is selected for its fast training, strong generalization, and noniterative learning mechanism. By incorporating a kernel function, KELM can efficiently handle nonlinear relationships in feature space, thereby improving classification performance.

In summary, the proposed methodology adheres to a sequential pipeline: image preprocessing, HOG feature extraction, SMOTE-based feature balancing, and KELM classification. This integrated method allows the model to leverage strong feature representation, balanced training data, and efficient kernel-based learning, thereby improving COVID-19 detection accuracy in CXR images. The comprehensive workflow of the proposed framework is depicted in Figure 1.

Outline of the Proposed Methodology.

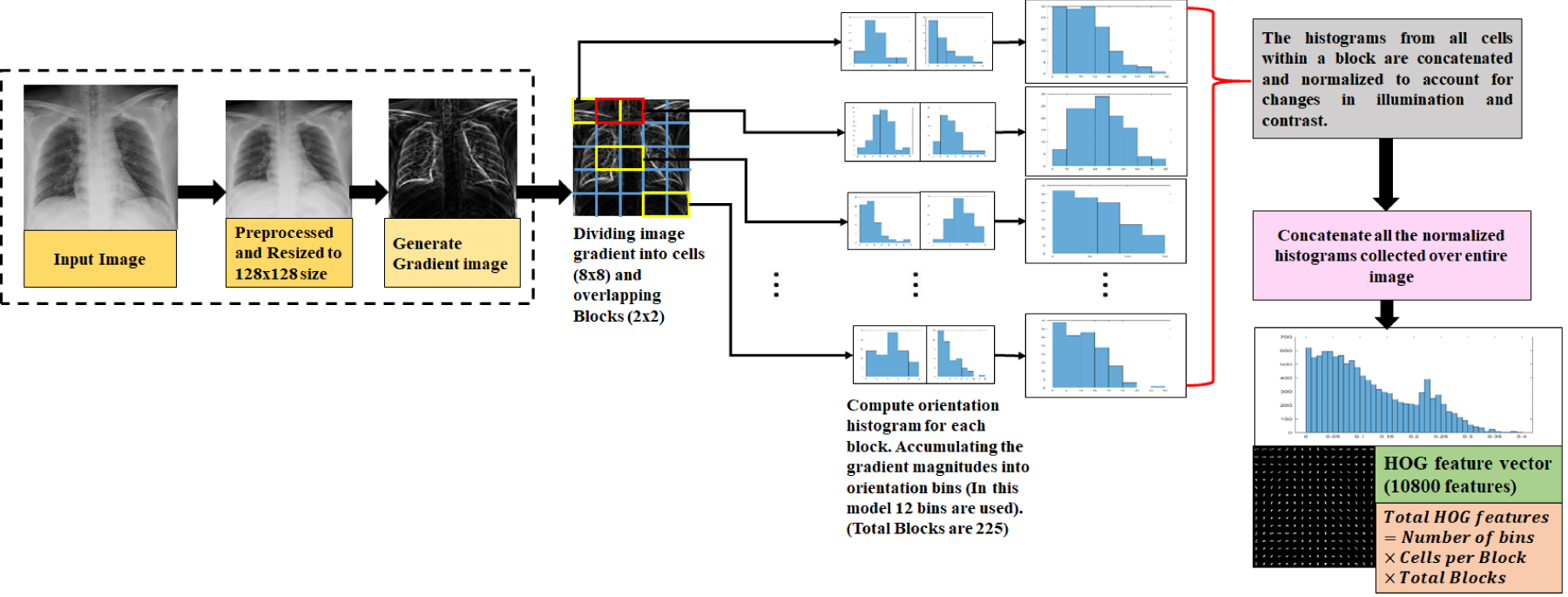

HOG Feature Extraction

In the realm of computer vision, effective feature extraction is pivotal for various applications, including object detection, image classification, and scene understanding. Among the many techniques developed for this purpose, HOG stands out for its robustness and effectiveness. This method has been instrumental in extracting meaningful features from images, particularly for object detection tasks such as pedestrian and vehicle detection (Dalal & Triggs, 2005). Figure 2 illustrates the steps involved in the extraction of HOG features from a CXR image. This figure shows the contours and edges in the radiographic data, which are essential for further analysis and interpretation.

Block Diagram Describing HOG Feature Extraction Method. Note. HOG = Histogram of Oriented Gradient.

The steps required to extract HOG features are as follows:

1. Gradient computation: The gradient magnitude and direction are first calculated for each pixel in the image, typically with gradient filters such as the Sobel operator, which highlight changes in pixel intensity. Strong gradients mark sharp intensity transitions, which often correspond to object edges.
2. Cell division: The image is divided into small, nonoverlapping regions called cells. Within each cell, a histogram of gradient orientations is built; it reflects the distribution of gradient directions in the cell and thus indicates local texture and edge patterns.
3. Block normalization: Cells are grouped into larger regions called blocks, and the histograms within each block are normalized. This keeps the descriptor consistent across varying lighting conditions and contrast levels.
4. Feature vector construction: Finally, the normalized block histograms are concatenated into a single feature vector, the HOG descriptor of the image, which can be used in a variety of object recognition and machine learning applications.
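The four steps can be sketched in NumPy as below. This is a minimal illustration, not the study's implementation: the 8×8-pixel cells, 9 unsigned orientation bins, overlapping 2×2-cell blocks, and L2-Hys normalization are assumed values commonly used with HOG; orientation votes use hard binning rather than interpolation for brevity; and the function name `hog_features` is hypothetical. A maintained implementation is available as `skimage.feature.hog` in scikit-image.

```python
import numpy as np


def hog_features(img, cell=8, bins=9, block=2, eps=1e-6):
    """Minimal HOG descriptor for a 2-D grayscale image (hypothetical sketch)."""
    img = np.asarray(img, dtype=np.float64)

    # Step 1: gradient computation with 3x3 Sobel kernels (edge-replicated border)
    p = np.pad(img, 1, mode="edge")
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]) - (p[:-2, :-2] + 2 * p[1:-1, :-2] + p[2:, :-2])
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]) - (p[:-2, :-2] + 2 * p[:-2, 1:-1] + p[:-2, 2:])
    magnitude = np.hypot(gx, gy)
    angle = np.rad2deg(np.arctan2(gy, gx)) % 180.0        # unsigned orientation in [0, 180)
    bin_idx = np.minimum((angle // (180.0 / bins)).astype(int), bins - 1)

    # Step 2: cell division, one magnitude-weighted orientation histogram per cell
    n_cy, n_cx = img.shape[0] // cell, img.shape[1] // cell
    hist = np.zeros((n_cy, n_cx, bins))
    for cy in range(n_cy):
        for cx in range(n_cx):
            rows = slice(cy * cell, (cy + 1) * cell)
            cols = slice(cx * cell, (cx + 1) * cell)
            hist[cy, cx] = np.bincount(bin_idx[rows, cols].ravel(),
                                       weights=magnitude[rows, cols].ravel(),
                                       minlength=bins)

    # Step 3: block normalization over overlapping groups of block x block cells (L2-Hys)
    blocks = []
    for by in range(n_cy - block + 1):
        for bx in range(n_cx - block + 1):
            v = hist[by:by + block, bx:bx + block].ravel()
            v = v / np.sqrt(np.sum(v ** 2) + eps ** 2)      # L2-normalize
            v = np.minimum(v, 0.2)                          # clip large components
            v = v / np.sqrt(np.sum(v ** 2) + eps ** 2)      # renormalize
            blocks.append(v)

    # Step 4: concatenate all normalized blocks into one feature vector
    return np.concatenate(blocks)


rng = np.random.default_rng(0)
image = rng.random((64, 64))      # stand-in for a resized, preprocessed CXR
f = hog_features(image)
print(f.shape)                    # (1764,)
```

For a 64×64 input this gives 8×8 cells, 7×7 overlapping blocks, and 7 × 7 × (2 × 2 × 9) = 1,764 features; larger images or smaller cells raise the dimensionality, which also increases the cost of any later nearest-neighbor search on these vectors.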

Imbalanced datasets adversely affect the performance and reliability of machine learning models. When the number of samples differs substantially across classes, the decision boundary approximated by the learning model can be skewed toward the majority class. Several data-balancing strategies have been developed over time; one of the most popular and widely accepted is the Synthetic Minority Over-sampling Technique (SMOTE) (Chawla et al., 2002). As the name suggests, it oversamples the minority class by generating synthetic samples.

The HOG–SMOTE balancing approach involves the following steps:

1. Instance selection: A random instance is selected from the HOG feature space of the minority class.
2. Identifying the k nearest neighbors: SMOTE identifies the k nearest neighbors of the selected instance among the other minority-class samples in the HOG feature space.
3. Generating synthetic instances: For each selected instance, SMOTE generates synthetic examples by interpolating between the instance and its nearest neighbors. Specifically, it creates synthetic points by choosing random points along the line segment joining the instance and a neighbor (Le et al., 2019).
4. Incorporating synthetic instances: The newly generated synthetic instances are added to the minority class, increasing its size and helping to balance the class distribution.
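A minimal NumPy sketch of these four steps follows, operating on generic feature vectors such as HOG descriptors. It assumes Euclidean distance, k = 5 neighbors, and balancing every smaller class up to the majority-class count; the function names `smote` and `balance` are hypothetical. For real experiments, the `SMOTE` class of the imbalanced-learn library (its `fit_resample` method) provides a tested implementation.

```python
import numpy as np


def smote(X_min, n_new, k=5, rng=None):
    """Create n_new synthetic rows from minority-class feature vectors X_min (n, d)."""
    rng = np.random.default_rng() if rng is None else rng
    X_min = np.asarray(X_min, dtype=np.float64)
    n = len(X_min)
    if n < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    k = min(k, n - 1)
    # Squared Euclidean distances via ||a||^2 + ||b||^2 - 2ab (n x n memory, not n x n x d)
    sq = (X_min ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X_min @ X_min.T
    np.fill_diagonal(d2, np.inf)                   # a point is not its own neighbor
    neighbors = np.argsort(d2, axis=1)[:, :k]      # step 2: k nearest minority neighbors
    synthetic = np.empty((n_new, X_min.shape[1]))
    for j in range(n_new):
        i = rng.integers(n)                          # step 1: random minority instance
        nb = X_min[neighbors[i, rng.integers(k)]]    # one of its k neighbors
        gap = rng.random()                           # step 3: random point on the segment
        synthetic[j] = X_min[i] + gap * (nb - X_min[i])
    return synthetic


def balance(X, y, k=5, rng=None):
    """Step 4: add synthetic rows until every class matches the majority-class count."""
    rng = np.random.default_rng() if rng is None else rng
    X, y = np.asarray(X, dtype=np.float64), np.asarray(y)
    classes, counts = np.unique(y, return_counts=True)
    target = counts.max()
    X_parts, y_parts = [X], [y]
    for c, cnt in zip(classes, counts):
        if cnt < target:
            X_parts.append(smote(X[y == c], target - cnt, k, rng))
            y_parts.append(np.full(target - cnt, c))
    return np.vstack(X_parts), np.concatenate(y_parts)


rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (100, 4)), rng.normal(3.0, 1.0, (20, 4))])
y = np.array([0] * 100 + [1] * 20)
X_bal, y_bal = balance(X, y, k=5, rng=rng)
print(np.bincount(y_bal))   # [100 100]
```

Because each synthetic row is a convex combination of two minority samples, it always lies within the region spanned by that class's existing feature vectors, so the quality of the feature space in which the interpolation happens directly affects how plausible the generated samples are.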

The synthetic samples are not exact replicas of existing instances; instead, they fill gaps in the feature space, which helps produce a more generalized decision boundary. SMOTE therefore has advantages over random oversampling: unlike simple duplication, it generates diverse examples that give a broader representation of the minority class, and the variability of these samples reduces the risk of overfitting. However, classic SMOTE might not be ideal for all types of data, especially when the minority class has a complex or noisy distribution. The proposed HOG–SMOTE variant improves the performance of the classification model by generating new instances from HOG feature vectors. HOG extracts relevant features by computing the gradient at each pixel and dividing the image into nonoverlapping cells, within each of which a histogram of gradient orientations is built. The histograms within each block are then normalized to produce a feature vector that is less sensitive to changes in illumination and contrast. Synthetic instances generated from HOG feature vectors are therefore less prone to noise, and hence more efficient than those generated by traditional SMOTE.

Feedforward networks have been used extensively in many applications, but their hyperparameters must be tuned to achieve strong generalization. Huang et al. (2004) introduced a fast and efficient learning algorithm for single-hidden-layer feedforward networks (SLFNs), called the Extreme Learning Machine (ELM). Compared with other well-known SLFN learning algorithms, ELM is distinguished by its ease of implementation, its tendency to reach the smallest training error and the smallest norm of output weights, its strong generalization capability, and its fast processing speed. For any infinitely differentiable activation function, such as the sigmoid, radial basis, or exponential function, the input weights and hidden-layer biases can be assigned randomly (Huang et al., 2006). After the input weights are selected at random, the output weights are found using the Moore–Penrose generalized inverse (Moore, 1920; Penrose, 1955). Let there be

The output weights can be calculated using the following equations.
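To make the random-hidden-layer and Moore–Penrose steps described above concrete, here is a minimal NumPy sketch of a basic (non-kernel) ELM classifier. The sigmoid activation, the uniform initialization range [-1, 1], the 50 hidden nodes, and the toy two-class data are illustrative assumptions. `np.linalg.pinv` computes the Moore–Penrose pseudoinverse of the hidden-layer output matrix H, so the output weights are obtained in a single step without iterative training.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def elm_train(X, T, n_hidden=50, seed=0):
    """Basic ELM: random hidden layer, output weights via Moore-Penrose pseudoinverse."""
    rng = np.random.default_rng(seed)
    W = rng.uniform(-1.0, 1.0, size=(X.shape[1], n_hidden))  # random input weights
    b = rng.uniform(-1.0, 1.0, size=n_hidden)                 # random hidden biases
    H = sigmoid(X @ W + b)                                    # hidden-layer output matrix H
    beta = np.linalg.pinv(H) @ T                              # beta = H^+ T
    return W, b, beta


def elm_predict(X, W, b, beta):
    return sigmoid(X @ W + b) @ beta


# Toy two-class problem with one-hot targets
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(-2.0, 1.0, (100, 2)), rng.normal(2.0, 1.0, (100, 2))])
y = np.repeat([0, 1], 100)
T = np.eye(2)[y]
W, b, beta = elm_train(X, T)
train_acc = np.mean(elm_predict(X, W, b, beta).argmax(axis=1) == y)
print(f"training accuracy: {train_acc:.3f}")
```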

A mapping from input space to
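The kernel-based variant replaces the explicit random hidden-layer mapping with a kernel function. A minimal sketch follows, assuming the standard KELM formulation in which the output for an input x is K(x, X_train)(I/C + Ω)^(-1) T. Here Ω is the RBF kernel matrix over the training samples, C is a regularization parameter, and T holds one-hot targets. The class name `KELM`, the helper `rbf_kernel`, and the values C = 10 and gamma = 0.5 are hypothetical choices for illustration. The kernel is written as exp(-gamma·||x - y||²); some formulations use exp(-||x - y||²/σ) instead.

```python
import numpy as np


def rbf_kernel(A, B, gamma):
    """K[i, j] = exp(-gamma * ||A_i - B_j||^2)."""
    sq = np.sum(A ** 2, axis=1)[:, None] + np.sum(B ** 2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-gamma * np.maximum(sq, 0.0))  # clip tiny negatives from round-off


class KELM:
    """Kernel ELM classifier: scores = K(x, X_train) (I/C + Omega)^-1 T."""

    def __init__(self, C=10.0, gamma=0.5):
        self.C, self.gamma = C, gamma

    def fit(self, X, y):
        self.classes_ = np.unique(y)
        T = (y[:, None] == self.classes_[None, :]).astype(float)  # one-hot targets
        omega = rbf_kernel(X, X, self.gamma)                      # kernel matrix Omega
        # Solve (I/C + Omega) alpha = T instead of forming the inverse explicitly
        self.alpha_ = np.linalg.solve(np.eye(len(X)) / self.C + omega, T)
        self.X_train_ = X
        return self

    def predict(self, X):
        scores = rbf_kernel(X, self.X_train_, self.gamma) @ self.alpha_
        return self.classes_[scores.argmax(axis=1)]


# Toy three-class example
rng = np.random.default_rng(0)
centres = np.array([[0.0, 0.0], [4.0, 0.0], [0.0, 4.0]])
X = np.vstack([rng.normal(c, 0.8, (60, 2)) for c in centres])
y = np.repeat([0, 1, 2], 60)
model = KELM(C=10.0, gamma=0.5).fit(X, y)
X_test = np.vstack([rng.normal(c, 0.8, (20, 2)) for c in centres])
y_test = np.repeat([0, 1, 2], 20)
test_acc = np.mean(model.predict(X_test) == y_test)
print(f"test accuracy: {test_acc:.3f}")
```

Solving the linear system with `np.linalg.solve` avoids computing the N×N inverse explicitly. Memory still grows with N², however, which becomes significant for datasets containing tens of thousands of images.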

Overview of Datasets

Only a limited number of CXR images of individuals with COVID-19 are publicly accessible, primarily because the disease emerged relatively recently. Four of the few available datasets are used in this study. Dataset-1 is the COVID-19 Radiography Database developed by Chowdhury et al. (2020) and Rahman et al. (2021), one of the most widely used resources of its kind. This database compiles publicly accessible datasets from multiple sources, forming one of the most extensive collections of CXR images of COVID-19 cases. In this study, the second version of the database was used, comprising 3,616 COVID-19 images, 1,345 viral pneumonia images, 10,192 normal images, and 6,012 lung opacity images. Only three of these classes were used in the experiments. The lung opacity images were excluded, so the proposed model was trained to distinguish COVID-19 from viral pneumonia and normal cases.
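As a worked illustration of the imbalance in Dataset-1, the arithmetic below computes how many synthetic samples each class would need if every class were oversampled up to the size of the majority (normal) class. Full balancing to the majority count is an assumption made for illustration, since this section does not state the target class ratio used in the study.

```python
# Class counts for Dataset-1 as reported in the text (lung opacity excluded)
counts = {"COVID-19": 3616, "Viral pneumonia": 1345, "Normal": 10192}

target = max(counts.values())                     # assumption: balance every class up to the majority
to_generate = {c: target - n for c, n in counts.items()}
imbalance_ratio = target / min(counts.values())   # majority-to-smallest-minority ratio

print(to_generate)                # {'COVID-19': 6576, 'Viral pneumonia': 8847, 'Normal': 0}
print(round(imbalance_ratio, 2))  # 7.58
```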

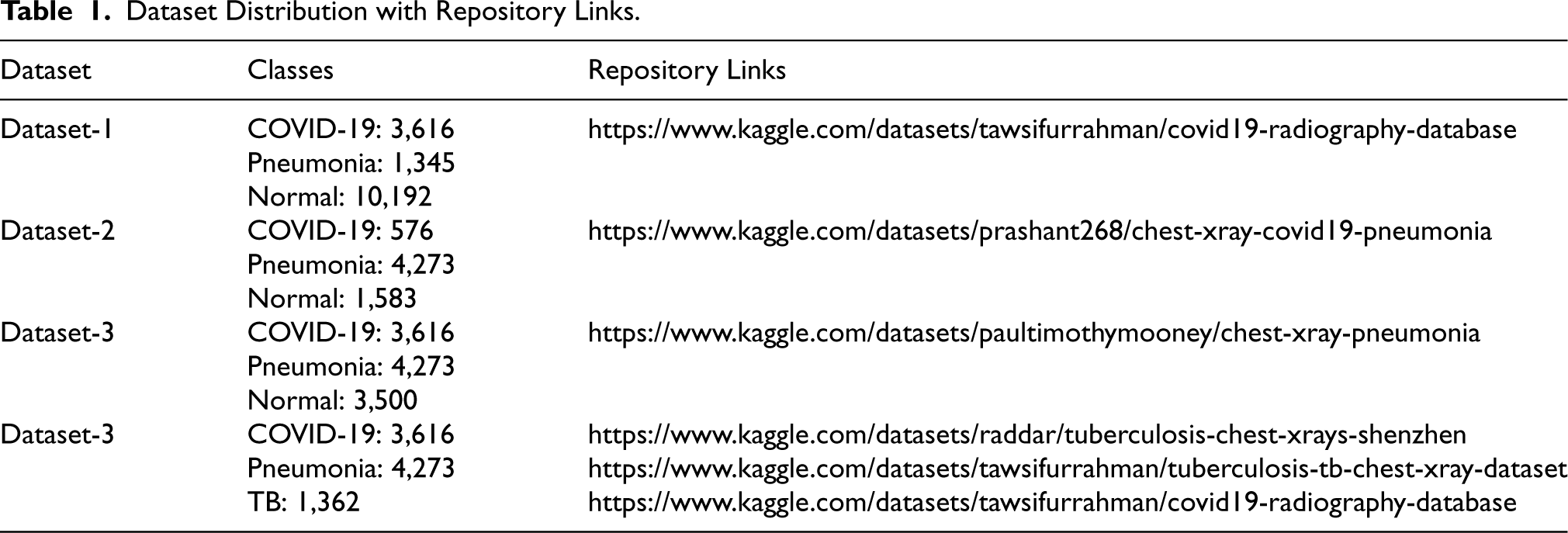

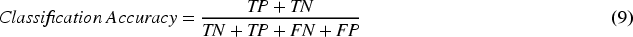

Three additional datasets were used to evaluate the robustness of the proposed model. Dataset-2 was sourced from the Kaggle repository and assembled from CXR images obtained from publicly accessible resources (Cohen et al., 2020; Kermany et al., 2018). Dataset-3 was prepared by combining two publicly available databases from the Kaggle repository: normal and pneumonia CXR images were taken from Kermany et al. (2018), and COVID-19 images were taken from Chowdhury et al. (2020) and Rahman et al. (2021). Dataset-4 adds a tuberculosis (TB) class. Individuals infected with COVID-19 may be misdiagnosed with other pulmonary diseases such as pneumonia or TB, so this dataset comprises three similar classes of lung infection: COVID-19, pneumonia, and TB. It was prepared by combining images from three Kaggle repositories. The data distribution and repository links are given in Table 1.

Dataset Distribution with Repository Links.


The raw images of the dataset are first resized to 128

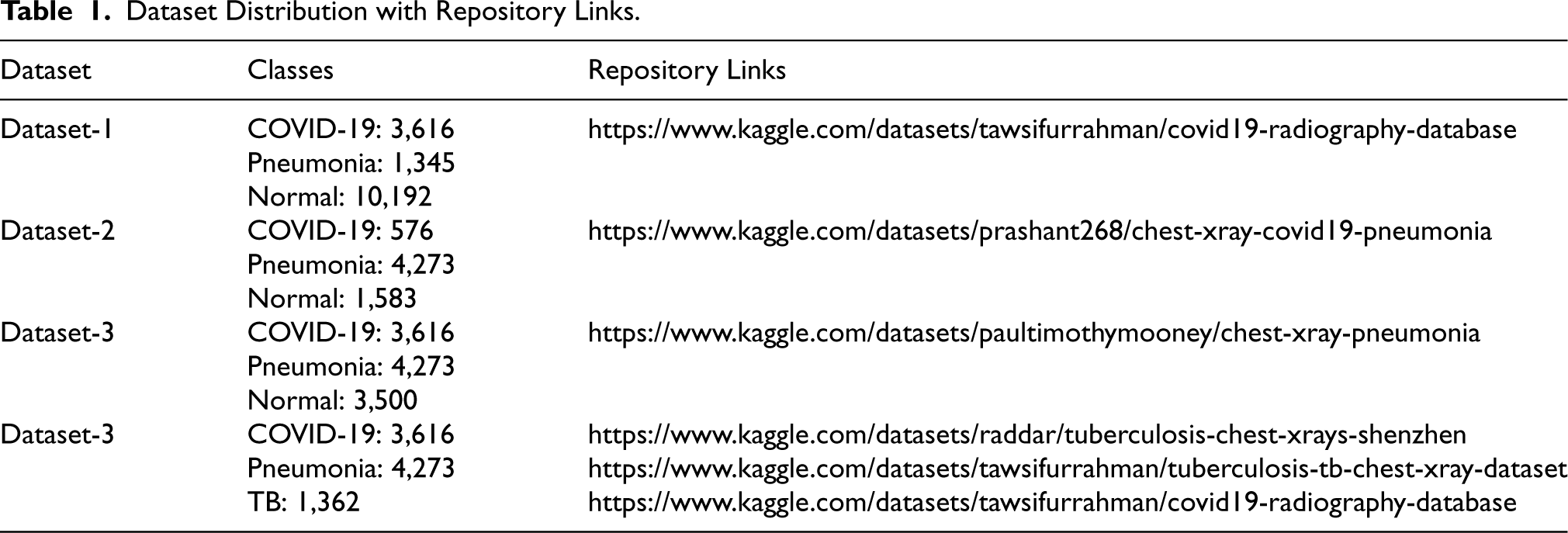

t-SNE Visualization of the Class Distribution in the Datasets. The Plots Provide Information on Data Disparity, Class Overlap, and Clustering That Is Relevant to Preparing and Evaluating the Model. Comparing the t-SNE Plots Before (a) and After (b) Applying HOG–SMOTE Shows How Well the Data-Balancing Technique Works and Confirms That the Minority Classes Have Been Adequately Augmented Without Altering the Boundaries Between Classes. Note. t-SNE = t-distributed Stochastic Neighbor Embedding; HOG = Histogram of Oriented Gradient; SMOTE = Synthetic Minority Over-sampling Technique.

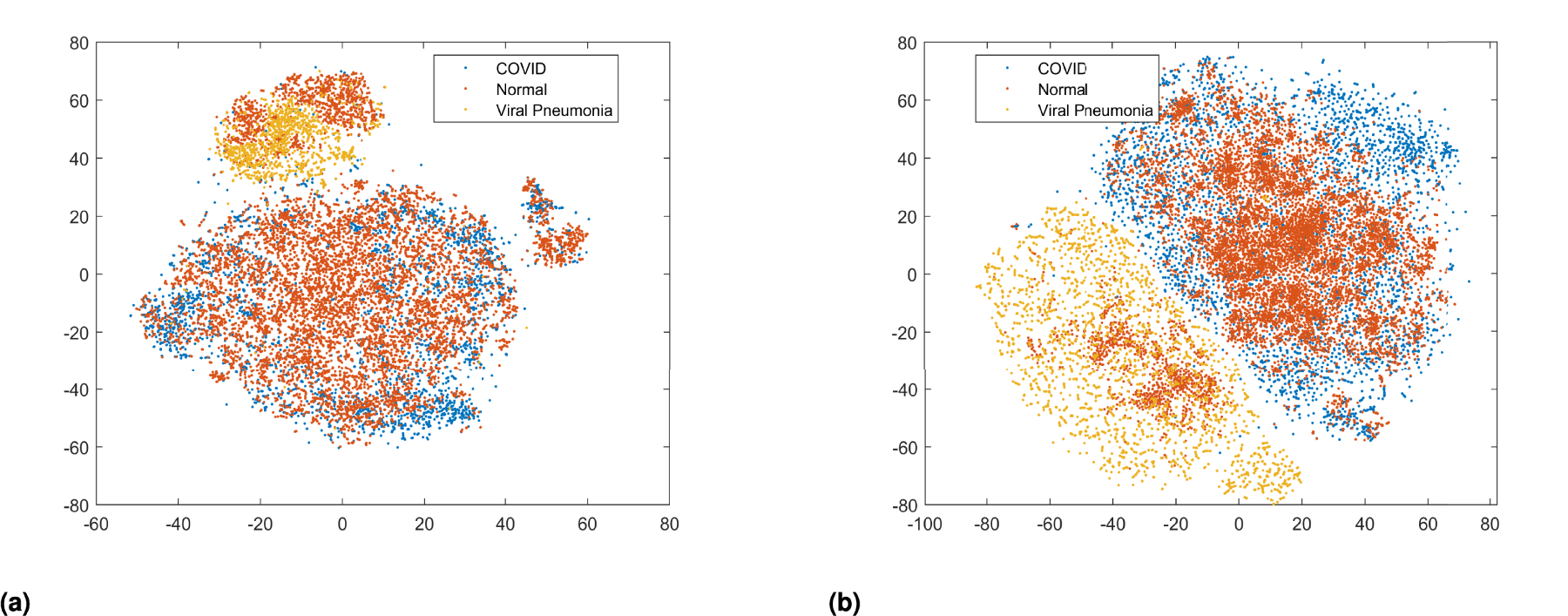

The effectiveness of the proposed method is evaluated using six performance metrics: accuracy, the proportion of all instances that are correctly classified; sensitivity, the true positive rate; specificity, the true negative rate; precision, the proportion of true positives among all positive predictions;

The mathematical representation of the performance metrics mentioned above is provided below
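The four metrics named above can be computed from a multiclass confusion matrix by treating each class one-vs-rest. The sketch below assumes macro averaging (an unweighted mean over classes) for sensitivity, specificity, and precision, because this section does not state which averaging scheme was used. The helper `ovr_metrics` is hypothetical, and the confusion matrix is invented for illustration rather than taken from this study.

```python
import numpy as np


def ovr_metrics(cm):
    """Macro-averaged one-vs-rest metrics from a confusion matrix (rows = true, cols = predicted)."""
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp      # predicted as class c but belonging to another class
    fn = cm.sum(axis=1) - tp      # belonging to class c but predicted as another class
    tn = cm.sum() - tp - fp - fn
    return {
        "accuracy": tp.sum() / cm.sum(),
        "sensitivity": np.mean(tp / (tp + fn)),   # recall / true positive rate
        "specificity": np.mean(tn / (tn + fp)),   # true negative rate
        "precision": np.mean(tp / (tp + fp)),
    }


# Hypothetical 3-class confusion matrix (not results from this study)
cm = [[50, 2, 3],
      [4, 40, 6],
      [1, 2, 92]]
m = ovr_metrics(cm)
print({k: round(float(v), 4) for k, v in m.items()})
```

If a class is never predicted, its precision term becomes 0/0 and NumPy returns NaN. This sketch does not handle that edge case.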

The HOG–SMOTE kernel-based extreme learning machine (HS-KELM) is implemented in MATLAB R2023b. All experiments with the proposed model were run on a personal workstation with a 13th Gen Intel Core i7-13700F CPU (2.10 GHz), 16 GB of RAM, a 1 TB SSD, and an NVIDIA GeForce RTX 3060 GPU with 12 GB of memory.

Results and Discussion

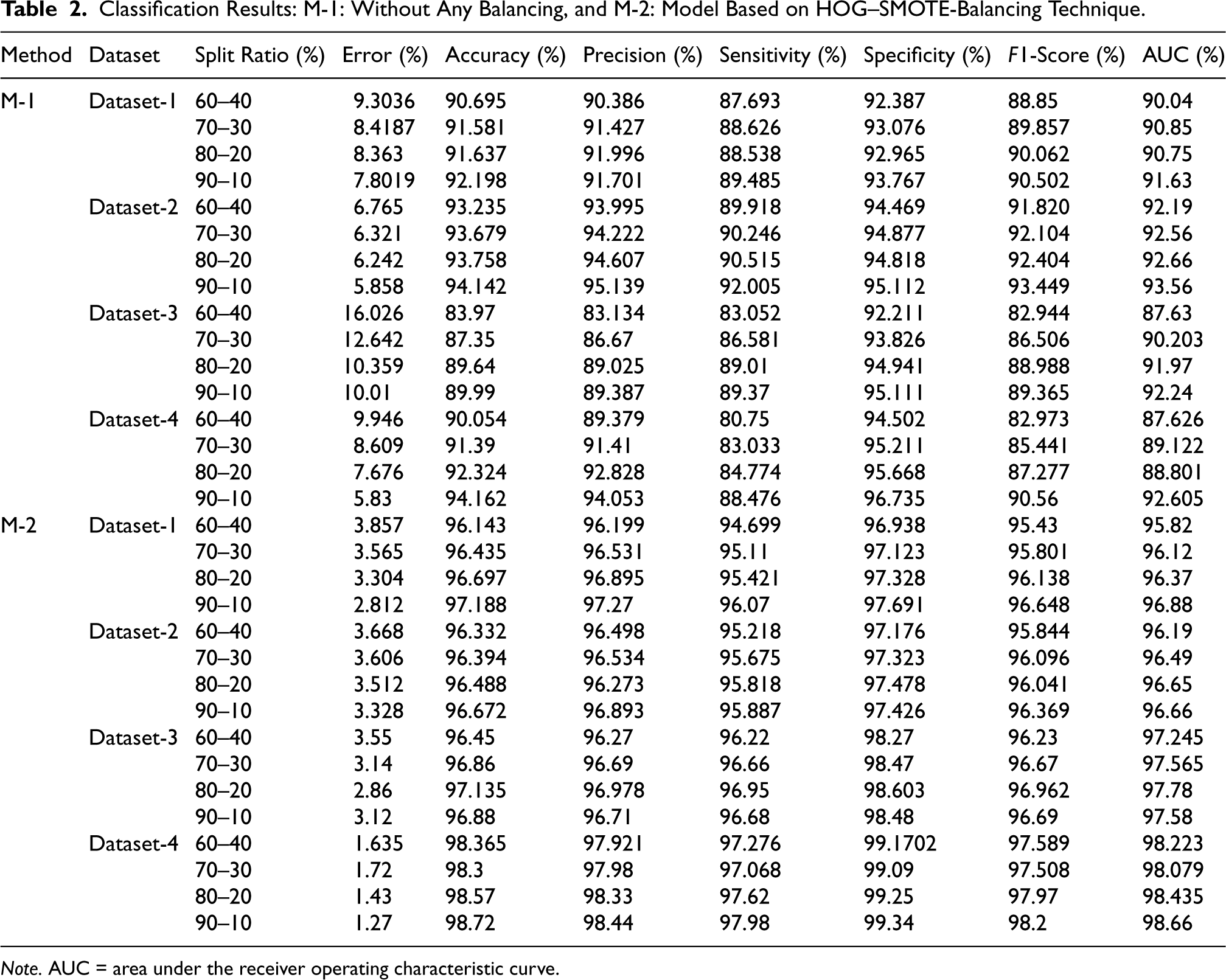

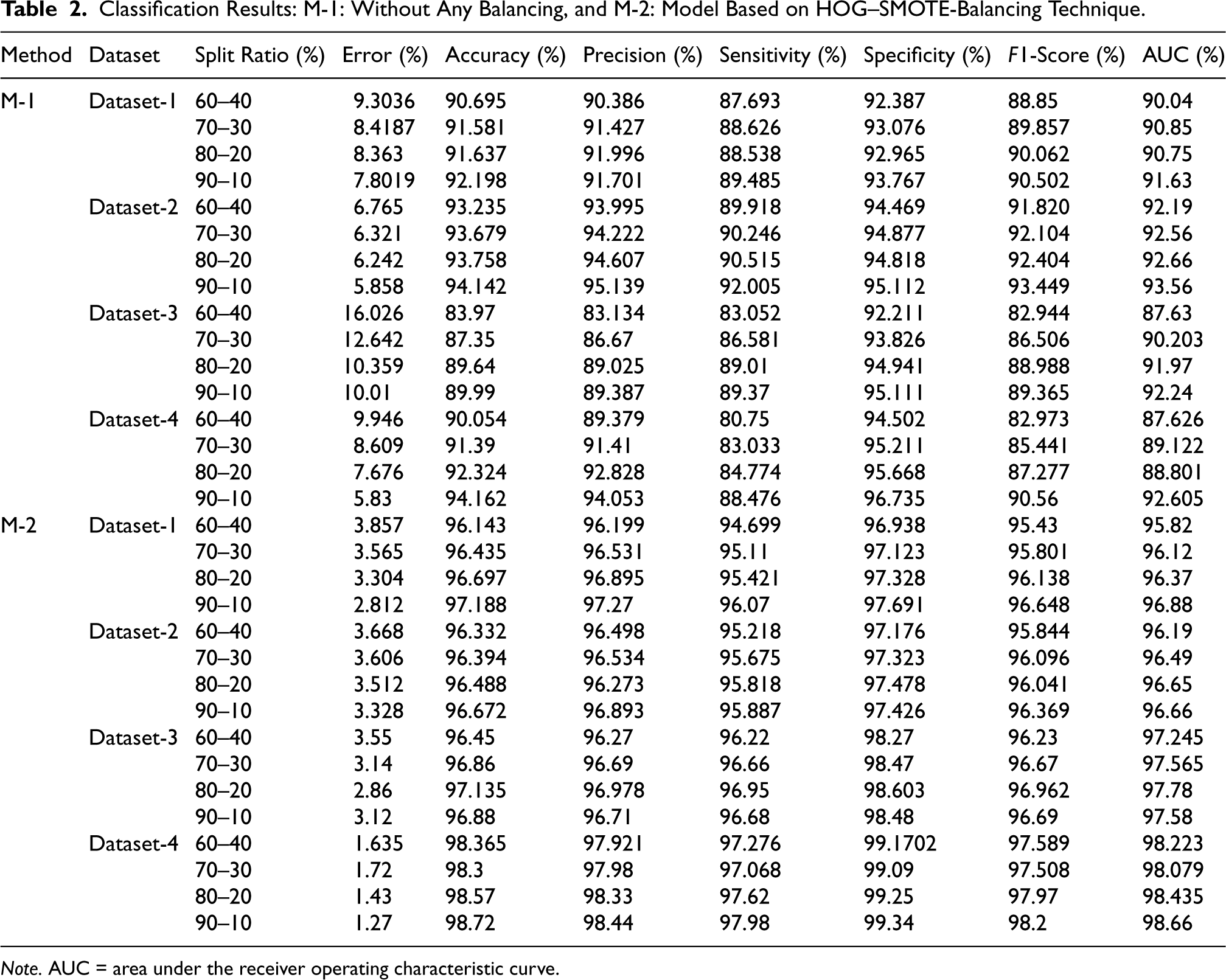

Before HOG–SMOTE is applied for data balancing, experiments are first conducted on the imbalanced datasets described in Table 1. The datasets contain a mixture of RGB and grayscale images of varying dimensions. To standardize them, all CXR images are converted to grayscale, resized to
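The preprocessing step described above can be sketched as follows, assuming images arrive as NumPy arrays. The grayscale weights (0.2989, 0.5870, 0.1140) are the ITU-R BT.601 luma coefficients used by MATLAB's `rgb2gray`. Nearest-neighbour resizing and min–max scaling to [0, 1] are simplifying assumptions (MATLAB's `imresize` defaults to bicubic interpolation). The target size is left as a parameter, and the 64×64 size used in the example is arbitrary. All function names are hypothetical.

```python
import numpy as np


def to_grayscale(img):
    """Convert an RGB(A) image to grayscale with BT.601 luma weights; 2-D input is returned as float."""
    img = np.asarray(img, dtype=float)
    if img.ndim == 3:
        img = img[..., :3] @ np.array([0.2989, 0.5870, 0.1140])  # drop alpha if present
    return img


def resize_nearest(img, out_h, out_w):
    """Nearest-neighbour resize by sampling source rows and columns."""
    rows = (np.arange(out_h) * img.shape[0] / out_h).astype(int)
    cols = (np.arange(out_w) * img.shape[1] / out_w).astype(int)
    return img[rows[:, None], cols[None, :]]


def preprocess(img, out_h, out_w):
    """Grayscale -> resize -> min-max scale to [0, 1] (the scaling step is an assumption)."""
    g = resize_nearest(to_grayscale(img), out_h, out_w)
    lo, hi = g.min(), g.max()
    return (g - lo) / (hi - lo) if hi > lo else np.zeros_like(g)


# Example: a random 300x420 RGB image and a 50x80 grayscale image, both resized to 64x64
rng = np.random.default_rng(0)
rgb = rng.integers(0, 256, size=(300, 420, 3))
gray = rng.random((50, 80))
out_rgb, out_gray = preprocess(rgb, 64, 64), preprocess(gray, 64, 64)
print(out_rgb.shape, out_gray.shape)  # (64, 64) (64, 64)
```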

Classification Results: M-1: Without Any Balancing, and M-2: Model Based on HOG–SMOTE-Balancing Technique.


Note. AUC = area under the receiver operating characteristic curve.

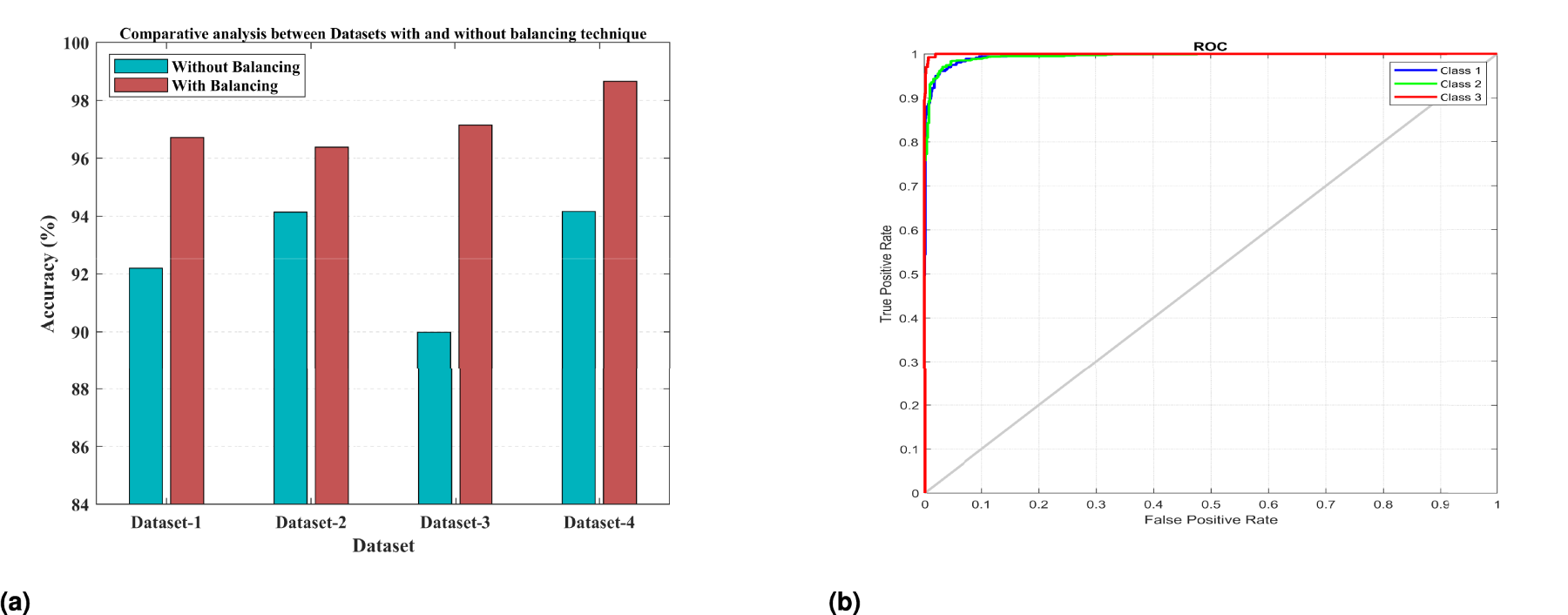

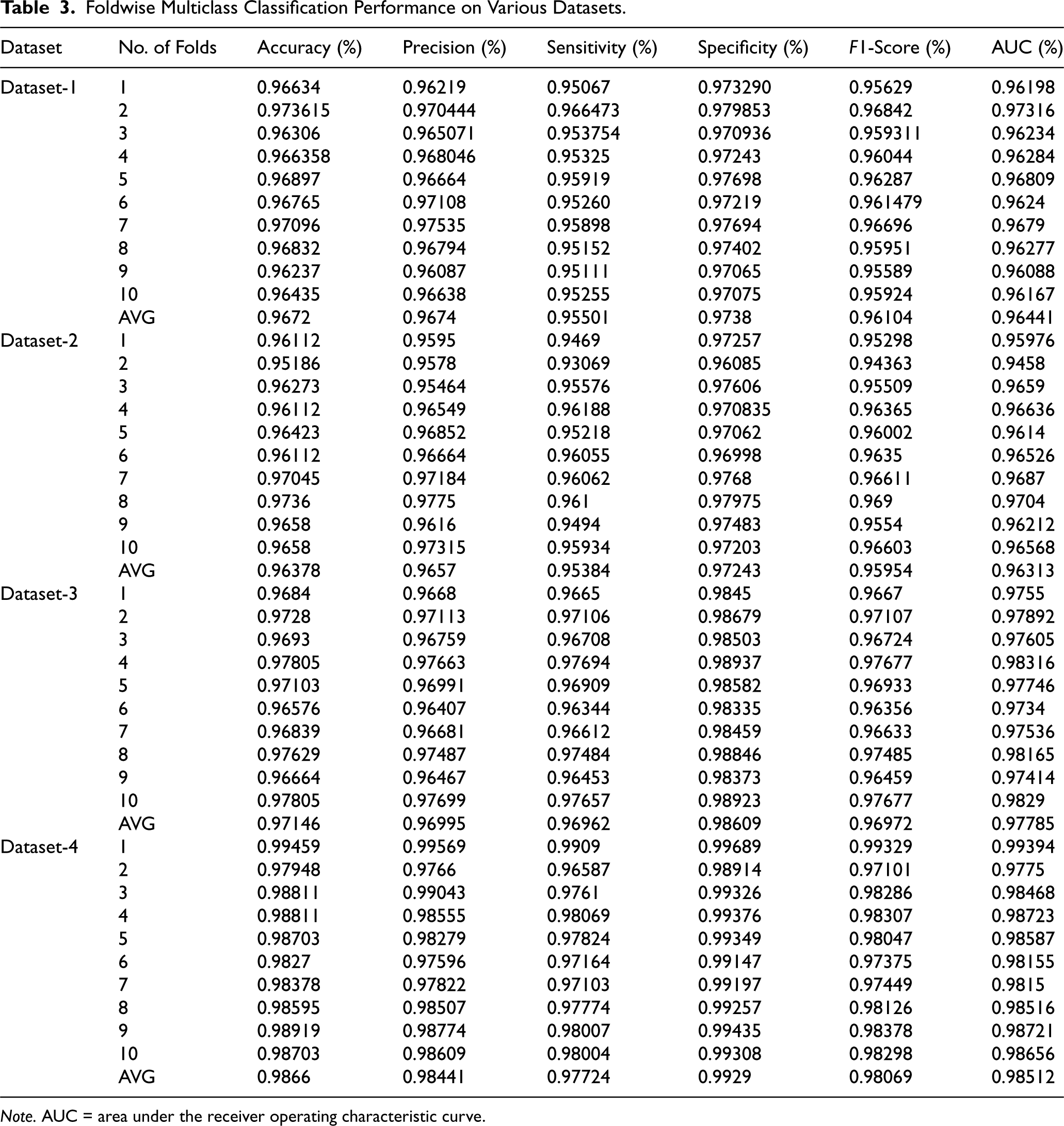

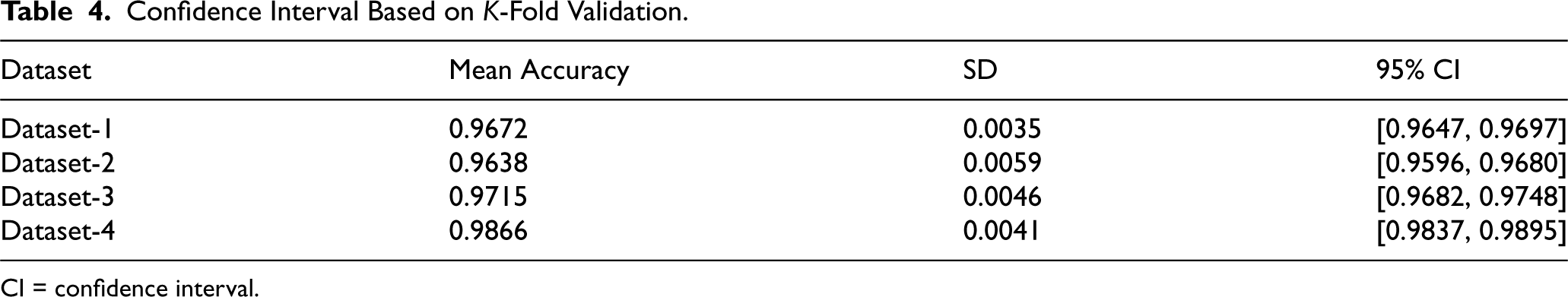

In the second experiment, 10-fold cross-validation was conducted on Datasets 1–4 using the HOG–SMOTE-based balancing module. The results for each fold, together with the averages, are presented in Table 3, and Table 4 gives the confidence intervals for the accuracy obtained on each of Datasets 1–4. In addition to balancing the dataset, this module addresses disparities and overlap among classes. After balancing and normalization, the dataset is used to train a KELM-based classification model. Class boundaries are separated using the radial basis function (RBF) kernel, which is widely regarded as well suited to the ELM model. The results indicate that, on the COVID-19 datasets considered here, KELMs are more effective and better suited to multiclass classification than conventional machine learning classifiers. In this configuration, the KELM-based classifier combined with the HOG–SMOTE-balancing algorithm outperformed all other models, achieving accuracies of 96.72%, 96.38%, 97.15%, and 98.66% on Dataset-1, Dataset-2, Dataset-3, and Dataset-4, respectively. The performance evaluation plots for the three-class classification model, including the receiver operating characteristic (ROC) curve, are shown in Figure 4.
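This section does not state whether the 10 folds were stratified. As an illustrative assumption, the sketch below shows a stratified split in NumPy that keeps each class's share roughly constant across folds, which matters for imbalanced data. `stratified_kfold` is a hypothetical helper; scikit-learn's `StratifiedKFold` provides the same behaviour. The sketch only produces index splits and does not model where the balancing step is applied.

```python
import numpy as np


def stratified_kfold(y, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs; each class is split as evenly as possible across k folds."""
    y = np.asarray(y)
    rng = np.random.default_rng(seed)
    folds = [[] for _ in range(k)]
    for c in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == c))   # shuffle this class's indices
        for f, part in enumerate(np.array_split(idx, k)):
            folds[f].extend(part.tolist())
    all_idx = np.arange(len(y))
    for f in range(k):
        test_idx = np.sort(np.array(folds[f], dtype=int))
        train_idx = np.setdiff1d(all_idx, test_idx)     # everything not in this fold
        yield train_idx, test_idx


# Example: 3 classes of unequal size, 10 folds
y = np.repeat([0, 1, 2], [120, 45, 300])
splits = list(stratified_kfold(y, k=10))
for train_idx, test_idx in splits[:2]:
    print(len(train_idx), len(test_idx), np.bincount(y[test_idx], minlength=3))
```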

Graphical Representation of Experimental Outcomes: (a) Comparison of Accuracy Metric Provided by KELM for Each Dataset: (Left Bar) Without Data Balancing, (Right Bar) With Balancing and (b) ROC of Proposed Model Based on KELM. Note. KELM = Kernel-based Extreme Learning Machine; ROC = receiver operating characteristic.

Foldwise Multiclass Classification Performance on Various Datasets.

Note. AUC = area under the receiver operating characteristic curve.

Confidence Interval Based on

Note. CI = confidence interval.
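The title of Table 4 is truncated, so the method behind the reported intervals cannot be recovered here. One common choice, shown below as an assumption, is a two-sided 95% Student's t-interval over the k fold accuracies: mean ± t(0.975, k-1)·s/√k, where s is the sample standard deviation. The helper name is hypothetical, and the fold accuracies in the example are invented for illustration.

```python
import math
import statistics


def t_confidence_interval(fold_scores, t_crit=2.262):
    """Return (mean, lower, upper) of a two-sided 95% t-interval over fold scores.

    The default t_crit = 2.262 is the 0.975 quantile of Student's t with 9 degrees
    of freedom, so it is only correct for k = 10 folds; for other k, use e.g.
    scipy.stats.t.ppf(0.975, k - 1).
    """
    k = len(fold_scores)
    mean = statistics.fmean(fold_scores)
    half_width = t_crit * statistics.stdev(fold_scores) / math.sqrt(k)  # stdev uses k - 1
    return mean, mean - half_width, mean + half_width


# Hypothetical accuracies from 10 folds (not results from this study)
folds = [0.95, 0.96, 0.97, 0.96, 0.98, 0.97, 0.96, 0.95, 0.97, 0.98]
mean, lo, hi = t_confidence_interval(folds)
print(f"accuracy = {mean:.4f}, 95% CI = [{lo:.4f}, {hi:.4f}]")
```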

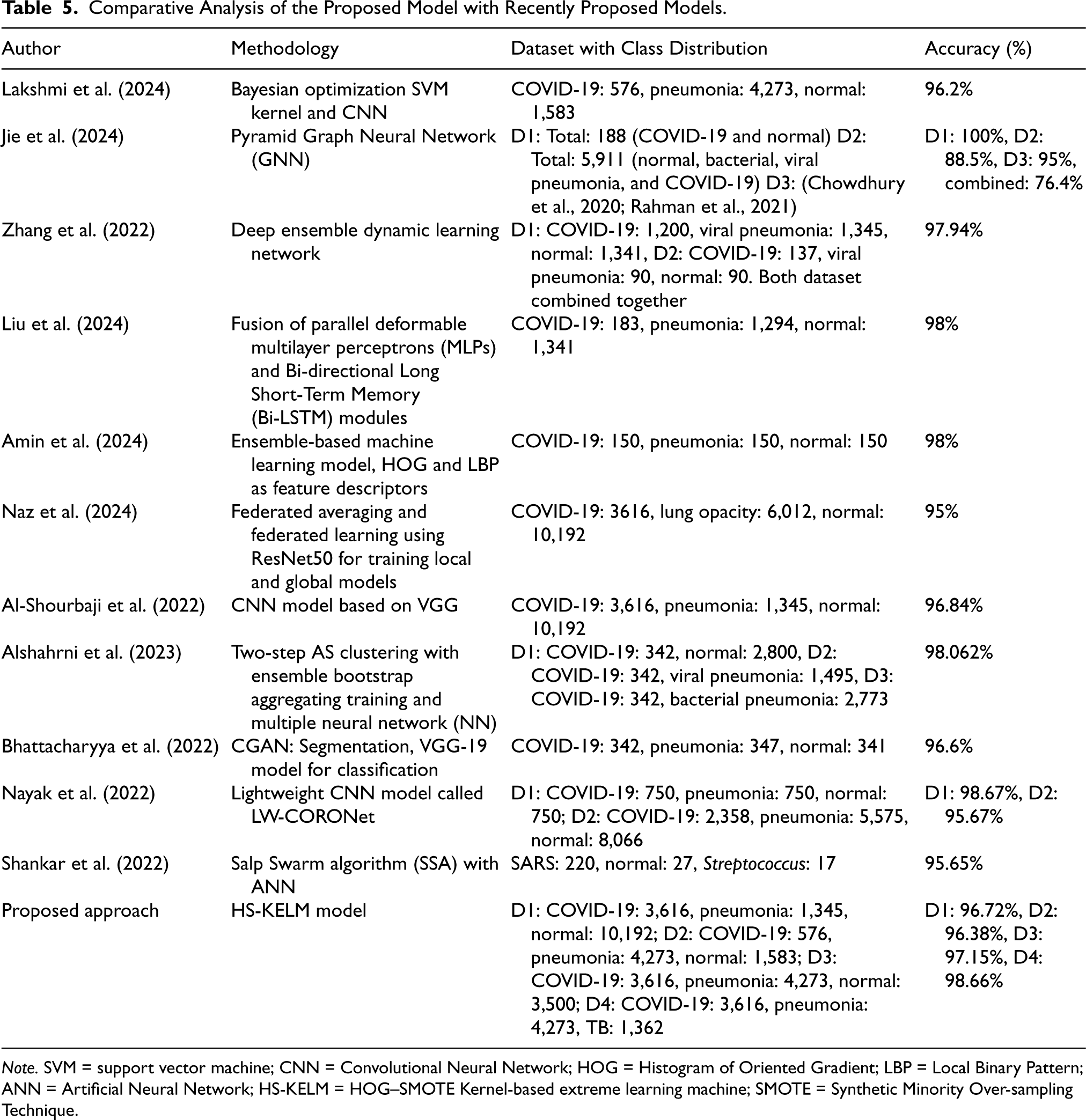

Table 5 presents a comparative analysis of contemporary DL architectures for classifying COVID-19 from CXR scans, grouped into CNNs, transfer learning-based models, and hybrid/ensemble models. As Table 5 shows, the proposed model outperforms most existing state-of-the-art models. Evaluating the model on four different datasets also provides stronger evidence of its robustness and effectiveness than a single-dataset comparison would. The result obtained on Dataset-1 is comparable to that of the VGG-based CNN model proposed by Al-Shourbaji et al. (2022). Unlike that CNN model, however, the proposed model achieves this performance without requiring high-end GPU hardware, and its processing time is significantly shorter, making it a more cost-effective solution.

Comparative Analysis of the Proposed Model with Recently Proposed Models.

Note. SVM = support vector machine; CNN = Convolutional Neural Network; HOG = Histogram of Oriented Gradient; LBP = Local Binary Pattern; ANN = Artificial Neural Network; HS-KELM = HOG–SMOTE Kernel-based extreme learning machine; SMOTE = Synthetic Minority Over-sampling Technique.

In conclusion, the proposed medical image classification model, which integrates the HOG–SMOTE imbalance-handling technique with the efficient KELM, represents a notable advance over current state-of-the-art DL models. Combining the two approaches allows the model to address the class imbalance inherent in medical datasets while delivering superior classification performance in terms of accuracy, sensitivity, and specificity. A distinguishing feature of the model is its computational efficiency. DL algorithms typically demand substantial computational resources, including high-end GPUs and long training times, whereas our approach substantially reduces both hardware requirements and processing time. As medical image datasets grow in complexity and volume, efficient and scalable solutions become increasingly important. The proposed method meets this need with a high-performance classification system that is cost-effective and scalable without compromising diagnostic accuracy. The HS-KELM model therefore offers a resource-efficient alternative to conventional DL approaches, combining strong performance with a much lower computational burden, and is a promising candidate for wider adoption in medical diagnostics. Future work could explore further optimizations and adapt the model to more diverse and complex real-world datasets.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.