Abstract

Owing to their computational efficiency and interpretability, discriminative correlation filter (DCF)-based tracking methods have recently received extensive attention in the field of unmanned aerial vehicles (UAVs). However, existing methods are susceptible to interference from significant appearance changes of the target object or occlusion by the background, which leads to tracking failure. To address these issues, we propose a distortion-aware correlation filter with target mask (DACFTM) for UAV tracking, which introduces a target regularization term to enhance the target perception ability of the tracking model. Specifically, we construct a target mask matrix based on the highest peak of the response map of the previous frame, thereby leveraging prior reliable localization confidence, and multiply it with the current feature map to obtain a regularization term that contains only target information, which effectively distinguishes the target from the background. In addition, to deal with tracking failure caused by large appearance changes, we propose a distortion-aware mechanism. When the quality of the response map corresponding to the filter is higher than a set threshold, we consider the filter reliable and adopt the filter fusion strategy; otherwise, the saved high-quality filter is selected for tracking in the next frame. Finally, we comprehensively evaluate DACFTM on three mainstream UAV benchmark datasets, and the experimental results demonstrate that it achieves impressive tracking performance.

Introduction

Visual object tracking is an important research topic in the field of computer vision. The tracker is initialized in the first frame, aiming to continuously track the target in the subsequent video sequences. However, due to the fact that the target is constantly disturbed by significant deformations, occlusions, complex environments and other challenges during the moving process, the design of high-performance trackers remains a difficult task. Currently, visual object tracking for unmanned aerial vehicles (UAVs) has been widely used in practical applications, including traffic monitoring (Xuan et al., 2019), autonomous driving, aerial film shooting (Bonatti et al., 2019), and other fields.

In recent years, discriminative correlation filter (DCF)-based tracking methods have achieved excellent results, especially in the field of UAVs (Chen et al., 2024; Jin et al., 2024). Early DCF-based trackers (Danelljan et al., 2015b; Henriques et al., 2014) benefited from circular shift sampling to obtain abundant training samples. Meanwhile, the discrete Fourier transform (DFT) converts the convolution in the spatial domain into an element-wise product in the frequency domain, which greatly reduces computational complexity and has promoted the rapid development of related techniques. Building on previous works, the success of high-performance DCF-based trackers (Zhang et al., 2020, 2022c) can mainly be attributed to three aspects: regularization terms with specific purposes, interference suppression strategies, and robust feature representations. Firstly, the temporal regularization term emphasizes the continuity of the time series to improve generalization ability (Li et al., 2018b), and the channel regularization term enhances tracking ability by learning an attention weight for each channel (Xu et al., 2019b). Secondly, the most representative interference suppression strategy is the background-aware correlation filter (BACF) (Kiani Galoogahi et al., 2017), which suppresses background interference by modeling the background, thereby ensuring accurate tracking. Thirdly, as a necessary component of tracker design, robust feature representations have developed rapidly, such as the histogram of oriented gradient (HOG) (Dalal & Triggs, 2005), color names (CNs) (Van De Weijer et al., 2009), and convolutional neural network (CNN) features (Lu et al., 2018). Among them, deep CNN features are currently the main factor in improving the precision and success rate of the tracker.
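The frequency-domain speed-up mentioned above can be checked numerically. The following is a minimal NumPy sketch on a 1-D signal; the function names are illustrative and not taken from any tracker implementation. It compares correlation over all circular shifts, computed directly, with the same quantity obtained from an element-wise product of DFTs.

```python
import numpy as np

def circular_correlation_direct(x, f):
    """Correlate f with every circular shift of x (O(N^2) operations)."""
    n = len(x)
    # np.roll(x, -k)[i] equals x[(i + k) mod n], i.e. x shifted by k samples.
    return np.array([np.dot(np.roll(x, -k), f) for k in range(n)])

def circular_correlation_fft(x, f):
    """Same result via an element-wise product in the Fourier domain (O(N log N))."""
    # For real-valued f, correlation corresponds to X * conj(F).
    return np.real(np.fft.ifft(np.fft.fft(x) * np.conj(np.fft.fft(f))))

rng = np.random.default_rng(0)
x = rng.standard_normal(16)   # stand-in for a 1-D feature signal
f = rng.standard_normal(16)   # stand-in for a 1-D filter
print(np.allclose(circular_correlation_direct(x, f), circular_correlation_fft(x, f)))
```

Every circular shift of the sample is evaluated implicitly by the FFT-based version, which is why DCF trackers can train on dense shifted samples at low cost.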

Although deep CNN features have significantly improved tracking performance, several problems remain. Firstly, the complementarity of hand-crafted features and deep features is often overlooked: hand-crafted features are more sensitive to spatial information than deep features (Zhang et al., 2022b), while deep features pay more attention to channel information (Xu et al., 2019b). Secondly, current tracker designs rarely revisit specific components of previous tracking frameworks. For example, existing frameworks rarely check whether the quality of the response map has degraded, which results in tracking failure. This not only discards the guidance that the response map can provide about tracking quality, but also fails to correct abnormal situations such as multi-modality in a timely manner. Finally, most current trackers weaken the influence of the background rather than enhance the target information, which ignores the possibility that other candidate targets are the actual tracking objects. To further improve tracking performance, all of the problems analyzed above need to be addressed.

To solve the above problems, we propose an object tracking method based on target mask regularization and a distortion-aware mechanism. Firstly, inspired by Xu et al. (2019b), we use hand-crafted features and CNN features to perform grouped feature selection in the channel dimension, obtaining a compact feature representation. Secondly, we design a distortion-aware mechanism that guides filter selection by assessing the quality of the response map.

Extensive experiments conducted on multiple challenging tracking datasets show that the proposed tracking method achieves excellent performance. Our main contributions are as follows: (1) A target mask regularization correlation filter model is proposed. We construct the target mask matrix from the highest peak of the response map of the previous frame and multiply it with the current feature map to obtain a regularization term that contains only target information, which effectively distinguishes the target from the background. (2) A distortion-aware mechanism to guide filter selection is proposed. We use the peak-to-sidelobe ratio and the highest peak to evaluate the quality of the response map. When the quality of the response map corresponding to the filter is higher than a set threshold, the filter is considered reliable; otherwise, the saved high-quality filter is selected. (3) The optimization procedure of the proposed model is derived. The proposed objective function with the target mask is decomposed into several sub-problems, each of which has a closed-form solution, which simplifies the implementation of the tracking algorithm. (4) We comprehensively evaluate the effectiveness of our method on UAV123@10fps, DTB-70, and UAVDT. The results show that our tracker achieves competitive performance compared with other advanced trackers, with superior accuracy resulting from the combination of these components and robust multi-feature fusion. The code and data will be made public at https://github.com/upup99/DACFTM.

Our Distortion-Aware Correlation Filter with Target Mask (DACFTM) is a general CF model. The rest of this article is organized as follows: in Section 2, we will review the tracking methods closely related to this work. Section 3 will briefly discuss the proposed method and its technical details. Section 4 will present the experimental details and corresponding results of this article. Finally, in Section 5, this article is summarized.

Related Work

DCFs for Object Tracking

In 2010, the minimum output sum of squared error (MOSSE) algorithm (Bolme et al., 2010) was the first to introduce correlation filtering theory into the target tracking task, and it performed excellently in both accuracy and speed. However, owing to boundary effects, its ability to cope with complex target changes is poor. The circulant structure with kernels (CSK) algorithm (Henriques et al., 2012) followed closely, building on MOSSE with cyclic dense sampling to obtain training samples and a kernelized correlation filter. The kernelized correlation filter (KCF) algorithm (Henriques et al., 2014) further improved on CSK with multichannel HOG features. Danelljan et al. (2014) examined the value of color features in tracking and proposed the multichannel CN color feature after comprehensively evaluating feature extraction in multiple color spaces. Li and Zhu (2015) combined the complementary HOG and CN features, which became the commonly used hand-crafted features for subsequent correlation filter trackers. DeepSRDCF (Danelljan et al., 2015a) replaces the HOG feature with single-layer deep convolutional features from a VGG (visual geometry group) network, greatly improving tracking accuracy. GFSDCF (Xu et al., 2019b) uses a ResNet network and integrates HOG and CN features, which improves performance but reduces speed. In addition, DCF trackers employ the alternating direction method of multipliers (ADMM) to find fast-converging and accurate iterative solutions; ADMM is also widely used in image processing (Xu et al., 2019a), finance (Lai et al., 2020), and other fields.

In UAV object tracking scenarios, the target often undergoes more severe deformation, and the background also changes dramatically. To deal with this kind of interference, Zhang et al. (2022a) proposed a target-aware background suppression method with dual regression and introduced a new regularization term to improve the discriminative ability of the filter. Zheng et al. (2021) used adaptive hybrid labels to enhance the robustness of the model to sudden appearance changes, arguing that the quality of the predefined label has a great impact on the robustness of the tracker. Although these trackers improve tracking performance to a certain extent, they ignore the rapid change of target appearance in the real world. In our work, we use a target mask and a distortion-aware mechanism to deal with such challenges. In this way, the proposed tracker shows strong discriminative ability in UAV object tracking and adapts better to complex and changeable practical scenarios.

CNNs for Object Tracking

CNNs have achieved significant success in the field of computer vision. In object tracking, a CNN can continuously track targets in video sequences by learning their appearance features and motion patterns. In recent years, many tracking algorithms (Zhang et al., 2024b, 2024c, 2025) have been inspired by this approach. MDNet (Nam & Han, 2016) is an early tracking algorithm based entirely on CNNs, which improves the robustness and generalization ability of trackers through multi-domain learning. C-COT (Danelljan et al., 2016) improves tracking performance by introducing continuous convolution operators to handle target deformation and appearance changes and by adopting online learning strategies. CREST (Song et al., 2017) introduced residual learning into tracking: when there is a significant difference between the output of the base map and the true Gaussian response, the residual network supplements the output of the base map through summation, which brings the output closer to the true result. The SiamRPN algorithm (Li et al., 2018a) builds on SiamFC (Bertinetto et al., 2016) and applies the region proposal network from object detection, which extracts target candidate boxes, to target tracking; this substantially alleviates the problem of severe object deformation. In addition, with the recent popularity of transformer-based models, many researchers have applied them to visual tracking. STARK (Yan et al., 2021) uses a score discriminator to evaluate the score of the object; when the score exceeds a predetermined threshold, the tracking result is used as an online template. MixFormer (Cui et al., 2022) is an efficient single-stage tracking network that also adopts an online template update strategy and shows strong tracking performance.

In the domain of three-dimensional (3D) object tracking, several methods have been developed to enhance the discriminative capability of search area features through target-aware information propagation. For instance, Wang et al. (2021) introduced MLVSNet, which incorporates a target guided attention (TGA) module designed to transmit target information and emphasize relevant points in the search area. Similarly, Xiao et al. proposed a target-specific feature enhancement (TSFA) module (Qi et al., 2020) based on MLP layers to embed the target point cloud features into the search region, thereby improving feature matching. Extending this idea, Hui et al. (2021) presented a Siamese voxel-to-BEV tracker that performs template feature embedding to integrate template features into potential candidates, aiming to better capture the 3D structural characteristics of the target object. Deep learning algorithms show great potential in the field of target tracking. However, state-of-the-art vision transformer-based models (Zhang et al., 2025a, 2025b) typically rely on large-scale offline pre-training and demand heavy computational resources. These constraints often render them unsuitable for real-time deployment on resource-limited UAV platforms, highlighting the continued need for efficient, lightweight tracking solutions.

Proposed Method

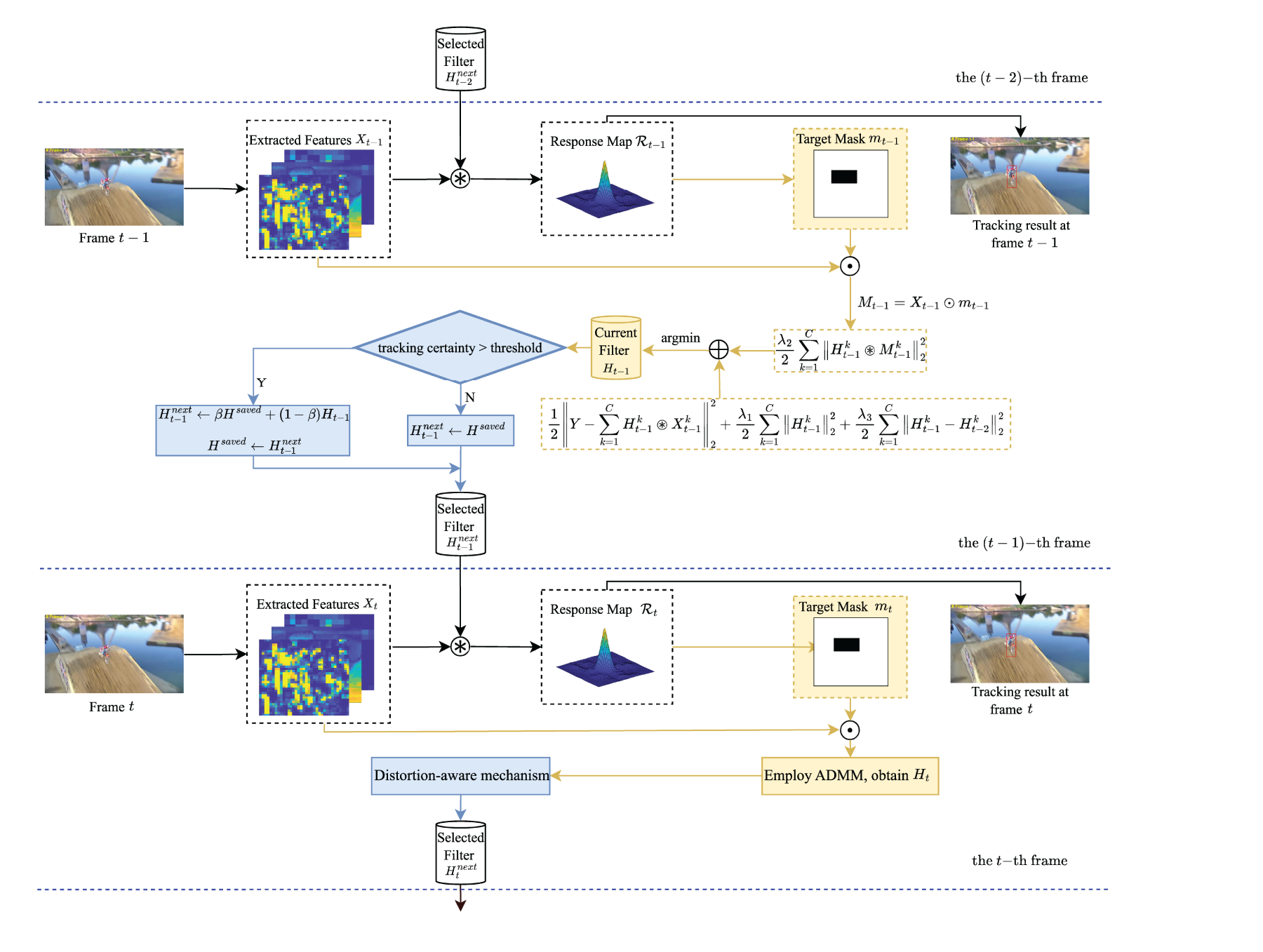

In this section, a detailed description of DACFTM will be provided. Its process can be summarized into three aspects: (1) target mask regularization; (2) distortion-aware mechanism; (3) high-quality filter selection. The overall flowchart of DACFTM is shown in Figure 1.

The framework of our proposed distortion-aware correlation filter with target mask (DACFTM) tracker. Here,

DCF (He et al., 2017) learns multichannel filters with multiple real training samples. Let

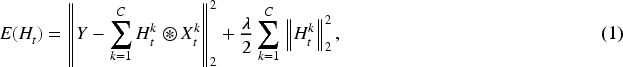

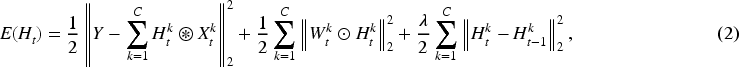

DCF is an effective visual tracking method, but it also brings inevitable boundary effects. The SRDCF algorithm based on spatial penalty thus emerged. At the same time, in order to solve the problem of online update, SRDCF established multiple training image models. Although this improves the tracking performance, it inevitably increases the complexity of the algorithm. Aiming at the problem of high complexity and low tracking efficiency, a correlation filter based on spatial–temporal regularization has emerged. This method can not only make a reasonable approximation of SRDCF in the case of multiple training samples, but also provide a more robust appearance model when significant appearance changes occur. The specific objective function is expressed as follows:
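For reference, the spatial-temporal regularized objective is commonly written in the following form in the STRCF paper (Li et al., 2018b). The notation below follows that original formulation and is an assumption here; it may differ from the symbols used elsewhere in this article. $\mathbf{x}_t^{d}$ is the $d$-th feature channel of the sample at frame $t$, $\mathbf{f}^{d}$ the corresponding filter channel, $\mathbf{y}$ the Gaussian-shaped label, $\mathbf{w}$ the spatial regularization weight, $\mu$ the temporal regularization parameter, $*$ the convolution operator, and $\cdot$ the Hadamard product.

```latex
\arg\min_{\mathbf{f}} \;
\frac{1}{2}\left\| \sum_{d=1}^{D} \mathbf{x}_t^{d} * \mathbf{f}^{d} - \mathbf{y} \right\|^{2}
+ \frac{1}{2}\sum_{d=1}^{D}\left\| \mathbf{w}\cdot\mathbf{f}^{d} \right\|^{2}
+ \frac{\mu}{2}\left\| \mathbf{f} - \mathbf{f}_{t-1} \right\|^{2}
```

The last term penalizes deviation from the filter learned in the previous frame, which is what lets a single training sample approximate the multi-sample SRDCF model.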

Generally, the appearance of a moving object changes continuously after the initial frame. UAV videos usually observe moving objects from a bird's-eye view, so changes in the positions of both the camera and the moving objects cause larger changes in the background and foreground of the target. Therefore, in UAV videos, it is particularly crucial either to focus on the information of moving objects or to reduce the influence of background information. In fact, enhancing the information of moving objects should be given primary consideration, because weakening the influence of background information often also suppresses potential targets, which subsequently has an adverse impact on the performance of the tracker.

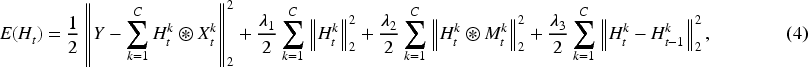

In this article, we propose to adopt the target mask method to enhance the information of the target object. As shown in Figure 1, DACFTM will generate a target feature block, where the target features are retained and the background feature values are set to zero. To ensure the accurate separation of the target and background regions in the template, we take the position with the highest peak in the response map of the previous frame as the center of the current target region and adopt the scale information of the tracking target. Let

The above operation serves as a crucial spatial gating mechanism. It effectively emphasizes features within the estimated target region while attenuating or zeroing out background features. By incorporating this
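The spatial gating described in this subsection can be illustrated with a short NumPy sketch. It is written under stated assumptions, not as the authors' implementation: the mask is taken to be binary and rectangular, the response map is assumed to share the spatial grid of the feature map, and `build_target_mask`, `apply_target_mask`, and `target_size` are hypothetical names introduced for illustration.

```python
import numpy as np

def build_target_mask(prev_response, target_size):
    """Binary mask centred on the peak of the previous frame's response map.

    prev_response: 2-D array (H, W); target_size: (height, width) in cells.
    The rectangular, binary shape of the mask is an assumption of this sketch.
    """
    h, w = prev_response.shape
    peak_row, peak_col = np.unravel_index(np.argmax(prev_response), prev_response.shape)
    th, tw = target_size
    top = max(peak_row - th // 2, 0)
    left = max(peak_col - tw // 2, 0)
    bottom = min(top + th, h)
    right = min(left + tw, w)
    mask = np.zeros((h, w), dtype=float)
    mask[top:bottom, left:right] = 1.0   # target region kept, background zeroed
    return mask

def apply_target_mask(features, mask):
    """Multiply an (H, W, D) feature map by the (H, W) mask, channel by channel."""
    return features * mask[:, :, None]

# Toy usage: an 8x8 response with its peak at (3, 4) and a 3x3 target.
response = np.zeros((8, 8))
response[3, 4] = 1.0
features = np.ones((8, 8, 2))
masked = apply_target_mask(features, build_target_mask(response, (3, 3)))
print(masked[:, :, 0])
```

Only the cells inside the estimated target box survive the multiplication, so any regularization term built from `masked` sees target information alone.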

Different from traditional visual tracking tasks, in UAV videos, the tracked objects are more likely to be occluded and may even exceed the image boundary. Most existing trackers have difficulty repositioning the target when it reappears. In view of this, this article proposes a distortion-aware mechanism to guide the selection of filters. This mechanism is designed to detect such unreliable response maps and prevent the tracker from learning incorrect information. Instead of updating the filter with bad data, it allows us to select a previously reliable filter, thus preventing tracking failure and maintaining robustness.
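The selection logic described above can be sketched as follows. This is a simplified illustration: `quality` and `threshold` stand for a response-map quality score and its cut-off, both supplied by the caller, and the function name is hypothetical. The filter fusion step that the method applies in the reliable case is not reproduced here.

```python
import numpy as np

def select_filter(current_filter, saved_filter, quality, threshold):
    """Return (filter to use in the next frame, updated saved filter).

    If the response map is judged reliable, the current filter is used and
    stored as the new high-quality filter. Otherwise the tracker falls back
    to the previously saved filter and leaves it unchanged.
    """
    if quality > threshold:
        return current_filter, current_filter.copy()
    return saved_filter, saved_filter

current = np.ones(4)     # filter learned in the current frame (toy values)
saved = np.zeros(4)      # last filter that passed the quality check
used, saved = select_filter(current, saved, quality=0.2, threshold=0.5)
print(used)              # the saved filter is reused because quality is low
```

Keeping the saved filter untouched in the unreliable branch is what prevents an occluded or distorted frame from contaminating the model.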

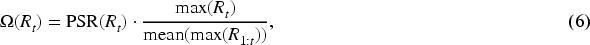

First of all, we must determine how to detect, with the help of the response map, tracking failure caused by conditions such as occlusion or deformation. Under normal circumstances, a clear response map has a sharp peak at the target position and is smooth elsewhere. However, once the target is occluded or deformed, the response map changes drastically, usually showing multiple peaks, and the height of each peak decreases accordingly. Based on this observation, two indicators can be used to evaluate the quality of the response map, namely (1) to evaluate the degree of the response map being a single peak, we adopt the peak-to-sidelobe ratio (Bolme et al., 2010),

In view of this, we decided to adopt a combined form of these two indicators. In order to be able to adaptively select the appropriate threshold, finally we give the specific definition of the evaluation index based on the peak-to-sidelobe ratio and the highest peak:

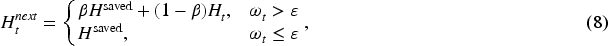

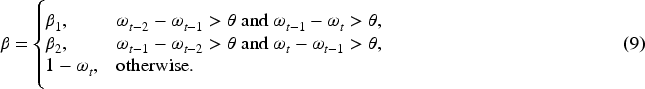
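The two ingredients of the evaluation index, the peak-to-sidelobe ratio and the highest peak, can be computed as in the sketch below. It follows the usual definition from Bolme et al. (2010), in which the sidelobe is the response map with a small window around the peak removed. The window half-width `exclude` is an assumed parameter, and the way the two indicators are combined into the final index is not reproduced here.

```python
import numpy as np

def peak_to_sidelobe_ratio(response, exclude=5):
    """PSR = (peak - mean(sidelobe)) / std(sidelobe).

    The sidelobe is every cell outside a (2*exclude+1)^2 window centred on
    the peak; `exclude` is an assumption of this sketch.
    """
    r, c = np.unravel_index(np.argmax(response), response.shape)
    peak = response[r, c]
    sidelobe = np.ones(response.shape, dtype=bool)
    sidelobe[max(r - exclude, 0):r + exclude + 1,
             max(c - exclude, 0):c + exclude + 1] = False
    side = response[sidelobe]
    return (peak - side.mean()) / (side.std() + 1e-12)

# Toy response maps: one clean peak versus two competing, lower peaks.
yy, xx = np.mgrid[0:50, 0:50]
single = np.exp(-((yy - 25) ** 2 + (xx - 25) ** 2) / (2 * 2.0 ** 2))
double = 0.6 * single + 0.5 * np.exp(-((yy - 10) ** 2 + (xx - 40) ** 2) / (2 * 2.0 ** 2))
print(peak_to_sidelobe_ratio(single), single.max())
print(peak_to_sidelobe_ratio(double), double.max())
```

The multi-peak map yields both a lower PSR and a lower maximum, which is the behaviour the distortion-aware mechanism relies on to flag unreliable frames.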

According to equation (7), we further propose a filter selection mechanism:

When

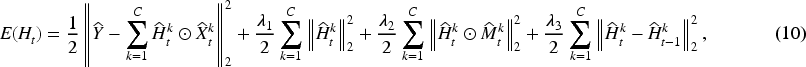

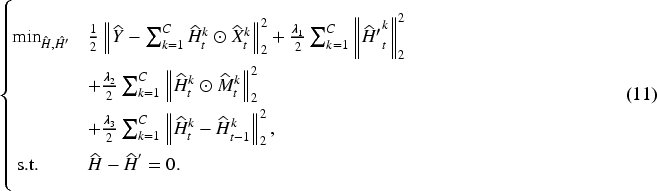

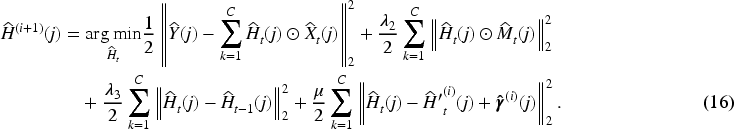

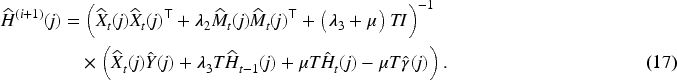

To enhance the computational efficiency, the calculation in the time domain is typically transformed to the frequency domain for the optimized calculation of the correlation filter. Applying the Parseval theorem to equation (4), the following equivalent optimization equation can be obtained:

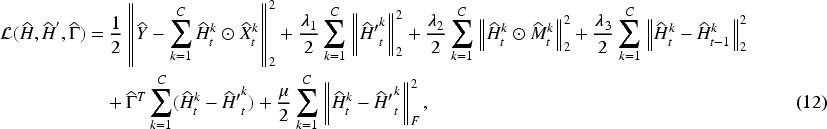

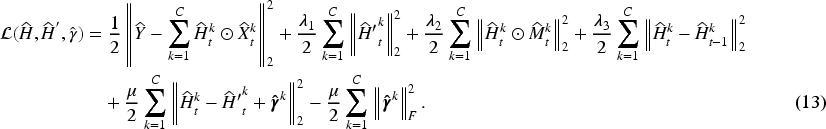
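Parseval's theorem, which justifies moving the least-squares terms to the frequency domain, can be verified numerically. The sketch below uses NumPy's unnormalized DFT convention, under which the spatial-domain energy equals the frequency-domain energy divided by the signal length; the function names are illustrative.

```python
import numpy as np

def energy_spatial(x):
    """Squared L2 norm computed in the spatial domain."""
    return np.sum(np.abs(x) ** 2)

def energy_frequency(x):
    """Same quantity from the DFT coefficients (NumPy's unnormalized FFT)."""
    x_hat = np.fft.fft(x)
    return np.sum(np.abs(x_hat) ** 2) / len(x)

rng = np.random.default_rng(0)
residual = rng.standard_normal(64)   # stand-in for a regression residual
print(np.isclose(energy_spatial(residual), energy_frequency(residual)))
```

Because the two energies differ only by a constant factor, minimizing the objective over the DFT coefficients gives the same minimizer as minimizing it in the spatial domain.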

After introducing the auxiliary variable, the above equation becomes a bi-variable optimization problem. Usually, we can use the augmented Lagrangian multiplier to increase the convergence speed of the ADMM method. After augmenting the above equation, we can obtain the following equation:

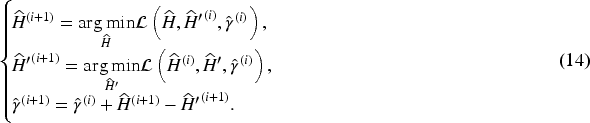

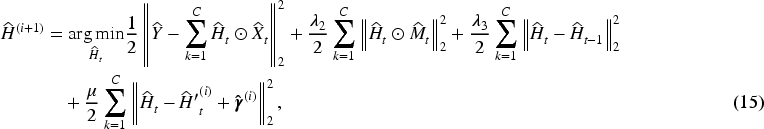

Next, by alternately updating

Here, the first two equations are the update formulas of the two sub-problems, and both

Therefore, for simple calculation, the dimension can be decomposed into the coordinate position of each pixel, that is, equivalently decomposed into

The

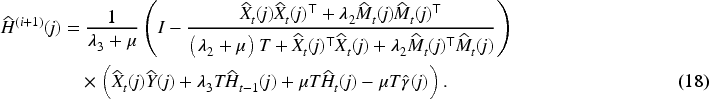

The inverse of the composite matrix in the above equation can be further simplified using the Sherman-Morrison formula (Sherman & Morrison, 1950). We therefore obtain the following:

The above equation no longer contains the calculation of inversion but only simple multiplication and addition calculations of vectors, so it can achieve higher computational efficiency.
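The effect of the Sherman-Morrison formula can be illustrated on a matrix of the form $c\mathbf{I} + \mathbf{u}\mathbf{v}^{\top}$, a scaled identity plus a rank-one term. The sketch below uses real-valued vectors for simplicity, whereas the per-pixel systems in the tracker are complex-valued, and the function name is illustrative. It solves the linear system with vector operations only and compares the result with a general solver.

```python
import numpy as np

def sherman_morrison_solve(u, v, c, b):
    """Solve (c*I + u v^T) z = b using only vector products.

    From the Sherman-Morrison formula:
    (c*I + u v^T)^{-1} = I/c - (u v^T) / (c * (c + v^T u)).
    """
    return b / c - u * (v @ b) / (c * (c + v @ u))

rng = np.random.default_rng(0)
u = rng.standard_normal(6)
b = rng.standard_normal(6)
c = 2.0
z_fast = sherman_morrison_solve(u, u, c, b)
z_ref = np.linalg.solve(c * np.eye(6) + np.outer(u, u), b)
print(np.allclose(z_fast, z_ref))
```

The fast version costs a few inner products per pixel, compared with cubic cost in the number of channels for a general matrix inversion.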

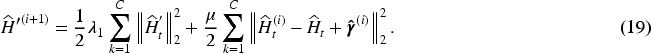

According to Chen et al. (2009) and Zhang et al. (2012), setting the first derivative of the above equation equal to zero, the optimal solution can be calculated as follows:

The

The Lagrangian multipliers can be updated as follows:

Similar to other trackers (Kiani Galoogahi et al., 2017; Li et al., 2018b), we adopt the online adaptive template scheme to train the correlation filter, which enhances the robustness of the tracker against appearance changes and illumination variations of the target. The online adaptive update method of the filter model is defined as follows:
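A common form of such an online update, used for instance in BACF-style trackers, is a linear interpolation between the previous template model and the features of the current frame. Whether DACFTM uses exactly this form is an assumption of the sketch below, and the learning rate value is hypothetical.

```python
import numpy as np

def update_template(prev_model, current_features, learning_rate):
    """Linear-interpolation update: (1 - eta) * previous + eta * current."""
    return (1.0 - learning_rate) * prev_model + learning_rate * current_features

model = np.zeros(3)                 # toy template model from earlier frames
observation = np.ones(3)            # toy features from the current frame
model = update_template(model, observation, learning_rate=0.02)  # assumed rate
print(model)
```

A small learning rate lets the model follow gradual appearance and illumination changes while limiting the influence of any single frame.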

In the target detection process, we need to extract the multichannel feature

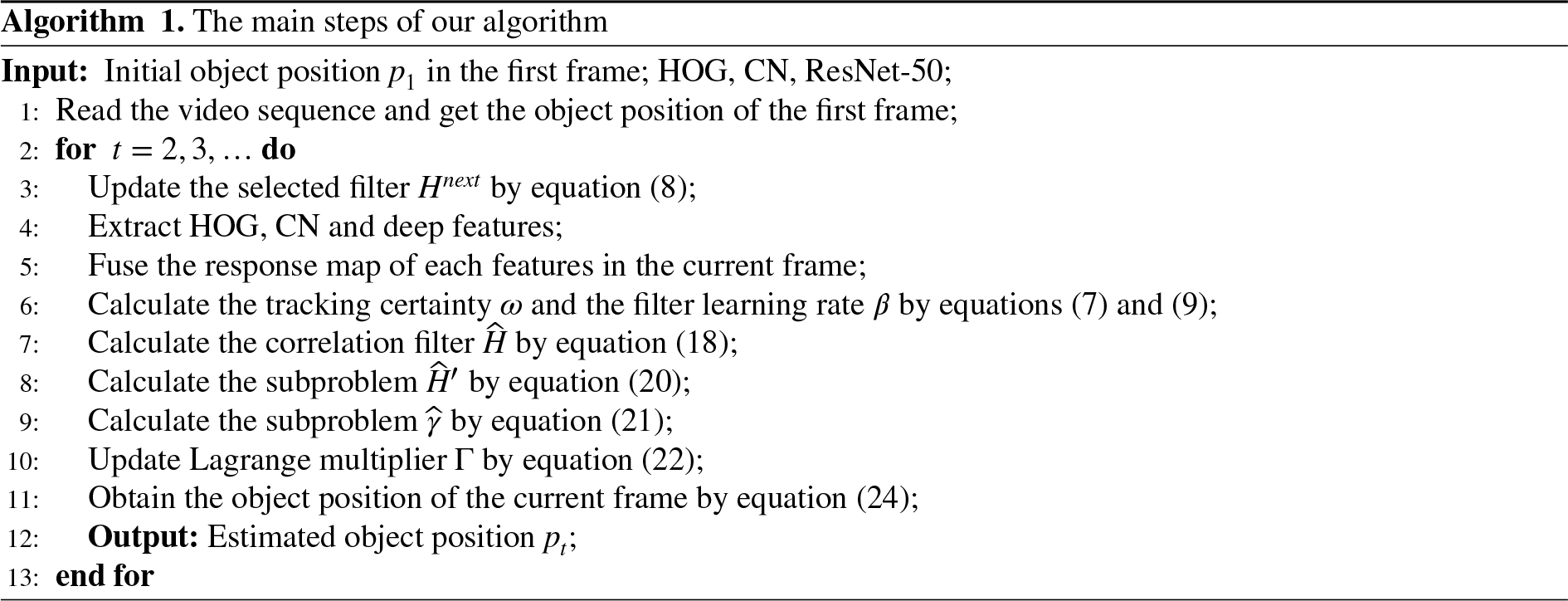

To verify the tracking performance of the DACFTM we proposed, we conducted a large number of comparative experiments and ablation experiments on three mainstream UAV tracking datasets against 18 advanced trackers. These extensive evaluations include detailed ablation studies to analyze component impact, and comparisons with recent state-of-the-art methods.

Implementation Details

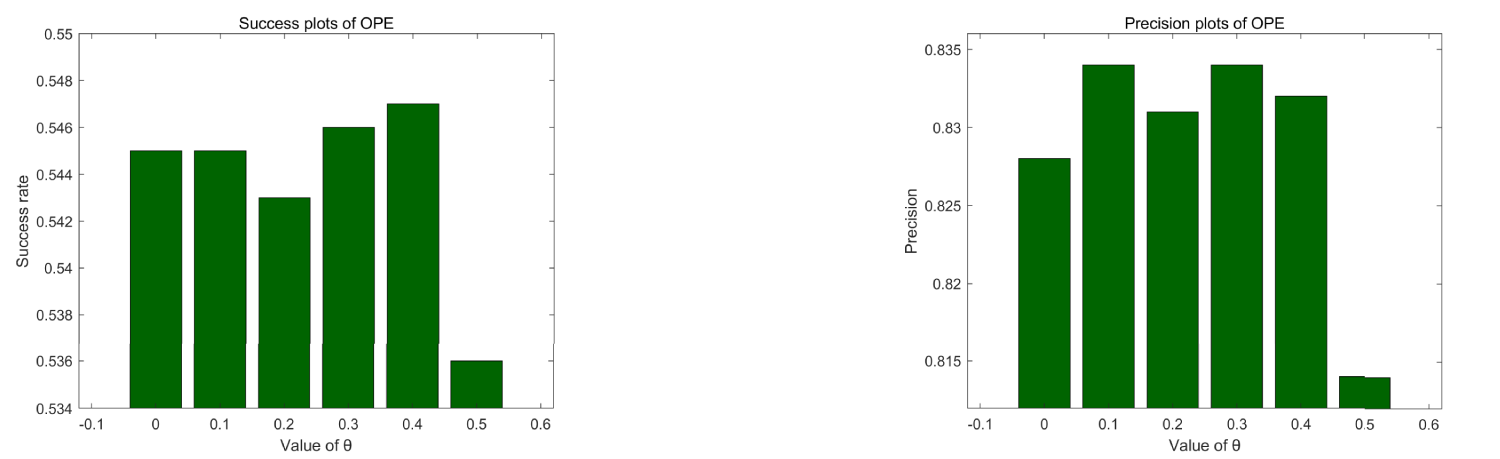

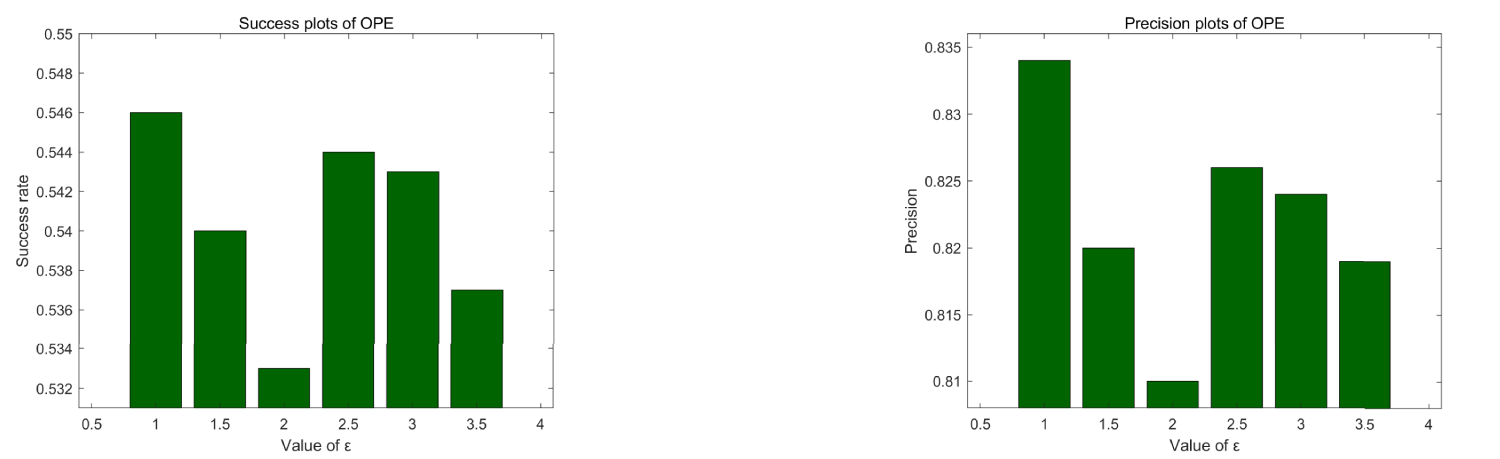

To guarantee that the comparison experiments are conducted fairly and impartially, only the ground truth of the target in the first frame is provided for all tested videos. We implemented DACFTM in MATLAB 2020b, using MatConvNet-v1.0 as a toolbox with CUDA 10.2. The computational platform was a PC with a 2.5 GHz CPU and 16 GB of RAM. We used hand-crafted features (CN and HOG) together with robust high-dimensional CNN features extracted with ResNet-50 (He et al., 2016). Despite the inclusion of these multi-level features, our proposed DACFTM maintains high efficiency, achieving an average processing speed of 69.7 FPS, which satisfies the real-time requirements of UAV tracking. Regarding the parameter selection for our experiments, the following values were specifically chosen: For the parameters in the objective function, we set

To obtain the results of all compared methods, we ensured a fair evaluation under the same conditions as described in this section. We achieved this by running the publicly available code of each tracker on the UAV benchmarks (UAV123@10fps, DTB-70, and UAVDT). In cases where direct code execution was not feasible or was less efficient, we used published benchmark scores, ensuring that all comparison results were obtained within a standardized testing framework.

Compared With Advanced Trackers

In this section, we compare our method with other advanced trackers.

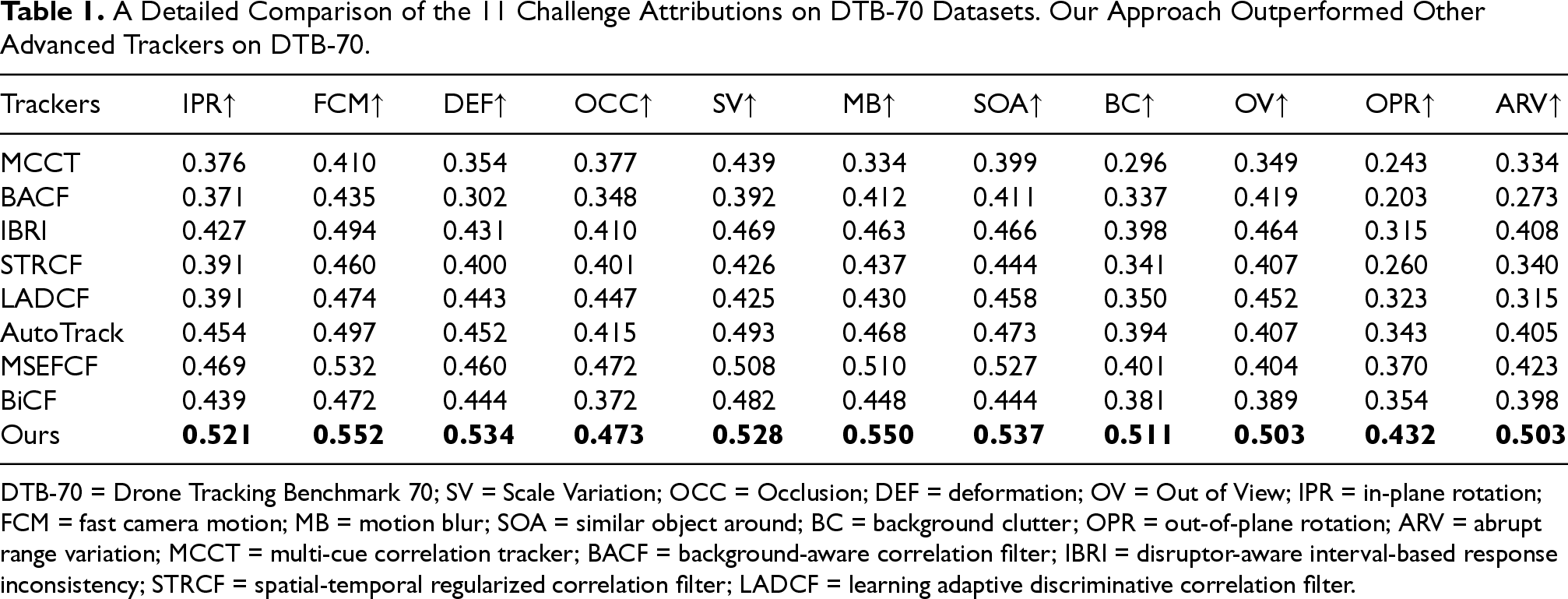

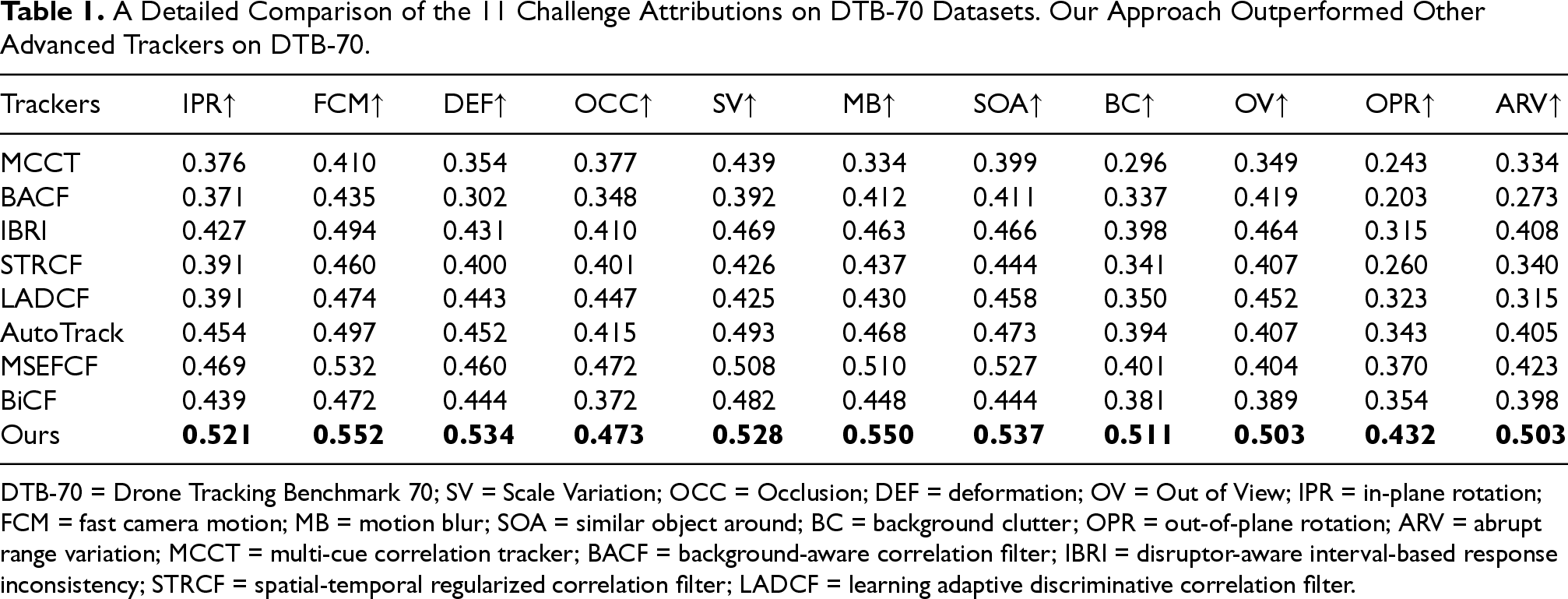

DTB-70

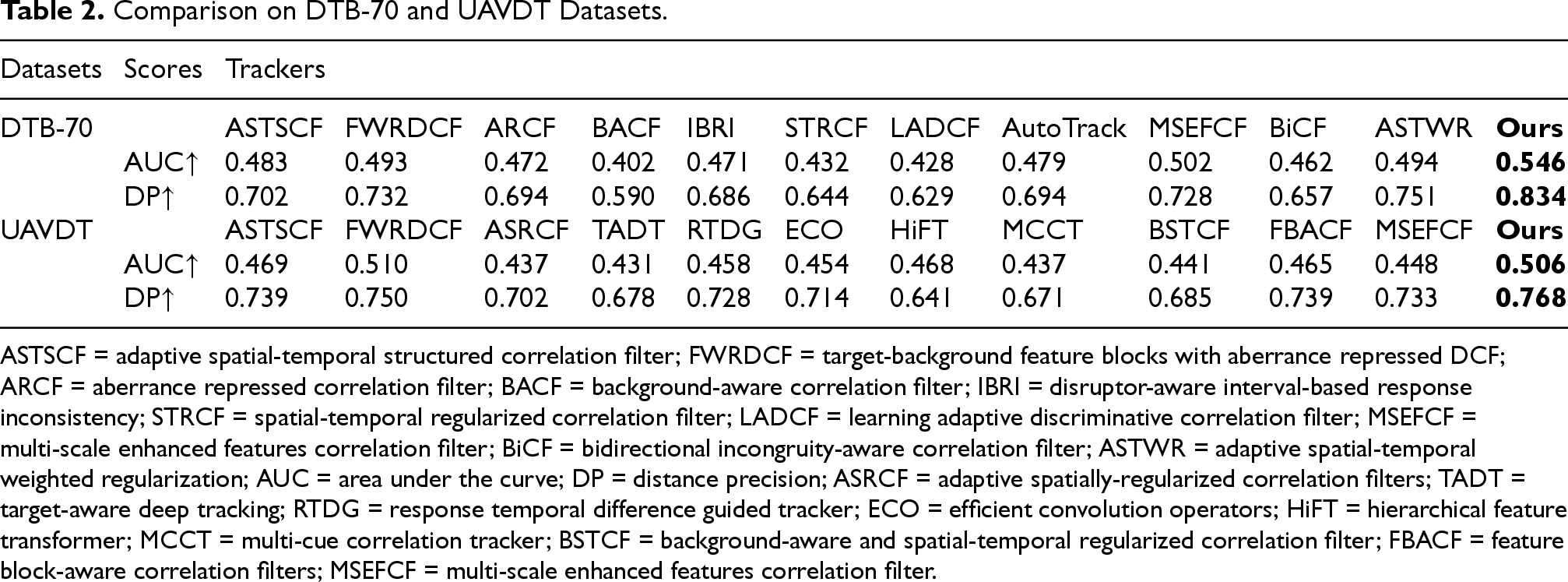

We evaluated DACFTM on the Drone Tracking Benchmark 70 (DTB-70), which contains 70 RGB video sequences captured from the perspective of unmanned aerial vehicles; the attribute-wise results are given in Table 1. In Table 2, we compare DACFTM with 11 other tracking methods: ARCF (Huang et al., 2019), BACF (Kiani Galoogahi et al., 2017), IBRI (Fu et al., 2020), STRCF (Li et al., 2018b), LADCF (Xu et al., 2019c), AutoTrack (Li et al., 2020), MSEFCF (Yu et al., 2024), BiCF (Lin et al., 2020), ASTWR (Chen et al., 2024), ASTSCF (Li et al., 2025), and FWRDCF (Jia et al., 2025).

A Detailed Comparison of the 11 Challenge Attributes on the DTB-70 Dataset. Our Approach Outperformed Other Advanced Trackers on DTB-70.


DTB-70 = Drone Tracking Benchmark 70; SV = scale variation; OCC = occlusion; DEF = deformation; OV = out of view; IPR = in-plane rotation; FCM = fast camera motion; MB = motion blur; SOA = similar object around; BC = background clutter; OPR = out-of-plane rotation; ARV = aspect ratio variation; MCCT = multi-cue correlation tracker; BACF = background-aware correlation filter; IBRI = disruptor-aware interval-based response inconsistency; STRCF = spatial-temporal regularized correlation filter; LADCF = learning adaptive discriminative correlation filter.

Comparison on DTB-70 and UAVDT Datasets.

ASTSCF = adaptive spatial-temporal structured correlation filter; FWRDCF = target-background feature blocks with aberrance repressed DCF; ARCF = aberrance repressed correlation filter; BACF = background-aware correlation filter; IBRI = disruptor-aware interval-based response inconsistency; STRCF = spatial-temporal regularized correlation filter; LADCF = learning adaptive discriminative correlation filter; MSEFCF = multi-scale enhanced features correlation filter; BiCF = bidirectional incongruity-aware correlation filter; ASTWR = adaptive spatial-temporal weighted regularization; AUC = area under the curve; DP = distance precision; ASRCF = adaptive spatially-regularized correlation filters; TADT = target-aware deep tracking; RTDG = response temporal difference guided tracker; ECO = efficient convolution operators; HiFT = hierarchical feature transformer; MCCT = multi-cue correlation tracker; BSTCF = background-aware and spatial-temporal regularized correlation filter; FBACF = feature block-aware correlation filters.

As shown in Table 2, our tracker achieved the best scores. The success rate was 5.2% higher than ASTWR, 6.7% higher than AutoTrack, and 8.4% higher than BiCF. The precision was 8.3% higher than ASTWR and 10.6% higher than MSEFCF. Secondly, we compared the performance with eight of these tracking methods in specific challenging scenarios. As shown in Table 1, DACFTM achieved the best performance in scenarios such as Scale Variation (SV), Occlusion (OCC), deformation (DEF), and Out of View (OV). Our tracker is mainly designed to address occlusion and changes in moving objects in UAV tracking, and DACFTM can exhibit the best results in these challenging scenarios.
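For readers unfamiliar with the two metrics, the sketch below shows how precision (DP) and success rate (AUC) are conventionally computed in one-pass evaluation. The 20-pixel precision threshold and the 21 evenly spaced overlap thresholds follow the common OTB-style protocol and are assumptions here, as are the function names and the `(x, y, w, h)` box format.

```python
import numpy as np

def center_error(pred, gt):
    """Euclidean distance between box centres; boxes are rows of (x, y, w, h)."""
    pred_center = pred[:, :2] + pred[:, 2:] / 2.0
    gt_center = gt[:, :2] + gt[:, 2:] / 2.0
    return np.linalg.norm(pred_center - gt_center, axis=1)

def iou(pred, gt):
    """Intersection over union for each pair of boxes."""
    x1 = np.maximum(pred[:, 0], gt[:, 0])
    y1 = np.maximum(pred[:, 1], gt[:, 1])
    x2 = np.minimum(pred[:, 0] + pred[:, 2], gt[:, 0] + gt[:, 2])
    y2 = np.minimum(pred[:, 1] + pred[:, 3], gt[:, 1] + gt[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    union = pred[:, 2] * pred[:, 3] + gt[:, 2] * gt[:, 3] - inter
    return inter / union

def distance_precision(pred, gt, pixel_threshold=20.0):
    """Fraction of frames whose centre error is within the threshold (assumed 20 px)."""
    return float(np.mean(center_error(pred, gt) <= pixel_threshold))

def success_auc(pred, gt, n_thresholds=21):
    """Mean success rate over overlap thresholds evenly spaced in [0, 1]."""
    overlaps = iou(pred, gt)
    thresholds = np.linspace(0.0, 1.0, n_thresholds)
    return float(np.mean([(overlaps > t).mean() for t in thresholds]))

# Toy sequence of two frames: one perfect prediction and one complete miss.
gt = np.array([[0.0, 0.0, 10.0, 10.0], [0.0, 0.0, 10.0, 10.0]])
pred = np.array([[0.0, 0.0, 10.0, 10.0], [100.0, 100.0, 10.0, 10.0]])
print(distance_precision(pred, gt), success_auc(pred, gt))
```

Precision only measures localization of the centre, whereas the success rate also penalizes errors in scale, which is why the two numbers can rank trackers differently.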

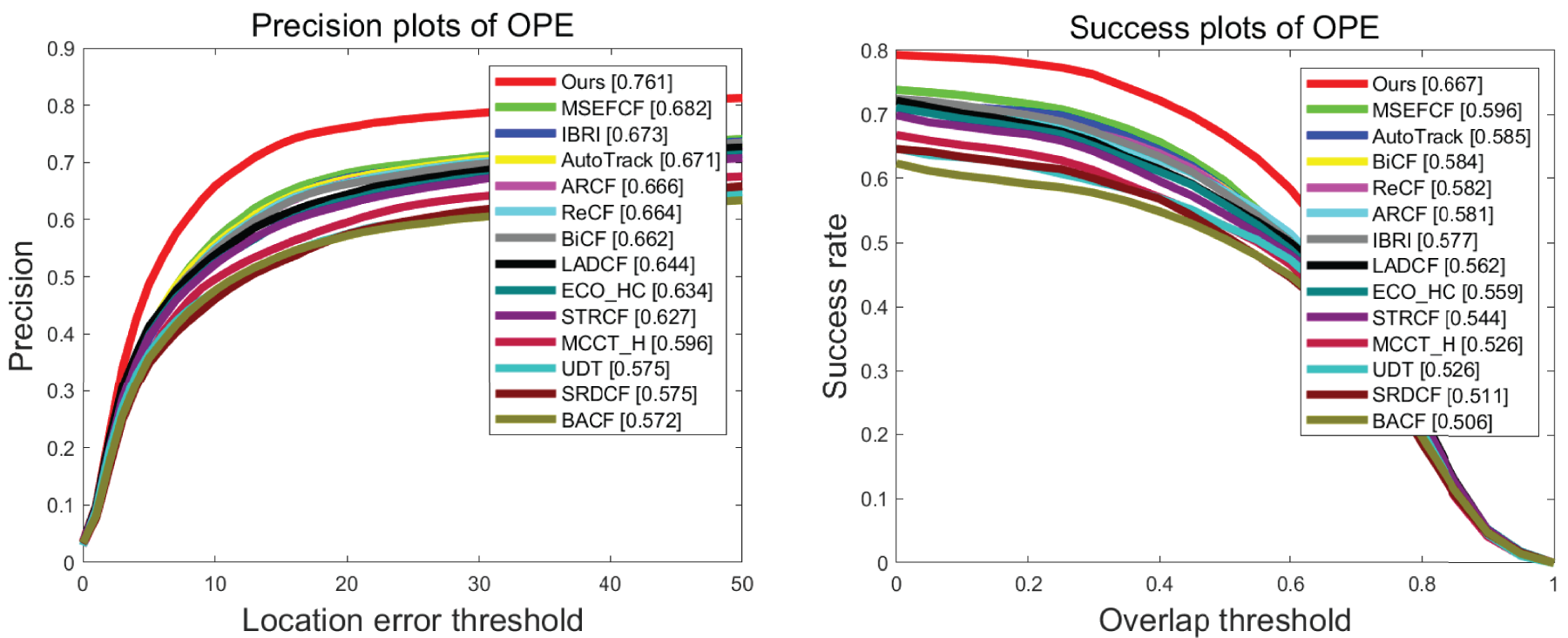

We comprehensively evaluated DACFTM on the UAV123@10fps dataset, which contains 91 UAV video sequences. We compared DACFTM with 13 other tracking methods: MCCT_H (Wang et al., 2018), BACF (Kiani Galoogahi et al., 2017), IBRI (Fu et al., 2020), STRCF (Li et al., 2018b), LADCF (Xu et al., 2019c), AutoTrack (Li et al., 2020), MSEFCF (Yu et al., 2024), ARCF (Huang et al., 2019), BiCF (Lin et al., 2020), ReCF (Lin et al., 2021), ECO_HC (Danelljan et al., 2017), UDT (Wang et al., 2019), and SRDCF (Danelljan et al., 2015b).

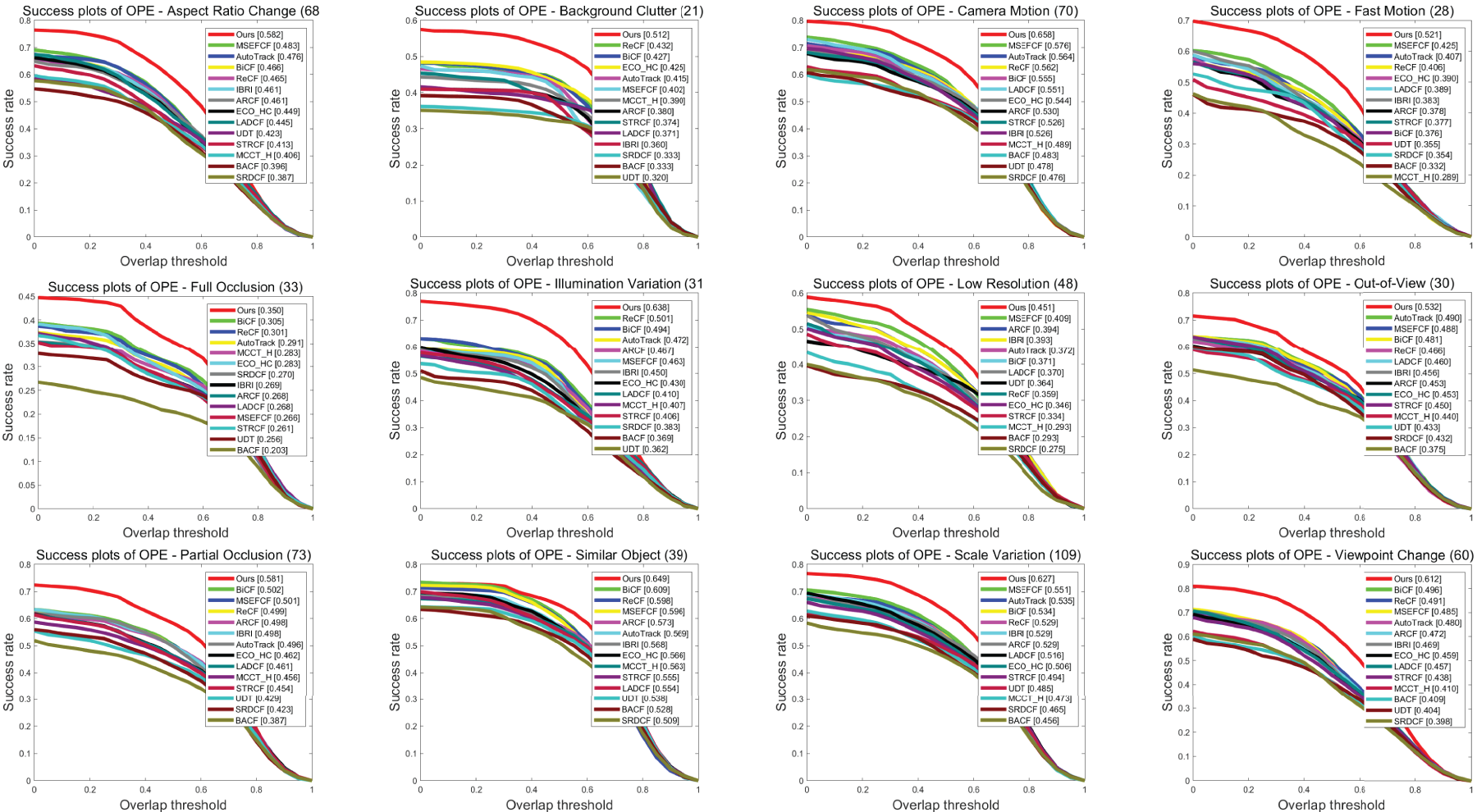

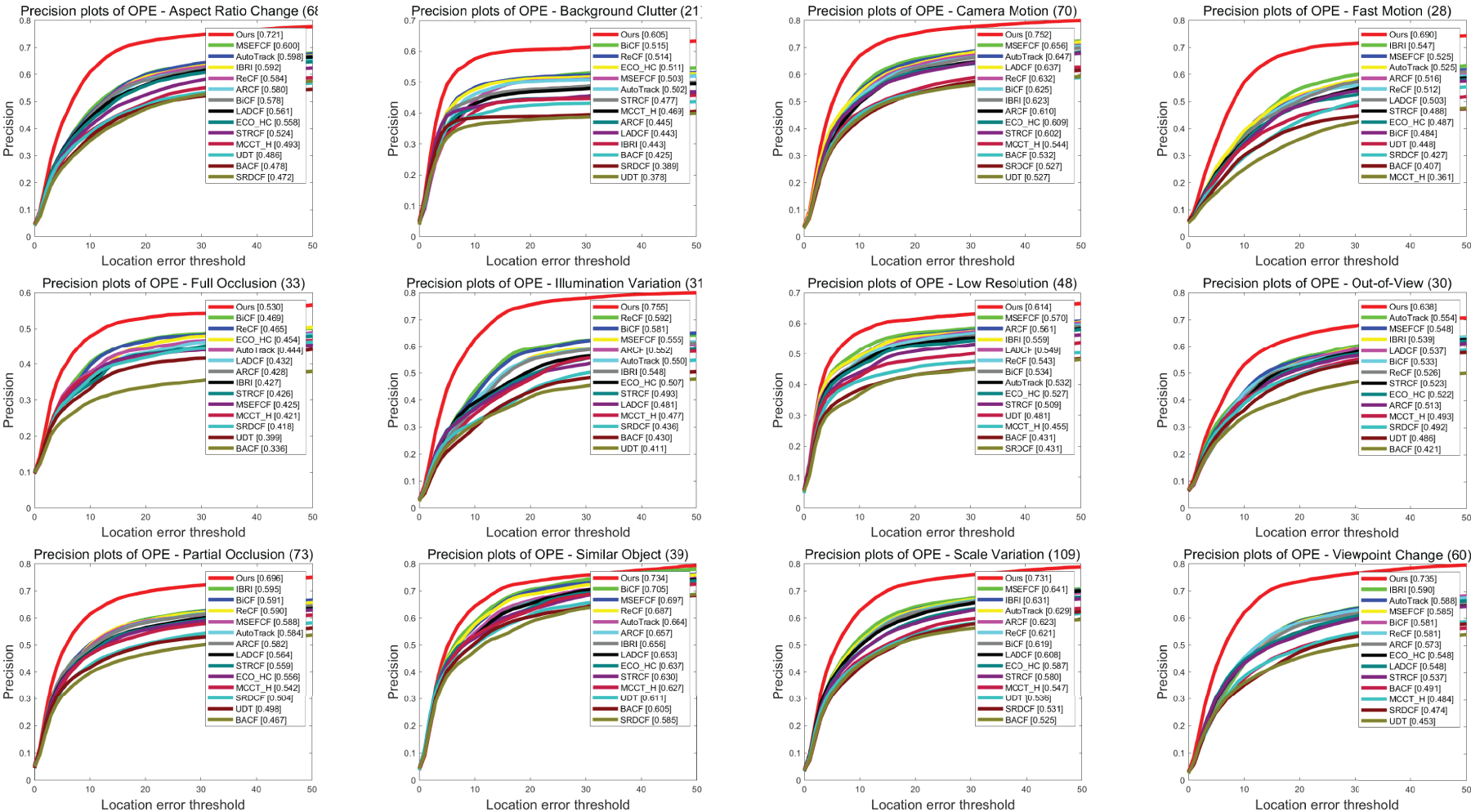

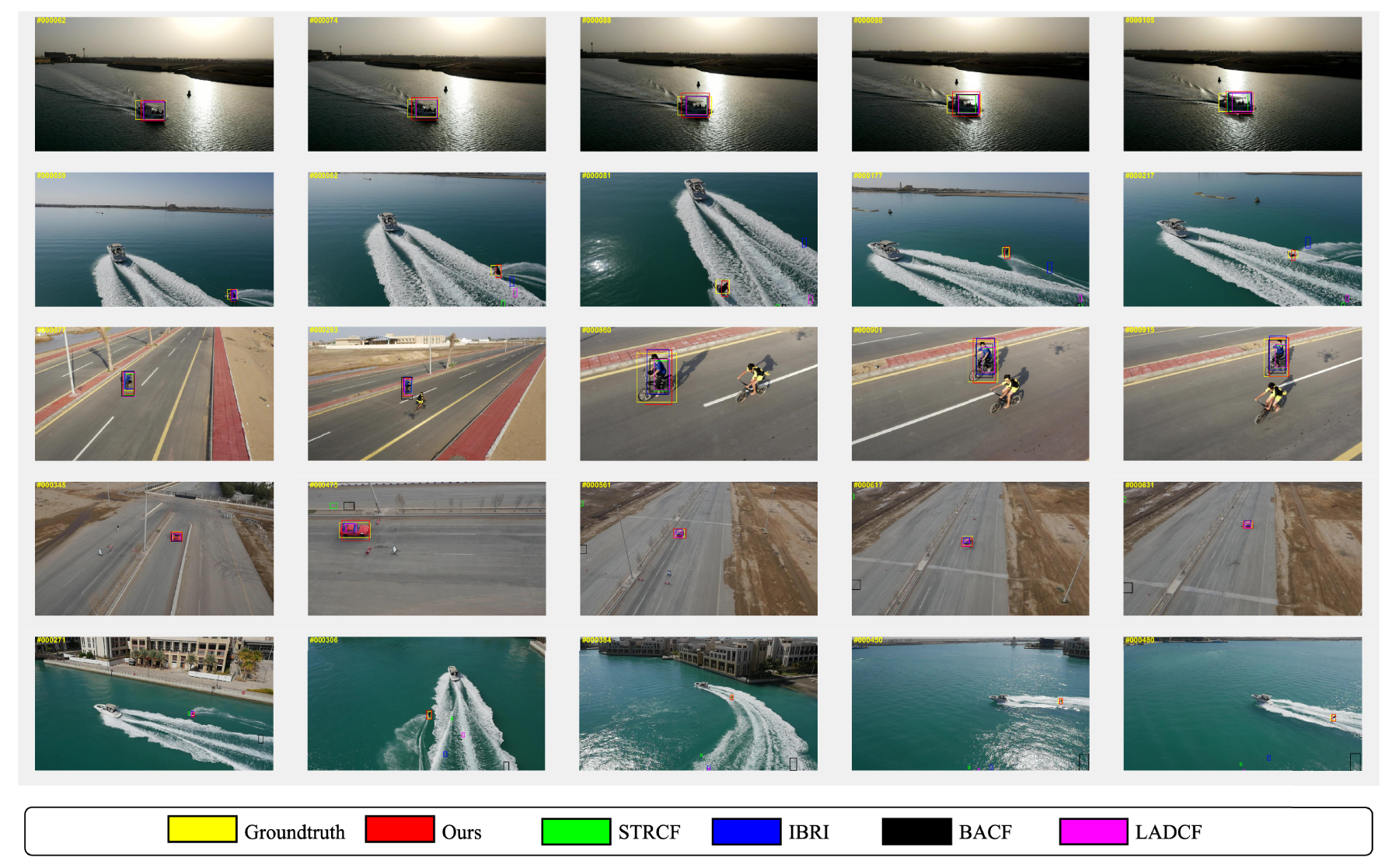

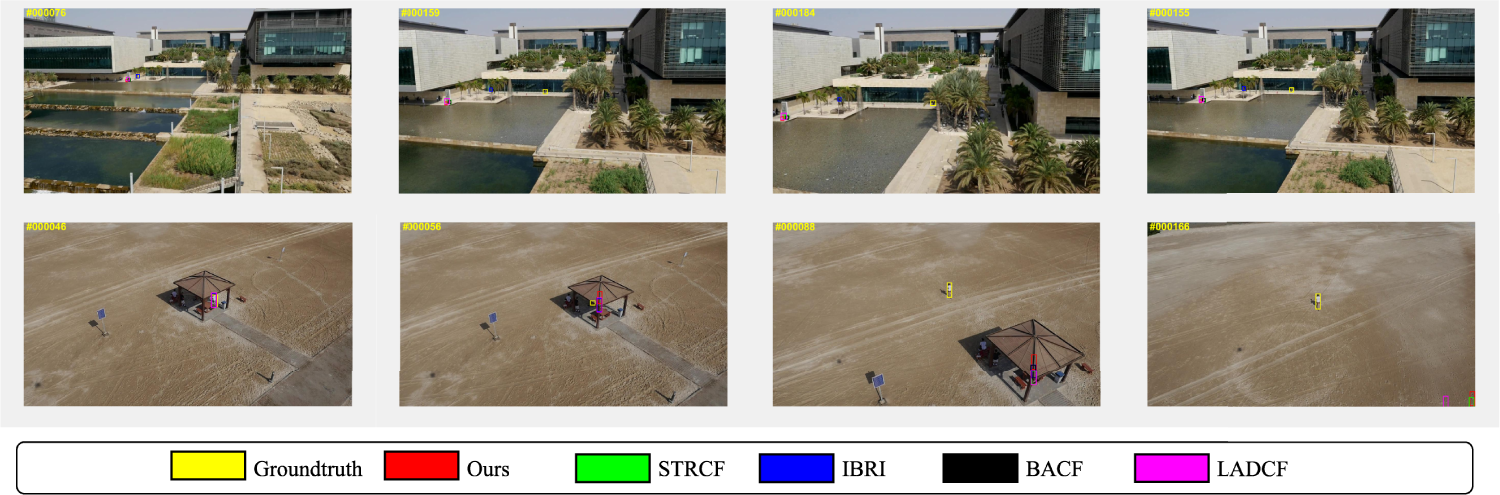

As shown in Figure 2, our tracker achieved the best scores in both tracking success rate and precision. The success rate was 8.2% higher than AutoTrack, 10.5% higher than LADCF, and 7.1% higher than MSEFCF. In terms of precision, it was 8.8% higher than IBRI, 7.9% higher than MSEFCF, and 9.9% higher than BiCF. Secondly, to evaluate the robustness of DACFTM, we compared the performance of each tracker in different challenging scenarios. As shown in Figures 3 and 4, our method achieved the best performance in scenarios such as scale variation (SV), partial occlusion (PO), low resolution (LR), and out of view (OV). Finally, we performed a qualitative evaluation on this dataset against four other tracking methods, using several different video sequences. Figure 5 shows the tracking results of these trackers on five sequences. Overall, our tracker tracks the target more accurately. For instance, in the wakeboard sequence, all the other trackers lost the target, whereas our tracker still located it successfully. To further analyze the limitations of the proposed method, we present several representative failure cases in Figure 6, where the tracker performs poorly under extremely challenging conditions. As shown in these sequences, tracking failure mainly occurs in situations involving long-term severe occlusion, abrupt camera motion, fast target deformation, and significant background interference. Under such conditions, the response map becomes highly ambiguous, and even the distortion-aware mechanism may fail to recover reliable target information because discriminative visual cues are absent.

Success rate and precision of compared algorithms on UAV123@10fps.

Comparisons of success rates for 12 challenging attributes on UAV123@10fps. For ARC, BC, CM, FM, FO, IV, LR, OV, PO, SO, SV and VC, our DACFTM achieved better tracking performance compared to other advanced trackers. ARC = aspect ratio change; BC = background clutter; CM = camera motion; FM = fast motion; FO = full occlusion; IV = illumination variation; LR = low resolution; OV = out of view; PO = partial occlusion; SO = similar object; SV = scale variation; VC = viewpoint change; DACFTM = distortion-aware correlation filter with target mask.

Comparisons of precision for 12 challenging attributes on UAV123@10fps. For ARC, BC, CM, FM, FO, IV, LR, OV, PO, SO, SV and VC, our DACFTM achieved better tracking performance compared to other advanced trackers. ARC = aspect ratio change; BC = background clutter; CM = camera motion; FM = fast motion; FO = full occlusion; IV = illumination variation; LR = low resolution; OV = out of view; PO = partial occlusion; SO = similar object; SV = scale variation; VC = viewpoint change; DACFTM = distortion-aware correlation filter with target mask.

Comparison of tracking quality with four other advanced trackers in five challenging image sequences (from top to bottom: boat4, wakeboard2, bike1, car16_2, and wakeboard8).

Failure cases, compared with four other advanced trackers, in two challenging image sequences (from top to bottom: bike2 and person14_1). All sequences in this figure show poor tracking performance under extremely difficult conditions, including abrupt camera motion, fast target deformation, and background clutter. These examples highlight the limitations of the proposed method when the visual appearance of the target is heavily corrupted.

Ablation experiment of the initial value selection of

Ablation experiment of the initial value selection of

Finally, we evaluated DACFTM on the UAVDT dataset, which contains 50 video sequences captured from the perspective of unmanned aerial vehicles and covers 14 challenge attributes. We compared DACFTM with 11 other trackers: ASRCF (Dai et al., 2019), TADT (Li et al., 2019), RTDG (Lin et al., 2021), ECO (Danelljan et al., 2017), HiFT (Cao et al., 2021), MCCT (Wang et al., 2018), BSTCF (Zhang et al., 2023), FBACF (Zhang et al., 2024a), MSEFCF (Yu et al., 2024), ASTSCF (Li et al., 2025), and FWRDCF (Jia et al., 2025).

We reported the performance of each tracker on this dataset in Table 2. Our DACFTM achieved the best scores in both tracking success rate and precision. The success rate was 6.5% higher than BSTCF and 4.1% higher than FBACF. The precision was 3.5% higher than MSEFCF and 4.0% higher than RTDG.

Ablation Studies

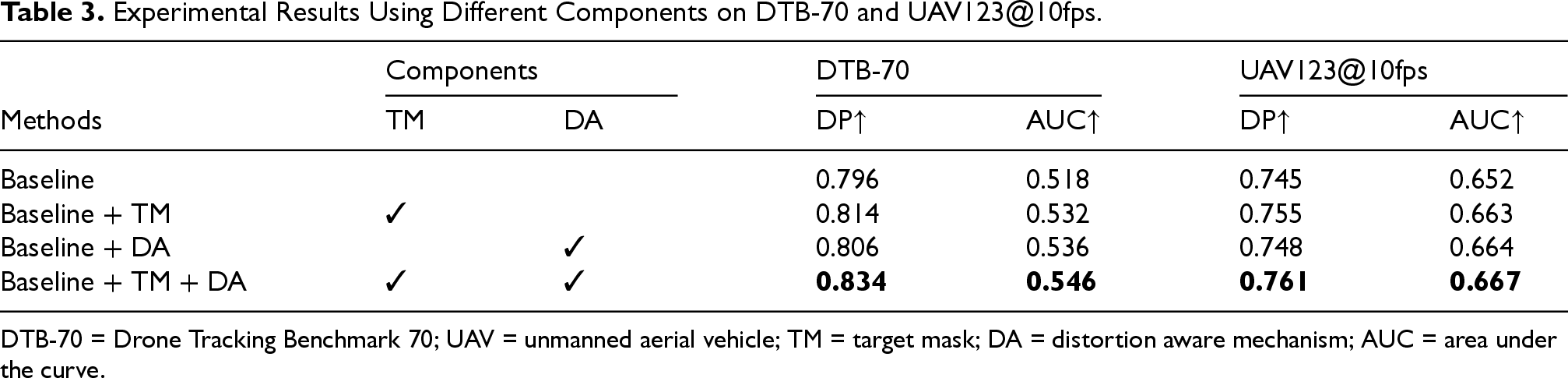

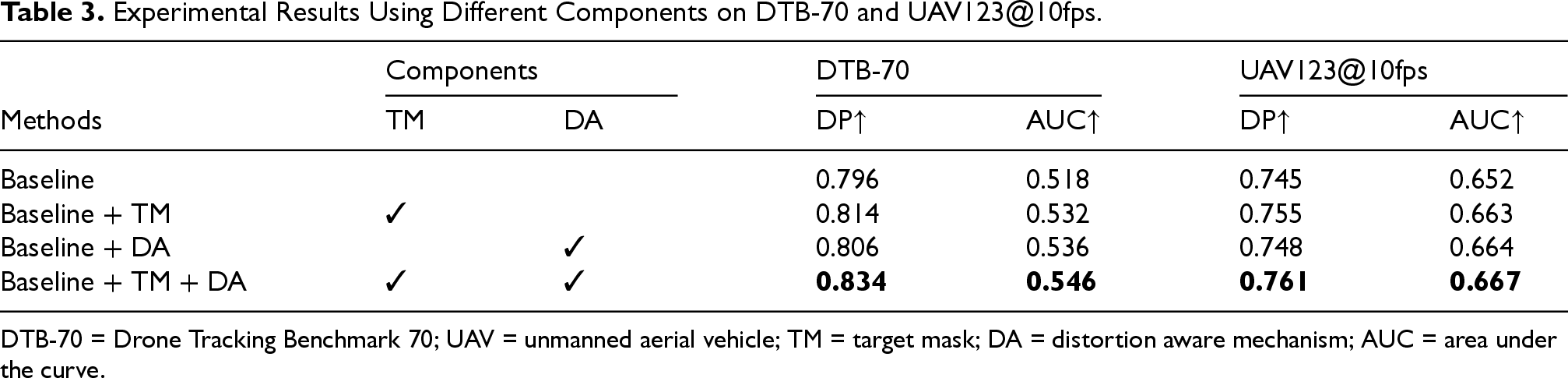

The Effectiveness of Different Components

We further conduct ablation experiments to demonstrate the effectiveness of the respective components of the DACFTM tracker, including target mask (TM) and distortion aware mechanism (DA). The baseline tracker is the tracker that does not use the target mask and distortion aware mechanism. Based on the baseline, we verified the effectiveness of our method through the combination of different components.

According to the results reported in Table 2, the tracker proposed in this article shows a better tracking success rate and precision than the other trackers. On the DTB-70 dataset, it is 4.4% higher in success rate and 10.6% higher in precision than MSEFCF. Secondly, as shown in Table 3, the proposed target mask and filter update strategy improve the performance of the baseline. The target mask and distortion-aware mechanism increase the success rate by 1.4%/1.8% and the precision by 1.8%/1.0%, respectively. Note that the contributions of the target mask and the distortion-aware mechanism do not combine linearly. The target mask enhances the model's perception of the target by acting on the objective function, while the distortion-aware mechanism maintains a high-quality filter by judging whether the current response map is reliable. When both components are enabled, our tracker achieves its best performance, which is 2.8% higher in success rate and 3.8% higher in precision than the baseline. These results verify the effectiveness of the proposed method.

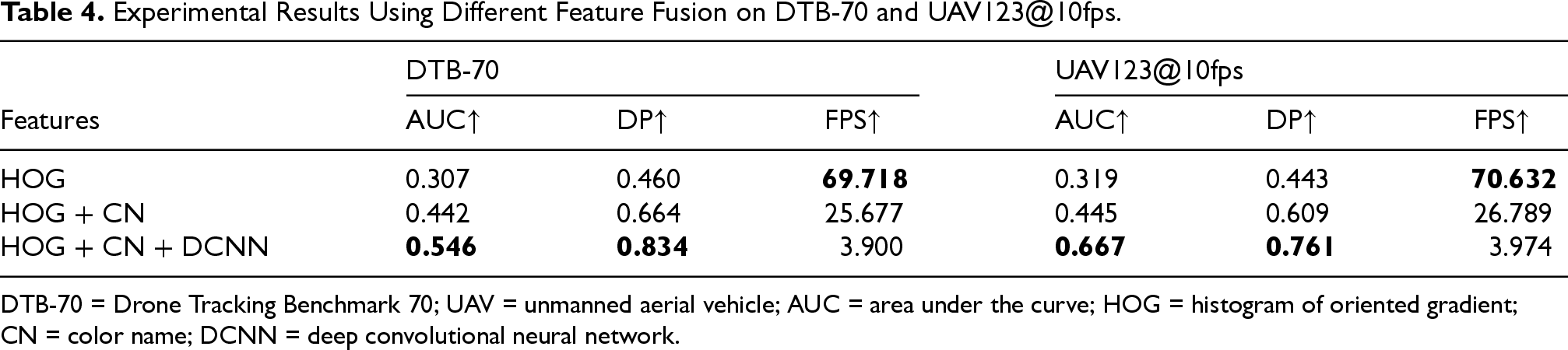

The Effectiveness of Different Features

To allow comparison with trackers that do not use ResNet-50 as the feature extraction network, we conduct an experiment on the influence of the feature model. As shown in Table 4, when using only HOG features, our tracker still performs well in terms of AUC and runs nearly 18 times faster than the model with full feature fusion. When using HOG and CN features, it is still 6 times faster. These results suggest that HOG provides essential structural information, while CN further enriches the representation with discriminative color cues. The subsequent integration of DCNN features (e.g., from ResNet-50) provides a significant performance boost by introducing high-level semantic information, indicating that the combination of these feature types is needed to reach the best tracking performance in complex UAV environments. For instance, on the DTB-70 dataset, adding DCNN features to HOG+CN boosts AUC from 0.442 to 0.546 and DP from 0.664 to 0.834. DCNN features are therefore the most critical component for accuracy and robustness in UAV tracking, primarily because the semantic information they capture is highly discriminative and robust to the severe appearance changes and occlusions inherent in aerial video sequences.
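Because a gain can be quoted either in absolute points or relative to the starting value, the short calculation below makes the DTB-70 figures above explicit. It uses only the four numbers quoted in this section; the helper name `gain` is illustrative.

```python
def gain(before, after):
    """Return (absolute gain, relative gain) when moving from before to after."""
    absolute = after - before
    return absolute, absolute / before

# DTB-70 values quoted in the text: HOG+CN versus HOG+CN+DCNN.
auc_abs, auc_rel = gain(0.442, 0.546)
dp_abs, dp_rel = gain(0.664, 0.834)

print(f"AUC: +{auc_abs:.3f} absolute, +{auc_rel:.1%} relative")
print(f"DP:  +{dp_abs:.3f} absolute, +{dp_rel:.1%} relative")
```

Adding DCNN features thus raises AUC by about 0.104 (roughly 23.5% relative) and DP by about 0.170 (roughly 25.6% relative).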

Experimental Results Using Different Components on DTB-70 and UAV123@10fps.

DTB-70 = Drone Tracking Benchmark 70; UAV = unmanned aerial vehicle; TM = target mask; DA = distortion-aware mechanism; AUC = area under the curve.

Experimental Results Using Different Feature Fusion on DTB-70 and UAV123@10fps.

DTB-70 = Drone Tracking Benchmark 70; UAV = unmanned aerial vehicle; AUC = area under the curve; HOG = histogram of oriented gradient; CN = color name; DCNN = deep convolutional neural network.

Conclusion

In this article, we designed and implemented a correlation filter with target mask regularization and proposed a distortion-aware mechanism to guide filter selection. Both techniques can be applied to any discriminative correlation filter design and are particularly practical in UAV tracking scenarios. On the DTB-70 dataset, the precision of our method reaches 83.4%. However, compared with other trackers, the proposed method has no obvious advantage in tracking speed. Future work could consider building on faster baseline models to improve usability on UAV video sequences. In addition, by observing the video sequences, we found that the proposed method is more robust in small-target scenarios and can be especially useful in such cases. Finally, experiments conducted on multiple datasets demonstrate that our method achieves superior performance.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by Jinhua Public Welfare Technology Application Research Project under Grant 2025-4-043.

Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.