Abstract

This article presents a targeted narrative review establishing the historical and theoretical foundations for computational belief change implementation. Seeded by Doyle and London’s foundational 1980 taxonomy, we trace the evolution of belief revision from computational origins through the theoretical transformation of the Alchourrón, Gärdenfors, and Makinson (AGM) framework to contemporary approaches. Our analysis demonstrates how pre-AGM computational pragmatism relates to AGM theoretical constructs, revealing both continuities and transformations across this evolution. We analyze how each taxonomical category evolved in the post-AGM era, identifying the theoretical foundations and historical precedents that inform contemporary implementation challenges. This foundation enables subsequent research into robust computational blueprints that synthesize historical insights with formal guarantees, providing the baseline for systematic implementation analysis and engineering-focused belief change research.

Keywords

Introduction

Belief revision (BR) is the process by which an intelligent agent modifies its beliefs when confronted with new information that contradicts its current knowledge state (Gärdenfors, 1988). This fundamental cognitive and computational process involves determining which existing beliefs to abandon, which new beliefs to accept, and how to maintain consistency and coherence in the resulting belief system. Unlike simple belief addition, which merely incorporates new information, BR requires sophisticated mechanisms to handle conflicts, prioritize information sources, and preserve the most reliable or important beliefs while discarding those that have become untenable.

To illustrate the problem faced by BR, we adapt an example from Fermé and Hansson (2018, pp. 1–2). Suppose that the following statements are all true:

John was born in Portugal ( Pedro was born in Massaguassú ( John and Pedro are compatriots (

From these statements, we infer that Massaguassú is in Portugal. Now, assume that we received two new pieces of information:

Massaguassú is not a country ( Massaguassú is in Brazil (

Since the five statements are inconsistent, it follows that it is not viable to accept all five as true. There are several different approaches to dealing with this problem. Should we forget some of our previous beliefs? If so, which are the ones we choose to forget? And why? These are just a few of the questions that BR theories try to answer.

The formal study of BR encompasses both normative theories that specify how rational agents should revise their beliefs and descriptive theories that model how BR actually occurs in natural and artificial systems. At its core, BR addresses three fundamental operations: expansion (adding new beliefs without removing old ones), contraction (removing beliefs to restore consistency), and revision (adding new beliefs while removing conflicting old ones to maintain consistency) (Alchourrón et al., 1985).

BR is crucial for artificial intelligence (AI), as real-world AI systems must operate in dynamic, uncertain environments where information is incomplete, potentially contradictory, and subject to change (Doyle, 1979). Unlike static knowledge bases that assume perfect and unchanging information, intelligent agents must continuously update their understanding based on new observations, sensor data, user inputs, and changing environmental conditions.

It also plays a pivotal role in simulating human-like reasoning (Harman, 1986). By modeling cognitive processes, AI systems can more effectively emulate human thought patterns, which is invaluable in developing intelligent assistants, educational tutors, and collaborative systems, to name a few examples. Furthermore, BR supports the integration of learning and reasoning in AI (Mitchell, 1997). As systems increasingly combine data-driven methods, such as machine learning, with symbolic reasoning, BR facilitates the reconciliation of learnt patterns with logical rules through approximate operators (Chopra et al., 2001) and anytime algorithms (Wassermann, 1999) that balance computational efficiency with theoretical soundness. This integration fosters the development of robust hybrid AI systems capable of excelling in complex tasks (Bengio et al., 2013; Russell & Norvig, 2010).

Finally, BR is indispensable for maintaining consistency in knowledge systems. Systems such as expert systems and knowledge graphs rely on consistent information to ensure accuracy and utility (Davis & Lenat, 1982). Updating these systems without introducing logical errors is a fundamental challenge that BR effectively addresses. By enabling these diverse capabilities, BR functions as a key mechanism to advance the adaptability, reliability, and intelligence of AI systems (Russell & Norvig, 2010).

In summary, the importance of BR in AI manifests itself in multiple dimensions:

Problem Statement and Motivation

The field of BR faces a fundamental challenge in bridging the gap between theoretical foundations and practical implementation (Fermé et al., 2025; Fermé & Hansson, 2018). While the AGM framework provides rigorous normative principles for rational belief change, these operations are computationally intractable in the worst case (co-NP-complete) (Nebel, 1998), making exact implementation infeasible for large-scale applications and often requiring approximations that may compromise theoretical guarantees (Chopra et al., 2001; Delgrande et al., 2013; Williams, 1997). In contrast, pre-AGM computational approaches demonstrated practical feasibility but lacked systematic theoretical foundations that could ensure rational behavior or provide principled evaluation criteria. This theory-practice gap manifests itself in several critical ways.

The motivation for this research stems from the recognition that pre-AGM computational approaches, developed before theoretical foundations were established, may contain valuable implementation insights that have been overlooked by the post-AGM focus on theoretical development. These early systems were designed with explicit attention to computational tractability, incremental processing, and practical deployment constraints, concerns that remain central to contemporary AI applications but are often secondary in theoretical research.

Additionally, the proliferation of AI systems in dynamic, uncertain environments, from autonomous vehicles to conversational agents, has renewed interest in practical BR implementations that can operate under real-time constraints with limited computational resources. Understanding how pre-AGM approaches addressed these challenges, and how their insights can be integrated with AGM’s theoretical framework, is crucial for developing the next generation of intelligent systems.

To better understand progress in this field, it is essential to revisit the BR algorithms that preceded the influential AGM model, which has become the dominant approach. By comparing the state of pre-AGM algorithms with post-AGM developments, it is possible to understand how these models can be evaluated in the context of the AGM theory, and ultimately how they can be adapted for the implementation of BR in intelligent agents. We argue that the theory of belief change for belief bases has now matured to a stage where the next step, implementing BR in real-world agents, can be undertaken. This is due to advances within the research field itself, and also to the increased computational power of small devices over the past decades. With this understanding, we contend that the algorithms for BR proposed by the AI community in the 1970s and 1980s likely contain valuable insights into the implementation of BR mechanisms.

In this article, we analyze how the models proposed in the pre-AGM era can be evaluated in light of the AGM theory. We begin by analyzing pre-AGM BR algorithms, building on the classic article, ‘‘A Selected Descriptor-Indexed Bibliography to the Literature on Belief Revision” (Doyle & London, 1980). Doyle and London’s work provides a detailed overview of early research and pre-AGM approaches, offering a valuable framework for our analysis. We use this bibliography as a foundation for revisiting key contributions and outlining developments in the field of BR. Subsequently, we analyze how these contributions can be assessed in light of the AGM theory and ultimately how they can be adapted when implementing BR mechanisms in intelligent agents.

Rather than a systematic literature review, this article presents a targeted narrative review, grounded in Doyle and London’s work, which serves as a basis for tracing the evolution of BR research from its computational origins through theoretical formalization to contemporary implementations. This targeted narrative review establishes the historical and theoretical foundation for a systematic research program addressing computational BR implementation. By comprehensively analyzing the evolution from pre-AGM computational approaches through AGM theory to contemporary systems, we provide the baseline necessary for systematic implementation analysis and engineering-focused research. Subsequent work will build on this foundation to develop computational blueprints and implementation analysis frameworks that synthesize historical insights with formal guarantees.

Paper Organization

This article is organized as follows:

Related Work

The field of BR has been the subject of numerous surveys, reviews, and comparative analyses over the past four decades. These works provide different perspectives on the field’s development, theoretical foundations, and practical applications. This section positions our survey within this broader landscape of literature reviews and identifies how our work extends and complements existing analyses.

Foundational Surveys and Reviews

In ‘‘A Selected Descriptor-Indexed Bibliography to the Literature on Belief Revision,” which is the earliest comprehensive survey of BR research (Doyle & London, 1980), the authors established the taxonomical framework that forms the foundation of our analysis. This work was primarily descriptive, cataloging existing approaches without systematic theoretical evaluation. In ‘‘Knowledge in Flux,” Gärdenfors (1988) provided the first comprehensive theoretical treatment of BR, focusing on the AGM framework and its philosophical foundations rather than computational implementation.

In ‘‘How to Give It Up,” Makinson (1985) offered an early systematic examination of formal aspects of belief change, providing mathematical foundations that complemented Gärdenfors’ more philosophical approach. This work focused primarily on contraction operations and their formal properties, establishing important theoretical results that informed subsequent AGM development.

The first comprehensive handbook treatments of BR appeared in the mid-1990s, providing systematic coverage that synthesized the first decade of post-AGM research. The handbook chapter by Gärdenfors and Rott (1995) offered the first authoritative survey of AGM theory and its extensions, covering the fundamental postulates, construction methods, and representation theorems while addressing early criticisms and limitations. This work established the canonical presentation of AGM theory that would influence subsequent textbook treatments and became the standard reference for researchers entering the field. A decade later, Peppas (2008) handbook chapter provided updated coverage that reflected the maturation of the field, including comprehensive treatment of iterated revision, belief base approaches, and computational complexity results that had emerged since the mid-1990s. These handbook chapters serve complementary roles to book-length treatments, providing authoritative surveys that capture the field’s consensus understanding at specific historical moments while remaining more accessible than comprehensive monographs.

Post-AGM Theoretical Surveys

Following the establishment of the AGM framework, several comprehensive surveys examined its extensions and applications. In ‘‘Change, Choice and Inference,” Rott (2001a) provides extensive coverage of connections between BR and non-monotonic reasoning, examining how AGM principles relate to default logic, conditional logic, and other approaches to uncertain reasoning. This work complements our analysis by focusing on theoretical connections rather than historical development.

In ‘‘A Textbook of Belief Dynamics,” Hansson (1999c) offers comprehensive coverage of BR theory with emphasis on formal constructions and their properties. In their more recent work, Fermé and Hansson (2018) provide updated coverage including belief base approaches, kernel methods, and contemporary extensions. These works focus primarily on theoretical development rather than the historical evolution that is central to our analysis. The authors further extend their coverage in a later publication, but focusing only on the AGM development (Fermé et al., 2025).

In his survey of BR implementations, Williams (1998) examined practical aspects of BR systems, focusing on computational approaches and their relationship to theoretical foundations.

Specialized Domain Surveys

Several surveys have examined BR within specific application domains. A survey of ontology change by Flouris et al. (2008) examines BR principles as applied to ontology evolution and maintenance, providing detailed coverage of description logic extensions and practical implementation strategies. This work demonstrates how AGM principles have been adapted for specific logical frameworks.

In their work on belief merging, Konieczny and Pino Pérez (2002) survey approaches to combining information from multiple sources, extending AGM principles to multi-agent settings.

A complexity survey by Liberatore and Schaerf (1996) provides comprehensive coverage of computational complexity results for BR operations, examining both theoretical bounds and practical approximation strategies. This work complements our analysis by providing detailed technical results.

Computational and Implementation Surveys

Several works have surveyed computational approaches to BR implementation. In her work on resource-bounded BR, Wassermann (1999) examines how computational limitations affect BR implementation, providing important insights into practical constraints that complement our historical analysis of pre-AGM computational approaches.

In their survey of approximation methods, Schaerf and Cadoli (1995) examine various approaches to approximate BR, including compilation methods, anytime algorithms, and heuristic approaches.

In their work on approximate BR, Chopra et al. (2001) provide a systematic account of approximation as an explicit response to computational constraints in belief change. Rather than treating approximation as an ad hoc compromise, they characterize families of approximation operators and analyze their trade-offs with respect to AGM postulates and rationality criteria. This work remains primarily situated within the AGM framework, and the implementation of the proposed system is marked as future work.

Philosophical and Cognitive Perspectives

Several surveys have examined BR from philosophical and cognitive perspectives. In his work on BR and reasoning, Harman (1986) examines connections between BR and human reasoning, providing insights into descriptive aspects of belief change that complement AGM’s normative framework.

A survey of defeasible reasoning by Pollock (1987) examines connections between BR and defeasible reasoning, addressing some of the non-monotonic aspects that Doyle’s taxonomy identified as adjacent to BR proper.

These philosophical surveys provide important context for understanding BR as both a computational and cognitive phenomenon.

Contemporary Multi-Agent and Social Perspectives

Recent surveys have addressed BR in multi-agent and social contexts. In their work on dynamic epistemic logic (DEL), Baltag and Smets (2008) survey formal approaches to knowledge and belief change in multi-agent settings, providing sophisticated logical frameworks that extend beyond the individual focus of classical AGM theory.

Dragoni (1994) examines early approaches to distributed BR with a survey of BR in multi-agent systems, providing important coverage of extensions beyond single-agent settings. This work complements our analysis by addressing multi-agent aspects, but does not provide the systematic historical perspective central to our approach.

Positioning of Our Work

Our review differs from existing surveys in several important ways:

While our work builds upon and complements existing surveys, it provides a unique perspective that combines historical analysis, theoretical evaluation, and practical implementation insights within a unified framework. This approach fills an important gap in the literature by analyzing how the field has evolved from its computational origins to its current theoretical sophistication.

Background and Foundations

The philosophical roots of BR theory stem from epistemological inquiries into knowledge acquisition and belief change. The formal study of BR emerged from the intersection of AI, philosophy of science, and cognitive psychology in the 1960s and 1970s. In his influential concept of the knowledge level, Newell (1982) provided a crucial theoretical foundation by distinguishing among different levels of analysis in cognitive systems: the biological level, the symbol level, and the knowledge level.

At the knowledge level, Newell argued, intelligent behavior can be understood in terms of the knowledge an agent possesses and the goals it pursues, independent of the specific symbolic representations or computational mechanisms used to implement that knowledge. This perspective was revolutionary because it suggested that BR could be studied as a rational process governed by the principles of coherence, consistency, and goal-directed behavior, rather than merely as a collection of ad hoc computational procedures.

Newell’s framework influenced early AI researchers to develop principled approaches to knowledge representation and reasoning that could support rational BR. This led to the development of truth maintenance systems (Doyle, 1979), assumption-based reasoning frameworks (De Kleer, 1986), and other computational architectures designed to track the justifications for beliefs and manage belief dependencies systematically (Doyle, 1979).

The knowledge level perspective also highlighted the importance of distinguishing between the competence level (what an ideal rational agent should do) and the performance level (what computationally bounded agents actually do). This distinction became central to BR research, leading to both normative theories that specify ideal BR behavior and computational theories that address the practical constraints of implementing BR in real systems (Newell, 1982).

Early contributions from philosophers such as James (1896) and Peirce (1877) emphasized the dynamic character of belief systems and the necessity of rational methods for updating them. These philosophical investigations established several key principles:

Peirce and James are central to pragmatist ideas about belief and coherence, but the formal, systematic foundations of minimal change and entrenchment in BR were developed later by decision-theoretic and AGM-style authors (Levi; Alchourrón, Gärdenfors, Makinson) and by AI/logic researchers. Historical precursors such as Zimmerman (2022) and Hollingsworth (2022) also shaped the pragmatists’ views.

From the computational perspective, BR explores how intelligent systems (or agents) can update their beliefs in response to new information. This process is essential because, in real-world contexts, AI systems often need to handle dynamic, incomplete, or contradictory information. With a BR process in place, intelligent systems can maintain a consistent information base in the presence of new input, thereby supporting more accurate decision-making (Nebel, 1998; Wassermann, 1999).

The formal study of BR can be traced to early work in AI and philosophical logic during the 1960s and 1970s. However, the field was revolutionized by the seminal work of Alchourrón et al. (1985), which provided the first comprehensive formal framework for belief change operations.

Before the AGM framework, researchers developed various algorithmic approaches to BR, often developed for specific applications. In particular, the problem of how to revise a database or knowledge base appeared in the AI community during the 1970s. The survey article by Doyle and London (1980) provided a selected bibliography of about 250 papers related to this area. In its introduction, it states that “Belief Revision concentrates on the issue of revising systems of beliefs to reflect perceived changes in the environment or acquisition of new information.”

During the 1980s, several authors (Borgida, 1985; Dalal, 1988; Fagin et al., 1983; Katsuno & Mendelzon, 1991a, 1991b; Martins & Shapiro, 1988; Satoh, 1988; Winslett, 1988) proposed different models for performing belief change. However, these models were developed at the syntactic level, that is, with a commitment to the representation language. Therefore, as Nebel (1989) pointed out, ‘‘the question remains how belief revision can be described on an abstract level independent of how beliefs are represented and manipulated inside a machine.”

It remains unclear how to describe BR at the knowledge level, as Newell (1982) introduced in his seminal article ‘‘The knowledge level. In that work, he argued that ‘

These ideas later inspired the formal development of the belief–desire–intention (BDI) paradigm, in which agent behavior is explicitly modeled in terms of beliefs, desires, and intentions (Cohen & Levesque, 1990; Rao & Georgeff, 1995). While Newell did not propose a BDI architecture himself, his knowledge-level analysis provided a conceptual foundation that strongly influenced subsequent BDI models and agent-oriented reasoning frameworks. That paper exerted an enormous influence on the AI community, particularly on researchers in Knowledge Representation and Reasoning, including Brachman, Levesque, Moore, Halpern, Moses, Vardi, Fagin, Ullman, Shapiro, Borgida, and others.

The AGM Revolution

The AGM framework, developed by Alchourrón et al. (1985), Gärdenfors (1988), and Makinson (1985), represents the most influential normative theory of BR. Named after its creators’ initials, the AGM framework provides a set of postulates that specify how rational agents should revise their beliefs when confronted with new information. This work fundamentally transformed the field by:

Providing a unified theoretical framework for three types of belief change: expansion, contraction, and revision. Establishing rationality postulates that any reasonable belief change operation should satisfy. Introducing representation theorems that connected abstract postulates with concrete construction methods. Creating formal relationships between different types of belief change operations.

In the AGM literature, representation theorems provide equivalent characterizations of the abstract postulates in terms of concrete constructions. For example, contraction operators satisfying the AGM postulates can be represented as partial meet contractions, obtained by selecting preferred remainder sets via a selection function (Alchourrón et al., 1985; Alchourrón & Makinson, 1982). Other representation theorems give equivalent formulations in terms of epistemic entrenchment orderings (Gärdenfors & Makinson, 1988), kernel contraction (Alchourrón & Makinson, 1986; Hansson, 1994a), and Grove’s system of spheres for revision (Grove, 1988).

The AGM model characterizes specific change operations that represent the modifications an agent can perform on its knowledge. Each of these operations is defined by a set of rationality postulates that determine its behavior independently of implementation details. These postulates allow us to conceive of the change operations as “black boxes,” that is, mechanisms whose behavior is specified separately from how they are implemented (Alchourrón & Makinson, 1985).

Additionally, by the end of the 1980s, four equivalent methods for constructing AGM functions were introduced, along with semantics for such operations: partial meet functions (Alchourrón et al., 1985; Alchourrón & Makinson, 1982), epistemic entrenchment (Gärdenfors & Makinson, 1988), safe/kernel contraction (Alchourrón & Makinson, 1986; Hansson, 1994a), and sphere-systems semantics (Grove, 1988). Together, these developments elevated the AGM to the status of a standard model. The influence of Newell’s paper and the AGM model led to the abandonment of syntactic approaches.

However, the AGM framework is defined for belief sets, that is, sets of sentences closed under logical consequence (usually infinite sets). Hence, resource-bounded agents cannot employ the AGM model without modifications (Wassermann, 1999).

During the 1990s and 2000s, the AGM model was extended to belief bases, that is, sets of sentences not closed under logical consequence (Falappa et al., 2006; Fermé et al., 2008; Hansson, 1991b, 1994a). Several works advocate the use of belief bases instead of belief sets to represent states of knowledge (Fermé, 1992; Fuhrmann, 1991a; Hansson, 1991a, 1992a, 1994b; Nebel, 1989; Rott, 1998; Wassermann, 1999).

While the AGM model played a pivotal role in formalizing BR, its practical application in real-world systems has faced challenges, primarily due to computational complexity and resource limitations. Despite these obstacles, AGM-based concepts have been adapted and extended to address the requirements of dynamic decision-making systems (Nebel, 1998; Wassermann, 1999).

Transition From Practice to Theory

Our survey serves as a bridge between the practice-oriented pre-AGM era documented in Section 5 and the theory-oriented AGM framework that we examine in the subsequent Section 4. Doyle’s taxonomy provides the organizational structure for understanding how diverse computational approaches to BR were unified under the AGM theoretical umbrella, while our correspondence analysis reveals both the continuities and innovations that characterize this theoretical transformation.

The pre-AGM period was rich in concrete computational mechanisms that addressed specific implementation challenges. Consider three exemplary cases that illustrate our methodological approach:

Non-monotonic techniques, including defaults, circumscription, and default logic, inhabit a separate taxonomical family precisely because they solve a different problem than AGM revision: how to license tentative commitments in the absence of complete proof, rather than how to minimally revise a belief corpus when definitive information arrives (McCarthy, 1980; Reiter, 1980). Doyle’s taxonomical segmentation acknowledges the frequent co-occurrence of these techniques in implemented systems without conflating their distinct purposes, a distinction that AGM theory would later formalize through its focus on categorical belief change rather than uncertain inference.

The AGM Model

The AGM framework emerged in the mid-1980s as a response to the need for principled normative foundations for BR (Alchourrón et al., 1985). Although pre-AGM approaches had developed sophisticated computational methods, they lacked systematic theoretical foundations that could guide the development of rational BR systems and provide criteria for evaluating different approaches.

The framework was developed through a series of influential papers by Carlos Alchourrón, Peter Gärdenfors, and David Makinson, culminating in the comprehensive treatment in ‘‘Knowledge in Flux” by Gärdenfors (1988). The AGM approach was revolutionary in applying rigorous logical and set-theoretic methods to BR, establishing it as a legitimate area of formal investigation.

The underlying formal logical framework used in the AGM approach has the following characteristics:

A propositional language A consequence operator Monotonic: If Idempotent: Compact: If A set of formulas

The framework provides a systematic approach to belief change based on three fundamental operations:

It assumes that beliefs are represented as logically closed sets (belief sets) and that the underlying logic satisfies standard properties, including supraclassical monotonicity (Strasser & Antonelli, 2024), deduction, and compactness.

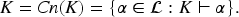

A belief set

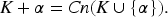

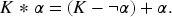

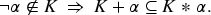

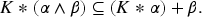

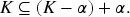

The AGM model is based on a set of rationality postulates that govern how belief sets behave when revised or contracted. These postulates ensure that BR is carried out logically and coherently. The basic AGM postulates for revision (K*1–K*6) are as follows:

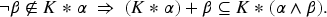

The supplementary postulates (K*7–K*8) address composite revisions:

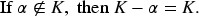

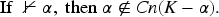

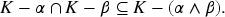

For contraction, the model has its own set of postulates, designed to ensure that beliefs are removed logically while minimizing information loss. The AGM postulates for contraction (K-1–K-8) are as follows:

The AGM framework operates with two distinct but related concepts for representing beliefs: belief sets and belief bases. These representational choices have deep implications for both the theoretical development and practical implementation of BR systems.

Knowledge sets (or belief sets) in the AGM context represent idealized and logically closed sets of beliefs that embody the theoretical foundations of rational BR (Alchourrón et al., 1985; Gärdenfors, 1988). These structures are characterized by deductive closure (

The practical limitations of logically closed knowledge sets led to the development of knowledge bases (or belief bases) as a more computationally tractable alternative (Hansson, 1999c; Nebel, 1998). Belief bases are finite, non-closed sets of beliefs that provide explicit representation of only the basic or fundamental beliefs held by an agent. This approach offers several advantages for practical implementation: finite size enables computational tractability, the distinction between explicit and derived beliefs allows for more efficient reasoning procedures, and the overall structure provides more realistic modeling of how actual belief systems operate in resource-bounded agents (Dalal, 1988; Fermé, 1992; Fuhrmann, 1988, 1991b; Hansson, 1989, 1991b, 1992a, 1994b; Nebel, 1989; Rott, 1998; Wassermann, 1999).

The choice between knowledge sets and knowledge bases reflects a fundamental tension in BR research between theoretical elegance and computational feasibility. While knowledge sets provide the mathematical precision necessary for formal analysis and the development of rationality postulates, belief bases offer the practical advantages required for real-world implementation. Later approaches often attempt to bridge this gap by developing algorithms that operate on belief bases while approximating the rational behavior specified by AGM theory for knowledge sets (Liberatore & Schaerf, 1996; Wassermann, 1999).

Constructive models in AGM theory serve as concrete mechanisms to implement belief change operations consistent with AGM postulates (Alchourrón et al., 1985). While the AGM postulates provide normative constraints on BR, they do not specify how belief changes are to be performed. Constructive models bridge this gap by offering explicit procedures for belief change operations (Gärdenfors, 1988).

The significance of constructive models can be highlighted in several respects: They provide explicit procedures for implementing belief change operations (Gärdenfors, 1988). They demonstrate that the AGM postulates are consistently satisfiable (Alchourrón et al., 1985). They offer insights into the relationship between different types of belief change (Levi, 1991). They serve as blueprints for practical implementations (Nebel, 1998).

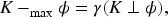

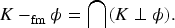

The development of constructive models begins with the concept of remainder sets (Alchourrón & Makinson, 1981, 1982). For a belief set For any

Maxichoice Contraction

The maximachoice contraction selects exactly one maximal subset of

Partial Meet Contraction

Partial meet contraction takes the intersection of some maximal subsets:

Full Meet Contraction

Full meet contraction takes the intersection of all maximal subsets:

Selection Functions and Preferences

The choice of the selection function

Revision

The core of the AGM model lies in the way the revision function is constructed. Revision in AGM theory addresses the fundamental problem of incorporating new information into a belief set while preserving consistency (Gärdenfors, 1988). Unlike expansion, which might lead to inconsistencies, revision ensures that the resulting belief set remains consistent whenever possible. The operation must balance two competing principles: Minimal change: Preserve as much of the original belief set as possible. Consistency maintenance: Ensure that the resulting belief set is consistent if the epistemic input is consistent.

As introduced before, the Levi identity formalizes revision as a two-step process in the context of revision operations. This identity demonstrates that revision can be reduced to a sequence of:

contracting by the negation of the new belief; and expanding with the new belief.

The Harper identity provides the converse relationship (Harper, 1976):

Partial meet revision extends the partial meet contraction framework to revision operations (Alchourrón et al., 1985). The process involves: Computing Applying a selection function Taking the intersection of selected remainders. Adding

Formally:

With his system of spheres, (Grove, 1988) provides an alternative semantic model for revision based on possible worlds. The framework consists of: A set of possible worlds. A system of nested spheres centered on A mechanism for selecting the closest

The revision operation selects the most plausible worlds where the new belief holds:

There are also specific types of revision that can be used with the AGM model. Selective revision (Fermé & Hansson, 1999) allows partial acceptance of new information: Transformation: New information undergoes a transformation before incorporation. Screening: Only certain aspects of new information are accepted. Modification: New information is modified during the revision process.

Multiple revision (Zhang & Foo, 2001) handles the simultaneous incorporation of multiple pieces of information: Package revision: Simultaneous revision with multiple beliefs. Iterative revision: Sequential application of revision operations. Prioritized revision: Revision with ordered sets of beliefs.

The classical AGM framework assumes that new information always takes priority over existing beliefs, as embodied in the Success postulate, which requires that newly received information must be incorporated into the revised belief set. However, this assumption has been challenged by developments in non-prioritized revision, which recognizes that not all new information deserves equal treatment or automatic acceptance (Hansson, 1997a).

Non-prioritized revision fundamentally challenges the Success postulate by introducing a more nuanced approach to information integration. Rather than mandating that new information must always be incorporated, this framework allows partial acceptance or complete rejection of new information based on its evaluated credibility and reliability. The system may determine that existing beliefs, particularly those with strong evidential support or high confidence levels, should be preferred over incoming information that appears dubious or contradicts well-established knowledge. This approach reflects a more realistic model of how rational agents actually process information in uncertain environments where the quality and trustworthiness of sources can vary significantly.

Parallel to these developments in information prioritization, researchers have also focused on adapting BR to work directly with belief bases rather than the idealized belief sets of classical AGM theory (Nebel, 1998). This shift involves fundamental changes in the way revision operations are conceptualized and implemented. When working with finite, non-closed sets of beliefs, revision procedures must explicitly distinguish between beliefs that are explicitly represented in the base and those that are merely derivable through logical inference. This distinction becomes crucial because different minimal change criteria may apply to explicit versus derived beliefs, leading to revision outcomes that can differ significantly from those predicted by classical AGM theory.

The combination of non-prioritized revision with belief bases approaches represents a significant evolution in BR theory, moving toward more realistic and implementable frameworks. These developments acknowledge the practical constraints faced by real agents: limited computational resources that make belief set closure infeasible, varying information quality that makes blanket acceptance policies inappropriate, and the need for revision procedures that can operate efficiently on finite representations while still maintaining principled approaches to belief change (Liberatore & Schaerf, 1996; Wassermann, 1999).

Importance and Criticisms

The AGM model was the first widely accepted formalization of how rational BR should be handled. It provided a clear, logical framework that could be applied in multiple domains, including AI, philosophy, and logic (Alchourrón et al., 1985).

One of the central ideas of the AGM model is the principle of minimal change. When revising beliefs, an agent should alter as little as possible to accommodate new information. This principle is crucial for maintaining the coherence of the agent’s beliefs over time and for preventing unnecessary disruptions in reasoning (Gärdenfors, 1988).

The AGM model applies to numerous fields within AI, such as knowledge representation, non-monotonic reasoning, ontologies, databases, and multi-agent systems. It is particularly useful for dynamic systems that must constantly update their knowledge as they interact with their environment (Allen, 1983).

By providing a set of logical postulates, the AGM model ensures that BR is rational and consistent, making it a critical tool for enabling AI systems to reason effectively in the face of new or conflicting information (Alchourrón et al., 1985).

The AGM model has inspired numerous extensions and refinements over the years, addressing limitations such as resource constraints in real-time systems or the need for more flexible revision methods. These works have further cemented their role in the ongoing development of BR theory (Hansson, 1999b).

It has also had an impact on practical implementations, such as:

Despite its widespread acceptance as the predominant framework for belief change, the AGM framework has been subject to considerable critique. This section offers an overview of such critiques, which can be viewed as limitations of the model.

Theoretically, logical omniscience and deductive closure create a mismatch with bounded agents. AGM treats derived and non-derived beliefs as indistinguishable once in the belief set, offering no internal mechanism to mark provenance or status (Levi, 1978, 1991; Rott, 1998). Deductive closure renders belief sets infinite for any nontrivial base; representing or computing with such sets is infeasible for real agents. Hansson (1993) and Hansson (2013) argues for finiteness of bases and outcomes, but standard AGM operations on finite inputs still yield infinite closures (Hansson, 1997a), forcing approximations that weaken guarantees (Chopra et al., 2001). Maintaining consistency while maximizing information retention is also computationally demanding in general.

The Recovery postulate claims that contracting by

AGM revision and contraction assume full epistemic deference to new inputs (Cross & Thomason, 1992). Even in preference-ordered settings such as Katsuno and Mendelzon (1991b), fresh information overrides entrenched beliefs, but immediately loses any privileged status once incorporated. This rigidity motivated operators that relax or violate Success, grouped as non-prioritized revision (Booth et al., 2012; Fermé & Hansson, 1999; Fermé et al., 2003; Fuhrmann, 1996; Hansson, 1991b, 1992b, 1997a, 1997a, 1997b; Hansson et al., 2001; Hansson & Wassermann, 2002; Konieczny & Pino Pérez, 2008; Makinson, 1997; Olsson, 1997) and non-prioritized contraction (Fermé & Hansson, 2001; Fermé et al., 2003). Remainder sets likewise treat all survivors as epistemically equivalent to the agent’s aims; (Levi, 1991, 1997) argues instead for preserving beliefs with the highest instrumental or epistemic value relative to goals.

Iterated change, that is, consecutive revisions or contractions, is underspecified in the AGM model: the original postulates give no constraints on how revision or contraction should depend on history (memory), enabling pathological sequences and instability (Boutilier, 1996). Fermé and Hansson proposed an organization of iterated review according to their memory:

Without memory: In this scenario, each belief set undergoes revision according to a predefined method that remains uninfluenced by the manner in which the belief set was initially acquired. Full meet revision is a classic example of this category. An analysis of these operators was conducted by Areces and Becher (2001). Full memory: opposed to the previous case, a full history of changes is maintained. Some operators of this class are (Brewka, 1991; Falappa et al., 2013; Konieczny & Pino Pérez, 2000; Lehmann, 1995). Partial memory: These operators store information on how it arrived at the belief set, which is used for future operations, but the stored information is not enough to do a rollback. Most of the proposed operators are of this category. According to Rott (2009), it is possible to further organize this category:

Epistemic entrenchment orderings face modeling issues: transitivity and totality can misrepresent context-dependent importance and may fail to capture realistic, non-linear hierarchies (Rott, 1992).

AGM is single-agent by design. Multi-agent settings require aggregation, conflict resolution, and source-sensitive trust handling that exceed the original scope (Dragoni, 1994). Some studies in this area include the work by Konieczny and Pino Pérez (2002, 2011) on merging, Castelfranchi (1997), Falappa et al. (2002, 2009), Paglieri (2006), Paglieri and Castelfranchi (2004, 2006), and Toulmin (2003), on argumentation, and Arló-Costa and Bicchieri (2007), Booth and Meyer (2006), Booth et al. (2010), and Zhang (2010) on game theory.

The binary acceptance stance is also too coarse for many applications; probabilistic beliefs, partial acceptance, and dynamic uncertainty demand graded representations and updates (Jeffrey, 1983; Spohn, 1988; Wassermann, 1999).

In knowledge-base maintenance, AGM-style global consistency checks are costly (Eiter & Gottlob, 1992). Local updates can produce hidden global conflicts, and preserving semantic dependencies during change is non-trivial (Hansson & Wassermann, 2002). Real-time systems add further constraints: strict latency bounds, resource limits, and continuous, possibly conflicting streams strain classical operators (Alechina et al., 2006; Wassermann, 1999).

With the AGM theoretical framework established, we can now examine the computational approaches that preceded and informed its development. These pre-AGM systems, while lacking systematic theoretical foundations, embodied algorithmic insights and implementation strategies that would later find formal expression in AGM theory and continue to influence contemporary BR implementations.

Pre-AGM BR

Before the AGM model, particularly in the late 1970s and early 1980s, 1 several computational approaches to BR emerged from artificial intelligence research, especially in the context of reasoning systems and knowledge representation. These algorithms were developed as part of efforts to create automated reasoning systems capable of handling uncertain and dynamic information. As a result, they focused primarily on practical implementation rather than theoretical foundations (De Kleer, 1986; Doyle, 1979; McCarthy, 1980).

Central to understanding this pre-AGM landscape is the bibliography by Doyle and London (1980), which provided the first systematic organization of the emerging field. The authors’ survey took on a task that, at the time, had not yet been formalized by a consensus logic of belief change: to make sense of a rapidly growing practice of belief maintenance and model update across AI subfields by carving the space into an interpretable taxonomy (Doyle, 1980). Their method was deliberately empirical and cross-disciplinary. Rather than propose a monolithic theory, they curated an indexed bibliography and attached to each item a bundle of descriptors that reflected what the artifact does (e.g., maintains dependencies), how it is represented (e.g., operator schemas; context layers), what kind of inference it licenses (e.g., default rules), and where it is used (e.g., planning and explanation). The result is a pragmatic and multi-axis classification: eight top-level families with fine-grained subcategories that span implementation and theory.

Doyle and London’s indexing process proceeds in three steps: Identify recurring Identify Identify

Then each bibliographic entry is multi-labeled along these axes. Crucially, the categories are not mutually exclusive: a single system might introduce a novel representation (context layers), rely on a specific procedure (dependency-directed backtracking) and presuppose an inference style (defaults), while being deployed in a particular application (explanation).

At the top level, the taxonomy comprises:

The prehistory of BR, before an agreed-upon logic of change, already identifies core tasks: (i)

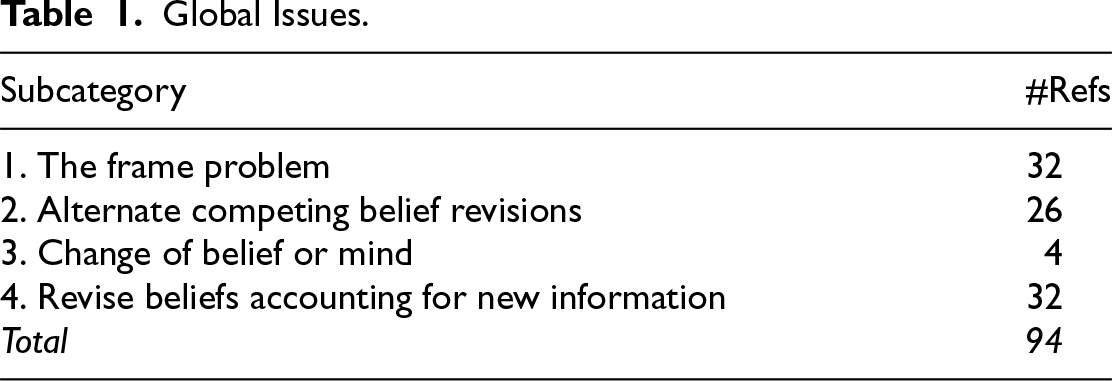

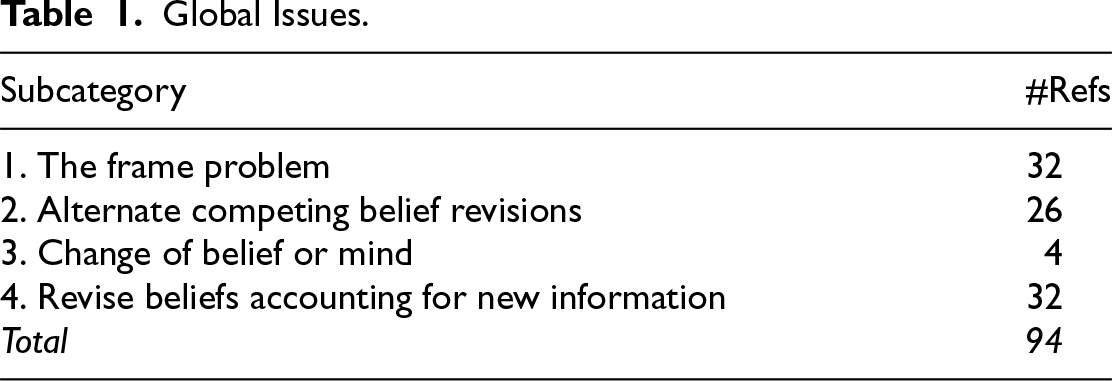

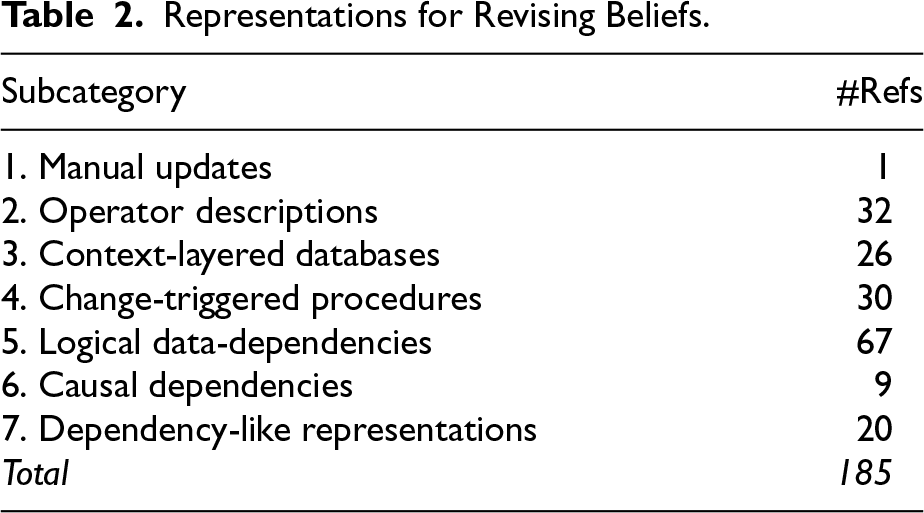

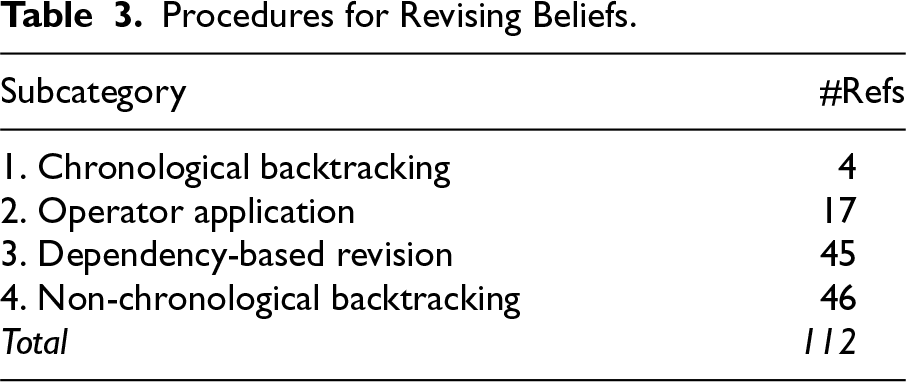

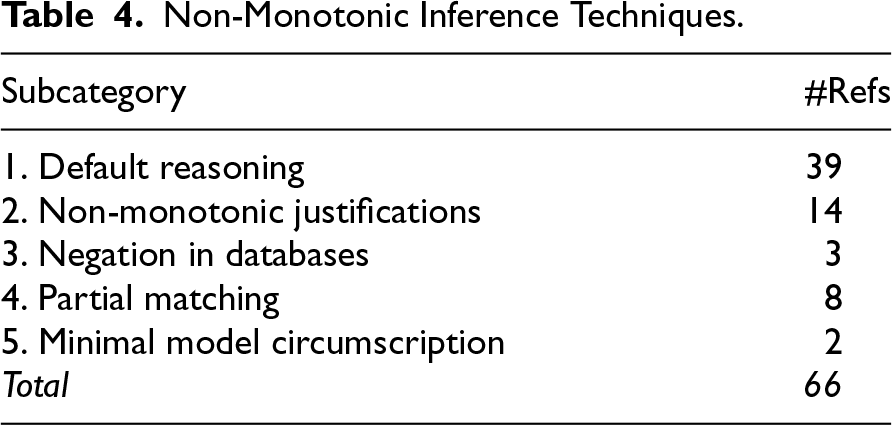

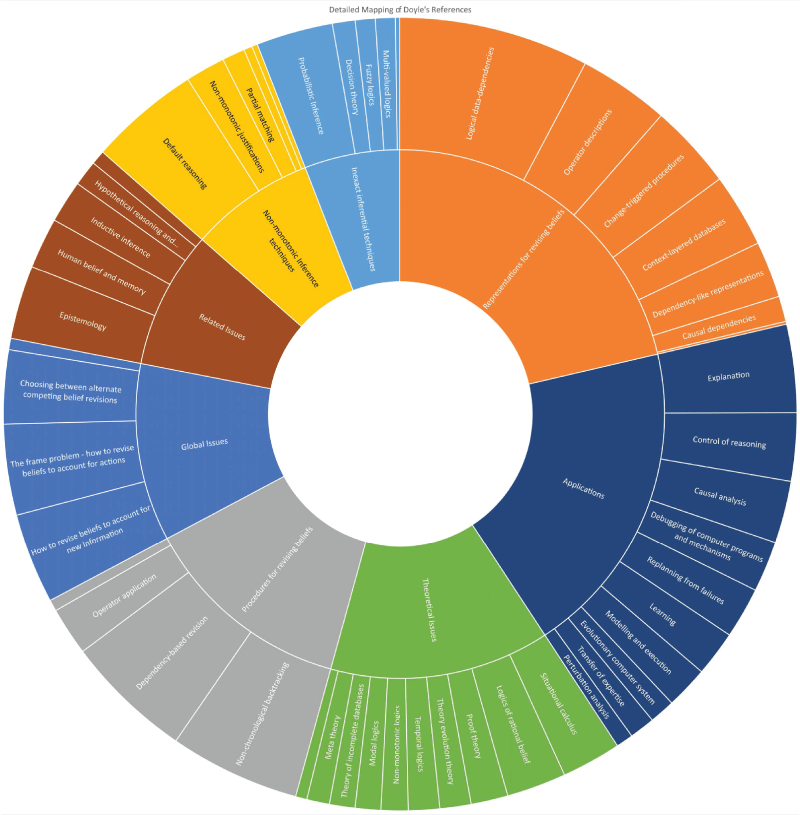

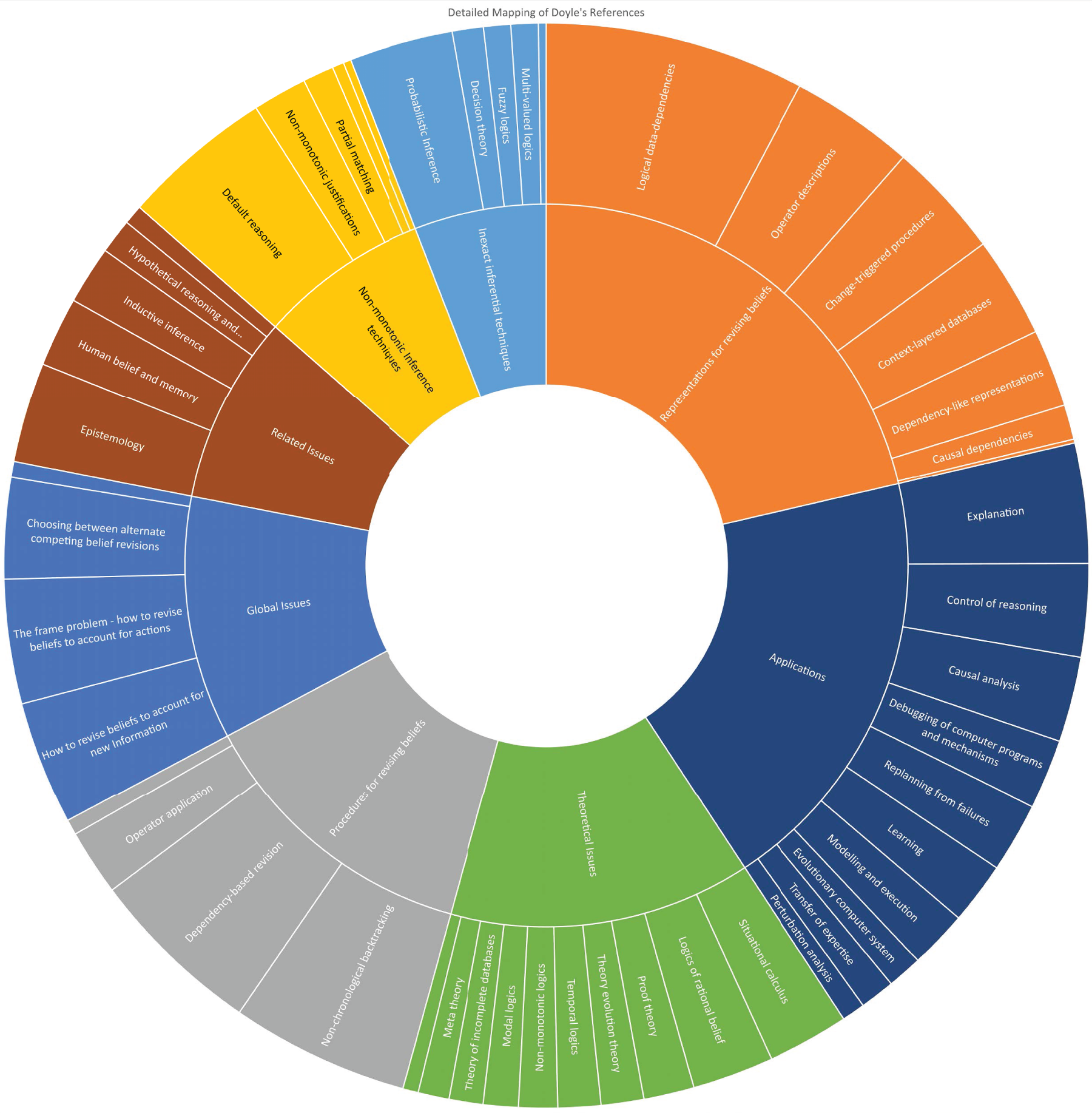

To understand the relative emphasis and development within each category of Doyle and London’s taxonomy, we conducted a quantitative analysis of the literature distribution across the eight classification areas. This analysis reveals not only which research directions received the most attention during the pre-AGM period but also provides insight into the computational priorities and theoretical interests that shaped early BR research. The distribution patterns illuminate the field’s evolution from predominantly procedural and representational concerns toward the more theoretical foundations that would eventually culminate in the AGM framework. Tables 1 to 8 present the numerical breakdown of the articles categorized by Doyle and London within each category, while Figure 1 provides a detailed visual representation of these research concentration patterns. For better visualization, a scaled version of this same figure is shown at the end of the article (Figure 2).

Global Issues.

Global Issues.

Representations for Revising Beliefs.

Procedures for Revising Beliefs.

Non-Monotonic Inference Techniques.

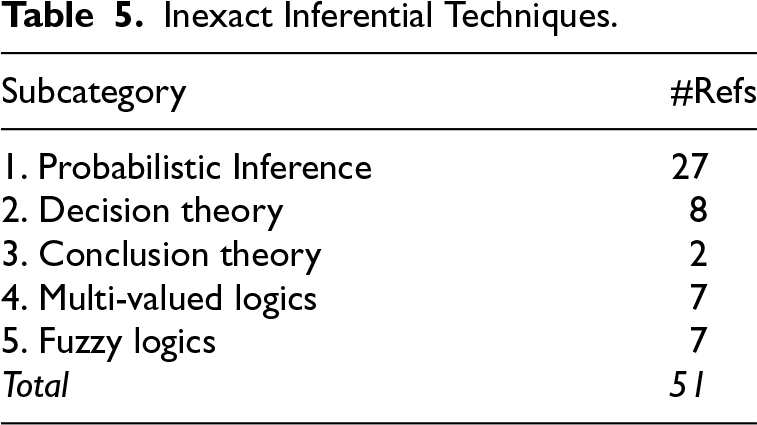

Inexact Inferential Techniques.

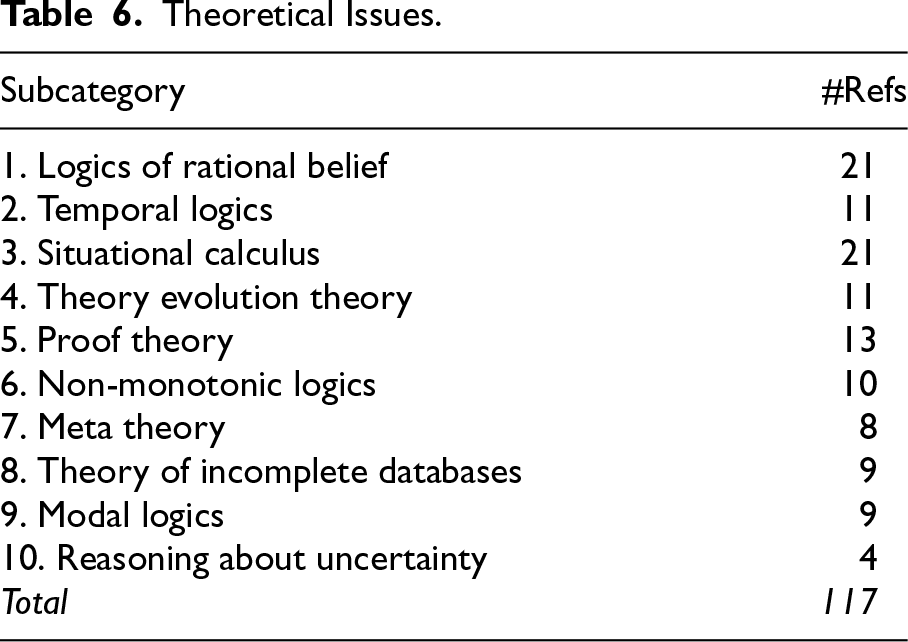

Theoretical Issues.

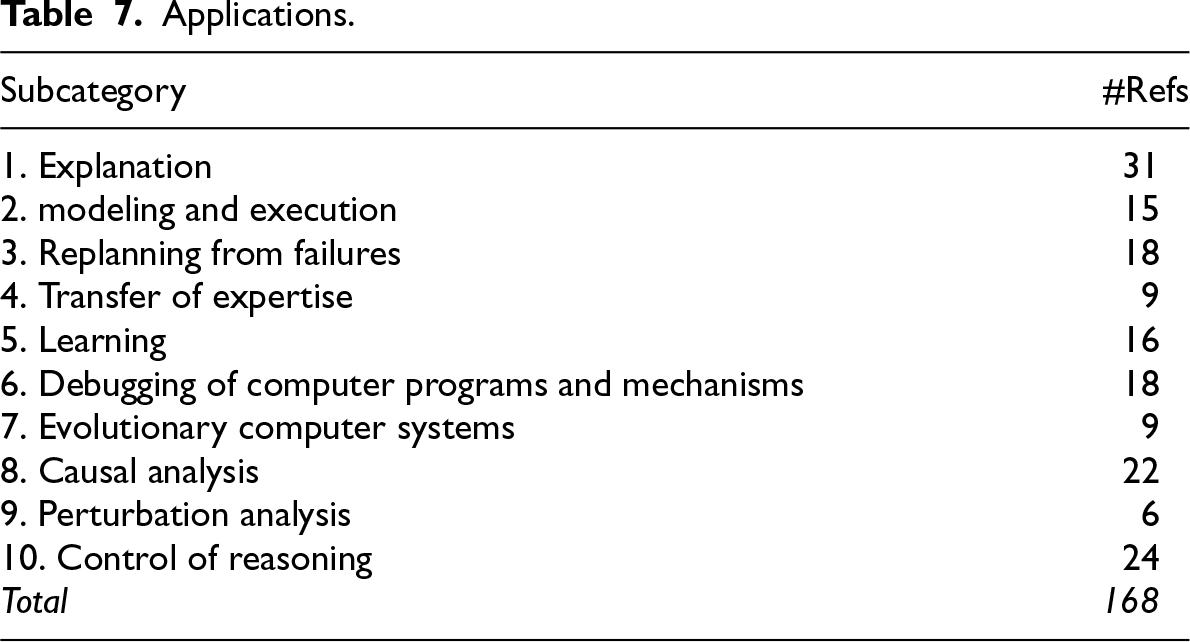

Applications.

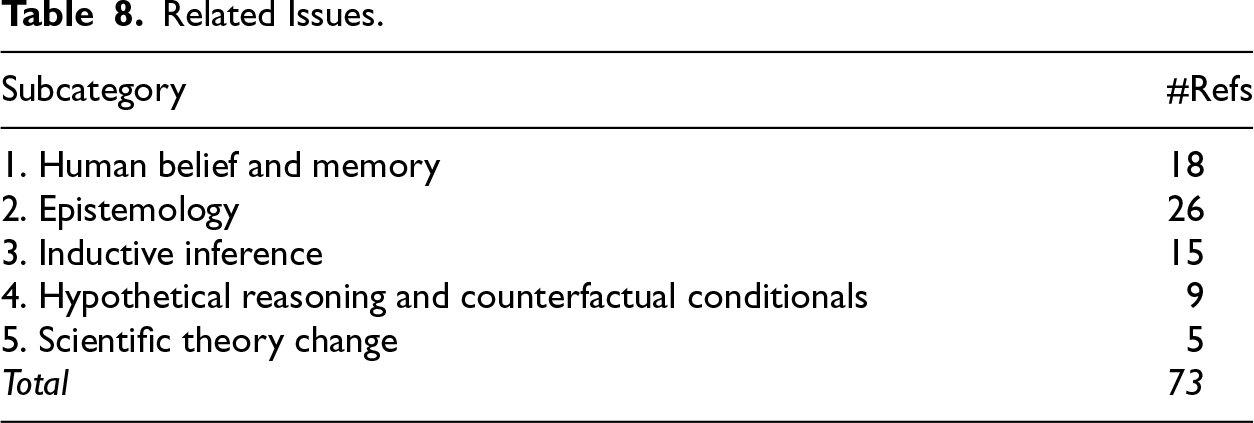

Related Issues.

Detailed mapping of Doyle’s references.

Detailed mapping of Doyle’s references (scaled).

For the purposes of this review, we focus particularly on extracting and analyzing the computationally representative systems that emerged from categories 2 and 3 of Doyle and London’s taxonomy, which deal with representations and procedures for BR. These systems embody the algorithmic innovations that would later inform both the development of AGM theory and contemporary implementations in intelligent agents. The computational approaches we examine include the TMSs by Doyle (1979), with their sophisticated dependency tracking mechanisms, the ATMSs by De Kleer (1986), that enabled reasoning with multiple contexts simultaneously, the probabilistic belief networks by Pearl (1986), that introduced uncertainty quantification into BR, and various dependency-based reasoning systems that pioneered incremental belief update strategies (McAllester, 1982). We will detail this approach in Section 6.

The development of TMSs by Doyle (1979) represents one of the most significant pre-AGM contributions to computational BR. TMS addresses the fundamental problem of maintaining consistency in knowledge bases by tracking the justifications for beliefs and automatically retracting beliefs when their supporting assumptions are withdrawn.

Core TMS Architecture

The TMS architecture consists of several key components:

The TMS operates through a cycle of assumption making, inference, contradiction detection, and dependency-directed backtracking. When a contradiction is detected, the system identifies the minimal set of assumptions that must be retracted to restore consistency, using the recorded dependency structure to guide this process efficiently.

Algorithmic Innovations

Doyle’s TMS introduced several algorithmic innovations that remain influential:

Implementation Details

Doyle (1978) provided detailed implementation descriptions and annotated code for TMS algorithms. Key implementation features include as follows:

Assumption-Based TMSs

De Kleer’s (1986) ATMS extends TMS by maintaining multiple consistent belief bases simultaneously, each corresponding to different assumption combinations. This approach avoids the computational overhead of repeatedly computing BRs by precomputing the consequences of different assumption sets.

ATMS Architecture

The ATMS maintains as follows:

Computational Advantages

ATMS provides several computational advantages over single-context TMS:

Probabilistic Belief Networks

Pearl’s (1986) work on probabilistic belief networks (later known as Bayesian networks) provided a fundamentally different approach to BR based on probability theory rather than logical inference. These networks represent probabilistic dependencies between variables and support BR through Bayesian conditioning.

Network Structure

Probabilistic belief networks consist of:

BR Algorithms

Pearl developed several algorithms for BR in probabilistic networks:

Computational Complexity

The computational complexity of BR in probabilistic networks depends on network structure (Pearl, 1986):

Default Reasoning Systems

Reiter’s (1980) default logic and related non-monotonic reasoning systems provided another pre-AGM approach to BR. These systems handle incomplete information by making default assumptions that can be retracted when contradictory information is discovered.

Default Logic Framework

Default logic extends classical logic with default rules of the form:

This rule states that if

Computational Approaches

Several computational approaches to default reasoning were developed:

Dependency-Based Approaches

Several pre-AGM systems focused explicitly on dependency tracking and management.

Reason Maintenance Systems

In his reason maintenance system, McAllester (1982) provides a different approach to dependency tracking that emphasizes computational efficiency and incremental reasoning.

Causal Networks

Various researchers developed causal network approaches that represent causal relationships explicitly and use them to guide BR processes (Dean & Kanazawa, 1989; Halpern & Pearl, 2005; Pearl, 2009; Spirtes et al., 1993; Verma & Pearl, 1990).

While many of these algorithms lacked the formalism introduced by the AGM model, they represented important efforts to address BR within the AI domain. These algorithms often adopted pragmatic approaches to updating belief bases, which included:

Additional Systems From Doyle’s Bibliography

Beyond the major systems detailed above, Doyle and London’s bibliography documents several other influential pre-AGM approaches that contributed to the computational foundations of BR:

Despite the lack of formalism, the work undertaken during this period contributed significantly to the initial development of BR within AI, establishing an important foundation for subsequent, more formal approaches.

From Computational Practice to Theoretical Foundation

The pre-AGM period established computational BR as a legitimate and necessary component of intelligent systems, with each approach contributing distinct algorithmic innovations that would later inform theoretical developments. The TMS pioneered dependency tracking and non-chronological backtracking (Doyle, 1979), ATMS introduced multi-context reasoning capabilities (De Kleer, 1986), probabilistic networks brought uncertainty quantification to BR (Pearl, 1986), and default reasoning systems provided frameworks for handling incomplete information (Reiter, 1980). Our mapping (Figure 1 and Tables 1 to 8) shows that 47% of papers focused on procedural approaches (Category 3), while 77% concentrated on representational innovations (Category 2), 70% focused on applications (Category 7), and 48% on theoretical issues—most of them related to practical aspects of BR (Category 6), demonstrating the field’s primary emphasis on computational tractability and practical implementation during this formative period.

Doyle’s contribution extends beyond mere cataloging; his work with London established a taxonomical framework that anticipated the theoretical developments that would follow with AGM while capturing the diversity of computational approaches being explored at the time (Doyle & London, 1980). This taxonomy proved remarkably prescient, identifying key dimensions of BR research that remain relevant today and providing a structured lens through which to understand the relationship between early computational work and later theoretical developments (Doyle, 1979, 1988). The distribution patterns in our analysis show that out of the eight categories in the taxonomy, Categories 2, 3, 6, and 7 received the most attention, reflecting the pragmatic priorities of AI researchers who needed working systems before formal theories were available. It is also important to mention that while there were many papers that fit into multiple categories, the papers in Categories 2, 3, 6, and 7 represent 85% of the works analyzed by Doyle and London.

This move naturally follows the historical thread presented in the origins of BR research. The foundational concerns of maintaining a model of the world, reasoning under changing information, and coping with inconsistency emerged from early AI domains such as planning, vision, and language processing, as well as from adjacent philosophical work on theory change (McCarthy, 1980; McCarthy & Hayes, 1981). Doyle’s taxonomy codifies these concerns as named axes of practice, transforming ad hoc engineering solutions into systematic categories of investigation. It represents a pivot point between the pre-AGM era of algorithmic devices, dependence tracking, non-chronological backtracking, and defaulting machinery, and the later AGM paradigm of postulates and representation theorems that would provide normative foundations for BR (Alchourrón et al., 1985; Gärdenfors, 1988). In essence, the taxonomy captures what engineers were already doing to keep beliefs coherent while the formal postulate-based account was still on the horizon.

The computational approaches examined in this section represent more than historical curiosities; they embody algorithmic insights and implementation strategies that remain relevant for contemporary BR systems. However, understanding their true significance requires evaluating them through the lens of the theoretical framework that emerged in the mid-1980s with the AGM model. The question that naturally arises is: how do these pre-AGM computational innovations relate to the rationality postulates and construction methods that AGM theory would later establish? To address this question systematically, we must develop a methodological framework that allows us to re-examine Doyle and London’s taxonomy and the computational systems it encompasses from a post-AGM theoretical perspective, assessing both their contributions to and limitations within the broader evolution of BR research.

Re-examining Pre-AGM Systems Through Post-AGM Theory

The emergence of the AGM framework in the mid-1980s fundamentally transformed the theoretical landscape of BR, providing rigorous axiomatic foundations that were absent during the pre-AGM era (Alchourrón et al., 1985; Gärdenfors, 1988). This transformation creates both an opportunity and a methodological challenge: how can we systematically re-evaluate the rich computational heritage of pre-AGM systems through the clarifying lens of AGM theory? Our approach addresses this challenge by mapping Doyle and London’s empirically derived taxonomy onto the theoretical framework established by AGM, thereby bridging the gap between historical computational practice and contemporary theoretical understanding.

Literature Selection and Scope

Our literature selection strategy is grounded in Doyle and London’s foundational 1980 bibliography while extending systematically to encompass key post-AGM developments. The selection process operates through three complementary approaches:

Our temporal scope extends from 1940 to 2024, with particular emphasis on the critical period from 1970 to 1990, which encompasses both the pre-AGM computational era and the emergence of AGM theory. We include work from AI, philosophy, logic, computer science, and related fields, maintaining interdisciplinary coverage that reflects the field’s diverse intellectual foundations.

Selection criteria prioritize works that (1) establish foundational concepts or methods; (2) provide computational implementations or algorithmic contributions; (3) bridge pre-AGM and post-AGM approaches; (4) demonstrate practical applications of BR principles; and (5) explicitly address the relationship between different theoretical or computational approaches. The detailed methodology is presented in Appendix ??.

Analytical Framework

Our analytical framework combines historical analysis with systematic theoretical evaluation through several complementary methodological components:

Methodological Implementation

To systematically implement this correspondence analysis, we reconstruct the distribution of entries across Doyle’s original taxonomy and analyze how each subcategory has evolved in the post-AGM literature. This reconstruction process involves several methodological steps.

While Doyle’s descriptor-based approach captures the complexity of early systems, its resulting lack of mutually exclusive categories (multi-labeling) and heterogeneous granularity pose challenges for a structured classification. However, these characteristics do not undermine its utility for our analysis. Rather, these limitations justify our AGM-aware refinements and highlight the theoretical progress made since 1980. Our methodology acknowledges these limitations while leveraging the taxonomy’s enduring insights about the fundamental dimensions of BR research.

It is important to note that while our analysis is comprehensive within its scope, certain aspects of contemporary BR research lie beyond the boundaries of both Doyle’s original taxonomy and our AGM-focused extension. Social dimensions of BR, including how social contexts and multi-agent interactions influence belief change, represent important areas of current research that were not anticipated in the pre-AGM era (Baltag & Smets, 2008). Similarly, emotional and psychological factors that affect human BR, while relevant for cognitive modeling applications, fall outside the computational and logical focus of both the original taxonomy and our theoretical analysis.

Our methodological framework provides the analytical tools necessary to examine how Doyle’s taxonomy evolved in the post-AGM era. We now apply this framework systematically to trace the theoretical transformation of each taxonomical category, demonstrating how empirical observations about computational practice were formalized into rigorous theoretical constructs while identifying both continuities and innovations in this evolution.

Taxonomical Evolution in the Post-AGM Era

Doyle’s descriptor-indexed taxonomy captured the practice of belief maintenance in the pre-AGM era through empirical observation and systematic categorization of existing computational approaches. The AGM paradigm fundamentally reframed this landscape by introducing a normative, postulate-based view of rational belief change supported by rigorous representation theorems (Alchourrón et al., 1985; Gärdenfors, 1988). In this section, we systematically revisit each of Doyle’s taxonomic categories and trace their evolution in the post-AGM literature, highlighting new theoretical derivations, conceptual unifications, and practical ramifications. Our aim extends beyond mere reference compilation to demonstrate how each theoretical thread matured into components of the modern BR toolkit and how AGM’s normative framework both validated and transformed pre-AGM computational insights.

The relationship between Doyle’s empirical taxonomy and AGM’s theoretical framework exhibits both continuity and transformation. AGM reframes BR as a normative problem about rational change subject to specific postulates, including closure, success, inclusion, consistency preservation, vacuity, extensionality, and additional constraints for iterated revision, while providing representation theorems that connect algebraic choice functions to belief change operators through constructions like partial-meet contraction and Levi and Harper identities (Gärdenfors, 1988). This theoretical foundation validates many pre-AGM computational intuitions while providing systematic justification for design choices that were previously ad hoc.

Global Issues: From Computational Problems to Theoretical Postulates

The Frame Problem and Action-Driven Change

Doyle’s original framing merged state change by action with belief change by information, reflecting the practical reality that early AI systems needed to handle both types of change within unified computational frameworks. Post-AGM theoretical work systematically separated these concerns, leading to the fundamental distinction between belief revision and belief update (Katsuno & Mendelzon, 1991b). This separation proved theoretically crucial: AGM-style revision integrates new information with minimal change to existing beliefs, while update handles world change due to actions or events by modeling how the world itself has changed rather than how our information about a static world has improved.

This theoretical distinction led to dual semantic frameworks that formalize the intuitive difference between learning new facts and accommodating world changes. BR employs semantic approaches, including faithful assignments (Katsuno & Mendelzon, 1991b), Grove’s (1988) system of spheres, and distance-based minimization (Dalal, 1988) that prioritize minimal change to belief content. Belief update, conversely, employs possible-model approaches and persistence principles (Winslett, 1991) to model how the world evolves over time. In contemporary planning and knowledge representation systems, the frame problem is typically addressed through action formalisms such as successor-state axioms or update operators that incorporate inertia principles, while AGM-style revision handles sensing, communication, and information-gathering activities.

Choosing Among Competing Revisions

Doyle’s theme of choice among alternatives becomes explicit and systematic in post-AGM representation theorems through the machinery of selection functions and preference orderings. Partial-meet contraction formalizes this choice process by selecting among maximal consistent subsets (remainders) through selection functions that encode preferences over different ways of restoring consistency (Alchourrón et al., 1985). Epistemic entrenchment provides an alternative formalization where choices are guided by resistance to contraction, with more entrenched beliefs being more difficult to give up (Gärdenfors, 1988).

The distance-based and sphere semantics developed by Grove (1988) and others Dalal (1988) reify choice as minimization problems or proximity relationships in logical space, providing computational methods for implementing preference-guided revision. This theoretical line matured into sophisticated frameworks for rational preferences over change, including Parikh’s (1999) work on relevance and splitting that enables modular BR, and various approaches to priority-based and relevance-sensitive change that maintain computational tractability while respecting normative constraints.

Change of Belief or Mind: Iterated Revision

The original AGM framework addressed single episodes of belief change, but Doyle’s subcategory of ‘‘change of mind” explicitly identified belief change over time as a distinct problem class, anticipating the need for principled approaches to sequences of BRs. Post-AGM work systematically addressed this limitation by studying iterated BR, asking how sequences of inputs should interact and what rationality constraints should govern belief evolution over time.

The Darwiche-Pearl (DP) (1997) postulates for iterated revision sparked extensive research into iteration policies, leading to a sophisticated taxonomy of approaches including natural and lexicographic revision (Boutilier, 1996), restrained and priority-based iterations that balance stability with responsiveness to new information (Booth & Meyer, 2006), and ordinal-ranking approaches based on the Spohn’s (1988) ranking functions that connect BR to conditional logic and default reasoning. These theoretical advances provide precise answers to Doyle’s question about ‘‘how to change one’s mind again” by specifying rational dynamics for belief evolution.

Absorbing New Information With Minimal Disruption

Doyle’s informal notion of ‘‘minimal disruption” receives precise theoretical treatment through AGM’s central theoretical results. The Levi identity (

The distance-based and sphere semantics provide equivalent minimization perspectives that make the notion of ‘‘minimal change” mathematically precise through metrics on possible worlds or logical theories (Dalal, 1988; Grove, 1988). Subsequent complexity and compilability results (Eiter & Gottlob, 1992; Liberatore & Schaerf, 1996) clarify the computational feasibility of these theoretical constructions, providing guidance for practical implementation while maintaining theoretical soundness.

Representations: From Data Structures to Theoretical Constructs

Manual Updates and Operator Descriptions

STRIPS-style add/delete lists, which Doyle classified as operator descriptions for manual updates, became the canonical vocabulary for action update in planning systems and were formally distinguished from informational revision (Fikes & Nilsson, 1971). Post-AGM theoretical work clarified that revision should be represented intensionally through belief sets, belief bases, and entrenchment orderings, while operator descriptions belong to the update side of the revision/update distinction (Winslett, 1991). This clarification resolved the pre-AGM conflation that Doyle noted between different types of belief change by providing distinct theoretical frameworks for different change scenarios.

Context Layers and Modularity

Doyle’s notion of layered contexts anticipated important theoretical developments in independence and modularity for BR. Post-AGM work made these intuitions precise through formal results like Parikh’s splitting theorem, which demonstrates that when a logical language decomposes into independent components, BR can be performed component-wise without undesirable interactions between modules (Parikh, 1999). Relevance and independence constraints became design criteria for practical BR operators and informed the development of belief base policies that respect modular structure (Hansson, 1999c).

Change-Triggered Procedures and Dependency Structures

Doyle’s emphasis on logical data dependencies evolved into the general theory of belief bases with kernel constructions and incision functions that provide systematic methods for base-level BR (Hansson, 1994a). To contract a belief base by a formula

This base-level perspective supports the fine-grained control over belief change—including traceability, minimal deletion, and explicit justification management—that Doyle sought in dependency graph approaches, while maintaining compatibility with AGM’s postulate-based framework. The kernel approach bridges the gap between AGM’s abstract theoretical requirements and the concrete implementation needs of practical BR systems (Fermé & Hansson, 2018).

Causal and Justificatory Encodings

Pre-AGM approaches to non-monotonic dependencies and causal relationships matured along two complementary theoretical directions in the post-AGM era.

DEL (Baltag et al., 1998; Van Ditmarsch et al., 2008) later provided event-driven action models with explicit preconditions and postconditions for updating epistemic states, offering systematic approaches to the causal and procedural relationships that pre-AGM systems handled through ad hoc mechanisms. These developments formalize Doyle’s intuitions about change-triggered procedures while providing rigorous semantic foundations.

Procedures: From Algorithms to Semantic Characterizations

Operator Application and Backtracking Strategies

Doyle’s operational catalogue of procedural approaches—including chronological and non-chronological backtracking, operator application, and dependency-based revision—evolved into semantic characterizations that subsume many specific algorithmic approaches under unified theoretical frameworks. Partial-meet construction corresponds to remainder selection guided by preference criteria; sphere-based semantics implements locality through distance relationships; ranking-based approaches organize preferences as priority queues over possible worlds or belief states.

Non-chronological backtracking, pioneered in systems like TMS (Doyle, 1979), corresponds to dependency-directed change at the belief base level through kernel and incision methods (Hansson, 1994a). Iterated revision policies lift these base-level procedures to handle streams of inputs while maintaining rationality constraints across multiple revision episodes (Boutilier, 1996; Darwiche & Pearl, 1997).

Dependency-Based Revision

Dependency-based revision became the canonical belief base revision pipeline in post-AGM theory: compute kernels to identify minimal inconsistent subsets; choose incisions according to minimality criteria or priority orderings; reconstruct a revised base from the remaining beliefs; apply logical closure if needed for specific applications (Fermé & Hansson, 2018; Hansson, 1994a). This systematic approach formalizes the dependency-tracking intuitions of pre-AGM systems while providing theoretical guarantees about the rationality of the resulting belief changes.

Specialized procedures for restricted domains—including Horn clauses, description logics, and other logical fragments—require adapted methods with appropriate definability and complexity guarantees (Eiter & Gottlob, 1992; Flouris et al., 2008). These domain-specific approaches maintain the essential insights of dependency-based revision while exploiting structural properties of particular logical languages to achieve better computational performance.

Non-Monotonic Inference: Orthogonality and Integration

Defaults and Justifications Versus Revision

Post-AGM theoretical work systematically clarified the relationship between BR and non-monotonic reasoning that Doyle’s taxonomy implicitly recognized, while also motivating accounts in which the two are seen as complementary perspectives on the same underlying dynamics of belief change. This relationship has been characterized both as orthogonality (where the two address fundamentally different problems) and as duality (where they represent complementary perspectives on rational belief change, Gärdenfors, 1991; Rott, 1991).

Gärdenfors (1991) demonstrates that BR and non-monotonic reasoning can be viewed as ‘‘two sides of the same coin,” with non-monotonic consequence relations corresponding to families of revision operations and vice versa. Rott (1991) further develops this connection by identifying two fundamental dimensions of belief change: the informational dimension (captured by AGM revision) and the motivational dimension (captured by non-monotonic inference), showing how these dimensions interact in rational belief dynamics.

BR addresses changing commitments in light of new factual information, while default logics and circumscription govern tentative inference patterns in the absence of complete information (McCarthy, 1980; Reiter, 1980). The KLM framework for rational closure (Kraus et al., 1990) and Spohn’s ranking functions (Spohn, 1988) provide rigorous semantics for default entailment that complement rather than compete with AGM-style BR.

Interaction Principles

Despite their theoretical orthogonality, AGM revision and non-monotonic reasoning systems exhibit systematic relationships at the semantic level through ranking functions and faithful assignments. The DP (1997) postulates for iterated revision constrain how conditional beliefs evolve across revision episodes, while natural versus lexicographic revision policies correspond to different approaches for re-prioritizing conditional information after receiving new inputs (Boutilier, 1996). These connections bridge Doyle’s category of ‘‘justifications” with modern conditional semantics and iterated belief change.

Inexact Inferential Techniques: Qualitative and Quantitative Bridges

Probabilistic and Graded Belief Change

Doyle’s category of inexact inferential techniques anticipated important connections between AGM’s qualitative approach and probabilistic methods for belief under uncertainty. While Bayesian conditioning differs fundamentally from AGM revision, principled theoretical bridges exist through limiting cases and qualitative probability theory.

Axiomatic connections between qualitative and quantitative belief have been extensively explored through multiple frameworks (Boutilier, 1994; Darwiche & Pearl, 1997; Friedman & Halpern, 1999; Goldzsmidt et al., 1993): Spohn’s ranking functions correspond to limit cases of probability measures (Spohn, 1988), where ordinal conditional functions emerge as qualitative abstractions of probabilistic belief; Jeffrey-style probability updates relate to specific iterated revision policies (Jeffrey, 1983), with connections formalized through imaging operators; and representation theorems establish conditions under which qualitative preference orderings correspond to underlying probability measures (Krantz et al., 1971).

Possibility and necessity measures produce possibility-based revision and update operators with AGM-like properties under appropriate qualitative axioms (Dubois & Prade, 1993), providing alternative approaches to uncertain belief change that maintain AGM’s insights about minimal change while accommodating numerical uncertainty representations.

Decision-Theoretic Choice Functions

The AGM framework’s selection step in partial-meet contraction can be enhanced with utility-theoretic considerations by choosing remainders that minimize expected loss or maximize expected value under relevant integrity constraints (Hansson, 1999c). This approach links belief change with value-guided decision making in applications where the costs and benefits of different beliefs can be meaningfully quantified, extending AGM’s normative framework to accommodate practical decision-making contexts.

Theoretical Issues: Formalization and Unification

Representation Theorems and Semantic Equivalences

AGM’s partial-meet and entrenchment representations (Alchourrón et al., 1985; Gärdenfors, 1988) were systematically unified with Grove’s sphere semantics (Grove, 1988) and distance-based characterizations (Dalal, 1988; Katsuno & Mendelzon, 1991b) through a series of representation theorems that demonstrate the equivalence of different approaches to formalizing minimal change. These theoretical equivalences instantiate Doyle’s concern with ‘‘proof theory” and ‘‘meta theory” through precise mathematical derivations that connect syntactic and semantic approaches to BR.

Belief Bases Versus Belief Sets

The distinction between belief bases (finite, non-closed sets of explicit beliefs) and belief sets (infinite, logically closed sets of all believed consequences) emerged as a central theoretical issue in post-AGM work (Hansson, 1994a; Nebel, 1998). Belief bases enable non-closed repositories with explicit traceability and fine-grained control over belief change, while belief sets provide the mathematical precision necessary for theoretical analysis. Kernel contraction and related methods provide constructive, modular approaches to base-level change with postulate soundness guarantees (Fermé & Hansson, 2018).

For restricted logical fragments including Horn clauses and description logics, definability preservation and interpolation properties become central theoretical concerns (Eiter & Gottlob, 1992; Flouris, 2006; Flouris et al., 2008), leading to specialized approaches that maintain the essential insights of AGM theory while exploiting the structural properties of particular logical languages.

Ontology Repair and Description Logic Extensions

The extension of AGM BR principles to description logic ontologies represents an important theoretical development that addresses practical needs in semantic web and knowledge graph applications. Description logics provide the formal foundations for ontology languages like OWL, which are widely deployed in enterprise knowledge management, biomedical informatics, and linked data systems (Baader et al., 2003).

Recent work has established formal connections between classical AGM contraction operations and ontology repair mechanisms. Baader and Wassermann (2024) demonstrate that AGM-style contractions can be defined for description logic ontologies through optimal repair strategies that minimize the removal of axioms while restoring consistency. Their approach adapts partial meet contraction to the description logic setting by identifying minimal inconsistent subsets (analogous to kernels in belief base contraction) and applying incision functions that respect the structural properties of description logic axioms.