Abstract

The RGB-D salient object detection (SOD) task aims to locate salient objects in paired RGB and depth images, with the key challenge being effective multimodal integration. This article presents MHINet, a network tailored for RGB-D SOD, comprising a dual-stream Swin Transformer encoder, an adaptive fusion enhancement module (AFEM), a multi-level feature interaction module (MFIM), and a decoder. The dual-stream Swin Transformers extract multi-level features and capture long-range dependencies better than traditional CNNs. AFEM dynamically adjusts RGB-depth fusion ratios via channel attention, enhancing feature expression. MFIM uses middle-layer features to enable stable cross-level feature interaction, improving fusion efficiency. The decoder restores edge details via residual convolution. Experimental results on DUT, LFSD, NJU2K, NLPR, and SIP show that MHINet outperforms state-of-the-art methods, validating its cross-modal detection capabilities for RGB-D SOD.

Introduction

Salient object detection (SOD) aims to detect the most prominent objects in images or videos of complex scenes. SOD has proven valuable in a range of computer vision tasks, such as photo cropping (Wang et al., 2018), video object segmentation (Wang et al., 2015), semantic segmentation (Wang et al., 2022), and medical image segmentation (Zhao et al., 2021). Most previous SOD methods used only RGB images (Cong et al., 2022b; Zhang et al., 2022a; Zheng et al., 2021; Zhu et al., 2019). Traditional SOD methods (Jiang et al., 2013; Yan et al., 2013) generally rely on handcrafted features or heuristic priors, e.g., color contrast (Cheng et al., 2014), center prior (Jiang & Davis, 2013), and background prior (Han et al., 2014), to detect salient objects. However, these methods fail to capture sufficient semantic information. Recently, convolutional neural networks (CNNs) have been widely adopted in SOD tasks (Chen et al., 2022; Li et al., 2023a; Tang et al., 2022), surpassing traditional methods (Klein & Frintrop, 2011). For instance, Wei et al. (2020) proposed

Although these methods have achieved good detection results, detecting salient objects in complex scenes from RGB alone remains difficult. Fortunately, the rise of depth cameras such as Kinect and RealSense has made RGB-D SOD, which incorporates depth information, an increasingly appealing area of research. Researchers therefore introduced depth images to provide supplementary information, such as spatial structure, three-dimensional layout, and object boundary information (Piao et al., 2019), which, to some extent, overcomes the limitations of RGB images. However, accurately distinguishing salient objects is still hard in complex scenes, including low light conditions, image clutter, and depth blur (Chen & Li, 2019). RGB-D detection generally outperforms RGB in such scenarios, yet effectively fusing complementary information from the RGB and depth modalities remains challenging. For instance, Peng et al. (2014) proposed a simple fusion strategy that combines an RGB-based scene saliency model with depth-induced saliency generated by a multi-context contrastive method. However, this method ignores the differences between modalities, resulting in inaccurate feature fusion. Wang and Gong (2019) designed a two-stream CNN to predict saliency maps from RGB and depth maps separately, followed by adaptive fusion. Zhang et al. (2021) infer latent variables through a generator model, while an inference model gradually updates the latent variables to achieve probabilistic cross-modal fusion. DMRA (Ji et al., 2022) uses depth refinement blocks and residual connections to fuse the complementary cues of the RGB and depth streams. However, this fusion approach hardly captures the complex interactions between the two modalities.

Multi-level feature fusion is also widely used in SOD, since effectively integrating high-level semantic features with low-level details is crucial for accurately separating image foreground from background. The most common approach is top-down fusion, such as concatenating or summing features of adjacent layers (Ji et al., 2021; Wang et al., 2021; Zhou et al., 2021b) or using integration modules (Liu et al., 2021; Zhou et al., 2021a). However, because only adjacent layers are combined, the diversity of feature fusion is limited, and high-level and low-level features cannot be fully integrated. Therefore, some methods (Sun et al., 2021; Zhang et al., 2020) directly integrate multi-level features before fusion to learn representations across layers. These methods ignore the characteristics of each layer and limit the exploitation of the complementarity between different features. In addition, direct integration may destroy the original information in the features.

In response, this article designs an adaptive enhancement fusion and multi-level feature interaction network, MHINet, to improve RGB-D detection performance. The overall framework adopts an encoder–decoder structure with three key components: feature extraction, feature fusion and interaction, and feature decoding, and it achieves state-of-the-art performance. MHINet first uses Swin Transformers to extract RGB and depth image features; the Swin Transformer models global context efficiently through its shifted-window self-attention mechanism. Then, the adaptive fusion enhancement module (AFEM) combines channel attention with adaptive fusion to enhance the network’s expressive ability, while using the semantic information of high-level features to provide rich contextual guidance. Next, a multi-level feature interaction module (MFIM) processes the high-level semantic features and low-level detail features in the fused features. Finally, the decoder progressively integrates low-level features, recovering image details and removing noise for precise predictions. The key contributions are as follows:

We propose an innovative RGB-D SOD framework, MHINet, which follows an encoder–decoder structure. In comprehensive experiments on five challenging datasets, MHINet outperforms existing state-of-the-art algorithms on four evaluation metrics. This detection performance is achieved through the coordinated operation of multiple novel components within the framework.

We design an adaptive fusion enhancement module (AFEM). By combining the channel attention mechanism with an adaptive fusion strategy, AFEM enables effective multi-modal feature integration. It dynamically adjusts the information fusion coefficients of the two modalities, realizing information complementarity between RGB and depth images. This not only enriches the network’s expressiveness but also improves the model’s ability to use cross-modal information.

We develop a multi-level feature interaction module (MFIM). MFIM takes into account the characteristics of each level of features and uses middle-layer features as intermediaries to achieve stable interaction among multi-level features. Because direct interaction between high-level and low-level features may hinder feature fusion, this intermediary design avoids that problem. As a result, the integration of details and semantic information is improved, leading to enhanced detection accuracy.

In the decoder, we gradually incorporate the interactive features generated by the MFIM during step-by-step decoding, and we use residual convolution blocks to reduce noise. This design addresses the loss of detail and the introduction of noise during encoding, further improving the accuracy of salient object detection.

Method

Overview Framework

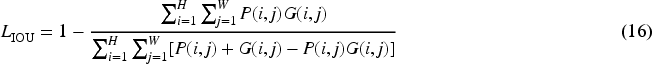

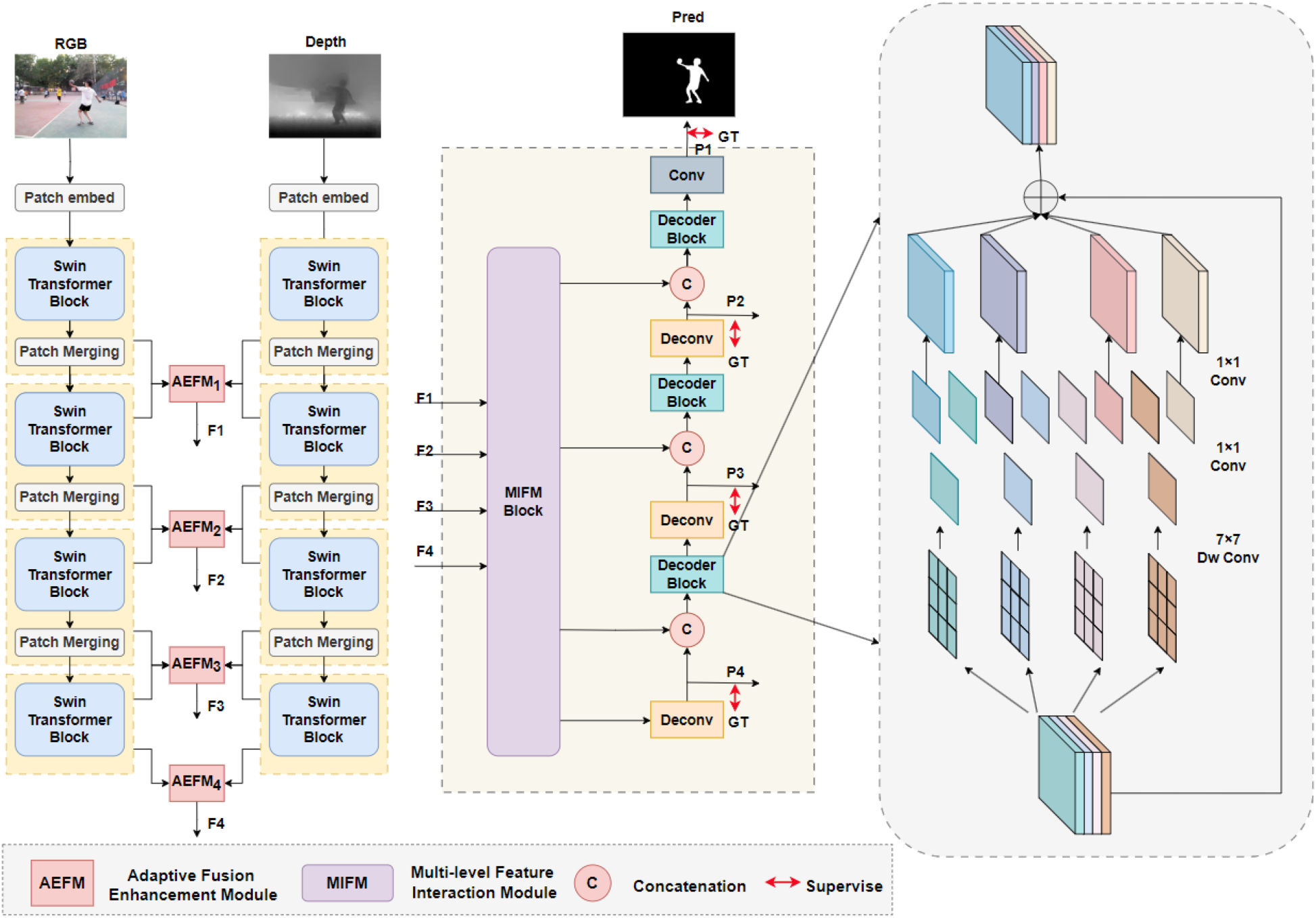

The MHINet proposed in this article includes two Swin Transformer encoders, an AFEM, an MFIM, and a decoder module. Its overall architecture is shown in Figure 1. First, MHINet uses the Swin Transformer as the backbone network to extract multi-level features from the RGB and depth images separately. Then, the AFEM fuses the extracted RGB and depth multi-level features. Then, the fused features

The overall structure of MHINet.

In the RGB-D SOD task, feature extraction is crucial. Traditional models usually rely on CNNs for feature extraction, but the limited receptive field restricts the extraction of global features. The Swin Transformer models global information through shifted-window operations while keeping the computational complexity linear in the image size, which significantly reduces the computational burden. This article therefore uses the Swin Transformer for feature extraction and selects the Swin-B variant to balance complexity and efficiency.
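The linear-complexity claim can be made concrete with the per-layer cost estimates reported in the original Swin Transformer paper. For a feature map of $h \times w$ patches with $C$ channels and a fixed window size $M$ (these symbols are introduced here for illustration only and are not used elsewhere in this article), global multi-head self-attention (MSA) and window-based self-attention (W-MSA) cost approximately:

$$
\Omega(\mathrm{MSA}) = 4hwC^{2} + 2(hw)^{2}C, \qquad
\Omega(\mathrm{W\text{-}MSA}) = 4hwC^{2} + 2M^{2}hwC
$$

The first expression is quadratic in the number of patches $hw$, whereas the second is linear in $hw$ when $M$ is fixed.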

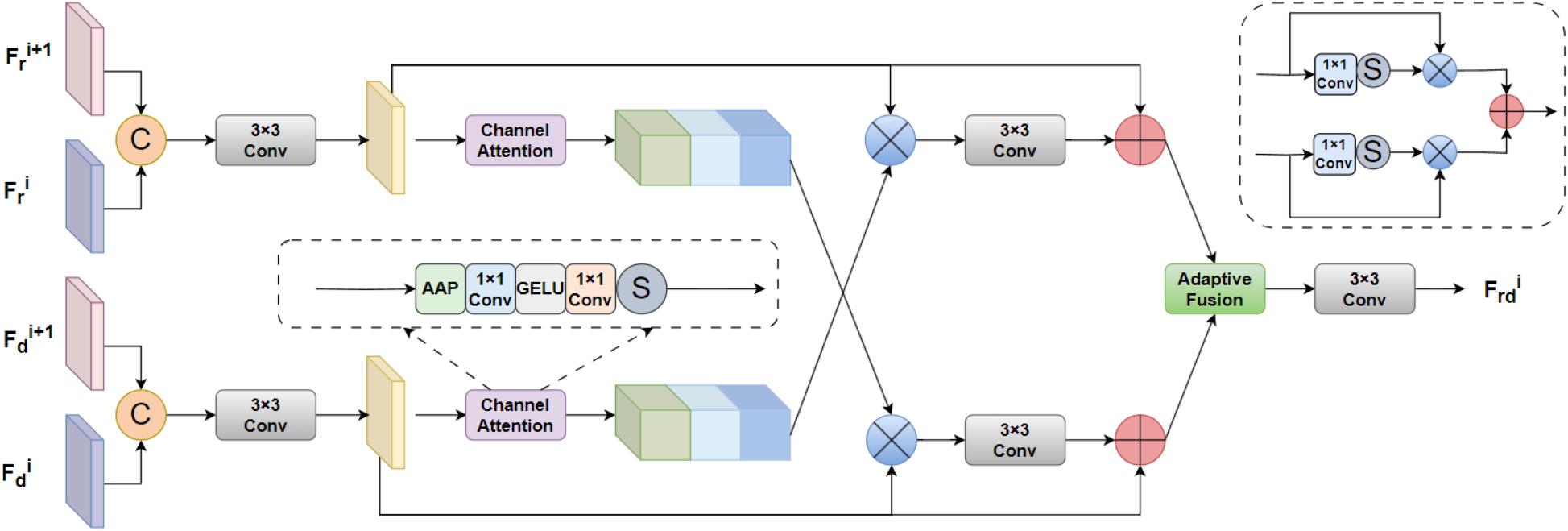

Adaptive Fusion Enhancement Module

Research shows that RGB features contain rich color and texture information, while depth features capture spatial layout, that is, specific spatial location information. One difficulty in the RGB-D SOD task is to effectively exploit the complementary information in RGB and depth features when fusing them. Therefore, this article designs a cross-modal AFEM that combines channel attention with adaptive fusion. The channel attention mechanism lets the network automatically weight feature channels, so that it focuses on important channels and suppresses irrelevant or noisy ones, thereby improving feature expression. The correlation between the two modalities is captured through an attention-crossover strategy, and the ratio between RGB and depth features is then adjusted by gating values learned in the adaptive fusion step. In this way, the AFEM dynamically adjusts the fusion coefficients of the two modalities according to the input features, which enhances the network’s expressive ability and improves the fusion result. In addition, small objects are generally difficult to detect, and most SOD methods are prone to missing them. We attribute this to the insufficient information in single-scale features, which makes it difficult to detect objects of various sizes. Therefore, the AFEM fuses high-level features into adjacent low-level features to obtain richer contextual guidance, thereby improving the detection of multi-scale objects.

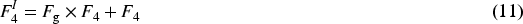

As shown in Figure 2, the AFEM first scales the high-level feature

The structure of the AFEM.

Among them,

After this, two channel attention operations are applied to the RGB and depth features, respectively, to obtain RGB attention weights and depth attention weights. The two sets of attention weights are then cross-multiplied with the corresponding features to enhance the complementary correlation between the two modalities, generating RGB attention features and depth attention features. Next, the respective input features are added to them through skip connections to pass information to deeper layers, alleviating the vanishing gradient problem. Finally, adaptive fusion dynamically adjusts the fusion ratio of RGB and depth features and obtains the fused features

In summary, the AFEM obtains richer contextual information by integrating adjacent high-level features and can better adapt to multi-scale objects. In addition, the module uses a weighted crossover strategy to fully exploit the complementary information of RGB and depth features, and it introduces adaptive fusion to dynamically adjust the fusion ratio of the two, thereby enhancing the feature representation capability of the network.
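The attention, crossover, skip-connection, and gating steps described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the squeeze-and-excitation form of channel attention, the direction of the crossover (each modality re-weighted by the other's attention), the sigmoid gate, and all names (`channel_attention`, `afem_fuse`, `init_params`, the `params` keys) are hypothetical. The preliminary fusion with the adjacent high-level feature is omitted.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def channel_attention(feat, w1, w2):
    """Squeeze-and-excitation style channel weights for a (C, H, W) feature map."""
    pooled = feat.mean(axis=(1, 2))          # global average pooling -> (C,)
    hidden = np.maximum(w1 @ pooled, 0.0)    # bottleneck + ReLU -> (C // r,)
    return sigmoid(w2 @ hidden)              # per-channel weights in (0, 1)


def afem_fuse(rgb, depth, params):
    """Cross-weighted, gated fusion of RGB and depth features (illustrative)."""
    a_rgb = channel_attention(rgb, *params["ca_rgb"])
    a_dep = channel_attention(depth, *params["ca_depth"])
    # Crossover (assumed direction): each modality is re-weighted by the
    # other's attention; the input is added back via a skip connection.
    rgb_att = rgb * a_dep[:, None, None] + rgb
    dep_att = depth * a_rgb[:, None, None] + depth
    # Adaptive fusion: a per-channel gate in (0, 1) sets the RGB/depth ratio.
    pooled = np.concatenate([rgb_att.mean(axis=(1, 2)),
                             dep_att.mean(axis=(1, 2))])
    gate = sigmoid(params["w_gate"] @ pooled)[:, None, None]
    return gate * rgb_att + (1.0 - gate) * dep_att


def init_params(channels, reduction=4, seed=0):
    """Random stand-ins for weights that would be learned during training."""
    rng = np.random.default_rng(seed)

    def ca():
        return (rng.normal(0, 0.1, (channels // reduction, channels)),
                rng.normal(0, 0.1, (channels, channels // reduction)))

    return {"ca_rgb": ca(), "ca_depth": ca(),
            "w_gate": rng.normal(0, 0.1, (channels, 2 * channels))}
```

Because the gate and its complement sum to one, the fused feature is a per-channel convex combination of the two attention features, which is one simple way to realize a dynamically adjusted fusion ratio.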

The AFEM fuses multi-modal features to obtain multi-level fused features. Some existing methods (Ji et al., 2021; Liu et al., 2021; Zhang et al., 2022a; Zhou et al., 2021a) adopt top-down, layer-by-layer integration for multi-level feature fusion. However, this approach cannot take advantage of the diversity of features and cannot fully integrate features from different layers. Although other methods (Sun et al., 2021; Zhang et al., 2020) directly integrate multi-level features to enhance the features of each layer, they still cannot fully use the unique information of each level. Low-level detail features, such as textures and borders, sharpen the separation between the foreground and background of an image. High-level features contain rich semantic information, which helps identify object categories and highlight salient areas against the background. By fusing multi-level features, more comprehensive features can be obtained in complex scenes, thereby improving detection performance.

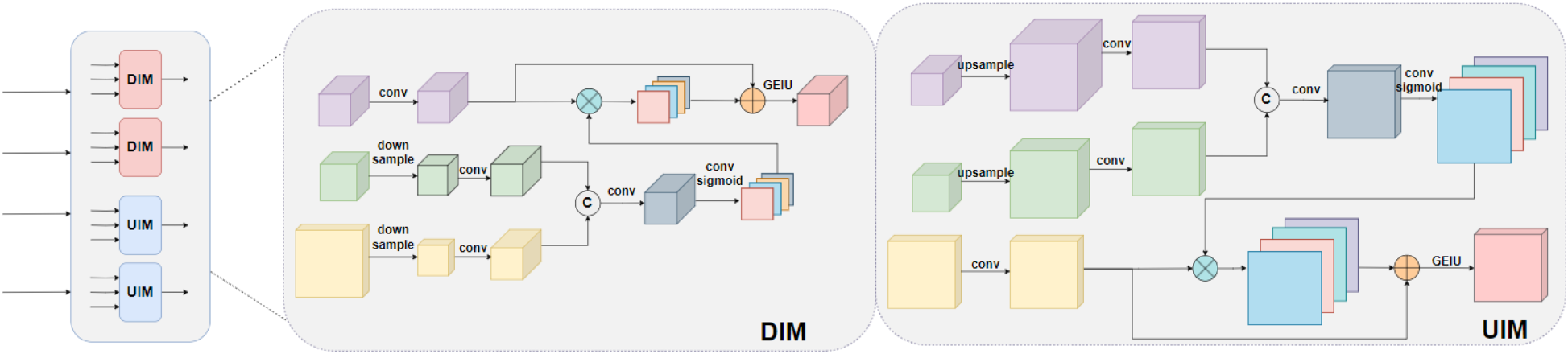

To address these issues, this article introduces an MFIM. This module realizes the fusion of high-level and low-level features by introducing the intermediate features

The structure of MFIM.

Among them,

The UIM works in the opposite direction to the lower interaction module (DIM). It upsamples and scales

Among them,

Usually, when the encoder performs feature extraction, the edge details of the image may be lost, and noise will also be introduced during the upsampling process. To address this problem, this article designs a decoder that restores details by gradually introducing interactive features generated by the MFIM module, while using residual convolution blocks to reduce the impact of noise.
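The progressive decoding idea can be illustrated with a small NumPy sketch. It assumes, purely for illustration, that all interaction features share one channel count, that each level has twice the spatial resolution of the previous one, and that pointwise (1x1) convolutions stand in for the learned convolutions; the function names and the weight layout are hypothetical and are not taken from the article.

```python
import numpy as np


def upsample2x(feat):
    """Nearest-neighbour 2x upsampling of a (C, H, W) feature map."""
    return feat.repeat(2, axis=1).repeat(2, axis=2)


def conv1x1(feat, weight):
    """Pointwise convolution: mixes channels with a (C_out, C_in) matrix."""
    return np.einsum("oc,chw->ohw", weight, feat)


def residual_block(feat, w_a, w_b):
    """y = x + conv(relu(conv(x))); the identity path preserves the input."""
    hidden = np.maximum(conv1x1(feat, w_a), 0.0)
    return feat + conv1x1(hidden, w_b)


def decode(interaction_feats, weights):
    """Merge features ordered from coarsest to finest resolution."""
    out = interaction_feats[0]
    for feat, (w_a, w_b) in zip(interaction_feats[1:], weights):
        out = upsample2x(out) + feat         # bring in the next, finer level
        out = residual_block(out, w_a, w_b)  # refine the merged map
    return out
```

The residual form means each block only has to learn a correction to the merged map, which is one common motivation for using such blocks to suppress upsampling artifacts.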

As shown in Figure 1, this article inputs the interaction features (

Among them,

This article uses a deep supervision strategy (Lee et al., 2015) to generate a saliency prediction map by

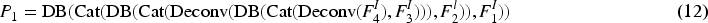

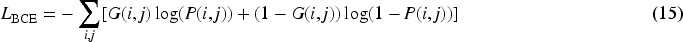

The BCE loss, a classic in SOD tasks, is a pixel-level loss that calculates the independent loss of each pixel. It can be calculated as follows:
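In its standard form, the loss at a pixel with prediction $p \in (0, 1)$ and binary label $y$ is $-[y \log p + (1 - y)\log(1 - p)]$. The following Python sketch evaluates it over a whole map; averaging over pixels (rather than summing) and the clipping constant `eps` are assumptions added here for numerical safety, and the function name is hypothetical.

```python
import numpy as np


def bce_loss(pred, target, eps=1e-7):
    """Mean pixel-wise binary cross-entropy between a predicted saliency map
    in [0, 1] and a binary ground-truth map of the same shape."""
    pred = np.clip(np.asarray(pred, dtype=np.float64), eps, 1.0 - eps)
    target = np.asarray(target, dtype=np.float64)
    per_pixel = -(target * np.log(pred)
                  + (1.0 - target) * np.log(1.0 - pred))
    return float(per_pixel.mean())
```

Each pixel contributes independently, so the loss does not account for the spatial structure of the salient region.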

Among them,

The overall loss

Experimental Settings

Datasets

To evaluate MHINet’s performance, this article uses five public RGB-D SOD benchmarks: NJU2K (Ju et al., 2014), NLPR (Peng et al., 2014), DUT (Wang et al., 2017), LFSD (Li et al., 2014), and SIP (Fan et al., 2020).

The data are split as follows: the training set contains 2,185 samples, including 1,485 samples from NJU2K and 700 samples from NLPR. The test set contains the remaining samples of NJU2K and NLPR, as well as the complete data of the other datasets.

Evaluation Metrics

To quantitatively evaluate the results, this article uses four metrics: F-measure (Cong et al., 2018), E-measure (Fan et al., 2018), S-measure (Fan et al., 2017), and mean absolute error (MAE) (Perazzi et al., 2012).

F-Measure is a comprehensive evaluation metric, defined as the weighted harmonic mean of precision and recall. It is calculated as follows:
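In the form commonly used in the SOD literature, $F_{\beta} = \frac{(1 + \beta^{2})\,\mathrm{Precision} \cdot \mathrm{Recall}}{\beta^{2}\,\mathrm{Precision} + \mathrm{Recall}}$. The Python sketch below computes it for a single binarization threshold. The fixed threshold of 0.5 and $\beta^{2} = 0.3$ (a conventional choice) are assumptions that are not stated in this article, and published results are often reported as the maximum or mean over many thresholds instead.

```python
import numpy as np


def f_measure(pred, target, threshold=0.5, beta_sq=0.3):
    """F-measure of a saliency map binarised at `threshold` against a
    binary ground-truth mask of the same shape."""
    binary = np.asarray(pred) >= threshold
    mask = np.asarray(target) > 0.5
    true_pos = np.logical_and(binary, mask).sum()
    if true_pos == 0:
        return 0.0  # no overlap: precision and recall are both zero
    precision = true_pos / binary.sum()
    recall = true_pos / mask.sum()
    return float((1.0 + beta_sq) * precision * recall
                 / (beta_sq * precision + recall))
```

With $\beta^{2} < 1$, precision is weighted more heavily than recall, so a map that over-segments the salient object is penalized more than one that slightly under-segments it.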

Among them,

E-Measure combines local pixel values with the image-level mean to jointly capture image-level statistics and local pixel matching information. It is calculated as follows:
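Assuming the article follows the original definition of Fan et al. (2018), the measure averages an enhanced alignment matrix over all pixels. The symbol $\phi$ is introduced here for illustration:

$$
E_{\phi} = \frac{1}{W \times H} \sum_{x=1}^{W} \sum_{y=1}^{H} \phi(x, y)
$$

Here $\phi(x, y)$ denotes the value of the enhanced alignment matrix at pixel $(x, y)$, which measures how well the mean-centred prediction and the mean-centred ground truth agree at that location.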

Here, W and H denote the width and height of the saliency map.

S-Measure evaluates the spatial structure similarity between the saliency map S and the ground truth Y. It is calculated as follows:
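Assuming the article follows the original definition of Fan et al. (2017), the measure is a weighted sum of an object-aware term and a region-aware term. The symbols $S_{o}$, $S_{r}$, and $\alpha$ are introduced here for illustration:

$$
S_{\alpha} = \alpha \, S_{o} + (1 - \alpha) \, S_{r}
$$

Here $S_{o}$ is the object-aware structural similarity, $S_{r}$ is the region-aware structural similarity, and $\alpha \in [0, 1]$ balances the two; $\alpha = 0.5$ is the value commonly used in the literature, although this article does not state its choice.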

Among them,

MAE evaluates the average pixel-wise absolute error between the ground truth and the normalized prediction, that is, the mean of the absolute values of their differences.
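A minimal Python sketch of this computation follows, assuming both maps are already normalized to [0, 1] and have the same shape; the function name is hypothetical.

```python
import numpy as np


def mae(pred, target):
    """Mean absolute error between a normalised saliency map and its
    ground truth, averaged over all W x H pixels."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    if pred.shape != target.shape:
        raise ValueError("prediction and ground truth must share a shape")
    return float(np.abs(pred - target).mean())
```

Unlike the other three metrics, lower MAE values indicate better predictions.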

Among them,

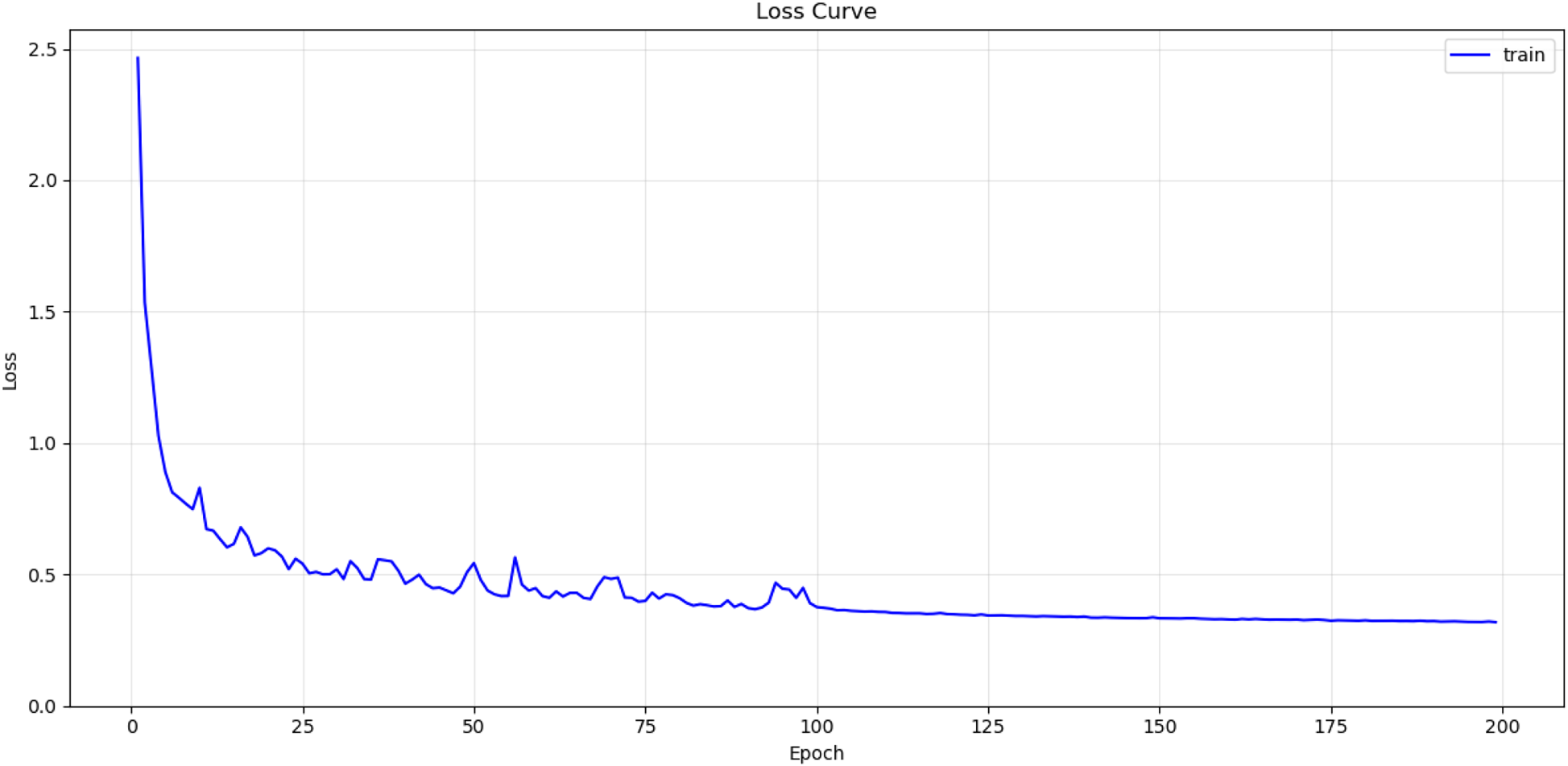

During the training and testing phases, the RGB and depth images are resized to

Training loss curve.

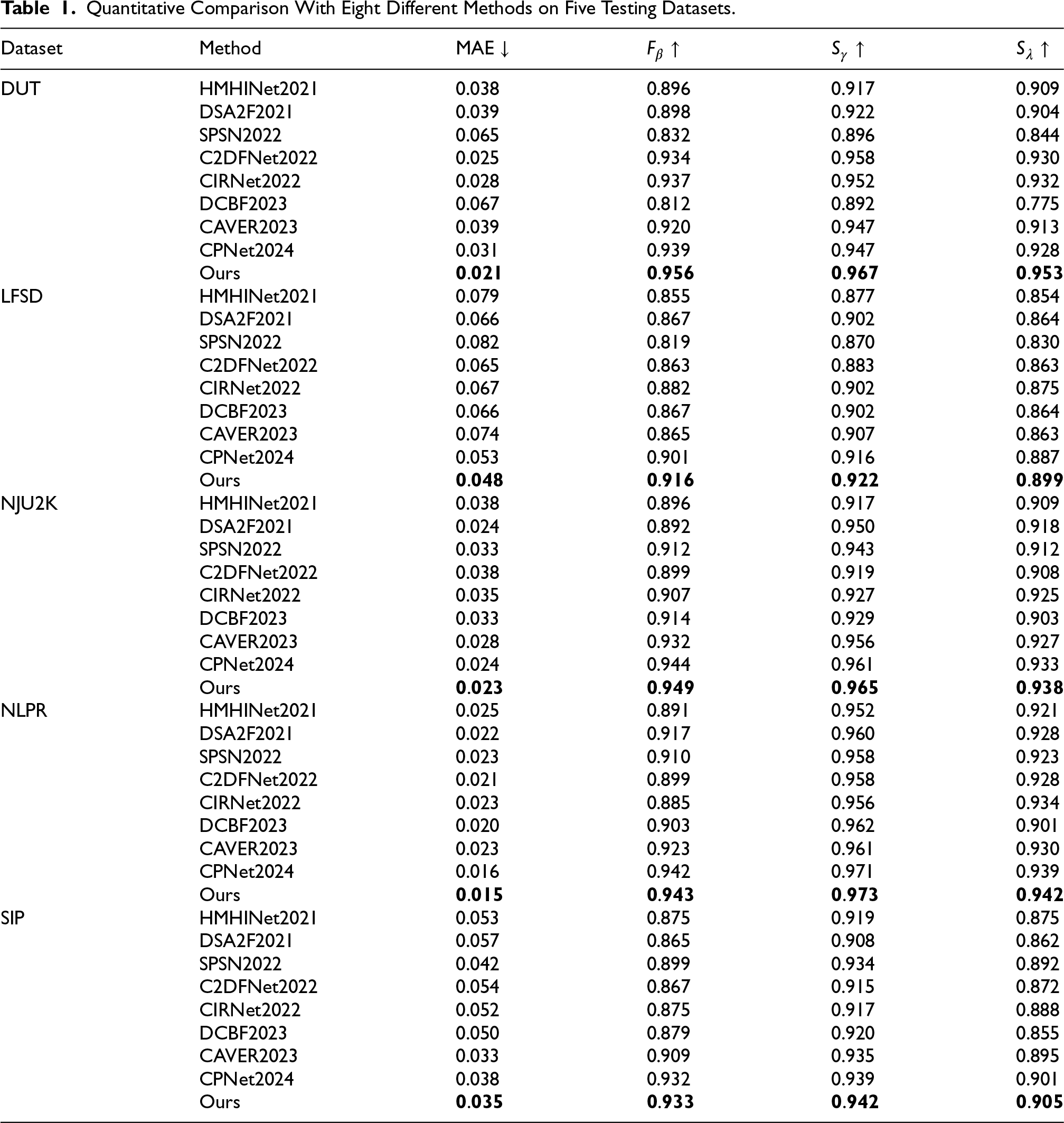

This article compares the proposed model with eight RGB-D salient object detection methods: HAINet (Li et al., 2021), DSA2F (Sun et al., 2021), SPSN (Lee et al., 2022), C2DFNet (Zhang et al., 2022b), CIRNet (Cong et al., 2022a), DCBF (Li et al., 2023b), CAVER (Pang et al., 2023), and CPNet (Hu et al., 2024). For a fair comparison, the saliency maps of these methods are either provided by the authors or obtained by running their released code with default parameters.

Quantitative Analysis

The quantitative results in Table 1 report the performance of MHINet on four evaluation metrics across five datasets. Notably, MHINet outperforms all compared methods on all four metrics, achieving the lowest MAE of 0.021 on the DUT dataset. This performance is accompanied by a high F-measure of 0.956, which underscores the model’s efficacy in accurately identifying salient objects.

Quantitative Comparison With Eight Different Methods on Five Testing Datasets.


On the LFSD, NJU2K, and NLPR datasets, MHINet consistently achieves top results across multiple metrics. For instance, it outperforms other methods in the LFSD dataset, where the F-measure is improved by approximately 1.7% compared to the next best result. This dataset is particularly challenging due to its inclusion of small objects and complex backgrounds, indicating that MHINet exhibits a robust capability to handle difficult scenes effectively.

Additionally, on the NJU2K dataset, MHINet achieves a notable F-measure of 0.949 and a low MAE of 0.023, further validating its performance in diverse conditions. The results on the NLPR dataset also reflect strong metrics, with an MAE of 0.015 and an F-measure of 0.943, demonstrating the model’s versatility and adaptability.

Overall, the results substantiate the effectiveness and generalizability of MHINet, suggesting its potential as a leading solution for RGB-D SOD across various environments. The robust performance across datasets emphasizes the algorithm’s capability to perform reliably in complex and challenging scenarios.

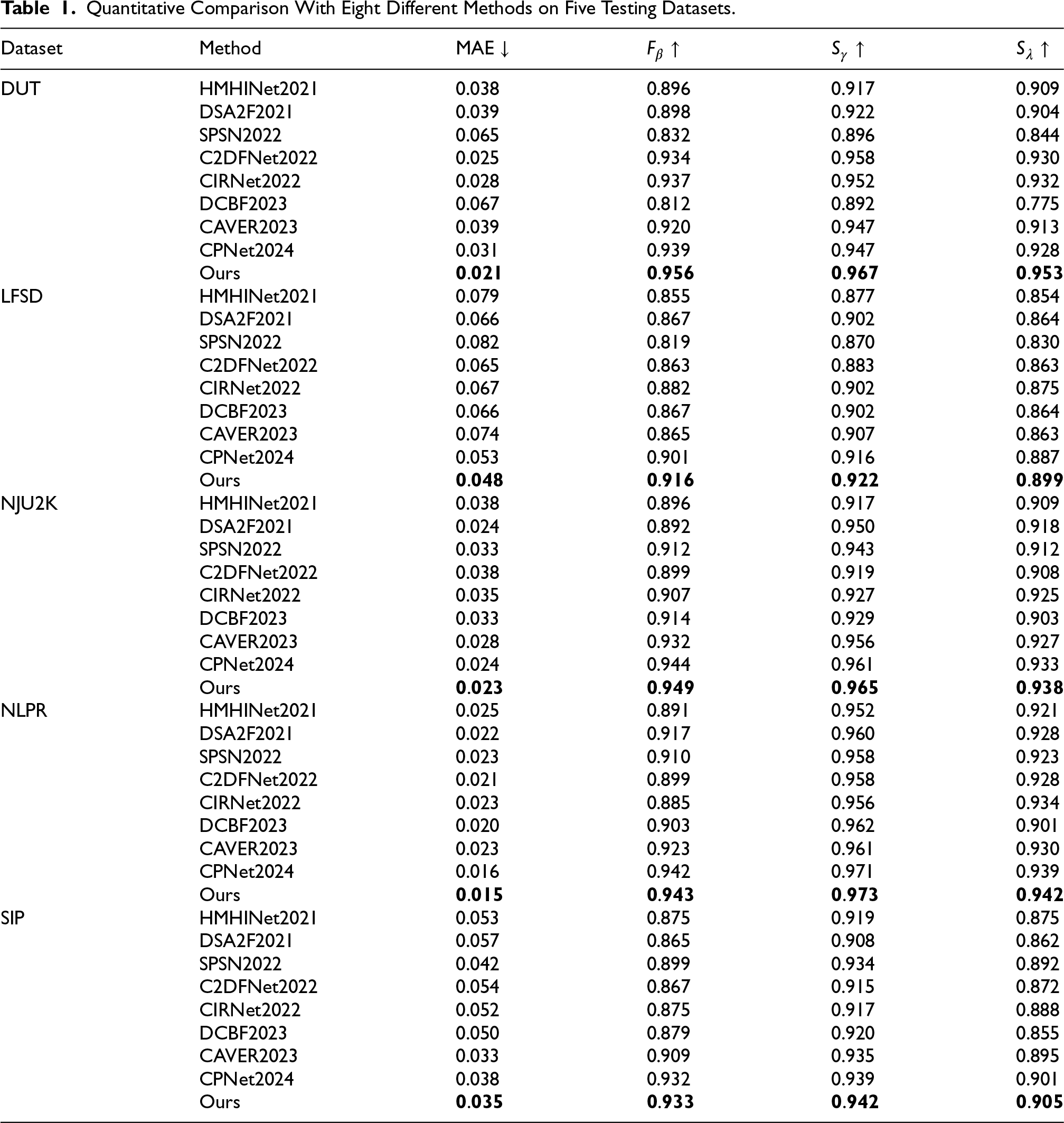

To further illustrate the performance of MHINet, Figure 5 shows representative results across various challenging scenarios. Rows 1 and 2 highlight the model’s effectiveness in cluttered backgrounds, where it isolates salient objects despite distractions. Rows 3 and 4 demonstrate MHINet’s ability to accurately detect large objects while maintaining detail and precision. In rows 5 and 6, the model handles complex edges well, delineating intricate boundaries. Lastly, rows 7 and 8 illustrate the resilience of MHINet to low-quality depth images, where it mitigates depth noise to produce more accurate and detailed saliency maps. These results affirm the model’s robustness and adaptability in diverse conditions, reinforcing its applicability in real-world scenarios.

Qualitative visual comparisons of MHINet with other methods.

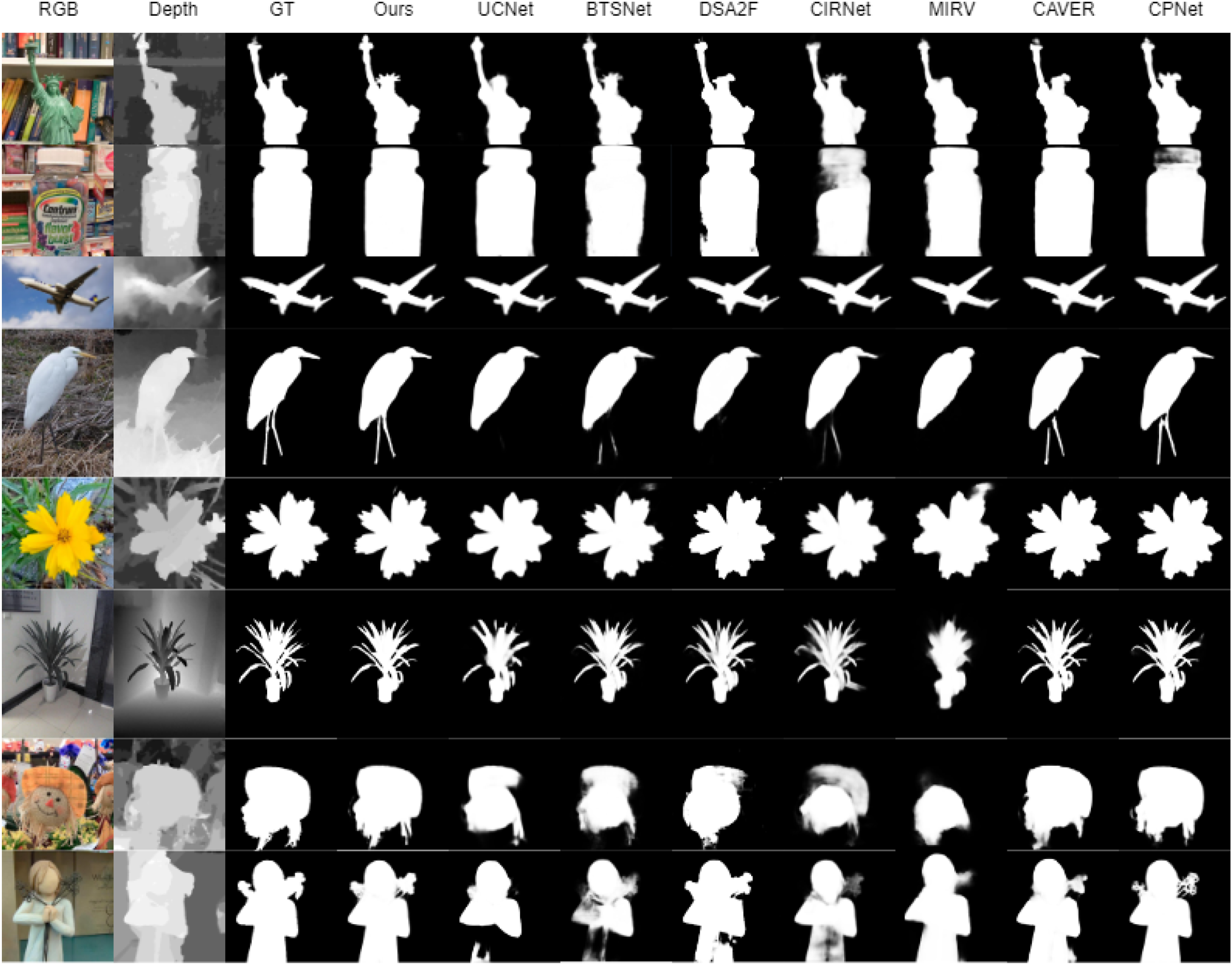

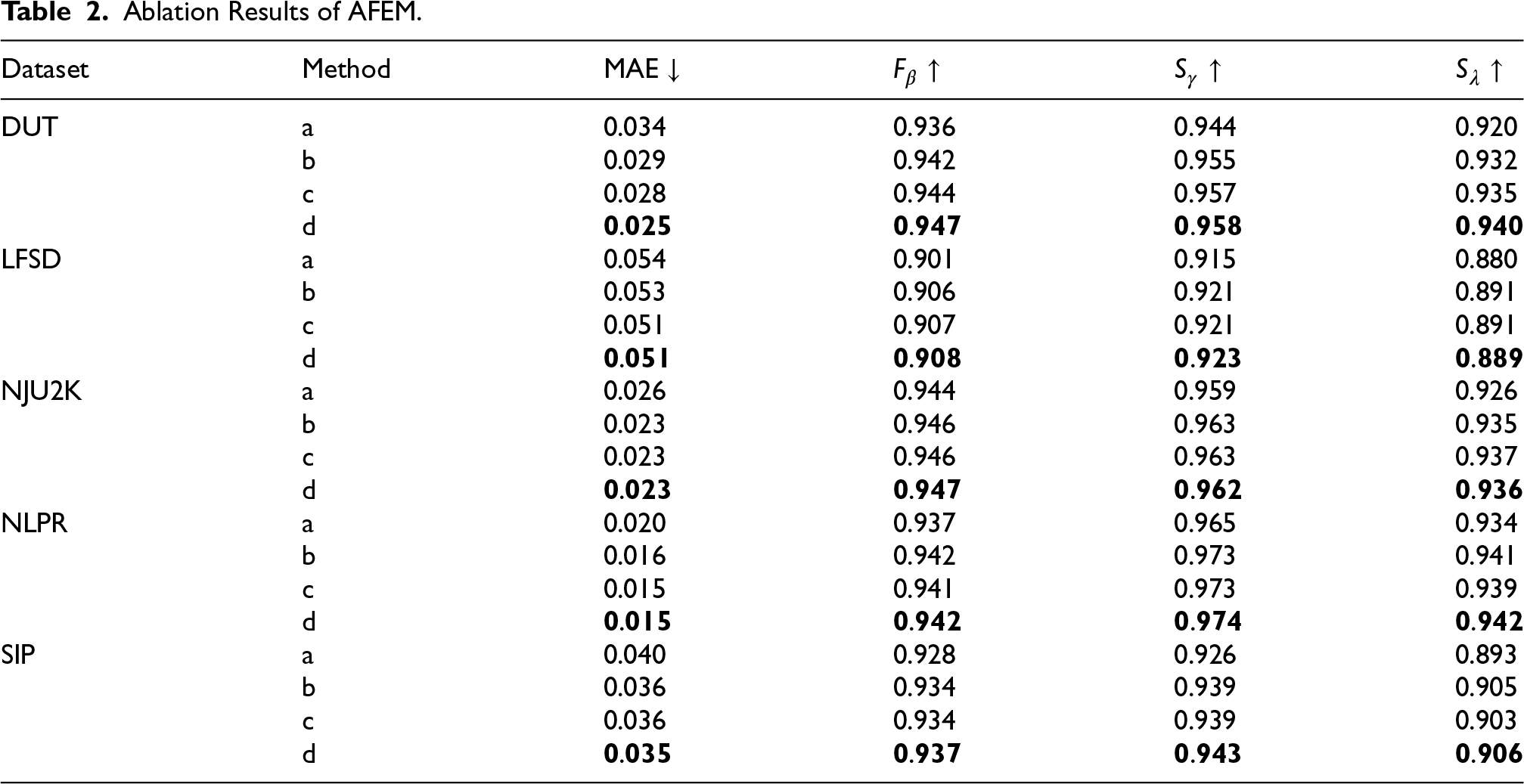

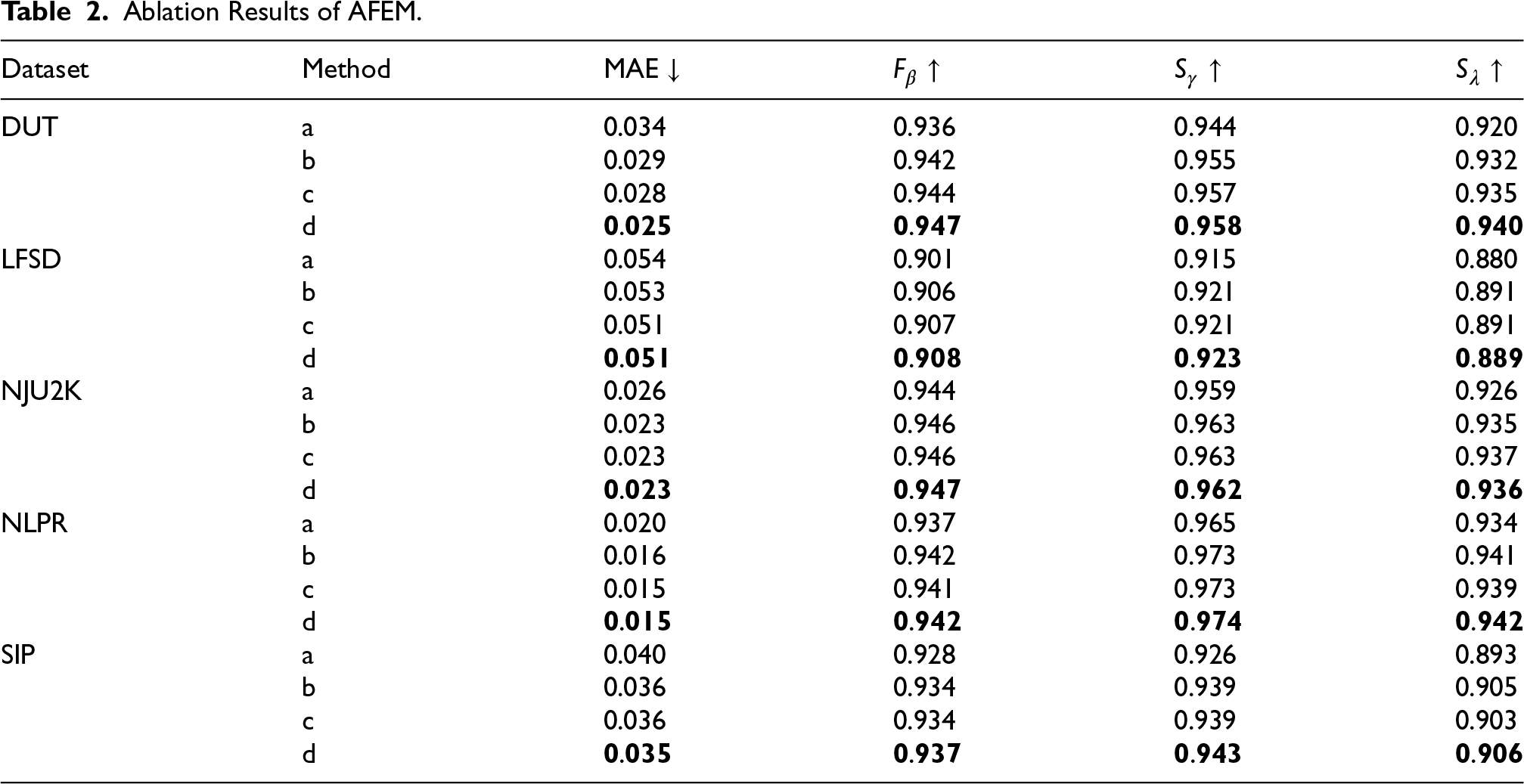

To evaluate the effectiveness of the main components of MHINet, ablation experiments are used to analyze the importance of each part. First, the effectiveness of AFEM is verified with the following variants: (a) a baseline model that uses only channel attention (CA) to fuse the RGB and depth features of the current layer and does not use the MFIM; (b) an AFEM that uses CA to cross-fuse the RGB and depth features of the current layer; (c) an AFEM that uses CA to cross-fuse the RGB and depth features of the current layer and additionally applies the adaptive fusion strategy to the RGB and depth attention features; (d) the AFEM used in this article, which also fuses the current-layer features with the adjacent high-level features of the RGB and depth streams.

According to the results in Table 2, the performance of model a is relatively low on every dataset; in particular, its MAE is higher on the DUT and SIP datasets, indicating that using CA alone to fuse RGB and depth features cannot make full use of the complementary information of the two modalities. With cross-fusion, model b improves over model a on all datasets, indicating that cross-fusion better exploits the complementary information of RGB and depth images. When the adaptive fusion strategy is added in model c, performance continues to improve on datasets such as DUT and LFSD, indicating that adaptive weighting enhances the expressive ability of the fused features. However, the improvement on the LFSD and NJU2K datasets is relatively limited, possibly because the features of these datasets are simple and the gain from adaptive fusion is limited. Finally, model d, the version proposed in this article, fuses current-layer features with adjacent higher-level features and achieves the best results on all datasets; in particular, the MAE drops significantly on the DUT and NLPR datasets. The fusion of multi-level features helps the model capture richer contextual information and significantly enhances its ability to handle complex scenes. In summary, performance improves steadily as the module configuration is refined, verifying the effectiveness of the proposed AFEM.

Ablation Results of AFEM.


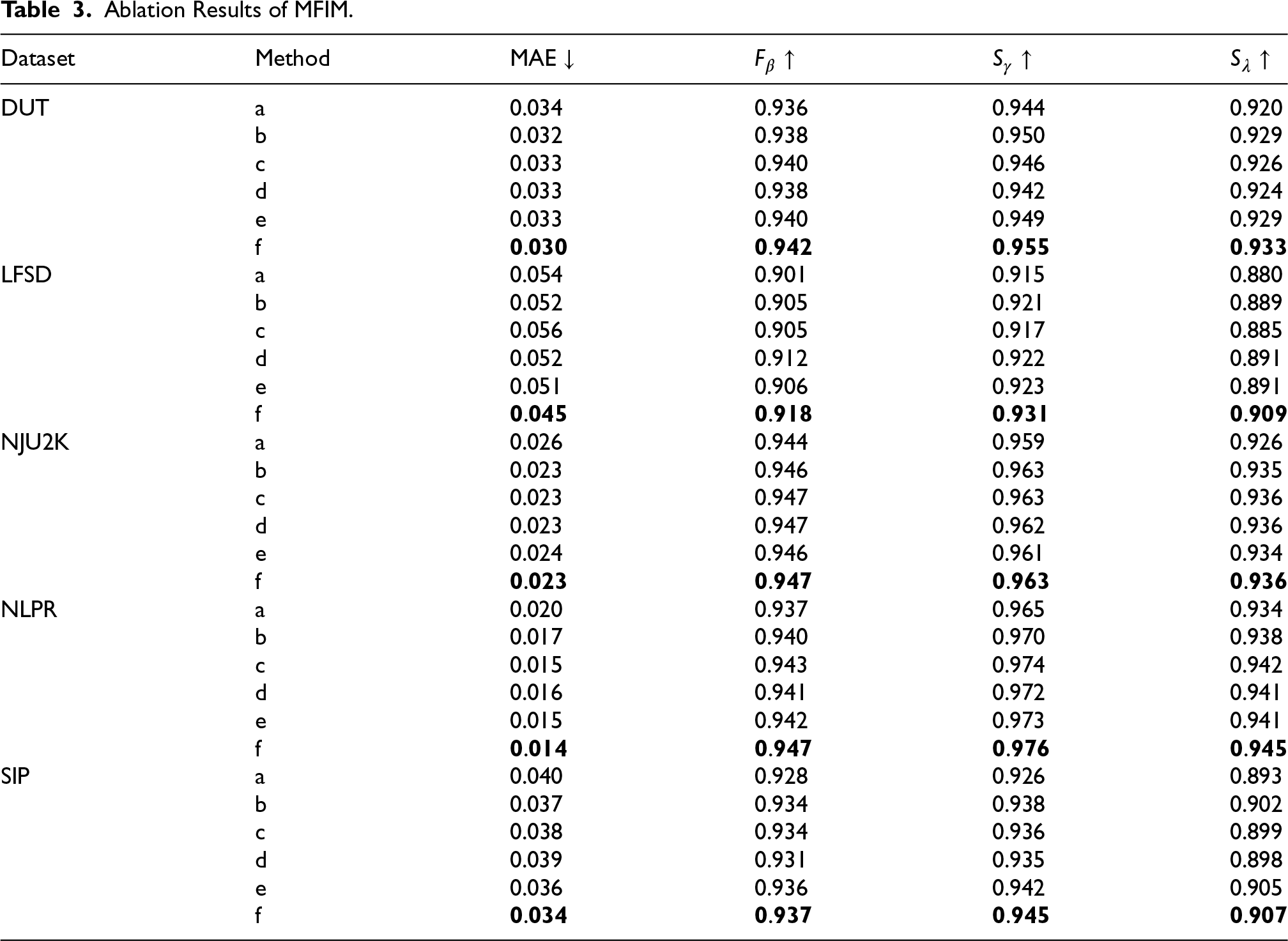

To evaluate the effectiveness of MFIM, the following variants are compared: (a) the baseline model; (b) an MFIM that uses only DIM; (c) an MFIM that uses only UIM; (d) an MFIM that uses DIM in the lowermost layer and UIM in the uppermost layer; (e) an MFIM that uses DIM and UIM in the middle two layers, respectively; (f) the MFIM used in this article, which uses DIM or UIM for all layers.

According to the results in Table 3, the performance of model a is relatively low on all datasets, especially on the SIP dataset, where the MAE reaches 0.040. This indicates that, without the MFIM, the model is clearly lacking in its ability to capture features at different levels, resulting in weak overall performance. In contrast, model b (DIM only) and model c (UIM only) both improve performance. For model b in particular, the F-measure and E-measure on the DUT and NJU2K datasets improve significantly, indicating that DIM can better capture depth features in these scenes. However, the MAE of model c increases slightly on the LFSD and SIP datasets, possibly because UIM does not perform as well as DIM in processing complex depth information. Model d uses DIM in the bottom layer and UIM in the top layer. Compared with b and c, its performance is stable on the NJU2K and SIP datasets but is not significantly improved on the other datasets, indicating that applying the two interaction modules only at the extreme levels has a limited impact on overall performance. Model e uses DIM and UIM in the middle layers, which further improves performance on the DUT and NJU2K datasets, with gains in both F-measure and E-measure. This shows that multiple feature interactions at the intermediate levels capture feature information at different scales more effectively, making the model perform better in these complex scenes. Finally, model f, the version used in this article, uses DIM or UIM for all layers and performs best. On the NLPR and DUT datasets in particular, the MAE drops to 0.014 and 0.030, respectively, and all metrics reach their best values. This shows that applying the combined DIM and UIM strategy at every level gives full play to the complementary advantages of multi-level features, so that the model performs well on various datasets and shows stronger robustness and generalization in complex scenarios. In summary, the experimental results verify the effectiveness of the proposed MFIM.

Ablation Results of MFIM.

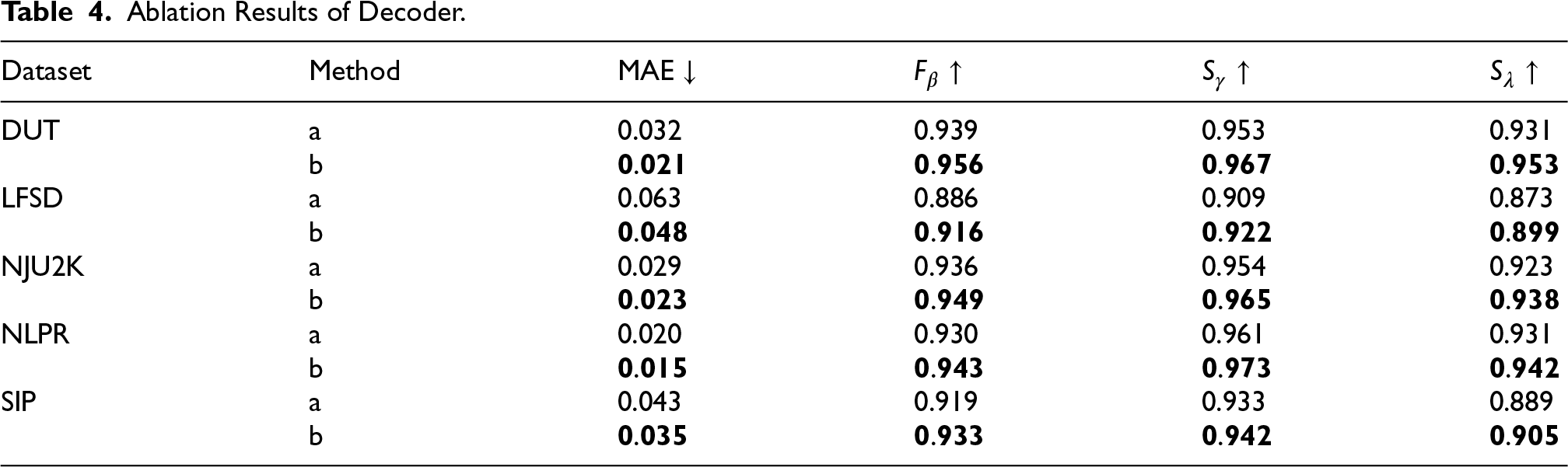

Finally, the effectiveness of the decoder design is verified with two variants: (a) a baseline model using a single-layer residual block as the decoder; (b) the decoder used in this article.

According to the results in Table 4, the performance of baseline model a is relatively weak on all datasets, especially on the LFSD dataset, where the MAE reaches 0.063, indicating that a single-layer residual block struggles to restore the edge details of the image. The baseline also performs relatively poorly in terms of F-measure and E-measure. The decoder used in this article (model b) shows a significant improvement. On the DUT and NJU2K datasets, the MAE is reduced to 0.021 and 0.023, respectively, and both F-measure and E-measure improve significantly. Moreover, on the SIP dataset, the MAE drops from 0.043 to 0.035, and the S-measure increases from 0.933 to 0.942. In short, the comparison shows that the proposed decoder reduces the noise caused by upsampling and significantly improves the model’s detail recovery and overall prediction accuracy.

Ablation Results of Decoder.

This article proposes a straightforward yet effective framework to tackle the difficulties of cross-modal feature fusion and multi-scale object detection in RGB-D SOD. First, a cross-modal AFEM is designed, which dynamically adjusts the fusion weights of RGB and depth features through the channel attention mechanism and an adaptive fusion strategy to improve feature expression. Second, an MFIM is proposed to integrate high-level and low-level features and improve multi-scale object detection. In addition, the decoder further improves detail recovery by using residual convolution blocks to reduce noise. The proposed framework achieves excellent performance on five public datasets, significantly improving the accuracy of saliency prediction and demonstrating the effectiveness of the method.

However, the proposed method also has some limitations. Although depth features provide complementary information to RGB features and improve model performance, the quality of depth maps is limited by the resolution and accuracy of the sensors. As a result, redundant features may arise when fusing with RGB features, which not only wastes computational resources but also interferes with the model’s ability to capture key information. Moreover, when the salient object is very small, the model may have difficulty distinguishing it from the background, especially when the resolution is low or the target is far from the camera. Small targets may prevent the model from extracting salient features effectively, leading to missed detections or false alarms.

For future work, more effective fusion strategies to avoid redundant features can be designed. Additionally, exploring higher-resolution enhancement techniques and focus strategies for specific targets could improve the model’s ability to detect small objects.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Competing Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.