Abstract

In the age of rapidly expanding textual data, extracting meaningful insights poses a significant challenge. To address this, we introduce TopS-Key, an advanced framework for automatic keyphrase extraction that integrates natural language processing, principal component analysis (PCA), and a fuzzy decision-making method, the fuzzy technique for order preference by similarity to ideal solution (Fuzzy TOPSIS). Our approach enhances traditional preprocessing by incorporating fuzzy string matching and normalization. To identify the most important semantic keyphrases, we calculate 10 feature scores and apply PCA to reduce the dimensionality of these features while preserving essential information. The Fuzzy TOPSIS method is used to rank keyphrases, treating keyphrase candidates as alternatives and principal components as evaluation criteria. Shannon entropy-based weighting is applied to determine the significance of each criterion in the Fuzzy TOPSIS process. We validate our framework on widely recognized datasets, including DUC 2001, SemEval 2017 Task 10, and Inspec, using similarity and threshold functions. Precision, recall, and F1 score are analyzed while adjusting thresholds to extract the top 3, 5, and 10 keyphrases. Experimental results show that our TopS-Key framework consistently surpasses existing methods across all datasets, demonstrating superior performance in keyphrase extraction. Furthermore, the TopS-Key model shows significant potential for applications in automated text summarization, information retrieval, and enhanced semantic document analysis, opening avenues for further exploration. Future research could explore alternative weighting methods for criteria in Fuzzy TOPSIS and apply the framework to other domains with unique challenges in keyphrase extraction.

Keywords

Introduction

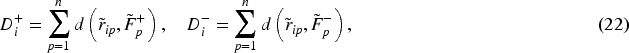

In this era of exponentially growing textual data, extracting meaningful knowledge is a monumental challenge. The internet is becoming increasingly populated with blog posts, news articles, research publications, forum posts, and social media posts from millions of people (Ortiz-Ospina, 2019), as shown in Figure 1.

Data Growth of Both Structured & Unstructured Data Worldwide. Source of Figure 1: https://twitter.com/timothy_hughes/status/619075227021090817.

Identifying descriptive keywords and keyphrases manually for each document is impractical due to the massive amount of text generated daily. Automatic keyword extraction can instead pull keywords or keyphrases from large collections of text, providing insight into the topics being discussed. The term keyphrase refers to a phrase that sums up the main points discussed in a document (Siddiqi & Sharan, 2015). In other words, keyphrases are words and phrases that define the contents of the document precisely and compactly.

Because keyphrases provide a concise yet precise summary of a document’s contents, they can be used for a variety of purposes. A list of keyphrases can serve as a quick indicator of a document’s relevance, helping readers determine whether it meets their needs. Good keyphrases can also help users find relevant documents by supplementing full-text indexing. Although keyphrases offer well-known advantages, only a small percentage of documents include them. To address this need, research is being conducted on automated approaches to keyphrase extraction.

In the research domain, authors provide keywords in their publications to represent the main semantic topics. Used with a search mechanism, these keywords help readers find other related papers in the relevant literature (Siddiqi & Sharan, 2015). Finding relevant documents through keywords has become easier since automated search methods emerged.

Keyphrase extraction is a fundamental natural language processing (NLP) task (Song et al., 2023b) that identifies and extracts keyphrases from a document so that vital information can be summarized. The majority of existing keyphrase generation programs approach this as a supervised machine learning classification task, learning from labeled keyphrases how true keyphrases differ from other possible candidates (Zhang, 2008).

Our paper proposes a novel method for extracting keyphrases from text. First, we preprocess the text to find potential keyphrases using standard NLP preprocessing techniques, augmented with fuzzy string matching and normalization. Next, we compute feature scores for each potential keyphrase: term frequency (TF), inverse document frequency (IDF), TF–IDF (Ramos, 2003), phrase length, noun phrase density, position score, a semantic score from the latent Dirichlet allocation (LDA) model (Onan et al., 2016), and three scores based on bidirectional encoder representations from transformers (BERT) embeddings (Devlin et al., 2018), namely cosine similarity, Euclidean distance, and Manhattan distance. Then, to reduce the number of features while retaining the information they contain, we apply principal component analysis (PCA). Finally, we apply the fuzzy technique for order preference by similarity to ideal solution (Fuzzy TOPSIS) to rank the potential keyphrases, which are treated as alternatives. Principal components (PCs) serve as the criteria, and the weight of each criterion is determined using Shannon entropy-based weighting, which quantifies the degree of uncertainty or disorder within the system. The integration of fuzzy logic allows for handling the inherent uncertainty and imprecision in the evaluation process, enhancing the robustness of the ranking. We examined precision, recall, and F1 score as the similarity threshold changes on all three datasets and calculated their rates of decrease. We also implemented other state-of-the-art approaches on our datasets, compared their average precision, recall, and F1 scores with those of our method, and found that our method outperformed them.

Our approach to keyphrase extraction addresses several limitations and research gaps found in traditional methods, resulting in a more effective and comprehensive solution. A significant shortcoming in many existing techniques is their inability to handle variations in language, such as synonyms, abbreviations, or alternative phrasing. Methods that require exact matches fail to extract keyphrases that are expressed with such variations. To overcome this, our approach incorporates fuzzy string matching during preprocessing, enabling the identification of keyphrases even when there are variations in wording. This ensures a more exhaustive extraction of relevant key terms, which is often overlooked in traditional approaches. Traditional keyphrase extraction methods also often rely on a limited set of features, which can lead to biased or incomplete results. We address this by considering 10 different feature scores for each potential keyphrase, which evaluate factors such as frequency, positional importance, semantic similarity, and contextual relevance. By adopting this multidimensional feature analysis, our approach provides a more comprehensive and balanced evaluation of each keyphrase, addressing the gap where single-feature-based methods fall short.

The challenge of handling numerous features, which can introduce noise and reduce the efficiency of extraction processes, is often overlooked. To resolve this, we used PCA to condense the 10 feature scores into a smaller, more manageable set of PCs. This step retains the most relevant information while reducing redundancy and noise, ensuring that keyphrase rankings are driven by essential features. It addresses the issue of feature complexity that many traditional methods struggle with.

In many keyphrase extraction models, the assignment of weights to different features is either arbitrary or based on manual heuristics, which can lead to inconsistency and bias. Our approach eliminates this subjectivity by applying Shannon entropy-based weighting, which calculates the importance of each feature based on its variability in the data. This ensures that the feature weights are determined objectively, improving the reliability of the ranking process. This data-driven method of weighting is an important advancement over conventional techniques that rely on subjective decisions.

Moreover, keyphrase extraction often encounters challenges such as ambiguity, synonymy, and contextual variations in keyphrase meanings. Ambiguity arises when a keyphrase holds multiple possible interpretations depending on its usage context, while synonymy refers to the existence of semantically similar or equivalent terms expressed differently. Contextual differences further complicate the relevance and importance of keyphrases, as the same term may carry varying significance across different domains or textual settings. Our method specifically addresses these challenges through the integration of Fuzzy TOPSIS and Shannon entropy. The use of Fuzzy TOPSIS introduces a robust mechanism for handling ambiguity and imprecision, as fuzzy logic enables the model to assess the closeness of keyphrases to both ideal and anti-ideal solutions without relying on exact, crisp distinctions. This allows for more flexible and nuanced evaluation, accommodating varied interpretations and meanings of keyphrases. Shannon entropy weighting further complements this by objectively assigning weights to features based on their information content and variability, ensuring that no single feature disproportionately influences the ranking process. This data-driven weighting captures contextual variability by giving higher importance to discriminative features in different domains, allowing the model to adapt dynamically to varying textual contexts. Together, Fuzzy TOPSIS and Shannon entropy create a balanced, context-aware framework that effectively mitigates ambiguity, recognizes synonymous expressions, and accounts for contextual differences in keyphrase extraction.

A common limitation of existing keyphrase extraction methods is their inability to effectively balance multiple criteria, often leading to an overemphasis on certain features while neglecting others. This imbalance can result in suboptimal rankings, reducing the accuracy and relevance of the extracted keyphrases. To address this, we employ the Fuzzy TOPSIS, which enhances the traditional TOPSIS approach by incorporating fuzzy logic. This allows for the modeling of uncertainty and imprecision inherent in the evaluation process. By comparing potential keyphrases to both an ideal and an anti-ideal solution, Fuzzy TOPSIS ensures a more nuanced and balanced ranking, providing a comprehensive evaluation across multiple criteria while maintaining objectivity.

Beyond achieving state-of-the-art performance, our proposed keyphrase extraction framework offers substantial practical utility across various domains. In information retrieval systems and academic search engines, accurate keyphrase identification enhances indexing, improves search relevance, and facilitates research summarization. Additionally, the adaptability of our approach makes it ideal for recommendation systems, where keyphrase-based profiling supports personalized content delivery. Its robustness across different text domains further enables applications in news aggregation, content categorization, and educational tools requiring reliable, interpretable keyphrase extraction.

The structure of the paper is as follows: Section 2 reviews relevant literature and related work. Section 3 details the methodology behind the proposed approach. To illustrate its functionality, Section 4 presents a step-by-step example. The experimental setup, datasets, software implementation, and feasibility are discussed in Section 5. Section 6 covers threshold sensitivity analysis, ablation studies, comparative evaluations, and statistical performance assessments. In Section 7, we analyze the results and explore the limitations and challenges of the approach. Finally, in Section 8, we discuss the conclusion and future scope of the methodology.

Literature Survey

The keyphrase extraction task was introduced by Turney, who defined it as “the automatic selection of important and topical phrases from the body of a document” (Turney, 2000). Keyphrase extraction methods have advanced steadily over the past two decades, from traditional approaches to technologies based on deep learning. Automatically extracting relevant keywords from text can be accomplished using various techniques, ranging from simple ones, such as counting the number of different words in the text, to more complex ones, such as graph-based and supervised methods. We discuss these methods below.

Statistical Approach

A statistical approach to keyphrase extraction has evolved from foundational statistical measures to sophisticated language models, resulting in more nuanced and accurate methods of identifying keyphrases.

In keyphrase extraction, TF is the most influential statistical approach. Ramos (2003) used TF–IDF to select query expansion terms from documents. Further improvements to the statistical approach have been made using other methods. Campos et al. (2018) proposed a statistical approach based on the case, sentence position, TF, and dispersion of keyphrases. Rose et al. (2012) combined the degree of words, the frequency of words, and degree-to-frequency ratios into an unsupervised algorithm that can be applied to any domain. Sarkar et al. (2010) incorporated TF–IDF into the feature vectors used as input to neural network models. Liu et al. (2019a) used another statistical method, the LDA model, combined with multifeature weighting to extract keywords. Omuya et al. (2021) enhanced PCA for feature selection, improving classification performance by optimizing feature representation.

Graph-Based Approach

Through the evolution of graph-based models, several advancements have been achieved in keyword extraction. Many algorithms build upon the foundational algorithms HITS (Hung et al., 2010) and PageRank (Joshi & Patel, 2018). One example is TextRank (Mihalcea & Tarau, 2004), which represents text as a graph and ranks terms according to their centrality within the graph. The PositionRank algorithm (Florescu & Caragea, 2017) uses graph-based ranking, positional information, and various heuristics to examine the content and position of words in a document to identify potential keyphrases. The RAKE algorithm (Rose et al., 2012) creates a graph wherein nodes represent words and edges signify relationships between them. More recently, graph-based methods that use NLP techniques have been developed. FRAKE (Zehtab-Salmasi et al., 2021) employs a combination of textual techniques and graph centralities to extract keywords, and KECNW (Biswas et al., 2018; Chen et al., 2019) extracts keywords by exploiting features of the word co-occurrence graph. Recent innovations include SDRank (Xu et al., 2025), which refines graph-based rankings by incorporating both local and global dependencies. Additionally, HCUKE (Xu et al., 2024) enhances graph-based ranking by integrating hierarchical clustering to group related phrases before applying graph centrality-based scoring.

Supervised Approach

In supervised keyword extraction models, the keywords in the dataset are annotated so that a model can be trained on the labeled data. Zhang et al. (2006) used a pretrained support vector machine (SVM) classification model for keyword/keyphrase extraction. Zhang (2008) treated keyword extraction as string labeling and applied the conditional random fields model to extract keywords. Hong and Zhen (2012) proposed an extended TF method that used linguistic features of a keyword and an SVM model for keyword extraction and optimization of the results. Another approach uses BERT and ExEm to represent candidate keywords as vectors and performs classification by treating keyphrase extraction as a sequence labeling problem. A recent advancement, BRYT (Ahmed et al., 2024), builds upon statistical principles by incorporating multiple ranking strategies and ChatGPT.

Other Approaches

Other approaches include fuzzy adaptive resonance theory neural networks (Munoz, 1997) and neural networks that represent words as continuous vectors. Pennington et al. (2014) utilized matrix factorization techniques to create word vectors based on global statistics of word co-occurrence. Devlin et al. (2018) proposed the BERT model, Liu et al. (2019b) proposed RoBERTa, and Grootendorst (2020) proposed KeyBERT, which uses BERT embeddings and cosine similarity. Automatic extractive text summarization (EATS) generates keywords by topic modeling and performs summarization using clustering schemes driven by genetic algorithms (Hernández-Castañeda et al., 2022). Zhou et al. (2024) incorporated structure and position information in keyphrase extraction, and AdaptiveUKE (Liu et al., 2024) used gated topic modeling. IKDSumm (Garg et al., 2024) integrates keyphrases into BERT for summarizing disaster tweets. Recent studies have adapted TOPSIS for text ranking, including extractive summarization (Pati & Rautray, 2024) and robust hierarchical decision-making (Corrente & Tasiou, 2023), demonstrating its potential for keyphrase extraction. Additionally, optimization techniques for tokenization and memory management have been explored to enhance NLP processing efficiency in multilingual applications (Dodić et al., 2025). Fuzzy TOPSIS has also been explored in ranking and evaluation tasks, demonstrating its adaptability to text analysis. For example, Wang (2022) integrated NLP techniques with Fuzzy TOPSIS for product evaluation.

Various techniques, including statistical methods, graph-based approaches, supervised learning, embedding-based models, PCA, TOPSIS, and fuzzy TOPSIS, have been individually employed for different aspects of keyword extraction and ranking. However, our approach differs by integrating multiple methodologies in a unified framework, leveraging their complementary strengths to enhance keyword extraction effectiveness and robustness.

Proposed Methodology

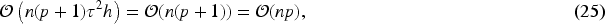

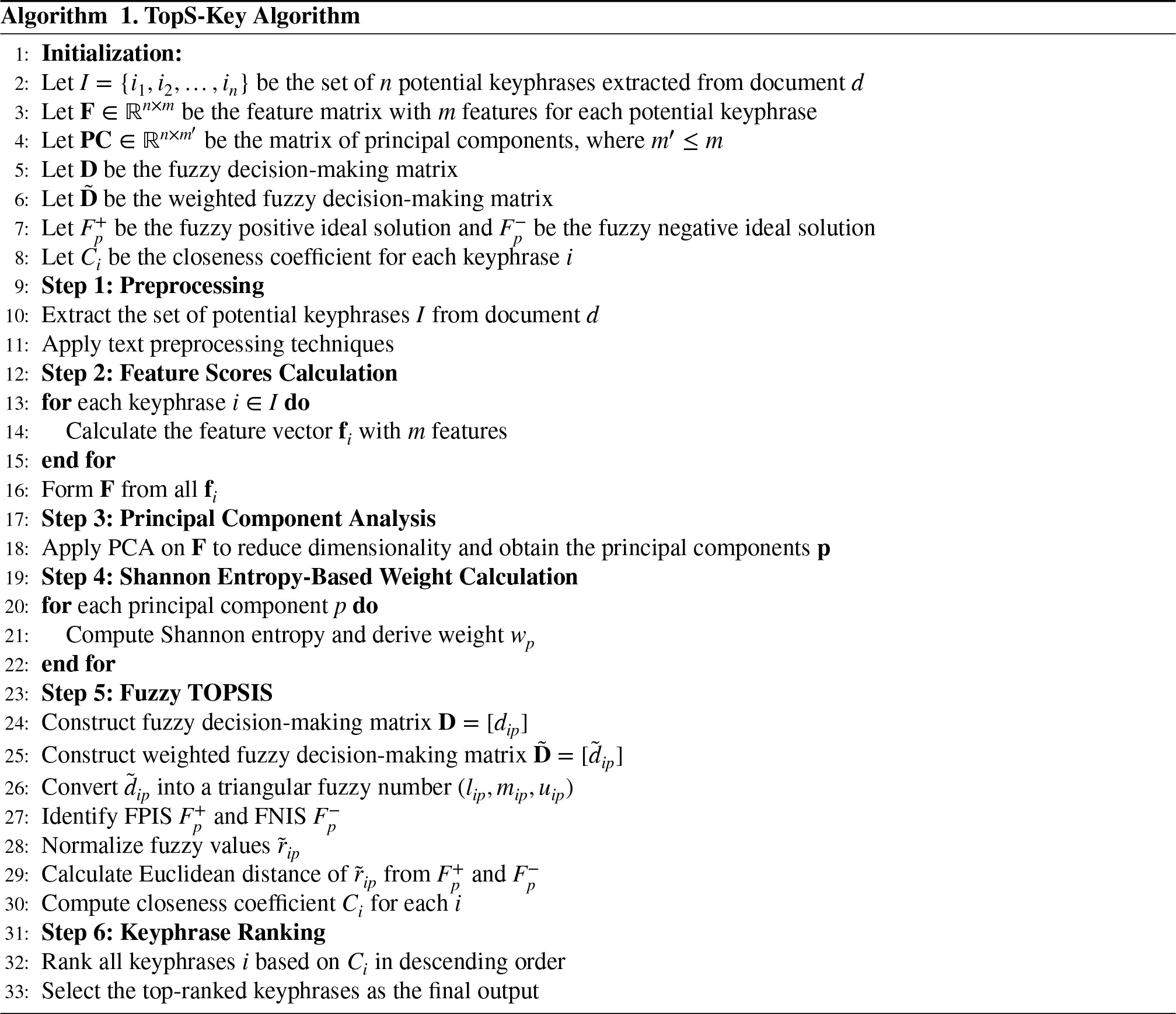

In this work, we propose a methodology that employs optimal combinations of NLP techniques, Shannon entropy, PCA, and Fuzzy TOPSIS. The flowchart in Figure 2 depicts the steps involved in implementing the proposed model. Our methodology is divided into four major steps: data preprocessing, feature scoring, dimensionality reduction using PCA, and keyword ranking using the Fuzzy TOPSIS method with Shannon entropy-based weights. Each of these steps is explained in detail in the following subsections.

Workflow of the Proposed TopS-Key Framework Integrating Preprocessing, Feature Scoring, PCA-Based Dimensionality Reduction, Entropy Weighting, and Fuzzy TOPSIS Ranking. Note. PCA = principal component analysis; Fuzzy TOPSIS = fuzzy technique for order preference by similarity to ideal solution.

As a first step, we preprocess the data. Along with the standard preprocessing steps, including tokenization, punctuation removal, stopword removal, POS tagging, and noun phrase extraction, we also perform fuzzy string matching and normalization.

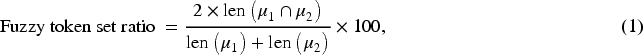

In fuzzy string matching (Hanani et al., 2021), strings are compared for similarity while tolerating minor variations between them. We use fuzzy string matching because it is flexible: it accommodates differences in spelling, spacing, or slight alterations of strings. The token set ratio, calculated using equation (1), takes into account the intersection and union of token sets to measure the similarity between strings. We set the similarity threshold to 90 to enforce a relatively strict level of similarity between strings. Lowering the threshold would relax the matching and admit more phrases, increasing the number of potential keyphrases and introducing noise in the form of unrelated noun phrases.
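As a rough illustration of a token set ratio with a threshold of 90, the sketch below uses only the Python standard library. It follows the general recipe used by common fuzzy-matching libraries (compare the shared tokens with each string's shared-plus-unique tokens) and is not claimed to be the exact form of equation (1). The function names `token_set_ratio` and `is_match` are hypothetical.

```python
from difflib import SequenceMatcher


def _ratio(a: str, b: str) -> float:
    """Character-level similarity of two strings on a 0-100 scale."""
    return 100.0 * SequenceMatcher(None, a, b).ratio()


def token_set_ratio(s1: str, s2: str) -> float:
    """Order-insensitive similarity built from shared and unshared tokens."""
    t1, t2 = set(s1.lower().split()), set(s2.lower().split())
    if not t1 or not t2:
        return 0.0
    common = " ".join(sorted(t1 & t2))
    combined1 = (common + " " + " ".join(sorted(t1 - t2))).strip()
    combined2 = (common + " " + " ".join(sorted(t2 - t1))).strip()
    return max(
        _ratio(common, combined1),
        _ratio(common, combined2),
        _ratio(combined1, combined2),
    )


def is_match(s1: str, s2: str, threshold: float = 90.0) -> bool:
    """Assumed usage: accept a pair only if it reaches the threshold."""
    return token_set_ratio(s1, s2) >= threshold


same_tokens = is_match("keyphrase extraction", "extraction keyphrase")
unrelated = is_match("keyphrase extraction", "neural network")
```

Under this recipe, word order does not matter, and a phrase whose tokens are a subset of another phrase's tokens scores 100. That is one reason a strict threshold is still needed to keep unrelated phrases out.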

In the normalization step, each extracted noun phrase is split into individual words and joined back together with single spaces, which removes any extra whitespace between words. After preprocessing, we obtain a list of potential keyphrases, which are further analyzed to identify the actual keyphrases.
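The whitespace normalization described above can be sketched in a single line of Python. The helper name `normalize_phrase` is hypothetical.

```python
def normalize_phrase(phrase: str) -> str:
    """Split on any run of whitespace and rejoin with single spaces."""
    return " ".join(phrase.split())


normalized = normalize_phrase("  fuzzy   TOPSIS \t method ")
```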

In this step, we generate feature scores for each potential keyphrase. We consider 10 features: TF, IDF, TF–IDF, phrase length, noun phrase density, position score, LDA semantic score, BERT embedding cosine similarity, BERT embedding Euclidean distance, and BERT embedding Manhattan distance. We discuss these features in detail below.

TF: We generate the first score using a statistical frequency-based approach, the TF method. The score is calculated by counting how many times each potential keyphrase appears in the document and then normalizing by the number of potential keyphrases in the document.

IDF: We generate the second score using IDF, which measures how much information a term provides by comparing the total number of documents with the number of documents containing the term. In other words, it measures how rare a phrase is across a set of documents, giving more weight to terms that are less frequent across all documents and thus potentially more informative. The IDF score is calculated for each potential keyphrase using equation (2).
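A minimal sketch of the two scores, under stated assumptions: TF is normalized by the total number of candidate occurrences in the document, and IDF uses the plain logarithmic form log(N / df) without smoothing. Equation (2) may differ in such details. The function names are hypothetical.

```python
import math


def term_frequency(phrase, document, candidates):
    """Occurrences of `phrase` divided by occurrences of all candidates.

    Assumption: `phrase` is one of `candidates`, and matching is a
    case-insensitive substring count.
    """
    text = document.lower()
    counts = {c: text.count(c.lower()) for c in candidates}
    total = sum(counts.values())
    return counts[phrase] / total if total else 0.0


def inverse_document_frequency(phrase, corpus):
    """log(N / df); returns 0.0 when the phrase occurs in no document."""
    n_docs = len(corpus)
    df = sum(1 for doc in corpus if phrase.lower() in doc.lower())
    return math.log(n_docs / df) if df else 0.0


doc = "fuzzy topsis ranks keyphrases. fuzzy topsis uses entropy weights."
candidates = ["fuzzy topsis", "entropy weights"]
corpus = [doc, "pca reduces dimensionality", "entropy weights are objective"]

tf_score = term_frequency("fuzzy topsis", doc, candidates)
idf_score = inverse_document_frequency("fuzzy topsis", corpus)
```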

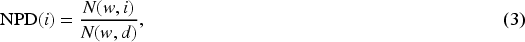

TF–IDF: We generate the third score using TF–IDF, which combines how often a phrase appears in a specific document with how rare the phrase is across all documents. It gives higher importance to terms that appear frequently in a specific document but not in many other documents.

Phrase Length: The fourth feature is phrase length, which simply counts the number of words in the noun phrase. It indicates the complexity or specificity of the phrase.

Noun Phrase Density: We generate the fifth score by calculating noun phrase density, which measures how much of the document is covered by the noun phrase as a proportion of the total document length. The noun phrase density is calculated for each potential keyphrase using equation (3).
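The three scores above can be sketched as follows. The density formula here (occurrences times phrase length, divided by the number of words in the document) is one plausible reading of the description and is an assumption; equation (3) may be defined differently. The function names are hypothetical.

```python
def tf_idf(tf: float, idf: float) -> float:
    """Product of the term frequency and inverse document frequency scores."""
    return tf * idf


def phrase_length(phrase: str) -> int:
    """Number of whitespace-separated words in the phrase."""
    return len(phrase.split())


def noun_phrase_density(phrase: str, document: str) -> float:
    """Share of the document's words covered by occurrences of the phrase."""
    doc_words = document.lower().split()
    if not doc_words:
        return 0.0
    occurrences = document.lower().count(phrase.lower())
    return occurrences * phrase_length(phrase) / len(doc_words)


document = "fuzzy topsis ranks candidate phrases and fuzzy topsis needs weights"
length = phrase_length("fuzzy topsis")
density = noun_phrase_density("fuzzy topsis", document)
```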

Position: We generate the sixth score by measuring the position score, which captures the location of a noun phrase within a document relative to the total length of the document. It reflects how early or late a noun phrase appears, giving importance to phrases that appear earlier in the text. The idea is that phrases appearing earlier in a document may be more relevant, especially in documents such as research papers, where key information is often introduced early.

LDA model: The seventh score is the semantic score, calculated using the LDA model, an unsupervised, probabilistic topic model. For each group of potential keyphrases, a corpus is created and converted into a bag-of-words representation. The LDA model is then constructed with two topics. It uncovers latent topics and clusters phrases with similar meanings into specific topics according to how they are used in the paragraph. Then, two types of probability distributions are generated:

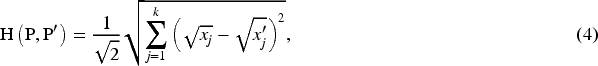

(i) The probability distribution of topics within a paragraph, which shows the likelihood that a paragraph belongs to a particular topic; and (ii) the probability distribution of phrases within a topic. To calculate both of these probabilities, we use the Dirichlet distribution (Onan et al., 2016). We measure the similarity between these two probability distributions by calculating the Hellinger distance between them using equation (4):
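For reference, the standard Hellinger distance between two discrete distributions can be sketched as below. This assumes both distributions are defined over the same support and each sums to 1; how the two LDA distributions are aligned before comparison is not specified here, and the toy values are illustrative only.

```python
import math


def hellinger_distance(p, q):
    """Hellinger distance between two discrete distributions.

    Ranges from 0 (identical) to 1 (no overlap in support).
    """
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    squared = sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q))
    return math.sqrt(squared) / math.sqrt(2)


# Hypothetical two-topic distributions.
distance = hellinger_distance([0.7, 0.3], [0.4, 0.6])
```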

Finally, we calculate the semantic score using equation (5).

BERT model: Using the BERT model, we compute mean-pooled BERT embeddings for both the paragraph and each potential keyphrase. These embeddings capture the semantic meaning of each phrase and let us quantify the semantic similarity, or relevance, of a potential keyphrase in the context of the paragraph. The mean-pooled embedding of the paragraph is obtained by taking the mean of its token-level embeddings, which gives a fixed-size vector that condenses the semantic content of the entire paragraph. We then calculate three measures between the two embeddings.

Euclidean Distance: It measures the straight-line distance between the embeddings of the noun phrase and the document using equation (6). The closer the noun phrase is to the document in the embedding space, the more semantically similar they are considered to be.

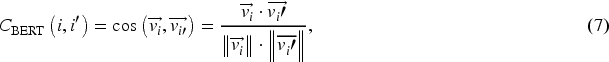

Cosine Similarity: It is the degree of similarity between the BERT embedding of each potential keyphrase and that of the document. It is calculated using equation (7):

Manhattan Distance: It is also known as L1 distance, which sums the absolute differences between the components of the embeddings of the noun phrase and document. It is measured using equation (8):
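Mean pooling and the three measures can be sketched with plain Python lists. The toy 3-dimensional vectors below stand in for real BERT token embeddings, which would come from a pretrained model and have hundreds of dimensions. The function names are hypothetical.

```python
import math


def mean_pool(token_vectors):
    """Average token-level vectors into one fixed-size vector."""
    n = len(token_vectors)
    return [sum(column) / n for column in zip(*token_vectors)]


def euclidean_distance(u, v):
    """Straight-line (L2) distance between two vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def manhattan_distance(u, v):
    """Sum of absolute component differences (L1 distance)."""
    return sum(abs(a - b) for a, b in zip(u, v))


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)


# Toy "token embeddings" (illustrative values only).
paragraph_tokens = [[1.0, 0.0, 2.0], [3.0, 4.0, 0.0]]
phrase_tokens = [[2.0, 2.0, 4.0]]

paragraph_vec = mean_pool(paragraph_tokens)
phrase_vec = mean_pool(phrase_tokens)

euclid = euclidean_distance(phrase_vec, paragraph_vec)
manhattan = manhattan_distance(phrase_vec, paragraph_vec)
cosine = cosine_similarity(phrase_vec, paragraph_vec)
```

Note that the two distances decrease as a phrase gets closer to the document, whereas cosine similarity increases, so the direction of each score matters when they are combined downstream.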

As a result, we obtain 10 feature scores for each potential keyphrase.

The selection of 10 distinct features for scoring potential keyphrases is based on a thorough review of prior research and the need to capture diverse, complementary aspects of keyphrase relevance. These features span statistical, syntactic, structural, and semantic dimensions to ensure a well-rounded evaluation. Statistical features such as TF, IDF, and TF–IDF capture the importance and uniqueness of terms within a document and across the corpus. Syntactic and structural features, such as phrase length, noun phrase density, and position score, reflect common patterns of keyphrases, which often appear early, are moderately long, and follow noun phrase structures. Semantic features add contextual depth: LDA scores align phrases with the document’s topics, while BERT-based cosine similarity evaluates how well phrases represent the overall content. Euclidean and Manhattan distances provide additional ways to measure semantic closeness, complementing the cosine score. This set of features is chosen to limit redundancy while maximizing relevance and coverage; any remaining correlation among features is handled by the PCA step. Reducing the set may miss important cues, while adding more can introduce noise. Thus, the 10 features balance theoretical soundness and practical effectiveness for keyphrase scoring.

Next, we apply PCA to reduce the number of features while retaining most of the information they provide (Esposito et al., 2022; Marukatat et al., 2023).

PCA transforms the data into a set of linearly uncorrelated variables called PCs, thereby reducing the dimensionality. The components are ordered so that the first few retain most of the variance. In effect, PCA compresses the information into a smaller number of components by transforming the data into a new coordinate system that maximizes variance. This allows essential patterns to be retained while noise or less important information is discarded.

First, we standardize the feature scores using equation (9):

Afterward, we compute the covariance matrix of the standardized data using equation (10):
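The standardization and covariance steps can be sketched in plain Python. This assumes z-score standardization and the sample covariance (division by n - 1); the exact forms of equations (9) and (10) are not legible here, so these choices are assumptions. The function names are hypothetical.

```python
import math


def standardize(matrix):
    """Z-score each column: subtract its mean and divide by its sample std."""
    n = len(matrix)
    columns = list(zip(*matrix))
    means = [sum(col) / n for col in columns]
    stds = [
        math.sqrt(sum((x - m) ** 2 for x in col) / (n - 1))
        for col, m in zip(columns, means)
    ]
    # Constant columns (std == 0) are mapped to 0.0 instead of dividing by zero.
    return [
        [(x - m) / s if s else 0.0 for x, m, s in zip(row, means, stds)]
        for row in matrix
    ]


def covariance_matrix(matrix):
    """Sample covariance between every pair of columns."""
    n = len(matrix)
    columns = list(zip(*matrix))
    means = [sum(col) / n for col in columns]
    return [
        [
            sum((a - ma) * (b - mb) for a, b in zip(col_a, col_b)) / (n - 1)
            for col_b, mb in zip(columns, means)
        ]
        for col_a, ma in zip(columns, means)
    ]


# Rows are candidate keyphrases, columns are feature scores (toy values).
scores = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
z = standardize(scores)
cov = covariance_matrix(z)
```

Because the two toy columns are perfectly correlated, every entry of the resulting covariance matrix is 1 after standardization, which is exactly the kind of redundancy PCA is meant to remove.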

Then, we compute the eigenvalues and eigenvectors of this covariance matrix. Eigenvectors indicate the new PCs’ directions, while eigenvalues illustrate how much variance they capture.

We then order the resulting components by the amount of variance they capture. The eigenvalue of a PC represents the variance associated with it, so the explained variance ratio (EVR) of a particular PC is calculated from its eigenvalue using equation (11):

Finally, the data is then projected onto the PCs, creating a new representation of the data as

Thus, we obtain a set of new features, the PCs, which are weighted linear combinations of the original features. Since our goal in applying PCA is to reduce dimensionality by keeping the components that account for the majority of the variance and ignoring those that contribute less, we evaluate the cumulative variance using equation (12) to determine how many PCs are needed to reach a given percentage of the variance. For our analysis, we select PCs until the cumulative variance reaches 90%. The number of PCs required to explain 90% of the variance can vary depending on the document.
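The full PCA procedure (standardize, covariance, eigendecomposition, EVR, cumulative 90% cutoff, projection) can be sketched with NumPy. This is a minimal sketch under the assumptions of z-score standardization and sample covariance; the function name `pca_by_variance` and the random toy data are hypothetical.

```python
import numpy as np


def pca_by_variance(features, target=0.90):
    """Project rows onto the fewest PCs whose cumulative EVR reaches `target`."""
    x = np.asarray(features, dtype=float)
    std = x.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # leave constant columns at zero after centering
    z = (x - x.mean(axis=0)) / std

    cov = np.cov(z, rowvar=False)
    eigenvalues, eigenvectors = np.linalg.eigh(cov)  # ascending order

    order = np.argsort(eigenvalues)[::-1]  # largest variance first
    eigenvalues = np.clip(eigenvalues[order], 0.0, None)
    eigenvectors = eigenvectors[:, order]

    evr = eigenvalues / eigenvalues.sum()
    cumulative = np.cumsum(evr)
    # First position where the cumulative variance reaches the target.
    k = min(int(np.searchsorted(cumulative, target)) + 1, len(evr))

    return z @ eigenvectors[:, :k], evr, k


# Toy data: 6 candidate keyphrases with 10 feature scores each.
rng = np.random.default_rng(0)
features = rng.normal(size=(6, 10))
projected, evr, k = pca_by_variance(features)
```

Since PCA is applied per document, `k` is recomputed for each document's feature matrix rather than fixed in advance.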

PCA is used in our framework to address potential redundancy and correlations among the selected features. By transforming the original features into uncorrelated PCs, PCA preserves essential information while enhancing robustness and computational efficiency. This dimensionality reduction step ensures that the ranking process remains stable and efficient, free from overlapping signals, making it a crucial component of our methodology. Although PCA captures only linear relationships, we mitigate this limitation through several design choices. Specifically, PCA is applied independently for each document, which helps retain local contextual and statistical patterns specific to that document. Additionally, our framework constructs a modular feature space comprising semantic, statistical, and structural features, prior to dimensionality reduction, which ensures that key nonlinear relationships are already embedded in the input space. Finally, the downstream fuzzy TOPSIS-based ranking with entropy-adaptive weights introduces nonlinear decision modeling, effectively compensating for any minor loss of nonlinear dependencies during PCA. These measures collectively ensure that PCA remains both reliable and well-suited to our keyphrase extraction architecture.

Multi-criteria decision-making (MCDM) approaches incorporate multiple, often conflicting, criteria to assess and select from a variety of alternatives. In this work, we apply Fuzzy TOPSIS (Nădăban et al., 2016), which incorporates fuzzy logic to handle uncertainty and imprecision in the decision-making process, to rank the potential keyphrases and identify the best ones.

Following dimensionality reduction, we employ an enhanced variant of the Fuzzy TOPSIS technique to rank the candidate keyphrases. Fuzzy TOPSIS extends the conventional TOPSIS method by incorporating fuzzy logic principles, which enable it to effectively manage the inherent uncertainty and imprecision present in linguistic assessments and heterogeneous feature evaluations. The procedure comprises several key stages: initially, the construction of a fuzzy decision matrix derived from the evaluated feature values; subsequently, the calculation of feature weights utilizing Shannon entropy to ensure objectivity; next, the identification of the fuzzy positive ideal solution (FPIS) and fuzzy negative ideal solution (FNIS); and finally, the computation of the relative closeness coefficient for each candidate keyphrase.
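The four stages listed above can be sketched in a few lines of Python. This is a generic triangular-fuzzy-number TOPSIS, not the exact variant used in the framework: the fuzzification rule (`spread`), the assumption that all criteria are benefit criteria scaled to [0, 1], and the choice of FPIS and FNIS as the largest upper bound and smallest lower bound per criterion are all assumptions made for illustration.

```python
import math


def fuzzify(x, spread=0.1):
    """Hypothetical fuzzification: crisp score in [0, 1] -> (lower, modal, upper)."""
    return (max(0.0, x - spread), x, min(1.0, x + spread))


def vertex_distance(a, b):
    """Vertex method: root mean squared difference of the three vertices."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)) / 3.0)


def fuzzy_topsis(scores, weights, spread=0.1):
    """Return one closeness coefficient per alternative (row of `scores`)."""
    n_criteria = len(weights)
    # Stages 1 and 2: build the fuzzy decision matrix and apply the weights.
    weighted = [
        [tuple(w * v for v in fuzzify(x, spread)) for x, w in zip(row, weights)]
        for row in scores
    ]
    # Stage 3: ideal and anti-ideal solutions per criterion.
    fpis = [(max(row[j][2] for row in weighted),) * 3 for j in range(n_criteria)]
    fnis = [(min(row[j][0] for row in weighted),) * 3 for j in range(n_criteria)]
    # Stage 4: closeness coefficient from distances to FPIS and FNIS.
    closeness = []
    for row in weighted:
        d_pos = sum(vertex_distance(row[j], fpis[j]) for j in range(n_criteria))
        d_neg = sum(vertex_distance(row[j], fnis[j]) for j in range(n_criteria))
        total = d_pos + d_neg
        closeness.append(d_neg / total if total else 0.0)
    return closeness


# Three candidate keyphrases scored on two criteria (toy values).
scores = [[0.9, 0.8], [0.5, 0.5], [0.1, 0.2]]
weights = [0.6, 0.4]
closeness = fuzzy_topsis(scores, weights)
ranking = sorted(range(len(scores)), key=lambda i: closeness[i], reverse=True)
```

PC scores can be negative, so in practice they would need to be rescaled (for example by min-max scaling) before a fuzzification of this kind is applied.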

To further refine the standard Fuzzy TOPSIS framework for the keyphrase extraction task, we introduce several significant improvements. Rather than assigning feature weights manually, we leverage Shannon entropy to automatically derive feature importance scores, ensuring a data-driven and unbiased weighting strategy based on the intrinsic variability of each feature across all candidate keyphrases. Additionally, to mitigate redundancy and retain only the most informative features, we employ PCA, treating the resulting PCs as criteria within the Fuzzy TOPSIS model. This approach enhances both computational efficiency and ranking effectiveness.

In our model, each candidate keyphrase is conceptualized as an alternative, systematically evaluated against the PCA-derived feature criteria. We incorporate a diverse set of syntactic, semantic, structural, and statistical features, ensuring their normalization and consistent integration within the fuzzy decision matrix. This uniform treatment facilitates equitable comparisons across varying feature types. Furthermore, we design customized linguistic scales and membership functions to represent feature evaluations as fuzzy numbers, enabling more accurate modeling of the inherent ambiguity and vagueness associated with NLP and multifaceted feature interpretation.

To set up fuzzy TOPSIS, we define the alternatives, the criteria, and a weight for each criterion. The alternatives are the potential keyphrases, which we rank to obtain the predicted keyphrases. The criteria are the PCs obtained in the previous section. The criteria weights are determined using Shannon entropy-based weighting.

Shannon Entropy-Based Weighting

Shannon entropy is a concept from information theory introduced by Claude Shannon in 1948 (Shannon, 2001), which measures the amount of uncertainty or disorder in a system. To weight each feature criterion within the Fuzzy TOPSIS process systematically and impartially, we adopt Shannon entropy-based weighting. This method provides a principled, data-driven mechanism to quantify the significance of each criterion, avoiding subjective bias or manual intervention.

The fundamental strength of Shannon entropy lies in its ability to capture the inherent diversity or unpredictability of each feature across all candidate keyphrases. A feature that exhibits high variability among keyphrases conveys more discriminative information, as it better differentiates between alternatives. Conversely, a feature with low variability contributes little to the decision-making process. By applying entropy, we ensure that more informative features are prioritized through higher weights, while less informative, redundant, or homogeneous features receive proportionally lower influence.

Moreover, the entropy-based approach is highly adaptable to different datasets and feature distributions. It automatically adjusts the weights based on the statistical behavior of the features without requiring prior assumptions or domain-specific tuning. This not only enhances the objectivity and scalability of the ranking framework but also ensures that the weighting process reflects the true informational content of the features, thereby improving the overall robustness and effectiveness of keyphrase selection.
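The weighting procedure in this subsection (normalize each criterion so it sums to 1, compute its entropy, take the degree of diversification, and normalize the result) can be sketched as follows. This follows the common textbook form of the entropy weight method and is not necessarily identical to the equations used in this work. The function name `entropy_weights` and the shift to non-negative values are assumptions added because PC scores can be negative.

```python
import numpy as np


def entropy_weights(pc_scores):
    """Shannon entropy-based weights for the columns (criteria) of a matrix
    whose rows are candidate keyphrases."""
    X = np.asarray(pc_scores, dtype=float)
    m = X.shape[0]
    # Assumption: shift each column to be non-negative before normalizing.
    X = X - X.min(axis=0)
    col_sums = X.sum(axis=0)
    safe_sums = np.where(col_sums > 0, col_sums, 1.0)
    # Each column now sums to 1; a constant column is treated as uniform.
    P = np.where(col_sums > 0, X / safe_sums, 1.0 / m)
    # Entropy scaled to [0, 1], with 0 * log(0) taken as 0.
    with np.errstate(divide="ignore", invalid="ignore"):
        plogp = np.where(P > 0, P * np.log(P), 0.0)
    entropy = -plogp.sum(axis=0) / np.log(m)
    diversification = 1.0 - entropy
    total = diversification.sum()
    if total == 0:  # every criterion is uniform: fall back to equal weights
        return np.full(X.shape[1], 1.0 / X.shape[1])
    return diversification / total


# Hypothetical scores: criterion 0 is constant, criterion 1 is concentrated.
demo_scores = np.array([[1.0, 0.0], [1.0, 0.0], [1.0, 10.0]])
demo_weights = entropy_weights(demo_scores)
```

In this toy input the constant criterion has maximal entropy and receives zero weight, while the concentrated criterion receives all of it, matching the rule that lower entropy means higher weight.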

To operationalize the entropy-based weighting, we begin by normalizing each PC value such that the sum of values for a given PC across all candidate keyphrases equals 1. This normalization step ensures that the values are proportional and suitable for subsequent entropy computation. The normalization process for a PC

Then, we calculate Shannon entropy-based weights for each PC across potential keyphrases for a given document. These weights are used to assess the relative importance of each PC in determining the significance of a potential keyphrase. They help identify which PC is more informative by analyzing how diverse or uniform its values are. If a PC has high entropy, its values are more evenly spread out, indicating a high degree of uncertainty. Conversely, if a PC has low entropy, its values are more concentrated, suggesting that the information is more predictable or contains fewer variations. The formula for entropy

Then, we evaluate the degree of diversification for each

The weight of a PC is proportional to its degree of diversification. PCs with higher diversification, that is, lower entropy, are assigned higher weights because they carry more distinct information. The weight

Now, we will continue with our Fuzzy TOPSIS calculations. We first construct a fuzzy decision-making matrix

In the traditional TOPSIS method, the crisp values are used for the purpose of evaluation, which may not adequately represent the uncertainty. So to resolve this limitation, each

After the construction of the fuzzy decision matrix, we identify the FPIS and FNIS. The FPIS represents the most desirable values across all criteria, capturing the highest level of performance, whereas the FNIS represents the least desirable, capturing the lowest level of performance, denoted by

These ideal solutions will be used as the benchmark values against which all the alternatives are compared.

Then, to ensure the comparability across different criteria, which may have varying scales or units, we normalize the fuzzy values using equation (20):

Then, we calculate the distance of each alternative from both the FPIS and FNIS using the Euclidean distance formula for triangular fuzzy numbers. These distances quantify how close each alternative is to the ideal solutions, helping to determine the relative desirability of the alternatives. The distance between two fuzzy numbers

Now, the total distances from the FPIS and FNIS for each alternative

Then, we evaluate the closeness coefficient

Finally, the alternatives are ranked on the basis of their closeness coefficients

We specifically adopt the Fuzzy TOPSIS method for keyphrase ranking due to several key advantages. First, keyphrase extraction inherently involves uncertainty and imprecision, especially when assessing multiple diverse features such as syntactic, semantic, and statistical components. Traditional ranking methods may not effectively capture this vagueness. Fuzzy TOPSIS integrates fuzzy logic, allowing us to model such uncertainty more accurately by representing feature evaluations as fuzzy numbers. Second, TOPSIS inherently considers both the best (positive ideal) and worst (negative ideal) criteria values, ensuring that selected keyphrases are not only close to desirable characteristics but also far from undesirable ones. This dual consideration provides a balanced and robust decision-making framework. Furthermore, by integrating Shannon entropy-based weights, we ensure data-driven, unbiased feature importance assignment without manual intervention. The combination of Fuzzy TOPSIS and entropy weighting thus allows us to objectively and effectively rank keyphrases while handling data ambiguity and avoiding arbitrary weight selection. Despite this expressiveness, the computational overhead remains tractable.

The overall time complexity of the proposed framework can be decomposed into two components, corresponding to the contextual embedding and the downstream processing stages. Formally, the cumulative complexity is expressed as equation (24).

The first term encapsulates the BERT-based contextual embedding phase, wherein the complexity arises from the multihead self-attention mechanism that scales quadratically with the input token length

However, since both

In this section, we provide a detailed illustration of each step involved in the proposed methodology. The process begins with the preprocessing of the text data, followed by feature scoring, dimensionality reduction, and finally, keyphrase ranking.

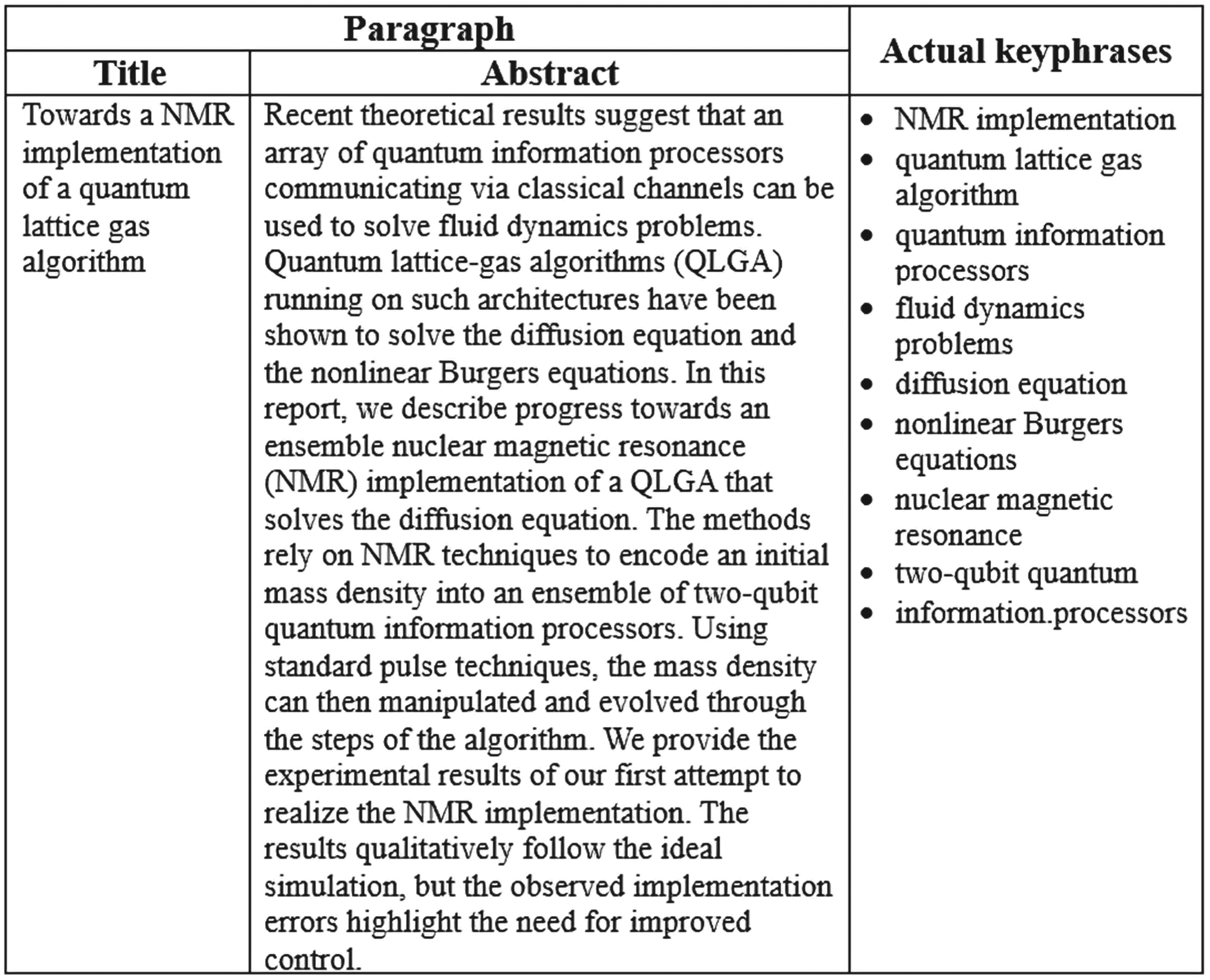

Let us consider an example with the title and abstract of a research paper (Pravia et al., 2002) as shown in Figure 3.

Paragraph and Actual Keyphrases of the First Entry of the Inspec Dataset.

Preprocessing is performed on the paragraph. We observed that after standard NLP preprocessing,

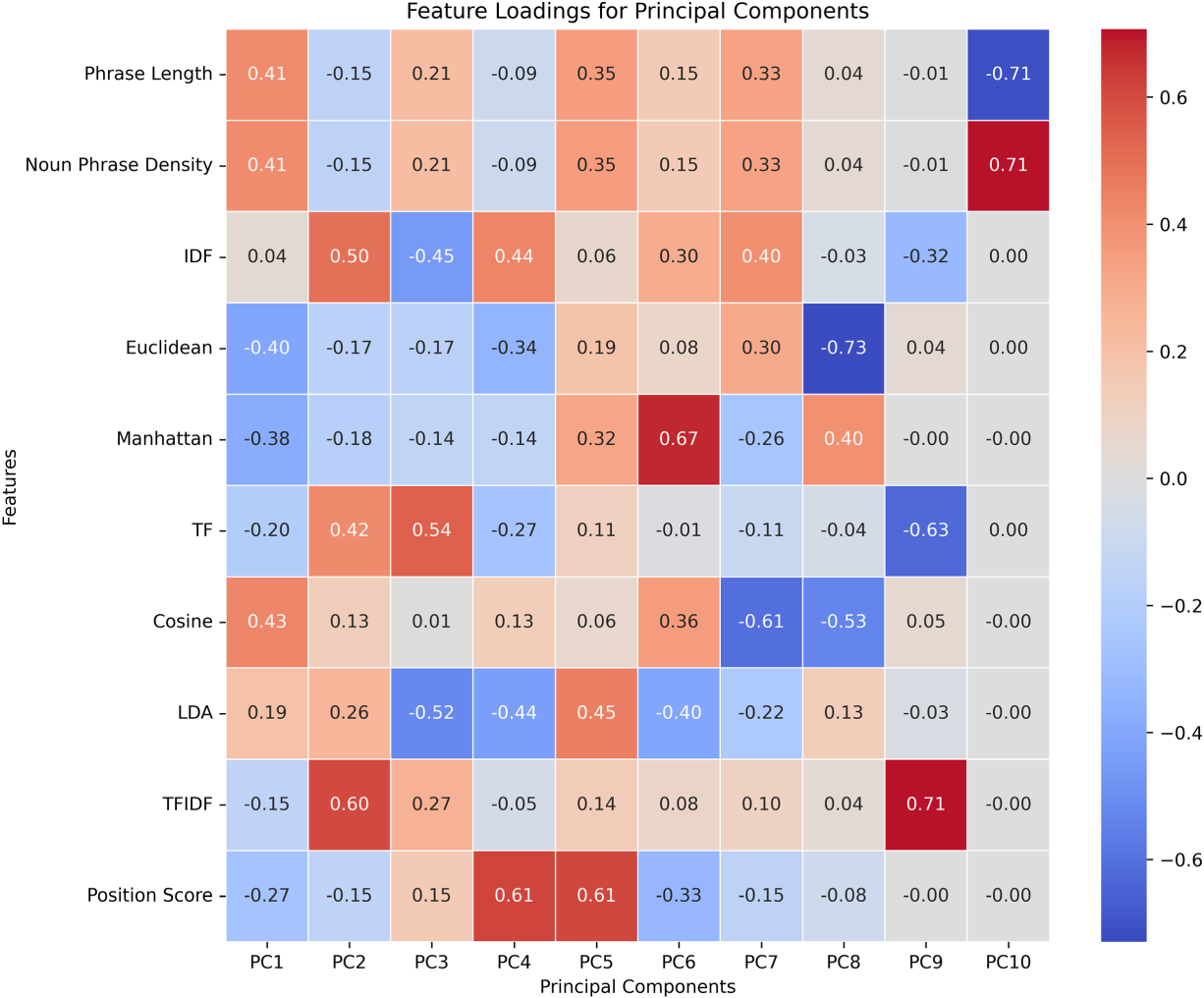

Heatmap Depicting the Influence of Features on the Principal Components.

Each cell in the heatmap corresponds to the contribution of a specific feature to a particular PC, with the color intensity indicating the magnitude of that contribution. Darker cells indicate positive values, meaning the feature contributes strongly and positively to the associated PC. Lighter cells indicate small or negative values, suggesting a weaker or negative contribution.

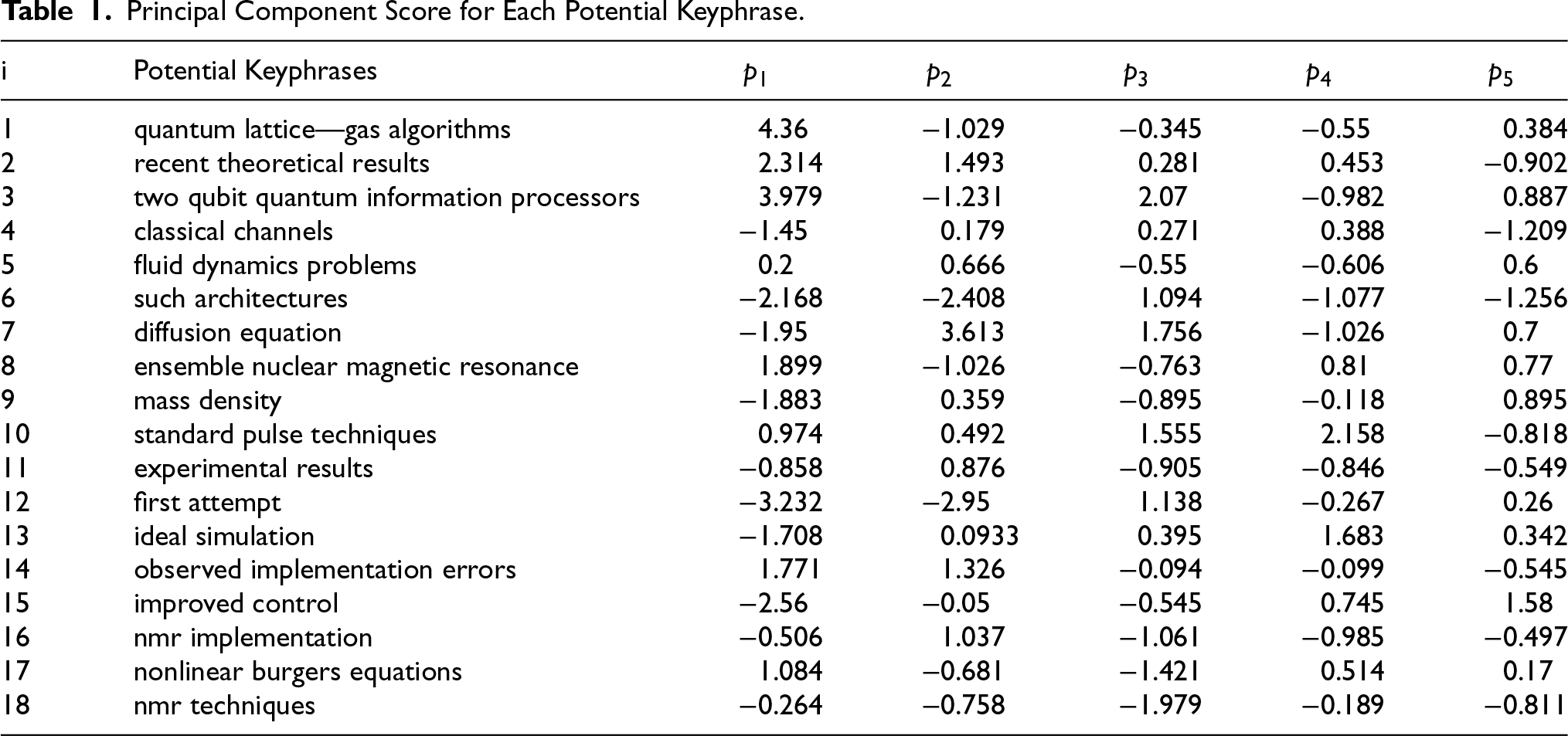

The number of PCs needed to reach at least 90% cumulative variance is selected. This ensures that the selected PCs retain a significant amount of the original variance. We have five PCs

Principal Component Score for Each Potential Keyphrase.

PCs basically represent directions of the data along which variance is maximized. The coefficients associated with these features can be positive or negative. A positive value for a

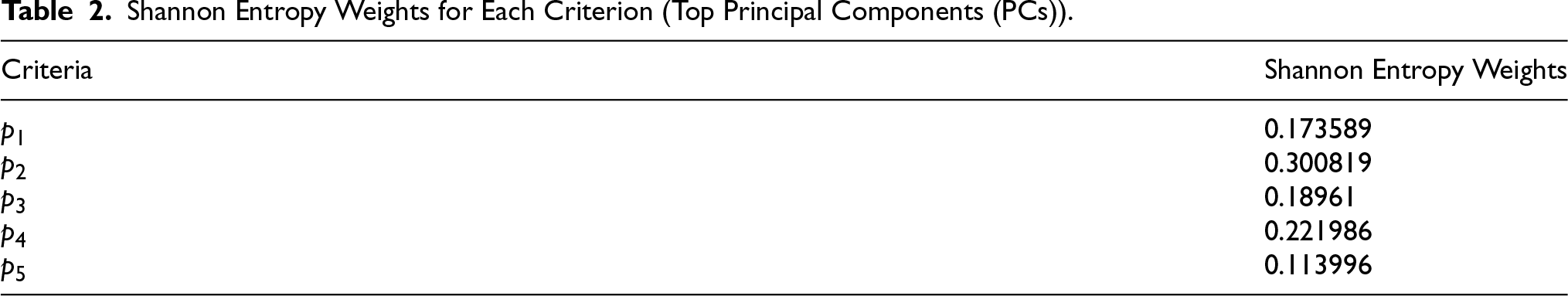

Then, we applied the MCDM technique, Fuzzy TOPSIS, to get ranks of the potential keyphrases, giving us the final keyphrases. We have 18 alternatives that we have to rank, five criteria, and using Shannon entropy-based weighting, we got weights for each

Shannon Entropy Weights for Each Criterion (Top Principal Components (PCs)).

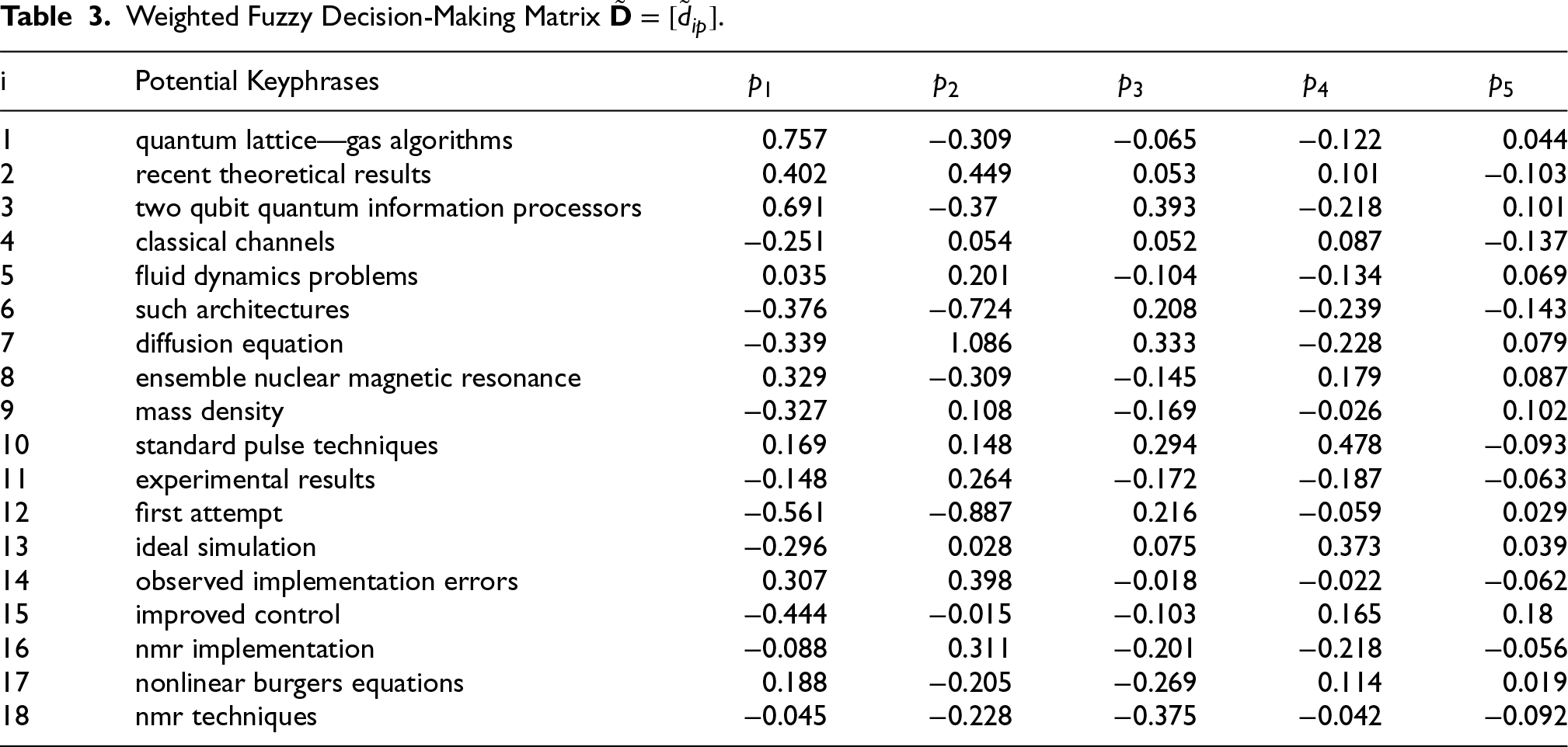

After that, we apply fuzzy TOPSIS for ranking our potential keyphrases. The fuzzy decision matrix

Weighted Fuzzy Decision-Making Matrix

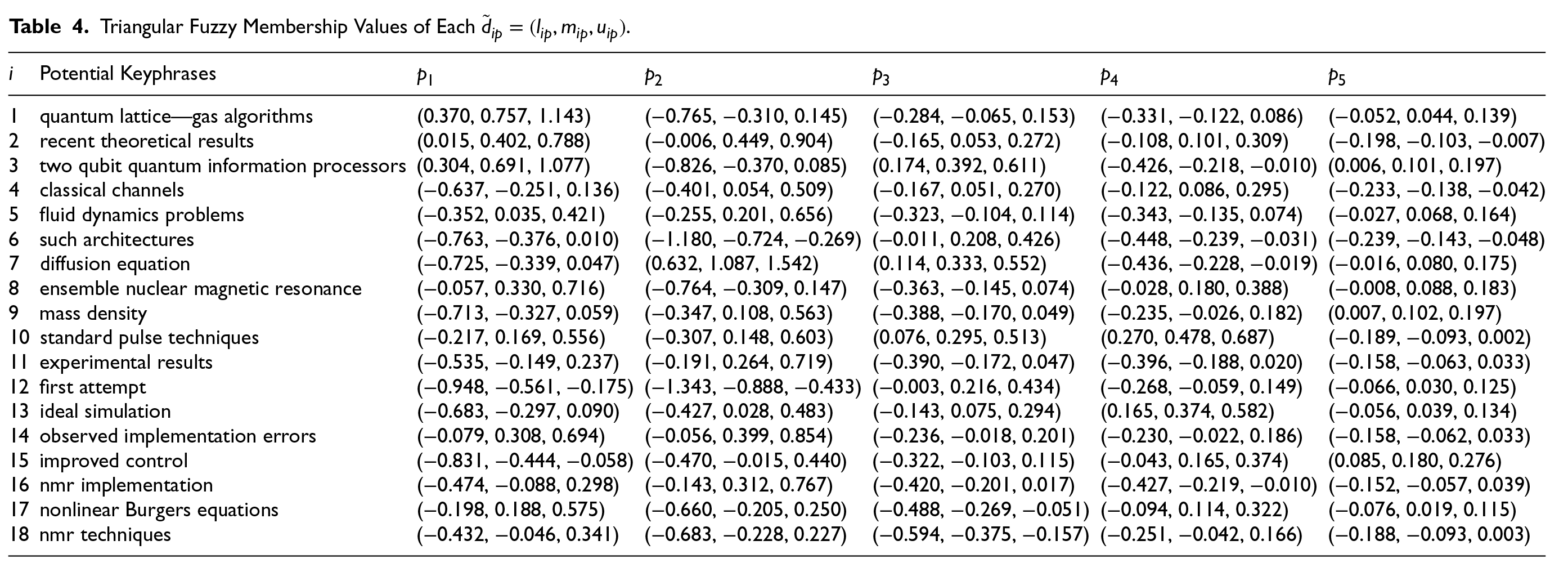

Then, we transform these crisp weighted values

Triangular Fuzzy Membership Values of Each

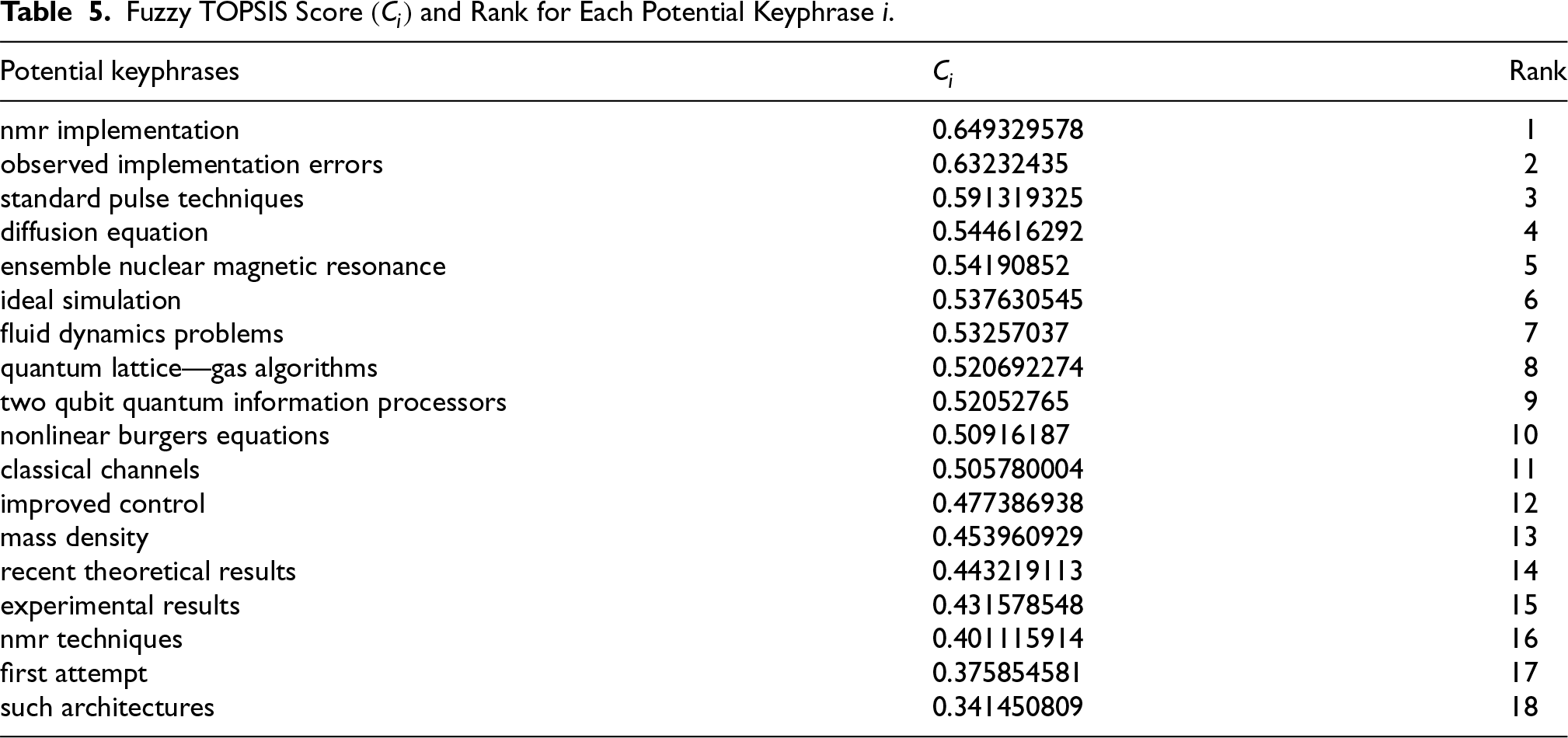

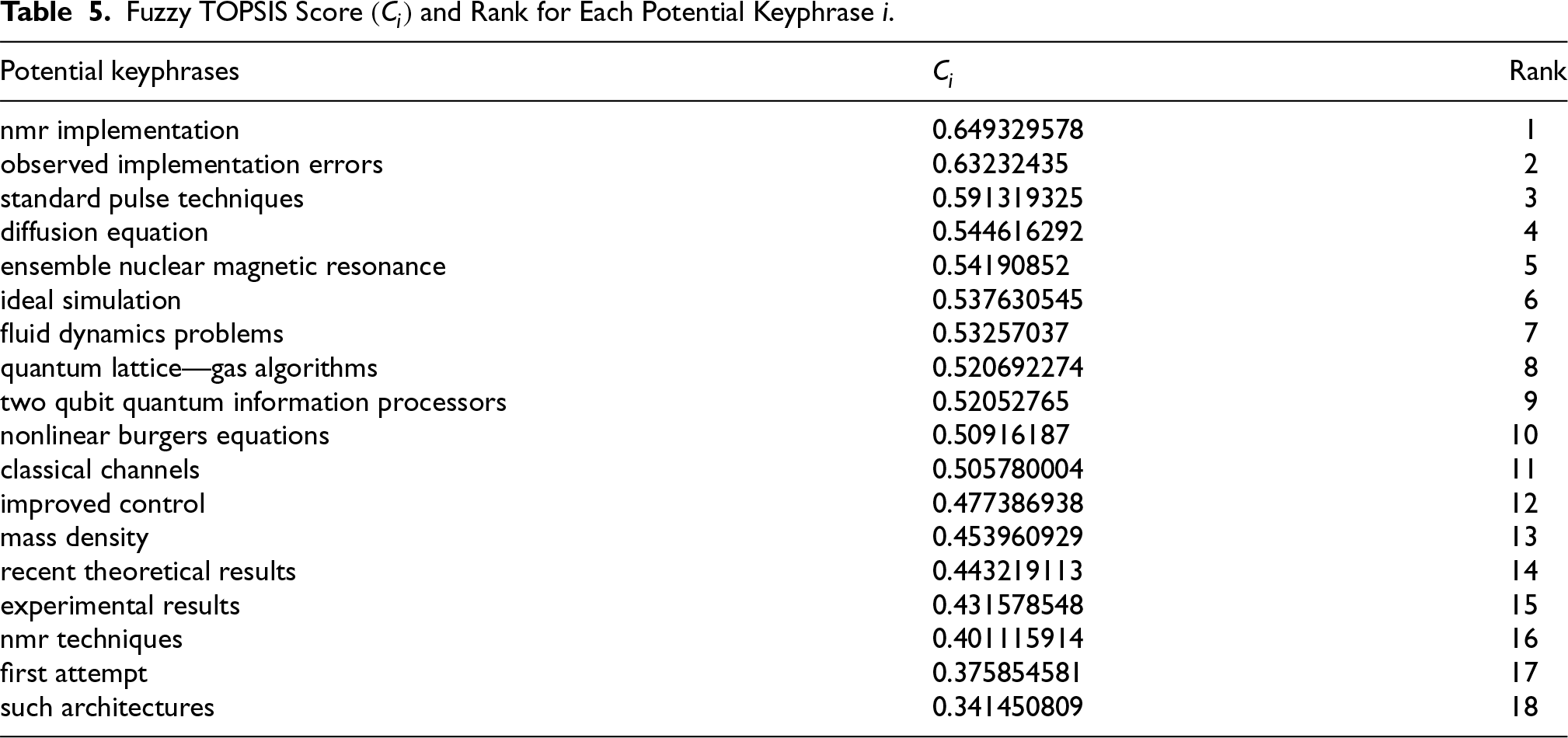

Then, we evaluate the FPIS and FNIS using equation (19), normalize the matrix using equation (20), and find the distance of each potential keyphrase from both FPIS and FNIS to evaluate how close the potential keyphrase is to the ideal solution using equations (21) and (22). Then, we finally evaluate the closeness coefficient

In this way, we are able to get ranked potential keyphrases, of which the top-ranked are considered to be the final predicted keyphrases.
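The ranking stage walked through above can be sketched generically as follows. This is a textbook-style Fuzzy TOPSIS variant under stated assumptions, not the exact procedure of this work: the symmetric triangular fuzzification with a fixed `spread`, the min-max scaling, the treatment of every criterion as a benefit criterion, and the function name `fuzzy_topsis` are all hypothetical choices made for illustration.

```python
import numpy as np


def fuzzy_topsis(crisp_scores, weights, spread=0.1):
    """Rank alternatives (rows) over benefit criteria (columns)."""
    X = np.asarray(crisp_scores, dtype=float)
    w = np.asarray(weights, dtype=float)
    # Min-max scale each criterion to [0, 1] so criteria are comparable.
    span = X.max(axis=0) - X.min(axis=0)
    span[span == 0] = 1.0
    scaled = (X - X.min(axis=0)) / span
    # Hypothetical fuzzification: crisp x becomes the triangle (x - s, x, x + s).
    tfn = np.clip(np.stack([scaled - spread, scaled, scaled + spread], axis=-1), 0.0, 1.0)
    weighted = tfn * w[None, :, None]  # shape: (alternatives, criteria, 3)
    fpis = weighted.max(axis=0)  # component-wise best values per criterion
    fnis = weighted.min(axis=0)  # component-wise worst values per criterion
    # Vertex distance between triangular fuzzy numbers, summed over criteria.
    d_pos = np.sqrt(np.mean((weighted - fpis) ** 2, axis=2)).sum(axis=1)
    d_neg = np.sqrt(np.mean((weighted - fnis) ** 2, axis=2)).sum(axis=1)
    total = d_pos + d_neg
    # Closeness coefficient; 0.5 is used when all alternatives coincide.
    closeness = np.divide(d_neg, total, out=np.full_like(total, 0.5), where=total > 0)
    return closeness, np.argsort(-closeness)


# Hypothetical input: three candidates, two equally weighted criteria.
toy_scores = np.array([[1.0, 1.0], [0.0, 0.0], [0.5, 0.5]])
closeness, ranking = fuzzy_topsis(toy_scores, weights=[0.5, 0.5])
```

A closeness coefficient near 1 means the candidate lies close to the FPIS and far from the FNIS, so candidates are ranked in descending order of this value.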

This section outlines the experimental setup used to validate the proposed methodology. It includes a description of the datasets employed, details of the software environment and computational resources, and the evaluation metrics used to assess the effectiveness and efficiency of the approach. Each of these aspects is discussed in the following subsections.

Fuzzy TOPSIS Score and Rank for Each Potential Keyphrase.

As part of the evaluation process, we use three publicly available benchmark datasets for keyphrase extraction. Note that some gold keyphrases are absent from the original text, and others are not recognized as candidate keyphrases. Therefore, extracting 100% of the gold keyphrases is impossible even in principle.

The first one is the Inspec database (Hulth, 2003), from which we retrieved 2,000 English abstracts with their titles and keywords. The abstracts come from journal papers published from 1998 to 2002 in the fields of computers and control, and information technology. Inspec has the shortest documents of the three datasets, that is, the fewest words per document.

The second dataset is SemEval 2017 Task 10 (Augenstein et al., 2017), which consists of 493 paragraphs derived from open-access ScienceDirect publications evenly distributed among computer science, materials science, and physics.

The third dataset is DUC2001 (Wan & Xiao, 2008), which comprises 308 news articles gathered from TREC-9. The articles cover 30 news topics and contain 740 words on average. DUC2001 has the longest documents of the three datasets, that is, the most words per document.

Software Implementation

The proposed methodology is implemented in Python 3.10.12 with NumPy 1.24.3, pandas 2.0.3, SciPy 1.10.1, and scikit-learn 1.3.2. Linguistic preprocessing tasks, including tokenization, lemmatization, and part-of-speech tagging, are performed using spaCy 3.5.0. All experiments are conducted on a local machine equipped with an Intel Core i5-1035G1 CPU @ 1.00 GHz, 8 GB RAM, and the 64-bit Windows 11 Home Single Language operating system. No GPU acceleration is used, underscoring the lightweight and scalable nature of the proposed approach. This setup supports the reproducibility of the research on standard computational resources.

Furthermore, the computational efficiency of the proposed methodology supports its applicability in real-time and large-scale scenarios. PCA operates on a compact set of 10 feature dimensions, ensuring minimal processing time. Similarly, Fuzzy TOPSIS is applied to a limited set of candidate phrases per document, keeping the decision process lightweight. Empirical evaluation confirms that the complete pipeline executes efficiently on a standard CPU-based system without the need for GPU acceleration or high-end hardware. For more demanding real-time applications or larger textual datasets, further optimizations such as incremental PCA and approximation techniques for fuzzy decision-making can be integrated to enhance scalability. Additionally, recent advancements in memory and computational optimization, as highlighted in Dodić and Regodić (2024), offer promising avenues to reduce resource consumption without compromising accuracy. These factors collectively demonstrate the practicality and efficiency of the proposed approach for real-world deployments.

Evaluation Metrics

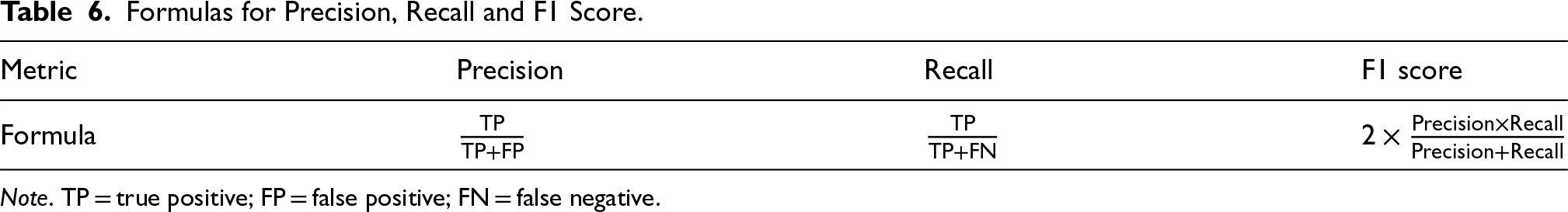

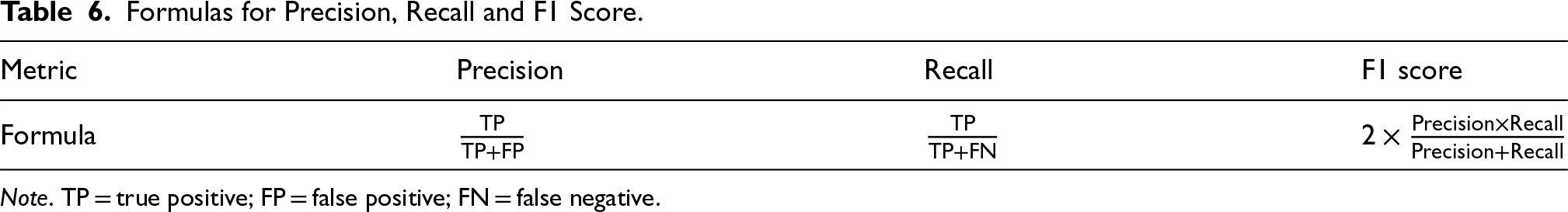

We assess the effectiveness of our proposed model, TopS-Key, using three metrics: precision, recall, and F1 score. Each keyphrase extraction

Calculating these metrics usually involves looking for exact matches. However, since we evaluate semantic similarity, the similarity and threshold functions become crucial for judging how closely predicted keyphrases match actual ones. We compute a similarity score between each actual keyphrase and each predicted keyphrase and use the threshold to decide whether the predicted keyphrase matches the actual one.

The procedure iterates over the actual keyphrases and compares each against the predicted keyphrases using a predefined similarity threshold. A true positive (TP) is counted when an actual keyphrase matches a predicted keyphrase with similarity above the threshold, and a false positive (FP) is counted when a predicted keyphrase does not match any actual keyphrase above the threshold. A false negative (FN) arises when an actual keyphrase is not matched by any predicted keyphrase above the threshold. Table 6 shows the formulas used to compute these metrics.

Formulas for Precision, Recall and F1 Score.


Note. TP = true positive; FP = false positive; FN = false negative.
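The threshold-based matching and the standard precision, recall, and F1 definitions can be sketched as below. The similarity function here is only a stand-in: it uses character overlap from Python's standard library, whereas the evaluation in this work relies on semantic similarity. The names `string_similarity` and `evaluate`, the use of "greater than or equal to" for the threshold test, and the example phrases are assumptions.

```python
from difflib import SequenceMatcher


def string_similarity(a, b):
    """Stand-in similarity in [0, 1] based on shared characters.
    The source evaluates semantic similarity; this is only a placeholder."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def evaluate(predicted, actual, threshold=0.9, sim=string_similarity):
    """Return (precision, recall, f1) under threshold-based matching."""
    # TP: actual keyphrases matched by at least one prediction.
    tp = sum(any(sim(p, a) >= threshold for p in predicted) for a in actual)
    # FP: predictions that match no actual keyphrase.
    fp = sum(not any(sim(p, a) >= threshold for a in actual) for p in predicted)
    # FN: actual keyphrases left unmatched.
    fn = len(actual) - tp
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical predictions and gold keyphrases.
predicted = ["neural network", "keyphrase extraction", "banana"]
actual = ["neural networks", "keyphrase extraction"]
lenient = evaluate(predicted, actual, threshold=0.9)
strict = evaluate(predicted, actual, threshold=1.0)
```

With the lenient threshold, the near match "neural network" counts as a hit. With the strict threshold it does not, so both precision and recall fall, which is the effect examined in the threshold analysis.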

This section presents a comprehensive analysis of the results obtained from the proposed methodology. We explore the impact of different thresholds, evaluate the contribution of individual features through an ablation study, and conduct both comparative and statistical performance analyses to benchmark our approach. Each of these aspects is detailed in the following subsections.

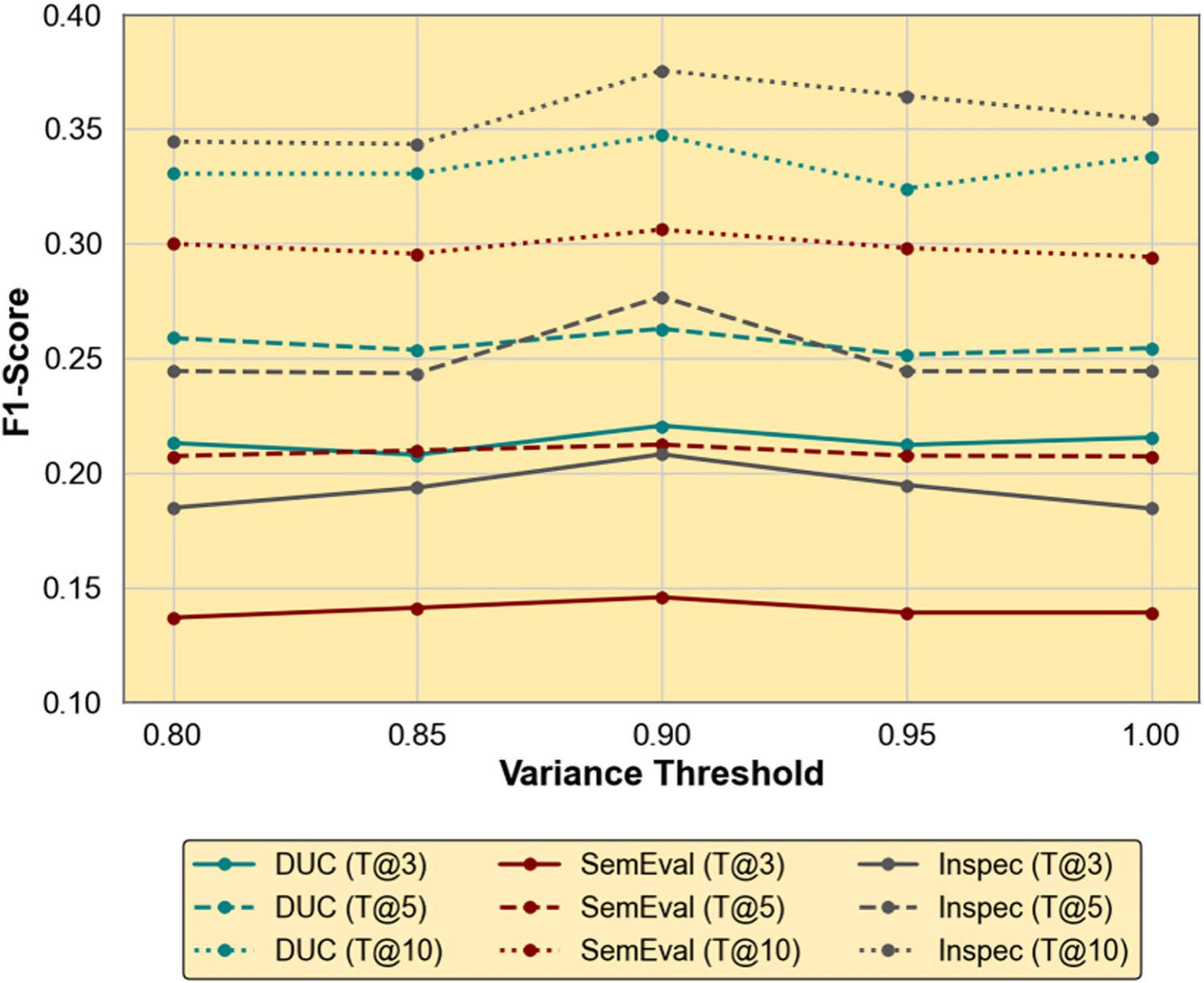

PCA Cumulative Variance Threshold Sensitivity Analysis

To evaluate the impact of dimensionality reduction on keyphrase extraction performance, we conduct a detailed sensitivity analysis by varying the variance retention threshold in PCA from 0.80 to 1.00. This investigation spans three benchmark datasets (DUC 2001, SemEval 2017 Task 10, and Inspec), each characterized by differing document structures and vocabulary distributions. Performance is assessed using the F1 score at Top@3, Top@5, and Top@10 ranks to jointly account for precision and recall across varying retrieval granularities.

Figure 5 illustrates the F1 score trajectories for each dataset and evaluation depth as a function of the retained variance. A consistent pattern emerges across all datasets: increasing the threshold from 0.80 to 0.90 results in performance gains at most top-

F1 Score Trends Across Varying Principal Component Analysis (PCA) Variance Thresholds on Three Datasets.

Beyond the 0.90 threshold, however, the F1 score either stabilizes or declines slightly. For example, increasing the threshold from 0.90 to 1.00 lowers performance for both Inspec and DUC at Top@3 and Top@5, with reductions of up to 6%. This indicates that 1.00 is the worst-performing threshold for these datasets at these depths, likely because it admits low-variance components that encode noise or redundancy, thereby reducing the discriminative power of the representation. At the lower end, 0.80 also yields suboptimal results, especially for DUC, suggesting that aggressive dimensionality reduction may suppress important semantic features.

Notably, SemEval displays relatively flat performance across all thresholds, with only marginal gains at 0.90. This stability may stem from its broader linguistic variability and longer texts, which render the PCA projection less sensitive to threshold variations. Even so, 0.90 still represents the most balanced setting, achieving the highest Top@10 F1 score with minimal drop elsewhere.

The Inspec dataset consistently outperforms the others at higher Top@

In summary, a PCA variance threshold of 0.90 offers the optimal tradeoff between dimensionality reduction and semantic preservation. It consistently enhances model performance across datasets and evaluation depths, while thresholds at 0.80 and 1.00 frequently yield the poorest results. Accordingly, we fix the variance retention at 90% in our final pipeline to balance accuracy, interpretability, and computational efficiency.

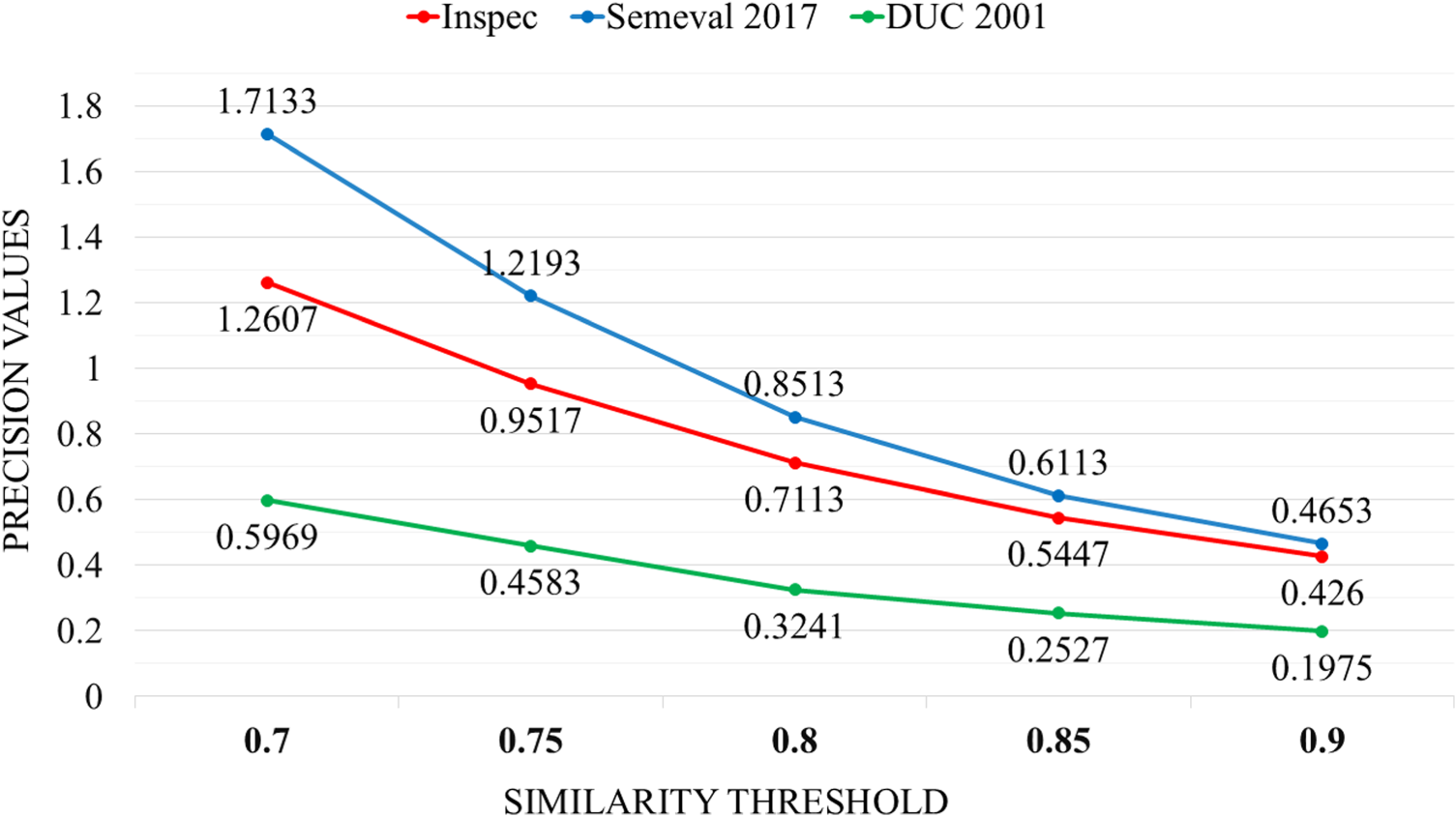

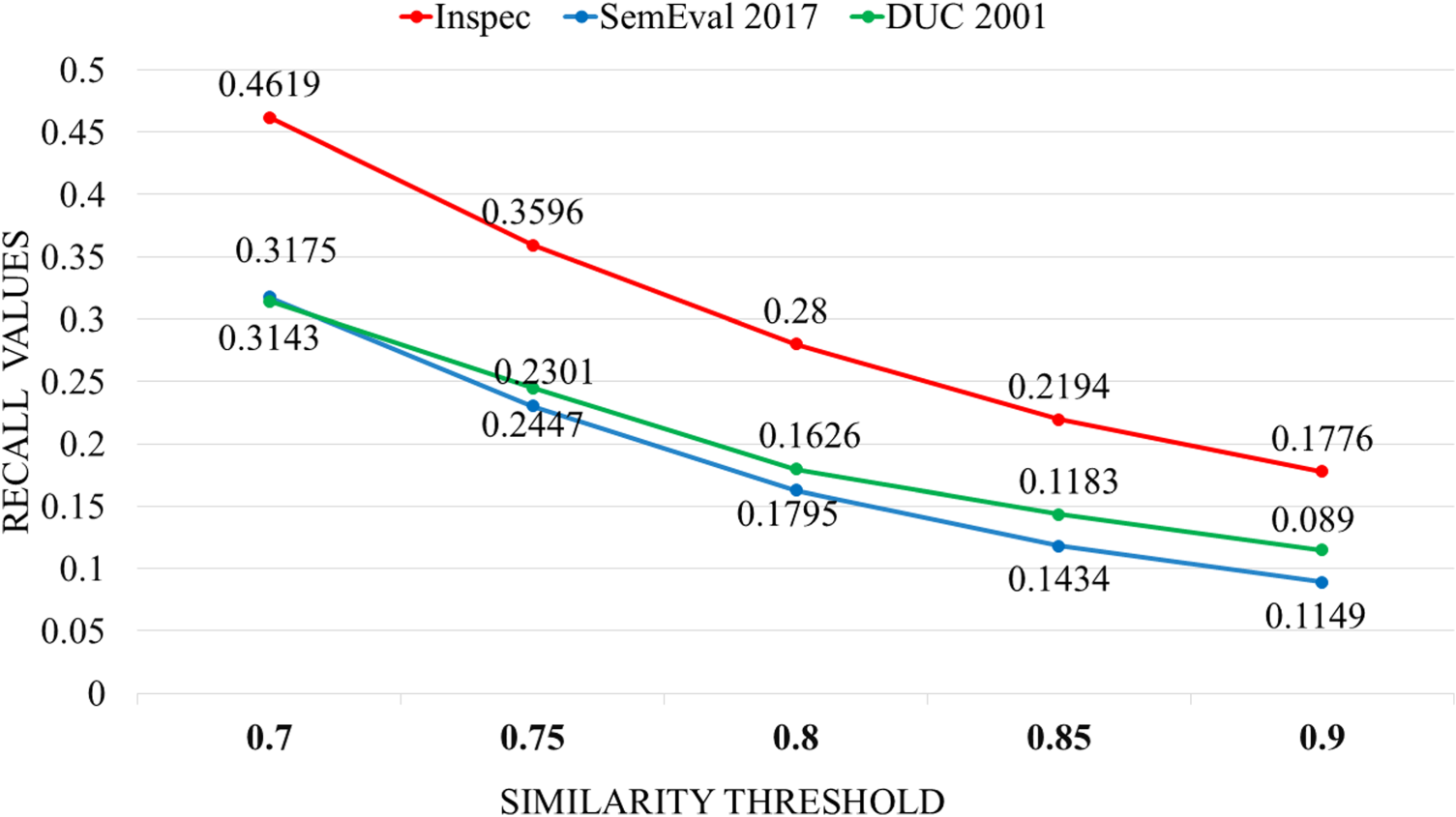

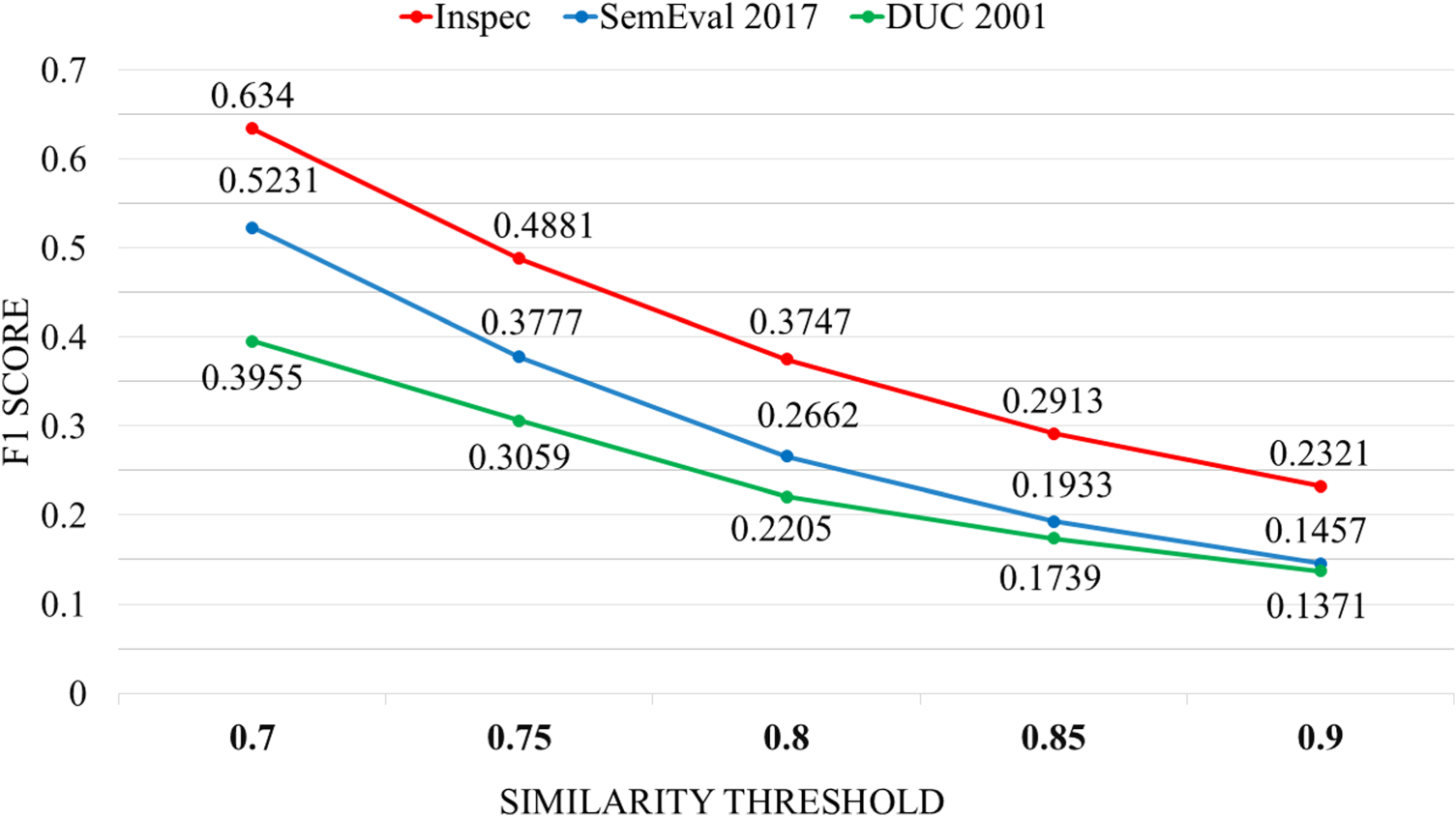

We first analyze the effect of varying similarity thresholds on performance using precision, recall, and F1 score, focusing on the Top@3 evaluation depth. Thresholds are varied from 0.70 to 0.90 in increments of 0.05.

In Figure 6, we observe a steady decline in precision as the threshold increases. Higher thresholds impose stricter matching criteria, so fewer predicted keyphrases qualify as matches to an actual keyphrase. Because the number of predictions at a given evaluation depth is fixed, the number of TPs decreases, which lowers the precision. Among the datasets, DUC 2001 consistently exhibits the lowest precision, likely due to its longer document length and higher lexical variability.

Precision Trends Across Varying Similarity Thresholds for All Datasets at Top@

In Figure 7, we observe that increasing the threshold also decreases recall. A higher threshold is a stricter requirement, that is, a predicted keyphrase needs a higher level of similarity to be deemed a match. Some actual keyphrases that matched at lower thresholds therefore go unmatched, which results in lower recall. We also observe that Inspec, the dataset with the fewest words per document, has the highest recall among the datasets.

Recall Trends Across Varying Similarity Thresholds for All Datasets at Top@

In Figure 8, for the F1 score, which balances precision and recall, we observe a similar declining trend. This is expected, as both precision and recall drop with higher thresholds. Notably, the best overall F1 performance is obtained at threshold 0.75, where the tradeoff between precision and recall is most favorable, particularly on the Inspec dataset. In contrast, the lowest F1 scores are observed at threshold 0.9, especially for DUC 2001. This suggests that overly strict thresholds penalize semantically relevant but lexically diverse phrases, resulting in performance degradation. As document length increases, this effect becomes more pronounced.

F1 Score Trends Across Varying Similarity Thresholds for All Datasets at Top@

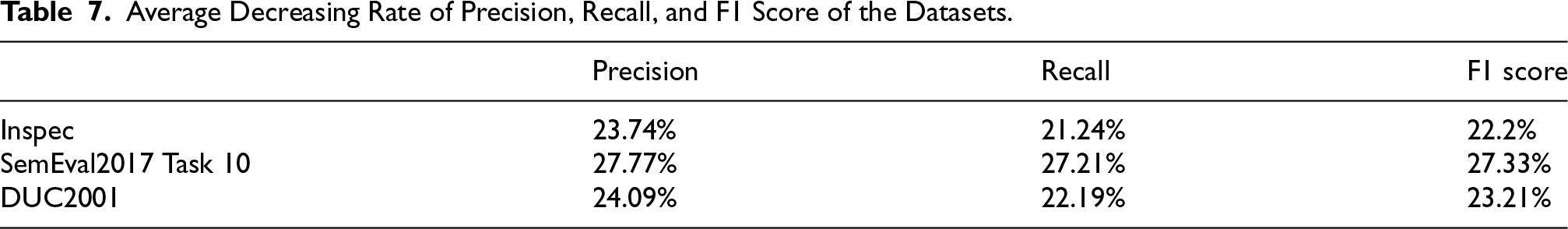

We calculated the average decreasing rate of precision, recall, and F1 score as the threshold increases in all the datasets, as shown in Table 7.

Average Decreasing Rate of Precision, Recall, and F1 Score of the Datasets.

Across all three datasets, precision exhibits a steeper average rate of decline than recall. As the threshold increases, fewer predicted phrases qualify as matches, so the proportion of relevant items among the retrieved set drops sharply, while recall, although affected, declines at a slightly slower pace. The F1 score decreases steadily, reflecting the combined degradation of precision and recall, which suggests that the model responds consistently to increasing threshold levels.

The best F1 performance is observed at a threshold of 0.75, where the tradeoff between precision and recall is most favorable, particularly evident in the Inspec dataset. In contrast, the worst F1 scores occur at threshold 0.9, especially for DUC 2001, due to its longer documents and more diverse vocabulary, which are disproportionately penalized under stricter similarity constraints.

Despite this, we select a threshold of 0.9 in our final configuration. This decision is motivated by the need for stricter semantic alignment between predicted and actual keyphrases. The 0.9 setting minimizes FPs and ensures that only highly precise, semantically valid keyphrases are accepted, which is desirable in practical applications requiring interpretability and reliability. Although there is a moderate tradeoff in recall and F1 performance compared to 0.75, the model remains robust across the tested threshold range, and 0.9 offers the most precision-oriented and semantically rigorous configuration.

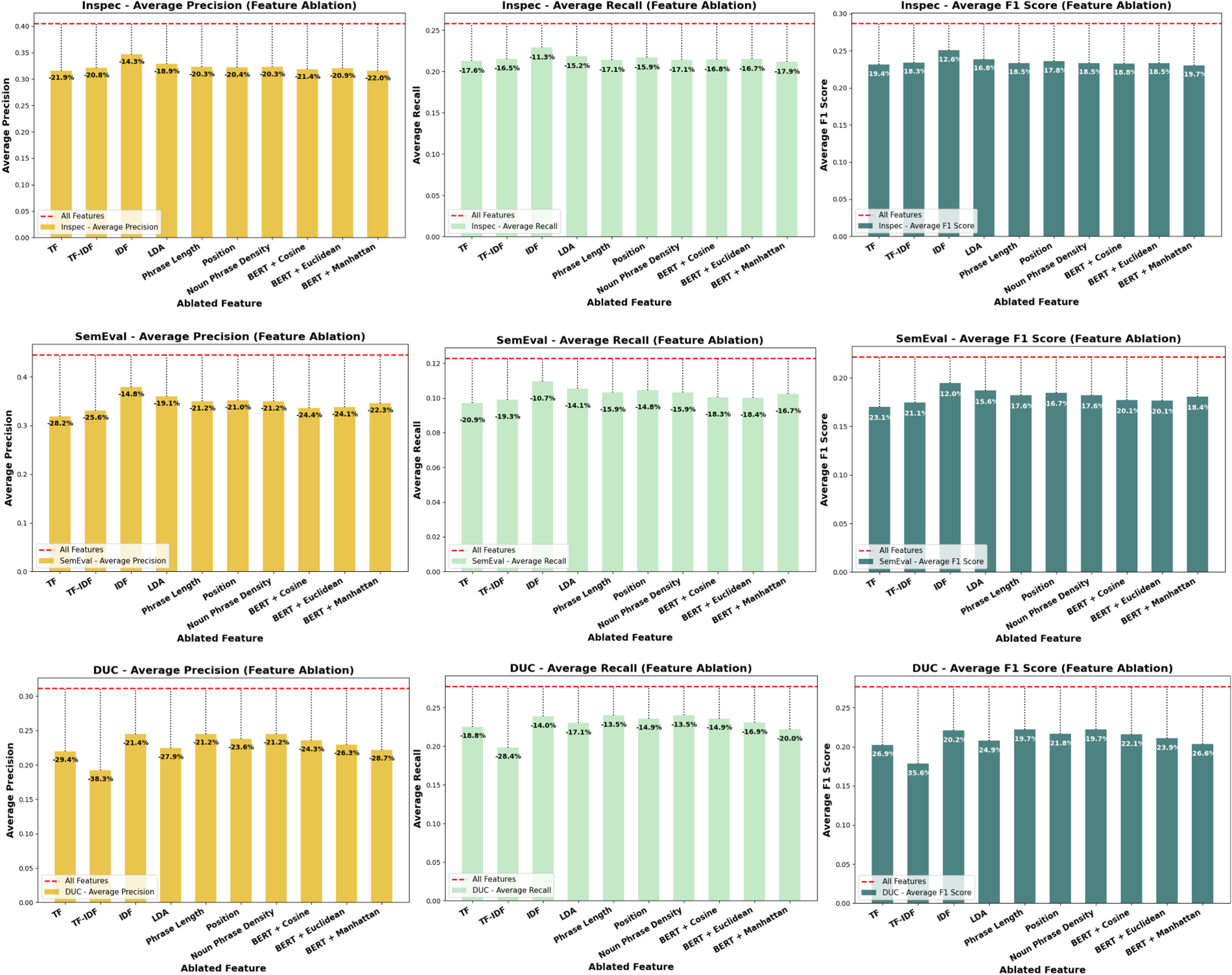

To systematically assess the contribution of each feature within our keyphrase extraction framework, we conduct an ablation study, a widely adopted technique in the field (Papagiannopoulou & Tsoumakas, 2020). We remove each feature individually and reevaluate the model’s performance on the three benchmark datasets: SemEval 2017 Task 10, Inspec, and DUC 2001. The evaluation uses three standard metrics: precision, recall, and F1 score, each averaged over the top-3, top-5, and top-10 ranked keyphrases. Figure 9 illustrates the results of this analysis across the three datasets.

Ablation Study Results Showing the Effect of Each Feature Across Datasets.

In Figure 9, the ablation results for the Inspec dataset highlight the importance of certain features. Removing the BERT + Manhattan distance feature leads to the largest reduction in average F1 score, a 19.7% decrease compared to the model using all features. The TF and IDF features also contribute significantly, with respective F1 score drops of 19.4% and 12.6% upon removal. Additionally, removing the BERT + cosine similarity feature results in a 16.6% decrease, and removing the LDA topic coherence feature causes an 11.8% reduction. These findings indicate that semantic similarity and frequency-based features play the most influential roles in keyphrase extraction performance on the Inspec dataset. Because removing any single feature reduces precision, recall, and F1 scores, all 10 features contribute to the model.

A similar pattern is observed for the SemEval dataset in Figure 9. Removing the BERT + Manhattan distance feature results in the largest drop in average F1 score, amounting to 18.4%. The TF and IDF features again show substantial influence, with respective F1 decreases of 17.5% and 10.2%. Moreover, removing the BERT + cosine similarity feature causes a notable decrease of 16.7%, while excluding the LDA topic coherence feature leads to a 12.5% drop. Other features, such as phrase length and noun position, have moderate impacts on performance (decreases of 8% to 11%). The consistent decline in performance metrics after the removal of each feature illustrates that all 10 features contribute meaningfully to the model’s success.

For the DUC dataset, the ablation trends are consistent with the other datasets. The removal of the BERT + Manhattan distance feature yields the largest decline in average F1 score, with a decrease of 20.6%, further emphasizing its pivotal role. The TF and IDF features follow closely, leading to F1 score reductions of 19.6% and 11.7%, respectively. Other structural features such as phrase length, noun position, and noun phrase density exhibit smaller but noticeable reductions, generally around 7% to 10%.

Across all ablation configurations, the full model utilizing all 10 features consistently achieves the best F1 performance, confirming that each component contributes positively. The removal of the BERT + Manhattan distance feature leads to the worst-case performance, with F1 score drops ranging from 18.4% (SemEval) to 20.6% (DUC 2001), the most severe decline observed. This underscores its central role in capturing fine-grained semantic alignment. Frequency-based features such as TF and IDF also show high impact, with consistent reductions across datasets (e.g., up to 19.6% on DUC), reinforcing their complementary contribution. In contrast, features such as phrase length and noun position cause only modest performance drops, typically below 10%, suggesting a relatively lower influence on the final ranking, especially on longer documents where structural heuristics are less reliable. No single feature’s removal improves performance, indicating that the full model integrating all features offers the optimal balance of semantic, statistical, and structural cues.
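The leave-one-feature-out protocol behind these numbers can be sketched as a short loop. The sketch assumes the reported decreases are relative to the full model's F1 score, which the text does not state explicitly. The function name `ablation_drops`, the toy scoring function, and its per-feature contributions are invented for illustration and are not results from this work.

```python
def ablation_drops(feature_names, score_fn):
    """Relative score drop (in percent) when each feature is removed in turn."""
    full_score = score_fn(feature_names)
    drops = {}
    for name in feature_names:
        remaining = [f for f in feature_names if f != name]
        drops[name] = 100.0 * (full_score - score_fn(remaining)) / full_score
    return drops


# Toy scoring function with made-up additive contributions (not real results).
toy_contribution = {"tf": 0.08, "idf": 0.05, "phrase_length": 0.03}


def toy_score(features):
    return 0.10 + sum(toy_contribution[f] for f in features)


drops = ablation_drops(list(toy_contribution), toy_score)
most_important = max(drops, key=drops.get)
```

In practice `score_fn` would rerun the full pipeline with the given feature subset and return the average F1 score, so each entry in `drops` costs one complete evaluation.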

This prominence of the BERT + Manhattan distance feature can be theoretically explained by its unique ability to capture fine-grained semantic deviation across embedding dimensions. While all BERT-based features leverage the contextual richness of transformer embeddings, the distance metric plays a crucial role in determining their effectiveness. Cosine similarity measures angular similarity, which is scale-invariant but insensitive to absolute differences in vector space. Euclidean distance captures geometric distance but tends to emphasize larger deviations disproportionately in high-dimensional spaces, often diluting the influence of smaller, semantically meaningful variations. In contrast, Manhattan (L1) distance computes the sum of absolute differences across all dimensions, offering greater sensitivity to small yet consistent deviations, especially valuable in BERT’s dense semantic space. This makes the BERT + Manhattan feature particularly adept at identifying subtle contextual misalignments between a candidate phrase and its surrounding text. Empirically, this observation is supported by our ablation results, where removing this feature resulted in the sharpest performance drop (up to 20.6% in F1 score), underscoring its essential contribution to semantic alignment in the proposed ranking model.
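The geometric contrast drawn above can be checked numerically. The sketch below uses synthetic vectors rather than real BERT embeddings. The dimensionality of 768 is the hidden size of BERT-base and is used only as a plausible value, and the function and variable names are assumptions. It compares a candidate that deviates slightly in every dimension with one that deviates strongly in a single dimension.

```python
import numpy as np


def cosine_similarity(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))


def euclidean_distance(u, v):
    return float(np.linalg.norm(u - v))


def manhattan_distance(u, v):
    return float(np.sum(np.abs(u - v)))


dim = 768  # hidden size of BERT-base; the vectors below are synthetic
document = np.ones(dim)
diffuse = document + 0.01            # small shift in every dimension
spiky = document.copy()
spiky[0] += 0.01 * np.sqrt(dim)      # one large shift with the same L2 norm

euclid_diffuse = euclidean_distance(document, diffuse)
euclid_spiky = euclidean_distance(document, spiky)
manhattan_diffuse = manhattan_distance(document, diffuse)
manhattan_spiky = manhattan_distance(document, spiky)
cosine_diffuse = cosine_similarity(document, diffuse)
```

Both candidates sit at the same Euclidean distance from the document vector, yet the Manhattan distance of the diffuse candidate is far larger, and cosine similarity does not register the diffuse shift at all because it is a pure rescaling. This illustrates the sensitivity of the L1 distance to small, consistent deviations, but it is not evidence about real embeddings.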

In this study, we establish two primary research hypotheses: (1) the proposed model consistently outperforms existing baseline keyphrase extraction methods across various experimental conditions, and (2) the model demonstrates robust and stable performance across different text domains, irrespective of document structures or writing styles. To validate these hypotheses, we conduct extensive comparative analyses against 15 baseline methods, encompassing statistical, graph-based, embedding-based, and hybrid techniques, across three benchmark datasets: Inspec, SemEval, and DUC.

The consistently superior performance of TopS-Key across all datasets and evaluation settings can be attributed to its holistic treatment of semantic representation, feature weighting, and ranking. Unlike traditional and recent neural baselines, TopS-Key balances contextual informativeness and discriminative relevance through PCA-based dimensionality reduction, entropy-guided criteria weighting, and multicriteria decision-making. This allows the model to maintain high precision and recall, particularly in settings characterized by topical drift, variable document lengths, and noisy contexts, challenges that typically degrade the performance of other state-of-the-art methods.

The methods used for comparison are RAKE (Rose et al., 2012) using GloVe embeddings (Pennington et al., 2014), PositionRank (Florescu & Caragea, 2017), MultipartiteRank (Boudin, 2018), EmbedRank (Bennani-Smires et al., 2018), YAKE (Campos et al., 2018), RoBERTa (Liu et al., 2019b), KeyBERT (Grootendorst, 2020), TripleRank (Li et al., 2021), MDERank (Zhang et al., 2021), PromptRank (Kong et al., 2023), HGUKE (Song et al., 2023a), BRYT (Ahmed et al., 2024), AdaptiveUKE (Liu et al., 2024), HCUKE (Xu et al., 2024), and SDRank (Xu et al., 2025).

In our experiments, the GloVe embeddings are 300-dimensional, trained on the Common Crawl dataset. PositionRank uses a window size of 10, while MultipartiteRank sets its weight adjustment hyperparameter to 1.1. EmbedRank connects nodes using a cosine similarity threshold of 0.5. YAKE uses a window size of 1, an n-gram length of 3, and a deduplication threshold of 0.9. For RoBERTa, the max sequence length is 512. KeyBERT considers n-gram ranges of (1, 3) and uses an MMR diversity parameter of 0.7. TripleRank uses a window size of 2 and a damping factor

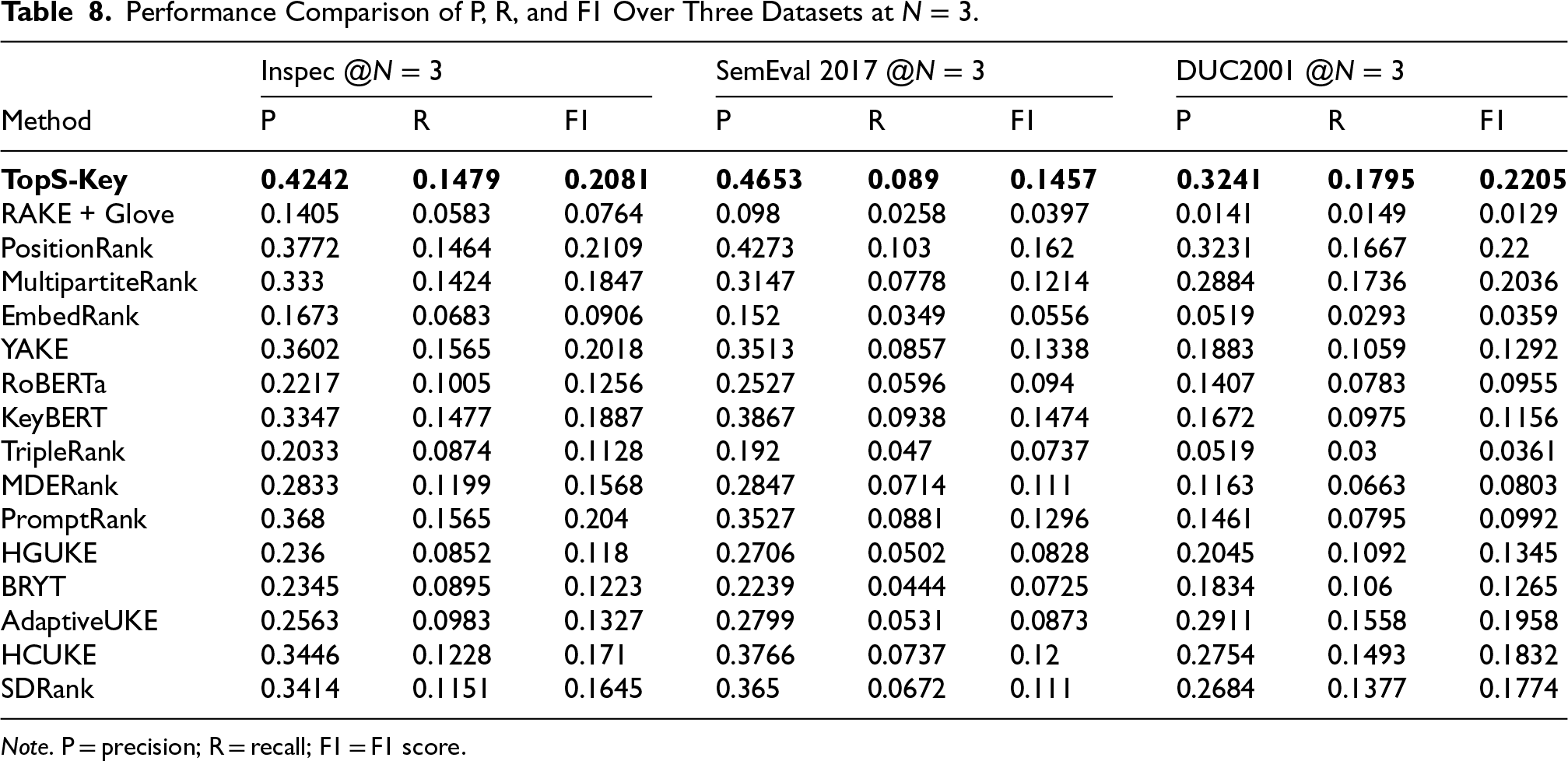

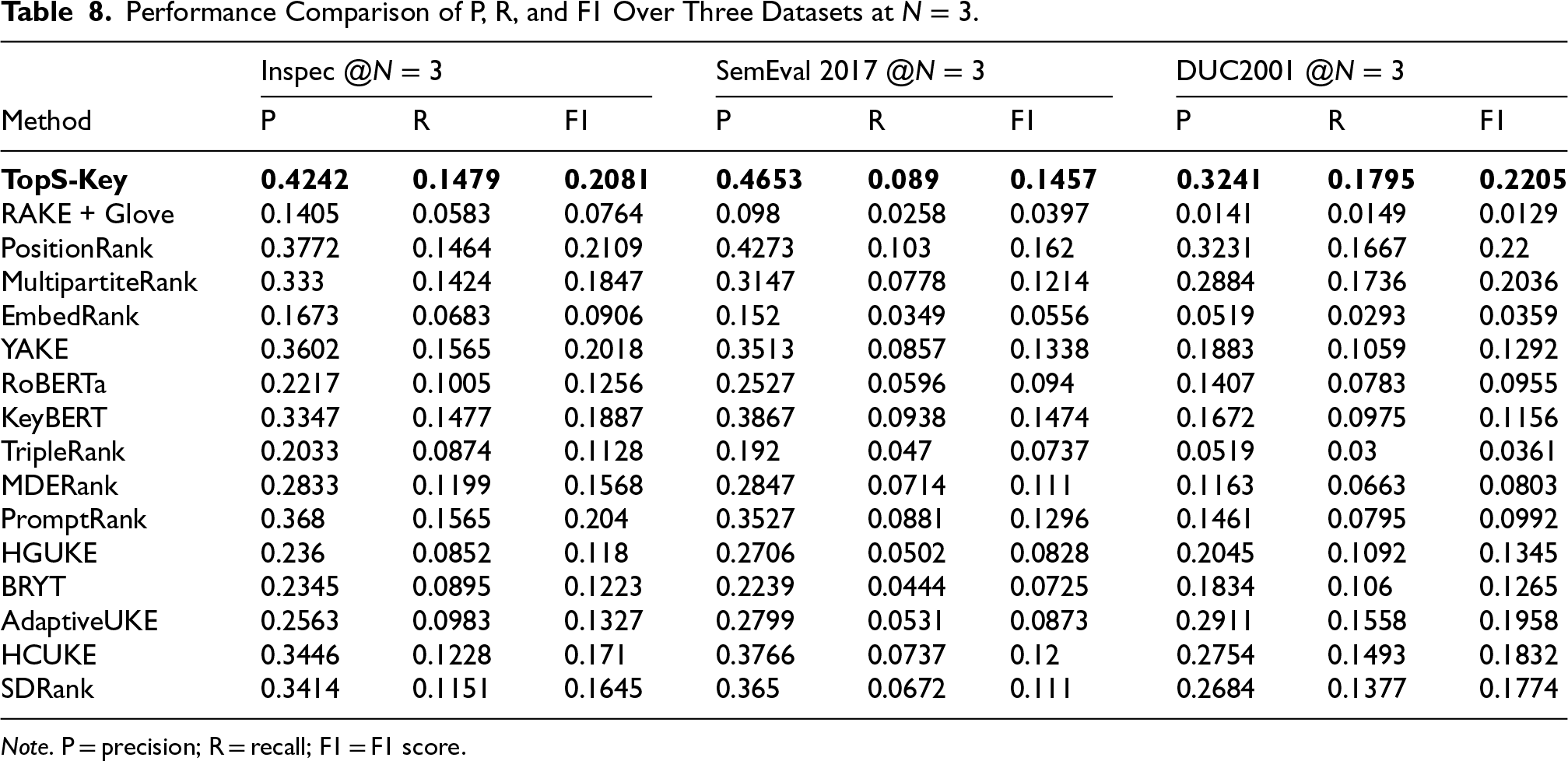

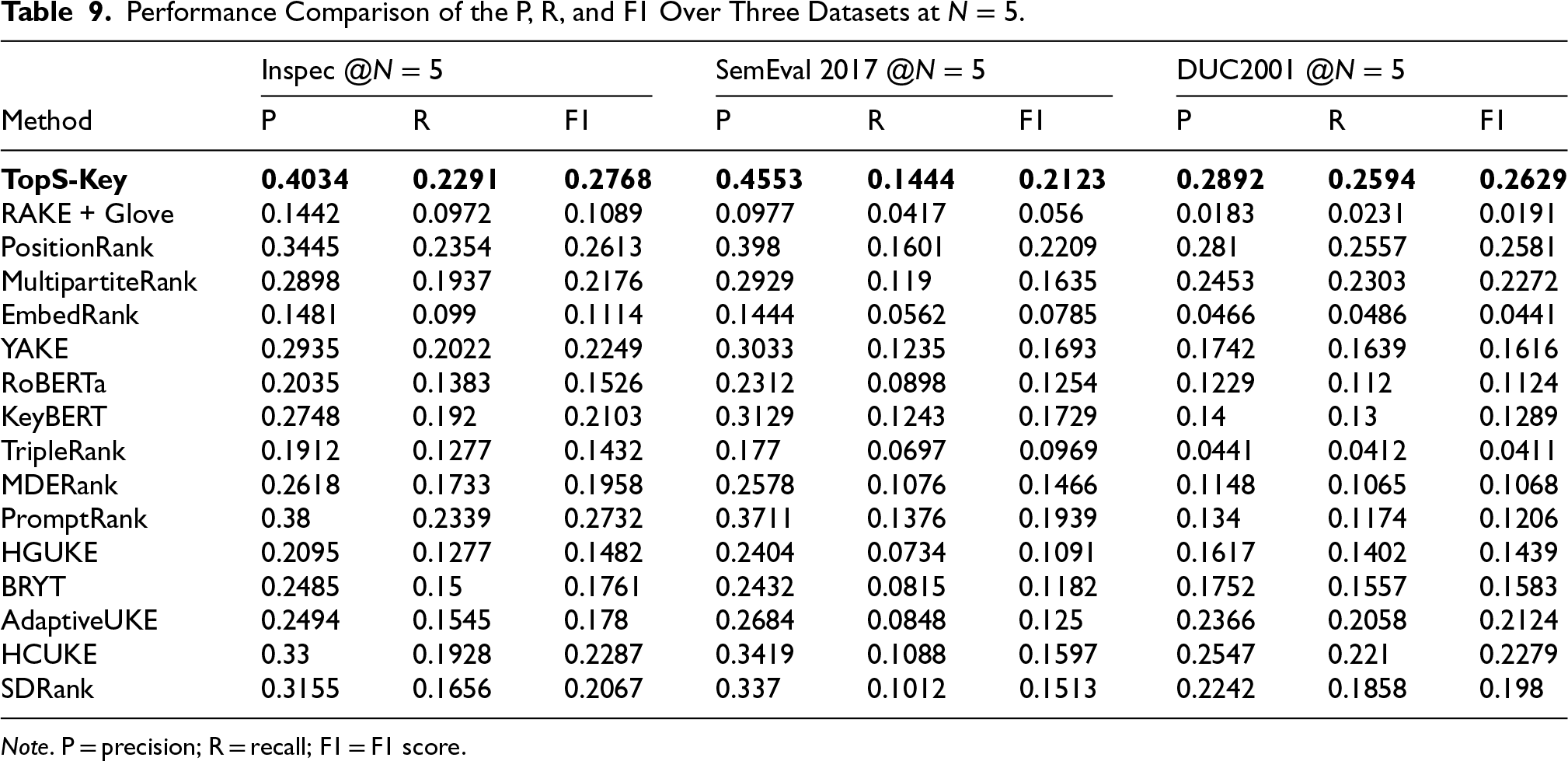

We calculated the scores precision (P), recall (R), and F1 score (F1) for

Performance Comparison of P, R, and F1 Over Three Datasets at


Note. P = precision; R = recall; F1 = F1 score.

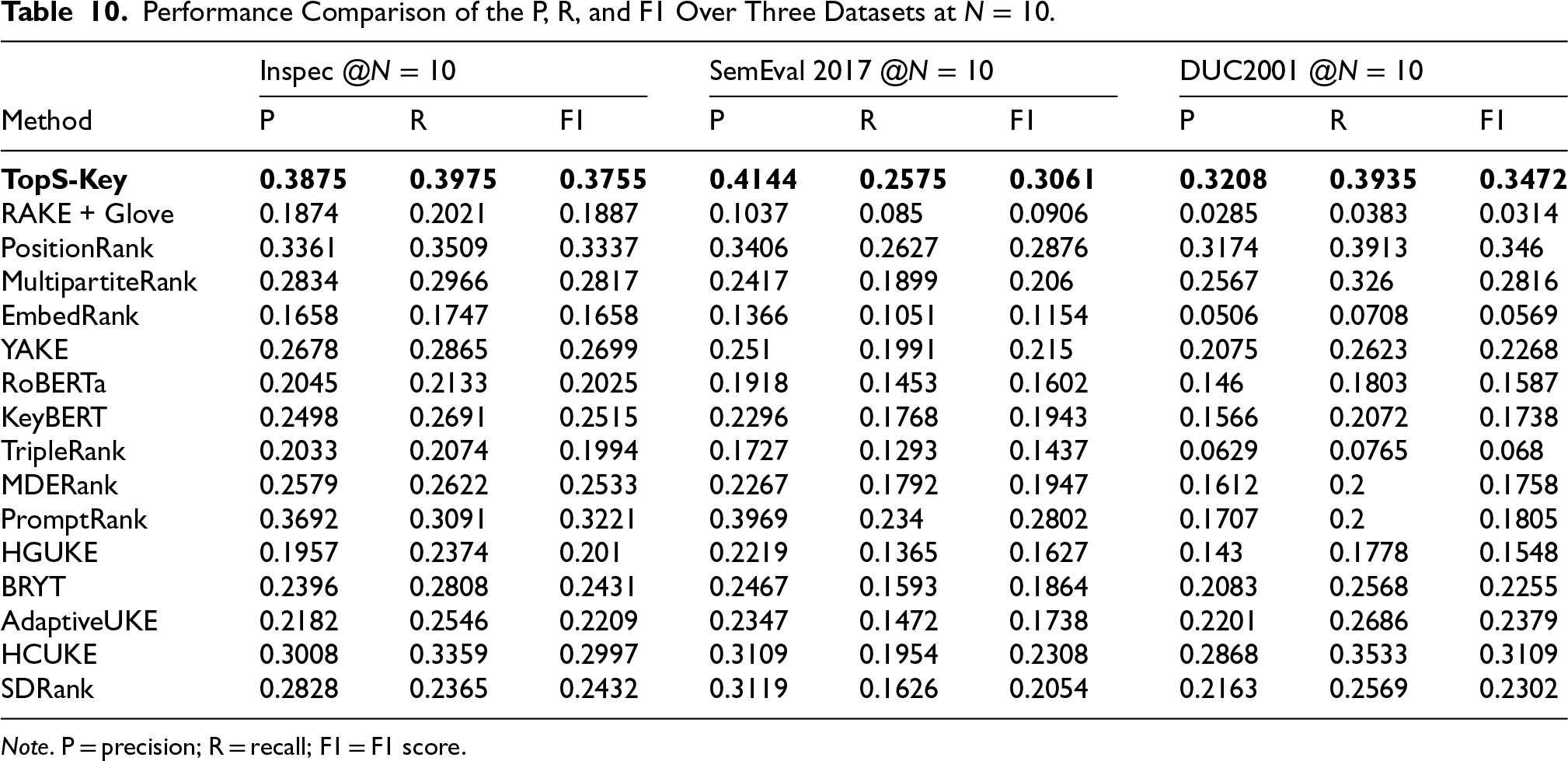

Performance Comparison of the P, R, and F1 Over Three Datasets at

Note. P = precision; R = recall; F1 = F1 score.

Performance Comparison of the P, R, and F1 Over Three Datasets at

Note. P = precision; R = recall; F1 = F1 score.

To ensure fairness, all models were evaluated on the same datasets, Inspec, SemEval 2017 Task 10, and DUC2001, using a unified semantic similarity-based evaluation protocol with a similarity threshold of 0.9. This consistent framework allowed us to compute precision, recall, and F1 score across Top-

The results indicate that our proposed method, TopS-Key, outperforms the existing approaches.

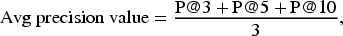

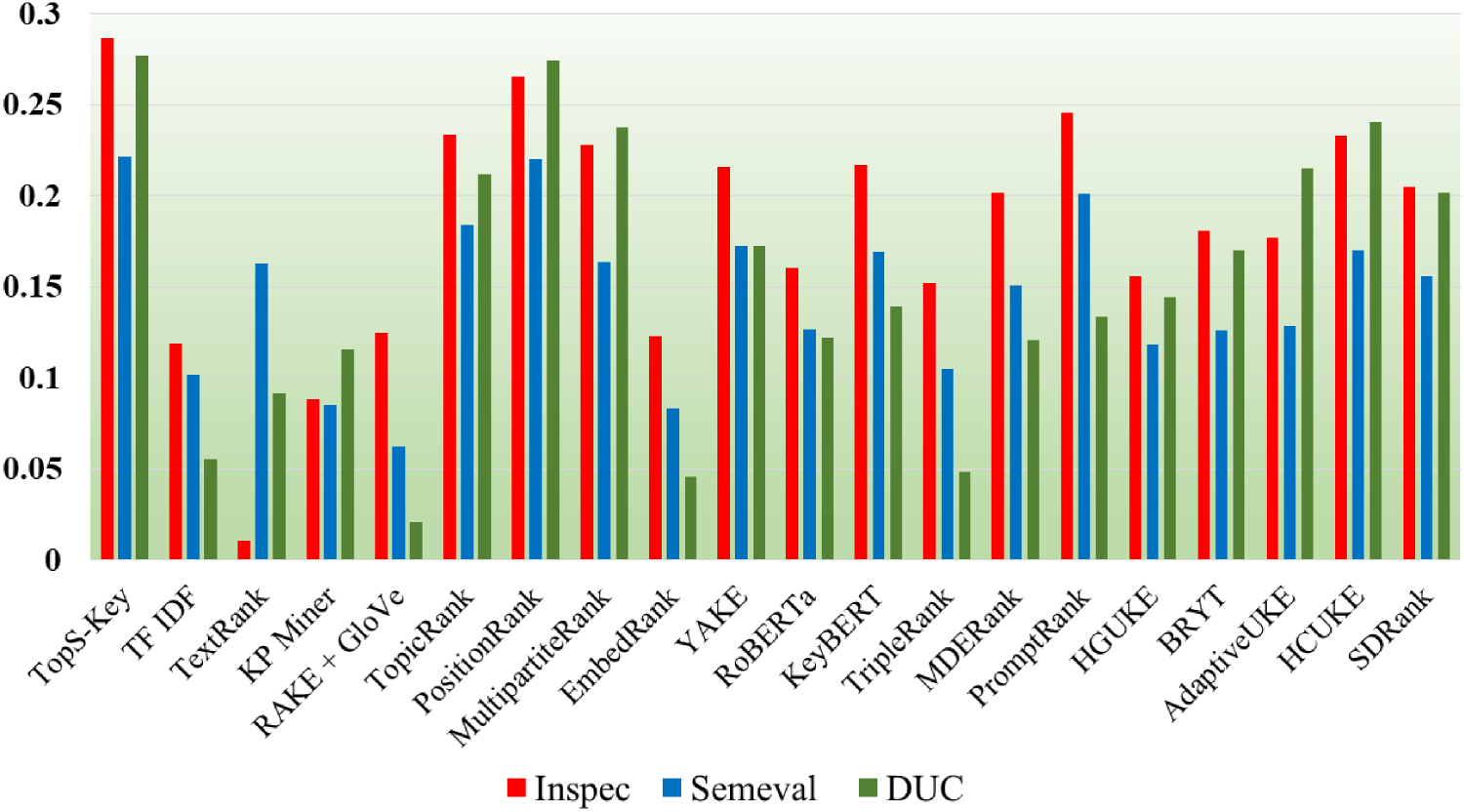

Now, we will illustrate the comparison of TopS-Key with the state-of-the-art approaches over precision, recall, and F1 score, taking the average of the scores at

In Figure 10, we display a comparison graph based on average precision for the three datasets. Precision is lowest on DUC compared with Inspec and SemEval. This indicates that for longer documents, which contain more words along with more irrelevant information and noise, more of the keyphrases predicted by our method are irrelevant or unnecessary. As document length grows, the context in which words are used can become more complex or ambiguous, leading to more FP predictions. Longer documents may also cover a broader range of topics, making it harder for the proposed model to pinpoint the most relevant keyphrases, so it may predict keyphrases that are not relevant to the core content of the document.

Comparison of TopS-Key with the State-of-the-Art Approaches Over Average Precision Value.

The precision values across the datasets show that the proposed method compares favorably with many recent methods in the field. It consistently outperforms most of the baseline approaches, achieving precision scores of 0.405 for Inspec, 0.445 for SemEval, and 0.311 for DUC. In contrast, traditional methods such as RAKE + GloVe show significantly lower precision across all datasets: the proposed method outperforms them by 169.5%, 182.4%, and 205.6% for Inspec, SemEval, and DUC, respectively. Other recent methods, including PositionRank, are competitive but still fall short, lagging by approximately 5% to 30%, particularly on SemEval and Inspec. Even methods leveraging semantic embeddings and pretrained language models, such as RoBERTa (0.2099) and KeyBERT (0.2864), achieve moderate precision scores but trail behind by about 20% to 30%. This underscores the advantage of incorporating advanced features and optimization techniques, as the proposed method demonstrates up to 50% higher precision, particularly on datasets such as SemEval, where it achieves its highest precision.

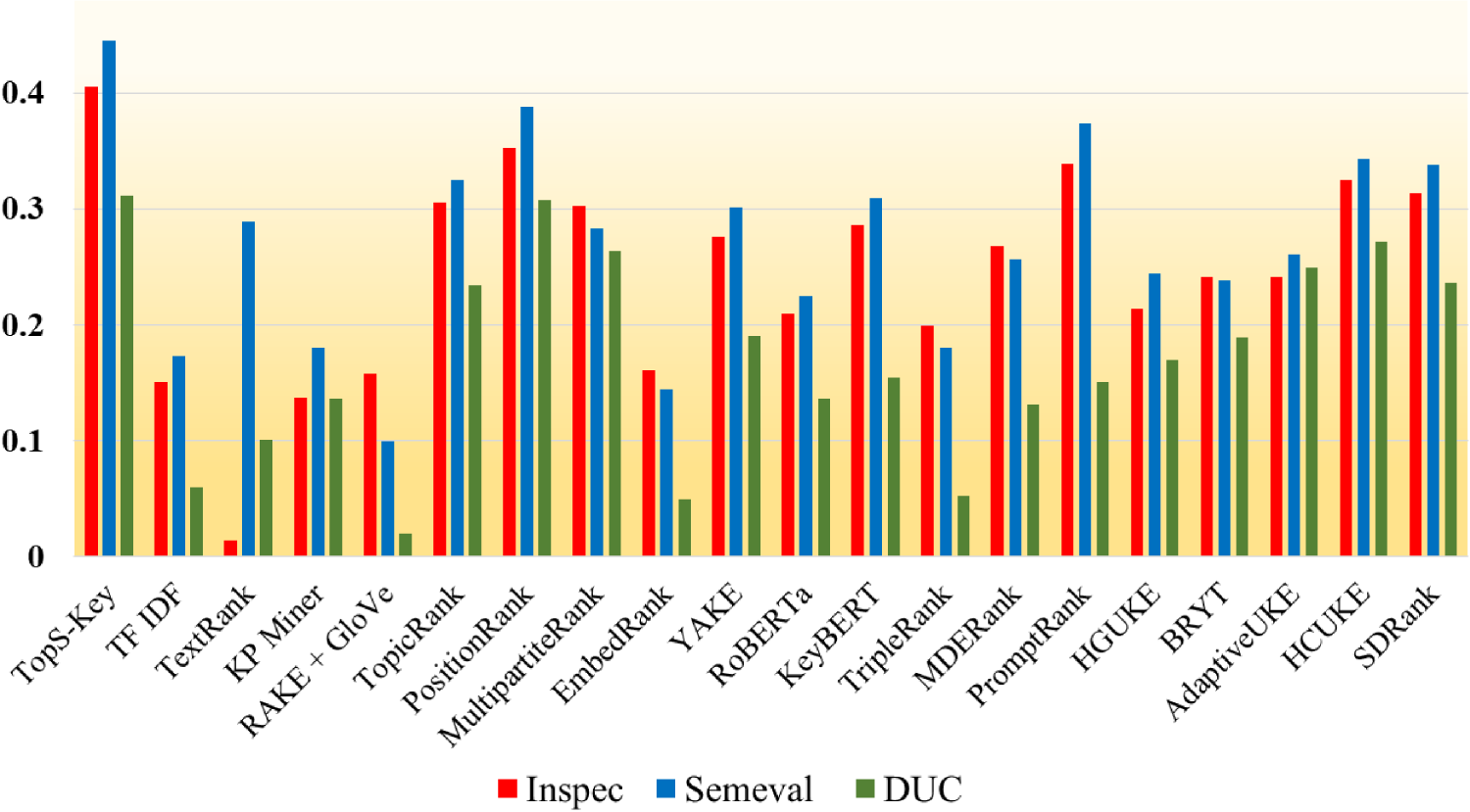

In Figure 11, we observe that our proposed model has the highest recall among the compared methods, particularly on the longer documents of the DUC dataset. This indicates that our model is very effective at capturing the majority of actual keyphrases, even when the document length increases. The high recall suggests that the model performs comprehensive keyphrase extraction, ensuring that relevant phrases are not missed.

Comparison of TopS-Key with the State-of-the-Art Approaches Over Average Recall Value.

TopS-Key achieves recall scores of 0.2582 for Inspec, 0.1636 for SemEval, and 0.2775 for DUC. In comparison, traditional methods such as RAKE + GloVe show much lower recall scores; the proposed method outperforms them by around 130% to 220%, indicating more effective keyphrase extraction. Among recent methods, PositionRank achieves 0.1586 on SemEval, trailing the proposed method by about 3%. Methods such as YAKE and KeyBERT, with recall scores of 0.2024 and 0.2029 for Inspec, and 0.1361 and 0.1283 for SemEval, show comparable performance to each other but still underperform the proposed method by around 20% to 30%. Even methods that use semantic embeddings and pretrained models, such as RoBERTa (0.1507) and MDERank (0.1851), lag behind by 30% to 50% in recall. Overall, the proposed method offers improvements of 10% to 50% over most of the competing methods, demonstrating its superior ability to capture relevant keyphrases, especially on datasets such as DUC and SemEval.

We also observe that while the model is successful at identifying most of the keyphrases, it also predicts a higher number of irrelevant phrases, leading to more FPs in longer texts. Thus, our model is highly effective in scenarios requiring high recall, ensuring that most keyphrases are identified.

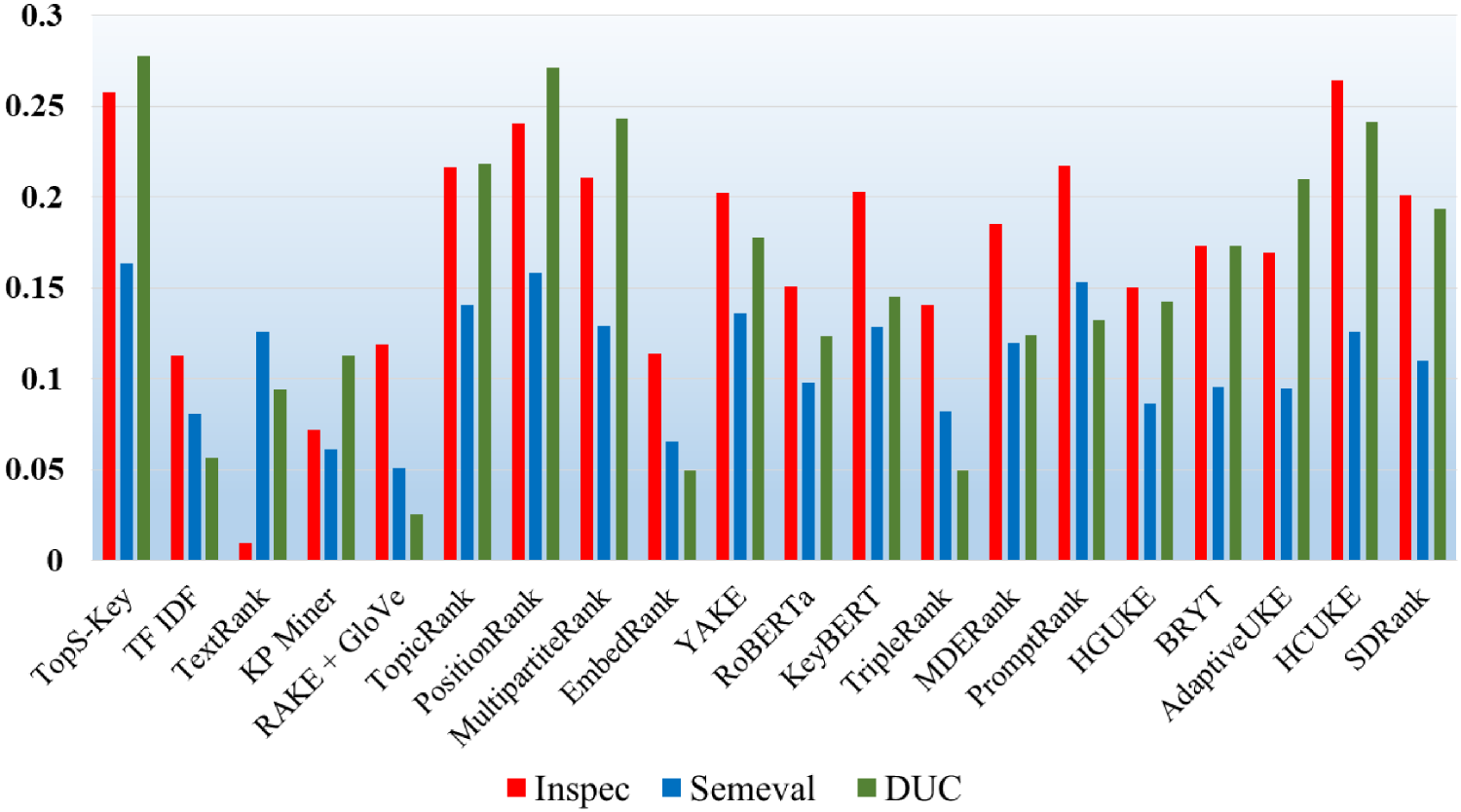

In Figure 12, we depict a comparison graph based on the average F1 scores for the three datasets. As the harmonic mean of precision and recall, the F1 score tends to follow a more complex pattern. We observe that our proposed model has the highest F1 score among the compared methods.
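As a reminder of how the three metrics relate, the following minimal sketch computes precision, recall, and F1 for a single document at a cutoff k. It assumes exact string matching after lowercasing, which is a simplification of the similarity and threshold functions used in the evaluation. The function name `prf_at_k` and the toy phrases are illustrative only and are not drawn from any benchmark dataset.

```python
def prf_at_k(predicted, gold, k):
    """Precision, recall, and F1 for the top-k predicted keyphrases.

    Assumption: exact match after lowercasing/stripping (illustrative only).
    """
    top_k = [p.lower().strip() for p in predicted[:k]]
    gold_set = {g.lower().strip() for g in gold}
    tp = sum(1 for p in set(top_k) if p in gold_set)  # true positives
    precision = tp / len(top_k) if top_k else 0.0
    recall = tp / len(gold_set) if gold_set else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


# Hypothetical toy data, not taken from any of the benchmark datasets.
predicted = ["keyphrase extraction", "fuzzy topsis", "text data", "pca", "noise"]
gold = ["keyphrase extraction", "fuzzy TOPSIS", "shannon entropy", "PCA"]
p, r, f1 = prf_at_k(predicted, gold, k=5)
print(f"P={p:.3f} R={r:.3f} F1={f1:.3f}")
```

Because the harmonic mean is pulled toward the smaller of its two inputs, a method with high precision but low recall (or the reverse) receives a modest F1 score.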

Comparison of TopS-Key with the State-of-the-Art Approaches Over Average F1 Score.

The F1 score results across the three datasets demonstrate that the proposed method outperforms many of the latest advanced methods in the field. Specifically, the proposed method achieves F1 scores of 0.2868 for Inspec, 0.2214 for SemEval, and 0.2769 for DUC, with Inspec yielding the best F1 and SemEval the lowest. These scores are notably higher than those of traditional methods such as RAKE, which the proposed method outperforms by margins ranging from 100% to over 200%. More advanced methods, including PositionRank, yield competitive F1 scores, with PositionRank achieving 0.2656 for Inspec, 0.2202 for SemEval, and 0.2747 for DUC. While these methods show some strength, they still fall short of the proposed method, by roughly 7% to 8% on Inspec and by less than 1% on SemEval and DUC.

Methods such as YAKE (0.2155 for Inspec and 0.1727 for SemEval) and KeyBERT (0.2168 for Inspec and 0.1695 for SemEval) perform similarly, but the proposed method still surpasses them by a notable margin of 10% to 30%. Even techniques that leverage advanced models, such as RoBERTa (0.1602 for Inspec and 0.1265 for SemEval) and MDERank (0.2019 for Inspec and 0.1508 for SemEval), show moderate performance, but the proposed method still achieves improvements of approximately 30% to 50% over these models. Overall, the proposed method consistently delivers superior performance, with improvements of 20% to 100% over most baseline and advanced models, particularly excelling on datasets such as Inspec and DUC.
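The relative margins quoted above depend on which score is used as the denominator. The sketch below recomputes the gaps from the F1 values reported in this section, expressed as a fraction of the TopS-Key score. That choice of denominator is an assumption, since the text does not state it, and the helper name `relative_gap` is illustrative.

```python
# F1 scores as reported in the text (Inspec, SemEval).
tops_key = {"Inspec": 0.2868, "SemEval": 0.2214}
baselines = {
    "PositionRank": {"Inspec": 0.2656, "SemEval": 0.2202},
    "YAKE": {"Inspec": 0.2155, "SemEval": 0.1727},
    "KeyBERT": {"Inspec": 0.2168, "SemEval": 0.1695},
    "RoBERTa": {"Inspec": 0.1602, "SemEval": 0.1265},
    "MDERank": {"Inspec": 0.2019, "SemEval": 0.1508},
}


def relative_gap(ours, theirs):
    """Gap as a fraction of our score (assumed denominator)."""
    return (ours - theirs) / ours


gaps = {
    name: {ds: relative_gap(tops_key[ds], score) for ds, score in scores.items()}
    for name, scores in baselines.items()
}
for name, per_ds in gaps.items():
    print(name, {ds: f"{100 * g:.1f}%" for ds, g in per_ds.items()})
```

Dividing by the baseline score instead would give larger percentages for the same absolute difference, so the denominator should be stated whenever such margins are compared.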

The superior performance of TopS-Key can be attributed to its synergistic integration of multiple keyphrase relevance dimensions (statistical, contextual, syntactic, and positional) within a unified multicriteria decision-making framework. In particular, the use of contextual PCA enables effective dimensionality reduction while preserving semantically discriminative components, and adaptive Shannon entropy weighting highlights informative yet nonredundant features across candidate keyphrases. Moreover, the incorporation of Fuzzy TOPSIS ensures robust ranking by accounting for both ideal and anti-ideal reference points across multiple criteria. This carefully orchestrated combination enables TopS-Key to maintain high precision and recall even across domains with diverse vocabulary, structure, and content density.

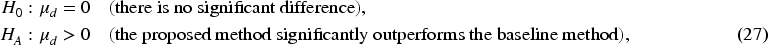

To validate the effectiveness of our proposed method, we perform a series of three statistical significance tests to ensure that the observed improvements are not due to random variations. These tests quantitatively assess whether the performance differences between our approach and the baseline methods are statistically significant.
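As an illustration of the first of these tests, the following minimal sketch computes the paired t statistic for per-document scores of two methods evaluated on the same documents. The scores are hypothetical and the function name `paired_t_statistic` is illustrative. Only the test statistic is computed here; the p-value would come from the Student t distribution with n - 1 degrees of freedom, for example via `scipy.stats.ttest_rel`.

```python
import math
import statistics


def paired_t_statistic(a, b):
    """t statistic and degrees of freedom for paired samples a and b."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_d == 0:
        raise ValueError("all paired differences are identical")
    n = len(diffs)
    t = mean_d / (sd_d / math.sqrt(n))
    return t, n - 1


# Hypothetical per-document precision scores for two methods.
ours = [0.42, 0.38, 0.45, 0.40, 0.44, 0.39]
base = [0.35, 0.36, 0.40, 0.33, 0.41, 0.34]
t_stat, dof = paired_t_statistic(ours, base)
print(f"t = {t_stat:.3f}, df = {dof}")
```

Pairing matters because both methods are scored on the same documents: the test works on per-document differences, which removes document-level difficulty from the comparison.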

Paired t-Test for Performance Evaluation

To assess the statistical significance of the performance difference between our proposed method and the baseline methods, we perform a paired

The paired

We conduct a paired

Paired T-Test Results on Precision Scores for Inspec (I), SemEval (II), and DUC2001 (III), Indicating Statistical Significance of TopS-Key.

In Figure 13(i)(Inspec), all

In addition to the paired

The

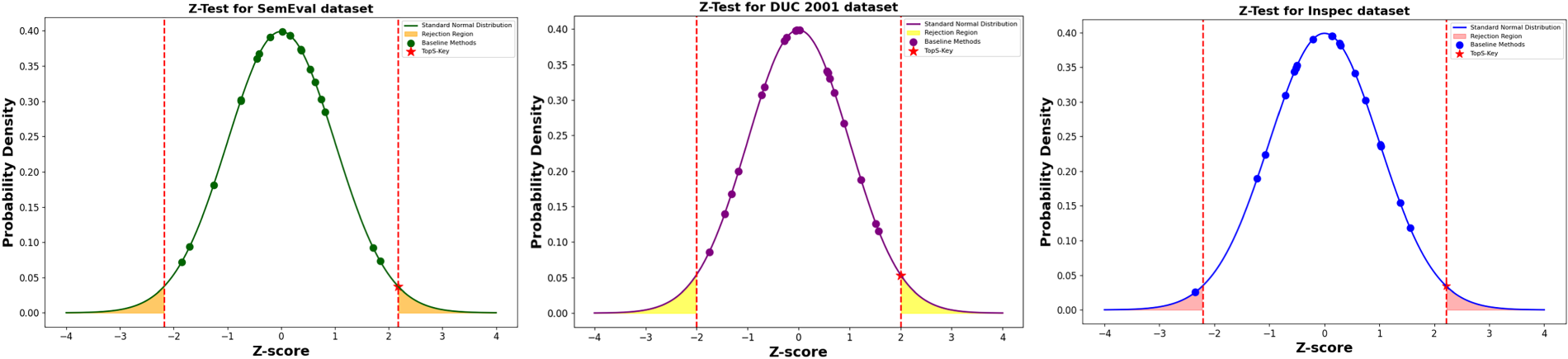

To validate the significance of the observed performance differences, we perform a two-tailed

Paired T-Test Results on Precision Scores for Inspec (I), SemEval (II), and DUC2001 (III), Indicating Statistical Significance of TopS-Key.

As shown in Figure 14, the

The null hypothesis assumes that TopS-Key performs similarly to the baselines. However, all baseline

Together, the paired

Following the paired

The

If

Since the ANOVA test only determines whether a significant difference exists but does not specify which methods differ, we apply the Tukey honestly significant difference (HSD) test for post-hoc pairwise comparisons.

Tukey HSD evaluates all possible pairwise differences among methods using the studentized range distribution (Abdi & Williams, 2010). The test statistic is computed using equation (33):

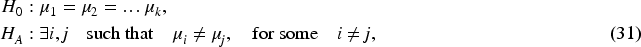
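The ANOVA and Tukey HSD procedure described above can be sketched as follows. This is a minimal illustration that assumes equal group sizes and uses the standard form of the studentized range statistic, q = |mean_i - mean_j| / sqrt(MS_within / n), whose notation may differ from equation (33). The group names and scores are hypothetical. Deciding significance additionally requires critical values of the studentized range distribution; recent SciPy versions provide `scipy.stats.f_oneway` and `scipy.stats.tukey_hsd` for the complete tests.

```python
import math
from itertools import combinations


def anova_and_tukey_q(groups):
    """One-way ANOVA F statistic and Tukey q statistics (equal group sizes)."""
    names = list(groups)
    n = len(groups[names[0]])
    if any(len(groups[g]) != n for g in names):
        raise ValueError("this sketch assumes equal group sizes")
    k = len(names)
    means = {g: sum(groups[g]) / n for g in names}
    grand = sum(means.values()) / k
    ss_between = n * sum((means[g] - grand) ** 2 for g in names)
    ss_within = sum((x - means[g]) ** 2 for g in names for x in groups[g])
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (k * n - k)
    f_stat = ms_between / ms_within
    se = math.sqrt(ms_within / n)  # standard error used by Tukey HSD
    q = {(a, b): abs(means[a] - means[b]) / se for a, b in combinations(names, 2)}
    return f_stat, q


# Hypothetical F1 scores for three methods (four documents each).
groups = {
    "method_a": [0.30, 0.28, 0.29, 0.31],
    "method_b": [0.25, 0.27, 0.26, 0.26],
    "method_c": [0.20, 0.22, 0.21, 0.21],
}
f_stat, q = anova_and_tukey_q(groups)
print(f"F = {f_stat:.2f}")
for pair, value in q.items():
    print(pair, f"q = {value:.2f}")
```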

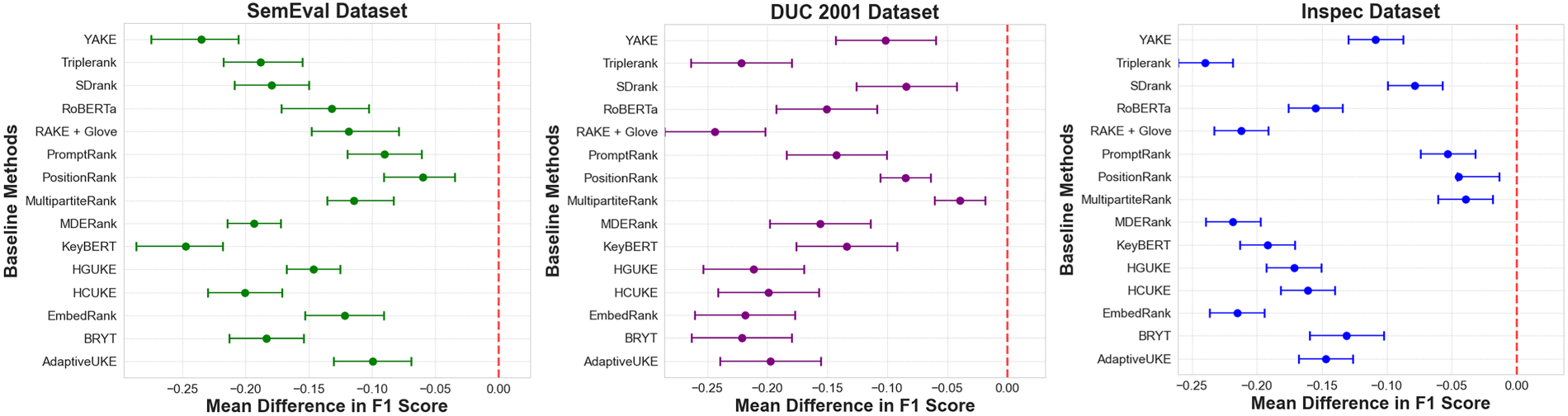

We further illustrate the F1 score distributions using Figure 15, where each box represents the distribution of F1 scores for a given method. The median, interquartile range, and outliers provide insights into the variability across methods. The statistical significance observed in the ANOVA and Tukey HSD tests is reflected in the variations among these distributions.

Tukey Honestly Significant Difference (HSD) Analysis Results on F1 Scores for Inspec (I), SemEval (II), and DUC2001 (III), Indicating Statistical Significance of TopS-Key.

Figure 15 presents the mean difference in F1 scores for baseline methods across the three datasets. The observed negative mean differences, consistently below zero, indicate that all baseline methods underperform compared to our approach. This confirms that our method achieves statistically significant improvements over existing techniques. The results allow us to reject the null hypothesis, affirming that the observed performance gains are not due to random variations but rather to the effectiveness of our feature-driven optimization. The larger performance gap in the DUC dataset further highlights our method’s robustness in handling complex and lengthy documents, while the consistent trends across all datasets reinforce its generalizability.

The consistently outstanding performance of our proposed method across all benchmark datasets is the result of its carefully designed integration of statistical, syntactic, contextual, and positional features within a robust multicriteria framework. Unlike traditional statistical techniques, which focus primarily on word frequency or co-occurrence statistics and fail to capture deeper semantic nuances or structural dependencies, our approach leverages a broad array of complementary features. This results in a more comprehensive evaluation of each keyphrase candidate’s importance.

Similarly, graph-based models such as MultipartiteRank and PositionRank build term co-occurrence graphs and extract keyphrases based on graph centrality measures. While effective in capturing local word relationships, they tend to ignore the broader contextual and semantic connections spanning the entire document. Our method overcomes this shortcoming by incorporating structural insights alongside statistical and syntactic features, ensuring that both local dependencies and global context are accounted for. Nevertheless, PositionRank demonstrates consistently competitive performance across datasets, with its strength most evident on the Inspec corpus. This dataset includes both titles and abstracts, where positional information strongly aligns with author-assigned keyphrases. By exploiting the occurrence of candidate terms in titles or early abstract segments, PositionRank capitalizes on structural regularities that are particularly informative in this domain, thereby achieving notably strong results. This explains why PositionRank secured the second-best overall performance in our comparative evaluation, despite being developed earlier than several more recent models.

Embedding-based methods, such as KeyBERT, EmbedRank, and BERT-based approaches, rely heavily on pretrained language models to represent semantic relationships. Although these models excel at capturing word and phrase meanings, they are often constrained by their dependence on external pretrained embeddings, which may not fully adapt to domain-specific language use or the unique characteristics of individual documents. Moreover, these embedding techniques typically evaluate candidate phrases in isolation, without integrating positional or structural information. Our approach, by contrast, dynamically adapts to each document using PCA to reduce feature dimensionality, ensuring that the most informative and document-relevant variance is retained.
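The per-document PCA step can be sketched as below. This is a minimal illustration, assuming a matrix with one row per candidate keyphrase and one column per feature score (10 features, as stated in the abstract). The 0.95 explained-variance cutoff, the random toy data, the mean-centering without rescaling, and the function name `pca_reduce` are all assumptions made for illustration.

```python
import numpy as np


def pca_reduce(features, var_threshold=0.95):
    """Project rows onto the leading principal components.

    features: (n_candidates, n_features) array of feature scores.
    var_threshold: cumulative explained-variance cutoff (assumed value).
    """
    X = np.asarray(features, dtype=float)
    X = X - X.mean(axis=0)  # center each feature (no rescaling in this sketch)
    # SVD of the centered matrix gives the principal axes as rows of Vt.
    U, S, Vt = np.linalg.svd(X, full_matrices=False)
    explained = S**2 / np.sum(S**2)
    # Smallest number of components whose cumulative variance meets the cutoff.
    k = int(np.searchsorted(np.cumsum(explained), var_threshold) + 1)
    k = min(k, len(S))
    return X @ Vt[:k].T, explained[:k]


# Hypothetical feature matrix: 20 candidate phrases, 10 feature scores each.
rng = np.random.default_rng(0)
features = rng.random((20, 10))
components, ratio = pca_reduce(features)
print(components.shape, round(float(ratio.sum()), 3))
```

Because the components are computed from each document's own candidate set, the retained directions differ from document to document, which is the sense in which the reduction is document-specific.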

Despite their novelty, recent advanced methods such as PromptRank, HGUKE, BRYT, AdaptiveUKE, HCUKE, and SDRank did not achieve superior performance in our experiments, which we attribute to their reliance on specialized mechanisms that do not generalize well across datasets. PromptRank leverages large language model prompting strategies that are sensitive to prompt design and domain mismatch, while HGUKE and HCUKE rely heavily on heuristic graph construction that may overlook semantic nuance outside their intended domain. Similarly, BRYT and AdaptiveUKE emphasize adaptive strategies tailored to particular data characteristics, but their effectiveness diminishes when applied to heterogeneous corpora such as Inspec, SemEval, and DUC. SDRank, although the most recent, prioritizes semantic density but underutilizes positional and structural cues, which are particularly critical in datasets containing titles and abstracts. As a result, these advanced approaches exhibit strong performance in constrained settings but remain less competitive in broad benchmark comparisons.

A pivotal strength of our framework lies in the application of Shannon entropy-based weighting, which adaptively assigns importance scores based on the distribution variability of each feature. This dynamic weighting mechanism prevents over-dependence on any single feature type and ensures that discriminative features are prioritized depending on dataset-specific characteristics. Traditional statistical models, in comparison, assign uniform weights without adjusting to interfeature variability, potentially missing important contextual signals.
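A minimal sketch of the standard entropy weight method is given below for illustration. It assumes a decision matrix with non-negative entries, one row per candidate and one column per criterion. Principal component scores can be negative, so a rescaling step such as min-max normalization would be needed first; that step is an assumption and is not shown. The function name `entropy_weights` and the toy matrix are illustrative.

```python
import math


def entropy_weights(matrix):
    """Shannon entropy weights for the columns (criteria) of a decision matrix.

    matrix: list of rows, one per candidate, with non-negative entries
    and at least two rows.
    """
    m, n = len(matrix), len(matrix[0])
    divergences = []
    for j in range(n):
        col = [row[j] for row in matrix]
        total = sum(col)
        if total == 0:
            divergences.append(0.0)  # an all-zero column carries no information
            continue
        probs = [x / total for x in col]
        # Convention: 0 * log(0) is treated as 0.
        entropy = -sum(p * math.log(p) for p in probs if p > 0) / math.log(m)
        divergences.append(max(0.0, 1.0 - entropy))
    s = sum(divergences)
    if s == 0:
        return [1.0 / n] * n  # fall back to uniform weights
    return [d / s for d in divergences]


# Hypothetical decision matrix: 3 candidates, 3 criteria.
matrix = [
    [0.9, 0.5, 0.1],
    [0.1, 0.5, 0.2],
    [0.5, 0.5, 0.9],
]
weights = entropy_weights(matrix)
print([round(w, 3) for w in weights])
```

In the toy matrix the second criterion takes the same value for every candidate, so its entropy is maximal and its weight is essentially zero: a criterion that cannot separate candidates contributes nothing to the ranking.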

Furthermore, the integration of Fuzzy TOPSIS allows our model to effectively handle the inherent subjectivity and ambiguity involved in ranking keyphrases. While graph-based and statistical models often apply rigid criteria to select keyphrases, Fuzzy TOPSIS treats the process as a multicriteria decision-making task. This enables our method to evaluate all feature contributions simultaneously, providing a more balanced, flexible, and context-sensitive ranking mechanism. Such flexibility is particularly beneficial when processing documents with varying sentence structures, writing styles, and topic domains, which are scenarios where embedding-based models or graph algorithms may struggle to generalize.
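The ranking step can be illustrated with a generic Fuzzy TOPSIS sketch using triangular fuzzy numbers and the vertex distance. Several choices here are assumptions rather than details taken from this section: crisp scores in [0, 1] are fuzzified with a fixed symmetric spread, all criteria are treated as benefit criteria, the weights are crisp, and the ideal and anti-ideal solutions are the component-wise best and worst weighted values. All names and data are hypothetical.

```python
import math


def to_triangular(x, spread=0.1):
    """Crisp score in [0, 1] -> triangular fuzzy number (assumed fuzzification)."""
    return (max(0.0, x - spread), x, min(1.0, x + spread))


def vertex_distance(a, b):
    """Vertex-method distance between two triangular fuzzy numbers."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)) / 3.0)


def fuzzy_topsis(scores, weights, spread=0.1):
    """Closeness coefficients for candidates (rows) over benefit criteria (columns)."""
    n_crit = len(weights)
    fuzzy = [[to_triangular(x, spread) for x in row] for row in scores]
    # Weight each fuzzy entry by its (crisp) criterion weight.
    weighted = [[tuple(weights[j] * v for v in row[j]) for j in range(n_crit)]
                for row in fuzzy]
    # Ideal / anti-ideal: component-wise best and worst over all candidates.
    fpis = [tuple(max(row[j][t] for row in weighted) for t in range(3))
            for j in range(n_crit)]
    fnis = [tuple(min(row[j][t] for row in weighted) for t in range(3))
            for j in range(n_crit)]
    closeness = []
    for row in weighted:
        d_pos = sum(vertex_distance(row[j], fpis[j]) for j in range(n_crit))
        d_neg = sum(vertex_distance(row[j], fnis[j]) for j in range(n_crit))
        total = d_pos + d_neg
        closeness.append(d_neg / total if total > 0 else 0.0)
    return closeness


# Hypothetical candidates scored on two criteria (e.g., principal components).
candidates = ["phrase A", "phrase B", "phrase C"]
scores = [[0.9, 0.8], [0.4, 0.5], [0.1, 0.2]]
weights = [0.6, 0.4]
closeness = fuzzy_topsis(scores, weights)
ranking = [c for _, c in sorted(zip(closeness, candidates), reverse=True)]
print(ranking, [round(c, 3) for c in closeness])
```

A candidate that is best on every criterion coincides with the ideal solution and receives a closeness coefficient of 1, while one that is worst on every criterion receives 0; all other candidates fall in between according to their relative distances to the two reference points.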

Another crucial factor contributing to our model’s success is its use of syntactic and positional features, including part-of-speech patterns, term positions, and sentence-level embeddings. Unlike models such as YAKE, which use statistical and positional information but lack mechanisms for feature weighting and dimensionality reduction, our method combines these cues with adaptive weighting and PCA, allowing for effective noise reduction and better interfeature interaction modeling.

While individual components such as PCA, entropy-based weighting, or Fuzzy TOPSIS have been explored separately in prior keyword extraction and ranking studies, the novelty of our approach lies in the synergistic integration of these elements into a single adaptive framework. Shannon entropy-based weighting dynamically evaluates the discriminative power of features, PCA reduces dimensionality and mitigates feature redundancy, and Fuzzy TOPSIS provides a flexible multicriteria ranking mechanism that accommodates uncertainty and subjectivity in keyphrase evaluation. In isolation, each of these techniques addresses a specific aspect of the extraction process, but their combined application enables document-specific adaptability, robust interfeature interaction modeling, and context-sensitive ranking, which are unattainable by any single component alone. This integration not only improves precision and recall across diverse datasets but also enhances interpretability and generalizability, demonstrating a clear advancement over previous methods that relied on individual techniques without exploiting their complementary strengths.

In summary, the superior performance of our approach is driven by the synergistic integration of heterogeneous features, document-specific adaptability, dimensionality reduction through PCA, entropy-based adaptive weighting, and flexible evaluation via Fuzzy TOPSIS. This combination enables our model to consistently outperform baseline methods, whether statistical, graph-based, embedding-based, or hybrid, across all datasets in terms of precision, recall, and F1 score.

The evaluation results clearly indicate that our method achieves not only state-of-the-art accuracy but also a strong balance between interpretability, adaptability, and robustness. The significant improvements over 17 baseline methods, confirmed by statistical significance tests such as paired