Abstract

Test-time adaptation enables a pre-trained model to update its weights during inference, in order to adapt to a target domain that has a different distribution from the source domain. This adaptation occurs without any supervision and often in a more challenging source-free setting where no data from the source domain are used. While test-time adaptation has received considerable attention for classification tasks, domain adaptation is equally important for other computer vision tasks, such as object detection. Many approaches consider a static target domain, which fails to reflect real-world conditions, where the target domain is non-stationary and the target distribution can gradually change over time. In this work, we focus on the continual test-time adaptation scenario, in which the target domain is continually changing over time. Leveraging the mean teacher framework for object detection, we stochastically restore a small part of the student’s weights to the source pre-trained model weights during adaptation. Additionally, we aim to enhance performance by using contrastive learning. Experiments conducted under a consistent evaluation framework show that our proposed method compares favorably with the standard mean teacher approach.

Introduction

In real-world applications, domain shifts are inevitable due to the dynamic nature of the environments. These dynamically changing conditions can degrade the performance of the models. Object detectors in particular can suffer a significant performance drop, owing to the more challenging localization task they perform. For example, the performance of an object detector trained on data from clear daytime weather can degrade when tested on data from rainy nighttime conditions. Therefore, it is essential to be able to adapt to new domains and bridge the gap of domain shifts in order to maintain strong performance across various settings.

In test-time adaptation (TTA), a pre-trained model adapts, during the inference phase and in an online fashion, to a target domain that has a different distribution from the source domain. Adaptation is performed in a self-supervised manner, leveraging unlabeled data streams. Because labeling data is both time-consuming and expensive, self-supervised adaptation is especially valuable. In addition, due to data privacy issues, data from the source domain are considered unavailable, which leads to a more demanding scenario. Existing studies consider a static target domain, and the model is adapted through pseudo-labeling, entropy minimization, batch-normalization statistics, etc. However, these approaches may be unstable in a continually changing environment, where the target distribution is dynamically changing. Further research is therefore required to improve the performance of existing methods in a non-stationary target domain.

Approaches that use the mean teacher (MT) framework for test-time domain adaptive object detection comprise two identical models. The teacher generates pseudo-labels on the target domain, which are then used for updating the student’s weights through backpropagation. The teacher’s weights are updated as the exponential moving average (EMA) of the student’s weights. The teacher can thus be considered a weighted average of consecutive student models. Unfortunately, MT self-training can suffer from noisy pseudo-labels. As the domain continually changes, pseudo-labels become unreliable and mis-calibrated. This can result in a degradation in the performance of the detector. In contrast, contrastive learning (CL) does not require accurate labels for the learning process. It can produce robust features by pulling together representations of similar instances and pushing apart representations of dissimilar instances, even with noisy pseudo-labels. Specifically, contrastive mean teacher (CMT; Cao et al., 2023) introduces object-level CL that focuses on learning representations at the level of individual objects. These representations are beneficial for both the localization and the classification tasks in object detection and can enhance the performance of the MT framework. Moreover, as the model is adapted to continually changing distributions for a long time, retaining knowledge from the source domain becomes challenging. This phenomenon is referred to as catastrophic forgetting. CoTTA (Wang et al., 2022) proposes to stochastically restore a small part of the student’s weights to the source pre-trained model weights during adaptation. This strategy aims to preserve the source knowledge and prevent forgetting.

In this paper, we integrate MT with object-level CL, similar to CMT, but in a source-free setting. The object-level CL is applied to a one-stage object detector, namely YOLOX (Ge et al., 2021), in contrast to CMT, which uses a two-stage object detector. We further enhance its performance with the stochastic restoration (SR) technique. Our method shows an improvement over the existing baseline.

The key contributions of this work can be summarized as follows:

- We propose a novel approach for continual TTA that combines MT with object-level CL and SR.
- Our method operates in a source-free setting, without using any data from the source domain, and can directly deploy the pre-trained model. Furthermore, it is agnostic to domain shifts that may occur during inference.
- We demonstrate that incorporating object-level CL into a one-stage object detector enhances its performance, highlighting that competitive results can be achieved without relying on more complex two-stage detectors.
- Our proposed method is thoroughly evaluated on a variety of standard datasets, which can serve as benchmarks for continual TTA techniques. Our approach achieves improved performance compared to the existing baseline for object detection.

Related Work

Unsupervised Domain Adaptation (UDA)

UDA refers to the setting in which there is a shift between the labeled source domain and the unlabeled target domain. Labeled data from the source domain are available during the adaptation process. Many methods aim to align the feature distributions between the two domains, using discrepancy losses or adversarial training (Chen et al., 2020a; Ganin et al., 2017; Tzeng et al., 2017). Recently, self-training methods have also received attention and have been extended from semi-supervised to unsupervised settings, utilizing pseudo-labels for supervision and relying on the MT framework (Tarvainen & Valpola, 2017).

Test-Time Adaptation (TTA)

TTA methods leverage the available test data during inference to adapt to the target domain. In numerous studies, TTA is regarded as source-free domain adaptation, where labeled source data are unavailable and cannot be used for the adaptation process. The model is adapted for multiple epochs on the data from the target domain before being used to generate the final predictions. This process operates in an offline manner, so that the target data are available and can be fed into the model multiple times. However, in some applications where the network is updated in real time, the target data may not be available beforehand. Additionally, predictions might be needed immediately, and it may be impossible to store the target data after making predictions. All these factors have led to the development of online TTA methods (Wang et al., 2024), which aim to adapt the model using only the current batch of available data.

Test data provide valuable information about the domain shifts, leading researchers to focus on updating batch normalization (BN) statistics during the testing process (Li et al., 2018). While these techniques require only a forward pass, recent TTA approaches perform a backward pass to update the weights of the model, by entropy minimization with respect to the BN parameters of the model (Wang et al., 2021). Other methods, especially used in object detection, utilize pseudo-labels to update the model and are focused on improving the quality of these predicted pseudo-labels (Chen et al., 2023; He et al., 2023). Typically, a student–teacher model is employed for this purpose (Chen et al., 2022, 2023; Deng et al., 2021; He et al., 2023; Li et al., 2022; Sinha et al., 2023). These self-training approaches, which are often combined with CL or other self-supervised learning techniques, assume that the pre-trained model on the source domain has some degree of generalization ability to the target domain due to the similarity between the two domains (Li et al., 2024; Wang et al., 2024). Therefore, the network parameters can be updated using the predictions on the new data, in order to improve performance.

Many works are designed under the assumption of having a batch of test data available, which is unrealistic for online real-world applications. Some other approaches focus on online TTA, where only one test sample is available in each step (Bartler et al., 2022; Zhang et al., 2022). Other studies require the retraining of the pre-trained model and cannot use it directly. For example, Liang et al. (2020) trained a specialized source model using the label-smoothing technique in conjunction with a weight normalization layer and Chen et al. (2023) had to compute the feature distributions of the source domain in an offline manner. Some methods use self-attention mechanisms to better focus on important features in the data (Li et al., 2024). There are also some approaches which aim to align the source and target domains, based on domain reconstruction techniques or to reduce domain shifts, based on extracted information from the data (Li et al., 2024).

Continual TTA

TTA methods aim to adapt a model trained on a source domain to a single target domain. In real-world applications, a model can encounter many domain shifts, and it should be able to adapt to the gradual changes. Continual TTA considers the setting where the target domain is changing over time. TTA methods may lead to error accumulation and catastrophic forgetting when the target distribution is non-stationary (Wang et al., 2022).

The first method to address the continual TTA setting is CoTTA (Wang et al., 2022). CoTTA introduces weight-averaged and augmentation-averaged predictions to minimize error accumulation and SR to prevent forgetting. Additional studies that focus specifically on the continual TTA setting have since been published, such as RMT (Döbler et al., 2023) and AR-TTA (Sójka et al., 2023). RMT utilizes CL to bring the test feature space closer to the source domain, where the pre-trained model is well established. AR-TTA utilizes a small memory buffer to store exemplars from the source domain. All the aforementioned methods address continual TTA for classification, and some are extended to segmentation.

Continual TTA for object detection has not been adequately explored. The proposed methods and the existing benchmarks are still quite limited. This problem was investigated in the “ICCV VCL 2023 Challenge B” (vcl_workshop_2023, 2023), but only one technical report has been published by one of the participating teams (Lin et al., 2023). Other recent approaches include Mirza et al. (2023) and Yoo et al. (2024). Mirza et al. (2023) explore both classification and object detection, focusing primarily on online adaptation, but also test their method in a continual adaptation scenario. The method adapts the model to out-of-distribution data by aligning activation statistics across multiple layers of the network. Yoo et al. (2024) investigate the online domain adaptation scenario for object detection in continually changing test domains, introducing architecture-agnostic adaptor modules that update only lightweight parts of the model to prevent catastrophic forgetting, resolve domain shifts through class-wise feature alignment, and determine when further adaptation is needed. Further development of specialized datasets, performance metrics, and baselines is essential, and more research is needed to explore this area.

Methodology

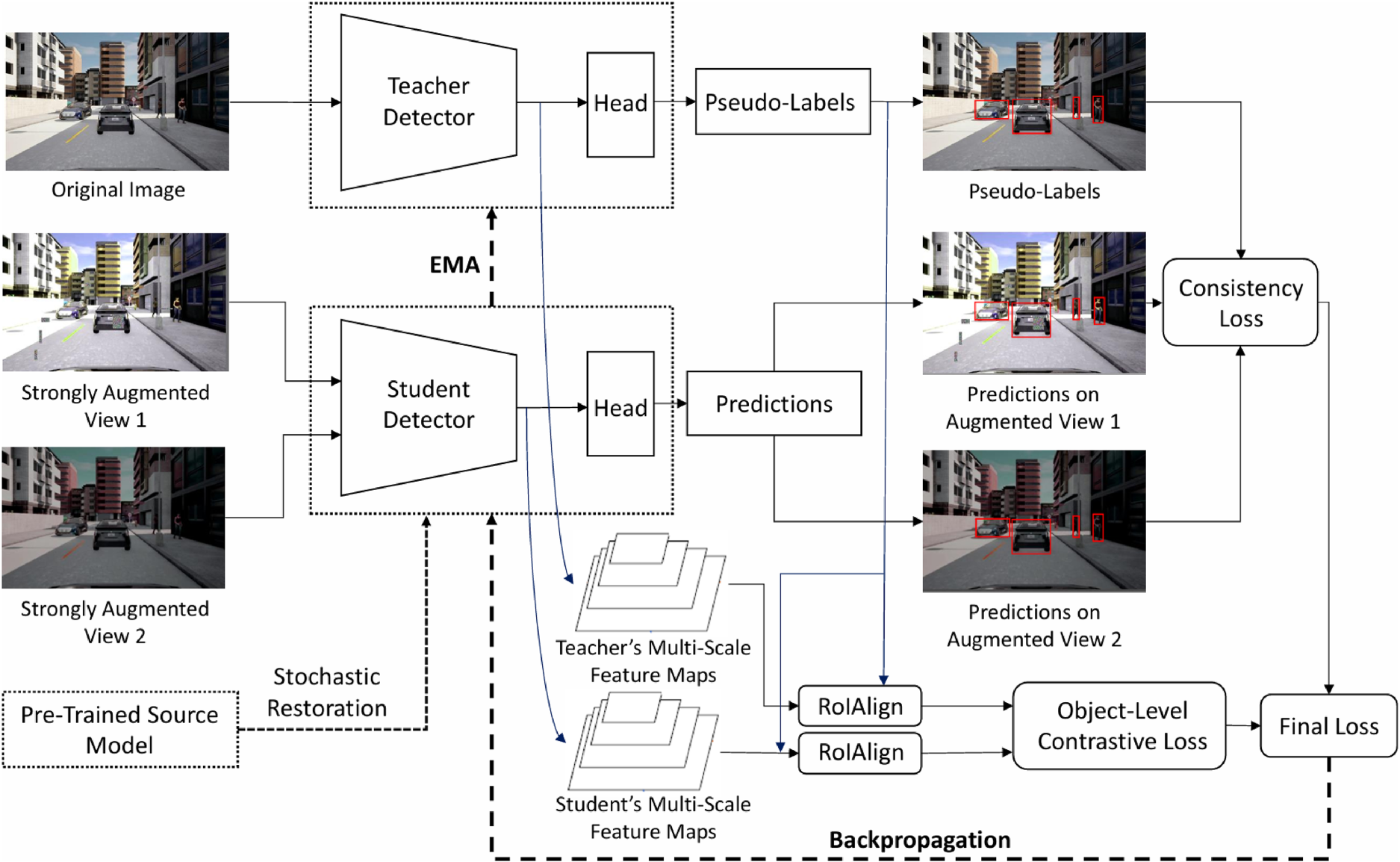

In this work, we combine the SR technique used in CoTTA (Wang et al., 2022) with the MT framework for object detection. We further enhance the model’s performance by using object-level CL inspired by CMT (Cao et al., 2023). Our approach does not use data from the source domain during adaptation, and the pre-trained model can be deployed directly, without requiring retraining. We aim to enhance the performance of the pre-trained model on the continually changing target domain by leveraging the sequentially provided test data. The model only has access to the test data of the current time step, which makes our setting an online one. An overview of our proposed method is given in Figure 1.

Figure 1. An overview of our proposed method. The original target image is fed into the teacher model, while the student model receives two different strongly augmented views. The student detector is updated based on the final loss through backpropagation. The teacher’s weights are updated as the EMA of the student’s weights. A small part of the student’s weights is stochastically restored to the source pre-trained model’s weights.

The MT architecture was initially proposed for semi-supervised learning, but was extended for unsupervised or self-supervised learning by leveraging pseudo-labeling. The MT for object detection consists of two identical detectors, which relate to the teacher and the student, respectively. Both detectors take as input the images from the target domain. The teacher generates pseudo-labels based on its predictions on the target data.

A consistency loss (bounding box regression loss and classification loss) is calculated between the pseudo-labels generated by the teacher detector and the predictions of the student. The student detector is updated based on the consistency loss through backpropagation. Then, the teacher’s weights are updated as the EMA of the student’s weights.
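The EMA step of the MT framework can be illustrated with a minimal sketch. It assumes the weights are stored as a flat dictionary of floats; the function name `ema_update` and the value `alpha=0.999` are hypothetical placeholders and are not the settings used in this work.

```python
def ema_update(teacher, student, alpha):
    """Return the teacher weights after one EMA step.

    teacher, student: dicts mapping parameter names to floats (illustrative only).
    alpha: smoothing coefficient in [0, 1]; larger values move the teacher more slowly.
    """
    return {name: alpha * teacher[name] + (1.0 - alpha) * student[name]
            for name in teacher}


# Hypothetical values, for illustration only.
teacher = {"w": 1.0, "b": 0.0}
student = {"w": 0.0, "b": 1.0}
teacher = ema_update(teacher, student, alpha=0.999)
```

With a coefficient close to 1, the teacher moves only slightly toward the student at each step, which is why it behaves like a weighted average of consecutive student models.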

The mutual knowledge transfer that occurs in the MT framework has demonstrated promising results for domain adaptive object detection (Cao et al., 2023). Unfortunately, self-training can suffer from noisy pseudo-labels. As the domain continually changes, pseudo-labels become unreliable and mis-calibrated. This can result in a degradation in the performance of the detector. Many works have focused on correcting the pseudo-labels and trying to make them more reliable (Chen et al., 2023; He et al., 2023). In object detection, correcting pseudo-labels presents a greater challenge compared to classification, as it involves adjusting not just the labels themselves but also their corresponding positions. Furthermore, in continual TTA, the adaptation for a long time may lead to catastrophic forgetting. Inspired by the work in CMT (Cao et al., 2023), we use CL, which does not depend on accurate pseudo-labels during the learning process. Additionally, to minimize forgetting, we employ SR as presented in CoTTA (Wang et al., 2022). A detailed description of these methods is given in the following sections.

Over a long period, continual TTA with MT self-training can lead to forgetting. When a sequence of domain shifts occurs, the model is continually adapted to the new domains, and after many updates the knowledge from the initial source domain is lost. Moreover, strong domain shifts may lead to very noisy pseudo-labels, introducing errors in the model’s updates. If the model’s update is significantly incorrect after encountering challenging examples, it may struggle to recover, even when the new data are not severely shifted. To preserve the knowledge of the source domain and mitigate the impact of incorrect model updates, we utilize the SR method proposed in CoTTA (Wang et al., 2022). In this approach, we stochastically restore a small part of the student’s weights to the source pre-trained model’s weights during adaptation.

A convolution layer within the student model, after a gradient update at time step

This restoration mechanism aims to stabilize learning by preserving source knowledge and reducing catastrophic forgetting during continual adaptation. SR can be interpreted as a structured regularization technique similar to dropout (Srivastava et al., 2014). Randomly resetting a small subset of trainable weights to their initial values prevents the model from deviating excessively from the source representation. This mechanism allows the entire network to remain fully trainable without suffering from model collapse, thus offering greater flexibility during adaptation.
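A minimal sketch of stochastic restoration is given below. It assumes an elementwise Bernoulli mask over one flattened layer, which is one natural reading of “stochastically restore a small part of the weights”; the function name `stochastic_restore` and the probability `p=0.01` are hypothetical and are not taken from this work.

```python
import random


def stochastic_restore(current, source, p, rng):
    """Reset each weight to its source value with probability p.

    current, source: lists of floats of equal length (one flattened layer).
    p: restoration probability (hypothetical value in the example below).
    rng: a random.Random instance, so the sketch is reproducible.
    """
    restored = []
    for w_now, w_src in zip(current, source):
        # Bernoulli(p) mask: 1 means "restore to the source weight".
        mask = 1 if rng.random() < p else 0
        restored.append(mask * w_src + (1 - mask) * w_now)
    return restored


rng = random.Random(0)
source_w = [0.0] * 1000    # stand-in for the source pre-trained weights
adapted_w = [1.0] * 1000   # stand-in for the weights after a gradient update
new_w = stochastic_restore(adapted_w, source_w, p=0.01, rng=rng)
n_restored = sum(1 for w in new_w if w == 0.0)
```

Because only a small random fraction of the weights is reset at a time, most of the adapted weights are kept, while every weight is eventually pulled back toward the source model over many steps.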

In addition to the consistency loss discussed in Section 3.1, we also employ a contrastive loss for updating the student. This method was inspired by CMT (Cao et al., 2023) and is capable of generating robust features by bringing together representations of similar instances, while pushing apart representations of dissimilar instances, even when the pseudo-labels are noisy.

Initially, we extract object-level features based on the features and the pseudo-labels generated by the teacher. We then utilize these features to calculate a class-based contrastive loss, inspired by supervised CL (Khosla et al., 2020) and self-supervised CL (Chen et al., 2020b).

Object-Level Features

We generate pseudo-labels using the teacher detector. Regions of interest (RoIs) are given by the bounding boxes of the predicted pseudo-labels. Next, we extract the feature maps of the student’s and the teacher’s backbones. To extract object-level features from RoIs within an image, we use RoIAlign (He et al., 2017). This pooling operation addresses challenges related to misalignment between the RoIs and the underlying feature maps. Finally, we normalize the extracted object-level features according to standard procedures, as described in Khosla et al. (2020). If augmentations on the input images alter bounding boxes differently, we need to transform the bounding boxes of the pseudo-labels to align the two feature maps.
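The box bookkeeping and the normalization step can be sketched as follows. This sketch does not implement RoIAlign itself; it only shows how a pseudo-label box might be mapped to a horizontally flipped view, scaled to feature-map coordinates, and how a pooled feature vector is L2-normalized. The horizontal flip, the image width, the stride, and all function names are hypothetical choices made for illustration.

```python
import math


def flip_box_horizontally(box, image_width):
    """Mirror an (x1, y1, x2, y2) box for a horizontally flipped image."""
    x1, y1, x2, y2 = box
    return (image_width - x2, y1, image_width - x1, y2)


def to_feature_coords(box, stride):
    """Scale an image-space box to a feature map with the given down-sampling factor."""
    return tuple(v / stride for v in box)


def l2_normalize(vec, eps=1e-12):
    """Scale a feature vector to unit Euclidean length."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / max(norm, eps) for v in vec]


# Hypothetical pseudo-label box on a 640-pixel-wide image.
teacher_box = (100.0, 50.0, 300.0, 250.0)
student_box = flip_box_horizontally(teacher_box, image_width=640.0)
roi_on_features = to_feature_coords(student_box, stride=8)  # arbitrary example stride
feature = l2_normalize([3.0, 4.0])  # stand-in for a pooled object-level feature
```

Applying the same geometric transform to the box as to the image keeps the teacher and student RoIs pointing at the same object, so the pooled features can be compared directly.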

Class-Based Contrastive Loss

Inspired by supervised CL (Khosla et al., 2020), we utilize the classes predicted by the teacher to calculate the class-based contrastive loss:

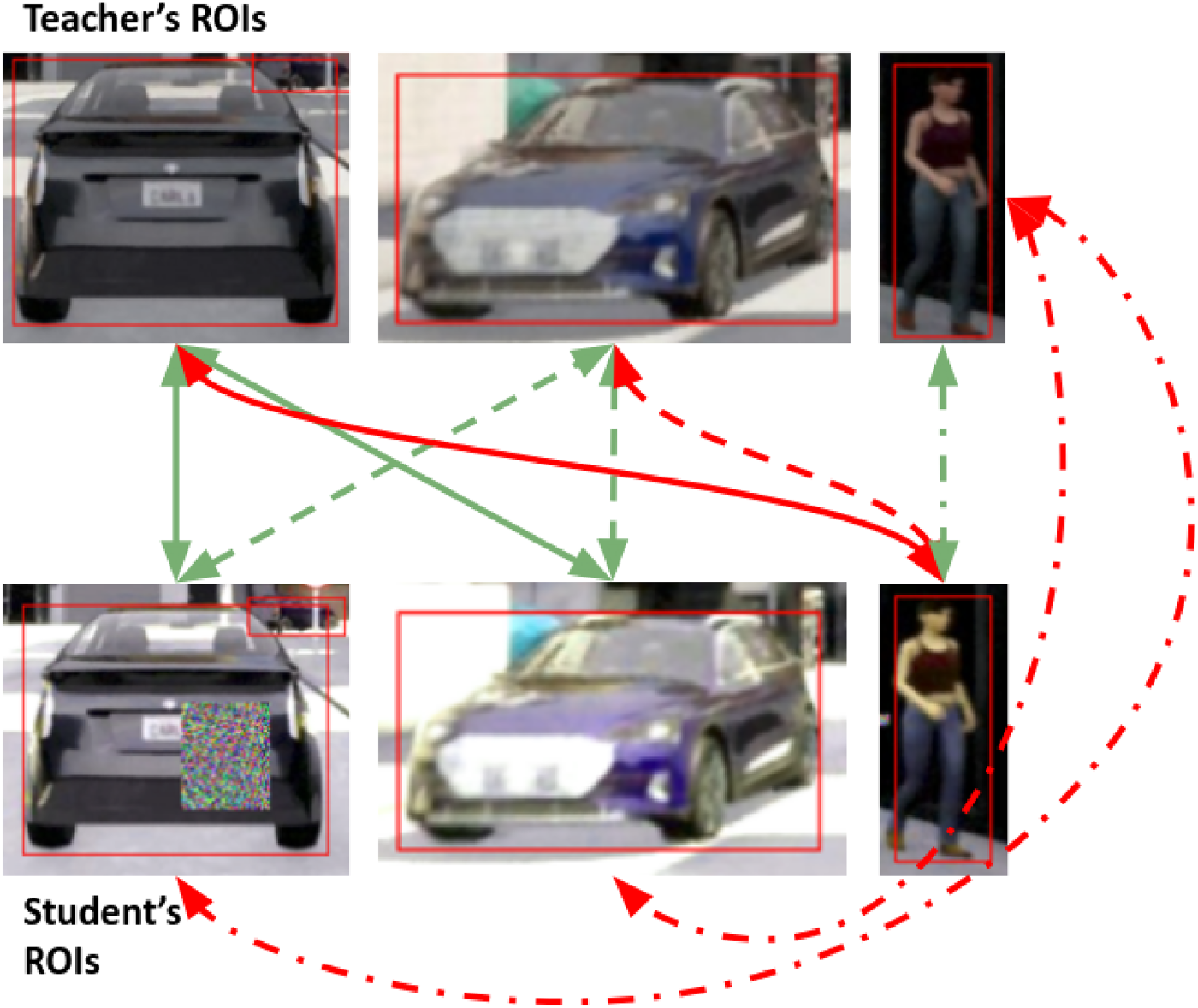

The object-level CL method is illustrated in Figure 2. In this example, the teacher’s object-level features of the original images are pulled closer to the student’s object-level features of the strongly augmented images when they have the same pseudo-labels, while features with different pseudo-labels are pushed apart (supervised contrastive loss).

Figure 2. Object-level contrastive learning method. Object-level features are extracted from regions of interest (RoIs) by using the pseudo-labels along with the feature maps from both the teacher and student models. Contrastive learning aims to pull together representations of similar instances (shown by green arrows) while pushing apart representations of dissimilar instances (shown by red arrows). The continuous arrows illustrate the relationships between the first detection of the teacher and all student detections. The dashed arrows represent relationships between the second teacher detection and the student detections, while the dash-dot arrows indicate relationships involving the third teacher detection.
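The pull-together and push-apart behavior can be sketched with a loss in the style of supervised CL (Khosla et al., 2020). This is an illustrative approximation under stated assumptions, not necessarily the exact loss used in this work: teacher features act as anchors, student features act as candidates, both lists follow the same pseudo-label order, and the temperature value is hypothetical.

```python
import math


def class_contrastive_loss(teacher_feats, student_feats, classes, temperature):
    """Supervised-contrastive-style loss between teacher and student object features.

    teacher_feats, student_feats: lists of L2-normalized vectors, one per pseudo-label.
    classes: pseudo-label class of each object (same order in both lists).
    temperature: softmax temperature (hypothetical value in the example below).
    """
    if not teacher_feats:
        return 0.0

    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    total = 0.0
    for i, anchor in enumerate(teacher_feats):
        logits = [dot(anchor, s) / temperature for s in student_feats]
        # Log of the softmax denominator, computed in a numerically stable way.
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(v - m) for v in logits))
        # Student features that share the anchor's pseudo-label are positives.
        positives = [j for j, c in enumerate(classes) if c == classes[i]]
        total += -sum(logits[j] - log_denom for j in positives) / len(positives)
    return total / len(teacher_feats)


teacher_f = [[1.0, 0.0], [0.0, 1.0]]
labels = ["car", "pedestrian"]
aligned_loss = class_contrastive_loss(teacher_f, [[1.0, 0.0], [0.0, 1.0]], labels, 0.1)
swapped_loss = class_contrastive_loss(teacher_f, [[0.0, 1.0], [1.0, 0.0]], labels, 0.1)
```

In this toy example the loss is close to zero when same-class features coincide and large when the student features of the two classes are swapped.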

To maximize the benefits of object-level CL, multi-scale feature maps are extracted from the YOLOX detector. Our architecture employs a CSPDarknet backbone and a YOLOXPAFPN neck, which together produce three scales of feature maps corresponding to down-sampling factors of

Finally,

Datasets, Settings, and Metrics

In our experimental work, a consistent evaluation framework was employed that comprises a variety of standard datasets, namely SHIFT (Sun et al., 2022), KITTI (Geiger et al., 2013, 2012), Cityscapes (Cordts et al., 2016; Sakaridis et al., 2018), CLAD-D (Verwimp et al., 2023), and COCO-C (Hendrycks & Dietterich, 2019; Lin et al., 2014).

All datasets except SHIFT consist of images, while SHIFT is composed of videos. Our experiments follow the scenario of Mirza et al. (2023) and Yoo et al. (2024): the detector starts as a model pre-trained on the source domain, undergoes several consecutive domain shifts, and finally returns to the source domain to assess how well knowledge is preserved during the adaptation process. Synthetic images simulating domain shifts are used in four of the five datasets, with CLAD-D being the only one that includes realistic images from shifted domains.

Detailed information for understanding the particularities of each dataset is given in the following sections.

SHIFT

We evaluate our method on the continuous validation set of the SHIFT dataset (Sun et al., 2022), and we follow the setting that was specified by “ICCV VCL 2023 Challenge B” (vcl_workshop_2023, 2023). The SHIFT dataset consists of six classes and is divided into two main sets. The discrete set contains images depicting various weather conditions and times of day, while the continuous set consists of 40-second video sequences in which the driving conditions gradually change. The weather conditions in the dataset include clear, foggy, cloudy, overcast, and rainy, while the times of the day comprise daytime, night, and dawn/dusk.

We use the pre-trained model that was provided by the organizers of the challenge, which is a YOLOX object detector, trained on the SHIFT clear-daytime discrete train/val set. We evaluate our method on six validation videos presenting continuous domain shifts starting from the clear-daytime conditions. Another method that participated in the competition is outlined in Lin et al. (2023). However, this method uses information about domain shifts observed in the validation set and simulates these shifts during training to enhance the model’s performance. Thus, it may not be effective when domain shifts are unknown during the training process. Our approach is evaluated in a per-sequence manner, where the model is assessed on each sequence separately. After the evaluation in each sequence, the MT model (both teacher and student detectors) is reset to its source state before evaluating the next sequence. The evaluation metric is the average performance across all sequences.
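The per-sequence protocol with resetting can be sketched as follows. The callable `adapt_and_score` is a hypothetical hook standing in for online adaptation plus evaluation on one sequence, and the toy state and scores carry no experimental meaning.

```python
import copy


def evaluate_per_sequence(source_state, sequences, adapt_and_score):
    """Average a score over sequences, restarting from the source state each time.

    source_state: the source pre-trained weights (any copyable object).
    sequences: list of test sequences.
    adapt_and_score: hypothetical callable (state, sequence) -> score that may
        modify `state` in place while it adapts.
    """
    scores = []
    for seq in sequences:
        state = copy.deepcopy(source_state)  # reset teacher and student
        scores.append(adapt_and_score(state, seq))
    return sum(scores) / len(scores)


# Toy stand-in: "adaptation" mutates the state; the score is the update count.
def toy_adapt_and_score(state, seq):
    state["updates"] += len(seq)
    return float(state["updates"])


source = {"updates": 0}
avg = evaluate_per_sequence(source, [[1, 2, 3], [4, 5]], toy_adapt_and_score)
```

Copying the source state before each sequence ensures that adaptation on one video cannot affect the result on the next one.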

To assess the effectiveness of our approach in adapting to the shifted domain while also retaining knowledge from the source domain, we divide each video into three parts. Then, we calculate the mean average precision (mAP) for each of these parts rather than solely reporting the overall mAP for the whole sequence. The first part contains information only from the source domain (first 20 frames), the middle part contains information about the shifted target domain (frames 180–220), and the last part loops back to the source domain (last 20 frames). These three mAP values are denoted as mAP_Source, mAP_Target, and mAP_Loopback, respectively. Then, we use the “Drop” metric that was specified in the “ICCV VCL 2023 Challenge B”:

KITTI

The KITTI (Geiger et al., 2012, 2013) training set consists of 7,481 images, which are divided into two parts. One part, containing 3,740 images, is used as a training set, while the other part, comprising 3,741 images, is designated as the validation set. The network is initially trained on the 3,740 images from the training set. Afterward, the 3,741 images from the validation set are used to assess the network’s performance. This validation set serves as the source domain. Subsequently, we simulate synthetic domains under varying weather conditions, as described in Mirza et al. (2023) and Yoo et al. (2024). Finally, we evaluate our proposed adaptation method on the following scenarios: Fog, Rain, Snow, and Clear, which together include a total of 14,964 images for domain adaptation across these four distinct domains. The Clear domain contains the images of the validation set of the KITTI dataset and is used to estimate the performance on the source domain after the adaptation.

Cityscapes

The network is initially trained on the Cityscapes (Cordts et al., 2016) training set, which consists of 2,975 images, and uses the validation set as the source domain, which includes 500 images. The Foggy Cityscapes dataset (Sakaridis et al., 2018) is a synthetic dataset that simulates fog in real-world urban scenes. The evaluation of the adaptation method during testing is conducted at three increasing levels of fog density. Finally, a set of clear-weather images from the Cityscapes validation set is included to assess the model’s performance under normal conditions after the adaptation process. Overall, the method is evaluated under the scenarios Low Fog, Medium Fog, High Fog, and Clear, which contains a total of 2,000 images.

CLAD-D

The “Continual Learning for Autonomous Driving (CLAD)” benchmark (Verwimp et al., 2023) is dedicated to autonomous driving, focusing on the problems of object classification and object detection. In this work, we use the CLAD-D dataset, which is a domain incremental continual object detection benchmark. It consists of four domains: Clear Weather-Daytime-City Streets, Clear Weather-Daytime-Highway, Night, and Rain-Daytime. This dataset is specifically designed for continual learning, where the model is trained sequentially across these domains and its performance is subsequently evaluated on all four. We introduce a setting suitable for continual TTA scenarios, based on these four domains. The domain Clear Weather-Daytime-City Streets is used for training the network, and the validation set of this domain serves as the source domain. This domain contains 4,470 training images and 497 validation images. Then, the test sets from all four domains are used in the following sequence: Clear Weather-Daytime-Highway, Night, Rain, and Clear Weather-Daytime-City Streets. In total, 9,969 images are used for evaluating our domain adaptation technique.

COCO-C

The COCO dataset (Lin et al., 2014) is one of the most extensive and widely used datasets for training and evaluating computer vision algorithms. The COCO-C or corrupted COCO dataset simulates continuous and drastic domain shifts in the images of the COCO dataset. COCO-C is created using 15 types of realistic image corruptions (Hendrycks & Dietterich, 2019), such as image distortion or various weather conditions, to simulate various domain shifts from the original domain. For training the network, the 118,287 images of the COCO training set are used, and the 5,000 images of the validation set serve as the source domain. During adaptation, the model’s performance is evaluated sequentially on each set of corrupted images. Finally, the model is assessed on the original COCO validation set, referred to as Original, to evaluate its performance on the source domain after adaptation. This setting includes a total of 16 domains, and the adaptation method is evaluated on 80,000 images. The sequence of corruptions is as follows: Gaussian-Noise, Shot-Noise, Impulse-Noise, Defocus-Blur, Glass-Blur, Motion-Blur, Zoom-Blur, Snow, Frost, Fog, Brightness, Contrast, Elastic-Transform, JPEG-Compression, Pixelate, and Original. The same scenario is used in Yoo et al. (2024). This setting is particularly interesting for evaluating our adaptation method on long sequences and analyzing its performance.
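The stated totals for the synthetic settings can be checked with a few lines of arithmetic. The sketch assumes that every domain in a sequence contains one copy of the corresponding validation set, which is consistent with the totals given in the text; the dictionary layout is purely illustrative.

```python
# Validation-set sizes and domain sequences, as stated in the text.
settings = {
    "KITTI": {
        "images_per_domain": 3741,
        "domains": ["Fog", "Rain", "Snow", "Clear"],
    },
    "Cityscapes": {
        "images_per_domain": 500,
        "domains": ["Low Fog", "Medium Fog", "High Fog", "Clear"],
    },
    "COCO-C": {
        "images_per_domain": 5000,
        "domains": [
            "Gaussian-Noise", "Shot-Noise", "Impulse-Noise", "Defocus-Blur",
            "Glass-Blur", "Motion-Blur", "Zoom-Blur", "Snow", "Frost", "Fog",
            "Brightness", "Contrast", "Elastic-Transform", "JPEG-Compression",
            "Pixelate", "Original",
        ],
    },
}

# Total number of images seen during adaptation in each setting.
totals = {name: s["images_per_domain"] * len(s["domains"])
          for name, s in settings.items()}
```

The resulting totals are 14,964 for KITTI, 2,000 for Cityscapes, and 80,000 for COCO-C, matching the figures reported above.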

Implementation Details

As mentioned earlier, we use the YOLOX object detector. In the MT framework, we set the smoothing coefficient

For the KITTI and CLAD-D datasets, the smoothing coefficient is

The hyperparameter tuning experiments revealed a clear tradeoff between adaptation and forgetting. When the model adapts too quickly to domain shifts, it tends to forget previously learned knowledge, especially in cases where large or abrupt adaptation steps are applied. Such rapid changes can lead to model degradation over time. To mitigate this, it is essential to find an optimal balance, where the model adapts efficiently to new domains while maintaining the integrity of previously learned information.

To reduce the computational complexity, SR occurs only after the last update of the student for the same augmented views of the input image, ensuring that intermediate steps do not require additional computational resources. In view of this, for each input image, the augmented views are processed five times. The model is also updated five times, with SR occurring only after the final update.
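The update schedule for a single input image can be sketched as below. The callables `update_model` and `restore` are hypothetical hooks standing in for one student/teacher update and for the SR step, respectively.

```python
def adapt_on_image(image, update_model, restore, num_updates=5):
    """Run several model updates on one image, then apply SR once.

    update_model: hypothetical callable performing one update on the augmented views.
    restore: hypothetical callable performing stochastic restoration.
    """
    for _ in range(num_updates):
        update_model(image)
    # SR is applied only after the final update for this image.
    restore()


calls = []
adapt_on_image(
    "frame_0",
    update_model=lambda img: calls.append("update"),
    restore=lambda: calls.append("restore"),
)
```

Running the sketch records five updates followed by a single restoration, so the cost of SR is paid once per image rather than once per update.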

In CL, the teacher’s predictions are filtered by a confidence threshold, and non-maximum suppression is performed to generate the pseudo-labels. We use a confidence threshold of 0.7 and an intersection over union (IoU) threshold of 0.7 to retain predictions that are likely to be correct. Additionally, we extract multi-scale features from three stages of the backbone network. The remaining hyperparameters are as follows: the confidence threshold of the detectors is set to 0.01, the IoU threshold for non-maximum suppression is set to 0.7, and the optimizer is stochastic gradient descent with a learning rate of 0.00025, a momentum of 0.9, and a weight decay of 0.0005.
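Pseudo-label generation with the two thresholds of 0.7 can be sketched in plain Python. The sketch assumes class-aware greedy non-maximum suppression and a `(box, score, class)` tuple layout; both are illustrative assumptions rather than details confirmed by the text.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def make_pseudo_labels(detections, conf_thr=0.7, iou_thr=0.7):
    """Keep confident detections, then suppress same-class overlapping boxes.

    detections: list of (box, score, cls) tuples (assumed layout).
    """
    candidates = sorted((d for d in detections if d[1] >= conf_thr),
                        key=lambda d: d[1], reverse=True)
    kept = []
    for det in candidates:
        # Keep a box unless it overlaps a higher-scoring kept box of the same class.
        if all(det[2] != k[2] or iou(det[0], k[0]) <= iou_thr for k in kept):
            kept.append(det)
    return kept


# Hypothetical teacher predictions.
detections = [
    ((0.0, 0.0, 10.0, 10.0), 0.90, "car"),
    ((0.0, 0.0, 10.0, 9.0), 0.80, "car"),         # overlaps the first box (IoU 0.9)
    ((20.0, 20.0, 30.0, 30.0), 0.75, "car"),
    ((0.0, 0.0, 10.0, 10.0), 0.50, "pedestrian"),  # below the confidence threshold
]
pseudo_labels = make_pseudo_labels(detections)
```

In this example, the second box is suppressed by the first, the fourth is discarded for low confidence, and two pseudo-labels remain.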

For clarity, the metrics used for the remaining datasets differ from those used for the SHIFT dataset only in the definition of each domain. The source domain corresponds to the validation set of the domain on which the model was trained, and mAP_Source is calculated before the adaptation. The target domain includes all images where domain shifts occur. Finally, the loopback domain is identical to the source domain, but mAP_Loopback is calculated after completing the adaptation process. Ave_mAP indicates the total average mAP across all domains, including the loopback domain, while Target_Ave_mAP denotes the total average mAP for the shifted domains only.
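The two averaged metrics can be sketched as follows. The sketch assumes that Ave_mAP averages the shifted domains together with the loopback domain and excludes the pre-adaptation source score; this reading of “all domains” is an assumption, and the numbers are hypothetical rather than experimental results.

```python
def summarize(target_maps, loopback_map):
    """Compute Target_Ave_mAP and Ave_mAP from per-domain mAP values.

    target_maps: dict mapping each shifted domain to its mAP.
    loopback_map: mAP on the source domain after adaptation.
    """
    target_ave = sum(target_maps.values()) / len(target_maps)
    # Assumption: Ave_mAP covers the shifted domains plus the loopback domain.
    ave = (sum(target_maps.values()) + loopback_map) / (len(target_maps) + 1)
    return {"Target_Ave_mAP": target_ave, "Ave_mAP": ave}


# Hypothetical per-domain scores, for illustration only.
summary = summarize({"Fog": 40.0, "Rain": 50.0, "Snow": 30.0}, loopback_map=60.0)
```

Reporting both averages separates how well the detector adapts to the shifted domains from how much source performance it retains after adaptation.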

Results

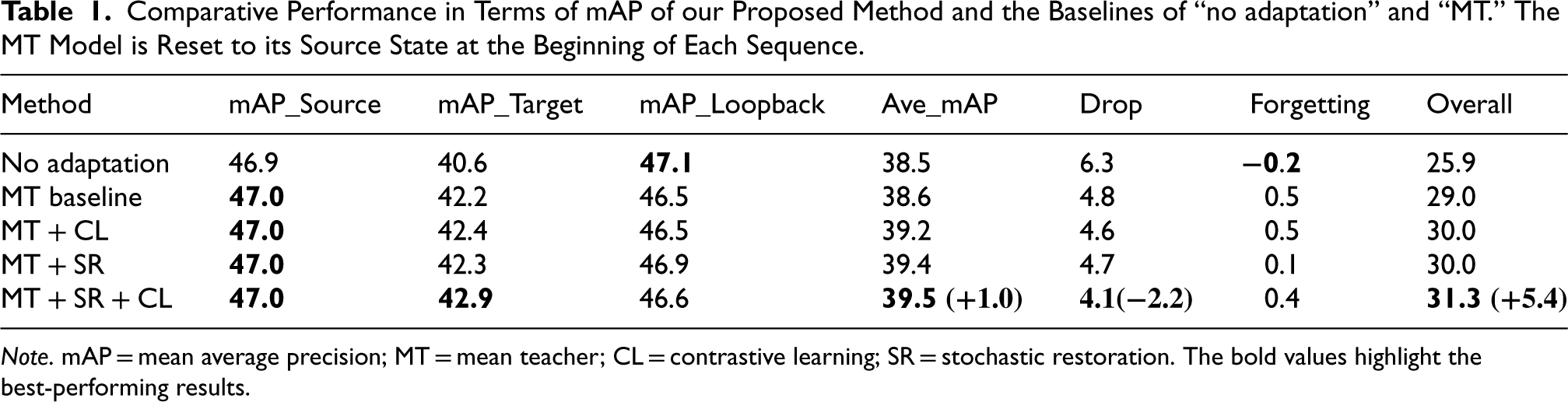

In Table 1, we report our results on the validation set of the SHIFT dataset (Sun et al., 2022) for sequences starting from clear-daytime conditions. Additionally, we include the baseline results for “no adaptation” and “MT” adaptation. Our method shows an improvement of +1.0 Ave_mAP compared to the existing “no adaptation” baseline. When SR and object-level CL are combined with MT, the “Drop” is reduced to 4.1, and the reduction equals to

Table 1. Comparative performance in terms of mAP of our proposed method and the baselines of “no adaptation” and “MT.” The MT model is reset to its source state at the beginning of each sequence.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

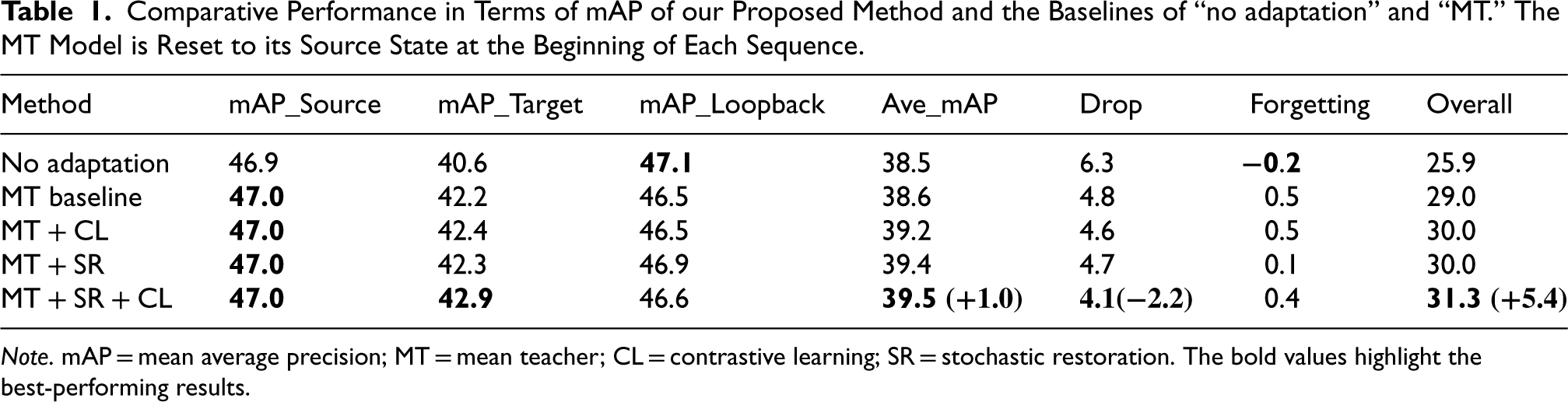

To simulate a more realistic setting, we conduct the same experiments as above, but without resetting the model to its source state at the beginning of each sequence. As previously discussed, TTA methods often encounter issues with overfitting when applied in dynamic environments, leading to catastrophic forgetting and subsequent deterioration of the detector’s performance. Resetting the model to its original source state can mitigate this problem, enabling the use of TTA methods even in continually changing target domains. However, in real-world applications, it is difficult to determine the appropriate timing for resetting to the source model. A recent study (Chakrabarty et al., 2023) introduces a technique to detect significant domain shifts and perform a reset to the source model accordingly. We choose to evaluate our method without resetting to the source model. The results of our experiments are presented in Table 2.

Table 2. Comparative performance in terms of mAP of our proposed method and the baselines of “no adaptation” and “MT.” The MT model is not reset to its source state at the beginning of each sequence.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

We observe a significant deterioration in the performance of the “MT” baseline model without resetting. Specifically, the Ave_mAP of the MT baseline, 35.9, is even lower than that of “No Adaptation,” 38.5. Although the “Drop” is reduced to 5.8, the overall performance of the detector is degraded. Catastrophic forgetting is evident, particularly from the noticeable drop in the mAP_Loopback metric, which equals 42.8.

The SR technique improves over the “no adaptation” baseline even without resetting to the source model, achieving an Ave_mAP of 39.3. When we employ only CL with MT, the performance of the detector drops significantly, to an Ave_mAP of 35.1. However, when both SR and CL are used with MT, the performance of the detector improves: the Ave_mAP equals 39.3, only slightly lower than with resetting, and the “Drop” is reduced to 3.6, indicating better adaptation to the target domain. Nevertheless, some forgetting is still present, as indicated by the lower mAP_Loopback of 45.6.

Therefore, our method proves to be suitable and capable of retaining optimal performance even when the target domain is continually changing. This is primarily due to the SR technique, which can be characterized as a continual TTA method. The MT network alone faces significant issues when domains change continuously during adaptation. Consequently, it is not suitable for continual TTA problems, as it introduces catastrophic forgetting. Methods that utilize CL can, in some cases, mitigate forgetting by learning more robust and generalized features, leading to better adaptation to new domains. However, in the case of rapid domain shifts or long-term adaptation, catastrophic forgetting remains unavoidable. SR appears to be the most effective method for reducing catastrophic forgetting. This approach, which can be easily combined with other techniques, such as the MT framework, is highly effective in addressing continual TTA problems. Additionally, utilizing object-level CL can further enhance the adaptation process.
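
To make the SR step concrete, the following is a minimal sketch of element-wise stochastic restoration, assuming the common formulation in which each weight is reset to its source value with a small probability p, that is, w ← m · w_source + (1 − m) · w with m ~ Bernoulli(p). Weights are plain Python lists here; the function name, the parameter name `conv.weight`, and the value p = 0.01 are assumptions for illustration and are not taken from the experiments above.

```python
import random


def stochastic_restore(student, source, p, rng):
    """Reset each student weight to its source value with probability p.

    Element-wise: w <- m * w_source + (1 - m) * w, with m ~ Bernoulli(p).
    Modifies `student` in place and returns the number of restored weights.
    """
    restored = 0
    for name, values in student.items():
        for i in range(len(values)):
            if rng.random() < p:
                values[i] = source[name][i]
                restored += 1
    return restored


rng = random.Random(0)
source = {"conv.weight": [0.0] * 1000}
student = {"conv.weight": [1.0] * 1000}  # pretend adaptation moved every weight
n = stochastic_restore(student, source, p=0.01, rng=rng)  # p is an assumed value
```

Because only a small random fraction of the weights is restored at each step, most of the adapted knowledge is kept while the model is repeatedly pulled back toward the source solution.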

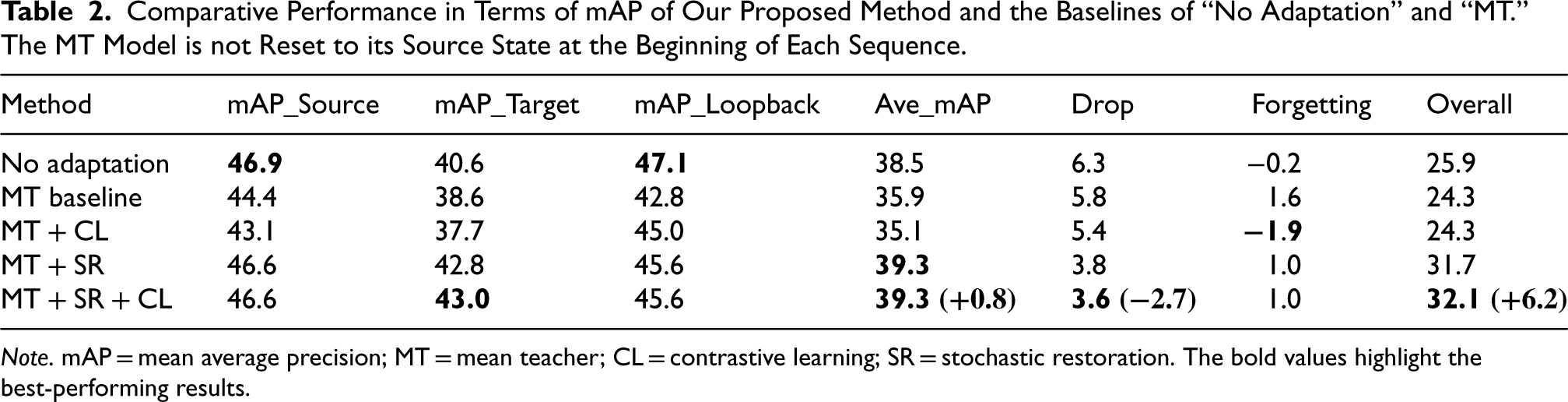

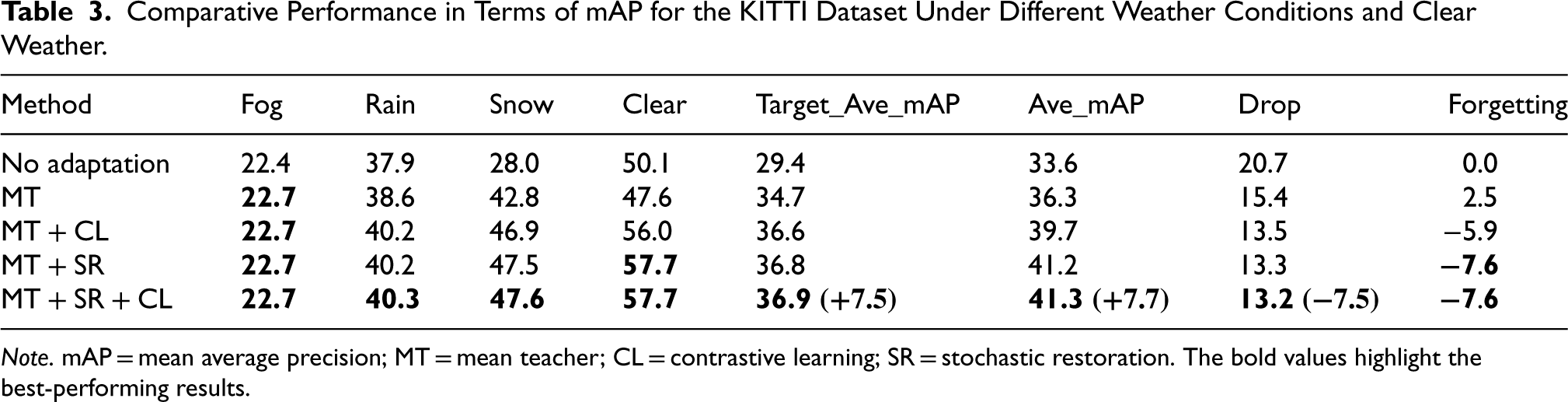

In Table 3, we present experimental results for the KITTI dataset under the continual setting mentioned above, including fog, rain, snow, and clear weather conditions. For the “no adaptation” baseline, “Forgetting” is 0.0 because the model is frozen. Therefore, the performance on the same images from the source domain, which are used as the loopback domain, remains exactly the same. The MT method shows noticeable improvements across all domain shifts except for the last one, suggesting that some knowledge from the source domain has been lost, as indicated by the “Forgetting” value, which increases to 2.5.
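
The reasoning behind the zero “Forgetting” of the frozen model can be illustrated with a small sketch. It assumes that “Forgetting” is the difference between the mAP on the source images before adaptation and the mAP on the same images when they are revisited as the loopback domain; the definition given with the evaluation metrics takes precedence, and the numbers below are hypothetical, not values from Table 3.

```python
def forgetting(source_map, loopback_map):
    """mAP lost on the source images after the model has adapted (assumed definition)."""
    return source_map - loopback_map


# Hypothetical numbers, for illustration only.
source_map = 50.0
frozen_loopback = source_map  # frozen weights give identical predictions
adapted_loopback = 46.0       # an adapted model may score lower on the same images

frozen_forgetting = forgetting(source_map, frozen_loopback)
adapted_forgetting = forgetting(source_map, adapted_loopback)
```

Under this definition a frozen model always yields zero, while any loss of source knowledge during adaptation shows up as a positive value.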

Comparative Performance in Terms of mAP for the KITTI Dataset Under Different Weather Conditions and Clear Weather.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

When SR and CL are combined with MT, significant improvements are observed. The Target_Ave_mAP increases to 36.9, showing an improvement of +7.5 over the “no adaptation” baseline, and the Ave_mAP rises to 41.3, an improvement of +7.7. The “Drop” is reduced by

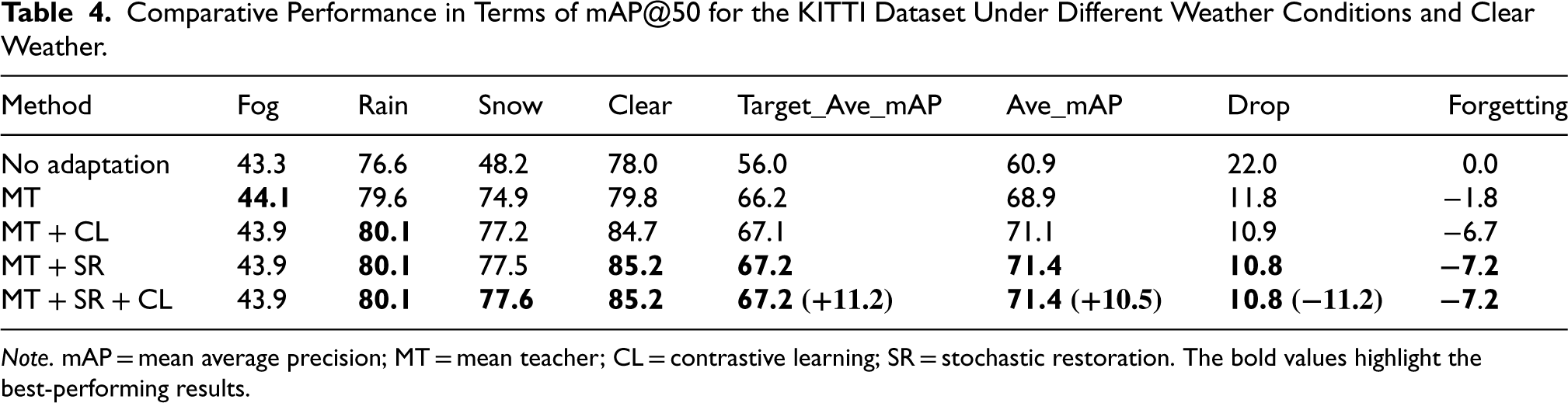

In Table 4, the mAP@50 results are shown for the KITTI dataset. The MT method shows improvements, particularly for the “Snow” domain, with no evidence of forgetting. This suggests that although localization accuracy, as indicated by the mAP results, is slightly reduced, the model remains capable of detecting objects in the source domain with similar effectiveness as before.

Comparative Performance in Terms of mAP@50 for the KITTI Dataset Under Different Weather Conditions and Clear Weather.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

MT with SR and CL leads to further improvements. The Target_Ave_mAP increases to 67.2, a +11.2 improvement over the “no adaptation” baseline, and the Ave_mAP increases to 71.4, showing a +10.5 improvement. The “Drop” is reduced by 11.2, and “Forgetting” improves to

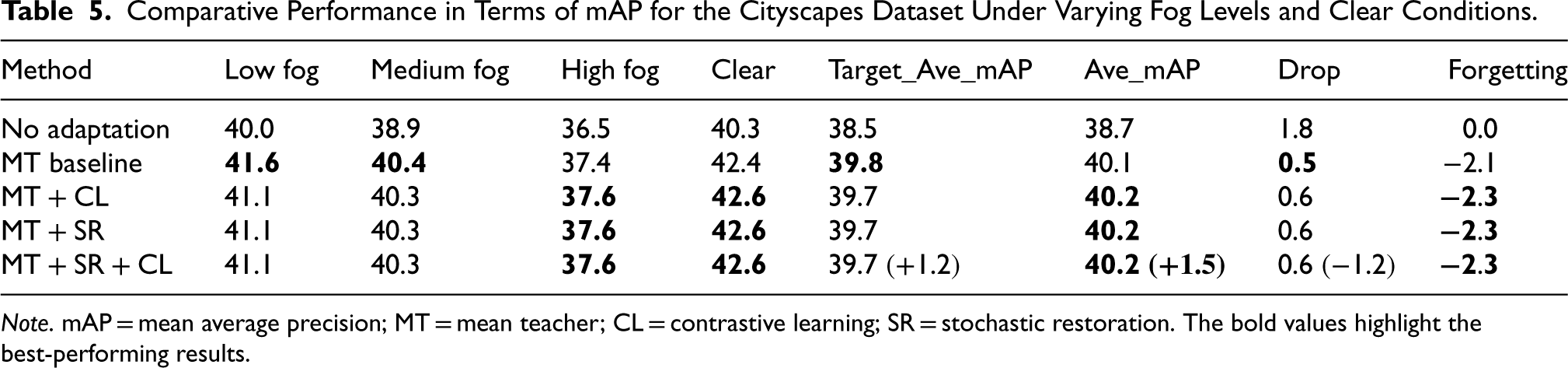

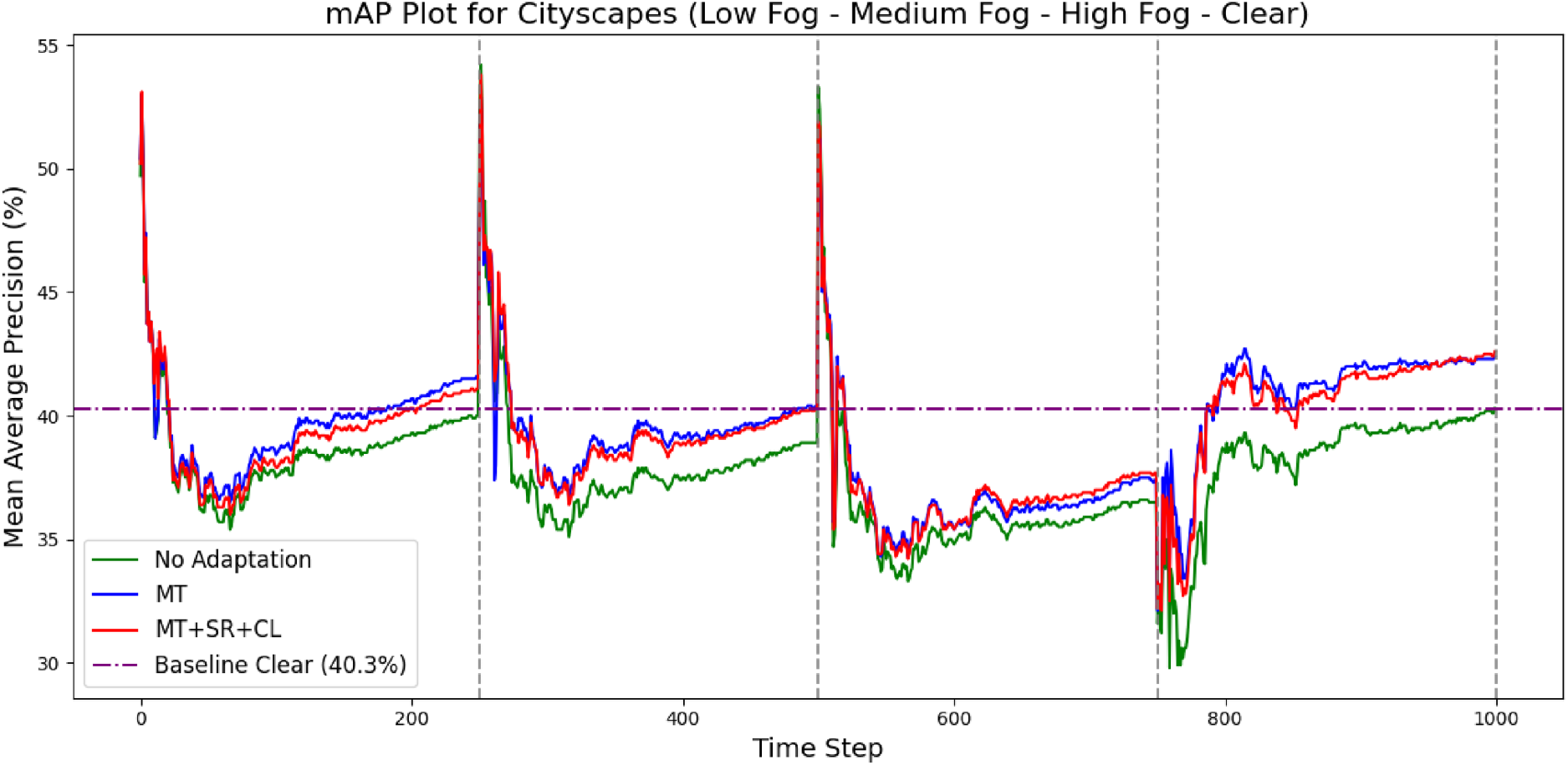

In Table 5, we present experimental results for the Cityscapes dataset under varying fog levels and clear conditions. The MT method shows improvements, particularly for the low and medium fog conditions, with minimal evidence of forgetting. Although the performance slightly decreases for the high fog condition, the model remains effective in detecting objects.

Comparative Performance in Terms of mAP for the Cityscapes Dataset Under Varying Fog Levels and Clear Conditions.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

When SR and CL are combined with MT, the Target_Ave_mAP is 39.7, a +1.2 improvement over the “no adaptation” baseline, but there is a small decrease,

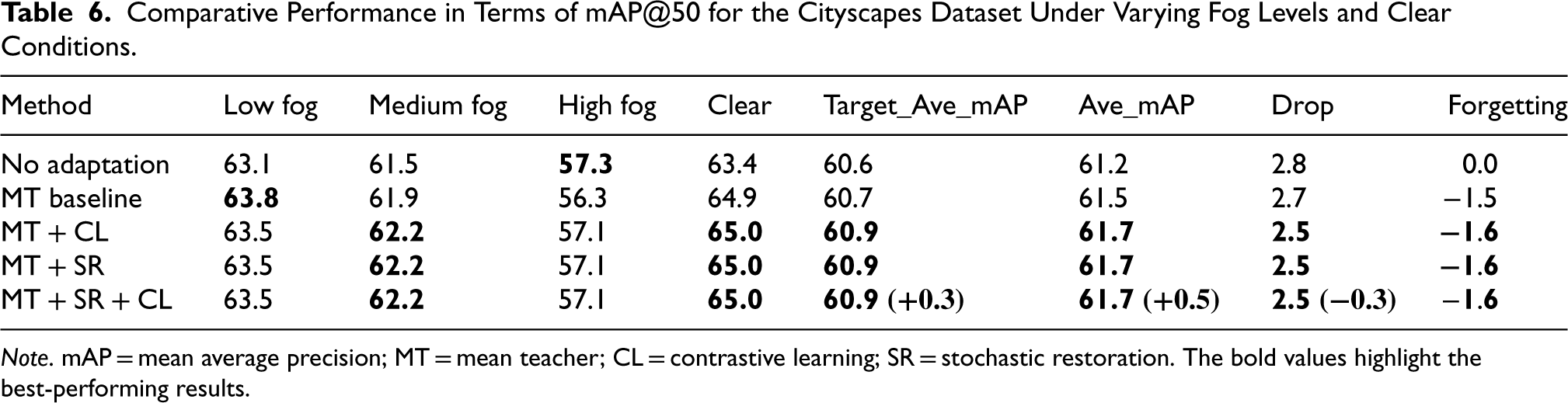

Table 6 presents the results in terms of the mAP@50 metric. More subtle performance differences are observed across the various methods. The proposed technique continues to demonstrate consistent performance gains in terms of adaptation and stability, as evidenced by slight improvements in Target_Ave_mAP and Ave_mAP metrics and reductions in “Drop” and “Forgetting.”

Comparative Performance in Terms of mAP@50 for the Cityscapes Dataset Under Varying Fog Levels and Clear Conditions.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

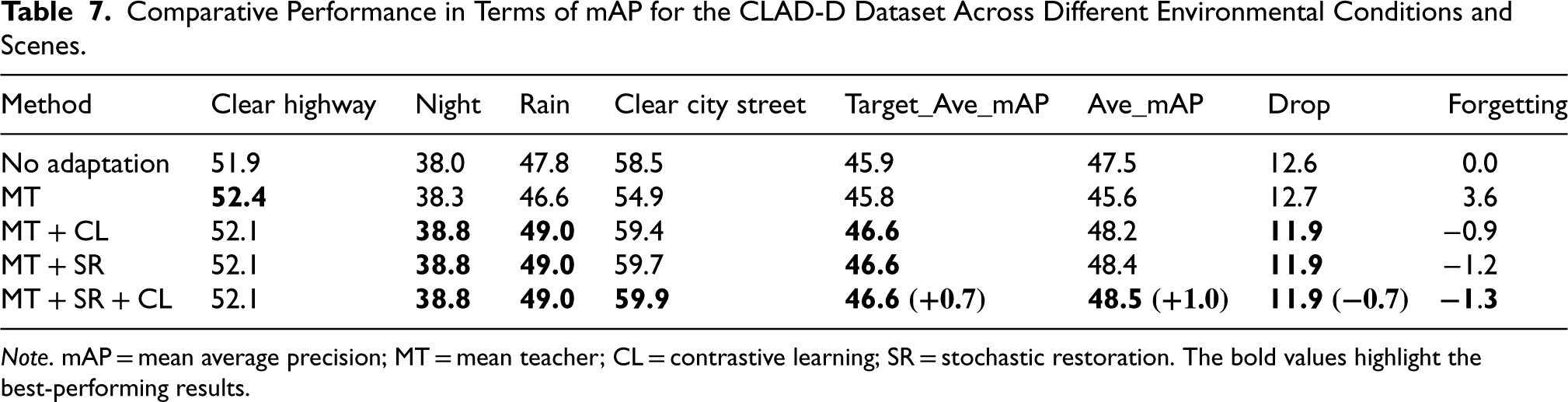

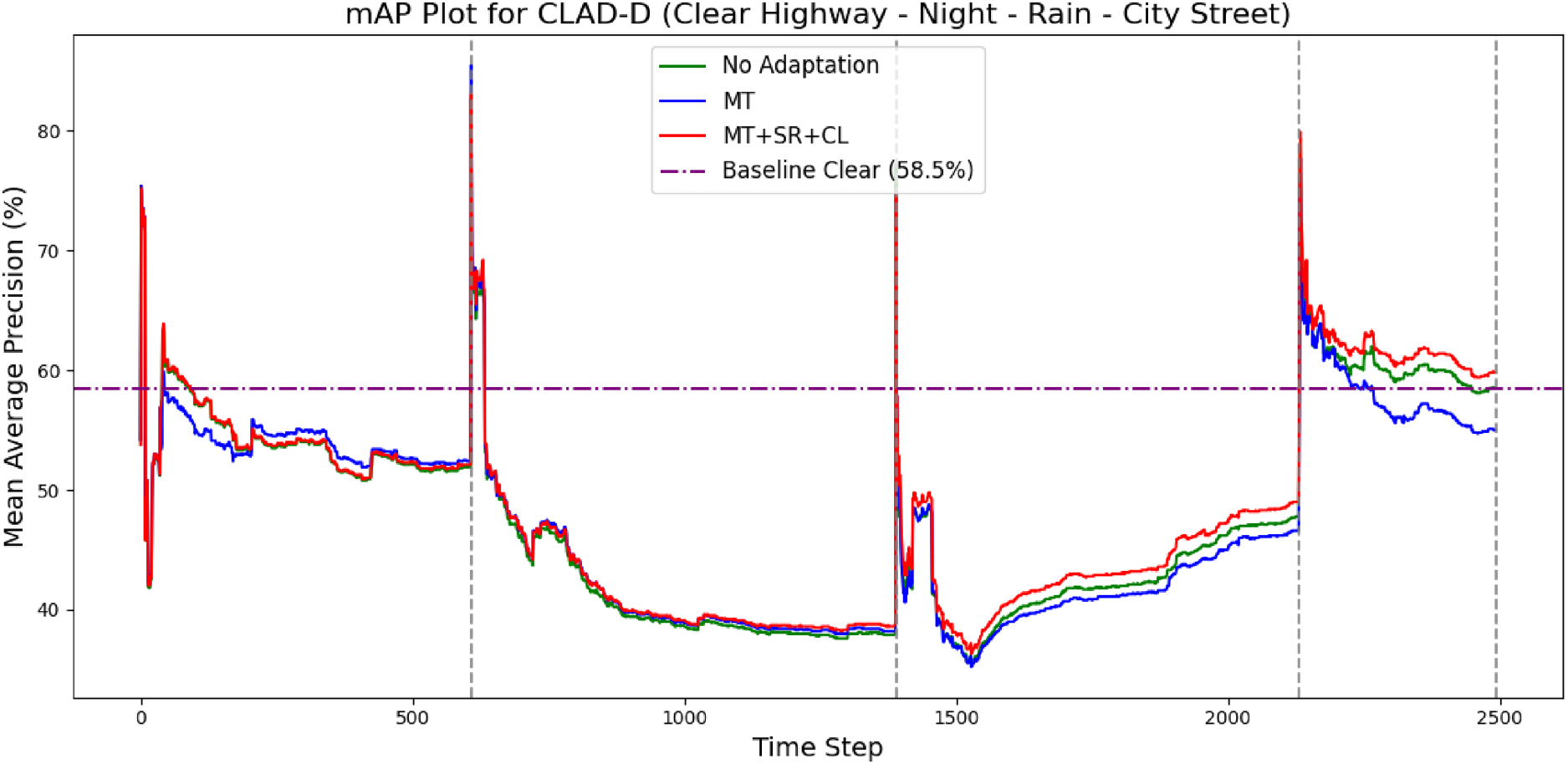

In Table 7, we present experimental results for the CLAD-D dataset under various environmental conditions and scenes. The MT method demonstrates slight improvements over “no adaptation,” especially for the clear highway and night conditions, although some performance degradation is observed for Rain and Clear City Street. There is a noticeable amount of forgetting, as indicated by the forgetting score of 3.6. However, when SR and CL are combined with MT, the Target_Ave_mAP increases to 46.6, a +0.7 improvement over the “no adaptation” baseline, and the Ave_mAP increases by +1.0 to 48.5. Additionally, the “Drop” is reduced by 0.7, and “Forgetting” improves significantly to

Comparative Performance in Terms of mAP for the CLAD-D Dataset Across Different Environmental Conditions and Scenes.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

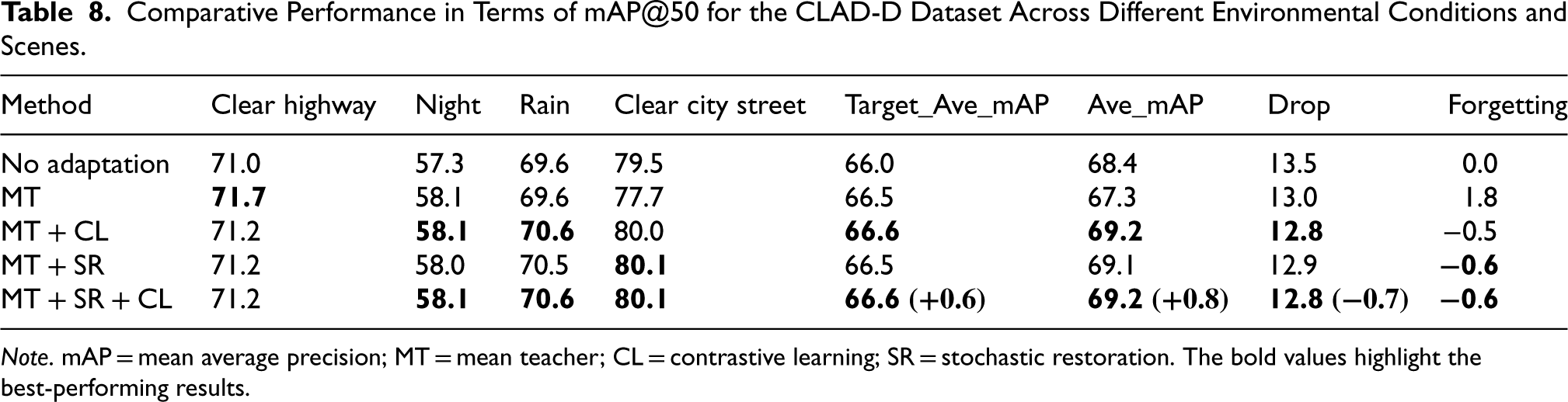

Table 8 presents experimental results in terms of mAP@50. As with Table 7, the MT, SR, and CL combination outperforms both the “no adaptation” and MT baselines, yielding improvements across evaluation metrics and reinforcing the robustness of the proposed approach.

Comparative Performance in Terms of mAP@50 for the CLAD-D Dataset Across Different Environmental Conditions and Scenes.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

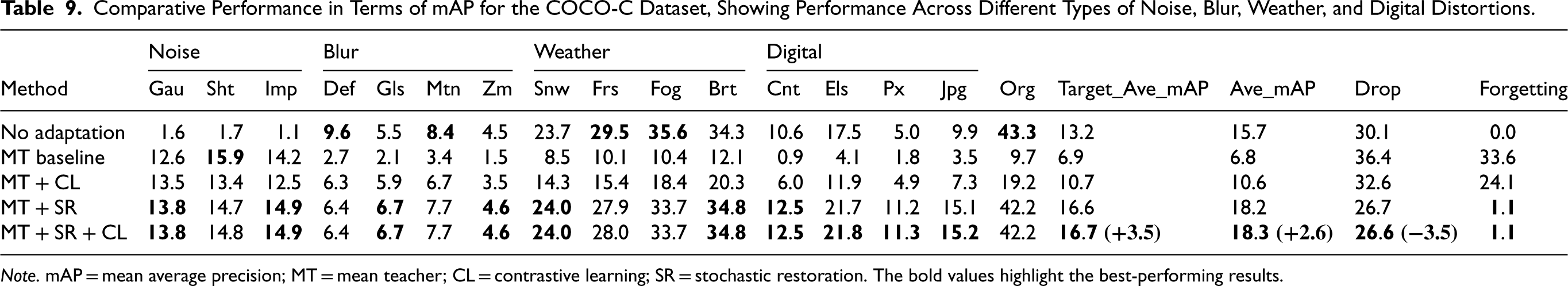

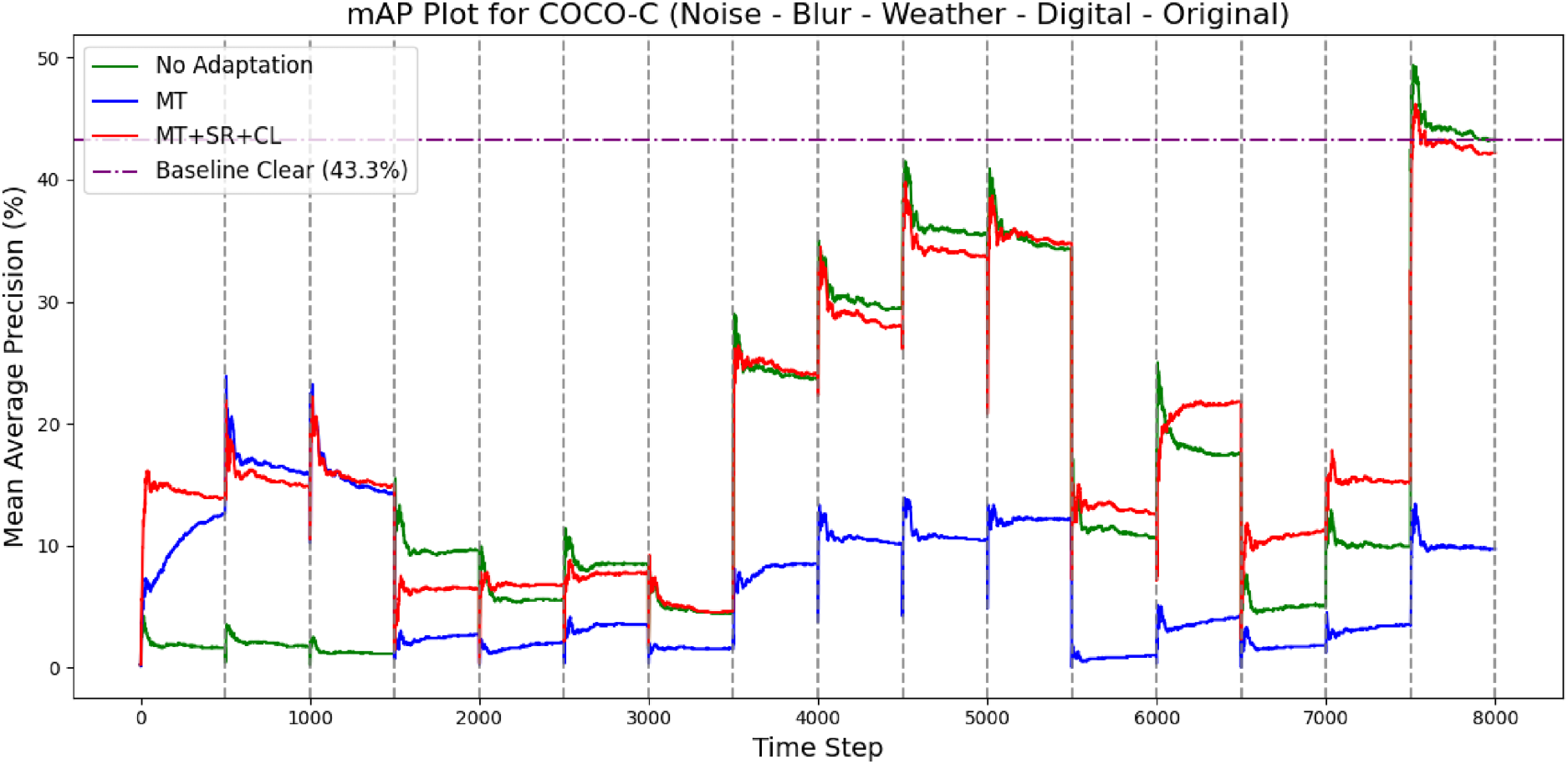

In Table 9, we present experimental results for the COCO-C dataset, showcasing performance across various noise, blur, weather, and digital corruptions. The “no adaptation” baseline shows limited performance across all conditions, with values ranging from 1.1 to 35.6, reflecting the difficulty the frozen source model has in generalizing to the synthetic distortions. The “MT baseline” improves on “no adaptation,” particularly in the “Noise” and “Blur” categories. However, significant degradation is observed in the “Org” domain, which contains the original COCO images, and both Target_Ave_mAP and Ave_mAP decline noticeably. The “Drop” metric increases, suggesting that the model struggles to adapt to the new challenging domains, and the amount of forgetting is substantial, reaching 33.6, which highlights the model’s difficulty in retaining previously learned knowledge.

Comparative Performance in Terms of mAP for the COCO-C Dataset, Showing Performance Across Different Types of Noise, Blur, Weather, and Digital Distortions.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

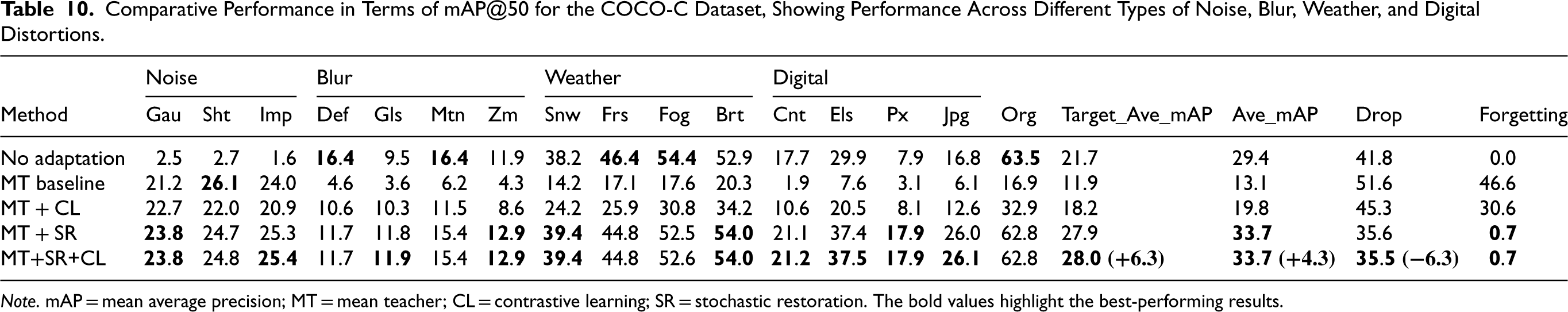

Comparative Performance in Terms of mAP@50 for the COCO-C Dataset, Showing Performance Across Different Types of Noise, Blur, Weather, and Digital Distortions.

Note. mAP = mean average precision; MT = mean teacher; CL = contrastive learning; SR = stochastic restoration. The bold values highlight the best-performing results.

Our approach significantly improves performance across most categories. For example, Target_Ave_mAP reaches 16.7, showing a +3.5 improvement, while Ave_mAP increases to 18.3, a +2.6 improvement. The “Drop” decreases to 26.6, and “Forgetting” is minimized to 1.1, indicating enhanced adaptation. The method outperforms both “no adaptation” and “MT baseline,” demonstrating its effectiveness in handling the various consecutive distortions. Although the “Drop” remains high due to the challenging nature of the setting, our method demonstrates robustness for long-term adaptation across multiple and diverse domain shifts.

In Table 10, the experimental results in terms of mAP@50 reinforce the robustness of our method and support the findings from the previous experiments. The method performs particularly well in noise and digital distortions, compared to the other approaches. Target_Ave_mAP reaches 28.0, a +6.3 improvement, and Ave_mAP equals 33.7, showing a +4.3 increase. “Drop” decreases to 35.5, reflecting a

Overall, our experimental results demonstrate that the proposed MT + SR + CL method consistently outperforms the MT approach and generally exceeds the performance of each individual component (MT + CL and MT + SR). In Table 4, the performance of MT + SR + CL is almost identical to that of MT + SR, while in Table 8, the results of MT + SR + CL are nearly equal to those of MT + CL. These observations indicate that the combined method performs at least on par with the better individual component in every case, eliminating the need to decide which individual components to use for each scenario. In the Cityscapes dataset (Tables 5 and 6), the improvement over individual components is imperceptible, possibly because the domain shift is not strong. Only the fog level changes, so the domain difference is limited, allowing even the “no adaptation” baseline to achieve strong performance.

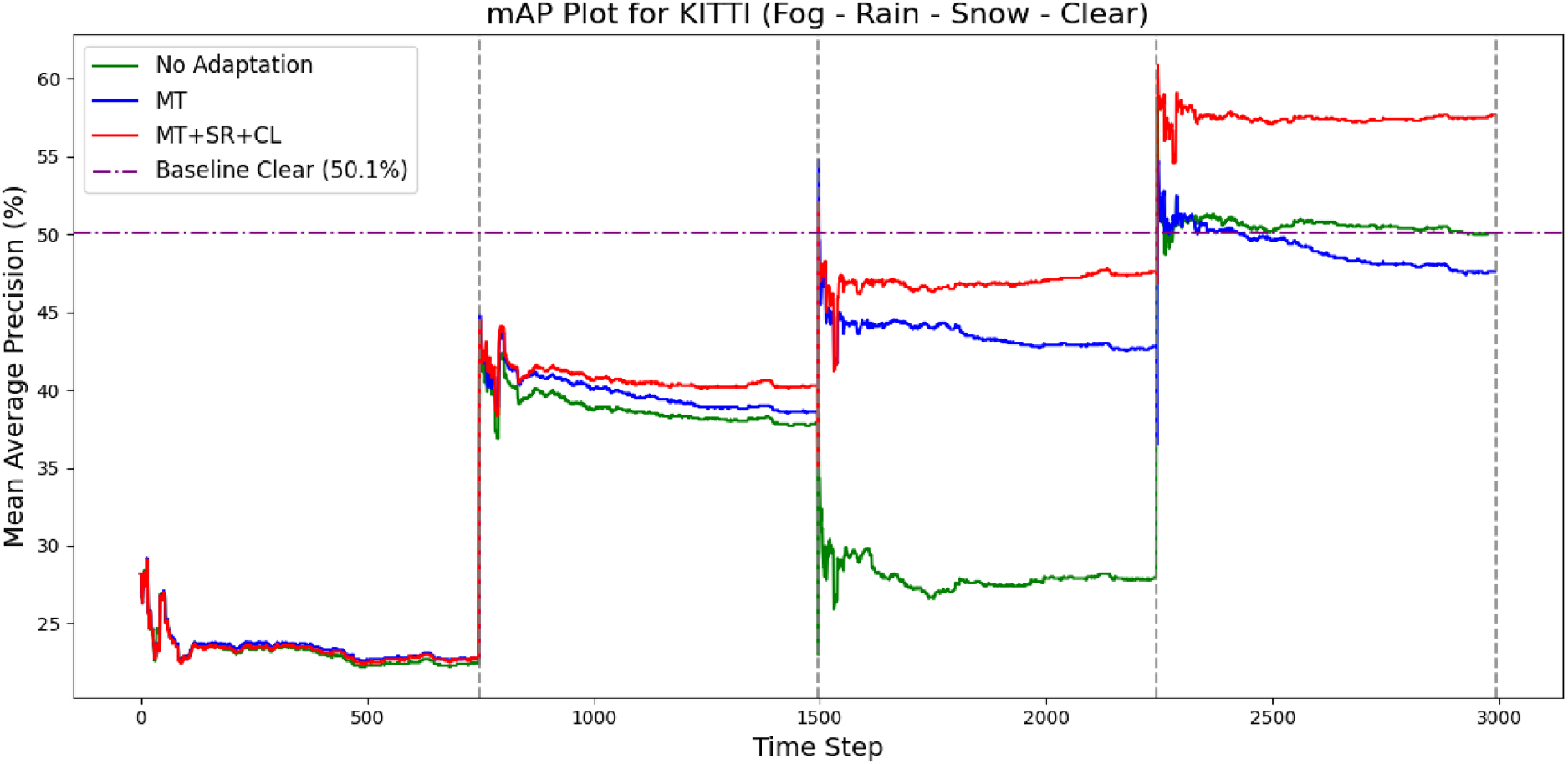

Figures 3 to 6 illustrate the evolution of mAP during the adaptation process across four datasets and provide valuable insight into how our proposed method compares to existing techniques. The plots show that the mAP of the “no adaptation” baseline drops when a domain shift occurs. For extreme domain shifts, such as the snow domain in the KITTI dataset, the model’s performance degrades completely. This severe drop underscores the challenge posed by such domain shifts, where the model fails to generalize effectively, and the need for adaptation methods to maintain or improve performance when they occur.

Evolution of Mean Average Precision (mAP) During Adaptation for the KITTI Dataset.

Evolution of Mean Average Precision (mAP) During Adaptation for the Cityscapes Dataset.

Evolution of Mean Average Precision (mAP) During Adaptation for the CLAD-D Dataset.

Evolution of Mean Average Precision (mAP) During Adaptation for the COCO-C Dataset.

In contrast, the MT approach demonstrates a more robust performance, indicating that adaptation helps the model recover from these extreme shifts. However, in datasets such as KITTI, CLAD-D, and COCO-C, the performance on the source domain after adaptation is significantly lower due to catastrophic forgetting. Additionally, in the COCO-C dataset, long-term adaptation leads to further deterioration of the model’s performance after some domain shifts, where the model’s mAP declines to a point where it performs worse than the “no adaptation” baseline. This suggests that while adaptation can improve resilience to domain shifts, it is crucial to balance the adaptation process to avoid catastrophic forgetting and ensure stable, long-term performance across different domains.

Our proposed technique achieves improved performance under domain shifts and minimizes catastrophic forgetting, resulting in even better performance on the source domain after adaptation. Furthermore, it is well-suited for long-term adaptation, as demonstrated by the performance improvement on the COCO-C dataset. This highlights the effectiveness of our approach in maintaining model robustness and adaptability over time, even in continually changing environments.

In this study, we focus on the continual TTA setting, in which the target domain is non-stationary and involves a sequence of domain shifts. We use the MT framework for object detection and integrate it with the SR technique. Furthermore, we seek to boost its effectiveness through object-level CL. Our proposed approach demonstrates improved performance over the MT approach on several standard datasets. Additionally, our method is agnostic to the domain shifts that may occur during inference and has proved to be robust in long-term adaptation. This can be particularly valuable in real-world applications, where conditions often change over time.

Research in this field is relatively recent, and there are currently no widely accepted benchmarks for evaluating performance. The development of specialized datasets, performance metrics, and baselines is essential. CL stands out as an intriguing future direction that can be combined with self-supervision to address this challenging scenario. Future research may focus on enhancing the synergy between CL and the SR technique, potentially by restoring only a subset of the network parameters. Given that SR significantly increases computational complexity, exploring more efficient mechanisms, such as restoring parameters only when significant domain shifts are detected, could be beneficial.
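
One way such a gated mechanism might look is sketched below. This is purely illustrative and does not reproduce the detection technique of Chakrabarty et al. (2023): the shift score is assumed to be the deviation of a scalar batch statistic from its exponential running mean, and `ShiftMonitor`, `gated_restore`, the threshold, the momentum, the restoration probability, and the statistics fed to the monitor are all hypothetical.

```python
import random


class ShiftMonitor:
    """Tracks an exponential running mean of a scalar batch statistic."""

    def __init__(self, momentum=0.9):
        self.momentum = momentum
        self.running = None

    def update(self, stat):
        """Return |stat - running mean|, then fold stat into the mean."""
        if self.running is None:
            self.running = stat
            return 0.0
        score = abs(stat - self.running)
        self.running = self.momentum * self.running + (1.0 - self.momentum) * stat
        return score


def gated_restore(student, source, score, threshold, p, rng):
    """Stochastically restore weights only if score exceeds threshold."""
    if score <= threshold:
        return 0  # no shift detected: skip the restoration pass entirely
    restored = 0
    for name, values in student.items():
        for i in range(len(values)):
            if rng.random() < p:
                values[i] = source[name][i]
                restored += 1
    return restored


monitor = ShiftMonitor()
rng = random.Random(0)
source = {"w": [0.0] * 100}
student = {"w": [1.0] * 100}
counts = []
for stat in [0.50, 0.52, 0.49, 0.90]:  # hypothetical statistics; the last one jumps
    score = monitor.update(stat)
    counts.append(gated_restore(student, source, score, threshold=0.2, p=0.5, rng=rng))
```

In this sketch the per-weight sampling cost is paid only on steps where the monitored statistic departs from its recent history, which is the kind of saving the paragraph above has in mind.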

Advancements in continual TTA will enable the development of adaptive models for dynamically evolving domains, reducing the cost and time required to train new models for each domain. Experimental results are promising, indicating that adaptation during inference can enhance the performance and flexibility of deep learning algorithms.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Competing Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.