Abstract

In a facility location problem, we seek to locate a set of facilities in an area, where demand points (clients) may be present, so that some criterion is optimized. A well-known facility location problem is the p-median problem, where we seek to minimize the sum of distances between demand points and their nearest facility. We consider a variant of the p-median problem where distance constraints exist between facilities and between facilities and demand points. This problem can be used to model the requirements that arise when locating semi-obnoxious facilities. We first introduce integer linear programming and constraint programming (CP) models and implement them in Gurobi and OR-Tools, respectively. Then, we describe a greedy heuristic that can be used to prune branches during a search within an incomplete CP solver. We also introduce a number of domain-specific value ordering heuristics that can be applied within such a solver. The developed method is evaluated under different problem generation models, and compared to Gurobi and OR-Tools. The results demonstrate that our method is more robust than the two complete solvers, significantly outperforming either of them in certain classes of problems, both in terms of run times and the quality of the solutions found.

Introduction

Facility location problems are widely studied in operations research (OR), artificial intelligence (AI), computational geometry, and other disciplines. In such problems, we seek to locate a set of facilities in an area, where demand points (clients) that will be serviced by the facilities may be present, so that some criterion is optimized. The optimization criterion largely depends on the types of facilities to be located. When the facilities have beneficial properties (e.g., pharmacies), we typically want to locate them close to clients. In contrast, when the facilities are (ob)noxious, that is, they have hazardous effects (e.g., dump sites), we seek to locate them far from clients and/or each other. In between, we have the class of semi-obnoxious facilities that have both desirable and undesirable properties.

Prime examples of the first two categories are the p-median and p-dispersion problems, respectively. In the former, we seek to locate p facilities in an area where demand points are present, so that the sum of distances between demand points and their nearest facility is minimized. Hence, we have a minsum objective. In the latter, we seek to locate p facilities so that the closest distance between any two facilities is maximized. Hence, in this case, we have a maxmin objective. Apart from the difference in the type of objective function, another crucial difference between the two problems is that in p-dispersion there are no clients to be serviced by the facilities.

Here, we are concerned with a variant of the p-median problem where we again seek to minimize the sum of the distances between the clients and their closest facility, but in this case, we also have hard distance constraints between facilities and also between facilities and clients. These constraints impose lower bounds on the distance between the locations of any two facilities and also between any facility and any client. In the rest of the paper, we call this problem p-median with distance constraints (pMD). Although this problem was proposed as far back as 1984 (Moon & Chaudhry, 1984), and despite its usefulness in modeling the location of semi-obnoxious facilities (Krarup et al., 2002), it has received little attention. We consider the discrete uncapacitated case where the potential location points for the facilities are given, the facilities are heterogeneous (i.e., they have varying properties), and after the location has been completed, demand points are serviced by the facility that is located closest to them.

As an example of a pMD, consider the problem of locating a set of filling (gas) stations in an area. Filling stations are typical semi-obnoxious facilities. For their convenience, clients wish to have them placed at a close distance, but not too close, given the danger involved in case of an accident, as well as for other reasons (e.g., traffic and pollution). In addition, there are safety regulations that must be taken into account when opening filling stations. Among other constraints, such regulations impose minimum safety distances between the stations in an area and also between stations and clients. Another property that facilities such as filling stations have is that they are often heterogeneous. That is, they can have varying characteristics. In the case of filling stations such characteristics may be the overall size, tank capacity, types of fuel on offer (gasoline, diesel, natural gas, etc.), number of available fuel dispensers, etc. Such heterogeneous characteristics imply that the minimum allowed distances may not be uniform (e.g., large stations must be placed further away than smaller ones).

Location problems with distance constraints have received relatively little attention within the vast literature on location problems (Berman & Huang, 2008; Chaudhry et al., 1986; Comley, 1995; Moon & Chaudhry, 1984; Moon & Papayanopoulos, 1991; Tansel et al., 1982). Very recently, p-dispersion with distance constraints was studied, comparing integer linear programming (ILP) to exact and heuristic constraint programming (CP) approaches (Iosif et al., 2024b; Ploskas et al., 2023).

We start by giving an ILP formulation for the pMD, based on the ReVelle & Swain formulation for the p-median problem (ReVelle & Swain, 1970), extending it to cover the case of heterogeneous facilities and adding distance constraints. Then, we describe a CP model that can capture the pMD as a constraint satisfaction/optimization problem in a natural way. The CP (respectively, ILP) model has been implemented in the CP-SAT OR-Tools (respectively, Gurobi) solver. These are state-of-the-art solvers for CP and ILP, respectively. Experimental results reveal that these solvers struggle with large, hard instances of the pMD, often failing to find a feasible solution within 1 h of central processing unit (CPU) time. In addition, such large instances have extensive, and sometimes prohibitive, memory requirements due to the large sizes of the models. These problems highlight the need for heuristic and lightweight approaches that may sacrifice completeness to efficiently handle the challenges posed by large, hard problems.

Hence, we investigate the applicability of a CP-based heuristic method in pMDs, similar to the one proposed in Ploskas et al. (2023) for p-dispersion problems with distance constraints. This method employs a CP solver that incorporates a heuristic pruning technique, which tries to prune the search tree by reasoning, at any node, about the best cost that can be achieved. Specifically, after the first solution has been found, a greedy heuristic is run at each visited node to estimate the best cost that can be achieved if the subtree rooted at that node is explored. If this estimated cost is not better than the cost of the best solution found so far, then the current branch is cut off.

We also introduce four specialized value ordering heuristics that try to intelligently guide search by selecting appropriate sites to place the facilities using increasingly more complex information. The first two heuristics only exploit local information about the facility that we currently try to assign to a site. The third heuristic takes into account information about past assignments when making the decision, while the fourth heuristic, in addition, reasons about the possible future assignments.

We experimented with pMD problems of three types. In the first two, the clients and the potential facility sites are randomly placed in a grid, while in the third, we use the p-median benchmark instances (Beasley, 1985) as a basis to generate pMDs. Problems of the first two types, which typically have few solutions, can be very hard for Gurobi, as it often does not discover any solution within 1 h of CPU time, but mostly manageable by OR-Tools, except for the larger ones. In contrast, OR-Tools fares badly on problems of the third type, which typically has many solutions, while Gurobi finds most of these problems quite easy.

The heuristic CP solver’s performance is more stable across problem classes with different characteristics compared to standard ILP and CP solvers, despite not being the best in all classes. Specifically, it is by far more efficient and effective than Gurobi in grid-structured problems, and it also often outperforms OR-Tools, both in terms of run times and solution quality, being able to handle large instances of these types that are out of reach for the complete solvers. On the other hand, it is outperformed by Gurobi on most p-median-based instances (though it is quite efficient in some cases), but it is much more efficient than OR-Tools. In addition, experiments with the four specialized value ordering heuristics demonstrated that through the use of these heuristics, and especially the more informed ones, we can often obtain improvements not only in solution quality, but also in run times.

Finally, as our heuristic pruning method is often quite aggressive, meaning that it can cut off many branches and reduce the size of the search space significantly, it may also result in the omission of optimal and near-optimal solutions. To partially alleviate this problem, we investigate the application of the heuristic together with a sampling technique that calculates the best estimation over different orderings for the future variables (i.e., unassigned facilities). Experimental results show that through this technique, we find better solutions, albeit by paying a run-time penalty.

This paper is an extended version of a conference paper by Iosif et al. (2024a), where the p-median problem with distance constraints was studied. Here, we extend that paper in the following ways:

We describe our approach in more detail. We provide more extensive experimental results, offering deeper insights into the details and the performance of the CP heuristic solver. We give additional experimental results to assess the impact of the sampling technique proposed in Iosif et al. (2024a). And most importantly, we introduce four specialized value-ordering heuristics for the pMD and evaluate their effectiveness within the CP heuristic solver framework.

The remainder of this paper is structured as follows: In Section 2, we discuss related work. In Section 3, we give the necessary background on CP and ILP, and we formally define the pMD problem. In Sections 4 and 5, we present the ILP and CP formulations for pMDs, respectively. Section 6 details the proposed heuristic methodology, while Section 7 presents experimental results. Finally, Section 8 concludes the paper with a discussion and potential directions for future work.

Related Work

In the past few decades, a vast literature encompassing different models, problem characteristics, applications, algorithms, and heuristics for the various facility location problems, has been accumulated. The p-median problem is a prime example of a problem with “pull” objectives, where clients wish to have the facilities (e.g., pharmacies and stores) located close to them, whereas the p-dispersion problem is an example of a problem with “push” objectives, where we seek to place the facilities away from each other. In between we have the class of semi-obnoxious location problems where the facilities have both desirable and undesirable properties (Krarup et al., 2002). In this case, clients wish to have facilities located close to them, but not too close. For example, the residents of a suburb wish to have bars situated near them, but not too close because of the noise and traffic associated with such facilities.

The semi-obnoxious case can be viewed as a biobjective optimization problem. For instance, we might wish to minimize the total service cost and at the same time maximize the minimum distance between any facility and a client. Several strategies to handle such problems have been studied (Krarup et al., 2002). The problem can be treated directly as biobjective and we may try to find the Pareto set, which is anything but easy for location problems with multiple facilities (Ortigosa et al., 2015; Tutunchi & Fathi, 2019; Yapicioglu et al., 2007). As an alternative, a model with a single criterion can be derived, where the objective function is a weighted combination of both the pull and the push objective. Another option is to place a bound on the obnoxious effects, as a set of constraints, and hence to view the problem as having a single objective (Carrizosa & Plastria, 1999). This is the approach that we follow in this paper.

The p-median problem, which is

Apart from the standard ILP approach, other methods, such as Lagrangian relaxation (Beltran et al., 2006) and numerous other heuristics, have been proposed for the p-median problem. The greedy or myopic heuristic first solves the 1-median problem by locating one facility in such a way as to minimize the total cost for all demand nodes. Facilities are then added one by one in the same way, that is, trying to minimize the total cost at each step, until all p facilities have been located (Kuehn & Hamburger, 1963). A survey of heuristic and meta-heuristic methods for the p-median problem can be found in Mladenović et al. (2007).

Distance Constraints

There are several early works that considered maximum distance constraints between the demand nodes and the facility locations (Church & Meadows, 1977; Chvatal, 1979; Khumawala, 1973; Tansel et al., 1982). Tansel et al. (1982) were the first to include distance constraints in a facility location problem. Specifically, they studied the distance-constrained p-center problem. The distance constraints that they consider are between the demand points and the centers. They study the case where the network is a tree and they also provide the dual of this problem. They give polynomially bounded procedures for solving both problems.

Moon and Chaudhry (1984) were the first to systematically study location problems with distance constraints. They provided a thorough categorization of such problems according to several factors, such as the optimization criterion, the type of distance constraint (> or <), and whether constraints exist between facilities, or between facilities and clients, or between both. In the terminology they introduced, the pMD is called Median

Chaudhry et al. (1986) proposed heuristics for selecting a maximum-weight set of locations such that no two are closer than a given distance from each other. Moon and Papayanopoulos (1991) considered the problem of locating two facilities so as to minimize the maximum of combined Euclidean distances to unweighted existing points when the facilities must be separated by at least a specified distance. An interactive graphical method that produces near-optimal solutions was proposed. Comley (1995) studied the problem of locating a small number (up to three) of heterogeneous semi-obnoxious facilities that interact with each other as well as with other existing facilities. The minimum Euclidean distance between any pair of such facilities was maximized using a quadratic 0–1 programming algorithm.

Berman and Huang (2008) studied the problem of locating homogeneous obnoxious facilities on a network so as to minimize the total demand covered, subject to the condition that no two facilities are allowed to be closer than a prespecified distance. Drezner et al. (2018) proposed the Weber obnoxious facility location problem where we seek to locate one facility so that the weighted sum of distances between the facility and demand points is minimized, with the additional requirement that the facility location is at least a given distance from demand points because it is obnoxious to them. Drezner et al. (2019) considered a continuous multiple obnoxious facility location problem where a given number of facilities must be located in a convex polygon with the objective of maximizing the minimum distance between facilities and a given set of communities subject to distance constraints between facilities.

Welch and Salhi (1997) studied the location of obnoxious facilities with interactions between them. Location problems with distance constraints that restrict the placement of facilities near certain demand points have also been studied (e.g., Brimberg & Juel, 1998; Maier & Hamacher, 2019; Orloff, 1977).

Although CP has been successfully applied to many combinatorial problems, there are very few works investigating CP-related methods for facility location problems (Cambazard et al., 2012; Fazel-Zarandi & Beck, 2009; Ploskas et al., 2023; Sorkhabi et al., 2018). But very recently, ILP and CP models, as well as a CP-based heuristic method, were proposed for the p-dispersion problem with distance constraints (Iosif et al., 2024b; Ploskas et al., 2023).

Background and Problem Definition

We first give some necessary background on CP and ILP and then we formally define the pMD problem.

Constraint Programming (CP)

CP is a paradigm for solving combinatorial problems that integrates a wide range of techniques, mainly from AI and OR. In CP, the user states the constraints on the feasible solutions for a set of decision variables, and a solver is then used to deliver a feasible solution, or the optimal one according to some criterion. In a constraint satisfaction problem (CSP)

A set of A set A set

In a binary CSP, any constraint involves at most two variables. If in addition to the hard constraints, there is an objective function to be optimized then we refer to the problem as a constraint satisfaction and optimization problem (CSOP). In many cases, in addition to the decision variables, the model of a CSP, or a CSOP, may include extra (auxiliary) variables that are useful for modeling purposes. Similarly, a model may include redundant constraints, that is, constraints whose removal does not change the set of solutions.

A solution to a CSP is a complete assignment, that is, an assignment involving all variables, that satisfies all constraints in

Complete algorithms for CSPs are based on backtracking depth-first search interleaved with constraint propagation. Backtracking search tries to assign the decision variables with values from their domains so that constraints are satisfied, while constraint propagation filters values from domains that are deemed inconsistent according to some local consistency property that is applied to the constraints.

It is well known that one of the cornerstones of CP and a major reason for its success in various domains is constraint propagation (Bessiere, 2006). CP solvers typically propagate binary constraints using the local consistency property called arc consistency. For binary problems, a value

CP solvers also offer an array of global constraints that are very useful at the modeling level, as their use results in more compact models, but also speed up search as solvers typically provide fast specialized propagation algorithms for such constraints. A global constraint can capture a relation between an arbitrary number of variables. A well-known such constraint is the

Search in a CP solver is guided by variable and value ordering heuristics. After a branching decision (e.g., a variable assignment or a value removal from a domain) propagation kicks off. If this results in an empty domain, in which case we have a domain wipe-out (DWO), the search algorithm rejects the most recent branching decision and moves on to the next one. A standard general-purpose variable ordering heuristic is dom/weighted degree (wdeg) (Boussemart et al., 2004). This heuristic assigns a weight to each constraint, initially set to one. Each time a constraint causes a DWO, its weight is incremented by one. Each variable is associated with a wdeg, which is the sum of the weights over all constraints involving the variable and at least another (unassigned) variable. The dom/wdeg heuristic chooses the variable with a minimum ratio of current domain size to a wdeg.

Although value ordering heuristics have received less attention than variable ordering ones, as their effect on search effort is usually less profound in CSPs, they can be quite effective in CSOPs. The task of a value ordering heuristic is to choose a value for the current variable under consideration during a search. The guiding principle when designing value-ordering heuristics is to select the value most likely to succeed, that is, be part of a solution. There is a wide variety of such heuristics and they can be divided into static and dynamic (Rossi et al., 2006). Static heuristics produce a fixed ordering of values before the search begins, while dynamic ones determine the order as the search progresses. Static heuristics are generally computationally inexpensive, whereas dynamic heuristics guide the search using a more informed strategy, but are typically quite expensive to compute. Hence, the static lexicographic ordering of values is very commonly used. Like other types of heuristics, value ordering heuristics can be general-purpose or domain-specific. General-purpose heuristics use generic properties that CSPs share in common, while domain-specific ones incorporate expertise and deep knowledge of the problem at hand.

When faced with a CSOP, CP solvers typically add a bounding constraint when locating a solution, forcing any subsequent solution to have a better value according to the optimization function compared to the solution just found. This constraint is propagated, potentially resulting in domain pruning. If the filtering achieved is weak then the search in a CSOP degenerates to almost an exhaustive enumeration of the solutions. In contrast, if the filtering is strong then a CP solver may exhibit very good performance on a CSOP.

Integer Linear Programming (ILP)

Another paradigm for solving combinatorial problems is ILP. ILP is widely used in various fields, such as OR, to find optimal solutions where all or some (mixed-integer) of the decision variables are integers. This requirement often arises in practical applications where decisions involve discrete choices, such as assigning tasks to machines, scheduling shifts, or location problems, as in the case of this paper. ILP is a type of optimization paradigm where the objective function and the constraints are linear. ILP formulations are mathematical models, which can then be solved using specialized algorithms, such as branch and bound, cutting planes, and branch and cut.

An ILP problem is generally formulated as follows:

Binary variables are a special case of integer variables in ILP, where the variables are restricted to take values in the set

Solving ILP problems involves specialized algorithms and software tools known as solvers. These solvers are designed to handle the complexities associated with the discrete nature of ILP problems and provide optimal or near-optimal solutions efficiently.

The p-Median Problem With Distance Constraints

In a p-median problem with distance constraints (pMD), p facilities in a set of facilities

Between each pair of facilities

The Euclidean distance between two nodes

A common assumption in the literature on location problems with distance constraints is that the lower distance bound

In the following, as is common in the literature, we assume that the pairwise distances between all nodes in the network

To summarize our notation, we have:

The goal is to minimize the sum of distances between each demand point and its nearest located facility, subject to the satisfaction of all the distance constraints.

ILP Formulation

In this section, we present a binary ILP model for pMD. The model is based on the formulation of ReVelle and Swain (1970) for the p-median problem, extended to the case of heterogeneous facilities with distance constraints. Although alternative formulations for the p-median problem exist, it is accepted that ReVelle and Swain’s (1970) formulation exhibits integer-friendly properties, and a recent study by Ploskas and Stergiou (2022) demonstrated that it is the most efficient option when modeling the p-median problem with homogeneous distance constraints.

Dealing with heterogeneous facilities introduces the need for additional decision variables, to know which specific facility is located in which candidate location, whereas in ReVelle and Swain (1970), and any other p-median formulation, we only need to know if a site hosts a facility or not. The introduction of new decision variables necessitates the generalization of the constraints that forbid the location of two facilities in the same place and the location of a single facility in multiple places. Also, compared to ReVelle and Swain’s (1970) formulation, in the pMD model there exist two additional sets of constraints to capture the distance constraints existing between facilities and between facilities and clients. In the following, we make use of the following additional notation:

As we deal with heterogeneous facilities, we need

The model for pMDs can be expressed as:

The objective function 1 aims at minimizing the sum of the distances between the clients and their nearest located facility. Constraint 2 ensures that each facility site can host at most one facility, while Constraint 3 guarantees that each facility should be hosted at exactly one facility site. Constraint 4 specifies that

Constraint 7 models the distance constraints between facilities. It ensures that each facility is at a safe distance from all other facilities by not allowing two facilities

Finally, Constraint 8 is the distance constraint between facilities and demand nodes. This constraint ensures that any facility site that is opened is at a safe distance from every demand node. Instead of setting variables

The pMD is modeled as a CSOP

For each facility For each variable For each variable There is a variable The objective function is

The

We also considered adding to the model an AllDifferent constraint over all variables in

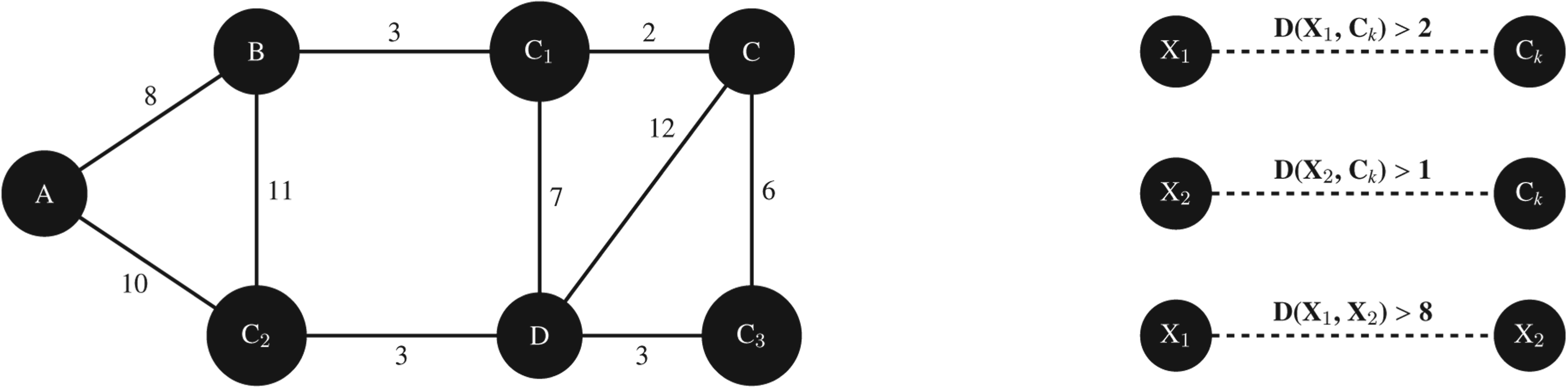

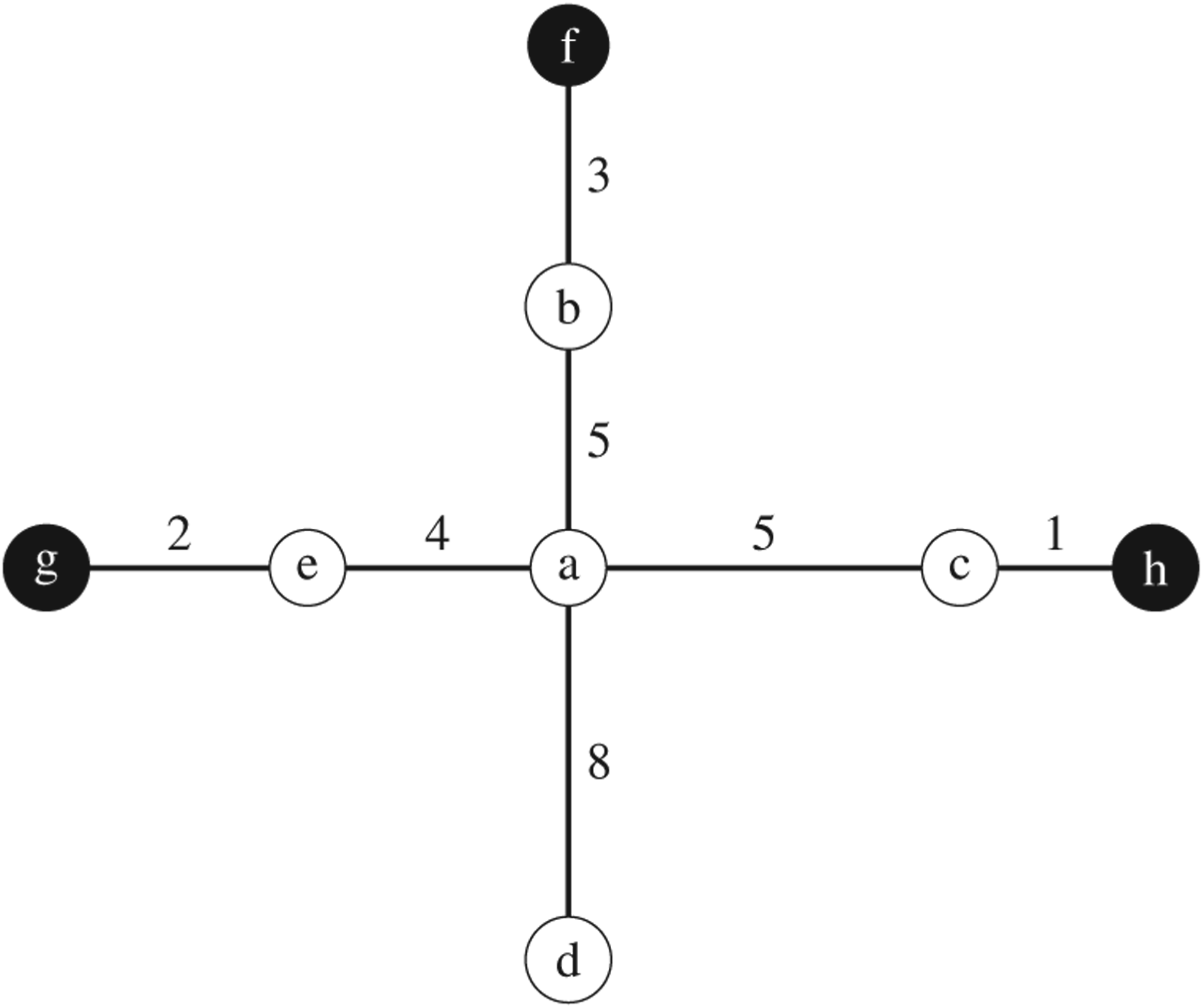

Let us now give an example of a small pMD to illustrate the CP model.

There are two facilities to be located (i.e.,

Decision variables:

Illustration of the p-median with Distance Constraints (pMD) Problem from Example 1. On the Left, an Undirected Graph of the Problem is Given. The Larger Nodes Represent Demand Nodes (e.g., Node C Auxiliary variables: z

Ploskas et al. (2023) proposed a heuristic technique for the p-dispersion problem with distance constraints that tries to prune early the parts of the search tree for which it seems unlikely that their exploration will improve the value of the optimization function. Specifically, the cost of the first feasible solution found is used as the initial lower bound denoting the cost of the best solution found so far. Thereafter, at each node of the search tree, after the currently tried assignment,

We continue this work by adapting this method to pMDs. In our case, the cost of the first feasible solution found is used as the initial upper bound since we have a minimization problem. Besides the bounding technique, our heuristic method uses a simpler model of the problem. As our experiments demonstrate, a reason for the failure of OR-Tools to solve large instances of the pMD is the size of the model it constructs, mainly because of the very large domains, along with a large number of auxiliary variables. However, in practice, it is not uncommon for location problems to include a very large number of potential facility sites (hence very large domains in the CP model).

To alleviate this problem, we propose to use a much simpler model (albeit losing propagation power), dropping all the auxiliary variables and relevant constraints, resulting in a model with only the p-decision variables and the distance constraints among pairs of variables, and between variables and clients. The optimization function can now be handled procedurally within the solver by simply computing the cost of any new solution found, so as to determine if this cost is better than the cost of the best solution discovered so far. If so, then the bound is tightened. Considering the small pMD demonstrated in Example 1, the simpler model is as follows:

This simple model has 17 fewer variables and 17 fewer constraints compared to the model of Example 1, as the auxiliary variables and the relevant Element constraints are not present. As a downside, propagation is weakened because the objective function is no longer linked to the decision variables. Therefore, as we also discuss below, any tightening of the bound cannot result in the pruning of the decision variables’ domains.

In the following, we first describe the operation of a CP solver that can use the branch pruning heuristic, and then we detail the inner workings of the heuristic.

Search Framework

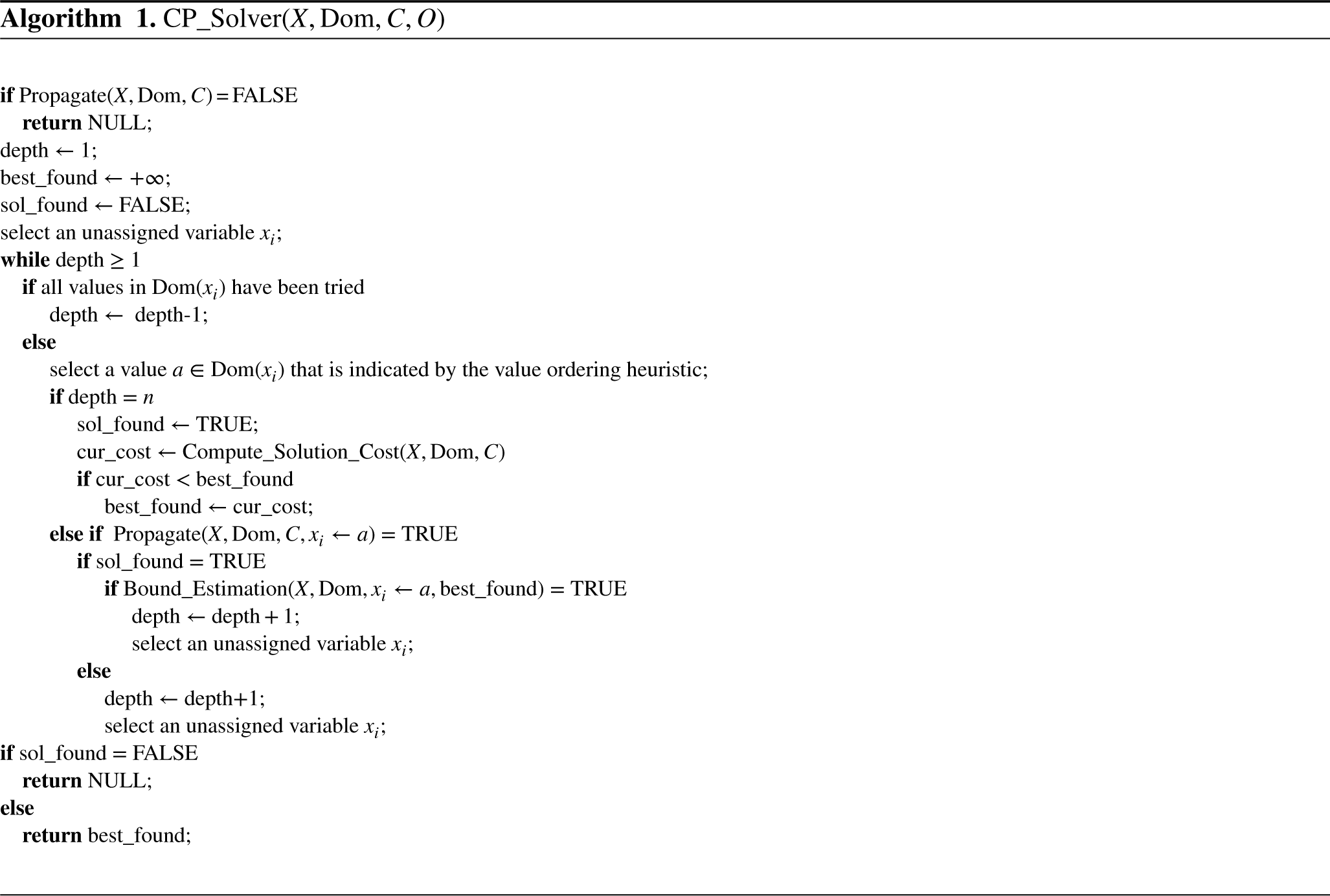

Algorithm 1 gives a high-level description of the solver in which the pruning heuristic has been implemented. Given a pMD

If all variables have been assigned (

If not all variables have been assigned yet, function Propagate is called to propagate the value assignment that has just been made. If no failure occurs, the heuristic bounding mechanism is triggered by calling function Bound_Estimation (Algorithm 2), provided that at least one feasible solution has already been found (i.e., sol_found = TRUE). If this function succeeds, meaning that the estimated cost is better than the best bound found up to that point then the algorithm moves forward by increasing the depth of search and selecting a new unassigned variable. On the other hand, if propagation fails or the estimated bound is not better than the value of best_found then the current branch is abandoned and a new value for the current variable is selected, as indicated by the value ordering heuristic. If all values for this variable have been tried at this depth of search, then the algorithm backtracks to the previously selected variable and tries a new value for it.

If the value best_found remains unchanged upon termination (i.e., sol_found = FALSE), then the algorithm has proved that the problem is infeasible and the solver returns NULL. Otherwise, the best cost found is returned. In the former case, the heuristic part of the algorithm (i.e., the bounding mechanism) will never be triggered, as no feasible solution will have been found. Hence, the search space will be systematically explored in a typical CP solver fashion until a backtrack to depth 0 occurs, proving that the problem is infeasible.

Branch Pruning Heuristic

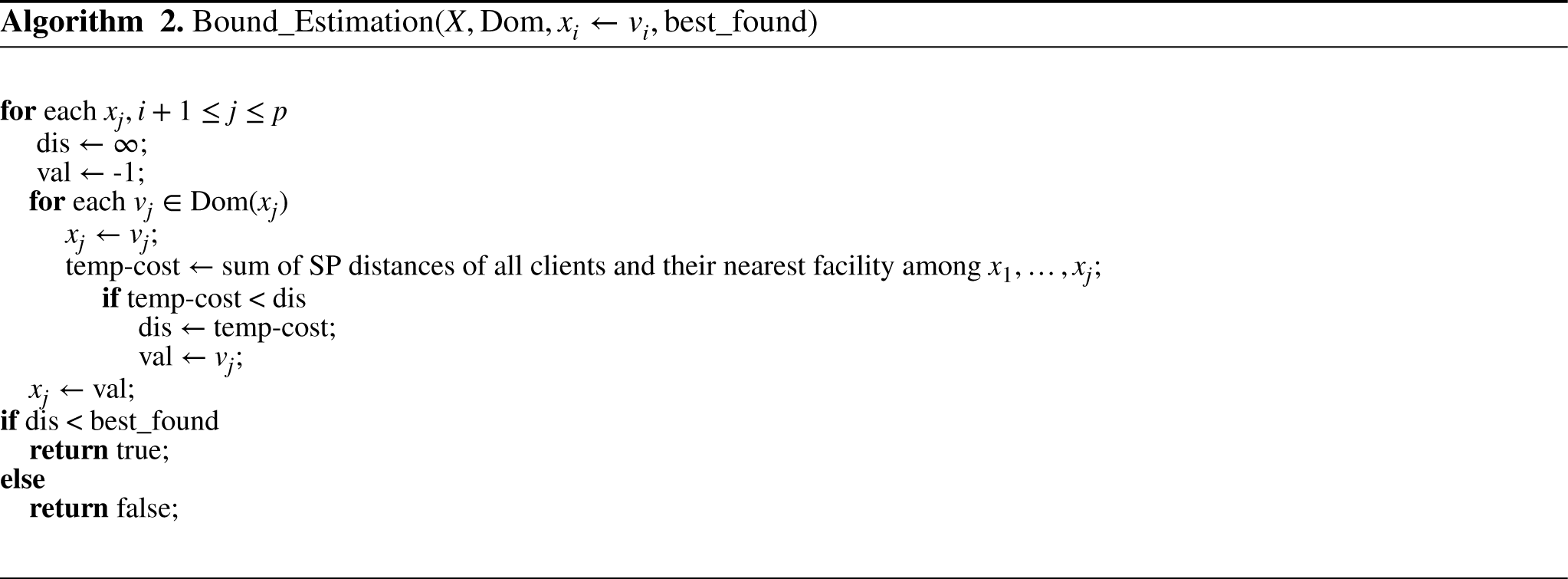

Let us now describe the function Bound_Estimation (Algorithm 2) that implements the branch pruning heuristic. As in Ploskas et al. (2023), at each node, we relax the problem by considering only the objective function (i.e., the distance constraints are not taken into account) and we heuristically estimate the cost that can be achieved if the subtree rooted at that node is explored. But in our case, a lower bound estimation is computed, as we deal with a minimization problem.

The estimation of this lower bound is performed using the reasoning of the greedy heuristic for the p-median problem. Specifically, assuming that

An Example of Erroneous Pruning and a Sampling Technique

The following example demonstrates that the greedy bound estimation that is performed at every node can overestimate the cost, which may result in the omission of the optimal solution.

Consider a network An Example p-Median with Distance Constraints (pMD) Problem where the Branch Pruning Heuristic Misses the Optimal Solution.

The optimal solution is

As the example demonstrates, an important drawback of the heuristic bound estimation procedure is that the bound computed at each node is based on a single run of the greedy heuristic with a fixed ordering of the unassigned variables. In the above example, if the order in which the greedy heuristic visits the future variables is reversed (i.e., first

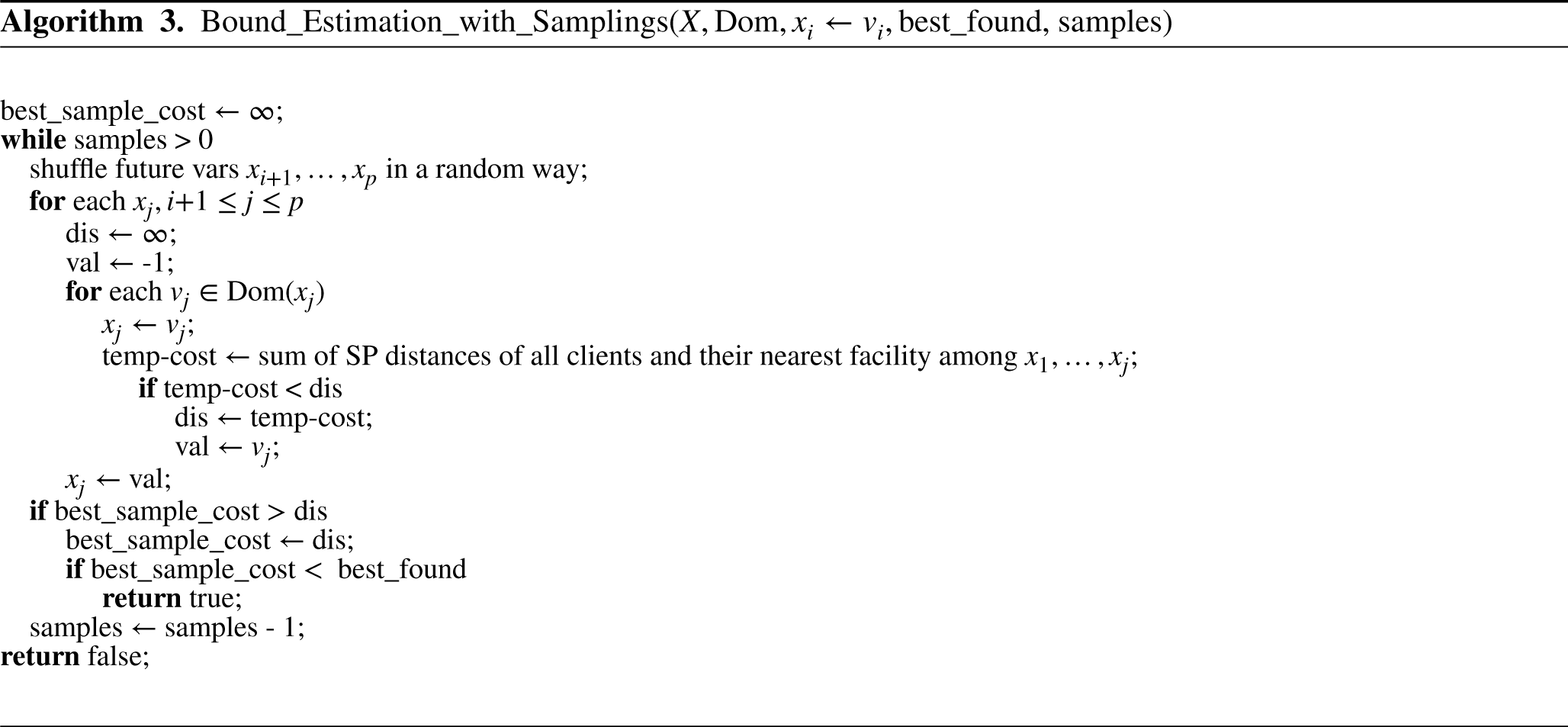

To address this, we enhanced our method through a technique that randomly samples over the possible orderings of the unassigned variables, computing a bound using the greedy heuristic for every sampled ordering. To minimize unnecessary computations, we set the number of samples equal to the number of all possible permutations (i.e., different variable orderings) if the latter is smaller than the former. If the estimated lower bound of a sample is lower than the cost of the best solution found so far, then the current branch is accepted. This results in weaker pruning, and therefore higher CPU times, but a better approximation of the optimal solution, as our experiments demonstrate. This technique (depicted by Algorithm 3) modifies the function Bound_Estimation by repeatedly randomly reordering the future variables and running the greedy heuristic on each obtained ordering.

We now introduce four domain-specific value ordering heuristics for pMDs. These heuristics use information about the current state of search to select a value assignment for the current variable that may maximally contribute to the improvement of the objective value. The first two heuristics only consider the current variable and its available values, meaning that they only have a local view of the problem, but they are also cheap to compute. The third heuristic takes into account the already assigned variables too, whereas the fourth one, which is computationally the most expensive, additionally considers the possible assignments to future variables.

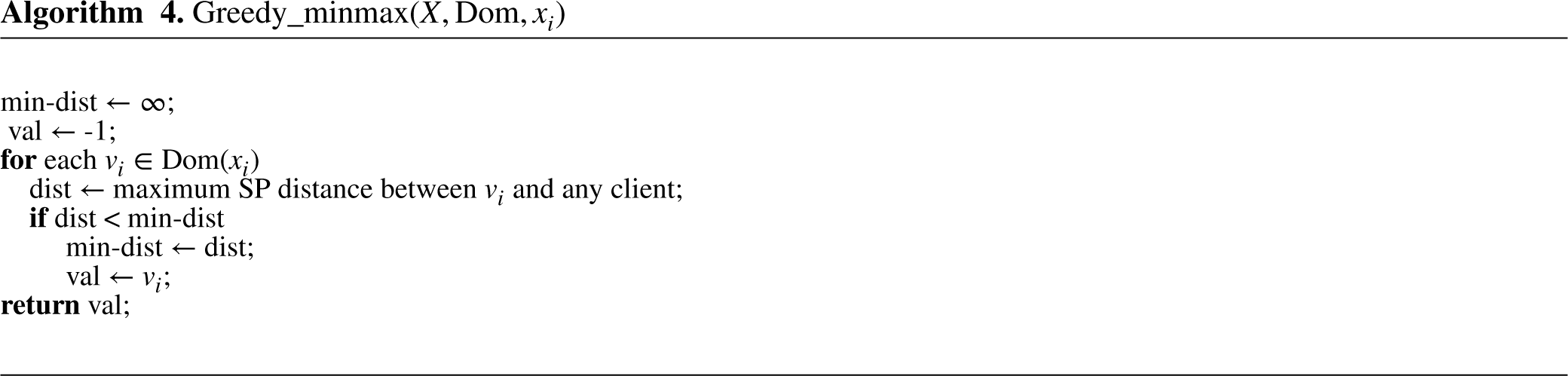

Greedy-Minmax

We call the first heuristic greedy-minmax. This value ordering heuristic selects the value that would induce the smallest cost in the objective function if we were to solve the 1-center problem. The p-center problem is similar to the p-median and differs only in the objective function. The goal is to determine the locations of p facilities in a way that minimizes the maximum distance between any client and their closest facility. The 1-center problem is a special case of the p-center, where the goal is to locate a single facility (i.e.,

Therefore, the site that will be selected for the location of the facility under consideration will be the one whose maximum distance from a client is the smallest among all potential sites. Specifically, suppose that we try to pick a value for the current variable

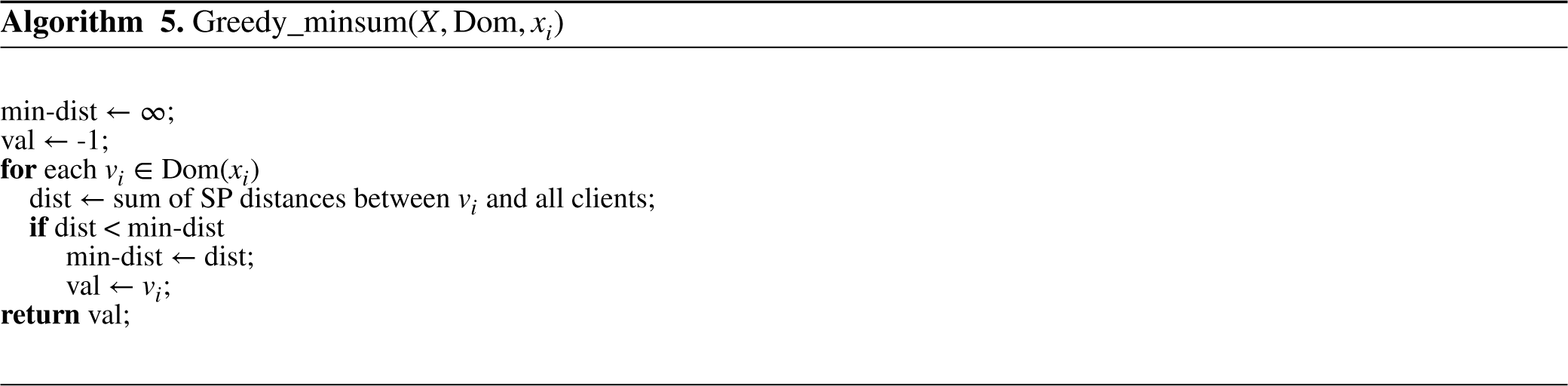

Greedy-Minsum

The greedy-minsum value ordering heuristic also holds a local view of the problem. However, instead of solving the 1-center problem, it considers the 1-median. In other words, for each value

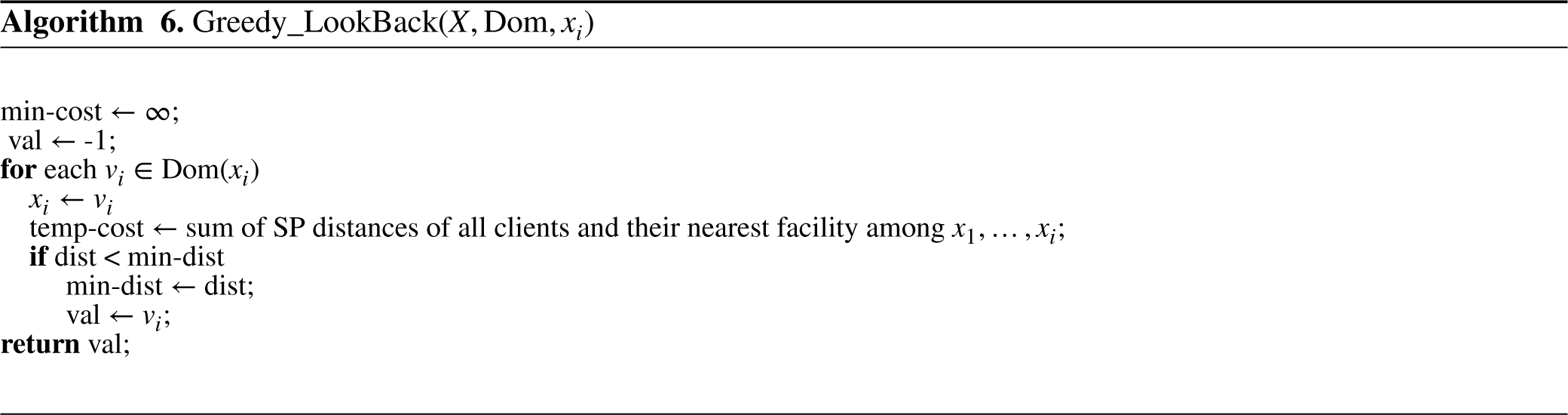

Greedy Look-Back

In contrast to the two previous value ordering heuristics, the third one, which we call greedy look-back, exploits information about past assignments. That is, while trying to find the best location for the current facility, it also considers the locations of the facilities that have already been placed. This leads to more precise estimations and therefore may result in more informed branching, as the location of the current facility may not alter the service status of a client (i.e., there is a facility already located closer to them).

Specifically, for each value

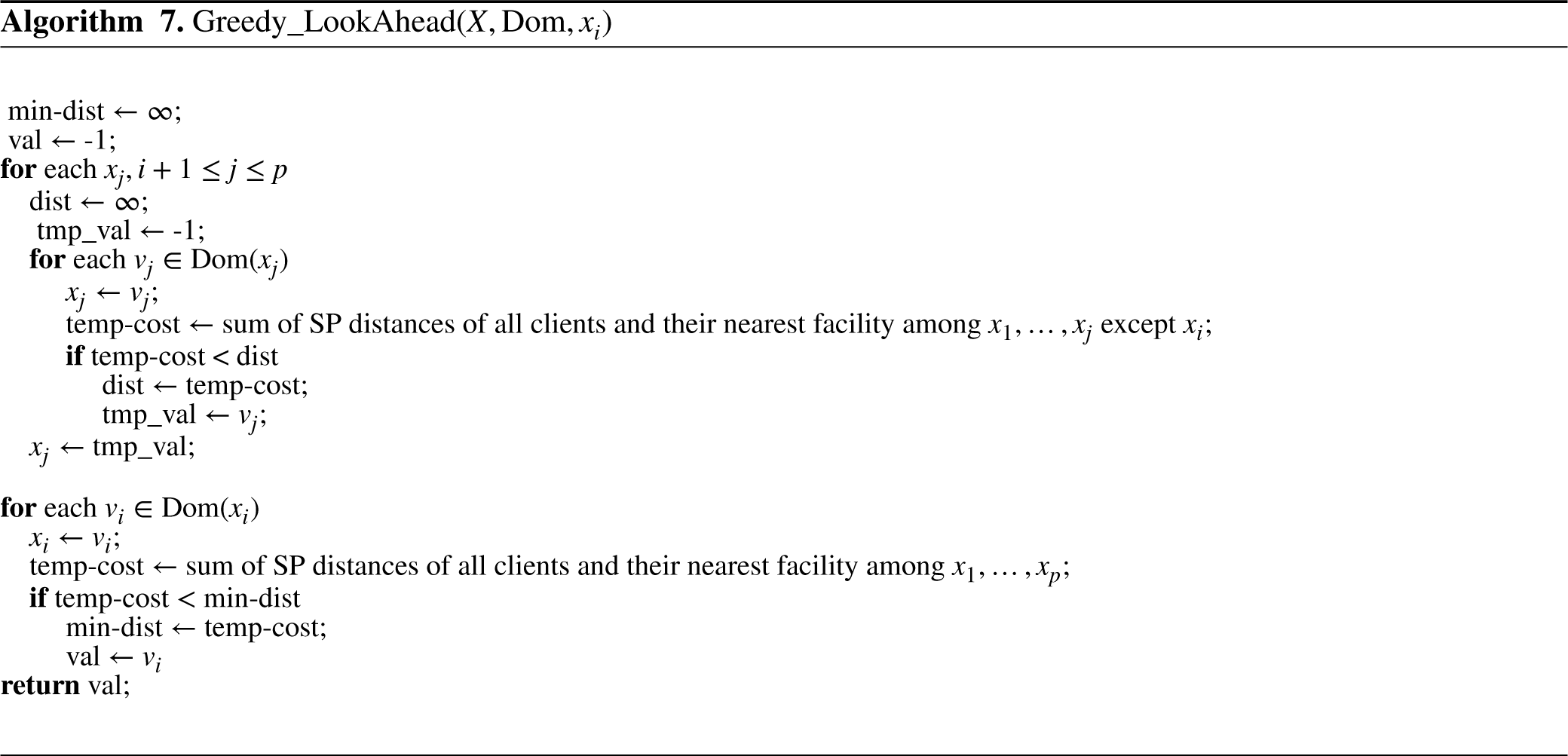

Greedy Look-Ahead

The final heuristic is called greedy look-ahead. This heuristic considers the information derived from previous assignments, as greedy look-back does, while also trying to estimate the best cost achievable under the current branching decision. This is done by greedily assigning values to future variables (i.e., unassigned ones), considering the relaxed problem (i.e., without distance constraints).

In more detail, suppose that there are

Algorithm 7 depicts this process.

We also experimented with a similar heuristic, using a slightly different approach for the estimation of the best value to branch on. This heuristic is computed as follows: for each

Experiments

Computations were performed on an Intel i7 CPU 8700 with 16 GB of main memory, a clock of 3.2 GHz, an L1 cache of 348 kB, an L2 cache of 2 MB, and an L3 cache of 12 MB, running under CentOS 8.4. We set a time limit of 3,600 s for all the experiments reported below. The ILP model was solved using Gurobi 9.0.3 (Gurobi Optimization, LLC, 2023) and was stored in compressed sparse column format, as the constraint matrix can sometimes be too large to be stored as a full array. The CP model was written in the CPMpy modeling tool (Guns, 2019) and compiled into CP-SAT OR-Tools (O.-T. Development Team, 2024). CPMpy is a CP modeling library in Python, based on Numpy, that provides direct solver access. While a native OR-Tools implementation via the Python API was feasible, CPMpy allows us to maintain solver flexibility (for further experimentation) without sacrificing efficiency or model integrity. In fact, we have actively verified that the model generated by CPMpy is identical to the native OR-Tools model.

CP-SAT OR-Tools is a state-of-the-art CP solver that leverages SAT (satisfiability) methods. Since this solver does not support real-valued domains, which are present in our problems, we scale all values by a sufficiently large factor to convert them into integers. The results are then transformed back to their original values. In addition, OR-Tools includes several built-in variable and value selection heuristics. In preliminary experiments, we tested various settings, such as prioritizing decision variables

The heuristic CP approach was implemented in a custom (heuristic) solver written in C, following the structure of Algorithm 1. This solver will be denoted as CP

Problem Generation Models

Given the lack of benchmarks for the pMD, we experimented with pMD instances generated in three different ways. The first two place the candidate facility sites and the clients on the nodes of a grid, while the third uses the p-median benchmark library as a basis to create pMDs.

Grid-Based Generation Model

The grid generation model creates problems embedded in an

We assume that the weight of each edge in the grid is equal to 1. Therefore, given that we have a grid, the length of the shortest path between any client and any potential facility site can be, at best, equal to the Manhattan distance between them. For each distance constraint

Minimum Weighted Covering Generation Model

The second random generator is a variant of the first one and is based on the generator used in Berman and Huang (2008) for minimum weighted covering location problems with distance constraints. It again creates pMDs embedded in an

The generator first randomly creates a tree with

We have tried two different ways for the generation of the distance constraints:

For any constraint between variables The variables are split into two sets, with the first one corresponding to facilities that need to be placed further apart, and the second to facilities that can be closer to clients and to one another. The distances for the constraints are drawn from three intervals, the low, the medium, and the high one. For a constraint where both involved variables

The p-median based generator takes instances from the p-median benchmark dataset (Beasley, 1985), consisting of problems with 100–900 nodes and 5–200 facilities. We randomly select

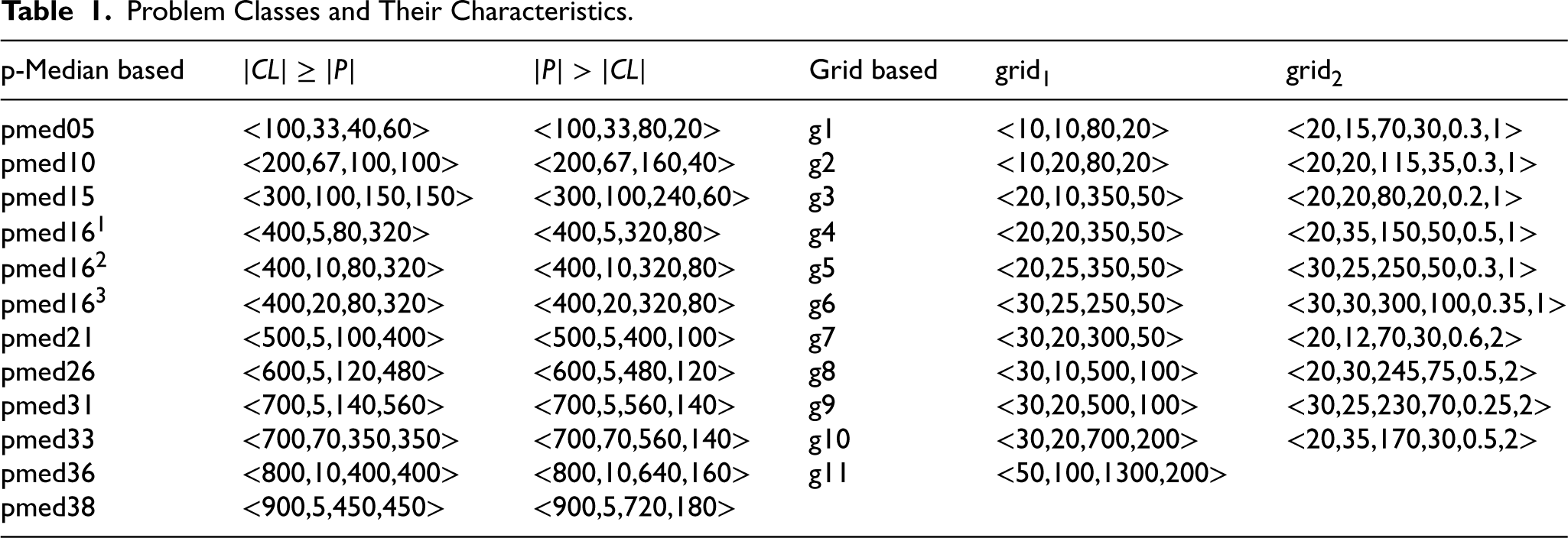

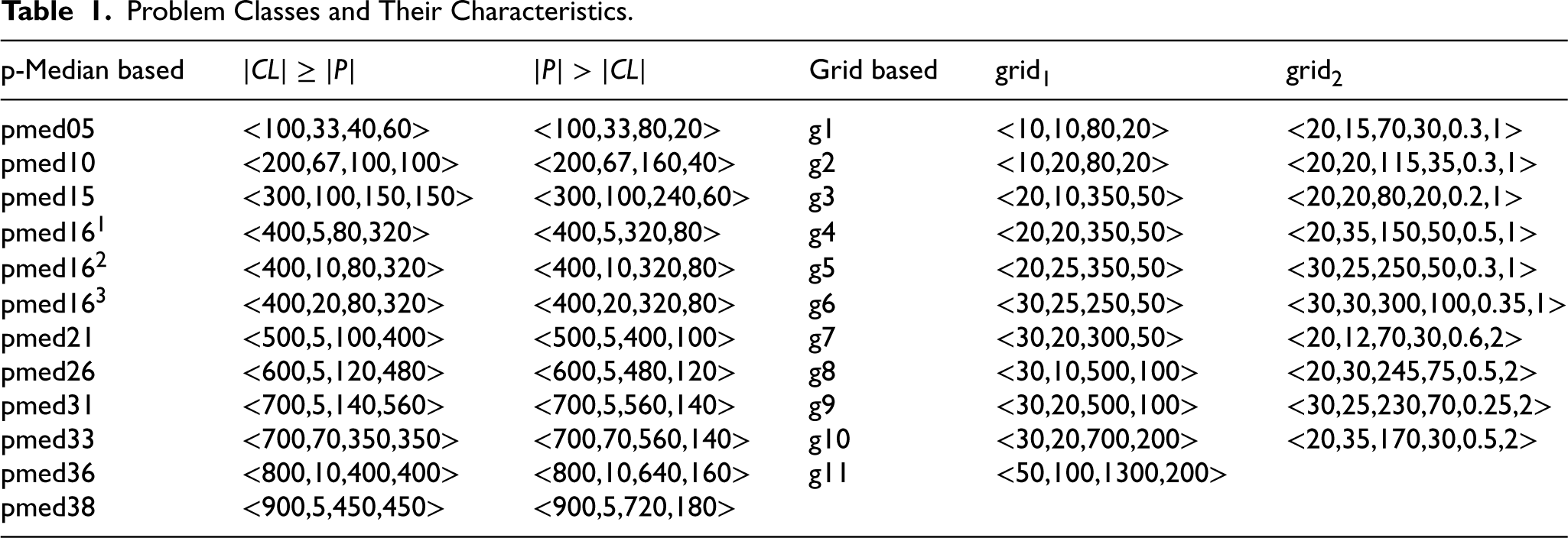

From now on, we will refer to the grid model of Subsection 7.1.1 (respectively, 7.1.2) as grid

Table 1 details the classes generated for pMDs using the three-generation models. For the p-median-based ones, we give the name of the p-median benchmark used as basis. Each such class is defined by the parameters

Problem Classes and Their Characteristics.

Problem Classes and Their Characteristics.

We first evaluate the performance of the custom solver, which implements the branch pruning heuristic, by comparing it against Gurobi and OR-Tools on instances generated using the three models described above. Then, we evaluate the domain-specific value ordering heuristics, and finally, we give results from the sampling method of Section 6.3.

Comparing the Solvers

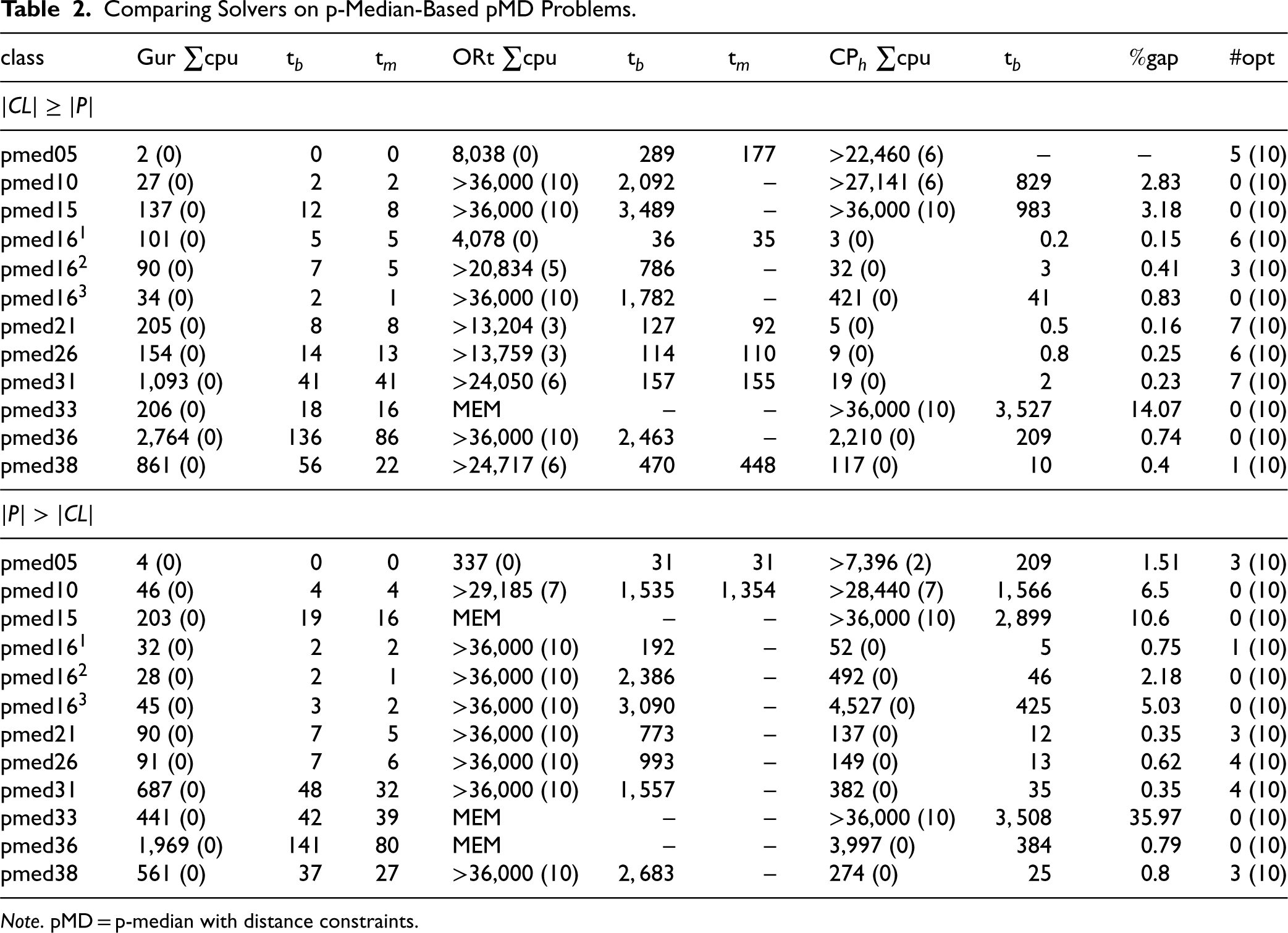

Table 2 compares the three solvers on p-median-based problems. We report the total CPU times taken by Gurobi, OR-Tools, and CP

Comparing Solvers on p-Median-Based pMD Problems.

Comparing Solvers on p-Median-Based pMD Problems.

Note. pMD = p-median with distance constraints.

Evidently, Gurobi outperforms OR-Tools in all classes of the p-median-based problems in terms of total CPU time and mean cost. In fact, Gurobi finds these problems quite easy, terminating within the time limit in all instances. Hence, it is clearly the best solver for these types of pMDs. Looking at the performance of

However, if we compare CP

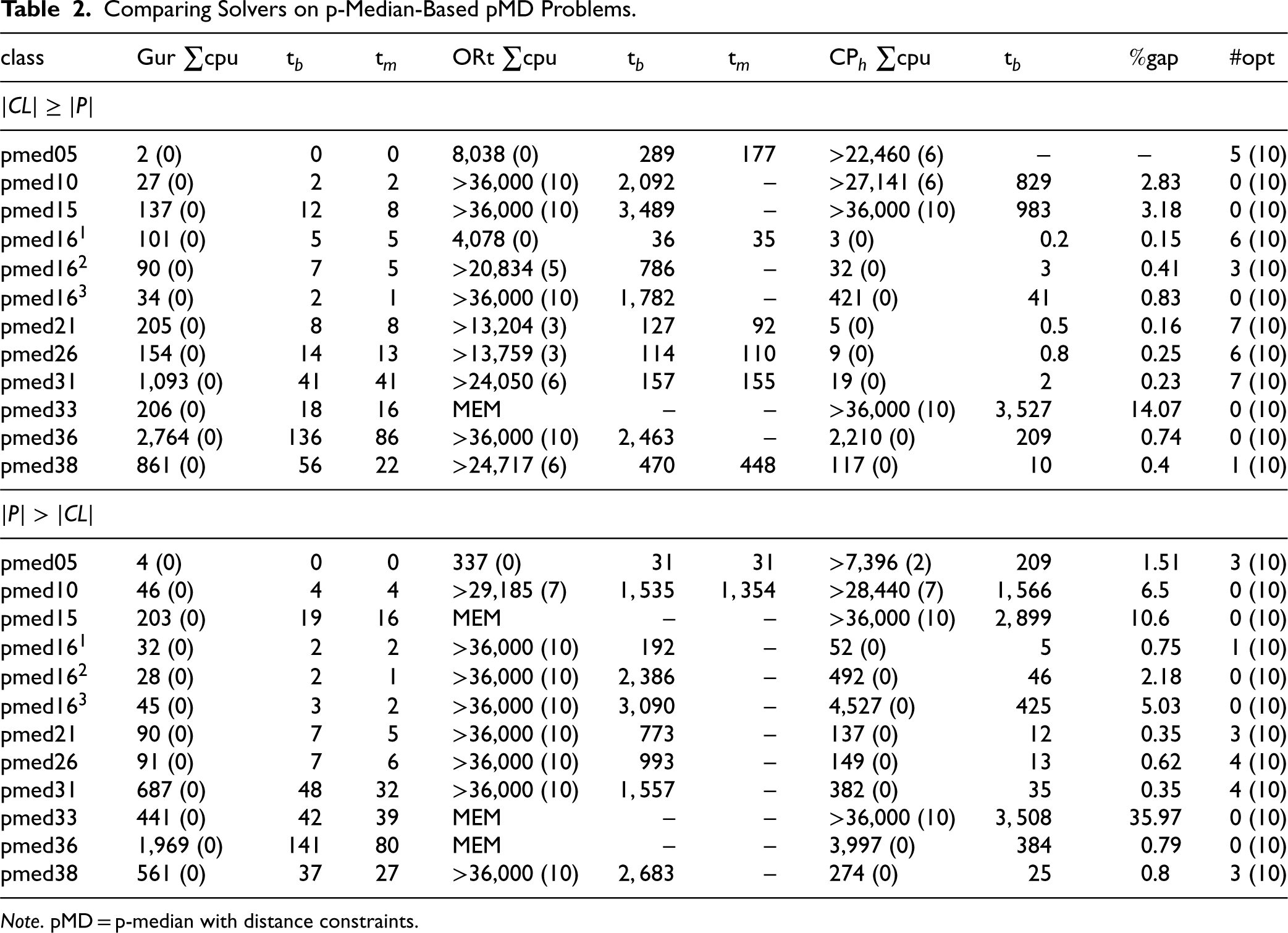

Table 3 compares the three solvers on problems generated using the grid models. The columns are the same as in Table 2, except that the mean values of the cost are presented rather than the optimality gaps. This is because the optimal solutions are unknown in many instances of many classes, as Gurobi and OR-Tools did not manage to terminate within the time limit. The lowest mean objective value is highlighted in bold. For Gurobi and OR-Tools, we give in brackets (in the cost column) the number of instances in which they managed to find at least one solution. For CP

Comparing Solvers on Grid-Based pMD Problems.

Note. pMD = p-median with distance constraints.

The lowest mean objective value for each problem class is highlighted in bold. In case of ties, the value of the best-performing solver is highlighted.

Interestingly, the results differ significantly from those in Table 2. OR-Tools performs better than Gurobi in general, solving problems in classes that are unreachable for the latter. Gurobi seems to struggle to even find any solution to most problems of various classes. In fact, in only 7 out of 21 classes, it is able to locate at least one solution in all instances of the class. On the other hand, OR-Tools is able to locate at least one solution in all instances in 16 out of 21 classes. Gurobi suffered memory exhaustion in g10 and g11 classes of grid

CP

Taking a deeper look at the performance of the solvers, an important factor that seems to affect it is the number of solutions existing in a problem. Gurobi benefits from the presence of many solutions in an instance, while this does not hold for CP

On the other hand, grid-based problems, where Gurobi falters, typically have few solutions. For instance, we counted only 11,872 solutions until termination in class g3 of grid

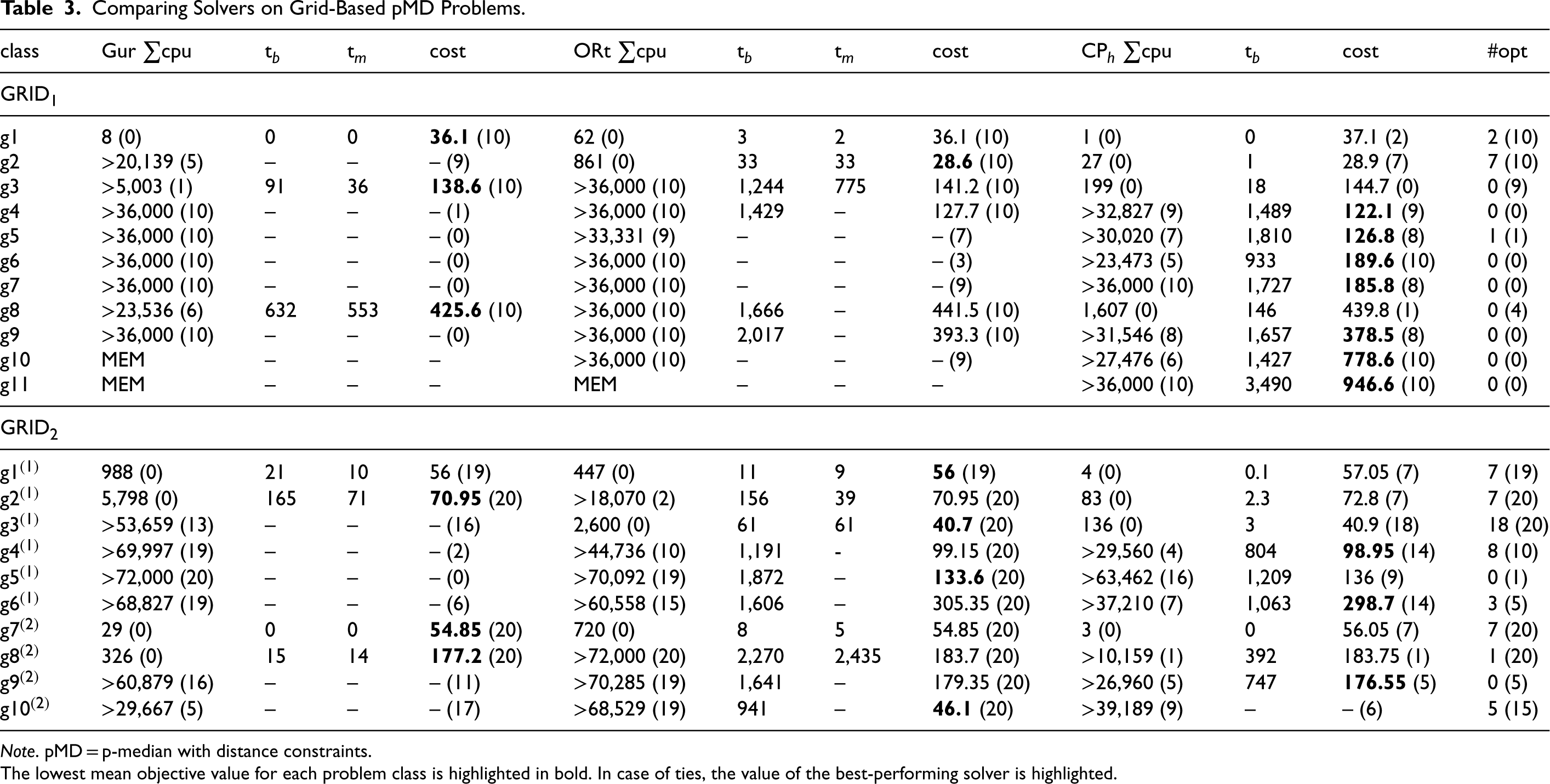

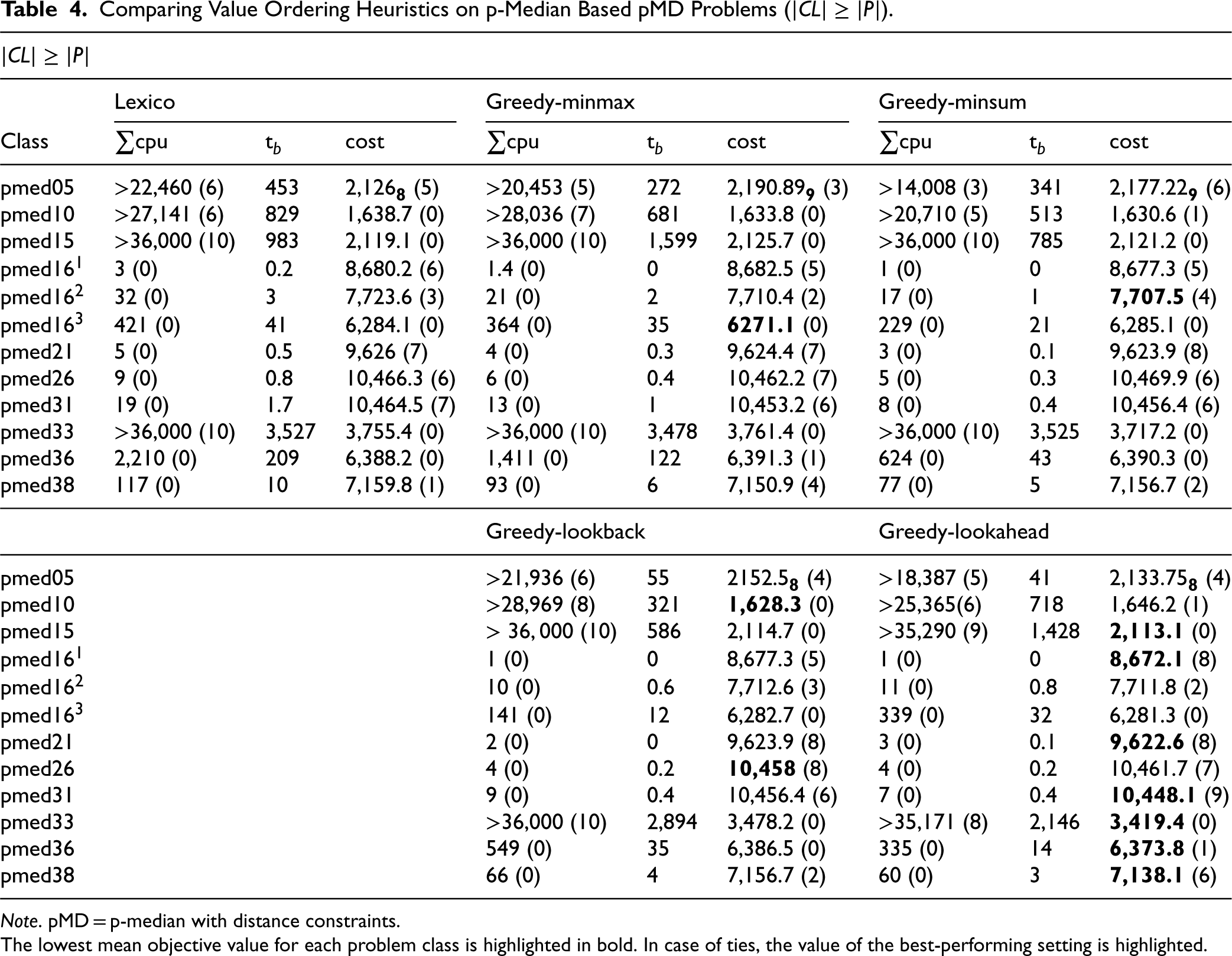

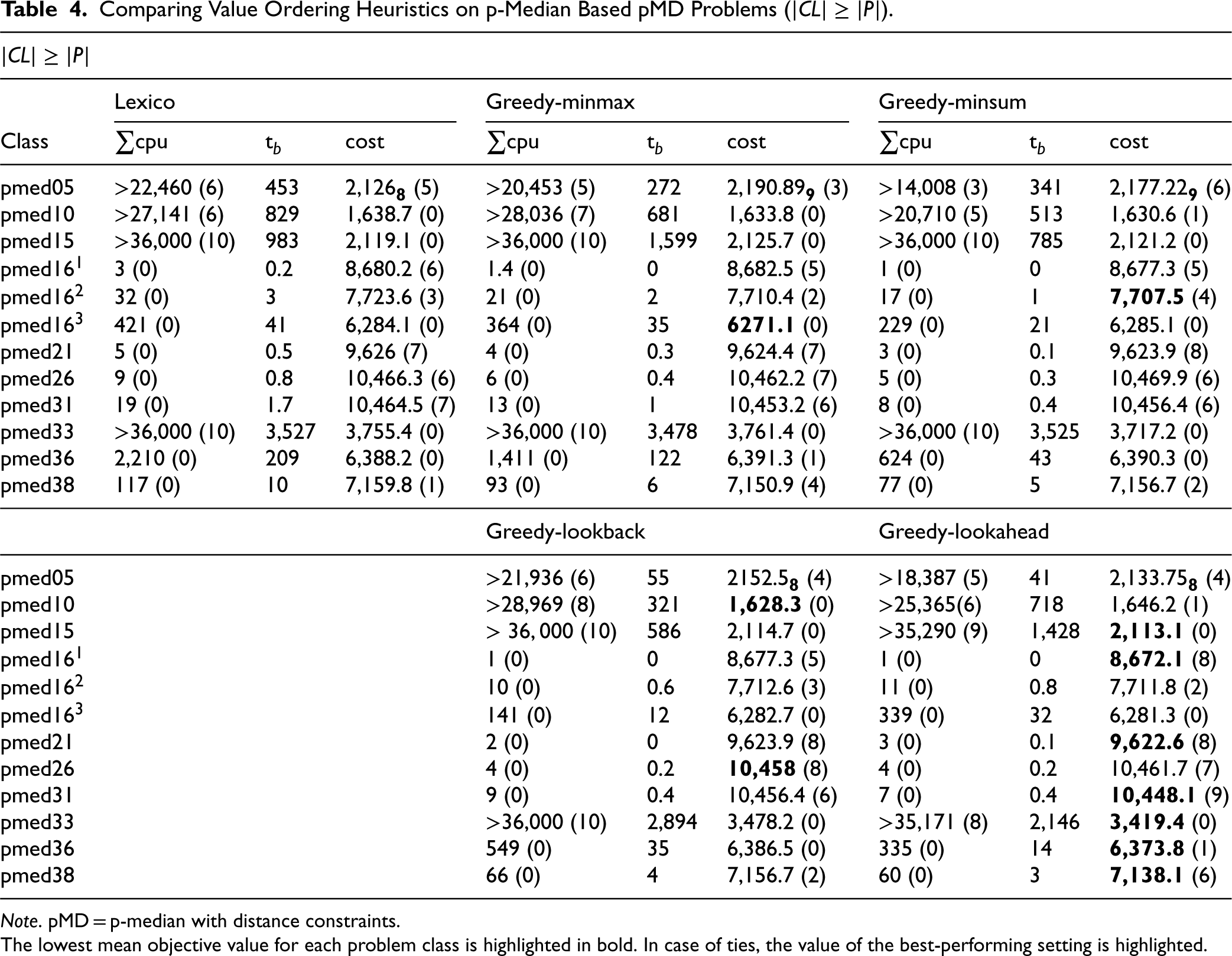

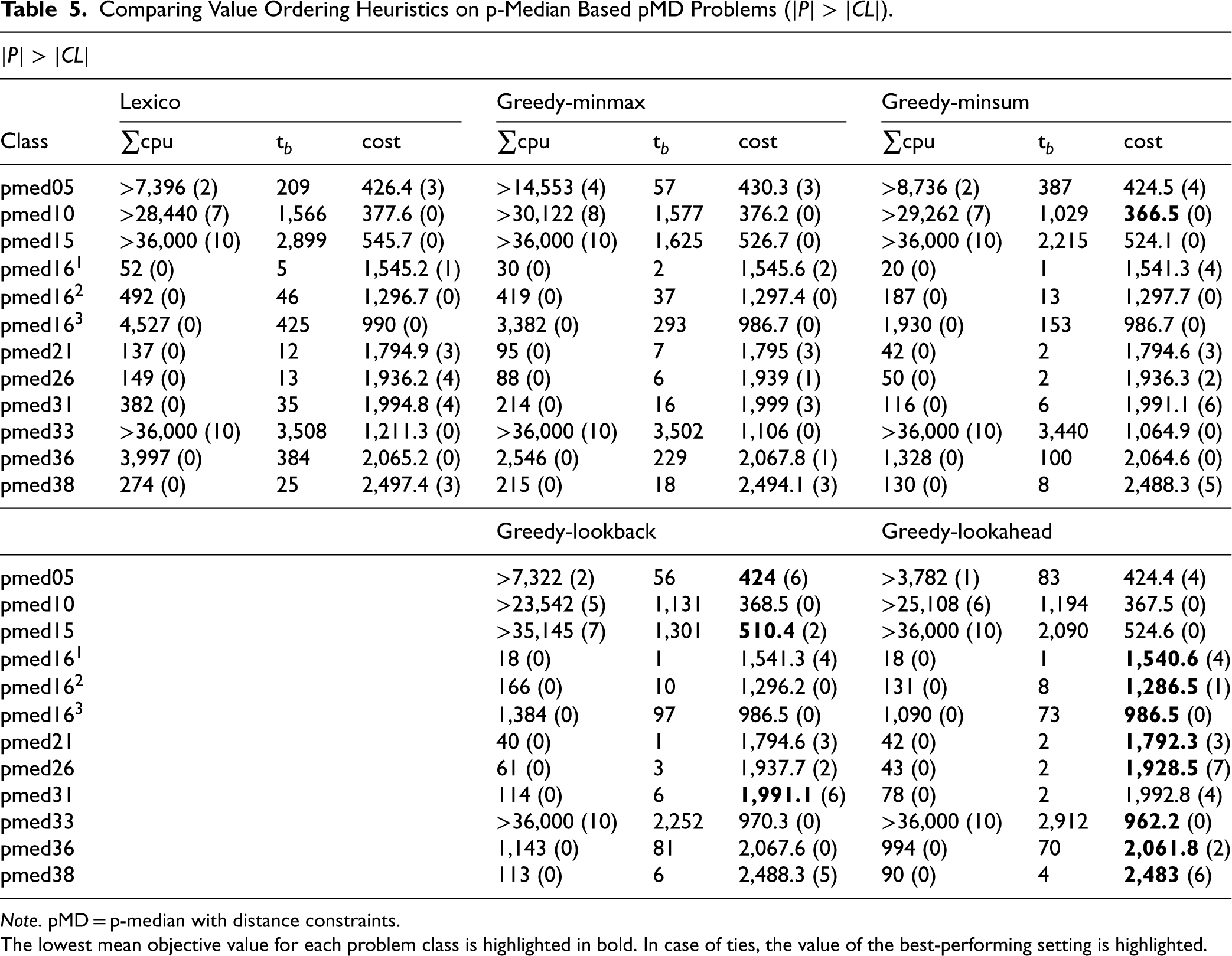

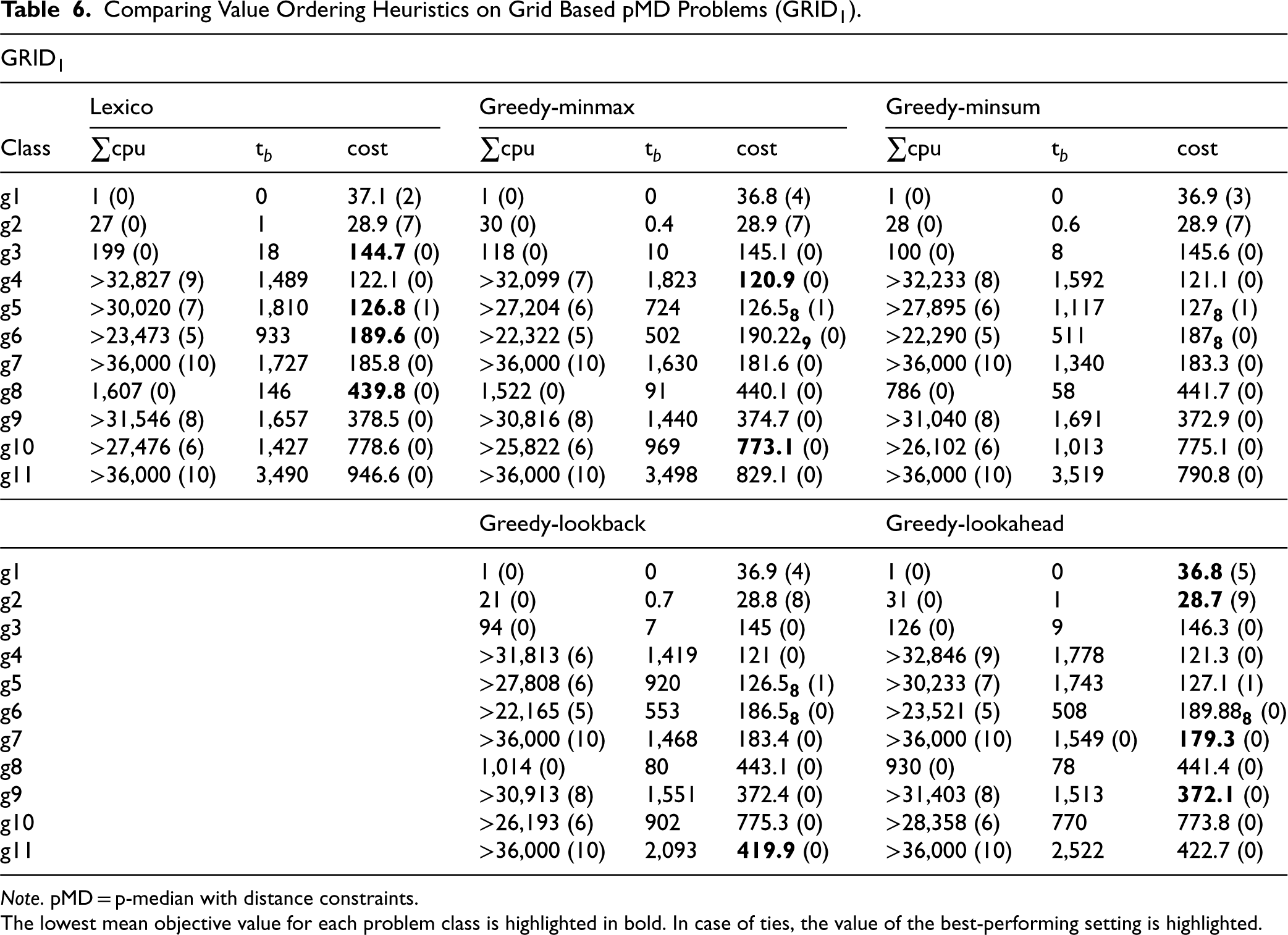

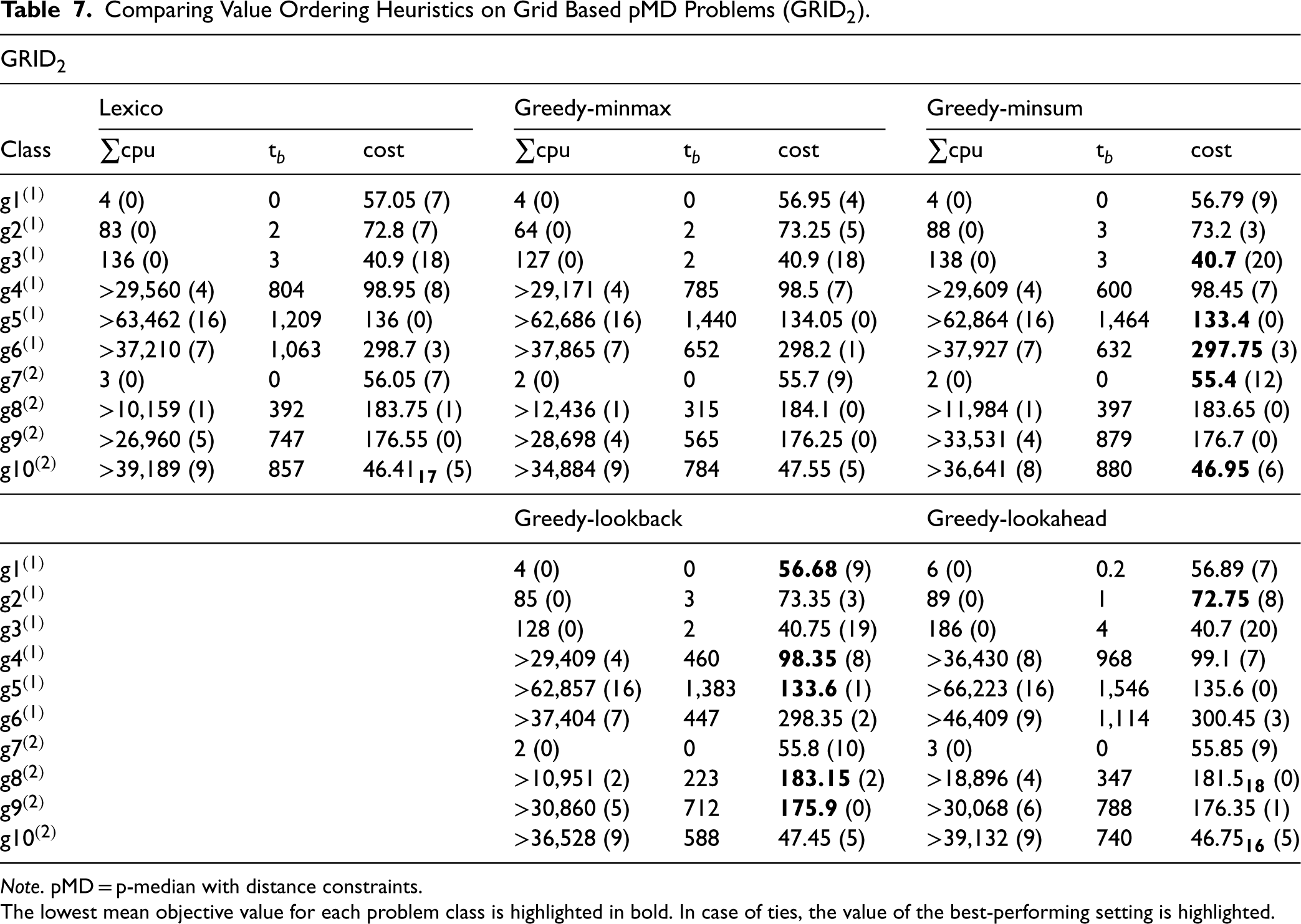

Tables 4 to 7 evaluate the performance of CP

Comparing Value Ordering Heuristics on p-Median Based pMD Problems (

).

Comparing Value Ordering Heuristics on p-Median Based pMD Problems (

Note. pMD = p-median with distance constraints.

The lowest mean objective value for each problem class is highlighted in bold. In case of ties, the value of the best-performing setting is highlighted.

Comparing Value Ordering Heuristics on p-Median Based pMD Problems (

Note. pMD = p-median with distance constraints.

The lowest mean objective value for each problem class is highlighted in bold. In case of ties, the value of the best-performing setting is highlighted.

Comparing Value Ordering Heuristics on Grid Based pMD Problems (

Note. pMD = p-median with distance constraints.

The lowest mean objective value for each problem class is highlighted in bold. In case of ties, the value of the best-performing setting is highlighted.

Comparing Value Ordering Heuristics on Grid Based pMD Problems (

Note. pMD = p-median with distance constraints.

The lowest mean objective value for each problem class is highlighted in bold. In case of ties, the value of the best-performing setting is highlighted.

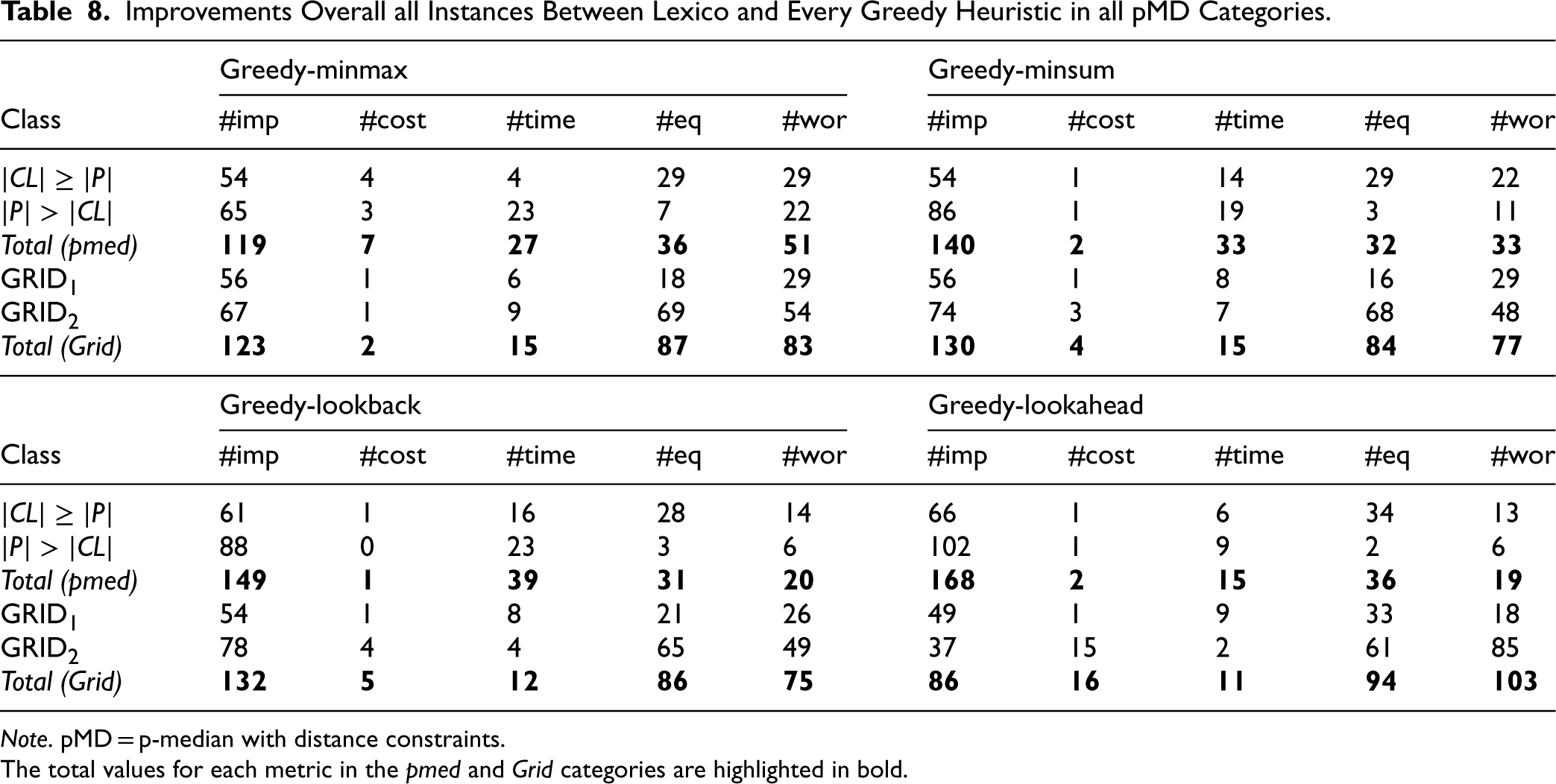

When evaluating the heuristics, we need to consider their effect on the quality of the solutions that the solver discovers, as well as on the CPU time taken. Table 8 summarizes the results, focusing on these two criteria. Specifically, we give the number of instances over each of the four categories, where each domain-specific value ordering heuristic achieved better, worse, or similar results compared to lexico. Let us clarify how the comparison is made by explaining what the numbers in the different columns of the table indicate.

Improvements Overall all Instances Between Lexico and Every Greedy Heuristic in all pMD Categories.

Note. pMD = p-median with distance constraints.

The total values for each metric in the pmed and Grid categories are highlighted in bold.

Regarding run times, we consider that a heuristic improves the performance if it achieves at least a

Tables 4 and 5, as well as Table 8, demonstrate that in the p-median-based classes, greedy-minmax, greedy-minsum, and greedy-lookback provide slight improvements compared to lexico. However, greedy-lookahead exhibits the best overall performance, as it is able to reduce the total run-times in most cases (often, quite considerably), while also notably improving the quality of the solutions discovered. For example, in classes pmed21 to pmed31 (

Accordingly, Tables 6 and 7, as well as Table 8, demonstrate that the value ordering heuristics achieve better results than lexico in most classes of the grid categories, especially in

In summary, greedy-lookback and greedy-lookahead demonstrated the best performance among the proposed value ordering heuristics, with respect to both solution quality and run-time. Our results from p-median-based classes indicate that when faced with problems that have many feasible solutions, and therefore a higher probability of many promising paths, a heuristic such as greedy-lookahead can be quite useful. However, using such a heuristic can sometimes be both expensive and misleading when dealing with highly constrained problems (e.g.,

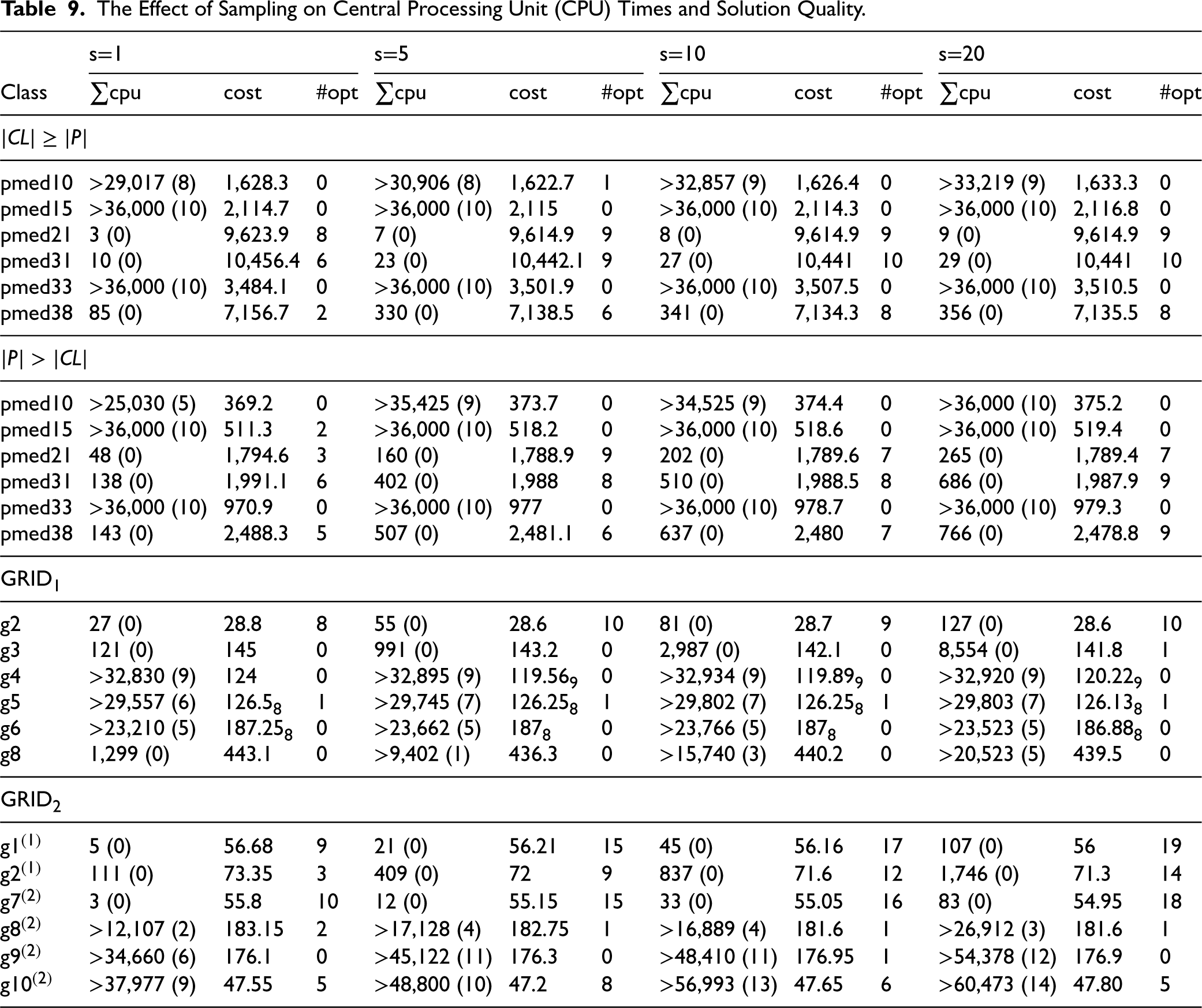

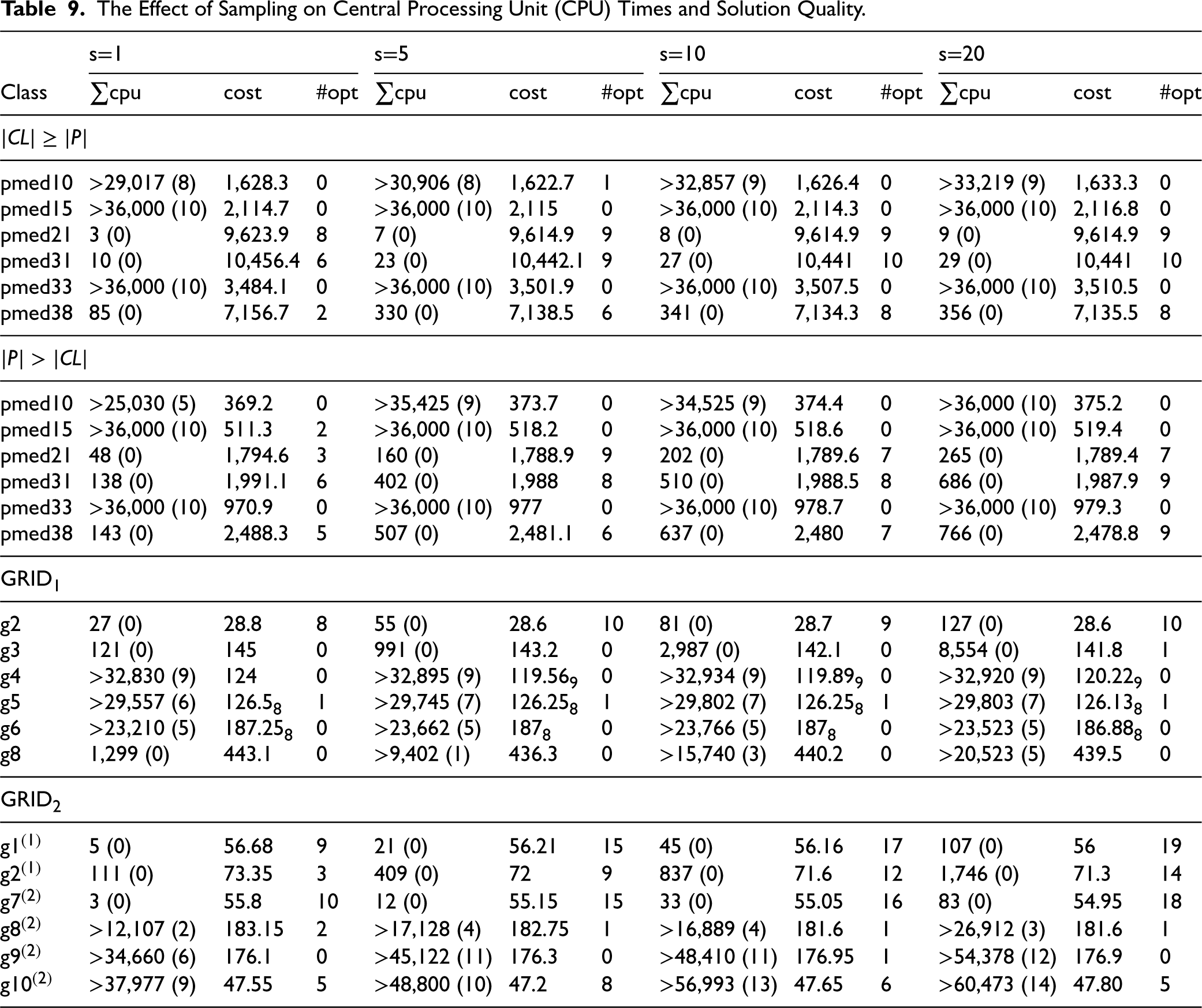

Table 9 demonstrates the effect of the sampling technique on CPU times and solution quality. Four different settings, with varying numbers of samples, are compared. For each number of samples, we give the total CPU times (

The Effect of Sampling on Central Processing Unit (CPU) Times and Solution Quality.

The Effect of Sampling on Central Processing Unit (CPU) Times and Solution Quality.

As expected, as the number of samples grows, so does the CPU time. This is due to the weaker pruning that is entailed by the lower bounds being computed, which means that larger portions of the search space are explored, and also to the overhead caused by the repeated runs of the greedy heuristic while computing the bounds. This overhead can have a significant impact on problems that CP

To conclude, in small problems, or when the cost of the solution is of prime importance, compared to the run time, the sampling method can be useful, as it helps to obtain solutions of better quality. However, in large problems, or when the run time is crucial, the sampling method seems to add an unnecessary CPU time overhead.

We have investigated the pMD, a variant of the p-median problem where distance constraints exist between facilities and between facilities and clients. This problem can be used to model the location of semi-obnoxious facilities. We proposed ILP and CP approaches toward modeling and solving pMDs. We also demonstrated how a recent heuristic CP approach for the p-dispersion problem with distance constraints can be adapted and applied to the pMD. Results showed that incorporating a greedy branch pruning heuristic into a CP solver along with employing a simpler model of the problem, provides a solution tool that displays more stable performance across problem classes with different characteristics compared to standard ILP and CP solvers, despite not being the best option in all the classes tried. Additionally, we proposed four specialized value ordering heuristics designed to guide the search toward promising directions by exploiting information of the current search state. Results showed that in many cases these heuristics in combination with the branch pruning method were able to provide solutions of better quality compared to lexicographic value ordering, as well as better run times. Finally, we showed that the estimation of the greedy bounding heuristic can be more precise (although sacrificing CPU time performance) by trying different orderings for the variables it considers during the computation of the bound. In this way, more optimal solutions can be obtained and the objective cost can be reduced. As for future work, it would be very interesting to consider real-world case studies for the pMD and to investigate the applicability of the heuristic CP approach to other types of constraint optimization problems from domains such as scheduling and resource allocation.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.