Abstract

Aim

To evaluate the diagnostic and prognostic performance of artificial intelligence (AI) models in caries detection and endodontic diagnosis.

Materials and Methods

A systematic review was conducted according to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) 2020 guidelines and registered with PROSPERO (CRD42023445906). Six databases (PubMed, Scopus, Web of Science, Cochrane Library, ScienceDirect, and Wiley) were searched for studies published from January 1, 2001 to September 30, 2025. Eligible designs included randomized controlled trials and observational studies that assessed the diagnostic accuracy of AI in caries detection or endodontic conditions, or its ability to predict treatment outcomes. Primary outcomes were diagnostic accuracy, sensitivity, and specificity; secondary outcomes included prognostic validity. Risk of bias was assessed using ROBINS-I.

Results

From 455 records, 68 studies were included; most evidence was retrospective and experimental. Convolutional neural networks (CNNs), U-Net, ResNet, and YOLO models achieved diagnostic accuracies ranging from 86.9% to 95%, with 95% confidence intervals reported only in some studies. Sensitivity for periapical lesion identification reached 97.8%, and precision for root morphology analysis and working length determination exceeded 90% in several studies. Prognostic applications showed potential in predicting retreatment success and supporting clinical decisions, though heterogeneity limited pooled analysis. External validation was rarely performed.

Conclusion

AI models show promising diagnostic and prognostic accuracy in caries and endodontic applications, with consistently high performance across tasks. However, most studies were limited by narrow datasets, methodological variability, and lack of external validation. Current evidence supports cautious optimism, but further high-quality trials and real-world validation are needed before widespread clinical adoption.

Introduction

Conventional diagnostic methods in dentistry, such as visual-tactile inspection and radiographic interpretation, remain limited by subjectivity, interobserver variability, and the risk of missing early-stage lesions or complex root morphology. Despite advancements in dental imaging and materials, these challenges highlight the need for technologies that can provide greater objectivity, consistency, and precision in diagnosis. 1

Artificial intelligence (AI) is emerging as a transformative force in dentistry, particularly through machine learning (ML) and deep learning (DL) algorithms such as convolutional neural networks (CNNs), U-Nets (a specialized CNN), and spiking neural networks (SNNs). These technologies have demonstrated significant potential across a wide range of applications, including caries detection, working length estimation, periapical lesion identification, root fracture detection, and retreatment prediction.2–4 AI models trained on radiographic data, such as bitewing, periapical, panoramic images, and cone beam computed tomography (CBCT) scans, can detect pathologies earlier than traditional methods by recognizing subtle patterns often imperceptible to the human eye. 5 Furthermore, AI aids in predicting treatment outcomes and disease progression, facilitating a more personalized and preventive approach to dental care. 6

Emerging innovations such as digital twin technology further amplify the role of AI in dentistry. Digital twins—precise 3D replicas of a patient’s oral structures—are increasingly used in orthodontic planning, implant placement, and endodontic simulation, allowing clinicians to test procedures virtually before execution. 7 Moreover, AI-integrated intraoral scanners and robotic systems can now perform real-time image analysis, automatically identify anatomical landmarks, and even recommend preliminary diagnoses, streamlining the clinical workflow and reducing cognitive load for practitioners. 8

Despite its growing promise, AI is not without challenges. Concerns regarding algorithm transparency, data quality, generalizability, and ethical considerations (such as patient privacy and accountability in decision-making) must be addressed before routine clinical adoption. Importantly, AI should be viewed as an assistive tool—enhancing rather than replacing the clinician’s expertise. Given the rapid proliferation of AI technologies in dental diagnostics, there is a critical need to synthesize the available evidence regarding their accuracy and clinical applicability. To date, existing literature is fragmented across diverse imaging modalities, algorithm types, and evaluation metrics. Therefore, a systematic review is essential to consolidate findings, assess diagnostic performance, identify limitations, and guide future research and clinical translation.

This systematic review combines caries detection with endodontic diagnosis and prognosis because both domains rely on the same AI strength: pattern recognition in radiographic images. This shared basis allows unified CNN and U-Net models to be trained across conditions, maximizing clinical utility. A systematic review methodology was chosen over narrative synthesis because it enables standardized risk-of-bias assessment with ROBINS-I, a comprehensive PROSPERO-registered search strategy, and Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA)-guided data extraction across 68 heterogeneous studies. These safeguards are essential when comparing accuracy estimates across varied study designs, whereas selective narrative approaches are prone to bias.

The primary objective of this systematic review is to evaluate the diagnostic and prognostic accuracy of AI models used for caries detection and endodontic diagnosis. This review includes peer-reviewed studies employing AI techniques (such as CNNs and SNNs) on diagnostic imaging (e.g., radiographs, CBCT, or 3D scans) or predictive tasks (e.g., prognosis of treatment success or stem cell viability).

Materials and Methods

Study Design and Protocol Registration

This systematic review was conducted following the PRISMA 2020 guidelines. The review protocol was prospectively registered in the International Prospective Register of Systematic Reviews (PROSPERO ID: CRD42023445906). Any deviations from the registered protocol (e.g., inclusion of additional data extraction domains and modifications in language criteria) are documented.

Research Question

The study aimed to address the following question: “Is AI effective in diagnosing and predicting outcomes in dental caries and endodontic treatment compared to conventional diagnostic methods?”

The Research was Structured Using the PICOS Framework

Population (P): Diagnostic dental images (intraoral photographs, periapical radiographs, bitewing radiographs, CBCT) from patients or studies involving extracted teeth.

Intervention (I): AI-based models applied for diagnosis, prognosis, and outcome prediction in caries detection and endodontic procedures.

Comparison (C): Reference standards such as expert clinical judgment, traditional radiographic interpretation, or visual inspection.

Outcomes (O): Primary outcomes: diagnostic accuracy (sensitivity, specificity, precision, F1 score); secondary outcomes: prognostic validity, reproducibility, clinical applicability.
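The primary outcome measures listed above all derive from the same 2 × 2 table of model output against the reference standard. The minimal Python sketch below, assuming binary lesion-level labels and using hypothetical counts, shows how each metric is computed; it is an illustration, not the extraction procedure used in this review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary outcome counts against the reference standard (e.g. per tooth or per lesion)."""

    tp: int  # model positive, reference standard positive
    fp: int  # model positive, reference standard negative
    fn: int  # model negative, reference standard positive
    tn: int  # model negative, reference standard negative


def safe_div(num: float, den: float) -> float:
    """Return 0.0 instead of raising when a denominator is zero."""
    return num / den if den else 0.0


def f1_from(precision: float, sensitivity: float) -> float:
    """F1 score: the harmonic mean of precision and sensitivity (recall)."""
    return safe_div(2 * precision * sensitivity, precision + sensitivity)


def diagnostic_metrics(c: ConfusionCounts) -> dict[str, float]:
    sensitivity = safe_div(c.tp, c.tp + c.fn)  # recall / true-positive rate
    specificity = safe_div(c.tn, c.tn + c.fp)  # true-negative rate
    precision = safe_div(c.tp, c.tp + c.fp)    # positive predictive value
    accuracy = safe_div(c.tp + c.tn, c.tp + c.fp + c.fn + c.tn)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "accuracy": accuracy,
        "f1": f1_from(precision, sensitivity),
    }


# Hypothetical counts, not taken from any included study.
example = diagnostic_metrics(ConfusionCounts(tp=80, fp=20, fn=5, tn=95))
```

As a consistency check, f1_from(0.7812, 0.9867) evaluates to about 0.872, matching the F1 score of 0.8720 that Görürgöz et al. report alongside that precision and sensitivity.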

Study (S): Randomized controlled trials, cohort studies, cross-sectional, prospective, and retrospective studies.

Eligibility Criteria

Inclusion criteria

Peer-reviewed original research (RCTs or observational studies) evaluating AI in caries detection or endodontic diagnosis.

Studies using radiographic or image-based datasets.

Reports with quantitative outcomes (sensitivity, specificity, accuracy, F1 score).

Full-text availability.

Studies published from January 1, 2001 to September 30, 2025 (final search date).

Exclusion criteria

Review articles, editorials, commentaries, and letters.

Non-English-language studies, unless sufficient translation was feasible.

Studies lacking quantitative outcome data or exhibiting major methodological flaws.

Information Sources and Search Strategy

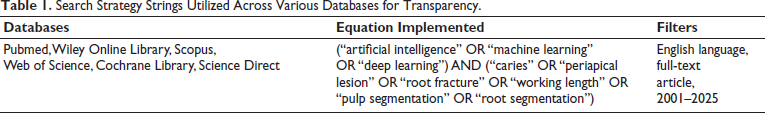

A comprehensive electronic search was performed in PubMed, Wiley Online Library, ScienceDirect, Web of Science, Scopus, and the Cochrane Library. Publications between January 1, 2001 and September 30, 2025 were considered. To minimize publication bias, gray literature sources such as ProQuest Dissertations and Google Scholar were also searched. Manual searches of reference lists were conducted. Detailed database-specific search strings are provided in Table 1.

Table 1. Search Strategy Strings Utilized Across Various Databases for Transparency.

Study Selection

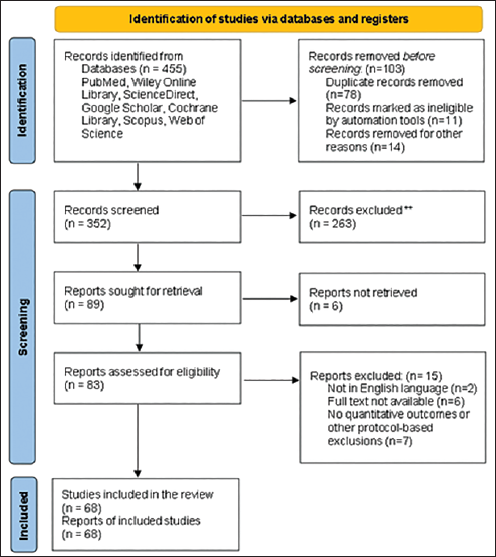

Two reviewers (RP and PK) independently screened titles and abstracts. Potentially eligible records were retrieved for full-text review. Inter-reviewer agreement, quantified with Cohen’s kappa, was 0.84, indicating strong agreement. Discrepancies were resolved by consensus or third-party adjudication. Reasons for exclusion at the full-text stage are presented in the PRISMA flow diagram.
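Cohen’s kappa corrects raw percentage agreement for the agreement expected by chance: κ = (p_o − p_e) / (1 − p_e), where p_o is observed agreement and p_e is chance agreement computed from each reviewer’s marginal rates. The per-record screening decisions behind the reported value of 0.84 are not given here, so the sketch below uses a hypothetical include/exclude table.

```python
def cohens_kappa(table: list[list[int]]) -> float:
    """Cohen's kappa for two raters from a square k x k agreement table.

    table[i][j] counts records that rater A placed in category i and rater B in
    category j (e.g. category 0 = include, 1 = exclude at title/abstract screening).
    """
    k = len(table)
    n = sum(sum(row) for row in table)
    p_observed = sum(table[i][i] for i in range(k)) / n  # raw agreement
    row_totals = [sum(table[i]) for i in range(k)]
    col_totals = [sum(table[i][j] for i in range(k)) for j in range(k)]
    # Agreement expected if both raters labelled independently at their own rates.
    p_expected = sum(row_totals[i] * col_totals[i] for i in range(k)) / n ** 2
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical screening table: rows = reviewer A, columns = reviewer B.
screening = [[20, 5],
             [10, 15]]
kappa = cohens_kappa(screening)
```

For this hypothetical table, raw agreement is 70% but chance agreement is 50%, so κ is only 0.4, which illustrates why kappa is reported instead of raw percentage agreement.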

Data Extraction

Two independent reviewers extracted data using a standardized form, pilot tested on a subset of five studies to ensure consistency. Extracted data included study descriptors (author, year, sample size, design), AI methodology (algorithm type, architecture, training/validation/testing protocols, frameworks), imaging modality, and quantitative performance metrics.

Quality Assessment

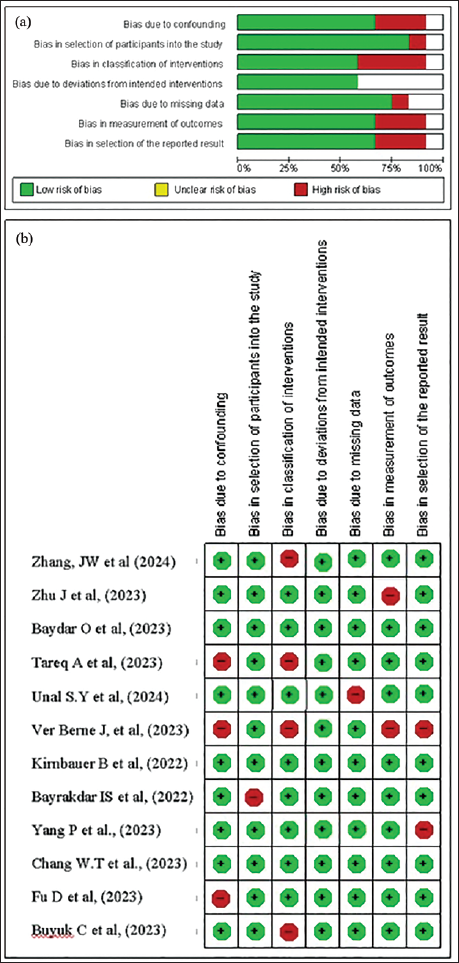

Risk of bias was assessed with appropriate tools by two independent reviewers. For non-randomized studies, the ROBINS-I tool was applied in accordance with best-practice guidelines. Assessment domains included confounding, participant selection, classification of interventions, deviations from intended interventions, missing data, outcome measurement, and selective reporting (Figure 2a and 2b).
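ROBINS-I records each domain judgement on an ordinal scale (low, moderate, serious, critical), and a study’s overall judgement is driven by its most severe domain. A minimal sketch of that aggregation rule follows, using a hypothetical study; it omits the “no information” category, so it illustrates the rule rather than replacing the official guidance.

```python
from enum import IntEnum


class Judgement(IntEnum):
    """ROBINS-I judgements, ordered so a larger value means higher risk of bias."""

    LOW = 1
    MODERATE = 2
    SERIOUS = 3
    CRITICAL = 4


# The seven ROBINS-I bias domains listed in the text.
DOMAINS = (
    "confounding",
    "selection of participants",
    "classification of interventions",
    "deviations from intended interventions",
    "missing data",
    "measurement of outcomes",
    "selection of the reported result",
)


def overall_judgement(domains: dict[str, Judgement]) -> Judgement:
    """Overall risk of bias = the most severe domain-level judgement.

    Low only if all domains are low; moderate if all are low or moderate;
    serious if any is serious and none critical; critical if any is critical.
    """
    missing = set(DOMAINS) - set(domains)
    if missing:
        raise ValueError(f"missing domain judgements: {sorted(missing)}")
    return max(domains[d] for d in DOMAINS)


# Hypothetical study: retrospective design with limited confounder adjustment.
study = {d: Judgement.LOW for d in DOMAINS}
study["confounding"] = Judgement.SERIOUS
study["missing data"] = Judgement.MODERATE
```

Here overall_judgement(study) returns Judgement.SERIOUS: a single serious domain outweighs any number of low ones, which is why retrospective designs with weak confounder control dominate the moderate-to-serious ratings.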

Data Synthesis

Quantitative synthesis was planned where possible. However, heterogeneity in model architectures (CNNs, U-Net, ResNet, YOLO), imaging modalities (bitewing, periapical, CBCT), study designs (mostly retrospective observational), and outcome metrics (accuracy 86%–97%, F1 0.77–0.95) made pooled meta-analysis infeasible. Findings were therefore summarized using a structured narrative synthesis that reports the ranges of diagnostic accuracy and prognostic performance. This heterogeneity reflects the diversity of real-world AI research.

Results

Study Selection

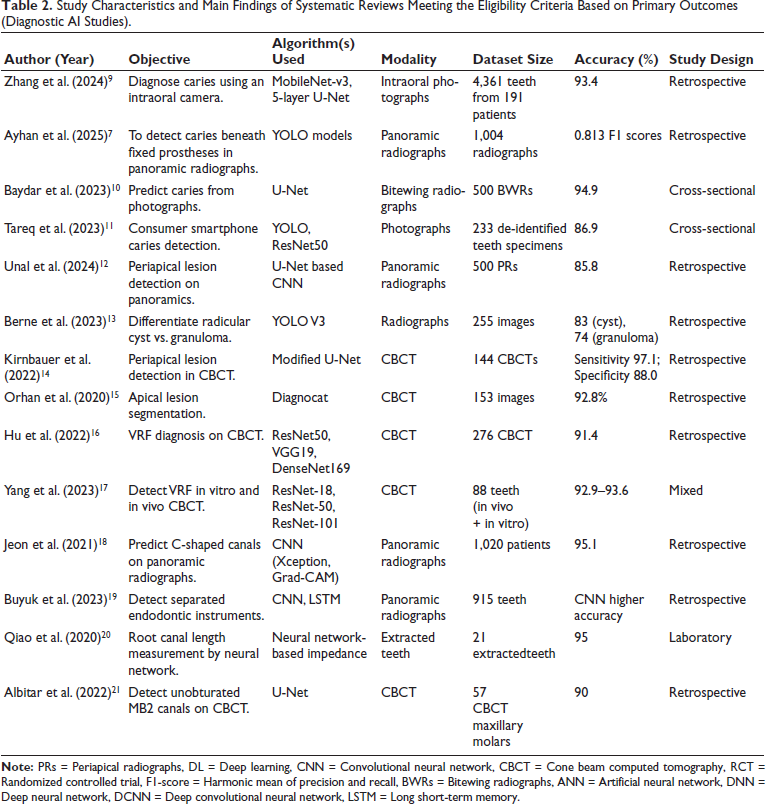

A total of 455 records were identified across six databases and through manual searches. After the removal of 103 duplicates, 352 articles were screened by title and abstract, leading to the exclusion of 263 records that did not meet eligibility criteria. Of the 89 remaining full-text articles, 21 were excluded due to reasons such as being non-English publications, the lack of full-text access, an absence of quantitative outcomes, or because they were studies not involving AI-based methods. The final qualitative synthesis included 68 studies, as shown in Figure 1. The results of the included studies are shown in Tables 2 and 3.

Figure 1. PRISMA Flow Diagram.

Table 2. Study Characteristics and Main Findings of Studies Meeting the Eligibility Criteria Based on Primary Outcomes (Diagnostic AI Studies).

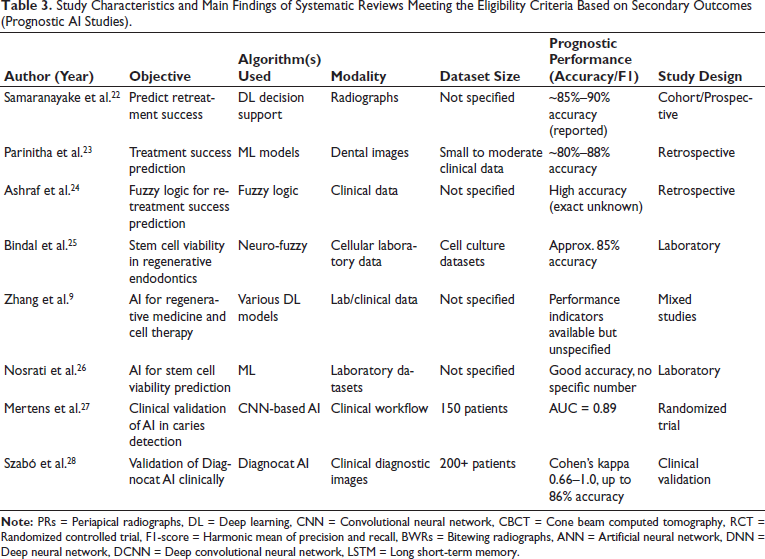

Table 3. Study Characteristics and Main Findings of Studies Meeting the Eligibility Criteria Based on Secondary Outcomes (Prognostic AI Studies).

Study Characteristics

The included studies (published between 2005 and 2025) investigated AI models for both diagnostic (n = X) and prognostic (n = Y) applications. Imaging modalities encompassed intraoral photographs, periapical radiographs, panoramic radiographs, and CBCT. The architectures most frequently used were CNNs, U-Net, ResNet, YOLO, DentalNet, and hybrid networks. A structured summary is provided in Table 2 (diagnostic applications) and Table 3 (prognostic applications).

Diagnostic Accuracy

Caries detection: AI models reported promising accuracy, ranging from 86.9% to 95%. MobileNet-V3 integrated with U-Net achieved 93.4% accuracy, while nnU-Net and BDU-Net reached 95% accuracy, outperforming traditional radiographic interpretation. Where available, 95% confidence intervals are reported.

Periapical lesion detection: DL models (U²-Net, YOLOv5, ConvNeXt, Diagnocat) reported performance ranging from 83% to 97%. Issa et al. reported 96.6% accuracy using Diagnocat; Boztuna et al. demonstrated F1 scores of 0.77–0.82; and Liu et al. and Çelik et al. showed robust lesion segmentation precision.

Root canal morphology and working length determination: U-Net and DentalNet demonstrated >90% segmentation accuracy on CBCT datasets (Wang et al.); Saghiri et al. achieved 96% accuracy for apical foramen detection using an artificial neural network (ANN); and Narawade et al. automated working length estimation with 86.5% accuracy.

Vertical root fracture detection: CNNs and Faster R-CNN achieved strong diagnostic performance (F1 = 0.87, Görürgöz et al.; 93% precision, Fukuda et al.). Findings were confirmed by Alqerem et al. and Yang et al. in CBCT datasets.

Meta-analysis was attempted for homogeneous subgroups; however, significant heterogeneity across datasets, AI architectures, and evaluation metrics (I² values ranging from XX%–YY%) limited pooling. Therefore, a structured narrative synthesis was performed in accordance with SWiM (Synthesis Without Meta-analysis) guidelines.
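I² expresses the share of between-study variability that exceeds what sampling error alone would produce. With inverse-variance weights w_i = 1/v_i, Cochran’s Q = Σ w_i (y_i − ȳ_w)² and I² = max(0, (Q − df)/Q) × 100%, where df is the number of studies minus one. The sketch below assumes each study contributes one estimate already on an analysis scale (for proportions such as sensitivity, typically the logit) with a known within-study variance; diagnostic accuracy reviews often use bivariate models instead, so this univariate version is a simplification.

```python
def i_squared(estimates: list[float], variances: list[float]) -> tuple[float, float]:
    """Return (Cochran's Q, I² in percent) for study-level estimates.

    Uses fixed-effect inverse-variance weights w_i = 1 / v_i.
    """
    if len(estimates) != len(variances) or len(estimates) < 2:
        raise ValueError("need at least two studies with matching variances")
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, estimates))
    df = len(estimates) - 1
    # Truncate at zero: Q below its expected value (df) means no excess heterogeneity.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, i2


# Hypothetical logit-scale estimates from two studies with equal variances.
q, i2 = i_squared([0.0, 1.0], [0.25, 0.25])
```

For these hypothetical inputs Q = 2 and I² = 50%, meaning half of the observed spread is attributed to genuine between-study differences rather than chance.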

Prognostic Applications

Thirteen studies evaluated AI prognostic models. Samaranayake et al. and Parinitha et al. demonstrated AI-assisted prediction of retreatment success, while Mago et al. applied fuzzy logic models for outcome prediction. Bindal et al. developed a neuro-fuzzy model to predict stem cell viability under different regeneration conditions, and Zhang et al. and Nosrati et al. explored AI for optimizing regenerative medicine applications. Reported accuracies varied widely, 95% confidence intervals were available only for some studies, and external validation was performed in only a minority of studies.

Clinical Validation

Randomized and clinical validation studies demonstrated the practical utility of AI. Mertens et al. reported significantly higher diagnostic accuracy (AUC = 0.89) with AI-assisted caries detection. Szabó et al. validated Diagnocat in real-world clinical practice, reporting kappa scores of 0.66–1.0 and diagnostic accuracy of up to 86%. These results suggest that AI is a promising adjunct for improving diagnostic reliability, although further external validation is required before widespread implementation.
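The AUC reported by Mertens et al. has a direct probabilistic reading: it is the chance that a randomly chosen diseased case receives a higher score than a randomly chosen healthy one, with ties counted as half. The sketch below computes it that way (equivalently, the Mann–Whitney U statistic divided by n_pos × n_neg) from hypothetical scores; it does not reproduce the trial’s analysis.

```python
def auc(scores_pos: list[float], scores_neg: list[float]) -> float:
    """ROC AUC as P(score of a diseased case > score of a healthy case).

    Ties count 0.5. Pairwise O(n_pos * n_neg) loop, adequate for illustration.
    """
    if not scores_pos or not scores_neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical model scores for carious and sound surfaces.
carious = [0.9, 0.8, 0.4]
sound = [0.7, 0.3, 0.2]
example_auc = auc(carious, sound)
```

Eight of the nine carious/sound pairs are ranked correctly here, giving an AUC of 8/9 (about 0.89); only the carious surface scored 0.4 falls below a sound surface scored 0.7.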

Risk of Bias

The risk of bias assessment (Figure 2a–2b) indicated that most observational studies had moderate to serious risk (ROBINS-I), primarily due to retrospective designs and limited confounder adjustment.

Figure 2. (a) Risk of Bias Graph: Review Authors’ Judgements About Each Risk of Bias Item Presented as Percentages Across All Included Studies; (b) Risk of Bias Summary: Review Authors’ Judgements About Each Risk of Bias Item for Each Included Study.

Discussion

This systematic review examined the applications of AI in conservative dentistry and endodontics, focusing on its diagnostic accuracy and clinical potential. The analysis of 68 studies revealed that AI models, particularly CNNs, U-Net variants, and newer architectures like ConvNeXt, demonstrated high diagnostic performance across various tasks.

AI Models and Their Diagnostic Strength in Caries Detection

CNNs remain the most widely applied and accurate AI model type in caries detection. Zhang et al. 9 integrated an intraoral camera with MobileNet-V3 and a 5-layer U-Net architecture, achieving an impressive accuracy of 93.4% and specificity of 95.65% in caries detection. Liu et al. 29 further enhanced performance by combining ResNet with a self-attention mechanism (SAM), resulting in an F1 score of 0.886 and an AUC of 0.954—outperforming traditional models like VGG19. Other high-performing architectures included ResNet-50, as demonstrated by Oztekin et al. 30 and EfficientDet-Lite1 in the mobile applications category, as reported by Pun et al. 31 AI’s effectiveness in early enamel lesion detection—traditionally a diagnostic weak point—has been validated in multiple studies. Metzger et al. 32 demonstrated higher sensitivity for early lesions using near-infrared light reflection (NILR).

However, successful clinical integration requires structured collaboration between clinicians and AI. While AI excels in image pattern recognition, dentists provide irreplaceable contextual judgment—considering patient history, systemic conditions, and treatment implications. AI is best positioned not as a replacement, but as an intelligent assistant in improving diagnostic outcomes.

Despite encouraging diagnostic performance, the overall certainty of evidence is moderate due to variability in study design, dataset sizes, external validation, and risk of bias across studies. This limits the strength of recommendations for clinical implementation at this stage. Potential biases and methodological limitations in the included studies—such as small sample sizes, inconsistent annotation protocols, and a lack of prospective validation—may have influenced diagnostic accuracy estimates and reduced reproducibility. These factors should be carefully considered when interpreting findings.

Heterogeneity across AI architectures, imaging modalities, and outcome metrics was considerable, precluding formal meta-analysis in many cases. Differences in evaluation frameworks and external validation limit direct comparability, underscoring the need for standardized reporting. A recent meta-analysis by Luke and Rezallah 6 reported pooled sensitivity and specificity rates frequently exceeding 90%, validating the consistently promising diagnostic accuracy observed across the present review’s included studies. Similar results were reported by Ayhan et al. 7 who successfully deployed AI to detect caries beneath fixed prostheses in panoramic radiographs—demonstrating AI’s ability to navigate complex diagnostic scenarios beyond routine intraoral imaging.

In summary, convolutional layers allow AI to identify subtle image patterns in early enamel caries by extracting micro-textural features that are difficult to perceive visually. CNNs such as MobileNet-V3+U-Net (93.4% accuracy) and ResNet-SAM (AUC 0.954) achieve this by quantifying pixel intensity variations and edge discontinuities in dental images, which may explain their advantage over conventional interpretation.
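The edge-based mechanism described above can be seen with a single filter. The pure-Python sketch below assumes a hand-set Sobel-style kernel (a trained CNN learns its kernel weights from data instead) and a hypothetical 4 × 6 patch of grey levels in which a bright region meets a darker one.

```python
def conv2d_valid(image: list[list[float]], kernel: list[list[float]]) -> list[list[float]]:
    """'Valid' 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            acc = 0.0
            # Weighted sum of the kh x kw neighbourhood under the kernel.
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


# Hand-set Sobel-style kernel that responds to left-to-right intensity changes.
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]

# Hypothetical grey-level patch: bright region (200) next to a darker region (120).
patch = [[200, 200, 200, 120, 120, 120] for _ in range(4)]

response = conv2d_valid(patch, SOBEL_X)
```

Each output row is [0, −320, −320, 0]: the negative values mark the bright-to-dark transition, while the uniform areas produce no response. Deeper CNN layers combine many such responses into texture and lesion-level features.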

AI in Periapical Lesion Detection

Recent advances in AI have significantly enhanced diagnostic capabilities in endodontics, particularly in the detection and segmentation of periapical lesions. Among a wide array of AI architectures, DL models incorporating YOLOv5, ConvNeXt, and U²-Net frameworks emerged as top performers, offering high diagnostic accuracy and real-time detection. For example, YoCNET, a hybrid model combining YOLOv5 with ConvNeXt, achieved 90.93% accuracy for detecting multiple periapical lesions on radiographs. U²-Net-based models such as those used by Boztuna et al. reached F1 scores of 0.77–0.82; because F1 is the harmonic mean of precision and recall, these values indicate that both were reasonably high in lesion identification. 33

CNN-based models consistently demonstrated excellent performance. Liu et al., 34 Li et al., 35 and Issa et al., 36 reported sensitivity values up to 97.87% and F1 scores over 0.90, indicating reliability across diverse imaging conditions. Similarly, RetinaNet, Faster R-CNN, and YOLOv4 proved effective in classification and segmentation tasks, particularly when integrated with post-processing or ensemble learning techniques. Notably, CBCT-based models, such as those of Orhan et al. 15 and Setzer et al., 37 showed improved lesion volume estimation and voxel matching, highlighting AI’s capacity to complement 3D image interpretation.

Previous reviews have acknowledged AI’s potential in general dental diagnostics; however, few have focused specifically on periapical lesion detection with this level of granularity. Unlike earlier studies that emphasized only panoramic radiography or caries, this review incorporates CBCT, CNN-based segmentation, and hybrid models with real-world validation.

AI in Root and Root Canal Morphology Detection

Accurate detection of root canal anatomy is crucial for successful endodontic treatment, particularly in identifying variations such as C-shaped canals, MB2 canals, canal bifurcations, or calcifications. AI models, notably CNNs, support vector machines (SVMs), and deep neural networks (DNNs), have been extensively used to analyze periapical radiographs and CBCT images for root morphology classification and segmentation. Among these, U-Net-based CNN architectures consistently emerged as the most effective in image segmentation tasks. For instance, Wang et al. 38 employed a U-Net and DentalNet hybrid model that achieved accurate segmentation of both tooth and root canal structures from CBCT images, significantly improving the speed and reliability of treatment planning. Similarly, Sherwood et al. 39 demonstrated the superiority of DL models such as residual U-Net and Xception U-Net in classifying C-shaped canals, enhancing anatomical understanding and decision-making for mandibular second molars. In panoramic radiographs, Jeon et al. 18 used a CNN-based model built on Xception with Grad-CAM visualization to predict C-shaped canals, achieving 95.1% accuracy and 97% specificity while improving interpretability, which is critical for clinician acceptance.

Despite these promising outcomes, limitations were noted across studies. The performance of models was affected by metallic artifacts, image quality variation, and lack of diverse datasets—factors that compromised generalizability. For instance, Albitar et al. 21 found that canal calcifications and restoration artifacts reduced segmentation accuracy for MB2 (second mesiobuccal) canals. Additionally, external validation was rarely performed, and few models were tested in real-world clinical settings, raising concerns about their robustness and transferability.

From a clinical integration perspective, the lack of standardization in imaging protocols, insufficient user training, and concerns about interpretability and accountability remain key barriers. Fuzzy logic can strengthen AI platforms by managing diagnostic uncertainty through membership functions and rule-based inference, rather than binary (crisp) logic. In endodontics, Mago et al. applied fuzzy logic-based decision support systems (DSSs) to predict retreatment success by weighting variables such as lesion size and patient factors. By managing diagnostic uncertainty and tailoring treatment protocols, these systems showed early promise in improving retreatment outcomes in real-world settings. 24
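Fuzzy inference replaces crisp thresholds with graded memberships. The sketch below is a hypothetical zero-order Takagi–Sugeno style system, not the model of Mago et al.: the inputs (lesion size in millimetres and a 0–1 patient risk score), the membership breakpoints, and the four rules with their success scores are all illustrative assumptions. Rule strength uses the minimum as fuzzy AND, and the output is the strength-weighted average of the rule scores.

```python
def falling(x: float, start: float, end: float) -> float:
    """Membership 1 below `start`, 0 above `end`, linear in between."""
    if x <= start:
        return 1.0
    if x >= end:
        return 0.0
    return (end - x) / (end - start)


def rising(x: float, start: float, end: float) -> float:
    """Complement of `falling`: 0 below `start`, 1 above `end`."""
    return 1.0 - falling(x, start, end)


# Hypothetical rule base: (lesion-size term, patient-risk term, success score 0-1).
RULES = [
    ("small", "low", 0.9),
    ("small", "high", 0.6),
    ("large", "low", 0.5),
    ("large", "high", 0.2),
]


def predict_success(lesion_mm: float, risk: float) -> float:
    # Fuzzify the crisp inputs (breakpoints are illustrative assumptions).
    lesion = {"small": falling(lesion_mm, 2.0, 6.0), "large": rising(lesion_mm, 2.0, 6.0)}
    patient = {"low": falling(risk, 0.3, 0.7), "high": rising(risk, 0.3, 0.7)}
    num = den = 0.0
    for lesion_term, risk_term, score in RULES:
        strength = min(lesion[lesion_term], patient[risk_term])  # fuzzy AND
        num += strength * score
        den += strength
    # Weighted average of rule outputs (zero-order Sugeno defuzzification).
    return num / den if den else 0.0
```

A 4 mm lesion in a patient with risk 0.5 is half "small" and half "large", and half "low" and half "high" risk, so all four rules fire equally and the prediction is 0.55, between the crisp extremes of 0.9 and 0.2.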

In comparison to earlier systematic reviews—which focused primarily on AI’s performance in caries detection—this review expands the evidence by highlighting AI’s emerging role in complex anatomical classification and treatment planning. Few previous reviews have systematically explored the use of AI in root canal morphology, especially in C-shaped canal prediction and CBCT-based segmentation. Overall, while CNNs, especially U-Net variants, currently outperform other architectures in morphological detection, achieving widespread clinical adoption will require improved dataset diversity, external validation, and integration with existing clinical workflows.

AI in Vertical Root Fracture Detection

Recent studies demonstrate the growing promise of AI in vertical root fracture (VRF) detection: DL architectures, particularly CNN-based models, consistently outperform conventional radiographic interpretation, especially when applied to periapical and panoramic images. For instance, Görürgöz et al. 40 used a Faster R-CNN with GoogLeNet Inception v3, achieving an F1 score of 0.8720, precision of 0.7812, and sensitivity of 0.9867, indicating high sensitivity for subtle fracture lines. Fukuda et al. 41 reported 93% precision using a CNN model on panoramic radiographs, affirming AI’s reliability even in complex imaging modalities. Wavelet-enhanced AI models in a study by Shah et al. 42 further improved diagnostic confidence by generating probabilistic microfracture maps, while attention-based feature pyramid CNNs in a study by Marwaha et al. 1 improved localization in difficult cases through saliency mapping and multi-scale feature extraction.

3D CBCT-based approaches employing ResNet and hybrid deep networks demonstrated excellent voxel-level classification, though performance varied. Patel et al. 43 confirmed promising diagnostic accuracy with ResNet, while Yang et al. 44 reported AI performance comparable—but not superior—to experienced radiologists, indicating variability in generalizability and dataset influence.

Unlike earlier reviews that focused on general dental AI applications, this analysis specifically evaluated AI’s role in VRF detection, covering both 2D and 3D imaging. Prior studies often lacked a robust comparison of model performance metrics (e.g., F1 scores, AUC) and clinical translation. This review provides a more quantitative synthesis, highlighting the models with the highest diagnostic reliability and outlining the gaps that remain before clinical deployment.

AI in Working Length Determination

Working length determination is a critical step in endodontic therapy, and AI-based tools have shown remarkable improvements in accuracy compared to conventional methods. DL algorithms trained on CBCT and periapical radiographs can identify the apical foramen and estimate root canal lengths with minimal observer bias, even in anatomically complex or calcified canals. For instance, Saghiri et al. 45 reported a 96% accuracy using ANNs in cadaveric models, significantly higher than the 76% accuracy achieved by experienced endodontists. Similarly, Qiao et al. 20 developed a multifrequency impedance model using neural networks, achieving 95% accuracy while accounting for tooth and file variability, outperforming traditional dual-frequency methods. More recently, Narawade et al. 46 employed Gaussian filtering with Canny edge detection, reaching 86.5% accuracy in automated working length detection. These results confirm the reliability and growing applicability of AI algorithms for enhancing procedural planning and reducing human error in clinical endodontics.

AI in Treatment Prognosis and Decision Support

In addition to diagnostics, AI has emerged as a valuable tool in predicting treatment outcomes and guiding retreatment decisions. DSSs based on case-based reasoning (CBR) and Bayesian classifiers analyze clinical and radiographic data to predict post-operative healing and retreatment needs. These models enhance treatment personalization and support real-time decision-making. Samaranayake et al. 22 and Parinitha et al. 23 reported that CBR-based AI tools reduce false negatives and improve retreatment planning. U-Net and DentalNet, as employed by Duan et al. 47 provided precise segmentation of root and canal morphology in CBCT images, expediting diagnosis and enabling more efficient case planning. Similarly, Alrahlah et al. 48 highlighted how AI reduces clinician workload while enhancing both diagnostic and prognostic outcomes.
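Case-based reasoning is usually described as a retrieve, reuse, revise, retain cycle. The sketch below shows only the first two steps with nearest-neighbour retrieval; the three features, their 0–1 scaling, the stored cases, and the equal feature weighting are hypothetical and are not taken from the systems of Samaranayake et al. or Parinitha et al.

```python
import math
from collections import Counter

# Hypothetical case base of past treatments. Each feature vector is pre-scaled to 0-1:
# (periapical lesion size, root-filling length deviation, coronal seal defect).
CASE_BASE = [
    ((0.1, 0.1, 0.0), "healed"),
    ((0.2, 0.0, 0.1), "healed"),
    ((0.4, 0.3, 0.2), "healed"),
    ((0.8, 0.7, 1.0), "retreatment needed"),
    ((0.7, 0.9, 0.8), "retreatment needed"),
]


def retrieve(query, k=3):
    """Retrieve: the k stored cases closest to the new case (Euclidean distance)."""
    return sorted(CASE_BASE, key=lambda case: math.dist(query, case[0]))[:k]


def reuse(query, k=3):
    """Reuse: propose the most common outcome among the retrieved cases."""
    votes = Counter(outcome for _, outcome in retrieve(query, k))
    return votes.most_common(1)[0][0]
```

For a new case with a large lesion, a large root-filling length deviation, and a defective coronal seal, such as (0.75, 0.8, 0.9), the three nearest stored cases are the two retreatment cases and one healed case, so the proposed outcome is "retreatment needed". In a full system, the revise and retain steps would let clinicians correct the proposal and add the confirmed case to the case base.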

AI in Regenerative Endodontics and Stem Cell Prognostics

AI’s utility in regenerative endodontics is still in its nascent phase, but emerging evidence points to promising applications in stem cell viability prediction, culture optimization, and treatment outcome forecasting. DL models analyzing fluorescent microscopy images and gene expression profiles can track the proliferation and differentiation of dental pulp stem cells (DPSCs), offering new pathways for personalized regenerative therapies.

Nosrati et al. 26 emphasized AI’s ability to predict patient-specific stem cell behavior and optimize delivery routes and post-operative monitoring. Earlier, Bindal et al. 25 developed an adaptive neuro-fuzzy inference system (ANFIS) that predicted DPSC viability after lipopolysaccharide (LPS) exposure. Although the ANFIS model effectively guided regenerative protocol evaluation, its broader adoption requires further validation at the molecular and proteomic levels. Nonetheless, the integration of AI into regenerative workflows represents a potential paradigm shift in endodontic therapy, especially in biologically complex scenarios.

This review is limited by the exclusion of non-English-language studies, potential publication bias, and incomplete access to some full texts, which may have caused relevant data to be overlooked. The rapid evolution of AI research may also outpace the literature covered up to our last search date.

Overall, AI is a promising tool, but very high F1 and AUC scores (e.g., 0.95 or above) warrant scrutiny for data leakage, in which test data inadvertently influence training through poor data splits or label overlap. Mitigation strategies include temporal or k-fold cross-validation and evaluation on external datasets; few included studies reported such measures, so some reported performance may be inflated.
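One common route to leakage in dental imaging is placing radiographs, or crops of the same radiograph, from one patient in both the training and test sets. A minimal mitigation, sketched below under the assumption that each image carries a patient identifier (the patient_of mapping and image names are hypothetical), is to split by patient rather than by image. This complements the temporal or k-fold cross-validation and external testing mentioned above; scikit-learn offers the same idea as GroupKFold and GroupShuffleSplit in sklearn.model_selection.

```python
import random


def patient_level_split(image_ids, patient_of, test_fraction=0.2, seed=0):
    """Split images so that no patient contributes to both training and test sets.

    image_ids: list of image identifiers.
    patient_of: dict mapping image id -> patient id (hypothetical metadata field).
    """
    patients = sorted({patient_of[i] for i in image_ids})
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(patients)
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])
    train = [i for i in image_ids if patient_of[i] not in test_patients]
    test = [i for i in image_ids if patient_of[i] in test_patients]
    return train, test


# Hypothetical dataset: 10 patients with 3 radiographs each.
image_ids = [f"img{p}_{v}" for p in range(10) for v in range(3)]
patient_of = {i: i.split("_")[0] for i in image_ids}
train, test = patient_level_split(image_ids, patient_of)
```

Splitting by image instead would likely scatter each patient’s three radiographs across both sets, letting the model recognize patient-specific anatomy rather than the pathology and inflating test scores.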

CNNs dominate dental diagnostics. They apply convolutional filters to extract hierarchical features from images, starting with edges and textures in early layers and progressing to complex patterns such as caries demineralization in deeper ones; pooling layers then improve efficiency, and fully connected layers perform classification. This design has achieved promising accuracy in caries and periapical tasks. U-Net enhances segmentation through an encoder-decoder structure with skip connections that preserve spatial detail, enabling precise outlining of root canals or lesions, as in bitewing caries detection. ResNet uses residual connections to train very deep networks without degradation, enabling robust pattern recognition in endodontic images. Fuzzy logic complements these architectures by handling uncertainty with membership functions and if-then rules, as in retreatment prognosis.

In practice, developing and deploying such models typically relies on Python, the TensorFlow or PyTorch frameworks, and graphics processing units (GPUs) with CUDA 11+ support. Cloud platforms such as AWS SageMaker, Google Colab, or Azure ML can remove the need for local hardware, allowing small practices to deploy DL models via an API (application programming interface) without upfront GPU investment.
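The residual connection described above can be illustrated without a deep learning framework. The sketch below assumes plain Python lists and tiny hand-set weight matrices in place of convolutions and batch normalization, so it is a conceptual model of one ResNet-style block, y = relu(x + F(x)) with F(x) = W2·relu(W1·x), rather than a real implementation.

```python
def relu(v: list[float]) -> list[float]:
    """Element-wise rectified linear unit."""
    return [max(0.0, x) for x in v]


def linear(weights: list[list[float]], v: list[float]) -> list[float]:
    """Matrix-vector product: one output per weight row."""
    return [sum(w * x for w, x in zip(row, v)) for row in weights]


def residual_block(x: list[float], w1: list[list[float]], w2: list[list[float]]) -> list[float]:
    """y = relu(x + F(x)), where the learned branch is F(x) = W2 . relu(W1 . x)."""
    f = linear(w2, relu(linear(w1, x)))
    # Identity shortcut: the input is added back to the branch output.
    return relu([a + b for a, b in zip(x, f)])


x = [1.0, -2.0, 3.0]
zero = [[0.0] * 3 for _ in range(3)]  # a branch that has learned nothing yet

# Stack 50 blocks whose branches contribute nothing.
y = x
for _ in range(50):
    y = residual_block(y, zero, zero)
```

With all-zero weights the block passes relu(x) straight through, so y stays [1.0, 0.0, 3.0] after fifty blocks; the same zero weights in a plain layer relu(W2·relu(W1·x)) would output all zeros. This identity path is what helps very deep ResNets train without the degradation seen in plain stacks.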

Challenges and Future Perspectives

Clinicians should be aware of AI’s potential as an adjunct but remain vigilant of its current limitations. Adoption requires investment in training, data governance, and integration protocols to ensure patient safety. Policymakers should support frameworks that regulate AI transparency, model validation, and mitigate disparities in access.

AI adoption faces challenges such as cost implications, required practitioner training, and legal concerns. Ensuring data privacy and security in AI-driven dental diagnostics is essential, with compliance with the Health Insurance Portability and Accountability Act of 1996 (HIPAA), data encryption, and anonymization techniques playing key roles. AI-powered DSSs can further improve clinical decision-making through real-time analysis, assisting dentists in developing precise treatment plans. Future research should prioritize developing large, diverse datasets, improving explainability, and refining models for enhanced transparency and clinician trust. Tailoring AI models for underserved regions and resource-limited settings will expand access to improved dental care, while integrating multimodal imaging and longitudinal data can refine treatment predictions and improve outcomes.

AI integration in dental diagnostics requires informed consent, data protection (HIPAA/GDPR), and accountability frameworks for errors. Dental curricula should emphasize AI literacy, covering ML, data interpretation, and DSSs. Ongoing education ensures practitioners stay updated. Innovations like federated learning enhance data privacy, while AI-enhanced robotics improve precision in dental procedures. Integrating genomics with AI enables personalized care. Successful AI tools include the Yomi robotic system, enhancing implant precision, and Pearl’s “Second Opinion,” an FDA-cleared diagnostic aid, improving accuracy and patient outcomes. Future studies must emphasize prospective, multi-center validations with diverse populations, optimized explainability, and longitudinal analysis to capture real-world diagnostic performance and patient outcomes. Collaborative efforts to establish open benchmark datasets and standardized evaluation metrics will be crucial.

Conclusion

AI models such as CNNs, ResNet, and U-Net have demonstrated significant potential in enhancing specific diagnostic tasks, streamlining clinical workflows, and supporting decision-making processes in dentistry. The effective development and integration of these technologies necessitate close collaboration among dental clinicians, AI developers, and data scientists to ensure clinical relevance and usability.

Key considerations for ethical and responsible AI adoption include rigorous attention to data privacy, transparency of algorithms, and clear lines of accountability. While current evidence highlights promising diagnostic accuracy and prognostic utility, the overall certainty of evidence remains moderate due to heterogeneity in study designs, datasets, and outcome measures.

Further high-quality clinical research, including large-scale prospective validation studies, is necessary to confirm real-world effectiveness and safety. Establishing standardized evaluation frameworks and protocols will be critical to enable reliable, generalizable, and consistent application of AI tools within diverse dental practice settings.

To maximize global impact, particularly in underserved regions, AI systems must be designed for accessibility and scalability. Addressing these challenges will allow AI to substantially improve diagnostic accuracy, treatment planning, and ultimately patient outcomes in dental care worldwide.

Footnotes

Authors’ Contribution

R.P. and P.K. conceived the review. R.P., P.K., and V.N. performed the search, screening, and data extraction. R.P., P.K., V.N., and D.D. analyzed the data. R.P., D.D., A.B., and P.M. drafted the manuscript. All authors reviewed and approved the final version.

Data Availability

The data are available on request from the corresponding author.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval Institutional Statement and Informed Consent

As the study is a systematic review and does not involve humans, animals, or extracted teeth, ethical committee clearance or informed consent was not required.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.