Abstract

Researchers and advocates recognize that adult day services (ADS) play a key role in delivering person-centered care to individuals living with Alzheimer’s Disease and related dementias (ADRD). However, few ADS centers are routinely measuring person-centered outcomes, thereby limiting our ability to understand the impact of ADS on these key indicators of well-being. Guided by Kitwood’s framework of person-centered dementia care, this study aimed to identify valid, reliable, and practical person-centered outcome measures that reflect ADRD quality-of-life domains. Twenty-two ADS practitioners and researchers (N = 22) participated in a two-round e-Delphi review to evaluate 10 potential outcome measures across four person-centered domains: meaning and purpose; engagement; social networks; and sense of belonging. Based upon scores on select characteristics in Round 1 of the e-Delphi review (e.g., ease of administration, relevance to population), consensus was reached in Round 2 (66.67% supermajority) on the following measures: the PROMIS Meaning and Purpose in Life Scale (76%); the Friendship Scale (76%); and the General Belongingness Scale (67%). Panelists could not reach consensus on the Life Engagement Test (52%) and the Engagement in Meaningful Activities Scale (48%), so both were retained for future evaluation. The scales identified in this e-Delphi review will now be incorporated into real-world data collection beta-testing to evaluate feasibility, practicality and impact of ADS on these person-centered outcomes.

What This Paper Adds

Identifies person-centered outcome measures for Alzheimer’s Disease and related dementias (ADRD) that are both valid and practical for adult day services (ADS).

Describes ADS practitioners’ and researchers’ perspectives on the relevance and value of using specific quality-of-life outcome measures (e.g., engagement, belonging, meaning, and social connectedness) in ADS.

Emphasizes barriers to implementation (e.g., staff training, time constraints, and challenges in later disease stages) that must be addressed to effectively integrate person-centered outcomes in ADS.

Application of Study Findings

Practice: Provides ADS practitioners with vetted tools to guide person-centered care planning to enhance quality-of-life outcomes for people living with ADRD.

Policy: Underscores the need for regulatory and funding frameworks that support the systematic collection and use of person-centered outcomes in ADS.

Research: Establishes a foundation for studies aimed at testing and refining the use of person-centered measures in real-world ADS settings.

Introduction

Adult day services (ADS) are community-based programs that meet the health, nutritional, social, and daily-living needs of 250,000 adults with cognitive or functional impairment each day in the United States while offering respite and support to family caregivers (Fields et al., 2014). ADS clients receive supervised activities, meals, and opportunities for social and cognitive engagement that can benefit well-being (Harris-Kojetin et al., 2016). Roughly a quarter of U.S. ADS participants are persons living with dementia (PLWD; Singh et al., 2024), a population that is expected to triple by 2050 (Nichols et al., 2022). Despite the growing need for ADS, particularly to support the needs of PLWD, lack of uniform policy regulation around ADS has created variability in services across sites and a dearth of regulatory data to measure their impact (Sadarangani, Anderson, Vora, et al., 2022; Sadarangani, Zagorski, et al., 2021; Sadarangani, Zhong, et al., 2021).

A growing body of work at the national level has recognized the untapped potential of ADS for systematic outcomes data collection to improve dementia care (Anderson et al., 2020; Jarrott & Ogletree, 2019). In addition, outcomes recently adopted by the National Adult Day Services Association (NADSA), which focus on function, health utilization, and cognition, were foundational in standardizing data collection in ADS, yet they are largely problem-focused measures (Anderson et al., 2020). While these align with payer priorities and target deficit management, they may not reflect the values of PLWD and their caregivers (Fazio et al., 2018; Sadarangani, Gaugler, et al., 2022). In an effort to improve standards of health and social care for PLWD, our study sought to identify person-centered outcome measures that are practically feasible to administer, relevant, and valuable for assessing quality-of-life domains among PLWD in ADS.

In a prior qualitative study (Scher et al., 2025), we conducted rigorous focus groups with ethnically diverse adult day center participants living with dementia to elicit what made them want to attend each day and how participation influenced their quality of life. These discussions identified key domains, such as engagement, belonging, and meaning, that mattered most to participants and thus informed the conceptual foundation for this work. We subsequently aligned those participant-defined domains with validated outcome measures (Mast et al., 2021) and then sought to determine which of these measures were feasible for collection in real-world adult day center settings (see Figure 1 for the sequential phases of the research project in three aims).

Figure 1. Sequential phases of the research project in three aims.

To that end, in the current study, we surveyed staff in ADS centers using the e-Delphi (i.e., online Delphi) method to assess the pragmatism and value of these measures, specifically whether they were practical to collect and useful for program improvement (Aim 2 in Figure 1). The decision to anchor this study in the perspectives of ADS providers was guided by the conceptual approach of person-centered dementia care (Kitwood, 1998), given that ADS providers are knowledgeable about the psychological needs of PLWD, such as comfort (e.g., trust that comes from others), inclusion (e.g., being involved in the lives of others), and identity (e.g., what makes a person unique), and are simultaneously attuned to the real-world challenges of data collection in resource-constrained settings. This lens motivated the decision to choose measurement instruments that align with the values, preferences, and strengths of PLWD and their care partners (Kitwood, 1988).

Accordingly, the underlying constructs reflect the perspectives of people living with dementia, whereas the feasibility assessment reflects the perspectives of providers who would be responsible for implementing the measures. By intentionally integrating both viewpoints, our approach ensures that the resulting set of outcome measures remains person-centered while also being realistic for routine use in community-based dementia care contexts.

Methods

The e-Delphi method was used to identify and evaluate person-centered outcome measures for PLWD in ADS. Developed almost 75 years ago, the Delphi approach uses panels of experts to anonymously weigh in on specific topics with the end goal of reaching a consensus (Linstone & Turoff, 1975). The Delphi method is an iterative process that often consists of multiple rounds of initial surveys, data analysis, refinement of questions/items, and follow-up surveys (Dalkey & Helmer, 1963). Researchers develop and distribute surveys to expert panels, the experts answer both closed and open-ended questions, and researchers analyze the data. If consensus is not reached, the researchers repeat this process with surveys that reflect the results of the previous round. The Delphi method has been used in a wide variety of disciplines, most commonly in the medical and healthcare fields (Khodyakov et al., 2023). Although the method is widely used, there is considerable variability in the approaches used to conduct Delphi panel studies, particularly with regard to data analysis and reaching consensus. In this study, the researchers relied upon guidance provided by the RAND Corporation, the original developer of the Delphi method (Khodyakov et al., 2023). This study used an e-Delphi approach, collecting data through an online survey platform (Qualtrics).

Participants (Delphi Panel Selection)

Delphi panels typically consist of experts on a certain topic or in a certain field. In this study, ADS staff, researchers, and policy advocates were identified as experts given their first-hand knowledge of the capabilities of participants and staff members in this setting. With the support of NADSA, the leading voice of the rapidly growing ADS industry and the national focal point for ADS providers (NADSA, n.d.), and the study team’s extensive national research networks, we purposively sampled expert stakeholders from six groups: (a) managers or administrative leaders at ADS sites, (b) clinical staff at ADS sites (i.e., registered nurses and social workers), (c) health and health services researchers with experience in outcome measures and quality indices, (d) researchers focused on ADS, (e) methodologists working in outcomes-related research and questionnaire development, and (f) policy advocates within organizations focused on home and community-based services. All recruitment took place over a 2-month period to enable greater participation and flexible advance scheduling. The study team sent a personalized letter describing the study to relevant stakeholders and followed up by e-mail and phone. The study team held weekly consultations with NADSA leadership during the recruitment period to gauge interest in the study and address challenges in identifying potential participants.

Selecting Person-Centered Outcome Measures

The study team identified a battery of potential outcome measures that aligned with the person-centered domains identified in the focus groups of the previous study (Scher et al., 2025). The following inclusion criteria were used in the initial identification of these measures: (1) reflective of the domains identified in the focus groups, (2) psychometrically sound and in common use, (3) in the public domain and without costs or fees, (4) written at a level that was appropriate for the target population, and (5) easy to administer within a brief time period (~5–7 min). To find measures that met these criteria, the study team relied on a review of existing person-centered outcome measures that have been tested in samples of people living with dementia (Mast et al., 2021). The researchers also conducted a review of recent literature and reached out to experts in the field to identify additional person-centered outcome measures that addressed relevant domains that were not already captured. This process yielded 10 measurement tools across four person-centered domains (meaning and purpose in life; engagement; social networks/friendships; and community, belonging, and inclusion), presented in Table 1. Reviewers evaluated the 10 outcome measures in the two-round Delphi review (for detailed descriptions of the outcome measures, see Supplemental Appendix 1; Cella et al., 2010; Eakman, 2012; Hagerty & Patusky, 1995; Hawthorne, 2006; Lubben et al., 2006; Malone et al., 2012; Ryff, 1989; Scheier et al., 2006; Steger et al., 2006; Stoner et al., 2018).

Table 1. Measurement Tools That Met the Inclusion Criteria Within Each Person-Centered Domain.

Materials and Procedure

All study procedures were approved by the NYU Institutional Review Board. Informed consent was obtained electronically, with participants providing their signature and date through the online survey platform prior to beginning the study. Participants received a $50 electronic Amazon gift card each time they completed a survey (i.e., $100 total for Rounds 1 and 2). Prior to administering the survey to the panel, the study team beta tested the survey with an ADS provider and implemented suggested changes before launching the final version.

The Delphi procedure consisted of two rounds of panelist responses collected through Qualtrics. Round 1 of the Delphi took place from January to March 2025 (the Round 1 survey can be found in Supplemental Appendix 1). Twenty-six potential Delphi panel members were invited to participate in Round 1. Of these, 22 participated, yielding an 84.6% participation rate for Round 1. The reviewers were provided with a rubric to evaluate the measures in terms of ease of use, relevance, and value using Likert-type scales. Sample questions included, “How easy would this tool be for your staff to administer?” and “How relevant or pertinent is the information collected in this tool to the lives of people in your center?” In addition, participants could respond to two open-ended questions on the perceived facilitators of and barriers to using the measures in ADS practice (see Supplemental Appendix 1). The study team provided reviewers with a copy of each instrument and supplemental information including mode of completion, response format, item scoring and interpretation, target population, time to administer, psychometrics, and strengths and limitations.

After Round 1 was complete, the study team aggregated feedback, narrowed down the outcome measures based on that feedback, and provided reviewers with a summary in Round 2. Round 2 took place from June to July 2025 (the Round 2 survey can be found in Supplemental Appendix 1). The 22 panel members who completed Round 1 were invited to participate in Round 2. Of these, 21 participated, yielding a 95.5% participation rate for Round 2. In Round 2, participants reviewed the top tools selected in each outcome domain, selected which tool they believed was the best choice (or neither option), and shared additional feedback, concerns, or suggestions. For both Rounds 1 and 2, reminders were sent periodically to encourage full participation.
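The participation rates reported for the two rounds follow directly from the invited and participating counts. As a minimal sketch (the function name `participation_rate` is a hypothetical helper, not part of the study's analysis code), the arithmetic can be checked as follows:

```python
def participation_rate(participated: int, invited: int) -> float:
    """Return the participation rate as a percentage, rounded to one decimal."""
    if invited <= 0:
        raise ValueError("invited must be a positive integer")
    return round(100 * participated / invited, 1)


# Counts as reported in the text: 22 of 26 in Round 1, 21 of 22 in Round 2.
round_1_rate = participation_rate(22, 26)
round_2_rate = participation_rate(21, 22)

print(f"Round 1: {round_1_rate}%")  # Round 1: 84.6%
print(f"Round 2: {round_2_rate}%")  # Round 2: 95.5%
```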

Data Analysis

Data analysis protocols were closely based upon the RAND guidelines (Khodyakov et al., 2023). First, survey data were entered into R (Version 4.4.3) and cleaned. Missing data on individual items were excluded (i.e., percentages were calculated based on the available sample size for each item). The research team defined reviewer consensus a priori as a supermajority (66.67%). Survey respondent and ADS characteristics were summarized using frequencies and percentages for categorical variables and means and standard deviations for continuous variables. Prior to data summarization for Round 1, survey responses were dichotomized (e.g., “Easy” vs. “Not Easy” for ease of administration), then summarized using frequencies and percentages. For Round 2, respondents’ instrument preferences across all measurement domains were summarized using frequencies and percentages. The process is summarized in Figure 2. Summary tables and figures were generated to visualize survey data across measurement domains and survey rounds. Finally, qualitative data from open-ended responses were analyzed using thematic analysis (Braun et al., 2019).
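To illustrate the Round 1 summarization described above, the following is a minimal sketch of dichotomizing Likert-type responses and computing a percentage over the available (non-missing) responses for an item. It is written in Python for illustration only; the study's analysis was run in R. The response labels, the `FAVORABLE` set, and the example data are assumptions made up for this sketch; the actual response options appear in the supplemental survey.

```python
from typing import Optional, Sequence

# Hypothetical labels treated as "Easy" after dichotomization (an assumption).
FAVORABLE = frozenset({"Very easy", "Easy"})


def percent_favorable(
    responses: Sequence[Optional[str]],
    favorable: frozenset = FAVORABLE,
) -> Optional[int]:
    """Dichotomize responses and return the favorable share as a whole percent.

    Missing responses (None) are dropped, so the denominator is the number of
    panelists who actually answered the item.
    """
    answered = [r for r in responses if r is not None]
    if not answered:
        return None  # no usable responses for this item
    n_favorable = sum(r in favorable for r in answered)
    return round(100 * n_favorable / len(answered))


# Made-up example: six panelists, one of whom skipped the item.
example_item = ["Easy", "Very easy", "Difficult", None, "Neutral", "Easy"]
print(percent_favorable(example_item))  # 3 of 5 answered favorably -> 60
```

Because the denominator varies by item, two items with the same number of favorable responses can yield different percentages when one has more missing data.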

Figure 2. Delphi survey selection progress.

Results

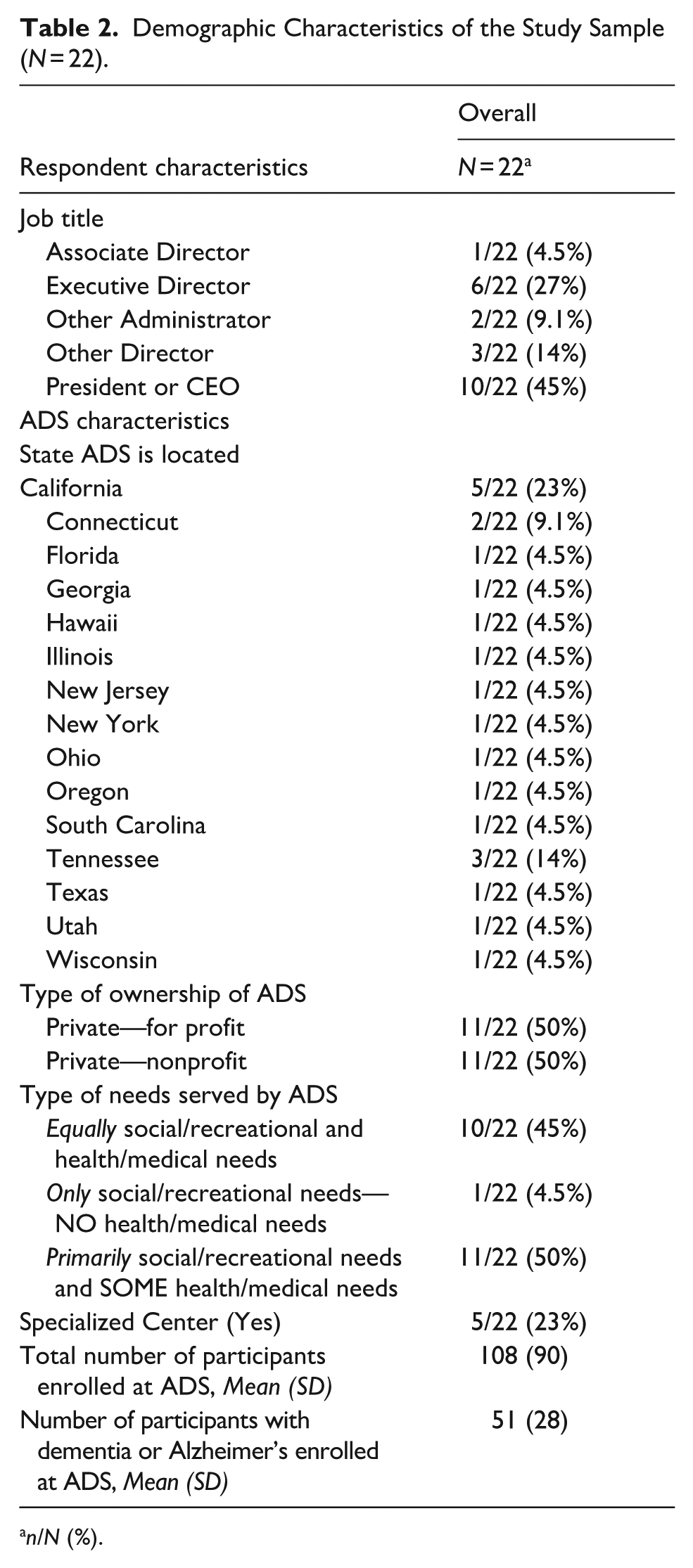

Characteristics of the study sample and the ADS with which respondents were affiliated are presented in Table 2. Respondents had a range of job titles including executive director (27%), other administrator (9.1%), and president or CEO (45%). The most common ADS locations were California (23%), Tennessee (14%), and Connecticut (9.1%). Half of ADS were private for-profit (50%), while the other half were private nonprofit (50%). In addition, approximately half of ADS primarily addressed social/recreational needs along with some health/medical needs (50%). The average number of attendees enrolled at the ADS was 108 (SD = 90). Of those enrolled, the average number of attendees living with dementia was 51 (SD = 28).

Table 2. Demographic Characteristics of the Study Sample (N = 22).

Note. n/N (%).

The results of Round 1 of the Delphi panel are presented in Table 3. Within the meaning and purpose in life domain, most participants felt that the PROMIS Meaning and Purpose in Life Scale was the easiest to administer (71%), relevant (57%), and valuable (62%), and that it elicited informative data that justified the time spent administering the questionnaire (55%). Participants also felt that this scale would yield data that support a person-centered approach to dementia care (70%), was appropriate for people in early to middle stages of dementia (60%), and was the tool staff would most likely administer in a real-world context (70%). For the engagement domain, participants gave comparable ratings to the Life Engagement Test and the Engagement in Meaningful Activities Scale for ease of administration (67% vs. 55%), relevance (59% vs. 64%), value (77% vs. 68%), eliciting informative data that justified the time spent administering the questionnaire (71% vs. 60%), providing data that would support a person-centered approach to dementia care (82% vs. 73%), appropriateness for people in early to middle stages of dementia (68% vs. 64%), and likelihood that staff would administer the tool in a real-world context (77% vs. 59%). Within the social network domain, the Friendship Scale was rated as the easiest to administer (52%), relevant (59%), valuable (50%), worth the time spent administering the questionnaire (48%), likely to provide data that would support a person-centered approach to dementia care (52%), appropriate for people in early to middle stages of dementia (55%), and most likely to be administered in a real-world context (65%). Finally, for the community, belonging, and inclusion domain, most participants felt that the General Belongingness Scale was the easiest to administer (82%), relevant (64%), valuable (59%), worth the time spent administering the questionnaire (67%), likely to provide data that would support a person-centered approach to dementia care (73%), appropriate for people in early to middle stages of dementia (64%), and most likely to be administered in a real-world context (77%).

Table 3. Findings From Round 1 of the Delphi Panel.

The results of Round 2 (Table 4) further supported the findings of Round 1: panelists selected the PROMIS Meaning and Purpose in Life Scale (76%), the Friendship Scale (76%), and the General Belongingness Scale (67%) as the best-choice questionnaires. Because neither of the two questionnaires within the engagement domain (the Life Engagement Test and the Engagement in Meaningful Activities Scale) reached the supermajority consensus threshold (66.67%), the research team retained both scales for future evaluation.
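The consensus rule applied in Round 2 can be sketched as a simple threshold comparison. The percentages below are the rounded values reported in the text, and the names `CONSENSUS_THRESHOLD`, `round_2_preferences`, and `classify` are hypothetical labels introduced for this illustration rather than the study's own code. Because the inputs are rounded, a reported 67% may correspond to an unrounded value at or near the 66.67% threshold.

```python
# A priori supermajority threshold, in percent, as stated in the Data Analysis section.
CONSENSUS_THRESHOLD = 66.67

# Rounded Round 2 preference percentages as reported in the text.
round_2_preferences = {
    "PROMIS Meaning and Purpose in Life Scale": 76,
    "Friendship Scale": 76,
    "General Belongingness Scale": 67,
    "Life Engagement Test": 52,
    "Engagement in Meaningful Activities Scale": 48,
}


def classify(percent: float, threshold: float = CONSENSUS_THRESHOLD) -> str:
    """Label a measure by whether its preference share meets the threshold."""
    if percent >= threshold:
        return "consensus"
    return "retained for future evaluation"


decisions = {name: classify(pct) for name, pct in round_2_preferences.items()}

for name, decision in decisions.items():
    print(f"{name}: {decision}")
```

Under this rule, the three scales at or above the threshold are classified as reaching consensus, and both engagement measures fall into the retained group.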

Table 4. Findings From Round 2 of the Delphi Panel.

The qualitative findings related to all questionnaires are summarized in Supplemental Table 1. Panelists generally felt that the selected measures were short and easy to administer, could inform ADS programs, and could be embedded into regular ADS workflows. However, panelists expressed concerns about whether PLWD, especially those at later stages of disease progression, could feasibly complete the questionnaires. For example, the panelists felt that some question items were too abstract and some response options were too complex, which could confuse PLWD. Further, some items asked participants to recall the past month, which may be too difficult for PLWD.

Panelists questioned whether language and education barriers in the center would make certain questionnaires difficult to complete and whether staff members were sufficiently trained to respond to issues that may arise from responses to some of the questionnaires. In addition, some panelists wondered how often they could feasibly collect these data (e.g., every 3 months) given staff shortages, while others sought guidance on how they could translate the results of the questionnaires into actionable change.

Discussion

This e-Delphi study advances the field of adult day services (ADS) by proposing a pragmatic, person-centered outcomes toolkit tailored to people living with dementia (PLWD) and the realities of ADS workflows. Across two rounds with strong participation (Round 1: 22/26, 84.6%; Round 2: 21/22, 95.5%), panelists reached consensus on measures for three of the four domains that PLWD and care partners in ADS have said matter most to them (meaning and purpose, social networks/friendships, and belonging) and narrowed the fourth domain (engagement) to two candidate measures, all of which are feasible for routine use in centers (Scher et al., 2025). The panelists’ choices resonate with a recent compendium of validated person-centered measures used with PLWD (Mast et al., 2021), but they are refined through an ADS-specific lens that privileges brevity, cognitive accessibility, and administrative fit. Where the broader literature catalogs what is validated, our panel advances this knowledge by focusing on validated measures that ADS sites can actually collect with fidelity. This is a crucial distinction for real-world uptake and consistent, reliable administration in ADS.

For decades, ADS outcomes have been largely payer-centric and deficit-focused (e.g., utilization, function, cognitive decline), despite consensus dementia care guidance emphasizing strengths-based, person-centered care (e.g., comfort, inclusion, identity; Alzheimer’s Association, n.d.; Anderson et al., 2020; Sadarangani, Anderson, Westmore, & Zhong, 2022). By elevating brief, validated measures that capture PLWD’s lived experience of connection and purpose, our study complements existing ADS outcome recommendations rather than replacing them. The resulting toolkit can sit alongside problem-oriented indicators to yield a fuller picture of program impact. Importantly, most selected instruments emphasize the present (“how I feel now/these days”), which aligns with two practical truths surfaced by panelists: (1) many PLWD, especially in later stages, face recall challenges that complicate “past month” framing; and (2) ADS are best positioned to shape a meaningful day, not to single-handedly determine a meaningful life. This emphasis on the present within the selected measures helps ensure both validity and actionability in center workflows.

Implementation Considerations

Panelists affirmed feasibility and value of measures but flagged four adoption needs:

Accessibility of Measures: Negatively worded items, abstract phrasing, and retrospective time frames may confuse some PLWD. Centers may need scripts, plain-language clarifications, and visual response aids. Where self-report is not feasible, structured proxy input (e.g., care partner or staff) with clearly labeled provenance should be considered.

Workflow Fit: Participants favored embedding the instruments within existing touchpoints (e.g., bi-annual or quarterly intake and periodic reassessments) to minimize burden. A brief universal screen plus targeted follow-ups may further reduce workload.

Response Capacity: Collecting signals about loneliness, exclusion, or low purpose obligates centers to act. Sites will benefit from micro-protocols (e.g., “If Friendship Scale ≤X, then. . .”) that train staff to respond sensitively and route findings to concrete programing (buddy systems, small-group activities, faith/culture-specific offerings).

Data Systems: To reduce burden, centers will need simple scoring sheets and templated entry fields in EHRs or secure spreadsheets. Visual dashboards can turn scores into action lists for activity directors and social workers.

Policy and Practical Implications

Despite barriers, this study is highly relevant to long-term care policy and dementia practice. ADS value has been framed largely in payer/cost terms, driving deficit-focused metrics. Person-centered outcomes are additive: they make the unique impact of ADS visible to payers and the public, especially post-COVID, when many centers closed or saw reduced census (Sadarangani, Zhong, et al., 2021; Sadarangani, Gaugler, et al., 2022). Our toolkit captures loneliness and belonging (e.g., Friendship Scale), aligning with state/federal priorities and enabling performance-linked reimbursement and targeted grants (Esmail et al., 2020; Sadarangani et al., 2024). These data also help caregivers—key consumers—choose services, supporting uptake by providers.

Study Strengths and Limitations

This study has several strengths and notable limitations. We used a practice-anchored, community-engaged design co-developed with NADSA and ADS practitioners, placing emphasis on feasibility and likelihood of adoption. Panel engagement was high across two rounds (high response, minimal attrition). We curated validated measures for brevity, clarity, and administrative fit, producing a pragmatic toolkit rather than a catalog and positioning ADS providers for near-term use. Limitations included a modest panel with geographic skew (notably California and Tennessee), potentially underrepresenting other regions/models. We relied on the Delphi method, which lacks uniform standards for consensus (Khodyakov et al., 2023). Consistent with the RAND approach, we used a supermajority threshold (66.67%), within typical ranges (~50%–100%; Nasa et al., 2021). Because neither engagement measure reached this threshold, we retained two similarly favored measures in that domain. Finally, we assessed feasibility and perceived value but not in-situ psychometric performance, indicating the need for real-world validation.

Future Directions

By embedding measures in NADSA’s data system for low-burden, standardized reporting, we intend to pilot the full toolkit (retaining both engagement measures) across geographically and operationally diverse ADS. This will allow us to generate in-situ psychometric evidence—completion, time, missingness, distributions, sensitivity to change—and compare performance by subgroup. In parallel, we will co-develop an implementation package (scripts, plain-language guidance, visual aids, scoring sheets, decision thresholds, program mapping, simple dashboards) with training/coaching so staff can act on signals (e.g., loneliness, low belonging). To drive policy uptake and sustainability, we plan to partner with state agencies and managed care to integrate these metrics into quality contracts, value-based payment, and reporting frameworks, supported by lightweight templates that automate scoring and feedback loops.

Conclusion

ADS are uniquely positioned to deliver person-centered dementia care, yet they have lacked a feasible way to evidence and communicate their strengths. This e-Delphi study offers a practical, consensus-informed suite of measures that ADS centers can incorporate into routine practice. By grounding selection in what PLWD value and what staff can reliably and pragmatically administer, the field is poised to generate transparent, comparable data that can inform programing, bolster caregiver trust, and support policy and payment reforms sustaining ADS as critical community infrastructure for PLWD.

Supplemental Material

Supplemental material, sj-docx-1-ggm-10.1177_30495334251408570 for Identifying Person-Centered Outcome Measures for Use in Adult Day Services: An E-Delphi Consensus Study by Clara J. Scher, Keith Anderson, William Zagorski, Shahrzad Siamdoust, Jackie Finik and Tina Sadarangani in Sage Open Aging

Footnotes

Acknowledgements

We would like to acknowledge our colleagues at the National Adult Day Services Association as well as the adult day center staff who participated in this research.

Ethical Considerations

This study was approved by the NYU Institutional Review Board.

Consent to Participate

Participants were directed to an online consent form via Qualtrics detailing the study’s purpose, procedures, risks, and benefits. Participants then indicated their voluntary agreement to participate by providing their online signature and clicking a button at the end of the form.

Author Contributions

Sadarangani conceptualized the study and edited the manuscript draft. Scher drafted the introduction, methods, and results sections. Finik conducted the analyses. Siamdoust contributed to the analysis and manuscript editing. Anderson co-conceptualized the methods and discussion sections and assisted with interpretation of findings. Zagorski edited the manuscript to ensure findings were meaningful to stakeholders.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a grant from the Alzheimer’s Association (GRANT ARCOM-24-1249390).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data are unavailable to access publicly due to privacy and ethical restrictions.
