Abstract

Verification and validation (V&V) is an integral part of any simulation study. Validation assesses how accurately conceptual models represent the real system, while verification ensures correct implementation in software. V&V plays a critical role in business and manufacturing, where simulation models imitate complex real-world systems. However, comprehensive statistically grounded literature reviews on V&V of simulation models, particularly from a business management and manufacturing domain standpoint, are scarce. This study addresses that gap by performing topic modelling to identify prominent research themes, then reviewing all important research articles to outline the evolution of quantitative methodologies and algorithms on V&V. We also highlight various research gaps and potential directions for future work. For this study, we reviewed the abstracts of more than 6,000 articles indexed in Scopus and Web of Science, along with a comprehensive analysis of 300 research articles.

Keywords

Introduction

Simulation models are commonly used in various application domains to represent real-world systems or processes that are typically too expensive to observe or experiment with (Banks, 1999). Law (2024) called simulation a method of last resort. Advancements in computing power have facilitated widespread adoption of simulation methods and tools (Mourtzis, 2020). Common simulation approaches include traffic simulation, simulation gaming, system dynamics (SD), discrete event simulation (DES), agent-based simulation (ABS), Monte Carlo simulation, Petri nets, etc. (Jahangirian et al., 2010). In operations management and operations research, simulation is considered to be the second most popular technique, after mathematical modelling (Amoako-Gyampah & Meredith, 1989; Jahangirian et al., 2010; Pannirselvam et al., 1999).

While simulation has become an essential tool for practitioners, those who use it differ widely in their awareness of the complexities of developing a simulation model. Building a reliable and efficient simulation model for a complex system is challenging and calls for verification and validation (V&V). Here, V&V of a simulation model refers to checking its validity, credibility and usability, which are important concerns (Harper et al., 2021). Numerous scholars have defined the terms ‘validation of a simulation model’ and ‘verification of a simulation model’ based on their purposes. Sargent (2020) explains that verification ensures the conceptual model (abstraction of a real system) is correctly programmed in software, whereas validation checks how closely a conceptual model represents a real system. Verification techniques help debug the simulation code, while validation techniques assess the appropriateness of the assumptions in the conceptual model and its alignment with the real system. It is essential to acknowledge that a simulation model is intended to analyse a system for a specific purpose; therefore, its validity should be assessed solely for that purpose.

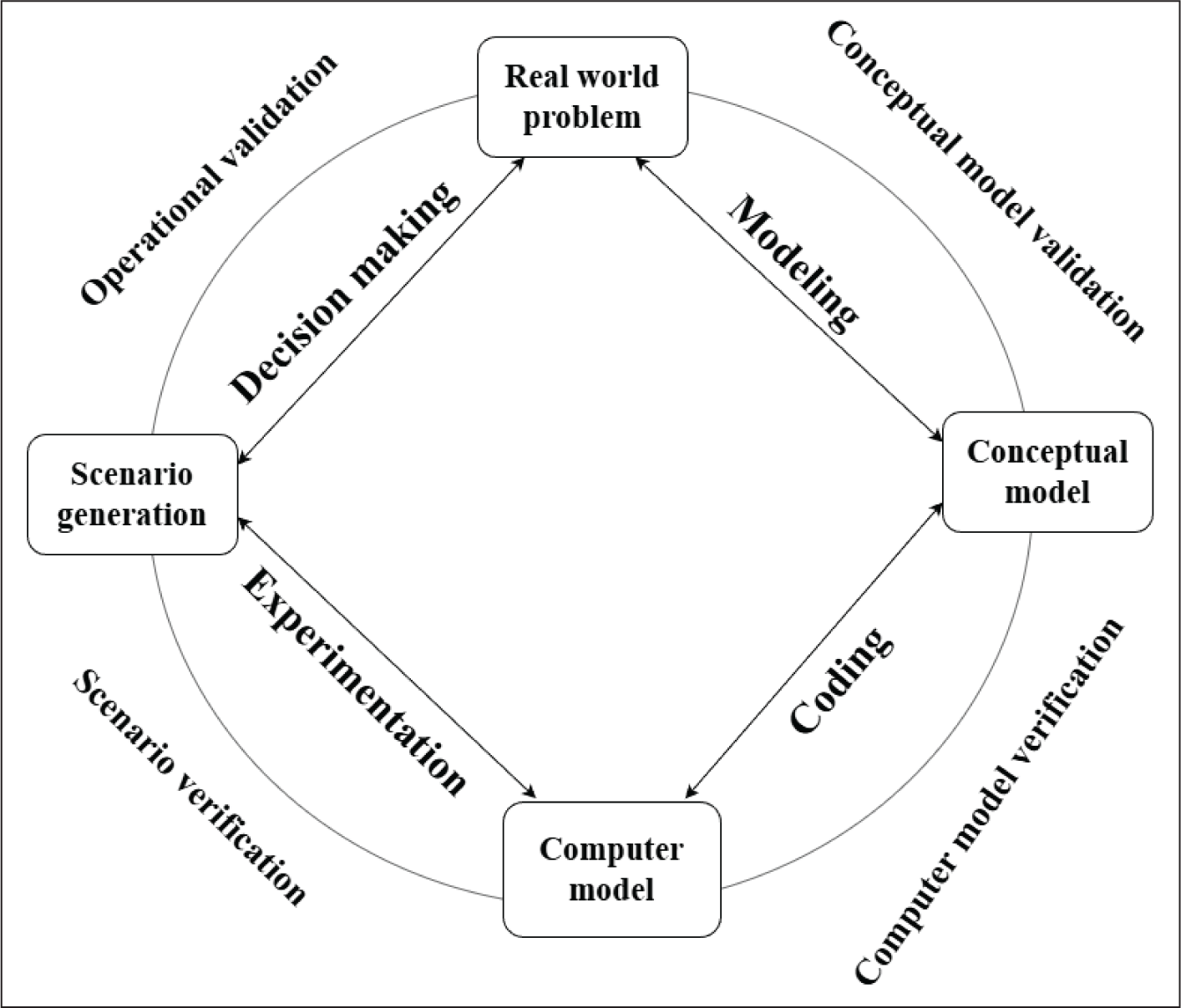

Robert G. Sargent (1981), a pioneer in this field, introduced a simplified framework for the development of different phases of a simulation model. Since its inception, this framework has been extensively used and refined by other researchers. The latest version of this framework, presented by Harper et al. (2021), is displayed in Figure 1. According to this framework, a simulation study comprises four stages. The first stage refers to defining and understanding the real-world problem. The second stage builds a conceptual model of the real-world problem. The third stage develops a computer programme (i.e., simulation model) of the conceptual model. Finally, in the fourth stage, the simulation model output is analysed and compared with the real-world data. One must be extremely careful while progressing from one stage to another, as even small errors can lead to an unreliable model. To reduce this risk and increase trust, one must perform V&V at each stage of the framework.

Tako and Kotiadis (2021) investigated the role of simulation models in managerial decision-making and argued that model validity in organizational settings is often linked to its usefulness in evaluating policy alternatives rather than predictive accuracy. Any study using a simulation model is ‘practically’ incomplete without a proper V&V process (Law, 2022). However, many articles using simulation models either omit V&V or address it insufficiently (Sargent, 2020), and the situation becomes increasingly challenging for more complex models (Brailsford et al., 2019). The limited attention to V&V and its insufficient application underscore the need to raise awareness about V&V among researchers and practitioners (Kabak et al., 2024).

This article originates from a comprehensive literature review of simulation models in business and manufacturing. Throughout this process, we observed the need to raise awareness about V&V. This article aims to introduce the concepts of V&V to practitioners who utilize simulations or contribute to model development but may overlook its role in ensuring reliability and accuracy. Even for those familiar with V&V, our systematic approach to literature review through data collection and topic modelling is relevant and raises important research questions.

The main research objectives of this article are to: (a) identify important V&V techniques and methodologies; (b) investigate the status of V&V of simulation models, focusing on those used in business management or manufacturing; and (c) uncover research gaps present in the current literature.

To our knowledge, this is the first systematic review of V&V in simulation models with business and management applications. Nine prominent research themes emerged from thematic analysis via topic modelling. A manual review of all methodological articles identified several important research gaps for future research. This review provides modelling researchers with a unified view of V&V techniques across DES, SD, ABS and related simulation paradigms, supported by topic-model-based theme identification and a systematic corpus construction process.

Our approach for the systematic literature review can be broken into four steps:

1. Collect data on published research articles from the Web of Science (WoS) and Scopus databases, apply suitable filters to keep only relevant articles and screen the remaining abstracts for analysis.

2. Obtain bibliometric statistics, trends and insights from the databases and then perform a thematic analysis on the text corpus built using the titles, keywords and abstracts of the relevant articles. The process starts with applying topic modelling using latent Dirichlet allocation (LDA) to identify an optimal number of prominent themes (or topics) in the research area, followed by a qualitative judgement-based construction of topic labels.

3. Manually review all methodological articles to outline the evolution of quantitative methods and algorithms for V&V of simulation models. Most verification techniques are qualitative in nature, so the evolution of validation techniques is discussed in more detail than that of verification techniques. The validation methodologies are grouped according to the availability of data on the real system that the simulation model emulates.

4. Identify potential and intriguing research gaps in the literature that can yield crucial future research directions.

The remainder of the article is organized as follows. The second section presents the background on V&V of simulation models with a focus on the business and manufacturing domains and the research questions that motivate our study. The third section discusses the data collection process and a brief descriptive analysis of the data on 300 articles. The fourth section is devoted to topic modelling and the qualitative construction of nine topic labels. The fifth section outlines the evolution of V&V methodologies. Major research gaps are discussed in the sixth section, and the seventh section concludes with important remarks and limitations.

Background

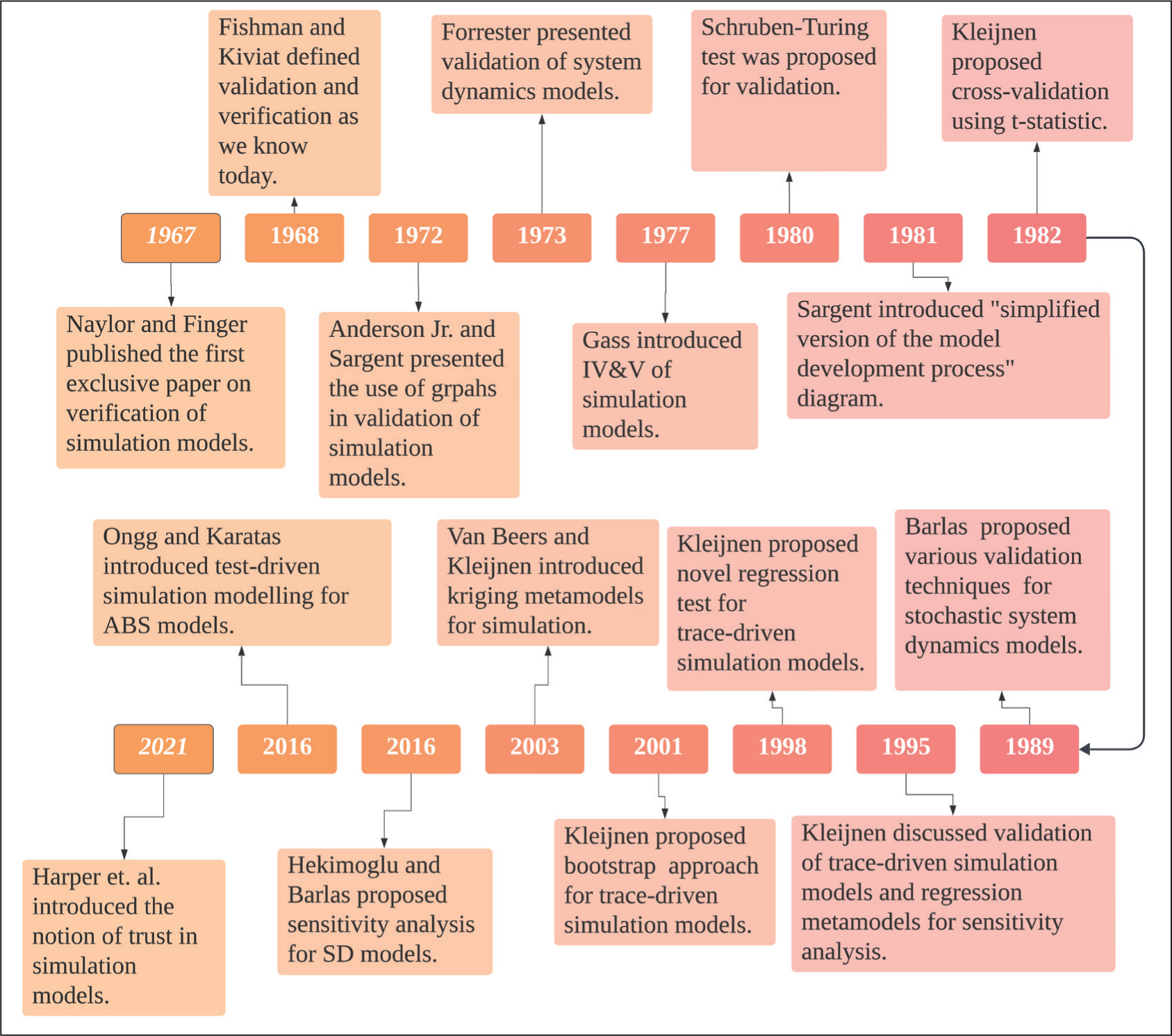

Research on V&V of simulations began in the 1960s, with Fishman and Kiviat (1968) providing its formal definition. Research on V&V gained momentum when epistemological questions like ‘Why are so many models built and so few used?’ started appearing in the literature (Landry et al., 1983). Dimensions such as credibility and acceptability (Robinson, 2002), representativeness and usefulness (Landry et al., 1983), efficiency and effectiveness (Landry & Oral, 1993) and trust (Harper et al., 2021) have been discussed in the literature. In 1993, the European Journal of Operational Research published a special issue on ‘Model Validation in Operational Research’, where papers primarily discussed model validation from an epistemological perspective. These papers were summarized by Landry and Oral (1993). The Winter Simulation Conference has since emerged as a prominent platform for discussion and publication on V&V methods, with new approaches introduced almost every year. These techniques vary by the type of simulation method, context, data availability and several other factors (Roungas, 2016). The literature also discusses quantitative techniques based on various statistical tests and methods, qualitative approaches such as face validity through experts and independent verification and validation (IV&V) by third parties (Robinson & Brooks, 2010). In this context, scholars such as Robert G. Sargent, Jack P. C. Kleijnen and Osman Balci have made significant contributions to the literature by proposing a wide spectrum of V&V techniques for different stages of simulation model development (Figure 1). The timeline of key publications is depicted in Figure 2.

Figure 2. Timeline of a Few Key Publications.

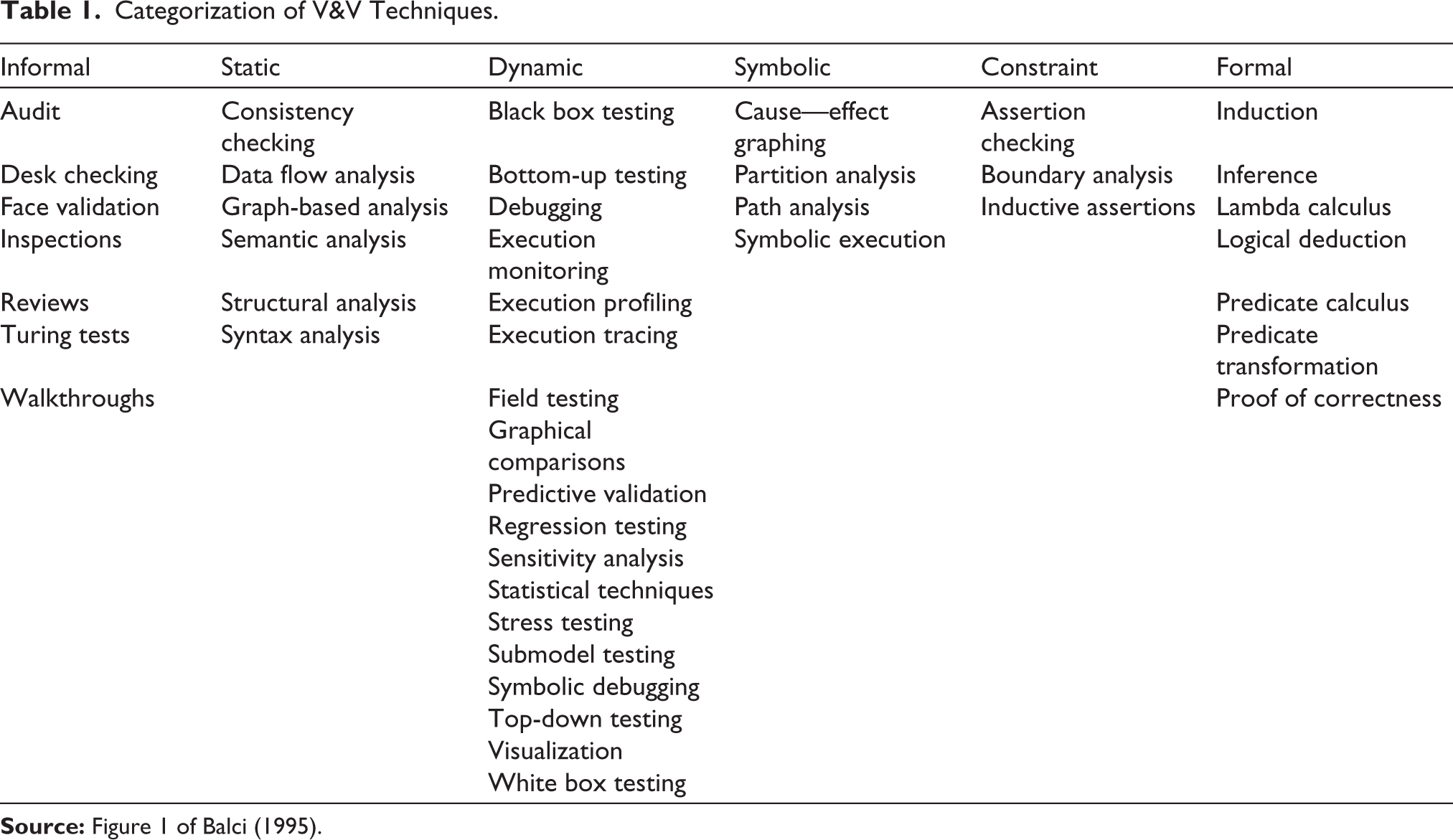

A variety of simulation techniques have been used across domains. According to Jahangirian et al. (2010), frequently implemented techniques for simulation models in business and manufacturing include DES, SD, hybrid, ABS, Monte Carlo simulation, Petri nets and intelligent simulation. DES is the most used simulation method (approximately 40%). Balci (1995) categorized various V&V techniques into different segments (Table 1), and this list remains widely used. For a detailed history of V&V of DES simulation models, refer to Sargent and Balci (2017). While many techniques apply to DES, they are also applicable to other simulation methods. SD is the second-most-used simulation method, and Yaman Barlas is one of the pioneers in proposing various V&V techniques for SD models, including a six-step behaviour validation procedure (Barlas, 1989). The hybrid simulation method combines two or more standalone simulation methods (e.g., DES, SD, ABS) to model a complex real-world system. However, the V&V of hybrid models is rarely reported (Brailsford et al., 2019). ABS is based on DES and object-oriented programming (North & Macal, 2007), so it typically uses V&V techniques developed for DES.

Table 1. Categorization of V&V Techniques.

To summarize, various simulation methods have been employed across sectors, and the literature offers diverse strategies to enhance the credibility of simulation models. Despite this, there is an evident lack of a comprehensive review on V&V of simulation models that can address the following research questions:

RQ1: What are the prominent research themes in V&V literature?

RQ2: How have V&V methodologies evolved over time?

RQ3: What major research gaps remain for future work?

This article uses a combination of qualitative and quantitative analyses of 300 carefully chosen articles to answer these research questions.

Data Collection and Insights

This section describes the data collection procedure and presents a descriptive summary of the data on V&V of simulation models for business management and manufacturing applications. Inspired by the studies by Liao et al. (2017), Han et al. (2020), Mustak et al. (2021), Dohale et al. (2022), Naz et al. (2022), and Psarommatis and May (2023), we follow a four-step approach for data collection: (a) searching the databases, (b) applying filters, (c) removing duplicates and (d) abstract screening.

Data Collection

Two popular databases, Scopus and WoS, were used to identify relevant research articles. We used three keywords to search for papers in the databases: ‘validation’, ‘verification’ and ‘simulation model’. The Scopus database offers a comprehensive coverage of over 4,000 publishers and includes approximately 15,000 reputable peer-reviewed journal articles, monographs, conference proceedings, etc. The WoS database includes documents from over 3,300 publishers and more than 12,000 high-quality research articles. All WoS articles meeting our criteria were also available in Scopus; therefore, we limit our discussion to Scopus for brevity.

Database Search

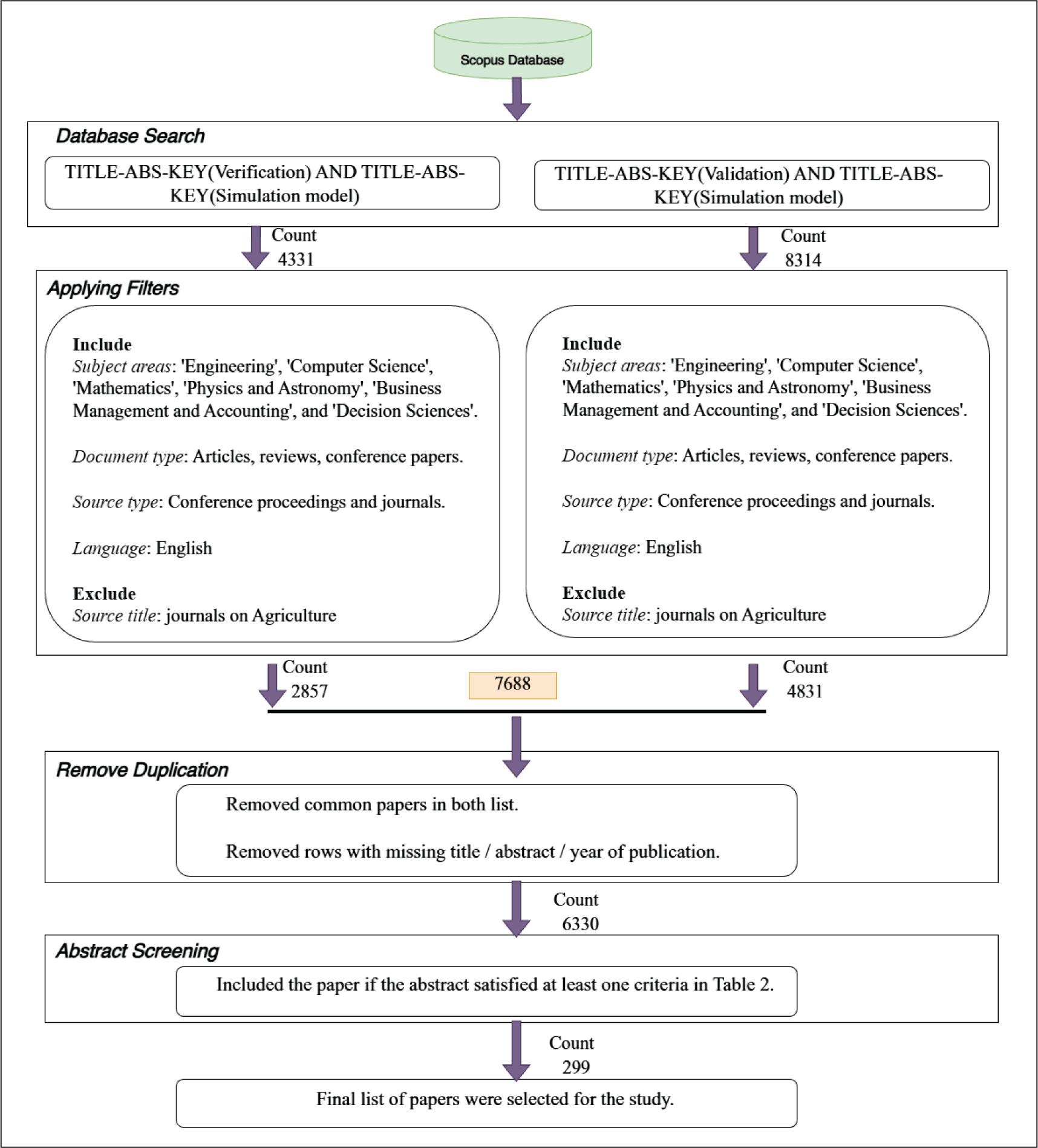

The data collection process in Scopus started with the search strings ‘Verification and simulation model’ and ‘Validation and simulation model’ to find all relevant manuscripts. The search resulted in 4,331 and 8,314 documents, respectively. We then passed these documents through a few filters to ensure relevance and quality.

Applying Filters

We restricted our database to journals and conference proceedings, excluding trade journals, books and reports. Furthermore, we limited document types to only articles, reviews and conference papers for this study. Next, a subject area filter was applied to include relevant articles and exclude the items that appeared in unrelated areas (e.g., agriculture). The retained articles consisted of papers on V&V of simulation models with: (a) interesting real-life applications and (b) novel methodologies. For real-life application-oriented papers, we focused on business management and manufacturing domains. In the Scopus database, Engineering contains two very relevant domains, that is, ‘operations research’ and ‘industrial and manufacturing engineering’, whereas Computer Science includes ‘modelling and simulation’ and ‘software’. The methodologically novel results and innovative algorithms may be published in core mathematics journals and not necessarily in the application areas. Simulation models are also commonly used in physics-based applications to study complex processes/phenomena that are otherwise too expensive or even infeasible to observe. Therefore, we explored the allied area ‘physics and astronomy’.

Remove Duplication

After the filters were applied, the search string ‘Verification and simulation model’ retained 2,857 articles, whereas the search string ‘Validation and simulation model’ retained 4,831 documents. Of the combined 7,688 articles, only 6,330 documents were unique and complete with respect to the title, abstract and year of publication.
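The arithmetic of this step (2,857 + 4,831 = 7,688 records reduced to 6,330) can be illustrated with a minimal sketch. The article does not state how duplicates were matched, so the record fields and the matching key used here (normalized title plus year) are assumptions for illustration only, and the records are toy data.

```python
def deduplicate(records):
    """Keep the first complete record for each (normalized title, year) key."""
    seen = set()
    unique = []
    for rec in records:
        # A record counts as complete only if title, abstract and year are present.
        if not (rec.get("title") and rec.get("abstract") and rec.get("year")):
            continue
        # Assumed matching rule: case-insensitive title with collapsed spaces, plus year.
        key = (" ".join(rec["title"].lower().split()), rec["year"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique

# Toy records standing in for the two filtered result sets.
verification_hits = [
    {"title": "Verifying a DES Model", "abstract": "(text)", "year": 2011},
    {"title": "V&V of Simulation Models", "abstract": "(text)", "year": 2013},
]
validation_hits = [
    {"title": "v&v of  simulation models", "abstract": "(text)", "year": 2013},  # duplicate
    {"title": "Validating an SD Model", "abstract": "", "year": 1996},           # incomplete
]
unique_records = deduplicate(verification_hits + validation_hits)
print(len(unique_records))  # 2
```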

Abstract Screening

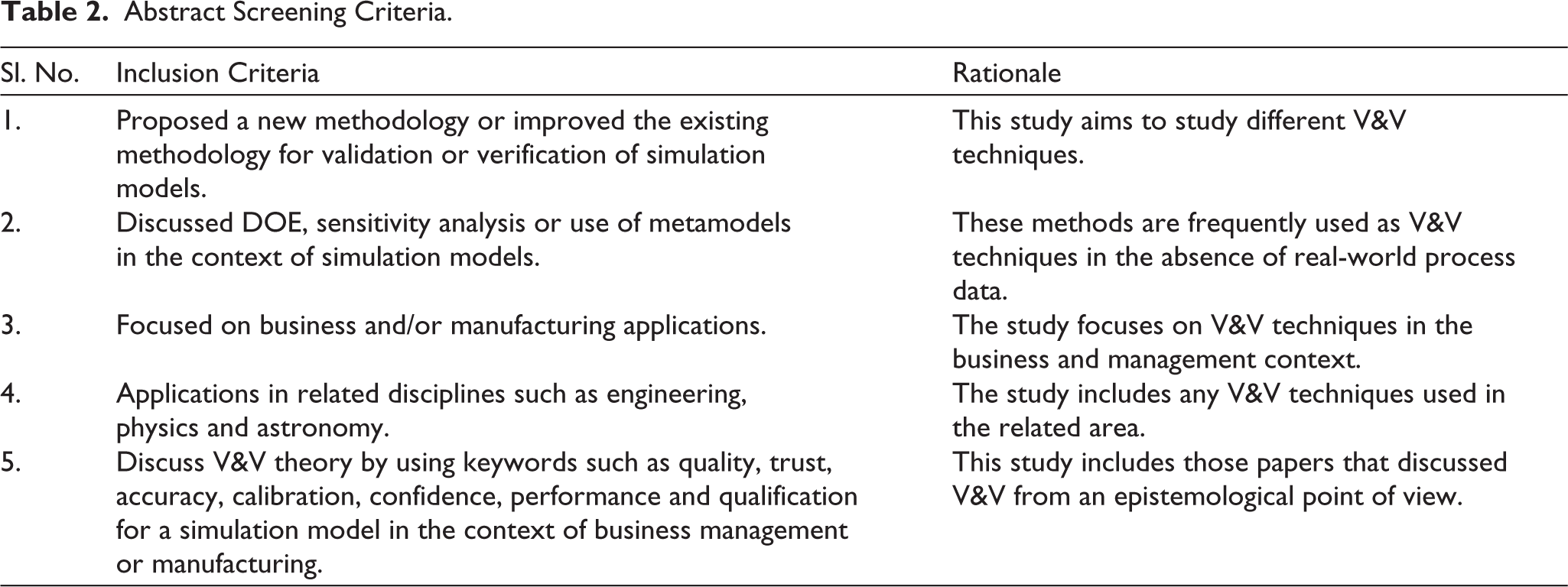

We screened the abstracts of the 6,330 articles and, when necessary, reviewed the full text. Following the practices and criteria of Naylor and Finger (1967), Landry et al. (1983), Barlas (1989), Kleijnen (1995b) and Sargent (2010), we tabulated a set of criteria for further shortlisting the relevant articles (see Table 2).

Table 2. Abstract Screening Criteria.

It is clear from Table 2 that Criteria 1 and 2 focus on articles with a methodological contribution on V&V, whereas Criteria 3 and 4 support the articles on business management, manufacturing and related applications. The fifth criterion includes those papers that discussed V&V through epistemological keywords such as quality, reliability and calibration. If the abstract of an article satisfied one or more criteria, it was included in the finalized list. Ultimately, a total of 299 papers were selected after the abstract-screening process. We manually included Naylor and Finger (1967), which did not appear in either database (WoS or Scopus) but is an important contribution to the field. Figure 3 presents the flow diagram of the data collection process.

Figure 3. Search and Selection Process of Research Articles from the Scopus Database.

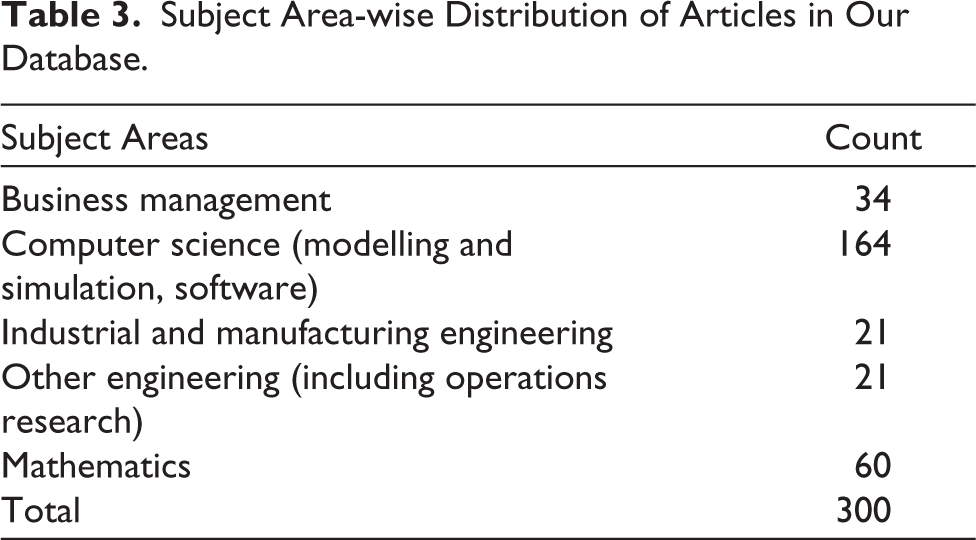

We manually categorized the final list of 300 papers (299 plus 1) into five subject areas. Table 3 presents the distribution of papers across these areas.

Table 3. Subject Area-wise Distribution of Articles in Our Database.

A majority of the articles are related to computer science, which includes ‘modelling and simulation’ and software development papers, and mathematics, which mostly comprises methodology papers. The application papers fall under business management and manufacturing areas, whereas engineering papers contain a mix of both methodology and applications.

Data Insights

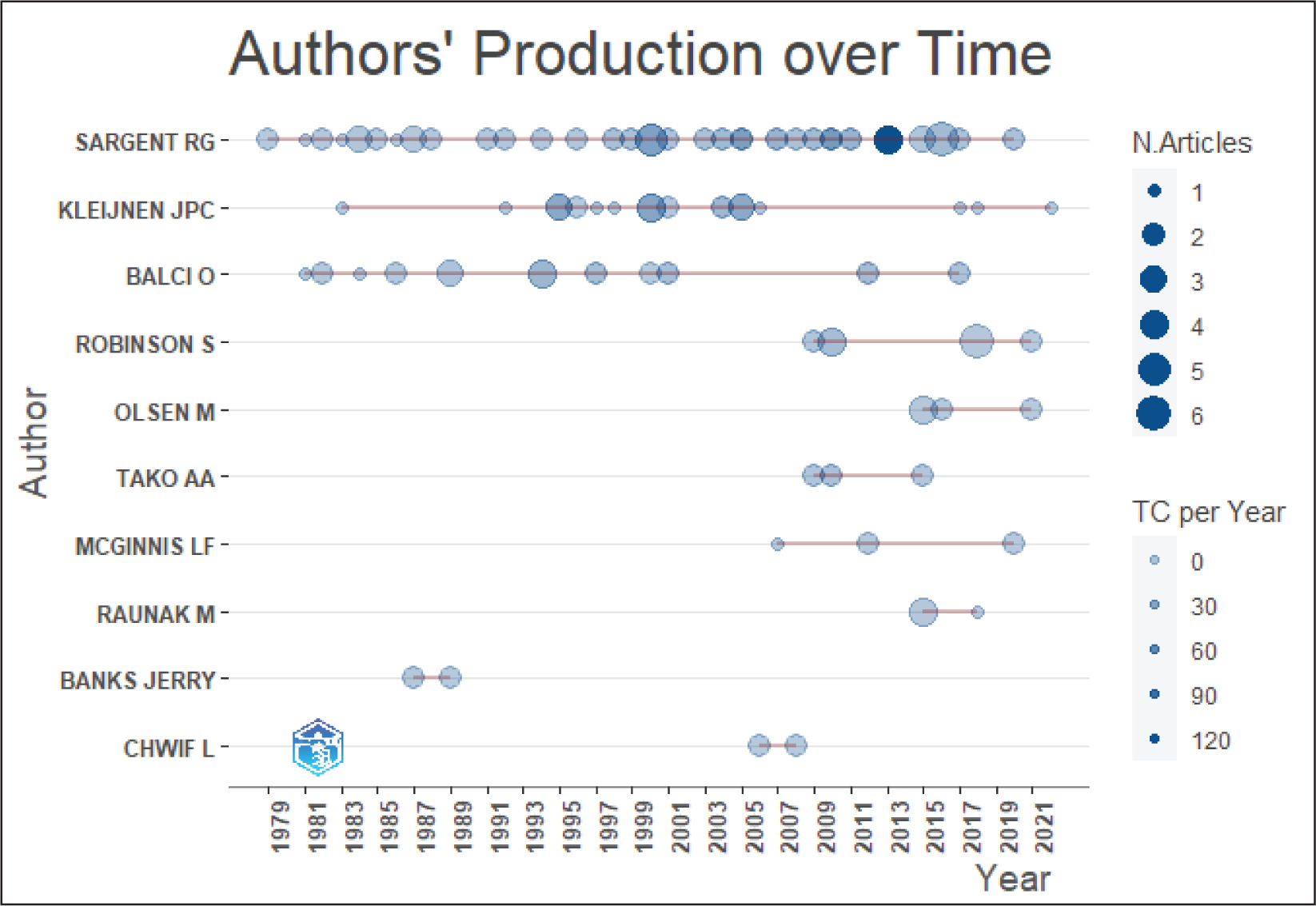

We used the Bibliometrix package of R (Aria & Cuccurullo, 2017) to summarize the bibliometric records of 300 articles. Some of the notable findings are as follows. Although the first paper on V&V of simulation models was published in 1967 (Naylor & Finger, 1967), there was a significant increase in the average number of research papers published after 1980, and the number has remained steady. Nearly 45% of the articles have been published in ‘Winter Simulation Conference’ proceedings, followed by journals such as the European Journal of Operational Research, Simulation Series, Journal of the Operational Research Society and International Journal of Production Research. The same trend has also been observed by dos Santos et al. (2022). Five authors—Robert G. Sargent, Jack P. C. Kleijnen, Osman Balci, Yaman Barlas and Stewart Robinson—have contributed to approximately 31% of all articles (see Figure 4).

Figure 4. Prominent Authors Who Published on V&V of Simulation Models (Graph Generated Using the Bibliometrix Package).

VOSviewer (Van Eck & Waltman, 2010) was also used to generate detailed scientometric patterns on co-authorship, co-citation, etc., but instead of discussing such summaries, we focused on topic modelling of the text corpus to identify prominent themes in this area (to answer RQ1); then we manually reviewed the relevant articles to trace the evolution of methodologies on V&V of simulation models (to answer RQ2).

Topic Modelling

Topic modelling is an objective approach to analyse a large corpus of text data that can otherwise be extremely challenging to process manually (Jelodar et al., 2019; Maier et al., 2021; Vayansky & Kumar, 2020). Using LDA, a widely used natural language–processing technique for topic modelling (Vayansky & Kumar, 2020), we extracted the latent and semantic themes (or topics) from the corpus built using 300 selected articles. Alternative methods include latent semantic analysis and probabilistic latent semantic analysis (Churchill & Singh, 2022).

Our corpus of text for topic modelling includes the titles, abstracts, authors’ keywords and indexed keywords. The corpus had to be cleaned before applying LDA for topic modelling. First, all punctuation marks such as @, “, ” and $ were removed. Second, the entire corpus was converted to lowercase. Next, the corpus was tokenized (i.e., split into individual words); the resulting collection of tokens (words) is known as a bag of words. The final pre-processing step involved the removal of stop words (common English words such as ‘a’, ‘an’, ‘the’ and others). After pre-processing, we built a document-term matrix (DTM), constructed as a list of unique words per article, and a dictionary of unique words.
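The pre-processing steps above can be sketched with the Python standard library alone. This is a minimal illustration, not the authors' code: the stop-word list is deliberately tiny, only ASCII punctuation is handled, and the function names and sparse-row layout of the DTM are assumptions.

```python
import string
from collections import Counter

# Tiny illustrative stop-word list; a real run would use a fuller list.
STOP_WORDS = {"a", "an", "the", "of", "and", "for", "in", "is", "to"}

def preprocess(text):
    """Remove ASCII punctuation, lowercase, tokenize and drop stop words."""
    # Replace punctuation with spaces so that joined words split cleanly.
    table = str.maketrans(string.punctuation, " " * len(string.punctuation))
    tokens = text.translate(table).lower().split()
    return [tok for tok in tokens if tok not in STOP_WORDS]

def build_dictionary(token_lists):
    """Map each unique word to an integer id, in first-seen order."""
    dictionary = {}
    for tokens in token_lists:
        for tok in tokens:
            dictionary.setdefault(tok, len(dictionary))
    return dictionary

def build_dtm(token_lists, dictionary):
    """One sparse row per document: {word id: count}."""
    return [dict(Counter(dictionary[tok] for tok in tokens)) for tokens in token_lists]

docs = [
    "Validation of a simulation model.",
    "Verification and validation: the simulation study!",
]
tokens = [preprocess(d) for d in docs]
dictionary = build_dictionary(tokens)
dtm = build_dtm(tokens, dictionary)
print(tokens[0])  # ['validation', 'simulation', 'model']
```

Each row of `dtm` records how often every dictionary word occurs in one document, which is the bag-of-words input an LDA implementation expects.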

The DTM and the dictionary were passed to the LDA Model API of the Gensim (Řehůřek & Sojka, 2010) library in Python for generating important themes (or topics). The LDA assumes that the collection of D articles (i.e., corpus) is statistically generated by t distinct themes, and each theme is statistically generated by w distinct words (see Jelodar et al., 2019, for details). The algorithm generates two probability distributions: θd, a distribution over themes for each document d ∈ D, and ϕt, a distribution over all distinct words in the corpus for each of the t distinct themes. Typically, Dirichlet distributions are used as priors for the two probability distributions, and their parameters control the topics-per-document ratio and the words-per-topic ratio, respectively (Churchill & Singh, 2022). The number of topics (k) to be extracted from the corpus must also be pre-specified while running the LDA Model. The output of the LDA Model is a list of k sets of words, T1, T2, …, Tk, where Ti consists of the most consistent words defining a particular topic (theme). The next sub-section discusses how to objectively arrive at the optimal number of topics, while the sub-section ‘Thematically Labelled Topics’ discusses the labelling of the themes based on Ti, which requires qualitative input from a subject expert.
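For readers who prefer notation, the standard LDA generative assumptions can be written as follows. The symbols α and β (Dirichlet hyperparameters), z (topic assignment of a word), w (observed word) and N_d (number of words in document d) are notation introduced here for illustration and do not appear in the text above; topics are indexed by t = 1, …, k.

```latex
\begin{align*}
\theta_d &\sim \mathrm{Dirichlet}(\alpha), & d &\in D,\\
\phi_t &\sim \mathrm{Dirichlet}(\beta), & t &= 1,\dots,k,\\
z_{d,n} \mid \theta_d &\sim \mathrm{Categorical}(\theta_d), & n &= 1,\dots,N_d,\\
w_{d,n} \mid z_{d,n}, \phi &\sim \mathrm{Categorical}(\phi_{z_{d,n}}).
\end{align*}
```

Fitting the model amounts to inferring θ and ϕ from the observed words; the top-weighted words of each ϕt form the keyword set Ti.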

Optimal Number of Topics

The topic modelling literature discusses various measures to determine an optimal number of topics. Churchill and Singh (2022) broadly classify these evaluative measures into three different categories: ‘coherence’, ‘coverage’ and ‘qualitative’. Interestingly, the quality of topics cannot be solely measured by quantitative methods, and human intervention is also required to find the optimal number of topics (Blei et al., 2003; Churchill & Singh, 2022).

A set of words is called coherent if they fit together meaningfully in an interpretable way. The coherence calculates a topic’s score by comparing the semantic similarity of its words. For example, the set of words

Output: The set of optimal topics Sk* = {T1, T2, …, Tk}.

1. Define D as the list of 300 documents created using the title, abstract and keywords of articles.

2. Pre-processing:

a. Remove punctuation from D.

b. Convert all text in D to lowercase.

c. Remove standard stop-words (e.g., ‘a’, ‘an’ and ‘the’).

d. Split the cleaned text into individual tokens.

3. Construct a document-term matrix (DTM) of size 300 × unique words using the tokens.

4. Construct a Dictionary ← list of unique words across all articles.

5. Topic Modelling and Coherence Calculation:

for k = 2 to 30

a. Generate topic set Sk = {T1, T2, …, Tk} ← LDAmodel(Dictionary, DTM).

b. Compute the average coherence score Cv(k) for the topic set Sk.

end for

6. Determine Optimal Topics:

a. Find Sko, where ko = argmax{Cv(k), k = 2, …, 30}.

b. Refine Sko based on the subjective assessment of the resulting topics to obtain Sk* with k* ≤ ko.

7. Return Sk*.
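Steps 5 to 7 of the procedure above can be sketched as a small selection routine. This is a hedged illustration rather than the authors' implementation: `score_fn` is a hypothetical callable that would, in practice, fit an LDA model with k topics and return its average coherence, and the subjective refinement of step 6b is modelled simply as an analyst-chosen upper bound `k_cap`.

```python
def select_num_topics(score_fn, k_values, k_cap=None):
    """Return (k_o, k_star, scores).

    score_fn(k) gives the average coherence for a model with k topics (step 5).
    k_o is the arg max of those scores (step 6a); k_star applies an optional,
    analyst-chosen cap so that k_star <= k_o (step 6b).
    """
    scores = {k: score_fn(k) for k in k_values}
    k_o = max(scores, key=scores.get)
    k_star = k_o if k_cap is None else min(k_o, k_cap)
    return k_o, k_star, scores

# Hypothetical coherence values, used only to exercise the function.
toy_scores = {2: 0.31, 3: 0.35, 4: 0.42, 5: 0.40, 6: 0.38}
k_o, k_star, _ = select_num_topics(toy_scores.get, range(2, 7), k_cap=3)
print(k_o, k_star)  # 4 3
```

Passing the scorer in as a function keeps the selection logic independent of any particular topic-modelling library.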

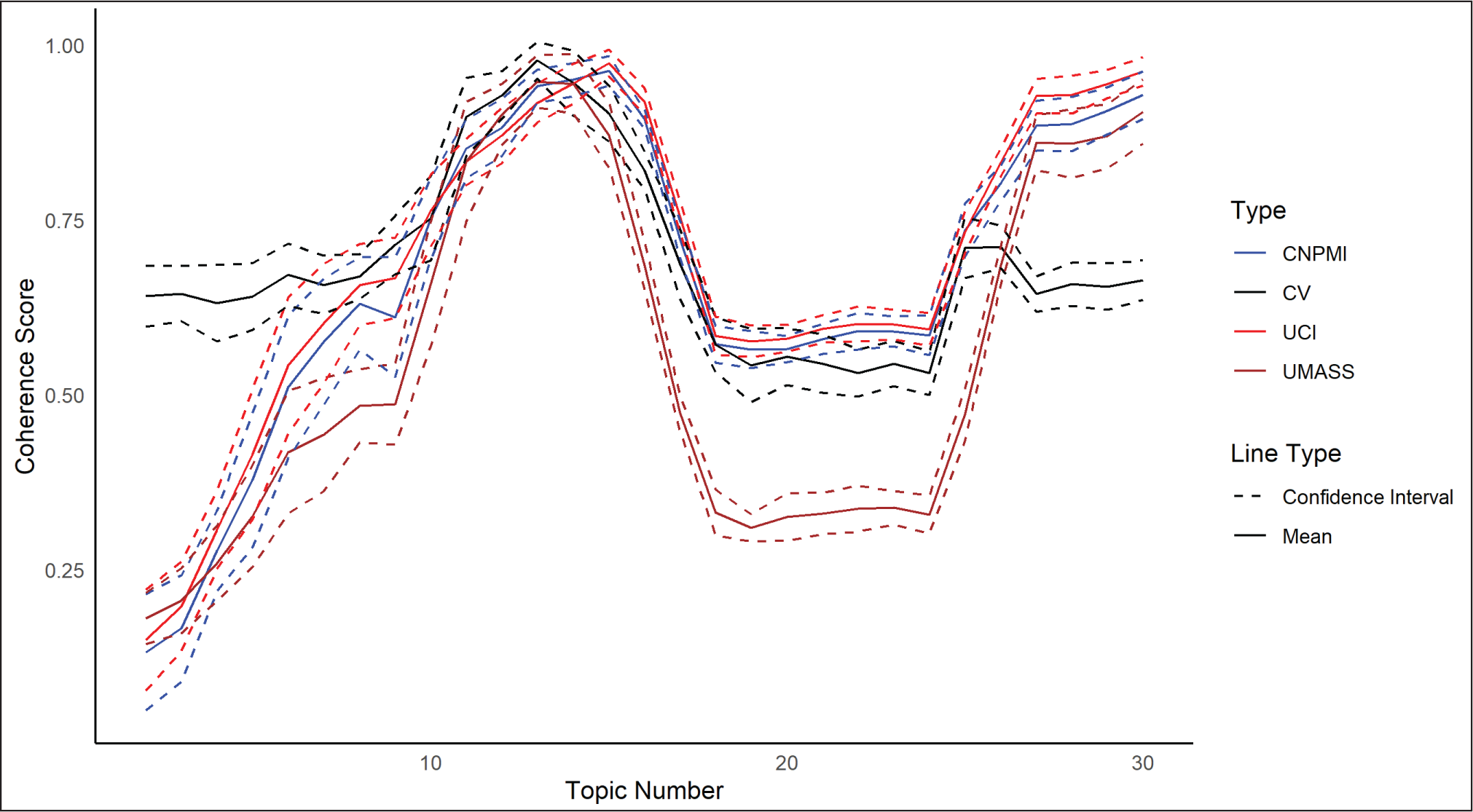

We analysed the average coherence of the ‘best topics found by LDA’ as a function of k, ranging from 2 to 30. The objective is to find the value of k that maximizes the average coherence score. To obtain a robust estimate of the optimal number of topics, we repeated the entire procedure of Cv computation 10 times with different random seeds and computed the mean Cv curve along with its 95% confidence intervals (see Figure 5). We generated such curves for three other coherence scores, CNPMI, CUCI and CUMass, which measure semantic similarity using different sizes or types of sliding windows and use either normalized pointwise mutual information (NPMI) or pointwise mutual information (Campagnolo et al., 2022). Figure 5 presents the standardized values of these four measures for a fair comparison. All these curves exhibit a similar pattern in terms of maxima, minima and the change of slope.
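The averaging over seeds and the standardization used for comparison can be sketched as follows. The article does not say how the 95% intervals were computed, so the normal approximation below is an assumption (with only 10 runs, a t quantile would give a somewhat wider interval), and the coherence values are hypothetical.

```python
from statistics import NormalDist, mean, stdev

def mean_and_ci(samples, level=0.95):
    """Mean and normal-approximation confidence interval for one value of k."""
    m = mean(samples)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    half_width = z * stdev(samples) / len(samples) ** 0.5
    return m, m - half_width, m + half_width

def standardize(curve):
    """Rescale a curve to zero mean and unit standard deviation."""
    m, s = mean(curve), stdev(curve)
    return [(v - m) / s for v in curve]

# Hypothetical coherence scores: keys are k, list entries are seeds.
runs = {2: [0.30, 0.32, 0.31], 3: [0.40, 0.44, 0.42], 4: [0.36, 0.35, 0.37]}
mean_curve = [mean_and_ci(runs[k])[0] for k in sorted(runs)]
z_curve = standardize(mean_curve)
```

Standardizing each coherence measure in this way puts curves with different native scales on a common axis, which is what allows the four measures to be overlaid in one figure.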

Figure 5. Distribution of Cv, CNPMI, CUCI and CUMass with Respect to the Number of Topics k ∈ {2, 3, …, 30}.

It is clear from Figure 5 that the average coherence curves exhibit multiple peaks, with the first peak occurring at k ∈ {12,13,14}. The coherence then increases to similar maximal values after k = 27. The reason for the second peak is perhaps the formation of duplicate topics with fewer documents. Thus, simply maximizing the average coherence score with respect to k appears to be inadequate (for our data set), and subjective human judgement would be required to find the optimal number of topics. The qualitative assessment of the topics obtained by the analysis revealed that the themes start to repeat for k > 9. As a result, we used k = 9 as the optimal number of topics to properly capture the prominent themes from our database of 300 articles.

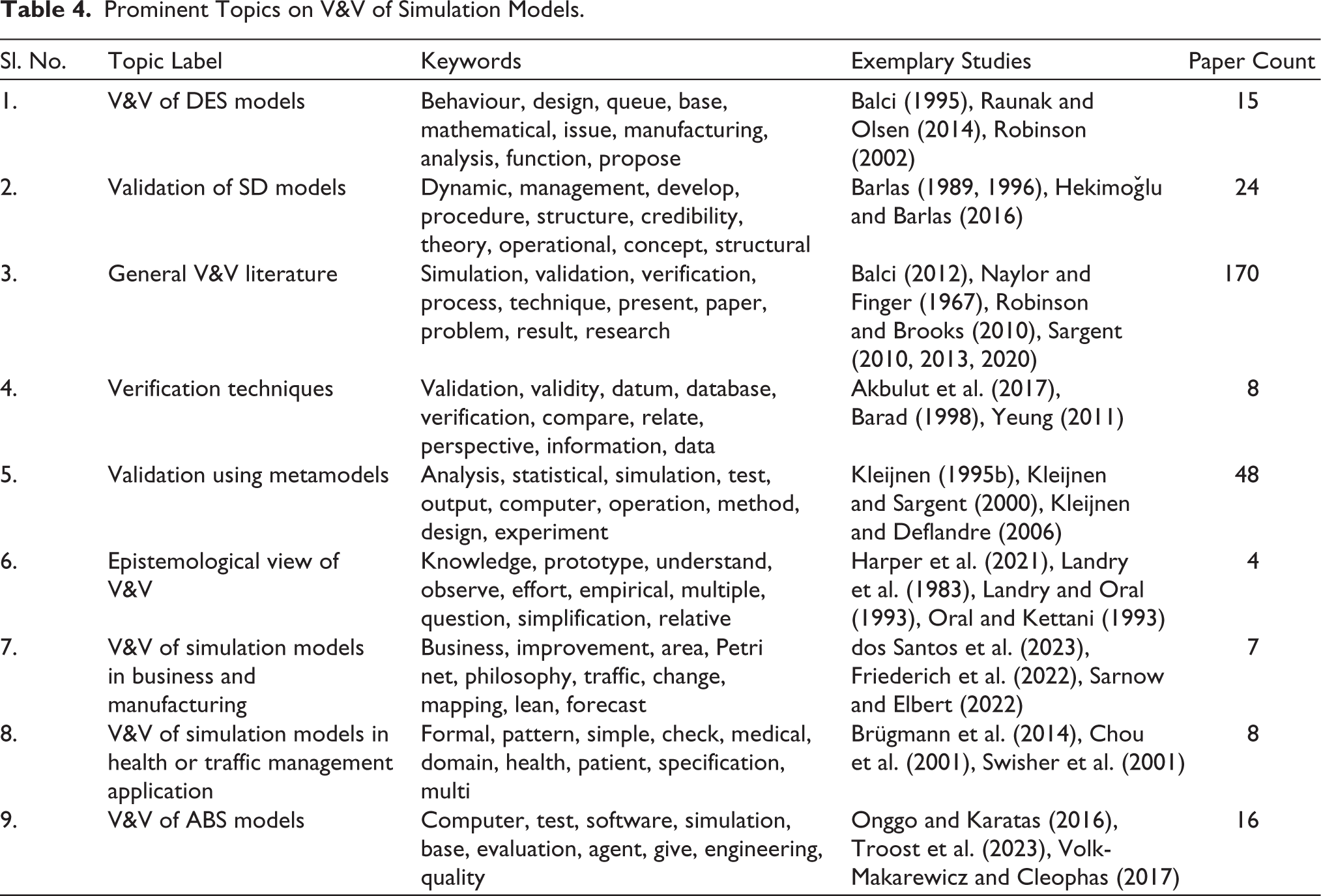

The LDA model generates a set of keywords for each topic (Churchill & Singh, 2022). We labelled these topics based on our experience. For instance, the second topic in the final list of nine topics comprised dynamic, management, development, procedure, structure, credibility, theory, operational, concept and structural. Keywords such as ‘dynamic’, ‘structure’, ‘structural’ and ‘credibility’ suggested the label ‘Validation of SD models’, as the literature on validating SD models focuses on their structure. Table 4 presents the keywords generated by the LDA model for each topic, the topic labels assigned by us, a list of noteworthy publications and the count of papers related to each topic found by the Gensim LDA package. Note that one paper may contribute to two or more themes. The next section discusses these labelled topics in more detail and is a response to RQ1. This thematic labelling helped us categorize and summarize the vast literature on V&V of simulation models, which in turn facilitated the understanding of the evolution of V&V methods discussed in the ‘Evolution of V&V Methodologies’ section. The model was trained with nine topics using a chunk size of 10 and 10 passes over the corpus, with a symmetric Dirichlet prior of 0.2 for the document–topic distribution (α). The model was further configured with 100 iterations and a fixed random state of 100 to maintain consistency across runs.
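The reported training configuration maps onto Gensim's `LdaModel` keyword arguments roughly as shown below. This is a sketch under stated assumptions, not the authors' script: the helper name `fit_lda` is hypothetical, the input is assumed to be a list of token lists, and Gensim must be installed for the function to run (the import is deferred so the configuration can be inspected without it).

```python
# Hyperparameters as reported in the text; the keyword names are Gensim's.
LDA_KWARGS = {
    "num_topics": 9,
    "chunksize": 10,
    "passes": 10,
    "alpha": 0.2,        # a scalar gives a symmetric document-topic prior
    "iterations": 100,
    "random_state": 100,
}

def fit_lda(token_lists, **overrides):
    """Fit an LDA model on tokenized documents (requires the gensim package)."""
    from gensim.corpora import Dictionary
    from gensim.models import LdaModel

    dictionary = Dictionary(token_lists)
    corpus = [dictionary.doc2bow(tokens) for tokens in token_lists]
    params = {**LDA_KWARGS, **overrides}
    return LdaModel(corpus=corpus, id2word=dictionary, **params)
```

Calling `fit_lda(tokens).show_topics(num_topics=9, num_words=10)` would then list the ten highest-weighted words per topic, which is the raw material for manual labelling.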

Table 4. Prominent Topics on V&V of Simulation Models.

Thematically Labelled Topics

Of the nine topics, there are separate themes on V&V of DES models, SD models and ABS models; two themes for ‘verification methodology’ and ‘validation using metamodels’; one theme comprises general V&V literature; and a few other themes address interesting health, traffic, business management and manufacturing applications. Next, we briefly review important citations from each topic.

V&V of DES Models

Radiya and Sargent (1987) developed a formalism for DES models based on six different elements to analyse and validate a DES model. Barad (1994) introduced a methodology based on decomposing timed Petri nets (TPN) for the verification of a DES model. However, the methodology’s shortcoming is that it applies only to steady-state and non-terminating DES models. Jacobson and Yücesan (1999) discussed the validation of a DES model structure using the theory of computational complexity to analyse different components of a DES model. Robinson (2002) explored the quality dimension in terms of three components: content, process and outcome of a DES model for business applications. Chwif et al. (2006) proposed a prescriptive V&V technique for a DES model when there are no data on the real system being studied. Strang (2012) showed how a basic spreadsheet tool can be used to verify the distributional assumptions of arrival and service rates. Can and Heavey (2012) compared genetic algorithms and artificial neural networks for building metamodels for DES models. Raunak and Olsen (2014) developed criteria to measure the validation of a DES model. Montevechi et al. (2022) proposed ‘generative adversarial networks’ to compare the DES model output to the real-system output. Law (2024) discussed various tests, such as the Kolmogorov–Smirnov test, spectrum test and lattice test, for assessing the quality of the random number generator, an important component of DES model validation. Rowe and Wright (2001) discussed a method whereby expert analysis can be used to validate simulation models independent of the input and output data. Balci (1995) and Raunak and Olsen (2014) emphasized face validation of input and output data through subject matter experts, goodness-of-fit tests and sensitivity analysis. Lastly, Lugaresi et al. (2022) presented an innovative online validation technique for evaluating the accuracy of DES models used for manufacturing plants.

Validation of SD Models

The validation of SD models focuses on the conceptual modelling part (Tako & Robinson, 2010), as the peculiarities of SD models make ordinary statistical tests unfit for validation (Barlas, 1989). Taylor (1983) presented one of the earliest papers to discuss the validation of SD models and introduced the concept of ‘loop analysis’ for this purpose. Barlas (1989) classified validation tests for such models into two categories: structural validity tests and behaviour validity tests. Barlas argued that the logical sequence of tests should be as follows: first, structural validity tests; second, structurally oriented behaviour tests; and finally, pattern prediction tests. The paper proposed a six-step behaviour pattern validation procedure designed to overcome the limitations of standard statistical tests, which often assume normality, independence and stationarity of data (see Barlas, 1996; Barlas & Carpenter, 1990, for more details). Hekimoğlu and Barlas (2016) demonstrated the sensitivity analysis of SD model output behaviour patterns using a linear regression model. Edali (2022) addressed the issue of manually identifying the parameter space for different output behaviour models in sensitivity analysis and proposed a random forest-based metamodel approach. Pruyt and Kwakkel (2022) presented an exploratory SD modelling approach that evaluates model performance based on its ability to reproduce plausible system behaviour under varying assumptions rather than precise numerical prediction. Their work emphasizes that in policy-oriented decision-making contexts, validation should focus on behavioural robustness across scenarios rather than relying solely on traditional goodness-of-fit measures.

General V&V Literature

This theme consists of papers that discuss the V&V of simulation models, regardless of the simulation technique or area of application, including seminal contributions by Robert G. Sargent and Osman Balci. The largest number of papers has been assigned to this theme by the Gensim LDA package, which is expected, given that many papers in our database discuss V&V without focusing on any specific simulation technique or application. Naylor and Finger (1967), the oldest work in our database, discussed validation from philosophical viewpoints such as ‘rationalism’, ‘empiricism’ and ‘positive economics’, and various goodness-of-fit tests, such as ‘analysis of variance’ and the ‘Kolmogorov–Smirnov test’, among others, for validating simulation models. Sargent has consistently discussed the inclusion of V&V at every stage of the simulation study, ranging from Sargent (1981) to Sargent (2020). The original framework in Sargent (1981) defined four distinct phases of V&V: ‘data validation’, ‘conceptual model validation’, ‘model verification’ and ‘operational validation’. The data validation process checks both the quality of the original data and the accuracy of any transformation process performed on real data. Synthetic data are tested using an appropriate experimental design. Conceptual model validation evaluates the model’s underlying theories, assumptions, mathematical structures, logic, links and causal relationships using face validation, traces and distribution fitting to data at the model-building stage. The use of advanced simulation software helps reduce model verification efforts; however, static and dynamic testing can be used to check whether the conceptual model has been correctly coded and executed. The final step, operational validation, checks whether the model output is reasonable over the system’s range by comparing the real and simulated outputs graphically, conducting hypothesis tests and calculating confidence intervals.
A more complex framework was developed by Sargent (2013) that included separate structures for ‘real system’ and ‘simulation model’. Robinson and Brooks (2010) discussed the idea of IV&V of an industrial simulation model developed for nuclear decommissioning and waste management. The IV&V review emphasized ensuring that the right V&V steps were taken during the model-building process, rather than doing ‘after-the-fact V&V’. Balci (2012) explored 12 major processes of the lifecycle of large-scale complex simulation models from problem formulation to model building. Robinson et al. (2020) outlined a sequential perspective on simulation model validation, in which conceptual validation through the appropriate specification of system boundaries, feedback mechanisms and aggregation levels precedes any output-based assessment. Their work suggests that deficiencies at the conceptual modelling stage may carry over into simulation results and cannot be adequately addressed solely through subsequent statistical validation. Fonseca i Casas (2023) discussed a taxonomy comprising three categories of assumptions: ‘systemic data assumptions’, ‘systemic structural assumptions’ and ‘simplification assumptions’. The paper presented a ‘W3H testing table’ that facilitates the systematic selection of validation tests for each category of assumption. The authors suggested an ‘assumptions table’ as a systematic method for recording and overseeing assumptions, encompassing their validation status and review criteria. This is especially crucial in the realm of Industry 4.0 and digital twins, where the simulation model requires ongoing validation as the actual system progresses.
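The confidence-interval comparison mentioned under operational validation can be illustrated with a minimal sketch. It assumes independent observations and uses a normal approximation for the interval; both are simplifying assumptions, since simulation output is often autocorrelated. The throughput figures are hypothetical.

```python
from statistics import NormalDist, mean, variance

def mean_difference_ci(real, simulated, level=0.95):
    """Confidence interval for mean(real) - mean(simulated), unequal variances."""
    diff = mean(real) - mean(simulated)
    se = (variance(real) / len(real) + variance(simulated) / len(simulated)) ** 0.5
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return diff - z * se, diff + z * se

# Hypothetical daily throughput figures (units per day).
real_output = [102, 98, 105, 99, 101, 97, 103, 100]
sim_output = [100, 104, 99, 101, 103, 98, 102, 105]
low, high = mean_difference_ci(real_output, sim_output)

# If the interval contains zero, the data do not contradict the model's mean output.
consistent = low <= 0 <= high
```

An interval that excludes zero signals a systematic gap between model and system; whether that gap matters still depends on the purpose for which the model was built.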

Verification Techniques

Woodward and Mackulak (1997) proposed event graphs based on reverse engineering to detect logical errors in both static and dynamic properties of a DES model code. Krishnamurthi and Thallikar (1998) proposed an algorithm to detect deadlock in DES models and integrated it into the SIMAN commercial simulation language. Barad (1998) proposed TPN as an analytical verification technique, specifically for steady-state DES or queuing simulation models. Krahl (2005) discussed various techniques to debug a simulation model, such as ‘read the manual’, animation, model traces and pre-emptive bug prevention. Yeung (2011) addressed deadlocks for a multi-agent manufacturing system. Roungas (2016) suggested an automated verification technique based on the unit testing method. Yacoub et al. (2020) developed a DES model–based formalism in PROMELA to verify sophisticated software like video games. Sargent (2020) summarized current verification practice according to the approach used to build the simulation model. The first approach is to use high-level programming languages such as Python and R to build a complete simulation model. The second one is to use a ‘simple’ simulation language that provides some basic functionality such as event or time flow routines or random number generators. The third approach is to use ‘advanced’ simulation packages that provide some advanced functionality, such as model execution or graphic capabilities. The final approach is to utilize full-blown simulation packages, such as AnyLogic or Simio, which offer comprehensive functionalities including model building, execution, testing, scenario generation and animation. Verification effort is expected to decrease as one moves from the first approach to the fourth.

Validation Using Metamodels

A metamodel is a statistical surrogate of a simulation model that approximates the relationship between the inputs and outputs of a process/system/phenomenon and aims to capture its essential characteristics (Kleijnen, 1995b, 2009). Metamodels are widely utilized in the simulation literature for four primary purposes: (a) understanding the structure and input–output relationship of a system, (b) prediction, (c) optimization and (d) validation of a simulation model (Kleijnen & Sargent, 2000). When used in conjunction with the design of simulation experiments (also referred to as computer experiments), metamodels can be used to perform a sensitivity analysis of a simulation model (Kleijnen, 1995b). Jack P. C. Kleijnen is a pioneer in this field. Kleijnen (2009) mainly discussed regression and kriging metamodels (KMs). Other types of metamodels for validation include Bayesian metamodeling (Pousi et al., 2013), multiple regression integrated K-means clustering algorithm (Irfanoglu et al., 2013), genetic programming-based and ANN-based metamodels for DES models (Can & Heavey, 2012) and random forest metamodels (Edali & Yücel, 2019). This category ranks second in paper count, as the KM is a much-discussed topic in core statistics and machine learning literature.

Epistemological View for V&V

Landry et al. (1983) started a discussion on the dimensions of trust, credibility and confidence in simulation models. Gass (1977) and Harper et al. (2021) advocated the collaboration between the modeller and different stakeholders (e.g., domain experts, end users or practitioners) during different stages of simulation studies to help build trust in the simulation models. Landry and Oral (1993) further explored the validation of models from an efficiency (doing things right) versus effectiveness (doing the right thing) standpoint.

V&V of Simulation Models in Business and Manufacturing

Production, logistics and manufacturing applications are dominant in the literature, with DES models most commonly used to build simulation models. Cochran (1987) proposed two quantitative V&V techniques, based on the Turing test and mathematical programming, for validating large-scale production simulation models. Rabe et al. (2008) claimed that less attention had been given to the V&V of simulation models in production and logistics compared to defence applications. Sarnow and Elbert (2022) developed a qualitative framework for V&V of ‘generic simulation models’ (GMs), a type of DES model, for solving a logistics problem. This study highlighted the potential of reusable models in operational decision-making processes. dos Santos et al. (2023) used ‘K-nearest neighbours (K-NN)’ classification with a ‘p-control chart’ to periodically validate these types of simulation models. Friederich et al. (2022) proposed a data-driven framework for building simulation models as a basis for digital twins for smart factories. Bitencourt et al. (2024) presented a systematic literature review on V&V of digital twin models for manufacturing applications.

V&V of Simulation Models in Health and Traffic Management Applications

This topic includes papers that used simulation models in different health or traffic management applications and discussed their V&V. Traffic–highway and health simulation models are common in operations management, decision sciences, information systems and engineering, and share methodological similarities to our primary applications of interest in business and manufacturing.

For instance, Fitzsimmons (1971) designed a simulation model (SIMSCRIPT) to assess emergency medical systems. Swisher et al. (2001) built a DES model of a physician’s clinic and validated it using various tests from Balci (1998). Bountourelis et al. (2011) modelled an intensive care unit that incorporated two key parameters: patient blocking and bed occupancy. They utilized animation for conceptual model validation and graphical comparison of key parameters with real data for operational validation.

Ryan (1979) employed graphical methods to validate an existing bus operation simulation model. Rousseau and Bauer (1996) discussed factor analysis and design of experiments (DOE) for sensitivity analysis of multivariate outputs from large-scale transportation simulation models. Afshar and Azadivar (1992) developed a microscopic traffic simulation model to investigate freeway work zones with lane closures and safety hazards, which was validated against field data using graphical tools. Rao et al. (1998) discussed a multistage validation framework for traffic simulation models that consisted of conceptual and operational validations using a t-test and a Kolmogorov–Smirnov test. Chou et al. (2001) built a computer simulation model of pre-timed traffic signals for an urban city in Taiwan to analyse and reduce the average waiting time of vehicles at intersections of the city, validating it via a χ² goodness-of-fit test. Brügmann et al. (2014) developed a traffic simulation package in Maude, a declarative programming language, utilizing state-of-the-art techniques for the V&V of the model.

V&V of ABS Models

An ABS model is typically a combination of a DES model with object-oriented programming. Thus, many of the V&V techniques for DES are also applied to ABS. However, validating the rules that regulate the agents of a model is the primary concern in ABS models (Onggo & Foramitti, 2021). Verification techniques, such as structured code, debugging walk-throughs and unit testing, are popular in this literature (North & Macal, 2007). Arifin et al. (2010) presented one of the earliest articles that proposed a V&V methodology specifically for the ABS model. They proposed a docking technique that compartmentalizes a simulation model, blocking the flow of errors from one compartment to another. Other notable methodological contributions include parameter sweeping, white box validation, black box validation, code debugging (Gerrits et al., 2017), a generic testing framework (Gürcan et al., 2013), a modified metamorphic technique (Olsen & Raunak, 2016), a test-driven approach (Onggo & Karatas, 2016) and a meta-algorithm (Volk-Makarewicz & Cleophas, 2017). Gore et al. (2017) introduced a statistical debugging–based verification approach for ABS that systematically analyses execution traces to identify behavioural deviations at the agent level. By linking individual agent interactions to emergent macro-level outcomes, their method enables quantitative trace validation specifically suited to the path-dependent and non-linear dynamics characteristic of ABSs.

Evolution of V&V Methodologies

We manually reviewed the relevant articles in our database to outline the evolution of quantitative methods developed in the literature for the V&V of simulation models. This addresses RQ2.

Verification Methodologies

The objective of verification is to find and correct bugs or errors in simulation code. A majority of the verification techniques in the literature are qualitative in nature or present general debugging guidelines (Balci, 1995; Roungas, 2016), but none are without issues (Kleijnen, 1995b).

From a quantitative standpoint, Kleijnen (1995b) discussed testing of subroutines that generate pseudorandom and non-uniformly distributed random numbers. Krishnamurthi and Thallikar (1998) proposed a deadlock detection algorithm and interfaced it with the commercial simulation language ‘SIMAN’ for DES models. Yeung (2011) discussed the issue of deadlocks in multi-agent manufacturing systems and proposed a formal verification procedure to identify them.
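To make the idea of deadlock detection concrete, the following minimal sketch searches a wait-for graph for a cycle. It is not the algorithm of Krishnamurthi and Thallikar (1998) or the procedure of Yeung (2011); the graph representation, the entity names and the function name `find_deadlock` are assumptions introduced only for illustration.

```python
from typing import Dict, List, Optional


def find_deadlock(wait_for: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle in a wait-for graph, or None if there is none.

    wait_for[a] lists the entities that entity `a` is waiting on.
    A cycle means every entity on it is blocked by another entity on it.
    """
    WHITE, GREY, BLACK = 0, 1, 2      # unvisited, on current path, finished
    colour = {node: WHITE for node in wait_for}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        colour[node] = GREY
        path.append(node)
        for nxt in wait_for.get(node, []):
            state = colour.get(nxt, WHITE)
            if state == GREY:         # back edge: the path loops onto itself
                return path[path.index(nxt):] + [nxt]
            if state == WHITE:
                cycle = visit(nxt)
                if cycle:
                    return cycle
        path.pop()
        colour[node] = BLACK
        return None

    for start in list(wait_for):
        if colour[start] == WHITE:
            cycle = visit(start)
            if cycle:
                return cycle
    return None


# Hypothetical example: three machines each waiting on the next one.
print(find_deadlock({"M1": ["M2"], "M2": ["M3"], "M3": ["M1"]}))
```

In a DES setting, such a check would typically be run on the blocking relationships recorded at a given simulation time; how those relationships are extracted depends on the simulation language and is outside this sketch.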

Other articles presented interesting verification methodologies that compared the simulation model output with that of a metamodel, a new simulation model or a mathematical model. For instance, Barad (1998) used TPNs to decompose a queue simulation model into a Petri net graph and compared its results with the simulation model output. Akbulut et al. (2017) proposed event-oriented model-building tools such as ‘simulation graphs’ as an alternative to ‘process-oriented’ tools like AnyLogic or Simio, and compared the outputs to verify their simulation models. Henderson and Bryce (2019) used a DES model, called ‘force flow model’ (FFM), to simulate the manpower dynamics of the Canadian armed forces and compared the simulation model output with the analytical results obtained from a differential equation model.
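The general idea of verifying simulation code against a mathematical model can be illustrated with a textbook case rather than any of the models cited above. The sketch below simulates an M/M/1 queue and compares the mean waiting time in queue with the known steady-state formula λ/(μ(μ − λ)). The function names, the rates and the run length are assumptions chosen for this example.

```python
import random


def simulate_mm1_mean_wait(arrival_rate: float, service_rate: float,
                           n_customers: int, seed: int = 1) -> float:
    """Mean waiting time in queue of an M/M/1 queue via the Lindley recursion."""
    rng = random.Random(seed)
    wait = 0.0      # waiting time of the current customer (system starts empty)
    total = 0.0
    for _ in range(n_customers):
        total += wait
        service = rng.expovariate(service_rate)
        interarrival = rng.expovariate(arrival_rate)
        # Lindley recursion: W_{n+1} = max(0, W_n + S_n - A_{n+1})
        wait = max(0.0, wait + service - interarrival)
    return total / n_customers


def analytical_mm1_mean_wait(arrival_rate: float, service_rate: float) -> float:
    """Steady-state mean waiting time in queue: lambda / (mu * (mu - lambda))."""
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable unless arrival_rate < service_rate")
    return arrival_rate / (service_rate * (service_rate - arrival_rate))


simulated = simulate_mm1_mean_wait(0.5, 1.0, n_customers=200_000)
expected = analytical_mm1_mean_wait(0.5, 1.0)
relative_gap = abs(simulated - expected) / expected
print(f"simulated={simulated:.3f} analytical={expected:.3f} gap={relative_gap:.1%}")
```

A large gap that persists as the run length grows would point to a coding error; a small gap supports, but does not prove, a correct implementation.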

Validation Methodologies

Recall that validation refers to assessing how close a conceptual model is to a real system (Sargent, 2020). Assuming that the conceptual model is coded (programmed) correctly, the objective of ‘validation’ is to assess the accuracy of the simulation model. The applicability of the validation technique depends on the context, such as the type of simulation (i.e., DES, SD, hybrid, ABS) and the availability of inputs and outputs of the real-world system. Data from the real system play a vital role in validating a simulation model (Kleijnen, 1995a). In the categorizing task of topic modelling, Topics 2 and 5 (and partly Topic 1) revealed that validation methods vary based on the availability of real-system data. Therefore, we present the evolution of validation methodologies under three categories: (a) both input and output of real-world system data are available, (b) only real-world system outputs are available (and not the inputs), and (c) neither input nor output data of the real-world system are available.

Availability of Both Input and Output Real Data

This scenario, known as a trace-driven simulation study, involves running the simulation model on a given set of inputs and comparing the output set with the real-system output.

The relevant literature starts with a basic Student’s t-test proposed by Kleijnen (1995b) that tests the difference between the average simulated output and the average real-system output. In the same paper, Kleijnen proposed an F-distribution-based naive test using a two-dimensional hypothesis; that is, the means of simulated output and real output are identical, and there is a positive correlation between simulated and real output. Mathematically, the null hypothesis is H0: β0 = 0 and β1 = 1, where β0 and β1 are coefficients of the linear regression model E(w|v) = β0 + β1v, where v and w are real-system and simulation outputs, respectively. Kleijnen et al. (1998) observed that the naive test frequently rejected valid models and proposed a modification referred to as a novel test, which tests for the equality of means and variances of the two sets of outputs (i.e., simulation model and the real system) corresponding to the given set of inputs. Mathematically, the null hypothesis is H0: γ0 = 0 and γ1 = 0, where γ0 and γ1 are coefficients of the linear regression model E(D|Q = q) = γ0 + γ1q, where D represents the difference between the real and simulation output, and Q is the sum of the two outputs. Kleijnen et al. (2001) noted that the normality assumption/approximation may not hold for a small number of observations, making the F-test invalid. As a result, a bootstrap methodology was proposed for validating the simulation model. Subsequent advances include Martens et al. (2006), who introduced a neural network based on a multi-layer perceptron and radial basis function to validate simulation models, while dos Santos et al. (2023) proposed the use of a digital twin to validate the model using updated data with machine learning and control charts.
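The two regression-based hypotheses above can be turned into F statistics with a few lines of code. The sketch below is a plain least-squares illustration under the usual normality assumption, not a reproduction of the cited authors' implementations. It uses the standard joint F statistic for two restrictions, F = [(SSE under H0 − SSE of the fitted line)/2] / [SSE of the fitted line/(n − 2)], which is referred to an F distribution with 2 and n − 2 degrees of freedom. All function names and the sample data are assumptions made for this example.

```python
from typing import Sequence, Tuple


def ols_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Fit y = b0 + b1*x by least squares; return (b0, b1, residual sum of squares)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b1 = sxy / sxx
    b0 = mean_y - b1 * mean_x
    sse = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
    return b0, b1, sse


def joint_f_statistic(sse_restricted: float, sse_full: float, n: int) -> float:
    """F statistic for two joint restrictions on a two-parameter regression."""
    return ((sse_restricted - sse_full) / 2.0) / (sse_full / (n - 2))


def naive_test_f(real: Sequence[float], sim: Sequence[float]) -> float:
    """H0: beta0 = 0 and beta1 = 1 in E(w | v) = beta0 + beta1 * v."""
    _, _, sse_full = ols_line(real, sim)
    sse_h0 = sum((w - v) ** 2 for v, w in zip(real, sim))  # residuals when w = v
    return joint_f_statistic(sse_h0, sse_full, len(real))


def novel_test_f(real: Sequence[float], sim: Sequence[float]) -> float:
    """H0: gamma0 = 0 and gamma1 = 0 in E(D | Q = q) = gamma0 + gamma1 * q."""
    d = [v - w for v, w in zip(real, sim)]  # D: real minus simulated output
    q = [v + w for v, w in zip(real, sim)]  # Q: sum of the two outputs
    _, _, sse_full = ols_line(q, d)
    sse_h0 = sum(di ** 2 for di in d)       # residuals when D = 0
    return joint_f_statistic(sse_h0, sse_full, len(real))


# Hypothetical paired outputs from a trace-driven study (same inputs for both).
real_output = [10.2, 11.5, 9.8, 12.1, 10.9, 11.3]
sim_output = [10.0, 11.9, 9.5, 12.4, 10.6, 11.6]
print("naive F:", naive_test_f(real_output, sim_output))
print("novel F:", novel_test_f(real_output, sim_output))
```

Each statistic would be compared with the chosen critical value of the F distribution with 2 and n − 2 degrees of freedom. For small samples, where normality is doubtful, the bootstrap of Kleijnen et al. (2001) is the alternative the literature suggests.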

Availability of Only Output Real Data

Typically, the input data for the real-world system is unavailable if the objective is to build a simulation model that matches a real system’s historical data or when a real-world system is observable but there is no controllable input that can be tuned or experimented with (Kleijnen, 1999). Consequently, the basic notion of pairing the outputs of the simulation model and the real system is violated.

Naylor and Finger (1967) discussed several tests, such as analysis of variance, χ² tests, factor analysis, Kolmogorov–Smirnov tests and a few others that could measure the ‘goodness of fit’ of the simulated model output with the real-system (or historical) output. Hsu and Hunter (1974) suggested a Bayesian approach to compare real and simulation-generated data. Ringuest (1986) developed a χ² statistic to test whether the difference between simulated and real output falls within a permissible limit. Kleijnen (1999) applied established methods, including the two-sample Student’s t-test, Johnson’s modified Student’s statistic and jackknifing, for the validation of simulation models in this context. Borgonovo et al. (2021) examined the role of uncertainty quantification in simulation models used for decision-making and highlighted how variability in input assumptions can significantly influence model behaviour. Their study underscores the importance of incorporating input uncertainty into the validation process, particularly in management contexts where reliable parameter estimates are often unavailable. Doudareva and Carter (2022) provided a list of popular data-driven validation techniques, including nonparametric tests, factor analysis and time-series analysis.
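Because the two output samples are unpaired in this setting, goodness-of-fit measures compare their distributions rather than individual observations. As a minimal sketch, the function below computes the two-sample Kolmogorov–Smirnov statistic, that is, the largest absolute gap between the two empirical distribution functions. The function name and the sample values are assumptions made for this example.

```python
from typing import Sequence


def ks_two_sample_statistic(real: Sequence[float], sim: Sequence[float]) -> float:
    """Largest absolute gap between the two empirical distribution functions."""
    xs, ys = sorted(real), sorted(sim)
    n, m = len(xs), len(ys)
    i = j = 0
    d_max = 0.0
    while i < n and j < m:
        value = min(xs[i], ys[j])
        # Step past every observation equal to `value` in both samples (handles ties).
        while i < n and xs[i] == value:
            i += 1
        while j < m and ys[j] == value:
            j += 1
        d_max = max(d_max, abs(i / n - j / m))
    return d_max


# Hypothetical historical and simulated outputs of unequal sample size.
historical = [4.1, 5.3, 3.8, 6.0, 5.1, 4.7, 5.5]
simulated = [4.4, 5.0, 4.9, 5.8, 4.2]
print(ks_two_sample_statistic(historical, simulated))
```

For large samples, the statistic is commonly compared with the approximate 5% critical value 1.36·sqrt((n + m)/(nm)); for small samples, exact tables or a statistical package would be preferable.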

Non-availability of Real Data

There is an abundance of business management and manufacturing applications where real systems cannot be observed or experimented with (Kleijnen, 1999). In such cases, one can only hope to build robust simulation models, and sensitivity analysis serves as a popular approach to validate the influence of the inputs and the effect of structural changes on the model (Kleijnen, 1995, 1999).

Design of experiments (DOE) and metamodels are two key tools in the sensitivity analysis methodology. DOE is employed to identify optimal combinations of input parameters for running the simulation model, and an appropriate metamodel helps understand and analyse the relationship between the model’s inputs and outputs. Often, the choice of metamodel has an impact on the optimal design. Popular designs for determining the inputs of the simulation model include sequential design, full factorial design, fractional factorial design and orthogonal arrays (Kleijnen, 2017).
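As a small illustration of the simplest of these designs, the sketch below enumerates a full factorial design: every combination of the chosen factor levels becomes one simulation run. The factor names and levels are hypothetical, and the function name `full_factorial` is an assumption made for this example.

```python
from itertools import product
from typing import Dict, List, Sequence


def full_factorial(levels: Dict[str, Sequence[float]]) -> List[Dict[str, float]]:
    """Every combination of the listed factor levels, one dict per simulation run."""
    names = list(levels)
    return [dict(zip(names, combo))
            for combo in product(*(levels[name] for name in names))]


design = full_factorial({
    "arrival_rate": [0.5, 0.8],   # hypothetical factor names and levels
    "service_rate": [1.0, 1.2],
    "n_servers": [1, 2],
})
print(len(design))                # 2 * 2 * 2 = 8 runs
for run in design:
    print(run)
```

The number of runs grows multiplicatively with the number of factors and levels, which is why fractional factorial and sequential designs are used when each simulation run is expensive.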

The validity of a metamodel must be confirmed before sensitivity analysis. For regression-based metamodels, Kleijnen (1983) proposed cross-validation using the t-statistic, and Kleijnen and Deflandre (2006) proposed a bootstrap approach. Linear regression metamodels (LRMs) assume a linear relationship between simulation model inputs and outputs, which may consist of first-order or second-order polynomials, with optional interaction terms. Kleijnen (1995) recommended using full or fractional factorial designs to achieve high accuracy for LRMs. dos Santos and Nova (2006) showed that LRMs often fail to provide an accurate global fit for smooth response functions with arbitrary shapes and proposed the idea of non-linear metamodels (NLMs). Van Beers and Kleijnen (2003) introduced the use of KMs as an alternative to low-order polynomial metamodels in simulation experiments with large input domains (see Kleijnen, 2017, for more discussions on LRMs and KMs). Recently, Kleijnen and Van Beers (2022) proposed cross-validation-based tests for KMs.
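The cross-validation idea can be sketched for a first-order polynomial metamodel with a single input: each simulation run is left out in turn, the metamodel is refitted on the remaining runs, and the left-out output is predicted. The sketch reports raw prediction errors only; it does not compute the studentized statistic of Kleijnen (1983). The function names and data are assumptions made for this example.

```python
from typing import List, Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Least-squares intercept and slope of a first-order polynomial metamodel."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def loo_prediction_errors(x: Sequence[float], y: Sequence[float]) -> List[float]:
    """Refit the metamodel without run i, then predict run i; return the errors."""
    errors = []
    for i in range(len(x)):
        x_rest = [xj for j, xj in enumerate(x) if j != i]
        y_rest = [yj for j, yj in enumerate(y) if j != i]
        intercept, slope = fit_line(x_rest, y_rest)
        errors.append(y[i] - (intercept + slope * x[i]))
    return errors


inputs = [1.0, 2.0, 3.0, 4.0, 5.0]
linear_outputs = [2.0 + 3.0 * x for x in inputs]   # a line fits these exactly
curved_outputs = [x ** 2 for x in inputs]          # a line misfits these
print(max(abs(e) for e in loo_prediction_errors(inputs, linear_outputs)))
print(max(abs(e) for e in loo_prediction_errors(inputs, curved_outputs)))
```

Large leave-one-out errors, as in the curved case, indicate that a first-order polynomial is inadequate and that a higher-order or kriging metamodel may be needed before sensitivity analysis.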

Over the past decade, the scope of V&V in simulation modelling has expanded beyond traditional standalone environments to encompass data-driven and real-time simulation systems. Emerging paradigms such as digital twins, cloud-based distributed simulations and artificial intelligence (AI)–assisted model generation using large language models have introduced new validation challenges related to dynamic model updating, code reliability and consistency across heterogeneous computational platforms.

Research Gaps and Future Directions

Our comprehensive review and analysis of the literature on the V&V of simulation models has revealed several important research gaps that can serve as entry points for future research, directly addressing RQ3.

Although validation techniques often involve substantial quantitative methodologies, expert judgement does play a role. Verification methods remain largely qualitative, with suggestions and guidelines for careful debugging. This is the first important research gap, where more focus is required on developing novel methodologies to objectively verify the correctness of the computer codes that implement conceptual models.

The Schruben–Turing test (Kleijnen, 1995) is a popular tool for testing the validity of a simulation model, where the outputs of the real system and the simulation model are presented together to an expert. If the expert can segregate the two sets of outputs, then the simulation model is considered unreliable. Although the test is conceptually clear, it is typically conducted manually by an expert. Given the advancements in machine learning and AI, one could develop an automated segregation technique for the two sets of outputs. This would require a methodology more sophisticated than a simple clustering technique, particularly for SD or hybrid models with non-scalar responses.

The current literature on the validation of SD models primarily focuses on behaviour validation (Barlas, 1989). There is a scarcity of validation methodology that exploits the structure of the SD simulation model. Future research should focus on building customized validation techniques for SD, ABS and other hybrid simulation models that can exploit the structural arrangement of the model components, geometry and other important features of simulation model output.

Additionally, when the output, and not the input, of the real system is available, validation is akin to an inverse problem in computer experiments (Bhattacharjee et al., 2019; Ranjan et al., 2008, 2016). Consideration of relevant conceptual aspects from the larger domain of inverse problem methodologies for the validation of simulation models in this context can be both insightful and helpful.

In the absence of both input and output data of a real system, the reliability of a simulation model is typically assessed via sensitivity analysis. Moreover, Kleijnen has developed a series of methodologies on sensitivity analysis via linear regression and KMs when simulation model output is scalar in nature (e.g., in a DES model). However, sensitivity analysis for simulation models with non-scalar response (e.g., in SD models) has been inadequately discussed (preliminary work done by Hekimoğlu and Barlas, 2016). Furthermore, most of these methodologies for reliability testing assume continuous inputs and outputs. In this context, sensitivity analysis for simulation models with categorical outputs and/or mixed inputs (i.e., continuous, discrete, stochastic) is yet to be investigated and provides a promising avenue for future research.

Although a plethora of techniques have been developed and discussed for V&V of DES models, several for SD models and a few for ABS models, techniques for the V&V of other types of simulation models, such as hybrid models, distributed simulations and simulation gaming, are lacking. Each simulation model brings its unique challenges and presents an opportunity for innovation in developing efficient V&V methods. A generalized framework for determining appropriate V&V techniques across various types of simulation models will be greatly beneficial to practitioners in this area.

The utility of DOE in the context of V&V cannot be overstated. DOE provides a structured and efficient approach to explore the behaviour of simulation models across a range of input conditions. While DOE is well established for investigating input–output relationships and optimizing simulation runs, its broader potential in V&V remains largely untapped—especially in the context of SD and hybrid simulation models. For instance, DOE could be systematically employed to test model robustness under diverse scenarios (verification) or to structure comparisons between simulated and real-world outputs (validation). However, the current literature offers limited guidance on how to adapt DOE frameworks to handle the non-scalar, feedback-driven and often qualitative nature of SD model outputs. This presents a valuable opportunity to develop tailored DOE methodologies that align with the unique characteristics of complex simulation models and to encourage greater utilization of recent advancements in DOE techniques.

Finally, topic modelling, particularly in the context of a literature review, reveals an additional research gap. The current practice of finding the optimal number of topics involves optimizing the average coherence score (or a similar goodness-of-fit criterion) of topics along with expert judgement. Understandably, forming the topic labels is qualitative in nature, but finding the optimal number of topics can be made less subjective with further research. Dissatisfaction with the current practice is also noted by Baimakhanbetov (2023), Doogan and Buntine (2021) and Krasnov and Sen (2019). Although topic modelling has become a popular tool for analysing large bodies of simulation literature, current approaches typically treat all documents equally, regardless of their scholarly impact. However, citation counts can serve as a proxy for influence, and incorporating them into topic modelling, either during corpus construction or as a weighting mechanism, can highlight influential themes. Despite its potential, this integration remains underexplored in the context of simulation research, presenting a valuable opportunity for methodological innovation.

Concluding Remarks

Our study was motivated by the absence of a comprehensive systematic review of the vast V&V literature. The combination of topic modelling, bibliometric analysis and a manual review of 300 research articles provided an in-depth understanding of the literature on V&V of the simulation models commonly used in business management and manufacturing domains. The implementation of LDA for topic modelling resulted in the identification of nine prominent topics and research themes. The bibliometric and scientometric analyses helped find significant authors, important keywords and the edifying chronology of the literature. Moreover, the bibliometric maps and charts highlight the relationships among prominent authors, popular keywords, crucial documents and significant sources. This literature review has further enabled us to outline the evolution of popular V&V methodologies and identify the research gaps that can lay the foundation for future researchers in this area.

Through this research endeavour, we learned that the Winter Simulation Conference, European Journal of Operational Research, Journal of the Operational Research Society and International Journal of Production Research are some of the prominent platforms that disseminate research articles on the V&V of simulation models. Furthermore, Robert G. Sargent, Jack P. C. Kleijnen, Osman Balci and Yaman Barlas are pioneers and have made notable contributions in the field. Upcoming researchers can use this information on the overall domain to garner new insights into the V&V of simulation models.

For business management and manufacturing, DES models are the most popular, followed by SD and ABS models. Although several statistical tests have been developed to validate simulation models, the verification techniques continue to involve qualitative suggestions and guidelines. The validation tests can be categorized according to the availability of the inputs and outputs of the real system. When both inputs and outputs of the real system are available, standard tests such as the t-test and the χ² test can be used to validate the simulation models. However, if only the real-system output is available and not the inputs, then factor analysis and the Kolmogorov–Smirnov test are used. On the other hand, when both input and output are unavailable, sensitivity analysis with linear regression and KMs serves to validate the reliability of simulation models.

Although the contribution of this article stems from the inception of a comprehensive review on this topic, the categorization of articles into nine major themes and the evolution of quantitative methodologies provide valuable insights for researchers. Furthermore, the critical takeaway for future researchers lies in the identified research gaps. The key highlights include the inadequacy of the current practices in finding the optimal number of themes in topic modelling, the lack of quantitative methodologies for the verification of simulation models and the need to develop a generalized framework for deciding which V&V technique to use for different types of simulation models.

Our research relied on a corpus built for this specific study. While this corpus was extremely useful in arriving at our findings, it was built only on titles, keywords and abstracts for the topic modelling analysis. A quantitative analysis of the full text of the articles could yield more comprehensive results in future research.

Acknowledgements

We would like to thank Professor Abhishek Mishra for inviting us to submit this review paper.

Data Availability

The list of 300 articles used for building the text corpus in this systematic literature review is available from the authors upon reasonable request.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.