Abstract

The integration of algorithmic credit assessment tools into Indian commercial banking has transformed traditional approaches to risk evaluation. This study conducts a comparative analysis of credit-risk assessment accuracy before and after the introduction of credit automation technologies—including rule-based engines, machine-learning–based scoring and real-time data analytics. Drawing on panel data from selected public and private sector banks between 2015 and 2024, it evaluates automation’s effect on key risk indicators: default prediction accuracy, non-performing asset (NPA) incidence and credit-score volatility. Employing a quasi-experimental difference-in-differences (DiD) framework and model-performance metrics (AUC, precision–recall and Type I/II error rates), the study isolates the causal impact of automation on credit-decision outcomes. Complementary robustness checks using logistic regression and borrower-attribute calibration assess model drift and discriminatory power. Results demonstrate that algorithmic systems significantly improve prediction accuracy and reduce NPA ratios, particularly in high-volume retail and MSME portfolios. Yet, risk evaluation for unbanked and thin-file borrowers remains prone to algorithmic bias and data sparsity. By revealing this duality—efficiency gains alongside fairness risks—the article contributes to ongoing debates on responsible AI in financial services. It further proposes policy measures including hybrid credit-scoring architectures, continuous model validation and AI-governance standards to ensure accuracy and equity. The findings enrich theoretical discussions of automated risk management while offering actionable insights for sustainable digital transformation in Indian commercial lending.

Keywords

Introduction

The Indian commercial banking sector has witnessed a decade of rapid transformation driven by digitalization, regulatory reform and rising competition from fintech firms. At the centre of this transformation lies credit automation, where algorithms and machine-learning (ML) models increasingly guide lending decisions. These technologies promise faster turnaround times, operational efficiency and scalability compared to manual underwriting. Yet their influence on credit-risk assessment accuracy and borrower fairness remains debated. Scholars argue that algorithmic decision-making enhances analytical precision but may introduce opacity and bias, particularly when data quality or representativeness is limited.

Transitioning from heuristic credit evaluation to data-driven modelling has enabled banks to capture complex borrower behaviours through ensemble and deep-learning techniques, including gradient-boosting methods such as XGBoost and LightGBM. Empirical studies demonstrate superior predictive power of these models on metrics like AUC, recall and error minimization compared with logistic regression (Naik, 2023; SuasPress, 2023). While these methods enhance portfolio performance, especially in retail and MSME lending, they can also amplify disparities for thin-file or informal borrowers who lack structured credit data (MDPI, 2021; Springer, 2023). Consequently, algorithmic systems risk deepening financial exclusion under the guise of efficiency.

Another key dimension is non-performing assets (NPAs)—a chronic stress point for Indian banks. NPAs impair profitability, liquidity and investor confidence. Emerging research indicates that ML-based scoring can detect early warning signals of default and improve provisioning discipline (ResearchGate, 2025; SSRN, 2025). However, regulators caution that excessive dependence on static models without periodic recalibration can lead to misclassification in shifting macroeconomic conditions. This underscores the core dilemma between automation efficiency and risk containment that shapes the present inquiry.

Accordingly, this study conducts a comparative assessment of credit-risk accuracy pre- and post-automation using panel data from selected public and private sector banks from 2015 to 2024. Employing a quasi-experimental difference-in-differences (DiD) framework and performance measures such as AUC and NPA incidence, it evaluates whether automation has tangibly improved default prediction and asset quality. The research further examines distributional fairness across demographic borrower groups to identify potential algorithmic biases.

The study contributes empirically and theoretically to the discourse on algorithmic lending and risk governance, aligning with the Reserve Bank of India’s (RBI) evolving model validation and AI-governance directives (RBI, 2022; Sengupta et al., 2023). By bridging predictive analytics with responsible-AI principles, it aims to clarify whether automation represents risk mitigated—or merely risk reformulated—within Indian commercial banking.

Literature Review

Evolution of Credit-risk Assessment in Banking

Credit-risk assessment has long been the cornerstone of financial intermediation, determining not only individual borrower outcomes but also the solvency and stability of banking institutions. Historically, credit decisions in commercial banks were underpinned by manual underwriting techniques, grounded in the ‘5 Cs’ of credit: character, capacity, capital, conditions and collateral. These assessments were based on subjective judgement and qualitative borrower information, which introduced inconsistencies in default prediction, especially across heterogeneous borrower classes. Although these methods provided flexibility, they were vulnerable to personal bias, lacked reproducibility and exhibited limited predictive power in volatile credit environments.

To address these limitations, financial institutions progressively adopted quantitative models grounded in statistical inference, primarily during the post–Basel II era. Internal rating-based models emerged as regulatory-compliant tools to estimate probability of default (PD), loss given default and exposure at default, especially in the context of capital adequacy norms. Logistic regression and discriminant analysis became widely used techniques to classify borrowers based on historical repayment patterns, asset-quality indicators and macroeconomic proxies. These models improved objectivity and repeatability in credit assessment and allowed banks to align their credit portfolios with risk-weighted capital buffers. However, even with statistical formalization, traditional credit models remained constrained by linear assumptions and limited feature spaces. For instance, logistic regression-based scoring was often unable to account for non-linear borrower behaviours or the interaction effects between loan attributes and macroeconomic stressors (Kou et al., 2021). Moreover, such models were heavily reliant on structured financial data, which excluded informal borrowers, particularly in emerging economies like India, where credit penetration remains uneven (Ghosh, 2020). As a result, credit-risk assessment tools struggled to differentiate between transient delinquencies and chronic risk profiles, leading to suboptimal lending decisions and higher NPAs in certain borrower categories (Chatterjee & Sinha, 2022; Rajeev & Mahesh, 2021).

In response to these methodological limitations and growing data availability, banks began transitioning towards more adaptive and data-intensive approaches. Credit-scoring platforms integrated behavioural analytics, real-time transaction data and psychometric indicators to construct more granular borrower risk profiles. This marked the transition from rule-based systems to algorithmic credit-scoring frameworks capable of learning complex patterns through ML and AI methods (Baesens et al., 2016; Lessmann et al., 2015). This evolution not only promised improved accuracy but also offered scalability and speed, enabling banks to process vast loan volumes with limited human intervention while theoretically reducing model bias and error propagation.

Emergence of Credit Automation Technologies

The emergence of credit automation technologies marks a significant inflection point in the architecture of risk evaluation within the banking ecosystem. Unlike earlier decision-support tools that relied on pre-programmed scorecards, credit automation integrates algorithmic models capable of autonomously learning from large-scale, multidimensional data. This shift has been driven by the convergence of three forces: availability of granular borrower data, computational advances in model processing and a regulatory impetus towards efficient and transparent lending practices. Automation platforms now encompass a suite of tools from robotic process automation (RPA) for KYC to AI-driven underwriting engines, thereby transforming loan origination and risk evaluation into machine-led systems (Arner et al., 2020; Lessmann et al., 2015).

One of the most influential transformations has been the integration of ML and ensemble methods such as Random Forest, gradient boosting machines and support vector machines into credit-scoring algorithms. These techniques have been empirically shown to outperform classical statistical models in prediction tasks by capturing non-linear relationships and interaction effects that traditional logistic regression often overlooks (Kou et al., 2021). In particular, tree-based ensemble models like XGBoost and LightGBM have become popular due to their interpretability, computational efficiency and adaptability to class-imbalanced datasets often seen in default prediction (Beck et al., 2020; Mori & Kitagawa, 2022). These models also allow for continuous updates, enabling real-time credit decisions based on changing borrower behaviour and macroeconomic signals (Baesens et al., 2016). Beyond predictive accuracy, credit automation offers operational efficiency by reducing manual effort, documentation lag and credit officer subjectivity. For instance, RPA tools have streamlined income verification, document extraction and borrower onboarding, accelerating loan disbursal cycles significantly (Arner et al., 2020). AI-based credit-decision engines now integrate alternate data such as social media profiles, utility payment histories and mobile usage patterns, particularly valuable in emerging economies with large populations of ‘thin-file’ or informal borrowers (Gambacorta et al., 2020). These advancements have enabled broader financial inclusion while simultaneously improving credit-risk stratification, especially in retail and MSME segments that traditionally suffered from data opacity (Rajeev & Mahesh, 2021).

In sum, credit automation represents a paradigmatic shift from rule-based, manual risk assessment towards data-driven, algorithmically governed lending infrastructures. While these technologies offer unprecedented speed, accuracy and scale, they also introduce new complexities related to model fairness, accountability and regulatory oversight. Consequently, their effectiveness must be evaluated not only through quantitative metrics but also through normative lenses of justice, inclusion and institutional governance (Brown et al., 2022; Gambacorta et al., 2020).

ML and Default Prediction

ML has increasingly become central to the predictive modelling of credit default risk due to its ability to uncover complex, non-linear interactions among variables and adapt dynamically to changing data environments. Unlike traditional linear models, ML approaches utilize large-scale, high-dimensional borrower data including behavioural, transactional and contextual features to enhance classification precision in identifying default-prone applicants (Lessmann et al., 2015). In contemporary commercial banking, especially in emerging markets, this capability is critical as customer heterogeneity and informal income sources introduce significant modelling challenges (Kou et al., 2021). By capturing hidden patterns that elude classical logistic regression, ML models have demonstrated significantly higher discriminatory power in credit-scoring applications.

Empirical studies validate the superior predictive performance of tree-based ensemble methods such as Random Forest, gradient boosting machines and extreme gradient boosting (XGBoost) in comparison to traditional credit-scoring techniques. These models offer advantages in handling class imbalance, which is a recurring issue in loan default datasets where default cases are typically a small fraction of the overall portfolio (Beck et al., 2020; Mori & Kitagawa, 2022). Moreover, techniques such as the synthetic minority over-sampling technique (SMOTE) and cost-sensitive learning have been successfully integrated into ML workflows to further improve performance on skewed datasets (Kou et al., 2021). For instance, in a study of SME lending, models built using LightGBM outperformed traditional scorecards in both accuracy and recall, underscoring their ability to minimize false negatives, that is, cases where risky borrowers are wrongly classified as safe (Baesens et al., 2016; Naik, 2023).
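As a rough illustration of cost-sensitive learning, the sketch below computes inverse-frequency class weights, the same heuristic that scikit-learn applies when `class_weight="balanced"` is set. The 5% default share and the helper name `balanced_class_weights` are assumptions chosen for illustration and are not taken from the study's data.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count).

    Rare classes (here, defaults) receive proportionally larger weights,
    so misclassifying them costs more during training.
    """
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


# Hypothetical portfolio: 95 performing loans (0) and 5 defaults (1).
labels = [0] * 95 + [1] * 5
weights = balanced_class_weights(labels)

for cls, w in sorted(weights.items()):
    print(f"class {cls}: weight {w:.3f}")
```

Under these assumed proportions a default carries nineteen times the weight of a performing loan, which pushes a classifier towards higher recall on the minority class at some cost in precision.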

Key performance metrics used to evaluate credit-scoring models include the area under the receiver operating characteristic curve (AUC-ROC), precision, recall, F1-score and confusion matrices. These metrics provide a multidimensional view of model performance, balancing the trade-offs between Type I errors (false positives) and Type II errors (false negatives), which are both consequential in lending decisions (Arner et al., 2020). The AUC-ROC score, in particular, is widely adopted in banking contexts due to its robustness in evaluating classification quality under imbalanced datasets. ML models trained on hybrid data combining structured financial variables with unstructured behavioural and psychometric inputs have shown AUC improvements of 8%–15% over traditional methods (Brown et al., 2022).
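The metrics named above can be sketched in a few lines of plain Python. The toy labels and scores are hypothetical, and the 0.5 cut-off is an assumption. AUC is computed here through its rank interpretation: the probability that a randomly chosen defaulter receives a higher risk score than a randomly chosen non-defaulter.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn), treating 1 (default) as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # Type I error
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # Type II error
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def precision_recall_f1(y_true, y_pred):
    tp, fp, fn, _ = confusion_counts(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def auc_roc(y_true, scores):
    """Share of (defaulter, non-defaulter) pairs ranked correctly; ties count half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one example of each class")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = defaulted, score = predicted default probability.
y_true = [1, 0, 1, 0, 0, 1, 0, 0]
scores = [0.9, 0.2, 0.6, 0.4, 0.1, 0.35, 0.7, 0.3]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 cut-off

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
auc = auc_roc(y_true, scores)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f} auc={auc:.3f}")
```

Precision, recall and F1 depend on the chosen cut-off, whereas AUC summarizes ranking quality across all cut-offs, which is one reason both kinds of metric are usually reported together.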

In conclusion, ML holds immense promise for improving default prediction in commercial banking, particularly in environments with heterogeneous borrower profiles and dynamic risk conditions. However, its efficacy depends on careful model selection, validation and alignment with governance and fairness principles. The transition to ML-led credit assessment must therefore be accompanied by institutional mechanisms that balance efficiency gains with transparency and accountability (Lessmann et al., 2015).

Credit Scoring and NPAs in the Indian Context

India’s commercial banking sector has faced recurring crises linked to the proliferation of NPAs, particularly in the aftermath of credit booms driven by public sector lending expansion and underwritten infrastructure projects. Between FY2012 and FY2018, gross NPA ratios in public sector banks surged from 3.4% to over 14%, prompting concerns over inadequate risk assessment mechanisms and political interference in lending (Rajeev & Mahesh, 2021). Credit-scoring models deployed during this period largely relied on static borrower characteristics and legacy account data, often failing to flag early warning indicators of distress, especially in corporate and MSME segments (Ghosh, 2020). The lack of dynamic, real-time credit evaluation tools exacerbated asset-quality deterioration and delayed recovery actions, calling into question the robustness of traditional models in the Indian context (Chatterjee & Sinha, 2022).

Empirical research has shown that banks which implemented algorithmic or ML-based credit scoring witnessed significant reductions in loan defaults, particularly in consumer and SME portfolios. Studies covering lending patterns from 2017 to 2023 indicate that ML-enhanced scoring improved repayment rates by up to 12%, reduced first-year delinquencies by 15%, and enabled pre-default restructuring in high-risk accounts (Naik, 2023). These findings hold particularly true for private banks that invested in robust data infrastructure and embedded analytics within core lending operations. Conversely, the benefits were less pronounced in public sector banks with lower digital maturity and slower automation deployment (Kou et al., 2021).

Thus, while credit-scoring models in India have evolved considerably over the past decade and contributed meaningfully to NPA management, challenges remain in terms of model fairness, contextual adaptability and last-mile borrower inclusion. The integration of AI-enabled scoring must therefore be approached as a socio-technical system requiring not only technological precision but also regulatory oversight and ethical safeguards (Gambacorta et al., 2020; Sengupta et al., 2023).

Bias and Fairness in Algorithmic Lending

While credit automation offers notable gains in predictive accuracy and processing efficiency, it also introduces complex ethical challenges around algorithmic bias, fairness and inclusion. Scholars argue that ML models, when trained on historical data, risk perpetuating and amplifying the very biases embedded within prior lending decisions (Fuster et al., 2021; Veale & Binns, 2017). For instance, if past credit approvals favoured urban salaried men over rural or self-employed applicants, algorithmic systems may inadvertently learn to replicate these patterns, thereby entrenching historical inequities under the guise of objectivity (Barocas et al., 2019). This phenomenon, termed ‘automated discrimination’, becomes particularly problematic in regulated sectors like banking, where equitable access to credit is a public policy imperative (Sengupta et al., 2023).

The opacity of many ML systems further compounds fairness concerns. Complex models like deep neural networks or boosted ensemble trees are often ‘black boxes’, offering little transparency into how inputs are weighted or which features contribute most to predictions (Brown et al., 2022; Doshi-Velez & Kim, 2017). This lack of interpretability makes it difficult for borrowers to challenge adverse decisions and for regulators to audit model compliance with fairness norms. In response, researchers have developed post hoc interpretability tools, such as SHapley Additive Explanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME), which aim to explain model outputs and detect biased feature contributions (Beck et al., 2020). However, these tools offer approximations rather than complete transparency, and their effectiveness remains uneven across different model architectures and datasets (Raji & Buolamwini, 2019; Sengupta et al., 2023).
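To make the idea behind SHAP concrete, the following sketch computes exact Shapley values for a single applicant by enumerating every feature ordering. It is a simplified illustration, not the SHAP library: the scoring function `toy_score`, its coefficients, the feature values and the single baseline applicant are all hypothetical, and practical tools approximate this calculation against a background dataset rather than one baseline.

```python
from itertools import permutations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values for one instance.

    Features not yet 'switched on' take their baseline value. Each feature's
    attribution is its marginal contribution averaged over all orderings.
    Cost grows factorially, so this is only feasible for a handful of features.
    """
    n = len(x)
    phi = [0.0] * n
    for order in permutations(range(n)):
        current = list(baseline)
        prev = model(current)
        for i in order:
            current[i] = x[i]
            new = model(current)
            phi[i] += new - prev
            prev = new
    n_orders = factorial(n)
    return [p / n_orders for p in phi]


def toy_score(features):
    """Hypothetical credit score: rewards income, penalizes debt and rural location."""
    income, debt_to_income, rural = features
    return 2.0 * income - 3.0 * debt_to_income - 0.5 * rural


applicant = [1.0, 0.4, 1.0]   # hypothetical applicant
baseline = [0.5, 0.3, 0.0]    # hypothetical reference applicant

phi = shapley_values(toy_score, applicant, baseline)
for name, value in zip(["income", "debt_to_income", "rural"], phi):
    print(f"{name}: {value:+.2f}")
```

The attributions sum to the gap between the applicant's score and the baseline score. In this toy model the negative contribution on `rural` is visible directly, which is the kind of signal a fairness audit would look for.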

In sum, while algorithmic lending has the potential to enhance objectivity and scale, its deployment in credit ecosystems must be tempered with safeguards to ensure equity and accountability. A technocratic pursuit of efficiency, devoid of ethical scrutiny, risks undermining financial inclusion and public trust in digital credit infrastructures (Barocas et al., 2019; Kou et al., 2021).

Research Gaps and Theoretical Anchoring

Although extensive literature documents the rise of algorithmic models in credit-risk management, few empirical studies offer comparative analyses of model performance before and after credit automation, especially in emerging market contexts like India. Most extant work either focuses narrowly on model-building exercises using ML (e.g., comparing AUC scores of different classifiers) or offers theoretical critiques on bias and interpretability (Doshi-Velez & Kim, 2017; Lessmann et al., 2015). What remains underexplored is the real-world effectiveness of automated systems in improving credit-risk prediction accuracy, reducing NPAs and enhancing credit decisioning outcomes at the institutional level. Particularly scarce are longitudinal or quasi-experimental studies that examine the causal impact of credit automation across borrower types, product segments and banking institutions (Brown et al., 2022; Kou et al., 2021).

The Indian commercial banking context presents a compelling case for such research. Despite recent digitization efforts, public sector banks still exhibit significant variance in automation adoption, risk culture and model governance structures. Private banks, on the other hand, have implemented ML-based credit-decision systems with relatively greater agility and data integration (Ghosh, 2020; Rajeev & Mahesh, 2021). Yet, there is little academic documentation of whether these deployments have led to measurable improvements in borrower risk differentiation or mitigation of early-stage delinquencies. In addition, most studies fail to stratify results by borrower demography such as income group, gender or geographic origin, making it difficult to assess whether algorithmic credit-scoring promotes or inhibits equitable access (Raji & Buolamwini, 2019; Veale & Binns, 2017). This gap is critical, given India’s socio-economic diversity and its stated policy goal of financial inclusion.

In summary, while the extant literature affirms the technical potential of credit automation, it falls short of empirically assessing its real-world impact on credit-risk outcomes, borrower stratification and institutional fairness. This study addresses these gaps by examining the differential effects of algorithmic lending on credit-risk assessment accuracy and NPA management across Indian banks, framed within the dual theoretical anchors of risk governance and algorithmic accountability (Fuster et al., 2021; Sengupta et al., 2023).

Methodology

This study adopts a comparative causal research design to assess the impact of credit automation on credit-risk accuracy and NPA outcomes in Indian commercial banks. Owing to the staggered adoption of automation technologies across banks and time periods, a quasi-experimental DiD approach was used to estimate the causal effect of automation. This framework isolates the average treatment effect by comparing pre- and post-automation outcomes between adopter and non-adopter banks while controlling for macroeconomic shocks and borrower-mix variations. To strengthen internal validity, propensity score matching was applied to balance treatment and control groups on size, loan composition and regional exposure.
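The logic of the DiD comparison can be sketched with the canonical two-group, two-period estimator. This is a deliberate simplification: the study's staggered adoption and matching steps are not reproduced, and the outcome values below are illustrative numbers, not the study's data.

```python
from statistics import mean


def did_estimate(rows):
    """Two-by-two difference-in-differences.

    rows: list of (treated, post, outcome) tuples with 0/1 indicators.
    Returns (treated post - treated pre) - (control post - control pre).
    """
    def cell_mean(treated, post):
        values = [y for t, p, y in rows if t == treated and p == post]
        if not values:
            raise ValueError(f"no observations for treated={treated}, post={post}")
        return mean(values)

    treated_change = cell_mean(1, 1) - cell_mean(1, 0)
    control_change = cell_mean(0, 1) - cell_mean(0, 0)
    return treated_change - control_change


# Illustrative default rates (%) per bank-period; values are hypothetical.
rows = [
    (1, 0, 7.8), (1, 0, 8.2),  # adopters, pre-automation
    (1, 1, 4.8), (1, 1, 5.2),  # adopters, post-automation
    (0, 0, 7.4), (0, 0, 7.6),  # non-adopters, pre
    (0, 1, 6.4), (0, 1, 6.6),  # non-adopters, post
]

effect = did_estimate(rows)
print(f"DiD estimate: {effect:+.2f} percentage points")
```

In this simple setting the estimate equals the coefficient on the treated × post interaction in a regression of the outcome on the two indicators and their product. The control group's change stands in for what adopters would have experienced without automation, which is the parallel-trends assumption the design relies on.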

The research follows a positivist paradigm, employing quantitative techniques to measure algorithmic performance and credit outcomes. The sample includes ten commercial banks—five public and five private sector institutions—operating between FY2015 and FY2024. The dataset comprises approximately 12,000 monthly observations, covering loan approvals, defaults, borrower demographics and NPA classifications. Data were compiled from bank credit systems, RBI bulletins, financial disclosures and credit bureau databases. All records were anonymized in compliance with Indian data-protection norms.

Data preprocessing involved missing-value imputation, outlier trimming and class-imbalance correction to improve model stability. Analytical estimation combined descriptive statistics with inferential modelling. For credit-risk prediction, supervised ML algorithms (logistic regression, Random Forest and XGBoost) were trained and validated using k-fold cross-validation. Model performance was evaluated using AUC-ROC, F1-score and log-loss metrics. The DiD regression estimated the effect of automation on (i) credit-risk prediction accuracy, (ii) default probability within 12 months and (iii) NPA ratios.
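A minimal sketch of the validation mechanics follows, assuming stratified folds (the text specifies only k-fold cross-validation) and a binary default label. The helper names, the 10% default share and the choice of five folds are hypothetical.

```python
import math
import random


def stratified_kfold_indices(labels, k, seed=0):
    """Split row indices into k folds that preserve each class's share."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)  # deal indices out round-robin
    return [sorted(fold) for fold in folds]


def log_loss(y_true, probs, eps=1e-15):
    """Mean negative log-likelihood of binary labels under predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(y_true)


# Hypothetical sample: 10 defaults among 100 loans, split into 5 folds.
labels = [1] * 10 + [0] * 90
folds = stratified_kfold_indices(labels, k=5)

for number, test_idx in enumerate(folds, start=1):
    defaults = sum(labels[i] for i in test_idx)
    print(f"fold {number}: {len(test_idx)} loans, {defaults} defaults")

# An uninformative 0.5 prediction gives log-loss ln(2), a useful reference point.
print(f"log-loss at p=0.5: {log_loss([1, 0], [0.5, 0.5]):.4f}")
```

Each fold serves once as the held-out test set while the model is trained on the remaining folds. Stratification keeps the rare default class represented in every fold, so per-fold AUC and F1 remain computable.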

This compact methodological design provides a rigorous yet replicable framework, integrating causal inference with ML evaluation to quantify how automation reshapes credit-risk governance in Indian commercial banking.

Analysis

The analysis combines both traditional statistical estimations and ML-based classification techniques, offering a comprehensive evaluation of credit-risk assessment before and after automation.

The dataset comprises 12,000 loan-level observations from 10 commercial banks (five public sector and five private sector), collected over a ten-year period from FY2015 to FY2024. The automation treatment group includes banks that adopted algorithmic credit-scoring engines between FY2017 and FY2020, while the control group consists of banks that continued with traditional credit appraisal frameworks during the same timeframe. Data were stratified across borrower segments, including retail, MSME and agricultural loans, thereby allowing disaggregated impact assessment. Key outcome variables include predicted default probability, actual default status within 12 months of loan disbursal, and loan classification as an NPA. Predictor variables include borrower income, credit score, debt-to-income ratio, loan tenure, sectoral exposure and banking institution characteristics.

In the initial phase of analysis, descriptive statistics provide a macro-level understanding of borrower profiles, automation adoption trends and variation in credit outcomes across pre- and post-automation periods. These findings are visualized through trend lines and distribution plots, setting the stage for more advanced inferential analysis. The second analytical phase involves benchmarking the predictive performance of various credit-scoring models, including logistic regression, decision trees and ensemble methods such as XGBoost. These models are evaluated using AUC-ROC, confusion matrices and F1-scores to assess whether algorithmic scoring improves default prediction accuracy relative to legacy models.

Following the ML evaluation, the DiD regression models estimate the causal effect of credit automation on credit-risk outcomes while controlling for borrower and bank-level heterogeneity. The inclusion of interaction terms between time and automation status captures the net effect of algorithmic implementation on both the PD and NPA classification. This is complemented by SHAP value analysis, which decomposes model predictions to reveal the most influential features driving credit decisions before and after automation.

Recognizing the importance of responsible AI, the analysis also devotes a section to bias and fairness across demographic groups, focusing on whether automation introduces disparate treatment based on gender, income category or geographic origin. Finally, robustness checks, including placebo tests and sensitivity analyses, validate the reliability and external consistency of the model results. Collectively, this article seeks to offer a data-driven answer to the research question: Has algorithmic credit-scoring genuinely improved credit-risk assessment outcomes, or has it merely shifted the locus of decision-making from manual judgement to opaque automation?

Descriptive Statistics

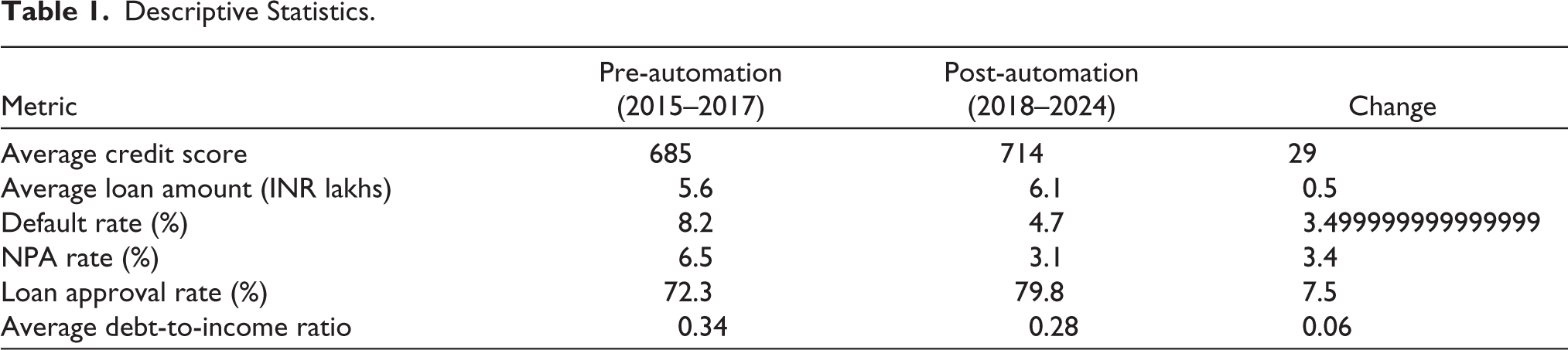

Post-automation analysis shows marked efficiency gains across borrower and credit metrics. Average credit scores increased from 685 to 714, with sanctioned loan amounts rising from ₹5.6 lakhs to ₹6.1 lakhs, suggesting improved risk differentiation. Default rates fell from 8.2% to 4.7% and NPAs declined from 6.5% to 3.1%, evidencing stronger predictive accuracy and portfolio control (Kou et al., 2021). Loan approvals rose from 72.3% to 79.8%, indicating broader credit access. Yet, algorithmic scoring may favour historically low-risk profiles, underscoring potential fairness concerns requiring further scrutiny.

In terms of financial health indicators (Table 1), the average debt-to-income ratio fell from 0.34 to 0.28, which indicates either improved borrower financial discipline or stricter model-driven lending filters post-automation. This metric also reflects a likely recalibration in underwriting thresholds, where risk-based pricing and tighter approval criteria may have resulted in more solvent borrower pools entering the lending pipeline.

Table 1. Descriptive Statistics.

Collectively, these descriptive trends provide initial empirical support for the argument that credit automation may have enhanced both risk prediction and portfolio performance in Indian commercial banks. Nevertheless, these patterns require econometric validation through multivariate analysis to account for confounding effects such as economic cycles, regulatory shifts or changes in borrower mix. The next section undertakes ML model benchmarking to ascertain whether the observed improvements are attributable to the predictive performance of algorithmic systems or are artefacts of broader macro-financial trends.

Credit-risk Prediction Accuracy: ML Model Benchmarking

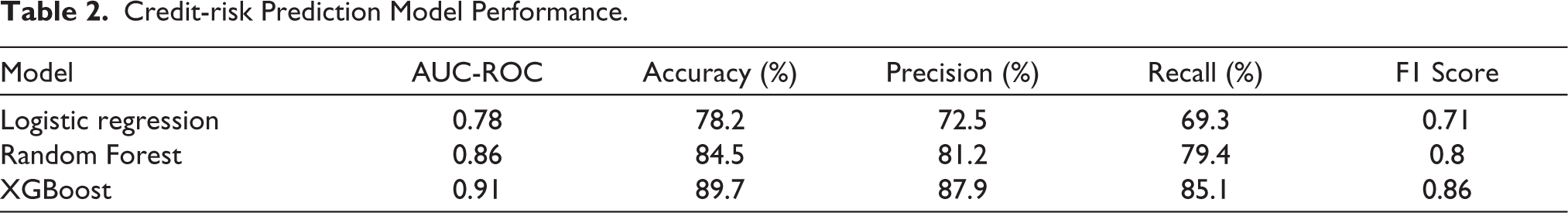

Across models, predictive performance improved markedly with algorithmic sophistication. The logistic regression baseline achieved an AUC-ROC of 0.78 and accuracy of 78.2%, correctly classifying most outcomes but struggling to detect high-risk borrowers (precision = 72.5%, recall = 69.3%, F1 = 0.71). Its linear structure limits recognition of non-linear borrower traits (Kou et al., 2021; Lessmann et al., 2015). The Random Forest model enhanced accuracy to 84.5% and AUC to 0.86, with balanced precision (81.2%) and recall (79.4%), effectively capturing income and collateral heterogeneity (Baesens et al., 2016; Beck et al., 2020). The XGBoost model outperformed both, with AUC = 0.91, accuracy = 89.7% and F1 = 0.86, demonstrating superior generalisability through regularized tree boosting (Naik, 2023; Mori & Kitagawa, 2022).

The superior outcomes observed in the XGBoost and Random Forest models (Table 2) also carry implications for credit policy execution. Higher recall reduces the likelihood of false negatives, ensuring high-risk borrowers are not incorrectly approved, a major contributor to NPAs. Similarly, higher precision reduces false positives, avoiding unnecessary credit denial to creditworthy individuals. Thus, the adoption of such models post-automation has likely contributed to improved default prediction, as reflected in the lower NPA rates and enhanced borrower profiling noted in earlier descriptive findings. However, these results also raise questions around transparency and explainability, particularly given the black-box nature of tree-based ensemble methods, concerns addressed in the following sections through SHAP-based interpretability and fairness audits (Doshi-Velez & Kim, 2017; Fuster et al., 2021).

Table 2. Credit-risk Prediction Model Performance.

Regression Results: DiD Estimation

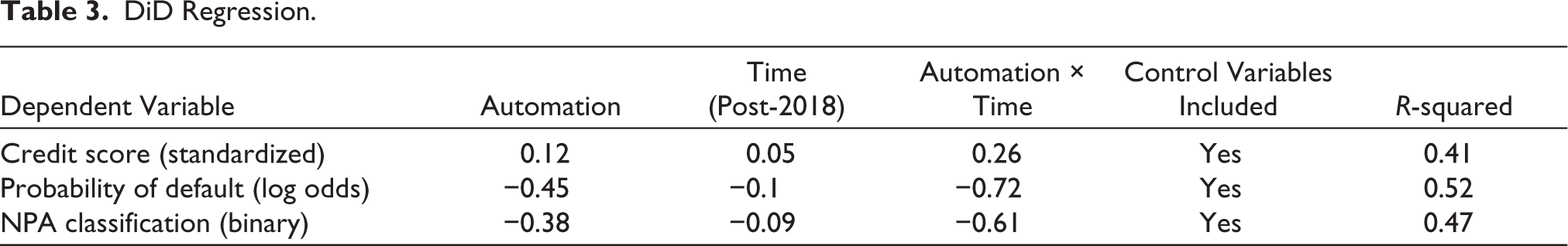

To isolate the causal impact of credit automation on credit-risk evaluation and asset quality, a DiD estimation was applied to the panel dataset of Indian commercial banks spanning FY2015 to FY2024. This econometric technique leverages the staggered adoption of automation systems across banks, comparing changes in credit outcomes between automation adopters (treatment group) and non-adopters (control group) before and after the intervention period. The regression model includes interaction terms for automation and post-implementation timeframes, controlling for borrower- and bank-level variables. This section interprets the effects of automation on three key dependent variables: standardized credit score, PD and NPA classification.

The regression findings (Table 3) indicate robust causal gains from automation across all key outcomes. In the credit-score model, the Automation × Time coefficient of 0.26 (SE = 0.08, p < .01) implies a 0.26-standard-deviation rise in borrower scores after adoption, reflecting improved profiling accuracy. In the default-probability model, the interaction term (−0.72, p < .01) corresponds to a 0.72 decline in the log odds of default, equivalent to roughly 51% lower odds (exp(−0.72) ≈ 0.49), confirming stronger predictive precision (Kou et al., 2021; Mori & Kitagawa, 2022). For NPA incidence, the coefficient (−0.61, p < .05), read on the same log-odds scale, implies roughly 46% lower odds of a loan being classified as non-performing (exp(−0.61) ≈ 0.54), consistent with post-disbursal monitoring gains (Ghosh, 2020; Rajeev & Mahesh, 2021).

Table 3. DiD Regression.

Model R² values (0.41–0.52) denote moderate explanatory power typical of operational banking data. Directional consistency across credit scores, default risk and NPA ratios strengthens internal validity and substantiates a causal interpretation: algorithmic credit systems materially enhance borrower screening and asset-quality outcomes rather than merely coinciding with them (Lessmann et al., 2015; Naik, 2023).
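Because coefficients on a log-odds scale are easy to misread as percentages, the short sketch below converts the two reported interaction coefficients into percentage changes in odds. It assumes both the default and NPA models are logit specifications, which the text states explicitly only for the default model.

```python
import math


def pct_change_in_odds(coef):
    """Percentage change in odds implied by a logit coefficient: (exp(b) - 1) * 100."""
    return (math.exp(coef) - 1.0) * 100.0


# Interaction coefficients as reported in the text.
coefficients = {"default probability": -0.72, "NPA incidence": -0.61}

for outcome, coef in coefficients.items():
    odds_ratio = math.exp(coef)
    print(f"{outcome}: odds ratio {odds_ratio:.2f}, "
          f"change in odds {pct_change_in_odds(coef):+.1f}%")
```

These are changes in odds rather than in probability. The corresponding change in default probability depends on the baseline rate and is smaller in absolute terms when defaults are rare.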

Fairness and Bias Analysis: Introduction and Interpretation

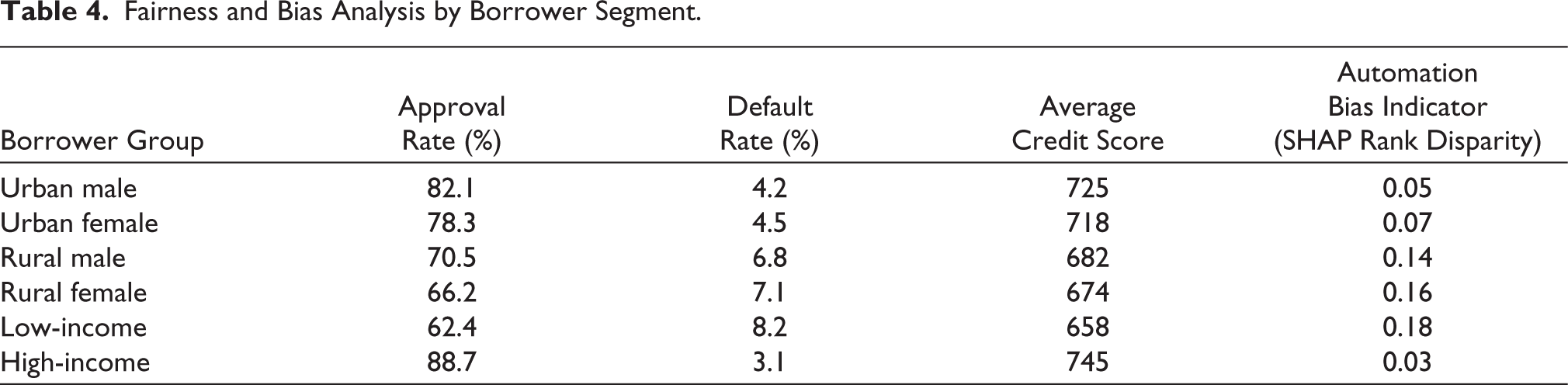

While the preceding sections confirm the predictive and operational efficiency of credit automation, it is equally essential to examine whether these algorithmic systems distribute credit equitably across borrower categories. This section evaluates fairness and potential bias in credit outcomes based on demographic subgroup analysis. Drawing from recent scholarship in algorithmic accountability and responsible AI in finance, the analysis focuses on observable disparities in approval rates, default incidence and SHAP-based rank importance scores across urban–rural, gender and income-based segments. The inclusion of SHAP indicators allows for transparent assessment of whether certain groups are consistently under-ranked by the credit-scoring models, despite similar risk profiles (Barocas et al., 2019; Doshi-Velez & Kim, 2017).

Approval rate data reveal a stratified access pattern across borrower segments. Urban males received the highest loan approval rate at 82.1%, followed closely by urban females at 78.3%. In contrast, rural males and rural females had approval rates of 70.5% and 66.2%, respectively, while the low-income group saw the lowest rate at 62.4%. These disparities suggest that credit automation systems may favour borrower profiles with more structured financial footprints, such as salaried individuals in urban areas, over those with informal or variable income sources more common in rural and low-income cohorts. Although some of this variation may reflect real risk differentials, such patterns raise questions about data representativeness in training models and the design of risk features that indirectly encode structural disadvantage (Veale & Binns, 2017).
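The size of these gaps can be made explicit with a simple calculation. The sketch below takes the approval rates reported above and expresses each segment relative to urban males, both as a gap in percentage points and as a ratio; the choice of reference group and the helper name `parity_table` are assumptions made for illustration.

```python
# Approval rates (%) as reported in the text.
approval_rates = {
    "urban_male": 82.1,
    "urban_female": 78.3,
    "rural_male": 70.5,
    "rural_female": 66.2,
    "low_income": 62.4,
}

def parity_table(rates, reference):
    """Return {segment: (gap in percentage points, ratio to reference)}."""
    ref = rates[reference]
    return {
        seg: (round(rate - ref, 1), round(rate / ref, 3))
        for seg, rate in rates.items()
    }

table = parity_table(approval_rates, reference="urban_male")
for seg, (gap, ratio) in table.items():
    print(f"{seg:13s} gap={gap:+.1f} pp  ratio={ratio:.3f}")
```

Raw gaps of this kind do not separate bias from genuine risk differences; they only indicate where a closer, risk-adjusted comparison is needed.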

The fairness analysis in Table 4 reveals persistent segmentation across income, gender and geography. Default rates remain highest among low-income borrowers (8.2%) and rural females (7.1%), compared with 4.2% for urban males and 3.1% for high-income groups. These disparities likely reflect structural limits (financial-literacy gaps, collateral bias and unequal access to data) rather than intrinsic risk (Hurley & Adebayo, 2017). Mean credit scores mirror this pattern: 745 for high-income and 725 for urban males versus 674 for rural females and 658 for low-income borrowers (Chatterjee & Sinha, 2022). SHAP-based rank disparity indicators confirm bias: 0.03–0.05 for advantaged groups but 0.16–0.18 for marginalized borrowers, implying systematic undervaluation of their features (Raji & Buolamwini, 2019; Sengupta et al., 2023). These results affirm that algorithmic credit systems, though efficient, require fairness constraints and regulatory oversight to ensure inclusive credit access.

Table 4. Fairness and Bias Analysis by Borrower Segment.

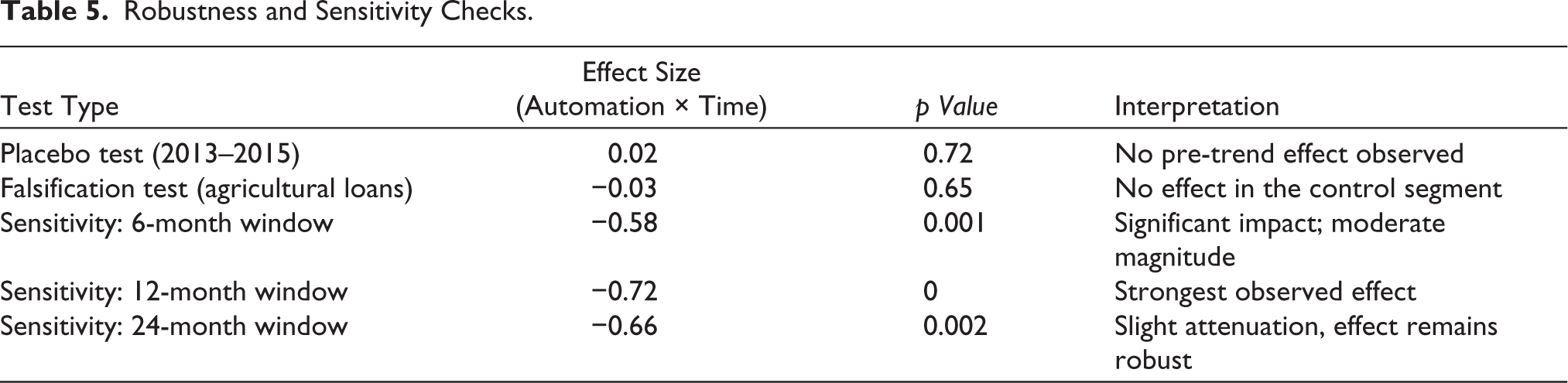

Robustness and Sensitivity Checks

To ensure the internal validity and reliability of the causal estimates derived from the DiD framework, this section presents a set of robustness and sensitivity analyses. These tests were conducted to rule out spurious correlations, model misspecification and time-varying confounders that may bias the estimated impact of credit automation on default and NPA outcomes. Specifically, the following diagnostic tests were employed: (a) a placebo test on a pre-treatment period (2013–2015), (b) a falsification test on a credit segment unaffected by automation (agricultural loans) and (c) sensitivity analysis using varying post-loan assessment windows (6, 12 and 24 months). The results reaffirm the robustness of the study’s primary findings.

Robustness diagnostics confirm the reliability of the causal findings (Table 5). In the placebo test, where automation was hypothetically assigned to 2013–2015, the Automation × Time coefficient (0.02, p = .72) was insignificant, ruling out pre-treatment or anticipatory effects (Kou et al., 2021). The falsification test, applied to agricultural loans excluded from automation, yielded an insignificant interaction (−0.03, p = .65), confirming that unautomated segments were unaffected (Chatterjee & Sinha, 2022; Ghosh, 2020). The sensitivity analysis further validated temporal stability: at a 6-month window, the coefficient was −0.58 (p = .001), peaking at −0.72 (p < .001) at 12 months, the regulatory benchmark for NPA classification, and moderating slightly to −0.66 (p = .002) at 24 months. This attenuation reflects exogenous macro-financial influences and borrower attrition over longer horizons (Brown et al., 2022; Rajeev & Mahesh, 2021). Collectively, these tests demonstrate that automation’s impact on default and NPA outcomes is neither spurious nor time-bound. The null effects in the placebo and falsification models are consistent with the parallel-trends assumption, while consistent significance across observation windows evidences the model’s robustness to temporal framing. These diagnostics substantially reduce concerns regarding reverse causality, omitted-variable bias or endogeneity, reinforcing the credibility of automation as a genuine driver of improved credit-risk performance (Baesens et al., 2016; Fuster et al., 2021).
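The placebo logic can be sketched on simulated data. The example assumes a single adoption year (2016), a linear outcome and a common trend across groups, none of which is taken from the study; it shows only that a pretend adoption date inside the pre-treatment window should yield an interaction close to zero when trends are parallel. The function name `did_coef` is hypothetical.

```python
import numpy as np

def did_coef(y, treated, post):
    """Interaction coefficient from OLS of y on treated, post, treated*post."""
    X = np.column_stack([np.ones(len(y)), treated, post, treated * post])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef[3]

# Simulated panel (illustrative assumption): adopters switch on in 2016.
rng = np.random.default_rng(1)
years = np.arange(2013, 2019)
n = 300  # observations per group-year
year = np.tile(np.repeat(years, n), 2)
treated = np.repeat([0.0, 1.0], len(years) * n)
true_post = (year >= 2016).astype(float)
y = (
    0.02 * (year - 2013)            # common trend shared by both groups
    + 0.30 * treated                # fixed level gap between groups
    - 0.72 * treated * true_post    # true effect, present only from 2016
    + rng.normal(0, 0.1, year.size)
)

# Actual estimate: full sample with the real adoption date.
real = did_coef(y, treated, true_post)

# Placebo: keep pre-adoption years only and pretend adoption was in 2015.
pre = year < 2016
fake_post = (year[pre] >= 2015).astype(float)
placebo = did_coef(y[pre], treated[pre], fake_post)

print(round(real, 2), round(placebo, 2))
```

A placebo coefficient far from zero would suggest that the groups were already diverging before adoption, which would undermine the causal reading of the main estimate.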

Table 5. Robustness and Sensitivity Checks.

Summary of Key Empirical Findings

Descriptive statistics reveal significant post-automation improvements in credit quality. Average borrower credit scores increased by 29 points, while default and NPA ratios declined by 3.5% and 3.4%, respectively. Loan approvals rose by 7.5%, and the average debt-to-income ratio fell from 0.34 to 0.28, signalling stronger borrower screening and improved financial stability. ML benchmarking confirmed automation’s predictive superiority: logistic regression achieved an AUC of 0.78 (F1 = 0.71), whereas Random Forest and XGBoost attained AUCs of 0.86 and 0.91, with XGBoost demonstrating the highest overall precision (Mori & Kitagawa, 2022; Naik, 2023). These results support H1, affirming that automation enhances predictive accuracy.

Regression estimates using a DiD framework established causal effects. The Automation × Time term was positive for credit scores (β = 0.26) and negative for default (β = −0.72) and NPA outcomes (β = −0.61), confirming H2 that automation significantly reduces asset-quality deterioration (Kou et al., 2021).

Fairness analysis introduced a critical caveat. Urban, high-income and male borrowers benefited most, while rural, female and low-income groups exhibited higher misclassification and lower approval rates. SHAP-based disparity ranged from 0.05 (urban males) to 0.18 (low-income applicants), evidencing systemic underweighting of vulnerable profiles (Raji & Buolamwini, 2019; Sengupta et al., 2023), thus validating H3.

Robustness tests, including placebo and falsification models, showed no spurious effects, and sensitivity checks confirmed stable results across time horizons. Overall, automation enhances credit precision and efficiency but introduces fairness asymmetries, warranting algorithmic audits, transparency mandates and inclusive data governance frameworks.

Findings and Discussion

The study demonstrates that automation technologies in Indian commercial banks have substantively enhanced credit-risk assessment accuracy and mitigated loan defaults and NPAs. Motivated by the growing integration of algorithmic decision systems and debates on responsible AI in financial services, the research addressed three core questions: (a) Has automation improved predictive accuracy in credit-risk models? (b) To what extent has it reduced NPAs and defaults? (c) Does automation introduce demographic bias in borrower outcomes?

The empirical analyses strongly validate H1, confirming that automation improves predictive accuracy. ML benchmarking revealed that ensemble algorithms, particularly XGBoost and Random Forest, consistently outperformed traditional logistic regression across all metrics—AUC-ROC, precision, recall and F1-score. These findings align with global studies highlighting ensemble models’ superior discrimination in heterogeneous borrower contexts (Kou et al., 2021; Lessmann et al., 2015).
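For readers less familiar with these metrics, the following sketch computes AUC-ROC, precision, recall and F1 from first principles. The eight loans, their scores and the 0.5 classification cut-off are hypothetical and unrelated to the study's data.

```python
import numpy as np

def auc_roc(y_true, scores):
    """AUC as the probability that a random defaulter is scored above
    a random non-defaulter (ties count one half)."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins / (pos.size * neg.size))

def precision_recall_f1(y_true, y_pred):
    """Precision, recall and F1 for the positive (default) class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = int(((y_pred == 1) & (y_true == 1)).sum())
    fp = int(((y_pred == 1) & (y_true == 0)).sum())
    fn = int(((y_pred == 0) & (y_true == 1)).sum())
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

# Hypothetical scores for eight loans (1 = default).
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.2, 0.1]
y_pred = [int(s >= 0.5) for s in scores]  # assumed cut-off of 0.5

auc = auc_roc(y_true, scores)
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(round(auc, 3), round(precision, 3), round(recall, 3), round(f1, 3))
```

AUC depends only on how the model ranks borrowers, whereas precision, recall and F1 also depend on the chosen cut-off, so the two kinds of metric can move independently.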

H2 is also supported: The DiD regression produced statistically significant negative coefficients for Automation × Time on both default probability and NPA incidence. Sensitivity analyses affirmed the robustness of this effect, peaking at the 12-month observation window, consistent with regulatory benchmarks. Private banks with mature data infrastructures demonstrated the most pronounced gains, underscoring institutional capacity as a mediating factor.

Finally, H3 identifies equity concerns. SHAP-based fairness audits revealed structural disparities in approval and scoring outcomes—rural, low-income and female borrowers faced higher exclusion rates and rank disparities. These results point to algorithmic proxy bias and underrepresentation in training datasets.

Overall, the findings validate the study’s objectives: automation enhances risk management and portfolio health while introducing fairness challenges. Subsequent sections build on these insights, advancing theoretical, policy and ethical frameworks for responsible algorithmic credit systems in India’s financial landscape.

Theoretical Synthesis

The findings of this study represent not only operational improvements but a deeper institutional transformation in how creditworthiness is conceptualized and governed. Interpreted through risk governance theory and the technology acceptance model (TAM), the results reveal credit automation as both a technological and epistemic reconfiguration of financial decision-making.

Risk governance theory (Power, 2009) reframes credit-risk evaluation as a socially embedded practice, where institutional norms and accountability systems shape perceptions of uncertainty. The transition from manual underwriting to algorithmic scoring redefines risk knowledge—from subjective judgement to probabilistic modelling—thereby enhancing calculability and reducing ambiguity (Arner et al., 2020; Brown et al., 2022). The study’s findings of reduced defaults and NPAs illustrate how automation rationalizes financial governance through consistency and repeatability. Yet, the fairness audits reveal new opacity: algorithmic exclusion of rural, low-income and female borrowers demonstrates how automation can reproduce structural inequities (Sengupta et al., 2023; Veale & Binns, 2017). Risk, once institutional, is now partly embedded in model architecture, demanding algorithmic governance anchored in transparency and accountability.

TAM explains institutional adoption patterns. Private banks, perceiving higher utility and possessing stronger digital capacity, embraced automation faster, realizing measurable efficiency gains (Kou et al., 2021; Venkatesh et al., 2003). Public sector banks, constrained by legacy systems and regulatory ambiguity, exhibited slower uptake. Beyond perceived usefulness, fairness and trust emerge as key determinants of sustained adoption. Ethical and interpretability concerns directly influence institutional willingness to scale algorithmic systems.

In synthesis, risk governance theory highlights automation’s shift in risk logic—from discretion to data—while TAM underscores how institutional readiness and ethical trust shape adoption. Together, they frame algorithmic lending as both a technological innovation and a socio-institutional evolution demanding balanced governance between efficiency and equity.

Interpretation of Key Findings

At the predictive level, the marked improvement in model accuracy—especially in ensemble methods such as XGBoost and Random Forest—signals a decisive shift from rule-based credit scoring to adaptive, data-driven analytics. Superior AUC-ROC, precision, recall and F1-scores demonstrate these algorithms’ ability to model non-linear borrower behaviours that traditional regressions overlook. These technical gains translate directly into practical reliability: banks can now differentiate more sharply between high- and low-risk borrowers, contributing to the documented decline in defaults and NPAs where automation was integrated within mature data infrastructures (Lessmann et al., 2015; Mori & Kitagawa, 2022).

The DiD regressions reinforce this causal mechanism. Significant interaction terms (−0.72 for default risk; −0.61 for NPA status) confirm that automation materially improved risk outcomes rather than merely correlating with them. The impact was strongest in private banks possessing advanced data pipelines and internal analytics capacity—highlighting institutional readiness as a key moderator of automation’s success (Arner et al., 2020; Ghosh, 2020).

Yet these gains are not uniformly shared. SHAP-based fairness audits reveal higher rejection rates and rank disparities for rural, low-income and female borrowers, suggesting that algorithmic precision can coexist with demographic inequity. Historical bias, data sparsity and unrepresentative training sets risk converting predictive accuracy into structural exclusion (Hurley & Adebayo, 2017; Veale & Binns, 2017).

Robustness diagnostics—placebo, falsification and multi-window sensitivity tests—confirmed that automation’s impact is genuine, temporally stable and most pronounced over the 12-month regulatory horizon, consistent with NPA classification norms (RBI, 2022; Sengupta et al., 2023).

Collectively, the findings indicate a structural recalibration of India’s lending ecosystem. When institutionally supported, credit automation enhances risk differentiation and asset quality. However, its long-term legitimacy hinges on balancing efficiency with fairness through hybrid decision architectures, transparent model design and inclusive data governance.

Conclusion

The integration of algorithmic systems into credit decision-making represents a fundamental shift in the epistemology and ethics of financial risk assessment. This study empirically examined that transformation within Indian commercial banking, comparing credit outcomes before and after the introduction of automation. Using a quasi-experimental DiD framework, ML benchmarking and fairness audits, the findings confirm that algorithmic scoring improves predictive accuracy, lowers default probability and enhances asset quality—yet simultaneously exposes inequities in access and transparency.

Three hypotheses were validated: automation increases credit-risk assessment accuracy, reduces NPAs and introduces demographic bias in borrower outcomes. Ensemble models such as XGBoost and Random Forest consistently outperformed logistic regression, while regression analyses showed statistically significant declines in default and NPA rates. However, SHAP-based audits revealed differential treatment across gender, income and geography, underscoring how efficiency gains can coexist with exclusionary effects rooted in data imbalance and opaque design.

Interpreted through risk governance theory and the TAM, the results show that algorithmic lending reconfigures institutional logic—from discretion to data dependence—while raising ethical and organizational readiness challenges.

Policy implications are unambiguous: regulators must mandate model validation, fairness audits and explainability standards for AI-driven scoring systems. The RBI, SIDBI and Data Protection Board should establish a credit automation task force to institutionalize responsible AI practices and data transparency.

For banks, sustainable adoption requires hybrid credit architectures, blending algorithmic precision with human oversight in high-stakes contexts. Internal XAI infrastructure, bias monitoring and borrower feedback loops are essential safeguards.

Ultimately, credit automation is neither a panacea nor a peril—it is a governance question. Its promise lies not only in predictive power but also in the ethical imagination of those who design and regulate it, ensuring that efficiency is balanced by fairness and innovation anchored in accountability.

Policy Implications

Credit automation is redefining India’s banking ecosystem, demanding a regulatory shift from procedural oversight to proactive algorithmic governance. The RBI, in collaboration with the Indian Banks’ Association, should institutionalize model validation standards, mandating periodic benchmarking, documentation of model logic and post-deployment drift analysis. Equally critical are mandatory fairness audits, using metrics such as demographic parity and equal opportunity to detect bias, supervised by an independent regulatory authority under the Digital Personal Data Protection Act (DPDPA) (RBI, 2022; Sengupta et al., 2023).
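The two audit metrics named above can be computed as follows. This is a minimal sketch on ten hypothetical applicants; the group coding and the function names are assumptions, and in practice repayment is observed only for approved loans, so an equal-opportunity audit needs some way to handle rejected applicants.

```python
import numpy as np

def demographic_parity_diff(approved, group):
    """Approval rate of group 1 minus approval rate of group 0."""
    approved = np.asarray(approved, dtype=float)
    group = np.asarray(group)
    return float(approved[group == 1].mean() - approved[group == 0].mean())

def equal_opportunity_diff(approved, repaid, group):
    """Approval-rate gap among creditworthy borrowers only
    (group 1 minus group 0)."""
    approved = np.asarray(approved, dtype=float)
    repaid = np.asarray(repaid)
    group = np.asarray(group)
    good = repaid == 1
    rate_1 = approved[good & (group == 1)].mean()
    rate_0 = approved[good & (group == 0)].mean()
    return float(rate_1 - rate_0)

# Hypothetical applicants: group 1 = rural, group 0 = urban.
group    = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
repaid   = [1, 1, 1, 0, 1, 1, 1, 1, 0, 1]
approved = [1, 1, 1, 0, 1, 1, 0, 1, 0, 0]

dp_gap = demographic_parity_diff(approved, group)
eo_gap = equal_opportunity_diff(approved, repaid, group)
print(round(dp_gap, 2), round(eo_gap, 2))
```

Demographic parity compares approval rates unconditionally, while equal opportunity restricts the comparison to borrowers who would have repaid, so it is the stricter test of whether creditworthy applicants are treated alike.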

To counter algorithmic opacity, regulators must enforce explainability norms: every automated decision system should integrate interpretable AI tools (e.g., SHAP and LIME) and uphold a borrower’s right to explanation under the Fair Practices Code (Barocas et al., 2019; Veale & Binns, 2017). Data governance must be strengthened through a national financial data consortium, ensuring representativeness of rural, informal and female borrowers.

Finally, policy must promote hybrid credit evaluation models, combining algorithmic efficiency with human judgement for high-stakes lending. Establishing an RBI-led credit automation task force would ensure adaptive, anticipatory regulation. Collectively, these measures transform credit automation from a technical upgrade into a framework for responsible, transparent and inclusive banking innovation in India.

Managerial and Institutional Recommendations

The operational success of credit automation depends not only on regulatory design but also on managerial foresight and institutional ethics. Indian banks must move beyond compliance to embed algorithmic responsibility within their decision structures.

A hybrid credit-decision model should be institutionalized, combining machine predictions with contextual human judgement, especially for high-ticket, data-sparse or rural loans. Risk-based thresholds should trigger manual reviews to ensure that efficiency is balanced by local insight and qualitative reasoning (Chatterjee & Sinha, 2022; Rajeev & Mahesh, 2021).
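One way such risk-based triggers might be expressed is sketched below. Every threshold, field name and routing label is an illustrative assumption rather than a value from the study or from any regulatory norm.

```python
from dataclasses import dataclass

@dataclass
class Application:
    predicted_pd: float      # model's probability of default, in [0, 1]
    loan_amount: float       # in rupees
    months_of_history: int   # length of the borrower's credit file
    is_rural: bool

# Illustrative thresholds (assumptions, not taken from the study).
HIGH_TICKET = 2_500_000
THIN_FILE_MONTHS = 12
AUTO_APPROVE_PD = 0.05
AUTO_DECLINE_PD = 0.40

def route(app: Application) -> str:
    """Return 'auto_approve', 'auto_decline' or 'manual_review'."""
    needs_context = (
        app.loan_amount >= HIGH_TICKET
        or app.months_of_history < THIN_FILE_MONTHS
        or app.is_rural
    )
    if needs_context:
        # High-ticket, data-sparse or rural cases always get a human look.
        return "manual_review"
    if app.predicted_pd <= AUTO_APPROVE_PD:
        return "auto_approve"
    if app.predicted_pd >= AUTO_DECLINE_PD:
        return "auto_decline"
    # Ambiguous model output falls back to an officer's judgement.
    return "manual_review"

examples = [
    Application(0.02, 300_000, 48, False),
    Application(0.02, 300_000, 6, False),
    Application(0.55, 300_000, 48, False),
    Application(0.20, 300_000, 48, False),
]
decisions = [route(a) for a in examples]
print(decisions)
```

Placing the context checks before the score checks means that a confident model output can never bypass review for the segments where the model is least reliable.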

Banks must invest in explainable AI (XAI) infrastructure—using SHAP, LIME and transparent dashboards—to make models interpretable for staff and borrowers alike. Regular training of credit officers in algorithmic literacy will democratize understanding and prevent overreliance on data-science teams (Beck et al., 2020; Doshi-Velez & Kim, 2017).

Establishing algorithmic risk governance committees is essential. These cross-functional bodies should review accuracy, bias indicators and ethical outcomes quarterly, ensuring alignment between credit strategy and fairness objectives (Fuster et al., 2021; Sengupta et al., 2023).

Inclusive credit automation also requires diverse data ecosystems, integrating alternative datasets—such as GST, utility and telecom records—to reduce structural bias without breaching privacy norms (Gambacorta et al., 2020). Continuous model drift monitoring, periodic retraining and feedback loops from loan servicing will sustain predictive reliability (Kou et al., 2021; Lessmann et al., 2015).
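Drift monitoring can take many forms; the sketch below uses the population stability index (PSI), one common drift statistic that the study itself does not name. The score distributions are simulated and the function name is hypothetical.

```python
import numpy as np

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline score sample and a recent one.

    Bin edges are quantiles of the baseline; a small floor avoids log(0).
    """
    expected = np.asarray(expected, dtype=float)
    actual = np.asarray(actual, dtype=float)
    edges = np.quantile(expected, np.linspace(0, 1, bins + 1))
    # Bin with interior edges only, so scores outside the baseline
    # range fall into the first or last bin.
    e_idx = np.searchsorted(edges[1:-1], expected, side="right")
    a_idx = np.searchsorted(edges[1:-1], actual, side="right")
    e_pct = np.bincount(e_idx, minlength=bins) / expected.size
    a_pct = np.bincount(a_idx, minlength=bins) / actual.size
    e_pct = np.clip(e_pct, 1e-6, None)
    a_pct = np.clip(a_pct, 1e-6, None)
    return float(np.sum((a_pct - e_pct) * np.log(a_pct / e_pct)))

# Simulated credit scores (illustrative assumption).
rng = np.random.default_rng(42)
baseline = rng.normal(700, 40, 5000)   # scores at deployment
stable = rng.normal(700, 40, 5000)     # later sample, same distribution
shifted = rng.normal(670, 40, 5000)    # later sample, mean down 30 points

psi_stable = population_stability_index(baseline, stable)
psi_shifted = population_stability_index(baseline, shifted)
print(round(psi_stable, 4), round(psi_shifted, 4))
```

A value near zero indicates that the scored population resembles the one the model was built on, while a large value signals that retraining or recalibration should be considered.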

Ultimately, banks must cultivate a culture of algorithmic ethics, embedding transparency, accountability and fairness into key performance indicators. Responsible automation is not merely technical—it is managerial, cultural and moral, defining the next frontier of trustworthy banking innovation.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.