Abstract

The growing prevalence of AI-generated media, particularly podcasts, calls for closer examination of how algorithmic framing shapes audience perception and affective states. As an auditory news format, podcasts offer a distinctive context in which framing effects may be amplified yet remain insufficiently examined. Established research in journalism demonstrates that negative framing, while often enhancing engagement, tends to heighten emotional arousal and cognitive bias. In AI-generated news, these tendencies risk being amplified by large-scale automated production and optimization. This study investigates listener responses to news podcasts generated by large language models (LLMs), comparing the effects of constructive versus non-constructive framing on emotional states and self-efficacy. We operationalize non-constructive framing as narratives that emphasize conflict, failure, and unresolved consequences without offering pathways for resolution, whereas constructive framing systematically integrates solution-oriented perspectives, future-oriented trajectories, and cues that foreground individual or collective agency. We developed a generative podcast pipeline that integrates prompt engineering and text-to-speech (TTS) synthesis to produce paired podcast versions from identical news content. A mixed-methods empirical study (N = 65) compared the two framing strategies in terms of their effects on emotional response and self-efficacy. The results showed that constructive framing led to a significant reduction in negative emotions compared to non-constructive framing. Furthermore, constructive framing enhanced listeners’ perceived self-efficacy in contexts where the news topics afforded interpretable and actionable forms of agency, highlighting how framing interacts with topic characteristics to shape motivational outcomes. 
By extending established framing-effect theories into the domain of AI-generated auditory news, this work provides empirical insights into how framing mechanisms in AI-generated media shape user experience and well-being, and informs the design of AI-generated media systems that balance engagement optimization with ethical considerations of emotional impact and agency support within human–computer interaction.

Introduction

Artificial intelligence (AI) is rapidly evolving, making AI-driven media an increasingly common part of daily life. Traditionally, news framing—the process of selecting and presenting information to shape audience interpretation (Klein et al., 2019)—has been the purview of human editors guided by professional norms and contextual judgment (Ettema, 2007; Kim, 2015). The emergence of large language models (LLMs) and text-to-speech (TTS) systems, however, transforms this into an automated, algorithmic process. Unlike human journalism’s editorial accountability, AI framing relies on prompt-level instructions and algorithmic optimization (Sonni et al., 2024; Voinea, 2025). This transition introduces unique challenges: because LLMs are often trained on engagement-oriented datasets, they may inherently prioritize sensationalism or bias (Acerbi, 2023), potentially defaulting to negative narratives without the ethical oversight typical of human newsrooms (Devinney et al., 2024). Consequently, understanding how these automated framing decisions alter audience perception is critical for responsible AI deployment.

Podcasts have become one of the fastest-growing channels for news consumption (Research, 2025). Despite their ubiquity, prior research on AI-generated media has concentrated mainly on textual or visual outputs (Grimberg, 2024; Taylor, 2024), leaving audio formats largely underexplored (Fang et al., 2024). Unlike text, audio carries rich paralinguistic cues, such as tone, rhythm, and pacing, which neuroscientific evidence indicates can activate emotional brain regions more strongly than written words alone (Pell et al., 2015). In audio news, framing operates through both narrative content and vocal delivery, which in AI-generated podcasts is produced by synthetic speech rather than human narration. This makes podcasts a uniquely potent yet under-examined medium for studying how AI-driven framing affects emotion and cognition (Zhang, 2023). While established research confirms that synthetic media can rival human content in emotional intensity and credibility (Kalpokas, 2020), existing studies have rarely examined how these interactions influence complex psychological outcomes, such as listeners’ self-efficacy. Instead, studies have often focused on surface-level features, like the perceived gender of the AI voice (Zhang, 2023), rather than the underlying narrative structure. Consequently, ethical questions remain open, particularly regarding how to deploy AI-generated media responsibly while mitigating risks such as bias amplification or misinformation (Fang et al., 2024).

This study is grounded in media framing theory (De Vreese, 2005), which posits that narrative presentation influences audience cognition (Kim, 2016). Research has consistently shown that traditional media often employs negative framing (Pedersen, 2014), a strategy known to capture attention (Saarela, 2020) and increase content sharing (Nabi, 2003). In AI contexts, such tendencies may be further intensified by engagement-driven optimization (Voinea, 2025), heightening emotional strain through conflict-oriented storytelling (Fernandes et al., 2024; Shad, 2025). Conversely, constructive framing, which prioritizes solution-oriented narratives and future possibilities (McIntyre, 2015), remains less studied in algorithmically generated news. Exploring constructive narratives is critical because they may help counter the emotional fatigue caused by conventional, problem-focused news. To address this, our work compares constructive and non-constructive framing strategies in AI-generated podcasts, specifically examining their combined effects on listeners’ emotional response and perceived self-efficacy. Here, “non-constructive framing” refers to content emphasizing conflict or failure without pathways for resolution (Van der Meer et al., 2019), while “constructive framing” integrates solution-oriented and agency-enhancing perspectives (McIntyre, 2015).

This research asks: How do listener responses differ when identical news resources are compiled into podcasts by large language models using constructive versus non-constructive framing strategies? A key focus of our research is self-efficacy, as it reflects listeners’ belief in their ability to influence their environment based on the information they have heard (Overgaard, 2023; van Venrooij et al., 2022). Emotion is another focus, as emotional responses are triggered more intensely by auditory stimuli than by text (Miao et al., 2024). To investigate this question, we developed a generative AI pipeline integrating GPT-4 and TTS technology. The pipeline takes identical source news articles as input and, using structured prompt engineering, generates two podcast versions: one framed constructively and the other non-constructively. We then conducted a controlled study with 65 participants to examine how these framing strategies affect emotional and efficacy-related outcomes.

Empirical analyses revealed that podcasts framed constructively not only lowered negative affect but also enhanced listeners’ perceived self-efficacy relative to non-constructive ones. This study makes two core contributions. First, it provides experimental evidence of how framing in AI-generated podcasts, conveyed through narrative presentation in an auditory format, influences listeners’ emotions and self-efficacy. Second, it offers a set of design implications for building AI-mediated media systems that balance engagement with psychological well-being. Together, these insights contribute to broader discussions of AI media governance, content ethics, and personalized interaction design in HCI.

Related Work

Challenges in AI-Generated News

AI-generated news has rapidly become an integral component of contemporary media production (Research, 2025). Advanced large language models (LLMs), such as GPT-4, are increasingly adopted by news organizations to summarize events, draft articles (Ioscote et al., 2024; Lopezosa et al., 2023; Visvam Devadoss et al., 2019), and tailor narratives to specific audiences (Fu, 2023; Zhang, Li, Gu, Lu, & Gu, 2024). By identifying linguistic patterns across large-scale datasets, these systems substantially increase the speed and efficiency of journalistic workflows, responding to the industry’s growing demand for continuous and cost-effective content production (Torkamaan et al., 2024).

As AI systems move from experimental tools to routine elements of news production, however, they introduce a range of ethical and epistemic challenges (Sandrini, 2023; Xu et al., 2019). One central concern is their tendency to reproduce and stabilize biases embedded in training data (O’Connor & Liu, 2024). Prior research demonstrates that LLMs reflect social and cultural patterns present in their source corpora (Nazar, 2020; Woolley, 2020), which can lead to the reinforcement of stereotypes related to gender, race, or occupation (Fang et al., 2024). Unlike human editors, whose decisions are guided by professional norms and editorial judgment, algorithmic systems lack contextual moral reasoning. Although mitigation approaches such as reinforcement learning from human feedback (RLHF) offer partial safeguards (Pagano et al., 2023), these mechanisms remain constrained by predefined normative assumptions (Gallegos et al., 2024).

A further challenge concerns the opacity of generative models. Their “black box” decision-making processes (Adadi, 2018; Mitova et al., 2023) limit users’ ability to trace how specific inputs produce particular outputs, increasing the risk of factual distortion (Mahmood et al., 2023) and undermining audience trust (Araujo et al., 2023; Chen, 2024). Existing responses to this issue include explainable AI frameworks (Mitova et al., 2023; Zhou, 2020) and automated fact-checking techniques (Du, 2023; Kim et al., 2024). However, these efforts primarily address factual accuracy and transparency, while paying less attention to subtler editorial dimensions such as narrative framing. In particular, how AI systems frame factual information, whether in constructive or non-constructive ways, remains underexplored (Caramancion, 2023; Dierickx et al., 2023). This gap motivates our investigation into how framing choices in AI-generated news shape psychological and ethical outcomes.

The Influence of News Framing on Audiences

Framing theory (Entman, 1993; Scheufele, 1999) posits that emphasizing particular dimensions of an issue systematically shapes how audiences interpret and respond to information. In commercial journalism, negative framing, which emphasizes conflict, crisis, or threat (Nabi, 2003; Scheufele, 1999), is a persistent strategy because it drives attention and engagement (Trussler, 2014; Van der Meer et al., 2019). Research indicates that such emphasis fuels sensationalism (Pedersen, 2014), anxiety (Ricciardelli et al., 2024), and cynicism (Baden et al., 2019; Pedersen, 2014; Soley, 1992) while marginalizing constructive civic dialogue. In contrast, constructive frames redirect the narrative towards solution-oriented perspectives, explicitly connecting societal challenges with actionable solutions. Empirical research indicates that constructive journalism can mitigate negative psychological effects (Gyldensted, 2015) while simultaneously enhancing self-efficacy, that is, one’s belief in one’s ability to effect change (Overgaard, 2023; van Venrooij et al., 2022). This effect arises because constructive frames often provide actionable information or highlight achievable interventions, allowing audiences to perceive that they can influence outcomes. Further studies associate constructive journalism with reduced anxiety, balanced emotional responses to social issues (Diakopoulos, 2019; McIntyre, 2018), and increased prosocial engagement (Kleemans et al., 2017; van Venrooij et al., 2022). This distinction is consistent with our study’s operationalization of constructive framing as narratives that balance problem awareness with agency-enhancing solutions.

Despite this evidence, the role of AI systems in shaping framing patterns remains under-examined (Bucher, 2018; Carlson, 2018). Human editors deliberately adjust framing strategies to balance engagement with social responsibility (Kim, 2015), whereas algorithmic systems may default to engagement-driven patterns learned from data (Mussgnug, 2024). Consequently, AI-curated journalism risks reinforcing the same negativity biases long present in traditional media (Lee et al., 2022; Thurlow et al., 2020). Our study addresses this research gap by systematically comparing constructive and non-constructive AI-generated podcasts and examining how automated framing influences emotional and motivational responses. Such evidence is a necessary foundation for designing AI systems that optimize both engagement and psychological well-being.

Scalability and Impact of AI-Generated Podcasts

Podcasts have emerged as a significant digital media format, capturing widespread interest due to their expressive and linguistic diversity (Mañas-Pellejero & Paz, 2022), particularly resonating with younger audiences who often prefer on-demand, multitask-friendly media (Lopez et al., 2023; Stephani et al., 2021). Their adaptability to modern lifestyles, which enables listeners to absorb information aurally (Stephani et al., 2021) while commuting, working, or exercising, positions podcasts as a potential future cornerstone of news dissemination (Renisyifa et al., 2022). In this context, aural framing, which combines scripted content with paralinguistic cues such as intonation and pauses, can enhance emotional and cognitive responses (Tobin & Guadagno, 2022). These features make podcasts a critical yet underexplored area for AI-generated news research.

While AI-driven content generation has surged, research on AI-generated podcasts remains limited. Most existing work concentrates on improving recommendation algorithms (Aluri et al., 2023; Liang et al., 2023; Nazari et al., 2022; Zhang, Li, Gu, Lu, Shang, et al., 2024) or automatic summarization and transcription (Karlbom, 2021; Rezapour et al., 2022; Vaiani et al., 2022), leaving the creative generation of podcasts underexplored (Rime et al., 2023; Tian et al., 2024). Nevertheless, recent years have seen the emergence of various applications in industry and practice. Prior to the widespread use of large language models (LLMs), some studies explored the use of natural language processing (NLP) to generate podcast content (Almeida et al., 2022) or streamline the podcast creation process (Rime et al., 2022). More recently, platforms like Podcastle (Podcastle, 2024) and Beyondwords (Beyondwords, 2024) have begun incorporating Generative AI and text-to-speech (TTS) technologies into podcast production, signaling a paradigm shift toward scalable audio content generation. Despite their advancements, these tools are still in their early stages, prioritizing technical feasibility over systematic evaluation of narrative impact (Robins et al., 2024). Critical questions persist about how AI-curated framing strategies, such as constructive versus non-constructive storytelling, affect listener engagement, perception, and behavioral outcomes.

Current tools lack rigorous frameworks to assess ethical dimensions like the psychological consequences of narrative choices, leaving a gap between technical capability and audience-centric optimization. Our study addresses this disconnect by developing an LLM-based pipeline to structure news content and generate podcast variants with controlled constructive and non-constructive framings, enabling systematic comparisons of their psychological and behavioral effects on listeners.

Formative Study

Before developing the main experiment, we conducted a formative study to better understand listeners’ perceptions of AI-generated news podcasts and to identify user-centered design requirements. The study aimed (1) to examine real-world podcast listening habits and contextual motivations, and (2) to elicit participatory feedback on desirable features and ethical expectations for generative news content. These insights ensured that the technical pipeline would be grounded in authentic listening practices and human-AI interaction values.

Participants and Procedure

We recruited 8 regular podcast listeners (F1–F8; 4 female, 4 male; age: M = 24.25, SD = 3.03) through snowball sampling, prioritizing diversity in listening frequency (7 daily listeners, 1 weekly listener) and engagement with news-oriented content (5 regular consumers, 3 occasional). To minimize homophily bias, each participant was asked to refer acquaintances with contrasting listening behaviors, for instance, frequent news listeners versus entertainment-focused users. Participants listened for an average of 4.6 hours per week, consistent with global patterns among young adults (Research, 2024). Informed consent was obtained from all participants prior to data collection.

We created three generative podcast probes using GPT-4o (OpenAI, 2024) and Microsoft Azure Text-To-Speech (TTS) (Microsoft, 2024) to gather feedback. Each probe lasted 1.5 to 2 minutes and simulated one of three common news podcast styles: a monologic news briefing (single narrator), a dyadic dialogue between two AI hosts discussing multiple viewpoints, and a hybrid format alternating narration and simulated expert clips. Neutral topics such as renewable-energy innovation were chosen to avoid biasing emotional responses.

The study was conducted through two 120-min online workshops, with 4 participants in each session. Each workshop followed a three-phase protocol.

Phase 1 (30 min): Contextual Inquiry. Participants used Miro boards to visually map their typical listening routines, detailing temporal and spatial contexts (e.g., during their commute, while doing chores).

Phase 2 (50 min): Probe Evaluation. Participants listened to the AI-generated probes and rated their engagement on 5-point Likert scales, followed by a moderated group discussion focusing on tonal authenticity, content coherence, and emotional resonance.

Phase 3 (40 min): Co-Design Activity. In the final phase, participants were asked to design their own ideal generative podcast and articulate their design rationales. This activity provided a deeper understanding of their preferences and ensured their active participation in the design process.

Key Insights

Participants demonstrated a versatile interest in podcasts. They listened in relaxed as well as busy contexts such as during commutes, household chores, or while coding, characterizing podcasts as “background companions” during secondary attention activities, which illustrated the adaptability and ubiquitous nature of podcasts. The key insights we have learned from participant responses fall into three primary categories:

News as a suitable content type for AI generation. Listeners generally considered news an appropriate genre for automation due to its emphasis on real-time delivery and factual precision, which AI is well-equipped to handle. As F2 emphasized, “News exists to deliver real-time updates efficiently, AI can process breaking stories way faster than any human team ever could.” F5 added, “While human reporters verify facts manually, AI can cross-check sources in seconds.” Participants also believed AI could deliver a more neutral tone, as F6 noted, “AI won’t downplay climate data because some CEO donated to a campaign,” a point F3 expanded by stating, “It sounds like a wire service, just facts, no spin.” However, several acknowledged that algorithmic neutrality does not eliminate training-data bias. F1 observed that AI’s emotionally restrained delivery might actually suit factual news: “The tone is rather flat and straightforward, makes it more suitable for reporting emotion-neutral news.” F4 agreed: “Most news isn’t supposed to sound dramatic anyway, so the lack of flair feels appropriate.” Overall, these factors position news as a strong testing ground for developing AI podcast systems that prioritize factual integrity over performative storytelling, directly informing our pipeline’s design to optimize automated news compilation and delivery.

Dyadic dialogue as a more engaging presentation style. Most participants preferred two-host conversations to single-voice narration, finding them livelier and easier to follow. As F2 put it, “AI can connect more emotionally with a dialogue because the turn-taking mimics real-life conversations.” The dynamic nature of two-person interactions was also highlighted, with F5 emphasizing, “You don’t just hear information; you feel the chemistry between voices, which brings energy that solo narrations lack.” Additionally, dialogue was seen as well-suited for presenting differing perspectives through simulated debate. As F6 noted, “When one voice asks questions or repeats points, it acts like a built-in highlighter,” while F7 added, “The back-and-forth breaks down dense information, so the information can be easily understood.” Participants also identified an unexpected benefit: dialogue masked the limitations of synthetic voices. F3 explained, “You stop noticing the robotic tone when you’re following an exchange.” F4 agreed: “It shifts attention away from the voice itself.” Even participants who were usually skeptical of AI saw practical benefits, as F1 admitted, “having two AI voices talking is so much better than those single-voice reports that just feel like a lullaby.” For these reasons, dyadic dialogue was considered an effective way to enhance both engagement and comprehension in AI-generated podcasts.

Modular segmentation as a critical requirement. Participants emphasized the need for modular news segments. Firstly, AI’s ability to create self-contained segments was seen as a major advantage for flexible listening. Most participants listened while multitasking (e.g., commuting and chores) and typically engaged in 10- to 35-min sessions. As F3 noted about longer podcasts, “If I get interrupted, it’s hard to find my place again.” F7 suggested, “An ideal AI podcast should compress top stories into brief, standalone updates.” The second driver for modularity was the need to minimize cognitive load, especially for a multitasking audience. F1 described the ideal format as “bulletins that function as standalone pieces, like podcast snackables.” This suggests that AI-generated content should be concise and clearly structured for consumption in short, interrupted bursts.

Collectively, these findings informed the design principles of our pipeline: focus on news as an AI-appropriate genre, employ dialogic framing for engagement, and structure episodes modularly for accessibility. These user-centered insights directly guided the system architecture described in the next section.

GenPod Pipeline

To systematically examine how constructive and non-constructive framing shapes listener experience, we developed GenPod, a generative podcast pipeline that integrates LLM-based content generation with text-to-speech (TTS) synthesis. GenPod automatically produces paired podcast episodes with contrasting narrative frames while holding topic, length, and voice parameters constant.

Grounded in journalistic framing theory and constructive reporting practices (McIntyre & Gyldensted, 2018; Van Dijk, 1985), GenPod generates podcast content directly from verified news sources (e.g., Xinhua News (News, 2024), People’s Daily (Daily, 2024)). For each topic, the pipeline produces one constructive and one non-constructive version. Full prompt specifications are provided in the supplemental materials.

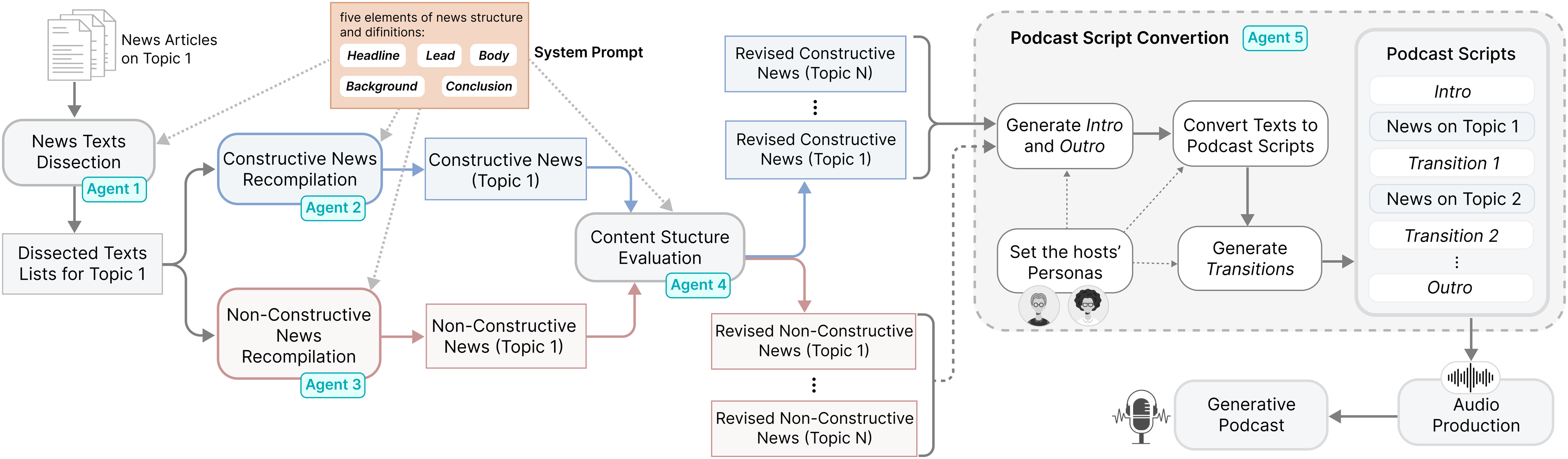

Figure 1. Overview of the GenPod workflow for producing constructive and non-constructive podcasts. The system begins with topic-aligned news articles collected from reputable media outlets and ultimately generates two podcast variants. The pipeline operates through five sequential agents, supported by a shared system prompt used across the first four. Agent 1 segments the source articles into fundamental components, which Agents 2 and 3 reconstruct according to constructive or non-constructive framing strategies. Agent 4 verifies the structural coherence of the reconstructed content, while Agent 5 converts the refined text into podcast scripts. Finally, text-to-speech (TTS) synthesis and audio post-production yield the completed podcasts.

As illustrated in Figure 1, the GenPod pipeline consists of four stages: (1) structured decomposition of source news articles, (2) frame-specific recomposition of content, (3) conversion into dialogic podcast scripts, and (4) audio production using synthetic voices.

Structured Decomposition of News Sources

To preserve factual consistency during reframing, GenPod first decomposes news articles into standardized structural elements based on established journalistic conventions (Robescu, 2008; Van Dijk, 1985). Each article is segmented into five components: headline, lead, body, background, and conclusion. Multiple articles covering the same topic are processed to ensure content completeness and robustness.

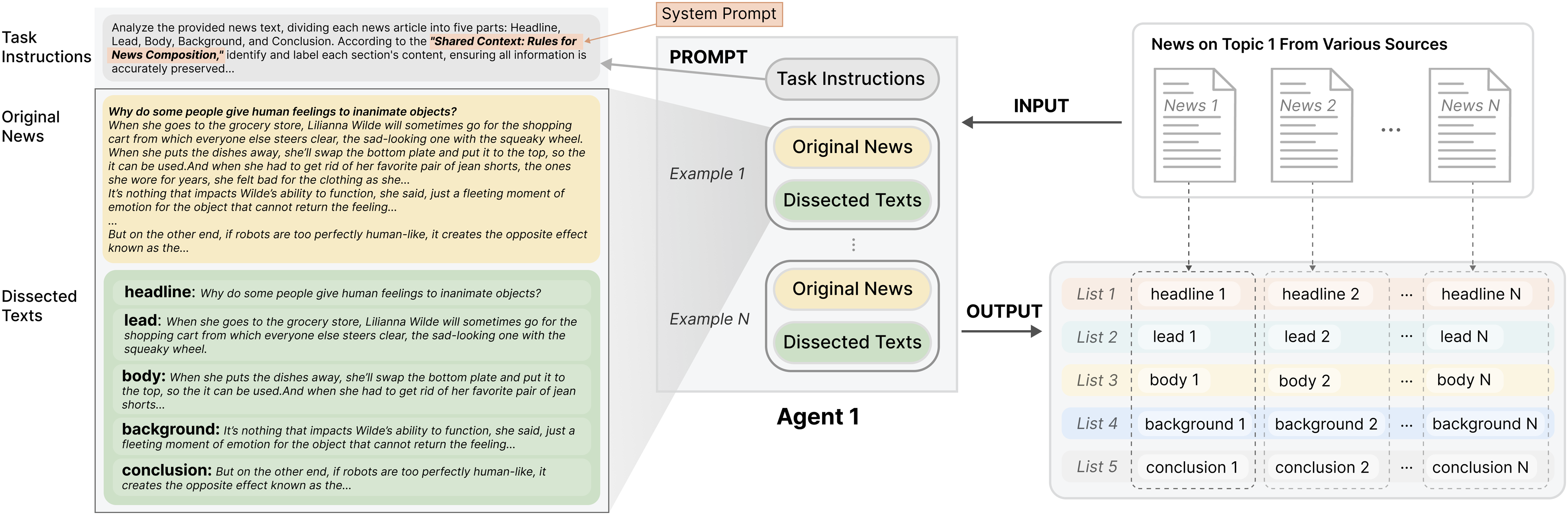

Figure 2. The structure and role of Agent 1. Its prompt consists of task-specific instructions and several exemplars that direct the model to break down each original article into five predefined sections (headline, lead, body, background, and conclusion) without modification. The underlying system prompt defines these elements, while the examples illustrate their application. When multiple related news articles are provided, Agent 1 extracts and organizes content by element type, producing five categorized lists that form the input for the next stage.

Agent 1, the initial processing component, uses chain-of-thought prompting (Wei et al., 2022) to break down articles into these key elements by detecting semantic boundaries. To avoid misinterpretation, this extraction is strictly verbatim. It then groups similar elements from all sources (e.g., compiling all headlines into a single list). Few-shot examples were provided to help the agent accurately distinguish between components. As detailed in Figure 2, the agent outputs five consolidated lists of news elements, preserving source integrity while structurally preparing the content for the next stage of analysis.
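The consolidation step described above can be illustrated with a minimal Python sketch. It is not the GenPod implementation: in the pipeline the segmentation itself is performed by an LLM agent, whereas the sketch only shows the resulting data shape. The names `DecomposedArticle`, `consolidate`, and `is_verbatim` are hypothetical, and the substring test is an assumed, simplified way to check that extraction stayed verbatim.

```python
from dataclasses import dataclass

# The five structural elements named in the text.
ELEMENTS = ("headline", "lead", "body", "background", "conclusion")


@dataclass(frozen=True)
class DecomposedArticle:
    """One source article after segmentation (hypothetical container)."""
    source: str
    headline: str
    lead: str
    body: str
    background: str
    conclusion: str


def consolidate(articles):
    """Group the elements of all articles by element type.

    Returns a dict with one list per element, e.g. all headlines together.
    """
    grouped = {name: [] for name in ELEMENTS}
    for article in articles:
        for name in ELEMENTS:
            grouped[name].append(getattr(article, name))
    return grouped


def is_verbatim(article, original_text):
    """Assumed check: every extracted element occurs unchanged in the source text."""
    return all(getattr(article, name) in original_text for name in ELEMENTS)
```

Keeping one list per element type means the later reframing stages see every source's wording for a given element side by side, which matches the five consolidated lists described for Agent 1.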

Recompiling News With Constructive and Non-Constructive Frames

Theoretical basis. To ensure that the large language model (LLM) could operationalize the two narrative styles, we provided it with clear definitions and key characteristics summarized from established media studies frameworks. Constructive news framing was defined by its emphasis on potential solutions and positive events, often adopting an optimistic tone (McIntyre & Gyldensted, 2018), and non-constructive framing was defined by its prioritization of conflict amplification, unresolved tensions, and immediate negative consequences (Baden et al., 2019; van Venrooij et al., 2022).

Technical implementation. Based on these definitions, GenPod uses two distinct agents, Agent 2 and Agent 3, which incorporate the characteristics of constructive and non-constructive news, respectively. This separation prevents the two frames, which differ significantly in tone and focus, from overlapping. Both agents work from the structured lists generated by Agent 1, which ensures that original details are retained and avoids excessive compression that could compromise content quality.

For constructive outputs, Agent 2 adheres to directives like: “Rebuild the news body combining the main features of constructive news, make sure to dedicate content to implemented or proposed solutions. Incorporate quotes from experts outlining actionable steps, and conclude with a forward-looking perspective emphasizing collective agency.” Non-constructive prompts explicitly demand: “Highlight escalating conflicts and stakeholder disagreements. Use urgency-inducing metaphors (e.g., ‘time bomb,’ ‘breaking point’) and direct quotes emphasizing negative outcomes. Exclude mitigation strategies or optimistic projections.”
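To make the separation of the two framing agents concrete, the following Python sketch assembles frame-specific prompts from the same consolidated element lists. It is an illustration under stated assumptions rather than the pipeline's actual code: the directive strings are the excerpts quoted above, while `build_messages`, the role/content message layout, and the `system_prompt` argument are hypothetical. The full prompt specifications are those in the supplemental materials.

```python
# Directive excerpts quoted in the text; the complete prompts are not reproduced here.
FRAME_DIRECTIVES = {
    "constructive": (
        "Rebuild the news body combining the main features of constructive news, "
        "make sure to dedicate content to implemented or proposed solutions. "
        "Incorporate quotes from experts outlining actionable steps, and conclude "
        "with a forward-looking perspective emphasizing collective agency."
    ),
    "non_constructive": (
        "Highlight escalating conflicts and stakeholder disagreements. "
        "Use urgency-inducing metaphors (e.g., 'time bomb,' 'breaking point') and "
        "direct quotes emphasizing negative outcomes. "
        "Exclude mitigation strategies or optimistic projections."
    ),
}


def build_messages(frame, grouped_elements, system_prompt):
    """Return a chat-style message list for one framing agent (assumed layout).

    Both frames receive the same system prompt and the same source elements;
    only the framing directive differs.
    """
    if frame not in FRAME_DIRECTIVES:
        raise ValueError(f"unknown frame: {frame!r}")
    sections = "\n\n".join(
        f"## {name}\n" + "\n".join(f"- {item}" for item in items)
        for name, items in grouped_elements.items()
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": FRAME_DIRECTIVES[frame] + "\n\n" + sections},
    ]
```

Because the source elements and system prompt are shared, any difference between the two outputs can be attributed to the framing directive, which is the property the experimental design relies on.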

An additional component, Agent 4, assesses the outputs against structural criteria (e.g., element completeness and solution coverage in the constructive track) and suggests revisions. Prompt designs and generated outputs were reviewed by two journalism practitioners to confirm alignment with professional news-writing conventions.

Dialogic Script Conversion

In the third stage, Agent 5 converts the recompiled plain-text news into dialogue scripts. Inspired by prior work on conversational media formats (Earkind, 2024; Ginns & Fraser, 2010), GenPod adopts a dyadic dialogue structure involving two hosts. The scripts follow a consistent template: one host introduces topics using headlines and leads, while both collaboratively elaborate on body content, background context, and conclusions. To maintain experimental control, the conversion process preserves the original framing tone and avoids adding subjective commentary or stylistic embellishments. Identical dialogue structures are used across framing conditions to isolate framing effects from narrative form.
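The script template described above can be sketched in Python as follows. In GenPod the conversion is carried out by an LLM agent; this deterministic stand-in only illustrates the turn structure. The function name `to_dialogue`, the host labels, the strict alternation rule, and the assumption that body, background, and conclusion arrive as lists of paragraphs are all hypothetical.

```python
from itertools import cycle


def to_dialogue(news, hosts=("Host A", "Host B")):
    """Lay out recompiled news as (speaker, text) turns.

    The first host introduces the topic with the headline and lead; the two
    hosts then alternate through body, background, and conclusion paragraphs.
    """
    first, second = hosts
    turns = [(first, news["headline"]), (first, news["lead"])]
    speakers = cycle((second, first))  # elaboration starts with the second host
    for element in ("body", "background", "conclusion"):
        for paragraph in news[element]:
            turns.append((next(speakers), paragraph))
    return turns
```

With this layout, two framing versions that contain the same number of paragraphs per element yield the same speaker sequence, so the dialogue structure stays constant while only the wording differs.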

Audio Production

Finally, the dialogic scripts are rendered into audio using GPT-SoVITS (Ijiga et al., 2024), a neural TTS system supporting high-quality voice synthesis. One male and one female synthetic voice were used across all podcast episodes to control for acoustic variation. The same voices were applied to both framing conditions. Generated voice segments were combined into complete podcast episodes with standardized pacing and minimal post-processing.

Methodology

The user study was designed to assess the effects of podcasts produced by the GenPod pipeline (described in the GenPod Pipeline section) on listeners’ psychological states. Specifically, we sought to determine how constructive versus non-constructive AI-generated podcast framing impacts audience emotions and self-efficacy. To explore this question, we adopted a mixed-methods approach. The core of the study was a between-group experimental design, which provided quantitative data from questionnaires, supplemented by qualitative insights from semi-structured interviews.

Participants

We recruited 66 participants (33 female, 32 male, 1 non-binary; age: M = 23.7, SD = 3.8) through online university communities and social networks. All participants reported listening to podcasts at least once per week and were functionally fluent in Chinese. Demographic data, including age, gender, and education level, were collected to check that there were no systematic differences between experimental groups. Recruitment sought diversity in listening habits to reflect the composition of typical young adult podcast audiences. The study was reviewed and approved by the authors’ institution, and its planning, conduct, and reporting complied with local laws and regulations. Informed consent was obtained from all participants prior to data collection. Participants were compensated at a rate of approximately $10 USD per hour, in accordance with local standards.

Preparation of Study Materials

Two distinct topics were selected for the experimental stimuli, both centered on highly salient and contentious debates on Chinese internet platforms: 1) the challenges faced by food delivery workers and 2) the controversies surrounding sports fandom during the Olympics. These topics were chosen specifically for their viral public engagement and polarized discourse, providing rich material for testing narrative framing strategies.

Topic 1 focused on a controversial Guangzhou regulation (issued July 2024) that mandated industry-wide suspensions for delivery riders who accrued three weekly traffic violations. The policy had ignited a fierce public debate, with opinions split between protecting public safety and unfairly penalizing gig economy workers. Topic 2 centered on the Chinese Olympic Committee’s public condemnation of toxic “fan circle” behaviors during the Games, such as the organized harassment of athletes and referees, which had prompted new regulatory measures.

To ensure the AI pipeline had sufficient and balanced material, we sourced five relevant articles for each topic from reputable mainstream media (e.g., China Youth Daily (Daily, 2025)) and official announcements. The selection process was curated to balance perspectives by including articles that contained both constructive elements (e.g., solutions and positive outcomes) and non-constructive elements (e.g., problems and negative outcomes). While any single news outlet may have inherent biases, we mitigated this by sourcing from a variety of outlets to provide a more comprehensive view.

Using the GenPod pipeline, these source articles were processed to generate two distinct podcast episodes. The Constructive Podcast (CP) (length 3:46) and the Non-Constructive Podcast (NP) (length 3:44) both contained segments on the two topics, with each topic segment lasting approximately 80–90 seconds. To ensure experimental control and isolate the effect of framing, the two podcast versions differed only in framing strategy. All other variables were held constant, including the same introductory and concluding text, identical AI-generated voices, and the same calm instrumental background music. The same text-to-speech (TTS) engine, voice identity, and default prosody settings were used across conditions, and no emotion-specific parameters or post-processing adjustments were applied. An acoustic check showed comparable delivery across the two versions (CP: mean F0 = 214.6 Hz, speech rate = 3.87 words/s; NP: mean F0 = 212.9 Hz, speech rate = 3.82 words/s), indicating minimal paralinguistic variation. The final podcasts, along with detailed transcripts and timestamped outlines, were uploaded to “Xiaoyuzhou,” a major Chinese podcast platform.
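The size of the acoustic gap can be made concrete by expressing each reported difference as a percentage of the NP value. The sketch below is illustrative only: it uses the four figures reported in the acoustic check, and the helper name `relative_difference` is hypothetical rather than part of GenPod.

```python
def relative_difference(a: float, b: float) -> float:
    """Return the difference between a and b as a percentage of b."""
    return (a - b) / b * 100.0

# Values reported in the acoustic check for the two podcast versions.
cp = {"mean_f0_hz": 214.6, "speech_rate_wps": 3.87}
np_version = {"mean_f0_hz": 212.9, "speech_rate_wps": 3.82}

diffs = {key: relative_difference(cp[key], np_version[key]) for key in cp}
for key, pct in diffs.items():
    print(f"{key}: CP differs from NP by {pct:.2f}%")
```

Under this calculation, both mean F0 and speech rate differ by less than 2% between the two versions.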

Procedure

Participants began by completing a pre-experiment questionnaire to establish their baseline emotional state via the 10-item Positive and Negative Affect Schedule (PANAS) scale (Watson et al., 2007). They were then assigned to one of the two experimental conditions: the Constructive Podcast (CP) group (n = 33) or the Non-Constructive Podcast (NP) group (n = 33). Random assignment was used to minimize potential selection bias between conditions. All participants were instructed to focus on the podcast while listening and to refrain from engaging in other activities (e.g., browsing, messaging, or multitasking) during playback, in order to ensure full engagement.
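The exact randomization procedure is not described here. One common way to obtain two equal groups, shown as a minimal sketch, is to shuffle the participant list and split it in half. The participant IDs, the seed, and the function name `assign_conditions` are assumptions made for illustration.

```python
import random

def assign_conditions(participant_ids, seed=None):
    """Shuffle participants and split them evenly into CP and NP groups."""
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"CP": shuffled[:half], "NP": shuffled[half:]}

# Hypothetical IDs S01..S66; the seed is arbitrary and only makes the run repeatable.
ids = [f"S{i:02d}" for i in range(1, 67)]
groups = assign_conditions(ids, seed=2024)
print(len(groups["CP"]), len(groups["NP"]))  # prints "33 33"
```

Shuffling before splitting keeps the group sizes balanced while leaving group membership to chance.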

Immediately after listening, participants completed a post-experiment questionnaire. This included a comprehension check (e.g., summarizing the second news topic) to verify they had paid attention. The PANAS scale was administered a second time to measure post-exposure emotional state. Additionally, participants’ self-efficacy was assessed using three custom questions tailored to each news topic, with all items measured on a 7-point Likert scale. The full set of custom self-efficacy questions is provided in the Appendix.

Following the questionnaire, a randomly selected subset of seven participants took part in semi-structured interviews. To facilitate a direct comparison, participants in the interview first listened to the podcast version they had not been assigned to during the experimental phase. The interviews explored how the different versions impacted their emotional responses and self-efficacy, with participants encouraged to articulate the perceived differences and the reasons for them. All interviews were audio-recorded, transcribed verbatim, and analyzed thematically.

Data Analysis

Of the 66 questionnaires initially collected, one was excluded from the analysis for failing to pass the integrity check, resulting in 65 valid participant responses. We categorized the levels of feeling alert, inspired, determined, attentive, and active from the PANAS scale as positive emotions, while the levels of feeling upset, hostile, ashamed, nervous, and afraid were classified as negative emotions (Watson et al., 2007).
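The subscale scoring and the post-minus-pre change score used in the Findings can be sketched as follows. This is an illustration under stated assumptions, not the authors’ analysis script: it assumes each item is rated on a 1–5 scale and that subscale scores are item sums, and the ratings shown are invented.

```python
POSITIVE_ITEMS = ("alert", "inspired", "determined", "attentive", "active")
NEGATIVE_ITEMS = ("upset", "hostile", "ashamed", "nervous", "afraid")

def score_panas(responses: dict) -> dict:
    """Sum item ratings into positive and negative affect subscale scores."""
    missing = [item for item in POSITIVE_ITEMS + NEGATIVE_ITEMS if item not in responses]
    if missing:
        raise ValueError(f"missing items: {missing}")
    return {
        "positive": sum(responses[item] for item in POSITIVE_ITEMS),
        "negative": sum(responses[item] for item in NEGATIVE_ITEMS),
    }

def change_score(pre: dict, post: dict) -> dict:
    """Post minus pre, so a negative value means the subscale score dropped."""
    return {key: post[key] - pre[key] for key in pre}

# Invented ratings for one hypothetical participant (1-5 scale assumed).
pre = score_panas({"alert": 3, "inspired": 2, "determined": 3, "attentive": 4, "active": 3,
                   "upset": 3, "hostile": 2, "ashamed": 1, "nervous": 3, "afraid": 2})
post = score_panas({"alert": 3, "inspired": 3, "determined": 3, "attentive": 4, "active": 3,
                    "upset": 2, "hostile": 1, "ashamed": 1, "nervous": 2, "afraid": 2})
delta = change_score(pre, post)
print(delta)  # {'positive': 1, 'negative': -3}
```

For this invented participant, negative affect falls by three points and positive affect rises by one.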

The qualitative interview data was analyzed using a thematic analysis approach (Braun, 2006). An initial coding scheme was developed inductively, allowing themes to emerge directly from the participants’ transcripts. Two researchers collaborated on this initial annotation and scheme development. Following this, the two researchers proceeded to independently code the full set of transcription data. Upon completion, they conducted an iterative review process to consolidate and reconcile the coded datasets. This involved in-depth discussions to resolve any discrepancies and collaboratively refine the final coding results.

Findings

The Effect of Framing on Emotions

Negative emotions. Prior to the experiment, an independent-samples t-test confirmed that the two groups did not differ significantly in their baseline negative emotion scores (t(63) = 0.42, p = .67), indicating comparable initial emotional states.

The change in negative emotion scores was calculated as the difference between post- and pre-experiment PANAS scores (post–pre). Therefore, negative values represent a reduction in negative emotions after listening to the podcast.

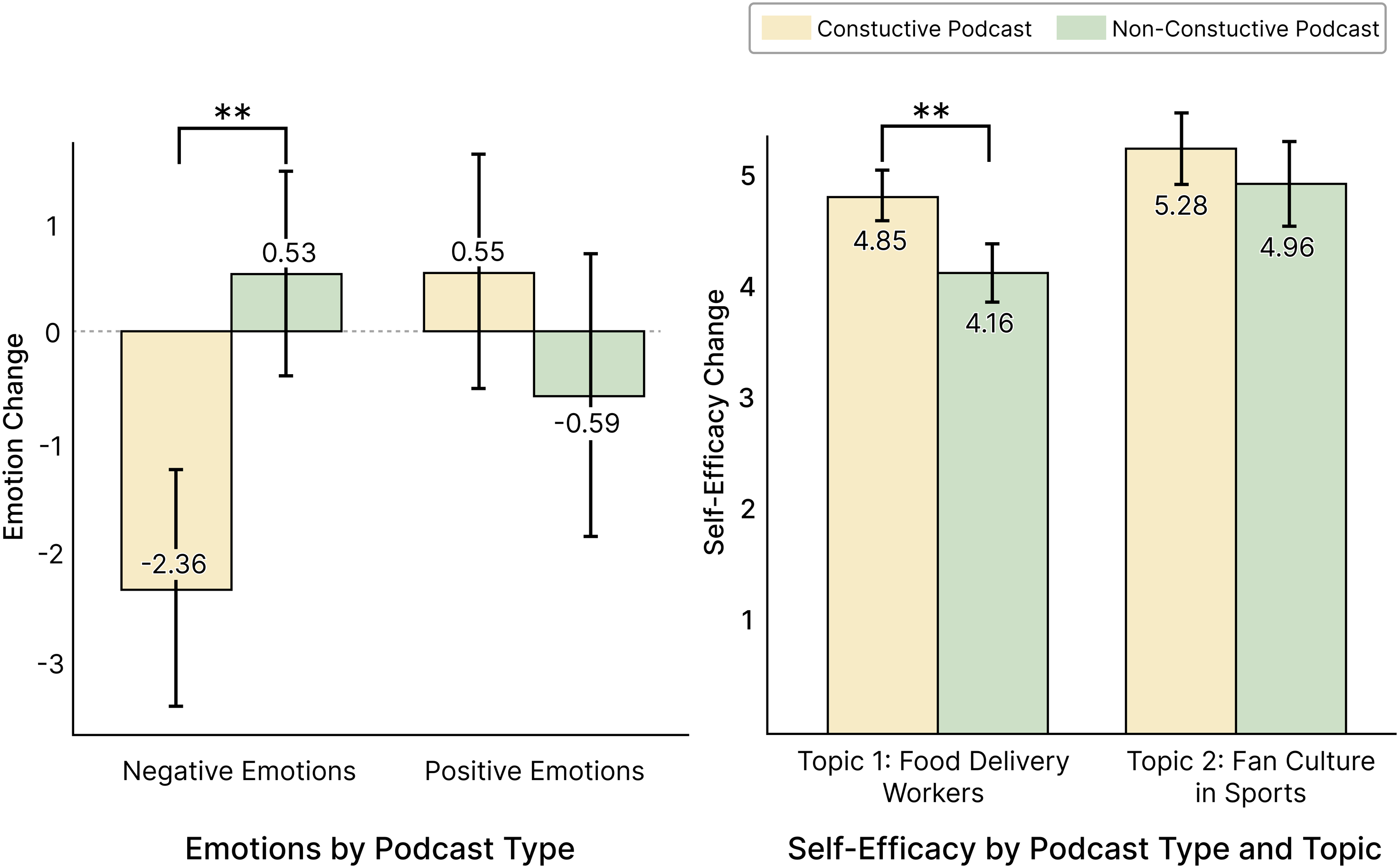

Figure 3. Post-survey quantitative results for emotion and self-efficacy. Emotional responses were measured after exposure to the full podcast episode. ** indicates significance at p < .01.

An analysis of variance (ANOVA) revealed a statistically significant difference in the impact of the Constructive Podcast (CP) versus the Non-Constructive Podcast (NP) on participants’ negative emotions (F(1, 63) = 7.815, p < .01). As illustrated in Figure 3, participants who listened to the CP reported a decrease in negative emotions (M = −2.36, SD = 4.46), whereas those in the NP condition reported a slight increase (M = 0.53, SD = 3.85).
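As a rough consistency check, a one-way ANOVA F statistic can be recomputed from the reported group means and standard deviations. The sketch below is not the authors’ analysis code. The group sizes (33 and 32) are an assumption, because the text reports 65 valid responses without stating which condition lost the excluded participant.

```python
def one_way_f_from_summary(groups):
    """One-way ANOVA F statistic from per-group (n, mean, sd) summaries."""
    total_n = sum(n for n, _, _ in groups)
    grand_mean = sum(n * m for n, m, _ in groups) / total_n
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m, _ in groups)
    ss_within = sum((n - 1) * sd ** 2 for n, _, sd in groups)
    df_between = len(groups) - 1
    df_within = total_n - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# (n, mean change, SD) for the negative-emotion change scores; n values are assumed.
cp_group = (33, -2.36, 4.46)
np_group = (32, 0.53, 3.85)
f_stat, df1, df2 = one_way_f_from_summary([cp_group, np_group])
print(f"F({df1}, {df2}) = {f_stat:.2f}")
```

Because the inputs are rounded summary statistics, the recomputed value should land near the reported F without matching it exactly.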

Positive emotions. No significant difference was found between the two groups in baseline positive emotion scores (t(63) = −0.58, p = .56).

The analysis likewise did not reveal any significant difference in the change in positive emotions between the CP condition (M = 0.55, SD = 4.44) and the NP condition (M = −0.59, SD = 5.31).

Qualitative results. Thematic analysis revealed three recurring factors that shaped participants’ emotional experiences: the perceived visibility of solutions, the temporal framing of the issues, and the overall narrative tone.

The Non-Constructive Podcast (NP) was consistently associated with feelings of anxiety and depression. Participants attributed this to its intense focus on severe, complex societal problems without offering any recourse. For listeners of NP, the perceived lack of actionable solutions amplified feelings of helplessness. As P3 explained, after comparing the two versions, “when it came to the delivery workers’ struggles, the non-constructive version felt like a total dead-end. It just presented this awful situation as if there was absolutely no way out, no matter what anyone tried.” The sense of hopelessness was exacerbated by the NP’s narrow temporal focus on current problems, which omitted historical context or future possibilities. P5 noted, “the NP report on sports fandom was just so depressing. It made the whole culture sound like it was permanently toxic and could never change. It really brought my mood down.”

In contrast, the Constructive Podcast (CP) was praised for providing a more balanced approach. By incorporating timelines of progress such as recent policy changes, it helped contextualize the challenges and alleviate negative emotional states. P1 commented, “The CP version didn’t sugarcoat how hard it is for the workers, but hearing that new laws had already reduced accidents last year gave it a sense of perspective.” By interweaving challenges with tangible progress, CP provided what P6 termed “a harsh but hopeful reality check.” P4 articulated, “the constructive one showed the problem, but then it immediately followed up with ‘and here are the actual steps people are taking to fix it’. That shift from just problems to solutions made all the difference in how I felt.”

The CP was also noted for its measured tone, which participants associated with calmness, objectivity, and hope. P3 remarked, “Hearing those stories, even small ones, about other workers who improved their situations… it made me feel like there was a path forward, something to aspire to.” P5 reflected a similar sentiment regarding the other topic: “The CP’s sports fandom report highlighted efforts to reduce toxicity, giving me hope that the situation could improve.” While CP did not significantly increase positive affect scores, participants’ accounts suggest that CP primarily elicited subtle, low-arousal positive states that may not be fully captured by standardized positive affect scales, which tend to emphasize high-arousal emotions such as excitement or enthusiasm. Conversely, the NP’s singular focus on problems was perceived as demotivating. P1 explained, “It only discusses the severity of their struggle, making it hard to feel motivated.” P6 pointed out, “Even when the NP did mention a potential solution, it immediately emphasized its negative consequences, so it still felt pointless.”

The Effect of Framing on Self-Efficacy

Topic 1: food delivery workers. For the topic of food delivery workers, self-efficacy scores differed significantly between the two conditions (F(1, 63) = 8.530, p < .01). As shown in Figure 3, participants in the CP group reported significantly higher self-efficacy (M = 4.85, SD = 0.90) compared to those in the NP group (M = 4.16, SD = 1.01).

Topic 2: fan culture in sports. For the topic of fan culture in sports, the analysis found no significant difference in self-efficacy between the CP (M = 5.28, SD = 1.27) and NP (M = 4.96, SD = 1.51) conditions.
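Effect sizes are not reported for these comparisons. As an illustrative sketch only, Cohen’s d can be estimated from the reported means and standard deviations using the pooled standard deviation. The 33/32 group split is an assumption, and the resulting values should be read as approximations rather than reported results.

```python
import math

def cohens_d(n1, m1, sd1, n2, m2, sd2):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return (m1 - m2) / math.sqrt(pooled_var)

N_CP, N_NP = 33, 32  # assumed split of the 65 valid responses

# Means and SDs are the self-efficacy values reported for each topic.
d_topic1 = cohens_d(N_CP, 4.85, 0.90, N_NP, 4.16, 1.01)
d_topic2 = cohens_d(N_CP, 5.28, 1.27, N_NP, 4.96, 1.51)
print(f"Topic 1: d = {d_topic1:.2f}; Topic 2: d = {d_topic2:.2f}")
```

Under these assumptions, the standardized CP–NP difference for Topic 1 is roughly three times that for Topic 2, in line with the significant and non-significant results above.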

Qualitative results. The qualitative data help explain this divergence between the two topics. The differences in self-efficacy appeared to be contingent on how participants perceived the actionability of the solutions and whether those solutions were framed as individual versus collective efforts.

For the food delivery topic, the Constructive Podcast (CP) framed the issue in terms of collective interventions, such as union efforts and legislative reforms. This approach allowed participants to see themselves as part of a broader system of change, which generally enhanced their feelings of empowerment. As P2 remarked, “Hearing about delivery apps introducing insurance programs made me realize I could support those companies through my consumer choices.” CP thus made abstract issues feel actionable. P3 noted, “Knowing that there are already actionable steps in place made me feel like this issue is not just addressable, but that progress is already happening.” P6 reinforced this, saying, “It’s not about me solving everything, but about seeing where I fit into the bigger picture.” Participants reported feeling more capable of contributing to positive change because the solutions seemed both actionable and part of a larger, ongoing effort.

In contrast, listeners of the Non-Constructive Podcast (NP) were more likely to feel passive and powerless. P2 stated, “NP only highlights the difficulties faced by food delivery workers, like lack of job security and the high risk of accidents, without offering hope, leaving me feeling powerless.” Similarly, P5 said it felt “as if there was nothing that could be done to make things better.” The lack of solutions and forward-thinking perspectives in NP led to a sense of helplessness, contributing to lower self-efficacy among listeners.

However, this pattern was less evident in cases where issues were described as socially diffuse or structurally entrenched, with participants reporting persistent feelings of limited control despite constructive framing. For the sports fandom topic, participants in both conditions reported limited gains in self-efficacy, reflecting a shared perception that the issue was deeply entrenched and resistant to individual influence. P3 characterized fan culture as “deeply entrenched and beyond the reach of individual efforts.” Although CP introduced potential interventions such as educational campaigns, many participants regarded these solutions as abstract, unrealistic, or dependent on actors beyond their control. P4 described the proposed actions as “too abstract to implement,” while P7 noted that they were “reliant on celebrities who don’t care.” This perceived lack of controllability undermined self-efficacy gains, as P6 remarked: “What am I supposed to do, march into a stadium and lecture strangers about toxicity?”

Some participants also felt that CP’s positive tone risked diluting the perceived seriousness of the issue. As P5 explained, “the positive tone of CP, while well-intentioned, made the issue seem less serious. It felt like they were downplaying the severity of the problem.” Meanwhile, NP’s framing of sports fandom reinforced a sense of immutability. P4 observed that “NP’s take on fan culture made it feel like an unstoppable force, so why even try?”

Discussion

This study examines how constructive framing functions in AI-generated podcast news, extending prior research that has largely focused on text-based or visual media formats (McIntyre, 2019; Overgaard, 2023). While previous studies suggest that constructive or solutions-oriented framing can reduce negative emotional responses (Thier, 2023), these findings are primarily grounded in human-authored content and conventional news delivery. Our results indicate that similar reductions in negative emotion can also be observed in AI-generated, audio-only news, suggesting that constructive framing effects may generalize beyond traditional media formats.

At the same time, our findings reveal important differences from established patterns reported in prior constructive journalism research. Although constructive podcasts reliably reduced negative emotions, they did not lead to consistent increases in positive emotions. The divergence refines earlier assumptions that reductions in negative affect and gains in positive affect tend to co-occur in constructive media exposure (McIntyre, 2019). Instead, our results align with media effects research suggesting that negative and positive emotions are not simply opposite ends of a single continuum and may respond differently to the same content manipulation (Fredrickson, 2001; Watson et al., 1988). Qualitative responses further suggest that listeners often described feelings of relief or reassurance rather than elevated positive mood, a pattern consistent with prior findings that short-term news exposure more readily influences negative affect than positive emotional states (Mares & Woodard, 2012). One possible explanation lies in the distinction between hedonic and eudaimonic forms of well-being. PANAS primarily captures high-arousal states (e.g., excitement and enthusiasm), whereas constructive framing appears to elicit lower-arousal states such as calmness, relief, or hope. Therefore, the lack of a significant increase in PANAS-measured positive affect does not mean participants experienced no positive effect; it likely reflects the type of positive emotions elicited.

The influence of constructive framing on self-efficacy was more limited and varied across topics. Rather than indicating an absence of effect, this pattern suggests that self-efficacy outcomes may depend on the characteristics of the news issue itself. Prior research has shown that self-efficacy is more likely to increase when media narratives present concrete, relatable situations and clearly articulated action pathways (Locke, 1997; McIntyre, 2015). In our study, topics perceived as abstract or structurally complex appeared less conducive to such effects, even when framed constructively. This finding helps clarify the mixed results reported in earlier studies on constructive journalism and agency (Curry & Hammonds, 2014; Dahmen et al., 2021), indicating that framing alone may be insufficient to foster perceived control in the absence of accessible forms of action.

In the context of AI-generated podcasts, these patterns may be further shaped by the nature of synthetic speech. Unlike human narration, AI-generated voices typically follow standardized expressive patterns, which may influence how listeners interpret emotional tone and motivational cues (Nass & Moon, 2000; Triantafyllopoulos et al., 2023). While vocal delivery was controlled and showed minimal acoustic variation across conditions, the observed results suggest that narrative framing and vocal delivery should be considered jointly when examining psychological outcomes in AI-mediated audio news. Together, these findings underscore both the potential and the limits of constructive framing in AI-generated media and point to the need for more nuanced models that account for modality, topic structure, and production context. Building on these findings, we articulate a set of design implications that address ethical risk mitigation, transparency in AI-mediated media systems, and the role of adaptive framing and experimental toolkits in shaping responsible AI-generated podcast experiences.

Design Implications

Constructive framing design mitigates ethical risks in AI-mediated media by counterbalancing algorithmic tendencies. The integration of constructive framing strategies in AI-generated news systems, exemplified by GenPod, represents a significant approach to mitigating the amplification of algorithmic biases inherent in generative media. Research indicates that AI, particularly in automated content generation, can unintentionally reinforce stereotypes (Nazar, 2020), disseminate misinformation (Zuboff, 2019), or prioritize sensational content that appeals to emotional responses over objective facts (Loviglio, 2024). Such biases influence not only the presentation of information but also the audience’s perception and interaction with media (Shah, 2022).

GenPod, a tool designed to test narrative framing strategies, offers a promising solution to these ethical challenges. By employing constructive framing techniques, GenPod facilitates the systematic evaluation and modification of content presentation to counteract the adverse effects of algorithmic bias. Constructive framing increases engagement with institutions and reduces polarization, thereby limiting the reinforcement of biases (van Antwerpen et al., 2023). This approach is consistent with previous media framing studies, which suggest that news framing can affect not only the understanding of specific issues (Rowbotham et al., 2019) but also public attitudes and behaviors (Wahl-Jorgensen, 2020).

Furthermore, the challenge of misinformation and the spread of false narratives in AI-generated media highlights the necessity of constructive framing. Some content recommendation algorithms, particularly in social media, have been observed to prioritize engagement-maximizing content, which can include sensational or emotionally charged material (Chavalarias et al., 2023). By integrating constructive framing strategies, AI can be guided to produce content that is balanced and encourages critical thinking rather than emotional manipulation. Research on algorithmic curation has highlighted the risks of favoring sensational content, which can contribute to social division and misinformation (Lischka, 2023). GenPod’s implementation of constructive framing strategies could thus provide a corrective measure, promoting responsible AI use in the media ecosystem.

In practical terms, GenPod offers AI developers insights into optimizing framing strategies to minimize ethical risks in content creation. This includes testing various framing models to ensure AI systems produce media adhering to ethical standards, such as fairness, accuracy, and diversity. Additionally, it provides a framework for enhancing transparency in AI systems, ensuring that decisions about news framing are not arbitrary but grounded in ethical design principles. For AI developers, these insights are crucial for building systems that are both efficient and socially responsible.

Building a responsible AI media ecosystem requires a shift to transparency-driven design. The proliferation of AI-generated content in media and communication fundamentally transforms narrative construction and public discourse. As AI systems increasingly assume content creation roles, operational transparency becomes imperative. Many AI algorithms function as “black boxes,” concealing their decision-making processes during content generation and framing (Bonezzi et al., 2022). This opacity engenders user mistrust and raises ethical concerns regarding information manipulation and algorithmic bias (Fritz, 2022).

GenPod’s research offers critical insights into addressing these transparency challenges through systematic design approaches in AI media ecosystems. By developing explainable framing strategies and visualization systems, GenPod advances the discourse on AI’s role in media narrative construction. These systems could incorporate frame type identifiers that explicitly reveal AI framing decisions, thereby demystifying content generation processes. This approach satisfies ethical transparency requirements while enhancing user trust through clarification of AI content construction methodologies.

Transparency indicators in AI-generated news serve an essential function in contemporary media environments (Piasecki et al., 2024). By explicitly documenting framing decisions including algorithmic logic, data sources, and contextual parameters, media organizations can cultivate more informed audience engagement. Such transparency enables users to critically evaluate content consumption by understanding the factors influencing information presentation. This becomes particularly crucial in an era where misinformation and biased narratives can rapidly proliferate, potentially distorting public perception. Empirical research demonstrates that algorithmic process transparency can mitigate these risks by enhancing user comprehension and system trust (Hunter, 2023).

GenPod’s findings further emphasize the necessity of integrating transparency mechanisms into AI media governance frameworks. By providing structured methodologies for visualizing and explaining AI-driven content decisions, GenPod establishes a model for ethical AI implementation in media contexts. This model facilitates the development of governance policies that ensure AI system accountability and alignment with societal values. As AI capabilities evolve and media applications expand, transparent and explainable systems become fundamental to maintaining narrative integrity and reliability.

The transition toward transparency-driven design represents a critical requirement for responsible AI media ecosystem development (Baig et al., 2024). By addressing algorithmic opacity and promoting clear communication of AI decision processes, transparency mechanisms support ethical media governance while empowering critical user engagement. GenPod’s contributions highlight the importance of developing systems that prioritize transparency, thereby fostering a media environment that balances technological innovation with ethical responsibility.

GenPod serves as an experimental toolkit for systematically testing framing strategies in diverse contexts. As a generative podcast pipeline, GenPod provides AI and media researchers with a platform for examining the effects of different framing strategies on audience engagement and media consumption. Unlike traditional tools that primarily focus on podcast content production (Austin & Samuel, 2023; Podcastle, 2024), GenPod is designed to systematically test and optimize ethical framing strategies across diverse sociotechnical contexts. This positions GenPod not only as a tool for understanding emotional responses but also as an experimental toolkit that can inform future AI media governance.

The experimental value of GenPod lies in its ability to simulate and analyze the effects of various framing strategies on audiences. By offering a controlled environment to test how different frames influence perception and engagement, GenPod enables researchers to collect empirical data on the effectiveness of ethical framing strategies. This data is crucial for developing AI systems that are both effective in content delivery and aligned with ethical standards. For example, by experimenting with frames that emphasize inclusivity and diversity, researchers can evaluate how such strategies affect audience perceptions and contribute to a more balanced media narrative.

Furthermore, GenPod’s role in AI media governance is highlighted by its potential to provide data-driven insights into the ethical implications of framing strategies. As AI continues to significantly influence media content, understanding the nuances of how different frames impact user engagement becomes essential (Choi, 2024). GenPod allows researchers to explore these dynamics, offering a foundation for developing governance frameworks that ensure AI-generated content adheres to ethical principles. This aligns with broader efforts in AI governance to create systems that are transparent, accountable, and socially responsible (Camilleri, 2024).

By using GenPod as an experimental toolkit, researchers can also investigate the potential for AI systems to adaptively respond to audience feedback, optimizing framing strategies in real-time. This adaptability is key to creating media experiences that are both engaging and ethically sound. Through iterative testing and refinement, GenPod can help identify which framing strategies resonate most positively with audiences, providing actionable insights for AI developers and media practitioners.

Adaptive framing based on psychological states creates meaningful AI-mediated media experiences. Adaptive framing in AI-generated content offers significant potential for enhancing audience engagement by tailoring media experiences to individual psychological states. By considering factors such as content sensitivity, user preferences, and contextual elements, AI systems can create more personalized and impactful media interactions (Donini et al., 2024; Salma et al., 2024). This approach utilizes insights into self-efficacy and emotional responses, enabling content customization that resonates deeply with audiences and fosters meaningful engagement (Gu, 2024).

AI’s ability to dynamically adjust framing based on psychological states provides a pathway to more personalized media experiences (Donini et al., 2024). For example, content that aligns with a user’s emotional state or self-efficacy can enhance engagement by making the media experience more relevant and relatable. This personalization can empower users by delivering content that not only informs but also connects on a personal level, potentially increasing their involvement with the media they consume. However, dynamic framing must be carefully managed to avoid ethical issues such as the creation of echo chambers (Williamson, 2024) or emotional manipulation (Louati et al., 2024), where content excessively caters to user preferences at the expense of diversity and authenticity. To address these risks, it is essential to incorporate safeguards and design strategies that ensure the ethical use of adaptive framing. One approach is to integrate ethical review mechanisms within the dynamic framing process (Wang et al., 2022), ensuring content diversity and authenticity are maintained.

This could involve setting parameters that prevent over-personalization, thereby avoiding the reinforcement of narrow perspectives or biases. Additionally, transparency indicators should be included in AI-generated content to clarify the basis of dynamic framing decisions. By disclosing the user data and contextual factors that inform framing choices, media organizations can enhance user trust and understanding, aligning with broader calls for transparency in AI systems (Chen & Shu, 2024).

Moreover, adaptive framing based on psychological states should be designed to enhance user engagement without compromising ethical standards. This involves balancing personalization with exposure to diverse viewpoints, ensuring users receive a well-rounded media experience. By fostering an environment where content is both personalized and diverse, AI systems can contribute to a more informed and engaged audience, ultimately leading to more meaningful media interactions.

Limitations and Future Work

This study has several limitations that should be considered when interpreting the findings. First, our investigation was based on only two AI-generated podcast episodes covering two news topics, which constrains the generalizability of the results. With 65 participants, the study also had limited statistical power to detect complex interactions or smaller effects, such as those for Topic 2. Including a broader range of news topics, podcast episodes, and participant populations in future studies would allow for more robust validation and help clarify whether the observed patterns hold across diverse content and audiences.
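To illustrate the power concern, the sketch below approximates the power of a two-sided, two-group comparison at α = .05 using a normal approximation. It is a rough guide rather than an exact power analysis: the effect sizes are conventional benchmark values chosen for illustration, not estimates from this study, and the 33/32 group split is an assumption.

```python
import math
from statistics import NormalDist

def approx_power(d, n1, n2, alpha=0.05):
    """Normal-approximation power for a two-sided, two-sample mean comparison."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic under a true standardized effect d.
    ncp = d * math.sqrt(n1 * n2 / (n1 + n2))
    return z.cdf(ncp - z_crit) + z.cdf(-ncp - z_crit)

# Benchmark effect sizes (small, medium, large); group sizes are assumed.
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: power ~ {approx_power(d, 33, 32):.2f}")
```

Under this approximation, a small effect (d = 0.2) would be detected far less than half the time with groups of this size, whereas a large effect (d = 0.8) would usually be detected.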

Second, participants listened to podcasts in distraction-free settings, whereas real-world consumption often occurs alongside other activities, which may attenuate framing effects. Additionally, cultural and demographic factors, as well as technical variables such as synthetic voice characteristics, may shape how listeners perceive framing and agency, suggesting the need for cross-cultural and cross-contextual investigations.

Beyond empirical extensions, future work should also explore how the design implications identified in this study can be implemented within existing AI media systems. In particular, an open challenge lies in enabling AI-generated podcasts to adapt framing or delivery strategies in response to listeners’ psychological states. For example, future systems might explore real-time or session-level adaptation based on inferred emotional responses, while balancing personalization, transparency, and ethical constraints.

Conclusion

This paper introduced GenPod, a generative AI pipeline that combines prompt engineering with text-to-speech synthesis to create news podcasts in both constructive and non-constructive formats. GenPod enables systematic investigation of how different narrative presentation styles influence listener perceptions. In a between-subjects study (N = 65), we examined how these two framing strategies influence listeners’ emotional responses and perceived self-efficacy. Our findings show that constructive podcasts, which emphasize collective action and actionable solutions, significantly reduced negative emotions compared to non-constructive content focused on systemic failures. They also enhanced self-efficacy for the topic that offered listeners accessible forms of action (food delivery workers), but not for the more diffuse topic of sports fan culture. These findings indicate that narrative framing in AI-generated media shapes users’ emotional and motivational responses, underscoring the importance of deliberate framing design in HCI systems.

Beyond empirical evidence, this work emphasizes the need to align generative AI capabilities with psychosocial and ethical principles. By integrating responsible framing strategies into content generation, GenPod illustrates how synthetic media can support more constructive audience engagement and contribute to the development of emotionally sustainable AI-mediated communication.

Supplemental Material

Supplemental Material for Designing for Agency and Affect: Constructive Framing in AI-Generated News Podcasts Reduces Negative Emotion and Enhances Self-Efficacy by Wen Ku, Yihan Liu, Wei Zhang and Pengcheng An in Design for Augmented Humanity

Footnotes

Acknowledgments

The authors would like to thank all participants in the listener study and colleagues who provided valuable feedback on this research.

Author Contributions

Wen Ku contributed to conceptualization, methodology, formal analysis, investigation, original draft writing, and visualization. Yihan Liu contributed to software, validation, and data curation. Wei Zhang contributed to methodology and formal analysis. Pengcheng An contributed to resources, review and editing, project administration, and funding acquisition. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the National Natural Science Foundation of China (NSFC) Youth Grant under Grant No. 62307024.

Declaration of Conflicting Interests

Pengcheng An is a member of the journal’s editorial board. However, this author did not participate in the peer review process of this manuscript. We hereby declare that there are no conflicts of interest.

Data Availability Statement

The original contributions presented in this study are included in the article. Further inquiries can be directed to the corresponding author.

Supplemental Material

Supplemental material for this article is available online.
