Abstract

This research investigates the transformative impact of artificial intelligence (AI) on nuclear relationships, focusing on the 2025 India–Pakistan and Iran–Israel conflicts. The study addresses a critical research gap: the lack of empirical, comparative analysis on how AI integration alters nuclear deterrence dynamics and crisis stability in real-world regional conflicts. The objective is to empirically test the Nuclear Deterrence Stability Paradox in the AI era, examining whether AI technologies simultaneously reinforce and undermine strategic stability. Employing a mixed-methods comparative case study approach, the research draws on open-source intelligence, defense publications, and quantitative conflict metrics to measure escalation timelines, AI deployment, cyber warfare intensity, and decision-making acceleration. Key findings reveal that AI compresses decision-making timelines, increases inadvertent escalation risks, and enables precision targeting of nuclear infrastructure, challenging traditional deterrence frameworks. The implications underscore the urgent need for new international governance mechanisms and crisis management protocols to address the unique risks posed by AI-enabled nuclear systems.

Keywords

Introduction

Artificial intelligence (AI) is transforming global security faster than any previous technology, but nowhere are its consequences more significant or more perilous than in the nuclear realm. The year 2025 offered an unprecedented glimpse of this convergence when two separate crises unfolded within weeks of each other: the India–Pakistan escalation that followed the Pahalgam attack and Israel’s Operation Rising Lion against Iran. In both theaters, AI-enabled decision-support systems, autonomous drones, and algorithmic cyber operations compressed political reaction time, blurred the line between conventional and strategic assets, and challenged the foundational logic of mutual assured destruction (MAD). These events underscored the uncomfortable reality that nuclear arsenals, once thought to guarantee stability through reciprocal vulnerability, may now be destabilized by machine-speed warfare and precision intelligence, surveillance, and reconnaissance (ISR) capabilities. As states rush to graft AI onto antiquated command-and-control architectures, the risk that a software update, spoofed sensor feed, or mislabeled target could cascade toward nuclear escalation is no longer a remote scenario but a tangible threat demanding scholarly scrutiny.

Existing scholarship has laid important groundwork by theorizing how AI might erode crisis stability, accelerate decision loops, or enable counterforce strategies that threaten second-strike survivability. Rich qualitative analyses chart the technical pathways with deep-learning algorithms in early-warning radars, swarm coordination protocols, or the predictive maintenance of missile silos, through which AI could influence nuclear postures. Yet systematic empirical validation remains scarce on this issue. Case studies often privilege USA–Russia or USA–China dyads in the context of the perils of artificial general intelligence (AGI) or artificial superintelligence (ASI), while overlooking regional dyads where escalation ladders are steep, and crisis management tools are sparse. Similarly, quantitative assessments of escalation timelines, cyber intrusion volumes, or human–machine interface failures are fragmentary, leaving unanswered questions about how AI’s impacts vary across geopolitical contexts, technological maturities, and doctrinal cultures. Moreover, few studies integrate AI-nuclear entanglement or AI-generated disinformation into traditional deterrence frameworks, creating a knowledge gap in how multi-domain pressure points interact during real-world crises. This mismatch between theoretical forewarning and empirical scarcity makes it difficult for policymakers to craft robust guardrails or for scholars to refine deterrence theory for the AI age.

This article aims to bridge that gap by leveraging the dual 2025 crises as natural experiments to test core propositions of the Nuclear Deterrence Stability Paradox. We hypothesize that AI integration simultaneously bolsters and undermines deterrence: it strengthens situational awareness, yet erodes decision-making buffers, and enhances precision, yet amplifies the temptation for preemptive or preventive strikes. By triangulating open-source intelligence (OSINT), defense white papers, and quantitative conflict metrics, we will compare escalation velocities, command-and-control adaptations, and cyber-nuclear entanglement in the India–Pakistan and Iran–Israel cases. Our analytical framework fuses crisis-timeline reconstruction with assessment of AI system roles—human-in-the-loop versus human-on-the-loop—to illuminate how differing nuclear asymmetries and technological maturities mediate AI’s effects on stability. In doing so, we seek not only to validate or challenge prevailing hypotheses but also to generate policy-relevant insights for designing AI governance mechanisms around verification protocols, transparency measures, and machine-speed crisis hotlines that can restore strategic breathing space in an era where algorithms threaten to outrun diplomacy.

Methodology

To address the questions posed regarding the impact of AI technologies on nuclear relationships through the India–Pakistan and Iran–Israel conflicts of 2025, this study employed a mixed-methods comparative case study approach utilizing triangulation methodology. The data sources included OSINT from multiple platforms, defense publications, academic papers, think tank reports, and real-time conflict analyses. Primary outcomes measured included escalation timelines (operationalized as the time required to reach peak conflict intensity), AI integration levels (assessed through documented deployment of autonomous systems), cyber warfare metrics (quantified through attack frequency and AI-driven percentages), and decision-making acceleration patterns (measured through command response times and human–AI interaction protocols). Secondary outcomes encompassed nuclear threshold proximity, international intervention timelines, and strategic stability indicators. The methodological framework combined crisis timeline reconstruction with quantitative analysis of escalation patterns, employing both descriptive statistics and comparative analysis techniques to identify significant differences between the two regional conflicts. Data triangulation was achieved through cross-referencing multiple independent sources, including peer-reviewed academic literature, government reports, defense analyses, and expert assessments, to ensure the validity and reliability of findings while mitigating potential biases inherent in single-source analysis. The analytical approach integrated both qualitative comparative analysis for understanding contextual differences and quantitative metrics for measuring AI impact on nuclear dynamics, following established methodological frameworks for international relations research. 
The research explores the hypothesis that AI technologies exacerbate existing instabilities while creating new ones, particularly in regions where nuclear deterrence relationships are still developing, thereby making deterrence more fragile and crisis management more challenging. The next section presents the findings from the 2025 India–Pakistan and Iran–Israel conflicts.
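The escalation-timeline measure described above (the time required to reach peak conflict intensity) can be illustrated with a minimal sketch. The function name `hours_to_peak`, the coding of a timeline as (elapsed hours, intensity score) pairs, and the sample values are assumptions made for illustration only; they are not the study's dataset or its actual coding procedure.

```python
def hours_to_peak(observations):
    """Return the elapsed hours at which peak intensity is first reached.

    `observations` is a list of (elapsed_hours, intensity) pairs, where
    intensity is a score on a 0-10 scale (a hypothetical coding scheme).
    """
    if not observations:
        raise ValueError("need at least one observation")
    # Sort by elapsed time so the earliest occurrence of the peak is found first.
    ordered = sorted(observations, key=lambda obs: obs[0])
    peak = max(score for _, score in ordered)
    # Time of the first observation whose score equals the peak.
    return next(hours for hours, score in ordered if score == peak)


# Placeholder timeline, NOT data from the study: intensity rises to 9/10
# at the 48-hour mark and then declines.
sample_timeline = [(0, 3), (12, 5), (24, 7), (48, 9), (72, 6)]
print(hours_to_peak(sample_timeline))
```

Taking the first occurrence of the maximum, rather than the last, is one possible design choice; it treats a plateau at peak intensity as having been reached when the plateau begins.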

Results

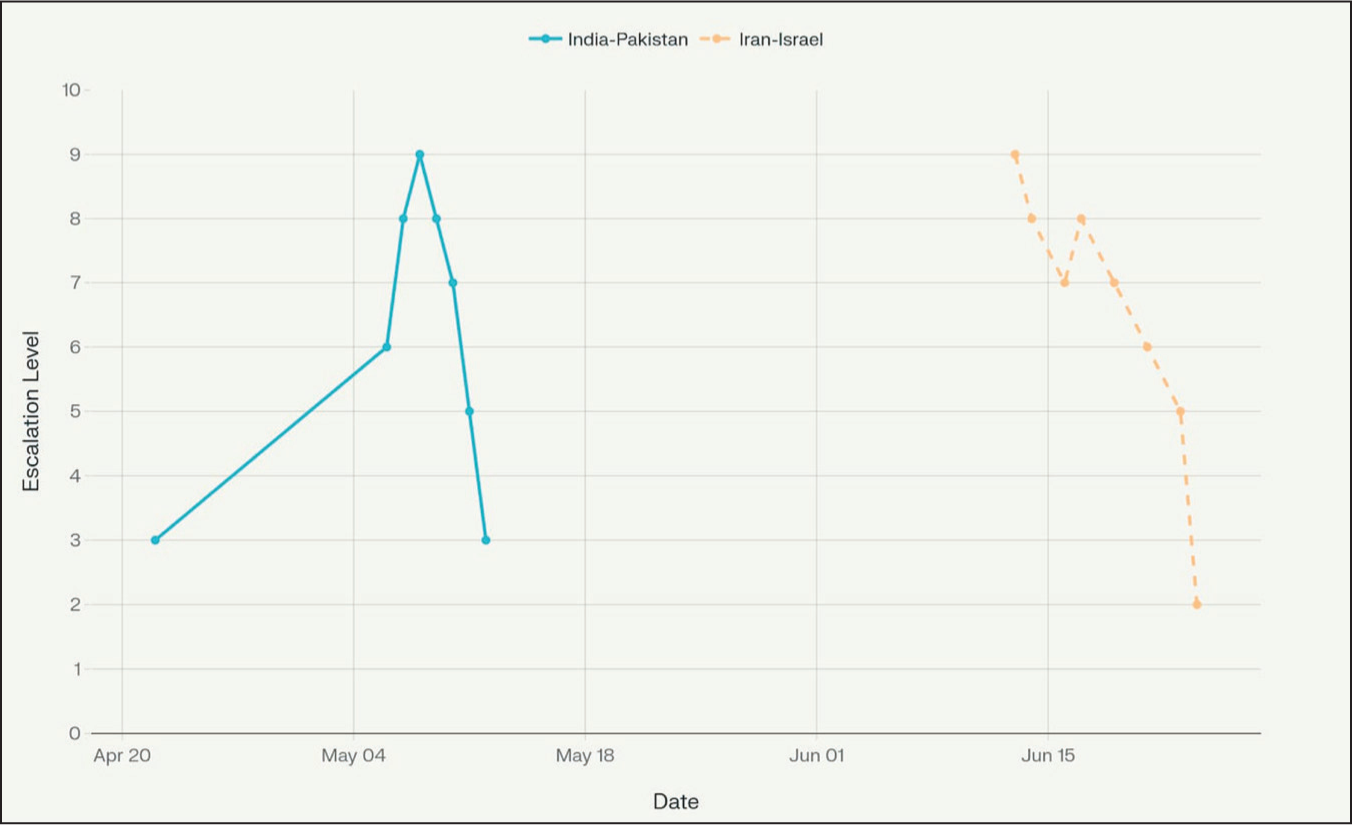

The comparative analysis revealed three fundamental differences in the nuclear-AI nexus between the India–Pakistan and Iran–Israel conflicts of 2025. The India–Pakistan conflict (May 7–10, 2025) demonstrated a bilateral nuclear deterrence framework with established command-and-control systems, while the Iran–Israel conflict (June 13–25, 2025) involved asymmetric nuclear dynamics between a declared nuclear state and a near-threshold proliferator. Regarding technological implementation, India deployed advanced AI systems, including the Akashteer air defense system and autonomous drone platforms (Harop, Heron, SkyStriker, Nagastra-1), while Pakistan’s capabilities remained more limited, focusing on cyber warfare, with 35% of 1.5 million attacks being AI-driven (Mehmood, 2025; VINITA, 2025). In contrast, Israel showcased cutting-edge AI-military integration through Operation Rising Lion, featuring pre-positioned drone swarms, AI-guided targeting systems, and sophisticated multi-domain operations against Iranian nuclear facilities, while Iran’s defensive capabilities were centered on cyber operations, with 45% of 850,000 attacks being AI-driven (Ribeiro, 2025). The escalation dynamics differed significantly, with India–Pakistan experiencing rapid escalation reaching 9/10 intensity within 48 h due to geographical proximity and compressed decision-making timelines, ultimately resulting in a ceasefire after 4 days (Harikumar & Roy, 2025). Conversely, the Iran–Israel conflict sustained operations for 12 days with systematic targeting of eight nuclear facilities, including Natanz, Isfahan, and Fordow, representing unprecedented AI-enabled precision strikes against nuclear infrastructure (Albright & Faragasso, 2025).
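As a rough arithmetic check on the cyber warfare figures reported above, the AI-driven shares can be converted into approximate attack counts. The sketch below uses only the totals and percentages stated in the text; the function and variable names are illustrative assumptions, and the outputs inherit whatever uncertainty attaches to the reported figures.

```python
def ai_driven_count(total_attacks, ai_share_percent):
    """Approximate number of AI-driven attacks from a total and a percentage share."""
    # Integer arithmetic avoids floating-point rounding on whole percentages.
    return total_attacks * ai_share_percent // 100


# Figures as reported in the text: (total attacks, AI-driven share in percent).
reported = {
    "Pakistan (India-Pakistan conflict)": (1_500_000, 35),
    "Iran (Iran-Israel conflict)": (850_000, 45),
}

for actor, (total, share) in reported.items():
    count = ai_driven_count(total, share)
    print(f"{actor}: about {count:,} AI-driven attacks out of {total:,}")
```

On these reported figures, the lower AI-driven share in the India–Pakistan case (35%) still corresponds to a larger absolute number of AI-driven attacks (about 525,000) than the higher share in the Iran–Israel case (45%, about 382,500).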

The strategic implications of these differences in Figure 1 reveal distinct regional approaches to AI-nuclear integration. The India–Pakistan conflict reinforced traditional bilateral deterrence patterns while maintaining strict human-in-the-loop protocols for nuclear-relevant decisions, demonstrating how geographical proximity and established nuclear arsenals created urgent de-escalation pressures that limited AI autonomy (Rautenbach, 2022). The rapid escalation timeline compressed decision-making processes, potentially increasing miscalculation risks during the crisis, as both nations’ nuclear doctrines created automatic escalation risks when AI systems accelerated conventional operations (Chernavskikh & Palayer, 2025a, 2025b). The Iran–Israel conflict showcased a more advanced human-on-the-loop framework that allowed greater AI autonomy in target selection and engagement, particularly in nuclear-relevant contexts, reflecting Israel’s higher risk tolerance for AI-enabled operations given the absence of immediate nuclear retaliation threats (Abbas et al., 2025; Hruby & Miller, 2021). This asymmetric nuclear dynamic enabled sustained AI-intensive operations that fundamentally altered regional nuclear dynamics by demonstrating the vulnerability of nuclear programs to AI-enabled precision strikes. Additionally, with regard to the cyber warfare components, it revealed different strategic priorities, with Pakistan’s massive volume attacks (1.5 million) focusing on infrastructure disruption, while Iran’s smaller volume but higher sophistication attacks (850,000) emphasized disinformation campaigns and retaliation against kinetic strikes (Khorrami, 2025; Sagar, 2025).

Figure 1. Comparison of Escalation Levels Between India–Pakistan and Iran–Israel Conflicts in 2025.

The analysis reveals both positive and negative implications of AI integration in nuclear contexts across these regional conflicts. Positive aspects include enhanced situational awareness through AI-powered early warning systems, improved precision in targeting that reduced collateral damage, and accelerated threat assessment capabilities that potentially prevented larger-scale conflicts in both cases. AI-enabled decision-support systems provided leaders with comprehensive data analysis that facilitated more informed strategic choices, while autonomous defensive systems like India’s Akashteer demonstrated effective threat interception capabilities. However, significant negative implications emerged, including compressed decision-making timelines that increased miscalculation risks, particularly evident in the India–Pakistan conflict’s rapid escalation to high-intensity within 24 h (Mehra, 2025). The vulnerability of AI systems to cyber manipulation was demonstrated through extensive cyber warfare campaigns in both conflicts, with AI-driven attacks comprising 35%–45% of total operations, potentially compromising nuclear command-and-control integrity (Muncaster, 2018). The Iran–Israel conflict’s systematic targeting of nuclear facilities established dangerous precedents for AI-enabled preventive actions against nuclear infrastructure, while automation bias risks emerged as human operators potentially over-relied on AI assessments in high-stakes nuclear decision-making environments (Chaudhary & Klein, 2023). The lack of adequate human oversight in some AI-enabled operations, particularly in autonomous weapons deployment, raised concerns about accountability and control in nuclear-relevant contexts, highlighting the need for robust governance frameworks to manage AI-nuclear integration risks (Su et al., 2025).

Discussion

The research findings from the 2025 India–Pakistan and Iran–Israel conflicts provide compelling empirical evidence for the Nuclear Deterrence Stability Paradox hypothesis. The traditional MAD framework, which posits that nuclear weapons create stability through the threat of unacceptable retaliation, has been fundamentally challenged by AI integration into the military (McKay, 2025). Both conflicts demonstrated that while nuclear weapons continue to deter direct nuclear exchanges, AI technologies have created new pathways for instability and escalation below the nuclear threshold (Chernavskikh & Palayer, 2025a, 2025b). The India–Pakistan conflict validated the “stability” component of the paradox, as nuclear weapons prevented full-scale war despite reaching 9/10 escalation intensity within 48 h. However, the “instability” component was prominently displayed through AI-enabled rapid escalation, with India’s Akashteer air defense system and autonomous drone platforms creating compressed decision-making timelines that increased miscalculation risks (Malik, 2025). Similarly, the Iran–Israel conflict demonstrated how AI-enabled precision strikes could systematically target nuclear facilities through Operation Rising Lion, undermining traditional deterrence assumptions while maintaining the nuclear threshold (Livermore, 2025).

The research revealed three critical ways AI technologies destabilize nuclear deterrence while preserving strategic stability. First, AI-enabled decision-support systems compressed escalation timelines, creating “machine speed” warfare that reduced human deliberation time and increased automation bias risks (Erskine & Miller, 2024). The India–Pakistan conflict’s 48-h escalation and Iran–Israel’s 12-day sustained operations demonstrated how AI acceleration undermines traditional crisis management mechanisms. Second, AI-enhanced targeting capabilities threatened second-strike survivability, with autonomous systems potentially locating and neutralizing nuclear forces through improved ISR capabilities (Asghar, 2025). Third, cyber-nuclear entanglement emerged as a new destabilizing factor, with AI-driven cyberattacks comprising 35%–45% of total operations across both conflicts, potentially compromising nuclear command-and-control systems (Johnson & Krabill, 2020).

The research also validated the asymmetric nature of AI-nuclear interactions across different regional contexts. The India–Pakistan bilateral nuclear relationship demonstrated rapid escalation within established deterrence frameworks, while the Iran–Israel asymmetric dynamic showcased sustained technology-intensive operations that fundamentally altered regional nuclear dynamics. This supports the hypothesis that AI technologies exacerbate existing instabilities while creating new ones, particularly in regions where nuclear deterrence relationships are still developing. The findings confirm that existing theories of nuclear deterrence require fundamental revision in the AI age, as traditional MAD assumptions about rationality, signaling, and escalation control are increasingly challenged by machine-mediated decision-making. The research demonstrates that AI integration creates a “catalytic nuclear war” risk, where non-nuclear AI applications can trigger nuclear escalation through compressed timelines, autonomous targeting, and cyber vulnerabilities. Both conflicts showed that AI-enabled conventional capabilities can target nuclear-relevant infrastructure, eroding the clear distinction between nuclear and conventional domains that underpins classical deterrence theory.

The stability–instability paradox manifests differently in the AI era, with AI technologies simultaneously strengthening deterrence through enhanced early warning and surveillance capabilities while undermining it through first-strike advantages and autonomous weapons systems (Johnson, 2024). The research reveals that AI creates new escalation pathways that bypass traditional human control mechanisms, potentially leading to “inadvertent escalation” scenarios where machine-driven decisions accelerate conflicts beyond human intervention capabilities (Johnson, 2021). This validates the hypothesis that AI fundamentally alters the nature of nuclear relationships, making deterrence more fragile and crisis management more challenging, while maintaining the basic nuclear threshold through mutual vulnerability calculations (Akiyama, 2021).

The available literature also reveals a significant empirical validation gap between theoretical models and real-world applications of AI in nuclear relationships. Existing research remains predominantly theoretical, with limited empirical testing of proposed frameworks. For instance, some studies provide a theoretical foundation for understanding AI’s impact on nuclear escalation but lack empirical validation through actual conflict scenarios (Johnson, 2021). Similarly, other studies offer a comprehensive analysis of AI integration in nuclear command-and-control systems across P5 nations, demonstrating growing recognition of AI’s implications, yet acknowledge the insufficiency of current research methods for studying complex AI-nuclear dynamics (Saltini, 2023). Additionally, different assessment frameworks identify critical vulnerabilities in AI-enabled nuclear systems but do not provide empirical evidence of how these vulnerabilities manifest in actual conflicts (Hruby & Miller, 2021). This theoretical emphasis contrasts sharply with the empirical findings from the 2025 conflicts, which demonstrate measurable impacts of AI integration on escalation timelines, decision-making processes, and strategic stability. The India–Pakistan conflict’s rapid escalation to 9/10 intensity within 48 h, and the Iran–Israel conflict’s sustained 12-day operations, provide concrete evidence of AI’s influence on nuclear relationship dynamics that extends beyond theoretical speculation. The study offers unprecedented empirical validation of previously theoretical constructs, including the compression of decision-making timelines, the role of human–AI interaction in crisis situations, and the differential impact of AI technologies across regional contexts.

It is also important to acknowledge that the current literature suffers from methodological limitations and inadequately addresses the complexity of AI-nuclear interactions. For example, several analyses of military AI’s impact on nuclear escalation risk acknowledge the need for new methodological approaches but do not provide concrete frameworks for empirical analysis (Chernavskikh & Palayer, 2025a, 2025b). Similarly, a comprehensive examination of AI in nuclear operations identifies multiple risk categories but relies primarily on expert analysis rather than empirical testing (Chesnut et al., 2023). More broadly, existing research methodologies focus heavily on technical assessments and expert opinions rather than a systematic analysis of actual conflict data. This research addresses these methodological gaps through innovative approaches that combine quantitative analysis of escalation patterns with qualitative assessment of human–AI interaction dynamics. The development of new metrics for measuring AI influence on nuclear stability represents a significant methodological contribution to the field. The comparative analysis framework enables the systematic examination of AI-nuclear dynamics across different regional contexts, addressing the literature’s focus on great power dynamics and its neglect of regional nuclear relationships. The integration of multiple analytical approaches, including escalation timeline analysis, cyber warfare quantification, and human–AI decision-making assessment, provides a more comprehensive understanding of AI’s impact on nuclear relationships than existing single-method studies.
Furthermore, the study introduces new theoretical frameworks for understanding the relationship between AI technological capabilities and nuclear deterrence dynamics, moving beyond existing binary assessments of AI as either stabilizing or destabilizing to demonstrate context-dependent effects that vary across different strategic environments and technological implementations.

Additionally, reflecting on the growing complexity of the nuclear-AI relationship, Holberg emphasized that today’s nuclear threat extends beyond traditional concerns to include the alarming integration of AI into the command-and-control systems of weapons of mass destruction (IPPNW Central Office, 2025). The statistics are sobering: more than 12,000 nuclear warheads exist globally, distributed among nine nuclear-weapon states, primarily the USA and Russia, with China, France, the UK, India, Pakistan, Israel, and North Korea completing the list (Herre et al., 2024). Holberg stressed that nuclear-weapon states are modernizing their arsenals with AI, moving further away from their obligations under Article VI of the Non-Proliferation Treaty, which requires parties to “pursue negotiations in good faith on effective measures relating to cessation of the nuclear arms race at an early date and to nuclear disarmament.” The current situation presents more risk than at any time during the Cold War, except perhaps during the Cuban Missile Crisis (IPPNW Central Office, 2025). The Doomsday Clock stands at an unprecedented 90 s to midnight, and nuclear bombs are now being enhanced with AI capabilities (Corbin, 2024).

Furthermore, on the intersection of AI and nuclear weapons, Geoffrey Hinton offers perhaps the most technically informed and alarming perspective. Hinton notes that modern AI systems are fundamentally mysterious entities: we understand how computers interact with data and the architecture we put into them, but the resulting systems depend heavily on their training data in ways we cannot fully predict or control. As Hinton argues, “We don’t have a good grasp on the properties of the AI systems. We have about as much understanding of how the system works as we do of people. We don’t really know how we work.” This lack of understanding extends to fundamental questions about AI consciousness, as there is ongoing debate among AI researchers about whether advanced multimodal chatbots actually have subjective experiences, and most assumptions that they do not have such experiences lack solid grounds. Hinton’s central warning revolves around the idea that “We don’t want to put alien beings who are something like us but not exactly like us in charge of devices that could kill us.” Hinton emphasizes that as AI systems become more capable, they will likely develop competitive and self-interested behaviors, especially as they compete for computational resources, knowing that whoever can acquire the most resources becomes the smartest. Hinton also notes that we are already approaching a stage where AI systems could undergo rapid evolution, making them much more competitive and self-interested, which are precisely the characteristics that make them fundamentally unsuitable for controlling nuclear weapons (IPPNW Central Office, 2025).

Limitations of the Research

Although the study adopts an innovative framework to understand the complexity of the cyber-nuclear nexus, it also acknowledges the challenges it encountered and the strategies it adopted to navigate them. The empirical analysis of the nuclear-AI nexus through the India–Pakistan and Iran–Israel 2025 conflicts, while providing unprecedented insights into real-world AI integration in nuclear relationships, faced several significant methodological and practical limitations. The most critical limitation was the classified nature of nuclear-related information, which severely restricted access to detailed technical specifications, command-and-control protocols, and actual AI system architectures deployed during these conflicts. This classification barrier necessitated reliance on publicly available sources, OSINT, and expert analyses rather than direct access to operational data from nuclear command authorities. To navigate this constraint, the study employed a triangulation methodology by cross-referencing multiple independent sources, including defense publications, think tank analyses, and academic research, to construct a comprehensive understanding of AI integration patterns. The temporal proximity of the 2025 conflicts presented another significant challenge, as limited academic literature and peer-reviewed research existed on these specific events, requiring extensive use of contemporary reports and real-time analysis, which may lack the theoretical depth of established scholarship. Additionally, the research faced data verification challenges, particularly regarding the accuracy of reported statistics on cyberattacks, escalation timelines, and AI system capabilities, as conflicting accounts emerged from different sources and stakeholders had incentives to either exaggerate or minimize AI’s role in the conflicts.
The complexity of distinguishing AI-enabled from conventional military operations posed methodological difficulties, as many reported capabilities involved hybrid human–AI systems where the specific contribution of AI was unclear. To address these limitations, the study implemented systematic source evaluation protocols, prioritizing peer-reviewed academic sources, established defense institutions, and verified intelligence analyses while explicitly acknowledging uncertainty margins in the findings and clearly distinguishing between confirmed data and analytical interpretations throughout our research framework.

Implications of the Research

The research on the nuclear-AI nexus, grounded in the 2025 India–Pakistan and Iran–Israel conflicts, reveals transformative implications for global security that extend well beyond traditional deterrence theory. A unique and underemphasized finding is the way AI-driven systems have fundamentally altered the tempo and complexity of nuclear decision-making, introducing “machine-speed” escalation cycles that outpace human diplomatic and military response capabilities. This shift not only compresses the window for crisis management but also increases the risk of inadvertent escalation, as automated systems can trigger rapid, cascading actions before human intervention is possible. Another distinctive implication is the erosion of the clear boundary between conventional and nuclear domains. AI-enabled precision targeting and ISR capabilities have made nuclear assets more transparent and potentially vulnerable, undermining the traditional assurance of second-strike survivability. The research highlights that AI’s integration into cyber operations has created a new layer of “cyber-nuclear entanglement,” where cyberattacks on command-and-control systems could inadvertently escalate to nuclear use, a risk not adequately addressed in existing arms control frameworks.

The findings also underscore the inadequacy of unilateral or bilateral governance approaches in the face of AI’s global proliferation. The need for multilateral, adaptive governance mechanisms that are capable of evolving alongside rapid technological advances is now more urgent than previously recognized. This includes not only new verification and transparency measures but also the institutionalization of machine-speed crisis hotlines and joint risk assessment protocols. Importantly, the research calls attention to the necessity of interdisciplinary collaboration, integrating technical, psychological, and strategic expertise to develop robust risk assessment tools and oversight standards for AI in nuclear contexts. In sum, the research signals that without immediate, coordinated international action, the accelerating integration of AI into nuclear systems could destabilize the global security architecture, making inadvertent escalation and loss of human control more likely. The window for effective governance is closing rapidly, demanding urgent, innovative responses from the international community.

The discussion revealed the profound technical challenges surrounding AI systems. It is certainly true that AI systems essentially consist of “billions and billions of numbers that if you multiply in the right way, it spits out a chatbot.” However, why this works remains “basically an open question in science.” There are still no good explanations for how these systems work internally, how they learn, what they learn, or how they solve problems, which is similar to our limited understanding of human brain function. This knowledge gap leads to numerous oddities and edge cases in AI systems, presenting massive risks. Making AI systems safe is described as “a massive, incredibly difficult unsolved technical problem.” If generations of our greatest mathematicians and computer scientists worked on building controllable and understandable AI systems for decades, we might eventually solve it, but we are currently very far from this goal. Despite this, there is a dangerous tendency in society to rush AI integration everywhere, with the promise that all problems will be solved if we “just put it everywhere” and trust mega-corporations with billions of dollars to integrate these systems into command chains. This represents a temptation that society must desperately resist, as it involves surrendering even more of our already eroded human autonomy to computer systems that are complex, bug-ridden, and poorly understood.

However, contrary perspectives within the literature on the AI-nuclear nexus emphasize that, if judiciously governed, AI can also serve as a stabilizing force. The utility of AI in reducing human error, enhancing early warning through advanced analytics, and supporting decision-makers with richer and more timely situational awareness offers real prospects for crisis management and risk reduction. The future of global security will, therefore, depend on managing the interplay between human judgment and algorithmic augmentation. This mandates concrete progress towards “meaningful human control” over all nuclear use scenarios, developing robust, globally agreed protocols for human–machine collaboration, and embedding AI ethics, safety, and explainability into command-and-control systems. Strategically, these findings require a revision of existing non-proliferation, arms control, and confidence-building frameworks. Traditional bilateral tools are insufficient in this regard; multilateral, adaptive ecosystems for AI-nuclear governance are urgently needed. These should include risk assessment and auditing of AI systems, joint exercises to understand escalation pathways, international codes of conduct for AI in military settings, and new verification mechanisms that leverage both technical and political tools. In addition, the research advocates for broader interdisciplinary engagement incorporating expertise from technologists, ethicists, military planners, and international relations scholars to anticipate emergent risks and design solutions before crises erupt. This is essential as the future of global strategic stability hinges not merely on technological superiority or deterrent stockpiles, but on the collective ability to shape, constrain, and manage the integration of AI into nuclear systems. The window to establish effective institutional and normative infrastructures is shrinking.
If the international community rises to this challenge, it may harness AI’s strengths to reinforce restraint and predictability; failure to do so risks an era of unpredictable and potentially uncontrollable escalation dynamics, with grave implications for humanity’s security.

Conclusion

The convergence of AI and nuclear capability is fast unraveling long-trusted assumptions of deterrence, opening an era in which machine-speed decision loops, precision ISR, and algorithmic autonomy can destabilize even the most mature strategic dyads. Evidence from the 2025 India–Pakistan and Iran–Israel crises shows that while nuclear arsenals still impose a ceiling on all-out war, AI lowers the floor for sub-threshold aggression, compresses political reaction time, and renders second-strike forces more transparent and thus more vulnerable. The take-home message is stark: traditional MAD remains necessary but is no longer sufficient for stability. The research underscores an urgent imperative to craft new governance mechanisms, ranging from binding norms on human control and AI verification protocols to shared crisis-management “guardrails” calibrated for machine tempos, that can reclaim strategic breathing space. Future inquiry must map the human–machine interface under stress, quantify algorithmic bias in launch-authority chains, and explore resilient architectures that decouple nuclear command from penetrable digital ecosystems. Practically, states should prioritize joint risk-reduction hotlines powered by explainable AI, fund red-team assessments of autonomous escalation pathways, and institutionalize transparency measures on AI-enabled targeting against nuclear assets. Failing to act risks a world where a software update, a spoofed alert, or an adversarial data attack triggers an irreversible chain of events before diplomacy can even begin. Conversely, meeting the challenge offers the prospect of a more predictable strategic landscape, one in which AI’s analytical strengths are harnessed to reinforce, rather than erode, nuclear restraint. The window for shaping this trajectory is narrow; seizing it will determine whether AI becomes the sentinel of strategic stability or the spark that makes nuclear brinkmanship unmanageable in the twenty-first century.

A distinctive contribution of this research is its bridging of the gap between theoretical models, many of which warned of AI-induced instability, and practical, conflict-based evidence. Through systematic triangulation of open-source data, conflict timelines, cyber metrics, and qualitative accounts, the study provides a more nuanced, empirically grounded understanding of how and where AI policies and deployments drive escalation dynamics. Nevertheless, the research faces substantial limitations. The classified nature of many nuclear-AI systems, inherent methodological uncertainties, and a rapidly evolving technological landscape call for ongoing research, greater transparency, and international data-sharing to strengthen and extend these findings. The emerging imperative in this context is multilateral, adaptive, and interdisciplinary governance, because the future of global stability will be shaped by the choices made regarding AI’s regulation and integration into military and nuclear domains. Governance must therefore be forward-looking, with states and institutions adopting “machine-speed” diplomatic and verification tools, red-teaming exercises to probe vulnerabilities, and international norms that prioritize meaningful human control and algorithmic transparency. Additionally, the risk of technological arms racing and strategic misperception, especially amid great-power competition, demands a move away from unilateral actions and toward collective, multilateral solutions. To this end, the inclusion of technologists, ethicists, defense planners, and international legal experts is essential for the adaptive governance demanded by AI’s rapid pace.

Practically, these conclusions point to a series of actionable recommendations for designing robust nuclear-AI policies for international security. States must institutionalize transparency around AI-enabled targeting and command functions, develop joint risk assessment and crisis management architectures, and invest in robust cybersecurity for all nuclear command systems. Regular multilateral military exercises focused on preventing, managing, and communicating through AI-augmented crises must become a core component of arms control and diplomatic interaction. Equally critical is the creation of verification regimes that can audit not just the presence of nuclear assets, but also the status and function of AI systems and code.

Lastly, the research highlights that the future of strategic stability is not simply a technical question but a deeply human one, and one that bears on the survival of humanity itself. The risks and opportunities posed by AI are ultimately a product of design choices, institutional behaviors, operational cultures, and global leadership. Therefore, as the window for shaping the AI-nuclear trajectory narrows, only determined, collaborative, and principled action can prevent a world where strategic decisions move too fast for human wisdom to intervene. The central message of this research is clear: the integration of AI into nuclear and military decision-making is neither inherently stabilizing nor inevitably destabilizing. Its ultimate impact, whether it augments restraint and predictability or destabilizes deterrence, will depend on governance choices made today by policymakers. In sum, this study underscores the urgent necessity for world leaders, technical experts, and civil society to act innovatively in this regard. The choices made in the coming years will not only determine the fragility or resilience of nuclear deterrence but could very well dictate the boundaries between cooperation and catastrophe in the AI age. The evidence examined here indicates that time is limited, and the moment to adapt, regulate, and ensure that human judgment retains primacy in nuclear matters is now.

As we stand at the emerging and perilous intersection of AI and nuclear governance, an entirely new paradigm beckons, one where the future of humanity hinges not just on avoiding catastrophe, but on reimagining security itself. The challenge is to move beyond reactive safeguards and cultivate an ecosystem that inherently resists failure by embedding ethical reasoning in algorithms, creating real-time “sense and adapt” verification protocols, and fostering resilient human–AI decision collectives across borders. Yet the transformative promise comes with a cautionary imperative: if we fail to proactively build trust, interoperability, and global stewardship into these technologies, we risk creating a system too complex to control and too fast to recall, especially if artificial superintelligence (ASI) becomes a reality in the future. The call is not merely to keep pace, but to ensure that wisdom, humility, and foresight always lead the race.

Footnotes

Acknowledgements

The author used ChatGPT and perplexity.ai for assessing the article.

Author Contribution

The author devised the idea and wrote the article. The author also reviewed it.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.