Abstract

Digital content creation is a growing area of labour in Canada. Alongside the development of this labour market, it has been reported there are rising issues of harassment, racism, and racial representation. Research germane to this area has provided rich qualitative accounts of how harassment and social oppression impact marginalized content creators. This study builds on this scholarly area to demonstrate quantitatively the ways in which harassment manifests in a Canadian setting. Using data from an online survey targeting Canadian content creators (

Introduction

Tarte Cosmetics, a cosmetic brand popular with influencers, has made multiple headlines regarding #TrippinWithTarte, its annual promotional campaign in which it invites dozens of influencers on an extravagant, all-expenses-paid trip in exchange for social media posts showcasing the experience and products gifted (Kircher, 2024). Hosted annually since 2015, this trip has been plagued by accusations of racism and a lack of diversity, with public outcry regarding the uneven racial distribution of invited creators, as well as reports of mistreatment from invited racialized creators (Mendez, 2022; Saha, 2023). The issues brought up by #TrippinWithTarte are not unique to the brand, as it has been reported widely that the influencer labour market is plagued by a lack of racial representation and racially motivated mistreatment and harassment (Graham, 2019; Talbot, 2019). Claims like these have become widespread across all genres of content creation, yet there is a lack of empirical research detailing how pervasive racial discrimination is in the content creator industry, both in terms of its makeup and in how it structures racialized content creators’ experiences.

Research on racism in digital media is situated within critical race theoretical frameworks that critique the perceived neutrality of platforms, which uncovers the ways in which racial domination is practiced tacitly through policies, affordances and lack of intervention (Benjamin, 2019; Hamilton, 2020; Matamoros-Fernández and Farkas, 2021). Among this literature, a notable stream has focused on the proliferation of racism and incivility in online environments (Eschmann et al., 2023; Ortiz, 2019), which has been expressed in the form of online harassment (Lawson, 2018). These forms of incivility have been theorized to be an extension of the perceived neutrality of platforms, as racist and/or aggressive expressions become ‘free speech’, which works to individualize racism and maintain the perception that platforms are bias-free environments that facilitate various forms of expression (Ortiz, 2021).

When looking specifically at incivility vis-à-vis the ‘platformized cultural industries’ – namely, the cultural production that is shaped and organized by digital platforms’ economic, infrastructural, and governmental logics (Nieborg and Poell, 2018) – a growing body of research has examined the rise of online harassment, particularly through phenomena such as ‘cancellations’ (Lee and Abidin, 2021; Lewis and Christin, 2022) and ‘anti-fandoms’ (Duffy et al., 2022; Rauchberg and Maddox, 2024). These forms of incivility have been studied as follower-driven responses to perceived moral transgressions and have been framed as increasingly normalized and widespread forms of disciplining creators, with research also identifying that these practices affect marginalized populations in higher proportions (Sobieraj, 2020; Uttarapong et al., 2021). The management of this harassment by content creators has also been subject to scholarly inquiry, with studies identifying how creators engage in invisibility and sociotechnical pushback practices as a means of taking control of harmful hypervisibility (Stegeman et al., 2024; Zuzunaga Zegarra, 2024).

Platform-mediated employment is a growing area of labour in Canada, with estimates reporting that in 2023, 927,000 Canadians had engaged in some form of platform-dependent gig work, which represents approximately 3.3% of the total Canadian population (Hardy, 2024). In this context, it becomes imperative to investigate the form and experience of those participating in content creation as a form of precarious, platform-dependent work. This is especially critical when considering the structural inequalities and harassment that shape this labour sector (Cottom, 2020; Hund, 2023).

This study examines racial discrimination in the Canadian content creator environment through analysis of an exploratory online survey targeting Canadian content creators working in Canada's most diverse cities (

Understanding workplace harassment

Workplace harassment, which also goes by the names workplace bullying or mobbing, is broadly defined as a spectrum of repeated and regular hostile relations at work directed towards subordinates, coworkers or superiors that results in negative outcomes for those being harassed (Einarsen et al., 2020a). Workplace harassment can include physical, psychological, and sexual harassment (Otterbach et al., 2021). Empirical research highlights that although workplace harassment can be conceptualized as an interpersonal issue, workplace settings play an important part in creating, structuring and reinforcing negative work relations (Einarsen et al., 2020b; Francioli et al., 2018; Rai and Agarwal, 2018). Some factors that impact working environments negatively are workplace stress and pressure, as well as workplace governance that tacitly supports hostile behaviours through lack of policy and procedural direction (Salin and Hoel, 2020). Therefore, we can understand workplace harassment as mediated through structural constraints that allow for the proliferation of hostile work relations.

Workplace harassment has been linked to a number of adverse consequences for victims, such as negative mental health outcomes, disengagement and lack of productivity, and substance use (Francioli et al., 2018; Rai and Agarwal, 2018). Other important factors in understanding the prevalence and scope of workplace harassment include belonging to marginalized groups, such as women, racialized peoples, and immigrants (Krieger et al., 2006). Incidents of workplace harassment have been found to be aimed at those in lower positions of power, who are more often women and racialized individuals (Otterbach et al., 2021; Salin, 2021). However, the literature on this topic remains inconclusive, as studies of gender- and race-based differences in workplace harassment report inconsistent findings (Krieger et al., 2006; Otterbach et al., 2021; Rosander et al., 2020). For instance, Krieger et al. (2006) find that, in a dataset of union members employed in a variety of blue-collar workplaces in Boston, US, White men reported the highest prevalence of workplace abuse. Similarly, Otterbach et al.'s (2021) cross-cultural analysis of survey data from 36 countries finds that women in countries with greater gender equality measures are most likely to perceive experiencing workplace harassment.

These results may be explained by understanding how workplace harassment is measured, as well as the cultural context that informs perceptions of what constitutes this term. Some of the issues with regard to measurement are that many studies rely on perceived subjective measures of harassment, as well as the role of social desirability bias in mediating self-disclosures of harassment (Nielsen et al., 2010). Looking at cultural context, the social location of respondents intercedes to shape understandings of harassment. Krieger et al. (2006) theorize that white working-class men are likely to have higher standards for treatment in the workplace and are therefore more likely to identify and name workplace harassment. Additionally, Otterbach et al. (2021) discuss how increasing gender equality in society at large allows for the naming and identification of workplace harassment, which leads to increased reports of such behaviour.

In a Canadian context, dominant narratives of multiculturalism and tolerance may contribute to perceptions of the incidence and impact of online harassment, specifically when looking at racialized forms of harassment (Fleras, 2014; Soltani, 2017). Canada's discourse of multiculturalism often works to deny or minimize racism, and frames racially based aggressions as individualized conflict. For racialized individuals, this has the effect of amplifying the harm and undermining their sense of safety, since experiences of racism are invalidated as such. Therefore, it remains important to (1) understand workplace harassment as socio-culturally contingent, and (2) situate its perception and consequences within the groups it is experienced in. While platform-mediated work may not follow the structure of a ‘regular’ workplace, the rise and prevalence of this type of labour requires research to expand its understanding of workplace harassment to include platformized work environments, and the specific and substantive ways in which this workplace harassment is mediated, amplified, and reproduced through platforms and digitally mediated interpersonal interactions.

Harassment in the platformized workplace

Online harassment has become a widespread problem experienced by individuals doing work in the platformized cultural industries. Online harassment can be understood as the ‘intentional behaviour aimed at harming another person or persons through computers, cell phones, and other electronic devices, and perceived as aversive by the victim’ (Vitak et al., 2017). In particular, research about online harassment has focused on its deployment towards marginalized identities, with much research focusing on how women, persons of colour, and persons identifying as LGBTQ+ are singled out as targets (Cote, 2017; Matamoros-Fernández, 2017; Sobieraj, 2020; Uttarapong et al., 2021).

To understand the forms of harassment experienced by content creators, it is first important to situate this form of labour within the broader structures that shape and maintain it. Content creation labour is platform-dependent, where platforms – which can be understood as sociopolitical regimes (Cottom, 2020) – act as intermediaries for creators, through hosting their content as well as cataloguing and promoting it (Poell et al., 2021; Srnicek, 2016). This develops a structure of dependency for creators, who must comply with platforms’ opaque governance and visibility mandates through trial and error, folk theorizations of algorithmic behaviour, and constant engagement (Arriagada and Ibáñez, 2020; Bishop, 2019; Savolainen, 2022). Given this dependency, creators are particularly vulnerable to the whims of platforms and their algorithmic behaviours, with research finding that this vulnerability is compounded for marginalized creators due to the effects of racism, sexism and intersecting forms of oppression (Glatt, 2024; Love, 2025). In addition, creator labour is not considered formal employment, which leaves workers without protections such as paid time off, retirement funds, and HR guidelines to protect them from harm (Duffy et al., 2023). These conditions result in precarity, as creators experience employment insecurity on multiple levels: through algorithmically mediated visibility, through the continuous demand to adapt to opaque and ever-changing platform demands, and through the absence of institutionalized labour protections.

This is the backdrop against which content creators carry out their work. However, it is also important to consider how the labour practices that comprise content creation labour may expose creators to further vulnerability. As a means of generating relational ties with followers, content creators mobilize and commercialize their authenticity, which Marwick (2015) describes as ‘strategic intimacy’. This entails continuous self-disclosures and engagement with followers, which works to support perceptions that there is unfettered access to creators’ private lives. These labour practices are geared towards maintaining follower engagement and enabling monetization and are a compulsory aspect of content creation labour. Research is now identifying how these practices carry a heavy burden for creators. Compulsory self-disclosure practices open creators up to critique and harassment, which usually intersect with misogyny and patriarchal beliefs about how women should act (Duffy et al., 2022). Further, ‘backlash’ to creators’ self-disclosures relies on identity-based prejudices that call on misogynist and racist scripts to reinforce and reify social hierarchies (Sobieraj, 2020). This has deeper impacts for marginalized creators, who face systemic exclusions that limit their visibility (e.g., tokenism, lower pay and commercial exclusion) and therefore face the imperative to perform relational labour in order to generate income (Glatt, 2024).

Forms of and responses to harassment in content creation cultures

When looking specifically at content creators, online harassment has been discussed in terms of ‘cancellations’, whereby networked campaigns seek to address abuses of power through boycotting and targeted online attacks (Lee and Abidin, 2021; Lewis and Christin, 2022). Cancellations, which draw from Black digital discursive practices and are broadly situated within what is known as ‘cancel culture’ (Clark, 2020), have been discussed as a tool for individuals in low positions of power to collectively enforce social norms through moral shaming (Marwick, 2021).

This form of networked power has become so widespread within the platformized cultural industries that it has generated dedicated ‘drama’ channels where creators analyze and discuss cancellations (Lewis and Christin, 2022). Studying drama content on YouTube, Lewis and Christin (2022) find that cancellations function as a ritualistic performance of morality and accountability, through which network values are expressed, contested and reaffirmed. Similarly, Lee and Abidin (2021) find in Korea's content creation culture that cancellations operate as networked power leveraged when audiences perceive creators as abusing their privilege.

Other forms of online harassment in content creation and media cultures have also been subject to scholarly attention. Duffy et al.'s (2022) study of influencer ‘antifandoms’ explores how online communities coalesce around shared dislike or hatred towards media personalities like content creators. These communities – which mostly target women – provide space for followers to critique creators who transgress moral boundaries. These boundaries, as Duffy et al. (2022) find, are marked by gendered expectations of femininity and womanhood, with critiques relying on misogynist and identity-based insults. Similarly, Sobieraj's (2020) analysis of online harassment levied at women journalists identifies that the abuse experienced is structural, as it is directed at those whose identity or ideas are perceived as threats to hegemony. This may explain why many report that the virulence of digital abuse is unevenly distributed towards those whose social locations have been historically devalued (Cote, 2017; Lawson, 2018).

These forms of harassment can be observed towards high-profile creators who have amassed significant visibility. However, harassment is not limited to highly visible creators: it occurs in many small ways to all creators regardless of their identity and visibility, and smaller cases of harassment are understudied in the literature (Gillespie et al., 2023). This suggests that the risk of online harassment is endemic to working in the platformized cultural industries (Duffy et al., 2023). Yet there is little information about how the widespread risk of online harassment affects content creators differentially.

Some research has identified how creators manage and respond to this negative attention. Stegeman et al.'s (2024) study finds that online sex workers engage in various strategies to limit their visibility. Although it seems counterintuitive, visibility management becomes imperative for sex creators facing higher levels of harassment. These strategies include not showing body parts like their face, limiting certain acts to paid subscribers, trying to appear more ‘vanilla’ on public platforms, and cultivating small communities. When looking at responses to harassment, Zuzunaga Zegarra's (2024) study into Canadian creators’ experiences of racism finds that creators engage in a variety of practices in response to harassment and racism, such as using comment filters to hide pejorative terms. Other strategies involve using visibility as a means of shaming or ‘calling out’ harassers, like responding to hateful comments with smiley faces, or reposting mean comments to mobilize supporters.

These examples showcase how creators actively manage harassment through either pre-emptive practices or reactive behaviours. Further, these examples show how these mitigating practices work around the logics and structure of platforms, in that creators modify their practices to leverage platform tools as well as circumvent algorithmic amplification/exposure. The role of platform governance in mitigating harassment is limited, and often places the responsibility for managing harassment on individual creators. It is therefore important to determine how inadequate platform governance may harm creators.

Racism in digital media

The study of racism in online settings is a growing area of research. There is a variety of research available on the topics of ‘online hate’, harassment, and negative experiences online; however, there is a documented ‘under-exploration’ of these social phenomena through the use of critical race theories (Benjamin, 2019; Hamilton, 2020; Matamoros-Fernández and Farkas, 2021; Nadim, 2023). Some argue this is a foundational part of internet studies, as the internet and cognate knowledge was created around notions of ‘colour-blindness’ and the potential of being bias-free. However, multiple decades after its use became widespread, there is more research being done that identifies how internet and communication technologies reproduce and magnify social inequality (Daniels, 2013). In this context, it is imperative to theorize how platformized environments are shaped by everyday practices that support racial domination, and how these practices and the environments in which they happen systematically obscure the articulation of race to present a neutral, bias-free façade.

While the structure of the internet has been historically understood in the context of a neutral, bias-free space, it has also been extensively discussed as a medium that hosts racism, aggression, and bullying, and where overtly racist rhetoric has become normalized (Eschmann et al., 2023; Ortiz, 2019). Some online spaces have become havens for racist vitriol, and it is theorized that this overtly aggressive behaviour is exacerbated due to perceptions that digital spaces are not subject to the same social norms that govern face-to-face interactions (Bouvier and Machin, 2021). Other studies find that those on the receiving end of the vitriol understand their experiences of online racism as not being ‘real’, and therefore do not feel that it is worth discussing or reporting (Ortiz, 2019). This framing works to make racist behaviours appear as individual problems, which decouples overt racism from structural and systemic racism, and reinforces perceptions that platforms are neutral and bias-free (Ortiz, 2021).

These harmful practices do not remain as isolated incidents – they become embedded within the very structure of platformed environments. Research on the affective dimensions of platforms highlights how digital spaces host and amplify racialized affects – such as exclusion and marginalization – which, through repetition, are normalized and sedimented into the cultural and sociotechnical fabric of these environments (Papacharissi, 2014; Ruckenstein, 2023). Through this process of sedimentation, practices of racial domination become naturalized within platform cultures, embedding themselves into everyday interactions in ways that are often obscured by a façade of neutrality. In this context, it is crucial to question how platform settings structure racialized individuals’ perceptions of racism and its impact, as well as how ‘neutral’ platform environments can amplify and sustain racism due to their tacit support and amplification of oppressive practices.

It is important to note that online spaces also offer environments for racialized people to challenge racist rhetoric and build community, although the impact of these discourses may be limited given the constraints of the mediums used (Bailey, 2021; Jackson et al., 2020). For instance, Childs (2022) finds that Black beauty creators use platform affordances to call out colorism and pressure brands towards more inclusive practices, with some success at creating more inclusive consumer choices. However, critique is mediated by platform dependency: Creators must balance activism with the economic risk of losing brand partnerships or algorithmic visibility. Managing and responding to racism online carries a burden for racialized individuals, leading to ‘racial battle fatigue’, or the accumulation of stress from racial microaggressions (Williams et al., 2019). In this way, critique is limited by the market logics of platforms, constraining how directly racism can be named and directly affecting the well-being of racialized individuals.

Research questions

Employment through digital platforms is a developing area of labour in Canada, with Statistics Canada reporting that in 2022, 1.5 million Canadians had completed some form of gig work during a 12-month period (Hardy, 2024). Because gig work comprises various platform-mediated labour activities (Dedema and Rosenbaum, 2024), content creation has not been studied independently, and little is therefore known about the demographic composition of this emerging labour sector. Furthermore, the literature identifies racism and harassment as evolving risks for racialized creators. Research on racism and online harassment vis-à-vis content creation has mostly focused on qualitative studies of cancellations and racist incidents (Duffy et al., 2022; Glatt, 2024; Lawson, 2018; Lee and Abidin, 2021; Lewis and Christin, 2022). In this context, there has been an underexploration of how these risks specifically affect the labour of racialized creators.

This exploratory study relies on quantitative analysis of experiences of online harassment among content creators in Canada, with a focus on whether racialized creators experience harassment differently from white peers. In this way, this study provides a substantive contribution to our knowledge of the experiences of this emerging labour sector. The following questions frame the analytic inquiry, and form the key components of this study:

Methods

Study design

This exploratory study uses data from an online survey targeting content creators doing work in Canada's most diverse cities. Specifically, the survey targeted Instagram creators working in Montreal, Toronto, and Vancouver. The sampling strategy for the survey followed a criteria-based approach using cluster sampling (Baltes and Ralph, 2022; Thompson, 1990). Using the software CrowdTangle, I collected all public posts indexed under the six most-used geographically based hashtags, as measured by number of posts indexed under each hashtag (e.g., #torontoblogger, with 1,548,893 posts). Public data was collected in 3-month ranges, with data collection taking place between September 2021 and August 2022. Accounts were identified for inclusion into the sampling frame using the following criteria: must have over 2500 followers, must be an account linked to a person (i.e., no business accounts), and must have an email associated with the account. All genres of content creation were included in the sample. Based on these parameters, a total of 650 accounts were identified, of which 113 (18% response rate) responded. These criteria were implemented to target the highest number of active content creators.
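As an illustration only, the three inclusion criteria described above can be written as a simple filter. The following Python sketch is not the procedure used in the study: the `Account` record, its field names, and the `sampling_frame` helper are hypothetical, and it assumes that follower counts, account type, and contact email have already been extracted for each account.

```python
from dataclasses import dataclass
from typing import List, Optional

MIN_FOLLOWERS = 2500  # "over 2500 followers" is read here as strictly greater than 2500


@dataclass
class Account:
    """Hypothetical record for one Instagram account found via a hashtag."""
    handle: str
    followers: int
    is_business: bool      # True for brand or business accounts
    email: Optional[str]   # None when no email is associated with the account


def is_eligible(account: Account) -> bool:
    """Apply the three inclusion criteria to a single account."""
    return (
        account.followers > MIN_FOLLOWERS
        and not account.is_business
        and bool(account.email)
    )


def sampling_frame(accounts: List[Account]) -> List[Account]:
    """Keep only the accounts that meet every criterion."""
    return [a for a in accounts if is_eligible(a)]
```

An account with exactly 2500 followers is excluded under this reading of the threshold; a different reading would only require changing the comparison.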

Instagram was chosen to recruit Canadian content creators given its prominence as a marketing hub (Dopson, 2023). While the survey focused on Instagram, the study is platform-agnostic: Creators increasingly work across multiple platforms, tailoring their content to the affordances and algorithmic cultures of each platform (Hund, 2023). In this context, this study examines how racialized creators experience harassment and racism across social media, and the specific ways in which different platforms reinforce social inequality.

In exploring experiences of racism among content creators, it remains important to reflect on how my social location shapes my scholarly inquiry. As a racialized, cisgender, heterosexual woman, I situate my analysis within the epistemological position that race is a contingent and contextual

Data collection and analysis

This study received Ethics approval. Potential participants were emailed a link to an online questionnaire covering demographics, account specifications, experiences of online harassment, and, for those identifying as part of a racial/ethnic group, experiences of racism as content creators. To ensure validity, questions were adapted from Statistics Canada's General Social Survey Cycle 30 (2016) and Census of Population (2020), and Harrell et al.'s (1997) Psychometric properties of the Racism and Life Experiences Scales. Questionnaires with more than 25% missing data were excluded, as demographic variables comprised the first quarter of the survey and were essential for profiling Canadian content creators. After exclusions, 103 valid responses remained. Sensitive questions received many ‘don’t know/no response’ answers, which were treated as missing; percentages shown reflect valid responses. To preserve confidentiality and analytic validity, categories with fewer than three cases were merged (e.g., age groups) or, if merging was not viable (e.g., non-binary gender), removed.
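The exclusion rule and the treatment of ‘don’t know/no response’ answers can be illustrated with a short sketch. The Python code below is a minimal example under stated assumptions rather than the study's actual processing: it assumes each questionnaire is stored as a dictionary of answers, that unanswered items are stored as `None`, and that the answer labels in `NON_RESPONSE` match how non-responses were coded. All names are hypothetical.

```python
from typing import Dict, List, Optional

EXCLUSION_THRESHOLD = 0.25  # questionnaires with MORE than 25% missing items are dropped
NON_RESPONSE = {"don't know", "no response"}  # assumed labels for non-response answers


def missing_share(questionnaire: Dict[str, Optional[str]]) -> float:
    """Share of items left unanswered (stored as None) in one questionnaire."""
    answers = list(questionnaire.values())
    return sum(a is None for a in answers) / len(answers)


def keep_questionnaire(questionnaire: Dict[str, Optional[str]]) -> bool:
    """Retain a questionnaire unless more than 25% of its items are missing."""
    return missing_share(questionnaire) <= EXCLUSION_THRESHOLD


def valid_percent(answers: List[Optional[str]], category: str) -> float:
    """Percentage of valid answers in `category`; non-responses count as missing."""
    valid = [a for a in answers if a is not None and a not in NON_RESPONSE]
    return 100 * sum(a == category for a in valid) / len(valid)
```

Because percentages are computed over valid answers only, the denominator can differ from question to question, which is why reported percentages need not share a common base.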

All variables were treated as categorical variables. To address low cell counts, five-point Likert scale questions were converted into variables with three ordinal categories. To answer the research questions, racial differences in experiences of online harassment were studied using chi-square tests of independence. Chi-square goodness-of-fit tests were used for variables that only had one independent group (i.e., univariate frequencies are presented as in Table 5, rather than bivariate contingency tables as in Table 1). Given the sample size, research questions, and lack of knowledge of the makeup of the Canadian content creator population, these non-parametric tests are appropriate to determine race-based differences in experiences of harassment among creators. The statistical software R (version 4.3.3) was used for all analyses. Significance was accepted at
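To make the analytic steps concrete, the sketch below collapses five-point Likert scores into three ordinal categories, cross-tabulates them by group, and computes a Pearson chi-square test of independence. It is an illustration in Python, not the study's code, which was run in R (where `chisq.test` performs the equivalent test). The mapping in `COLLAPSE` is an assumption, since the exact recoding is not specified here, and all function names are hypothetical. The p-value helper covers only the two-group by three-category case (2 degrees of freedom), for which the upper-tail probability has the closed form $e^{-\chi^2/2}$.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

# Assumed recoding of a 5-point agreement scale into three ordinal categories.
COLLAPSE = {1: "disagree", 2: "disagree", 3: "neutral", 4: "agree", 5: "agree"}
LEVELS = ["disagree", "neutral", "agree"]


def contingency_table(groups: Sequence[str], scores: Sequence[int]) -> Tuple[List[str], List[List[int]]]:
    """Cross-tabulate group labels (rows) against collapsed Likert levels (columns)."""
    counts = Counter((g, COLLAPSE[s]) for g, s in zip(groups, scores))
    row_labels = sorted(set(groups))
    table = [[counts[(g, level)] for level in LEVELS] for g in row_labels]
    return row_labels, table


def chi_square_independence(table: List[List[int]]) -> Tuple[float, int]:
    """Pearson chi-square statistic and degrees of freedom for an r x c table.

    Assumes every row and column total is non-zero.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, df


def p_value_df2(statistic: float) -> float:
    """Upper-tail p-value of the chi-square distribution, valid only for df = 2."""
    return math.exp(-statistic / 2)
```

For example, an invented table `[[10, 20, 30], [20, 20, 20]]` gives expected counts of 15, 20 and 25 in each row, a statistic of 16/3 and 2 degrees of freedom. For tables of other sizes, a statistics library such as SciPy (`scipy.stats.chi2_contingency`) would be the more practical route.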

Sociodemographic characteristics of study participants.

Results

Descriptives

Respondents were asked to answer a variety of questions regarding their socio-economic background (see Table 1). The creators self-reported to be 49.5% white, and 50.5% racialized. In the sample, 87.1% identified as female, while 12.9% were male. Under half of the sample was aged 20–29 (45.5%), while a third were aged 30–39 (33.3%). A fifth of the sample was aged 40 and over (21.2%). In terms of education, a quarter (25.2%) of respondents had high-school and/or some post-secondary, while two-thirds of the sample had a Bachelor's degree (63.1%), and 11.7% reported they had a graduate degree. Compared to census data, this sample of content creators is highly educated, as in Canada, only 26.7% of the population has a bachelor's degree or higher (Statistics Canada, 2022).

Under a third (31.4%) of respondents reported they earned $49,000 or less per year, while over a third (39.3%) reported they earned between $50,000 and $99,999 per year. Just under a third of the sample (29.2%) reported they earned over $100,000 per year. By comparison, only a fifth (21.2%) of Canadian workers earn over $100,000 (Statista, 2024), which makes this a high-earner sample. When comparing these demographic categorizations across racial background, no significant differences were found.

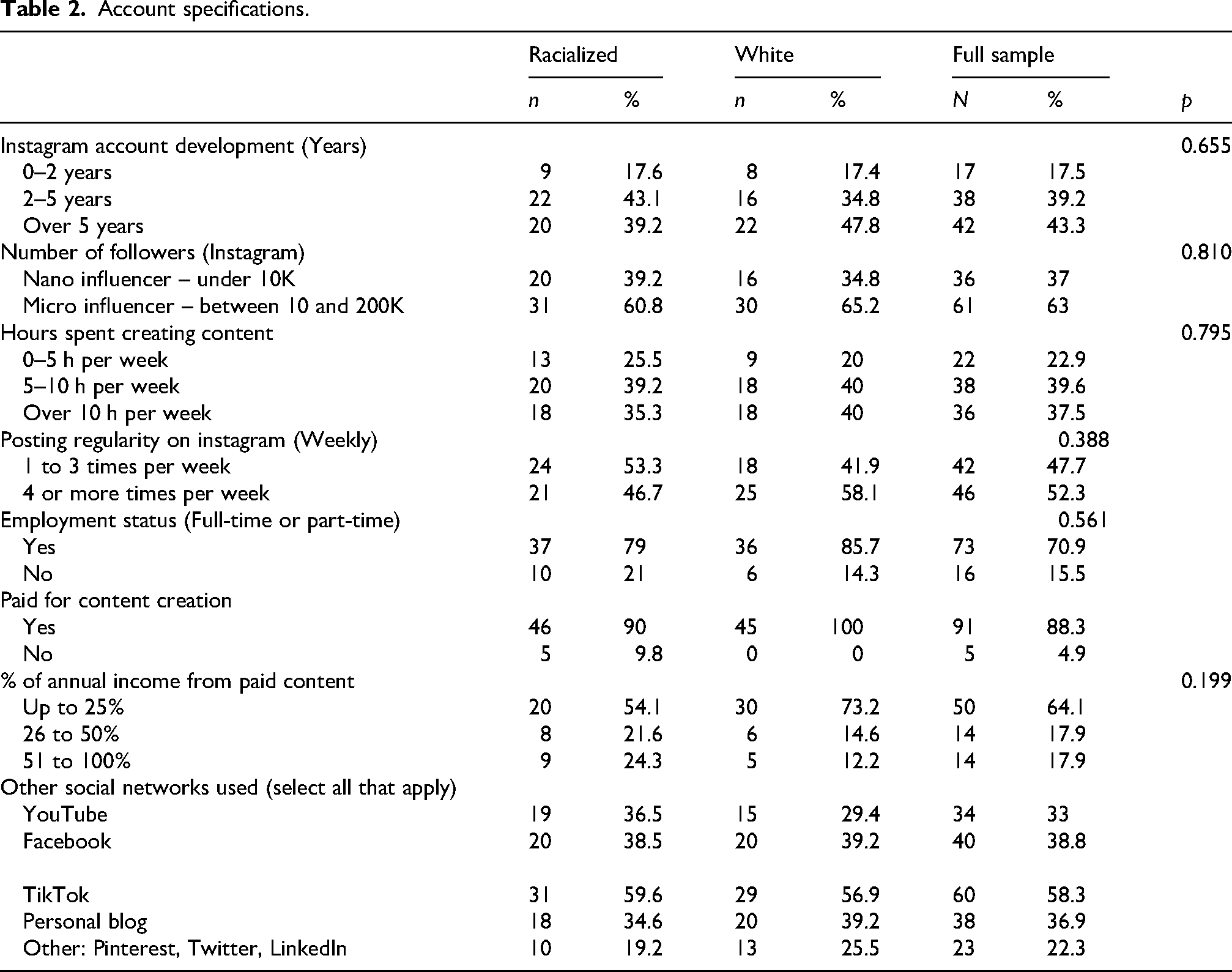

Respondents were asked a variety of questions regarding their content creator work (see Table 2). When looking at the number of followers respondents had on Instagram, under two-thirds of the sample (63%) had between 10,000 and 200,000 followers, while 37% had under 10,000 followers. Almost all of the sample used multiple social media networks to do their work (95.1%); the most popular of these networks was TikTok, with 58.3% of respondents using it. Facebook (38.8%), personal blogs (36.9%), and YouTube (33%) were also popular. Over two-thirds of the sample (70.9%) reported they had a full-time or part-time job outside of content creation, while 15.5% reported not having a job. The majority of the sample (88.3%) had been paid for content creation. When looking at the proportion of their annual income provided by content creation, almost two-thirds of the sample (64.1%) reported content creation made up 25% or less of their annual income, while 17.9% reported it was 26–50%, and 17.9% reported it was 51–100%. These results did not differ significantly across racial background.

Account specifications.

Online harassment and racism

The first research question asked

These results showcase how harassment has become a widespread risk associated with working in the platformized cultural industries in Canada, affecting content creators of different backgrounds. These results are reflective of other studies looking at how cancellations have become a commonplace networked tool used to balance power dynamics between visible content creators and the invisible groups that follow them (Christin and Lewis, 2021; Lee and Abidin, 2021; Lewis and Christin, 2022). While there have been theorizations that these forms of networked power may affect creators belonging to minority groups in higher proportions (Duffy and Meisner, 2022; Marwick, 2021; Sobieraj, 2020), these findings demonstrate the risk of harassment exists for

Incidence of online harassment.

Respondents were asked to indicate their agreement with questions regarding their perceptions of the consequences of online harassment, and the changes they had implemented to their work practices as a means of mitigating the effects of this risk. Nine in ten respondents (91.2%) agreed online harassment was harmful to content creators, with similar results when asked if they agreed online harassment was a serious issue facing their line of work (86.8%). However, over half of the sample (51.7%) agreed online harassment is part of being a content creator. These somewhat paradoxical results suggest that while harassment is widespread, its incidence and/or severity is relatively low and therefore not a quotidian concern for some content creators. These results were not significant across racial background.

When looking at how the sample responded to online harassment, respondents were asked whether they agreed social network platforms have effective policies to protect them from harassment. Under two-thirds of the sample (60.7%) disagreed with this statement. Respondents were asked whether they changed their behaviour online to avoid harassment, with over half (53.8%) agreeing that they do, while 58.2% reported they tried not to talk about controversial topics. These results were not significant across race and the distributions across racial groups were similar.

However, when respondents were asked if they agreed they felt safe sharing information about themselves online, results across racial background varied. Only 17.2% of the racialized content creators sampled agreed they felt safe sharing information, compared to 44.7% of White creators. The chi-square test of independence performed to assess this difference was statistically significant (χ²=11.352,

These results can be understood in several ways. First, racialized and white creators could have differing understandings of what constitutes harassment with regard to its severity and incidence, which would explain the lack of significance between groups. In Canada, dominant narratives of multiculturalism and tolerance often minimize racism, reframing racial harassment as isolated conflict (Fleras, 2014; Soltani, 2017). Such framing can contribute to underreporting among racialized creators, while simultaneously amplifying harm by invalidating their experiences. This theorization is supported by research on workplace harassment which finds inconclusive data on whether marginalized populations experience a higher incidence of workplace harassment due to varying socio-cultural understandings of what constitutes this harm (Krieger et al., 2006; Otterbach et al., 2021; Rosander et al., 2020).

Second, we can analyze these results through a social power lens, in which individuals with less social power may feel more intimidated or stressed by hostile behaviours, producing feelings of a lack of safety and belonging (Salin, 2021). This would explain why racialized creators are more likely to feel unsafe sharing information online. Although online harassment in this context is not explicitly tied to overt racism, its consequences reflect systemic forms of racism embedded in internet structures. These tacit forms of technological discrimination produce differential outcomes for racialized creators, often resulting in heightened feelings of vulnerability (Benjamin, 2019; Daniels, 2013; Noble, 2018).

Effects of online harassment.


Experiences of racism.


The second research question asked

In addition, 52.8% of racialized respondents reported they often or always had observed limited participation in opportunities or access to resources for racialized peoples (χ²= 6.5,

When asked if they had been called insults or received aggressive comments related to their race, 76.9% of racialized respondents responded that this had never or rarely happened to them (χ²= 33.385,

Limitations

Limitations of this study include a small sample size, which limits the power of the statistical analyses. In addition, the use of a non-probability cluster sampling strategy limits the representativeness of the sample, given that the clusters selected may not mirror the population. Selection bias may also limit the representativeness of the sample, as individuals with particular experiences or opinions might have been more likely to participate.

Furthermore, the statistical parameters of the population of content creators in Canada remain unknown, which limits researchers’ ability to determine if this sample is representative. Recent Statistics Canada Labour Force Surveys have gathered information on the number of Canadians engaging in digital platform employment; however, these surveys aggregate a broad range of platform-based workers, including those engaged in delivery, task-based services, and social media (Hardy, 2024). Therefore, there is currently no disaggregated data capturing the size, demographics, or characteristics of the Canadian content creator population. This absence of baseline data poses a significant limitation for sampling and generalizability in studies of this distinct segment of the Canadian digital economy. In light of this gap, the present study offers an exploratory contribution by providing initial insights into the composition of, and social challenges faced by, content creators in Canada.

Discussion and conclusion

The analysis demonstrated that online harassment occurs widely, with the majority of respondents sampled having experienced some form of it. The predominant form of harassment occurred through direct communication, such as emails, comments, instant messages or postings. However, a large number of respondents also reported experiencing public campaigns containing threatening or aggressive comments. In this context, it becomes imperative to conceptualize online harassment as an established workplace hazard that creators must manage regularly.

An important dimension of online harassment is that it may not affect all populations equally. Findings showed that although both racial groups strongly believed online harassment was harmful to content creators, only 17.2% of racialized respondents felt safe sharing information online, compared to 44.7% of white respondents (χ²=11.352,

Situating these findings within the content creator labour economy, it is critical to theorize the compounding and material effects of these disparities for racialized creators. Sharing personal information with followers is a key mode of affective and immaterial labour through which content creators build personal brands, cultivate community, and monetize their platforms (Duguay, 2019; Glatt, 2024; Raun, 2018). For racialized creators, the fear or inability to safely engage in this form of labour due to harassment not only incurs emotional harm but could result in material consequences – including reduced visibility, lower engagement, fewer sponsorships, and ultimately, diminished income opportunities. In this way, experiences of online harassment for racialized creators are not merely symbolic or emotional; these experiences directly intersect with and undermine their material opportunities.

This highlights how creators not only encounter racialized harassment but must also manage the emotional and strategic labour of responding to it. Existing research shows that creators facing high levels of harassment often adopt practices of invisibility or selectively engage with platform tools to mitigate exposure (Stegeman et al., 2024; Zuzunaga Zegarra, 2024). These remain largely individualized coping strategies, underscoring the need to interrogate the structural conditions that necessitate such responses in the first place. Other studies find that racialized digital interactions produce stress and other adverse outcomes that racialized people attempt to mitigate by seeking online safe spaces that affirm their identities (Williams et al., 2019).

Many qualitative studies have theorized the disproportionate and complex ways in which online harassment affects racialized and marginalized creators (Duffy et al., 2023; Zuzunaga Zegarra, 2024). This study contributes a valuable quantitative dimension to these discussions, offering findings that nuance existing arguments. That is, while harassment is a shared experience among all creators, a key analytic focus should be on its

With respect to racism specifically, the findings demonstrate that for racialized content creators, online racism is not closely associated with overt and/or interpersonal racism. In this context, structural forms of racism appear to be the main way in which this sample experienced racial discrimination. Structural racism manifests in how racialized respondents understood their access to opportunities, as well as through knowledge sharing that provided evidence that racial discrimination exists. While these two measures address perceptions of racial inequity, it is important to understand that these perceptions, which are communally sourced, may offer racialized creators the knowledge to understand, name and contest the invisible barriers they face while doing their work (Bailey, 2021; Jackson et al., 2020).

Furthermore, it is essential to examine how these communal perceptions of racism contribute to the reproduction of systemic oppression within platformed spaces. These perceptions do not emerge in isolation – they circulate through digital environments, shaping a tacit understanding of who belongs and who does not. As Ruckenstein (2023) suggests, networked environments are not neutral informational structures; they are saturated with affect. In this context, such spaces carry and reproduce racialized emotions – feelings of exclusion, surveillance, and precarity – that reinforce broader structures of racial domination. These affective dynamics manifest through recurring narratives about who is afforded visibility and opportunity, and through collective stories reaffirming the presence of racism within the content creator labour environment.

Here, it is imperative that platforms take a proactive role in governing the online environments they host to create spaces that allow everyone to work without fear of harassment. This goes beyond content moderation; it requires a rethinking of platform governance itself. Central to this is challenging the long-standing framing of platforms as neutral environments. The assumption of platform neutrality bolsters the dominant practices that support identity-based harassment, racial domination, and oppression. In doing so, it obscures the ways these harms are reproduced and stabilized through technical systems (Benjamin, 2019; Hamilton, 2020; Ortiz, 2021). Addressing online harassment structurally requires that we interrogate how neutrality functions ideologically to maintain and reify systemic oppression.

In this study, based on a survey of Canadian content creators, I illustrate the complex labour environment in which content creators do their work, and the ways in which racialized creators are differentially affected by online harassment and racism. This study makes a substantive contribution to our knowledge of online harassment by demonstrating that it occurs widely in the content creator sphere, regardless of racial identity. However, the effects of online harassment were found to be experienced differently according to racial group. In this context, it becomes imperative to reconceptualize our understanding of online harassment vis-à-vis platformized labour and shift it from a focus on incidence to a focus on consequences: that is, how harassment affects certain populations differentially, and how through these effects it reproduces social inequality. Shifting our analytical focus to these factors would allow us to investigate how creators address and manage this endemic risk, as well as narrow the research locus to centre on marginalized creators as a way of understanding how online harassment may hinder democratic, inclusive engagement with the platformized cultural industries.

Footnotes

Ethical approval

This study was approved by the Queen's University General Human Research Ethics Board (approval: 6034435) on September 07, 2021.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada (SSHRC; grant number 752-2021-2211).

Declaration of conflicting interest

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Informed consent

Respondents gave written consent before starting the survey.