Abstract

Using Google as a case study, this paper analyzes the online advertising sector's search industry. Arguing that search engines are not engines, it examines Google Search as a supply chain composed of five nodes: Crawler, Indexer, Ranker, Publisher, and Data Center. The final product of this chain is the search service presented to Google consumers, complete with ads and ancillary information. Empirically showing that production and exchange are one economic process in most of these supply chain nodes, we argue that treating them separately is neither accurate for description nor feasible for theory. In reality, Google Search produces and exchanges simultaneously. We observe that the social sciences lack an approach to analyze such unitary production/exchange relations in computational industries. Addressing this blind spot, the paper offers an alternative to describe how the search industry works by bridging supply chain literature in management and the economization approach in sociology. We introduce the digital–industrial economization approach to analyze Google Search's supply chain, in which a variety of nodes concurrently produce, exchange, barter, maintain, and/or store search products and their inputs. We present the term prodexchange to characterize these novel integrated production/exchange combinations.

Introduction

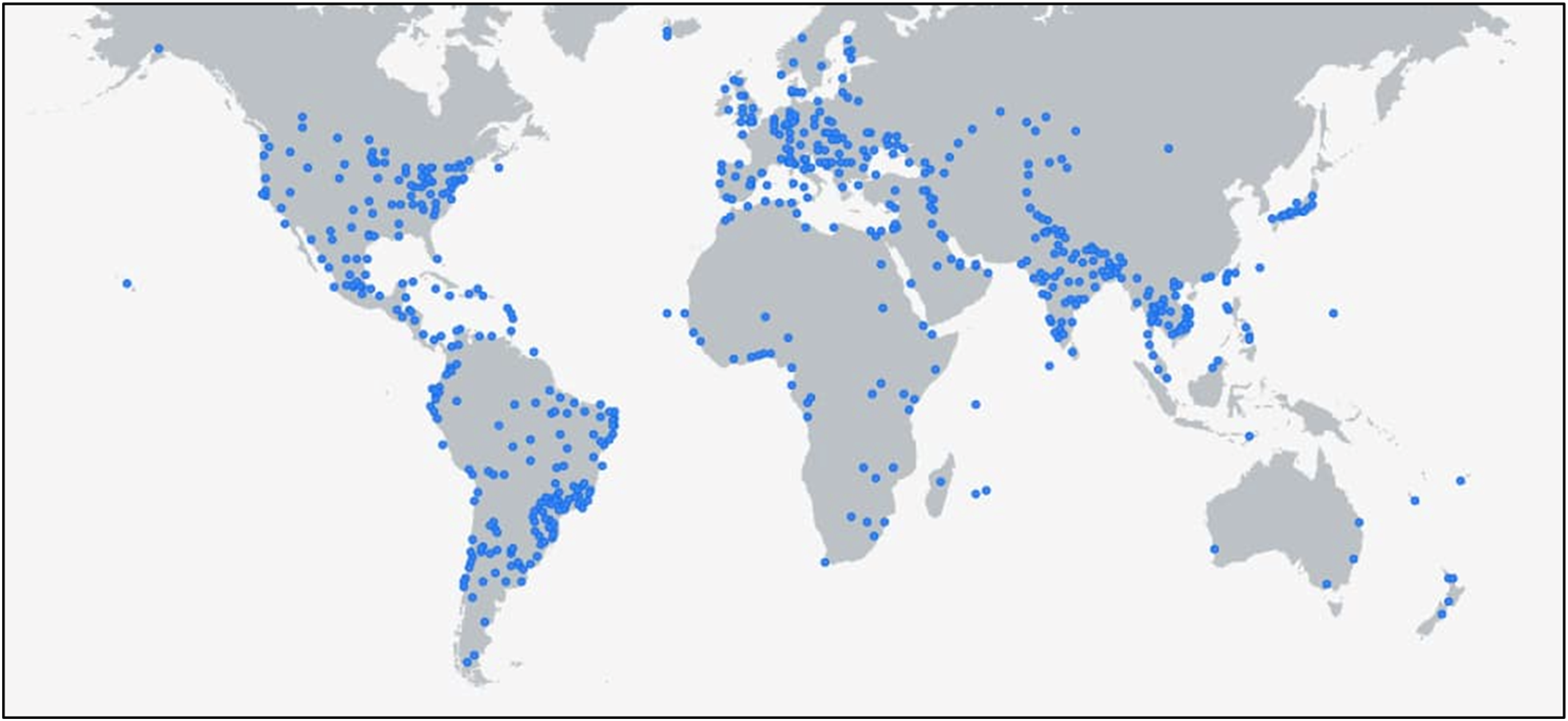

A search engine is one of the largest economic infrastructures ever built. If considered a single facility, Google would be larger than the city of New Orleans, employing 183,323 full-time workers (and roughly as many subcontracted workers) as of the end of 2024 (Alphabet Inc., 2025). 1 This computational–industrial megacorporation delivers a variety of products, with Search, and its massive consumer base, as its main profit stream. As the largest and most profitable advertising corporation in history as of 2025, Alphabet reported $350.0 billion in revenue, a 14% increase from the previous year, and a net income of $100.1 billion (Alphabet Inc., 2025). Search advertising accounted for the largest share of total revenue at 57.7%, followed by video ads (11.3%) and display ads (10.8%). Google Cloud contributed 10%, with the remaining revenue coming from Google Play, hardware, and miscellaneous income (Alphabet Inc., 2025).

Such success is due to Google Search's immense popularity across the globe. Approximately 90% of all searches go through Google, 2 making googling one of the most pervasive and common human experiences in world history, a fact underlined even by its competitors. Calling the internet “the Google Web,” Microsoft's Chief Executive Officer Satya Nadella once said, “You get up in the morning, you brush your teeth, and you search on Google.” 3

Despite its global relevance, the material workings of the search industry have received only limited attention in the social sciences, with the exception of an expanding body of critical literature that focuses on its consequences. Yet many of these critical studies pay scant attention to the search process itself, at times assuming that we “know” what search is. This paper observes that how search engines operate is neither obvious nor certain, and that social science can learn much from taking a closer look at their internal workings by opening their “hoods.” Moreover, we show that an underdeveloped examination of search hampers opportunities for regulation in the online ad sector, an economic universe that by and large funds today's internet (MacKenzie and Caliskan, 2025).

To start, the use of the term “engine” is misleading. In reality, a search engine is neither a machine nor a device operated by a user to browse the internet. It is an industry that comprises competing search firms such as Google, Microsoft (Bing), Yandex, Yahoo, Baidu, and DuckDuckGo operating on a global scale. These firms each produce a private index that claims (successfully) to represent the entire World Wide Web. Their search products invite consumers to type queries which prompt those firms to search for answers in their own private indexes and then display a limited number of results presented with ads. Google dominates this sector with near-monopolistic power, a claim currently under legal scrutiny in the US, which Google denies (DoJ, 2023).

So, how does the search industry really work? To answer this, we draw on interviews, participant observation, and training courses that are part of a larger corpus of data from our research on online advertising. That research includes 121 interviews with 97 participants in online advertising and attendance at six in-person and 12 online sector conferences, including analyzing all Google Marketing Live events. We completed Google's asynchronous marketing and ad tutorial programs and attended 35 other advertising industry meetings (all but one online), and one of us was self-trained as a Google evaluator, passing an online certification test. We also draw on McGowan's experience as an advertising sector professional, working in online ad agencies from 2014 to the time of writing. Additionally, we completed two online training courses on search engine optimization (SEO) and managed two SEO campaigns for a total of nine months with a combined budget of 7500 USD.

We also conducted four design experiments to dismantle and re-design a search engine: first with strategy and design graduate students, second with professional economic sociologists, third with anthropologists, and finally with management scholars. 4 Using design as research helped us better analyze the general workings and components of a search engine and the design principles that inform its making and maintenance, helping us clearly observe it as a supply chain. We argue that social scientists have much to learn from intangible design methods that can help researchers and design professionals take apart a working complex system such as a supply chain to study it. Such empirical examinations of material components of economic platform formations such as search engines work by reverse-engineering their components and processes with other designers or scientists.

Search engine firms construct and manage a complex computational–industrial supply chain that simultaneously produces and exchanges specific inputs in order to manufacture a search service product. The operations research subdiscipline of management examines such complex processes through supply chain analysis, defining a supply chain as a “network of materials, information, and services processing links with the characteristics of supply, transformation, and demand” (Chen and Paulraj, 2004: 119). In this paper, we analyze the search industry's supply chain by describing its nodes, namely Crawler, Indexer, Ranker, Search Results Publisher, and Data Center. Revising Mentzer et al.'s (2001) approach to nodes as places where the production or movement of goods and services takes place, we define a node in a digital–industrial supply chain as the largest autonomous single site of processing, producing, storing, or exchanging data or other material inputs.

Drawing on computer scientist Niklaus Wirth's (1975) formulation, “algorithms + data structures = programs” and its elaboration by anthropologist and computer scientist Paul Dourish (2016), we argue that it is more accurate to see search supply chain nodes as sites where specific programs are at work. Google's algorithms alone cannot produce an index, nor can they crawl or rank data. They rely on receiving data that Google does not produce but obtains through bartering with consumers; it then processes the data using its own algorithms within a specific program. Crawling, ranking, indexing, and publishing function as programs, not merely as algorithms. The distinction is significant in computer science. An algorithm consists of a set of rules designed to perform a specific task, but unlike a program, algorithms cannot execute tasks on their own. They are implemented within a program—a concrete, executable process running on a machine (or, in the case of search, ensembles of tens of thousands of interacting machines)—by consuming existing data to generate new data. Using a common analogy from computer science, algorithms are like recipes, while programs represent the act of cooking. To serve the dish, you need the recipe (algorithm), the ingredients (data), and a fully powered kitchen (Data Center).

Thus, as the nodes of the search supply chain produce their output, they simultaneously enter into a barter exchange relation with actors or other networks owned by actors (such as websites), a fact not addressed in the supply chain literature. Without such exchange relations, search supply chains do not work. To address this gap, we propose a theoretical strategy by bridging supply chain literature with the stacked economization approach to study platforms (Caliskan et al., 2024) to make visible and analyze the synchronicity of production and exchange processes. We refer to this perspective as the digital–industrial economization approach and propose the term prodexchange to examine how exchange practices themselves function as input creators within production processes on a digital–industrial platform that simultaneously or separately stacks production and modes of economization such as barter, market, or gift. Caliskan et al. (2024) define economization in platform economic contexts as follows:

Economization is a distinct process that involves designing, defining, and linking the foundational elements of a socio-technical agencement (e.g., devices, actors, networks, and representations) with an organized intention that is seen as economic by scientists or other actors … We [define] platforms as 1) stacked economization processes that combine or independently operate various economization modes, including gift-giving, bartering, and marketization, 2) equipping actors with the means to imagine and discover their needs in an exploratorium, 3) where a digital–material interaction design entails layers of power relations. (p. 3)

The paper is organized as follows: First, we present a literature review that examines how social sciences approach platforms in general, and search in particular. Next, we provide a comparative analysis of supply chain literature, focusing on the search supply chain as a convergence of upstream, midstream, and downstream economic processes. Finally, we apply a digital–industrial economization approach, integrating supply chain frameworks with the stacked economization approach, to analyze the intertwined production and exchange relations at each node of the search supply chain.

The paper examines Google's search engine supply chain as a coming together of five nodes: the Crawler, Indexer, Ranker, Search Results Publisher, and Data Centers. The Crawler gathers data from the digital world, feeding it to the Indexer, which organizes it into a private catalog shared across global Data Centers. The Ranker customizes and prioritizes search results for users based on relevance and quality. The Search Results Publisher presents what we call “Searchpaper” pages that include ads and curated content, resembling a newspaper. Finally, Data Centers act as the infrastructural backbone, hosting computational services through an extensive network, making Google a leader in Data Center operations.

Next, drawing on an interpretation of the research findings, we articulate the digital–industrial economization approach. This perspective uncovers how various modes of economization, such as gift, market, and barter, are integrated into and interwoven with production processes themselves. Through this lens, we demonstrate that the synchronicity and/or simultaneity of production and exchange reflect a new form of economic activity, which we term “prodexchange.” Also drawing on empirical research on labor platforms that documents stacked exploitation (McKenzie, 2024), this approach captures the simultaneity of production and exchange processes, challenging a traditional distinction between the two on which Marxist, institutionalist, and neoclassical approaches curiously agree.

Finally, our analysis shows that it is misleading to call actors who interact with Google mere “users.” In reality, these users are Google consumers who barter their personal or website data for other economic gains. These series of barter exchanges constitute a concrete and consequential economic relationship that maintains the very supply chain of Google Search. 5 It is misleading to frame this unequal barter relationship as merely voluntary or gift-like. (Viewing gift-giving as a freely chosen and in that sense voluntary act would be a misunderstanding of the anthropological literature on gifts, but that interpretation tends to be built into the everyday understanding of “gift.”) When consumers refuse to exchange their personal data, they effectively become digitally invisible. Similar to how most students find it difficult—if not impossible—to withhold information when asked by a professor, the relationship between Google and its users is marked by a significant power imbalance. In such unequal dynamics, the exchange of data is not voluntary; it is automatic and almost unavoidable.

The paper concludes with a discussion of future research avenues, particularly how the concept of prodexchange and the digital–industrial economization approach could be applied to other computational industries, how the final marketized exchange processes in Google's ad exchange, AdX, might be studied as a corollary of this analysis, and finally, the regulatory and taxation implications of our supply chain analysis of Google Search.

Literature review

There are two dominant approaches to understanding economic platforms, neither of which fully explains how they function. 6 The first is articulated by Tirole and Rochet and locates a platform as a market, albeit a somewhat more developed and more complex one: if markets cross-subsidize between users who transact, then they are platforms (Rochet and Tirole, 2003). Such platform anachronism (Caliskan et al., 2024) lends itself to prompt analysis and regulation, yet without explaining how platforms work on the ground. The second approach is platform reductionist (Caliskan et al., 2024), analyzing platforms only through their consequences and registering chiefly the negative ones (Zuboff, 2015), again without helping us understand how they work on the ground. It seems as though the field of social science as a whole leaves aside a consequential question: how does a platform such as Google Search work?

There are exceptionally powerful accounts of platforms, and of Google in particular, that do not reproduce reductionist tendencies (Auletta, 2009; Hillis et al., 2012; Ippolita, 2013; Levy, 2011; Luchetta, 2014; Schmidt and Rosenberg, 2014; Spink and Zimmer, 2008; Vaidhyanathan, 2011). First, platforms have social networking capabilities that require users to make profiles and connect with others to access their systems, generating personal and interactive data (Ellison and Boyd, 2013). For example, Google users have more agency and a better experience with the platform when they are logged in (Rogers, 2023). Platforms are also distinctly programmable infrastructures (Helmond, 2015) that can connect technically with other platforms to enable the data flows that power them (Van Dijck et al., 2018). They are made durable through a variety of sociotechnical relationships (McIntyre et al., 2021). Programmability enables them to consolidate as they expand, “platformizing” the web (Plantin et al., 2018) and, in doing so, creating a lucrative “audience economy” for advertisers (Van der Vlist and Helmond, 2021). Google is among the architects of these practices, and its dominance is not accidental; its infrastructure was scaffolded via corporate lock-ins and made durable via its shaping of the web through “backlinks, trusted users, and advertising” (Ridgway, 2023). Our work recognizes and builds on these material political qualities of user-profiles and platforms’ infrastructural power.

The ability to wall off their systems from the open web, identify users via profiles, and attribute data to those profiles gives platforms the capacity for a variety of economic processes. There is literature that argues these systems have ushered in a new age of “platform capitalism” (Srnicek, 2017). This draws attention to how platforms generate value both from the labor of their users and from facilitating new forms of economization. This is evidenced across contexts, such as gig work labor environments (Gallagher et al., 2023), sites of worker resistance against platformization (Chen, 2018), “informational capitalism” practices of knowledge workers (Fuchs, 2010), labor's reaction to dishonest algorithmic governance and scams (Grohmann et al., 2022), platform-mediated labor and its relation to urban contexts (Pollio, 2021), the commodification of attention (Birch and Cochrane, 2022), and solidarity economies (Soriano and Cabañes, 2020) in platform contexts. These arrangements externalize responsibility while centralizing power over consumers (Vallas and Schor, 2020), uniquely positioning platforms to generate value outside of regulations.

There is also an extensive body of work that successfully explores algorithmic bias, inequalities, racial profiling, and social disadvantages perpetuated by platforms that are relevant today (Allhutter et al., 2020; Benjamin, 2019; Eubanks, 2018; Van Doorn, 2017). In one example, Amrute (2016) examines the experiences of Indian Information Technology workers in Berlin to reveal how race and class are intertwined in the global tech industry. She finds that these workers, though privileged as skilled migrants, face racialization and class stratification that impact their professional and social lives.

Specific to search supply chains, Google results have been found to discriminate against people of color, despite what appear to be often intensive efforts by Google to suppress discriminatory results, at least in English (Rogers, 2023). Noble attributes this to the hidden infrastructure of partners, connections, and economic mechanisms (2019). Search bias can also perpetuate right-wing extremism, argue Norocel and Lewandowski (2023), because of its algorithmic reliance on the popularity of sources as a metric for relevancy. Google's Autocomplete feature raises ethical issues of framing beliefs, individualizing suggestions, and reinforcing biases, which call its political neutrality and user agency into question (Graham, 2023) and privilege Google's “hierarchy of concerns” over users (Rogers, 2023).

Platforms are often interpreted through metaphors, and Google LLC is no exception. Rogers’ recent retrospective provides a comprehensive review of how artists and cultural commentators have described Google Search over the past 20 years (2018). Technical studies showed that Google indexed a small fraction of the web (Lawrence and Giles, 2000), and this selectivity positions Google as a powerful algorithmic “editor” of the internet (Pariser, 2011). Consequently, metaphors such as the “deep web,” “dark web,” and “orphaned websites” emerged, referring to sites that are either unindexable or left unindexed by the search industry (Rogers, 2018). Based on this analysis, Rogers argues that Google is best understood as “mass media” (2018). We concur but extend this notion further, proposing that the supply chain of Google's mass media publishes the Google Searchpaper, as we discuss below in detail. Google's Search extends beyond disseminating information and content; it not only utilizes the infrastructure it has created but continuously develops new frameworks as well (Rieder, 2022). Mager et al. (2023) take this a step further by reframing Google as an active “answer machine.”

Although the perspectives reviewed have helped us understand specific elements of how platforms operate and why they are important to study, they have nevertheless described limited aspects of the Google Search elephant—the trunk, the side, the tail, and their various outcomes. We agree with Nieborg, Poell, and van Dijck's warning that these disparate considerations of platforms do not adequately help us describe and imagine their general political economic working (2023). We still lack a general socio-technical understanding of what Google Search is and how it works.

Drawing on critical platform studies and offering an alternative to platform reductionism and anachronistic tendencies, Caliskan et al. (2024) propose a new research program. They define a platform as a stacked economization process bringing together a variety of modes of economization such as gift, barter, and market, entailing layered relations of power. Although the economization research program argues that exchange and production are stacked too and thus should not be treated separately, the economization model has rarely been applied to production relations. By focusing predominantly on exchange, this framework inadvertently risks reproducing a flaw it originally critiqued in other economic studies. While it replaced a static market view with a dynamic agencement model, it tends to leave production out of its analyses. Empirical studies of marketization, assetization, performativity, and market devices tend to address markets exclusively: at best they treat production as the production of exchange relations, but very seldom as the production of goods/services that are supplied to a market. 7 We argue that an analysis of the intertwined production and exchange relations in Google's search supply chain may suggest the possibility of developing a more dynamic and nuanced framework, which we term the digital–industrial economization approach, that can be applied in other platform contexts.

In developing this framework, we draw on conventional supply chain literature in the operations research subdiscipline of the field of management. Theories of supply chain management evolved as scholars agreed that supply chains are more complex, nuanced, and dynamic than often claimed (Chen and Paulraj, 2004; Cooper et al., 1997; Croxton et al., 2001). In response, Carter et al. (2015) advocated for a clear understanding of how they work before an analysis of their management. Their work helps us understand supply chains as networks with many links, rather than simple dyadic relationships (Choi and Dooley, 2009), with nodes as agents and links as transactions (Carter et al., 2015). Supply chains are now seen as complex adaptive systems (Choi et al., 2001), with agential nodes that are sometimes the product of the convergence of other supply chains (Carter et al., 2015). Hald and Spring (2023) invite scholars to approach supply chains by using Actor-Network Theory, centering heterogeneity and performativity in analysis. Drawing on these approaches and by isolating the nodes of Google Search's supply chain, recognizing their agency and internal workings as they stack modes of economization, we show that we can better understand how Google Search operates.

Google Search supply chain and its nodes

The Crawler

All search supply chains start with crawling data; this first node continuously feeds Google with fresh information. Google interprets the online world through Uniform Resource Locators (URLs), which function as addresses for accessing specific pages. A URL begins with a protocol specification for communicating with a website, such as the Hypertext Transfer Protocol (HTTP) or the File Transfer Protocol (FTP). This is followed by the domain name, which identifies the website hosting the page. Next is the path, which points to a specific page on that site.
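The three URL components described above can be seen by splitting an example address with Python's standard `urllib.parse.urlsplit`. This is a minimal illustration, not a description of Google's own URL handling; the helper name `url_parts` and the example URLs are hypothetical.

```python
from urllib.parse import urlsplit


def url_parts(url: str) -> dict:
    """Split a URL into protocol, domain name, and path (hypothetical helper)."""
    parts = urlsplit(url)
    return {
        "protocol": parts.scheme,  # e.g. "https" or "ftp"
        "domain": parts.hostname,  # the website that hosts the page
        "path": parts.path,        # the specific page on that site
    }


if __name__ == "__main__":
    # Hypothetical example address.
    print(url_parts("https://www.example.org/research/search-engines.html"))
```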

The Crawler starts with a list of known “seed” URLs. It then “crawls,” typically by following the links from the corresponding websites, repeating the process for the websites to which those links lead, and iteratively expanding its “knowledge” of the content and structure of the web. Crawler bots are also barter bots, bartering data for a right to be seen by Google. A bot first resolves the domain name into an Internet Protocol (IP) address through the Domain Name System (DNS). This enables the Crawler to connect to the server hosting the specific page. Identifying itself as “Googlebot,” and essentially digitally knocking on the door of a page, the Crawler requests access and thus proposes a barter. Websites can instruct Googlebot, typically through a robots.txt file, on which parts of the website are off-limits for crawling, thus letting bots know the nature and extent of the barter. Once permission to barter is granted, the Crawler downloads and stores the content and the specific links embedded in that page. If permission is not granted, the host website refuses barter economization and thus stays out of the crawling process, losing the right or opportunity to be “googled.” If we use Niklaus Wirth's (1975) formulation, “algorithms + data structures = programs,” the program of crawling works by bringing together Google's algorithm and consumers’ data that feed the data structure necessary to produce crawl data.
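The crawl loop described above can be sketched as a breadth-first traversal from seed URLs that checks each host's robots.txt rules before "downloading" a page. This is a simplified illustration under stated assumptions, not Googlebot's implementation: the `WEB` link graph, the `ROBOTS` rules, and the helper names are hypothetical, pages live in an in-memory dictionary, and the DNS lookup and HTTP download steps are omitted. The robots rules are evaluated with Python's standard `urllib.robotparser`.

```python
from collections import deque
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser

# Hypothetical in-memory web: each URL maps to the links embedded in that page.
WEB = {
    "https://a.example/": ["https://a.example/about", "https://b.example/"],
    "https://a.example/about": ["https://a.example/"],
    "https://b.example/": ["https://b.example/private/notes", "https://c.example/"],
    "https://b.example/private/notes": [],
    "https://c.example/": ["https://a.example/"],
}

# Hypothetical robots.txt contents per host: b.example keeps /private/ off-limits.
ROBOTS = {
    "b.example": ["User-agent: *", "Disallow: /private/"],
}


def allowed(url: str, agent: str = "Googlebot") -> bool:
    """Apply the host's robots.txt rules; a host with no rules allows everything."""
    parser = RobotFileParser()
    parser.parse(ROBOTS.get(urlsplit(url).hostname, []))
    return parser.can_fetch(agent, url)


def crawl(seeds: list, max_pages: int = 100):
    """Breadth-first crawl from seed URLs; return (crawled, refused) URL lists."""
    frontier = deque(seeds)
    seen = set(seeds)
    crawled, refused = [], []
    while frontier and len(crawled) < max_pages:
        url = frontier.popleft()
        if not allowed(url):
            refused.append(url)  # the site declines: the page is never downloaded
            continue
        crawled.append(url)  # stand-in for downloading and storing the page
        for link in WEB.get(url, []):  # follow the links embedded in the page
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return crawled, refused
```

Starting from `https://a.example/`, the refused URL never enters the crawled list, which is the sense in which a site that declines the barter stays out of the crawling process.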

The more linked and popular a page is, the higher it is ranked later. Therefore, web designers and SEO experts ensure that crawlers can access their sites and link their sites to many others. This amounts to accepting the barter, and the resulting population of links is then used in further bartering of this information. The more links one has, the greater one's bartering power, and thus the more attention one gets from Google.

The Crawler does not merely observe a passive digital world, collect information, and then pass it on to the Indexer. It actively shapes its environment in a variety of ways. It prioritizes certain websites, choosing to crawl some before others. For example, if a website is not optimized for mobile, it is crawled less often, causing its representation in the Crawler's database to become outdated more quickly. Thus, the Crawler creates a push factor for websites to be accessible across multiple channels. In addition, bots visit curated and optimized websites more frequently than websites that lack budgets for optimization. For example, an organization without resources for editing its website may end up not removing duplicated or out-of-date content. The Crawler will note these duplicates, and such websites are punished by being ranked down. To be “loved” by Google, pages require significant ongoing maintenance, a practice known as SEO.
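The claim that some sites are revisited less often than others can be pictured as a scheduling problem. The sketch below assumes a hypothetical baseline revisit interval that is stretched for sites that are not mobile-friendly or not well maintained; the multipliers, field names, and numbers are invented for illustration, since Google does not publish its crawl-scheduling rules.

```python
import heapq
from dataclasses import dataclass


@dataclass
class Site:
    url: str
    mobile_friendly: bool
    well_maintained: bool  # hypothetical stand-in for "curated and optimized"


BASE_INTERVAL_DAYS = 4.0  # hypothetical baseline revisit interval


def recrawl_interval(site: Site) -> float:
    """Hypothetical rule: non-mobile or neglected sites wait longer between visits."""
    interval = BASE_INTERVAL_DAYS
    if not site.mobile_friendly:
        interval *= 2
    if not site.well_maintained:
        interval *= 1.5
    return interval


def simulate_crawls(sites: list, horizon_days: float) -> dict:
    """Count how often each site is visited up to (and including) the horizon."""
    queue = [(0.0, i) for i in range(len(sites))]  # (next visit day, site index)
    heapq.heapify(queue)
    visits = {site.url: 0 for site in sites}
    while queue:
        day, i = heapq.heappop(queue)
        if day > horizon_days:
            break  # the earliest pending visit is past the horizon, so all are
        visits[sites[i].url] += 1
        heapq.heappush(queue, (day + recrawl_interval(sites[i]), i))
    return visits
```

Over a 24-day horizon, an optimized site is visited seven times while a neglected, desktop-only site is visited three times, so its stored copy goes stale faster.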

The Indexer

When the Crawler completes its barter and processing, Google assembles crawled data that looks like books stacked up at random. Pages that do not conform to Google's criteria are eliminated from the data set, and the remaining crawled data include text, images, videos, and other media. The Indexer begins to work on these data by decompressing and then sorting them by the parameters defined by Google such as type of content, language, and HTML types. The Indexer is like a librarian who processes that random stack of books behind the counter at a library.

Once the data are parsed into groups, they are further processed to eliminate repetitive content. Then, the Indexer carries out security scans to eliminate unsafe or deceptively optimized websites. The Indexer then analyzes the content for link processing. This involves identifying the other pages within a website's network and assessing the authority of the pages that link to them. Google treats these backlinks as a sign of a website's importance. After this processing, the index is stored on servers, and each piece of content is indexed based on its attributes. Google prioritizes “high-quality” information and stores it separately from “low-quality” information. Google also uses consumer behavior to inform how it indexes content, for the company knows that a great majority of consumers only click on the content shown on the first page of the Publisher. Chasinov (2020) showed that only 6.6% of consumers are willing to go to the second page of Google's Publisher, and click-level analysis supports this figure: studying 4 million Google search results, Dean (2023) found that fewer than 1% of consumers click on a link located on the second page. Google's supply chain therefore aims at “perfecting” the first page. Thus, it would be a mistake to assume that Google invests heavily in preparing the parts of the index that are rarely presented to consumers. After all, since a great majority of people read only the first page, a great majority of the index never meets a great majority of human eyeballs.
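The deduplication and link-processing steps can be illustrated with a toy indexer that drops exact duplicates, builds an inverted index (a map from each term to the pages containing it), and counts backlinks. This is a minimal sketch under stated assumptions: the tokenizer, the use of a SHA-256 hash of normalized text as the duplicate test, and the example pages are hypothetical, and security scans and quality tiers are left out.

```python
import hashlib
import re
from collections import defaultdict


def tokenize(text: str) -> list:
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages: dict, links: dict):
    """Drop exact duplicates, build an inverted index, and count backlinks.

    pages: URL -> page text; links: URL -> list of URLs that page links to.
    """
    first_copy = {}  # content fingerprint -> first URL seen with that content
    kept = {}
    for url, text in pages.items():
        fingerprint = hashlib.sha256(" ".join(tokenize(text)).encode()).hexdigest()
        if fingerprint in first_copy:
            continue  # repetitive content: only the first copy is indexed
        first_copy[fingerprint] = url
        kept[url] = text

    inverted = defaultdict(set)  # term -> set of URLs containing that term
    for url, text in kept.items():
        for term in tokenize(text):
            inverted[term].add(url)

    backlinks = defaultdict(int)  # URL -> number of distinct pages linking to it
    for source, targets in links.items():
        for target in set(targets):
            if target in kept and target != source:
                backlinks[target] += 1

    return dict(inverted), dict(backlinks)
```

An exact-hash test only catches verbatim copies after normalization; near-duplicate detection would need a similarity measure, which this sketch does not attempt.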

The Ranker

After the Indexer completes its task, it passes its product, “the index,” to the Ranker. The Ranker, in collaboration with the Publisher, determines which portions of the index will be displayed to specific consumers. To achieve this, it employs signals and programs to order pages by quantifying webpage importance through links, penalizing low-quality content, targeting manipulative links, interpreting consumer behavior, and processing query context. Additionally, ancillary algorithms assist consumers by suggesting queries before they finish typing.

Although Google keeps the specifics of its algorithms confidential, insights into the Ranker's operations have emerged through a recent leak by Yoshi-code-bot and revelations from the 2023 DoJ v. Google case (Yoshi-code-bot, 2024; DoJ v. Google, 2023). At the time of this writing, the Ranker operates through 2,596 modules, each dedicated to specific evaluation tasks, which together use 14,014 attributes. Attributes are single data points, resembling production line metrics in a conventional factory. Signals, formed by combining attributes, are used to define priorities. For example, a signal might represent overall product quality by aggregating multiple metrics. These signals influence which results appear first and how relevant they are to consumers.
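The relation between attributes and signals can be sketched as weighted aggregation: each signal combines several attributes, and pages are ordered by a combination of signals. The attribute names, weights, and signal definitions below are hypothetical and invented for illustration; they are not drawn from the leaked documentation.

```python
from dataclasses import dataclass


@dataclass
class PageAttributes:
    """Hypothetical attribute values for one page, each normalized to [0, 1]."""
    good_click_rate: float
    long_click_rate: float
    originality: float
    mobile_friendly: float
    page_speed: float


# Hypothetical signal definitions: each signal is a weighted mix of attributes.
SIGNALS = {
    "consumer_experience": {"good_click_rate": 0.6, "long_click_rate": 0.4},
    "page_quality": {"originality": 0.5, "mobile_friendly": 0.25, "page_speed": 0.25},
}


def compute_signals(attrs: PageAttributes) -> dict:
    """Combine single attributes into named signals."""
    return {
        name: sum(weight * getattr(attrs, attr) for attr, weight in weights.items())
        for name, weights in SIGNALS.items()
    }


def order_pages(pages: dict, signal_weights: dict) -> list:
    """Order page URLs by a weighted combination of their signals (highest first)."""
    def score(url: str) -> float:
        signals = compute_signals(pages[url])
        return sum(signal_weights[name] * value for name, value in signals.items())

    return sorted(pages, key=score, reverse=True)
```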

Attributes are grouped into three main categories: consumer experience, page quality, and external evaluations of page quality. Consumer experience is key: it teaches Google about a page's authority and relevance. Various types of clicks—good, bad, longest-lasting—and post-click behavior are used to enhance rankings. Although Google claims not to use clicks directly for rankings, industry professionals argue otherwise, citing experiments that demonstrate their importance.

Keywords entered by users are critical, as they must align with website content. Effective keyword usage by websites includes placing them in title tags, meta descriptions, headings, and within the text. However, “keyword stuffing” can lead to penalties. Signals based on content quality include originality, comprehensiveness of details, and clarity of page design. Mobile-friendliness, page speed, and safe browsing also influence rankings.

External evaluations involve backlinks, that is, links from other websites to a page. The number and quality of backlinks impact rankings, with authoritative sites (e.g., universities or government domains) carrying more weight. Links from related topics are preferred over irrelevant ones. Additionally, newer content often ranks higher, particularly for time-sensitive topics or news. Regularly updated content is favored. In essence, Google's Ranker evaluates not only what users search for but also their behavior, creating a complex interplay of signals to prioritize content.
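The idea that a link from an important page counts for more than a link from an obscure one is what the published PageRank method formalizes: a page's score is the probability that a “random surfer,” who follows links with probability equal to a damping factor and otherwise jumps to a random page, ends up on it. The sketch below is a textbook power-iteration version on a hypothetical four-page graph; how link analysis is weighted inside today's Ranker is not public, so it illustrates the principle rather than Google's current system.

```python
def pagerank(links: dict, damping: float = 0.85,
             tol: float = 1e-10, max_iter: int = 200) -> dict:
    """Classic PageRank computed by power iteration on a small link graph.

    links: page -> list of pages it links to (its outgoing links).
    """
    nodes = set(links) | {t for targets in links.values() for t in targets}
    n = len(nodes)
    rank = {u: 1.0 / n for u in nodes}
    for _ in range(max_iter):
        # Pages with no outgoing links spread their score evenly over all pages.
        dangling = sum(rank[u] for u in nodes if not links.get(u))
        new = {u: (1 - damping) / n + damping * dangling / n for u in nodes}
        for u, targets in links.items():
            if targets:
                share = damping * rank[u] / len(targets)  # split across outgoing links
                for v in targets:
                    new[v] += share
        if sum(abs(new[u] - rank[u]) for u in nodes) < tol:
            return new
        rank = new
    return rank


if __name__ == "__main__":
    # Hypothetical graph: "c" is linked from three pages; nothing links to "d".
    print(pagerank({"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}))
```

In this example `c` ends up with the highest score, while `d`, which no page links to, receives only the baseline share of (1 − 0.85)/4.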

The Publisher

Google's Search Engine Results Page (SERP) is often assumed to display results directly relevant to a user's query. This assumption is only partially correct. In reality, only a small portion of the SERP consists of “organic results,” as Google refers to them: the results retrieved from the index in response to the query. The prominence of these organic results on the first page, both in terms of space and position at the top, diminishes as the search intent shifts from navigational to informational and, finally, to transactional queries. Transactional queries often bypass organic results altogether.

Beyond organic search results, Google incorporates up to 10 distinct types of content on the SERP that consumers did not explicitly request. These elements are not drawn from Google's index but are superimposed onto the page through automated editorial interventions, akin to how a newspaper editor selects content they believe readers would find relevant. These additional elements include Paid Ads, as well as Marketing Boxes, Featured Snippets, the Knowledge Graph, Local Packs, Video Results, Image Packs, News Boxes, and the People Also Ask (PAA) feature.

The SERP functions much like a newspaper: it is carefully planned and edited to address specific user queries, incorporating editorial judgment in choosing what to show and now, thanks to Google's artificial intelligence (AI) chatbot Gemini, in writing the answer itself. This is why we propose redefining the SERP as the “publisher node.” The Publisher actively interacts with consumers and barters their data to anticipate their preferences. In this sense, the SERP can be viewed as a “Searchpaper,” akin to a newspaper but composed of search results and advertisements. Searchpapers, such as those produced by Google, Yandex, Baidu, Bing, or DuckDuckGo, are collections of curated online pages designed to present a mix of results and ads.

Newspaper = News as information + Ads
Searchpaper = Search results as information + Ads

Google begins to barter data with consumers as soon as they open the search bar, whether by visiting google.com or through their phone's or browser's default search engine. Chrome, the browser Google owns, defaults to Google Search, and Google is usually the default search engine in non-Google browsers such as Firefox and Safari as well. Especially when the search is made from Chrome, Google knows a great deal about a particular consumer's search history, priorities, and geographical location. As soon as the first letter is typed in the search box, the supply chain begins to interact with the consumer via Autocomplete, an ancillary query-prompting service. Imagine we type “t,” and Google suggests a series of queries, nudging the user to start bartering with Google. The proposed completions change as we continue typing. In our example, we keep typing until the box reads “telev,” and Google suggests that we are searching for “television.” We click on the suggestion instead of typing the whole word, accepting a barter: we give Google information, and Google gives us what it decides we are searching for. Similarly, had we mistyped “television” as “tleviosin,” Google would still display results for “television,” either by prompting us or by showing them automatically.
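A toy version of the two Autocomplete behaviors in this example, prefix completion and spelling correction, is sketched below. It assumes a hypothetical log of past queries ranked purely by frequency. It uses Python's standard `difflib.get_close_matches` for fuzzy matching. Google's actual prediction and spelling systems are not public and draw on many more signals.

```python
import difflib

# Hypothetical query log: past queries and how often they were typed.
QUERY_LOG = {
    "weather": 300,
    "television": 120,
    "tesla": 90,
    "ticket prices": 50,
    "television stand": 40,
    "telescope": 30,
}

def complete(prefix: str, k: int = 3) -> list[str]:
    """Suggest up to k logged queries that start with the typed prefix,
    most frequent first."""
    matches = [q for q in QUERY_LOG if q.startswith(prefix.lower())]
    return sorted(matches, key=QUERY_LOG.get, reverse=True)[:k]

def correct(query: str) -> str:
    """Replace a likely misspelling with the closest logged query, if any;
    otherwise return the query unchanged."""
    close = difflib.get_close_matches(query.lower(), QUERY_LOG.keys(), n=1, cutoff=0.6)
    return close[0] if close else query
```

Here `complete("t")` returns the three most frequent logged queries beginning with "t". `complete("telev")` narrows the list to the two television queries, and `correct("tleviosin")` maps the typo back to "television".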

As soon as a consumer hits the enter key, the Publisher decides what kind of Searchpaper front page it will publish for the consumer's attention. Having nudged the consumer to complete the query, Google categorizes the search type using a taxonomy whose specifics the corporation does not disclose. However, online advertising industry actors and Google itself distinguish three general types of search queries, each with a different logic of publication: transactional, informational, and navigational.

In our example of “television,” the Publisher analyzes the keywords to guess the query's intent and decides that it is a transactional search. This decision determines the kind of page the Publisher will edit for the consumer. The Ranker and the Publisher then work together to produce the page the consumer will see in less than a second. To be exact, consumers never “search” themselves; they type a query, and Google's programs then carry out a search, rank, and display operation on their behalf. First, the program searches the index located in the Data Center geographically closest to the consumer. Most search results are already “ready” for search terms that rarely change. Google acknowledges that on average only 15% of all search results are new. 8 The remaining 85% of the time, the query is one that someone like the consumer has already searched for. The Publisher thus keeps finding a similar set of results 85% of the time while making continuous tweaks. This does not mean that the Publisher shows the same results to everyone, because the results must still be ranked for each particular consumer.
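Because Google does not disclose its query taxonomy, the following is only a hypothetical, rule-based illustration of how a query could be sorted into the three industry categories. The cue lists are invented for this example. A production system would presumably rely on learned models and on the consumer data discussed above.

```python
# Hypothetical cue lists; Google's actual query taxonomy is not disclosed.
NAVIGATIONAL_ENTITIES = {"the new school", "gmail", "youtube"}
TRANSACTIONAL_TERMS = {"buy", "price", "cheap", "deal", "television", "tv"}

def classify_intent(query: str) -> str:
    """Assign one of the three industry intent labels to a query."""
    q = query.lower().strip()
    if q in NAVIGATIONAL_ENTITIES:
        return "navigational"      # the user wants a specific site
    if TRANSACTIONAL_TERMS & set(q.split()):
        return "transactional"     # purchase-oriented vocabulary present
    return "informational"         # default: the user wants to learn something
```

With these invented lists, "television" is labeled transactional, "The New School" navigational, and "best tourist attractions in Edinburgh" informational, matching the three examples the text walks through.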

Once the query has been matched with the relevant information in the index, the matched information is ranked to determine the order in which it should appear on the published page. As soon as the results are ready, the Publisher moves on to 10 other possible editing and publishing operations. Consumers cannot see the organic results until the Publisher has finished producing the remaining “inorganic” parts of its Searchpaper's front page. Organic results are placed somewhere on the page the Publisher edits and designs, taking into account both consumer experience and the commercial goals of advertisers. The position, size, and detail of each part of the published page depend on the type of query. The page the Publisher edits in response to a transactional query is often overwhelmingly transactional, leaving almost no room for “organic” results on the first page.

Figure 1 shows the front page published in response to the search “television,” in its initial view on a computer screen without scrolling. The query results occupy only about 10% of the area: around half of that is a Wikipedia entry, and BestBuy occupies an equal amount. Thus, half of even the immediately visible organic results serve a commercial interest. In searches such as this, the Ranker and the Indexer do not play a significant role. One does not need a costly supply chain to display only two results, Wikipedia and BestBuy, to a consumer.

First page of Searchpaper for “television.” Google Search Prompt: “television,” last accessed 17 June 2024, at 13:44.

In total, 85.4% of this first page is devoted to one or more of the remaining 10 published items. The largest item on the page is the “paid ads.” The paid results occupy a much larger surface area than the organic results, covering 30% of the page. To be displayed before the organic results, companies bid for certain keywords in Google Ads; the highest bidder with the most relevant ad gets the most advantageous space on the page. Next, 14% of the page is earmarked for a marketing search box that encourages the consumer to continue searching for a specific TV model. Once the consumer clicks anything within this box, the new page eliminates the already very small space for “organic” results altogether, and the Publisher broadcasts a digital shopping mall that markets television sets, with Google receiving a percentage of each sale.
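Keyword bidding of this kind is often explained with the textbook generalized second-price auction, in which ads are ordered by bid multiplied by a quality or relevance score. The sketch below implements that simplified model. It is not Google Ads' actual Ad Rank formula, which Google says also takes other factors into account, and the `quality` values are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Ad:
    advertiser: str
    bid: float      # maximum cost-per-click the advertiser will pay
    quality: float  # hypothetical relevance/quality score in (0, 1]

def run_auction(ads: list[Ad], slots: int) -> list[tuple[str, float]]:
    """Textbook generalized second-price auction with quality weighting.

    Ads are ordered by bid * quality; each winner pays the smallest
    per-click price that would still keep its position, i.e. the next
    ad's rank divided by the winner's own quality (capped at its bid).
    """
    ordered = sorted(ads, key=lambda a: a.bid * a.quality, reverse=True)
    results = []
    for i, ad in enumerate(ordered[:slots]):
        if i + 1 < len(ordered):
            nxt = ordered[i + 1]
            price = min(ad.bid, nxt.bid * nxt.quality / ad.quality)
        else:
            price = 0.0  # no competitor below; a reserve price would apply in practice
        results.append((ad.advertiser, round(price, 2)))
    return results
```

Consider three hypothetical advertisers: A bids $2.00 with quality 0.5, B bids $1.50 with quality 0.9, and C bids $1.00 with quality 0.8. A bids the most, yet it lands in the second slot because its low quality score gives it a lower rank than B. Each winner pays less than its bid: B pays $1.11 and A pays $1.60.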

The other two types of search, navigational and informational queries, carry less commercial potential and are treated differently by the Publisher. A consumer typing a navigational query is looking for the website of a particular institution. For example, a consumer typing “The New School” into the search bar may be looking for The New School University or for a new school in their neighborhood. The Publisher responds to such queries with a completely different page. If the consumer is known, for example via their search history, the Publisher retrieves possibly relevant information from the Index, and the Ranker ranks it for that consumer.

In this case, let us imagine that the consumer is a professor at The New School and Google knows who he is, where he lives, his search history, and many other things about him. His university uses Gmail for correspondence and Google Drive for storing everything. Google correctly assumes that he is not college shopping or looking for a new school. The top part of the published page has digital buttons that link to images, news, maps, and videos, followed by a knowledge row with links to information on cost, acceptance rate, login, careers, the application portal, and more. Then comes the “organic result” or the university's website information, with a new search bar embedded in it to search within the university's website, covering almost 60% of the page. There is a sliver of a Paid Ad, displaying The New School, taking up 2% of the page. At the bottom, one can see a PAA box.

Finally, informational search queries, the third type, are those where consumers seek to learn something. Representing the majority of search queries, they are crucial for both the search supply chain and the advertising industry. For Karen, a senior vice president of a major online marketing company, “Without these searches, online ads just wouldn't work. We use them smartly to learn about our consumers. They help us find and engage potential consumers and boost our brand's visibility.” Imagine a consumer types “best tourist attractions in Edinburgh,” presenting an informational search. Google guesses that she is most probably planning a trip to Edinburgh and would like to get information on what to do there.

The Publisher operates at peak performance when handling informational queries, displaying nearly all 10 publishing items across a couple of pages, the first of which is shown in Figure 2. The first page opens with a top link bar featuring buttons that blend marketing and information in a single row, followed by paid ads in two layouts. The first is an ad carousel. Clicking the navigation arrows on the right side of the carousel extends the window to include six more tour ads, continuing for up to two more strips, for a total of 33 ads. A place on this carousel is expensive because of its prime location and the inclusion of pictures. Below the carousel, more paid ads are displayed, some with smaller visuals on the right. In total, 41% of the entire page consists of ad space sold by Google. The second-largest space is a Knowledge Graph on the upper right-hand side, providing information about Edinburgh from various sources along with a link to Google Maps, occupying 30% of the first page. Toward the bottom of the page, one sees the title of the organic search result, “Top Sights in Edinburgh.” Notably, the first page does not display any organic search results. Only by scrolling to the second page does one see the query results. In this case, the Publisher decides to display 0% of the answers to the query our imaginary consumer typed on the first page. As one continues scrolling down, the Publisher displays a Local Pack, Video Results, an Image Pack, a News Box, and finally a PAA box. All these items have links that enhance advertising and marketing opportunities for Google's search engine and do not address the search query.

First page of Searchpaper on the informational query. The last query prompt on google.com was on 20 June 2024, at 20:36 using Chrome.

One may contest whether “best tourist attractions” is an informational query at all; after all, the query suggests a potential transactional intention. By contrast, typing only “Edinburgh” yields 100% organic results. Google does not share with the public any transparent standard for how it interprets searches or how it economizes and publishes the front page of the Searchpaper.

The last published item on the front page is a novelty Google introduced in May 2024. Google has long used machine learning in a variety of its services, from autocomplete to ranking. Google's chatbot Bard was announced in February 2023, began offering its services in the UK and US in March 2023, and was rebranded as Gemini in February 2024. Three months later, in May 2024, the Publisher node of the search supply chain integrated “AI Overview” for informational search queries in select geographies such as the US. The AI Overview Box's placement before the organic results, usually right after the paid advertisements, is a consequential change in the way Google edits the front page.

Data Centers

It is often overlooked that digital–industrial systems operate through vast machine constellations, which we call megamachines (MacKenzie and Caliskan, 2025). The Google megamachine is composed of more than 130 Data Centers located in 40 regions, with a further nine under construction. Each region brings together three to four deployment zones with specific functions. It may be misleading to call them “Data Centers,” as this would be analogous to calling a factory a “value center” or a bank a “money center.” In reality, Data Centers are vast networks of computational machines and engines that produce concrete services by combining human labor, water, air, and electricity. They are not mere centers of data, discs, or servers, but rather material computational industrial nodes of supply chains that also carry out what Salling and Winthereik rightly call inventorying in their innovative study of Meta's Data Centers in Denmark (2025).

We refer to Data Centers as megamachines (a term we adapted from Mumford, 1967) because they are colossal computational plants that store and deliver the data crucial for the successful working of Google's Search supply chain. Storage is not a passive process in which one unloads things in a warehouse. It entails production, carried out by human and non-human labor. It uses hundreds of thousands of machines for materially writing, retrieving, and processing data; connecting and maintaining hard discs and servers; securing the place and its data; managing its power supply by distributing electricity often generated by nuclear and carbon (oil, gas, and coal) power plants; cooling the machines with water (and tons of it); maintaining water and diesel pumps, power generators, servers, and discs; maintaining back-up systems for water pumps as well as electric and data cables; and finally, destroying tens of thousands of broken or old disc drives and other equipment (Figure 3).

An incomplete picture of the Megamachine.

Any machine within the Google supply chain network can be integrated into the supply chain megamachine, enhancing interoperability across the organization, limited only by the geographical distance between Data Centers. A Data Center is the last stop of a data journey that starts when a Google consumer types a query. Changing shape and volume along the way, the query travels from the consumer's computer via a variety of cables and wireless signals to the geographically closest Data Center. There it is processed, edited, and finally published by that Data Center's servers (often hundreds or even thousands of them, even for a single query).
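Routing a query to the geographically closest Data Center can be illustrated with a great-circle (haversine) distance calculation. This is a simplification. The three sites and their coordinates are approximate and chosen only for illustration. Real request routing also weighs network latency, capacity, and load rather than distance alone.

```python
import math

# Approximate (latitude, longitude) of three illustrative sites.
DATA_CENTERS = {
    "Council Bluffs, Iowa": (41.26, -95.86),
    "The Dalles, Oregon": (45.59, -121.18),
    "St. Ghislain, Belgium": (50.47, 3.82),
}

EARTH_RADIUS_KM = 6371.0

def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance in kilometres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

def nearest_data_center(user: tuple[float, float]) -> str:
    """Pick the site with the smallest great-circle distance to the user."""
    return min(DATA_CENTERS, key=lambda name: haversine_km(user, DATA_CENTERS[name]))
```

Under these assumptions, a consumer in New York (40.71, -74.01) is routed to Iowa and one in London (51.51, -0.13) to Belgium.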

Each facility houses tens of thousands of hard disk drives (HDDs) and solid-state drives (SSDs), stacked in rows similar to library shelves (Hu, 2015; Johnson, 2019). These drives are connected through fiber optic cables and power cords, so each needs at least two ports: one for data transfer and another for the electric power that energizes the disc parts responsible for data storage and retrieval. Understanding the material workings of the HDD, still an important technology for the highest-volume storage in megamachines, is crucial to comprehending the operation of a Data Center and thus of Google's Search supply chain itself.

The HDD, a compact memory device, has over 100 parts and operates through a system of spinning platters, actuator arms, and electromagnetic heads, with data stored as magnetic patterns. Each platter consists of a glass or ceramic substrate coated with a magnetizable cobalt alloy, a protective carbon layer, and a friction-reducing lubricant. High-speed motors spin the platters and move the actuator arms to access data sectors. These energy-intensive devices consume significant power (3–15 watts per drive) and in aggregate generate vast heat, requiring extensive water-based cooling systems. Despite Google's aim to be carbon-free by 2030, its greenhouse gas emissions, driven largely by Data Center energy consumption, have surged 48% since 2019, highlighting the ongoing dependency on coal and other non-renewable energy sources for cooling and operation. Ironically, coal, a driver of the 19th-century industrial revolution, continues to power many of today's megamachines (Hu, 2015).
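To give a sense of scale for the 3–15 watt range, assume, purely for illustration, a facility with 50,000 drives running continuously. The drives alone would then draw, and ultimately release as heat, roughly:

```latex
\begin{aligned}
P_{\min} &= 50{,}000 \times 3\ \text{W} = 150\ \text{kW}, &
E_{\min} &= 150\ \text{kW} \times 8{,}760\ \text{h} \approx 1.3\ \text{GWh/year},\\
P_{\max} &= 50{,}000 \times 15\ \text{W} = 750\ \text{kW}, &
E_{\max} &= 750\ \text{kW} \times 8{,}760\ \text{h} \approx 6.6\ \text{GWh/year}.
\end{aligned}
```

These figures cover storage drives only; servers, networking, and cooling equipment add to the total.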

Three things enter a Data Center through submarine and underground cables and pipes (data, electricity, and cold water), and two things come out (data and hot water). This huge, distributed megamachine is the most energy-consuming part of the supply chain. Furthermore, all bartered data are kept in megamachines, with the help of thousands of workers operating millions of smaller engines. Finally, Data Centers are the most important physical places in Google's search supply chain for the operations of the Publisher. Without the infrastructural and inventory services the megamachine supplies, the Google Search supply chain would be inoperable.

Search engine supply chains also require heavy investment in their maintenance, systematic experimentation, and human evaluation. In 2020, Google implemented on average nearly 13 changes daily, driven by over 13,000 online and 148,000 offline experiments alongside a million quality assessments from human testers (Google, 2020). These efforts resulted in 4,725 launches, with each new feature undergoing a meticulous process of ideation, prototyping, testing (both offline and live traffic experiments), and approval by senior committees (Google, 2020). Human evaluators, subcontracted via external companies, use Google's Search Quality Rater Guidelines, which emphasize criteria such as Expertise, Experience, Authoritativeness, and Trustworthiness, to evaluate search results and inform system improvements. Google's live traffic experiments test search changes on unsuspecting user groups, analyzing their interactions to refine or reject updates. Offline experiments, however, rely on historical data to simulate scenarios, ensuring proposed changes are viable before live implementation.

Together, these methods form a continuous, data-driven feedback loop, maintaining the efficiency and relevance of Google's search system while introducing a layer of unperceived consumer engagement during testing. This intricate and dynamic process reflects more than a static supply chain; it embodies a seamless integration of production and exchange or prodexchange.
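The live traffic experiments described above are, in their general form, A/B tests. One group of users sees the current system, another sees the change, and their interactions are compared. The sketch below makes two assumptions. It treats click-through rate as the metric, and it launches a change only if a two-proportion z-test finds it significantly better. Google's actual metrics and launch criteria are not public, and all counts in the usage example are hypothetical.

```python
import math

def two_proportion_z(clicks_a: int, n_a: int, clicks_b: int, n_b: int) -> float:
    """z statistic for the difference in click-through rate between a control
    group (a) and a treatment group (b), using the pooled standard error."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / se

def decide(clicks_a: int, n_a: int, clicks_b: int, n_b: int, z_crit: float = 1.96) -> str:
    """Launch only if the treatment's CTR is higher by more than the 1.96
    critical value of a two-sided 5% test; otherwise reject the change."""
    z = two_proportion_z(clicks_a, n_a, clicks_b, n_b)
    return "launch" if z > z_crit else "reject"
```

Take 10,000 users per arm. A lift from 10.0% to 11.0% click-through gives z ≈ 2.31, so the change would be launched. A lift to only 10.1% gives z ≈ 0.24, so it would be rejected.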

Digital–industrial economization approach

In the preceding section, we showed how production and exchange in the Google Search supply chain are braided into a single, unitary economic practice. We propose the term prodexchange to describe this complex economic practice, which serves as the condition of possibility for search supply chains. We tested this assertion in interviews with people who work for Google and with other search engineers and industry actors, through participant observation in contexts of search maintenance and deployment, and, more importantly, by verifying its validity in design research. On this basis, we argue that the social sciences should approach digital–industrial supply chains with a stacked economization approach armed with an agile concept better aligned with the empirical realities it explains: prodexchange.

As we discussed above, prodexchange is a new economic practice that is located in stacked economization relations. Deploying prodexchange on a theoretical bridge between management and the sociology of economization sheds light on such economic complexities. Building on this, we propose a digital–industrial economization approach to analyze the workings of the platformized search industry. Utilizing design methods, we deconstruct Google's search engine and reveal that the term engine does not capture the complex processes involved in delivering Google's search. This product is the result of a rigorous supply chain management process that intricately weaves together production and exchange relations. Supply chain literature is instrumental in identifying the nodes within such a supply chain that simultaneously produce, exchange, maintain, and store products and inputs. The dynamic operation that facilitates this multifaceted interaction is prodexchange itself.

Figure 4 illustrates the prodexchange of input and output products within Google's search supply chain. This process produces the search service that Google's Searchpaper uses to market ad spaces to ad buyers, publishers, and Google itself. The process begins with the prodexchange of data. The Crawler passes its crawled data to the Indexer, which compiles a vast index. Next, the Ranker engages in a prodexchange by personalizing and ranking potential search results. This involves a continuous barter exchange with consumers, whose data are essential for producing ranked results. Finally, the Publisher node creates, edits, and presents a Searchpaper by prodexchanging a complete set of representations. This step involves maintaining a continuous barter relationship among consumers, publishers, and ad buyers as they interact with Google's Searchpaper.

Prodexchange in Google Search supply chain.

Table 1 summarizes all input and output products of the five nodes of the supply chain. Google Search's supply chain draws on the continuous work of five autonomous nodes of prodexchange. Take one out of the supply chain and replace it with the product of another company, and Google's Searchpaper could still be published. Thus, Google's supply chain management system can be called an engine only by analogy, and a misleading one: the parts of an engine cannot be taken out without the engine breaking down.

Google search supply chain prodexchange matrix.

SERP: Search Engine Results Page.
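The point that a node can be replaced without halting the chain can be made concrete by modeling each node as a function from input product to output product. The hypothetical sketch below compresses the chain to crawl, index, rank, and publish. It uses invented URLs and a placeholder alphabetical ranking. Swapping in-house crawl data for purchased crawl data still yields a Searchpaper, which an engine with a removed part would not.

```python
from typing import Callable

# Hypothetical aliases for two of the products that move along the chain.
CrawlData = dict[str, str]   # url -> page text
Index = dict[str, set[str]]  # term -> urls containing it

def in_house_crawl() -> CrawlData:
    """Stand-in for crawl data gathered by the firm's own Crawler."""
    return {"a.example": "television reviews", "b.example": "edinburgh guide"}

def purchased_crawl() -> CrawlData:
    """Stand-in for crawl data bought from another company."""
    return {"c.example": "television deals", "b.example": "edinburgh guide"}

def build_index(pages: CrawlData) -> Index:
    """Indexer node: map each term to the set of urls that contain it."""
    index: Index = {}
    for url, text in pages.items():
        for term in text.split():
            index.setdefault(term, set()).add(url)
    return index

def rank(urls: set[str]) -> list[str]:
    """Ranker node: placeholder alphabetical ordering, for illustration only."""
    return sorted(urls)

def publish(results: list[str], ads: list[str]) -> list[str]:
    """Publisher node: a Searchpaper with ads placed above organic results."""
    return [f"AD: {ad}" for ad in ads] + results

def searchpaper(query: str, crawler: Callable[[], CrawlData], ads: list[str]) -> list[str]:
    """Run the chain end to end with whichever Crawler node is plugged in."""
    index = build_index(crawler())
    return publish(rank(index.get(query, set())), ads)
```

Calling `searchpaper("television", in_house_crawl, ["tv-store.example"])` and `searchpaper("television", purchased_crawl, ["tv-store.example"])` both return a complete page. Only the organic result changes.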

In search industry supply chains, companies such as Google strategically situate a variety of economization modes that serve as production processes in their own right. It may sound counter-intuitive, but our empirical research shows that exchange comes before production in this supply chain, a surprising finding for the supply chain literature. Google deploys barter exchange in almost every node of its supply chain management as a condition of possibility of production itself, not the other way around. Another company could have chosen to marketize its supply chain by purchasing crawl data to assemble a different index. Alternatively, as Microsoft Bing does, a company might stack a gift mode of economization when prodexchanging its crawl data. Such choices are not engineering requirements of search engines but political economic preferences informed by the commercial interests of a given Searchpaper publishing company.

But is prodexchange really new? One could argue that it resembles traditional industrial relations, for example in shoemaking, where business owners purchase labor and materials, and production and exchange often overlap on the shop floor. However, we argue that prodexchange represents a categorically distinct phenomenon, for several reasons. First, in conventional industry, production involves transforming materials, and the exchange of inputs (labor, tools, and raw materials) typically occurs before the production process begins, even if payment is delayed. In digital production, by contrast, value is often generated and exchanged simultaneously. This is largely due to the representational nature of digital materials: they can be used, transformed, and transmitted all at once. For example, when a web crawler gathers and aggregates user data, the resulting dataset becomes more than the sum of its parts; it is exchanged and produced in the same instant. Second, many forms of prodexchange are temporally bound. A user's attention, for example, can be captured and monetized only at a specific point in time. Once that moment passes, the opportunity for economic valuation vanishes. One cannot buy or sell yesterday's attention; it must be economized in real time. Third, platform prodexchange often involves the participation of customers who are not formally recognized as producers. Unlike traditional labor relations, in which work is performed knowingly, whether under contract or informally, platform consumers generate economic value through googling and engagement without explicit awareness or compensation. This blurring of the producer–consumer boundary is made possible precisely because production and exchange are interwoven, hence the concept of prodexchange. Finally, prodexchange takes place in a context in which we can still observe some consecutive and separate relations of exchange and production. Our argument is not that all production and exchange relations are simultaneous, but that many, and crucial ones, are, particularly in the search industry.

We want to end this section with a caveat. This paper does not study all economization processes involving Google; it deliberately excludes, for example, an analysis of Google's AdX. 9 Google demarketizes many products of its supply chain nodes in order to assemble and profit from AdX, its colossal exchange platform that brings together a variety of markets.

Conclusion

This paper has presented an analysis of the Google Search supply chain, revealing a complex interplay of prodexchange processes. By employing a combination of research methods, we have analyzed and dismantled Google's search engine and made visible its complex, multifaceted nature. Our analysis shows that the term “search engine” is a misnomer. Google Search is not merely an engine, nor a simple tool used by consumers; it is a vast computational industry drawing on a supply chain operating on a global scale. At the heart of this supply chain lies barter-driven economization, in which users, consumers, and website owners exchange their data with Google in return for receiving, among other things, the right to search and be searched in Google's private index.

This analysis helps us bridge the gap between the supply chain management literature and the economization approach in sociology. Both fields have not only neglected the study of how search engines work but have also examined general exchange and production relations by theoretically separating them (supply chain literature) or by choosing exchange-dominated contexts to develop studies of their “production” (economization approach). Building a theoretical bridge, we have developed the digital–industrial economization approach, which could shed light on blind spots such as prodexchange in the search industry. This theoretical framework allows for a nuanced analysis of platform economic contexts in which it is very common to braid production and exchange processes.

By examining each node of Google's supply chain using the digital–industrial economization approach, we have demonstrated that production processes are fundamentally interwoven with exchange relations rather than merely preceding or following them. For instance, the Crawler not only collects data from websites but barters data with site owners who, in turn, receive the right to be indexed and/or potentially ranked high in search results. Similarly, people who input search queries are not passive users but active consumers, who barter their personal data in exchange for search services. In this way, we make visible how search supply chain nodes do not function in isolation or in a linear sequence of production followed by exchange.

Our empirical findings underscore the importance of re-evaluating economic, sociological, and management concepts in light of the digital–industrial economizations epitomized by Google Search. The industrial organization literature in economics, which typically analyzes market power in terms of a firm's ability to set prices above production costs, fails when confronted with a platform that operates extensively through barter and other non-monetary exchanges. Google's massive market power in the online advertising sector is not merely a function of pricing strategies, as industrial organization economics might assume, but is rooted in its ability to produce and manage a successful barter-driven search supply chain. This stacked economization process has significant implications for understanding competition and regulation of non-market economic preconditions of market power.

Moreover, our study highlights the limitations of existing supply chain theories when applied to digital–industrial contexts. Traditional supply chain models often emphasize the sequential movement of goods and services through production and distribution channels. However, in Google's case, the supply chain is characterized by concurrent production and exchange activities that are facilitated by programs (as opposed to mere algorithms) operating within a vast computational infrastructure. By adopting Wirth's (1975) notion that “algorithms + data structures = programs,” we have shown that each node functions as a program that consumes, barters, and generates data through prodexchange processes. Our empirical findings are consistent both with how computer scientists have designed such supply chain nodes at Google and elsewhere and with other empirical studies that have documented stacked exploitation relations within dynamic platform economization contexts. 10

The digital–industrial economization approach provides a robust framework for analyzing these complexities. It enables us to see how different modes of economization (barter in Google, gift in Bing, and market exchange in all) are integrated into the production processes themselves. For example, Google's Data Centers and their megamachines not only support computational processes but also serve as sites where data are stored, processed, and exchanged, all of which are essential for maintaining the prodexchange dynamics of the supply chain. This perspective also sheds light on how ancillary processes, such as feedback collection and experimentation, are integral to the continuous refinement and profitability of a search industry business.

Our findings have implications for various stakeholders, including policymakers, regulators, and scholars. Recognizing users as consumers who engage in barter transactions with Google challenges the prevailing notion of users as passive recipients of services. This reclassification has potential regulatory and taxation consequences. For instance, if users are considered consumers engaged in economic exchange with platforms, then the data they provide could be regarded as having financial value to those platforms, and subject to consequential legal and financial considerations, including taxation policies that currently do not take account of barter transactions. How is it possible to imagine a more developed taxation and regulation framework that is not blind to non-market exchange relations such as barter at a time when most platform economic prodexchange draws on barter in the first place? Regulators need to consider how prodexchange platforms contribute to market power and whether existing laws are sufficient to ensure fair competition and protect consumer interests beyond markets.

Our study opens avenues for future research. The digital–industrial economization approach and the concept of prodexchange can be applied to other computational industries, such as social media platforms, e-commerce sites, and cloud computing services. Each of these industries involves complex supply chains in which production and exchange are deeply intertwined. Investigating these industries through the lens of digital–industrial economization could yield new insights into their operational dynamics and socio-technical maintenance.

Additionally, exploring the final marketized economic processes in advertising exchanges (such as AdX) could serve as a corollary to our analysis. While this paper focused on the upstream and midstream processes of Google's supply chain, examining how ads are bought and sold in real-time bidding environments would complement our understanding of how production and exchange culminate in market transactions. This could further elucidate the mechanisms through which platforms such as Google monetize their services and solidify their market positions.

Moreover, our analysis emphasizes the critical role of data as both input and output in the Google Search supply chain. Data functions as a barter currency in the exchanges between Google and its users, as well as between Google and website owners. This dual role of data complicates traditional economic models, which typically treat inputs and outputs as distinct commodities. In the context of prodexchange, the materiality of data is continuously transformed within the supply chain, blurring the line between consumption and production as well.

The implications of this data-centric prodexchange are profound, particularly concerning privacy, data ownership, and the ethical use of information. Users often provide personal data without fully understanding the extent to which it is used within search supply chains. The barter nature of this exchange raises questions about informed consent and the fairness of economic transactions. As regulatory bodies worldwide grapple with data protection laws, such as the European Union's General Data Protection Regulation (GDPR), our findings highlight the need for policies that address the complexities of the data exchanges inherent in prodexchange relationships.

From a competition standpoint, the prodexchange model sheds light on how Google has established and maintained its hegemonic position in the search industry. Because its supply chain relies on barter exchanges at each node, Google creates high barriers to entry for potential competitors: it demarketizes the products of all its search supply chain nodes before the market comes into play. Demarketization is a mode of reverse economization in which a product is deliberately kept from being commodified so that it can be used exclusively for prodexchanging other things. New entrants would need not only to replicate the technological infrastructure but also to establish comparable prodexchange relationships with consumers and content providers. This entrenchment of market power through prodexchange dynamics suggests that antitrust interventions may need to consider structural remedies that go beyond traditional measures such as imposing fines or regulating marketized operations alone.

Our study also touches upon the environmental impact of Google's operations. Data centers, the fifth node in the supply chain, consume vast amounts of energy and resources. The environmental footprint of maintaining such a megamachine is significant, raising concerns about sustainability. The continuous cycle of data production and exchange in the prodexchange model requires constant computational power, with implications for energy consumption and carbon emissions. Future research could examine the environmental costs of prodexchange-driven supply chains more closely and explore strategies for mitigating their ecological impact. We note, however, that Google's centralized bidding systems may be more energy-efficient than decentralized, more competitive bidding infrastructures, as shown elsewhere (MacKenzie et al., 2023).

Our research centers primarily on the internal mechanisms of the supply chain and the prodexchange processes within it. While we have touched upon the marketized exchange processes in Google's advertising platforms, a more comprehensive analysis of their downstream effects is required to complement the digital–industrial economization approach, a project that we are pursuing simultaneously.

In conclusion, prodexchange processes must be brought into sharper focus. By shedding light on these interwoven production and exchange dynamics, we can better grasp and engage with the complexities of platform economies. Overlooking these processes leaves us ill-equipped to understand or regulate the constantly evolving digital–industrial economization landscapes. In an era marked by increasingly stacked relations of economization, relying on outdated and rigid theoretical frameworks designed to understand only the market mode of economization limits our capacity for thick social descriptions and sound regulation. It is essential to develop new, agile approaches that align with the realities of contemporary platform economies, better equipping us to navigate and shape their future.

Footnotes

Acknowledgments

We would like to acknowledge the help of Paul Dourish, Mikko Laamanen, Kevin Mellet, Ilker Birbil, Pinar Yolum, Sareeta Amrute, Kean Birch, and two anonymous reviewers. Thanks to Agah Suat Atay for help with formatting. Thanks also to the numerous Alphabet employees who helped us understand search better but chose to remain anonymous. A number of sentences and paragraphs in this article were copy-edited using OpenAI's ChatGPT in temporary chat mode, which prevents OpenAI from using our writing to train its models. These AI copy-edited passages were then copy-edited again by the human authors. The authors did not use AI for research, ideation, or original writing.

Informed consent statements

All subjects gave consent, and those who chose to remain anonymous were anonymized in the research notes and in the writing of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the United Kingdom Economic and Social Research Council, Grant Number ES/V015362/1.

Declaration of conflicting interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

No data are publicly available, owing to anonymity considerations.