Abstract

Resolving the dichotomy between the human-like yet constrained reasoning processes of cognitive architectures (CAs) and the broad but often noisy inference behavior of large language models (LLMs) remains a challenging yet exciting pursuit, aimed at enabling reliable machine reasoning capabilities in LLMs. Previous approaches that employ off-the-shelf LLMs in manufacturing decision-making face challenges in complex reasoning tasks, often exhibiting human-level yet unhuman-like behaviors due to insufficient grounding. The present article starts to address this gap by asking whether LLMs can replicate cognition from CAs to make human-like decisions. We introduce

Keywords

Introduction

Large language models (LLMs) have gained considerable popularity for a wide range of prediction and decision-making tasks, spanning applications such as robotics and control, neural question-answering, scene understanding, code generation, and mathematical reasoning. LLMs are trained on massive datasets, can be used both as discriminative scoring functions and as generative models, and their capacity allows them to accumulate and retain vast amounts of knowledge (Andreas, 2022; Brown et al., 2020; Dong et al., 2022; Francis et al., 2022; Hu et al., 2023; Tatiya et al., 2023). Typical LLM use resembles a system-1 reasoning process (Hagendorff et al., 2023; Sloman, 1996), offering quick, intuitive responses for everyday tasks. Advancements in multi-agent LLM frameworks and emergent capabilities such as in-context learning (Vaswani et al., 2017; Coda-Forno et al., 2024; Dong et al., 2022) have pushed LLMs toward a system-2 reasoning process (Tversky & Kahneman, 1974), for example, ‘‘chain-of-thought’’ (CoT) reasoning (Bhattamishra et al., 2023), enabling more deliberate cognition for complex decisions (Brown et al., 2020; Webb et al., 2022). However, issues such as discrepancies in human-like reasoning (Liu et al., 2024), problems with insufficient grounding (Yao et al., 2023), and hallucination (Chakraborty et al., 2025) persist. Specifically, when using off-the-shelf LLMs to augment decision-making in manufacturing, where the value stream map (VSM) (Rahani & Al-Ashraf, 2012) with intertwined variables is vital for smart scheduling (Rossit et al., 2019), plant managers often struggle with LLMs’ unhuman-like and noisy predictions (Makatura et al., 2024) (also see Appendix: LLM Conversation Examples).

This article is part of a larger project aimed at augmenting LLMs with human cognition to improve manufacturing efficiency, structured in two phases.

We present a case study of

The present article poses three research questions:

RQ1: What are the properties of a neural network representation of the decision-making process in CAs? Answering this question sets the ground for developing a context-aware domain knowledge base for augmenting decision-making in LLMs.

RQ2: What level of complexity in behavior representation can LLMs capture? Previous research used LLMs’ conceptual embeddings to predict human-reinforced decisions (Binz & Schulz, 2023), indicating that embeddings from LLMs could be trained to predict human-like behaviors. By generating additional training sets with CAs, the study addresses the limitation of high data collection costs with human subjects and aims to broaden the investigation into the extent to which innate LLMs can learn human cognition.

RQ3: Can we inform the LLMs with knowledge about the reasoning process of the CAs? Answering this question offers insights into knowledge transfer from domain-specific bases to LLMs, and opens up new research directions for equipping LLMs with the necessary knowledge to computationally model and replicate the internal mechanisms of human cognitive decision-making.

The following sections are sequentially arranged as follows: related work; an explanation of

This section starts by integrating cognitive psychology principles into LLMs, along with decision intelligence in manufacturing and cognitive decision-making. It then highlights the domain limitations of these approaches. It concludes by discussing the current integration of CAs and LLMs, and points out how our approach differs from others.

Relating Cognitive Psychology to Human-Like Artificial Intelligence

Human-like artificial intelligence (HLAI) has been a goal since the emergence of machines (McCarthy, 2007). In recent years, the development of transformer-based LLMs has revolutionized HLAI, demonstrating impressive human-level capabilities. However, LLMs sometimes fail to display human-like behavioral traits. Analyzing the areas where LLMs currently fall short in replicating human cognition and behavior highlights the problems in exhibiting human-level capabilities that are unhuman-like (Dorobantu, 2021), including behavior discrepancies between LLM inference and human reasoning (Binz & Schulz, 2023; Liu et al., 2024), insufficient grounding (Yao et al., 2023), and hallucination (Chakraborty et al., 2025).

These challenges have catalyzed an integration of cognitive psychology with LLMs, toward human-like, trustworthy LLMs. Recent studies have used cognitive psychology experiments to investigate and comprehend behaviors in these models, focusing more on behavioral insights than on conventional performance metrics (Binz & Schulz, 2023; Coda-Forno et al., 2024). In addition, LLMs’ neural representations have been applied in behavioral psychological science research, which includes, but is not limited to, prompt engineering, feature extraction, and fine-tuning:

Common Model of Cognition, Cognitive Architectures, and Cognitive Models

To work toward integrating human-like behavioral traits into LLMs, we use a suite of tools rooted in the common model of cognition (CMC) to bring a wider range of tasks into the training dataset. The CMC embodies a unified theory of cognition (Laird et al., 2017; Newell, 1990), a theoretical framework that presents a model of human cognition codified as a computational architecture. The CMC is a brain-inspired framework validated by large-scale neuroscience data. It identifies core components and processes fundamental to human cognition, including memory, perception, motor actions, and decision-making. The model assumes a cyclical process where these components interact to produce human behavior. The CMC includes a feature-based declarative long-term memory, a buffer-based working memory, a system for pattern-directed action invocation stored in procedural memory, and specialized systems for perception and action (Stocco et al., 2021).

The CMC integrates essential features from various CAs (Anderson, 2009; Kotseruba & Tsotsos, 2020, 2025; Laird, 2012), which propose a set of fixed mechanisms to model human behavior, functioning akin to agents and aiming for a unified representation of the mind. By using task-specific knowledge, these architectures not only simulate but also explain behavior through direct examination and real-time reasoning tracing.

Two representative CAs related to the CMC are ACT-R and Soar (Laird, 2021). Other CAs could also be chosen from a recent extensive review (Kotseruba & Tsotsos, 2020, 2025), as long as a trace is available.

The two most commonly used representations in ACT-R are declarative knowledge and procedural knowledge. Declarative knowledge consists of chunks of memory (e.g., the production line comprises five sections), while procedural knowledge performs basic operations, moves data among buffers, and identifies the next instructions to be executed (e.g., lower defect rate will lead to higher efficiency rate).

One primary difference between these two architectures is that ACT-R was designed to model human behavior and has a track record of predicting human performance and timing to the millisecond level. In contrast, Soar places less emphasis on replicating human behavior and more on developing general agents with cognitive capabilities (Laird, 2021).

Decision Intelligence in Manufacturing

Industry 4.0 aims to create ‘‘intelligent factories,’’ where advanced manufacturing technologies facilitate smart decision-making through real-time communication and cooperation among humans, machines, and sensors (Hozdić, 2015). One example of this is smart scheduling, which employs advanced models and algorithms using sensor data (Rossit et al., 2019).

Decision intelligence (Leyer & Schneider, 2021) is a crucial component of smart scheduling and comprises three stages.

A value stream map (VSM) is a critical tool in manufacturing decision intelligence, functioning as a flowchart that visualizes and controls the production line (Manos, 2006). VSM meticulously tracks metrics such as inputs, outputs, processes, overall equipment effectiveness (OEE), and cycle times (CT). However, plant managers encounter significant challenges when transitioning VSM in production management from decision support to decision augmentation. These challenges stem from the difficulty of applying VSM concepts to complex, real-world scenarios characterized by numerous intertwined variables (Makatura et al., 2024).

Cognitive Decision Making

Representative CAs such as Soar and ACT-R have been used to build models that automate decision-making tasks (e.g., Kang, 2001; Marewski & Mehlhorn, 2011). Among them, the ACT-R CA is applied to build models across psychology and computer science that are closely aligned with human behaviors. It has a track record of accurately predicting human performance and timing across a variety of tasks (see Plitt & Russwinkel, 2024), which meets our needs for developing synthetic agents that can provide human-like cognitive reasoning in learning and training environments.

The ACT-R modeling approaches include: (a) strategy or rule-based, where different problem-solving strategies are implemented through various production rules and successful strategies are rewarded (Best & Lebiere, 2003; Wu et al., 2023); (b) exemplar or instance-based, which relies on past experiences stored in declarative memory to solve problems (Gonzalez et al., 2003); and (c) hybrid approaches that combine strategies and exemplars (Prezenski et al., 2017).

A few features distinguish the use of ACT-R in creating decision-making models that involve learning:

However, ACT-R models do not support LLM-like dialogic interaction with other ACT-R models, which limits their usability for decision-making. Intuitively, a solution could take the best of both CAs and LLMs, where ACT-R models serve as synthetic agents to instruct LLMs. They do this by providing knowledge of cognitive decision-making, including aspects such as learning, through the LLMs’ training. The trained LLMs can then be generalized to unseen problems.

Integration of Cognitive Architectures and LLMs

Efforts have been made toward leveraging the strengths of both CAs and LLMs to create a more robust unified theory of computational cognitive models. Some approaches include using the implicit world knowledge of LLMs to replace traditional declarative knowledge mechanisms (Wray et al., 2024), employing Chain-of-Thought reasoning to enhance the symbolic mechanisms for procedural knowledge (Kirk et al., 2024), and leveraging language models as external knowledge sources for cognitive systems, while exploring ways to improve the effectiveness of knowledge extraction (Kirk et al., 2023).

Moreover, Sumers et al. (2023) examine how principles from CAs can guide the design of LLM-based agent frameworks, demonstrating a comprehensive integration effort that spans from knowledge representation to interaction with the environment. Additionally, Sun (2024) proposes a direction for creating computational CAs using dual-process models and hybrid neurosymbolic methods. Using the Clarion CA (Sun, 2006) as an example, Sun illustrates the theoretical opportunities for incorporating LLMs into Clarion’s modules of perception, memory, motor control, and communication, leveraging LLMs’ natural language processing and generalization abilities. The present study builds upon this previous research; however, we adopt a different perspective by leveraging CAs to ground the decisions of LLMs in a data-driven manner. We aim to examine the properties of a neural network representation of the decision-making process in CAs and investigate whether knowledge from CAs can be preserved in an embedding space and infused into LLMs through the transfer of learning.

Problem Definition: Design for Manufacturing

This article presents a case study of training a cognitively inspired LLM for decision-making in the DFM domain. We first define the terminology that constitutes our decision-making problem. The DFM problem setting is a prototypical manufacturing production-line workflow, from supplier to customer, for which there exists a VSM (Figure 2). The VSM allows for tracking the efficiency at different sectors of the process and abstracts the overall problem for mathematical modeling and optimization. Decision candidates come from sectors such as body production, pre-assembly, and assembly. Early sectors pose potential efficiency problems in the workflow and may warrant optimization (triangles), while later stages are governed by first-in-first-out (FIFO) processes. The metrics at each stage include cycle time (CT), overall equipment effectiveness (OEE), and/or mean absolute error (MAE).

A Value Stream Map of our manufacturing task process. Adapted from a Bosch manufacturing production handbook.

Focused on maintaining stable output for manufacturing plants, we consider plant managers’ feedback alongside the VSM structure to define the decision-making problem, which aims to reduce total production time while minimizing the increase in total defect rate (see Figure 1(1) define decision-making problems). When facing unseen DFM problems, which are yet constrained to fixed decision candidates and unknown decision metrics.

VSM-ACTR, A Human-Like Decision Making Cognitive Model

The ACT-R CA was chosen to develop the cognitive model for our task because it has a track record of accurately predicting human performance and timing across a variety of tasks, which meets our need to develop synthetic agents with individual differences in learning and training, for example, Marewski and Mehlhorn (2011) and Plitt and Russwinkel (2024). We created the VSM-ACTR cognitive model, which is a rule-based ACT-R cognitive decision-making model for the DFM problem that implements multiple problem-solving strategies through a combination of production rules.

VSM-ACTR incorporates meta-cognitive processes that reflect on and evaluate the progress of chosen strategies, with an emphasis on headcount (manufacturing) cost evaluation, through a reward structure that enables a process akin to reinforcement learning. This system enables the model to dynamically assess the impact of decisions on headcount costs, computing a reward or penalty for each decision cycle. These rewards or penalties then dynamically adjust the utility of the productions associated with each decision-making cycle. This helps the model exhibit a human-like learning progression that is inherited from its knowledge and ACT-R’s mechanisms. Below we briefly introduce the model; details can be found in Wu et al. (2024) and Wu et al. (2025).

Declarative Memory

VSM-ACTR integrates the prototypical decision process with insights into how cognitive models represent different levels of expertise, for example, Martin et al. (2004) and Paik et al. (2015), categorizing users into three levels of expertise: novices, intermediates, and experts. Novices engage in decision-making using deliberative chunks. Intermediates can manage key metrics such as CT and OEE but struggle with the systematic analysis of intertwined variables. Experts, on the other hand, make judgments systematically. The cognitive model employs three types of knowledge chunks: decisions, decision merits, and goals. The ‘‘decision chunk’’ encodes eight slots including reduction time (goal), decision-making state (novice, intermediate, expert), and related variables. The ‘‘decision merits chunk’’ holds information on sector weights, defect increases by sector, and comparative defect rate increases. The ‘‘goal chunk’’ captures the initial production conditions and the ultimate goal of achieving the optimal decision.

Production Rule Sets

Three sets of production rules represent the decision-making behaviors of novice, intermediate, and expert decision-makers. These sets comprise a total of 18 rules, each driven by goal-focused objectives across 20 states, covering actions such as choosing strategies, actions, working memory management, decisions, and evaluations.

We use the expert production rule set as an example (Figure 3). Once the decision-choice center decides to activate a set of expert decision productions, the process begins by perceiving the problem and retrieving related decision-making metrics from chunks. The imaginal buffer then acts as a working memory platform, holding and manipulating relevant information during the decision-making process. It allows the model to construct new mental representations or modify existing ones based on incoming data or problem-solving needs. This involves using the imaginal buffer to assess the relationships between the decision target and decision metrics, particularly considering the impact of each sector’s weight on the defect rate change, and determining the final defect rate increase for each sector. These results are stored in the imaginal buffer and later retrieved for comparison. This enables the model to select the sector with the lowest defect increase. After one decision-making cycle, the model evaluates the headcount cost, rewarding or penalizing the entire process based on the evaluation results and the decision strategy used, before looping back to the next decision-making round.

Production rules control structure for expert decision making and their use of the ACT-R Goal and Imaginal buffers. Adapted from Wu et al. (2025).

The model can learn while performing tasks through a mechanism leading to varying levels of expertise, as shown in Figure 4. The model mimics human decision-making behavior through differentiating knowledge representations.

Level of expertise mechanism in VSM-ACT-R. Excerpted from Wu et al. (2025).

(a) Obtaining decision representations from VSM-ACT-R. (b) LLM feature extraction for behavior prediction.

The model also simulates the learning progress through the

With the aim of making the model assess the effectiveness of decisions while learning—akin to human metacognition, self-assessing and self-correcting in response to self-assessment (Nelson & Narens, 1994)—we consequently developed a dynamic reward function that rewards actions after self-evaluating the chosen strategy.

VSM-ACTR uses the temporal difference (TD) algorithm from reinforcement learning (Sutton & Barto, 2018) as expressed in Eq. (2). Each production rule in the ACT-R model has a utility—a value or strength—associated with it, which is updated using the TD algorithm:
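As a minimal sketch of this kind of update, the standard ACT-R utility learning rule moves a production's utility toward the reward it receives: U(n) = U(n-1) + alpha * (R(n) - U(n-1)), where the effective reward R(n) is the delivered reward minus the time elapsed since the production fired. The function name, the learning rate of 0.2, and the reward and timing values below are illustrative assumptions, not values taken from VSM-ACTR.

```python
def update_utility(utility, reward, seconds_since_fired, alpha=0.2):
    """Return the updated utility of one production rule.

    Difference-learning form of the ACT-R utility rule:
        U(n) = U(n-1) + alpha * (R(n) - U(n-1))
    The effective reward R(n) discounts the delivered reward by the
    time since the production fired. alpha=0.2 is an assumed value.
    """
    effective_reward = reward - seconds_since_fired
    return utility + alpha * (effective_reward - utility)


# Hypothetical example: the same production is rewarded on three cycles.
utility = 0.0
for _ in range(3):
    utility = update_utility(utility, reward=10.0, seconds_since_fired=0.5)
```

Repeated rewards pull the utility toward the effective reward (9.5 in this toy case), so consistently rewarded strategies become more likely to be selected, while penalties (negative rewards) push the utility down.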

The utilities of productions are learned as the model runs, based on the rewards or penalties that are received. We designed the reward function as

To answer the question of whether VSM-ACTR decisions demonstrate learning progression and capture individual differences, this study first uses descriptive statistics and linear regression to show the average progression of decision types across trials. It then uses a mixed linear model to assess and illustrate the effects of trials on decision types across ACT-R model personas, with repeated measures of trials and random effects to account for individual differences. Finally, it uses ordered logistic regression to analyze the relationship between the number of trials and an ordinal dependent variable of learning progress from novice to expert.
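The first of these analyses, the linear trend of decision type over trials, can be sketched with ordinary least squares. The helper name and the six data points below are hypothetical toy values chosen only to illustrate the computation; they are not results from the study. The mixed linear model and ordered logistic regression would typically require a statistics package and are not shown.

```python
def ols_slope_intercept(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Toy data (hypothetical): trial index and decision type
# encoded as 0 = novice, 1 = intermediate, 2 = expert.
trials = [1, 2, 3, 4, 5, 6]
decision_types = [0, 0, 1, 1, 2, 2]

slope, intercept = ols_slope_intercept(trials, decision_types)
```

A positive slope indicates that, on average, the encoded decision type moves from novice toward expert as the number of trials increases.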

We ran the VSM-ACTR model 2,012 times to understand its behavior (Ritter et al., 2011). Each time, we asked it to run 15 to 16 trials until the model achieved stable expert behavior. We collected data with decision types encoded as 0, 1, and 2 for novice, intermediate, and expert strategies.

Figure 6 shows a significant positive impact of trial exposure on decision-making progression, evidenced by a linear coefficient of 0.086 (

Trend of decision types over trials, blue line is average decision types, red line is variance, decision type 0 is novice, 1 is intermediate, and 2 is expert.

Reduced embedding map of the full traces from one VSM-ACTR trial.

A mixed linear model regression confirms the effect of trials on decision-making and further reveals a variance of 0.007 in the random group effects, suggesting that the trials themselves predominantly explain the variability in decision type, although individual differences exist. Threshold analysis using ordered logistic regression reveals significant transition thresholds. The transition from novice to intermediate has a significant threshold of 0.88 (

With the validated model in hand, we then explain the

Cognitive Decision-Making Knowledge

This study curated VSM-ACTR decision-making knowledge through VSM-ACTR’s traces, which capture the reasoning steps in real time using a concurrent protocol. These traces log the cognitive operations executed by the modules at each decision point. The traces exhibit metacognition, which involves awareness and understanding of one’s own decision-making processes. This is represented through model traces that demonstrate the use of the imaginal buffer for accessing working memory, procedural memory matching and firing, and the self assessment of strategy effectiveness. Traces also exhibit executive function (Gilbert & Burgess, 2008), which involves the evolution of decision-making results across trials and shows how decisions adapt through learning and experience.

As shown in Table 1, the model begins by establishing the goal (line 1) and then proceeds with a novice strategy (line 3, BRUTE/Novice). For the production rules associated with each strategy, the utility of each production rule is updated based on the received reward and the time since the last selection. For instance, the reward computation based on cost analysis (line 6) for the BRUTE choice results in a reward of

Learning An Embedding Space of Decision Traces

The next step is to convert the traces into vectors that LLMs can process. To retain executive function processes, we log decision results and strategy traces, which are then numerically encoded. For instance, ‘‘0’’ represents a decision for reduced time in the preassembly section, and ‘‘1’’ for assembly. Encoded data are subsequently fed into the neural network as single vectors.

To retain both executive function and metacognition processes, this study employs a semantic extraction and dimensionality reduction approach. This approach aims to transform a vast number of cognitive reasoning stamps into a vector format that balances information retention with computational efficiency. Traces for each task are processed through a sentence transformer to obtain semantic embeddings for each timestamp. A sum of ranked explanatory effects (SREE) analysis is then applied to determine the number (

Transfer of Learning

Fine-tuning, which involves optimizing model weights for a specific task, has been widely applied in the transfer of learning (Guo et al., 2019). To transfer human-like decisions with learning, the targets are the encoded vectors that represent the executive function processes of each VSM-ACTR persona. The transfer of learning is reformulated as a classification fine-tuning task, where the final layer of contextualized embeddings, which captures the in-context meaning of tokens by recombining them with other tokens’ embeddings, is used as features. These selected contextualized embeddings provide the richest semantic information while balancing minimal information loss and reduced computational costs for fine-tuning. Additionally, Low-Rank Adaptation (LoRA) was employed for its computational efficiency (Hu et al., 2022). The current

Experiments

Use Semantic Mapping to Evaluate Cognitive Decision Making Traces Vector

To answer RQ1 regarding the properties of a neural network representation of the decision-making process in CAs, we conducted a semantic mapping analysis of the first two principal components of the learned embeddings of each trace. The goal is to explore whether a neural network can learn guided perception, memory, goal-setting, and actions, which are key components of cognitive decision-making, in an embedding space. We then used MANOVA to examine how the learned embeddings correspond to the semantics of ACT-R’s components, including procedural memory, imaginal memory, goal knowledge, utility updating, and decision-making actions.

Feature Extraction for Behavior Prediction

To answer RQ2 (what level of complexity in behavior representation can LLMs effectively capture?), this study adopted a similar method of LLM feature extraction for behavior prediction (Hussain et al., 2024). We created datasets consisting of LLMs’ last contextual embeddings as features and the corresponding different levels of VSM-ACTR decisions as targets. We obtained embeddings by passing prompts that included all the information that VSM-ACTR had access to on a given trial and then extracting the hidden activations of the final layer, as shown in Figure 5(b).

The first dataset used targets as VSM-ACTR decisions, where ‘‘0’’ indicates preassembly and ‘‘1’’ indicates assembly. The second dataset’s prompt template added an explanation of the strategy adopted by VSM-ACTR (see Appendix: LLM System Prompt Templates) and used compound targets comprising both the decisions and the strategies reflecting the learning trajectory (novice, intermediate, and expert). The targets were encoded as follows: 0, 1, and 2 for preassembly choices using novice, intermediate, and expert strategies, respectively, and 3, 4, and 5 for assembly choices following the same pattern. With these two datasets, we fitted a regularized logistic regression model using 10-fold cross-validation for the first dataset and multinomial regression using 10-fold cross-validation with L2 regularization for the second. Model performance was assessed by measuring the goodness of fit through negative log-likelihood (NLL) and the predictive accuracy of hold-out data.
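The compound target encoding described above can be sketched as a small mapping from a (decision, strategy) pair to a single class label. The dictionary and function names are hypothetical; only the resulting codes 0 to 5 follow the encoding stated in the text.

```python
# Codes follow the text: preassembly -> 0, assembly -> 1;
# novice -> 0, intermediate -> 1, expert -> 2.
DECISIONS = {"preassembly": 0, "assembly": 1}
STRATEGIES = {"novice": 0, "intermediate": 1, "expert": 2}


def encode_target(decision, strategy):
    """Map a (decision, strategy) pair to a compound class in 0..5."""
    return len(STRATEGIES) * DECISIONS[decision] + STRATEGIES[strategy]


def decode_target(code):
    """Invert encode_target, returning (decision, strategy) names."""
    decision_code, strategy_code = divmod(code, len(STRATEGIES))
    decision = next(k for k, v in DECISIONS.items() if v == decision_code)
    strategy = next(k for k, v in STRATEGIES.items() if v == strategy_code)
    return decision, strategy


all_codes = sorted(
    encode_target(d, s) for d in DECISIONS for s in STRATEGIES
)
```

Folding both facets into one label lets a single multinomial classifier predict the decision and the strategy jointly, at the cost of treating the six classes as unordered.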

Knowledge Transfer

To answer RQ3: whether LLMs can be informed with knowledge about the reasoning processes of CAs, we use a case study to examine whether

Base Model and Data

The case study uses the LlaMa-2 13B (Touvron et al., 2023) model as the base model because it demonstrated effectiveness and efficiency in NLP tasks (Huang et al., 2024). As a state-of-the-art LLM, LlaMa has been trained on trillions of tokens from publicly available datasets. Unlike other transformer-based models such as the GPT family, which can only be accessed at the user’s end, LlaMa’s architecture, including its pre-trained weights, is fully accessible. Furthermore, evidence that its internal representations can be trained to become more aligned with human neural activity has been presented (Binz & Schulz, 2024).

To determine the target size that can effectively perform the fine-tuning task while balancing efficacy and resource limitations, we referred to Kumar et al. (2024), who showed evidence that LlaMa-2 13B would maintain competitive performance in resource-limited text classification with datasets of nearly 1,000 rows per class. Based on this, we created a dataset that contains the 2,012 decision-making trials, obtained by running the developed VSM-ACTR model across 32 problem sets; each ACT-R persona was run for 15 to 16 trials until stable expert behavior was achieved.

Experiment Metrics

The fine-tuning process employs cross-entropy as the loss function and uses Adam optimization. Training involves a train-test split of 0.2 and a batch size of 5 for both training and validation phases. The learning rate was set to 1e

Baseline Models

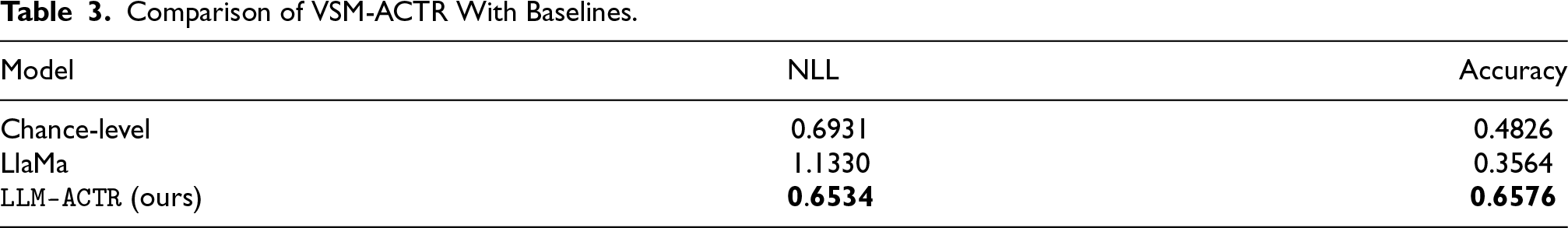

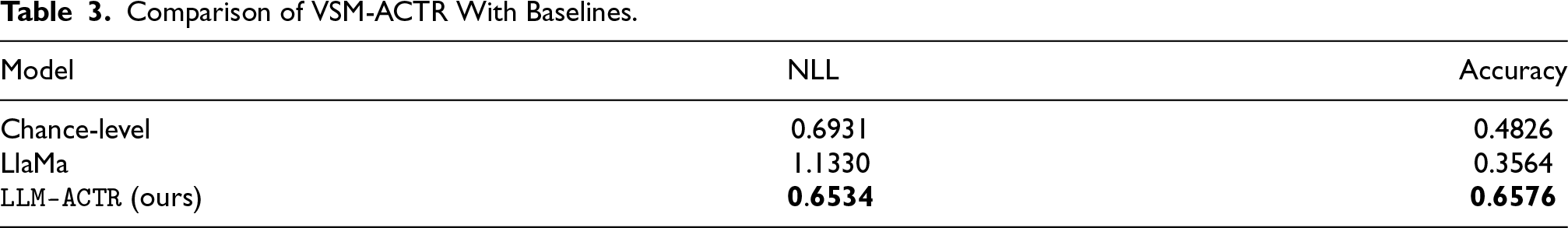

To assess the model’s ability to make human-like decisions, we first split the data into train and validation sets to reserve a set of unseen problems. We then compared the predictive negative log-likelihood (NLL), a measure of goodness-of-fit, of

A random choice model serves as the basic form of control condition to distinguish the effects of treatment from chance (Gaab et al., 2019). This approach allows assessing the extent to which decisions are influenced by knowledge versus being purely stochastic. On the other hand, using LlaMa without fine-tuning as a baseline provides a reference point to measure the impact of knowledge transfer on the model’s performance.
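To make the comparison concrete, the sketch below computes the NLL of a set of predicted class probabilities and the NLL of a random choice baseline, which assigns equal probability to every class. The function names and the three toy predictions are hypothetical and are not results from the study.

```python
import math


def negative_log_likelihood(probabilities, targets):
    """Sum of -log p(target) over items.

    probabilities: one list of class probabilities per item.
    targets: the observed class index for each item.
    """
    return -sum(math.log(p[t]) for p, t in zip(probabilities, targets))


def random_choice_nll(n_items, n_classes):
    """NLL of a model that picks each class with probability 1/n_classes."""
    return n_items * math.log(n_classes)


# Toy binary example (hypothetical): 0 = preassembly, 1 = assembly.
targets = [0, 1, 1]
model_probs = [[0.8, 0.2], [0.3, 0.7], [0.4, 0.6]]

model_nll = negative_log_likelihood(model_probs, targets)
baseline_nll = random_choice_nll(len(targets), n_classes=2)
```

A model whose NLL falls below the random baseline is using information about the problem rather than guessing; for binary decisions the baseline is ln 2 per item.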

Results

Finding Useful Cognitive Decision Making Embeddings

The approach of distilling executive function processes captures the evolution of decision-making results across trials and illustrates how decisions adapt through learning and experience, all represented as a sequential single vector. This approach is easy to use for downstream tasks but retains only partial knowledge of cognitive decision-making.

In addition, Figure 9 displays the reduced embeddings of both metacognitive and executive function processes corresponding to the semantic mapping of ACT-R’s components. The MANOVA analysis was conducted to assess the overall effect of the independent variables, including label categories or ACT-R components, on the combined dependent variables—components of reduced embeddings. This analysis reveals a significant relationship with the semantic mapping of ACT-R’s components. For instance, the Wilks’ lambda value (0.0004) suggests that the label or ACT-R component categories explain nearly all the variance in the dependent variables, indicative of a strong group effect. The statistical tests applied—Wilks’ lambda, Pillai’s trace, Hotelling-Lawley trace, and Roy’s greatest root—all demonstrate strong significance, as evidenced by p-values less than 0.05 across all tests. It shows that the semantics of symbolic and subsymbolic representations of cognitive models can be learned using a neural network, and the principal components retained successfully capture the essential variance related to these cognitive processes, providing a way to preserve cognitive decision-making knowledge in a compact embedding space.

Assessing Behavior Complexity Captured by the Innate LLM

Table 2 shows that

Evaluation for Single and Multi Facets Targets.


We first report training and validation losses, across 10 epochs, to reveal the fine-tuned model’s learning and generalization behavior. Initially, the training loss begins at approximately 0.73, with a slight fluctuation observed in subsequent epochs, peaking around epoch 2 and showing a notable dip at epoch 7. In contrast, the validation loss starts at around 0.64 and remains remarkably stable throughout the epochs. This consistency in validation loss, coupled with a generally downward trend in training loss after its initial variations, suggests that the model is learning effectively.

We report next in Table 3 the comparison of the

Comparison of VSM-ACTR With Baselines.


Following results for RQ1 that the semantics of symbolic and subsymbolic representations of cognitive models can be learned using a neural network, we conducted a preliminary experiment to extend

After retaining 240 randomly chosen full cognitive reasoning traces from the VSM-ACTR model, we processed both executive function and metacognition processes using a semantic extraction and dimension reduction approach (see Figure 5a). The resulting embeddings were concatenated into 240 one-dimensional tensors. Because individual differences produce traces of different lengths, we padded the resulting ragged tensors and then calculated their standardized mean values to serve as a content vector.
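One possible reading of this step is sketched below: pad each trace vector to the longest length, average position by position across traces, and z-score the resulting mean vector. The zero padding value, the use of the population standard deviation, the function names, and the toy vectors are all assumptions made for illustration; the study's exact procedure may differ.

```python
from statistics import mean, pstdev


def pad_vectors(vectors, pad_value=0.0):
    """Right-pad every vector to the length of the longest one."""
    width = max(len(v) for v in vectors)
    return [list(v) + [pad_value] * (width - len(v)) for v in vectors]


def content_vector(vectors):
    """Position-wise mean over padded vectors, then z-scored."""
    padded = pad_vectors(vectors)
    column_means = [mean(column) for column in zip(*padded)]
    mu = mean(column_means)
    sigma = pstdev(column_means)
    if sigma == 0:
        return [0.0] * len(column_means)
    return [(m - mu) / sigma for m in column_means]


# Toy ragged trace embeddings (hypothetical values).
traces = [[1.0, 2.0, 3.0], [3.0, 4.0], [5.0]]
vector = content_vector(traces)
```

Note that padded positions pull the later column means toward the padding value, which is one concrete way that padding can dilute the semantics of shorter traces.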

The preliminary experiment extends

Infusing holistic VSM-ACTR traces as content vectors through fine-tuning.

Comparison of NLL across 10 epochs for fine-tuning only and fine-tuning with cognitive content vectors.

The LlaMa model with the modified hidden layer is fine-tuned with 2,012 data points for the binary classification task. The content vectors are set to be trainable. To assess the model’s ability to make human-like decisions, we first split the data into train and validation sets to reserve a set of unseen problems. We then compared the predictive NLL of

The results (Figure 9) show that adding the vector representation of VSM-ACTR’s holistic traces during fine-tuning resulted in a slightly lower mean and reduced variance of NLL across 10 epochs, indicating better model fit and stability than fine-tuning alone. This suggests that allowing the model to integrate and learn from the cognitive vector during training may lead to more nuanced and human-like decision-making, as captured by the cognitive features encoded in the vector. However, the influence of the cognitive content vector is limited and warrants further investigation, partly because the stochastic simulation of VSM-ACTR produces decision-making vectors of varying lengths. This study addresses ragged tensors by padding, but this approach may dilute or change the semantics of each vector. Increasing the impact of the cognitive vector will require additional techniques such as vector optimization.
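For readers unfamiliar with the metric, the comparison above can be sketched as follows: a minimal illustration, assuming NLL is the mean binary cross-entropy over held-out items and that stability is summarized by the mean and population variance of per-epoch NLL. The function names `binary_nll` and `summarize_epochs` and all numeric inputs are hypothetical and are not results from this study.

```python
import math
from statistics import mean, pvariance


def binary_nll(probs, targets, eps=1e-12):
    """Mean negative log-likelihood for binary targets.

    probs[i] is the predicted probability that targets[i] == 1.
    Probabilities are clipped to avoid taking log(0).
    """
    total = 0.0
    for p, y in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(targets)


def summarize_epochs(per_epoch_nll):
    """Return (mean, population variance) of NLL across epochs."""
    return mean(per_epoch_nll), pvariance(per_epoch_nll)


# Hypothetical predictions on three held-out decisions.
print(binary_nll([0.9, 0.2, 0.7], [1, 0, 1]))

# Hypothetical per-epoch NLL values for one training condition.
print(summarize_epochs([0.66, 0.64, 0.65, 0.63]))
```

Under this reading, a condition with both a lower mean and a lower variance fits the held-out decisions better and more consistently across epochs.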

Main Insights/Takeaways

This article starts to show how to enable LLMs to replicate cognitive decision-making in CAs via a data-driven approach. We introduce

Furthermore, (iii) this study presents a developing framework

This development opens up new research directions for equipping LLMs with the necessary knowledge to computationally model and replicate the internal mechanisms of human cognitive decision-making (Oltramari, 2023; Oltramari et al., 2021). It also complements ongoing work showing that LLMs could possibly be transformed into cognitive models through knowledge transfer (Binz & Schulz, 2024; Coda-Forno et al., 2024). For example, Binz et al. (2024) show that through fine-tuning, LLMs’ internal representations can become more aligned with human behaviors.

Limitations and Future Work

One limitation stems from the novelty of this study: how closely can we claim that cognitive model personas replicate human behaviors? Currently, our focus is on tuning the model to align with general patterns of learning and error-making; however, VSM-ACTR still requires more granular human data for cognitive fine-tuning. The closer the VSM-ACTR model aligns with human behavior, the more accurately it can represent human decision-making processes and explain human behavior.

However, the more meaningful questions arise from considering the landscape of enabling machine cognitive reasoning. We must ask ourselves what we can learn about cognitive decision-making when we infuse knowledge from CAs into LLMs. For now, our insights are limited to the observation that knowledge from cognitive models can be preserved in an embedding space and could be learned by LLMs, and that embeddings from LLMs can be trained to predict human-like decisions. While this is interesting in its own right, it certainly is not the end of the story. Looking beyond the current work, moving from transferring cognitive models’ human-like decisions into LLMs toward transferring guided perception, memory, goal-setting, and actions will provide the opportunity to apply a wide range of explainability techniques to LLMs’ cognitive decision-making.

One application of this future work is to address a common limitation of machine learning innovations: cross-domain generalization (Akrout et al., 2023; Yoon et al., 2024).

Credit Author Statement

Siyu Wu: Conceptualization, Methodology, Software, Experiments, Writing - Original Draft, Writing - Review & Editing. Alessandro Oltramari: Conceptualization, Funding Acquisition, Methodology, Software, Writing - Review & Editing. Jonathan Francis: Methodology, Experiments, Writing - Review & Editing. C. Lee Giles: Conceptualization, Writing - Review & Editing. Frank E. Ritter: Writing - Review & Editing.

Footnotes

Acknowledgments

The authors thank anonymous reviewers for constructive feedback.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.