Abstract

Knowledge graphs (KGs) feature ever more frequently as symbolic components in neurosymbolic research and systems. But even though a central concern of neurosymbolic artificial intelligence is to combine neural learning with symbolic reasoning, relatively little neurosymbolic research focuses on leveraging the logical representation and reasoning capabilities of Web Ontology Language (OWL)-based KGs. The objective of this position article is to inspire more neurosymbolic researchers to embrace OWL and the Semantic Web by raising awareness of the benefits, capabilities, and applications of OWL-based KGs, particularly with respect to logical reasoning. We describe the ecosystem of open W3C standards-based resources that support the adoption and use of OWL-based KGs; we describe tools that exist for engineering custom OWL ontologies tailored to particular research needs; we discuss the encoding of background KG knowledge in subsymbolic embedding spaces and various applications of this approach; we discuss and illustrate the reasoning capabilities of OWL-based KGs; and we describe several promising directions for research that focus on leveraging these reasoning capabilities. We also discuss the specialised resources needed to undertake research on OWL-based KGs in neurosymbolic systems, using the example of NeSy4VRD, an image dataset with a custom-designed companion OWL ontology. The scarcity of this kind of resource should be addressed to accelerate research in this field.

Keywords

Introduction

Following a long gestation spanning decades, neurosymbolic artificial intelligence (NeSy AI) has recently blossomed into a recognised subfield of AI. While the neural and symbolic traditions of AI have long been rivals, there is now a vibrant diversity of approaches blending the two (Sarker et al., 2021). Prompted by analyses of the limitations of deep learning (e.g. Chollet, 2018; Kautz, 2022; Marcus, 2018a, 2018b, 2020), and despite the recent advances resulting from scaling up deep learning, as evidenced in large language models (LLMs), an increasing number of researchers are drawn to NeSy AI. The shared motivation is to explore combinations of neural learning and symbolic knowledge representations in order to get the best of both worlds, in a shared belief that this is the best route for advancing AI towards artificial general intelligence.

Knowledge graphs (KGs) are representations of symbolic knowledge that conform to a graph model, where nodes are concepts and entities of interest, and edges are relationships between them (Hogan et al., 2021; Kejriwal et al., 2021). As NeSy research has expanded, so has the frequency with which KGs feature as symbolic components in hybrid, NeSy systems (Hitzler, 2021). One example of this is the progressively developing theme of ‘deep deductive reasoning’ (Bianchi & Hitzler, 2019; Ebrahimi et al., 2021a, 2021b), where neural networks (NNs) are trained to reason over KGs. KGs have also been shown to be helpful when data samples are expensive, difficult or impossible to obtain, such that there is a lack of data with which to train robust deep learning-based systems, as in few-shot and zero-shot learning scenarios (Chen & Chen, 2021; Chen & Geng, 2021; Geng et al., 2021).

The Web Ontology Language (OWL) (Hitzler et al., 2012; Motik et al., 2012) is a key component of the Semantic Web technology stack (The Semantic Web Stack, 2022; The Semantic Web Wiki, 2001). OWL ontologies (semantic schemas enriched with logic that describe domain knowledge symbolically) govern Semantic Web (SW) KGs by specifying what assertions of knowledge (types of triples) are admissible and inadmissible. Inference semantics (ontological rules) associated with OWL constructs permit reasoning algorithms to reason over OWL ontologies (and associated KG data, vast or tiny), both to infer new knowledge (new triples) and to enforce logical consistency constraints. With suitable ontology design, inference can be used as prediction, and incremental (on-the-fly) reasoning can facilitate real-time interaction. In summary, OWL-based KGs can be used as symbolic deduction engines in NeSy systems. The NeSy system AlphaGeometry (Trinh et al., 2024) combines an LLM with a symbolic deduction engine (Horn clause geometry and algebra rules, plus inference algorithms) to solve geometry problems. OWL-based KG technologies offer researchers the option to explore combining neural learning and symbolic reasoning in ways analogous to AlphaGeometry, by using OWL-based symbolic deduction during NN training and/or inference.

Given that combining neural learning with symbolic reasoning is central to NeSy AI, it is surprising how scant the literature is that explores applications of OWL reasoning in NeSy systems. A systematic mapping study of 476 recent papers that combine Semantic Web technologies with machine learning (Breit et al., 2023) reports that only 29 (about 6%) mention using semantic processing modules of some kind (where, by ‘semantic’, the study means symbolic knowledge representation). The dominant use cases for such modules relate to rulesets (learning, improving and applying them) and to data enrichment. The study also finds that of these 29 papers, only 20 (about 4% of the total) mention using reasoning capabilities to infer knowledge. We consulted that study’s companion Semantic Web and machine learning systems KG resource, SWeMLS-KG (Ekaputra et al., 2023), to find those 4% of papers. Of the 17 we identified, we found only five that use OWL reasoning. Another recent survey and vision paper (d’Amato et al., 2023) reviews the role of KGs in machine learning, pointing out gaps and opportunities, and also observes that KG symbolic reasoning methods are under-explored and largely disregarded.

One factor explaining this under-exploration may be the cross-disciplinary nature of the endeavour. NeSy research with OWL-based KG reasoning requires researchers to be familiar not just with deep learning, KGs, and logic, but with Semantic Web technologies, especially OWL ontologies, as well. The authors of d’Amato et al. (2023) point to the prevalence of huge public KGs and to the perceived scalability limitations of symbolic reasoning methods in the face of such large KGs as an explanation. A third factor may be that the possibility of using OWL-based KG technologies to tailor symbolic deduction engines for NeSy systems has been under-recognised. After all, the Semantic Web was not conceived with such applications in mind.

The objective of this article (which builds on Herron et al., 2023) is to argue the case for NeSy AI research using OWL-based KGs. We hope to inspire more NeSy research using OWL-based KGs by raising awareness of their benefits, capabilities and flexible applications, especially with respect to reasoning. OWL-based KGs are exemplars of the symbolic knowledge representation and symbol manipulation and reasoning machinery that critiques of deep learning, such as by Chollet (2018), Kautz (2022) and Marcus (2018a; 2018b; 2020), advocate be incorporated in NeSy systems. By drawing upon illustrative examples from our own research in visual relationship detection as well as from the literature, and by describing promising research directions, we hope to convince readers of the potential of OWL-based KGs. We also discuss how to enable more NeSy research using OWL-based KGs through the creation of resources such as the recently contributed NeSy4VRD (neurosymbolic AI for visual relationship detection). NeSy4VRD represents one step towards addressing the scarcity of the specialised resources required for NeSy AI research using OWL-based KGs: resources that combine datasets for neural learning with companion OWL ontologies that describe the domain of the data in order to support pertinent symbolic reasoning.

Benefits and Capabilities of OWL-Based KGs

In this section, we describe benefits and capabilities of OWL-based KGs. We illustrate capabilities by giving examples showing how and why OWL-based KGs can be utilised in NeSy systems.

Open Standards and Reusable Resources

OWL (the Web Ontology Language) is a key component of the W3C open standards ecosystem of the SW (Berners-Lee et al., 2001; Hitzler et al., 2010; The Semantic Web Stack, 2022; The Semantic Web Wiki, 2001). Open standards facilitate interoperability and promote development of reusable, often free, software resources that make it easy to work with OWL ontologies and OWL-based KGs. Amongst the many such resources are (i) public SW KGs like DBpedia (Lehmann et al., 2015), Wikidata (Vrandečić & Krötzsch, 2014) and Yago (Tanon et al., 2020); (ii) public repositories of curated OWL ontologies like BioPortal (Whetzel et al., 2011) and OBO Foundry (Jackson et al., 2021) in the biomedical domain; (iii) RDF stores like GraphDB (it is not open, but it has a free version) (Ontotext GraphDB, 2023) and RDFox (it is not open, but it has a free academic license) (Nenov et al., 2015); and (iv) efficient OWL reasoners like HermiT (Glimm et al., 2014), Pellet (Sirin et al., 2007), RDFox, and ELK (Kazakov et al., 2011).

Custom Ontologies and Custom KGs

Reusing state-of-the-art ontologies and/or public KGs is a ‘good practice’ option. But researchers can also design their own custom, domain-specific OWL ontologies tailored to their datasets and unique needs. They can then use these to govern and enable reasoning within custom OWL-based KGs. Custom ontologies can also be aligned with publicly available ontologies to enhance interoperability (Euzenat & Shvaiko, 2013).

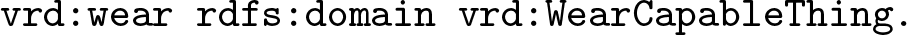

This custom approach is the one taken in our research into visual relationship detection in images, for which we designed a custom OWL ontology called VRD-World (Herron et al., 2023). This ontology describes the domain of the everyday images of the VRD dataset (Lu et al., 2016), as reflected in the object classes and relationships referred to in the

Figure 1. An example neurosymbolic system architecture for detecting visual relationships in images. By using a Web Ontology Language (OWL)-based knowledge graph with an appropriate ontology as a symbolic deduction engine, feedback from OWL reasoning can influence loss to guide neural learning.

We designed two versions of a class hierarchy for use with our VRD-World ontology. Both hierarchies contain classes that map to the broad range of everyday object classes present in the images and annotations of the VRD dataset (e.g.

KGs (of all kinds) have inspired a large amount of NeSy research into encoding KG symbolic background knowledge into vectors as KG embeddings. The embeddings preserve semantic similarity and reflect this similarity by proximity within the embedding vector space (Chen et al., 2020; d’Amato, 2020; Dai et al., 2020; Nickel et al., 2015; Rossi et al., 2021; Wang et al., 2021). The primary application area of KG embeddings so far has been tasks relating to KG completion: link prediction (relating individuals in a KG) or type prediction (classifying individuals in a KG). Regardless of the model used to generate the embeddings (of which there are many), these link and type prediction problems are typically cast as neural classification problems, where the embedded KG knowledge is used for training and where methods exploiting the proximity principle are applied (as a form of geometric inference) to help make predictions.
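The proximity principle mentioned above can be illustrated with a deliberately simplified sketch. It is not any particular embedding model: the two-dimensional vectors, entity names and type labels are invented for illustration, and a nearest-neighbour lookup stands in for the neural classifiers that are typically trained for type prediction.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy embeddings of already-typed KG individuals (values are invented).
typed_entities = {
    "dog_1": ("Animal", [0.9, 0.1]),
    "cat_1": ("Animal", [0.8, 0.2]),
    "car_1": ("Vehicle", [0.1, 0.9]),
    "bus_1": ("Vehicle", [0.2, 0.8]),
}


def predict_type(vector, typed):
    """Type prediction by proximity: copy the type of the nearest neighbour."""
    nearest_type, _ = max(typed.values(), key=lambda tv: cosine(vector, tv[1]))
    return nearest_type


# An untyped individual whose embedding lies close to the 'Animal' cluster.
predicted = predict_type([0.85, 0.2], typed_entities)
```

The prediction here is only as reliable as the geometry of the embedding space, which is the sense in which such inference is approximate rather than logically guaranteed.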

Like all KGs, OWL-based KGs are readily used in NeSy research that leverages KG embeddings. OWL2Vec* (Chen et al., 2021) is one embedding model designed for this purpose. Notice, though, that these applications of KG embeddings focus on leveraging KG symbolic background knowledge only. So even if the KG in question is OWL-based, its reasoning capabilities are generally not employed in these applications.

Link inference and type inference performed by logical reasoning are, however, the bread and butter of OWL reasoners. When an OWL reasoner infers the knowledge that is entailed by the inference semantics of a governing OWL ontology in the presence of KG data, it completes the KG by introducing new, explicit (knowledge) triples that were previously implicit. This process is called materialisation. The logical soundness of these inferences is guaranteed, whereas embedding-based KG completion is approximate and potentially error prone. The extent to which the KG is extended (completed) is commensurate with the richness of the inference semantics of the governing ontology and the nature of the KG data present at the time of materialisation. Our point is that OWL-based KGs can add important value in any NeSy task associated with KG completion. OWL reasoning can be used to complete a KG automatically, as far as possible, and then NeSy KG completion (NN emulated reasoning) can be used for special cases that the OWL ontology in question does not address or that OWL cannot address in general.
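As a rough illustration of materialisation, the following sketch forward-chains a handful of OWL-style rules (subclass type inference, symmetric, transitive and inverse properties) until no new triples appear. It is a toy under stated assumptions, not an OWL reasoner: the `ex:` vocabulary and the property characteristics are invented, and a production system would delegate this work to a reasoner such as those named earlier.

```python
def materialise(triples, subclass_of, symmetric, transitive, inverse_of):
    """Forward-chain a few OWL-style rules to a fixpoint (toy sketch)."""
    kg = set(triples)
    while True:
        new = set()
        for s, p, o in kg:
            # Type inference: membership propagates up the class hierarchy.
            if p == "rdf:type" and o in subclass_of:
                new.add((s, "rdf:type", subclass_of[o]))
            # Link inference from property characteristics.
            if p in symmetric:
                new.add((o, p, s))
            if p in inverse_of:
                new.add((o, inverse_of[p], s))
            if p in transitive:
                for s2, p2, o2 in kg:
                    if p2 == p and s2 == o:
                        new.add((s, p, o2))
        if new <= kg:  # nothing new was entailed, so the KG is complete
            return kg
        kg |= new


# Hypothetical ontology fragment and asserted data (names are assumptions).
asserted = {
    ("ex:dog1", "rdf:type", "ex:Dog"),
    ("ex:dog1", "ex:nextTo", "ex:person1"),
    ("ex:a", "ex:behind", "ex:b"),
    ("ex:b", "ex:behind", "ex:c"),
    ("ex:cup1", "ex:on", "ex:table1"),
}
kg = materialise(
    asserted,
    subclass_of={"ex:Dog": "ex:Animal"},
    symmetric={"ex:nextTo"},
    transitive={"ex:behind"},
    inverse_of={"ex:on": "ex:under"},
)
inferred = kg - asserted  # the previously implicit triples
```

Every triple in `inferred` follows deductively from the asserted data and the declared rules, which is the property that distinguishes this kind of completion from embedding-based prediction.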

As discussed by Buffelli and Tsamoura (2023), the phrase ‘knowledge injection’ is used with different meanings. It can refer to the injection of knowledge in symbolic form, such as in logic tensor networks (LTNs) (Badreddine et al., 2022; Serafini & d’Avila Garcez, 2016), whose Real Logic axioms are woven into loss functions. It can also refer to the injection of knowledge represented in subsymbolic form, e.g. as embeddings. One subcategory of subsymbolic knowledge injection involves the use of KG embeddings (e.g. Myklebust et al., 2022) as domain knowledge supplements to primary training data. The hypothesis here is that ‘data + knowledge’ can enhance deep learning. This is what the authors of Fu et al. (2023) mean when they speak of knowledge injection. Sheth et al. (2019) call this approach knowledge-infused learning. Much of the research in this area focuses on language models. Using KG entity linking techniques, concepts and entities mentioned in text are matched with corresponding KG entities. The (pre-computed) embeddings of matched KG entities are then looked up and injected into language models (typically during fine-tuning), often deep within transformer self-attention blocks. For example, Peters et al. (2019), Roy et al. (2023), Yamada et al. (2020) and Zhang et al. (2019) explore variations on this theme and all report performance improvements from injecting KG embeddings as background knowledge.

The efficacy of this approach to KG knowledge injection has come into question, however. Having examined several established language model knowledge injection frameworks and repeated the published experiments, the authors of Fu et al. (2023) conclude that whatever is injected has an effect indistinguishable from that of Gaussian noise. They surmise that fine-tuning does not permit pre-trained language models sufficient opportunity to disentangle and assimilate the latent knowledge in the injected KG embeddings.

We suspect that the prevailing paradigm of matching text to KG entities, and injecting the corresponding embeddings, may limit the use of KG knowledge. KGs express knowledge relationally and KG embedding spaces attempt to represent that relational knowledge subsymbolically. The knowledge is implicit in the relations between pairs and clusters of vectors in the embedding space, and in how clusters are distributed across the space. There may be little usable knowledge residing in individual embeddings considered in isolation (i.e. single points in space). Discordance between heterogeneous text and KG embedding spaces may also be a factor contributing to the findings by Fu et al. (2023). We suggest that new paradigms crafted to facilitate the harvesting and injection of relational knowledge warrant exploration.

Advances in subsymbolic KG knowledge injection will in part be driven by advances in KG embedding models. As discussed in papers such as Abboud et al. (2020), Chen et al. (2023) and d’Amato et al. (2023), KG embedding models currently capture only a portion of the rich semantics of OWL ontologies. Some embedding models focus on capturing lexical and syntactic patterns such as entity/word correlations; others focus on capturing aspects of logical relationships such as hierarchical structure; integrating numeric literals remains a challenge. Research into methods that can embed more of OWL’s logical expressiveness and logical relationships, and that can embed the multiple aspects mentioned here jointly, remains preliminary (e.g. Jackermeier et al., 2024).

With any application of KG embeddings (KG completion, knowledge injection deep into NNs, or otherwise), the reasoning capabilities of OWL-based KGs may add important value. It seems intuitive that fully materialised OWL-based KGs, where everything implicit has been made explicit, contain more embeddable knowledge and should lead to embedding spaces that better reflect the totality of symbolic domain knowledge entailed by KGs. However, more embeddable knowledge (more triples) does not necessarily lead to better performance in downstream tasks that consume KG embeddings. Studies such as Iana and Paulheim (2020) show that downstream performance may degrade. Nevertheless, the authors of d’Amato et al. (2023) share this intuition and call for extensive research to study the potential of materialised KGs in KG embeddings, so that guidance can evolve around whether, when, and why to use KG materialisation.

OWL-Based KG Reasoning, Rules, and Symbolic Deduction Engines

Despite recent successes, large language models are notorious for their lack of reliability in reasoning. In contrast, the reliability of OWL reasoning is guaranteed because it is grounded in formal Description Logics (DLs). DLs are decidable fragments of first-order logic with strong connections to set theory (Baader et al., 2007, 2017; Brachman & Levesque, 2004; Nardi & Brachman, 2003). A prominent example is

OWL reasoning falls into two broad categories. One category involves the inference of new knowledge, where entailed but implicit triples are made explicit by materialising them (inserting them into the KG). The other category involves checking the logical consistency of ontologies and KGs. Both categories rely on logical inference rules, but whereas the rules of the former category have logical consequents, the rules of the latter category do not. Both categories of reasoning are commonly used for debugging during OWL ontology development (Jiménez-Ruiz, 2010; Kejriwal et al., 2021). What appears to be less well recognised is that both categories of OWL reasoning can also be leveraged in NeSy systems. Some RDF triple stores (like GraphDB and RDFox) do their OWL reasoning as each triple is inserted into the KG. This has important implications for the feasibility of integrating OWL-based KG reasoning into NeSy systems. New knowledge that is entailed by the insertion of a triple (or a small set of triples) is immediately available for inspection, and triple insertion attempts that violate logical consistency rules are immediately rejected (so as to maintain the overall logical consistency of the KG). Both types of response (or feedback) provide information that can be leveraged in the context of hybrid NeSy systems. As is depicted in Figure 1, such OWL-based KG technologies can be used to assemble symbolic deduction engines that perform domain-specific and system-specific OWL reasoning on-call and on-the-fly. Figure 1 shows the feedback from on-the-fly OWL-based KG reasoning being used to guide neural learning by influencing the calculation of loss.

Depending on the application, the KGs of such symbolic deduction engines may need to contain only minimal data at any one moment. As long as a governing OWL ontology is present, fast OWL reasoning can proceed against a small number of inserted data triples, and these might be deleted just as rapidly once the momentary reasoning service has been performed and the feedback delivered. OWL reasoning in such symbolic deduction engines can be leveraged not just during NN training but during inference as well. For instance, predictions of visual relationships generated at inference time can be inserted into a KG in order to verify their semantic validity. Those found to be semantically invalid (i.e. that lead to logical inconsistency) can be filtered from the set of predictions.
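The inference-time filtering just described might be sketched as follows. This is a toy stand-in for a real triple store with on-insert reasoning: the class `ToyDeductionEngine`, the class names `Wearer` and `Wearable`, and the domain and range declarations for `wear` are all hypothetical, and the consistency check covers only domain, range and class disjointness.

```python
class ToyDeductionEngine:
    """Holds only ontology-level knowledge; data triples are checked and discarded."""

    def __init__(self, superclasses, disjoint_pairs, domains, ranges):
        self.superclasses = superclasses  # class -> set of all its superclasses
        self.disjoint = {frozenset(pair) for pair in disjoint_pairs}
        self.domains = domains            # property -> domain class
        self.ranges = ranges              # property -> range class

    def _types(self, cls):
        return {cls} | self.superclasses.get(cls, set())

    def _clashes(self, types):
        # An individual may not belong to two classes declared disjoint.
        return any(frozenset((a, b)) in self.disjoint for a in types for b in types)

    def is_consistent(self, subject_cls, predicate, object_cls):
        """Would asserting this relationship keep the KG logically consistent?"""
        subject_types = self._types(subject_cls)
        object_types = self._types(object_cls)
        # Domain and range axioms infer extra types for subject and object.
        if predicate in self.domains:
            subject_types |= self._types(self.domains[predicate])
        if predicate in self.ranges:
            object_types |= self._types(self.ranges[predicate])
        return not (self._clashes(subject_types) or self._clashes(object_types))


engine = ToyDeductionEngine(
    superclasses={"Person": {"Wearer"}, "Shirt": {"Wearable"}},
    disjoint_pairs=[("Wearer", "Wearable")],
    domains={"wear": "Wearer"},
    ranges={"wear": "Wearable"},
)
predictions = [("Person", "wear", "Shirt"), ("Shirt", "wear", "Person")]
kept = [p for p in predictions if engine.is_consistent(*p)]
```

Because no data triples persist between checks, the engine stays small however many predictions pass through it, which mirrors the insert, reason and delete pattern described above.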

Before we discuss examples of NeSy systems found in the literature that use OWL reasoning, we first review some basic OWL reasoning examples. Link inference is driven mainly by the inference semantics associated with the characteristics (e.g. symmetry and transitivity) and relationships (e.g. inverses, subproperties and equivalent properties) declared for the object properties of an OWL ontology. Suppose a property

The authors of Chang et al. (2020) leverage OWL type inference in a more elaborate way such that a tutoring system can react intelligently in response to interactions with human learners. A custom OWL ontology models the tutoring system domain and contains descriptions of classes that correspond to tutoring system actions. Data regarding learner interactions with the system are progressively loaded into the system’s KG. The OWL reasoner HermiT reasons over the KG to infer new knowledge based on the learner interaction data. In the process, each learner interaction is classified as belonging to one of the tutoring action classes, resulting in inferred triples such as, say,

OWL reasoning can be extended by accompanying OWL ontologies with complementary rules. Such rules are constructed with reference to the classes and properties defined in the ontology, and they typically infer new knowledge (new triples). Various rule technologies for extending OWL exist, such as SPARQL rules (Harris & Seaborne, 2013), SWRL (Semantic Web Rule Language) rules (Horrocks et al., 2004), and (more commonly, today) Datalog rules (Abiteboul et al., 1995; Ceri et al., 1989; Green et al., 2013).

One example in the literature (Donadello et al., 2019) uses a custom OWL ontology that describes dietary and physical activity domains and healthy lifestyle behaviours, supplemented with SPARQL rules representing unhealthy lifestyle behaviours, in a digital healthcare NeSy system. User diet and activity data are loaded into the system’s KG. The RDFpro tool (Corcoglioniti et al., 2015) drives the reasoning and rule execution. If a SPARQL rule is satisfied, an unhealthy behaviour has been detected, and the rule infers (creates) instances of rule violations in the KG. The rule violations are then rendered into natural language to encourage healthier user behaviours. The authors of Mouakher et al. (2019) use a custom OWL ontology supplemented with SWRL rules as part of a system for monitoring vineyards. Data gathered by a wireless sensor network measuring microclimate conditions around a vineyard are funnelled into the system’s KG. The Pellet OWL reasoner reasons over the KG to infer new triples that are interpreted as predictions of risk of particular diseases and pests. The authors of Nakawala et al. (2019) use a custom OWL ontology supplemented with SWRL rules as part of a NeSy system for recognising surgical processes for robot-assisted surgery. A CNN recognises the current surgical workflow step; an LSTM RNN predicts the next surgical workflow step; and by reasoning over these inputs in the presence of the ontology and SWRL rules, the Pellet OWL reasoner infers supplementary surgical context information, such as the surgical phase, surgical instruments used, and actions to be taken.

The basis of the logical knowledge representation formalism for combining OWL ontologies with Datalog rules is defined by Grosof et al. (2003). This formalism permits rules that refer to ontology vocabulary to be layered on top of ontologies, and it permits logic programming algorithms to reason efficiently over large ontologies. In the VRD dataset setting, a Datalog-like rule describing when it is reasonable to infer the visual relationship

In the body of this rule, the first two conditions rely on leveraging OWL type inference; the third condition extends OWL’s capabilities by evaluating the spatial relationship between the bounding boxes of the two objects. Suppose an object detection NN predicts

Promising Research Directions

In this section, we describe some application areas where the potential for leveraging OWL-based KGs and OWL reasoning in NeSy systems looks promising.

Using OWL Reasoning to Enhance Annotations and Strengthen Weak Labelling

OWL reasoning has been used to enhance annotations. The authors of Edris et al. (2017) use a small ontology crafted using a fuzzy DL, and a reasoning engine that uses a tableaux algorithm for doing

The VRD dataset we use in our research has weak labelling in the sense that its images are not exhaustively annotated, either in terms of objects or relationships. The visual relationship annotations of its images are sparse and arbitrary, and hence the supervision they provide during NN training is partial and inconsistent across images. OWL reasoning can mitigate weak labelling by augmenting it and making it more consistent. The properties of our VRD-World ontology (which correspond to predicates in annotated visual relationships) are rich in characteristics and relationships that carry inference semantics for link prediction (see Section 2.4). Our experiments with OWL reasoning over VRD-World have shown that the average number of annotated visual relationships per VRD training image increases by a factor of 2.5. Supplementing the ontology with Datalog rules that extend OWL reasoning is expected to yield further augmentation.

The augmentation of the ground-truth annotations for each image could, in theory, be performed in real time within a NN training loop, but the same reasoning and augmentation would then be repeated each time an image is re-encountered in successive training epochs. So, in our case, it is computationally more efficient to do the annotation augmentation once, upfront, by materialising a KG containing the annotations of all images and saving the augmented annotations to a file. But the option of using OWL reasoning to perform annotation augmentation on-the-fly is worthy of note because settings may arise where this facility is advantageous. Either way, augmented, denser and more consistent annotations are likely to provide a less noisy loss signal for neural learning.
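The 'augment once, upfront, and save to a file' strategy can be sketched as below. The sketch is illustrative only: the `ex:` predicates, the inverse and symmetric declarations, the image name and the JSON cache format are all assumptions, and the single-pass `augment` function stands in for full OWL materialisation, which would iterate to a fixpoint.

```python
import json
import os
import tempfile

# Hypothetical property declarations (not taken from VRD-World).
INVERSE = {"ex:on": "ex:under"}
SYMMETRIC = {"ex:nextTo"}


def augment(annotations):
    """Add the relationships entailed by the toy inverse and symmetric rules."""
    augmented = set(annotations)
    for s, p, o in annotations:
        if p in INVERSE:
            augmented.add((o, INVERSE[p], s))
        if p in SYMMETRIC:
            augmented.add((o, p, s))
    return sorted(augmented)


def augment_dataset_once(dataset, cache_path):
    """Augment every image's annotations once; later calls reuse the saved file."""
    if os.path.exists(cache_path):
        with open(cache_path) as f:
            cached = json.load(f)
        # JSON stores tuples as lists, so convert them back.
        return {img: [tuple(t) for t in rels] for img, rels in cached.items()}
    result = {img: augment(rels) for img, rels in dataset.items()}
    with open(cache_path, "w") as f:
        json.dump(result, f)
    return result


dataset = {
    "img1.jpg": [("person", "ex:on", "skateboard"), ("person", "ex:nextTo", "dog")],
}
cache_path = os.path.join(tempfile.mkdtemp(), "augmented_annotations.json")
first = augment_dataset_once(dataset, cache_path)   # reasons and writes the file
second = augment_dataset_once(dataset, cache_path)  # reads the file, no reasoning
```

A training loop would then read the cached annotations in every epoch, so the cost of reasoning is paid once rather than once per epoch.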

The examples just discussed share the notion of using OWL reasoning to infer plausible annotations in the absence of explicit annotations. This notion has broad application. Within supervised learning, it may apply to many datasets (like the VRD dataset) that are not exhaustively annotated. It is also relevant in semi-supervised learning (where some examples are labelled, others not), and potentially in unsupervised learning problems as well. Further, the notion of inferring plausible annotations may be valuable in

Enabling NNs to Emulate OWL Reasoning

One approach to NeSy AI involves introducing structural extensions to NN architectures and injecting background knowledge as strong priors in weight matrices. An example of this approach is Kopparti and Weyde (2019). As part of our research, we have begun to explore this approach to NeSy AI by considering the feasibility of transferring OWL-based KG knowledge to NNs (or otherwise equipping them) so that they might emulate aspects of OWL reasoning. One idea involves representing the transitive closure of an OWL ontology class hierarchy (as inferred by OWL reasoning) as a binary adjacency matrix (per graph theory) that can be injected into a classification NN as the weight matrix of a supplementary classification output layer. A proof of concept exercise indicates that this tactic should permit a classifier to emulate the subsumption reasoning (type inference) capabilities of an OWL-based KG. For example, suppose a NN classifier that initially classifies a data sample
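The idea of encoding a class hierarchy's transitive closure as a binary matrix can be sketched in a few lines. This is our own minimal reading of the idea, not the proof of concept itself: the five-class hierarchy is invented, each class is assumed to have at most one parent, and the matrix is made reflexive so that a class also generalises to itself.

```python
# Hypothetical class hierarchy: child -> parent (single inheritance assumed).
classes = ["Dog", "Cat", "Mammal", "Car", "Vehicle"]
parents = {"Dog": "Mammal", "Cat": "Mammal", "Car": "Vehicle"}


def closure_matrix(classes, parents):
    """matrix[i][j] == 1 iff class i is class j or a (transitive) subclass of it."""
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for c in classes:
        node = c
        while node is not None:  # walk up to the root of the hierarchy
            matrix[index[c]][index[node]] = 1
            node = parents.get(node)
    return matrix


def generalise(scores, matrix):
    """Fixed-weight output layer: vector-matrix product, scores @ matrix."""
    n = len(matrix)
    return [sum(scores[i] * matrix[i][j] for i in range(n)) for j in range(n)]


A = closure_matrix(classes, parents)

# A one-hot 'Dog' prediction also activates the entailed superclass 'Mammal'.
one_hot_dog = [1, 0, 0, 0, 0]
generalised = generalise(one_hot_dog, A)

# With softmax-like scores, probability mass accumulates on superclasses.
soft = generalise([0.6, 0.3, 0.0, 0.1, 0.0], A)
```

In a deep learning framework, `A` would be loaded as the frozen weight matrix of a bias-free linear layer appended to the classifier, so that the generalised scores are produced as part of the forward pass.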

The potential for leveraging cross-over synergies such as this between OWL-based KGs and NNs is ripe for exploration and development. One avenue for investigation might involve adding more learnable layers to a classifier following the class generalisation extension layer just described so that learning might proceed driven by generalised class predictions. Another option might be to extend the solution just described for generalising multi-class, single-label predictions to the multi-class, multi-label setting. A further option is to apply this technique for transferring OWL class hierarchy knowledge to a NN to the transference of OWL property hierarchy knowledge to a NN. Our Predicate Prediction NN (in Figure 1), for example, predicts predicates (which map to OWL properties) that relate two objects. The VRD-World ontology declares that property

Using OWL Reasoning for Applying Logical Constraints

Much NeSy research explores using background knowledge expressed in first-order logic, propositional logic or logic programming as constraints to guide neural learning, often by manipulating loss to encourage constraint satisfaction. Examples are (i) the NN training framework LTN which allows fuzzy, first-order Real Logic knowledge axioms (constraints) to be defined over training data; (ii) the set of propositional logic constraints specified for the ROAD-R dataset (Giunchiglia et al., 2023); and (iii) the (Prolog) rules defined by Cornelio et al. (2023). OWL-based KG technologies can be used for the same purpose. Recall from Section 2.4 that OWL reasoning spans two categories of logical inference, one which infers new knowledge and one which checks for logical consistency. Both categories of inference can be leveraged to enable OWL-based KGs to participate in research associated with the logical constraints approach to NeSy AI.

First we consider the latter category. We assume a context of on-the-fly OWL reasoning, which permits logical consistency checks to be applied at the point of triple insertion. In this setting, OWL’s logical consistency inference rules can be leveraged as though they were logical constraints. If an OWL-based KG rejects the insertion of a triple, this event signals a violation of a consistency rule and therefore the violation of some logical constraint. Such events can thus be used to penalise loss. The number and nature of the logical constraints covered by OWL’s logical consistency rules vary with the design of the OWL ontology. If one opts to design a custom ontology, one can arrange for specific logical constraints to exist that will be covered automatically by OWL’s logical consistency checks, much like one might craft a logic programme with specific inference intentions in mind. For a given rejection event, it may be feasible to surmise which logical constraint was violated based upon knowledge of the ontology’s design and of the triple(s) that triggered the violation. Alternatively, the OWL reasoner Pellet provides explanations for logical inconsistencies to an extent. The Protégé editor also has facilities and plug-ins that provide explanations for detected ontology inconsistencies.
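One simple way in which rejection events might penalise loss is sketched below. The weighting scheme is an assumption of ours rather than a method from the literature: each rejected prediction contributes its confidence to the penalty, and `toy_is_consistent` is a hypothetical stand-in for the accept or reject response of an OWL-based KG.

```python
def consistency_penalty(predictions, is_consistent, weight=0.5):
    """Sum the confidences of predictions the KG would reject, scaled by weight."""
    rejected_confidence = sum(
        confidence for triple, confidence in predictions if not is_consistent(triple)
    )
    return weight * rejected_confidence


# Stand-in for the KG's response to an attempted triple insertion.
FORBIDDEN = {("Shirt", "wear", "Person")}


def toy_is_consistent(triple):
    return triple not in FORBIDDEN


# Each prediction pairs a (subject, predicate, object) triple with a confidence.
batch = [
    (("Person", "wear", "Shirt"), 0.9),  # accepted by the KG
    (("Shirt", "wear", "Person"), 0.4),  # rejected by the KG
]
base_loss = 1.0  # e.g. the ordinary supervised loss for the batch
total_loss = base_loss + consistency_penalty(batch, toy_is_consistent)
```

Weighting by confidence keeps the penalty a function of the network's outputs, so in a differentiable framework the gradient would push confidence in rejected predictions down; a flat per-violation penalty would not have that property.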

As a concrete example, we discuss the use of domain and range restrictions as logical constraints in connection with the VRD dataset. We describe how these have been used in the context of LTN and then compare that approach with how they can be used in OWL ontologies. According to Donadello and Serafini (2019), negative domain and negative range LTN Real Logic axioms (constraints) are used to train binary classifiers for the predicates of the VRD dataset. The VRD dataset has 100 object classes, so to train a binary classifier for predicate

OWL can express equivalent logical constraints, and can do so more concisely. The VRD-World ontology can express the equivalent of the close to 100 negative domain constraints (1) by defining the (disjoint) classes

Similarly, the logical constraint equivalent of the close to 100 negative range LTN Real Logic axioms can be expressed (1) by defining the (disjoint) classes

Figure 1 shows how an OWL-based KG with an appropriate ontology (such as VRD-World) can be used, in the guise of a symbolic deduction engine, to leverage ontological rules as logical constraints to guide neural learning. Suppose the Object Detection NN predicts that

can be inserted into the KG. OWL type inference will infer that

In addition to illustrating that OWL-based KGs can emulate the logical constraints approach to NeSy AI, this example also illustrates an important advantage possessed by OWL-based KGs over other approaches to using logical constraints. The research by Donadello and Serafini (2019) shows that the logical constraints approach to NeSy AI is exposed to the risk of combinatorial explosion, where the number of constraints requiring expression grows too rapidly with the number of classes in the dataset. Almost 200 LTN

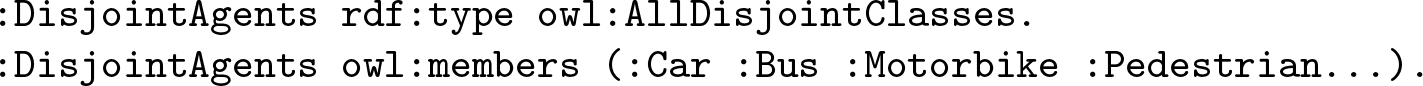

This comparative advantage possessed by OWL for expressing background knowledge (and logical constraints) concisely is reinforced by considering a different example. The autonomous vehicle driving videos and annotated bounding boxes of the ROAD-R dataset (Giunchiglia et al., 2023) are accompanied by 243 manually specified propositional logic constraints that define the permissible combinations of labels for 10 agent classes, 19 agent action classes and 12 agent location classes. Amongst the 243 logic constraints, 45 have a format such as

Similarly, 66 of the ROAD-R propositional constraints express pairwise mutual exclusiveness amongst the 12 agent location classes. Counterparts of these can be represented in OWL using just two more such axioms.
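The growth of pairwise mutual-exclusion constraints can be checked with a short calculation. The sketch below enumerates one constraint per unordered pair of labels; the label names are placeholders rather than the actual ROAD-R labels. The count of 66 corresponds to the 12 agent location classes mentioned above. The count of 45 matches the 10 agent classes only on the assumption that those 45 constraints are pairwise exclusions among agent classes.

```python
from itertools import combinations
from math import comb


def pairwise_exclusions(labels):
    """Return one mutual-exclusion constraint per unordered pair of labels."""
    return list(combinations(labels, 2))


# Placeholder label names (hypothetical, not the real ROAD-R labels).
agent_classes = [f"agent_{i}" for i in range(10)]
location_classes = [f"location_{i}" for i in range(12)]

n_agent = len(pairwise_exclusions(agent_classes))        # equals comb(10, 2)
n_location = len(pairwise_exclusions(location_classes))  # equals comb(12, 2)
```

The number of pairwise constraints grows quadratically with the number of labels, whereas a declaration that a whole group of classes is mutually disjoint stays a single statement however many classes it lists.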

In fact, by making appropriate use of OWL’s constructs for declaring domain and range restrictions, disjoint classes, disjoint properties, functional properties, and the like, it may well be possible to design an OWL ontology that emulates all of the 243 propositional logic constraints specified for the ROAD-R dataset. Doing so would, in theory, make it feasible to repeat the ROAD-R experiments by Giunchiglia et al. (2023) using an OWL-based KG as a symbolic deduction engine instead of using the original propositional constraints with a SAT solver as a reasoning engine.

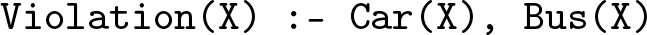

Now we consider how OWL’s other category of logical inference – the one that infers new knowledge – can be leveraged in the context of using logical constraints to guide neural learning. An alternative strategy for using OWL to emulate the propositional logical constraints of the ROAD-R dataset is to employ the concept of integrity constraints described by Kharlamov et al. (2016). Rather than checking and enforcing ontology (KG) logical consistency, integrity constraints employ Datalog rules that supplement an OWL ontology to represent logical constraints. When the body of such a constraint rule is satisfied, the rule infers explicit new triples into the KG, which can then be queried and interpreted as signals of constraint violations. For example, the Datalog integrity constraint rule

declares that an agent cannot be both a car and a bus. Similarly, the hypothetical ontology would permit the creation of rules like

to establish that a traffic light (TL) cannot be red and green at the same time. Note that, unlike in the propositional case, Datalog rules provide additional granularity for describing cases in which an image contains more than one TL. This approach using integrity constraints could also be applied to the VRD case, with rules like

Integrating OWL-Based KG Reasoning With Existing NeSy Frameworks

OWL-based KG symbolic knowledge and deductive reasoning can be integrated with and leveraged by existing logic-based NeSy frameworks such as LTN. LTN functions that encapsulate interactions with OWL-based KGs can, in theory, participate in LTN Real Logic knowledge axioms used to train NNs. One precondition is that there is sufficient contextual information contained in LTN tensors (or otherwise) to permit RDF triples to be constructed and inserted into a KG to drive reasoning, and/or to enable KG queries to be formulated. The only other precondition is that the results of KG interactions can be mapped to fuzzy truth values in

One application of this idea involves using OWL-based KG reasoning to manage the risk of combinatorial explosion (described in Section 3.3) to which the logical constraints approach to NeSy AI is exposed. A prime cause of exposure to this risk derives from the fact that logical constraints (as used by LTN and the ROAD-R dataset, for example) are restricted to being expressed in terms of the low-level, granular object classes present in data and their annotations. The option to express constraints more concisely, in terms of higher-level, more general classes, is not available. In contrast, OWL ontologies routinely possess rich class hierarchies that permit ontological rules to be defined in terms of high-level, general classes, which affords simplicity and parsimony.

To illustrate, consider again the research undertaken by Donadello and Serafini (2019), where the use of negative domain and range constraints leads to the need for an intractable number of LTN Real Logic knowledge axioms to be crafted. This time, however, suppose that we integrate interactions with an OWL-based KG (acting as a symbolic deduction engine) into our LTN Real Logic knowledge axioms in order to map the granular classes present in the data to higher-level, more general classes defined in the class hierarchy of the VRD-World ontology. Using this strategy, we can imagine replacing the original (close to) 200 negative domain and range LTN constraints used to train a binary classifier for VRD predicate

A more compute-efficient implementation of the proposal just described is also feasible. In this setting, we only wish to exploit the type inference (subsumption reasoning) capabilities of OWL reasoning. But, as we saw in Section 3.2, for a given OWL ontology, these capabilities can be fully encoded in the adjacency matrix of the transitive closure of the ontology’s class hierarchy. So instead of interacting with an OWL-based KG to use OWL reasoning to map granular classes to higher-level classes, we can instead use the adjacency matrix to do the mapping.
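As a minimal sketch of this adjacency-matrix approach, the code below builds the reflexive transitive closure of a small class hierarchy and uses it to map a granular class to its more general classes. The hierarchy and the function names `transitive_closure` and `superclasses_of` are hypothetical illustrations and are not taken from VRD-World.

```python
def transitive_closure(classes, subclass_edges):
    """Return (index, reach), where reach[i][j] is True iff class i is a
    subclass of class j (reflexively and transitively)."""
    idx = {c: i for i, c in enumerate(classes)}
    n = len(classes)
    reach = [[i == j for j in range(n)] for i in range(n)]  # reflexive part
    for sub, sup in subclass_edges:                          # direct edges
        reach[idx[sub]][idx[sup]] = True
    for k in range(n):                                       # Warshall's algorithm
        for i in range(n):
            if reach[i][k]:
                for j in range(n):
                    if reach[k][j]:
                        reach[i][j] = True
    return idx, reach


def superclasses_of(cls, classes, idx, reach):
    """Look up every class that subsumes cls, using only the matrix."""
    row = reach[idx[cls]]
    return {classes[j] for j, flag in enumerate(row) if flag}


# Hypothetical hierarchy, given as (subclass, direct superclass) pairs.
classes = ["Thing", "Vehicle", "Car", "Bus", "Animal", "Dog"]
edges = [("Vehicle", "Thing"), ("Car", "Vehicle"), ("Bus", "Vehicle"),
         ("Animal", "Thing"), ("Dog", "Animal")]

idx, reach = transitive_closure(classes, edges)
car_supers = superclasses_of("Car", classes, idx, reach)
```

Once the matrix has been computed, each lookup is a single row read, so no reasoner needs to be invoked during training.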

Integrating OWL-based KG reasoning with LTN in the manner just described is a specialised approach to blending OWL (a DL) with (fuzzy) first-order logic (FOL). Another approach to blending OWL with FOL is to translate it into FOL. This leads to opportunities to extend OWL reasoning capabilities by augmenting OWL ontologies with supplementary FOL axioms (or ontologies) that express things OWL cannot. Tools that support this approach include Hets (The Heterogeneous Tool Set) (Mossakowski et al., 2007) and Gavel-OWL (Flügel et al., 2022). The resulting integrated FOL ontologies (OWL-to-FOL axioms, plus supplementary FOL axioms) that such tools produce are reasoned over using established FOL Automated Theorem Provers (ATPs).

The ‘translate OWL to FOL’ strategy just described and the ‘translate OWL to Datalog’ strategy mentioned in Section 2.4 are instances of the same pattern: (i) translate OWL into logic space X; (ii) optionally extend OWL with supplementary knowledge expressible in logic space X; and (iii) reason using the logical inference technology established for logic space X. Such strategies widen the window of opportunity for leveraging the available OWL resources in NeSy frameworks.

Enabling NeSy Research Using OWL-Based KGs With NeSy4VRD

Sections 2 and 3 focus on inspiring more NeSy research using OWL-based KGs by highlighting their benefits, capabilities, and applications, especially with respect to reasoning, and particularly in symbolic deduction engine settings. But inspiration alone may not be enough. To undertake NeSy research with OWL-based KGs and reasoning in a practical way, researchers also need appropriate dataset resources. Resources are needed that combine data for neural learning with strongly-aligned, companion OWL ontologies describing the domains of the data in order to support directly pertinent symbolic OWL reasoning. Such resources are scarce. We suspect this scarcity represents a silent barrier that inhibits NeSy research using OWL-based KGs that might otherwise be undertaken. As well as echoing our observations, d’Amato et al. (2023) call for a central repository for such specialised resources in order to simplify their discovery. One resource of this kind (one which belongs in such a repository) is NeSy4VRD (neurosymbolic AI for visual relationship detection). NeSy4VRD was co-developed and published by the authors of this article (Herron et al., 2023) to help address the scarcity issue.

NeSy4VRD consists of the following components and services:

- the images of the original VRD dataset (Lu et al., 2016) (distributed with permission from one of the principals associated with its creation) in order to make them publicly available once again;
- quality-improved versions of the original VRD visual relationship annotations that have been comprehensively customised and extended to enable the engineering of a robust ontology;
- a strongly-aligned, custom-designed companion OWL ontology, called VRD-World, that precisely describes the domain of the images and visual relationships;
- sample Python code for loading the annotated visual relationships into a KG hosting the VRD-World ontology, and for extracting them from a KG and restoring them to their native format;
- support for extensibility of the annotations (and, thereby, the ontology) in the form of (a) comprehensive Python code enabling deep but easy analysis of the images and their annotations, (b) a custom, text-based protocol for specifying annotation customisation instructions declaratively and (c) a configurable, managed Python workflow for customising annotations in an automated, repeatable process;
- comprehensive documentation describing (a) how to use the extensibility support infrastructure, (b) how to share annotation/ontology extensibility projects undertaken by researchers in pursuit of their private research interests, (c) how to reuse shared extensibility projects and use the NeSy4VRD workflow to compose them in novel combinations and (d) how the ability to undertake, share, reuse and compose NeSy4VRD extensibility projects represents a new model of collaborative data annotation that we call Distributed Annotation Enhancement.

The NeSy4VRD dataset package (VRD images, quality-improved visual relationship annotations and companion VRD-World OWL ontology) is distributed on Zenodo.

The NeSy4VRD extensibility support infrastructure and comprehensive documentation are available on GitHub.

A central concern of NeSy AI research is to explore ways of combining neural learning with symbolic background knowledge and reasoning. OWL-based KGs are exemplars of symbolic knowledge representation and reasoning technology. They can do everything that general KGs can do in terms of representing symbolic knowledge and generating embeddings, plus they can perform sound deductive reasoning to both infer new knowledge and enforce logical consistency, and they can do so in the guise of symbolic deduction engines. Given these attractive features, OWL-based KGs warrant more research attention from the NeSy community than they have received. Their potential for contributing to NeSy AI is not being fully explored. By describing and illustrating their benefits, capabilities, and flexible applications, we have endeavoured to inspire more such research. By contributing NeSy4VRD – a specialised and scarce dataset resource – to the NeSy community, we hope to have lowered barriers to entry and thereby enabled more such research. A recent overview of NeSy systems (Sheth et al., 2023) reports success using an OWL-based KG to boost expert user satisfaction with large language model performance. Like us, the authors strongly advocate the use of KGs (general and OWL-based) as symbolic components in NeSy systems.

Acknowledgements

This work has been partially supported by the Academy of Medical Sciences Network Grant (Neurosymbolic AI for Medicine, NGR1

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.