Abstract

Using a novel dataset on Twitter activity as well as a novel corpus of law journal publications, this paper examines the impact of social media activity on the scholarly success of U.S. law professors. We find that joining Twitter increases citation counts by an average of 22% per year and improves article placements by up to 10 ranks for law professors, relative to a synthetic control group. These positive returns apply across nearly all classes of scholars and are magnified for those who post frequently about their own work. The identified citation boost would be even larger than 22% if it were not partially offset by a decline in citations to articles published pre-Twitter. Overall, our results suggest that social media participation yields concrete benefits in the legal academy—indeed, benefits outstripping those that prior studies have identified in other disciplines—along with a number of potential downsides.

1. Introduction

Over the past two decades, legal scholars have written extensively about their use of social media to disseminate ideas and engage with new audiences. Twitter (now X) has been a particular focus of attention (Becker, 2012; Risch, 2015). Familiar topics of debate include whether and to what extent professional norms ought to apply on platforms like Twitter (Hessick, 2018; Skinner, 2011), the ethical concerns raised by posting on legal issues (McPeak, 2019; Vinson, 2010), and the intellectual risks and rewards of participation (Duval, 2018; Goldberg, 2017; Walker, 2016). To date, however, evidence remains anecdotal.

In this paper, we present the first empirical study of the impact of social media activity for legal scholars, focusing primarily on academic citations and secondarily on article placements. We do this by creating a novel dataset on Twitter behavior among U.S. law professors and combining this information with citation panel data, which we derive from a novel, large corpus of law journal articles. The combined dataset enables us to measure how nearly all tenured and tenure-track professors at U.S. law schools have used Twitter, along with how their scholarship has fared according to two standard (if imperfect) metrics.

To isolate the causal significance of the decision to use Twitter, we construct a synthetic controls estimator for each law professor who joined the platform, based on a weighted aggregate of the 100 professors not on Twitter who had the most similar pre-Twitter citation profile. 1 Under a set of transparent and largely testable assumptions, the synthetic version of any given Twitter-using professor creates a plausible counterfactual for what their citations and placements would have looked like had they never used the platform. We then compare predicted citations and article placements for the professors on Twitter with their actual citations and placements. We also explore possible heterogeneity tied to the scholars’ personal characteristics, law school characteristics, primary field of scholarship, and other factors.

We find a significant and broadly distributed “Twitter bump” within the legal academy. On average, law professors on Twitter received 22% more citations per year than did their synthetic control counterparts and 137% more citations per year to articles published after joining Twitter. Citations to articles published by these professors before joining Twitter, however, went down relative to the control group—suggesting that their post-Twitter articles served as partial substitutes for their older articles with regard to citations. Law professors on Twitter also placed their articles in higher-ranked journals than did their synthetic control counterparts, with an average placement 10 ranks higher than their pre-Twitter trajectory would have predicted. By and large, these benefits from Twitter did not vary based on scholars’ race, gender, area of focus, law school rank, length of time in the academy, follower count, or number of tweets, although they were magnified in the case of professors who used Twitter to promote their own research.

These results demonstrate that social media participation has become an important driver of scholarly success in law, for better and worse. The lack of heterogeneous effects across most classes of professors is consistent with the optimistic view of social media as a means to democratize and diversify the marketplace of ideas. At the same time, the rewards of social media participation risk fueling greater presentism, self-promotion, and self-commodification within the academy. Key questions for future research include whether and how the relationship between social media activity and scholarly success will change in the wake of Twitter’s ongoing transformation, and why Twitter seems to have generated professional benefits so much larger in law than in other academic disciplines.

Our findings build on a growing body of literature that considers the impact of social media on scholarly success across various disciplines. Researchers have identified a positive relationship between Twitter mentions of an academic article and citations to that article in coloproctology (Jeong et al., 2019), communications (Chan et al., 2022), ecology (Lamb et al., 2018; Peoples et al., 2016), ornithology (Finch et al., 2017), political science (Klar et al., 2020), physics (Shuai et al., 2012), and numerous other fields (Barakat et al., 2018; Chau et al., 2021; Deshpande et al., 2022; Eysenbach, 2011; Halvorson et al., 2023; Hayon et al., 2019; Parwani et al., 2020; Weissburg et al., 2024). Yet because these studies are observational in nature, they do not allow causal inferences to be drawn, as any correlations between Twitter mentions and scholarly impact indicators may reflect unobserved attributes of the articles at issue (novelty, quality, importance of the result, reputation of the author, and so on) rather than the influence of social media. And even these correlations have in many instances been “weak or negligible” (Fang et al., 2021, p. 918; see also Haustein et al., 2014; Winter, 2015).

Using an instrumental variable strategy, two studies have found that Twitter activity about research papers in economics leads to an increase in citations (Chan Ho et al., 2023; Sofer, 2024). 2 Three studies using randomized designs have likewise found that tweeted articles receive more citations than do untweeted articles from the same journals, in cephalalgia (Peres et al., 2022) and cardiovascular medicine (Ladeiras-Lopes et al., 2022; Luc et al., 2021). At least three other studies that randomly selected articles for Twitter promotion, however, have found no detectable citation boost, in life sciences (Branch et al., 2024), neurosurgery (Vieli et al., 2024), and public health (Tonia et al., 2016). Overall, the picture that emerges from the extant literature remains “inconclusive” (Chan et al., 2022, p. 4), further underscoring the need for the present inquiry. 3

2. Data and Methods

2.1. Dataset and Descriptives

To be able to study scholars’ academic impact in relation to their social media activity, we first had to create three different datasets: on legal scholars, legal scholarship, and the Twitter behavior of legal scholars. Our focus is on professors based at U.S. law schools.

2.1.1. Legal Scholars Database

To generate a dataset of legal scholars and their university affiliations, we relied on the Association of American Law Schools’ (AALS) annual Directory of Law Teachers, starting with the 1979–1980 edition. 4 Our data extraction process exploited the directories’ formatting. Each page contains two columns listing law teachers and their positions, with indentation. School names are indicated at the top of the column as necessary but are omitted when they are the same as the previous column or page. Thanks to the directories’ consistent layouts, we were able to create layout-specific optical character recognition (OCR) algorithms to turn the scanned pages into machine-encoded text and then extract names and university affiliations for all tenured and tenure-track faculty members. 5 The spelling of names and universities is not always consistent across AALS directories, and the OCR process leads to occasional misspellings. We therefore used a combination of algorithmic 6 and manual corrections to standardize all named entities. The result is a panel dataset at the scholar-year level for 19,526 scholars. To rank the associated law school at the scholar-year level, we relied on each school’s U.S. News & World Report ranking over time. 7
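The text does not specify which algorithm was used for the automated part of the name standardization. Purely as an illustration of how OCR-damaged spellings can be mapped to a canonical list, the following sketch uses fuzzy string matching from Python's standard library. The function names, the similarity cutoff of 0.85, and the sample names are all assumptions made for this example, not details from the study.

```python
import difflib
import re
from typing import Dict, List


def normalize(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    name = re.sub(r"[^\w\s]", " ", name.lower())
    return " ".join(name.split())


def standardize_names(raw: List[str], canonical: List[str],
                      cutoff: float = 0.85) -> Dict[str, str]:
    """Map each raw (possibly misspelled) name to its closest canonical spelling.

    Names with no candidate at or above `cutoff` map to themselves, so that
    they can be queued for manual review instead of being merged silently.
    """
    lookup = {normalize(c): c for c in canonical}
    mapping: Dict[str, str] = {}
    for name in raw:
        hits = difflib.get_close_matches(
            normalize(name), list(lookup), n=1, cutoff=cutoff
        )
        mapping[name] = lookup[hits[0]] if hits else name
    return mapping


# Hypothetical example data.
canonical = ["John A. Smith", "Jane Doe"]
raw = ["Jonh A. Smith", "JANE DOE", "Maria Gonzalez"]
mapping = standardize_names(raw, canonical)
```

A conservative cutoff trades recall for precision: fewer names are merged automatically, and more are left for the manual pass.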

2.1.2. Legal Scholarship Database

To generate a dataset on legal scholarship, we purchased the entirety of all legal academic articles available through Lexis in 2022. This includes both the text of each article and the associated metadata. The corpus comprises 167,881 articles from 186 publications, starting in 1954. 8 The majority of publications are student-edited law reviews, although the corpus also includes peer-reviewed legal journals such as the Journal of Legal Analysis and the Journal of Legal Studies. From the text of these articles, we first extracted all scholarship citations using the open-source tool eyecite. 9 We then generated a citation network that tracks the flows of citations between articles and scholars over time. Because the corpus does not include nonlegal journals, our citation network omits references to and from publications outside the legal domain. In other words, the citations used in our study are strictly internal to our corpus. To rank journals, we relied on the combined score in the Washington and Lee rankings from 2021. 10
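To make the step from extracted citations to a citation panel concrete, the sketch below aggregates citation records into yearly counts per cited author. It assumes that citation extraction and author resolution have already been done upstream. The record layout and function names are hypothetical and chosen only for illustration.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

# Assumed record layout: (citing_article_id, year_of_citing_article, cited_author)
Citation = Tuple[str, int, str]


def build_citation_panel(citations: Iterable[Citation]) -> Dict[Tuple[str, int], int]:
    """Count the citations each author receives in each year."""
    panel: Counter = Counter()
    for _citing_id, year, cited_author in citations:
        panel[(cited_author, year)] += 1
    return dict(panel)


def author_series(panel: Dict[Tuple[str, int], int], author: str,
                  first_year: int, last_year: int) -> List[int]:
    """Return a gap-free yearly series, filling years without citations with 0."""
    return [panel.get((author, y), 0) for y in range(first_year, last_year + 1)]


# Hypothetical example data.
citations = [
    ("a1", 2010, "Doe"),
    ("a2", 2010, "Doe"),
    ("a3", 2012, "Doe"),
    ("a3", 2012, "Roe"),
]
panel = build_citation_panel(citations)
doe = author_series(panel, "Doe", 2010, 2012)  # [2, 0, 1]
```

Because only citing articles inside the corpus generate records, counts built this way are internal to the corpus, as noted above.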

2.1.3. Legal Scholars Twitter Database

To track the behavior of legal scholars on Twitter, we began with the 2021–2022 edition of the Law Professor Twitter Census, accessible through The Faculty Lounge website (Crawford, 2021). This census relies on self-reporting and reporting by other users to track the Twitter handles of full-time faculty members at law schools. The census is therefore incomplete, although it is the most comprehensive such list of which we are aware. 11 Foreshadowing our analysis, we note here that in the absence of selection effects, the underinclusiveness of the Law Professor Twitter Census should bias any estimates of a “Twitter bump” downward, rendering them particularly conservative. 12 After cleaning the data and deleting nonexistent Twitter handles, we removed scholars outside the United States. The final list contains 1,133 Twitter handles with associated faculty names. We successfully matched 1,086 scholars to entries in the AALS directories. For each handle, we used the Twitter Research API (now discontinued) to extract all public tweets by each law professor through September 2022. This includes the text of the tweet as well as some metadata, such as the frequency with which a tweet was “liked” or shared. The Twitter Research API does not include deleted tweets, but rather presents a momentary snapshot of available tweets during the time in which it was accessed. Importantly, we also collected the year in which each Twitter handle was created.

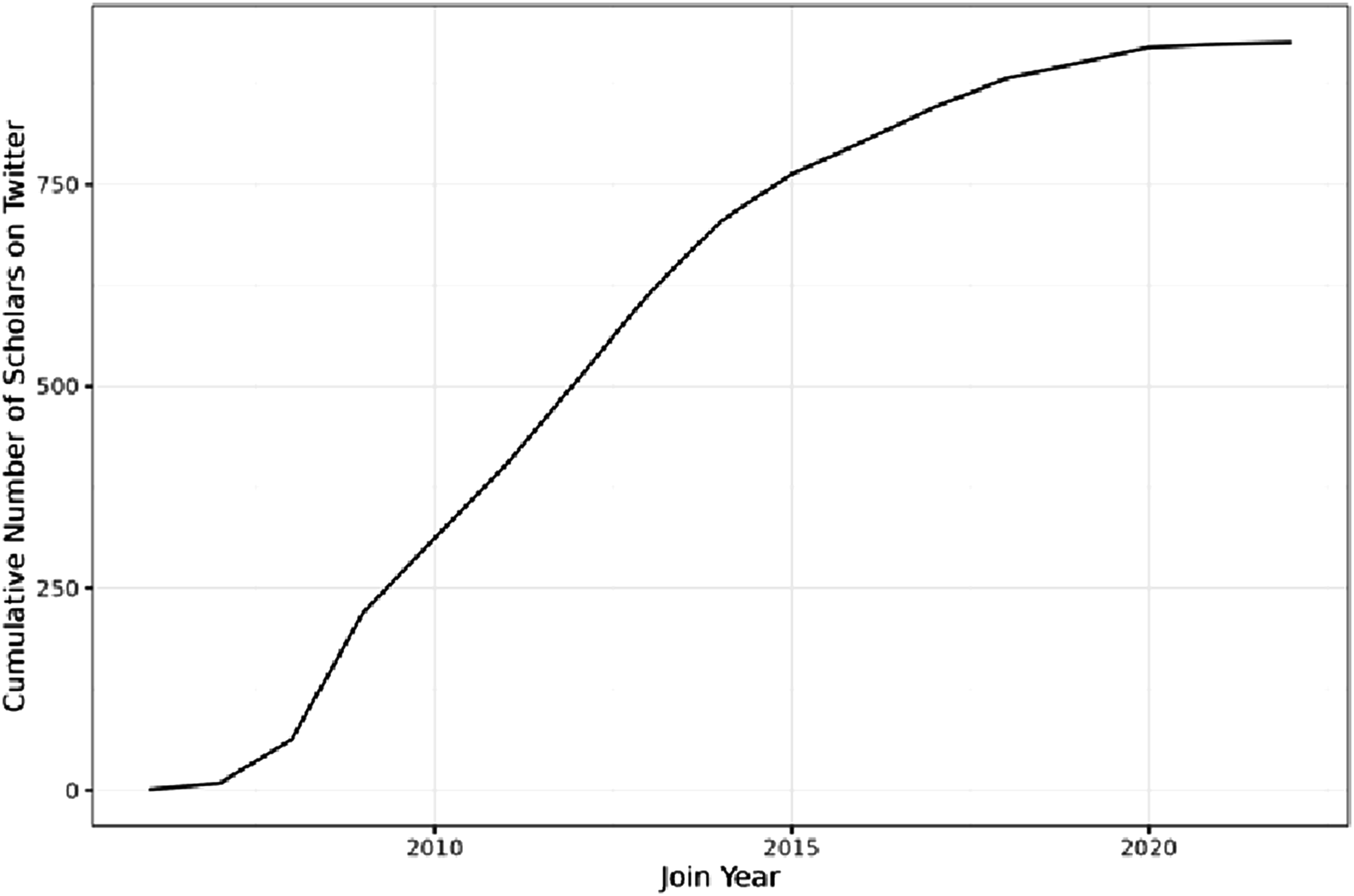

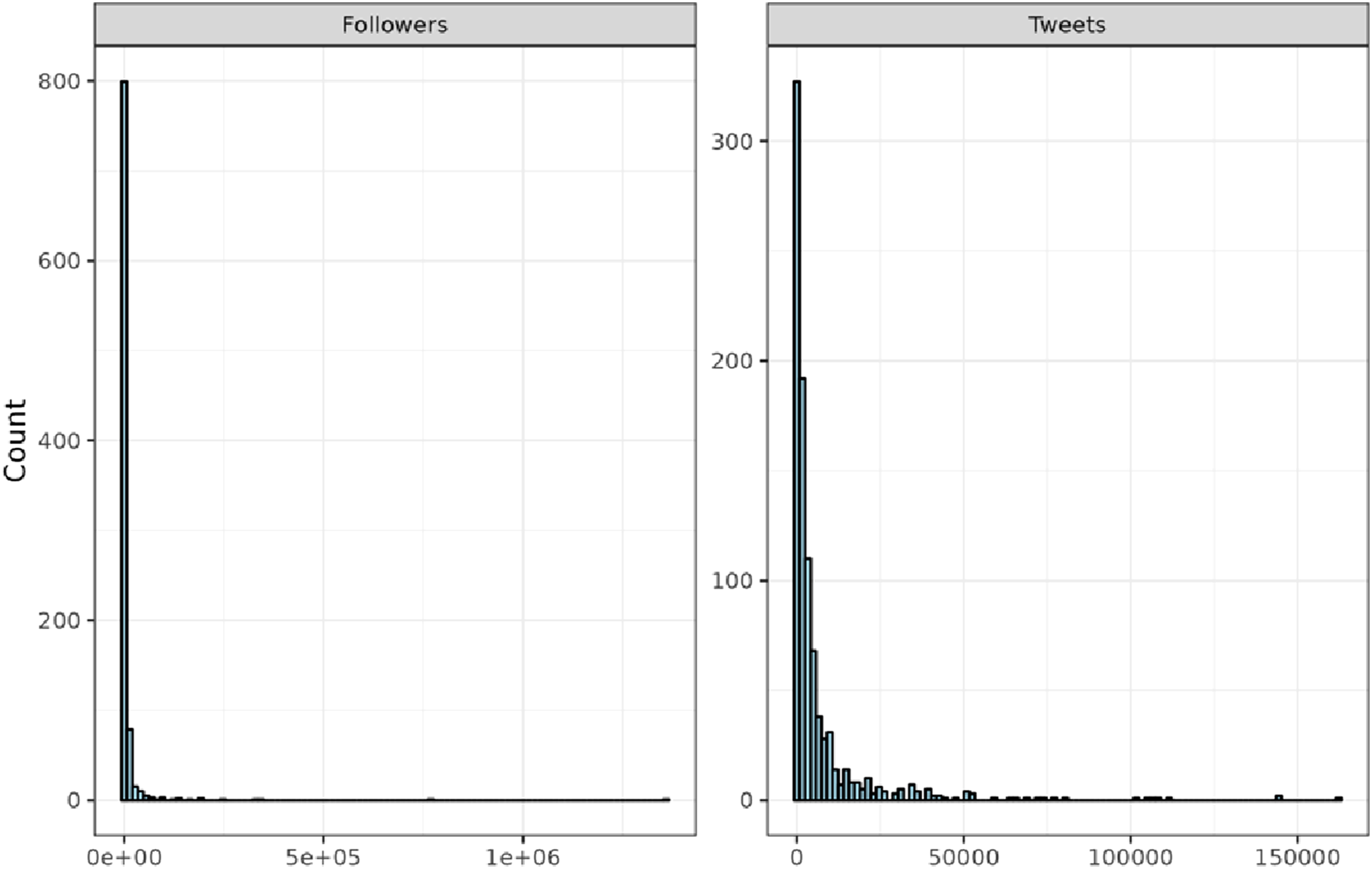

Figure 1 depicts the cumulative number of legal scholars on Twitter over time. The first scholar joined in 2006, the year Twitter went live. Eight more joined in 2007, and 54 joined in 2008. The largest single-year increase came in 2009, with 157 scholars. Figure 2 depicts histograms of the number of followers and tweets at the scholar level. The data are heavily right-skewed: most scholars sit at the low end of the distribution, and only 11 exceed 100,000 Twitter followers. Laurence Tribe has by far the largest Twitter following in the dataset with 1.3 million; after Tribe comes Richard Painter with over 767,000 followers.

Figure 1. Cumulative Number of Legal Scholars on Twitter

Figure 2. Histogram of Scholars by Number of Followers (Left) and Tweets (Right)

2.1.4. Combining the Datasets

In a final step, we combined all datasets. After standardizing names across the AALS directories, Lexis, and the Twitter census, we used the resulting standardized spelling as a unique identifier of scholars across the three. 13 The result is a panel dataset at the author-year level for 10,403 scholars. 14

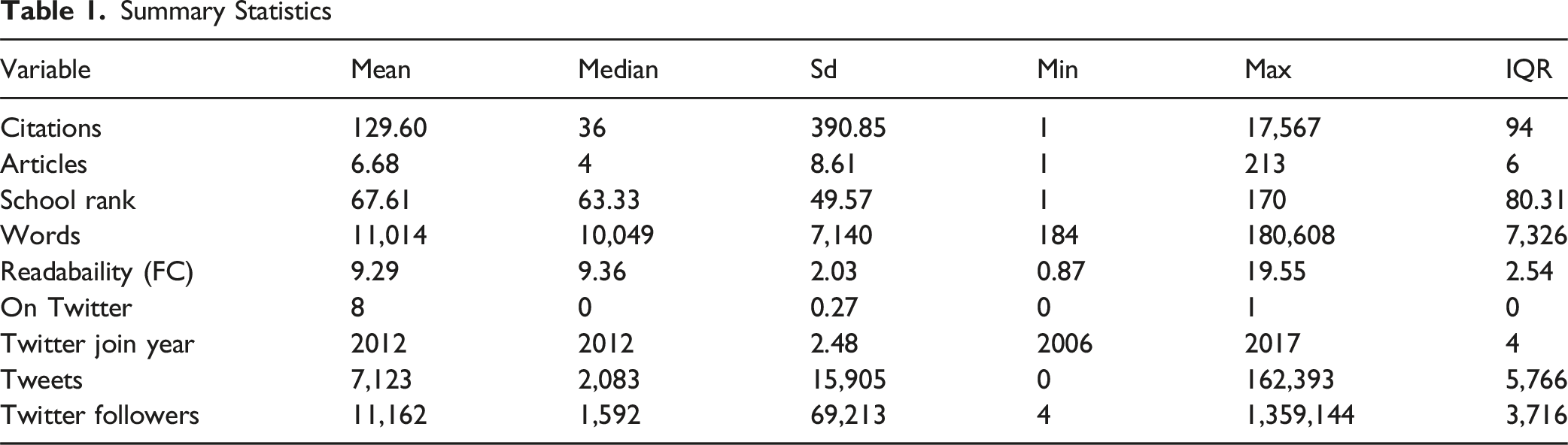

Summary Statistics

2.2. Methodology

Our analysis seeks to identify the professional benefits that legal scholars receive from joining Twitter. At heart, the question that motivates our inquiry is causal in nature, yet we study it using observational data. In such situations, it is common for researchers to avoid causal language and instead argue that the object of inquiry is a conditional correlation. We believe that the better approach in this case is to use causal language in a transparent fashion, with a clear account (provided at the end of this section) of the assumptions the reader needs to accept in order to interpret our results causally. 15

One approach to studying the impact of Twitter on citations might compare citation rates of scholars before they join the platform with rates afterward. Such comparisons, however, would not take into account any time trends that coincide with the joining of Twitter. For instance, if a scholar’s citation rates were already on an upward trajectory prior to joining, this approach would inappropriately attribute to Twitter any subsequent continuation of this trend. A second approach might compare post-Twitter citation rates of scholars who are on the platform with rates of scholars who are not (for example, Deshpande et al., 2022). However, this approach runs the risk of serious endogeneity concerns stemming from omitted variable bias, as the choice to join Twitter is not random. Any observed difference could therefore be driven by other variables that correlate with this choice, such as a tendency to write more popular or provocative scholarship.

We take a third approach. As explained above, our final dataset is a panel in which we observe both scholars who are on Twitter and scholars who are not on Twitter repeatedly over time. We consider the joining of Twitter as the relevant “treatment,” so that scholars who eventually join Twitter are our “treated” units and scholars who never join Twitter are our “control” units. With this framework in place, our analysis focuses on estimators for causal inference with panel data. Among the different estimators, we choose the synthetic controls estimator introduced by Abadie et al. (2010) because of its intuitiveness, its comparatively low computational cost, and its robustness to overfitting as compared to more complex estimators. 16

For any given treated scholar, relevant scholars in the control group are gathered in a “donor pool.” To ensure computational feasibility and to reduce difficulties resulting from overfitting with too many control units (Abadie, 2021), we restrict each donor pool to the 100 scholars who have the smallest difference with the treated scholar in cumulative pre-Twitter citations. The control units in the donor pool are then weighted to generate a synthetic control observation for the treated scholar. The weights are chosen so that the synthetic control unit’s outcomes resemble the treated unit’s outcomes prior to the intervention as closely as possible, subject to certain constraints. 17 Because the synthetic control unit is a weighted combination of scholars who never join Twitter, comparing the post-treatment outcomes of the treated scholar with the post-treatment outcomes of the (synthetic) control scholar yields an estimate for the treatment effect. Following recommendations (Ferman, 2021) and common practice in the literature (Billmeier & Nannicini, 2013; Donohue et al., 2019; Kreif et al., 2016), our matching variables in applying this method are pre-treatment outcomes. Importantly, this approach avoids the potential for specification searches and p-hacking (Ferman et al., 2020). We fit the model on a period of up to ten years prior to the year in which the Twitter handle is created, and we observe outcomes for up to ten years after scholars join Twitter.
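The procedure described above can be sketched in a few lines for a single treated scholar. This is a minimal illustration under stated assumptions, not the implementation used in the study. The weights are assumed to be nonnegative and to sum to one (the standard constraints in Abadie et al., 2010, since the text says only "certain constraints"), and they are fitted with a simple projected gradient solver chosen here because it needs no external libraries. All function names and the toy data are hypothetical.

```python
from typing import Dict, List, Sequence


def project_to_simplex(v: Sequence[float]) -> List[float]:
    """Euclidean projection of v onto {w : w_i >= 0, sum(w) = 1}."""
    u = sorted(v, reverse=True)
    cumulative, theta = 0.0, 0.0
    for j, u_j in enumerate(u, start=1):
        cumulative += u_j
        candidate = (cumulative - 1.0) / j
        if u_j - candidate > 0:
            theta = candidate  # the last j that passes defines the threshold
    return [max(x - theta, 0.0) for x in v]


def select_donor_pool(treated_pre: Sequence[float],
                      controls_pre: Dict[str, Sequence[float]],
                      k: int = 100) -> List[str]:
    """Keep the k controls closest to the treated unit in cumulative
    pre-treatment citations."""
    target = sum(treated_pre)
    ranked = sorted(controls_pre,
                    key=lambda name: abs(sum(controls_pre[name]) - target))
    return ranked[:k]


def fit_weights(treated_pre: Sequence[float],
                donors_pre: Sequence[Sequence[float]],
                n_iter: int = 5000) -> List[float]:
    """Minimize the squared pre-treatment gap over the simplex by
    projected gradient descent (an assumed solver, see lead-in)."""
    n, periods = len(donors_pre), len(treated_pre)
    w = [1.0 / n] * n
    frob = sum(x * x for d in donors_pre for x in d)
    step = 1.0 / (2.0 * frob) if frob > 0 else 1.0  # 1 / (Lipschitz bound)
    for _ in range(n_iter):
        resid = [sum(w[j] * donors_pre[j][t] for j in range(n)) - treated_pre[t]
                 for t in range(periods)]
        grad = [2.0 * sum(resid[t] * donors_pre[j][t] for t in range(periods))
                for j in range(n)]
        w = project_to_simplex([w[j] - step * grad[j] for j in range(n)])
    return w


def synthetic_series(weights: Sequence[float],
                     donors: Sequence[Sequence[float]]) -> List[float]:
    """Weighted combination of donor outcomes, period by period."""
    return [sum(w * d[t] for w, d in zip(weights, donors))
            for t in range(len(donors[0]))]


# Toy data: the treated pre-period path equals 0.25 * A + 0.75 * B.
controls_pre = {"A": [1.0, 2.0, 3.0, 4.0],
                "B": [4.0, 3.0, 2.0, 1.0],
                "C": [10.0, 10.0, 10.0, 10.0]}
controls_post = {"A": [5.0, 6.0], "B": [1.0, 1.0], "C": [10.0, 10.0]}
treated_pre = [3.25, 2.75, 2.25, 1.75]
treated_post = [3.0, 3.0]

pool = select_donor_pool(treated_pre, controls_pre, k=2)
weights = fit_weights(treated_pre, [controls_pre[n] for n in pool])
predicted_post = synthetic_series(weights, [controls_post[n] for n in pool])
# Relative gap between actual and predicted post-treatment outcomes.
relative_gap = [(a - p) / p for a, p in zip(treated_post, predicted_post)]
```

In the toy data the donor pool is limited to two controls for readability; the study uses 100. Averaging the per-scholar gaps across treated scholars, period by period, would give an estimate in the spirit of the ATT described in the text.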

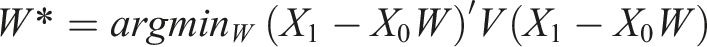

Formally, let

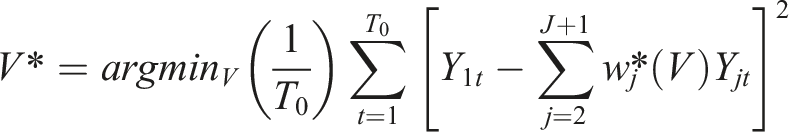

To choose

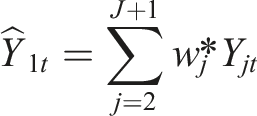

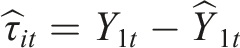

Our estimator is the average treatment effect on the treated scholars (ATT). For each period

The estimated treatment effect in period

To conduct inference, we employ a placebo analysis. Under this placebo analysis, we remove the treated unit and expand the donor pool by one additional control unit. We then iteratively pick a control unit from the donor pool, label it as a “pseudo-treated” unit, and implement the synthetic controls estimator. We repeat this process for every unit in the donor pool. Intuitively, this process allows us to ascertain which estimates our estimator yields under the assumption that the true treatment effect is 0. When we compare this with the actual estimate for the “treated” unit, it allows us to infer whether the observed effect is statistically different from 0.
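The comparison between the treated estimate and the placebo estimates can be summarized as a p-value. The text does not state the exact convention used, so the sketch below adopts one common choice as an assumption: a two-sided, permutation-style p-value that counts the treated unit among the draws. The function name and the example values are hypothetical.

```python
from typing import Sequence


def placebo_p_value(treated_effect: float,
                    placebo_effects: Sequence[float]) -> float:
    """Share of estimates (placebos plus the treated unit itself) that are
    at least as large in absolute value as the treated estimate."""
    extreme = sum(1 for e in placebo_effects if abs(e) >= abs(treated_effect))
    return (extreme + 1) / (len(placebo_effects) + 1)


# Hypothetical placebo estimates for nine pseudo-treated control units.
placebos = [0.01, -0.03, 0.05, -0.02, 0.04, -0.06, 0.02, 0.00, -0.01]
p = placebo_p_value(0.22, placebos)  # 0.1
```

With this convention the smallest attainable p-value is 1 / (n + 1), so the resolution of the test depends directly on the number of placebo units.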

Like any causal estimator, the synthetic controls estimator relies on a number of assumptions that need to be fulfilled in order for the estimates to be interpreted causally (Abadie et al., 2010). Four assumptions have direct implications for our analysis. First, the method assumes that the treated unit can be well approximated through the donor pool (“convex hull assumption”). In our data, we observe that the three most frequently cited scholars, Cass Sunstein, Mark Lemley, and Lawrence Lessig, all joined Twitter. Their citation counts are so anomalously high that they cannot be well approximated by units in the donor pool. We thus omit these three scholars from our study. We also omit two scholars for whom joining Twitter coincided with other notable events that led to a drastic decline in citations. 18

Second, the method assumes sufficient data in the pre-treatment period to generate plausible synthetic control units. To that end, we remove treated scholars with fewer than 30 total pre-Twitter citations out of concern that estimates for these scholars would be too speculative. This group includes 175 scholars.

Third, the synthetic controls method relies on the assumption that there are no time-varying, unobserved confounders—in other words, that the relationship between the treated and the synthetic control unit would have remained the same as in the pre-treatment period, absent treatment. This assumption would be violated in the presence of “shocks” that coincide with the treatment and that differentially affect treated and control units. One potentially plausible such shock would be present if scholars who join Twitter simultaneously undergo other changes. For instance, some scholars may choose to join Twitter as a byproduct of a larger choice to enhance their impact on popular discourse or public policy. In that case, the joining of Twitter may coincide (for example) with a decision to write articles that are shorter or more generally accessible, which in turn may affect citations through channels other than the joining of Twitter itself. Although the assumption of the absence of time-varying, unobserved confounders is inherently unverifiable, we conduct some additional analyses to explore its validity. These results provide suggestive evidence that the scholarly output of law professors on Twitter did not undergo changes consistent with the intention of broadening public appeal.

The fourth and last important assumption is that no “anticipatory effects” are present, such that the treated scholars (who join Twitter) change their behavior in anticipation of and prior to the treatment. Because signing up for a social media platform is typically a private decision that goes unannounced ex ante, we do not see any good reason to assume anticipatory effects in our setting. In addition, we note that a violation of this assumption generally biases estimates downward. 19

We also include main results using the alternative general synthetic controls estimator with interactive fixed effects (Xu, 2017). This estimator is sometimes preferred for aggregated data with many treated units (Clarke et al., 2023; Xu, 2017). However, because this estimator cannot adequately address subgroup analyses, we employ it only for robustness tests. 20

3. Results

Our primary outcome of interest is citations, as the most direct and familiar measure of academic success. We begin by exploring the impact of Twitter on citation counts, with particular attention to heterogeneity across different types of scholars. We then focus on secondary outcomes, including article placements (as another proxy for scholarly success) and article characteristics such as length and complexity (as a proxy for scholarly style).

3.1. Main Results: Citation Counts

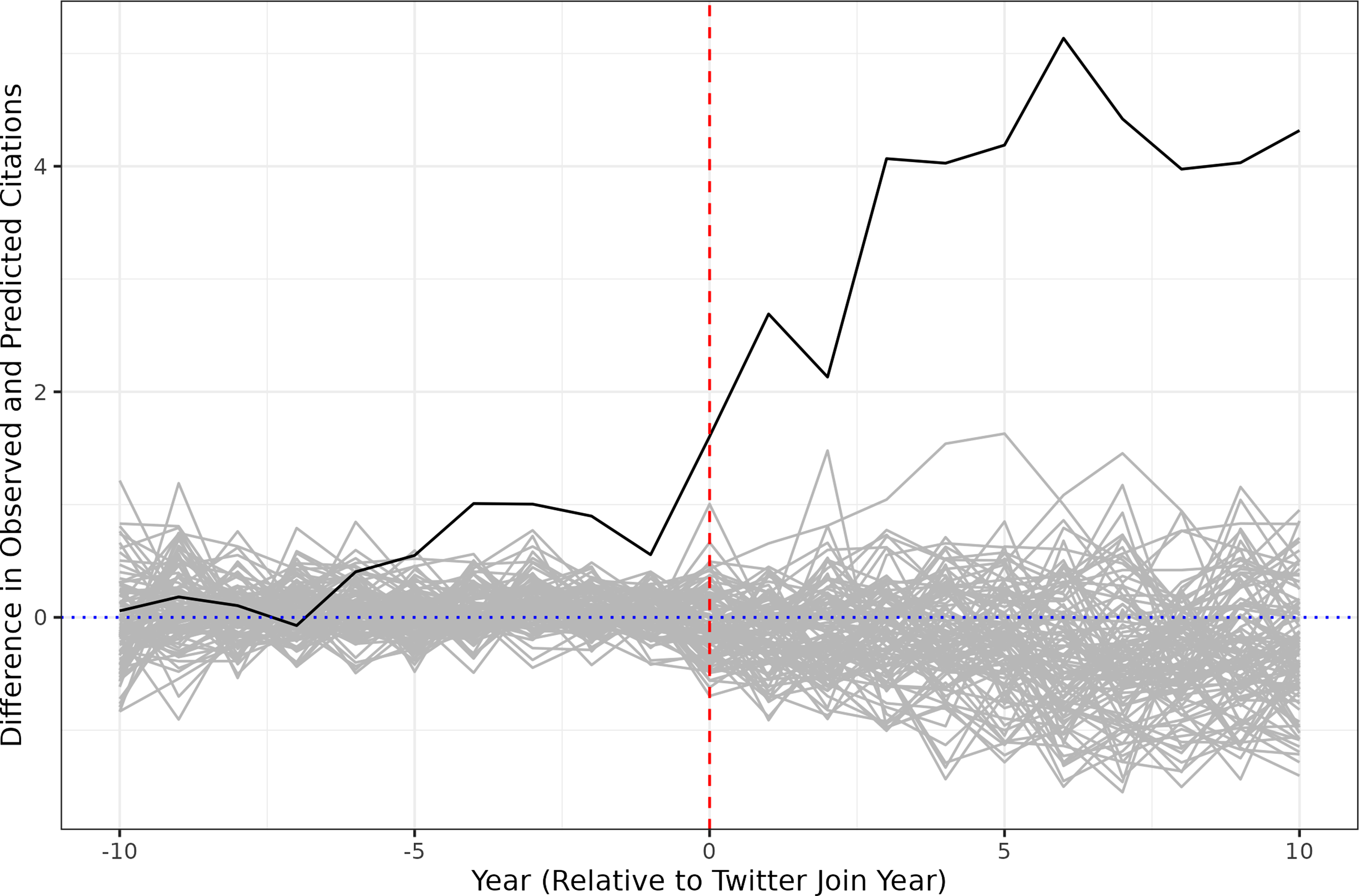

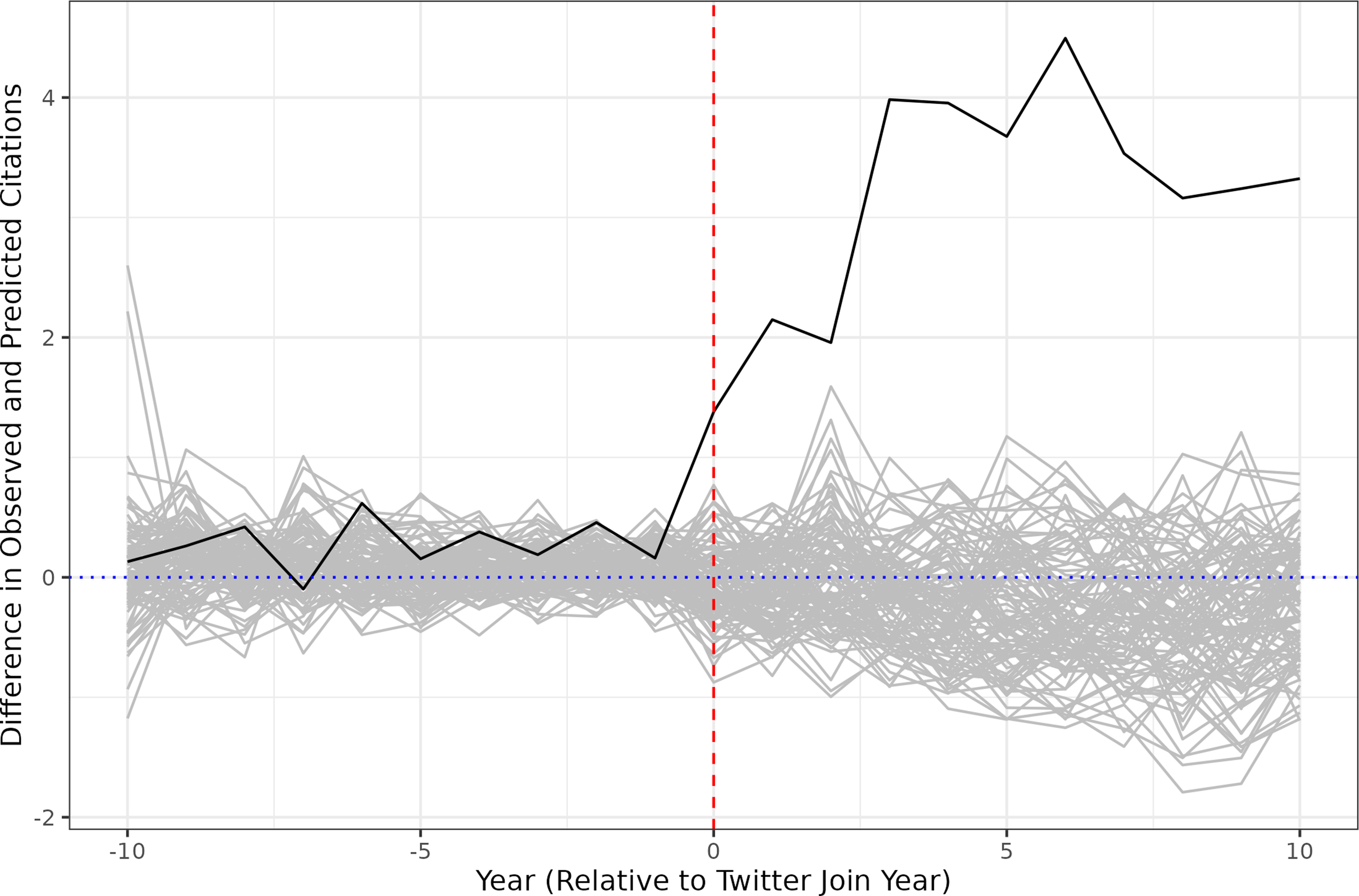

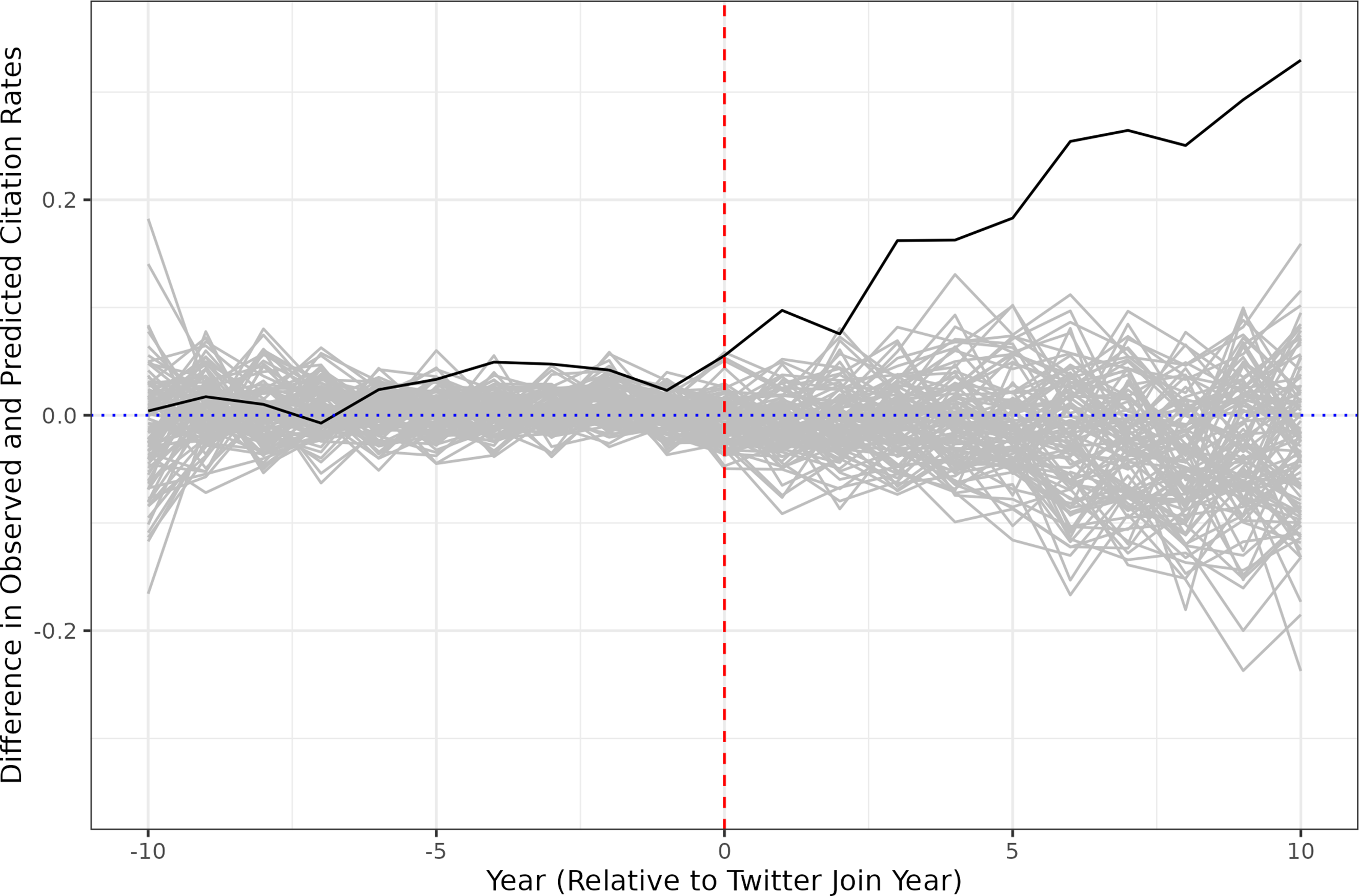

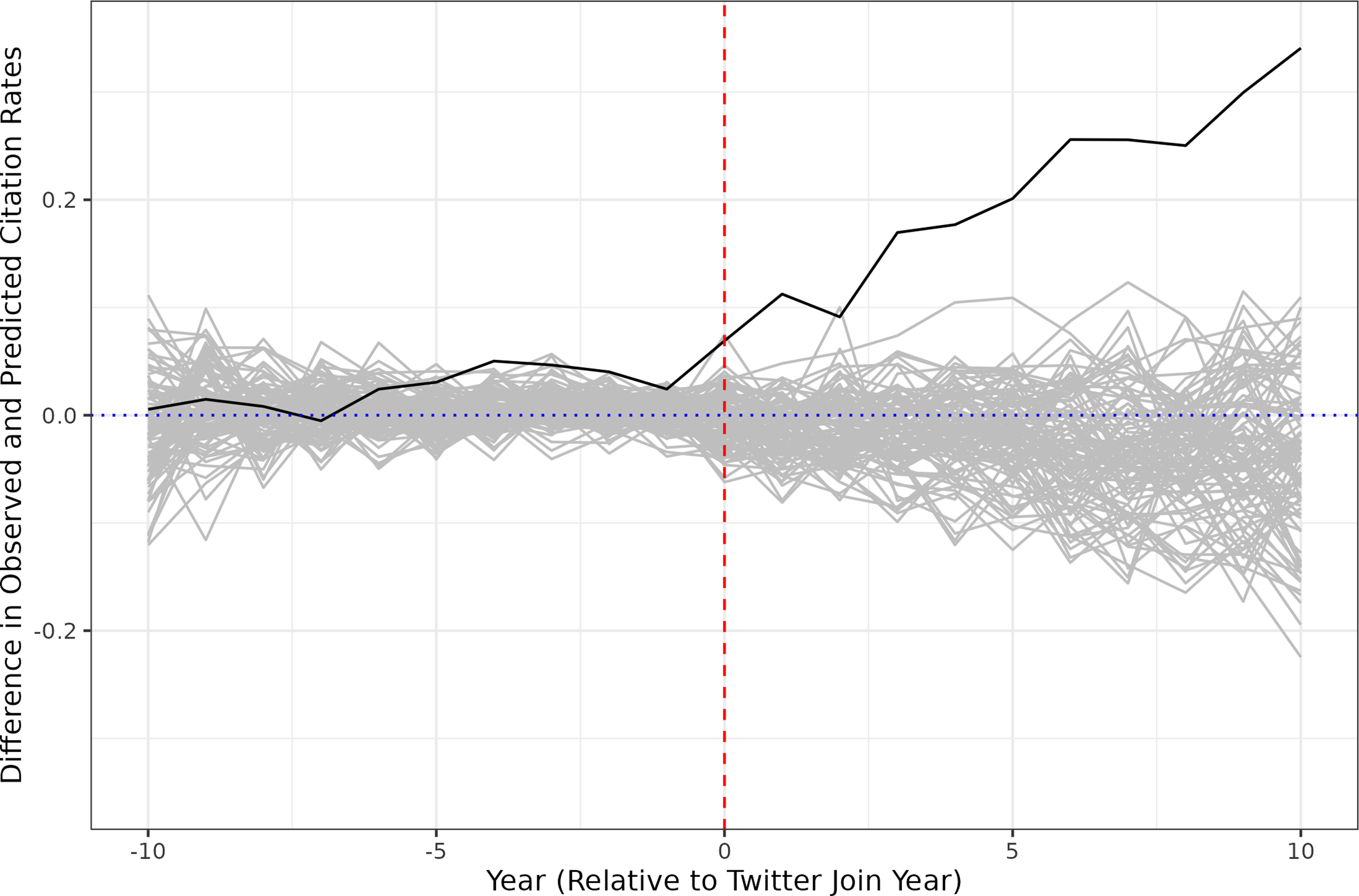

Figure 3 shows the impact of Twitter on citation counts for legal scholars who joined the platform. The x-axis depicts years, with 0 marking the year in which a scholar’s Twitter handle was created. The y-axis depicts the relative difference between actual and predicted citation counts, where the predicted citation count is based on the synthetic controls method applied at the scholar level. 21 The black line represents the average difference across all scholars on Twitter. The gray lines are derived from a placebo analysis that applies the same methodology to scholars not on Twitter.

Figure 3. Synthetic Controls Estimates

As can be seen in Figure 3, prior to joining Twitter, the predicted citations for scholars on Twitter are consistent with their actual citations. After joining, however, there is an immediate, dramatic increase in citation frequency that far exceeds the anticipated level based on the pre-treatment trajectory. This increase also far exceeds anything that is observed in the placebo analysis, suggesting that it is not the result of random chance. Across all scholars on Twitter, citations increase by an average of 22% per year in the post-treatment period.

We note that there is an upward trend in the pre-treatment window starting around the fifth year prior to the intervention, a trend that is even more pronounced in the raw citation counts (Figure A.1). This pre-treatment increase could demonstrate that some scholars are changing their research production process in the runup to joining Twitter. In that case, at least some of the measured treatment effect could plausibly be attributed not to social media itself, but to preceding shifts in scholarly work. To assess the magnitude of this issue, we conduct an additional analysis. Specifically, we apply the synthetic controls method under the assumption that the treatment occurred not in

In our main analysis, we omit scholars with fewer than 30 citations under the assumption that a significant citation history is required to employ the synthetic controls method effectively. In the Appendix, we provide results when excluding scholars with fewer than 15 citations (Figure A.4) and fewer than 50 citations (Figure A.5), showing that our results are not sensitive to this parameter. Under our robustness test employing the generalized synthetic controls method (Figure A.6), the average citation increase is as high as 40% (p = 0.02).

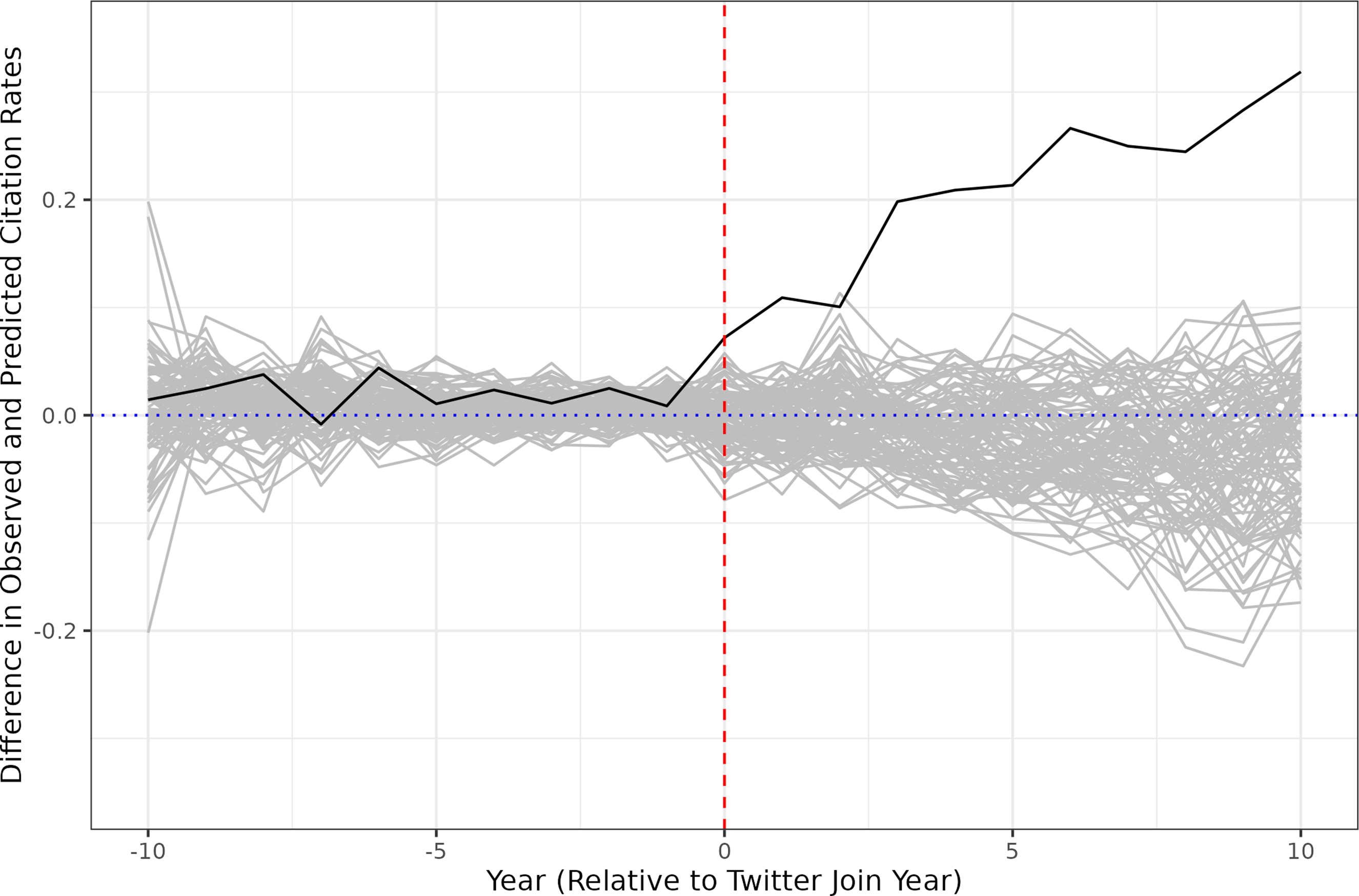

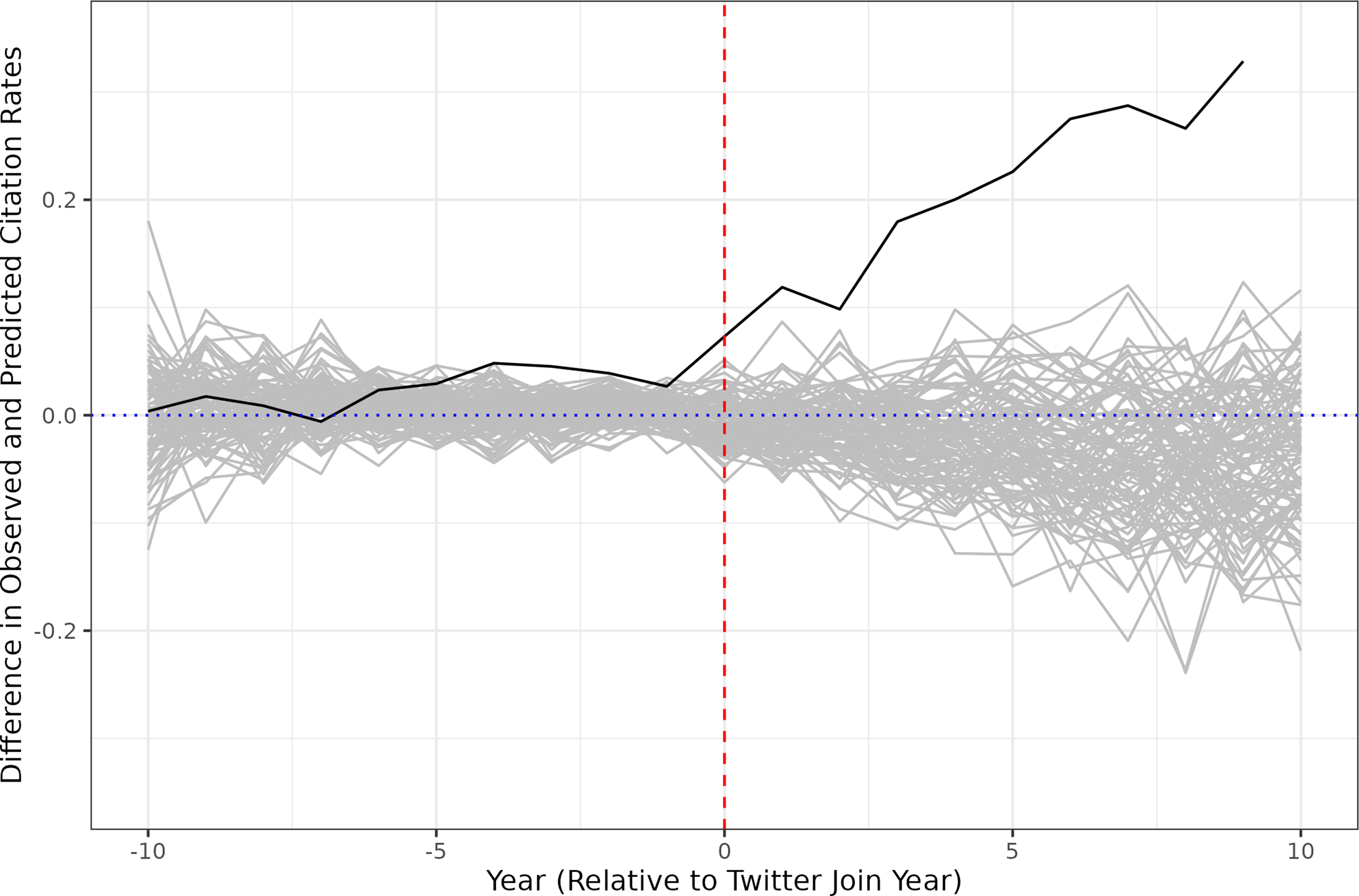

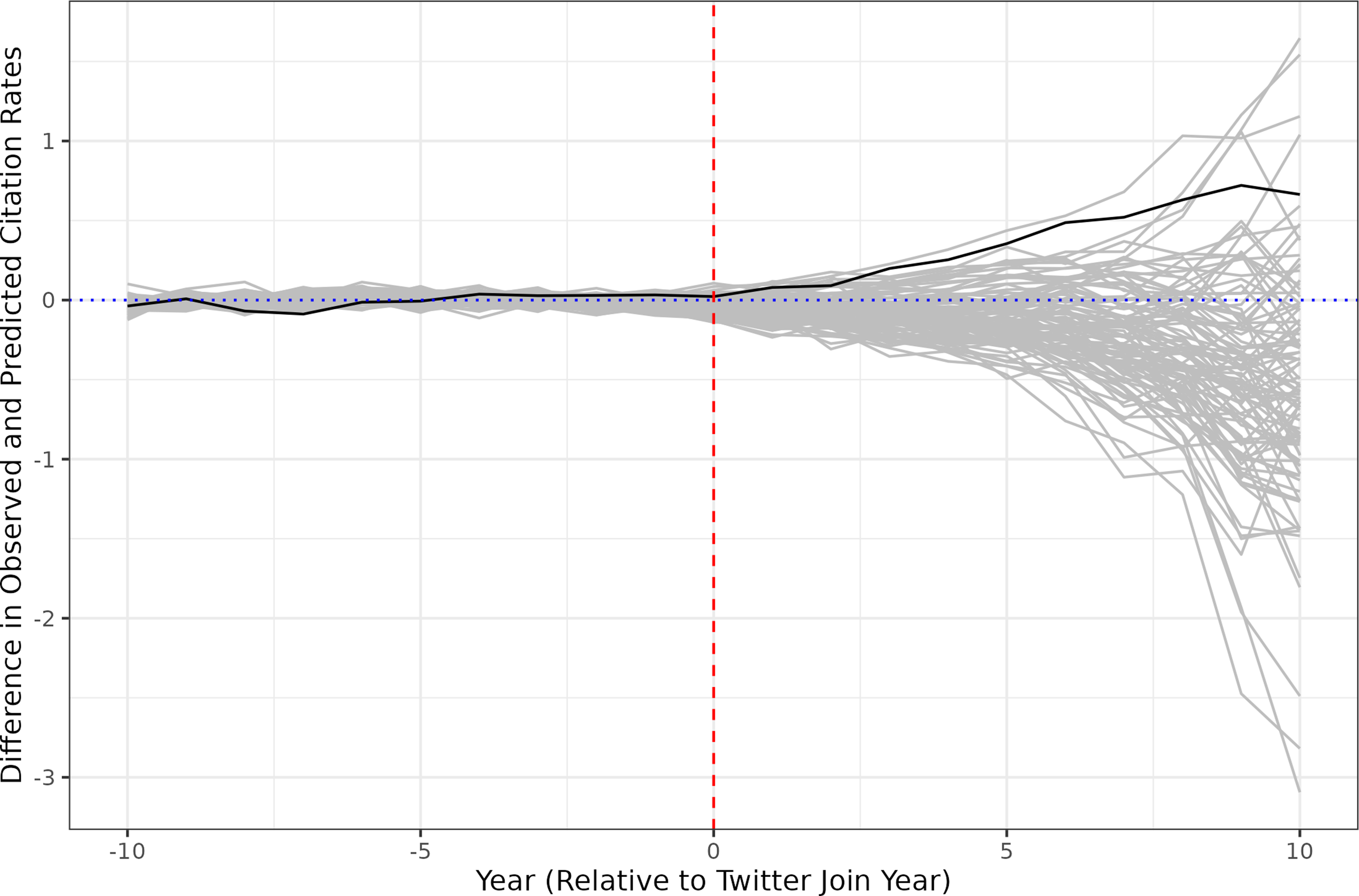

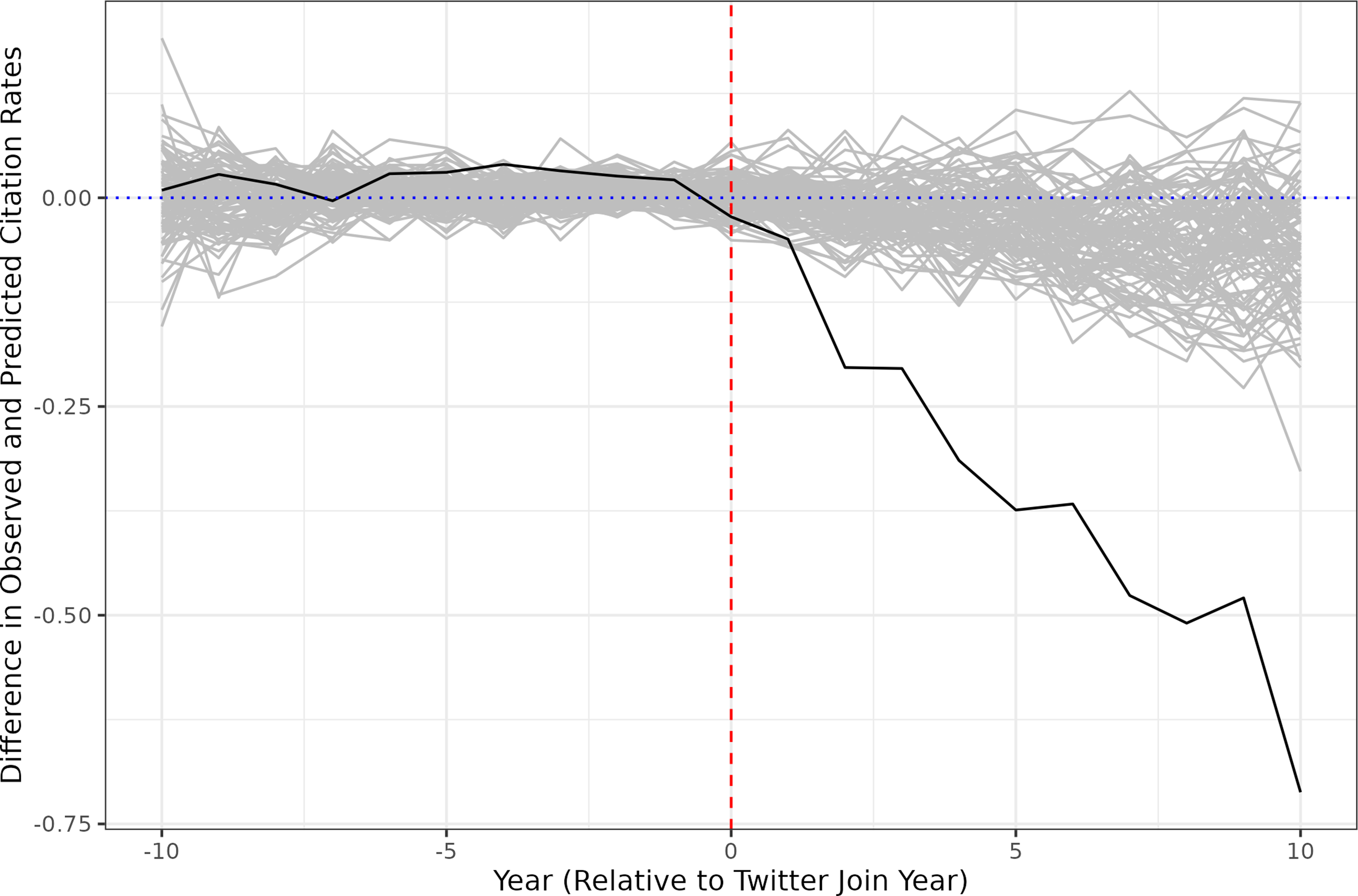

The results displayed in Figure 3 raise the question whether the increase in citations is primarily driven by new articles that have been published after scholars join Twitter, or whether there is a similar increase in citations to articles published prior to their joining. We investigate this question in Figure 4, which depicts the impact of Twitter on citations to pre-Twitter articles only. 22

Figure 4. Synthetic Controls Estimates for Pre-Twitter Articles

We observe a sharp decline in citations. In the ten years after they join Twitter, legal scholars receive on average 37% fewer citations per year to their pre-Twitter articles. This suggests that our main results in Figure 3 are the combination of two distinct developments: First, after scholars join Twitter, other scholars cite their pre-Twitter articles less than expected. Second, after scholars join Twitter, other scholars cite their post-Twitter articles more than expected—much more. The increase in citations to new articles is large enough to offset the decrease in citations to old articles and still result in an overall positive effect.

The striking difference in Twitter’s impact on newer versus older work suggests that different articles by the same scholar may be acting as substitutes for one another in the marketplace for citations. One reason for this phenomenon could be that multiple articles support the same or similar propositions, and Twitter shifts attention to newer articles. Another explanation could be that citations are driven in part by a desire to acknowledge, appease, or draw the attention of particular scholars based on who they are, rather than what they have written. In that case, a reference to any of these scholars’ works fulfills its function, with Twitter prompting exposure and citations to more recent work.

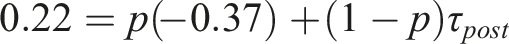

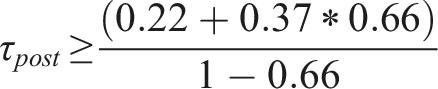

We cannot run an analysis in which we include only articles written after scholars have joined Twitter, because these scholars would then have no citation information prior to treatment and the synthetic controls estimator could not be implemented. But to gain more empirical traction on the “substitution” phenomenon just described, we can apply a lower bound to the increase in citations to post-Twitter articles. The motivating intuition is that the more a scholar’s total citations are dominated by their older (pre-Twitter) work, the larger the boost to their newer work must be to generate the observed 22% overall increase despite the 37% decline in citations to older work.

More formally, let

We can observe in our data that in the ten years following the joining of Twitter, 66% of citations go to pre-Twitter articles. We assume this to be a lower bound of

This analysis indicates that relative to their synthetic control counterparts, scholars on Twitter receive on average at least 137% more citations per year to their newer articles. In other words, the joining of Twitter is expected to more than double citations to work that is published from that point onward. In interpreting this number, however, it is important to bear in mind that overall citation rates to post-Twitter articles are lower. Thus, even a constant shift in citations from pre-Twitter to post-Twitter articles would result in a much greater relative change to post-Twitter article citations than to pre-Twitter article citations. 23
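The arithmetic behind this lower bound can be checked with a short calculation. The sketch assumes that the overall relative effect is a share-weighted average of the effects on pre-Twitter and post-Twitter citations, so that total = s * pre + (1 - s) * post, where s is the share of citations going to pre-Twitter articles. The symbols and the function name are ours and may not match the notation of the original derivation.

```python
def implied_post_effect(total_effect: float, pre_effect: float,
                        pre_share: float) -> float:
    """Solve total = s * pre + (1 - s) * post for the post-Twitter effect."""
    if not 0.0 <= pre_share < 1.0:
        raise ValueError("pre_share must lie in [0, 1)")
    return (total_effect - pre_share * pre_effect) / (1.0 - pre_share)


# Values reported in the text: +22% overall, -37% for pre-Twitter articles,
# and 66% of citations going to pre-Twitter articles.
bound = implied_post_effect(total_effect=0.22, pre_effect=-0.37, pre_share=0.66)
print(f"{bound:.1%}")  # 136.5%, which the text reports as 137%
```

Because the pre-Twitter effect is negative, the implied post-Twitter effect rises with s. Plugging in a value of s that is itself a lower bound therefore yields a lower bound on the post-Twitter effect.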

3.2. Heterogeneity

The impact of joining Twitter on citation counts may differ for different types of academics. To explore possible heterogeneity, we repeat our analysis for several subgroups of legal scholars.

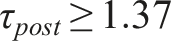

We first investigate how treatment effect size interacts with activity on Twitter. In particular, we separate scholars into deciles according to (1) their follower count, (2) their number of tweets, and (3) the number of tweets that promote the tweeter’s own scholarship. To identify the latter, we initially filtered tweets to those containing links to academic articles. 24 A research assistant then labeled a sample of 1,000 filtered tweets for whether they promote a scholar’s own articles. Next, we trained a popular machine learning classifier relying on the RoBERTa model (Liu et al., 2019) on a set of 850 labeled tweets and evaluated it on a holdout set of 150 tweets. After verifying the classifier’s strong performance (Accuracy & F1-Score = 0.86), we used it to identify all self-promoting tweets.
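For readers unfamiliar with the reported metrics, the following sketch shows how accuracy and F1 are computed on a holdout set of binary labels. It assumes that self-promoting tweets are the positive class (label 1) and that F1 refers to that class. The text does not say whether a binary or averaged F1 was used, and the example labels are invented.

```python
from typing import Sequence, Tuple


def accuracy_and_f1(y_true: Sequence[int],
                    y_pred: Sequence[int]) -> Tuple[float, float]:
    """Accuracy and positive-class F1 for binary labels coded as 0/1."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label sequences must be non-empty and equal in length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return accuracy, f1


# Hypothetical holdout labels (1 = tweet promotes the author's own article).
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
acc, f1 = accuracy_and_f1(y_true, y_pred)  # (0.75, 0.75)
```

Accuracy and F1 coincide in this toy example, as they do in the reported result, but in general they diverge when the classes are imbalanced.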

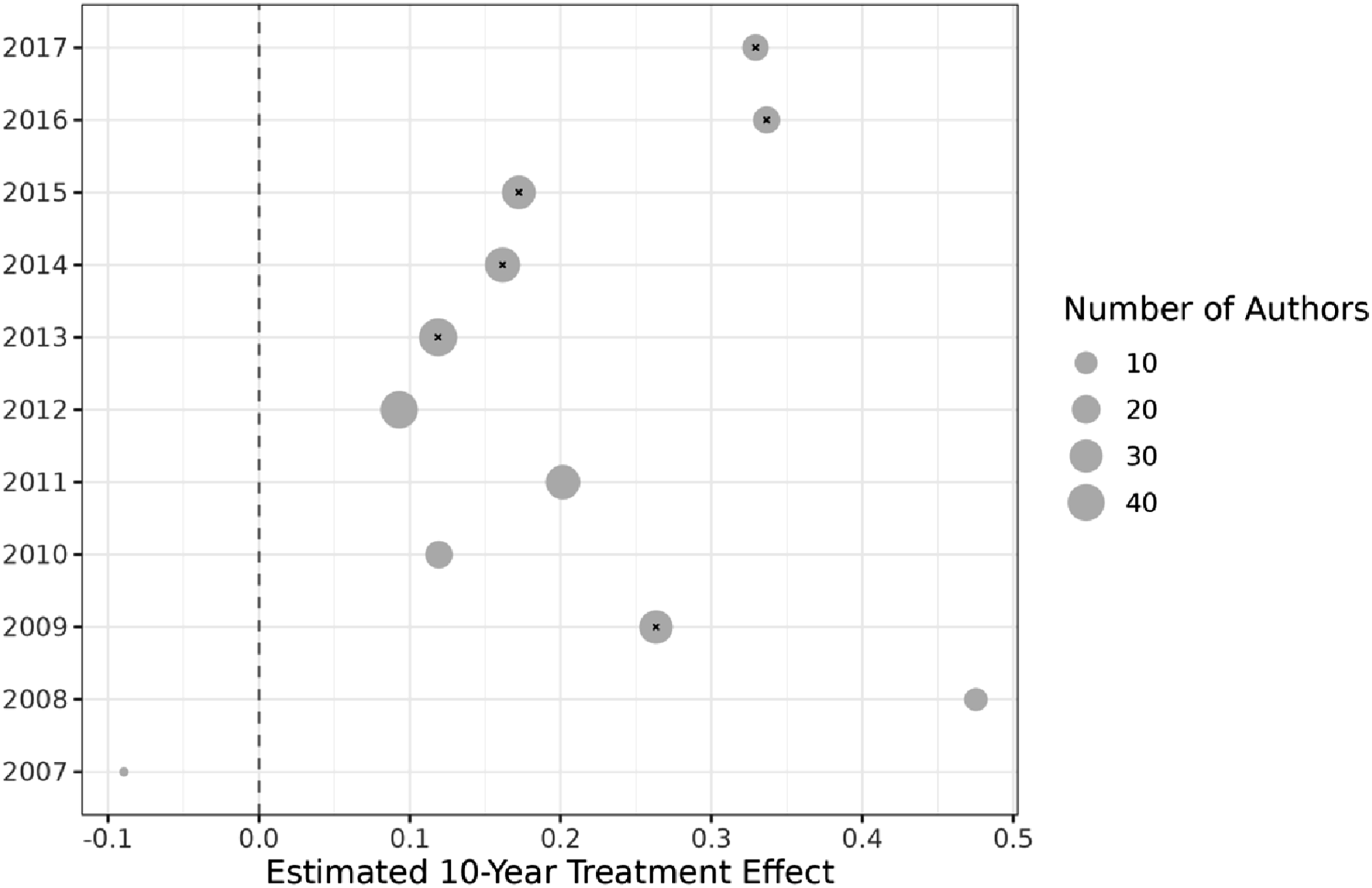

As can be seen in Figure 5, there is no strong correlation between follower counts or tweets and estimated citation increases. The estimates for follower counts, in particular, appear to follow an inverted U-shape, suggesting that the highest returns accrue to those with a moderate Twitter following. Self-promotional activity is more strongly correlated with estimated citation increases, providing evidence that part of the causal mechanism may be greater visibility of research among those who post about it regularly.

Figure 5. Estimated Treatment Effect Heterogeneity

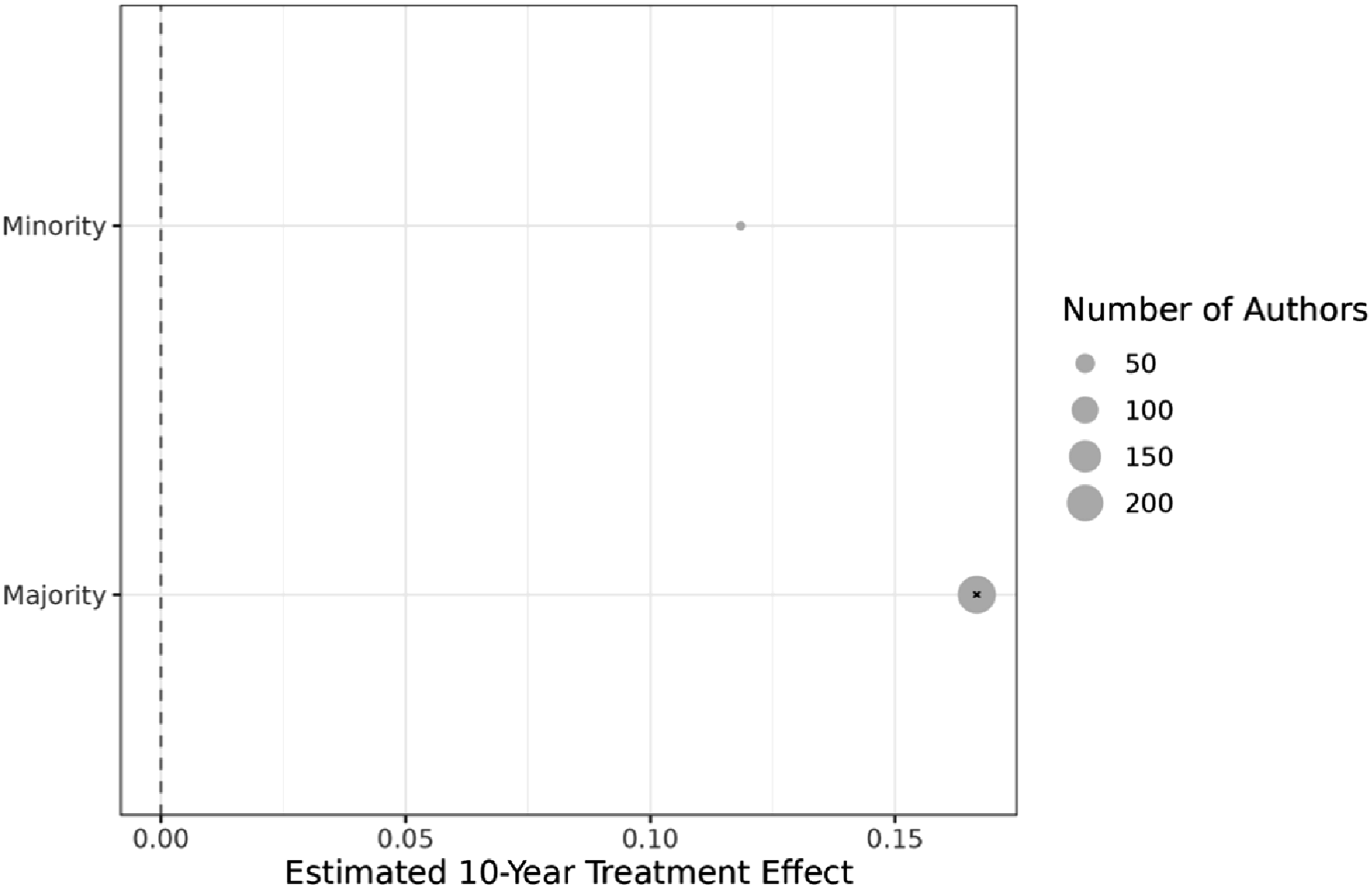

We turn next to possible heterogeneity based on race and other personal characteristics. 25 For every scholar on Twitter, a research assistant hand-collected information on the year the scholar joined the academy, along with information on their primary areas of research as listed on their faculty CV or webpage. One standard statistical approach for inferring race is Bayesian Improved First Name and Surname Geocoding (BIFSG). However, we manually inspected the results of this approach and found that it is very unreliable in our context, perhaps due to socioeconomic factors. 26 We thus took an alternative tack, relying on the 2016–2017 AALS directory. This directory is the most recent to contain a list of legal scholars who self-identify as belonging to a racial or ethnic minority group. 27

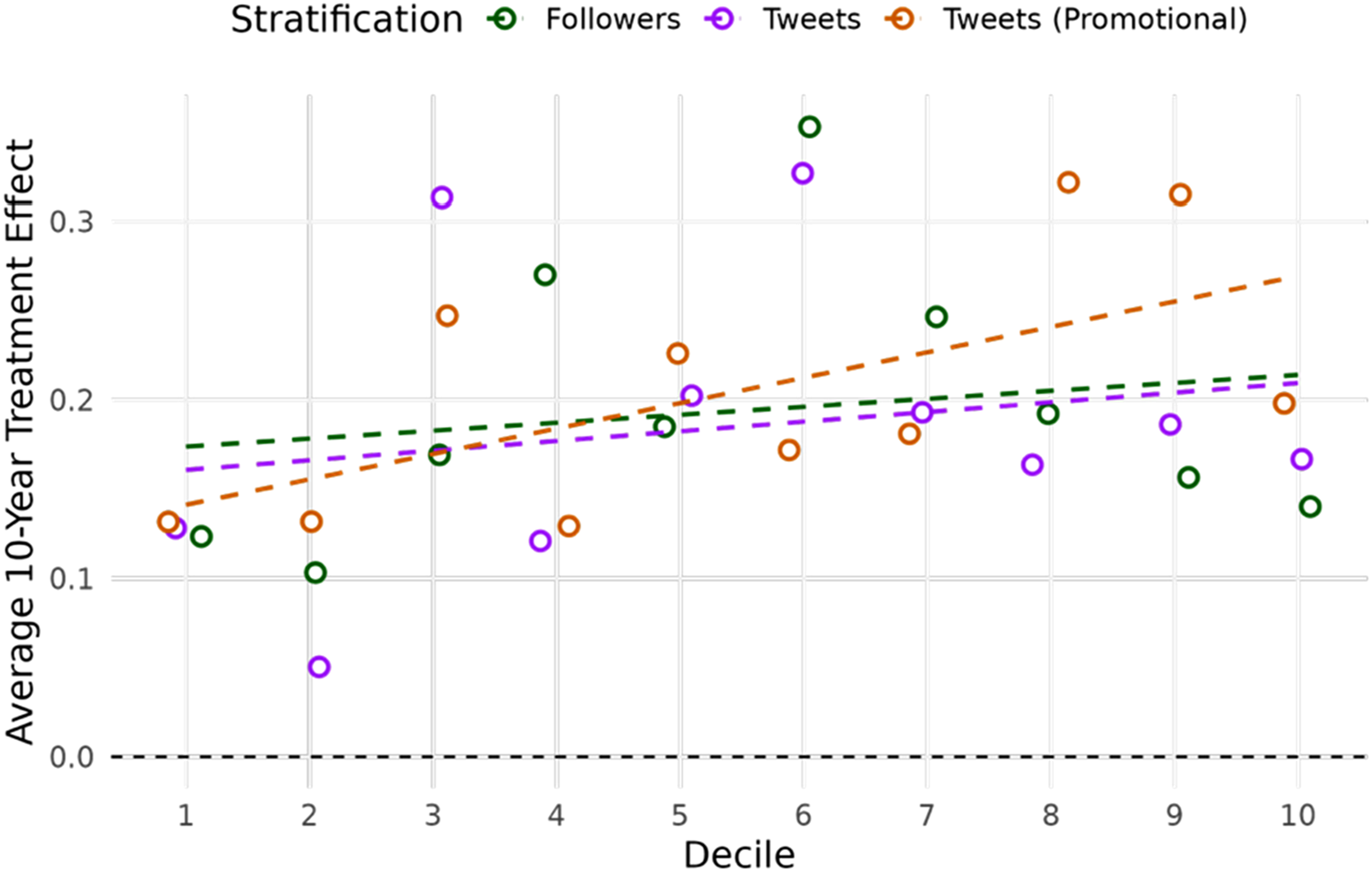

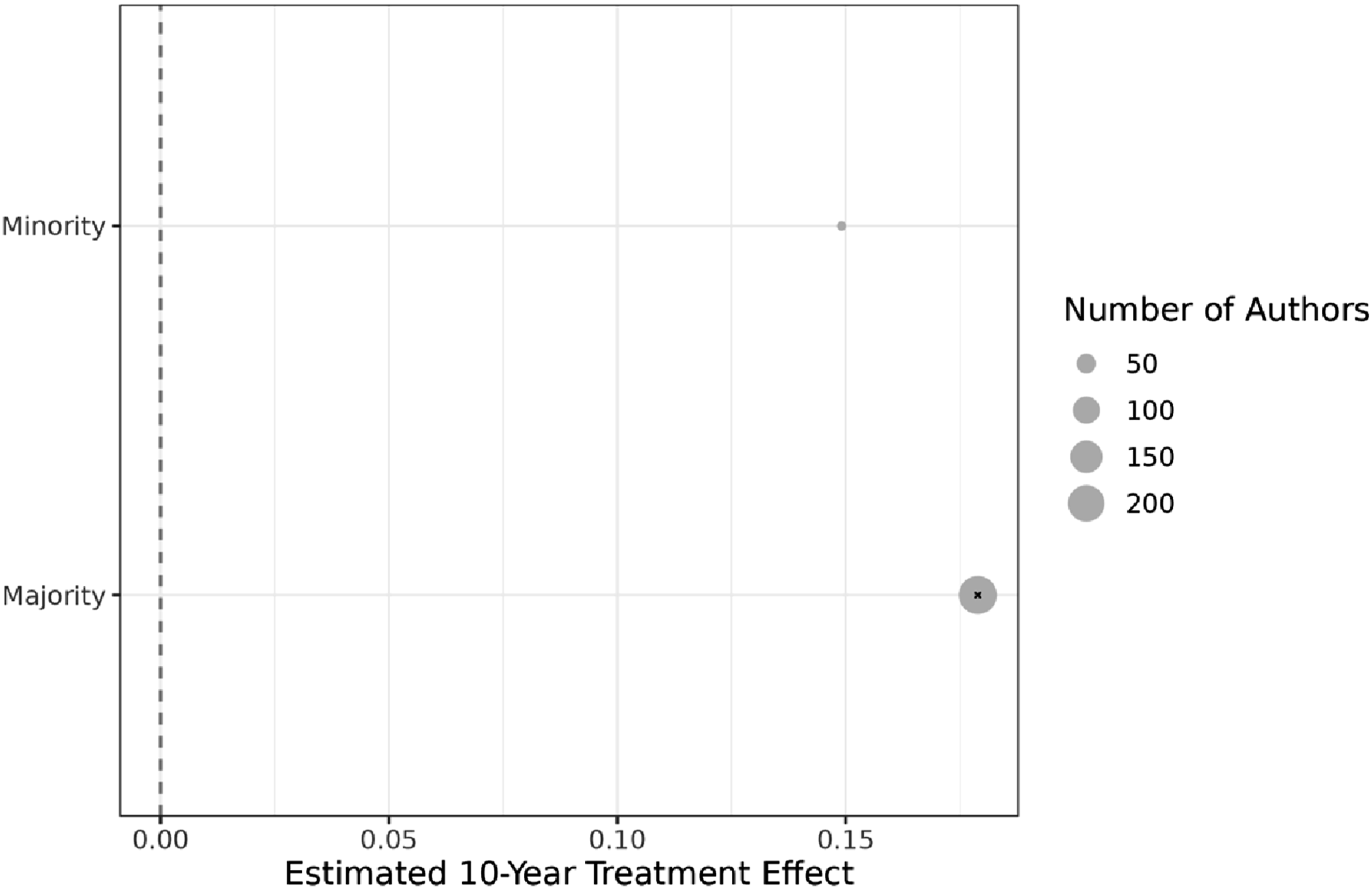

We note that this self-identification scheme appears to be significantly underinclusive, as only 16 scholars from the Twitter census are so listed. Because the direction of the bias is at least transparent, we prefer this approach to estimates derived through BIFSG. (That said, a comparison of Figure 6 to Appendix Figure A.8 indicates that the results are the same under both approaches.) As Figure 6 shows, these scholars are estimated to receive slightly lower citation increases from joining Twitter. We use a dot in this figure and in the following four figures to indicate statistical significance at a 5% level. Accordingly, Figure 6 also shows that the estimates for “minority” scholars are not statistically significant given the small sample size.

Figure 6. Estimated Treatment Effect by Race/Ethnicity

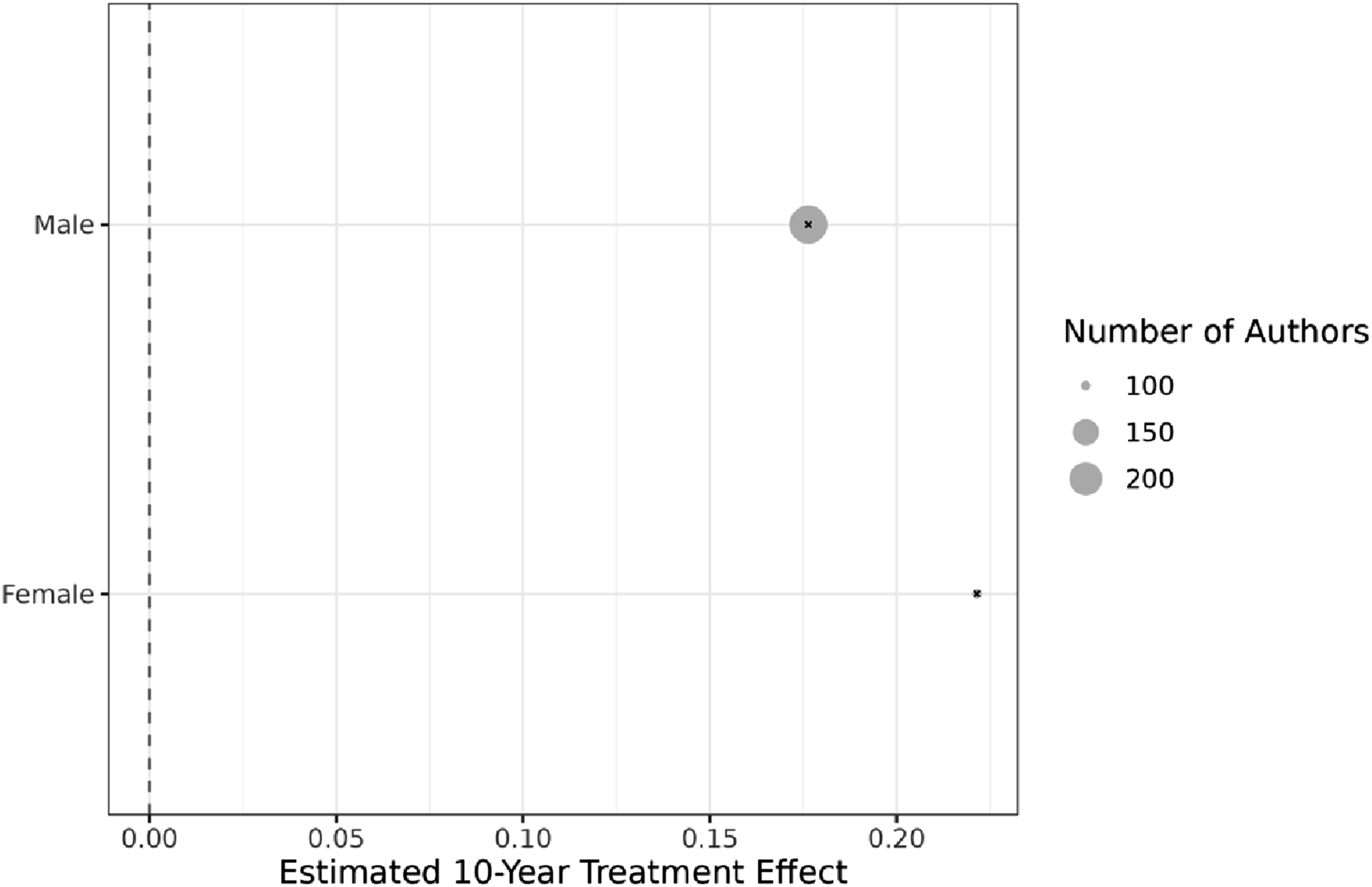

Next, we distinguish between male and female scholars. To identify a scholar’s gender, we rely on genderize.io, a popular algorithmic approach to name-based inference (see, for example, Fan et al., 2024; Ke et al., 2022; Rechlin et al., 2022). The algorithm predicts 36% of scholars on Twitter to be women. 28 Figure 7 breaks down results by gender, showing that both male and female scholars receive positive returns from joining Twitter, with female scholars receiving about 6% higher returns on average. The latter result is especially notable, given that recent research has found that women are significantly less likely than men to promote their own scientific papers on Twitter (Peng et al., 2025).

Figure 7. Estimated Treatment Effect by Gender

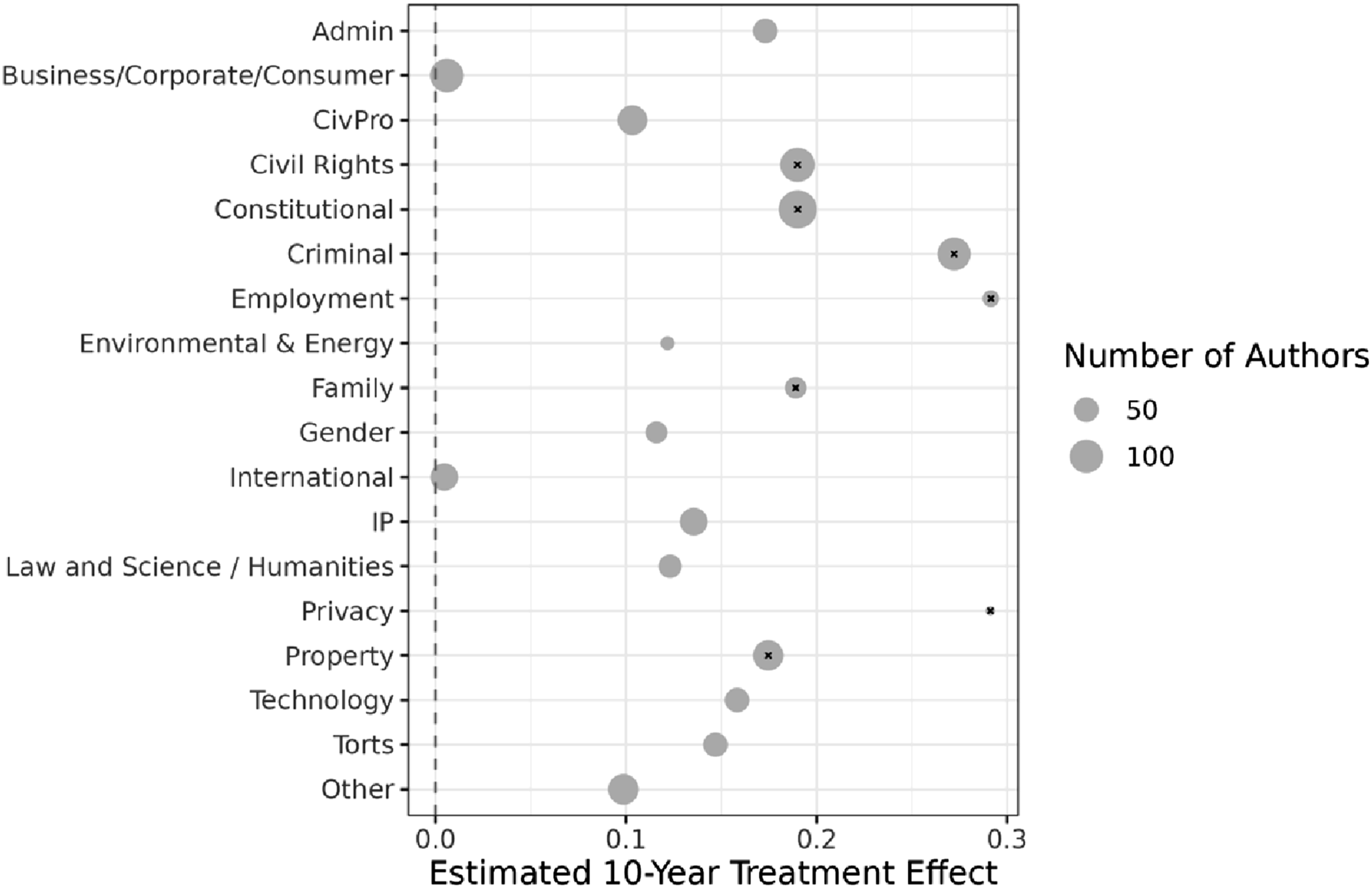

Figure 8 breaks down our results by scholars’ primary areas of focus, standardized across research categories. The largest statistically significant effect is estimated for employment law (31% average citation increase per year), followed by constitutional law (19%) and civil rights law (17%). Overall, citation increases are estimated to be substantially positive for all subject areas except business/corporate/consumer law and international law. However, we note that not all estimates are statistically significant (as indicated, again, by a dot).

Figure 8. Estimated Treatment Effect by Subject Area

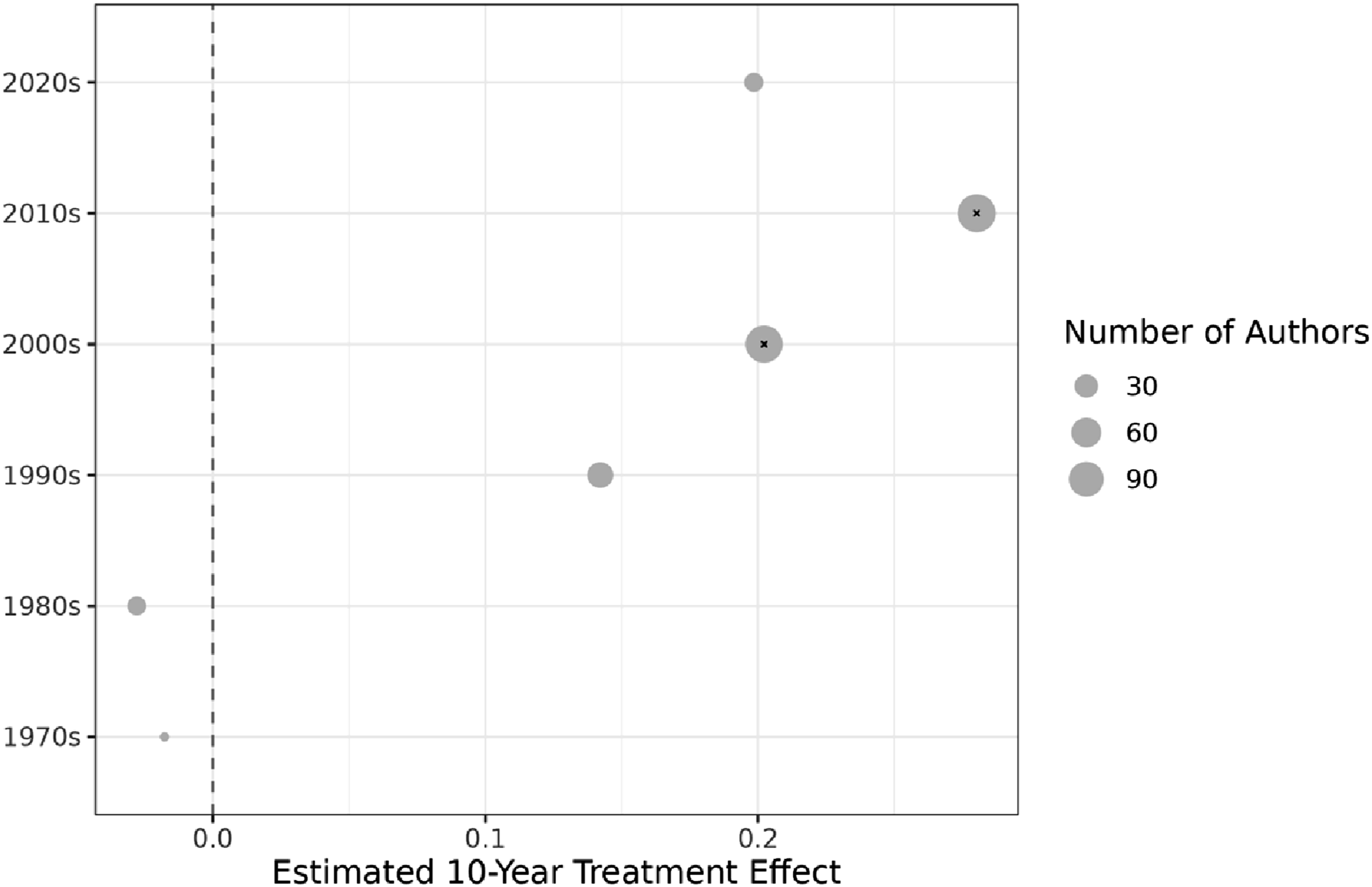

Figure 9 assesses our estimates conditional on the decade in which a scholar joined the legal academy. This information was manually collected by a research assistant. As can be seen, all cohorts who joined the academy during or after the 1990s are estimated to receive positive returns from joining Twitter, with statistical significance in the largest cohorts of the 2000s and the 2010s. In contrast, the estimated treatment effects for more senior scholars who joined the academy in the 1970s or 1980s are negative, albeit not statistically significant. In the Appendix (Figure A.9), we further disaggregate results by the year in which scholars joined Twitter, showing similarly balanced results without outliers.

Figure 9. Estimated Treatment Effect by Decade of Entering the Academy

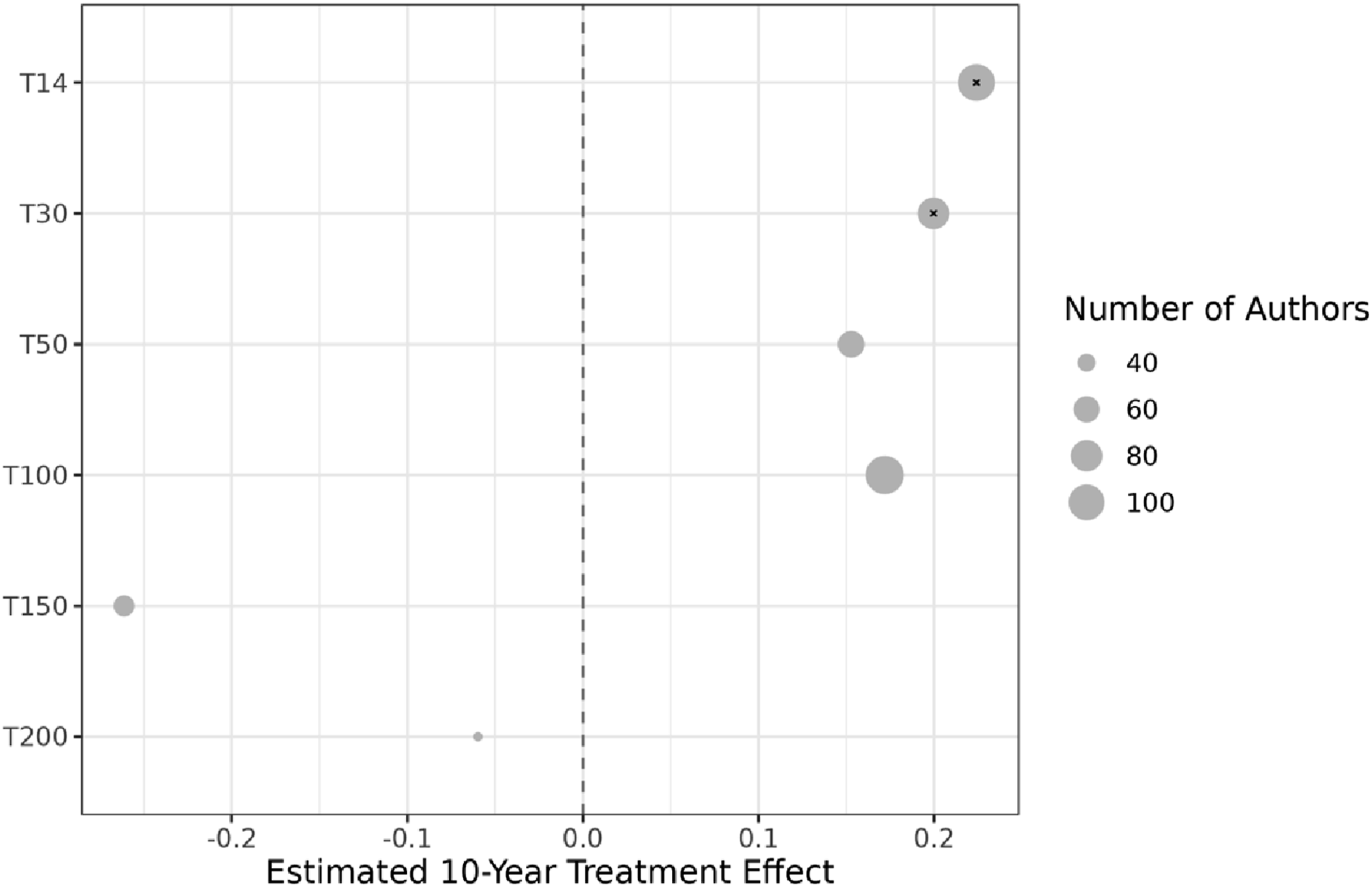

In a last step, we break down our results by the tier of the school at which a scholar teaches, with a school’s rank based on U.S. News rankings. We find in Figure 10 that scholars from schools at all tiers in the top 100 are estimated to receive comparable, positive citation returns. Estimates for scholars at schools ranked below the top 100 are negative, albeit not statistically significant.

Figure 10. Estimated Treatment Effect by Institutional Rank

3.3. Secondary Outcomes

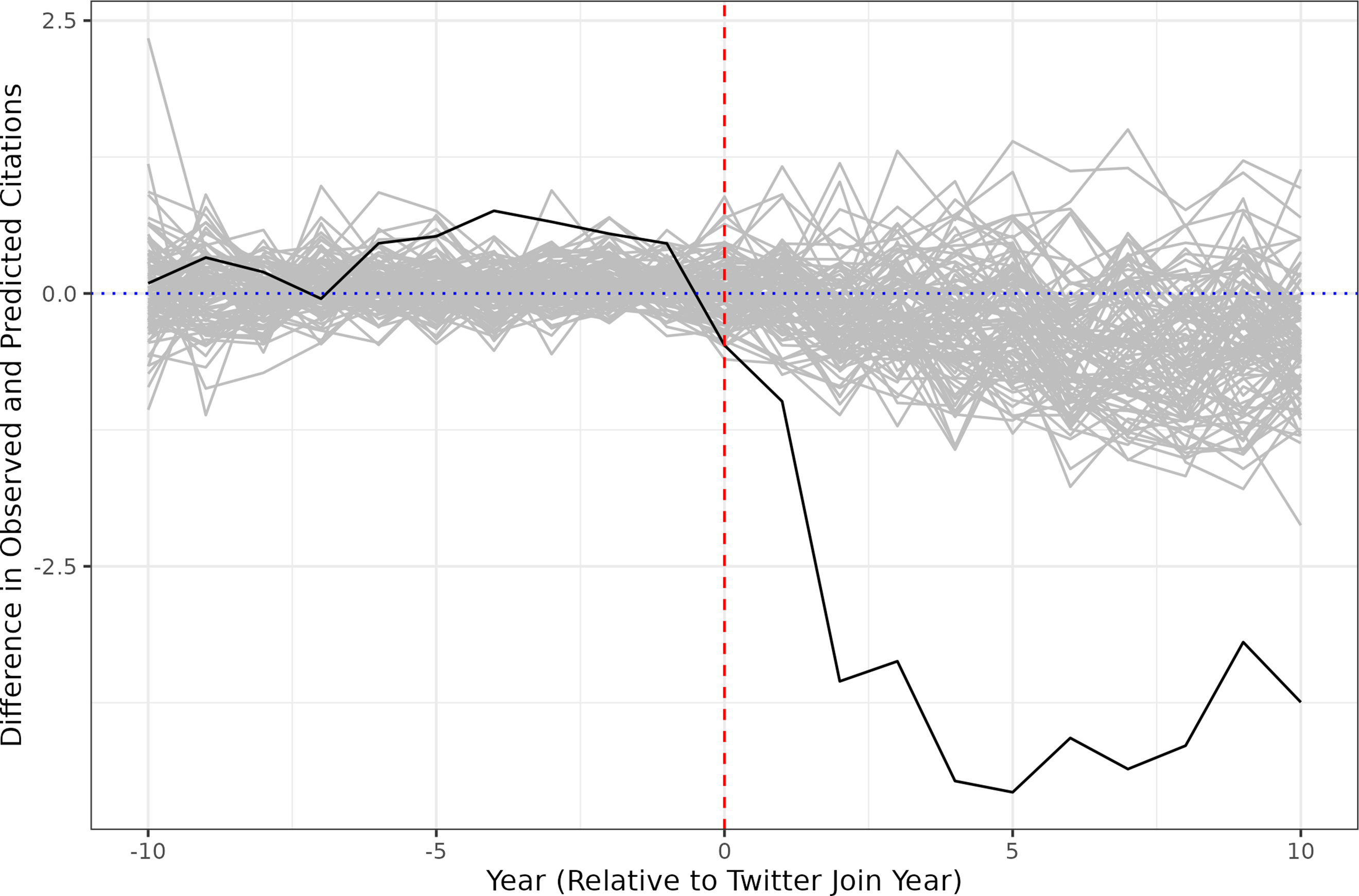

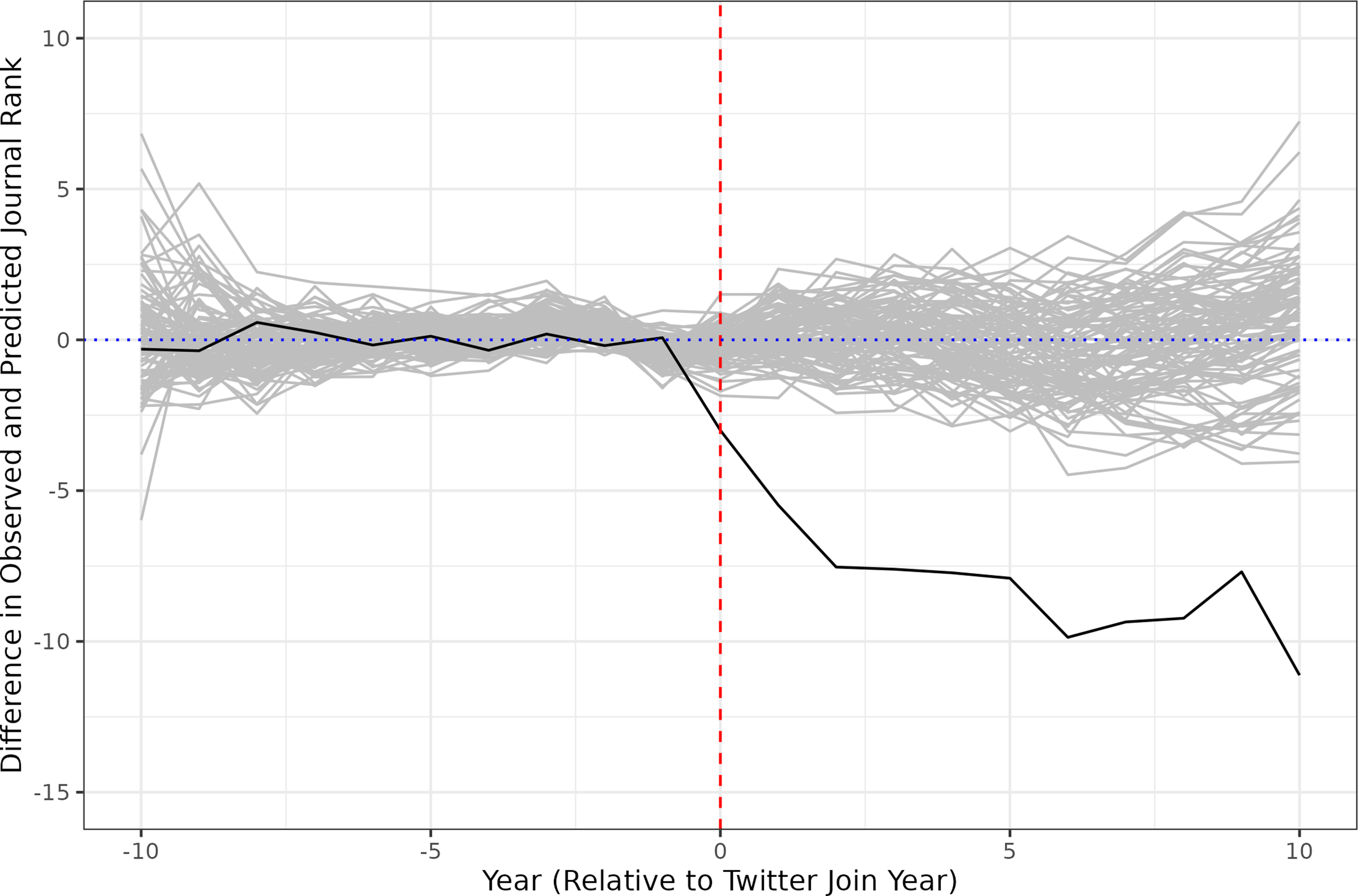

In addition to our primary measure of academic impact, citations, we consider alternative outcome metrics. First, we repeat our primary analysis examining the rank of the journals in which a scholar publishes their work. To determine this rank, we use a sliding window approach, whereby a scholar’s average journal rank (as determined by Washington and Lee rankings) in year

Figure 11. Synthetic Controls Estimates for Journal Placements

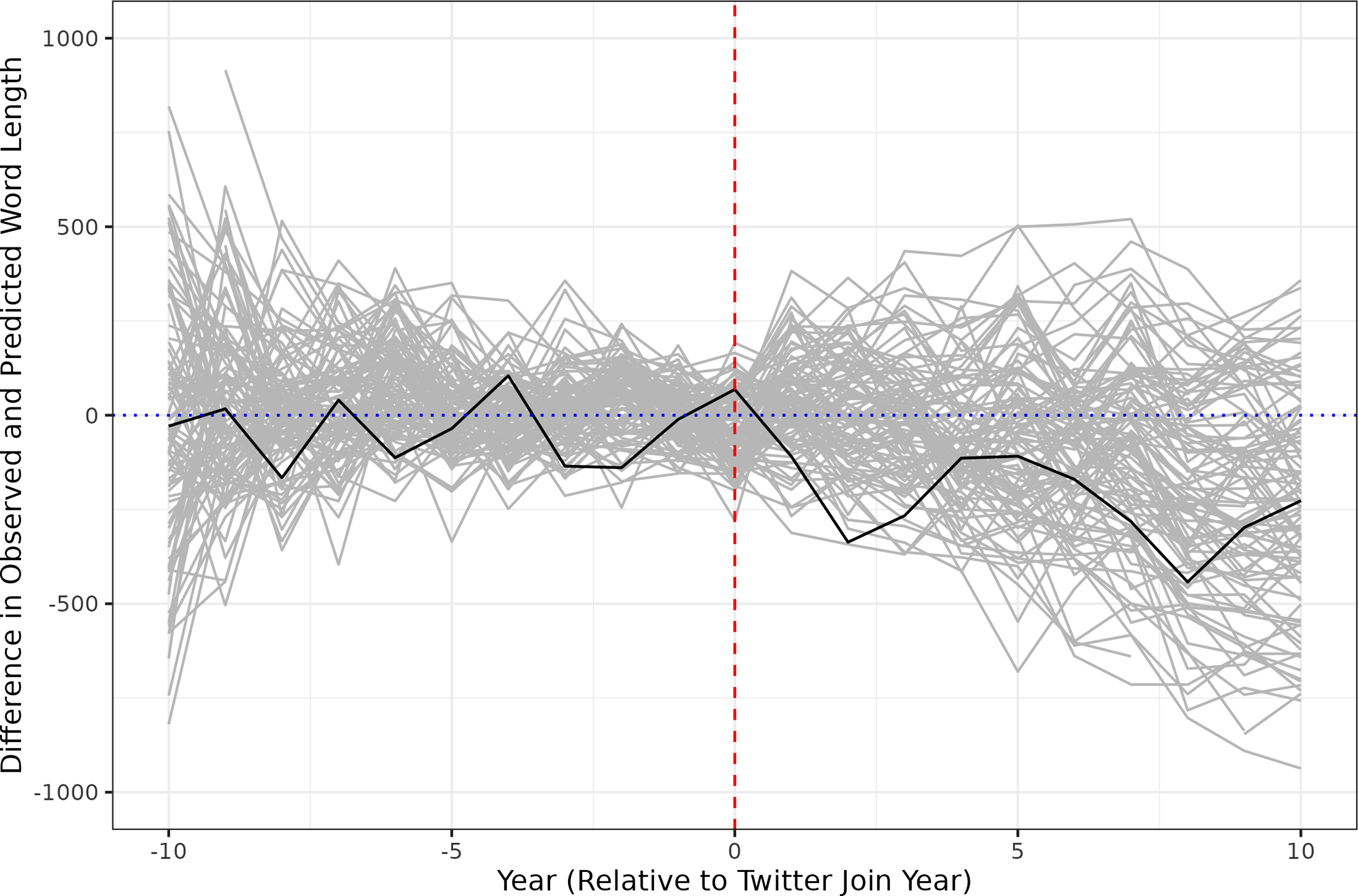

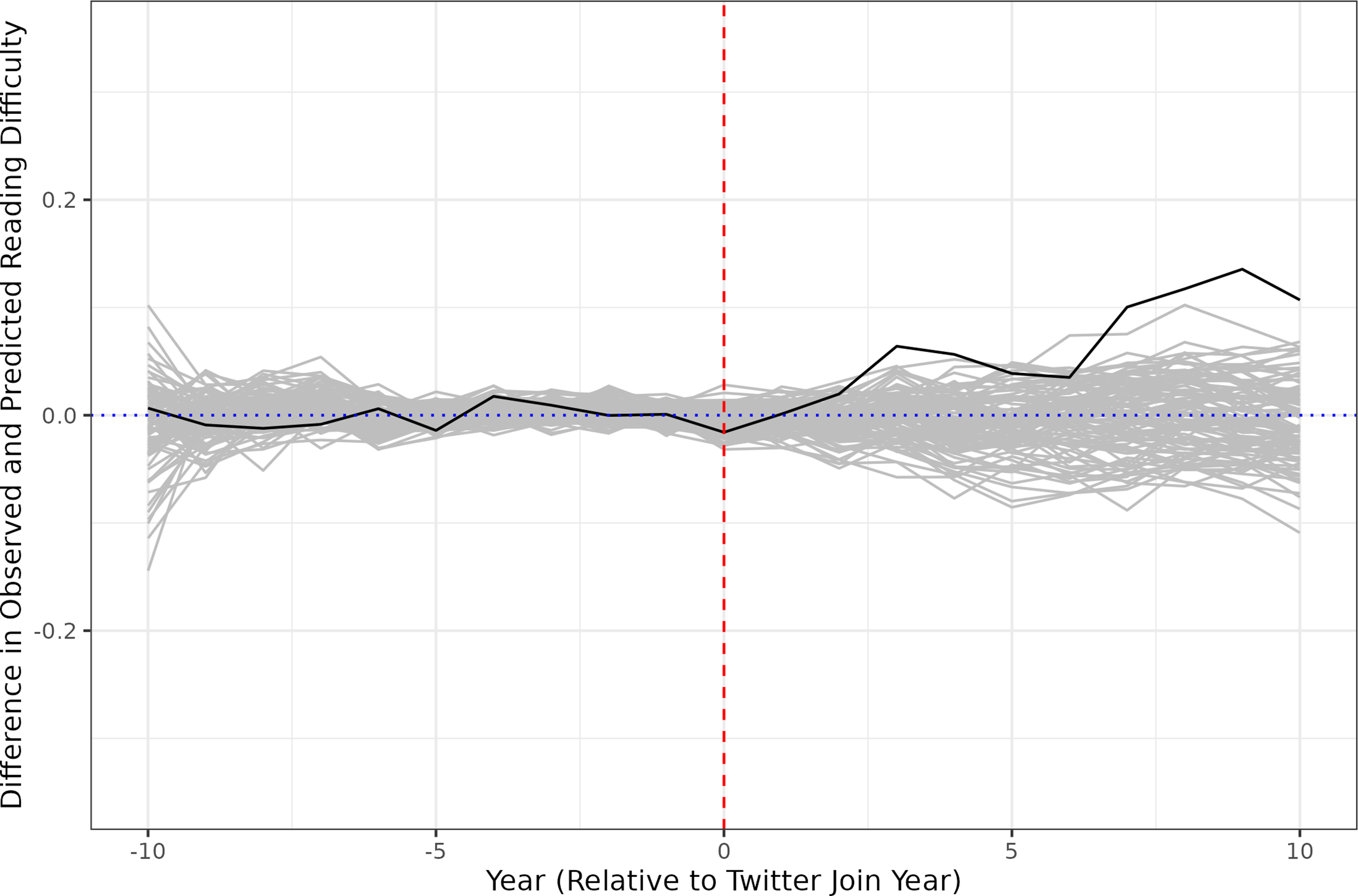

In discussing the assumption of no time-varying confounders, we noted above that a scholar’s decision to join Twitter may coincide with other changes to their process of scholarly production. In particular, scholars who join Twitter at least in part to increase their impact on popular discourse or public policy may strategically shift how they write with that end in mind. To investigate this possibility, we repeat our analysis, focusing on the academic articles’ stylistic markers. It is not possible to assess writing styles holistically. Our analysis is limited to an examination of article length (Figure 12) and readability (Figure 13). We measure readability by Flesch-Kincaid grade levels, with higher values indicating less readability. There is a minor upward trend in Flesch-Kincaid grade levels, which may suggest articles becoming harder to read after scholars join Twitter. However, this increase is substantively small and largely consistent with what is observed under a placebo regime. Overall, we find no significant evidence of stylistic changes in the published work of scholars on Twitter.

Figure 12. Synthetic Controls Estimates for Article Length

Figure 13. Synthetic Controls Estimates for Reading Difficulty
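For readers unfamiliar with the readability measure, the following is a minimal sketch of the standard Flesch-Kincaid grade-level formula. It is an illustration only, not the pipeline used in this paper: the function names are hypothetical, and the syllable counter is a crude vowel-group heuristic assumed here for simplicity.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count runs of consecutive vowels.

    This heuristic is an assumption for illustration; a pronunciation
    dictionary would be more accurate.
    """
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    # Treat a trailing "e" as silent unless the word ends in "le".
    if lowered.endswith("e") and not lowered.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def fk_grade_from_counts(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch-Kincaid grade-level formula; higher means harder to read."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def fk_grade(text: str) -> float:
    """Apply the formula to raw text using naive sentence and word splitting."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    return fk_grade_from_counts(len(words), len(sentences), syllables)
```

Longer sentences and more syllables per word both raise the score, which is why an upward trend in grade level is read as articles becoming harder to read.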

In addition to the outcome metrics of citation counts and article placements, we investigated whether scholars on Twitter are more likely to move to higher-ranked schools and whether scholars on Twitter change the frequency with which they publish. Both lateral moves and academic publications are relatively rare events, however, making it difficult to approximate pre-treatment fit. Even if the pre-treatment history could be well approximated, estimates could suffer from significant bias (Ferman & Pinto, 2021). We therefore omit these analyses for lack of reliability. We also note that while it would be of interest to study the impact of social media on alternative measures of scholarly influence, such as paper downloads on platforms like the Social Science Research Network (SSRN), these data are not available over time, making a historical analysis impossible. 29

4. Discussion

We find a clear and substantial “Twitter bump” for law professors who are active on the platform, measured both by citation counts and by article placements. In our main result, law professors experience an average 22% net increase in citations per year after they join Twitter, relative to a composite of “matched” professors who do not join. This 22% figure is the product of an average 37% annual decrease in citations to pre-Twitter publications and at least a 137% annual increase in citations to post-Twitter publications. Because our methodology does not involve randomly selecting certain authors or articles for promotion on Twitter, we cannot rule out the possibility that our findings are driven in part by changes in law professors’ behavior that coincide with, but are not caused by, their decision to join the platform. Yet our synthetic controls approach allows for causal inference under a set of fairly modest assumptions, and we do not find any persuasive evidence that would be consistent with this alternative hypothesis (such as a divergence in writing styles or productivity rates between those professors who use Twitter and those who abstain). Our results, in other words, suggest that Twitter participation not only predicts but also drives higher citations and better placements in legal scholarship.
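As a back-of-the-envelope check on how a 37% decrease and a 137% increase can combine into a 22% net increase, the sketch below treats the net effect as a weighted average of the two component effects. The weight is the share of a scholar's counterfactual (synthetic-control) citations that would go to pre-Twitter publications. This weighting is an assumption made purely for illustration, the function names are hypothetical, and averages taken across scholars need not decompose this cleanly.

```python
def net_effect(pre_share: float, pre_effect: float, post_effect: float) -> float:
    """Net relative change, assuming a citation-weighted average of the two effects."""
    return pre_share * pre_effect + (1.0 - pre_share) * post_effect


def implied_pre_share(net: float, pre_effect: float, post_effect: float) -> float:
    """Solve net = s * pre_effect + (1 - s) * post_effect for the share s."""
    return (post_effect - net) / (post_effect - pre_effect)


# Figures reported in the text: -37% (pre-Twitter articles),
# +137% (post-Twitter articles), +22% (net).
share = implied_pre_share(net=0.22, pre_effect=-0.37, post_effect=1.37)
print(f"Implied counterfactual share of citations to pre-Twitter work: {share:.1%}")
```

Under this simplified reading, roughly two thirds of counterfactual citations would go to pre-Twitter publications. Because the 137% figure is described as a lower bound, the implied share would be a lower bound as well.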

This Part briefly explores some of the descriptive and normative implications of these results. We first address what such a significant Twitter bump means for the legal academy. We then consider why social media effects may be larger in law than in other disciplines, before considering whether these effects are likely to hold in the wake of Twitter’s transformation and how they might be investigated further in future work.

4.1. Implications for the Legal Academy

Law professors and law schools are routinely ranked on the basis of citation counts (Fischman, 2024; Heald & Sichelman, 2019; Leiter, 2000; Ruhl et al., 2020; Sag, 2024; Shapiro, 2021; Sisk et al., 2025). For any given article, this count is likely to be low; one study from 2007 reported that 79% of law journal articles receive ten or fewer citations and that 43% are never cited at all (Smith, 2007). Legal scholars also compete aggressively to place their articles in higher-ranked journals, even though such placements are only loosely correlated with citation counts (Brophy, 2009; Callahan & Devins, 2006; Harrison & Mashburn, 2015). As Callahan and Devins relate, legal scholars “jockey for placement in higher-ranked reviews”—sometimes through the controversial practice of trying to “trade up” offers from lower-ranked reviews—for a range of reasons, including “the belief that relative placement marks an article’s quality, fortifies one’s prestige, and improves prospects for career advancement” (2006, p. 375).

Against this backdrop, a Twitter effect of the magnitude that we find has the capacity to shape individual scholars’ reputations and job prospects. Consider, for example, Willey and Knapp’s recent paper (2025) ranking “The Top Legal Scholars of 2024” based on total citations to articles published in 2018, 2019, and 2020. Both the title of this paper and the fact that it has been downloaded over 7,000 times testify to the perceived importance of citation counts within the legal academy. A 22% uptick attributable to Twitter is greater than the gap between the 50th-ranked scholar on this list (190 citations) and the 75th-ranked scholar (160 citations), or between the 75th-ranked scholar and the 100th-ranked scholar (134 citations), and it could easily be the difference between making the list or not. The 137% yearly increase in citations to newer articles that we find attributable to Twitter would, of course, make an even bigger difference in a scholar’s position on such lists. An average law journal placement ten ranks higher may be an even more enticing benefit for legal scholars who see “great status attached to placing articles in the top general interest law reviews” (Fontana, 2020, p. 326), independent of the articles’ readership.
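The comparison with the ranking gaps can be checked with simple arithmetic. The sketch below assumes, for illustration only, that the 22% uplift is applied to the lower-ranked scholar's citation total. The function name is hypothetical, and the citation counts are those quoted above.

```python
def uplift_exceeds_gap(lower: int, higher: int, uplift: float = 0.22) -> bool:
    """Return True if an uplift applied to `lower` exceeds the gap to `higher`."""
    return lower * uplift > (higher - lower)


# 75th-ranked (160 citations) vs. 50th-ranked (190): 160 * 0.22 = 35.2 > 30
print(uplift_exceeds_gap(160, 190))
# 100th-ranked (134 citations) vs. 75th-ranked (160): 134 * 0.22 = 29.48 > 26
print(uplift_exceeds_gap(134, 160))
```

Both comparisons hold, whereas the same uplift would not carry the 100th-ranked scholar past the 50th (29.48 against a gap of 56).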

For those who worry about the qualitative impact of social media’s rise on the legal academy, our findings give additional cause for concern. A Twitter bump of such scale means that there is likely to be a significant professional price to pay for not joining the platform in terms of citations, placements, and all the associated status benefits, even as many users report that there is a significant personal price to pay for joining the platform in terms of time, mental health, and exposure to online harassment or abuse (McClain et al., 2021; Oldemburgo de Mello et al., 2024; Posner, 2017). 30 These personal costs may be especially high for women and people of color (Amnesty International, 2018). Provocatively put, contemporary law professors must pay the “Twitter tax” with their uncompensated labor, or else risk losing influence and opportunities to the growing number of colleagues who are active on social media.

Some of our subsidiary findings may also raise concerns. For instance, the fact that law professors on Twitter experience a relative decrease in citations to their pre-Twitter oeuvre, at the same time that they experience a (larger) relative increase in citations to their post-Twitter oeuvre, seems to suggest that: (1) law professors frequently publish new work that is similar in substance to, and therefore substitutable for, their older work; (2) law professors on Twitter are especially likely to publish work that is substitutable in this sense; and/or (3) those who cite law professors on Twitter make a special effort, consciously or unconsciously, to cite their recent writings. Either way, it would appear that a presentist bias influences patterns of citation in the legal literature.

The fact that professors on Twitter place their articles in higher-ranked journals than their pre-Twitter trajectory would predict—without any discernible change in the articles’ length or style—likewise suggests a bias in favor of scholars who use the platform, in this case by law journal editors rather than authors. Critics have long accused the legal scholarship “game” of being rigged or flawed on any number of dimensions (Lawprofblawg & Bush, 2018); we do not mean to romanticize the pre-Twitter era. But the advent of social media, our results imply, may introduce new skews in citation and publication practices.

That all said, some of our other findings tell a more positive story. In particular, the Twitter bump in law does not appear to be limited to professors who teach at “elite” schools, to professors who write on certain topics, to professors of a certain race or sex, to professors at certain stages in their careers, or even to professors who are especially prolific or widely followed on the platform. The big divide is, simply, between those professors who are active on Twitter and those who are not. This lack of heterogeneity in citation and placement effects across subgroups of scholars, along with the observation that female law professors receive slightly higher citation returns, might support the optimistic view of social media as a democratizing force within the academy (Kreis, 2019), one that disrupts rather than reproduces or exacerbates preexisting hierarchies. In addition, the lack of any strong correlation between follower counts or tweet volumes and estimated citation increases ought to allay anxieties that the Twitter bump in law is the product of an “online celebrity” culture or that it requires a great amount of tweeting to achieve. And while the larger citation boost for professors who tweet about their own research might be seen to reward self-promotion, it is arguably a sign of a well-functioning intellectual system that a greater amount of commentary about one’s scholarship yields greater scholarly returns.

Overall, then, our results paint an ambiguous picture. The size of the Twitter bump that we find and the skew toward articles published post-Twitter imply that social media behavior has been altering academic outcomes in ways that may have little to do with the quality of the scholarship. The distribution of this effect, however, implies that the professional benefits from social media use are fairly widely and equitably reaped across the legal academy.

4.2. Is the Twitter Bump Especially Large in Law?

Prior research suggests that tweeting’s effect on citations and related indicators is small to nonexistent in many disciplines. One of the most comprehensive studies that randomly selected articles for Twitter promotion across 11 life-sciences journals, for example, did not discern any citation bump (Branch et al., 2024). A recent meta-analysis observes that while every study thus far has found that “tweeted papers receive more citations than ones that are not tweeted … the differences are not statistically significant, or not replicable, or not robust” (Clancy, 2025). By contrast, we find that law professors who are active on Twitter tend, for no other observable reason, to receive substantially more citations as well as substantially better article placements. This raises the intriguing possibility that the Twitter effect is larger in law than in other disciplines.

We hasten to note that the existence of such an interdisciplinary disparity remains somewhat speculative at this point. Once standard errors are taken into account, the 22% average citation bump that we find could be as low as 14% (though also as high as 30%), reducing the gap between law and other disciplines. More important, within the literature on social media’s effects on scholarly impact indicators, different studies have used different methodologies and units of analysis. Indeed, this study is the first to use a synthetic controls approach. It is therefore impossible to make a direct, apples-to-apples comparison between our results and those that have been reported previously.

Still, the abnormally large Twitter bump that we detect in law deserves further inquiry. The discrepancy is not driven by differences in citation rates across disciplines, because we benchmark our results against typical citation rates within the field. Two hypotheses strike us as particularly plausible. First, legal scholarship’s relative lack of “scientific rigor” (Posner, 2011, p. 860) and “widely shared standards of quality” (West & Citron, 2014, p. 2; see also Snell 2019) might make it more susceptible to social media influence, as compared to disciplines in which citation practices are dictated to a greater extent by consensus professional norms. Twitter’s effect on citations, that is, may be larger in fields where authors enjoy greater discretion regarding whom and what to cite. Second, the endlessly debated role of current students in running most law journals (Cotton, 2006; Friedman, 2018; Lindgren, 1994; Wise et al., 2013) might increase the impact of Twitter, insofar as student editors tend to be more attentive to social media cues when deciding which pieces to accept for publication. The phenomenon of law professors pitching new articles to student editors through Twitter reflects and reinforces this possibility. 31

4.3. Open Questions and Future Research

Perhaps the most basic question raised by this study is whether its results will hold up now that Twitter has been acquired, rebranded, and reshaped by Elon Musk. Anecdotal and empirical evidence indicate that academics have broadly reduced their engagement with the platform since Musk’s takeover in 2022 (Bisbee & Munger, 2025; Kisley, 2024; Moody, 2024). U.S. law professors appear to have migrated to Bluesky in particular (Crawford, 2024; see also Quelle et al., 2025), although it is unclear at this time whether Bluesky or any other platform will ever rival Twitter’s previous reach and cross-ideological character (Wang et al., 2024). We believe that the most plausible mechanisms driving our results—all of which relate to the heightened visibility of scholars who are active online—are likely to apply to some extent to social media more broadly. But this will have to be tested by future scholars. And such testing has become much more difficult for Twitter/X itself, now that the company has restricted research access to its application programming interface (Gotfredsen, 2023; Poudel & Weninger, 2024). In the worst-case scenario, data limitations could mean that this is the last as well as the first study of the Twitter/X effect in law.

To the extent that studies such as this one can be conducted in “the Post-API era of Internet research” (Poudel & Weninger, 2024, p. 1), there are myriad follow-up questions that might be investigated beyond the persistence of the Twitter bump. The discussion above, for example, points toward the potential value of investigating differentials in social media effects across academic disciplines, ideally with a standardized methodology. To our knowledge, no such study has yet been performed.

Within law or any other discipline, future researchers might (data permitting) investigate additional indicators of scholarly success beyond citation counts and article placements, such as download counts, 32 entry-level and lateral job offers, conference participations, and news media mentions. Researchers might examine whether scholars are more likely to cite and to be cited by other scholars who are active on the same platforms, whether because of network effects or “back-scratching and self-promotion-by-proxy” dynamics fostered by social media (Horwitz, 2023). Researchers might also perform content analyses to explore whether and in what ways social media participation predicts changes in the style or substance of users’ academic work. In law at least, the social media effect that we find for citations and placements is sufficiently large as to warrant a range of follow-on studies.

5. Conclusion

This is the first study to investigate the effects of social media on scholarly impact indicators in law. Using a synthetic control methodology that constructs counterfactual versions of law professors who joined Twitter, we show that participation on the platform significantly increased citation counts and improved article placements. In many fields, “there is no detectable citation bump” from Twitter activity (Branch et al., 2024, p. 1). In law, this bump is not only detectable but also strikingly large and broadly distributed across the professoriate. It remains to be seen whether these results will hold up now that Twitter has become X or whether, instead, they will come to be understood as capturing a unique phase in the evolution of the legal academy: the period in the early 2000s when social media activity drove scholarly success to an extraordinary degree.

Footnotes

Acknowledgement

For helpful comments, we thank Trevor Branch, Josh Chafetz, Evelyn Douek, Daniel Epps, Jens Frankenreiter, James Hicks, Colleen Honigsberg, Michael Livermore, Kyle Rozema, Ganesh Sitaraman, and three anonymous reviewers. For excellent research assistance, we thank Peter Adelson, Zehua Li, Bennett Lunn, Alex Salinas, and Leah Wilson. Data necessary to replicate the results of this article are available upon request. Certain datasets are subject to licensing or other restrictions and cannot be shared.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Notes

Appendix

Figure A.1. Synthetic Controls Estimates (Absolute)

Figure A.2. Synthetic Controls Estimates (Relative) Omitting 10% of Scholars with Significant Pre-Treatment Trends

Figure A.3. Synthetic Controls Estimates (Absolute) Omitting 10% of Scholars with Significant Pre-Treatment Trends

Figure A.4. Synthetic Controls Estimates (Relative) Omitting Scholars with Fewer than 15 Citations

Figure A.5. Synthetic Controls Estimates (Relative) Omitting Scholars with Fewer than 50 Citations

Figure A.6. Generalized Synthetic Controls Estimates (Relative)

Figure A.7. Synthetic Controls Estimates for Pre-Twitter Articles (Absolute)

Figure A.8. Estimated Treatment Effect by Race/Ethnicity Using BIFSG

Figure A.9. Estimated Treatment Effect by Year of Joining Twitter