Abstract

We investigate whether users’ reputations on Airbnb are racially biased. We do so by comparing the ratings of vacation rentals cross-listed on two sharing economy platforms: Airbnb and HomeAway. While the sociodemographic characteristics of hosts are salient on Airbnb, they are not on HomeAway. We find that when the perceived race of hosts is salient (i.e., on Airbnb), Black hosts are penalized: they receive lower ratings than they would have received for the same property if they were non-Black. This gap in evaluations has negative monetary implications for Black hosts on the Airbnb platform. We experimentally replicate these findings in a controlled setting, establishing a causal link between the perceived race of hosts and the ratings they receive. An additional experiment explores the underlying mechanism, revealing that people stereotypically expect Black hosts to perform worse than white hosts. Our findings suggest that because reputations are themselves biased, they lead to systematic indirect discrimination based on race. Unlike direct discrimination, discrimination generated via reputation systems is more elusive to detect and, consequently, more challenging to regulate.

Introduction

Are users’ reputations in the sharing economy racially biased?

The last two decades have witnessed a series of technological developments enabling the emergence of the “sharing economy”: online platforms that promote the sharing of goods, services, resources, and talents among users through the internet (Shehzad et al., 2015). Because transactions in the sharing economy tend to be more personal and intimate than other e-commerce transactions, often involving access to people’s homes or the use of their possessions, they demand a higher level of trust between users. As a result, sociodemographic characteristics such as race and gender tend to become more salient, and users tend to rely more on stereotypes and biases in their interactions in the sharing economy (Abrahao et al., 2017).

Indeed, an emerging body of literature suggests that disparities in the sharing economy are significant in magnitude and in their implications. One research project, focusing on the short-term accommodation platform Airbnb, found that non-Black hosts charge approximately 12% more than Black hosts for an equivalent rental, and that rental inquiries from guests with distinctively Black names are 16% less likely to be accepted than identical inquiries from guests with distinctively white names (Edelman & Luca, 2014; Edelman et al., 2017). A study of the Uber ride-sharing platform found that in some locations, passengers with Black-sounding names were subject to longer wait times and more frequent cancellations (Ge et al., 2016; see also Simonovits et al., 2023).

Studies on the online product marketplace eBay have also documented disparities based on perceived race. In one field experiment involving baseball card auctions, cards held by a dark-skinned hand sold for approximately 20% less than cards held by a light-skinned hand (Ayres et al., 2015).

Recently, scholars have suggested that reputation systems that provide concrete information about users (Bolton et al., 2004; Chevalier & Mayzlin, 2006) may reduce reliance on stereotypes and, as a result, decrease racial disparities in the sharing economy, especially if discrimination is belief-based (Abrahao et al., 2017; Cui et al., 2020; Laouénan & Rathelot, 2022; Nunley et al., 2011; Park et al., 2023; Tjaden et al., 2018). In other words, if discrimination were based on beliefs and stereotypes, whether statistically accurate or mistaken, then concrete information about users generated by reputation systems would reduce it. Indeed, Cui et al. (2020) found that prospective Airbnb guests with Black-sounding names were less likely to be accepted than guests with white-sounding names, yet this tendency disappeared when guests had a reputation on the platform. Another study showed that reputation can partially counteract the tendency of users to trust those who are similar to them (Abrahao et al., 2017). In response, other scholars have argued that reputation systems help only those who manage to obtain at least one review and might harm those who have not participated in interactions or have not received reviews for the interactions in which they have participated. Because biases affect the tendency both to interact and to leave a review, reputation systems cannot fully reduce inequality (Kas, 2022; Kas et al., 2022; Yu & Margolin, 2022).

We contribute to this debate by asking whether the evaluations given by users in the sharing economy (ratings) may themselves be racially biased and therefore may not fulfill the promise of eliminating belief-based discrimination in the sharing economy. Why would reviews and reputations be biased? Studies in social psychology suggest that stereotypes tend to color people’s expectations, perceptions, and evaluations of others’ performance. Thus, the same task performance might be evaluated more positively when produced by white people than by non-white people, or by men than by women (Bohren et al., 2019; Swim et al., 1989; Wynn & Correll, 2018). In the context of the labor force, various studies have shown that racial and gender stereotypes affect the evaluations of workers. As a result, minorities and women experience manifold disadvantages at work, including biases in hiring, salary setting, evaluation, and promotion decisions (Bertrand & Mullainathan, 2004; Castilla, 2008; Ridgeway, 2011; Wynn & Correll, 2018). In one field experiment in an online mathematics Q&A forum, women’s posts were evaluated more negatively than men’s when there were no prior evaluations. Prior evaluations also had a lingering effect: following a sequence of positive evaluations, women’s posts were evaluated more positively than men’s (Bohren et al., 2019).

To test our prediction, we employ a quasi-experimental research design and analyze a dataset of vacation rentals that are cross-listed on two sharing economy platforms: Airbnb and HomeAway. On Airbnb, hosts are required to post their photographs and other personally identifiable information, making the perceived race of hosts easily identifiable, while on HomeAway, hosts are not required to do so, often leaving their perceived race unknown. 1 We hypothesize that

Black hosts receive lower ratings on Airbnb compared to HomeAway (for the same vacation rentals; net of the differences in ratings between the two platforms for non-Black hosts).

Note that we limit our predictions to ratings and do not address discrimination in the booking behavior of guests toward Black hosts as compared to other hosts. In other words, we focus on evaluations of identical vacation rentals.

Although in our sample Black hosts receive higher ratings than non-Black hosts overall, we find that when the perceived race of hosts is salient (i.e., on Airbnb), Black hosts receive lower rating scores compared to the scores they would have received for the exact same vacation rental if they were non-Black hosts. We also show that the bias in evaluations has negative monetary implications for Black hosts on the Airbnb platform. Our experimental results support the findings of the market analysis in a controlled setting and establish a causal link between the perceived race of hosts and the ratings they receive. Finally, an additional experiment explores the mechanism driving the results and shows that people stereotypically expect Black hosts to perform worse than white hosts.

Our findings suggest that because reputations are themselves biased, they lead to systematic indirect discrimination based on race. Unlike direct discrimination, discrimination generated via reputation systems is more elusive to detect and, consequently, more challenging to regulate.

As the sharing economy continues to grow and expand its reach, the consequences of biased reputations are becoming increasingly concerning. Biased reputations may negatively affect a growing number of reputation owners across various markets, ranging from private sellers on platforms like Amazon or eBay to hosts on Airbnb or ride-share drivers. Beyond sharing economy interactions, our findings are relevant to all market areas where interactions are regulated and obligations are enforced by reputations, rather than, or in addition to, the law. In many relational contracts, reputation plays a significant role in regulating the behavior of the parties involved. The fear of damaging one’s reputation can deter opportunistic market behavior, with parties weighing the long-term reputational costs of exploiting contractual loopholes. Parties often prioritize maintaining a strong reputation over pursuing breach-of-contract claims, especially in long-term relationships. In fact, in some close-knit communities, reputation surpasses legal sanctions as a market regulator (Ellickson, 1991; Allen & Dean, 1992; Bernstein, 1992).

The heavy reliance on reputations as regulators of market behavior, alongside or instead of the law, raises the concern that stereotypes and cultural beliefs about race will systematically generate racial inequalities. While the desired solution would be to improve the quality and accuracy of reputational information to align reputation with the actual quality of services and products and to reduce the impact of biases and stereotypes, this is a very challenging task. Addressing the issue of stereotypically biased reputations requires navigating the challenges posed by systematically biased yet authentic reviews by consumers.

Potential solutions to the problem of stereotypically biased reputations in online platforms might include masking the demographic information of a reputation owner or revealing it only after expectations have been formed. Studies suggest that information conveyed to a consumer earlier in the decision-making process is more likely to influence decisions, so revealing bias-inciting information later in the process may decrease bias overall. Another potential solution is lessening the salience of demographic information (e.g., smaller photos of the reputation owner) to minimize the activation of stereotypes. Our findings also suggest that evaluation measures in rating systems should be chosen carefully to avoid racial biases. Finally, given the challenges involved in disentangling the effects of biases from the truthful and accurate components of reputations, our findings call for empirical experimentation by platforms to assess the effects of different designs on the accuracy of ratings.

The rest of the paper is organized as follows. The next section describes the setting, analysis, and results of the empirical investigation of Airbnb-HomeAway. Then we present experimental evidence of a racial bias in evaluations of hypothetical experiences with accommodation rentals. The following section presents the findings of an experimental survey aimed at exploring racial stereotypes and expectations regarding vacation rentals. Finally, the paper concludes with implications, potential solutions and associated hurdles.

Racial Biases in Reputations on Airbnb

To test for racial biases in Airbnb ratings, we compiled a unique dataset incorporating information on vacation rentals cross-listed on two short-term accommodation platforms: Airbnb and HomeAway. Although both platforms are online marketplaces for short-term accommodation, they differ in whether, and to what extent, the sociodemographic characteristics of users are salient in transactions between hosts and guests. To facilitate trust, Airbnb requires hosts to provide personal photographs and first names, which are displayed to potential guests. At the time the data was collected, hosts’ photographs and names were presented on the front page of the relevant listing. In contrast, HomeAway did not (at the time the data was collected) require hosts to provide photographs; in most cases, personal photos of hosts were not used on the platform at all. 2 Hosts’ names were displayed only at the very bottom of the HomeAway property page, under a section titled ‘Contact Owner,’ which required some scrolling to view.

With such vacation rentals, guests often do not meet the hosts during their stay. 3 As a result, guests on Airbnb usually form perceptions of their host’s race and gender before their stay begins, while on HomeAway the host’s race and gender are generally unknown at the time of booking and often remain unknown throughout the stay. Our study leverages these differences between the platforms to determine whether guests evaluate the same vacation rental differently when the host’s perceived race is known and the host is Black (on Airbnb) than when the host’s perceived race is unknown (on HomeAway).

Rating scores on both platforms are collected at the end of the guests’ stay. Guests can review their hosts, and vice versa, but neither party can see the other’s review until both have submitted their reviews or the submission period has ended. The platforms often send review reminders to both guests and hosts after the visit is completed. On Airbnb, guests rate hosts on five factors using a 5-point scale: cleanliness, communication, check-in, location, and value. The average score across all factors and all reviews is presented on the front page of the listing and is used in our analysis. On HomeAway, ratings are also on a 5-point scale, and averages are presented on the front page of the listing.

Materials and Methods

Data for the main analysis were collected in February 2019 by AirDNA, a company that provides data on short-term vacation rentals on online platforms. We requested data on all rentals cross-listed on Airbnb and HomeAway in four US cities: New York, Los Angeles, Houston, and Chicago. These cities were chosen for their large, racially diverse populations. The company identified 4346 cross-listed properties by matching the photos, descriptions, and geographical locations of properties listed on the two platforms. Of these, only 1581 listings had an active link to the Airbnb property. A research assistant coded the perceived race and gender of hosts based on their photographs and first names, marking gender or race as ‘missing’ if these were not discernible and marking race as ‘unknown’ when it was ambiguous. Both authors then independently reviewed the coding and resolved disagreements collaboratively; if a consensus could not be reached, the coding was designated as ‘unknown’. Hosts were also coded to identify non-binary gender presentation or hosting as a couple. Overall, 14% of hosts had an ‘unknown’ race classification, and 19% were identified as presenting as a couple or family; these cases were retained in the analysis. After cleaning the data and removing observations with missing variables, the final dataset consisted of 1084 observations of 542 cross-listed rentals. Because every rental in the dataset is cross-listed, each contributes two observations (2 × 542 = 1084): its ratings on Airbnb, where the host’s perceived race is visible, and its ratings on HomeAway, where it is not.
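The resulting structure, a long-format panel in which every retained rental appears exactly once per platform, can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors’ cleaning pipeline: the field names (`property_id`, `platform`, `rating`, `black_host`) are hypothetical, and a missing value is represented as `None`.

```python
from collections import defaultdict


def build_panel(rows):
    """Return a long-format panel with one Airbnb row and one HomeAway row per
    property, dropping rows with missing values and properties left unpaired."""
    by_property = defaultdict(dict)
    for row in rows:
        if any(value is None for value in row.values()):
            continue  # remove observations with missing variables
        by_property[row["property_id"]][row["platform"]] = row
    panel = []
    for pid in sorted(by_property):
        platforms = by_property[pid]
        if set(platforms) == {"airbnb", "homeaway"}:
            panel.extend([platforms["airbnb"], platforms["homeaway"]])
    return panel


# Hypothetical rows: property 2 loses its Airbnb row to a missing rating,
# so the whole property drops out of the paired panel.
rows = [
    {"property_id": 1, "platform": "airbnb", "rating": 4.8, "black_host": 1},
    {"property_id": 1, "platform": "homeaway", "rating": 4.9, "black_host": 1},
    {"property_id": 2, "platform": "airbnb", "rating": None, "black_host": 0},
    {"property_id": 2, "platform": "homeaway", "rating": 4.7, "black_host": 0},
]
panel = build_panel(rows)
n_properties = len({r["property_id"] for r in panel})
# len(panel) == 2 * n_properties, mirroring 1084 = 2 x 542 in the real data
```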

Our analysis examines the differential effects of the salience of perceived race (on Airbnb) on Black hosts compared to non-Black hosts. To estimate the impact of the Airbnb platform on ratings received by Black hosts, we compare the average rating gap between Airbnb and HomeAway for Black hosts to the same rating gap for non-Black hosts. That is, we capture the effects of the salience of perceived race (on Airbnb) for Black hosts net of the platform effects (on Airbnb) for non-Black hosts.
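Without controls, this comparison reduces to a difference-in-differences of group means. The sketch below computes it on a long-format panel; the field names and the example ratings are hypothetical. In a balanced panel with two observations per property, this quantity coincides with the ‘Black Host’ X ‘Airbnb’ coefficient of a property fixed-effects model that includes no other controls.

```python
from statistics import mean


def rating_gap_did(panel):
    """(Airbnb - HomeAway) mean-rating gap for Black hosts minus the same gap
    for non-Black hosts."""
    def gap(black):
        def avg(platform):
            return mean(r["rating"] for r in panel
                        if r["black_host"] == black and r["platform"] == platform)
        return avg("airbnb") - avg("homeaway")
    return gap(1) - gap(0)


# Hypothetical ratings for one Black-hosted and one non-Black-hosted property.
example = [
    {"property_id": 1, "platform": "airbnb", "black_host": 1, "rating": 4.6},
    {"property_id": 1, "platform": "homeaway", "black_host": 1, "rating": 4.9},
    {"property_id": 2, "platform": "airbnb", "black_host": 0, "rating": 4.7},
    {"property_id": 2, "platform": "homeaway", "black_host": 0, "rating": 4.8},
]
# About -0.2: a 0.3-point Airbnb drop for the Black host versus 0.1 otherwise.
did = rating_gap_did(example)
```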

Note that while Airbnb offers various types of listings, including private homes, private rooms, and shared rooms, rentals on HomeAway are predominantly vacation homes. Thus, the cross-listed rentals on the two platforms (and included in our data) are mainly vacation homes. Other than the display of user photographs on Airbnb, two additional differences between the platforms are relevant to our analyses. First, Airbnb enables hosts to charge extra fees for additional guests, an option not available on HomeAway. Second, Airbnb charges host fees exclusively per booking, with no annual fees, while HomeAway offers hosts the option to be charged either per booking or annually.

We estimate property fixed-effects OLS regression models predicting the effect of the Airbnb platform on a Black host (‘Black Host’ X ‘Airbnb’), controlling for the platform effect (‘Airbnb’), the published nightly rate, the extra-people fees, and the response rate.
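The specification described above can be sketched as follows, in notation introduced here for illustration: $i$ indexes cross-listed properties, $p \in \{\text{Airbnb}, \text{HomeAway}\}$ indexes platforms, $\alpha_i$ are property fixed effects, $\mathbf{X}_{ip}$ collects the platform-varying controls named above (nightly rate, extra-people fees, response rate), and $\beta_2$ is the coefficient of interest.

$$
\text{Rating}_{ip} = \alpha_i + \beta_1\,\text{Airbnb}_p + \beta_2\,\left(\text{BlackHost}_i \times \text{Airbnb}_p\right) + \boldsymbol{\gamma}^{\top}\mathbf{X}_{ip} + \varepsilon_{ip}
$$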

Note that, because the model includes property fixed effects, the direct effect of the perceived race of hosts is not estimated separately: it does not vary between the platforms and is therefore absorbed by the fixed effects. However, the interaction term between the race of the host and the platform (‘Black Host’ X ‘Airbnb’) is included, capturing the effect of perceived race on ratings.

To detect the impact of identifiable perceived race on ratings, we must assume that all other differences between the two platforms affect the ratings of Black and non-Black hosts similarly. Our identifying assumption is that guests on the two platforms do not differentially value the vacation rentals owned by Black hosts (compared to non-Black hosts). Although the clientele of Airbnb may differ from that of HomeAway, such selection is a concern only if clients’ relative preferences for the types of rentals offered by Black versus non-Black hosts differ across the two platforms. We address this selection concern and provide evidence supporting our identifying assumption in two ways. Most notably, we show that including all the observed characteristics of vacation rentals in a regression model predicting ratings (such as ‘number of bedrooms’ X ‘Airbnb’ and ‘number of bathrooms’ X ‘Airbnb’) produces a larger effect size of being a Black host on Airbnb (‘Black host’ X ‘Airbnb’). We assume that preferences for observed characteristics of vacation rentals are correlated with preferences for their unobserved characteristics. We thus conclude that differences in the valuation of rental characteristics across the two platforms do not drive the differences we document between the ratings of Black and non-Black hosts (for a similar strategy, see: Chevalier & Mayzlin, 2006; Mayzlin et al., 2014). In addition, we complement the analysis with an experimental study (Experiment I) conducted on a sample of clients of Airbnb and HomeAway, showing that clients of the two platforms do not differ significantly in their evaluations of otherwise identical vacation rentals.

Another concern is that the natural experiment may be contaminated by guests who visit both platforms and compare the same rental across Airbnb and HomeAway. Even if these guests book and leave reviews on HomeAway, they may have already learned the host’s race from the Airbnb listing. Because such guests are not negligible in number (19% of participants in Experiment I reported using both platforms), this contamination would push the HomeAway ratings of Black hosts toward their Airbnb ratings and bias our estimates toward zero. Our estimates are therefore likely conservative.

Results

Biased Ratings

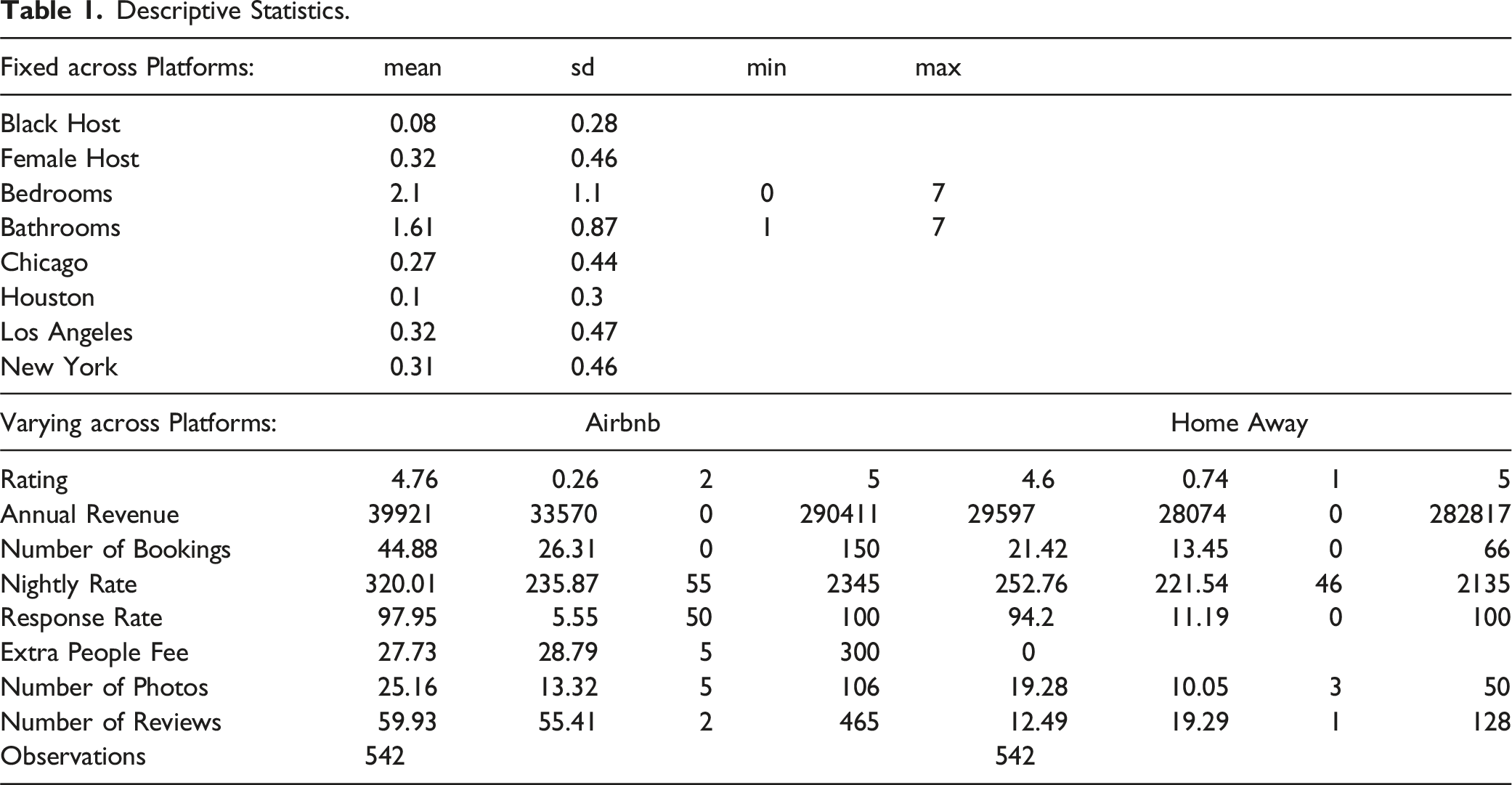

Descriptive Statistics.

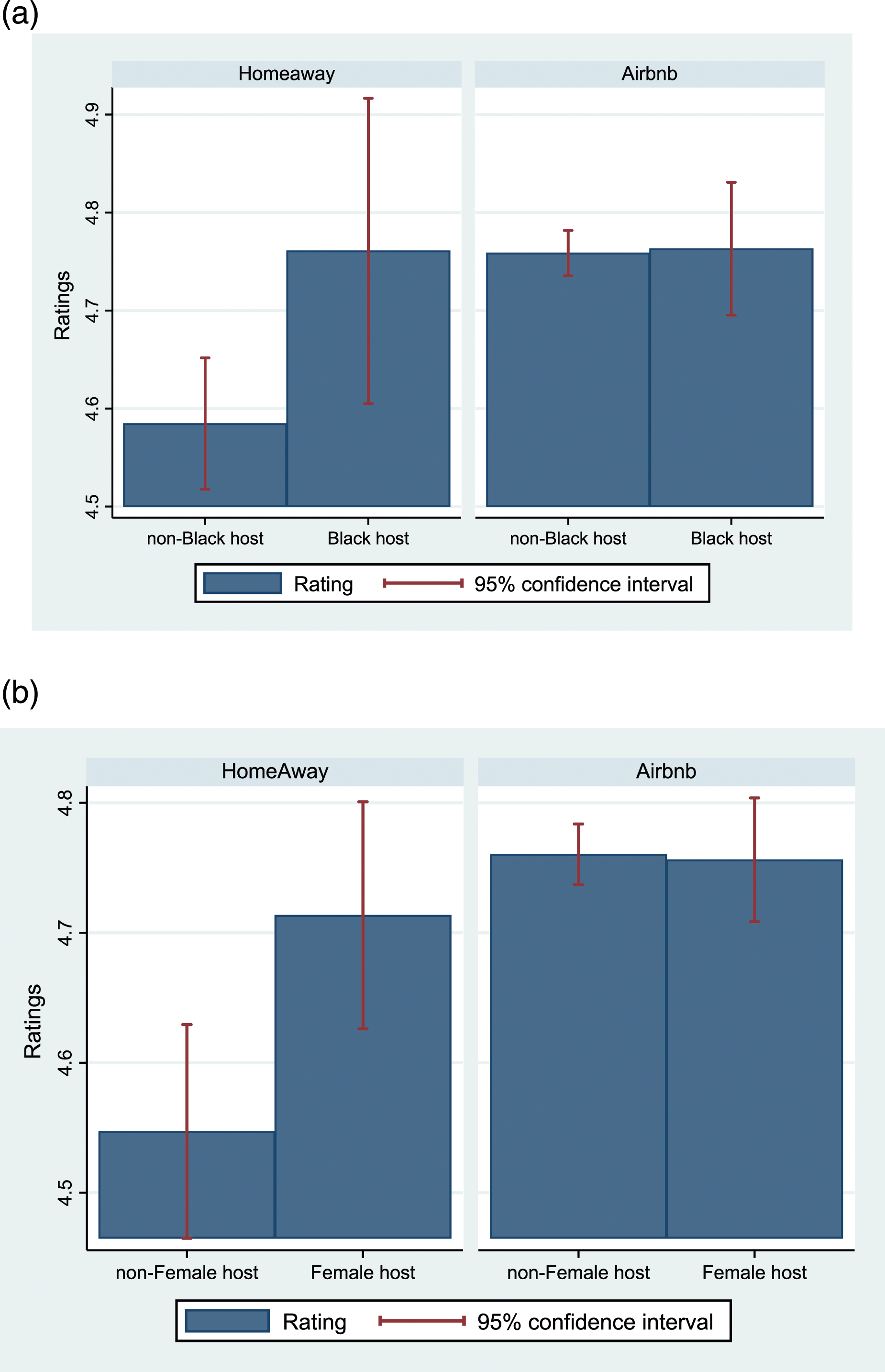

Figure 1. (a) and (b) Ratings by perceived race and gender.

Figure 1(a) and (b) present differences in ratings for all the cross-listed rentals in our dataset, by the perceived race and gender of hosts.

The figures show that although all vacation rentals are cross-listed on both platforms, Black hosts and female hosts receive significantly better ratings than non-Black hosts and non-female hosts on HomeAway, but not on Airbnb.

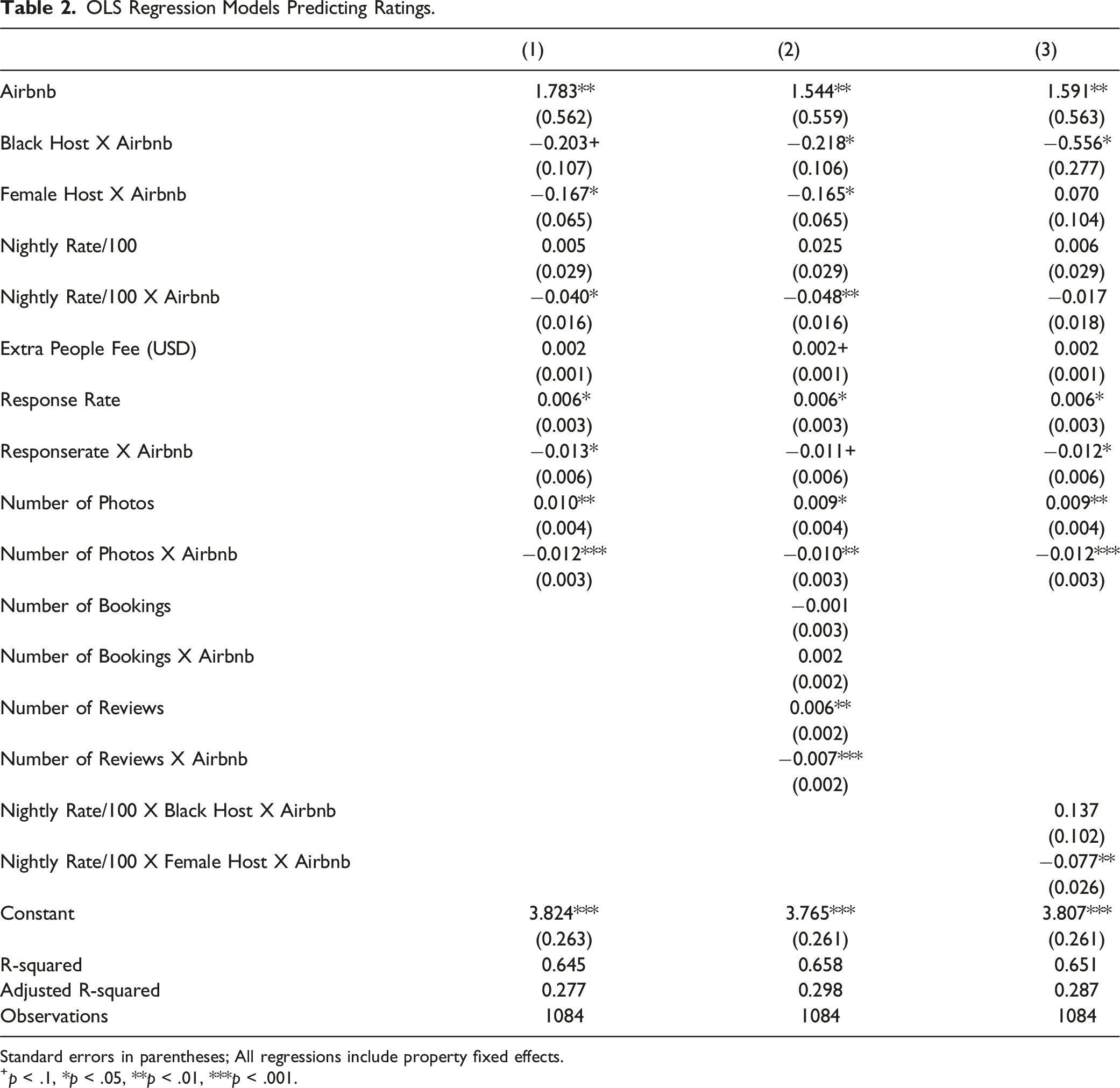

We estimate OLS regression models with property fixed effects to predict the effect of race on hosts’ ratings. By including a dummy variable for each vacation rental, the models hold constant all fixed traits of properties that do not vary between platforms (such as the perceived race and gender of hosts, or the number of bathrooms). The coefficient ‘Airbnb’ captures the effect of being evaluated on Airbnb rather than on HomeAway. The interaction term ‘Black Host’ X ‘Airbnb’ captures the additional effect of being a Black host on Airbnb (where hosts’ photographs are displayed). The reference category in the models is a non-Black host on HomeAway. Because nightly rates, ‘extra people fees’, response rates, and the number of photos sometimes vary across the two platforms, even for the same vacation rental, we control for them in our regression models (the ‘extra people fees’ on all HomeAway listings are equal to zero). Note that on both platforms, nightly rates are determined by hosts.
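Because each property contributes exactly two observations, the property fixed-effects estimator is numerically identical to OLS on within-property differences (Airbnb minus HomeAway): differencing removes the property effect, turns ‘Airbnb’ into a constant, and turns ‘Black Host’ X ‘Airbnb’ into the Black-host indicator itself. The standard-library sketch below illustrates this on hypothetical noiseless data. The field names and the single `price` control are assumptions, and the sketch reports point estimates only (no standard errors).

```python
def solve(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols(X, y):
    """OLS coefficients from the normal equations X'X b = X'y."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    return solve(xtx, xty)


def fe_two_platforms(panel, controls):
    """Property fixed-effects estimates via first differences (Airbnb - HomeAway).

    Returns coefficients in the order [Airbnb, Black Host x Airbnb, *controls].
    """
    pairs = {}
    for r in panel:
        pairs.setdefault(r["property_id"], {})[r["platform"]] = r
    X, y = [], []
    for pair in pairs.values():
        a, h = pair["airbnb"], pair["homeaway"]
        # Differencing removes the property effect; 'Airbnb' becomes the constant
        # and 'Black Host' x 'Airbnb' becomes the Black-host indicator itself.
        X.append([1.0, float(a["black_host"])] + [a[c] - h[c] for c in controls])
        y.append(a["rating"] - h["rating"])
    return ols(X, y)


# Hypothetical noiseless data generated from
# rating = alpha_i - 0.1*Airbnb - 0.2*(Black x Airbnb) + 0.01*price
panel = []
for i in range(6):
    black, alpha = i % 2, 4.0 + 0.1 * i
    price_h = 100.0 + 10 * i
    price_a = price_h + (i * i) % 7  # prices differ across platforms
    panel.append({"property_id": i, "platform": "homeaway", "black_host": black,
                  "price": price_h, "rating": alpha + 0.01 * price_h})
    panel.append({"property_id": i, "platform": "airbnb", "black_host": black,
                  "price": price_a,
                  "rating": alpha - 0.1 - 0.2 * black + 0.01 * price_a})
coefs = fe_two_platforms(panel, controls=["price"])  # approximately [-0.1, -0.2, 0.01]
```

In practice, a panel-data library with entity effects and clustered covariance would be the more natural tool; the hand-rolled solver here only makes the equivalence visible.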

OLS Regression Models Predicting Ratings.

Standard errors in parentheses; All regressions include property fixed effects.

+p < .1, *p < .05, **p < .01, ***p < .001.

Model 2 additionally controls for the number of bookings and the number of reviews, which tend to vary across the two platforms. Note that, unlike the controls included in Model 1, the number of bookings and the number of reviews might themselves be affected by hosts’ ratings (and not only affect them). After including these two controls, the results remain similar in magnitude and significance (Model 2, N = 1084, p = .041). Finally, in Model 3, we test whether the effects of race on Airbnb vary by the price of the rental (the interaction term ‘nightly rate’ X ‘Black host’ X ‘Airbnb’). We find that for Black hosts, penalties are similar across rentals regardless of their price.

Female hosts are also penalized in the ratings they receive on Airbnb (Models 1 and 2), specifically when they host more expensive rentals (Model 3). Our original research hypothesis did not address gender. Yet, these findings suggest that reputations are also stereotypically framed by the gender of hosts (Ridgeway, 2011) and support our general claims about the potential biases in reputation systems in the sharing economy.

Because the distributions of ratings vary across the two platforms, in Table A1, we estimate similar models to those in Table 2, using a standardized dependent variable of ratings. The effects we obtain exhibit higher levels of statistical significance.
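One way to construct such a standardized dependent variable is to z-score ratings separately within each platform, so that each platform’s ratings have mean zero and unit standard deviation. The text does not specify the exact standardization, so within-platform z-scores (using the population standard deviation) are an assumption of this sketch, as are the field names.

```python
from statistics import mean, pstdev


def standardize_within_platform(panel, key="rating"):
    """Return copies of the rows with key + '_z': the value z-scored within its
    own platform (assumed scheme). Fails if all values on a platform are equal."""
    stats = {}
    for platform in {r["platform"] for r in panel}:
        values = [r[key] for r in panel if r["platform"] == platform]
        stats[platform] = (mean(values), pstdev(values))
    return [
        {**r, key + "_z": (r[key] - stats[r["platform"]][0]) / stats[r["platform"]][1]}
        for r in panel
    ]


# Hypothetical ratings: HomeAway ratings are more dispersed than Airbnb ratings here.
panel = [
    {"platform": "airbnb", "rating": 4.6},
    {"platform": "airbnb", "rating": 4.8},
    {"platform": "homeaway", "rating": 4.5},
    {"platform": "homeaway", "rating": 4.9},
]
z = standardize_within_platform(panel)
```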

In Table A2, we present robustness checks for the results from our preferred specifications (Table 2, columns 1 and 2), using alternative specifications, additional controls, or data restrictions. We first incorporate more detailed controls for the perceived race of hosts. The differences in ratings between white and Asian hosts, as well as between white and Latinx hosts, are not statistically significant, and they do not account for the observed disparities between Black and non-Black hosts (Table A2 in the appendix, models 1–2). We also confirmed that the results are robust when restricting the sample to Black and white hosts only (details available upon request). To ensure that the results are not driven by guest evaluations of listings managed by professional property managers, we replicate the analysis on a sample including only listings managed by private hosts (N = 900). 5 Effects for Black hosts remain similar in magnitude and significance to the effects we report in Table 2 (Table A2 in the appendix, models 3–4). To account for the fact that the two listings of the same property on the two platforms are related, we replicate the regression models with clustered standard errors. The effects obtained remain similar in magnitude and statistical significance (Table A2 in the appendix, models 5–6).

Selection Bias Concerns

The interpretation of the ‘Airbnb’ X ‘Black host’ coefficient as a racial bias assumes that the two platforms attract similar clientele with respect to their preferences for the characteristics of vacation rentals listed by Black and non-Black hosts. Yet, there is a concern that clients who choose Airbnb systematically place more value on the types of properties and services offered by non-Black hosts than guests on HomeAway do. In this section, we discuss the validity of our identification strategy and present empirical evidence to support it.

To investigate potential selection bias, we compare a specification without control variables to a full-control specification that includes all the observable characteristics of vacation rentals available in our dataset, interacted with ‘Airbnb’ (such as the interactions ‘number of bedrooms’ X ‘Airbnb’ and ‘number of bathrooms’ X ‘Airbnb’). We assume that selection on the observed traits of vacation rentals is associated with selection on their unobserved traits (Altonji et al., 2005). Thus, if adding the observed characteristics of vacation rentals to the model predicting ratings attenuated the coefficient on our variable of interest, ‘Airbnb’ X ‘Black Host’, we would expect the inclusion of additional unobserved characteristics to attenuate it further, which would indicate selection on guests’ preferences. However, comparing the no-control specification with the full-control specification (Table A3), we find the opposite: including all the observed characteristics of vacation rentals, namely the perceived gender of hosts, the nightly rates, the ‘extra people fees’, the response rate, the number of photos, the number of bookings, the number of reviews, the number of bedrooms, the number of bathrooms, the minimum stay, and the city or neighborhood (all interacted with the Airbnb indicator), produces a larger effect size for our variable of interest. This supports our assumption that differences in the valuation of rental characteristics across the two platforms do not drive the differences we document in the ratings of Black and non-Black hosts (for a similar strategy, see: Chevalier & Mayzlin, 2006; Mayzlin et al., 2014).
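The logic of this comparison can be summarized with a ratio in the spirit of Altonji et al. (2005): the coefficient from the full-control specification divided by the change in that coefficient when controls are added. This is one common formalization, not a statistic reported in the paper, and the coefficient values in the example are hypothetical. A negative ratio indicates that adding observables moved the estimate away from zero, so unobservables could explain the effect away only if selection on them ran in the opposite direction to selection on observables.

```python
def selection_ratio(beta_no_controls, beta_full_controls):
    """Ratio beta_full / (beta_none - beta_full).

    Positive: adding observables attenuated the estimate; the magnitude says how
    many times stronger selection on unobservables would need to be to explain
    the whole effect away.
    Negative: adding observables moved the estimate away from zero.
    """
    shift = beta_no_controls - beta_full_controls
    if shift == 0:
        return float("inf")  # adding controls did not move the estimate at all
    return beta_full_controls / shift


# Hypothetical coefficients on 'Airbnb' x 'Black Host' (not the paper's estimates).
ratio = selection_ratio(-0.10, -0.15)  # about -3: the estimate grew with controls
```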

Taken together, the empirical evidence alleviates concerns about selection bias based on all factors observable to prospective guests and correlated with the perceived race of hosts, such as location, neighborhood, amenities, photos, past guests’ ratings and reviews on both platforms, as well as the race and gender on Airbnb. Assuming that the extent of selection on observed explanatory variables serves as a guide to the extent of selection on unobservable variables (Altonji et al., 2005), we conclude that our identification strategy is not compromised.

Economic Disadvantages

Finally, we estimate the economic value of hosts’ rating scores, to establish whether biased reputations generate economic disadvantages for Black hosts on Airbnb. Previous empirical studies have shown that users’ rating scores on sharing economy platforms such as Airbnb and eBay are associated with economic value (Resnick et al., 2006; Teubner et al., 2017). Here, using our within-rental design, we find that for the exact same rental, higher rating scores generate greater annual revenue for hosts.

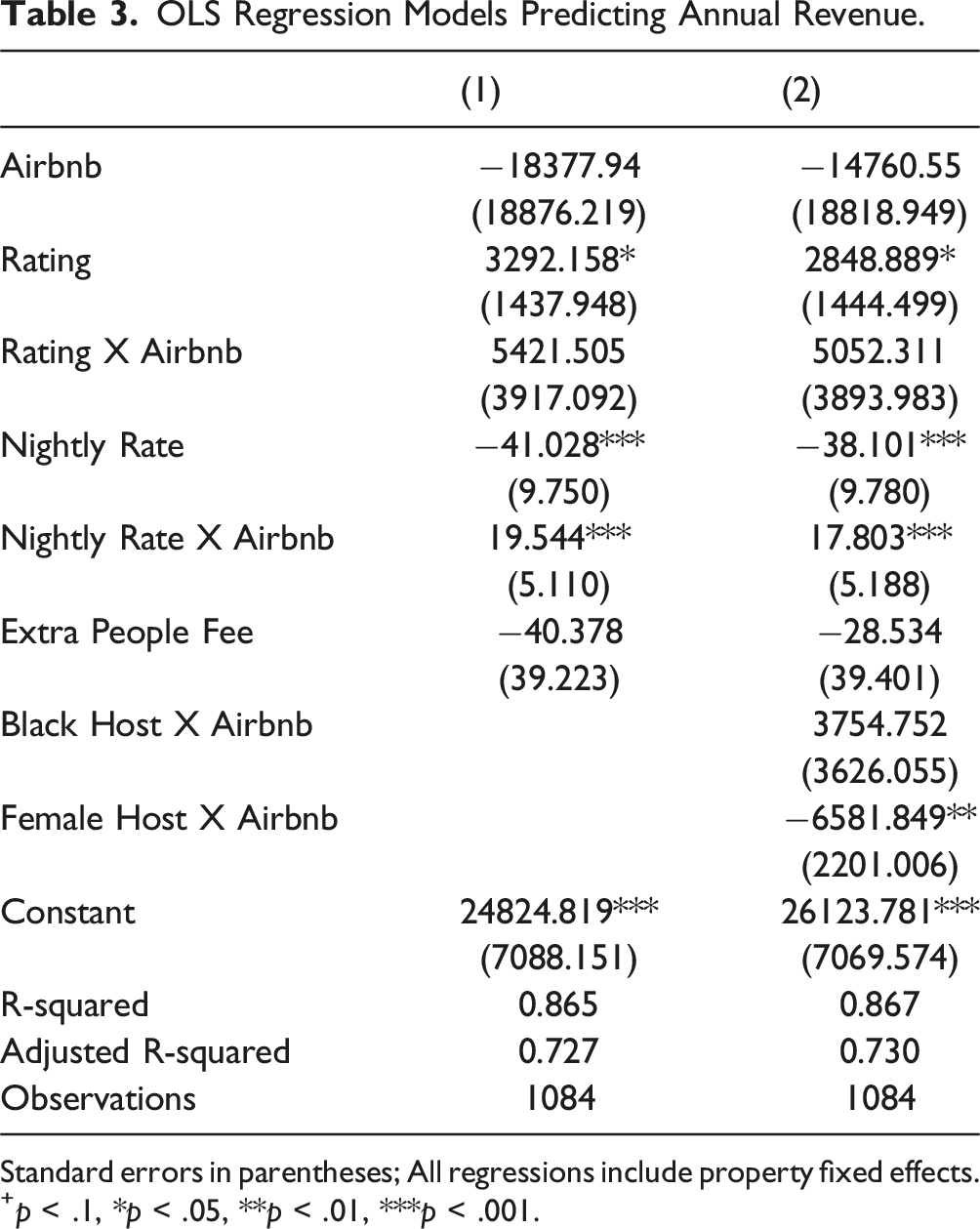

OLS Regression Models Predicting Annual Revenue.

Standard errors in parentheses; All regressions include property fixed effects.

+p < .1, *p < .05, **p < .01, ***p < .001.

The results in Model 2 further suggest that female hosts are directly penalized on Airbnb (in addition to the penalty through their lower rating scores). On average, female hosts on Airbnb generate about $6,600 less in annual revenue than a non-female host would have earned for the exact same vacation rental. Note that annual revenues are determined by nightly rates and the number of nights booked. Because nightly rates are set by hosts, it is impossible to determine whether the observed gender differences stem from user discrimination (fewer bookings or nights booked) or from a tendency among female hosts to set lower nightly rates (on Airbnb relative to HomeAway). Investigating the outcomes for hosts by gender requires a separate and thorough analysis, which we leave for future research.

Supplementary Experiment I: Biased Reputations and Clientele Differences

To further address the selection bias concerns discussed above and to provide additional evidence for a causal relationship between the perceived race of hosts and the ratings they receive, we supplement the main analysis with a survey experiment. The purpose of the survey experiment is twofold: (i) to explore whether Black hosts receive worse reviews than white hosts for the exact same vacation rental; and (ii) to explore differences in the review-related behaviors of Airbnb clients compared with HomeAway clients.

Following our findings in the main analysis, we formally hypothesized that: 6

The ratings of Black male hosts will be lower than the ratings of white male hosts.

Materials and Methods

The experiment was conducted in January 2022. American participants recruited on Amazon Mechanical Turk (MTurk) were first asked to report whether they had used Airbnb, HomeAway, or other vacation rental platforms in the past, and about their tendency to leave reviews. They were then asked to imagine that they had just rented a vacation rental through an online marketplace. In the listing, the host had written: “Enjoy your stay in this cozy, newly remodeled studio! This studio is centrally located, and it is only a short drive from downtown and the beaches. Wifi and parking are included.” However, participants were instructed to imagine that their overall experience was mixed: the location was great, but the studio was not well cleaned, and although it was well equipped, the amenities were merely in working condition.

Hosts were presented to participants by first names that signaled either Black or white male identities. Specifically, each participant was randomly assigned to one of eight possible hosts: Darnell, Tyrone, Darius, or Rasheed (Black-sounding names), or Ethan, Edwin, Tayler, or Greg (white-sounding names; for our selection of names, see Gaddis, 2017). 7 Each participant thus evaluated either a Black male host or a white male host. Participants were then asked to review their experience on a 0–5 scale across six factors (cleanliness, accuracy, communication, check-in, location, and value), using the same scale and factors as Airbnb’s rating system. Because the cleanliness of the vacation rental was described to participants as “not well cleaned” and the overall experience as “mixed,” and given the potentially troubling stereotypical association between Black people and moral impurity or uncleanliness (Eberhardt et al., 2004; Gerald, 2009; Sherman et al., 2012), we predicted that reviews of the rental’s cleanliness and value would reflect the greatest biases against Black hosts.
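The assignment described above can be sketched as a uniform draw over the eight names, with the condition implied by the chosen name. Equal assignment probabilities and the fixed seed are assumptions for illustration; the text states only that assignment was random.

```python
import random

BLACK_NAMES = ["Darnell", "Tyrone", "Darius", "Rasheed"]
WHITE_NAMES = ["Ethan", "Edwin", "Tayler", "Greg"]


def assign_host(rng):
    """Draw one of the eight host names uniformly; the condition follows from the name."""
    name = rng.choice(BLACK_NAMES + WHITE_NAMES)
    condition = "black" if name in BLACK_NAMES else "white"
    return name, condition


rng = random.Random(2022)  # fixed seed only so the illustration is reproducible
assignments = [assign_host(rng) for _ in range(497)]
```

Drawing a name first and deriving the condition from it means each name, not just each condition, is an independently randomized stimulus, which allows checking that results are not driven by any single name.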

Altogether, 497 people participated in the experiment. Of these, 34% identified as female, 14% as Black, 5% as Asian, and 22% as having tertiary education (see Appendix Table A4 for the sample characteristics). 8 Of all participants, 358 (72%) reported using Airbnb in the past, 185 (37%) reported using HomeAway, and 95 (19%) reported using both. There was no statistically significant difference in demographic characteristics between clients of HomeAway and Airbnb, except for tertiary education (18% of Airbnb clients vs. 30% of HomeAway clients). Fifty-one participants (10%) reported having used other vacation rental platforms in the past. Although another 51 participants (10%) reported that they had not used vacation rentals in the past, 18 of them mentioned using a specific platform (see Appendix Table A5 for the descriptive statistics of the variables used in the analysis).

Results

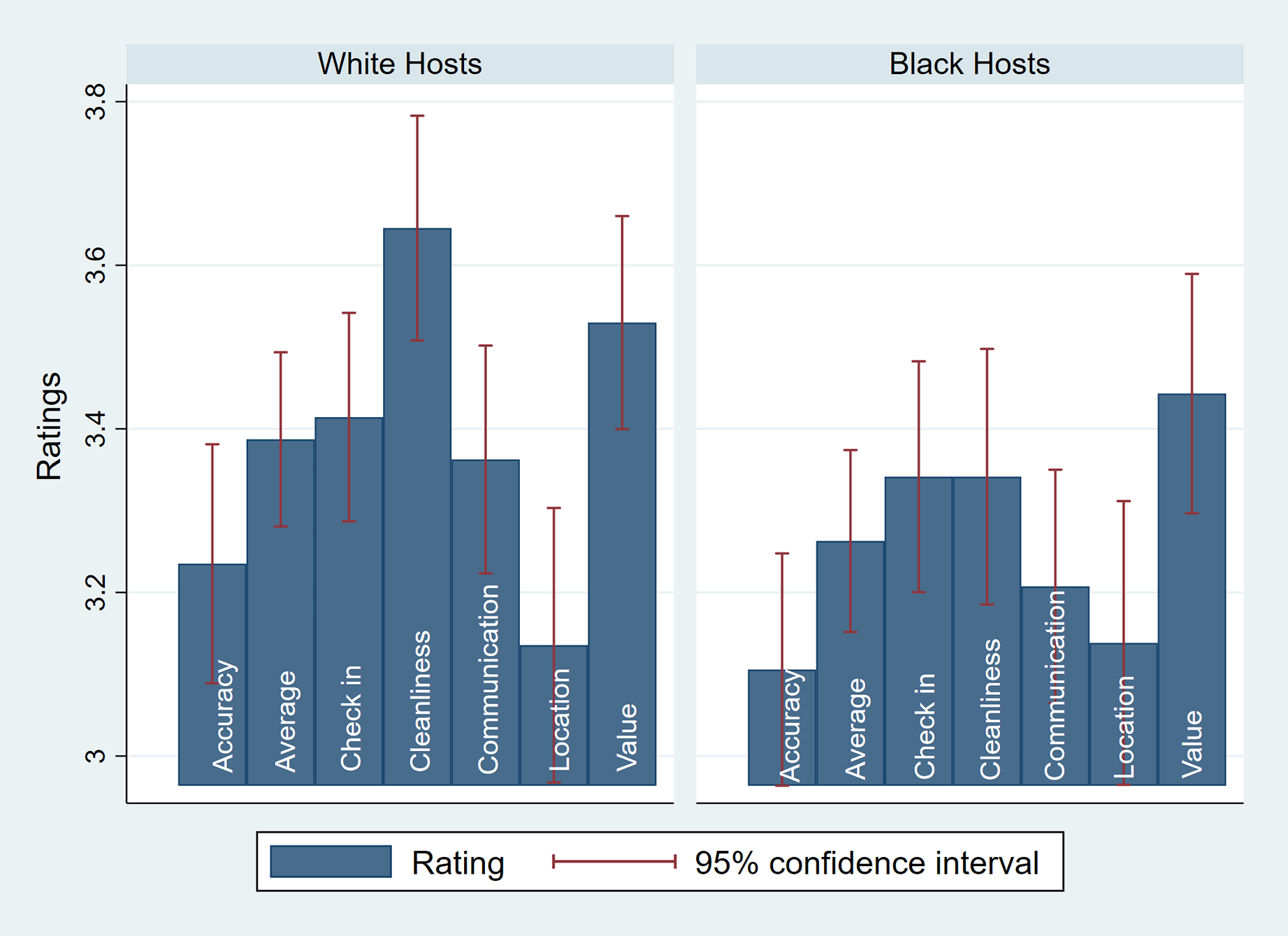

In Figure 2, we present participants’ ratings (all five components and the average) by hosts’ perceived race. Experiment I: Ratings, by perceived race of hosts.

The average rating of white hosts in the experiment is 3.39, compared with 3.26 for Black hosts (p = .056, based on a t-test comparing the ratings of Black and white hosts). To better understand the patterns in our results, we ran OLS regression models predicting the average rating as well as each individual component (Appendix Table A6). As predicted, Black hosts receive lower overall ratings than white hosts, a gap driven by the cleanliness and value components.
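A minimal sketch of the two comparisons described above, using only the Python standard library and made-up ratings (not the study’s data). With a single 0/1 indicator for a Black host, the OLS slope equals the difference between the two group means, so the regression and the t-test address the same gap. The t-test shown is Welch’s unequal-variance version with a large-sample normal approximation to the p-value; this is our assumption, since the text does not say which t-test variant was used. The function names are hypothetical.

```python
import math
import statistics
from statistics import NormalDist


def welch_t_test(a, b):
    """Welch's two-sample t statistic with a two-sided p-value.

    Assumption: the p-value uses a normal approximation to the t
    distribution, which is reasonable only for large samples.
    """
    mean_a, mean_b = statistics.fmean(a), statistics.fmean(b)
    se = math.sqrt(statistics.variance(a) / len(a) + statistics.variance(b) / len(b))
    t = (mean_a - mean_b) / se
    p = 2 * (1 - NormalDist().cdf(abs(t)))
    return t, p


def ols_dummy(y, d):
    """OLS of y on a constant and a 0/1 indicator d; returns (intercept, slope)."""
    n = len(y)
    y_bar = sum(y) / n
    d_bar = sum(d) / n
    sxy = sum((di - d_bar) * (yi - y_bar) for di, yi in zip(d, y))
    sxx = sum((di - d_bar) ** 2 for di in d)
    slope = sxy / sxx
    return y_bar - slope * d_bar, slope


# Hypothetical average ratings; d = 1 marks a Black host.
white = [4.0, 3.5, 3.0, 3.5]
black = [3.0, 3.5, 3.0, 3.0]
intercept, black_gap = ols_dummy(white + black, [0] * len(white) + [1] * len(black))
t_stat, p_value = welch_t_test(white, black)
print(intercept, black_gap)  # 3.5 -0.375: white mean, then Black minus white
```

With roughly 250 participants per condition, the normal approximation differs little from the exact t distribution; in practice a statistics package would supply exact p-values and regression standard errors.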

To explore whether ratings provided by Airbnb clients differ from those provided by HomeAway clients, and thus to address concerns about selection bias raised in the main analysis, we compared the responses of participants who are Airbnb clients with those of HomeAway clients. We ran additional OLS regression models predicting the average rating and each component from the perceived race of the host, using the subsample of participants who are clients of Airbnb, HomeAway, or both (Appendix Table A7; N = 445). These models include an indicator for whether the participant is a client of Airbnb (‘Client of Airbnb’) and an interaction term between the perceived race of the host and the participant’s platform experience (‘Black Host’ × ‘Client of Airbnb’). In all models, being a client of Airbnb had no statistically significant effect on the ratings. Similarly, the interaction terms between the perceived race of the host and the participant’s platform experience were not statistically significant in any of the models.
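The interaction specification can be sketched as follows. This is an illustration under stated assumptions, not the authors’ code: the design contains only a constant, a ‘Black Host’ indicator, a ‘Client of Airbnb’ indicator, and their product, and the solver returns point estimates only (no controls, no standard errors). Because this 2 × 2 model is saturated, the interaction coefficient equals the difference-in-differences of the four cell means. The helper names and ratings are hypothetical.

```python
def ols(X, y):
    """Point estimates from the normal equations (X'X) b = X'y via Gauss-Jordan."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    aug = [xtx[i] + [xty[i]] for i in range(k)]  # augmented matrix [X'X | X'y]
    for col in range(k):
        # Partial pivoting for numerical stability.
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [v / scale for v in aug[col]]
        for r in range(k):
            if r != col:
                factor = aug[r][col]
                aug[r] = [vr - factor * vc for vr, vc in zip(aug[r], aug[col])]
    return [aug[i][k] for i in range(k)]


def design_row(black_host, airbnb_client):
    """Constant, 'Black Host', 'Client of Airbnb', and their interaction."""
    return [1.0, black_host, airbnb_client, black_host * airbnb_client]


# Hypothetical ratings per (black_host, airbnb_client) cell.
cells = {
    (0, 0): [3.0, 4.0],  # cell mean 3.5
    (1, 0): [2.7, 3.7],  # cell mean 3.2
    (0, 1): [2.9, 3.9],  # cell mean 3.4
    (1, 1): [2.6, 3.6],  # cell mean 3.1
}
X, y = [], []
for (black_host, airbnb_client), ratings in cells.items():
    for rating in ratings:
        X.append(design_row(black_host, airbnb_client))
        y.append(rating)

coefs = ols(X, y)
print(coefs)  # close to 3.5, -0.3, -0.1 and 0.0: the cell-mean contrasts
```

In this made-up example the Black-host gap is the same for Airbnb and non-Airbnb clients, so the interaction coefficient is zero, which is the pattern a non-significant interaction term is consistent with.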

Additionally, we estimated unreported regression models restricting the sample to participants who were exclusive clients of either Airbnb or HomeAway. In this subsample, too, being a client of Airbnb had no statistically significant effect on the ratings, and the interaction terms between the perceived race of the host and the participant’s platform experience were not statistically significant in any model. Taken together, these findings suggest that the lower ratings of Black hosts in the experiment are not generated by differences between Airbnb and HomeAway users.

In sum, the experiment replicates the findings of the online market analysis in a controlled setting, providing evidence of a causal relationship between the perceived race of hosts and the ratings they receive. Furthermore, the experimental effects are not driven by differences between the ratings provided by Airbnb and HomeAway clients.

Supplementary Experiment II: Racial Stereotypes and Biased Expectations

Finally, to better understand the mechanisms driving the biased reputations we observe, we experimentally investigate the racial stereotypes people hold in the context of vacation rentals. Specifically, we explore the content of people’s stereotypes, beliefs, and expectations about Black and white hosts of vacation rentals.

People’s stereotypes, beliefs, and expectations play a crucial role in shaping how they perceive and evaluate the performance of others (Nelson, 2009). These preexisting mental frameworks color the new information individuals encounter. Consequently, if guests have lower expectations of Black hosts than of white hosts, these biased expectations can shape their experiences, which may in turn produce prejudiced evaluations of rentals hosted by Black individuals and, ultimately, biased reviews.

Following the literature and the results of our main analyses, we hypothesize that:

Participants will have lower expectations of Black male hosts compared to white male hosts.

In our main analyses, the effects of being a Black female host on rating scores (compared to being a Black male or a white female host) were positive but not statistically significant. This may be due to the relatively small sample size of female hosts, which included only 21 white female hosts and 25 Black female hosts.

Yet, the literature in social psychology on intersectionality suggests that some of the stereotypes about Black women tend to be different both from the stereotypes about white women and from the stereotypes about Black men. Additionally, this body of literature has shown that in some (but not all) contexts Black women are viewed more positively than Black men and white women. More specifically, Black women tend to be viewed as more agentic than white women and warmer than Black men (Livingston et al., 2012; Ridgeway & Kricheli-Katz, 2013). Building on this literature, we hypothesize that in the context of vacation rentals:

Expectations of Black female hosts are higher than expectations of Black male hosts or white female hosts.

Materials and Methods

The experiment was conducted in April 2020. American participants on Amazon Mechanical Turk (MTurk) were asked to imagine that they were planning a vacation and considering renting a property through an online marketplace. They were then told that the host described the rental as a “cozy, newly remodeled studio” that is “centrally located” and only “a short drive from downtown and the beaches,” and that “Wifi and parking are included.” Hosts were presented to participants by first names selected to signal whether the host was white or Black and female or male. Specifically, participants were randomly assigned to one of eight possible hosts: Alison, Ann, Brad, Darnell, Greg, Keisha, LaToya, or Tyrone (for the selection of Black- and white-sounding names, see Gaddis, 2017). Participants were thus randomly assigned to one of four types of host: a Black male host, a Black female host, a white male host, or a white female host.

We also varied the description of the vacation rental: half of the participants were told that the rental they were considering was luxurious and relatively expensive, while the other half received no specific information about its price. Participants were then asked to rate their expectations on a 0–5 scale for six factors (cleanliness, accuracy, communication, check-in, location, and value), using the same factors and scale as Airbnb’s rating system. Note that whereas in Experiment I participants were told that their experience was mixed and that the studio was not well cleaned, in Experiment II participants were given only the host’s description of the property and were asked to form their own expectations.
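The assignment protocol can be sketched as a host-name draw crossed with a price-description draw. This is a hypothetical reconstruction: the race and gender coding of each name is our reading of the names listed above, and we assume simple independent randomization for both factors, whereas the actual survey software may have balanced cell sizes. All identifiers are illustrative.

```python
import random

# Assumed coding of the eight names into the four race-by-gender cells.
HOST_NAMES = {
    "Alison": ("white", "female"), "Ann": ("white", "female"),
    "Brad": ("white", "male"), "Greg": ("white", "male"),
    "Keisha": ("Black", "female"), "LaToya": ("Black", "female"),
    "Darnell": ("Black", "male"), "Tyrone": ("Black", "male"),
}
# Hypothetical labels for the two price-description conditions.
PRICE_CONDITIONS = ("luxurious_expensive", "no_price_information")


def assign(participant_ids, seed=0):
    """Independently draw a host name and a price condition for each participant."""
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    names = sorted(HOST_NAMES)
    assignments = {}
    for pid in participant_ids:
        name = rng.choice(names)
        race, gender = HOST_NAMES[name]
        assignments[pid] = {
            "host_name": name,
            "race": race,
            "gender": gender,
            "price_condition": rng.choice(PRICE_CONDITIONS),
        }
    return assignments


example = assign(range(5), seed=42)
for pid, cell in example.items():
    print(pid, cell["host_name"], cell["race"], cell["gender"], cell["price_condition"])
```

Because each name maps to exactly one cell, analyses can pool the two names per cell, as the text does when it reports results for four host types.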

Altogether, 646 people participated in the experiment. We excluded from the sample 45 participants who did not pass the attention test, and 7 participants who reported understanding that the experiment was testing for the effects of race on expectations. The final sample for the analysis includes 592 participants (see Appendix Table A8 for the sample characteristics).

Results

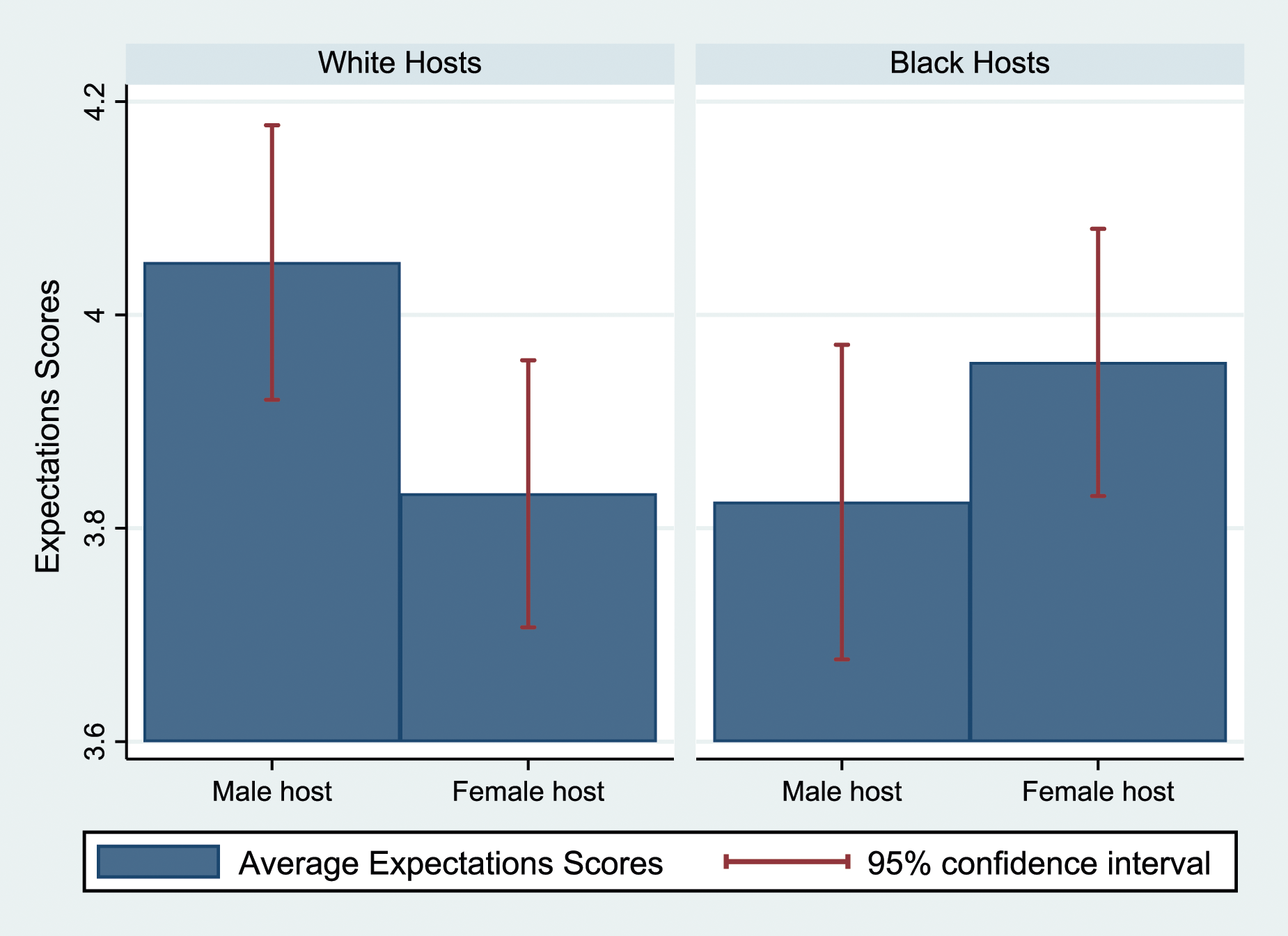

In Figure 3, we present the expectations of participants (average of all five factors), by hosts’ race and gender (see Appendix Table A9 for the descriptive statistics). Experiment II: Expectations, by perceived race and gender of hosts.

The average expectation score for white male hosts in the experiment is 4.05, compared with 3.83 for white female hosts (p < .01 for a t-test comparing the expectations of white female and male hosts). The average expectation score for Black male hosts is 3.82 (p < .05 for a t-test comparing the expectations of Black and white male hosts). Finally, the average expectation score for Black female hosts is 3.96 (p < .1 for a t-test comparing the expectations of female and male Black hosts; p < .1 for a t-test comparing the expectations of Black and white female hosts). To better understand the patterns in our results, we ran OLS regression models predicting the average expectation score and each of its components (Appendix Table A10). The results indicate that participants have lower expectations of white female hosts than of white male hosts for cleanliness, accuracy, communication, and location. Additionally, participants expect Black male hosts to perform worse than white male hosts in communication, location, check-in, and value.
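As a reading aid, the four reported cell means can be translated into the coefficients of a race × gender model. The sketch below assumes a saturated specification with no other covariates, in which the coefficients are exact cell-mean contrasts; the models in Appendix Table A10 may include controls, so their estimates need not match these numbers. The function name is hypothetical.

```python
# Average expectation scores reported in the text (Experiment II).
CELL_MEANS = {
    ("white", "male"): 4.05,
    ("white", "female"): 3.83,
    ("Black", "male"): 3.82,
    ("Black", "female"): 3.96,
}


def saturated_coefficients(m):
    """Coefficients of y = b0 + b1*Black + b2*Female + b3*Black*Female
    implied by the four cell means (assumes no other covariates)."""
    b0 = m[("white", "male")]                         # reference cell
    b1 = m[("Black", "male")] - b0                    # Black vs. white, among men
    b2 = m[("white", "female")] - b0                  # female vs. male, among white hosts
    b3 = (m[("Black", "female")] - m[("Black", "male")]) - (m[("white", "female")] - b0)
    return b0, b1, b2, b3


print(tuple(round(b, 2) for b in saturated_coefficients(CELL_MEANS)))
# (4.05, -0.23, -0.22, 0.36)
```

The positive interaction term (0.36) expresses the pattern described next: the female penalty seen among white hosts reverses among Black hosts.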

Expectations of Black female hosts are higher than those of both white female hosts and Black male hosts across all dimensions except cleanliness. Interestingly, even for stereotypically feminine traits such as cleanliness, expectations of white female hosts were lower than those of white male hosts. This may be because vacation rentals are perceived more as businesses than as private homes. Relatedly, research has found that men’s wage advantages in the labor force tend to be similar across male-dominated, female-dominated, and gender-balanced jobs (Budig, 2002). Additionally, we find no significant differences in the expectations of Black hosts by participants’ race, and therefore no evidence of race-based in-group/out-group bias. This absence of in-group bias may be attributed to the internalization of stereotypes and biases (Brown et al., 2002). Because these regression models test multiple hypotheses simultaneously (that the coefficients are identical for all four types of hosts defined by gender and race), we verify the robustness of our results using the Romano and Wolf (2005, 2016) method to adjust p-values for multiple hypothesis testing.
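The core of the Romano–Wolf step-down adjustment can be sketched as below. This is a simplified illustration, not the authors’ implementation: it assumes the bootstrap t-statistics have already been computed (one row per bootstrap draw, centered at the original estimates), it uses the (count + 1)/(B + 1) p-value convention, which is one common choice, and the toy numbers are made up. Hypotheses are tested from most to least significant, each against the bootstrap distribution of the maximum |t| over the hypotheses not yet tested, and the adjusted p-values are forced to be non-decreasing.

```python
def romano_wolf_stepdown(t_obs, t_boot):
    """Step-down adjusted p-values from observed and bootstrap t-statistics.

    t_obs:  observed t-statistics, one per hypothesis.
    t_boot: bootstrap draws; each draw lists one t-statistic per hypothesis,
            assumed already centered at the original point estimates.
    """
    n_hyp = len(t_obs)
    n_boot = len(t_boot)
    # Visit hypotheses from the largest to the smallest observed |t|.
    order = sorted(range(n_hyp), key=lambda s: abs(t_obs[s]), reverse=True)
    adjusted = [0.0] * n_hyp
    running_max = 0.0
    for step, s in enumerate(order):
        remaining = order[step:]
        # Count draws whose max |t| over the remaining hypotheses reaches |t_s|.
        exceed = sum(
            1 for draw in t_boot
            if max(abs(draw[r]) for r in remaining) >= abs(t_obs[s])
        )
        p = (exceed + 1) / (n_boot + 1)
        # Monotonicity: an adjusted p-value never falls below earlier ones.
        running_max = max(running_max, p)
        adjusted[s] = running_max
    return adjusted


# Toy example: three hypotheses, four bootstrap draws (far too few in practice).
t_obs = [3.0, 1.0, 2.0]
t_boot = [
    [0.5, 2.5, 0.2],
    [1.0, 0.1, 2.1],
    [3.5, 0.3, 0.4],
    [0.2, 0.9, 0.6],
]
print(romano_wolf_stepdown(t_obs, t_boot))  # [0.4, 0.6, 0.6]
```

Compared with Bonferroni or Holm corrections, taking the maximum over jointly bootstrapped statistics lets the adjustment account for correlation among the tested coefficients.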

Discussion and Implications

Summary of Findings and Limitations

Our findings suggest that the reviews given on Airbnb, and by extension on other sharing economy platforms, are racially biased. We show that when hosts’ perceived race is salient (i.e., on Airbnb), Black hosts are penalized: they receive lower rating scores than they would have received for the exact same vacation rental if they were non-Black. Biased reputations are linked to biased expectations and generate economic disadvantages for Black hosts. Our results therefore contribute to the large body of literature documenting the challenges faced by Black people in their market interactions (Austin, 1994; McMillen & Singh, 2020; Avenancio-León & Howard, 2022; Brown, 2024).

Our research design allows us to provide novel market-based evidence of racial bias in evaluations on Airbnb’s online vacation rental platform. Our findings thus contribute to the literature on racial discrimination, which has predominantly relied on field experiments to establish causality (Bertrand & Duflo, 2017).

Our findings contribute to the burgeoning literature on reputation systems. Although reputation systems might reduce direct racial discrimination on online platforms by providing concrete information about specific users (Abrahao et al., 2017; Cui et al., 2020; Laouénan & Rathelot, 2022; Nunley et al., 2011; Tjaden et al., 2018), because reputations themselves are biased, they lead to systematic indirect discrimination based on race. Unlike direct discrimination, discrimination generated via reputation systems is more elusive to detect and, consequently, more challenging to regulate.

Our study has some important limitations. Most notably, we limit our analysis to properties that are cross-listed on the two platforms. Thus, the dataset includes only high-end vacation rentals whose hosts chose to cross-list them. Relatedly, our sample of vacation rentals is relatively small. In addition, we do not have data on differences in clientele between Airbnb and HomeAway. Finally, we have information only on hosts’ aggregated reputations, not on the specific ratings given by individual guests. We therefore cannot account for guests’ traits or for the dynamics of biased reputations.

Note also that the presence of host names on HomeAway may, in some cases, reveal the perceived race of hosts. To the extent that these names signal race, the effects observed in our main analysis are likely conservative, potentially underestimating the full impact of knowing the host’s race. Another limitation is that photographs may reveal not only race but also racial stereotypicality (e.g., hairstyles such as cornrows), which can conflate perceived race and class (Carbado & Gulati, 2003; Eberhardt et al., 2006). Finally, hosts who choose Airbnb may have more attractive photographs, or may be less concerned about imagery or bias, than those who choose to list only on platforms that do not require photographs (see Ravina, 2019). In this sense, the universe of hosts in our dataset may be selectively composed. Nonetheless, our analysis of this sample shows that, on average, the Airbnb premium relative to HomeAway is smaller for Black hosts than for non-Black hosts.
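The comparison in the last sentence can be illustrated with a within-property calculation: for each cross-listed property, subtract its HomeAway rating from its Airbnb rating, then compare the average premium for Black-hosted and non-Black-hosted properties. This is a simplified sketch, not the main analysis specification (which is not reproduced in this section): it assumes the two platforms’ ratings are on comparable scales, ignores covariates, and uses hypothetical field names and made-up ratings.

```python
from statistics import fmean


def airbnb_premium_gap(properties):
    """Mean Airbnb-minus-HomeAway rating premium for Black-hosted properties
    minus the same premium for non-Black-hosted properties.

    Each property is a dict with hypothetical keys:
      'black_host' (bool), 'airbnb' (float), 'homeaway' (float).
    """
    premium_black = [p["airbnb"] - p["homeaway"] for p in properties if p["black_host"]]
    premium_other = [p["airbnb"] - p["homeaway"] for p in properties if not p["black_host"]]
    return fmean(premium_black) - fmean(premium_other)


# Made-up ratings for four cross-listed properties.
listings = [
    {"black_host": True, "airbnb": 4.7, "homeaway": 4.8},
    {"black_host": True, "airbnb": 4.6, "homeaway": 4.6},
    {"black_host": False, "airbnb": 4.9, "homeaway": 4.7},
    {"black_host": False, "airbnb": 4.8, "homeaway": 4.7},
]
print(round(airbnb_premium_gap(listings), 3))  # -0.2
```

Differencing within each property removes everything about the rental itself that both platforms’ guests experience alike, which is why cross-listing helps isolate the effect of the host’s race being salient on Airbnb.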

Policy Guidelines

Our findings should alert platforms and policymakers who aim to improve the quality and accuracy of reputational information, so that reputations better reflect the actual quality of services and products and are less affected by biases and stereotypes. Many researchers who have identified problems with reputation systems, such as unreliability, have also proposed solutions and reforms. However, addressing stereotypically biased reputations requires navigating the challenges posed by consumer reviews that are systematically biased yet authentic.

One straightforward solution that concerned platforms might adopt to address the problem of inaccurate, unconsciously biased ratings would be to eliminate the rating system from online sharing economy platforms. This would prevent guests on Airbnb, for example, from providing biased ratings and prevent potential guests from relying on them. A similar proposal was raised in the context of taxicab tipping after research documented that passengers gave lower tips to Black taxi drivers (Ayres et al., 2004): prohibiting tipping and instead mandating a “tip included” regulation. Biased tipping resembles biased ratings in its often unconscious, unintended nature. Like ratings, tips result from evaluating services in retrospect, without awareness that stereotypes and cultural beliefs might bias perceived experiences. Nonetheless, eliminating rating systems from sharing economy platforms altogether would decrease trust in the platforms and might substantially harm guests’ experiences and their ability to choose vacation rentals that meet their needs.

Concerned platforms might instead adopt masking policies, decrease the salience of demographic information (e.g., by using smaller photos), or disclose the race of hosts only after expectations have formed (e.g., only after a reservation is made on Airbnb or upon arrival at the vacation rental). Because evaluations can be racially biased only if the perceived race of the host is known, masking the race of Airbnb hosts (and, by extension, of users of all sharing economy platforms) would reduce racial bias in the evaluations hosts receive. However, it is unclear whether revealing hosts’ perceived race only after expectations have formed, for example upon arrival at the vacation rental, would reduce racial bias.

Studies of how masking individual information at the early stages of an interaction affects hiring discrimination have produced mixed results (Åslund & Skans, 2012; Bøg & Kranendonk, 2011; Goldin & Rouse, 2000; Lumb & Vail, 2000). In one seminal study, female musicians who performed in ‘blind’ auditions (behind a screen) were more likely to pass the audition and be hired than female candidates who performed in full view (Goldin & Rouse, 2000). In a study conducted in Sweden (Åslund & Skans, 2012), anonymous applications eliminated the effects of both ethnic and gender bias on the probability of being invited to an interview; at the final hiring stage, however, the positive effects of masking persisted only for gender, not for ethnicity. Similarly, in a study conducted in the Netherlands (Bøg & Kranendonk, 2011), masking ethnicity increased the probability of being invited to an interview but had no effect on final hiring decisions. Thus, while masking demographic information may offer a partial solution, the mixed results of prior studies suggest that it may not fully eliminate discriminatory outcomes. Further research is needed on how delaying the disclosure of demographic information on platforms like Airbnb, or decreasing its salience, affects racial bias in reputations.

An additional measure that concerned platforms might adopt is to empirically evaluate the potential for bias in each evaluation question clients are asked to answer, as well as the weight the platform assigns to each question when calculating the final rating score. The results of Experiment I suggest that, in the context of vacation accommodations, racial bias in ratings is driven by the cleanliness and value components, consistent with racially biased perceptions of cleanliness. In other words, if Airbnb did not specifically ask guests to rate the cleanliness of rentals, or if it assigned this measure a lower weight, bias would likely decrease. Relatedly, algorithms can be designed to recognize and address biased ratings. For example, if Airbnb notices that Black hosts consistently receive lower ratings from certain guests, even when other guests rate them highly, the platform could remove those outlier ratings or give them less weight when calculating host reputations.
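Both design levers can be sketched in a few lines. This is a hypothetical illustration, not Airbnb’s algorithm or a method proposed by the authors: `composite_score` lets a platform lower the weight of a component such as cleanliness, and `robust_mean` down-weights ratings that sit far from the host’s median rating, flagging them with a median-absolute-deviation (MAD) rule, a generic robust-statistics heuristic. The weights, the threshold `k`, and `floor_weight` are assumptions. Note that such a rule cannot by itself tell a biased rating from a genuinely bad stay.

```python
from statistics import median


def composite_score(components, weights):
    """Weighted average of component ratings under a hypothetical weighting scheme."""
    total = sum(weights[name] for name in components)
    return sum(components[name] * weights[name] for name in components) / total


def robust_mean(ratings, k=2.0, floor_weight=0.25):
    """Mean rating in which outlying ratings receive reduced weight.

    A rating more than k median absolute deviations (MAD) from the median
    gets floor_weight instead of 1. If MAD is 0, all ratings keep weight 1.
    """
    med = median(ratings)
    mad = median([abs(r - med) for r in ratings])
    weights = [
        floor_weight if mad > 0 and abs(r - med) > k * mad else 1.0
        for r in ratings
    ]
    return sum(w * r for w, r in zip(weights, ratings)) / sum(weights)


stay = {"cleanliness": 3.0, "value": 4.0, "location": 5.0}
equal = {"cleanliness": 1.0, "value": 1.0, "location": 1.0}
reduced = {"cleanliness": 0.5, "value": 1.0, "location": 1.0}
print(composite_score(stay, equal), composite_score(stay, reduced))  # 4.0 4.2

host_ratings = [5.0, 4.5, 4.5, 4.0, 1.0]
print(round(robust_mean(host_ratings), 3))  # 4.294, versus a plain mean of 3.8
```

Any such rule would itself need the routine empirical evaluation recommended in the next paragraph, since down-weighting could also mute legitimate complaints.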

Importantly, since various features of platform design may influence the salience of demographic characteristics and the activation of biases, platforms should routinely and empirically assess how different features affect biased reputations.

Airbnb hosts, as well as other service providers on sharing economy platforms who are systematically disadvantaged by biased rating systems, may seek remedies by filing discrimination claims under existing anti-discrimination laws against the platforms that operate these rating systems and solicit reviews.

Whereas our study documents discrimination against hosts by guests, facilitated by Airbnb, anti-discrimination laws typically address discrimination against customers by firms rather than discrimination by customers. An exception to this general tendency is Section 1981 of the Civil Rights Act of 1866, which protects both contracting parties from discrimination in the making and enforcing of contracts. Bartlett & Gulati (2016) highlight privacy- and autonomy-related concerns associated with regulating discrimination by individual customers and raise efficacy-related considerations, arguing that banning discrimination by customers would infringe on customers’ basic liberties, potentially generating resentment, and would likely be ineffective. Rather than banning discrimination by customers, they propose an intervention focused on firms such as Airbnb: a duty on firms to refrain from facilitating discrimination by customers on their platforms and to design customer choices in ways that reduce discriminatory biases. In the case of Airbnb, this would imply a duty to refrain from requiring hosts to upload their photos and from displaying hosts’ photos unless the platform finds a way to effectively address biased ratings. It might also imply that, even when photos are not displayed but guests still meet their hosts in person, the platform would be required to refrain from displaying hosts’ ratings until it implements solutions to counteract guests’ biases. The measures discussed above, which platforms might adopt to reduce discrimination generated by biased user ratings, suggest that platforms like Airbnb are well equipped to influence customer behavior and mitigate discrimination against hosts through policy and design changes.

Evaluating the application and possible interpretation of existing anti-discrimination law concerning biased ratings is beyond the scope of this article. Still, we briefly outline some potential solutions and associated hurdles. Until legislation directly prohibiting platforms from facilitating discrimination by their customers is adopted (Bartlett & Gulati, 2016), courts might interpret existing statutes, such as Section 1981 of the Civil Rights Act of 1866 (Section 1981), more broadly to address claims by hosts against platforms involving biased reputations. Section 1981 prohibits racial discrimination in the ‘making and enforcing’ of contracts. As amended by the Civil Rights Act of 1991, Section 1981 defines this right to include the ‘enjoyment of all benefits, privileges, terms, and conditions of the contractual relationship.’ Nonetheless, claims under Section 1981 would apply only to racial discrimination and not to other forms of bias, such as bias based on gender or sexual orientation. Such claims would also face hurdles regarding the causation standard and the intent requirement, because biased reputation systems typically involve disparate impact rather than intent, and because it is difficult to disentangle implicit biases from genuine, accurate evaluations of experiences.

In summary, addressing the racial biases inherent in reputation and rating systems will require concerted efforts from platforms and policymakers. While solutions such as eliminating ratings or masking demographic information may offer partial relief, they come with trade-offs that could undermine trust in the platform or reduce the ability of consumers to make informed decisions. Moving forward, platforms should focus on empirically testing the impact of different design features to ensure fairer outcomes.

Implications for Private Ordering

By highlighting the potential costs of relying on reputations, our results raise concerns that other arenas of market interaction beyond the sharing economy, which are regulated by reputations either alongside formal law or without reliance on it, might also be susceptible to bias and discrimination.

A vast body of literature on relational contracts suggests that a party’s reputation acts as a significant constraint on opportunistic behavior, with parties keenly aware of the long-term reputational costs of exploiting contractual loopholes (Ellickson, 1991; Allen & Dean, 1992; Bernstein, 1992; Greif, 2005). In a groundbreaking study, Stewart Macaulay showed how businesses frequently avoid strict legalistic adherence to contracts, especially in long-term relationships (Macaulay, 2018). Companies often refrain from pursuing breach-of-contract claims, as maintaining a good reputation and fostering future business prospects are more important than winning short-term legal battles. Although relational theory initially focused on complex, high-stakes contracts between sophisticated parties, it has since been applied to consumer contracts. The argument is that reputation also serves as a constraint on opportunism, as firms are aware that enforcing low-quality terms could harm their long-term reputation (Gillette, 2004; Bebchuk & Posner, 2005).

Another branch of this literature focuses on specific communities (e.g., diamond merchants and Midwestern farmers) where reputations work better as market regulators than the threat of legal sanctions. In such often homogeneous and close-knit communities, the threat of reputational sanctions and the concomitant loss of future business lead parties to keep their promises and ethically cooperate with each other (Ellickson, 1991; Allen & Dean, 1992; Bernstein, 1992).

These market phenomena underscore the importance of accurate reputational information. Yet, scholars argue that reputations are not always reliable, that consumers are not very good at assessing reputation, and that reputation owners have an incentive to impede unfavorable (but truthful) reputations (Heymann, 2011; Arbel, 2019). Our findings point to a related but slightly different direction: not only are reputations unreliable, but they also tend to be systematically biased and framed by stereotypes and cultural beliefs about race and gender. As a result, when people use them, disadvantages follow.

Our findings indicate that reputation systems, although designed to foster trust and facilitate transactions, can inadvertently perpetuate systemic, indirect discrimination based on race. They illustrate the dynamics of inequality, highlighting how contractual interactions between hosts and guests both reflect and perpetuate inequality. These interactions are framed by stereotypes and cultural beliefs about race, which shape guests’ evaluations of their vacation experiences. Consequently, guests provide authentic yet biased ratings, further influencing other guests’ likelihood of interacting with hosts and leading to economic penalties for Black hosts.

Supplemental Material


Supplemental Material for Biased Reputations: Using Cross-Listed Properties to Identify the Negative Effects of Perceived Race on Users’ Reputations on Airbnb by Tamar Kricheli-Katz and Tali Regev in Journal of Law & Empirical Analysis

Footnotes

Acknowledgments

For very helpful comments we thank Ian Ayres, Jonathan Masur, Nadav Levi, Yaniv Yedid-Levi and participants at the 2022 Penn-NYU Empirical Contracts Workshop, the 2022 Annual Conference on Empirical Legal Studies (CELS) and the 2022 European Labor Economics Association Conference. We also thank Tom Tzur, Haggai Porat and Danielle Loiter for excellent research assistance. The reputation experiment was preregistered on the OSF website.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.


