Abstract

Navigating ambiguity is increasingly recognized as a critical competency necessary for thriving in a complex world and for shaping futures. This paper introduces the d.school Ambiguity Navigation Instrument (DANI), a curriculum-aligned assessment tool that measures learners’ attitudes toward and strategies for navigating ambiguity. Unlike existing instruments that frame ambiguity as an inherently negative condition to be merely tolerated and an individual’s relationship to ambiguity as a stable psycho-social trait, DANI conceptualizes navigating ambiguity neutrally, and as a learnable skill, composed of two distinct factors: Navigating Ambiguity Attitude (NA Attitude) and Navigating Ambiguity Actions (NA Actions). A validation study at two universities demonstrates DANI’s effectiveness in detecting changes in students’ relationship with ambiguity following design-focused educational experiences. The instrument provides educators with a reliable method to assess curriculum effectiveness and offers students a starting point for reflection on their approach to ambiguity. DANI contributes to design education assessment, with potential applications beyond design, to support learning environments and experiences from all disciplines that better prepare students for the inherent ambiguity of the world.

Introduction

We are living in a world in which technology and its ripple effects are evolving faster than we can keep up with. Information and tools that were previously only accessible to experts are now more broadly available. This creates amazing opportunities, but it also raises an important question: how do we prepare the next generations to not only thrive in a future we can’t predict, but also to be bold shapers of the future? This question lies at the heart of our mission as educators.

Our current education system was built for a world in which careers followed straight lines and knowledge stayed put. That world doesn’t exist anymore. Today’s students need to develop the cognitive flexibility, ethical reasoning, and creative problem-framing skills required to take on wicked problems, 1 explore uncharted territory, and creatively envision and shape preferable futures. How might we reimagine learning environments and experiences so that they cultivate not only technical competence but also the capacity to navigate ambiguity—perhaps the most essential skill for those who will boldly architect the world of tomorrow?

Learning experiences often shield students from the complexity and ambiguity of the world outside of the walls of the classroom. And in doing so they foster skills, mindsets, and biases—such as the need for closure, rigid thinking, and rejection of the unusual and different—that are antithetical to what students need to thrive in the world.

In contrast, many studies showcase how pedagogies that preserve ambiguity—at different stages of development, from early childhood to university—can foster productive mindsets and skills (Bonawitz et al., 2011; Deslauriers et al., 2019; Kapur & Bielaczyc, 2012; Yadav et al., 2011). In our work as design 2 educators, we strive to intentionally embed different levels of ambiguity in the learning experiences we create for our students, and have explicitly and implicitly incorporated navigating ambiguity as a key learning outcome.

Navigating ambiguity manifests in two critical dimensions: interpreting what is and envisioning what could be. When interpreting what is, students learn to make sense of incomplete information, recognize patterns in complexity, and extract meaningful insights from their observations and experiences. They develop comfort with plurality—understanding that multiple interpretations can coexist without requiring immediate resolution. When envisioning what could be, students cultivate the ability to generate multiple possibilities, to hold competing ideas in productive tension, and to prototype potential futures without premature commitment. This dual capacity—to both analyze the present with nuance and imagine alternative futures with creativity—forms the essence of navigating ambiguity as a competence.

When we looked for ways to assess how students develop the competence of navigating ambiguity in our classes, we found a variety of instruments designed to measure individuals’ responses to ambiguity (Budner, 1962; Ellsberg, 1961; Frenkel-Brunswik, 1949 3 ; Furnham & Marks, 2013 4 ; Lauriola et al., 2016; MacDonald, 1970; McLain, 1993, 2009; Norton, 1975; Rubiales-Núñez et al., 2024 5 ; Rydell & Rosen, 1966; Xu & Tracey, 2015). However, we found two main shortcomings with these instruments:

(1) They frame an individual’s relationship to ambiguity as a stable psycho-social trait rather than a set of attitudes and skills that can be learned and developed.

(2) They aim at measuring “ambiguity tolerance,” which positions ambiguity as an inherently negative condition that—at best—can be tolerated.

On the first shortcoming, tolerance of ambiguity might in fact be malleable (Endres et al., 2015). Furthermore, we believe that both of these characteristics of the existing instruments may bias how learners interpret the results and reinforce a fixed mindset, that is, the belief that their ability to navigate ambiguity is a trait they can’t change. In doing so, these instruments fail to capture and convey the possibilities that can be harvested from embracing ambiguous situations, such as the development of nuanced interpretations from data and experiences, the generation of multiple ideas to address a problem, and the ability to envision a multiplicity of futures.

Our Objective

In light of the limitations of available instruments to assess the ability to navigate ambiguity, we have developed a new instrument called the d.school Ambiguity Navigation Instrument (DANI) that reliably measures learners’ attitude toward, and understanding of, strategies for taking action when faced with ambiguity. DANI illuminates the attitudinal and behavioral dimensions of navigating ambiguity without implying a negative valence for ambiguity. We used the design abilities shown in Figure 1 as the starting point for developing the instrument, as described below. Furthermore, we sought to create a tool that could be used to support teaching and curriculum development in design-oriented courses and that also has the potential to be used across multiple domains.

Figure 1. Navigating ambiguity as a meta-ability supported by other design abilities.

Instrument Development

Method

During instrument development (Pilot Study), we reviewed design publications related to Navigating Ambiguity and other design abilities (Carter, 2016; Carter & Doorley, 2018; d.school, 2019; Small & Schmutte, 2022) and research related to attitudes toward ambiguity (cited above). We conducted interviews with design educators to understand their conceptualizations of design abilities in practice. In the interviews, educators shared their definition and understanding of design abilities, perceptions of the knowledge and attitudes students need in order to navigate ambiguity effectively, how they teach navigating ambiguity, and the potential use and value of an instrument to measure it. Following the interviews, we conducted two meetings with educators to share a synthesis of our findings and to develop a conceptual framework for the instrument. A subset of these educators also participated in two rounds of item creation. This iterative process ensured strong face validity 6 of DANI items.

The pilot version of the instrument included 52 assessment items. Assessment items were designed using three types of prompts commonly found in psychometric instruments: I feel, I think, I do. In drafting items, we deliberately omitted the word “design,” referring instead to “problem-solving” or “challenges.” Likewise, we avoided terms such as “brainstorming” and similar jargon, in favor of plain language, such as “generating ideas.” This ensured that the instrument would be effective for those without prior design experience. The pilot version also included nine demographic items and three open-ended prompts (one in each section) that enabled respondents to identify items that were confusing and provide feedback about their experience answering the questionnaire.

The instrument was piloted over a 2-month period with a range of learners from the United States and abroad. To ensure that the instrument would be effective for those without prior design experience, we targeted students outside of our design classes. Detailed demographics of pilot respondents are summarized in Appendix A (Table A1), which shows a range of experience with design.

In the process of co-creation of the initial 52 pilot items with the design educators, only items that related to five of the initial set of eight design abilities were included: Navigate Ambiguity, Learn from Others, Synthesize Information, Experiment Rapidly, and Move between Concrete and Abstract. The three design abilities not included (Communicate Deliberately, Design Your Design Work, and Build and Craft Intentionally) meaningfully overlapped with other design abilities to the extent that they could not be isolated. In some cases, items were removed because they could not be framed without referring to “design” explicitly, which would have limited the instrument’s value only to those with prior design experience.

Pilot Study Results

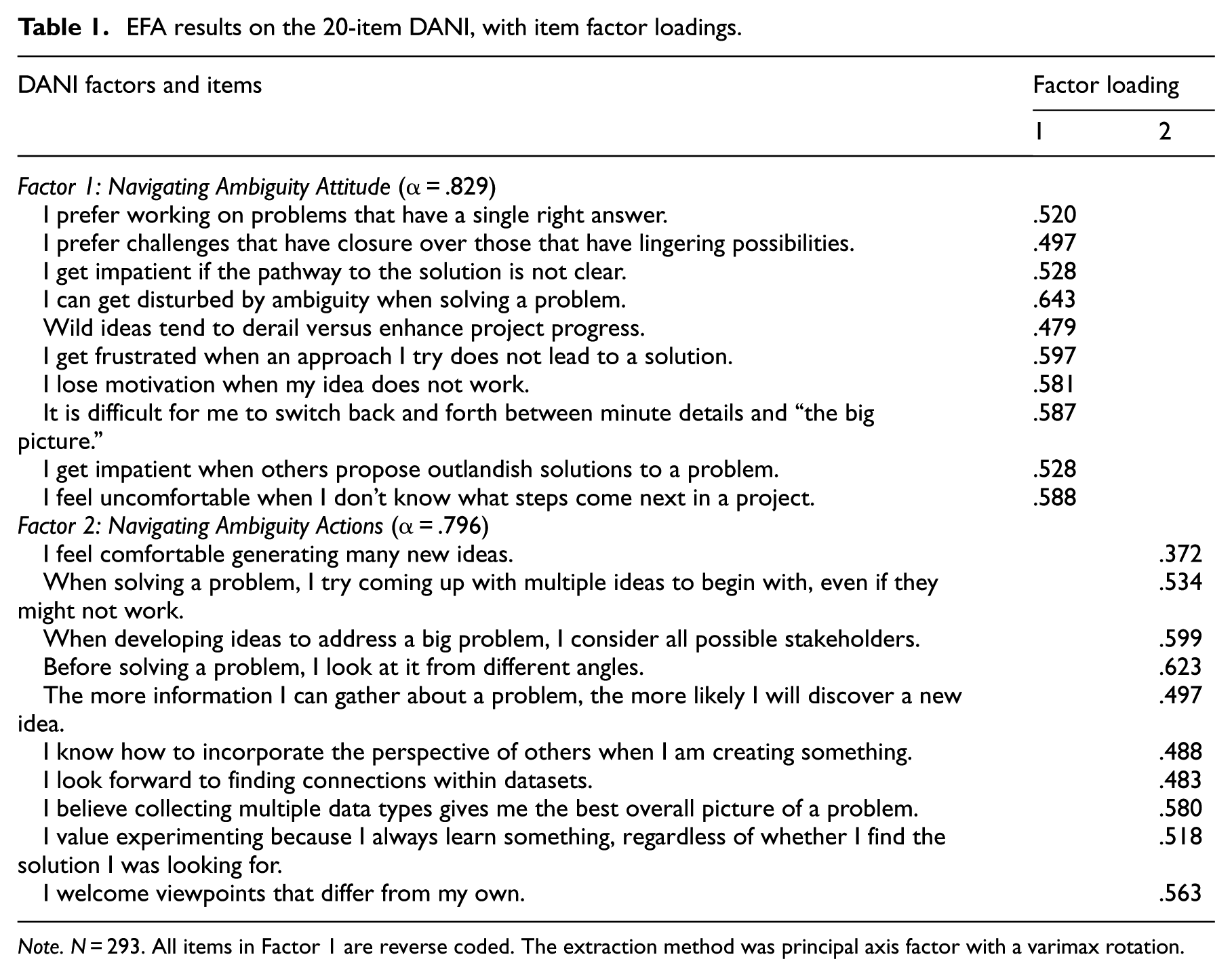

A total of 292 responses were collected, which exceeds the minimum recommendation of 100 for item-level statistical power (Kline, 1994). Item Response Theory (IRT) analyses identified the items that best distinguished respondents from one another. Open-ended comments from the pilot survey identified items that were confusing. Exploratory Factor Analysis (EFA) 7 was conducted to determine the best grouping of items. By these means, we were able to reduce the instrument from 52 to 20 assessment items, clustered into two factors, with each factor comprising 10 items. The two factors, Navigating Ambiguity Attitude (NA Attitude) and Navigating Ambiguity Actions (NA Actions), are described below. No factor solution grouped responses by specific design abilities, suggesting that these design abilities are interrelated. Although they can be taught discretely, in practice they operate in an interdependent (and probably iterative) manner.

Repeated factor analyses based on the 20 items confirmed the efficacy of the 20-item instrument. Cronbach’s alpha was α = .829 for NA Attitude and α = .796 for NA Actions. The two factors correlated only weakly, at approximately r = .238 (p < .001), indicating that the two constructs are related but provide different and meaningful explanatory value. Table 1 summarizes construct reliability and item-to-factor correlations for each factor.
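For readers who want to reproduce a reliability estimate of this kind, Cronbach’s alpha can be computed from a respondents-by-items score matrix. The following is a minimal sketch in Python, not the authors’ analysis code. The function name `cronbach_alpha` and the sample data are illustrative assumptions, and items are assumed to be reverse coded already where required.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Return Cronbach's alpha for a list of respondents' item scores.

    `responses` is a list of rows; each row holds one respondent's numeric
    scores on the k items of a single factor (already reverse coded if needed).
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(row) != k for row in responses):
        raise ValueError("every respondent must answer the same k items")

    # Sample variance of each item (column) and of the summed scale score.
    item_variances = [variance(column) for column in zip(*responses)]
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")

    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: four respondents, three items (not from the study).
sample = [[2, 3, 3], [4, 4, 5], [1, 2, 2], [5, 4, 4]]
alpha = cronbach_alpha(sample)
```

In practice each factor would be passed separately, with one row of 10 item scores per respondent.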

Table 1. EFA results on the 20-item DANI, with item factor loadings.

Note. N = 293. All items in Factor 1 are reverse coded. The extraction method was principal axis factor with a varimax rotation.

As mentioned above, the EFA resulted in a two-factor solution, meaning that items clustered into two distinct groupings. One group of 10 items consisted predominantly of statements that included the phrase “I feel.” We labeled this factor NA Attitude. The second group of 10 items consisted predominantly of statements that included the phrases “I think” or “I do.” We labeled this factor NA Actions. NA Attitude assesses a student’s attitude toward navigating ambiguity. Nearly all items in that construct relate to the Navigating Ambiguity design ability, but a few relate to Learning from Others, Synthesizing Information, and Moving between Concrete and Abstract. NA Actions assesses a student’s proclivity to engage in actions related to creative problem-solving. This construct includes items related to Experimenting Rapidly, Learning from Others, and Synthesizing Information (Powers et al., 2022).

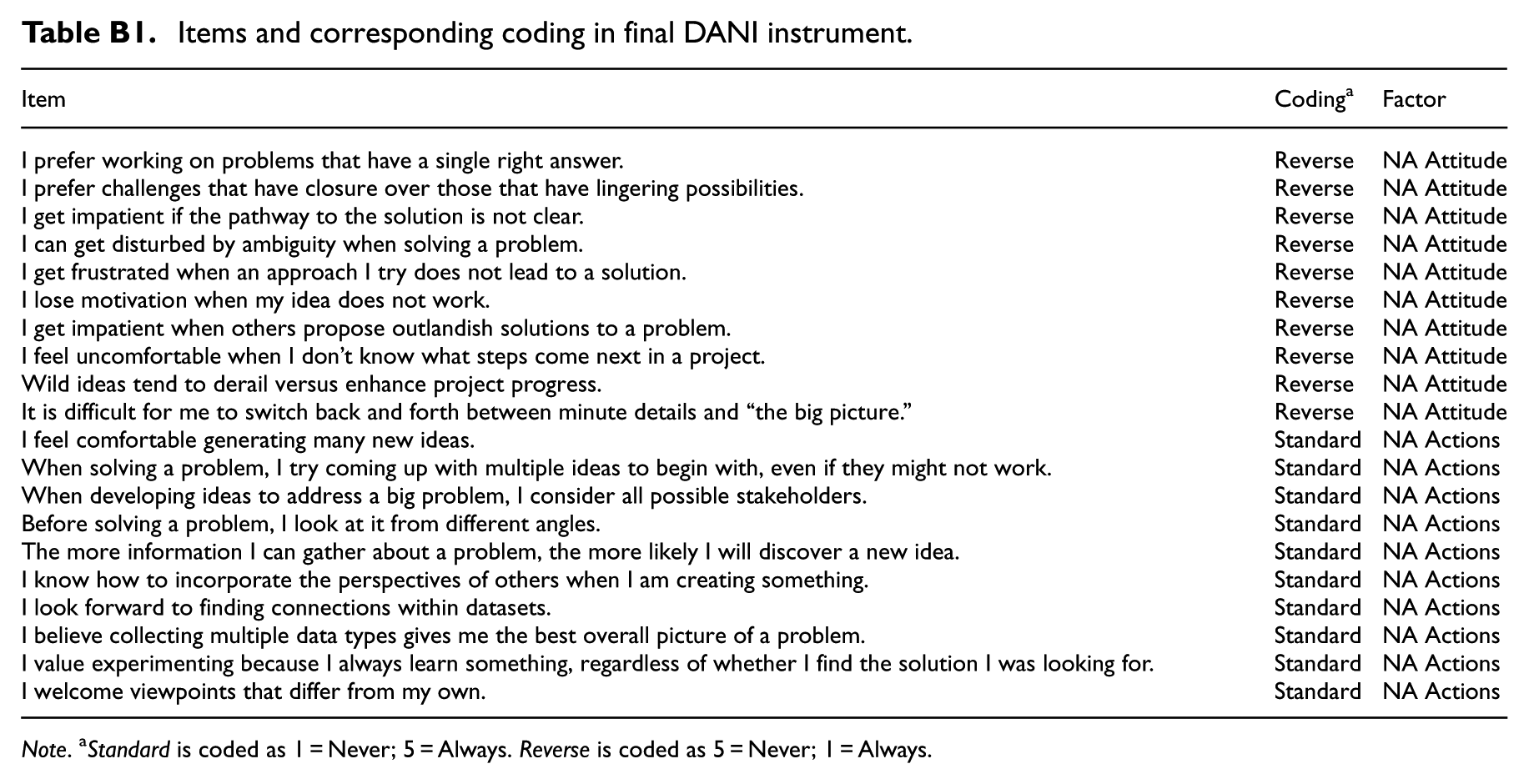

Description of the Final Instrument

DANI is a 20-item, self-report questionnaire (see instrument items in Appendix B, Table B1). All items have the same response options along a five-point Likert scale: Never, Rarely, Sometimes, Often, Always. The survey takes approximately 5 minutes to complete. The survey measures two constructs: Navigating Ambiguity Attitude (NA Attitude) and Navigating Ambiguity Actions (NA Actions). A complete assessment yields three scores: (1) NA Attitude, (2) NA Actions, and (3) NA Total Score.

NA Attitude provides a snapshot of how comfortable individuals feel solving ambiguous problems. The items reflect frustration or discomfort with open-ended projects with unspecified outcomes and/or without well-defined procedures. Those who score high on this factor are hypothesized to not get discouraged or impatient with such challenges. They may be more likely to understand both the big picture and small details of a project, or they might enjoy the challenges that come with exploring multiple solutions to a problem. A low score on NA Attitude items could indicate someone who is goal-oriented and/or prefers to follow rules or specific steps.

NA Actions reflects the behaviors individuals might exhibit or strategies they might use when engaging in ambiguous projects. Those who score high on this factor are likely to engage in strategies and behaviors that promote problem solving, making connections, or generating ideas. Someone with a low score on NA Actions likely struggles with some of the actions or strategies that facilitate completion of ambiguous tasks.

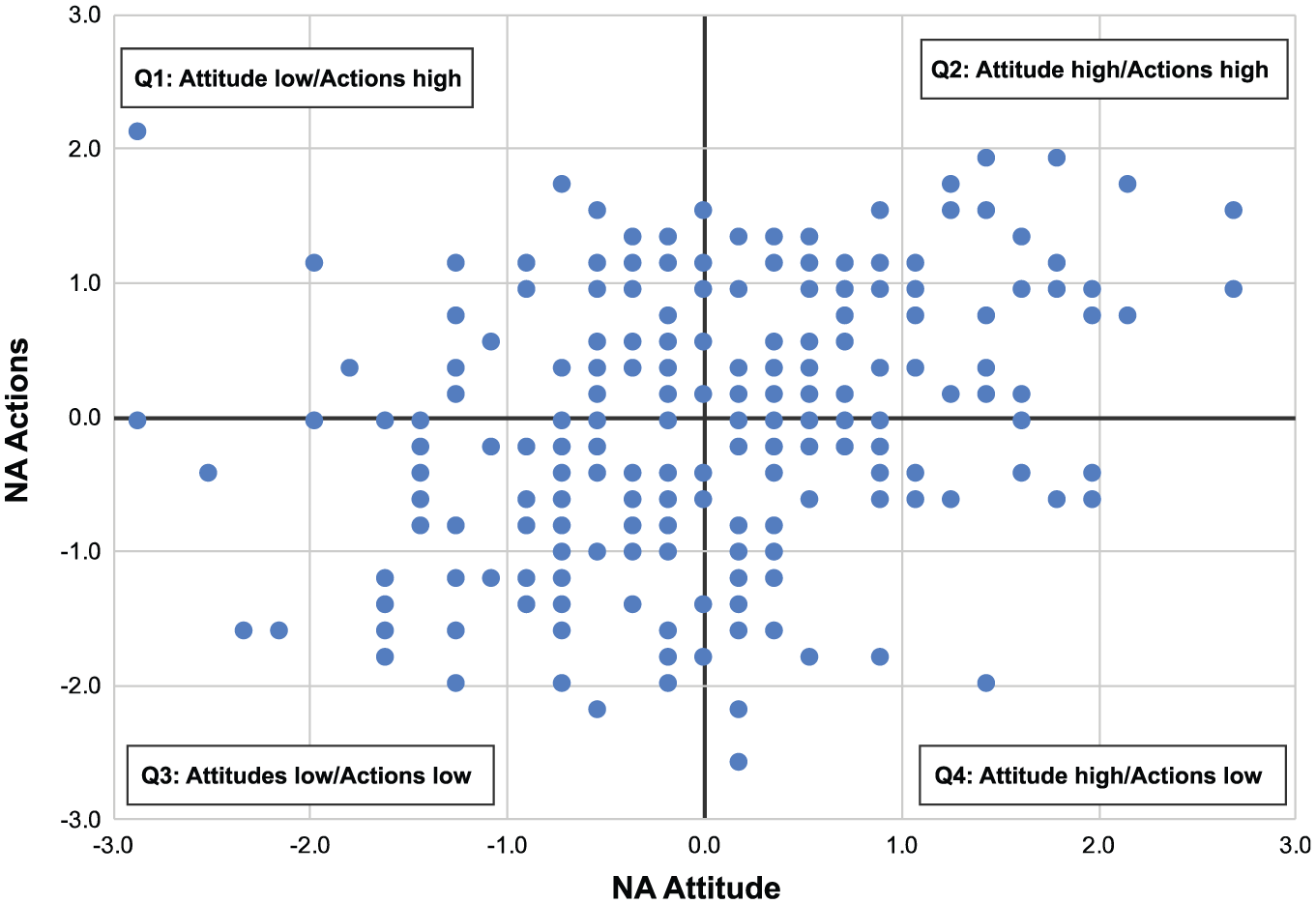

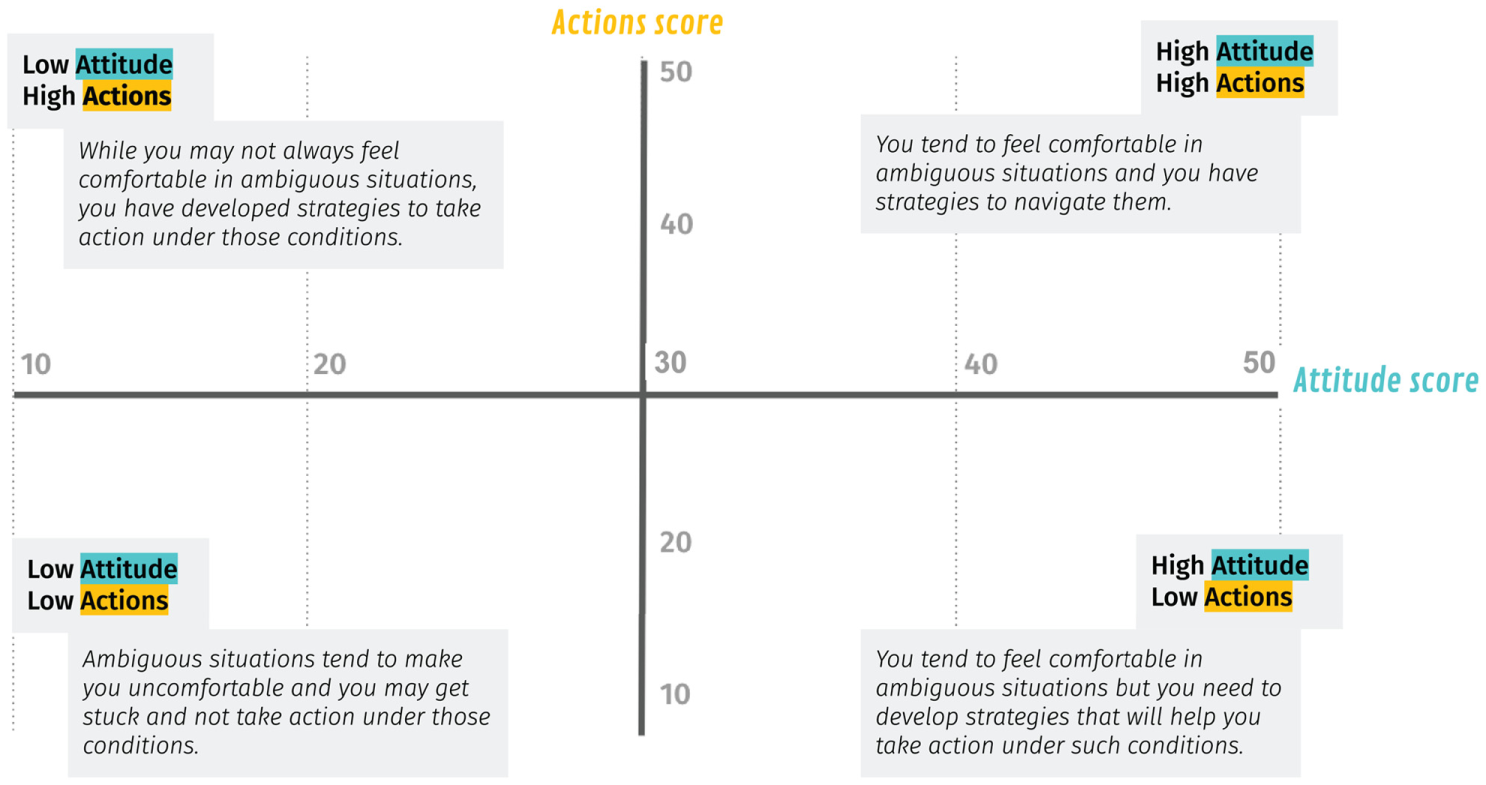

The 10 items comprising the NA Attitude factor are reverse coded (Never = 5; Always = 1). Summing the 10 items yields an NA Attitude score, with a higher score indicating someone who has a positive attitude or feels more comfortable with ambiguous projects or situations. All 10 items that comprise the NA Actions factor are coded normally (Never = 1; Always = 5). Summing the 10 items yields the NA Actions score. A higher score reflects a propensity to use a broader range of effective behaviors and strategies when facing an ambiguous challenge. See Figure 2 for the distribution of scores from the pilot study.

Figure 2. Distribution of pilot study scores, followed by interpretation of each quadrant.

NA Total Score is derived by summing scores on NA Attitude and NA Actions. As long as all NA Attitude items are reverse scored, higher scores always mean greater propensity and lower scores indicate lesser propensity. Although it is expected that a higher score reflects increased ability and vice versa, this cannot be known until criterion validity is assessed. At this stage of development, scores reflect students’ likelihood of successfully engaging in ambiguous challenges.
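The scoring rules described above can be sketched in a few lines of Python. This is an illustrative sketch rather than an official scoring script. The assignment of items to factors is given in Appendix B, so the hypothetical function `score_dani` simply assumes the caller has already split a respondent’s answers into the 10 NA Attitude items and the 10 NA Actions items.

```python
# Response options on the five-point scale, coded normally (Never = 1).
LIKERT = {"Never": 1, "Rarely": 2, "Sometimes": 3, "Often": 4, "Always": 5}


def score_dani(attitude_answers, actions_answers):
    """Return NA Attitude, NA Actions, and NA Total for one respondent.

    Both arguments are lists of 10 response labels. Which questionnaire
    items belong to which list is assumed to follow Appendix B.
    """
    if len(attitude_answers) != 10 or len(actions_answers) != 10:
        raise ValueError("each factor needs exactly 10 answers")

    # NA Attitude items are reverse coded: Never = 5 ... Always = 1.
    na_attitude = sum(6 - LIKERT[answer] for answer in attitude_answers)
    # NA Actions items are coded normally: Never = 1 ... Always = 5.
    na_actions = sum(LIKERT[answer] for answer in actions_answers)

    return {
        "na_attitude": na_attitude,
        "na_actions": na_actions,
        "na_total": na_attitude + na_actions,
    }


# Hypothetical respondent: never uncomfortable, always uses the strategies.
best_case = score_dani(["Never"] * 10, ["Always"] * 10)
```

Each factor score therefore ranges from 10 to 50, and the NA Total Score from 20 to 100.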

The following interpretations of scores correspond to each of the quadrants (Q1-Q4) in Figure 2:

Attitude low/actions high (Q1): Indicates propensity to consider a range of strategies and behaviors in tackling ambiguous projects, but respondents may be discouraged, frustrated, or uncomfortable doing so.

Attitude high/actions high (Q2): Scores suggest comfort with open-ended projects and decreased likelihood of a respondent getting discouraged when steps are unknown. Respondent is likely to consider a range of strategies and behaviors in seeking solutions to complex challenges.

Attitude low/actions low (Q3): Respondent likely feels frustrated or uncomfortable engaging in ambiguous, open-ended projects, and may not be equipped with strategies and behaviors that would produce effective results.

Attitude high/actions low (Q4): Respondent reflects confidence about navigating ambiguity but may lack the strategies to do so effectively.
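The quadrant interpretations above amount to comparing each factor score with a cut-score. A minimal Python sketch follows. The function name `classify_quadrant` is hypothetical, the cut-scores must be supplied by the caller (the values used in the example are placeholders, not the recommended cut-scores), and treating a score equal to the cut-score as “high” is an assumption.

```python
def classify_quadrant(na_attitude, na_actions, attitude_cut, actions_cut):
    """Map a pair of factor scores to a quadrant label (Q1 to Q4).

    A score at or above its cut-score counts as "high"; this tie rule is
    an assumption, not something specified by the instrument.
    """
    attitude_high = na_attitude >= attitude_cut
    actions_high = na_actions >= actions_cut

    if not attitude_high and actions_high:
        return "Q1"  # attitude low / actions high
    if attitude_high and actions_high:
        return "Q2"  # attitude high / actions high
    if not attitude_high and not actions_high:
        return "Q3"  # attitude low / actions low
    return "Q4"      # attitude high / actions low


# Placeholder cut-scores of 30 on each factor, for illustration only.
example = classify_quadrant(na_attitude=22, na_actions=41,
                            attitude_cut=30, actions_cut=30)
```

Because individual scores carry a wide margin of error, a label produced this way is better read as a prompt for reflection than as a diagnosis.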

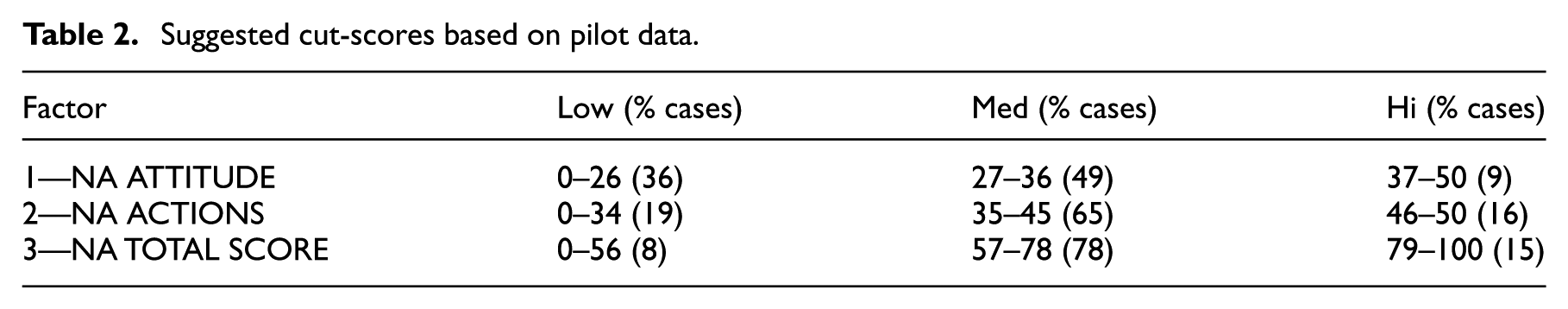

Based on our analyses of pilot version scores, we recommend the cut-scores in Table 2 as a starting point for interpreting respondents’ results. With increased use of the instrument, cut-scores can be more accurately aligned with instructional outcomes.

Table 2. Suggested cut-scores based on pilot data.

Instrument Validation

Method

One year after DANI was designed and piloted, a validation study was conducted to assess the extent to which DANI aligned with, and effectively addressed, design courses’ curriculum and instruction (Chen et al., 2023). The validation study focused on construct validity, which refers to the extent to which NA Attitude and NA Actions, as operationalized by DANI, align with how they are conceptualized, taught, and assessed in courses based on design abilities.

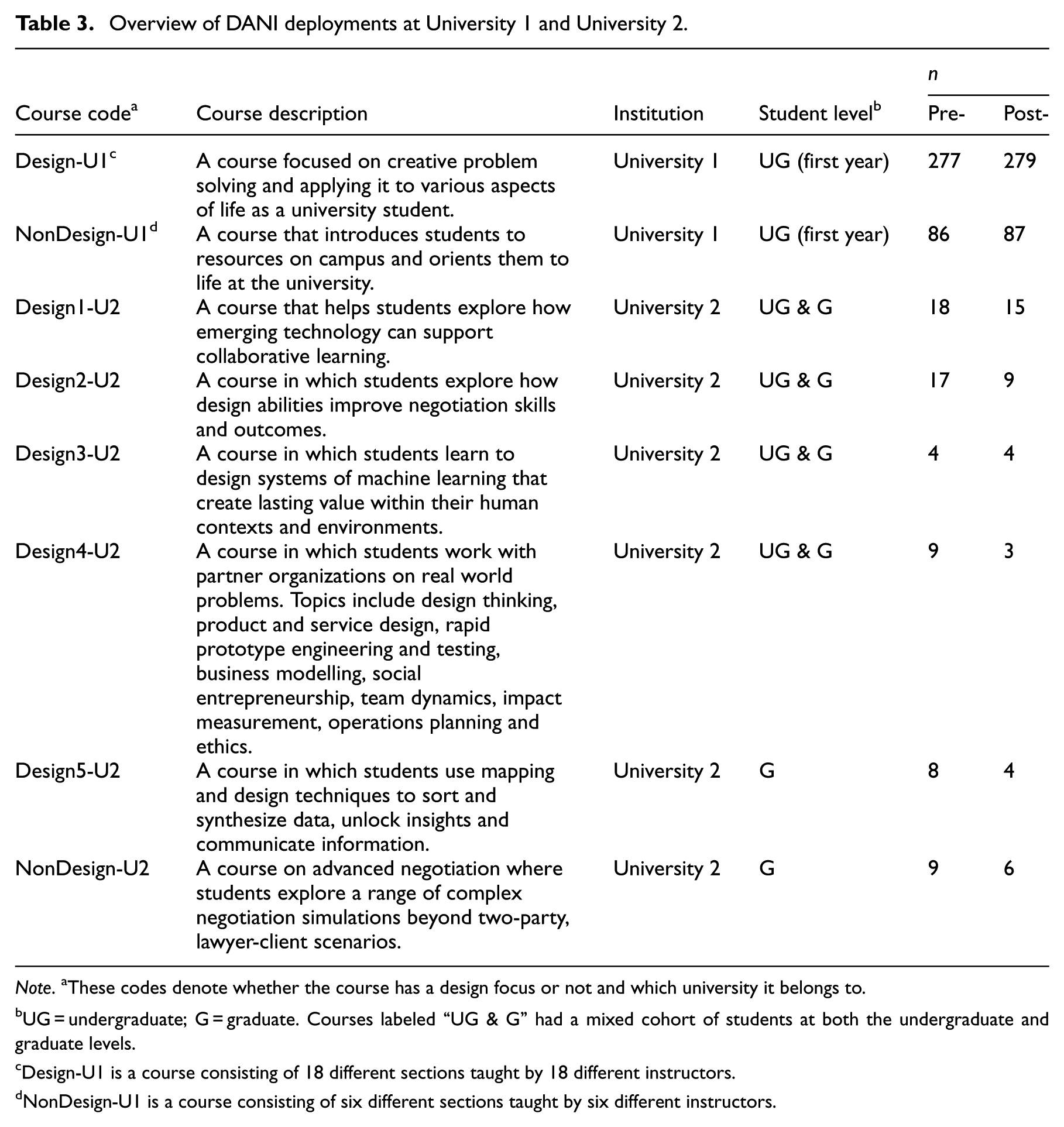

DANI was deployed in two courses at a large, public, U.S. research university (University 1) and six courses at a large, private, U.S. research university (University 2), in the first week and last week of each course (hereafter referred to as pre- and post-course). Each of the two courses at University 1 consisted of multiple sections, with each section taught by a different instructor. Students from University 1 were required to complete the survey. Responding to the survey was voluntary for students from University 2. At both universities, students were introduced to the 20-item questionnaire as a “reflective survey” and were informed that their responses were anonymous and would not impact their grades. Course instructors did not have access to student responses. Table 3 provides an overview of the institutions, course titles, design focus, student population, and the number of pre-/post-responses. The demographics of the validation study’s respondents are shown in Appendix A, Tables A2 to A4.

Table 3. Overview of DANI deployments at University 1 and University 2.

Note. ᵃ These codes denote whether the course has a design focus or not and which university it belongs to.

UG = undergraduate; G = graduate. Courses labeled “UG & G” had a mixed cohort of students at both the undergraduate and graduate levels.

Design-U1 is a course consisting of 18 different sections taught by 18 different instructors.

NonDesign-U1 is a course consisting of six different sections taught by six different instructors.

Additionally, lead course instructors at University 1 and University 2 were asked to determine the extent to which their courses covered various design abilities, through a curriculum alignment form, which was administered twice. The first version of this form was a collaborative document that included two closed-ended and two open-ended response questions. Responses to the first administration were often unspecific and difficult to interpret, so we created a second version, which was a 15-item survey. Each item asked instructors to rate the extent of coverage of each design ability on a five-point Likert scale. Ratings of either moderate or extensive were recorded as one point, such that the maximum possible curriculum alignment score was 15.
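The alignment scoring rule described above (one point for each of the 15 items rated moderate or extensive) can be expressed as a short Python sketch. The function name and the exact rating labels are assumptions made for illustration; only the endpoints of the scale and the counting rule come from the text.

```python
# Ratings that earn one point. The label spellings are assumed; the text
# only says that "moderate" or "extensive" coverage is counted.
COUNTED_RATINGS = {"moderately", "extensively"}


def curriculum_alignment_score(ratings):
    """Count how many of the 15 survey items were rated moderate or extensive."""
    if len(ratings) != 15:
        raise ValueError("the second form version has exactly 15 items")
    return sum(1 for rating in ratings
               if rating.strip().lower() in COUNTED_RATINGS)


# Hypothetical instructor response: 11 items counted, 4 not counted.
example_ratings = ["Moderately"] * 7 + ["Extensively"] * 4 + ["Not at All"] * 4
example_score = curriculum_alignment_score(example_ratings)
```

A dichotomized count like this discards the difference between moderate and extensive coverage, which keeps the score simple at the cost of some resolution.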

Results

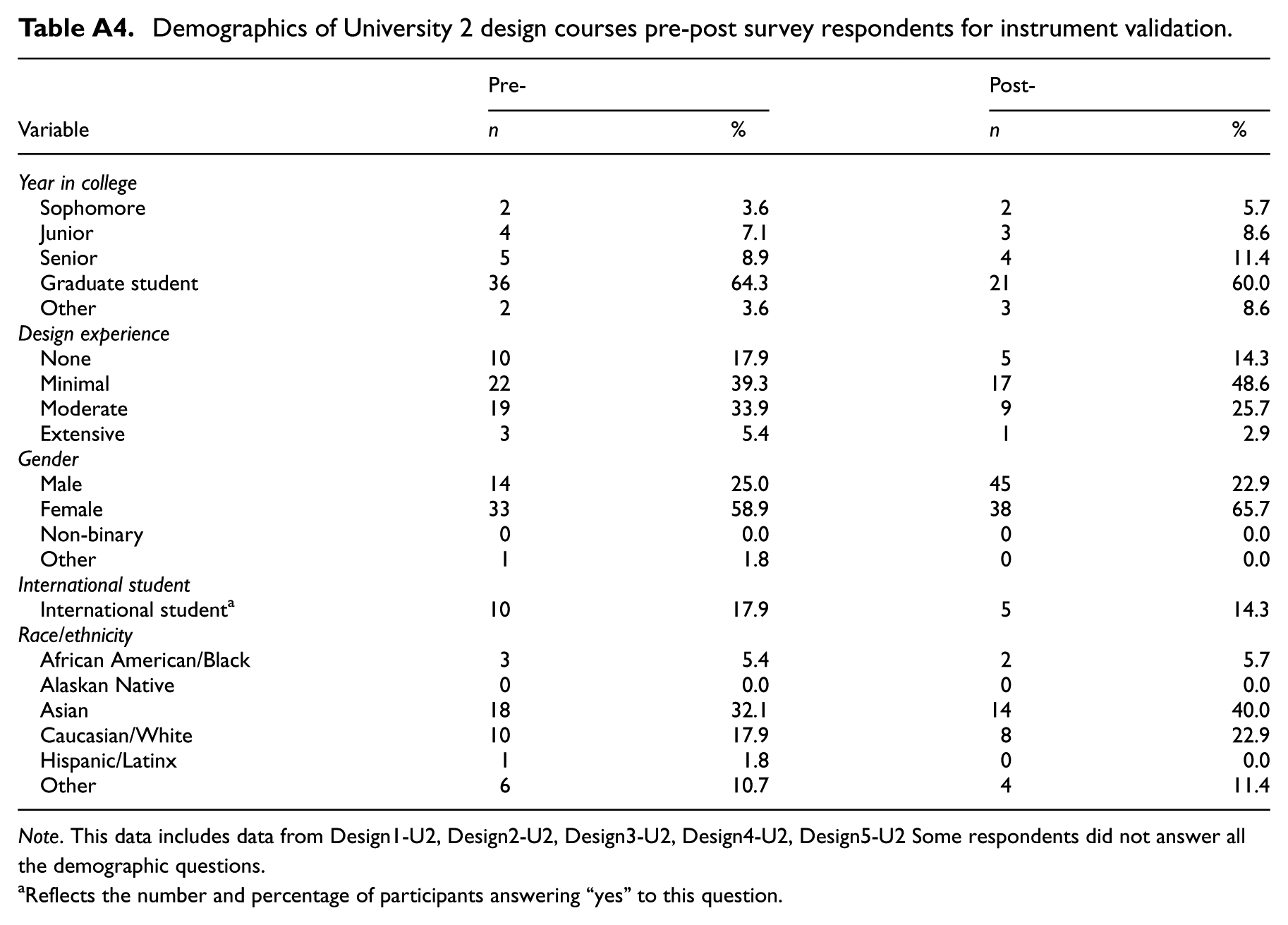

At University 1, DANI was deployed in one design course and one non-design course, both enrolling first-year undergraduate students. At University 2, DANI was deployed in five design elective courses (open to graduate and undergraduate students of all disciplines/majors) and one non-design course. For the eight courses across both institutions, 428 pre- and 407 post-course responses to DANI were collected.

Based on pre-survey results, we calculated Cronbach’s alpha reliability for both institutions. At University 1, reliability was α = .689 for NA Attitude and α = .724 for NA Actions. At University 2, reliability was α = .806 for NA Attitude and α = .602 for NA Actions. All four coefficients are within an acceptable range of α = .600 or above. 8 They are also broadly comparable to the DANI pilot reliability scores for NA Attitude (α = .829) and NA Actions (α = .796). Notably, reliability scores at the two universities were comparable to each other, indicating that both populations were appropriate candidates for the instrument.

Paired t-tests were used to analyze NA Attitude, NA Actions, and NA Total responses across courses at both institutions. NA Total includes the scores of all 20 items. As expected, both NA Actions and NA Attitude scores are highly correlated with NA Total. As a result, the NA Total score provided little added information, so we do not report analyses for that variable.

Comparison of Design and Non-Design Courses (University 1)

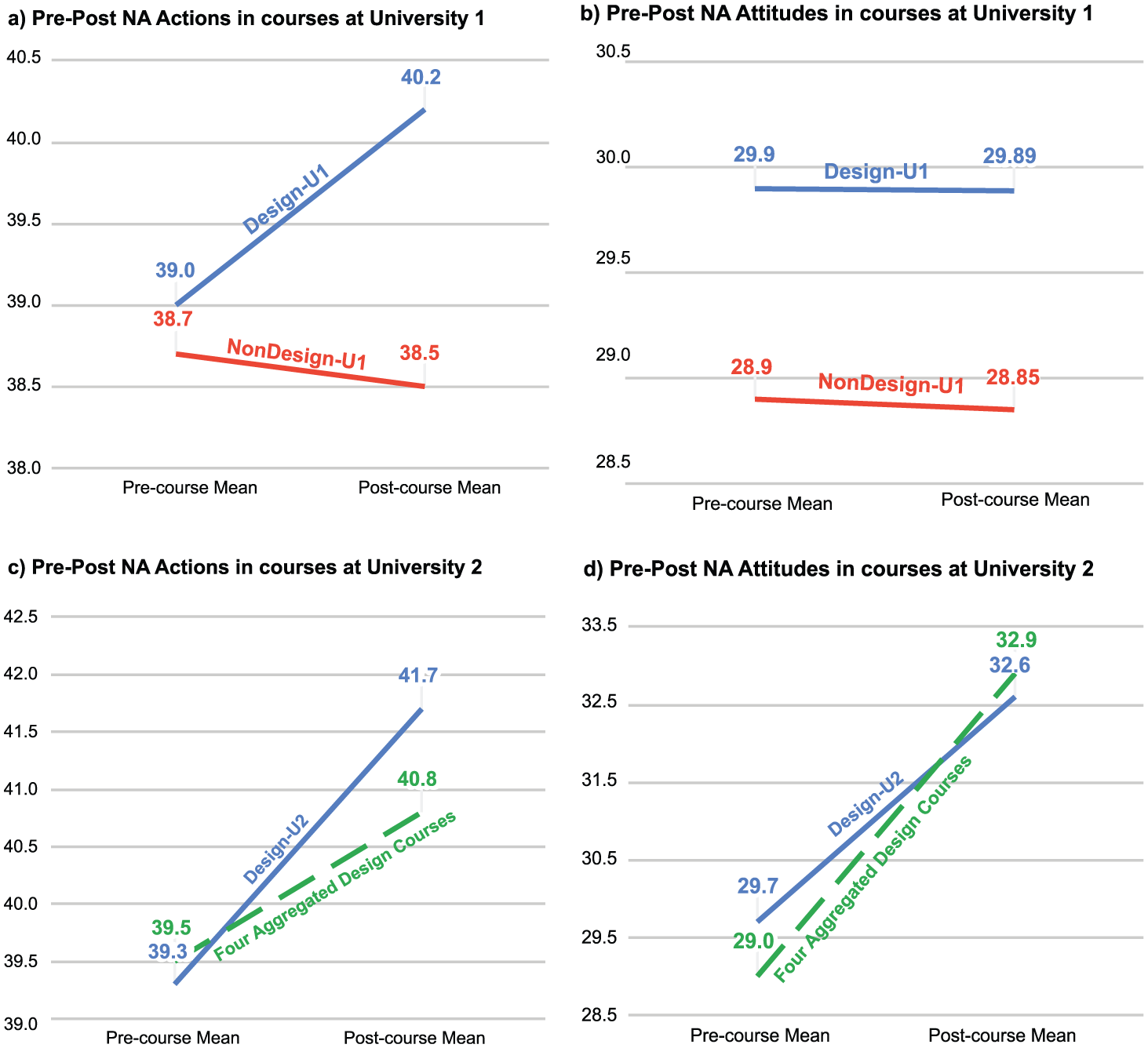

We conducted within-course, paired sample t-tests for Design-U1 (a design course) and NonDesign-U1 (a comparison course) to determine whether there were significant differences between pre- and post-ratings of students’ NA Actions and NA Attitude within each course. In Design-U1, there was a significant increase in NA Actions (t(261) = 4.748, p < .001) with a small-to-medium effect size (Cohen’s d = 0.293). In contrast, there were no significant differences between pre- and post-course NA Actions or NA Attitude scores for NonDesign-U1.
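A within-course comparison of this kind can be sketched with the Python standard library alone. The sketch below is illustrative and is not the authors’ analysis: the function name, the sample scores, and the choice to standardize the mean difference by the standard deviation of the paired differences are all assumptions. It omits the p-value, which would require a t-distribution routine from a statistics package.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_test(pre, post):
    """Return the paired-samples t statistic, degrees of freedom, and Cohen's d.

    `pre` and `post` hold matched scores for the same students, in the
    same order.
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two matched pre/post pairs")

    differences = [after - before for before, after in zip(pre, post)]
    n = len(differences)
    sd_diff = stdev(differences)  # sample standard deviation of differences
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")

    mean_diff = mean(differences)
    return {
        "t": mean_diff / (sd_diff / sqrt(n)),
        "df": n - 1,
        "cohens_d": mean_diff / sd_diff,  # assumed convention for paired data
    }


# Hypothetical NA Actions scores for four students (not from the study).
result = paired_t_test(pre=[30, 32, 28, 35], post=[33, 34, 31, 36])
```

Pairing requires that each post-course response can be matched to the same student’s pre-course response.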

We compared the differences between pre- and post-course mean values from the DANI responses of Design-U1 versus NonDesign-U1. As shown in Figures 3a and 3b, results from a two-way ANOVA and independent-samples t-tests showed that the post-course values for Design-U1 students were significantly higher for NA Actions (t(333) = 2.878, p = .004) when compared to NonDesign-U1 students, with a medium effect size (Cohen’s d = 0.381). There was no significant difference in NA Attitude between the post-course values of Design-U1 students and NonDesign-U1 students.

Figure 3. (a-d) Pre-post course NA Actions and NA Attitude mean values.

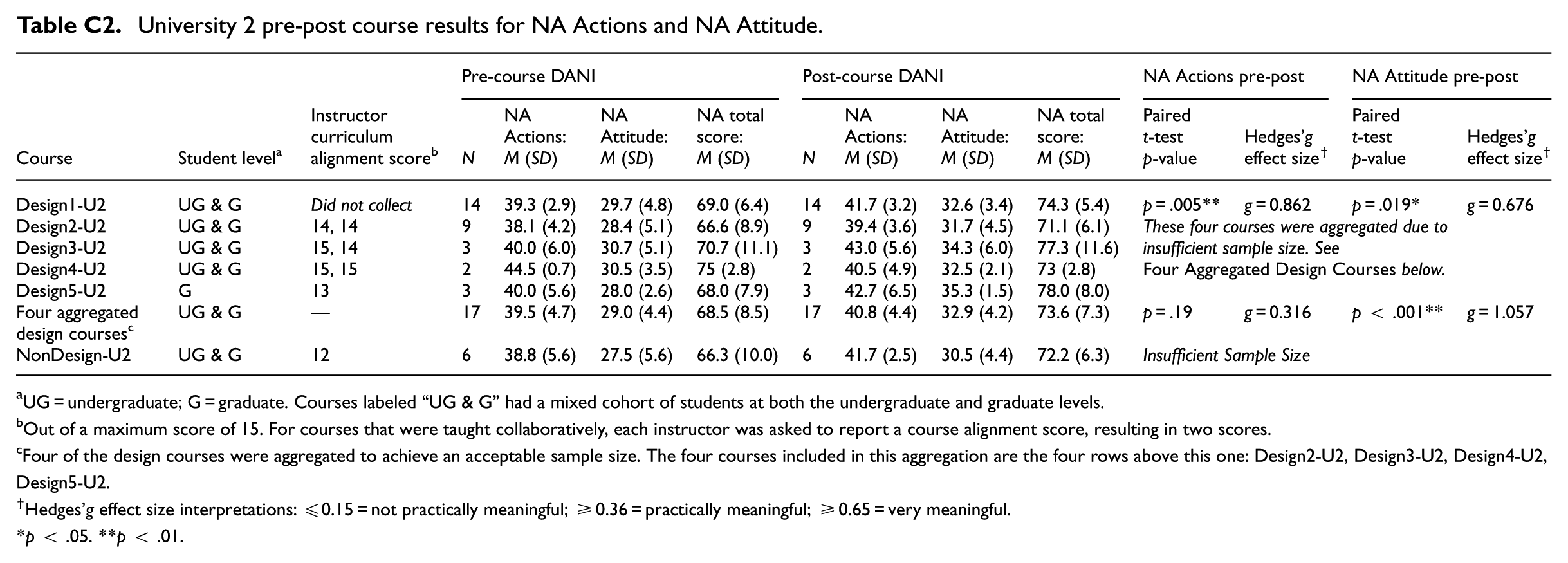

Comparison Across Multiple Design Courses (University 2)

DANI was administered in six courses at University 2, including five design courses and one non-design comparison course.

Within-course, paired sample t-tests were conducted for Design1-U2, the only design course that garnered 10 or more responses in each of the pre- and post-course DANI administrations. The other four design courses were aggregated to achieve an acceptable sample size. NonDesign-U2, the only non-design course studied at University 2, received too few responses to analyze. The pre- and post-course results for University 2 are shown in Figures 3c and 3d. The distributions and full results are shown in Appendix C (Tables C1 and C2).

All design courses evidenced significant pre-post increases in NA Attitude. For the four aggregated design courses, there was a significant increase in NA Attitude (t(16) = 4.576, p < .001) with a very high effect size (Hedges’ g = 1.057). For Design1-U2, there were significant increases in both NA Actions (t(13) = 3.426, p = .005), with a high effect size (Hedges’ g = 0.862), and NA Attitude (t(13) = 2.687, p = .019), with a high effect size (Hedges’ g = 0.676).
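For paired designs, effect sizes can be recovered from a reported t statistic and its degrees of freedom. The Python sketch below is a hedged illustration: the text does not state which formulas were used, so both conventions here are assumptions, namely d = t / √n with n = df + 1, and the common small-sample correction factor 1 − 3 / (4·df − 1) for Hedges’ g. The function name is hypothetical.

```python
from math import sqrt


def paired_effect_sizes(t, df):
    """Return (Cohen's d, Hedges' g) implied by a paired-samples t statistic.

    Assumes d = t / sqrt(n) with n = df + 1 pairs, and the approximate
    small-sample correction g = d * (1 - 3 / (4 * df - 1)).
    """
    if df < 1:
        raise ValueError("degrees of freedom must be at least 1")
    n = df + 1
    cohens_d = t / sqrt(n)
    correction = 1 - 3 / (4 * df - 1)
    return cohens_d, cohens_d * correction


# Statistics reported in the text for Design1-U2 NA Actions: t(13) = 3.426.
d_actions, g_actions = paired_effect_sizes(t=3.426, df=13)
```

Under these assumptions the sketch appears to reproduce the effect sizes reported in the text to three decimals, which suggests, but does not confirm, that similar conventions were used. The correction matters most for small groups such as those at University 2 and is negligible at University 1’s sample size.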

Curriculum Alignment

On the instructor curriculum alignment self-report, the lead instructor described Design-U1 as somewhat addressing the five design abilities overall (on a 5-point Likert scale ranging from Not at All to Extensively), with approximately 55% of a student’s course grade based on these abilities. The alignment score of the Design-U1 curriculum with the design abilities was 11/15.

A member of the teaching team for the Design1-U2 course from University 2 reported that their course curriculum moderately addressed design abilities, and approximately 90% of a student’s course grade was based on the five design abilities underlying DANI. Instructors of the other four design courses at this university completed the curriculum alignment survey with one or two members from each course teaching team submitting responses. There was little to no variance among the alignment scores for each of these courses, which ranged from 13 to 15 points.

See full results of Instructor Curriculum Alignment Score and corresponding DANI Construct scores in Appendix C (Tables C1 and C2).

Discussion

We set out to develop an instrument that could capture students’ stance toward ambiguity in the context of learning experiences in a way that: (1) positions navigating ambiguity as a skill that can be learned and developed; and (2) does not position ambiguity as an inherently negative condition to be overcome (as is the case for pre-existing instruments that measure tolerance to ambiguity). We hope that such a tool will enable educators to assess learning experiences they have created for their students and design ways to better mirror the ambiguity of real life contexts. In addition, such a tool has the potential to provide students with valuable formative feedback about how their attitudes and skills may change as a result of engaging in a learning experience that exposes them to ambiguity.

We found comparable Cronbach alphas for both institutions, which suggests that: (1) the populations of each are appropriate candidates for the DANI instrument, and (2) we can expect the instrument to behave similarly at both institutions. Because DANI detected relatively consistent patterns of significant changes within and across courses in both NA Actions and NA Attitude at University 1 and University 2, we believe that the instrument is reflecting actual course outcomes. In cases where no change was detected, we believe that no change occurred in the constructs assessed.

It is important to note that pilot data indicated that NA Attitude and NA Actions are largely distinct constructs (r = .238), meaning that a score on one factor only weakly predicts a score on the other. For example, one can score high on NA Attitude and low or moderate on NA Actions. This profile would suggest that one is inclined to engage with ambiguous challenges, but may not use strategies that are typically effective.

The collective results from our study at two universities demonstrate that DANI is a valid assessment of collective student change in design courses that strive to build learners’ ability to navigate ambiguity.

Limitations of the Instrument

DANI was developed to support design curricula and pedagogy and is a curriculum-aligned, reliable instrument for assessing student propensity to navigate ambiguity. Being a curricular instrument, DANI is assumed to assess attitudes and behaviors that are changeable as a result of instruction and/or practice. Its aggregated scores can be used to assess course and program outcomes. The instrument has not been tested for its ability to predict other learning outcomes in design courses, nor whether students will or will not be successful in future design work, though these are interesting possible directions for future study.

At present, DANI is most accurate when assessing groups of students (i.e., a class or combination of classes): the margin of error of an individual’s score is wide, while the margin of error of scores of an entire group is narrow. DANI has not been assessed for criterion validity, the extent to which low or high scores reflect actual propensity or ability to navigate ambiguity, nor for test-retest reliability, which would indicate expected reliability of multiple administrations to the same students. Additionally, since this instrument relies on self-assessment, its effectiveness depends on the accuracy of students’ self-reporting. As a result, DANI is not intended to be a measure of individual student change, nor should it be construed as a psychological instrument. Further work to validate DANI at the individual level has the potential to broaden the applications of the tool.

Interpretation of Changes in NA Scores in Different Courses

The results of the validation study revealed different patterns of pre-post changes for the different courses. To summarize:

In University 1’s courses (for first-year undergraduate students):

○ NA Actions increased for the design course and not for the non-design course;

○ NA Attitude did not change for either course.

In University 2’s courses (for a majority of graduate students, with some undergraduates):

○ NA Attitude increased for all design courses;

○ Both NA Attitude and NA Actions increased for one of the design courses (Design1-U2).

While there are too many differences between the courses from the two universities to be able to productively contrast the results, 9 they support the validity of DANI to be used in different contexts. Furthermore, a few questions and hypotheses emerge from the results within each set of courses.

Q1: Does design instruction affect students’ attitude toward ambiguity?

While we were not able to collect enough responses from the non-design course at University 2 to act as a control, the difference in pre-post changes in NA Actions for the design and non-design courses at University 1 supports our hypothesis that learning design abilities can contribute to students’ capacity to act in ambiguous situations.

Q2: How can a change in only one dimension of NA be interpreted?

While NA Actions showed a pre-post change for the design course at University 1, there was no pre-post change in NA Attitude for that course. One might expect an attitudinal change to precede a change in behavior. 10 However, the students’ capacity to recognize and name their emotions in the face of ambiguous situations may be limited. While the role of emotions in learning is supported by research (Immordino-Yang, 2015; Zull, 2002), this does not always translate to students’ learning experiences. Examining changes in NA Attitude scores in more recent versions of the Design-U1 course, which now builds in opportunities for students to reflect on their emotions and those emotions’ contribution to their learning journeys, may allow us to explore this hypothesis further.

For the aggregate dataset from four design courses from University 2 we saw a different pattern of pre-post change: only NA Attitude changed. In this case, some of the nuances of the individual courses may have been lost in the aggregation, making it difficult to interpret this result. Therefore we focused on understanding the one course from University 2 that had enough responses to be analyzed on its own, and we discuss it next.

Q3: Which pedagogical approaches may contribute to changes in NA scores?

Both NA Attitude and NA Actions increased for one of the design courses from University 2 (Design1-U2). We interviewed a member of its teaching team to begin to understand what pedagogical approaches may contribute to the change in NA scores. Some of the elements of the course design and teaching practices stood out as candidates:

structuring the course as a sequence of design challenges that increase in duration, scope, and open-endedness (and therefore, ambiguity);

consistently requiring weekly student reflections focused on their process and emotions;

using creative methods for eliciting student reflections that may be more effective in getting students to recognize and name their emotions and be aware of their actions (e.g., asking them to record short videos responding to prompts such as “After the first design project, I’m feeling _____ because _____”);

consistently sharing all reflections with the whole class (e.g., as video or text compilations), which may activate a dimension of social learning where students learn not only from their experience but also from the reflections of their peers, and may emphasize the role of emotions in their learning journey;

helping students develop psychological safety 11 through activities and reflections, which may have contributed to their learning in the course and/or the way in which they responded to the DANI questions (i.e., they may have been more open to acknowledging emotional responses and more aware of their process).

It is worth noting that some or all of these elements are likely to be present in other courses from University 2. In fact, the course architecture as an evolving sequence of design challenges and the use of reflection are common approaches among the teaching teams from this unit of the university.

Q4: To what extent might DANI be valid in non-design courses?

As described above, DANI was developed using a framework of design abilities as a starting point. However, in validating the instrument, we deployed it both in design courses in which instructors may explicitly refer to these abilities 12 and in courses in which they do not. 13 The results in both instances were comparable, suggesting that DANI has potential for application in a broader set of courses and curricula.

The ability to navigate ambiguity, and the value of doing so, transcend the field of design. It would be worthwhile to explore how DANI may add value for educators outside of design whose courses actively engage students in navigating ambiguity as a learning outcome.

Pedagogical Applications

Since concluding the DANI validation studies, we have been piloting the use of the instrument in a variety of contexts in order to refine our hypotheses regarding its utility for learners and instructors. We have found two promising use cases: (1) as a tool to assess the effectiveness of a learning experience in helping students better navigate ambiguity; and (2) as a starting point for individual and group reflection on navigating ambiguity. Through its use among learners ranging from first-year college students to graduate students to professionals, we find that completing the DANI questionnaire and engaging in discussion of the results gives people language to describe familiar experiences that were previously hard to articulate. This provides an entry point for further reflection and discussion with peers that helps learners develop a more nuanced understanding of ambiguity and their ability to navigate it.

In Figure 4 we show a 2 × 2 diagram we have used to describe DANI results during a debrief. After completing the DANI questionnaire, students learn which quadrant their answers correspond to. This is a starting point to surface how they tend to feel and act when faced with ambiguity. 14 Our goal is to help students develop a more nuanced understanding of their emotions and behaviors, discussing how their response may differ according to the context (personal vs. professional situations, different sources of ambiguity, perceived stakes, etc.).

Figure 4. Diagram we use to explain DANI results to students.
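As a rough illustration of how a respondent could be placed in a 2 × 2 grid like the one in Figure 4, the sketch below splits two factor scores at a cut point. Everything specific here is an assumption made for illustration and is not stated in this paper: that the axes are the NA Attitude and NA Actions scores, that each score ranges from 10 to 50, and that the boundary sits at the midpoint. The names `dani_quadrant` and `HYPOTHETICAL_CUT` are hypothetical.

```python
from typing import Tuple

# Hypothetical cut point: the midpoint of an assumed 10-50 factor score range.
# The source does not state how quadrant boundaries are drawn.
HYPOTHETICAL_CUT = 30.0


def dani_quadrant(na_attitude: float, na_actions: float,
                  cut: float = HYPOTHETICAL_CUT) -> Tuple[str, str]:
    """Place one respondent in a 2 x 2 grid of attitude by actions.

    Assumptions (none are stated in the source): the two axes are the
    NA Attitude and NA Actions factor scores, and both are split at `cut`.
    """
    attitude_level = "higher attitude" if na_attitude >= cut else "lower attitude"
    actions_level = "higher actions" if na_actions >= cut else "lower actions"
    return attitude_level, actions_level
```

In a debrief, the quadrant label would only be a conversation starter; as noted above, a student's response may differ according to context.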

At a course level, we find that measuring pre- and post-course results provides a useful assessment of curricular alignment with learning outcomes related to navigating ambiguity. In workshops and conversations with educators across different disciplines, DANI has been useful as a tool to help them reflect on their own stance toward ambiguity and on their students’ learning journeys. We have invited these educators to map opportunities to embed “intentional ambiguity” in their courses—through different methods and activities, as well as through different ways of framing challenges (e.g., by using language that makes a challenge more open-ended, or being strategic about when to provide information). As educators incorporate these changes into their curricula, students’ pre-post DANI results can provide feedback on how those changes impact progress toward course learning outcomes related to navigating ambiguity.

As one example of how an assessment tool like DANI can influence curriculum development, the Fall 2022 curriculum of the Design-U1 course (the one used in the validation study) did not explicitly mention navigating ambiguity or the other design abilities. Because we now have a reliable way to measure students’ stance toward navigating ambiguity, it is included in the curriculum and named as a learning outcome. In subsequent versions of the course, pre- and post-course DANI data have been collected, and students reflect on the concept of navigating ambiguity at moments spread throughout the course and interwoven with course projects and activities.

We have also used DANI in a week-long professional development workshop to help educators reflect on how the learning experiences they create foster mindsets and skills that prepare students for the real world. Specifically related to the sequence of activities in a particular class session, we encourage educators to engage students in exploring and doing first, before providing theories and explanations, strategically leveraging ambiguity. This approach has been supported by studies across developmental stages and domains (Bonawitz et al., 2011; Deslauriers et al., 2019; Kapur, 2008). In this context, we have educators complete the DANI questionnaire to reflect on their own outlook and stance on ambiguity, to then make connections with learning outcomes for their students.

Future Directions

As mentioned previously in this article, we see potential benefit in further developing DANI as a reliable and valid tool for individual (vs. group) assessment. This would involve adding more items, conducting test-retest reliability analyses, and significantly reducing item- and construct-level error. Probably the biggest challenge would be ensuring criterion validity, that is, the extent to which the instrument accurately reflects competence in navigating ambiguity in practice.

While developing such an instrument would be challenging, attempting to do so creates the potential to empirically identify cross-cutting features, principles and/or skills that support navigating ambiguity. Deepening our understanding of this ability would support our exploration of DANI as a pedagogical tool and open up further opportunities for study. For example, this would enable exploration of how navigating ambiguity may correlate with other learning outcomes and assessments—including, but not limited to, grades—which would further support the development of curricula, both in and beyond the field of design.

Similarly, we are enthusiastic about the potential to explore how Navigating Ambiguity may be correlated with and contribute to the growing literature on other constructs such as Possibility Thinking (Craft, 2015; Glăveanu et al., 2024), Creative Agency (Royalty et al., 2014), and Futures Consciousness (Ahvenharju et al., 2018, 2021). Addressing each briefly in turn, Possibility Thinking refers to a person’s “orientation toward what ‘could be,’ ‘could have been,’ ‘will be,’ and ‘can never be’” (Glăveanu et al., 2024, p. 125). The Possibility Thinking Scale (ibid.) contains items that reference “many possible solutions,” “new ways of looking at things,” and “that mistakes represent new possibilities” (p. 146), ideas that are closely related to the dimensions of Navigating Ambiguity outlined in this paper. The Creative Agency scale seeks to measure individuals’ confidence to think and act creatively, and some of its items align with DANI items, namely those related to finding diverse sources of creative inspiration, working on problems that do not have an obvious and/or single solution, and changing the definition of problems. Lastly, Futures Consciousness is concerned with individuals’ awareness of possible and desirable futures. In particular, Navigating Ambiguity aligns closely with the “openness to alternatives” dimension of Futures Consciousness, which “is strongly linked to the capability of embracing and appreciating change, seeing the value of alternative ways, and questioning established truths” (Ahvenharju et al., 2018, p. 9). Further exploration could reveal ways that one’s capacity to navigate ambiguity correlates with each of these constructs and how the pedagogies designed to foster one may also support the others.

At present, the implementation and interpretation of DANI—like many assessment tools—poses challenges for its use in the classroom. An instructor who is interested in using it would first have to figure out how to administer the questionnaire and then be comfortable analyzing and interpreting the results. In an effort to increase the utility of the instrument in practice, we have begun designing an instructor-friendly interface that would streamline the administration of the instrument and provide key analyses of the results. This would enable instructors to use DANI to support their teaching, as described above, and perhaps uncover possible uses we have yet to imagine.

Footnotes

Appendix A: Respondent Demographic Data

Demographics of University 2 design courses pre-post survey respondents for instrument validation.

| Variable | Pre- n | Pre- % | Post- n | Post- % |
|---|---|---|---|---|
| Year in college | | | | |
| Sophomore | 2 | 3.6 | 2 | 5.7 |
| Junior | 4 | 7.1 | 3 | 8.6 |
| Senior | 5 | 8.9 | 4 | 11.4 |
| Graduate student | 36 | 64.3 | 21 | 60.0 |
| Other | 2 | 3.6 | 3 | 8.6 |
| Design experience | | | | |
| None | 10 | 17.9 | 5 | 14.3 |
| Minimal | 22 | 39.3 | 17 | 48.6 |
| Moderate | 19 | 33.9 | 9 | 25.7 |
| Extensive | 3 | 5.4 | 1 | 2.9 |
| Gender | | | | |
| Male | 14 | 25.0 | 8 | 22.9 |
| Female | 33 | 58.9 | 23 | 65.7 |
| Non-binary | 0 | 0.0 | 0 | 0.0 |
| Other | 1 | 1.8 | 0 | 0.0 |
| International student | | | | |
| International student a | 10 | 17.9 | 5 | 14.3 |
| Race/ethnicity | | | | |
| African American/Black | 3 | 5.4 | 2 | 5.7 |
| Alaskan Native | 0 | 0.0 | 0 | 0.0 |
| Asian | 18 | 32.1 | 14 | 40.0 |
| Caucasian/White | 10 | 17.9 | 8 | 22.9 |
| Hispanic/Latinx | 1 | 1.8 | 0 | 0.0 |
| Other | 6 | 10.7 | 4 | 11.4 |

Note. Data are from Design1-U2, Design2-U2, Design3-U2, Design4-U2, and Design5-U2. Some respondents did not answer all the demographic questions.

a Reflects the number and percentage of participants answering “yes” to this question.

Appendix B: Final DANI Instrument

Items and corresponding coding in final DANI instrument.

| Item | Coding a | Factor |
|---|---|---|
| I prefer working on problems that have a single right answer. | Reverse | NA Attitude |
| I prefer challenges that have closure over those that have lingering possibilities. | Reverse | NA Attitude |
| I get impatient if the pathway to the solution is not clear. | Reverse | NA Attitude |
| I can get disturbed by ambiguity when solving a problem. | Reverse | NA Attitude |
| I get frustrated when an approach I try does not lead to a solution. | Reverse | NA Attitude |
| I lose motivation when my idea does not work. | Reverse | NA Attitude |
| I get impatient when others propose outlandish solutions to a problem. | Reverse | NA Attitude |
| I feel uncomfortable when I don’t know what steps come next in a project. | Reverse | NA Attitude |
| Wild ideas tend to derail versus enhance project progress. | Reverse | NA Attitude |
| It is difficult for me to switch back and forth between minute details and “the big picture.” | Reverse | NA Attitude |
| I feel comfortable generating many new ideas. | Standard | NA Actions |
| When solving a problem, I try coming up with multiple ideas to begin with, even if they might not work. | Standard | NA Actions |
| When developing ideas to address a big problem, I consider all possible stakeholders. | Standard | NA Actions |
| Before solving a problem, I look at it from different angles. | Standard | NA Actions |
| The more information I can gather about a problem, the more likely I will discover a new idea. | Standard | NA Actions |
| I know how to incorporate the perspectives of others when I am creating something. | Standard | NA Actions |
| I look forward to finding connections within datasets. | Standard | NA Actions |
| I believe collecting multiple data types gives me the best overall picture of a problem. | Standard | NA Actions |
| I value experimenting because I always learn something, regardless of whether I find the solution I was looking for. | Standard | NA Actions |
| I welcome viewpoints that differ from my own. | Standard | NA Actions |

Note. a Standard is coded as 1 = Never; 5 = Always. Reverse is coded as 5 = Never; 1 = Always.
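The coding rule in the note above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' scoring procedure: it assumes responses are supplied in the order of the Appendix B table, and it assumes that each factor score is the sum of its ten coded items (a 10 to 50 range per factor), which is consistent with the magnitudes reported in Appendix C but is not stated explicitly. The function name `score_dani` is hypothetical.

```python
from typing import Dict, List

# Item order follows the Appendix B table: items 1-10 load on NA Attitude
# (reverse-coded) and items 11-20 load on NA Actions (standard-coded).
ATTITUDE_ITEMS = range(0, 10)
ACTIONS_ITEMS = range(10, 20)
SCALE_MIN, SCALE_MAX = 1, 5  # raw responses: 1 = Never ... 5 = Always


def score_dani(responses: List[int]) -> Dict[str, int]:
    """Return factor and total scores for one respondent.

    Assumption (not stated in the source): each factor score is the sum
    of its ten coded items, giving a 10-50 range per factor.
    """
    if len(responses) != 20:
        raise ValueError("expected 20 item responses")
    if any(not SCALE_MIN <= r <= SCALE_MAX for r in responses):
        raise ValueError("responses must be on the 1-5 scale")

    # Reverse coding maps 1 -> 5, 2 -> 4, 3 -> 3, 4 -> 2, 5 -> 1.
    attitude = sum(SCALE_MIN + SCALE_MAX - responses[i] for i in ATTITUDE_ITEMS)
    # Standard coding keeps the raw response as the score.
    actions = sum(responses[i] for i in ACTIONS_ITEMS)
    return {
        "na_attitude": attitude,
        "na_actions": actions,
        "na_total": attitude + actions,
    }
```

Under these assumptions, a respondent who answers 2 to every NA Attitude item and 4 to every NA Actions item scores 40 on each factor: the reverse-coded items reward infrequent discomfort, while the standard items reward frequent use of the strategies.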

Appendix C: Validation Study Results

University 2 pre-post course results for NA Actions and NA Attitude.

| Course | Student level a | Instructor curriculum alignment score b | Pre-course N | Pre-course NA Actions: M (SD) | Pre-course NA Attitude: M (SD) | Pre-course NA total score: M (SD) | Post-course N | Post-course NA Actions: M (SD) | Post-course NA Attitude: M (SD) | Post-course NA total score: M (SD) | NA Actions pre-post: paired t-test p-value | NA Actions pre-post: Hedges’ g effect size † | NA Attitude pre-post: paired t-test p-value | NA Attitude pre-post: Hedges’ g effect size † |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Design1-U2 | UG & G | Did not collect | 14 | 39.3 (2.9) | 29.7 (4.8) | 69.0 (6.4) | 14 | 41.7 (3.2) | 32.6 (3.4) | 74.3 (5.4) | p = .005** | g = 0.862 | p = .019* | g = 0.676 |
| Design2-U2 | UG & G | 14, 14 | 9 | 38.1 (4.2) | 28.4 (5.1) | 66.6 (8.9) | 9 | 39.4 (3.6) | 31.7 (4.5) | 71.1 (6.1) | These four courses were aggregated due to insufficient sample size. See Four aggregated design courses below. | | | |
| Design3-U2 | UG & G | 15, 14 | 3 | 40.0 (6.0) | 30.7 (5.1) | 70.7 (11.1) | 3 | 43.0 (5.6) | 34.3 (6.0) | 77.3 (11.6) | | | | |
| Design4-U2 | UG & G | 15, 15 | 2 | 44.5 (0.7) | 30.5 (3.5) | 75 (2.8) | 2 | 40.5 (4.9) | 32.5 (2.1) | 73 (2.8) | | | | |
| Design5-U2 | G | 13 | 3 | 40.0 (5.6) | 28.0 (2.6) | 68.0 (7.9) | 3 | 42.7 (6.5) | 35.3 (1.5) | 78.0 (8.0) | | | | |
| Four aggregated design courses c | UG & G | n/a | 17 | 39.5 (4.7) | 29.0 (4.4) | 68.5 (8.5) | 17 | 40.8 (4.4) | 32.9 (4.2) | 73.6 (7.3) | p = .19 | g = 0.316 | p < .001** | g = 1.057 |
| NonDesign-U2 | UG & G | 12 | 6 | 38.8 (5.6) | 27.5 (5.6) | 66.3 (10.0) | 6 | 41.7 (2.5) | 30.5 (4.4) | 72.2 (6.3) | Insufficient sample size | | | |

a UG = undergraduate; G = graduate. Courses labeled “UG & G” had a mixed cohort of students at both the undergraduate and graduate levels.

b Out of a maximum score of 15. For courses that were taught collaboratively, each instructor was asked to report a course alignment score, resulting in two scores.

c Four of the design courses were aggregated to achieve an acceptable sample size. The four courses included in this aggregation are the four rows above this one: Design2-U2, Design3-U2, Design4-U2, Design5-U2.

† Hedges’ g effect size interpretations: ≤0.15 = not practically meaningful; ≥0.36 = practically meaningful; ≥0.65 = very meaningful.

*p < .05. **p < .01.
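For readers who want to run a comparable pre-post analysis on their own course data, the following Python sketch computes a paired t statistic and a Hedges’ g, then labels the effect size using the thresholds in the note above. It is an illustration under stated assumptions, not a reproduction of the analysis reported here: several formulas for Hedges’ g exist for paired designs, and the paper does not say which was used. This sketch assumes the standardizer is the square root of the average of the pre and post variances and that the small-sample correction is 1 − 3/(4·df − 1) with df = n − 1. The function names are hypothetical, and the p-value is left to a statistics package.

```python
import math
from statistics import mean, stdev
from typing import List, Tuple


def paired_t_statistic(pre: List[float], post: List[float]) -> Tuple[float, int]:
    """Paired t statistic and degrees of freedom for post minus pre."""
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t, n - 1


def hedges_g_paired(pre: List[float], post: List[float]) -> float:
    """One common Hedges' g variant for pre-post data.

    Assumptions (the source does not specify its formula): the
    standardizer is sqrt((sd_pre**2 + sd_post**2) / 2) and the
    small-sample correction is 1 - 3 / (4 * df - 1) with df = n - 1.
    """
    n = len(pre)
    sd_av = math.sqrt((stdev(pre) ** 2 + stdev(post) ** 2) / 2)
    d = (mean(post) - mean(pre)) / sd_av
    correction = 1 - 3 / (4 * (n - 1) - 1)
    return d * correction


def interpret_g(g: float) -> str:
    """Label an effect size using the thresholds given in the table note."""
    size = abs(g)
    if size >= 0.65:
        return "very meaningful"
    if size >= 0.36:
        return "practically meaningful"
    if size <= 0.15:
        return "not practically meaningful"
    # The stated thresholds leave values between 0.15 and 0.36 unlabeled.
    return "between the stated thresholds"
```

Note that the stated thresholds do not assign a label to values between 0.15 and 0.36, so a value such as the aggregated NA Actions effect (g = 0.316) falls into the sketch's catch-all category.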

Acknowledgements

Drs. Jamie Powers, Jini Puma, and Helen Chen were instrumental in the design and data analysis of the DANI Pilot and Validation studies. We also would like to thank our colleagues and fellow educators who contributed their time and expertise to the development of this tool. Their knowledge, ideas, and feedback throughout this process provided invaluable insight into how we conceptualize navigating ambiguity and led directly to the development of the items in this instrument. We would also like to thank the instructors in whose courses we gathered data for the validation study. Their collaboration and insights into the process of administering the instrument helped make this research possible.

Author Contributions

This is a collaboration between design educators from two different institutions who regularly work together on developing programs and learning experiences, and Quality Evaluation Designs, an education research and evaluation firm. Throughout this paper, we’ll refer ambiguously to “we” to mean the two groups collaborating. In practice, we divided the work as described below.

Design Education Team: Leticia Britos Cavagnaro, Erica Estrada-Liou, Christina Hnatov.

Contribution: conducted literature review of ambiguity measures and associated constructs, recruited participants for the pilot and validation studies, participated in the iterative process of item creation, interpreted results, conducted follow up interviews with instructors, prototyped different pedagogical applications of the instrument, created the conceptual scaffold for the manuscript, created the figures and tables, wrote the manuscript.

Instrument Development Team: Gary Lichtenstein & Thien Ta (Quality Evaluation Designs).

Contribution: structured instrument design and piloting process, analyzed pilot data, developed User Guide, designed the validation study and analyzed the data, contributed to sections of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval and Informed Consent Statements

After a Determination of Research with Human Subjects review, Ethical & Independent Review Services (E&I) established that an IRB review was not required for the instrument pilot (E&I Assigned Study ID #21195-01, October 25, 2021) nor for the instrument validation (E&I Assigned Study ID #22183-01, August 30, 2022).

ORCID iDs

Data Availability Statement

Data are included in the Appendices. Additional data available upon request.