Abstract

With the advent of Large Language Models, such as ChatGPT, and, more generally, generative AI/cognitive technologies, global knowledge production faces a critical systemic challenge. It results from continuously feeding back non- or poorly-creative copies of itself into the global knowledge base; in the worst case, this could not only lead to a stagnation of creative, reliable, and valid knowledge generation, but also have an impact on our material (and subsequently our social) world and how it will be shaped by these rather uninspired automatized knowledge dynamics. More than ever, there appears to be an imperative to bring the creative human agent back into the loop. Arguments from the perspectives of 4E- and Material Engagement Theory approaches to cognition, human-technology relations as well as possibility studies will be used to show that being embodied, sense-making, and enacting the world by proactively and materially interacting with it are key ingredients for any kind of knowledge and meaning production. It will be shown that taking seriously the creative agency of the world, an engaged epistemology, as well as making use of future potentials/possibilities complemented and augmented by cognitive technologies are all essential for re-introducing profound novelty and creativity.

Keywords

Introduction and overview

Large Language Models (LLMs) have emerged as revolutionary AI technologies in recent years, culminating in the release of ChatGPT in late 2022. These technologies have sparked significant discussion and had a transformative impact across various fields, reaching deep into economy and society. These systems are at the forefront of natural language processing and of simulating human cognitive capacities, empowering machines to understand and generate human-like text and pushing the boundaries of AI capabilities. They are capable of mimicking and affecting almost every human cognitive ability, such as understanding and generating texts, creating images and videos, coding, designing, song writing, voice production, architectural designing, support in sales and marketing processes, decision making and planning, handling and interpreting big data, etc., and—to some degree—being creative.

The hype around ChatGPT, its successors, and similar AI-based systems/applications is omnipresent, and these technologies have a huge influence on almost every domain of our professional and private lives. Hybrid work, in the sense of a close cooperation between human cognition and machine intelligence, has reached a new level and quality. And one has to concede that the results are—at first sight—impressive. Most likely, they will get even better in the not-too-distant future (Dwivedi et al., 2023; Yang et al., 2023).

However, Mira Murati, Chief Technology Officer at OpenAI, when asked about the challenges and limitations of ChatGPT, admitted that “ChatGPT is essentially a large conversational model—a big neural net that’s been trained to predict the next word—and the challenges with it are similar challenges we see with the base large language models: it may make up facts.” 1 LLMs are designed to “predict” responses rather than “knowing” them or even “understanding” their meaning. “While LLM chatbots are powerful content generation and analysis tools, they do not have any sense of truth or reality beyond the words that tend to co-occur in their training data and processes” (Hannigan et al., 2023, p. 6). Thus, the challenge is to develop strategies and an awareness of (epistemic) responsibility to lower the epistemic risks of such technologies (understood as the likelihood of how accurately these systems’ responses represent the world).

This paper discusses the impact of LLMs (Large Language Models in Artificial Intelligence, such as ChatGPT), Generative Large Language Multi-Modal Models 2 or GLLMMMs (Gollems) or, more generally, of cognitive/AI technologies on the dynamics of global knowledge production. More specifically, we will focus on creativity and processes of bringing forth radical or profound novelty. One of the claims is that cognitive technologies have, cause, and are affected by a serious second-order, systemic, and long-term problem.

It will be shown that today’s generative AI technologies will most probably neither be profoundly creative nor capable of bringing forth radical/profound novelty, as they are implemented as “prediction machines” and represent technological manifestations of self-reinforcing (pattern- and symbol-based) knowledge-to-knowledge feedback loops and a kind of self-fulfilling prophecy in knowledge-to-world enactment.

Even though the results of these cognitive technologies sometimes give the user the impression of being creative, their “creativity” is based on a logic of adapting, recombining, “mixing,” “recycling,” extrapolating, and predicting on the basis of past or already existing knowledge and statistical patterns. It is only due to the vast knowledge corpus on which these technologies are based, that they sometimes produce seemingly novel or creative outcomes. Taking a closer look at the underlying technology (i.e. neural networks/technologies, neurally inspired learning algorithms, etc.) reveals however that—taking also into account that the “creative results” are fed back into the system (i.e. the global web) by human users—these technologies fall additionally prey to being caught in a vicious circle of self-reinforcing knowledge-to-knowledge feedback loops finally leading to rather limited and repetitive behavioral patterns and user interactions. In other words, cognitive technologies can become trapped by continuously feeding back non-creative copies of themselves into the global knowledge base.

Overview

The objective of this paper is not only to offer an alternative account on creativity and novelty (being based on the 4E approaches and on the creative agency of the world) but also to develop a human-technology relations perspective suggesting which role cognitive technologies could play in this approach.

To arrive at such a perspective, we will first discuss the negative impact that such technologies have on creativity/creative agency on a global scale (such as—in its most extreme form—a decline or even standstill of creativity) from an LLM-internal viewpoint. Moving on to a human-technology relations perspective reveals that these shortcomings of cognitive technologies can only be overcome when we bring human creativity and epistemic reliability back into the loop. Arguments from 4E approaches in cognitive science (e.g. Gallagher, 2023; Newen et al., 2018), Material Engagement Theory (Malafouris, 2013, 2018, 2019), as well as possibility studies (Glaveanu, 2020; Glăveanu, 2023) will be used to show that being embodied, sense-making, being situated in and (proactively) interacting with and enacting the world, as well as corresponding (Ingold, 2013) with the world and its future potentials/possibilities are essential for being profoundly creative. We will argue that experiencing (future) potentials requires embodied agency and direct contact with an unfolding world and that bringing forth radical novelty is based on an understanding of creativity that is grounded—at least in part—in the creative agency of the (real) world-in-becoming. Furthermore, it will be shown how cognitive technologies can complement and augment such an approach to knowledge creation (Brinkmann et al., 2023). Finally, the implications of such an engaged epistemology (De Jaegher, 2021) and a novel perspective on forms of collaboration with cognitive technologies in creative processes for the educational domain will be discussed.

Producing extrapolations from past “pseudo experiences”

ChatGPT is an instance of generative AI or cognitive technologies that is based on so-called LLMs (Large Language Models) (Dwivedi et al., 2023; Floridi, 2023; Floridi & Chiriatti, 2020). Compared to, for instance, intelligent search engines, they have a strong generative and “cognitive” aspect: they are capable of generating “novel” content rather than just searching, analyzing, or aggregating existing data or information. As “cognitive technology,” these technologies pretend to operate at the level of “cognition,” knowledge, meaning, and (adaptive) learning. In other words, they do not only display behavioral dynamics that are based on just following rigid rules or routines, but are capable of adapting and learning. This is achieved by employing complex machine learning algorithms, deep (neural) learning strategies that are inspired by findings from neuroscience and computational neuroscience, as well as various cognitive models from cognitive science (Dwivedi et al., 2023; OpenAI, 2023; Yang et al., 2023). What makes these systems so interesting and powerful is their ability to adapt and learn from examples in an inductive and generalizing manner; this goes back to approaches and technologies that were already developed in the 1980s/1990s (e.g. Hertz et al., 1991; Koch & Segev, 1989; Peschl, 1997; Rumelhart & McClelland, 1986; McClelland & Rumelhart, 1988).

Large Language Models (LLMs) are trained on vast amounts of data (in most cases documents and files scraped from all over the WWW (e.g. Dwivedi et al., 2023), such as Wikipedia, WWW-pages, discussion forums, social media platforms, text and image repositories, etc., generally speaking, knowledge artifacts). These data are processed by complex neural networks that are designed in such a way that they “interpret,” adapt, “make sense” of, and learn from these data. LLMs make use of a neural network architecture and (learning) algorithms that are called transformers (e.g. Thoppilan et al., 2022) integrating existing (already learned) knowledge with annotated novel examples, putting them in context, relating and adapting to them, etc. Transformers “read” through these enormous amounts of data (text, images, etc.) and—by applying these (learning) strategies—relate these data to each other and “discover”/spot (spatio-temporal-“semantic”) statistical patterns, categories, sequences, etc. in order to make predictions about what pattern, word, etc. should come next. In that sense, LLMs do not really know or “understand” what they are learning, but they do a great job of finding out which knowledge artifact (statistically) follows another. For an outside user, this looks as if the AI system has cognitive or sometimes even creative capabilities, even more so, when it is used in a dialogical manner (i.e. the user engages in a meaningful and empathic conversation/dialog with the machine; OpenAI, 2023; Thoppilan et al., 2022; Yang et al., 2023).
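The idea of predicting “what should come next” purely from statistical regularities can be illustrated with a deliberately crude sketch. The following Python example is not how transformers work internally; it is a simple bigram counter, chosen here only as an assumption-laden stand-in that makes the principle visible. The toy corpus and the function names (train_bigram, predict_next) are hypothetical and introduced for illustration only.

```python
from collections import Counter, defaultdict


def train_bigram(corpus):
    """Count, for every word, which words follow it in the corpus."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent successor of `word`, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus standing in for web-scale training data.
toy_corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the cat sat on the sofa",
]
model = train_bigram(toy_corpus)

print(predict_next(model, "cat"))  # most frequent successor of "cat"
print(predict_next(model, "dog"))  # never seen in the corpus
```

The sketch “answers” with whatever most often followed the given word in its corpus and has nothing to say about a word it has never encountered. At no point does it refer to cats, mats, or anything else in the world; it only counts co-occurrences in borrowed descriptions.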

However, before we get into the discussion about humans interacting with an AI system, we will take an “isolated” and internal perspective of LLMs first to understand their basic workings, epistemological assumptions, as well as limitations. In the second part of this paper, we will introduce the 4E approach to cognition as a launchpad to a wider human-technology relations perspective. This offers a more comprehensive picture and opens a novel perspective on human and non-human creativity and their interplay.

Simply put, LLMs acquire/“learn” and apply a strategy of predicting the next knowledge artifact on the basis of previous(-ly learned) items and already existing similar patterns or sequences (Maslej, 2023; OpenAI, 2023). 3 Here, the concept of “knowledge artifact” is understood to be a word/symbol, image, sound, or, generally speaking, a pattern (in the machine/AI system) with a representational function (e.g. a pattern of neural activations and/or synaptic weights that directly or indirectly refers to a phenomenon [in the world]).

These patterns are thus the result of a complex “experiential” learning/training process that is based on vast amounts of existing data being drawn from various sources on a global scale, mainly from—sometimes annotated/tagged by humans—documents on the internet.

In this context, it is important to keep a couple of things in mind:

A. Taking a closer (and LLM-internal) look reveals that these technologies are not at all about “knowledge” or even “meaning” in the sense we as humans understand or experience it; rather, they are processing, operating with, and correlating ultra-large-scale amounts of globally collected data into statistical regularities and patterns. This is achieved by applying the above-mentioned algorithms, which extract and generalize patterns from mostly online documents (comprising text, numbers, [tagged] images, sounds, etc.). We have to be careful, however, not to project (our own) meaning into these knowledge artifacts. In a way they are “pseudo experiences,” as these patterns are not directly grounded in “real world” experiences; rather, they are “borrowed descriptions” of the world in the form of documents that have been created mostly by human living cognitive systems. Hence, these patterns do not “represent” the knowledge of the world in a strict sense. Rather, they represent the current state and distribution of (statistical) regularities that can be found in the data throughout the internet, on a purely syntactic/quantitative/pattern level. Hence, no meaning or knowledge is involved in a strict sense; in this context it is important to take a closer look at the extensive discussions concerning semantics in AI systems, such as Searle’s (1980) Chinese room argument, Newell and Simon’s (1976) investigations, or the symbol grounding problem (Harnad, 1990), as well as all the subsequent discussions in the field of philosophy of mind/cognitive science; for example, Bechtel (1988), Bermudez (2020), Friedenberg and Silverman (2016), and Newell (1980). While these are valid concerns, as discussed in the second part of the paper, we will also see that these issues need to be seen in a different light when adopting more recent positions in cognitive science and a human-technology relations perspective.

B. Based on these patterns, similarities and temporal sequences can be predicted by, for instance, mostly neural network based algorithms (see above). These predictions are presented to the user as “answers” to his/her questions/prompts or as outputs for the conversation with the machine. In other words, these technologies are producing extrapolations and re-combinations of past “experiences,” borrowed from humans and codified on a quantitative and statistical level without “understanding” of the knowledge or meaning involved (Floridi, 2023).

C. As we will see below, what is missing in these systems/LLMs, is their grounding in a living body and their embeddedness in the real world. Contrary to machines, humans have bodies and are interacting with their environments as well as with material affordances in a direct/physical manner in order to make sense of their world. This is in contrast to machines that interact only via (representational) patterns with their own representational world (such as via symbols/words about or images of the world).

Engaging in a Vicious Circle of Recycling Existing Knowledge on a Global Level

While an LLM-centric view has brought to light some of the limitations of LLMs, taking into account that such technologies are always embedded in an environment and involve interaction with human users opens up new perspectives and (epistemic) dynamics. Human-technology relations and postphenomenology (e.g. Ihde, 1990; Rosenberger & Verbeek, 2015; Verbeek, 2005) constitute a field studying the complex and dynamic interplay between humans and technology. At their core, they do not (only) investigate technologies in terms of their functional properties and capacities, but the focus is on the mediating role of technological artifacts for human experiences and practices. In other words, “they investigate technology in terms of the relations between human beings and technological artifacts, focusing on the various ways in which technologies help to shape relations between human beings and the world” (Rosenberger & Verbeek, 2015, p. 9).

Cognitive technologies can be counted perhaps among the most powerful tools that allow an idealized human-world relationship to be replaced with a human-technology-world relation (Ihde, 1990). By providing a distinct experience of the world, they do not only shape our perception and thinking of the world, but subject and object are mutually constituted as an emergent phenomenon in their technology-mediated relation (Verbeek, 2005). At the same time, as has been shown above, these technologies are shaped by our cognitive products (e.g. in the process of designing and training them). In turn, this leads to a circular relationship and feedback dynamic discussed in detail in the following sections in which we will assume this human-technology relations perspective.

Human-machine interaction, and particularly, human-generative AI/cognitive technology interaction, has brought about a new level and quality in knowledge work and dynamics. Let’s take a closer look at what is happening when human cognitive systems are interacting with generative AI technologies from a systems science and human-technology relations perspective. In the very first step, human experience (and knowledge thereof) gets codified as a knowledge artifact; this is carried out by a human translating his/her experiences/knowledge into language, a document, image, sound file, data base, etc. At some point, this knowledge artifact is included (“uploaded”) to the global knowledge base (i.e. the internet, etc.). This is the point of departure where knowledge enters a feedback loop:

In most cases, when users are employing generative cognitive/AI technologies, they will engage in a kind of “conversation” with the “system.” Humans are prompting generative AI and the resulting answers provided by AI enter and spread in their minds. These responses mingle with our thoughts and trigger associations and inspiration.

In many cases, these artificially generated responses will be simply copied and accepted as sufficient or adequate for the task at hand. In other instances, they are slightly adapted or customized by the user/knowledge worker.

In any case, this results in “novel” knowledge artifacts that have been created (or simply copied) by the human user/knowledge worker.

One way or another, these knowledge artifacts find their way back to the Internet (“uploading”) and thus become available worldwide again.

The generative AI will make use of them and “learn” from them by adapting its “knowledge”/pattern base accordingly.

Here the cycle begins anew when someone makes use of this modified knowledge base by interacting with the cognitive technology/AI…

Actually, this circle of interaction between knowledge artifacts from the internet and human users is not really new. It happens all the time, when a user (e.g. a scientist, knowledge worker, or an artist) makes use of knowledge from any kind of source or printed or digital medium, creates novel artifacts and publishes or uploads his/her own knowledge products. However, what has changed dramatically, is the pace of these processes, the global level and accessibility, the quality of responses and interactions as well as the level and depth of “cognitive interventions” provided by generative AI technologies.

So long as the artificially generated knowledge appears to be of high quality or creative to the (overall population of) user(s), he/she is likely to trust and rely more and more on these responses or solutions with less reflection. That is, human users tend to invest less of their own thinking and creative capacities to create novel and reliable knowledge in such cases, even when expected to produce genuinely “novel” knowledge.

Assuming this is happening globally as LLMs gain popularity and trust, the share of artificially generated knowledge artifacts is growing over-proportionally compared to human-generated knowledge; more and more artificially generated knowledge artifacts are flooding the global virtual knowledge base and are incorporated and fed back into it; this knowledge base, in turn, is (re-)used by other users. The result is what we are already experiencing today: we are flooded with an overflow of low-quality, superficial, almost irrelevant, increasingly less creative, and mostly unreliable (“fake”) knowledge. It has become almost impossible to tell who is actually talking to me or what the actual source of knowledge is. As Y.N. Harari points out, the answer to these questions has far-reaching consequences: “If I am having a conversation with someone, and I cannot tell whether it is a human or an AI, that’s the end of democracy.” 4

Assuming a postphenomenological perspective (Ihde, 1990), the mediating role of cognitive technologies between humans and the world becomes predominant over being grounded in the real world in such a scenario. This leads to a more or less closed feedback loop. In the epistemological domain, this results in self-reinforcing knowledge dynamics, in which (then mostly artificially generated) knowledge artifacts are reproduced again and again (potentially, with slight changes). Due to their conservative and past-driven character, the algorithms have a built-in tendency to reinforce both non-creativity and unreliability. This brings about a kind of stabilization or even stagnation in the already existing overall knowledge base of the “system” (independently of its meaning and validity). It follows a dynamic similar to that observed in a complex system with a point attractor reproducing itself in a never-ending feedback loop.

So, what would happen in the most extreme case? If there is less and less (human) creative input, novelty, or validated knowledge provided by human users due to overreliance on AI generated content, the global knowledge ecosystem ends up in a vicious circle. Most often, low-quality knowledge is self-reinforced in a feedback loop. In other words, we are caught in a “continuing unpleasant situation, created when one problem causes another problem that then makes the first problem worse.” 5

Evidence for such a scenario and its consequences can be found in a recent study by the University of Oxford (and others) where Shumailov et al. (2023) showed that iterated/recursive training of LLMs on their own (generated) data will lead to what the authors refer to as “model collapse” (see also Brinkmann et al., 2023, p. 1862): “If most future models’ training data is also scraped from the web, then they will inevitably come to train on data produced by their predecessors… What happens to GPT versions GPT-{n} as generation n increases?… We discover that learning from data produced by other models causes model collapse – a degenerative process whereby, over time, models forget the true underlying data distribution” (Shumailov et al., 2023, p. 1f). Beyond that, the authors show that web-scale data sets can be easily poisoned even if only a small fraction of data is malicious (e.g. by being scraped from untrustworthy web sources) and gets introduced to the training set in the learning process. In more general terms, Chen et al. (2023) showed that the overall quality of the behavior and performance of LLMs (more specifically, GPT-3.5 and GPT-4) is decreasing over time.
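The degenerative dynamic described in the quotation can be made tangible with a toy simulation. The following Python sketch is an analogy under strong simplifying assumptions, not a reproduction of the experimental setup of Shumailov et al. (2023): the “model” is merely a Gaussian fitted to a small sample, and each new generation is fitted only to data drawn from its predecessor. The function name simulate_recursive_training and all parameter values (200 generations, 10 samples per generation, seed 0) are hypothetical choices made for illustration.

```python
import random
import statistics


def simulate_recursive_training(generations=200, sample_size=10, seed=0):
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Returns the fitted standard deviation after each generation,
    starting with the standard deviation of the original distribution.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: the original, "human-made" data
    history = [sigma]
    for _ in range(generations):
        # The next model only ever sees data produced by its predecessor.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)  # maximum-likelihood estimate
        history.append(sigma)
    return history


history = simulate_recursive_training()
print(f"std at generation 0: {history[0]:.3f}")
print(f"std at generation {len(history) - 1}: {history[-1]:.3e}")
```

Because every generation estimates its parameters from a finite sample of its predecessor's output, estimation errors accumulate and the fitted spread tends to shrink: the tails of the original distribution are gradually “forgotten.” Feeding fresh samples from the original distribution into each generation would counteract this drift, which mirrors the argument that novel human-made knowledge has to keep entering the loop.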

As a metaphor, think of the paper recycling process: with each cycle, the quality of the paper decreases unless “new” or high-quality paper is introduced and added to the loop. In a way, excessive use of generative AI technologies leads to a form of negative spiral in “knowledge recycling” or recombination of knowledge, a proliferation of the trivial and non-creative on a global scale. Generative AI becomes a huge trivialization machine providing a more or less epistemologically closed world of an already predefined and re-presented/-generated knowledge space representing it in every possible variation.

Of course, (re-)combination and forms of recycling have always been considered as one of the main strategies for creating novelty and creativity across a wide variety of fields, such as evolutionary/systems biology, psychology, economy, innovation studies and others (e.g. Amabile, 1996; Boden, 2004; Felin & Kauffman, 2023; Ingold, 2022; Koppl et al., 2015, etc.). Although these strategies are powerful and bring about novel solutions or innovations, these approaches also have some limitations. As is pointed out by, for instance, Felin and Kauffman (2023) there has been a long-standing discussion about choosing a particular solution amongst a large number of possible combinations.

More specifically, the question is how to select for the right combinations and how meaningful (both for the organism and the environment) novelty may emerge from more or less randomly operating (re-)combination. As will be discussed in the second part of the paper, this concerns the role of the environment (in relation to the organism’s needs) in such creative processes. The environment plays a dual role in this context: first, in its “regulating” function through selection, providing specific constraints for the organism in its precarious situation. Second, by offering affordances and possibilities (for novel forms of interaction) that prove advantageous. In this case and as further explored below, the interplay between the creative agent and its material surroundings can be considered a key source for novelty that goes beyond (re-)combination.

The image of knowledge recycling painted above is admittedly extreme, as it excludes human agents providing novel knowledge or “irritation” for the knowledge base; however, it portrays a tendency that has several severe consequences for processes of knowledge production on a global scale: as will be discussed below, in order to keep knowledge production “alive” and creative, it is necessary to introduce “sufficient”/significant amounts of novel (and reliable) knowledge into the loop by human creators. However, as our own experience as well as insights from cognitive/neuro-science show, 6 cognitive systems choose the path of investing less (intellectual and emotional) energy when the opportunity arises to avoid the effort required for thorough observation and deep thinking, for exposing themselves to the vagaries of the new, or for being truly creative.

Although in a slightly different context, this corresponds to what N. Postman suggests and summarizes in the foreword of his book “Amusing ourselves to death” when he compares Orwell’s and Huxley’s visions of technology and its impact on human thinking (and living):

“As he [Huxley] saw it, people will come to love their oppression, to adore the technologies that undo their capacities to think.

What Orwell feared were those who would ban books. What Huxley feared was that there would be no reason to ban a book, for there would be no one who wanted to read one. Orwell feared those who would deprive us of information. Huxley feared those who would give us so much that we would be reduced to passivity and egoism. Orwell feared that the truth would be concealed from us. Huxley feared the truth would be drowned in a sea of irrelevance… As Huxley remarked in Brave New World Revisited, the civil libertarians and rationalists who are ever on the alert to oppose tyranny “failed to take into account man’s almost infinite appetite for distractions….” Huxley feared that what we love will ruin us.” (Postman, 2006, p. xix)

Finally, we have to be aware that these artificial/automatized (knowledge) processes are not restricted to local communities or places; they can be accessed from almost anywhere in the world, at any time, and at almost no cost to users. They take place on a global scale and, thus, have direct impact on the global knowledge production processes/ecosystem.

“Agere Sine Intelligere”

Enacting the World by Interacting With It

However, knowledge recycling is not the end of the story yet. As suggested above, we have to leave behind an LLM-internal/centric perspective and adopt a lens taking into account a human-technology relations approach (e.g. Ihde, 1990; Rosenberger & Verbeek, 2015; Verbeek, 2005), if we want to arrive at a better understanding concerning processes of interaction between humans, the world, and (cognitive) technologies. As will be shown below, this applies all the more, if one is interested in creative agency involving co-creation of artifacts. From a cognitive (science) perspective the so-called 4E approaches offer a valuable theoretical framework for these questions, as their focus is on the relationship between cognitive systems and their environment. In that sense, there is a close relationship to the concepts of human-technology relations, as “[h]uman-world relations are practically ‘enacted’ via technologies” (Rosenberger & Verbeek, 2015, p. 12). 4E approaches in cognitive science suggest that cognition, by definition, is not only about internal mental/knowledge states and processes, but about how a living system is interacting with its environment. The claim is that, in order to ensure its survival (in a broad sense), a living system makes use of its cognitive and bodily abilities. According to these approaches, cognition is always embedded in the cognitive system’s environment, it is embodied and extends into the environment by enacting and interacting with it (e.g. Clark, 2008; Di Paolo & Thompson, 2014; Froese & Di Paolo, 2011; Gallagher, 2023; Menary, 2010; Newen et al., 2018; Rolla & Figueiredo, 2023; Shapiro, 2014; Varela et al., 2016; Ward et al., 2017, etc.).

This implies that in the 4E paradigm the body and extra-neural processes of a cognitive system play a crucial role for cognition. Mental processes are no longer abstract and detached from the body, but are part of a bodily living creature trying to sustain its process of being alive in a precarious context. They are partly constituted by or partly made up of wider (i.e. extra-neural) bodily structures and processes that are responsible for enabling, regulating, and constraining cognition (e.g. Menary, 2010). Furthermore, these embodied activities have to be seen as being embedded in the material environment through engaging in sensorimotor interaction with it. This leads to an individual (temporal) interaction path between the cognitive system and its environment representing the history of engagements between these two systems. Through this engagement a cognitive system does not only shape its environment, but, in turn, is also shaped by it giving rise to a mutual coupling. Hence, the nervous system, the body, and the environment are constitutive of our cognition and enact both the internal and external world (e.g. Di Paolo & Thompson, 2014; Hutto, 2023).

When Knowledge “Leaves the Building:” Enacting Self-fulfilling Prophecies by Materializing Self-fulfilling (Knowledge) Realities

As a result, we find artifacts in the world that are the outcomes of the human cognizer’s enacting and materializing knowledge via interacting with the world. However—taking into account what has been discussed above—if human (creative) knowledge is mostly based on already recycled and automatized knowledge, most of these artifacts will be a manifestation of such relatively low-quality knowledge in the long run. Human cognitive systems (and machines) are shaping and transforming the material world on the basis of this (re-cycled) knowledge; and, in turn, following an enactive, human-technology relations, and ontological design approach (e.g. Willis, 2006), we see that humans and their cognition are shaped by these artifacts as well.

Hence, what has been re-produced and recycled by algorithms (i.e. more or less meaningless patterns aka “knowledge”) and human cognition, now leaves the epistemological domain and enters the ontological realm: it directly impacts certain aspects of the material world by transforming them into artifacts embodying this knowledge. Going one step further, the increasing use of cognitive technologies is amplifying their influence on the material world that becomes more and more the result of a complex interplay between automatized and human knowledge production and material transformation. Thus, we are no longer caught only in a vicious cycle of an epistemic feedback loop, but also in an ontological one. As is suggested by Brinkmann et al. (2023), this leads to what is referred to as “machine culture” in the form of feedback loops that may materially enact themselves in the form of self-fulfilling prophecies (e.g. Watzlawick, 1984, p. 95ff); even worse, we are trapped in self-reinforcing loops constantly criss-crossing the boundaries between the epistemological and the ontological.

In the most unfavorable scenario, this might lead to a diminishing prospect for human intervention in a creative and reflected manner, resulting in a loss of autonomy and diversity (e.g. Brinkmann et al., 2023). This applies all the more if the machine or artificial intelligence (AI) is directly coupled to its material environment (e.g. via sensor and motor systems, autonomous devices, robots, or even autonomous weapons). Recent and extensive discussions on the potential for risky emergent behaviors of LLMs (e.g. Hendrycks et al., 2023, or the recent OpenAI technical report on GPT-4; OpenAI, 2023, p. 52f/14f, footnote 20) show that a scenario in which AI can act like an autonomous agent in the material world, interact with other (artificial) systems, or is equipped with devices and tools capable of physically transforming the material world in an autonomous manner is neither just science fiction nor a matter of the distant future. This is what Floridi (2023, p. 5) refers to as “confederated AI,” where various LLMs are made interoperable, instantiating an AI-to-AI and AI-to-World bridge, and compares to LEGO-like AI systems that cooperate in a modular and seamless way.

As an illustration, consider the following examples:

-Researchers at MIT showed (June 2023) how an AI became increasingly successful in designing and automatically manufacturing pathogens/viruses that could ignite a pandemic, 7 as well as in determining how to spread the virus efficiently, and how such LLMs can be augmented with chemistry tools (i.e. a robotic chemical synthesizer) capable of actually producing the necessary molecules. 8

-Think of what can already be observed in the field of education (e.g. Kasneci et al., 2023): teachers/professors use generative AI to create their exams and questions, students use ChatGPT to answer them and solve the given problems, and teachers/professors, in turn, use these technologies to automatize their evaluation process.

-Or, think about the emerging real-world dynamics and implications when cognitive technologies speculate on the stock market, companies use the same technologies for making their business decisions, and users/customers are influenced by and dependent on these technologies when they choose which products to buy from these companies, …

In all of these cases we run the risk of being caught in (material/epistemological/social) self-reinforcing and self-fulfilling loops. Or, as Brandeis Marshall claims, we are at risk of “being humans on the outside but bots on the inside,” no longer caring, or no longer even being aware, that algorithms are making most decisions for us. 9

In the most extreme case, the human is more and more pushed out of the loop: he/she acts, and is educated to behave, more or less like a machine. This could be an instance of what Floridi (2023) means by AI having “agency without intelligence:” “We have decoupled the ability to act successfully from the need to be intelligent, understand, reflect, consider or grasp anything. We have liberated agency from intelligence… agere sine intelligere” (Floridi, 2023, p. 6). What will happen when such an “AI takes over” and is driving our lives as humans?

(Against) The End of Creativity and Profound Novelty?

Taken together, it seems that, in the worst case, we are in danger of approaching a decline or even the end of creativity and of our ability to bring forth profound novelty/innovation (on a broad scale). Perhaps this sounds exaggerated or like “AI/technology bashing;” however, we have to be aware that creative and critical thinking could become endangered when knowledge is reproduced in such an endless recycling process of decreasing epistemic and creative quality. The claim is that such a feedback loop of AI-generated knowledge and real-world artifacts results in a vicious circle leading to a decline or even standstill in creativity and in the creation of profound novelty (that goes beyond re-combining existing knowledge).

The reason for this development is not only people’s convenience or laziness, the ease of access to these technologies, and users’ desire to outsource their thinking and creative efforts to technology. As we have seen, it is the rather conservative and past-driven mechanics built into the global knowledge ecosystem (including humans, cognitive technologies, artifacts and their interactions) as a whole that is driving this negative spiral; a dynamics that is based on a logic of combining, re-combining, mixing, re-mixing, and recycling existing knowledge (and artifacts) over and over again. We have to be aware, however, that such a “decline/end of creativity” would not come in a single step nor be evident immediately, but would undermine us slowly and almost unnoticed.
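The recycling logic described above can be made tangible with a deliberately crude toy model. The following Python sketch is an illustration under strong assumptions and not a model of how LLMs or the global knowledge ecosystem actually work: a "knowledge base" is reduced to a list of labeled items, and each "generation" is produced purely by sampling from the previous one. All names (`resample_generation`, `recycle`, `idea_0`, etc.) are hypothetical and introduced only for this example. The point it shows is narrow: a process that only re-samples what already exists can never add an item that was not there before, and the set of distinct items can only stay the same or shrink.

```python
import random


def resample_generation(corpus, rng):
    """Build the next corpus by sampling, with replacement, from the current one.

    Assumption: "producing knowledge" is reduced to copying existing items.
    """
    return [rng.choice(corpus) for _ in corpus]


def recycle(corpus, generations, seed=0):
    """Feed each generation's output back in as the next generation's input.

    Returns the set of distinct items present at every generation.
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    history = [set(corpus)]
    for _ in range(generations):
        corpus = resample_generation(corpus, rng)
        history.append(set(corpus))
    return history


# Hypothetical starting point: 20 distinct "ideas".
initial = ["idea_%d" % i for i in range(20)]
history = recycle(initial, generations=30)
sizes = [len(items) for items in history]

# Number of distinct ideas per generation; it never increases.
print(sizes)
```

In such a closed loop nothing enters from outside, so diversity is bounded by the starting set and typically erodes gradually rather than in a single step. The toy model has no counterpart to an embodied agent's contact with the world, which is precisely the source of novelty that the remainder of the argument is concerned with.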

The following issues prove to be even more problematic in this context:

-These knowledge processes take place in a purely quantitative and syntactic (algorithmic) universe without relationship to “living/lived meaning.”

-In these technologies, production or “creation” of “novel knowledge” is based on a logic of extrapolating past “experiences”/knowledge and making use of them to solve problems and/or predict the future. This, in principle, cannot lead to fundamentally novel knowledge, insights, solutions, or meaning, as the space of possible combinations (or possible distributions of statistical patterns) is already (implicitly) predefined/-given; the impression of novelty results from combining and calculating new variations of statistical distributions of already existing patterns and categories and making them explicit. One has to be aware, however, that the underlying knowledge space is so incredibly vast that its results surprise a human user, to whom it may appear that “novel knowledge” has been generated through such cognitive technologies. Yet it is only due to our cognitive limitations that we humans are impressed or surprised by the “creative” capabilities and results of such machines.

-Finally, and perhaps most importantly, these systems lack direct (embodied) access to the world. That is, they are trapped in their quantitative representational/epistemological realm without having qualitative access to or direct interaction with the world. One can compare the inner workings of cognitive technologies to the prisoners in Plato’s cave allegory (Plato’s Republic 514a ff): they know the world only from the shadows that their captors project on the wall; they are decoupled from “real world” experience.

The last point in particular seems to be of significant importance in the context of creativity and bringing forth novelty: it concerns the decoupling and dissociation of these technologies from the world. As we will see, it seems that this is one of the main reasons for the limitations both in their creative and sense-making capacities.

Following Michael Polanyi and Gregory Bateson, Ihde and Malafouris (2018) use the example of the blind man with the cane to discuss what happens when technology interposes itself between humans and their environment:

Where does the blind man’s self end and the rest of the world begin? From a phenomenological perspective, it can be argued that the blind man using a stick does not sense the stick, but the presence or the absence of objects in the outside environment. Although the stick offers the actual means for this exploration, it is itself forgotten. (Ihde & Malafouris, 2018, p. 205)

In the context of our discussion about cognitive technologies, the impact is twofold: we can perceive and act on the world only through technology-mediated access, and the world “presented” to us is itself already its technological representation. As Ihde and Malafouris claim, we tend to forget about this double mediation. On the one hand, this makes technology powerful. On the other hand, in the case of cognitive technologies, we run the risk of losing what is our proprium, namely, our fundamental capacity of making sense of and enacting the world by directly interacting with it, and, as a consequence, of losing a great deal of our cognitive and creative abilities.

The Role of the Possible: Experiencing (Future) Potentials Requires Embodied Contact to an Unfolding World and Distributed (creative) Agency

Assuming a 4E understanding of cognition (Newen et al., 2018; Ward et al., 2017, and many others, see above) claiming that cognition is embodied, embedded, extended, and enactive, we can see (and also experience in every moment of our lives) that we humans are bodily engaged with and coupled to the material world. We have direct access to the material qualities of the world through our sensory systems and can transform our environment by making use of our motor systems (and mediating tools). In a nutshell, the 4E/enactivist approach is based on two premises: “(1) perception consists in perceptually guided action and (2) cognitive structures emerge from the recurrent sensorimotor patterns that enable action to be perceptually guided” (Varela et al., 2016, p. 173). Thus, human cognitive systems are engaged in a process of enacting their inner and outer world through an activity of sense-making and interacting with the world (De Jaegher & Di Paolo, 2007; Thompson & Stapleton, 2009).

Hence, as humans we are not decoupled from the world, quite the contrary: in fact, it is this active and close material engagement with the world that brings about novelty and meaning, and thus is relevant for the cognitive system and its survival (understood in the broadest possible sense here). Being closely and physically coupled and receptive to the world is essential for sense-making and creating (relevant) knowledge. In other words, as we are always operating in an uncertain and open-ended environment, high sensitivity to emerging possibilities/potentials is required. Under such precarious conditions, “brain-bound/mind-based-only” predictions that are based on already existing knowledge turn out to be of limited use (e.g. Felin et al., 2014; Kauffman, 2014, 2016). As will be shown, a strategy of prediction has to be replaced by a strategy of anticipating future potentials and possibilities. This requires being intimately coupled to the world, in sharp contrast to the abstract and automated knowledge processes taking place in a decoupled AI system: their relevance, validity, and creative potential cannot be judged solely in the representational domain.

Of course, one could argue, for instance, that changing one’s perspective could bring about a novel insight or reconceptualization of an existing phenomenon or object without explicitly changing or materially transforming it. As we have seen above in the quotation from Varela et al. (2016, p. 173), in an enactive approach, perception and cognition are always guided by action (and vice versa) by engaging in recurrent sensorimotor activities with the environment. This implies that changing perspective can be considered a form of interaction with the environment in the sense that this new viewpoint might enact novel emerging cognitive structures. In other words, a novel aspect of an existing object is revealed, although it is not the object itself that has (been) changed, but the patterns of interaction. The object carries the potential to be perceived and understood differently. From the perspective of an object’s potential, this situation can be considered “semi-dynamic,” as the object itself remains rather passive and does not change; it is only the brain-body structure and the new context (e.g. of taking a new perspective) that brought about this form of novelty. As will be shown in the sections to come, a whole new dynamic and quality in terms of novelty emerge when a cognitive system switches to a more “invasive” mode of interaction by also transforming and shaping its environment. Both the creative agent and his/her environment engage in a process of co-creation that unleashes the full potential of novelty and new patterns of interaction.

While biological cognitive systems are physically embedded in their environment, AI systems are, as has been discussed above, only interacting with their own representations of the world, which are in turn the result of human-generated content and the interaction history of prompts. In such a context, shifting perspective means making explicit and revealing what the system already knows. From the outside, this might look as if the algorithm has generated something novel, but from a strictly system-internal perspective, this turns out to be, in the best case, some kind of recombination of what it already knows.

As we will see below, the point is that humans co-create novelty through this dynamic interplay between their cognitive activities and their external environment, while AI systems pretend to achieve this by interacting with their already existing and limited internal representations of the world, which consist of predefined human-generated content.

From the Actual to the Possible—Introducing Distributed (creative) Agency and the Role of Creative Agency of the World

Returning to our question concerning creativity and novelty, it can be shown that novelty is more than the result of recombining existing knowledge artifacts (e.g. Felin & Kauffman, 2023; Ingold, 2022). In particular, if one is interested in bringing forth radical or profound novelty and innovations, other sources that go beyond a past-driven logic of re-combination (of knowledge or [statistical] patterns) need to be tapped. And these sources have a great deal to do with our discussion of a cognitive system’s embodied coupling to its unfolding environment.

Most classical approaches suggest that creativity follows a strategy of recombination of existing knowledge (e.g. Amabile, 1996; Boden, 2004; Ingold, 2022). Thus, it can be achieved almost exclusively “internally,” in the sense of “mind-based” only, and sometimes even in a more or less automatized mode that is decoupled from the world (such as in cognitive technologies). This paper argues, however, that creating radically and profoundly novel knowledge requires an alternative strategy. In such a more advanced and future-oriented approach to novelty and innovation the environment plays a critical role. As is shown by, for instance, Ingold (2013, 2022), Malafouris (2013, 2018), Glaveanu (2020), Kerr and Frasca (2021), Poli (2017), or Peschl (2019a, 2019b), novel knowledge, and as a consequence, novel forms of behaviors emerge from interacting with, acting in and with, as well as transforming the world (and the creative agent). In the context of cognitive technologies, this is in line with a human-technology relations approach (e.g. Ihde, 1990; Rosenberger & Verbeek, 2015; Verbeek, 2005) focusing on mutual co-shaping processes between human cognizers and technological artifacts.

Such a perspective is based on the assumption that the real world has more to offer and is richer compared to what our brains can think and know of it and, even more so, compared to the quantified statistically based knowledge structures being employed in cognitive technologies. In the context of creativity and creating profound novelty, one has to go even one step further: we should not only take into consideration the status-quo of the world in the sense of its actual state at the present moment (compare Kauffman’s (2014) or Björneborn’s (2020) concept of the actual), but we have to focus on its unfolding or becoming. In other words, we are moving our attention from the actual to the possible (e.g. Glaveanu, 2020, 2021; Glăveanu, 2023; Kauffman, 2000, 2008, 2014; Peschl, 2019a). As an example, think of what happens in the process and experience of drawing something novel: Glanville (2007) notes that after

…having drawn something, we look at it later and see in it a different something that was not part of what we were thinking about as we drew it. This experience is at the heart of designing. There are two factors that are central: (1) we look and then we draw; and (2) we see something new, not previously intended. (Glanville, 2007, p. 1189f)

A similar perspective on creative processes can be found in concepts and approaches describing them as “conversation with the material” (Schön, 1992), “thinging” (Malafouris, 2013), or as engaging in a relationship of correspondence (Ingold, 2013, 2022) with the world. What all these approaches have in common is that they take into account the creative agency of the world as well as the (pro-) active and embodied engagement of the creative agent with the world by enacting it.

Novelty From Enacting the Possible

Oftentimes “what previously was not intended” manifests in the difference between the intended ideas or plans in our mind and what the external world actually “allows,” affords, challenges or even “teaches” us to do or transform. We have to learn and adapt to the possibilities as well as constraints of the world, and, even more importantly, understand how the creative agent him-/herself is transformed in these feedback processes. As Ross (2023) shows, the most extreme form of being exposed to this “creative agency of the world” is what happens when we experience an “accident.” She claims that “reality becomes a triggering cause for wide and fertile possibilities… [and that] the best way to come up with truly novel and transformational thoughts is to incorporate things that are not already in the cognitive system” (Ross, 2023, p. 5). This implies a distribution of (creative) agency and shifts the burden of the creative agent being fully responsible for his/her creative outcomes, at least partially, to the creative agency of the world. This is in line with what we have discussed above in the context of the 4E approaches to cognition suggesting we direct our understanding of cognition beyond the mind toward the “mind-in-the-world,” a mind that is engaging in and enacting the world. As Ross notes,

[this] more transactional perspective on thought sees… not just the intentions and agency of the sensemaking person but as emerging from the engagement with the world and takes seriously the cognitive agency of things outside the brain—thus positing a model of finely grained co-collaboration. Most important for the argument here, things insert knowledge and possibilities into the cognitive system that were not there before. (Ross, 2023, p. 5)

In this context, the interesting point from the above quotation is the reference to “possibilities that were not there before.” As, for example, Glăveanu (2023, p. 2) or Poli (2010, 2017) argue, possibilities are not only in our mind or imagination, they are real. We can find these ideas as well in, for instance, Kauffman’s (2014, p. 6) discussion about “res extensa” (ontologically real actuals) and “res potentia” (ontologically real possibles), or in relation to adjacent possibles (Björneborn, 2020). Indeed, these ideas can be traced as far back as Aristotle’s (1991) Metaphysics, where he distinguishes between actuality (energeia, entelecheia) and potentiality (dunamis). What makes the concept of the possible/possibilities so interesting is that it can be understood as a source of novelty, because possibilities, in the “moment they are acted upon, turn into something ‘real’. In fact, the possible and the actual are intertwined if we consider them temporally—they continuously feed into and transform each other through the course of action” (Glăveanu, 2023, p. 2). In other words, in interacting with and acting upon the world we engage in a co-creative activity making use of the creative agency of the world and transforming (future) potentials into actuals. In a way, this offers an alternative perspective on the notion of enactment: enacting the world can be understood as enacting/transforming future potentials into actuals.

In short, when we as humans engage with the unfolding of the material world we are exposed to the (creative) agency of the world. In this context, we have to keep in mind that we do not only perceive already existing phenomena and objects; rather, we are also confronted with and sense the “not-yet” (Bloch, 1975) in the form of not-yet realized potentials and affordances (Gibson, 1986; Heras-Escribano & De Pinedo-García, 2017; Rietveld et al., 2018; Rietveld & Kiverstein, 2014; Yakhlef & Rietveld, 2020) and try to make sense and use of them; they become a source for our own creativity. Creativity is no longer based exclusively on the (creative) agency of our mind that produces ideas in an “outside-the-box-thinking” or recombination mode, but—to some extent—on the (creative) agency of the world. We are actively engaging in a process of correspondence (Ingold, 2013, 2014) with unfolding future potentials in the world or, as Scharmer (2016) suggests, we are “learning from the future [potentials] as it emerges” (as opposed to learning from the [already existing] past).

Resonance and an Engaged Epistemology as Foundation for Being Responsive to Future Potentials

Of course, this is not a one-way relationship only. The creative agency of the world and of our minds form a circular process of co-enacting each other, finally, leading to a relationship of coupling (e.g. Maturana & Varela, 1980), creative co-becoming, and unity. Novelty emerges precisely at the interface between the future potentials of the world and the cognitive system in the zone of their interactions. Both systems engage in a relationship of resonance (e.g. Rosa, 2019). In the context of creating novelty, this means it is necessary to be able to listen, to be receptive and open to what the creative agent is called to do in order to shape the future. However, it is not just about these supposedly passive qualities: resonance implies both receiving and responding (Rosa, 2019; Susen, 2020). Therefore, resonance involves engaging in a dialogic relationship with the world. This dialog is not limited to a linguistic level, but, more importantly, encompasses the existential domain which is closely related to purpose. In other words, the issue here is how the identity of an individual responds and corresponds (Ingold, 2013, 2022; Peschl, 2019a, 2019b) to this “calling” from the emerging potentials in the world. The challenge, then, is to establish an “existential resonance-axis” (Rosa, 2019) connecting the innermost of a creative agent (i.e. his/her [future] purpose) with the unfolding future potentials of the world at an existential (and finally operational) level. This means that the objective is to come in (positive) resonance with what wants to emerge in the world and with what is at the very core of the cognizer’s existence (i.e. the personal purpose).

This can be achieved by getting into a close and “intimate” relationship and coupling with the world. As suggested by De Jaegher’s (2021) engaged epistemology approach, this relationship is not about dominating the other (i.e. our environment or another person), but consists in acknowledging that “in our knowing of things, we never fully know them… We often tend to know things (and people) in an overly deterministic manner, that is: where a big part of the knowing is determined by the knower, and a smaller part by the known. This is an over-determining, and thereby limiting knowing… it is a dialectical balancing between the being of the knower (who lets be) and the being of the known… Knowing is changing, for both knower and known and for their relation” (De Jaegher, 2021, p. 11f). Hence, as a prerequisite for creating profoundly novel knowledge, the creative agent has to assume a receptive and receiving attitude toward the future potentials he/she encounters in order to be able to respond to them in a sensible manner or even be transformed by them. In a way, sense-making becomes anticipatory sense-making with an attitude of epistemological humility. This implies that the goal is to establish the conditions for a co-creative unfolding and mutual transformation that is beneficial for all involved stakeholders through positive resonance.

Both such an engaged epistemology and a future-oriented process of knowledge creation are situated at the interface between knowing and not-knowing. They acknowledge that there is more-than-knowing to knowing in the sense that potentials/possibles may hold more than our mind knows or our knowledge expects (see discussion about the creative agency of the world above). As in the relationship between the actual and the possible, knowing and not-knowing are intertwined, and the process of knowing and creating novel meaning always takes place in a process of the co-becoming of the knower with the known. Knower and known are coupled and both are engaging in a process of mutual transformation (De Jaegher, 2021, p. 18; Glăveanu, 2023, p. 2f).

The “Possibility-Loophole” and Its Role for Cognitive Technologies

What are the implications of the above considerations about getting into positive resonance with future potentials and an engaged epistemology for our understanding of novelty and what does this mean for machine creativity in cognitive technologies?

Novelty as Emergent Behavior: Forming a Novel Unity in a Relationship of Correspondence and Resonance

Novelty does not arise in the knower’s mind or in the world, but at the interface of their interaction and co-enaction. By resonating and corresponding with each other, they form a unity manifesting novelty by mutually transforming their joint potentials into novel actuals. As an example, consider a couple dancing together: both partners have their mental states, behavioral patterns, and their intentions. By dancing together they not only execute their behavioral patterns, but they listen to each other, offer each other affordances; they become sensitive to unfolding possibilities and future potentials, they provide “worlds in becoming” to each other, respond to each other, and eventually will tune into a state of correspondence and resonance. Being in such a state, they become sources for novelty and creativity for each other. This resonance manifests and emerges in novel dancing figures, joint (novel) behavioral patterns, as they become, co-enact, and merge into a single system performing these figures. Generally speaking, this novel unity is not limited to material or behavioral patterns, but can be found in interactions with the world, linguistic conversations (such as in a dialog; Bohm, 1996; Isaacs, 1999), social interactions, or in organizational behaviors (e.g. Tsoukas, 2009), future- and potential-driven emergent innovation processes (Peschl, 2020; Peschl & Fundneider, 2017; Scharmer, 2016), etc.

This leads to an understanding of creativity and knowledge creation that goes beyond a deterministic and (re-)combination-based process and in which the emergence of novelty is key. Such emergent processes question the Newtonian entailing-law explanatory framework and are intrinsically unprestatable (Kauffman, 2000, 2014; Longo et al., 2012), “meaning that they emerge non-algorithmically. Even more: we can have no entailing mathematical theory of the emergence of opportunities” (Koppl et al., 2015, p. 15). This approach suggests creating new boundary conditions that act as a niche or an enabling space (Peschl & Fundneider, 2012, 2014); that is, a space enabling new adjacent possibles to be transformed into (novel) actuals. As Kauffman shows, these dynamics can be found in a wide variety of domains: “This is the emergent, creative, unentailed, becoming of the evolving biosphere, beyond law. …I claim the same kind of becoming is true of human life and culture and economy and art and civilization. New Actuals create adjacent possible opportunities in which new Actuals arise in a continuous unprestatable co-creation… it constitutes a New Empty Niche that creates a new but unprestatable set of adjacent possible opportunities for evolution” (Kauffman, 2014, p. 6).

Hence, profound novelty emerges from…

-Bodily engagement with the world and not only from a creative activity that is solely based in the creative agent’s brain/mind; in other words, novelty does not only emerge from ideas or mind-driven ideation(-first) processes;

-Openness and receptivity to the qualities of an unfolding world by embracing an engaged epistemology (De Jaegher, 2021);

-Sensing future potentials;

-Learning from as well as being driven and transformed by emergent future potentials/possibilities and the creative agency of the world (Scharmer, 2016);

-Integrating the creative agency of the cognitive system with the creative agency of the world by interacting with the world. Creativity and novelty arise/emerge at the interface between these two systems;

-Proactively acting in the world and shaping it by transforming potentials into actuals;

-Creating novel niches and landscapes of affordances (Heras-Escribano & De Pinedo-García, 2017; Rietveld & Kiverstein, 2014) functioning as enabling spaces (Peschl & Fundneider, 2012, 2014) co-enacting novel joint behavioral patterns as emergent phenomena;

-Engaging in a relationship of correspondence and resonance.

As can be seen, we propose a perspective on the creation of profound novelty that is strongly “world-oriented” and emphasizes the crucial role of the interaction and relations between the cognitive system and its environment as well as the creative agency of the world. Moreover, potentials/possibilities in the world are key for driving this emergent process.

The Possibility-Loophole: Reintroducing Profound Novelty to Machine Creativity

Coming back to our original question whether cognitive technologies are capable of creating profound novelty, or how they might contribute to such creative processes, we can see from the above considerations that these technologies lack essential capabilities, such as embodied interaction with the world and sense-making.

Moreover, taking into consideration what has been discussed above, two additional capabilities seem to be at the core of what cognitive technologies are missing for creating profound novelty:

(a) Engaged epistemology: it is this kind of “intimate” relationship to/with the world (in the sense of De Jaegher’s (2021) “engaged epistemology”), finding its expression in a relationship of co-becoming and correspondence, that AI-driven/cognitive technologies are incapable of. As we have seen, their access to the world is always mediated through linguistic or statistical categories. Their “openness” to the world is limited to predefined patterns that can be found in documents or other digital artifacts. Although these technologies are adaptive by nature, the source of their adaptation/learning processes remains within the boundaries of these digital formats (i.e. “representations” of the world). This counteracts what has been discussed above with respect to radical epistemic openness/receptivity and humility being a prerequisite for overcoming our limitations of knowing, since these representations always project and throw a web or pattern of categories over the world. As we have seen, knowing implies giving up control and a transformation or change for the knower, the known as well as their relation.

(b) Sense of emerging (future) potentials: As a consequence, cognitive technologies cannot be fully receptive to the otherness and qualities of the (real) world, as they are trapped in the space of their representational categories. This limitation is especially evident when it comes to the realm of the possible/future potentials: while these technologies excel in prediction, they struggle with anticipating and dealing with emerging future potentials because they lack a sense of what potentials are. As discussed, this results from their cognitive/learning strategy that is based in the realm of actuals, more specifically, on extrapolating (representations of) past experiences and (statistically) re-combining existing knowledge. For cognitive technologies, the realm of possibles exists only as a determined space of possible combinations and redistributions of statistical patterns. They are not physically exposed to the richness and otherness of real-world potentials (as they are disembodied); they cannot sense possible affordances, and they lack the capability of making sense and use of potentiality by interacting and getting in resonance with the world.

Based on what has been discussed so far, future potentials serve as the loophole for the emergence of profound novelty: being grounded in the world and its becoming, they go beyond the limitations of the space of possible recombinations (of existing knowledge about actuals). They manifest the creative agency of the world by “invading” or irritating our cognitive system and its preformed structures, categories, or hypotheses as “surprises” (Clark, 2018; Gallagher, 2022; Constant et al., 2024), unexpected phenomena, “accidents” (Ross, 2023), etc. In the best case, these potentials can be integrated with the cognitive system’s creative processes and transformed into actuals, enacting the above-discussed state of coupling and positive resonance. In order to reach such a state in a cognitive system-artifact-environment ecosystem, it is necessary (a) to be bodily grounded in the open and unbiased perception and understanding of the now and the present state of the actual world as suggested by the engaged epistemology approach (De Jaegher, 2021), (b) to identify, understand, and learn from emerging future potentials (“what ‘wants’ to emerge?”; cf. Scharmer, 2016), and (c) to make use of these potentials as a source for enacting and realizing an ecosystem that delivers value to all involved sub-systems or stakeholders. In that sense, positive resonance may translate into novelty and innovations leading to a sustainable and prosperous future that benefits all stakeholders.

Even though cognitive technologies cannot exploit this loophole, as they do not have direct access to potentials, we suggest engaging in a novel relationship with them by making use of their capacities and the affordances they offer us in the context of creating novelty. Rather than just using or “consuming” cognitive technologies as tools for inspiration, providing ideas in brainstorming processes and outside-the-box-thinking exercises, or as a source for novel knowledge, it is proposed that they be proactively embedded into the process of creating profound novelty. Humans are bodily situated in the world and provide their capabilities of learning from future potentials and meaning making as well as interacting with, acting on, and enacting aspects of the environment. Cognitive technologies, in turn, contribute their ability to make accessible and explicit the vast space of existing possibilities and their combinations, which is based on their knowledge artifacts and could not be explored in its entirety by a human mind.

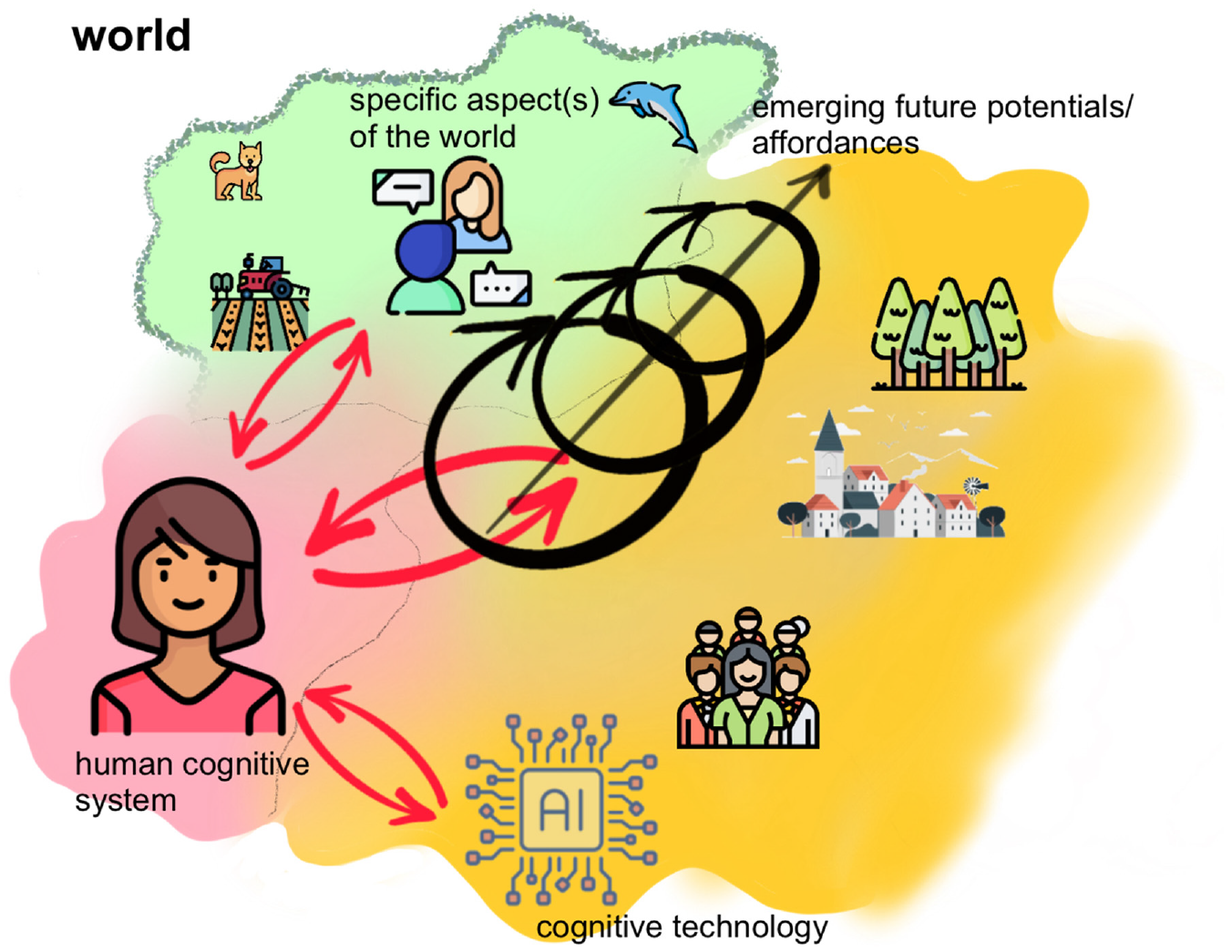

Jointly, they form a “human-world-cognitive machine ecosystem,” as suggested by the human-technology relations approach, that establishes a socio-onto/epistemic-technology space/field grounded in the interactions of these stakeholders and subsystems. What is of interest for us in the context of creating novel knowledge is the space “inbetween”: the focus is on the interactions and relations between these subsystems as well as on their unfolding over time. This leads to a process of co-enaction of the human-world-cognitive machine ecosystem in which human and technological/AI capabilities as well as the creative agency of the world are integrated and entangled. As discussed above, one can think about this relationship as a field of emerging new potentials for a technology-augmented yet human-grounded process of creating novel knowledge. It unfolds at the interface between the cognitive agent, his/her environment, and the cognitive technology he/she is using (see Figure 1). The involved systems engage in a “dance” constituting a novel unity representing a co-creative ecosystem.

Figure 1. A co-creative ecosystem: Creating novelty in the space/field of emerging future potentials at the interface between the cognitive agent, his/her environment, and cognitive technologies (some of the icons used here have been designed by Freepik).

As a possible scenario, think about a future-oriented innovation process (e.g. emergent innovation; Kerr & Frasca, 2021; Peschl, 2020), in which humans engage with their environment in order to identify and make use of emerging future potentials. As we have seen, this cannot be accomplished through cognitive technologies by themselves. It requires bodily engagement and the cognitive abilities discussed in the context of engaged epistemology (De Jaegher, 2021). It concerns sensing and making sense of potentials and creating novel meaning that serves as a proposal for possible innovations and prototypes. Cognitive technologies come into play when it comes to prototyping and translating this novel meaning into concrete artifacts or innovations. On the one hand, they may assist processes of exploring and exploiting possibilities developed by a human agent; on the other hand, these technologies may support processes of developing scenarios or user journeys by illustrating them with AI tools that generate images or texts (e.g. for storyboards), as well as processes of business modeling, evaluating and optimizing results from prototyping, etc. In any case, there is a close cooperation between these subsystems in which the human agent guides the process and is at the same time guided (and transformed) by the creative agency of the world and technology.

As suggested by human-technology relations approaches (e.g. Ihde, 1990; Rosenberger & Verbeek, 2015; Verbeek, 2005), distributed (creative) agency is extended to cognitive technologies and now comprises the co-enactment of the human agent, the environment, and the cognitive machine. The human agent plays the role of integrating the creative inputs from both the environment and the cognitive machine, as both offer novel affordances and potentials. As we have seen, novelty is no longer produced in a “brain-bound” or mechanistic/statistical process, but emerges in a process of co-becoming between the human agent and his/her environment. In being able to physically interact with and act on the environment, the human agent is exposed to the flow of unforeseen and “unprestatable” (Kauffman, 2000, 2014) emerging potentials that can be translated into novel insights and knowledge for the technology-augmented production or enactment of novel artifacts or interventions in the environment and/or the cognitive machine. Ideally, these subsystems engage in a state of positive creative resonance. This leads to the co-emergence of new ways of knowing and being that can be understood as co-creative “worldmaking activities.” However, we have to keep in mind that “[a]s the imprints of intelligent machines grow deeper, it is imperative to ensure a harmonious co-creation of culture where both humans and machines augment, rather than eclipse, each other” (Brinkmann et al., 2023, p. 1864).

In this sense, by tapping the “possibility-loophole,” humans are brought back into the loop of the creative process, contributing profound novelty to the human-world-cognitive machine ecosystem. Since cognitive technologies are inherently integrated in such an innovation/knowledge creation process, they can benefit from the possibility-loophole by being “fed” with profoundly novel knowledge that serves as input for subsequent training cycles of the underlying LLMs or neural networks. In this way, the vicious cycle of knowledge recycling discussed above can be broken and the human-world-cognitive machine ecosystem can contribute to improving the overall quality of global knowledge production and creative processes.

Concluding Remarks and a Positive Outlook

Admitting that the future of our complex world is not only unknown but unknowable (e.g. Sarasvathy et al., 2003) has several consequences for our understanding of creativity and innovation. First, it is obvious that such a future cannot be predicted and, thus, in most cases, we lack the foundations for making decisions and “plans” in order to achieve a specific goal or desired state. In many cases, we do not even know what the “best solution” or exact goal should be. Second, as an implication, we need to revise our mode of operating in such an environment: neither predicting and planning ahead on the basis of past knowledge nor discovering a solution turns out to be an adequate strategy, as both the problem space and the solution space change permanently. Rather, such a strategy has to be replaced (at least in part) by an approach of (pro-)actively acting in and interacting with the world to shape and co-create the future, to create niches and make use of (adjacent) possibles (Björneborn, 2020; Kauffman, 2014), enabling the emergence of novelty in the world. Third, shaping our future environment is possible, as this future does not yet exist. However, it emerges as a novel whole: it results from the interaction of a multitude of actors and processes and cannot be reduced to its constituent parts and dynamics. This implies that, if we want to create profound novelty and understand creativity, we have to walk the fine line between a hylomorphic approach (e.g. Ingold, 2013; Malafouris, 2014; Malinin, 2019) of projecting our own ideas and concepts onto the world on the one hand, and being guided and attracted by its emerging potentials on the other.

It was not the intent of this paper to paint a bleak future for creativity and innovation resulting from the global use of cognitive technologies. Rather the opposite: if applied wisely and according to what they can and cannot provide (see our discussions above), these technologies offer new opportunities for creative processes and new forms of collective and co-creative human-world-technology agency. If we understand creativity and the creation of novelty no longer in terms of coming up with mostly “mind- and/or cognitive technology-based” novel ideas in the first place, but as an emergent process in which a diversity of novel landscapes of affordances (Rietveld et al., 2018; Rietveld & Kiverstein, 2014) arise from an (en- and pro-)active interaction with the world and with cognitive technologies, both the quality and pace of novel knowledge and artifact creation can be increased. These technologies might become even more powerful if we do not just use them as creators of novelty, but engage in a thriving human-world-machine relationship. Such a relationship takes into account and makes use of our capacity to interact with the world in an embodied manner and our ability to sense and make sense of future potentials in the world; it harnesses the creative agency of the world and feeds the collaborative creative results back into the training processes of cognitive machines.

Beyond that, besides the many positive points discussed in the media as well as in academia (e.g. Dwivedi et al., 2023; Floridi, 2023), one can see the important positive implications for the following fields:

Industrial revolution in the cognitive (and creative) domain: Automation has always meant some easing and standardization of hard routine work. While in previous industrial revolutions automation led to a reduction of manual work through machines (transforming the material world), classical computers and software entered the field of standardized and routine knowledge work (e.g. using algorithms, complex spreadsheets, or large databases for doing standard calculations). Cognitive technologies have brought about a new level and quality of automating knowledge work. In short, the logic of deduction was replaced by a primacy of inductive learning, adaptation, and generating (novel) knowledge artifacts. Some of our less interesting and less challenging intellectual and creative work can be outsourced to these machines, leaving, in the best case, more time for the “really” non-trivial, creative, and demanding tasks.

Automation versus augmentation of human cognitive and creative abilities: However, as shown in this paper, we have to go beyond the concept of automation, if we are interested in creating profound novelty. As suggested by Brynjolfsson (2022) or Brinkmann et al. (2023), we have to shift our attention from automation to augmentation of our cognitive and creative abilities so that cognitive technologies complement them and enable humans to achieve things that they could not have done without them. “Complementarity implies that people remain indispensable for value creation… In contrast, when AI replicates and automates existing human capabilities, machines become better substitutes for human labor and workers lose economic and political bargaining power” (Brynjolfsson, 2022, p. 273). Fostering the aspect of complementarity has led us to focus on the interaction between humans, world, and cognitive technologies. Novelty emerges at this interface of joint (creative) agency and in the space “inbetween” (see also Figure 1).

Infiltrating sound and creative human knowledge into the global knowledge base: How can we escape both model collapse through data poisoning and recursive application of cognitive technologies (Brinkmann et al., 2023; Shumailov et al., 2023) and the decline of human creativity? “We note that access to the original data distribution is crucial: in learning… one needs access to real human-produced data. In other words, the use of LLMs at scale to publish content on the Internet will pollute the collection of data to train them: data about human interactions with LLMs will be increasingly valuable” (Shumailov et al., 2023, p. 2). Hence, although it might sound paradoxical, to keep LLMs alive and purposeful, a promising future of cognitive/generative AI technologies depends heavily on the constant “infiltration” of creative input as well as novel and reliable knowledge by human users committed to creating sound and novel knowledge. Only if such infiltration takes place on a large scale will it be possible to break the vicious circle described above and bring novel meaning, inspiration, trust, etc. to the global knowledge base so that its dynamics will come alive again.