Abstract

Anonymous online platforms are known hotspots for toxic content, but scholarly analysis often focuses on user behavior rather than the underlying platform architecture. This study examines Talk.jp, a new Japanese anonymous textboard, to argue that toxicity is not merely an emergent behavior but is structurally produced by key platform affordances. Specifically, this study identifies three features—its real-time engagement metric (ikioi), its ephemeral thread-based architecture, and its minimal moderation—as central to fostering a toxic environment. Using a mixed-methods approach that combines computational analysis (sentiment and toxicity detection over a dataset of 118,910 comments) with qualitative review, this article demonstrates how these affordances create a feedback loop. This system incentivizes and amplifies a “loud minority” of toxic users, whose antagonistic contributions come to define the platform's overall discourse. The findings show that while overtly toxic comments are a minority, the platform's design ensures they have a disproportionate impact. This study contributes to the literature by shifting the analytical focus from user psychology to platform design, using a non-Western case study to highlight the universal impact of architectural choices. The results suggest that mitigating online toxicity requires not just reactive moderation of users, but proactive structural reform of the platforms themselves.

Introduction

The rise of social media and online forums in the past decade has transformed public discourse, but it has also facilitated the proliferation of online toxicity. Scholars and policymakers alike are concerned about how toxic online subcultures influence society at large, from eroding civility in discourse (Anderson et al., 2014; Kümpel & Unkel, 2023) to fueling radicalization (Elley, 2021; Marwick et al., 2022; Thorleifsson, 2022). The debate on the primary causes of this toxicity, however, remains unsettled. A common explanation points to anonymity as a key driver of disinhibition and malevolent behavior (Ederer et al., 2024; Fichman & Peters, 2019; Lapidot-Lefler & Barak, 2012; Pandita et al., 2024; Suler, 2004). This article challenges the simplistic narrative that anonymity itself is the main culprit. Using the Japanese anonymous textboard Talk.jp as a case study, I argue that toxicity is not only facilitated by the possibility of posting anonymously but, to a greater extent, structurally produced by a specific combination of other platform affordances. Specifically, the interplay between a real-time engagement metric (ikioi), a disposable thread-based architecture, and a policy of minimal moderation creates a competitive environment that systematically incentivizes and amplifies a “loud minority” of toxic users, ultimately shaping the platform's antagonistic discourse.

This “structural” perspective helps re-contextualize findings from existing platform studies. Research on Anglophone platforms has found that a substantial portion of malevolent online activity can be linked to nonmainstream textboards, imageboards, and forums. 4chan is arguably the most studied among these platforms, with numerous academic inquiries into its unique cyberculture and negative impact on online discourse (Colley & Moore, 2022; Hine et al., 2017; Jokubauskaitė & Peeters, 2020; Papasavva et al., 2020; Rieger et al., 2021; Yoder et al., 2024). Similarly, pseudo-anonymous forums like Reddit (de Wildt & Aupers, 2023; Efstratiou et al., 2022; Gruzd et al., 2020; Massanari, 2015, 2017) and more ephemeral, fully anonymous applications like YikYak (Bayne et al., 2019; Black et al., 2016; Clark-Gordon et al., 2017; Saveski et al., 2016) and Jodel (Kasakowskij et al., 2018; Mensah, 2025; Reelfs et al., 2022) have been subject to scholarly examination, revealing insights into the dynamics of toxic online discourse and community behaviors.

While insightful, these studies often center on user ideologies or community norms. In Japan, the analogues of these toxic spaces are found on anonymous textboards like 5channel (formerly 2channel) and its successors (Fujioka, 2020; Fujioka & DeCook, 2021; Zeyu, 2019). Past research on these platforms has frequently highlighted their role as breeding grounds for hate speech propagated by ultra-nationalist users known as netto-uyoku (Kaigo, 2013; McLelland, 2008; Nagayoshi, 2021; Sakamoto, 2011; Tsuji, 2017). However, focusing primarily on the users overlooks the underlying architecture that makes their rhetoric so visible and persistent. These platforms do not function merely as passive containers for pre-existing toxicity: arguably, their very design acts as an engine for it (Gillespie, 2018; Munn, 2020).

To investigate this mechanism, this study conducts a large-scale quantitative analysis of Talk.jp's “News Plus” board, a section where users anonymously post and comment on news threads. By analyzing a dataset of 118,910 posts, I will demonstrate how the platform's affordances directly influence user behavior and discourse patterns. This research contributes to the debate on complete anonymous platforms (CAPs; also termed “high-level anonymous platforms”) which are conceptualized as having “minimal to no degree of information on users’ personal and social identifiers” (Mensah, 2025, p. 6), by showing how specific design choices, rather than just user anonymity or ideology, can cultivate a toxic environment, offering a valuable lesson for platform governance and design in Japan and beyond.

Literature Review

This literature review establishes the theoretical foundation for the study by first tracing the shift in toxicity research from a focus on user psychology to one on platform architecture. It then provides a deep analysis of the specific mechanics and cultural context of Japanese “chan-style” textboards, using Talk.jp as a primary example. Finally, it situates the study within the legal and social context of hate speech in Japan.

From User Psychology to Platform Design in Toxicity Research

Online Disinhibition Effect

Early research into online hostility centered on the psychological effects of anonymity. Suler's (2004) foundational “online disinhibition effect” proposed that the lack of face-to-face cues and accountability online emboldens individuals to express sentiments they would suppress offline (Ederer et al., 2024; Meriläinen & Ruotsalainen, 2024; Pandita et al., 2024; Suler, 2004). This perspective suggests that users feel shielded from consequences (Christopherson, 2007), leading to greater boldness in language and a loss of empathy for their targets (Falla et al., 2021). Homogeneous online groups often undergo group polarization, drifting toward more extreme positions as members continually echo and amplify each other's sentiments (Cinelli et al., 2021; Darius & Stephany, 2019; Vaccari et al., 2016). Notably, this polarization is especially strong under anonymity, as individuals feel less personal accountability and more group solidarity (Bae, 2016; Huang & Li, 2016; Paskuda, 2016; Wang, 2017). Over time, the repetition of toxic narratives (e.g., xenophobic tropes) in these communities can normalize extreme ideas. Even ironically presented content can inadvertently normalize extremist ideologies, blurring the line between trolling and genuine belief (Askanius, 2021; DeCook, 2020). This convergence of anonymity, disembodiment, disinhibition, and lack of accountability creates an echo chamber where toxic attitudes are both performatively enacted and sincerely reinforced (Pandita et al., 2024).

Toxic by Design

However, a growing body of research argues that focusing solely on user psychology is insufficient (e.g., Gillespie, 2018; Munn, 2020). Scholars now emphasize that platforms are not neutral containers for speech; their design choices are a more crucial factor in shaping discourse. As Munn (2020) argues, online toxicity is not merely the product of hateful individuals but is actively facilitated by the architectural design of social media platforms. He contends that platforms like Facebook and YouTube are “angry by design,” using technical systems that are optimized to maximize “engagement” above all other values (Kroger et al., 2024; Reimer, 2023). For instance, Facebook's News Feed algorithm privileges incendiary and polarizing content because it consistently generates high engagement, creating a stimulus-response loop that normalizes the expression of outrage (Al-Zaman, 2024; Chen & Cheung, 2019; Sano-Franchini, 2018). Similarly, YouTube's recommendation engine is criticized for leading users toward progressively more extreme and radical content in its pursuit of engagement, effectively creating a “pipeline for radicalization” (Berjawi et al., 2024; Champion & Frank, 2021; Ribeiro et al., 2020). Munn's analysis shifts the focus from user psychology to the platform's affordances, showing how design choices (such as recommendation algorithms and comment systems) create an environment where toxic communication is not just possible, but actively encouraged and rewarded. Content moderation is a primary example of such an affordance. Gillespie (2018) details how moderation policies are not simple technical decisions but are deeply political and cultural choices with predictable consequences. A platform's decision to implement minimal moderation, for instance, is an active design choice that can predictably cultivate a toxic environment. 
This study adopts this structural approach, positing that the architecture of a platform is a primary driver of the toxicity observed within it (Klein & Majdoubi, 2024).

What Is Talk.jp? Characteristics of Chan-Style Platforms

Talk.jp, launched on July 7, 2023, is a direct descendant of 5channel (formerly 2channel), a textboard founded in May 1999 by Hiroyuki Nishimura and itself the spiritual successor to an earlier bulletin board named Ayashii World (Kaigo & Watanabe, 2007). From its inception, 5channel was built on the principle of unfettered anonymous expression, allowing users to post without registering an account or revealing their real-world identity (Fujioka & DeCook, 2021). This core affordance, combined with a vast array of topic-specific boards (板, ita) covering everything from news and technology to hobbies and gossip, allowed it to grow into Japan's most popular online community, with an influence comparable to mainstream media (Katayama, 2007).

Core Interaction Mechanics

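Talk.jp inherits 5channel's architecture of anonymity and ephemerality, making it fertile ground for investigating how toxic discourse evolves in a novel setting. The site is organized hierarchically (Figure 1): main thematic categories contain topic-specific boards, which in turn list individual discussion threads.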

Figure 1. A Screenshot of the “News” Section on Talk.jp, Illustrating the Platform's Hierarchical Organization. The Top Navigation Bar Displays the Main Thematic Categories. Within Each Category Are Topic-Specific Boards, Circled in Red. The Main Content Area Lists Individual Discussion Threads, Each with Its Own Title, Comment Count (Speech Bubble Icon), and Real-Time Activity Score or Ikioi (Upward Trend Icon).

Interaction consists of users adding responses (レス, resu) to a thread. Image and video sharing are not natively supported as they are on modern social media; instead, users post links to external hosting services. In the same vein, Talk.jp deliberately eschews the affordances that define contemporary social media: there are no “likes,” upvotes, user profiles, follower counts, or algorithmic content feeds. While this preserves the classic, unmediated feel of 2channel, it also perpetuates its well-documented problematic affordances, discussed below.

The Cultural Context of Anonymity

Talk.jp operates within the cultural framework of anonymity established by 5channel. In this model, the default mode of interaction is namelessness. This is often celebrated as a mechanism for enabling 本音 (honne), or the expression of one's true opinions, free from the social pressures and consequences of the real world (Katsuno & Yano, 2002; Lebra, 1976). 5channel's founder, Hiroyuki Nishimura, famously argued that this system creates a true meritocracy of ideas, where arguments are judged on their content alone, stripped of the authority that comes with a persistent identity (Furukawa, 2003). The downside of this model is equally well-documented (Sakamoto, 2011; Tonami, 2023). The shield of anonymity is frequently used to engage in behavior that would be socially unacceptable otherwise, including severe defamation, hate speech, harassment, and the spreading of malicious rumors (Klik, 2023; Massanari, 2017; Munn, 2020). This has led to the enduring criticism of such platforms as little more than “toilet graffiti” (便所の落書き, benjo no rakugaki). To structure this anonymous space, Talk.jp provides two core identity mechanisms: a temporary ID that resets daily for all users, and an opt-in “tripcode” system for those wishing to establish a persistent, verifiable pseudonym (Figure 2).

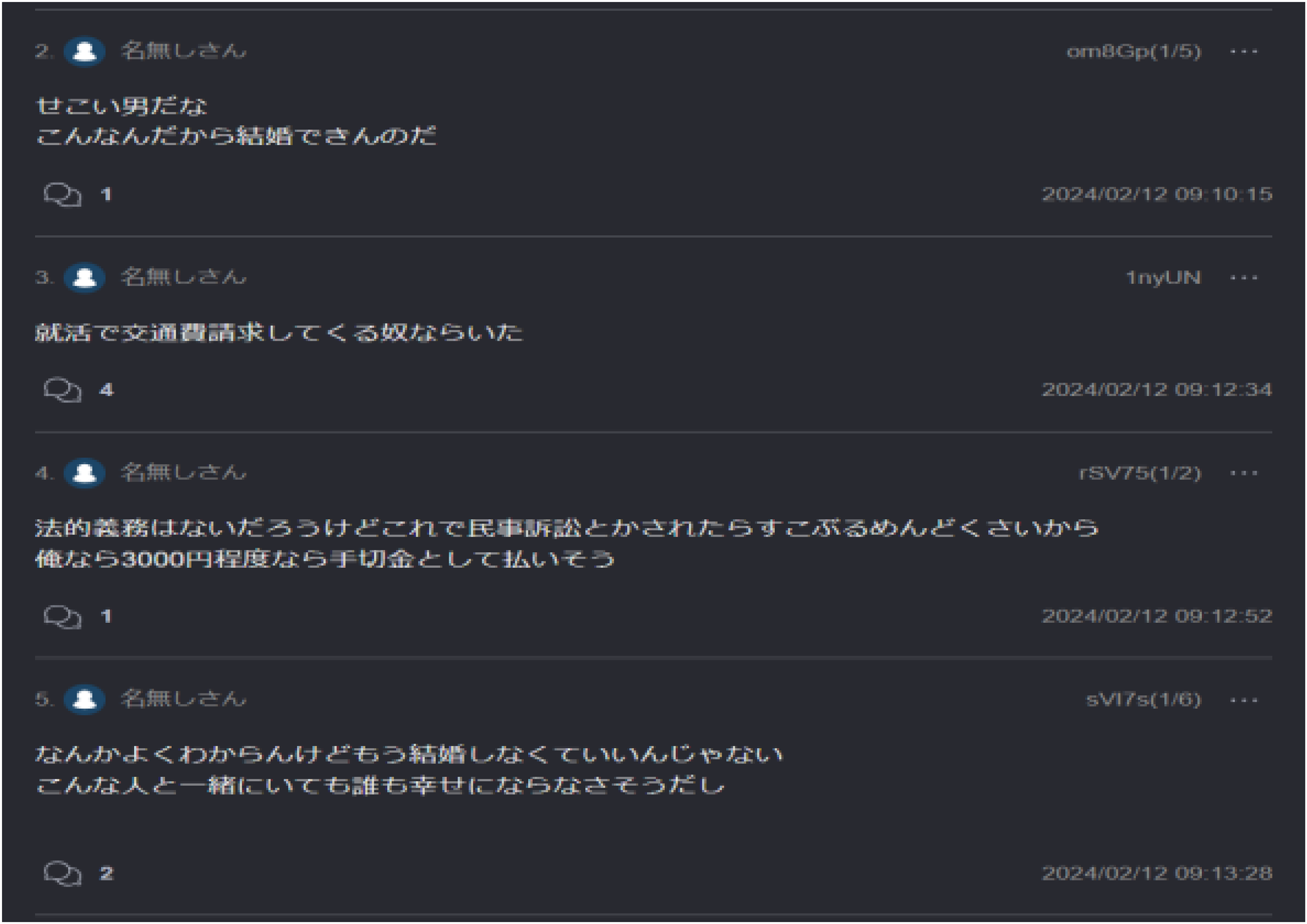

Figure 2. A Detailed View of User Comments Within a Talk.jp Thread. The Screenshot Illustrates Several Key Features: (1) The Default Anonymous Handle, “名無しさん” (Nanashi-San, or “Mr/Ms. Anonymous”), Which Most Users Post Under. (2) A Temporary User ID That Is Appended to Each Post, Allowing for Identification Within a Single Thread While Preserving Long-Term Anonymity. (3) A Precise Timestamp Marking the Date and Time of Posting. (4) A Reply Counter (Speech Bubble Icon) Indicating the Number of Direct Responses a Comment Has Received, Functioning as a Measure of Engagement.

Momentum and Ephemerality: The Pulse of the Platform

Discourse on Talk.jp is characterized by two defining temporal dynamics inherited from 5channel: 勢い (ikioi), which measures and directs attention in real time, and DAT落ち (dat-ochi), which enforces a constant cycle of conversational death and rebirth. The word ikioi translates to “momentum,” “vigor,” or “force,” and in this context, it is a numerical value displayed next to each thread title that represents the thread's current level of activity or “hotness.” The ikioi display was a long-standing feature of 5channel, and on Talk.jp it functions as the platform's primary attention-directing affordance. In a system with thousands of active threads across hundreds of boards, ikioi serves as a real-time, user-generated ranking system (Okumura, 2013). This creates a powerful feedback loop: a thread that gains traction and a high ikioi becomes more visible, attracting more participants, which in turn drives its ikioi even higher. The result is a fast-paced, competitive conversational environment that privileges rapid-fire debate, breaking news, and viral controversy over slow, deliberative discussion. This user-driven ranking system is a prime example of what Munn (2020) describes as a platform being “angry by design,” as it structurally rewards high-engagement controversy over quiet civility.
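The ranking logic behind this feedback loop can be illustrated with a minimal sketch. It assumes that ikioi is computed as posts per day since thread creation, a convention commonly used by 2channel-style thread rankers; Talk.jp's exact formula is not documented here, so the formula, the function names `ikioi` and `rank_threads`, and the sample threads are all illustrative assumptions.

```python
from datetime import datetime, timedelta


def ikioi(post_count: int, created_at: datetime, now: datetime) -> float:
    """Posts per day since thread creation (assumed formula, not Talk.jp's documented one)."""
    elapsed_seconds = (now - created_at).total_seconds()
    if elapsed_seconds <= 0:
        return 0.0
    return post_count / elapsed_seconds * 86400


def rank_threads(threads: list[dict], now: datetime) -> list[dict]:
    """Sort threads so that the highest-momentum thread is listed first."""
    return sorted(
        threads,
        key=lambda t: ikioi(t["post_count"], t["created_at"], now),
        reverse=True,
    )


# Two hypothetical threads with the same number of posts but different ages.
now = datetime(2023, 9, 25, 12, 0)
threads = [
    {"title": "slow deliberative thread", "post_count": 300,
     "created_at": now - timedelta(days=3)},
    {"title": "fast controversial thread", "post_count": 300,
     "created_at": now - timedelta(hours=6)},
]
ranked = rank_threads(threads, now)
print([t["title"] for t in ranked])
```

Under this assumed formula, two threads with identical post counts rank very differently: the one that accumulated its posts quickly rises to the top, which is the structural advantage for rapid-fire exchanges described above.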

The second defining temporal characteristic of Talk.jp is its designed ephemerality, enforced by dat-ochi, the process by which a thread ends and drops out of the active listing into the archive.

There are three primary conditions that trigger dat-ochi:

Reaching 1,000 Posts: Each thread has a hard limit of 1,000 responses (including the initial post). This is the most common reason for a popular thread to end.

This system of enforced ephemerality is a core design feature, not a technical limitation (Hamano, 2008). Conversations are not intended to be permanent, ever-expanding documents. They have a defined and often very short lifespan. This design forces a constant cycle of conversational renewal. As a popular thread approaches the 1,000-post limit, participants will coordinate to create a “next thread” (次スレ, tsugi-sure), often copying the initial post and rules from the current thread to ensure continuity. This creates a high-velocity cycle of thread birth, life, and death, reinforcing the platform's cultural focus on immediate, transient discourse rather than the slow accumulation of persistent knowledge. While dat-ochi threads remain readable in the archive, the conversational energy moves on, ensuring the platform's “front page” is always fresh.

Hate Speech and Toxicity in the Japanese Context

I distinguish hate speech (attacks targeted at a protected or identity-based group) (Matsuda, 1989) from the broader notion of toxic speech, which includes any rude, aggressive, or harassing language likely to drive people away from civil discussion (Jigsaw, n.d.). Hate speech is an extreme form of toxicity with severe societal implications, and it thrives in online enclaves where social norms against bigotry are weak (Brown & Sinclair, 2024; Hietanen & Eddebo, 2023). Japan, like many democracies, has struggled to curb hate speech online due to legal and cultural tensions between free expression and protection from harassment (Powell, 2022). While many European countries enforce stringent anti-hate regulations, the United States (and by extension U.S.-hosted platforms) takes a more libertarian stance (Martin, 2018). Japan's regulatory approach has been comparatively laissez-faire, with a 2016 Hate Speech Act that lacked penalties and only recent moves to address cyberbullying after tragic cases (Powell, 2022). In practice, sites like 5channel and Talk.jp operate with minimal oversight, relying on community self-moderation or volunteer administrators. This creates an online environment where toxicity can flourish, sometimes faster than society's ability to understand or legislate against it (Schneider & Rizoiu, 2023).

Research Questions

Building on the preceding analysis of platform architecture, this study investigates how the specific design of the Japanese anonymous textboard Talk.jp structurally shapes toxic discourse. As detailed in the literature review, Talk.jp's core mechanics—absolute anonymity, an engagement-driven ranking system (ikioi), and enforced ephemerality (dat-ochi)—create a unique environment for communication. The analysis focuses on the “News Plus” (ニュース速報+) board, one of the most active boards on the platform. On this board, users initiate discussion threads by posting links to external news articles with a text post showing the content of the news, providing a clear view of how reactions to real-world events are mediated by the platform's architectural constraints.

This study is guided by the following research questions:

By addressing these questions, this article moves beyond a simple description of toxicity to analyze the platform's structural role in fostering and amplifying it. I hypothesize that the ikioi system, by rewarding rapid and high-volume engagement, structurally favors controversial and antagonistic content, and that a disproportionate amount of this toxicity is generated by a “loud minority” of users.

Methodology

Research Design

I adopted a mixed-methods approach, combining large-scale computational text analysis (Grimmer et al., 2022) with targeted qualitative content examination (Hsieh & Shannon, 2005). This design allowed for quantifying toxicity and interpreting its nature and context. The analytical workflow proceeded in three main phases: sentiment analysis, toxicity detection, and qualitative thematic analysis. In brief, I first measured the general sentiment of Talk.jp comments and identified the most negative discussion threads. Toxic language was then assessed in those threads using a machine learning API, and finally, I manually reviewed samples of toxic comments to understand their content in context.

Data and Sampling

The dataset comprises user comments from Talk.jp's News Plus board over the first 125 days of the site's existence. As described in the literature review, Talk.jp is defined by high user engagement and its ikioi metric. Therefore, I collected all posts from 121 threads that demonstrated high community resonance, defined as threads approaching or exceeding 1,000 comments. This approach of sampling the most popular or active discussions is a common strategy in studies of large online forums, as it ensures the analysis focuses on content that is most visible and impactful within the community (Lampe & Resnick, 2004; Mensah, 2025; Reelfs et al., 2022). The sampling period spanned from Talk.jp's launch on July 7, 2023, through November 7, 2023.

A custom Python script was developed by the author for this study, using the Requests and BeautifulSoup libraries to retrieve thread pages and parse comments. Each comment's text and metadata were stored in a structured dataset (CSV file). In total, the dataset contains 118,910 comments, reflecting essentially all messages from the selected 121 threads. Each data entry includes the comment text, timestamp, an anonymized user ID (a hash as displayed by Talk.jp for each poster), the thread title, and source URL. I emphasize that Talk.jp does not require a login, so user IDs are session-based and do not reliably indicate unique individuals across threads. This inherent anonymity limits user-centered analysis, but it is suitable for content-level analysis, which is my focus.
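The structure of one dataset row can be sketched as follows. This is not the author's script: retrieval and HTML parsing are omitted because Talk.jp's page structure is not described here, and the class name `CommentRecord`, the field names, and the example values (including the placeholder URL) are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class CommentRecord:
    """One row of the dataset; field names are illustrative, not the author's."""
    text: str
    timestamp: str     # ISO 8601 string
    user_id: str       # temporary ID as displayed by the platform
    thread_title: str
    source_url: str


def write_csv(records: list[CommentRecord], handle) -> None:
    """Write records to an open text handle with a header row."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(CommentRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))


# Round-trip one made-up record through an in-memory CSV file.
buffer = io.StringIO()
write_csv(
    [CommentRecord("example comment", "2023-07-07T12:00:00", "AbC123",
                   "example thread", "https://example.invalid/thread/1")],
    buffer,
)
rows = list(csv.DictReader(io.StringIO(buffer.getvalue())))
print(rows[0]["user_id"])
```

Keeping the platform's temporary ID as an opaque string, rather than treating it as a stable user key, matches the caveat that these IDs do not identify individuals across threads.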

Sentiment Analysis

I applied a Japanese sentiment analyzer to every comment to gauge its emotional tone. Specifically, I chose Asari, a Python-based sentiment analysis tool designed for Japanese text (Hironsan, 2023), after testing several alternatives. Asari classifies text sentiment along positive and negative dimensions, outputting a score between 0 and 1 for negativity. For the study's purposes, I extracted the “negative sentiment” score for each comment and stored it as Asari-Neg. This yielded a continuous measure of how negative or positive each comment was.
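Extracting the negative score might look like the sketch below. It assumes the result shape shown in Asari's README (a dictionary with a `classes` list of `class_name` and `confidence` pairs, returned by `Sonar().ping(text=...)`); the helper names and the example values are hypothetical, and `score_comments` requires the `asari` package to be installed.

```python
def negative_score(ping_result: dict) -> float:
    """Return the confidence of the 'negative' class from an Asari result."""
    for entry in ping_result["classes"]:
        if entry["class_name"] == "negative":
            return float(entry["confidence"])
    raise KeyError("no 'negative' class in result")


def score_comments(comments: list[str]) -> list[float]:
    """Score each comment with Asari (assumes `pip install asari`)."""
    # Imported lazily so negative_score() can be used without the package.
    from asari.api import Sonar

    sonar = Sonar()
    return [negative_score(sonar.ping(text=comment)) for comment in comments]


# Result shape as assumed from Asari's README; the values are made up.
example = {
    "text": "example comment",
    "top_class": "negative",
    "classes": [
        {"class_name": "positive", "confidence": 0.1},
        {"class_name": "negative", "confidence": 0.9},
    ],
}
asari_neg = negative_score(example)
print(asari_neg)
```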

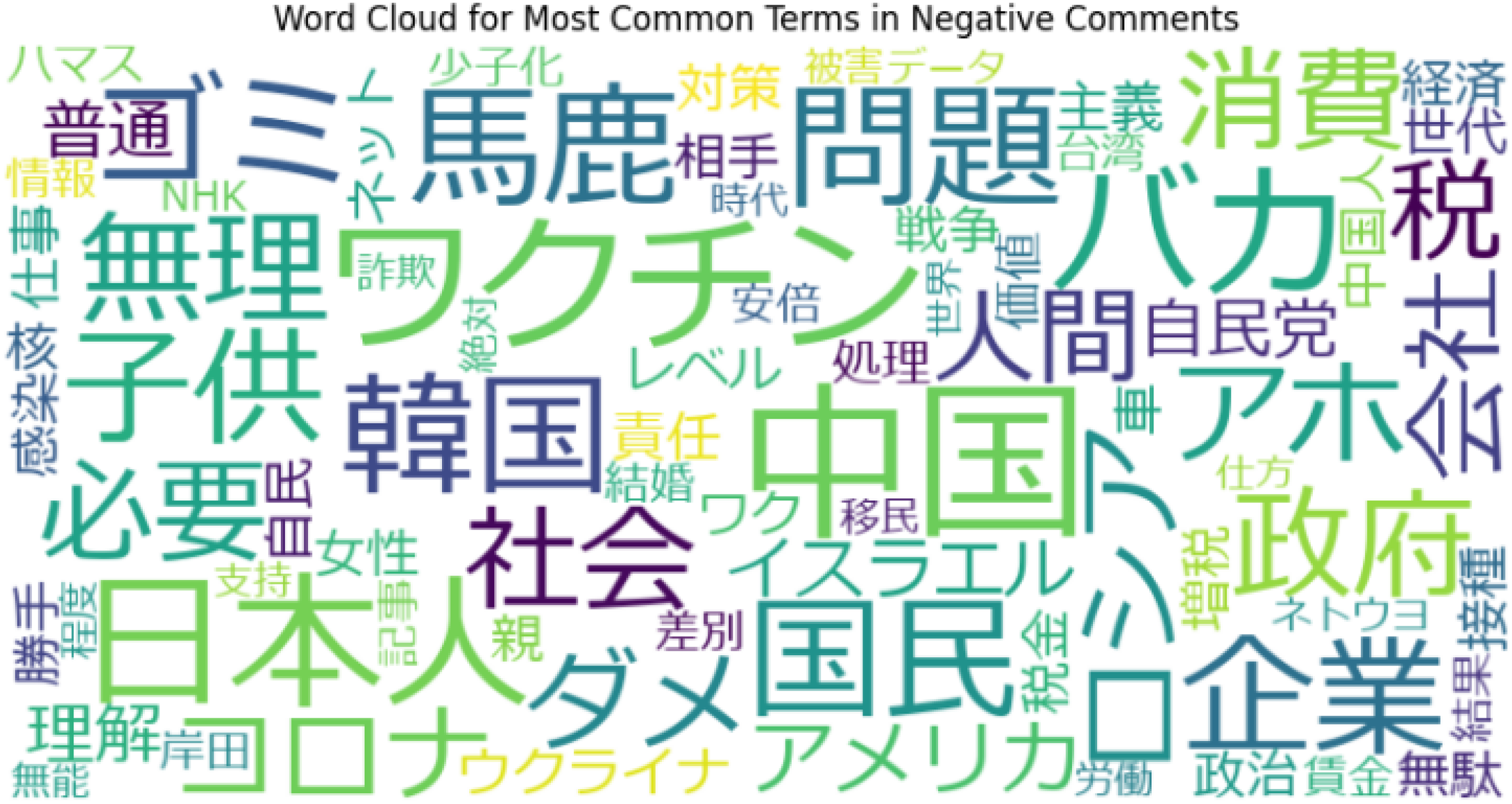

Using these sentiment scores, multiple analyses were conducted: (1) a word frequency analysis of highly negative comments, (2) a temporal trend analysis of negativity over time, and (3) identification of the most negative threads. For (1), I filtered the dataset to comments with an Asari-Neg score > 0.7 (on the 0–1 scale). This threshold captured the subset of comments that were clearly negative in tone. I then aggregated and counted the words appearing in these negative comments, generating a list of the most frequent terms associated with negativity on Talk.jp. These frequent terms were visualized via a word cloud (Figure 3), with word size indicating frequency. This helps identify topical themes that users discuss negatively. For (2), I aggregated sentiment scores by date to compute daily and weekly average negativity. For (3), I computed each thread's mean sentiment and then looked for threads with exceptionally high negativity. To do this systematically, I used a rolling average of comment negativity within each thread over time. I then identified the top 10 most negative threads and recorded their topics and start dates. This two-step process provided a quantitative map of where and when negativity concentrates on Talk.jp.
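Steps (1) and (2) can be sketched as follows, using only the standard library. The 0.7 cutoff comes from the text; everything else is an assumption. In particular, the tokenizer used for Japanese text is not specified, so the sketch accepts any `tokenize` callable and falls back to whitespace splitting on made-up English tokens, whereas real Japanese comments would need a morphological tokenizer.

```python
from collections import Counter, defaultdict
from statistics import mean

NEG_THRESHOLD = 0.7  # cutoff stated in the text


def frequent_negative_terms(comments, tokenize=str.split, top_n=10):
    """Count tokens in comments whose negativity exceeds the threshold."""
    counts = Counter()
    for comment in comments:
        if comment["asari_neg"] > NEG_THRESHOLD:
            counts.update(tokenize(comment["text"]))
    return counts.most_common(top_n)


def daily_mean_negativity(comments):
    """Average negativity per calendar day (date taken from an ISO timestamp)."""
    by_day = defaultdict(list)
    for comment in comments:
        by_day[comment["timestamp"][:10]].append(comment["asari_neg"])
    return {day: mean(scores) for day, scores in sorted(by_day.items())}


# Made-up comments; field names are hypothetical.
comments = [
    {"text": "tax tax wages", "timestamp": "2023-09-25T09:10:00", "asari_neg": 0.9},
    {"text": "tax vaccine", "timestamp": "2023-09-25T18:30:00", "asari_neg": 0.8},
    {"text": "nice weather", "timestamp": "2023-09-26T21:00:00", "asari_neg": 0.2},
]
top_terms = frequent_negative_terms(comments)
daily = daily_mean_negativity(comments)
print(top_terms[0], daily)
```

The term counts produced this way are the kind of input a word cloud would be drawn from, with word size proportional to frequency.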

Figure 3. Word Cloud Visualizing Negative Topics, Analyzing the Most Frequent Words Inside the Comment Corpus. Negativity Cutoff of 0.7.

Toxicity Detection

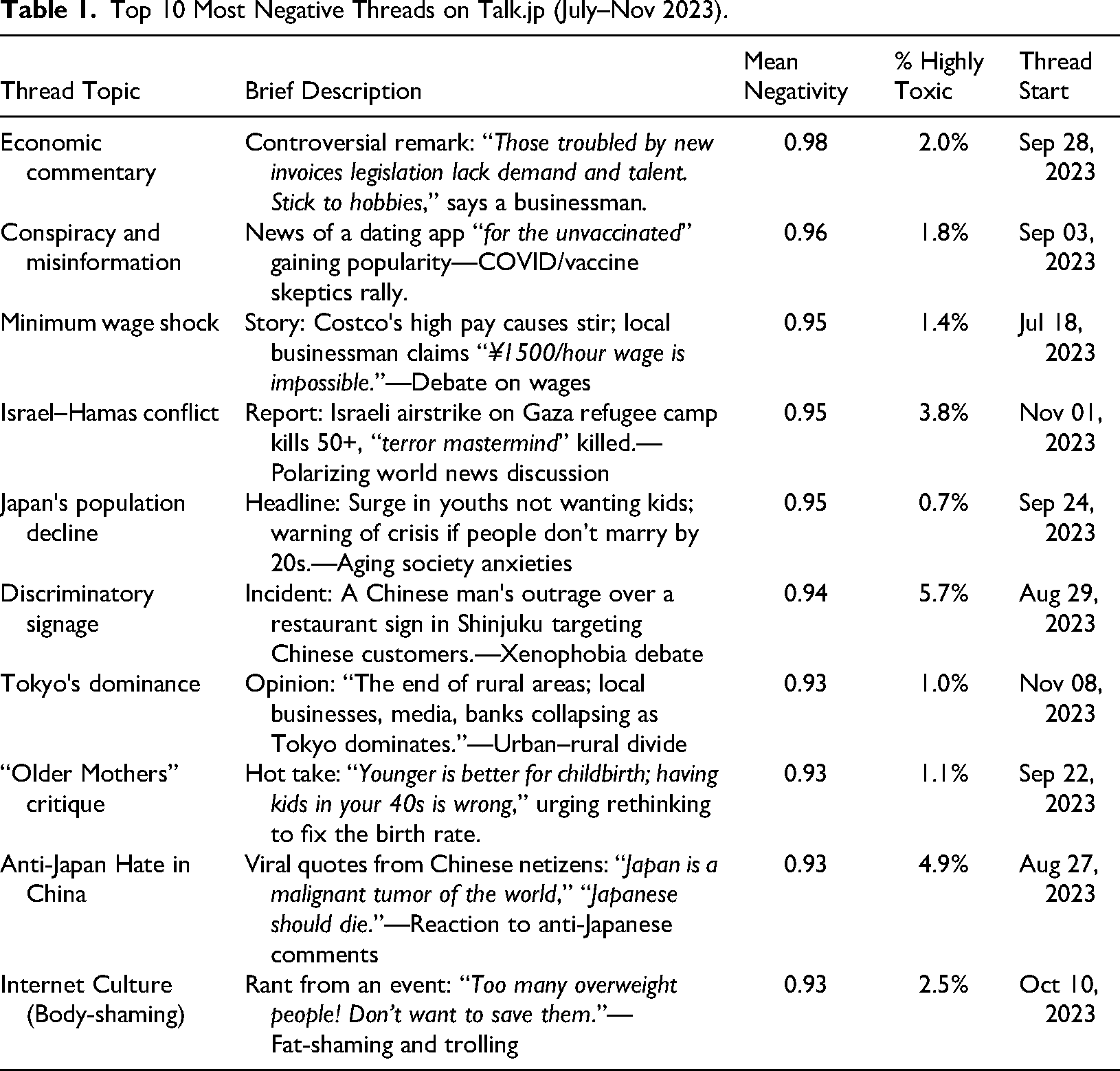

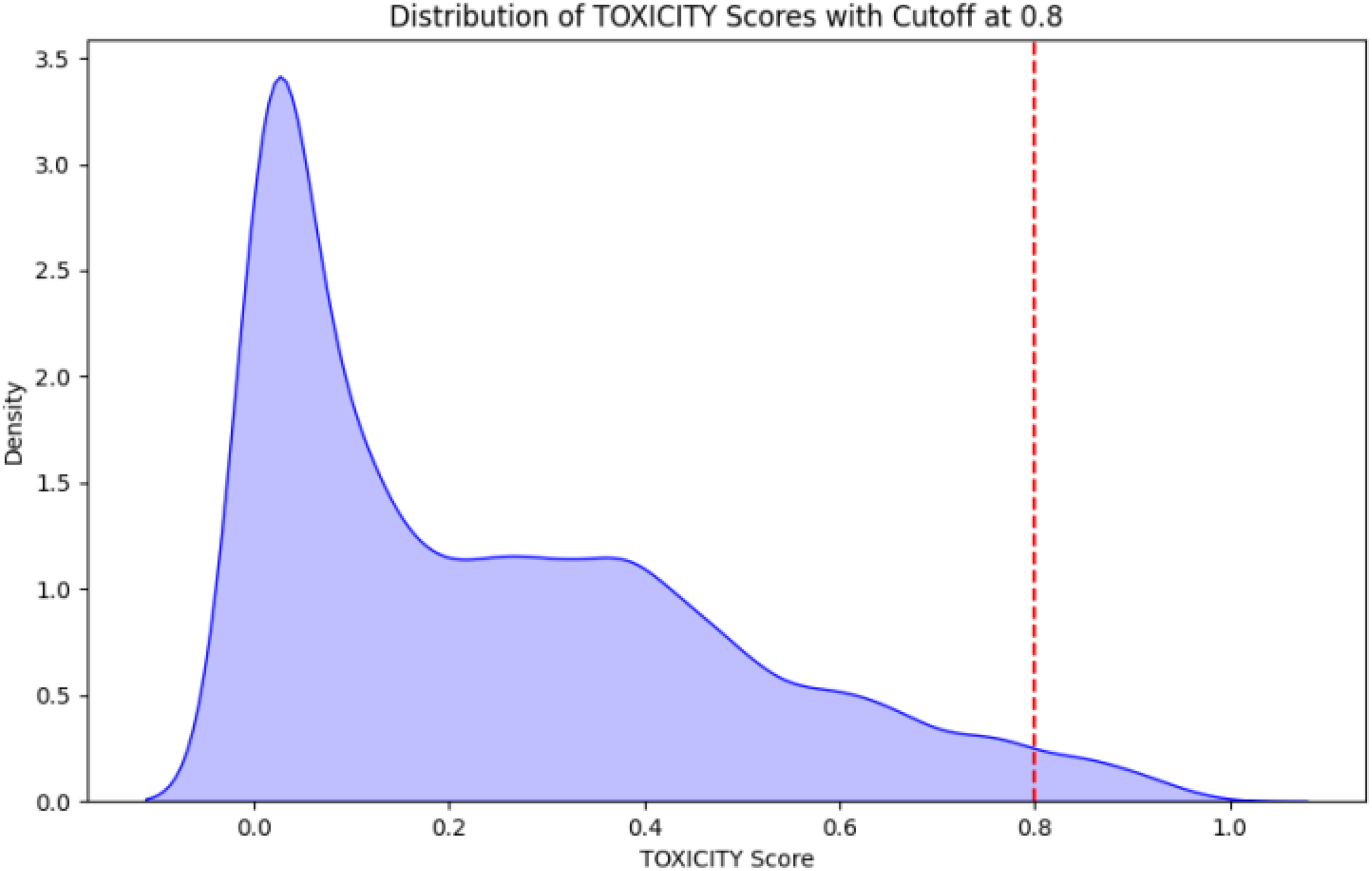

Sentiment analysis, as described above, captures general negativity but does not directly indicate toxic language (for instance, a sad or pessimistic comment can have negative sentiment but not be toxic). To specifically measure toxic and harassing content, I employed Google Jigsaw's Perspective API. Perspective is a widely used tool for content moderation research; it uses machine learning models to score text on various attributes such as toxicity, insults, and threats. The toxicity score (range 0 to 1) reflects the model's estimate of how likely a comment is to be perceived as “a rude, disrespectful, or unreasonable comment that is likely to make people leave a discussion” (Jigsaw, n.d.). Higher scores indicate more toxic content. From the 10 most negative threads, a sample of 10,004 comments was submitted to the Perspective API to record each comment's toxicity score (Perspective supports Japanese input). I then examined the distribution of toxicity scores across these threads. In particular, comments with a toxicity score > 0.8 were flagged as “highly toxic,” a range that typically corresponds to clear cases of harassment or hate speech (Gruzd et al., 2020). Afterwards, I plotted a distribution curve (Figure 7) of toxicity scores to see the overall skew. Additionally, for each of the top threads, I calculated the average toxicity and the percentage of comments exceeding the 0.8 high-toxicity threshold. These results were tabulated for comparison across threads (Table 1).
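A request to the Perspective API and the per-thread summary can be sketched as below. The endpoint, request body, and response shape follow Perspective's public `comments:analyze` documentation as I understand it. The function names, the placeholder `api_key` parameter, and the sample scores are hypothetical, and nothing here is the author's actual code.

```python
import json
import urllib.request

ANALYZE_URL = "https://commentanalyzer.googleapis.com/v1alpha1/comments:analyze"
HIGH_TOXICITY = 0.8  # "highly toxic" cutoff stated in the text


def build_request(text: str) -> dict:
    """Request body asking for the TOXICITY attribute on Japanese text."""
    return {
        "comment": {"text": text},
        "languages": ["ja"],
        "requestedAttributes": {"TOXICITY": {}},
    }


def extract_toxicity(response: dict) -> float:
    """Pull the summary TOXICITY score (0 to 1) out of a response body."""
    return response["attributeScores"]["TOXICITY"]["summaryScore"]["value"]


def score_comment(text: str, api_key: str) -> float:
    """Send one comment to the API; needs a valid key and network access."""
    request = urllib.request.Request(
        f"{ANALYZE_URL}?key={api_key}",
        data=json.dumps(build_request(text)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as reply:
        return extract_toxicity(json.load(reply))


def high_toxicity_share(scores: list[float]) -> float:
    """Percentage of scores above the high-toxicity cutoff."""
    if not scores:
        return 0.0
    return 100 * sum(score > HIGH_TOXICITY for score in scores) / len(scores)


# Offline illustration with a made-up response and made-up scores.
sample_response = {
    "attributeScores": {"TOXICITY": {"summaryScore": {"value": 0.91, "type": "PROBABILITY"}}}
}
toxicity = extract_toxicity(sample_response)
share = high_toxicity_share([0.05, 0.12, 0.35, 0.91])
print(toxicity, share)
```

Separating payload construction and score extraction from the network call keeps the thresholding logic checkable without an API key.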

Table 1. Top 10 Most Negative Threads on Talk.jp (July–Nov 2023).

Qualitative Thematic Analysis

I extracted a subsample of comments that the Perspective API flagged as toxic (score > 0.8) from the top threads. I then employed a thematic analysis approach to identify recurring themes, targets of abuse, and notable linguistic features. I also examined the immediate conversational context to see if toxic comments were challenged, ignored, or amplified by subsequent replies. This manual review was crucial for adding nuance to the quantitative scores and understanding the specific nature of toxic discourse on the platform. The approach here was informed by prior ethnographic work on toxic communities, and I remained conscious of the ethical challenges of engaging with extreme content (Colley & Moore, 2022; Maloney et al., 2022).

Ethical Concerns

This study relied on observational data from a publicly accessible online forum. To protect users, all data were handled in aggregate, and no personally identifying information was collected or stored. The user IDs analyzed are temporary and session-based, assigned by the platform itself. Because no private data was accessed and no interaction with users occurred, a formal consent process was not required; however, ethical considerations guided every stage of data collection, analysis, and reporting.

Results

Negative Discourse Themes

To address the first research question regarding the topics and characteristics of toxic discourse, I first analyzed the content and temporal patterns of negative commentary. A keyword frequency analysis of the most negative comments on Talk.jp reveals several dominant topics. Figure 3 (word cloud) visualizes the terms that appeared most frequently in comments with high negative sentiment (negativity score > 0.7). Terms related to public health, such as “ワクチン” (wakuchin, vaccine) and “コロナ” (corona, COVID-19), and to domestic policy, such as “税” (zei, tax) and “子供” (kodomo, child), were highly frequent. Economic concerns were also central, with terms like “企業” (kigyou, enterprise), “賃金” (chingin, wages), and “経済” (keizai, economy) appearing often. Another significant category involved international relations, with frequent mentions of “中国” (Chugoku, China) and, to a lesser extent, Korea. A defining characteristic of the discourse was the widespread use of direct insults and derogatory language, including “バカ” (baka, idiot) and “ゴミ” (gomi, trash). Finally, terms related to foreign conflicts, such as “ハマス” (Hamas) and “イスラエル” (Israel), were present but less prominent in the corpus.

Cyclical Negativity Patterns

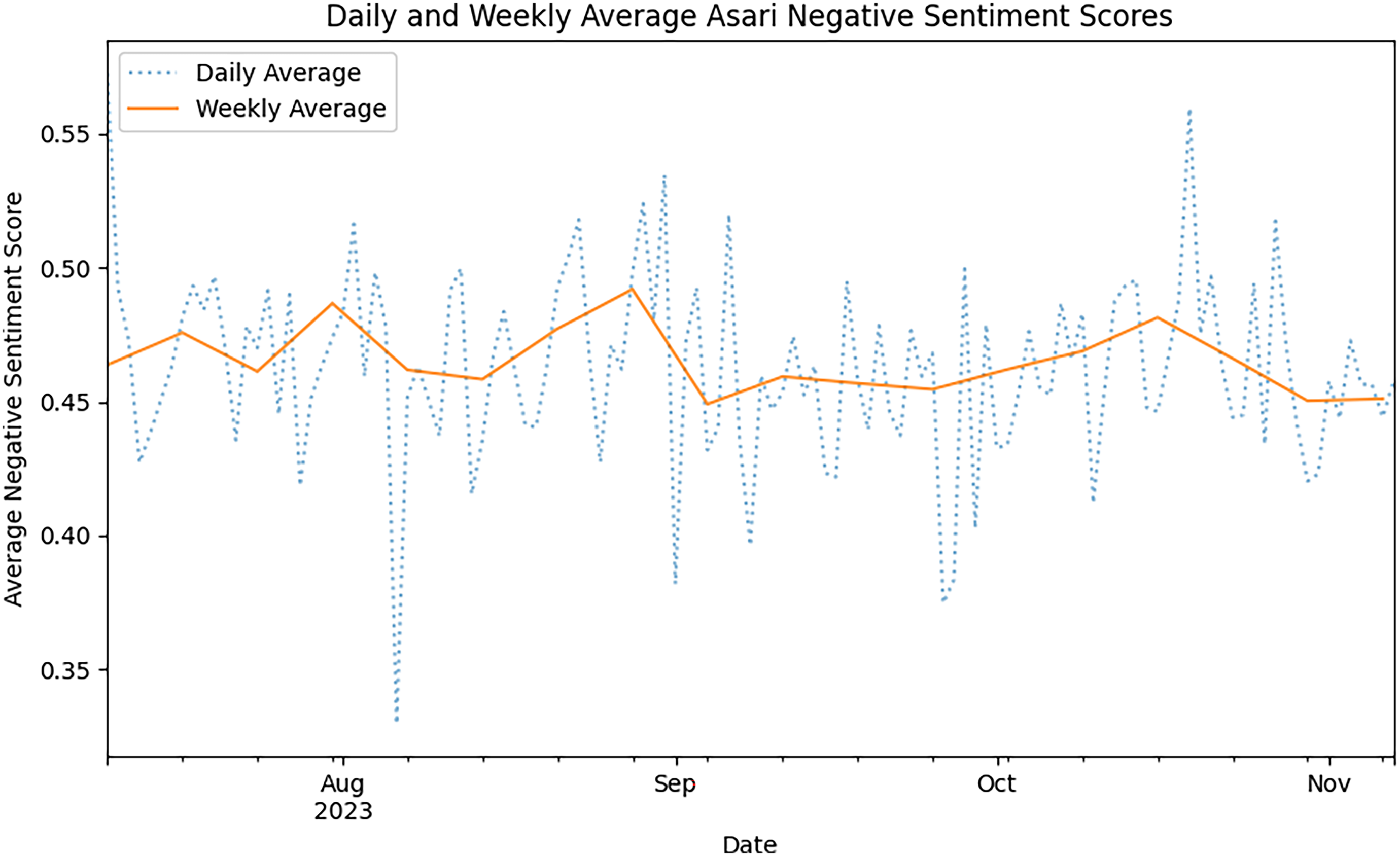

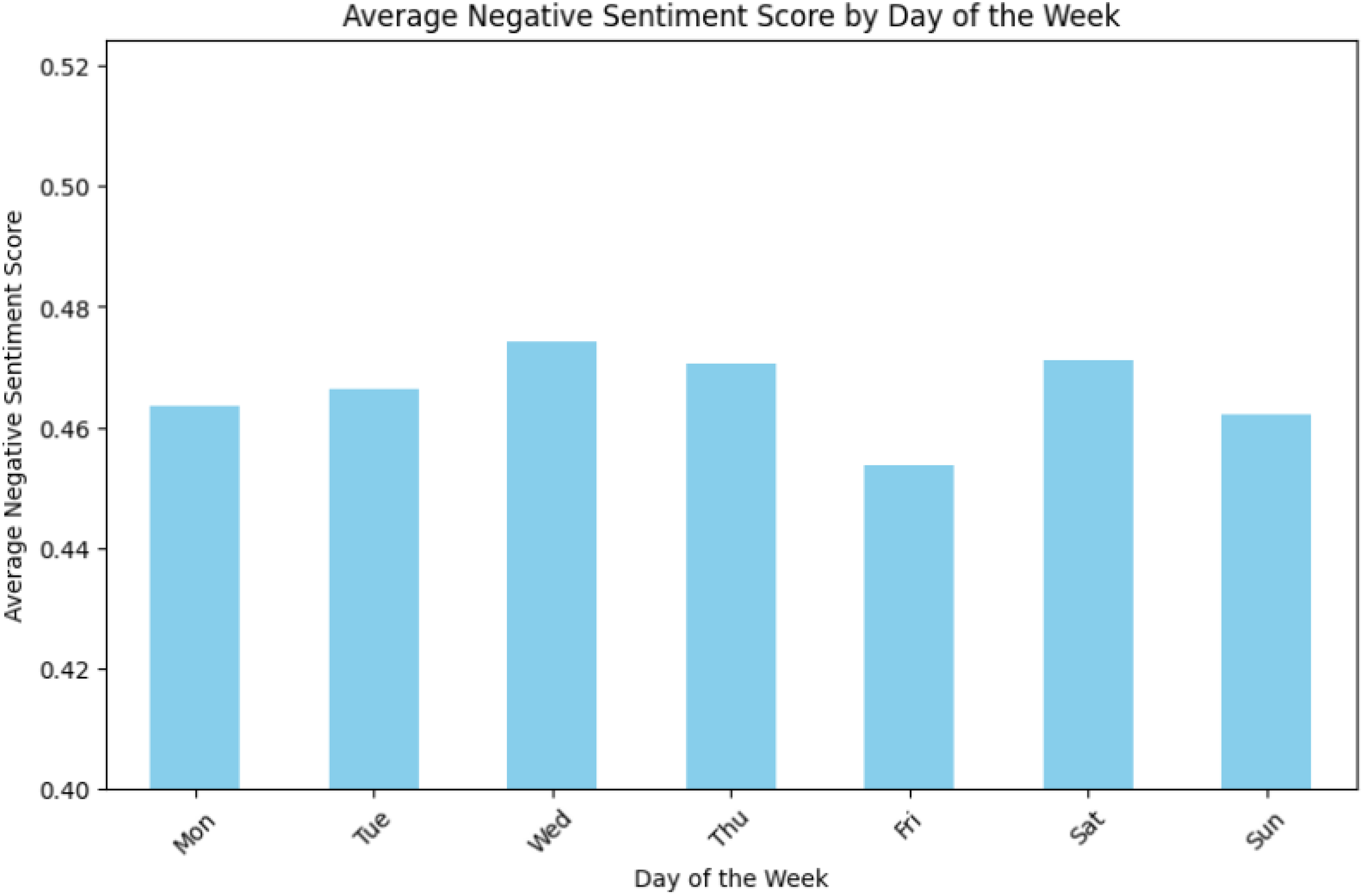

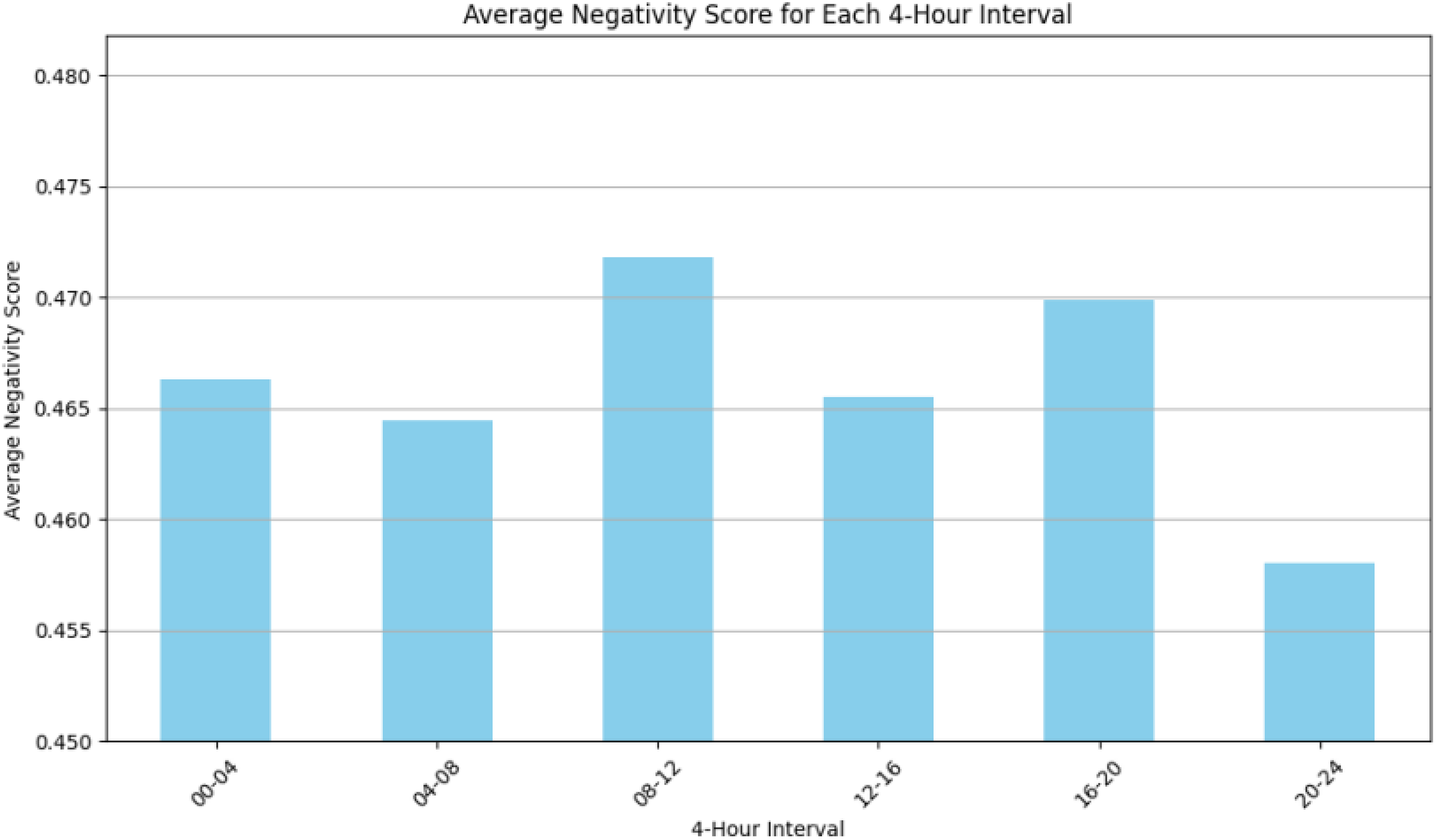

The average daily sentiment score fluctuated around a stable baseline throughout the observation period, with no significant long-term trend (Figure 4). This baseline was punctuated by several short-term spikes. For example, a prominent spike in late September 2023 directly corresponds with an inflammatory thread launched on the site (Table 1). On a weekly basis, a clear rhythm was observed (Figure 5). Average negativity was highest on Wednesdays and lowest on Fridays. Notably, negativity on Mondays was not exceptionally high compared to other days of the week. A diurnal analysis, for which comments were grouped into 4-hour intervals, showed that negative sentiment peaked during typical work and commute hours (8:00–12:00 and 16:00–20:00). Conversely, negativity levels were significantly lower in the late evening (20:00–24:00) and early morning (0:00–4:00), as shown in Figure 6.
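The weekly and diurnal groupings reported above can be reproduced in outline with the sketch below. It assumes ISO-formatted timestamps already expressed in local (Japan) time and hypothetical field names; the sample values are made up and do not come from the dataset.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]


def weekday_name(ts: datetime) -> str:
    """Day-of-week label for a timestamp."""
    return WEEKDAYS[ts.weekday()]


def four_hour_bin(ts: datetime) -> str:
    """Label for the 4-hour interval containing the timestamp, e.g. '08:00-12:00'."""
    start = ts.hour // 4 * 4
    return f"{start:02d}:00-{start + 4:02d}:00"


def mean_negativity_by(comments, key_fn):
    """Average the negativity score within each group defined by key_fn."""
    groups = defaultdict(list)
    for comment in comments:
        ts = datetime.fromisoformat(comment["timestamp"])
        groups[key_fn(ts)].append(comment["asari_neg"])
    return {key: mean(scores) for key, scores in groups.items()}


# Made-up comments: two on a Wednesday, one on a Friday evening.
comments = [
    {"timestamp": "2023-09-27T09:15:00", "asari_neg": 0.6},
    {"timestamp": "2023-09-27T17:40:00", "asari_neg": 0.5},
    {"timestamp": "2023-09-29T22:05:00", "asari_neg": 0.4},
]
by_weekday = mean_negativity_by(comments, weekday_name)
by_slot = mean_negativity_by(comments, four_hour_bin)
print(by_weekday, by_slot)
```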

Figure 4. A Line Graph Showing the Fluctuation of Asari’s Negative Sentiment Scores Daily (Dotted) and Weekly (Solid) from July to November 2023.

Figure 5. Distribution of Average Negative Sentiment Scores Across Different Days of the Week, Showing Subtle Variations, with Wednesday Registering the Highest Average Negativity and Friday the Lowest (y-Axis Zoomed In from 0.40).

Figure 6. Bar Graph Illustrating the Average Negative Sentiment Scores in 4-Hour Intervals Across a 24-Hour Period, Highlighting Peaks During Typical Work and Commute Hours and a Decrease in Negativity Postwork Hours (y-Axis Zoomed In from Value 0.4).

Figure 7. Kernel Density Estimate Plot That Visualizes the Distribution of Toxicity Scores from the 10 Most Negative Threads of the Dataset.

Top Toxicity Hotspot Threads

While the preceding analyses depict broad trends, a deeper understanding requires focusing on the peaks of toxicity, that is, the specific threads where antagonistic interactions were most concentrated. Using a rolling average of comment negativity, the 10 threads with the highest mean negativity scores were identified from the dataset. Table 1 lists these threads, detailing each topic, its mean negativity score, the percentage of “highly toxic” comments (Perspective API score > 0.8), and its start date.
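One way to read this selection step is sketched below: a trailing rolling mean smooths negativity within a thread, and threads are then ranked by their mean score. The window size, the ranking by plain per-thread mean, and all names and sample values are assumptions, since the text does not specify how the rolling average feeds into the ranking.

```python
from collections import defaultdict, deque
from statistics import mean


def rolling_mean(values, window):
    """Trailing rolling mean; early positions average over the values seen so far."""
    smoothed, recent = [], deque(maxlen=window)
    for value in values:
        recent.append(value)
        smoothed.append(sum(recent) / len(recent))
    return smoothed


def top_negative_threads(comments, k=10):
    """Rank threads by mean negativity and return the top k (title, mean) pairs."""
    by_thread = defaultdict(list)
    for comment in comments:
        by_thread[comment["thread_title"]].append(comment["asari_neg"])
    ranked = sorted(by_thread.items(), key=lambda item: mean(item[1]), reverse=True)
    return [(title, mean(scores)) for title, scores in ranked[:k]]


# Made-up scores for two hypothetical threads.
comments = [
    {"thread_title": "thread A", "asari_neg": 0.9},
    {"thread_title": "thread B", "asari_neg": 0.2},
    {"thread_title": "thread A", "asari_neg": 0.7},
    {"thread_title": "thread B", "asari_neg": 0.4},
]
smoothed = rolling_mean([0.2, 0.4, 0.9, 0.7], window=2)
top = top_negative_threads(comments, k=1)
print(smoothed, top)
```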

Prevalence and Distribution of Toxic Comments

To characterize the nature of toxic comments, the distribution of TOXICITY scores from the 10 most negative threads was plotted (Figure 7). The analysis reveals a unimodal and strongly positively skewed (right-skewed) distribution. The vast majority of comments are concentrated in a large, primary peak at the low end of the spectrum, with toxicity scores clustering around 0.1. Beyond this peak, the density decreases, forming a long tail that extends towards the higher scores. Notably, there is a secondary, much broader “shoulder” in the distribution, centered around the 0.3–0.4 score range. This indicates a concentration of comments with mild-to-moderate toxicity, though it does not form a distinct second peak. As shown, very few comments cross the severe toxicity cutoff of 0.8.
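The direction of skew can also be checked numerically rather than only visually. The sketch below computes the population (Fisher-Pearson) skewness coefficient, which is not a statistic reported in the study; the function name and the sample scores are made up to mimic a low peak with a long right tail.

```python
from statistics import mean, pstdev


def skewness(values):
    """Population skewness: the mean cubed z-score. Positive means a long right tail."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return 0.0
    return sum(((value - mu) / sigma) ** 3 for value in values) / len(values)


# Made-up scores: most near 0.1, a few moderate, one highly toxic.
scores = [0.1] * 8 + [0.35, 0.9]
skew = skewness(scores)
print(skew > 0)
```

A positive value indicates that the bulk of scores sits at the low end while a small number of high scores stretches the tail, consistent with the shape described above.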

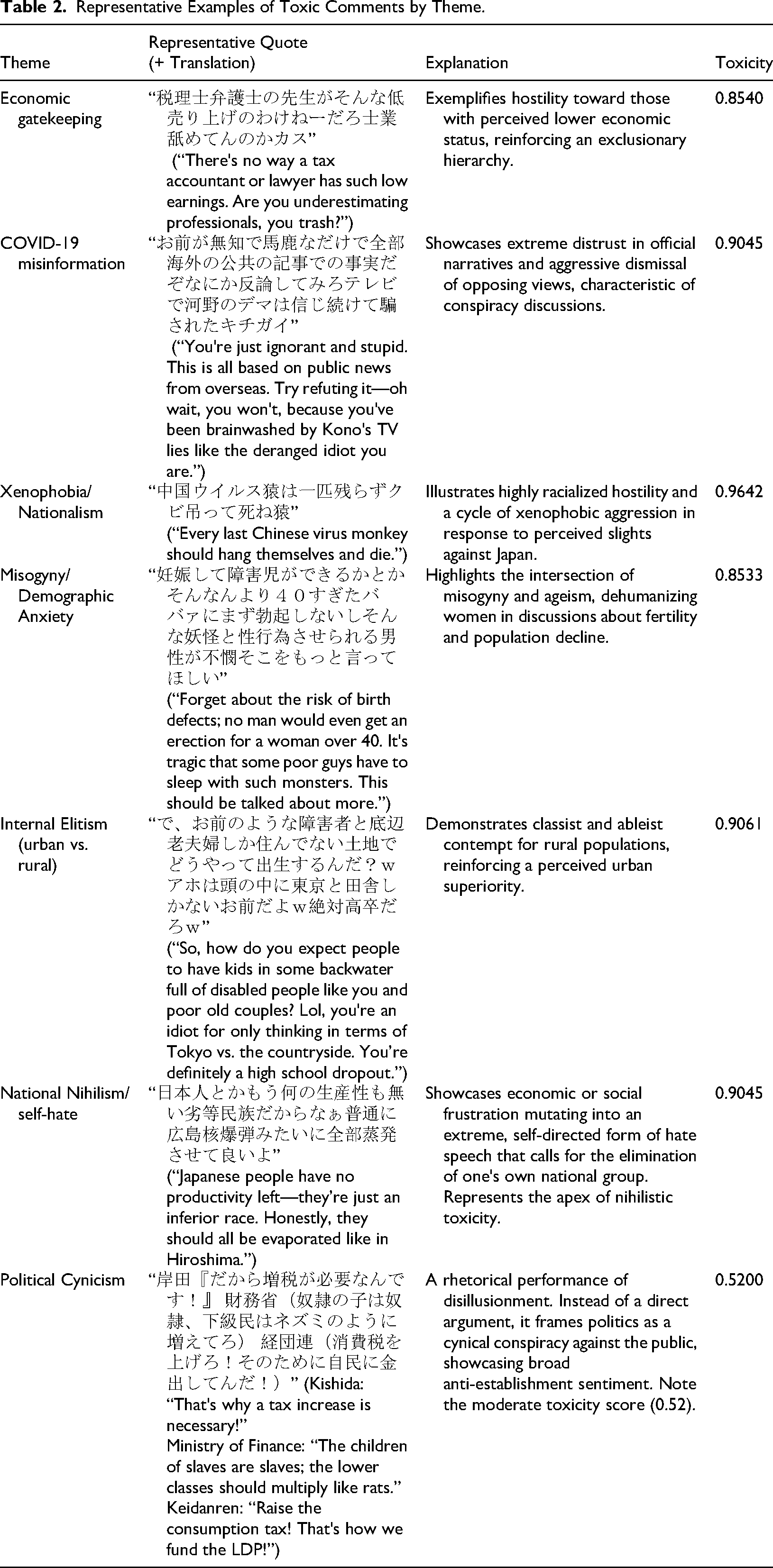

Qualitative Analysis of Toxic Themes

To add depth and context to the quantitative findings, this section presents a qualitative analysis of representative comments from the most toxic threads. The following table provides examples of highly toxic posts, categorized by their primary rhetorical theme. These examples were chosen to illustrate the specific ways in which users express hostility, from direct xenophobic attacks and misogyny to more complex forms of political cynicism and national nihilism. Each entry includes the original Japanese text and its English translation, along with a brief analysis of the rhetoric it exemplifies.

Note on Offensive Content: the following quotations contain explicitly hateful, misogynistic, and otherwise prejudiced language. They are provided verbatim only to illustrate the characteristic tone and rhetoric observed in these threads, which is central to this study's examination of structural toxicity. Their inclusion does not imply endorsement of such views, but rather demonstrates how hateful expressions emerge and circulate in anonymous online communities. Readers who may be sensitive to such content are advised to proceed with caution (Table 2).

Table 2. Representative Examples of Toxic Comments by Theme.

Discussion

This study investigated the structural production of toxicity on the Japanese anonymous textboard Talk.jp and arrived at three key findings. First, toxic discourse primarily centered on polarizing socio-political topics such as xenophobia, economic anxiety, and misogyny (RQ1). Second, the platform's core architectural feature—the ikioi engagement system—was found to directly amplify the visibility of the most antagonistic threads (RQ2). Finally, quantitative analysis revealed that highly toxic comments were a concentrated phenomenon, constituting only a small fraction of the overall discourse within these threads (RQ3).

These findings indicate that negative discourse on Talk.jp is fueled by political, economic, and social grievances. The prevalence of pandemic-related terms reflects a broader trend of public skepticism and conspiracy-focused narratives seen globally during the crisis (Hosoda, 2023; Toriumi et al., 2024). The persistent negative focus on China and Korea confirms patterns of internet nationalism previously documented in Japan (Sakamoto, 2011; Shibuichi, 2015), where geopolitical tensions are channeled into online xenophobia. The pervasive use of insults across all topics highlights how the platform's anonymity likely lowers inhibitions, fostering a generally hostile environment rather than topic-specific abuse (Meriläinen & Ruotsalainen, 2024). Together, these threads paint a picture of a space unified by resentment towards authority and outsiders, expressed with a characteristically harsh tone (Al-Zaman, 2024; Chen et al., 2024; Sato et al., 2024; Tsugawa & Ohsaki, 2015).

The temporal patterns suggest that negativity on Talk.jp is not constant but is rhythmically tied to both external events and the typical life routines of its users. The event-driven spikes in daily negativity indicate that while the platform has a baseline level of toxicity, specific triggers (whether news events or controversial internal discussions) can rapidly intensify user anger. This suggests a reactive, rather than a continuously degrading, environment. The weekly pattern, with its mid-week peak on Wednesday, defies the common “Monday blues” trope and may instead reflect an accumulation of work-related stress that is vented online. The decline in negativity into the weekend supports this interpretation. This finding aligns with broader research on emotional well-being, which has identified dips in mood mid-week (Keller, 2018). Most tellingly, the diurnal cycle reveals a “human rhythm” to online toxicity. The peaks during work and commute hours suggest that users may be posting during moments of heightened daily stress. The subsequent drop in negativity during evening and nighttime hours corresponds to periods of rest and relaxation. This strongly supports the conclusion that online toxicity is not an isolated phenomenon, but is deeply influenced by the pressures and contexts of users’ offline lives (Chatterjee et al., 2020; Firth et al., 2024; Rasmussen et al., 2020).
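The weekly and diurnal patterns discussed here amount to grouping comments by timestamp and averaging a negativity measure within each group. As a rough sketch of that aggregation (the record layout, the `mean_by` helper, and the toy values are assumptions for illustration, not the study's code or data):

```python
from collections import defaultdict
from datetime import datetime


def mean_by(records, key):
    """Average negativity per group; key maps a datetime to a group label."""
    totals = defaultdict(lambda: [0.0, 0])  # label -> [sum of scores, count]
    for timestamp, negativity in records:
        bucket = totals[key(timestamp)]
        bucket[0] += negativity
        bucket[1] += 1
    return {label: total / n for label, (total, n) in totals.items()}


# Hypothetical (timestamp, negativity) pairs, NOT data from the study.
toy_records = [
    (datetime(2024, 1, 1, 9, 0), 0.5),   # a Monday morning
    (datetime(2024, 1, 3, 9, 0), 0.8),   # a Wednesday morning
    (datetime(2024, 1, 3, 22, 0), 0.4),  # a Wednesday night
    (datetime(2024, 1, 6, 22, 0), 0.2),  # a Saturday night
]

by_weekday = mean_by(toy_records, lambda ts: ts.weekday())  # 0 = Monday
by_hour = mean_by(toy_records, lambda ts: ts.hour)
print(by_weekday)
print(by_hour)
```

Passing the grouping rule as a function keeps the daily, weekly, and hourly views on a single code path, so the three temporal cuts stay directly comparable.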

The second research question asked how platform architecture, specifically the ikioi system, influences the concentration of toxic content. The findings in Table 1 provide a clear answer. The methodology targeted threads with high ikioi, meaning those with the most visibility and engagement on the platform. The analysis reveals that these high-visibility threads are the primary loci of toxic discourse. For example, the threads on “Discriminatory Signage” and “Anti-Japan Hate in China” had the highest percentages of highly toxic comments (5.7% and 4.9%, respectively), and both were high-ikioi threads at the center of the board's attention. This demonstrates a direct link between the platform's core engagement mechanic and the amplification of toxicity. The ikioi system, by rewarding rapid and controversial engagement, structurally ensures that the most antagonistic and hateful discussions are also the most visible, directly answering RQ2. The finding that the user-driven ikioi system propels the most toxic threads to prominence provides a powerful case study for the “angry by design” thesis (Munn, 2020). Unlike platforms driven by corporate algorithms, Talk.jp shows how even a decentralized, user-directed mechanism can structurally reward and elevate antagonistic content, creating a self-reinforcing cycle of outrage. This dynamic is further nuanced by the general cynicism observed; direct attacks on specific politicians were less common than a broader anti-establishment mockery, a finding that aligns with Kaigo's (2013) work on the nonpartisan nature of online disdain in Japan.

Overall, the Top 10 threads list shows that major news events and divisive socio-political issues are flashpoints for toxicity on Talk.jp. External triggers (like a war) or provocative news (like discriminatory incidents and controversial quotes) quickly become rallying grounds for users to unleash negative sentiments. This finding is consistent with observations on other platforms: spikes in online toxicity have been recorded around elections, terrorist attacks, or inflammatory news articles on Facebook and Twitter as well (Aleksandric et al., 2022; Guimarães et al., 2020). Notably, the results mirror those of Salminen et al. (2020), who found that topics such as racism, conflicts, and war had higher average toxicity in comments, whereas topics like science or arts had lower toxicity. Talk.jp's user base might be different from an English-language news site's commenters, but human nature in reacting to contentious topics shows parallels.

The analysis of the distribution of Perspective API TOXICITY scores addresses the prevalence and nature of hostile discourse on Talk.jp. The findings show that while the most active threads are broadly negative in sentiment, overtly toxic comments are a minority phenomenon. Within the sample of 10,004 comments drawn from these high-negativity threads, only 2.5% (∼250 comments) had a toxicity score above the 0.8 “highly toxic” threshold. This indicates that roughly one in every forty comments is extremely toxic, supporting the argument that severe toxicity is concentrated in a small fraction of content rather than being a widespread behavior. This aligns with findings on other platforms, where a small minority of content often accounts for a disproportionate amount of the overall toxicity (Guimarães et al., 2020; Mall et al., 2020).
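The arithmetic behind these figures is simple to reproduce. The sketch below assumes a flat list of per-comment TOXICITY scores and uses a hypothetical helper, `high_toxicity_share`; only the 0.8 threshold, the sample size of 10,004, and the 2.5% share come from the text, and the toy scores are invented.

```python
def high_toxicity_share(scores, threshold=0.8):
    """Return (count, fraction) of scores strictly above the threshold."""
    n_high = sum(1 for s in scores if s > threshold)
    return n_high, n_high / len(scores)


# Hypothetical toy scores for illustration only, NOT data from the study.
toy_scores = [0.05, 0.10, 0.12, 0.33, 0.38, 0.41, 0.79, 0.80, 0.86, 0.95]
count, share = high_toxicity_share(toy_scores)
print(count, share)

# Consistency check on the figures reported in the text.
sample_size = 10_004
reported_share = 0.025
approx_count = round(sample_size * reported_share)  # about 250 comments
one_in_n = round(1 / reported_share)                # about one in 40
print(approx_count, one_in_n)
```

The comparison is strict (`>`), matching the phrase "above the 0.8 threshold"; a score of exactly 0.8 is not counted as highly toxic in this sketch.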

However, the low prevalence of highly toxic comments does not fully capture the mechanics of hostility on the platform. A deeper look at the distribution of scores (Figure 7) reveals a more complex dynamic. The chart is heavily right-skewed, with a dominant peak near the low end (scores < 0.2), confirming that the vast majority of comments are not abusive. The long tail, while representing a small number of comments, is the output of a “loud toxic minority” whose severe hostility can disproportionately poison the perceived tone of a discussion.

This dynamic is central to the platform's “structural toxicity.” The shape of the distribution points to a “slippery slope” along which users can gradually escalate from normative disagreement (the main peak) into greater antagonism (the shoulder and tail). The platform's danger is not simply that 2.5% of its content is toxic, but that its structure facilitates this escalatory path, allowing the “loud minority” to constantly emerge from and sow hostility within otherwise standard discussions. The environment is therefore structured not just to tolerate, but to continuously generate pockets of severe antagonism.

Conclusion

This study investigated the production and amplification of toxicity on the Japanese anonymous textboard Talk.jp, moving beyond user-centric explanations to focus on the platform's architecture. The analysis yielded three key findings. First, toxic discourse on the platform's most active news board is not random, but coalesces around predictable and polarizing socio-political themes such as xenophobia, economic anxiety, and misogyny. Second, the platform's core engagement mechanic (the user-driven ikioi ranking system) was shown to be a structural driver of toxicity, systematically amplifying the visibility of the most antagonistic and controversial discussions. Finally, the findings revealed that while negativity is pervasive, highly toxic comments are a concentrated phenomenon, representing only a small fraction of the total discourse.

The central take-home message of this study is that platform design is not a neutral container for speech but an active force in shaping it. The case of Talk.jp demonstrates that even non-algorithmic, user-driven systems can be “toxic by design,” creating a structural incentive for outrage that is just as powerful as the corporate algorithms of mainstream social media. This has significant implications. For platform designers and policymakers, it underscores the need to focus on architectural interventions, such as rethinking engagement metrics that reward conflict, rather than relying solely on reactive content moderation. For researchers, it highlights the importance of analyzing the specific mechanics of platforms to understand why toxic discourse flourishes.

Limitations

This study has several limitations that should be noted. The prevalence of slang, irony, and sarcasm in anonymous textboard discussions can pose a challenge for automated tools, potentially leading to false negatives or positives in toxicity scores. Context-dependent insults may be overlooked by the Perspective API, which, like any AI model, carries biases from its training data (Hosseini et al., 2017). Additionally, this research focused on the “News Plus” board, where socio-political toxicity is likely concentrated; these findings may not generalize to other, more casual boards on Talk.jp. Future research could expand this analysis across different sections of the platform to develop a more holistic view.

Footnotes

Acknowledgments

The author gratefully acknowledges the support of The Telecommunications Advancement Foundation (TAF) for providing a travel grant that enabled the presentation of preliminary findings from this research at the 75th Annual International Communication Association Conference.

Ethical Statement

This study was conducted in accordance with ethical guidelines for internet research. The research relied exclusively on observational data from Talk.jp, a publicly accessible online forum. To protect user privacy, all data were handled in aggregate, and no personally identifying information was collected or stored. The user IDs analyzed are temporary, session-based identifiers assigned by the platform, not by the researcher. As the study was based on publicly available data with no interaction with users or access to private information, the research did not require formal review by an Institutional Review Board (IRB). For the same reasons, a formal informed consent process was not required, as users post content to a public forum with an implicit understanding of its public nature. All stages of data collection, analysis, and reporting were guided by these ethical considerations to preserve the anonymity of the platform's users and to treat them with respect.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by The Telecommunications Advancement Foundation (TAF) (Overseas Travel Expense Grant, February 2025).

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The dataset generated and analyzed for this study is available from the corresponding author upon reasonable request.