Abstract

The proliferation of artificial intelligence (AI) technologies is affecting public service delivery and governance in Africa. There has been increased interest in the legal and ethical aspects of these initiatives. However, at the center of these AI projects is the communication question, in particular, how governments frame AI, communicate about the projects, and engage citizens and other stakeholders in their design and implementation. The communication question is even more pertinent in a digital environment embedded in Western-centric techno-frames. A synthesis of literature shows that communication systems in African public service are embedded in colonial legacies of poor technology infrastructure, inadequate internet access and digital literacy, and language exclusion, among others. Improving communication with citizens in the context of AI-driven public service requires a focus on incorporating African voices into the development and implementation of digital systems to overcome these barriers. Adopting a decolonial perspective and drawing insights from two cases, Mbaza Chatbot (Rwanda) and the Masakhane NLP project (Pan-African), this paper develops a decolonial framework to guide communication about AI, especially in public service delivery.

Introduction

Governments globally are increasingly incorporating artificial intelligence (AI) into public service delivery, albeit at different paces and magnitudes, with the West in the lead. Countries like Estonia, Germany, and France use blockchain technology to manage migration processes (EMN-OECD, 2022). In the United States, an automated risk assessment system is used by the judiciary to determine bail and sentence limits (McKay, 2020). These initiatives have not been without criticism. McKay (2020), for instance, argues that because the algorithms of AI tools like COMPAS, used for automating judicial decisions, do not provide adequate information on the individuals being assessed but rather provide group data, they reinforce existing racial and gender biases, undermining the principle of individualized justice.

Despite these persistent risks and ethical challenges, dominant discourses on AI, especially in government policy documents and legacy media in both the Global North and the Global South, frame AI as “efficient,” “modern,” and “innovative,” summarized under the banner of “AI for development.” This is problematic in various ways. First, it depicts AI as a neutral tool essential for efficiency and governance (Couldry & Mejias, 2019), thus obscuring the power dynamics inherent in the development, use, and regulation of technology. This demonstrates how governments and corporations could depoliticize technology to serve their own ends. This is especially problematic for the Global South, which is grappling with many neoliberal and neocolonial challenges, including digital inequalities, inadequate infrastructure, data coloniality, and algorithmic control (Zuboff, 2019). Moreover, Africa's adoption of AI follows Western-centric guides that exclude local realities, including communicative ones (Birhane, 2020). The result is narratives deeply embedded within modernization discourses, where the success of AI initiatives is often measured by technocentric metrics of efficiency, accuracy, and the extent of uptake and integration of technology, while contextual relevance is left out (Birhane, 2020; Westenberger et al., 2022). Importantly, “by focusing solely on technical metrics, we ignore who benefits from the system and who is harmed or excluded” (Couldry & Mejias, 2019, p. 28). In this sense, the signification of development as it relates to AI adoption in service delivery, especially in Africa, must be interrogated.

In addition, a modernization discourse implies a diffusion-of-innovations communication framework: the framing of local communities as passive recipients, the suppression of community engagement and feedback, and the impeding of local ownership and alternative communication methods (Couldry & Mejias, 2019; Manyozo, 2012). In Sub-Saharan Africa, this communicative imbalance is even more pronounced. The region often serves as a testing ground for AI technologies developed elsewhere, frequently funded by international development organizations and multinational tech firms (Birhane, 2020). The narrative of “AI for development” is again called into question.

Against this backdrop, this research asks the question: In view of AI-driven risks and existing gaps, what would a decolonial framework for communicating AI in public service delivery in Africa entail? Premised on the definition of communication as shared meaning (Craig, 1999), it develops a communication framework that advances principles of inclusivity, epistemic justice, and accountability. The framework also grounds governance models in African epistemologies and cultures to enable countries across the continent to be active and equitable players in the AI ecosystem.

Methodologically, this paper provides a descriptive case analysis of two instrumental cases: Mbaza Chatbot (Rwanda) and Masakhane Natural Language Processing (NLP) project (Pan-African). The cases provide insight into AI governance in Africa through a decolonial communication perspective on dominant frames that shape AI-driven public service delivery in Africa.

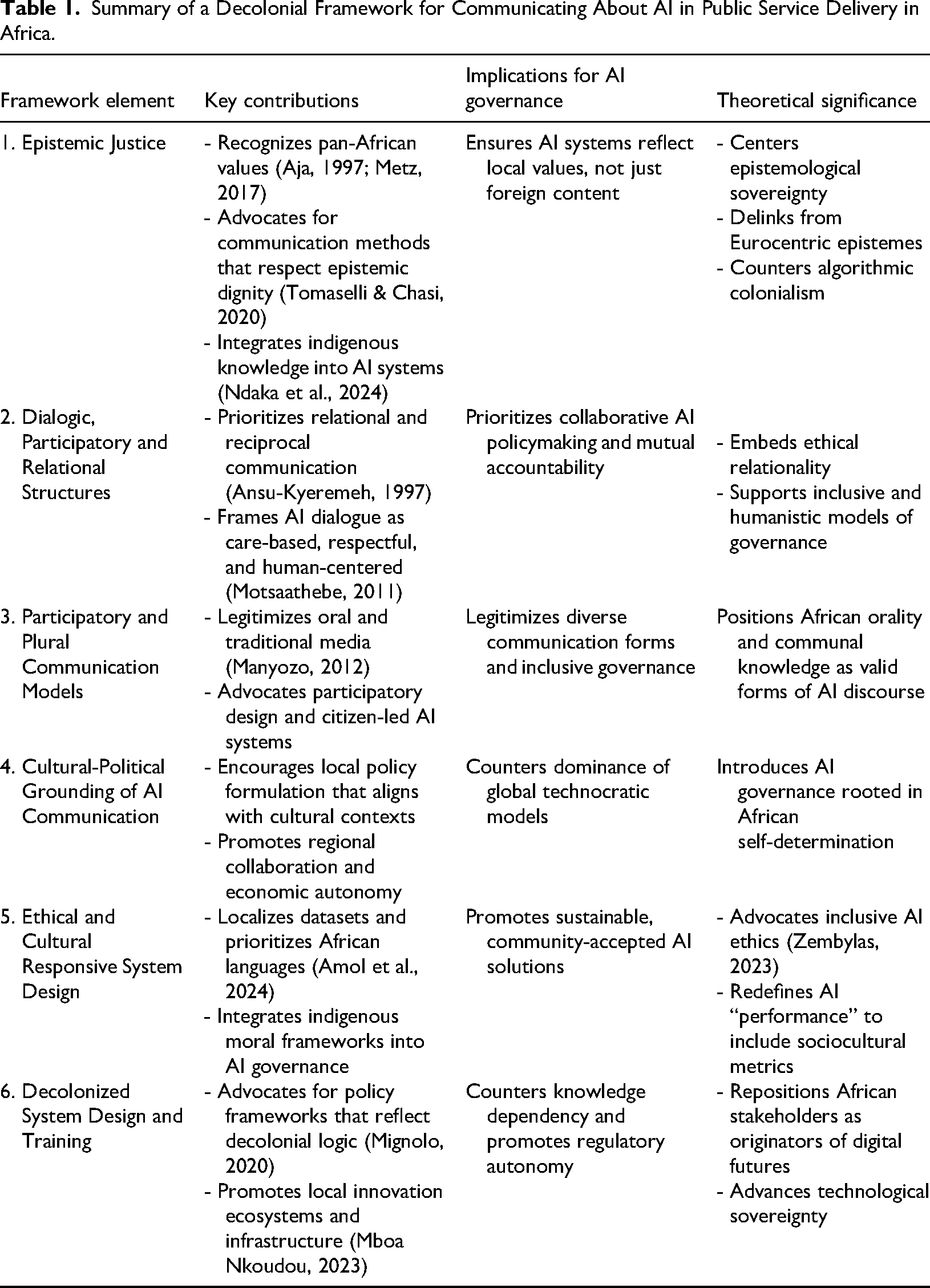

Summary of a Decolonial Framework for Communicating About AI in Public Service Delivery in Africa.

AI as Development: Interrogating a Modernization Discourse

African governments increasingly frame Artificial Intelligence (AI) as a transformative tool for development. National AI strategies in Kenya (2023), Nigeria (2021), and Rwanda (2022) echo a modernization discourse, suggesting that AI will enhance productivity, efficiency, and national progress. Rwanda's AI strategy also describes AI as having the potential to “dramatically improve lives” and accelerate the country toward development goals. Similarly, South Africa's AI policy (see Department of Communications and Digital Technologies, 2024) emphasizes trust and accountability, yet lacks a robust framework for citizen engagement.

The potential of AI-driven public service delivery to contribute to Africa's socio-economic status is not in question; what is problematic is the techno-centric Western lens through which states make sense of and consequently communicate about AI initiatives. A modernization discourse is consistent with a top-down, non-participatory communication framework. Such frameworks often fail to account for local realities such as the varying education levels among citizens and the level of financial inclusion within a nation (Hassan, 2023). Statistics show low broadband penetration rates in African countries such as Nigeria (39%) and South Africa (58%) (Abdulkareem, 2024). Unequal internet access and connectivity is a challenge for communication between citizens and the public service. Older and marginalized populations may not have adequate digital skills to properly utilize the platforms or may not even be aware of their existence (Nalubega & Uwizeyimana, 2024).

Because a Western framework is applied to AI-driven public service, these projects use imported logics, assumptions, and priorities, to the exclusion of the socio-cultural and epistemological contexts of the communities they purport to serve. From a cultural communicative perspective, the privileging of dominant Western languages in AI systems perpetuates linguistic imperialism and semantic biases that reproduce colonial stereotypes against the Global South (Maina et al., 2024). Trained on Western datasets, the AI systems also constitute representation erasure, as observed in automated facial analysis algorithms, which fail to accurately identify the faces of individuals from some phenotypic subgroups (Buolamwini & Gebru, 2018). As an attempt to address contextual gaps, the Uliza Llama model is trained with Kiswahili tokens, allowing it to be culturally and linguistically sensitive to local languages and needs (Amol et al., 2024). However, the overwhelming absence of African languages in mainstream language model-building systems is a striking exclusion.

AI ethics is also an area of communication to which decoloniality debates contribute. In most societies, ethics is embedded in culture, including how people communicate. However, mainstream literature on AI ethics is largely framed within Western philosophical viewpoints (Adams, 2021), which prioritize the individual as an agent. These standards are limited, as they frequently fall short of aligning with communication methodologies in African contexts, where systems of ethics and communication are more dialogic, spiritual, and collectivist-oriented (Manyozo, 2012; Motsaathebe, 2011). In the case of smart city developments in South African municipalities, Kimari et al. (2023) found that alienation from the collection of personal data creates a sense that AI technologies are imposed and ultimately harmful, thus undermining trust in the value proposition of these technologies within South African society.

Additionally, Africa's digital economies remain vulnerable to external exploitation because most of the countries do not have the legal infrastructure to govern AI and other digital platforms. Only 36 out of 54 African countries have established data protection laws, and only 26 have implemented a data protection authority (Salami, 2024). This creates vulnerability to privacy breaches, data extraction, and cybercrime, as well as an inability to bargain in the international market (de Souza et al., 2024). Even when laws are in place, they are usually not enforced in a way that checks the power that many global corporations hold, which can render both the laws and their implementation ineffective.

These gaps reflect how top-down approaches to technology continue to reproduce forms of exclusion, including communicative ones. There is a clear need for a communication framework that not only recognizes these exclusions but also strives to address them.

Case Analysis: Mbaza Chatbot and Masakhane NLP

Mbaza Chatbot Initiative (Rwanda)

Developed by Rwanda's Ministry of ICT and Innovation in collaboration with Deutsche Gesellschaft für Internationale Zusammenarbeit (GIZ), Mbaza is a flagship AI-powered conversational chatbot meant to automate public service delivery, especially in health (Gwagwa et al., 2023). The chatbot uses natural language processing (NLP) and machine learning to improve user interaction over time. Operating in both Kinyarwanda (the central language) and English, it is accessible mainly through Rwanda's e-government portal, Irembo, which enables it to pull up-to-date information from public databases. It can also be accessed via other digital platforms such as WhatsApp (Gwagwa et al., 2023). Lauded for its role during the Covid-19 pandemic, it is depicted by official sources as a tool to democratize access to information, enhance service delivery efficiency, and foster citizen trust in state institutions (GIZ Rwanda, 2022).

By centering Kinyarwanda, the official language used by almost 99.6% of the population in Rwanda, the chatbot addresses the epistemic dominance of global languages like English and French in AI systems (Gimpel & McBride, 2023). This not only enhances accessibility and relevance but also strengthens the chatbot's potential to serve as an inclusive public service solution in the country. Still, questions arise regarding the replicability of this localized approach in other African nations that may face resource limitations, especially since Rwanda was supported by a foreign partner to develop Mbaza.

When first deployed, the chatbot faced many technical limitations, with Kinyarwanda speech-to-text tools scoring a particularly high 60.1% word error rate (WER) (Gimpel & McBride, 2023). An iterative refinement process reduced this WER to 18.9%, showing the importance of iterative improvement in AI tool development in African contexts. The ability of the chatbot to respond to complex and nuanced voice queries, however, remains low (Rutunda et al., 2025). Engaging the open-source SpeechBrain community demonstrates the importance of drawing upon both local and global expertise to address the needs of under-resourced language technologies, while iterative refinement informed by the active participation of Rwandan communities highlighted the necessity of localization for improved technical precision (Rutunda et al., 2025). Nevertheless, while these technical improvements enhanced usability, the initial high error rates reveal that language models continue to struggle with the complex properties of African languages.
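For readers less familiar with the metric, WER is conventionally computed as the word-level edit distance (substitutions, deletions, and insertions) between a reference transcript and the system's output, divided by the number of words in the reference. The sketch below illustrates that standard definition only; it is not the Mbaza team's evaluation code. The function names and the toy token strings are hypothetical, and only the 60.1% and 18.9% figures are taken from the sources cited above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (classic Levenshtein dynamic programme).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(before: float, after: float) -> float:
    """Share of the original error rate that was eliminated."""
    return (before - after) / before


if __name__ == "__main__":
    # Toy placeholder tokens, not real Kinyarwanda transcripts:
    # one substitution (b -> x) and one deletion (d) over four reference words.
    print(word_error_rate("a b c d", "a x c"))  # 0.5

    # Figures reported in the text: 60.1% initial WER, 18.9% after refinement.
    print(round(relative_reduction(60.1, 18.9), 3))
```

Read this way, a WER of 60.1% means that roughly six in every ten reference words required correction, and the drop to 18.9% amounts to removing a little over two-thirds of the original errors.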

The Mbaza Chatbot prioritizes modalities of use preferred by its end-users, predominantly frontline community health workers (CHWs). The chatbot delivers its services in text and voice formats for maximum accessibility and inclusivity. Notably, users of the voice option reported an average satisfaction of 97.5% with the chatbot (Rutunda et al., 2025). These user-preferred modalities were developed in conjunction with Rwandan CHWs and allowed access to a larger range of Rwandans, particularly those in rural areas with varying digital literacy levels (ibid.). This demonstrates how participatory design can ensure that digital services meet end-user needs rather than being imposed from the outside.

The challenges encountered in developing the Mbaza Chatbot reveal how much decolonial work still needs to be done. The project still lacks in-country AI talent and sufficient high-quality, diverse datasets of African languages to advance as far as possible (Gimpel & McBride, 2023). Infrastructure must also be considered, as barriers in rural areas prevent full implementation in all provinces of Rwanda. If opportunities for AI training and development remain limited, and internet access and electrical power remain unreliable, many rural communities in Rwanda and other African countries will be unable to properly access and use localized AI tools to address public service delivery gaps. If these infrastructural problems were addressed, the project could be adopted and used to benefit all Rwandans, regardless of their area of residence.

The case study highlights not only the significant benefits to be had from improving technology through localization of linguistic resources, user-preferred modalities, and iterative refinement led by local communities, but also the constraints that must be addressed in order to decolonize this sector on the continent.

Masakhane NLP (Pan-African)

Masakhane is a community-driven, Africa-centered initiative within natural language processing (NLP) that directly contests the prevailing paradigms of data colonialism by promoting African languages and researchers in NLP development. The inclusion of 144 contributors from 17 African countries (Orife et al., 2020) illustrates this initiative's decolonization efforts, challenging traditional Western-centric norms and dominance in the field.

Though not government-led, the project's attributes can be leveraged by African governments in various public sectors not only to improve service delivery but also to ensure ownership of and trust in AI initiatives.

To begin with, Masakhane's decentralization strategy shifts power away from Western-centric institutions, countering their dominance in defining research agendas and development goals. By prioritizing African participants, this initiative empowers African researchers and disrupts established knowledge hierarchies (Yan & Xu, 2024). However, the question remains whether this model, as a bottom-up strategy, is robust enough to address existing structural inequalities, such as funding biases that benefit Western institutions.

The diversity of participants enhances the relevance and applicability of NLP models by including a range of African-language datasets and ensuring contextual understanding of their semantic nuances (Orife et al., 2020). Masakhane's prioritization of African languages as a counter-hegemonic effort directly addresses the issue of linguistic dominance in the creation and use of AI technology. It therefore promotes African-centric technology that values local community needs. However, the enormous variety of distinct languages spoken across Africa poses limitations when this variety cannot be supported by current levels of computational power. Moreover, the initiative's dependence on open-source collaboration and volunteer participants may limit its ability to scale, highlighting resource limitations that demand further intervention.

Masakhane's datasets and resources are openly published to help redress the relative absence of African language datasets. By producing a large collection of novel datasets, including machine translation and named entity recognition datasets for 28 African languages, this initiative provides opportunities to develop a wide variety of local NLP tasks for a global audience (Orife et al., 2020; Yan & Xu, 2024). However, dataset creation requires constant policy revision at the regional and global scales, necessitating greater international effort in language normalization. Masakhane's work with transfer learning has also yielded empirical findings with real-world impact on the development of models for languages with limited data.

The human validation and annotation processes of the Masakhane network help ensure the quality and contextual relevance of the datasets created within its ranks (Ravindran, 2023). However, these activities are voluntary and therefore subject to burnout in the context of underpaid and unpaid labor, potentially limiting the datasets' value. Policy reform and economic intervention need to consider formalizing the labor of the volunteers who create these tools.

Masakhane's limitations and shortcomings, in spite of all the positive implications of a distributed team, reveal how institutional, structural, and economic inequities still affect African AI endeavors. Funding for research and open-source projects on the African continent still falls short of equitable levels when compared to the funding provided in Europe and the Americas (Yan & Xu, 2024; Orife et al., 2020). Closing this gap, however, can only happen if the world starts to recognize African scientists as leaders in global research standards rather than just collaborators from the global periphery.

Developing a Decolonial Framework for Communicating about AI in Africa

While Africa is not homogeneous, literature shows that there are certain values that cut across communities (Aja, 1997; Metz, 2017). It is on these values and the case analysis that I rely to develop a decolonial framework for AI-driven public service in Africa, not as a prescription, but as a starting point through which stakeholders can imagine an inclusive communication structure.

Mignolo (2011) reminds us that delinking from coloniality requires a focus not only on the content of conversations but also on their terms. This means that the means of communication is just as important as the end. We must therefore interrogate how the meaning of integrating AI into public service delivery is produced, shared, and owned. This, I argue, can be achieved, first, through communication methodologies that seek epistemic justice. For Tomaselli and Chasi (2020), public communication for social change in Africa should be rooted in ethics and care and restore the dignity of citizens. In other words, communication should be healing, especially for a continent that has long suffered exploitation, exacerbated in the digital age. Africa, despite advancing technologically, cannot develop without embracing indigenous knowledge systems as a way of addressing systemic exclusions of language, culture, and even technology.

In a decolonial AI model for public service delivery, therefore, communication channels and processes need to support a framework of communal and indigenous knowledge systems. Ndaka et al. (2024) advocate for community-based governance mechanisms built on trust, transparency, and open communication that begins at the point of system design with would-be users. Indigenous knowledge systems also provide effective solutions to some technology governance challenges, but only if effectively integrated into these systems (Ndaka et al., 2024). Mboa Nkoudou (2023) further suggests that to move past coloniality, Africans must develop their own technology applications. This means that AI systems would be local products, as in the Masakhane NLP case, and not imports, thus increasing adoption of their applications and promoting creativity and innovation, as well as decreasing dependency.

Second, communication structures for engagement on AI-driven service delivery processes and systems should be dialogic and relational. This is a move away from the Western instrumental and transactional technocratic models of AI governance. Motsaathebe (2011) presents Ubuntu philosophy, rooted in human dignity, as a way of ensuring communication is reciprocal and respectful. Tomaselli and Chasi's (2020) ideas on African solidarity as an ethical relationality that requires listening, care, and a shared sense of responsibility among community members also contribute to this framework. This thought is likewise expressed by Ansu-Kyeremeh (1997, p. 105), who posits that “good communication is not just the expression of personal opinion, but what builds solidarity in a group.” Developing a communication framework based on African concepts, such as Ubuntu, ensures that AI systems are inclusive and managed humanely with responsibility toward the parties involved. This, in turn, supports the positive reception, use, trust, and sustainability of AI projects (Ndaka et al., 2024).

Third, an AI public service delivery communication framework should incorporate plural and participatory methods. This entails recognizing oral media forms like storytelling and rituals as legitimate communication modes (Ansu-Kyeremeh, 1997; Manyozo, 2012). Plural and participatory models not only ensure feedback and afford agency to participants but also dismantle power hierarchies (Manyozo, 2012). Public forums that provide a platform for all to participate freely ensure that marginalized demographics are involved. The example of the Mbaza Chatbot, in particular how the project incorporated local feedback from Rwandan CHWs and community members to optimize its voice interface for local use, underscores the efficacy of participatory design principles. In addition, developing the digital interfaces of public service applications in local languages can encourage local users to access digital services, thus improving trust in government services (Akpojivi, 2024).

Fourth, a communication framework for AI-driven service delivery in Africa should be situated within local cultural and political contexts rather than global ones. Communication provides an opportunity to resist and negotiate existing global power hierarchies translated into AI systems. For example, moving towards community-informed governance models and abandoning market-led and top-down governance of AI gives greater assurance of addressing challenges of equity and sustainability. This requires African countries to rethink and develop economic models that serve the communal interest, as well as to promote stronger collaboration among themselves to overcome challenges associated with domination by corporations that are controlled from elsewhere and are exploitative of African markets and economies.

Additionally, system design and model training need to reflect diverse ethical and cultural experiences. For example, the success of algorithmic systems is defined and measured by their overall performance. Varshney (2024) cautions against AI design that is guided and influenced only by performance, as this reflects only particular populations and worldviews. The ethical and cultural considerations for designing socially responsible AI systems need to integrate various African cultural belief systems. Decoloniality advocates and scholars point to the imperative of including educational and participatory epistemologies as a significant intervention in tackling algorithmic coloniality to foster digital sovereignty (Zembylas, 2023). Such strategies, when guided by African values and pedagogy, enhance digital agency and address the digital exclusion problem while creating inclusive and context-specific AI systems with enduring legitimacy (Varshney, 2024). In comparison to other COVID-19 chatbots or medical solutions implemented across the region, the Mbaza Chatbot’s design for the localized context shows that the incorporation of local knowledge in technology design leads to more successful implementation.

Finally, Africa needs to overhaul its AI governance through a decolonial lens. Although the concept of decolonized AI governance has been accepted globally and in Sub-Saharan Africa, this acceptance has yet to yield tangible results in the formulation of decolonization-responsive national policies. Ayana et al. (2024, p. 2) found that out of the 10 countries in Sub-Saharan Africa that have national AI strategies and frameworks, 80% are “decolonization-aware” and one is “decolonization blind.” However, most of the documents do not reflect the concept of decolonization as the rationale for adopting them. As an outlier within the SSA region, Rwanda's proposed strategy does show significant responsiveness in articulating the need for locally adaptive and decolonized governance policies. According to Ayana et al. (2024), the operationalization of the concept is limited by constrained regulatory capacity, by the adoption of general regulatory templates or standards from other global regions, such as the GDPR standards from the EU, and by the limited incorporation of decolonization into AI policies or frameworks across countries.

Some practical approaches for decolonizing AI include localizing and prioritizing AI training datasets in African languages and contexts, as well as re-skilling educational institutions in AI-based technologies to build an educated workforce equipped with the knowledge and skills to develop appropriate systems that work in African languages and address problems peculiar to Africa. This work has already begun, as evidenced by the existing corpus of African-language data documented by Amol et al. (2024). However, there is more to be done in terms of translating the data into the development of African AI systems and digital technology in general. Another approach is for Africa to support infrastructure, technologies, and ecosystems for AI that are independent of Eurocentric dominance and corporate control, to support public services and address societal challenges. Mboa Nkoudou (2023) suggests these approaches enhance legitimacy and sustainability.

African governments and other stakeholders need to be proactive in embracing a decolonized AI governance agenda that will position Africa as a knowledge creator, a policymaker, and an innovator in ways that challenge the epistemic dominance embedded in global digital infrastructures. This can be done by centering African languages, knowledge systems, and ways of innovating. In effect, African societies must be willing to assume leadership of the global technology agenda by ensuring that African perspectives on digital transformation are given prime consideration in the development of technology and in policy discourse around the world. Mboa Nkoudou (2023) posits that this also empowers local actors to innovate and lead in the AI ecosystem, including in the creation of policy and standards, with the ultimate outcome being technological sovereignty. Community-driven data stewardship is one response to the historical underrepresentation of African languages. By relying on the community to collect, annotate, and maintain data resources for AI training, as in the case of the Masakhane NLP project, localized data contexts can be captured (Orife et al., 2020).

Table 1 below provides a summary of the decolonial framework of communicating AI:

Conclusion

The challenges of AI-driven public service delivery can be linked to the way governments communicate with and about AI. This links directly to policy. The decolonial framework for communication developed here addresses these policy gaps, including by embedding AI initiatives within local languages, communication methods, and ethics. Mandating inclusive, multilingual AI policies to address the historical omission of African languages in technology systems, for instance, is critical in addressing the historical and continued exploitation through technology, particularly as it relates to global AI development and the coloniality within it. The case of Kinyarwanda, integrated in Rwanda's Mbaza Chatbot, is an exemplar of the implementation of localized language policy in AI systems. By employing a relatively significant amount of local language data specifically catered to the needs of Rwandans, the chatbot showcases one iteration of language-centered innovation. However, while it challenges the existing power dynamic of global languages such as English, the infrastructural and state capacity that makes such localized language inclusion possible varies drastically across the African continent (Gimpel & McBride, 2023). Consequently, localized, multilingual policies must take into account this diversity in state and infrastructural capacity in order to be scalable to multiple local and cultural contexts across Africa.

Returning to the research question, this study demonstrates that real progress toward decolonized AI in public service is possible when local expertise, participatory design, and epistemic inclusion are central to the development, evaluation, and implementation of AI applications.

Footnotes

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.