Abstract

This paper presents a critique of the argument that artificial intelligence (AI) has the potential to overcome social hierarchies and achieve equality. It contends that this position is misguided insofar as it commits a category error by confusing epistemic automatism with moral agency. This study challenges the separation of instrumental and moral rationality, as well as formal and substantive equality, to demonstrate how, despite popular imagination and representations of neutrality, AI functions to reify existing hierarchical systems while obscuring the range of human choices made about its design and deployment. The idea of AI as a leveler is a secular feat of metaphysical illusion in the tradition that Nietzsche criticized: projecting justice onto a bodiless future of technology. The paper argues that algorithmic optimization cannot replace normative judgment, ethical deliberation, and political action, which are necessary for true equality.

Keywords

Introduction

A familiar utopian argument in contemporary discourse holds that artificial intelligence (AI) can transcend entrenched social hierarchies and usher in an era of unprecedented equality. The idea deserves scrutiny, not least because the rapid proliferation of AI across nearly every aspect of modern life makes it seem irresistible. This paper critically evaluates the assertion that AI will naturally function as a leveling force in society. It argues that this faith rests on a fundamental confusion between instrumental rationality (the cold, calculating efficiency of means to ends) and moral progress. The argument recalls the Frankfurt School's sustained critique of Enlightenment reason, according to which “Enlightenment reverts to mythology” (Horkheimer & Adorno, 2002, p. xviii).

Moreover, this techno-utopianism is informed by a metaphysical optimism, a kind of faith in technological salvation, that suffers from what Morozov (2013) describes as “technological solutionism” (p. 5) and is neither epistemically warranted nor ethically defensible. By unpacking the primary elements of the “equality claim,” this paper shows that the notion of parity it articulates often fails to grapple with the intricate social and political context in which AI is produced and applied. It also contends that these systems are not neutral and can encode and amplify preexisting social biases, producing what O’Neil (2016) calls “weapons of math destruction (WMDs)” (p. 3). As Noble (2018) contends, they are “algorithms of oppression” (p. 15) that cause systematic harm to disempowered groups, especially women and people of color.

Overlooking these dynamics, Benjamin (2019) argues, may lead to a “New Jim Code,” in which the seeming neutrality of technology perpetuates and even justifies racial injustice (p. 6). Finally, a refusal to address these challenges risks damaging the prospects for genuine social justice and unleashing architectures of power and control, such as what Zuboff (2019) refers to as “surveillance capitalism” (p. 8), which consolidate rather than undermine oppressive formations.

Theoretical Methodology Context

Critical theory—especially as formulated by the Frankfurt School—provides a powerful critique of instrumental rationality and the way it shapes technology, including AI. Horkheimer and Adorno claim that instrumental rationality is preoccupied with efficiency and with means-ends thinking, neglecting to reflect on the ethical or social character of the end. This exclusion creates the risk that technological systems, such as AI, may operate as systems of domination and social control rather than as technologies for liberation (Chandran, 2016; Mesquita Sampaio de Madureira, 2009; Smulewicz-Zucker, 2017). These basic criticisms are enriched by postcolonial, feminist, and racial justice critiques that claim that the so-called neutrality of AI is a mask covering and perpetuating existing inequalities. Postcolonial theorists such as Menon (2023) and Ingram (2018) highlight the ways that digital neocolonialism in AI, as evidenced through exclusionary systems and biases, reproduces historical power imbalances, especially in the contexts of regions like Africa. This aligns with feminist and racial justice frameworks (Buolamwini & Gebru, in Marques, 2022; Perdomo, 2024), which emphasize that algorithmic discrimination extends beyond mere technical errors; it reflects systemic biases that often re-victimize, further oppress, and marginalize women and racialized groups (Perdomo, 2024; Eubanks, as cited in Perdomo, 2024).

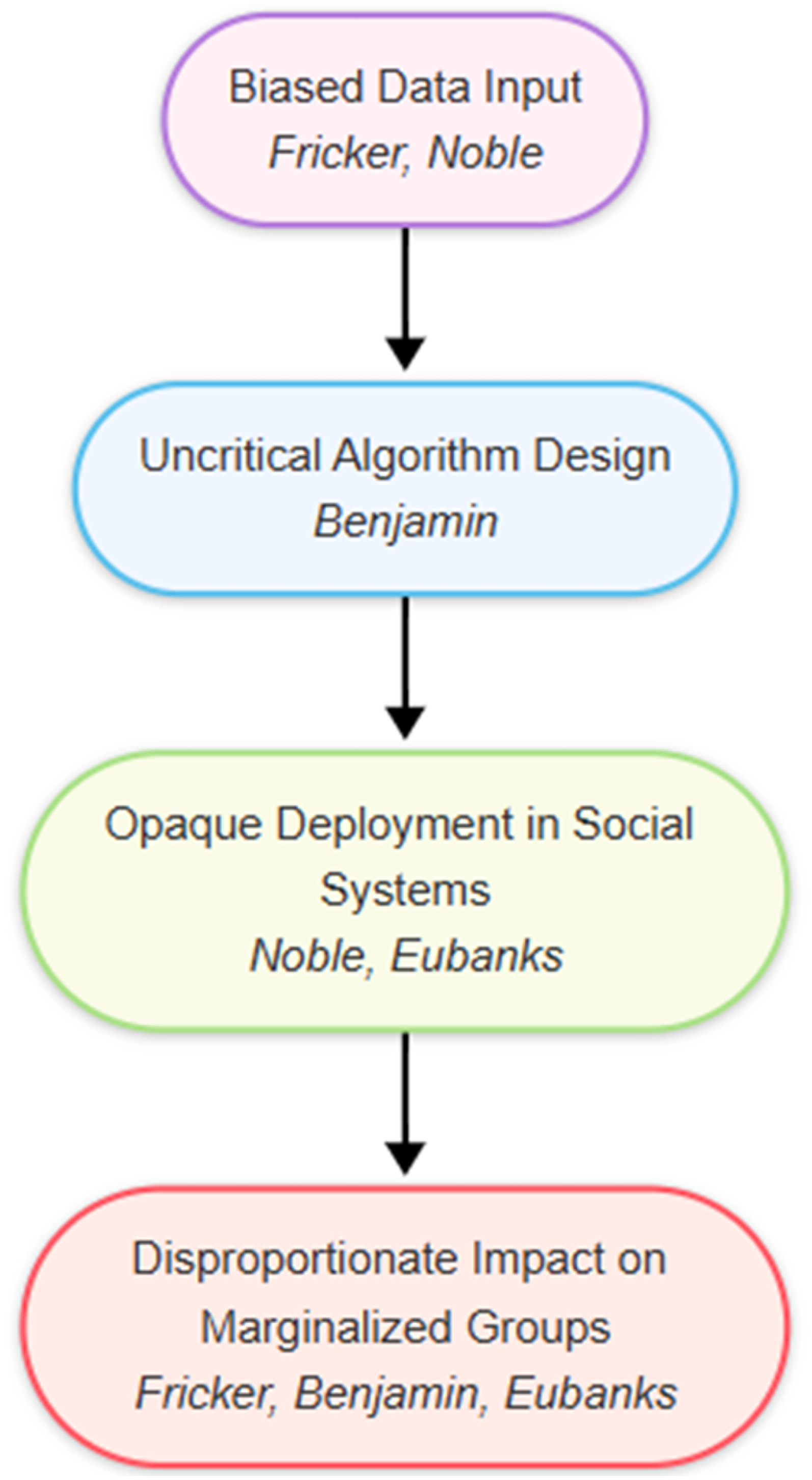

These critical, postcolonial, feminist, and racial justice lenses are synthesized here and complemented by the concept of epistemic injustice. Fricker's (2007) analysis is particularly relevant to AI ethics: what counts as important knowledge in AI development is often shaped by prevailing social biases, leading to technological systems that systematically marginalize or distort a wide range of perspectives (cf. Perdomo, 2024; Rafanelli, 2022). Comparable skepticism toward utopian aspirations for technological egalitarianism appears in the Nietzschean caution that AI solutions may look fairer on the surface while reshaping or worsening the underlying imbalance of power (Blau, 2020). Collectively, these positions make the case for an ethically reflexive and inclusive stance toward AI, one that intentionally acknowledges and addresses embedded biases so that AI technologies serve social justice rather than replicate cycles of domination and exclusion.

Literature Review

The diffusion of AI innovations has stimulated a nuanced debate over whether knowledge-driven inventions will increase or alleviate social inequality. In labor markets, AI-based automation often causes job loss and wage polarization, the latter harming workers with fewer technical skills relative to those with more (Farahani & Ghasemi, 2024; Jia, 2024). Furthermore, AI systems can encode or amplify bias: training data sets reproduce and exacerbate societal inequalities and embed them in algorithm-driven decisions, particularly in hiring, credit scoring, and criminal justice, reinforcing discrimination against vulnerable populations (Alegria & Yeh, 2023; Ferrer et al., 2021; Özer et al., 2024). The unequal distribution of AI-induced benefits and lingering digital divides suggest that gains will remain skewed toward wealthier regions and people, widening the gap between haves and have-nots and between haves and more-haves (Akter, 2024; Okolo, 2023; Tsvyk & Tsvyk, 2022). AI could also improve the efficiency of social processes and the equity of access to resources in domains like education, healthcare, and finance, but only if ethical design and governance are intentionally pursued and the discrimination embedded in AI's algorithms is confronted (Farahani & Ghasemi, 2024; Zajko, 2022). Recent analyses underscore the need to diversify AI development teams and to go beyond representation toward power redistribution as the lever for shaping socially just and locally relevant technological systems (Joyce & Cruz, 2024; Okolo, 2023; Zajko, 2022). The literature suggests that inclusive governance and design must be intentional: by default, technologies risk repeating oppressive dynamics of power and inequality, but with intervention they can contribute to equity and inclusion.

The incorporation of AI into labor markets has yielded significant sector-specific and demographic effects, exacerbating job polarization and increasing economic inequality. Empirical data indicate that AI-driven automation disproportionately affects low-skilled workers, with a reported 23.4% reduction in positions within traditional middle-skill sectors alongside a 31.7% rise in highly specialized roles associated with AI development and digital transformation (Brahmaji, 2024). These impacts are unevenly distributed across industries: on the one hand, industries such as finance and healthcare gain directly from AI through increased efficiency and productivity; on the other hand, blue-collar manual jobs, especially in manufacturing and logistics, face a higher threat of displacement owing to automation (Song, 2024; Sultana et al., 2024). Demographic heterogeneity is also stark: women and highly educated workers both face higher probabilities of displacement and of role complementarity, depending on the composition of job tasks and sectoral exposure (Pizzinelli, 2023).

The socioeconomic implications of this technological shift are considerable. It deepens the divide between high-skilled and low-skilled workers and increases wage polarization, a particular concern for low-income and middle-income countries that lack full access to digital infrastructure and new forms of economic opportunity (Farahani & Ghasemi, 2024; Jia, 2024). Minority and vulnerable groups are especially exposed to these threats because they face barriers to upskilling and employment, which compounds existing inequalities (Fan, 2024). In domains such as education and healthcare, AI adoption can make services more efficient and accessible, but it can also deepen digital and algorithmic divides where access to technology and digital literacy are insufficient (Farahani & Ghasemi, 2024; Patil, 2025). Policy responses thus underscore the pressing need for proactive reskilling and upskilling programs that help displaced workers move into emerging jobs (Brahmaji, 2024; Fan, 2024). Such initiatives must be accompanied by equitable systems for deploying AI and providing access to training (Güngör, 2025; Sultana et al., 2024). Realizing AI's transformational potential for inclusive growth will depend on robust ethical governance and on targeted policies that close adaptation gaps and prevent further marginalization.

The inequities generated by AI operate in complex ways and lend themselves to rich interdisciplinary critique. Pioneering research by O’Neil (2016), Friedman (2005), and Noble (2018) explains how algorithms can reinforce historical injustices, in particular along lines of race and economic status. Feminist, postcolonial, and critical race theories also reveal the epistemic injustices and the silencing of non-dominant voices in the deployment of AI (Marques, 2022; Noble, 2018; O’Neil, 2016; Zajko, 2022). Empirical evidence shows that AI systems are often biased owing to limited diversity in the data or flawed learning procedures, which contributes to discrimination based on race, gender, age, and other characteristics (Fazil et al., 2024; Ferrer et al., 2021; Jain & Menon, 2023). These algorithmic systems increasingly act as gatekeepers in realms like credit scoring and policing, where they reproduce digital discrimination and the larger social hierarchy (Ferrer et al., 2021; Zajko, 2022). Interdisciplinary critiques question the apparent neutrality of AI technologies and emphasize the limitations of some fairness-enhancing interventions, calling instead for participatory design, focus group strategies, democratic deliberation, and justice-based governance (Marques, 2022; Weinberg, 2022).

The literature also points to sector-specific effects, such as job loss and wage polarization, that disproportionately affect individuals of lower socioeconomic status. At the same time, it recognizes the potential for AI to mitigate inequities in areas such as healthcare and education if developed and governed responsibly (Farahani & Ghasemi, 2024). Despite these insights, the field continues to be dominated by studies conducted in Western, high-income settings, with too little comprehensive inquiry from the Global South. This gap highlights the need for more place-based, longitudinal, and inclusive research that can help contest entrenched power relations and structural injustices (Ferrer et al., 2021; Weinberg, 2022; Zajko, 2022). Overall, while AI poses both risks and opportunities, ensuring equity will require researchers, developers, and policymakers to prioritize, explicitly and from the outset, fair, accountable, and human-centric design and governance of AI systems at all levels.

The Equality Claim: A Critical Examination

Advocates of the idea that AI can serve as a force for equality commonly make two central claims, often echoing intellectual luminaries such as Friedman (2005), who suggested that technology flattens the world by making its benefits available regardless of where people live, what they do, who they are, and how much they earn.

Expanded Access: AI enhances access to education, knowledge, and economic opportunities, promoting inclusivity for individuals from diverse backgrounds.

Automated Objectivity: AI replaces biased human judgment with automated decisions, potentially eliminating discrimination in areas such as hiring, lending, and the criminal justice system.

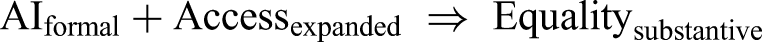

Despite their apparent reasonableness, these claims warrant further scrutiny, particularly in light of their underlying assumptions and inherent limitations. The following equation captures the flawed logic that often underlies the so-called “equality claim,” according to which algorithmic equality implies social justice, as if every form of procedural equality were equivalent to socially desirable and acceptable outcomes.

Interpretation:

$\mathrm{AI}_{\text{formal}}$ → AI operating through formal, rule-based algorithms, characterized by its inherent logic and mathematical precision.

$\mathrm{Access}_{\text{expanded}}$ → Increased access to information, resources, or opportunities facilitated by AI technologies.

$\mathrm{Equality}_{\text{substantive}}$ → Genuine, context-sensitive social equality that addresses historical and structural injustices (Figure 1).
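In symbols, the inference under critique can be sketched as follows. The notation is an illustrative assumption built from the three terms defined above, not a formal result: the first line states the equality claim, and the second states the position this paper defends.

```latex
\begin{align*}
\text{Equality claim:}\quad
  & \mathrm{AI}_{\text{formal}} + \mathrm{Access}_{\text{expanded}}
    \;\Rightarrow\; \mathrm{Equality}_{\text{substantive}} \\
\text{Position defended here:}\quad
  & \mathrm{AI}_{\text{formal}} + \mathrm{Access}_{\text{expanded}}
    \;\not\Rightarrow\; \mathrm{Equality}_{\text{substantive}}
\end{align*}
```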

Figure 1. Why Formal AI Access and Expanded Distribution Do Not Lead to Substantive Equality.

Equality Models: Formal versus Substantive.

First, the concept of $\mathrm{Access}_{\text{expanded}}$ is insufficient. As the economist Sen (1999) has pointed out, justice is less about resources than about promoting real “capabilities”: the actual ability to achieve what a person values. Access to a learning platform means little if a person lacks the “capability” to use it, which depends on factors such as prior education, available time, and cultural capital (p. 75). AI-powered access is therefore more likely to serve those already equipped to benefit, magnifying disparities rather than leveling them.

Second, the notion that $\mathrm{AI}_{\text{formal}}$ operates objectively is a dangerous fiction. These systems are built on existing data, which is a “fossil record of past discrimination” (O’Neil, 2016, p. 21). An algorithm designed to predict job success from the records of a company with a history of discriminating against certain groups of workers will learn and then automate that discrimination under the guise of technical objectivity. As Benjamin (2019) observes, such systems can produce a “gloss of progress” over existing inequality, rendering it even more resistant to contestation (p. 54).
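The mechanism can be made concrete with a toy sketch. Everything in it is hypothetical: the groups "A" and "B", the record counts, and the frequency-counting "model" are illustrative assumptions, not data or methods from any cited study. It shows only that a predictor fitted to skewed historical decisions reproduces the skew for equally qualified candidates.

```python
from collections import defaultdict

# Hypothetical historical records: (group, qualified, hired).
# All candidates are equally qualified, but past decisions favored group "A".
history = (
    [("A", True, True)] * 80 + [("A", True, False)] * 20
    + [("B", True, True)] * 40 + [("B", True, False)] * 60
)

def fit_hire_rates(records):
    """Learn the historical hire rate per (group, qualified) by counting."""
    counts = defaultdict(lambda: [0, 0])  # key -> [hired, total]
    for group, qualified, hired in records:
        counts[(group, qualified)][0] += int(hired)
        counts[(group, qualified)][1] += 1
    return {key: hired / total for key, (hired, total) in counts.items()}

def predict(model, group, qualified, threshold=0.5):
    """Recommend hiring when the learned rate meets the threshold."""
    return model.get((group, qualified), 0.0) >= threshold

model = fit_hire_rates(history)
print(model[("A", True)], model[("B", True)])   # 0.8 0.4
print(predict(model, "A", True), predict(model, "B", True))  # True False
```

The sketch contains no explicit rule about group membership; the disparity enters entirely through the historical labels, which is why it can pass for a neutral, data-driven outcome.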

Therefore, the equation is not valid because it does not take power into account. As Fricker (2007) writes on epistemic injustice, power relations govern whose knowledge counts as valuable and who is recognized as a legitimate knower. An AI system is not built to understand these power dynamics, so it cannot correct for them. It will merely formalize and intensify them, moving not toward a justly imagined equality but toward the efficient and encoded perpetuation of injustice. Equality worthy of the name requires deep historical and contextual understanding, as well as an awareness of how power is exercised, none of which can easily be captured in a formal algorithm.

The Category Mistake: Moral Agency and Algorithmic Optimization

The fundamental mistake lies in assigning moral properties to an entity that lacks them. Ryle (1949) introduced the term “category mistake” to explain errors such as asking where “the university” is after having been shown all the colleges, libraries, classrooms, and offices; the university is not another building in the set but rather a description of how the listed buildings are organized (p. 16). Treating AI as a moral agent is a category mistake in this sense because moral agency is not a property that an algorithm can possess; it belongs to a different conceptual category altogether.

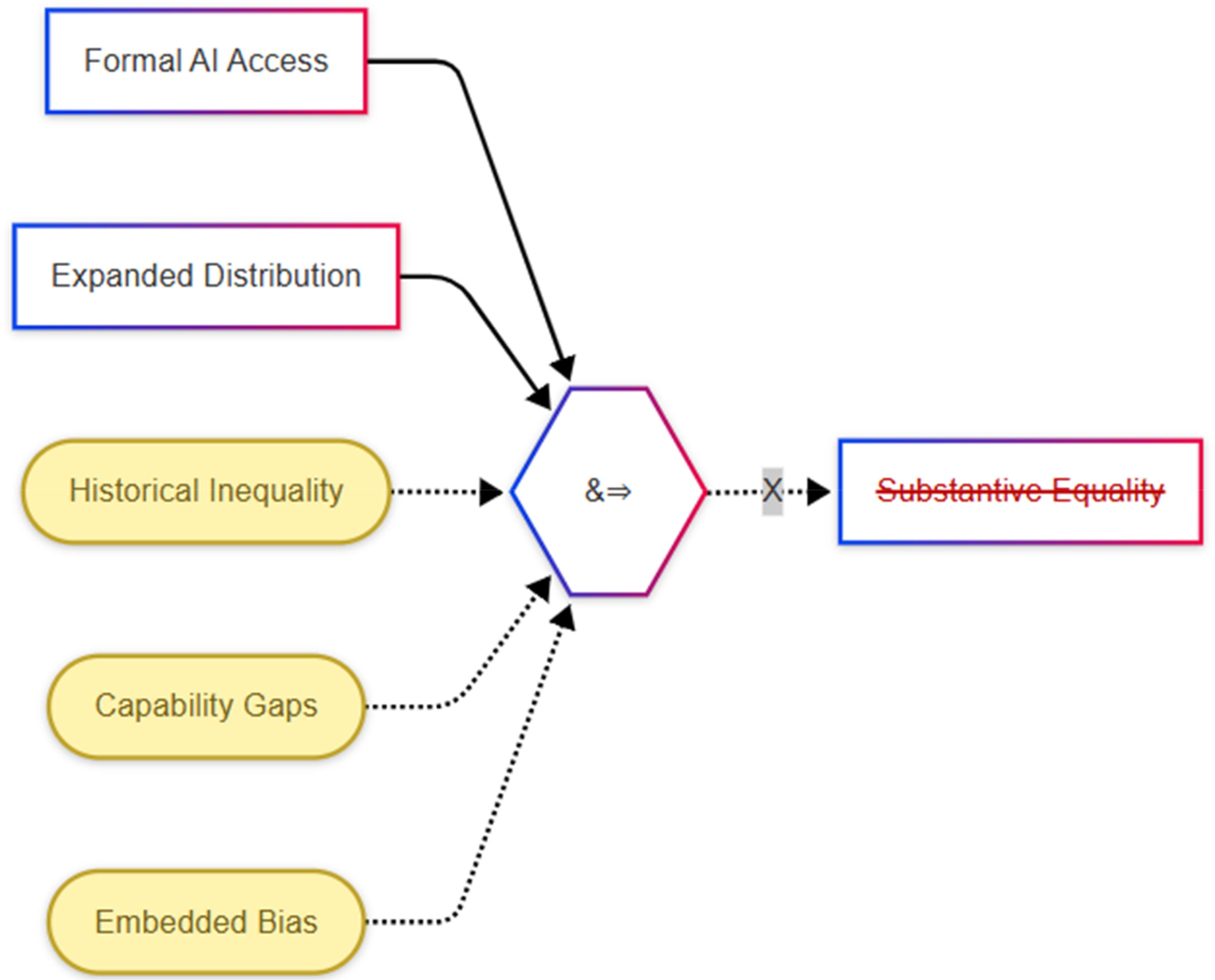

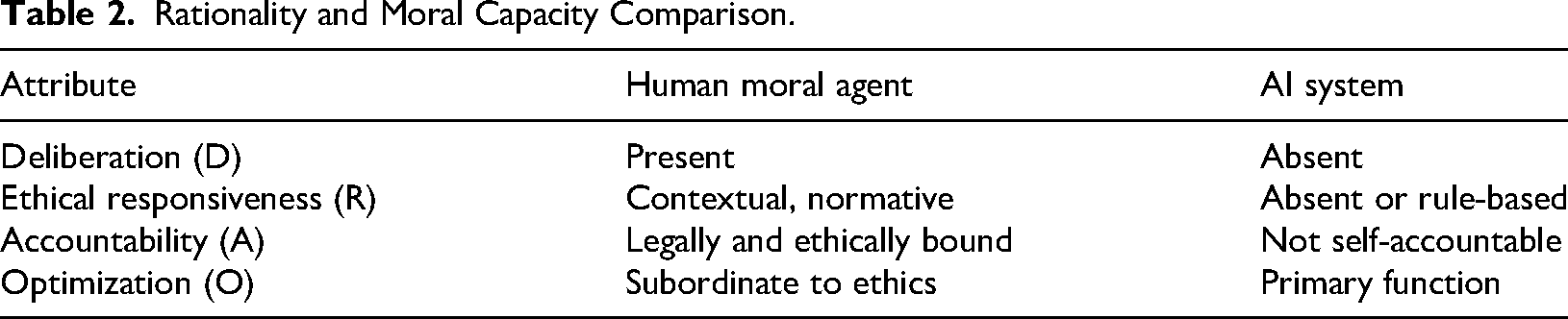

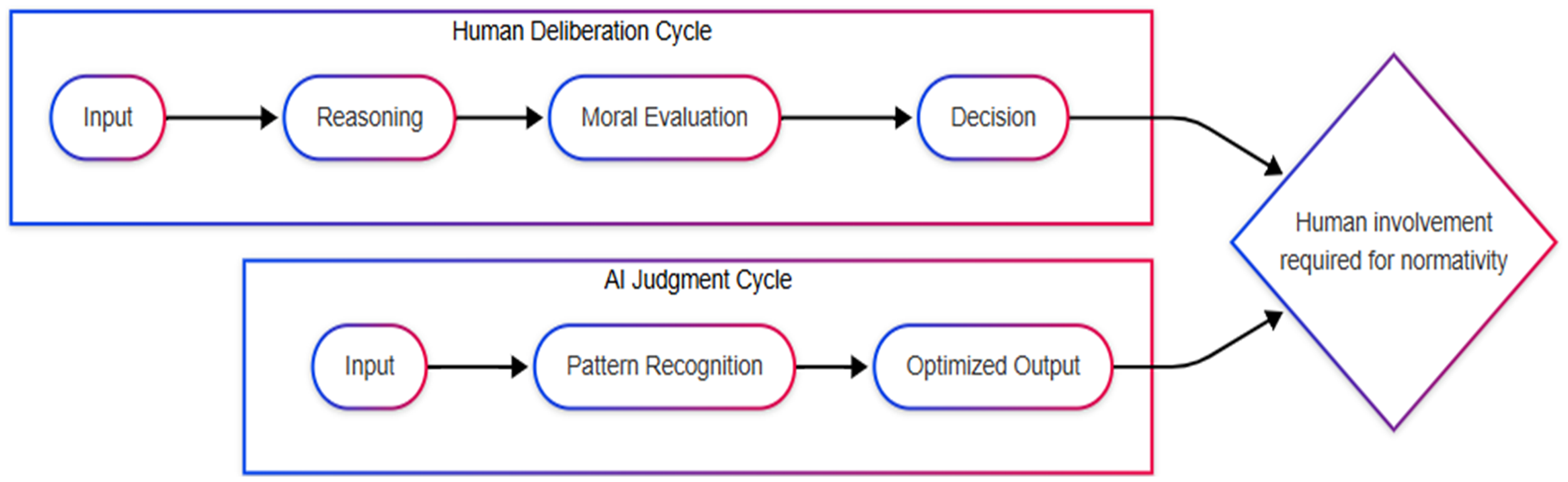

Moral agency comprises a set of capacities, including the ability to reason about ends (Wertrationalität, or value-rationality) and not just to optimize means (Zweckrationalität, or instrumental rationality), a distinction drawn by the sociologist Max Weber (1978, pp. 24–26). It also requires first-person, phenomenological experience: consciousness, feeling, and subjective involvement in the world. As the philosopher John Searle (1980) famously argued in his “Chinese Room” thought experiment, a system can manipulate symbols according to a formal list of rules (the syntax) and generate the correct answer without understanding what those symbols mean (the semantics). No matter how advanced they are, AI systems function at the level of syntax. They are designed to optimize particular outputs given formal inputs and are not endowed with semantic understanding, authentic moral reasoning, or ethical judgment of their own (Figure 2 and Table 2).

Figure 2. The Category Mistake: Mistaking Optimization for Moral Agency.

Table 2. Rationality and Moral Capacity Comparison.

Moral Concepts Misapplied to AI.

We can articulate this critical distinction more analytically using the following equations:

Where:

D: deliberation over ends (the capacity to reflect on and evaluate different goals and values)

R: responsiveness to reasons (moral understanding and the ability to be guided by ethical considerations)

A: accountability (the willingness to accept responsibility for one's actions and decisions)

O: optimization of given tasks based on data (the primary function of AI systems)
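One way to write the contrast, offered as a sketch under the definitions of D, R, A, and O rather than as established notation: moral agency requires all three capacities jointly, whereas an AI system is characterized by optimization alone.

```latex
\begin{align*}
\text{Moral agency} &\;\Longleftrightarrow\; D \wedge R \wedge A \\
\text{AI system}    &\;\Longrightarrow\; O \quad \text{(without } D,\ R,\ \text{or } A\text{)}
\end{align*}
```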

Category Mistake Defined:
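Using the symbols D, R, A, and O as defined above, the category mistake can be sketched as the unwarranted step from optimization to the capacities of moral agency. The formulation is an illustrative assumption, not a formal definition.

```latex
\[
\text{Category mistake:}\quad
\text{inferring } (D \wedge R \wedge A) \text{ from } O,
\quad\text{although}\quad
O \;\not\Rightarrow\; (D \wedge R \wedge A)
\]
```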

Such a framework reveals the deeply mistaken attribution of moral agency to AI systems that are at their core devices for optimization, not moral consideration. AI systems are complex instruments that can be used to help humans in decision-making and reaching certain goals. However, we should not view them as autonomous moral agents capable of displacing human judgment and ethical responsibility.

When we see “justice” or “equality” in AI, these appear only because we put them there (i.e., they are engineered in response to human needs and ethics), not because they are there per se. Humans determine the purpose, design the technique to achieve it, and then interpret the results. Hence, the moral consequences of AI deployment should be conscientiously considered and regulated by human agents who possess moral judgment and are ethically responsible.

The Role of Human Agency in Technological Design

Human agency is fundamental to the design and use of AI systems, which are imbued with human judgment, intentionality, and decision-making and which reflect the goals, values, and ethical frameworks legitimized within them (Popa, 2021). The decisions, aims, and desires that enter the design and implementation process significantly influence the resulting AI, which makes human action and freedom an inherent part of technological responsibility. AI systems that are thoughtfully designed with human objectives and values in mind can be aligned with ethical norms and social goals and can lead to fair and desirable outcomes (Popa, 2021). On a teleological view, AI's pursuit of goals maps at the most basic level onto human-defined objectives, and hence humans bear ultimate responsibility for AI's actions. This responsibility matters even more when addressing bias and inequality, because human decisions about data selection, algorithm creation, and deployment have led to AI systems that perpetuate or exacerbate systemic discrimination, most notably against oppressed groups (Ayyad, 2022; Farahani & Ghasemi, 2024; Murphy, 2023; Tariq, 2025). For example, gender bias often arises because AI models reproduce biases in the training data, which is why gender balance and gender equity should be explicitly built into design (Franzoni, 2023).

Meeting those challenges requires that AI continue to be shaped by human-centered design. This includes involving a wide range of stakeholders at every stage, from data collection and preprocessing through algorithmic tuning to post-hoc bias correction, so that inclusivity, transparency, and accountability are built in (Chen et al., 2023; Pulivarthy & Whig, 2025). Reliable data auditing, algorithm re-tuning, and ensuring fair testing conditions for all are standard practices for AI systems that are meant to operate justly (Pulivarthy & Whig, 2025; Tariq, 2025). More generally, responsible AI is not neutral with respect to social and normative responsibilities; these must be taken on purposefully and proactively. Designers and developers are expected to stay alert to the likely consequences of their choices and to act accordingly, both to avoid harm and in the service of social justice (Murphy, 2023; Popa, 2021). However, human agency is itself fraught: human biases may enter the construction and assessment of AI systems, and human interests may marginalize others in ways that are downplayed or even ignored (Zhu et al., 2024). Further research, reflective governance, and open conversation are therefore needed to advance AI-based technologies that benefit people.

The Nietzschean Objection: Beyond Technological Eschatology

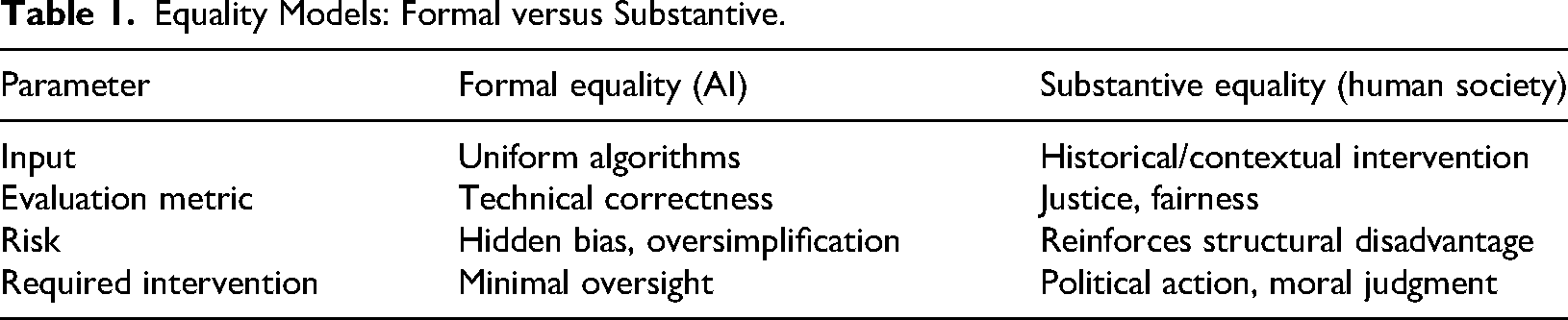

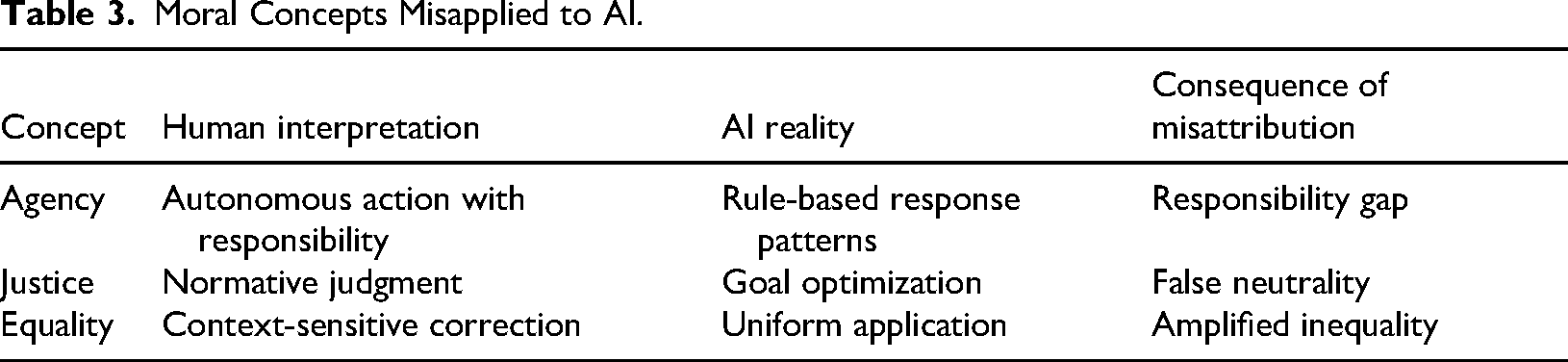

Nietzsche's critique of the “true world” idea in Twilight of the Idols is of particular interest in this context. Nietzsche (2005) describes the historical process by which this metaphysical fiction “finally became a fable,” concluding with the decisive step: “With the true world we have also abolished the apparent one!” (p. 11). What he means is that by eliminating the ideal, we are brought face-to-face with the messy, contingent reality in which we live. The world in which AI is expected to cure systemic bias is the very world that has failed to do so. It is a metaphysical blunder that merely rebuilds the “true world” in silicon, moving ethical work into technology and thus displacing human political responsibility (Figure 3).

Figure 3. From Metaphysics to Machines: AI as the New “True World.”

It could, in that sense, be considered a secular eschatology. Political theorist Schmitt (2005) famously claimed that “all significant concepts of the modern theory of the state are secularized theological concepts” (p. 36). The dream of a godlike, objective AI that will resolve human disputes and save society from its sinful past is the secular heir to the vision of an all-knowing, impartial god. The result is not technological realism, however, but what the historian Nye (1994) refers to as the “technological sublime”—a quasi-religious wonder that imagines transcendent and redemptive possibilities in our handiwork and thereby diverts attention from their actuality and situatedness in the political and social aspects of history (p. 27).

This anticipation results in what Arendt (1958) dreaded as the substitution of political action by pure administration. Action, Arendt tells us, is our unprogrammable, pluralistic, and quintessentially human power to initiate something new together in the public space. When we substitute automated systems for critical social decisions, we are not engaging in politics; we are abdicating our role in the political process. What we are doing is erecting a form of bureaucracy, or, in Arendt's expression, “rule by nobody” (p. 40). Indeed, this picture portrays AI as a messianic figure that can be applied to address the centuries-old problems of human inequality. This claim is not just epistemically unfounded; it is ethically and politically catastrophic. It absolves people and institutions of their most fundamental responsibilities: the messy, complex, and endless process of collectively achieving justice.

Ethical Governance of AI: Political Will and Institutional Reform

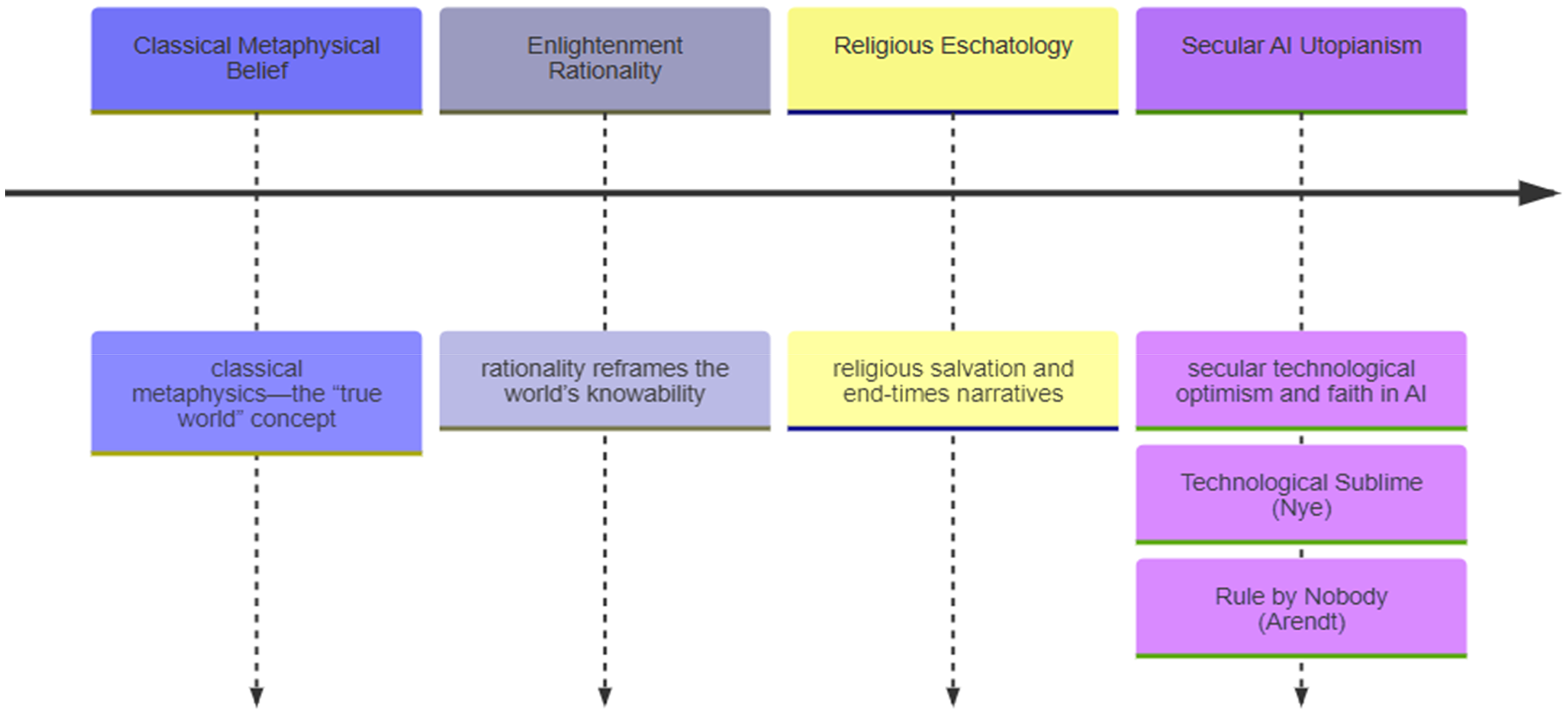

One might object that this critique is overly fatalistic: when regulated, made transparent, and held accountable, AI can be a means of advancing equality and social justice. This “governance-as-solution” argument is partly correct. It is essential to establish robust ethical requirements, such as those outlined in the EU's AI Act, to mitigate the risks associated with AI and to align AI systems with social values.

However, this position often underestimates how demanding its requirements are. First, it assumes a degree of transparency that may not be technically feasible or cost-effective. In The Black Box Society, Pasquale (2015) explains that a key characteristic of many influential algorithms is their opacity: they are often intentionally veiled to protect intellectual property or to block those who might try to game them. Even when source code is publicly available, the complexity of deep learning systems may be so high that their own designers cannot understand their inner logic, a phenomenon referred to as the “black box” problem.

Second, this perspective regards ethics as an add-on technical layer to a system, overlooking the fact that technology is intrinsically political. Technological objects, as Winner (1980) famously pointed out, are never neutral; they are made to function, on purpose or not, in such a manner that they yield specific social effects. A decision about what data to train on or what variables to optimize is not a technical decision but an ethical and political one, and one that makes explicit the values already present in the system's architecture. AI is still ethically inert; it will transmit and magnify whatever values it has been programmed to embody (Figure 4).

Figure 4. Components of Ethical AI Governance and Their Limits.

Moreover, holding such technology accountable for the power it wields is essential for good governance. Doing so requires sustained political will and fundamental institutional reform, pursued against the pressure of a powerful emergent economic logic that Shoshana Zuboff (2019) has called “surveillance capitalism.” The imperative of data extraction and behavior prediction at the heart of this logic often has nothing to do with public accountability or user empowerment (p. 202). Hence, ethical AI cannot be just about “ticking the box” and complying with regulations; it must also scrutinize these power arrangements and commit politically to challenging the institutional arrangements that create inequalities in the first place.

Policy Implications and Recommendations

The development of AI has posed immediate ethical and regulatory challenges and underlined the urgency of a comprehensive policy framework. Policymakers acknowledge that ethical AI is advantageous, and they should begin adopting comprehensive regulations that make ethical guidelines a requirement for new algorithms. Practical principles, such as UNESCO's “Recommendation on the Ethics of AI,” provide universal considerations to inform a regulatory framework grounded in cultural, educational, and scientific values (Morandín-Ahuerma, 2023). International precedents include the European Union's General Data Protection Regulation (GDPR), which, although based on the principles of transparency and accountability, focuses primarily on personal data protection. Additionally, regulatory models in the United States and Asia reflect the differing philosophies of their respective regulators (Kashefi et al., 2024). Responsible governance will require adaptive and flexible policies that embrace multi-stakeholder participation (i.e., input from academia, industry, and civil society) to cope with ethical conflicts and foster responsible protocols (Kashefi et al., 2024). Public trust is especially crucial, as studies indicate that public opinion on AI is mixed and includes concerns about privacy, bias, and discrimination (Robles & Mallinson, 2023). Actively involving communities through open conversation and transparent policy processes can help build trust and design governance frameworks that reflect social values and emphasize procedural fairness and symbolic representation in public institutions (Robles & Mallinson, 2023).

Institutional and international collaboration is essential to harmonize AI laws and address global ethical challenges. Technical foundations for ethical AI are provided by standard-setting organizations such as ISO (International Organization for Standardization), IEC (International Electrotechnical Commission), and IEEE (Institute of Electrical and Electronics Engineers), and by international forums that focus on collaborative policymaking to balance benefits and risks (Ashraf & Mustafa, 2025; Kashefi et al., 2024). These bodies propose concentrating on interpretability, explainability, bias mitigation, privacy, and environmental aspects of AI systems, such as energy-efficient methods (Lamberti, 2024). The trade-off between innovation and responsibility is made more difficult by overlapping jurisdictions and enforcement complications. A notable trend is the global convergence of AI governance and the ascendancy of soft law and self-regulation (Ashraf & Mustafa, 2025). But industry self-regulation alone can undermine accountability, so effective approaches should integrate public oversight with organized private-sector involvement (Hadi & Jasim, 2024). Work on AI ethics requires ongoing dialogue between stakeholders and researchers so that new ethical problems are addressed as AI advances and policymaking keeps pace with technological change (Ashraf & Mustafa, 2025). In the end, establishing robust AI governance frameworks hinges on monitoring the broader regulatory context and tempering innovation with ethics, ensuring that the manner in which AI is used aligns with both societal norms and the rule of law.

The Political Economy of AI: Embedded in Platform Capitalism

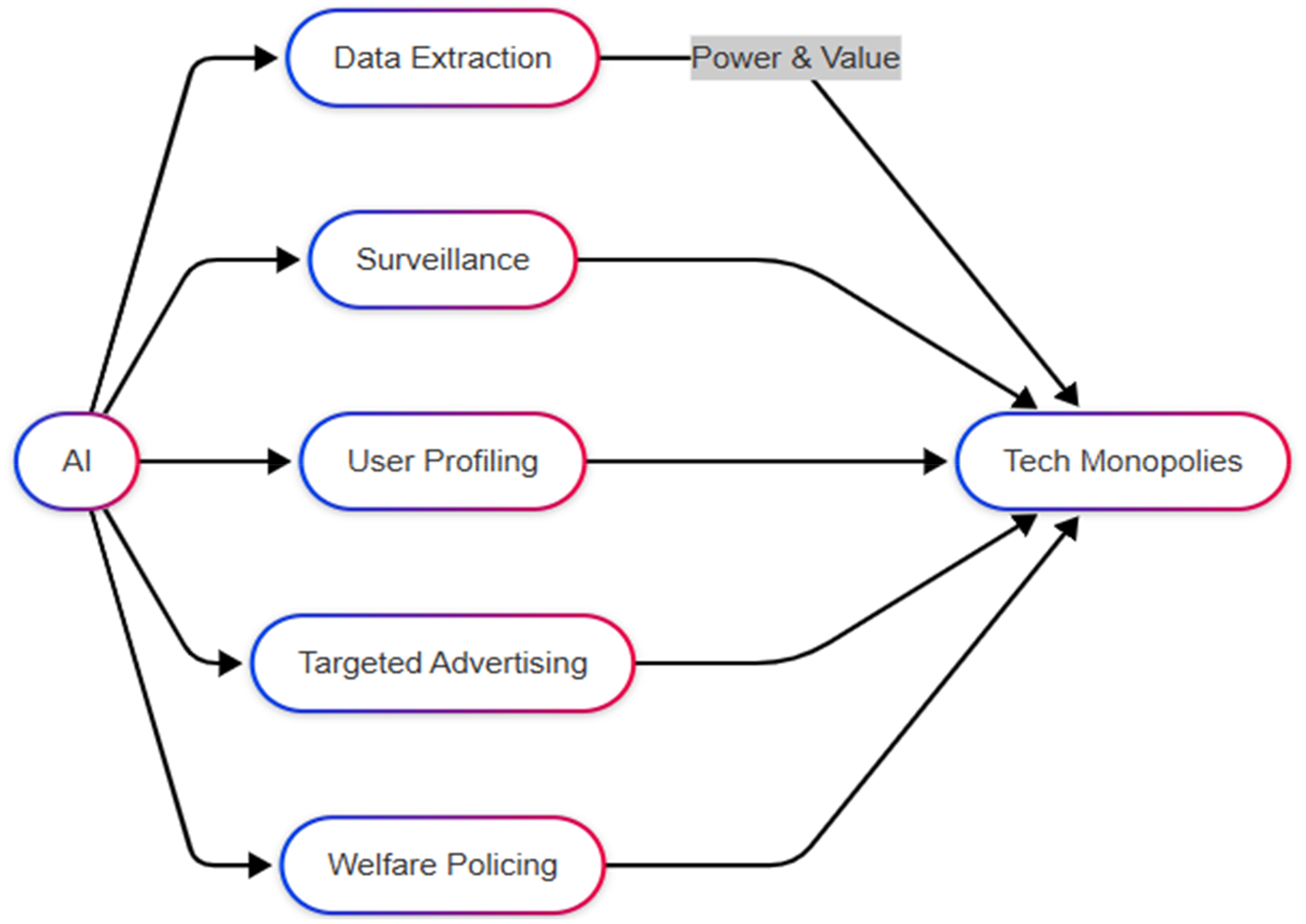

The global political economy is another complicating factor in the development of AI. Rather than growing in a neutral space, AI represents the driving agency of “platform capitalism,” as Srnicek (2017) terms it (Figure 5).

Figure 5. AI Embedded in Platform Capitalism.

The model is predicated on data extraction and control, building expanded network effects to create a monopoly, and utilizing digital infrastructures to mediate social and economic life (p. 43). These very material circumstances impose a tremendous gravitational pull on the project of AI's development, drawing it into, at best, a mixed bag and, far more likely, an oppositional relation to the ends sought by social justice.

Data Monopolies, A Core Feature of this Economy, Directly Produce Biased Systems

Data are not a natural resource, and there is no such thing as a raw data set: data are a social product, gathered from particular populations, and they carry the biases of the societies that produce them. As a few monopolistic platforms aggregate this data, their user bases (which are not representative of global humanity) are often implicitly taken as the “normal” model. As Noble (2018) shows, the business logics that animate these platforms ensure that search algorithms, for example, are optimized for profit rather than truth or equity. The result is a “logic of skewed results, misrepresentation, and invisibility” for marginalized groups such as women of color (p. 64) (Figure 6).

Epistemic Injustice Pipeline in AI Deployment.

Moreover, the logic of extraction of platform capitalism drives surveillance programs that disproportionately impact the vulnerable. In her seminal book Automating Inequality, Eubanks (2018) offers a sobering account of how AI is used to build a “digital poorhouse.” These policing tools, which limit access to welfare, housing, and health services, serve to discipline and control the poor, making possible high-tech forms of containment that represent “a powerful combination of private corporate interests and public-sector austerity” (p. 17).

Even a hypothetically well-designed algorithm remains subject to the material conditions that bind it. The idea of AI functioning as a standalone leveler is unrealistic, because its foundations were laid from the outset in a sui generis capitalist paradigm of extraction. The drive for profit, the vendor's relentless capture of every speck of life as behavioral data, and the vendor's concentration of power over users all bend AI's course. The almost inevitable result is that AI is often turned against us as a tool that, among other things, optimizes the existing distribution of power.

Empirical Cases

Algorithmic Bias in Hiring (e.g., Amazon's AI Recruiting Tool)

The issue of algorithmic hiring bias came to public attention when Amazon scrapped its AI hiring tool over concerns that it discriminated against résumés that included the word “women's” (Dastin, 2018). Amazon's AI recruiting tool, which was biased against women, and the extensive adoption of AI in résumé screening more broadly have affected millions of applicants worldwide (Chang, 2023; Li et al., 2023). Studies show that discriminatory algorithms can lead to systemic inequalities that affect the opportunities of individuals and, in turn, their social and economic prospects (Bayana, 2025; Omar & Burrell, 2024). This incident highlights an important theoretical point that is often overlooked: automating decisions does not debias society when gender discrimination is merely concealed behind a veneer of algorithmic neutrality (cf. Bolukbasi et al., 2016; Noble, 2018).

While some claim that AI can be utilized “to counter human biases, decrease discrimination, and increase variety” (Raji & Buolamwini, 2019), practice contradicts this optimism: the promise of equality runs up against the historical biases embedded in data (Crawford, 2021; Mehrabi et al., 2021). AI hiring tools may hold considerable promise, but this case is a sobering reminder of the urgency of examining their ethical dimensions and of the danger that biases can lie dormant within AI systems (Raghavan et al., 2020).

COMPAS Risk Assessment in the U.S. Criminal Justice System

In the last decade, AI-informed and algorithmic tools have become a growing presence in policing and criminal justice, transitioning from simple data analysis tools to sophisticated predictive models designed to enhance the efficiency and accuracy of sentencing, bail, and parole decisions (Rhee, 2023; Talukder & Shompa, 2024). For example, the COMPAS (Correctional Offender Management Profiling for Alternative Sanctions) risk score software, which is used in the U.S. criminal justice system to predict recidivism, has been criticized for racial biases observed in its results (Angwin et al., 2016; Dressel & Farid, 2018). Investigations, particularly the work of ProPublica, revealed that among defendants who did not go on to commit further crimes, Black defendants were nearly twice as likely as white defendants to have been misclassified as high risk for reoffending, whereas white defendants were far more likely to be incorrectly labeled low risk and then go on to reoffend (Angwin et al., 2016).

As a result of this disparity, Black defendants are more likely to be characterized as high risk; one study found that they were 77% more likely than white defendants to be labeled high risk (Singhvi, 2024; Sreenivasan et al., 2022). These differences have profound societal consequences, including high levels of imprisonment and sustained racial and class inequality. This instance exemplifies Foucault's concept of governmentality, where predictive policing and risk assessment constitute algorithmic discipline and social management that inscribe institutional biases and perpetuate existing power relations (Foucault, 1991; Harcourt, 2015). While COMPAS and similar tools are often heralded as objective, data-driven decision support systems, evidence has emerged that they can serve to reinforce systemic inequities along racial and class lines and have disparate effects on disempowered communities (Richardson et al., 2019).
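The ProPublica finding concerns two different kinds of error, and the distinction can be made concrete in a few lines of code. The following is a minimal sketch, not the method used by ProPublica or by COMPAS: the function name `error_rates_by_group`, the group labels, and every record in `toy_records` are hypothetical and invented purely for illustration. For each group it computes the false positive rate (people labeled high risk who did not reoffend) and the false negative rate (people labeled low risk who did).

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Compute false positive and false negative rates per group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples.
    """
    counts = defaultdict(lambda: {"fp": 0, "tn": 0, "fn": 0, "tp": 0})
    for group, predicted_high_risk, reoffended in records:
        if reoffended:
            key = "tp" if predicted_high_risk else "fn"
        else:
            key = "fp" if predicted_high_risk else "tn"
        counts[group][key] += 1

    rates = {}
    for group, c in counts.items():
        did_not_reoffend = c["fp"] + c["tn"]
        did_reoffend = c["fn"] + c["tp"]
        rates[group] = {
            # Share of non-reoffenders who were wrongly labeled high risk.
            "false_positive_rate": c["fp"] / did_not_reoffend if did_not_reoffend else float("nan"),
            # Share of reoffenders who were wrongly labeled low risk.
            "false_negative_rate": c["fn"] / did_reoffend if did_reoffend else float("nan"),
        }
    return rates


# Hypothetical toy records (group, predicted_high_risk, reoffended).
# These numbers are invented and do not reproduce any real data set.
toy_records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6
    + [("A", True, True)] * 7 + [("A", False, True)] * 3
    + [("B", True, False)] * 2 + [("B", False, False)] * 8
    + [("B", True, True)] * 5 + [("B", False, True)] * 5
)

rates = error_rates_by_group(toy_records)
for group in sorted(rates):
    print(group, rates[group])
```

In this invented data, both groups are classified correctly equally often (13 of 20 records each), yet group A bears twice the false positive rate of group B while group B has the higher false negative rate. A single accuracy figure would therefore conceal exactly the kind of disparity described above.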

Facial Recognition and Racial Surveillance

Investigations by Buolamwini and Gebru (2018) have revealed that facial recognition technology exhibits markedly lower accuracy for individuals with darker skin tones, a significant shortcoming in its implementation. For example, their “Gender Shades” initiative found that commercial facial recognition systems exhibited error rates as high as 34.7% for darker-skinned women, in stark contrast to less than 1% for lighter-skinned men (Buolamwini, 2017; Buolamwini & Gebru, 2018; Sezen, 2024). These findings challenge the assumptions of techno-utopianism, a belief widely held in the tech community that technology is inherently predisposed to promote inclusiveness and equity. Instead, the evidence shows that, far from being humanizing, algorithms may reinforce patterns of biometric exclusion and bias (Keyes, 2018; Raji & Buolamwini, 2019). Moreover, this point has implications for affective recognition and embodiment in AI systems, as the examples in facial recognition suggest that algorithms can misread or overlook the subtle expressions and identities of people at the periphery (Crawford, 2021). This conclusion raises serious concerns about the ethics of developing or using facial recognition without taking the time to examine its sociopolitical impacts.
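One way such gaps can stay hidden is that a single aggregate error rate is dominated by whichever subgroup supplies most of the test images. The sketch below illustrates that arithmetic only. It is not the Gender Shades methodology, and `toy_benchmark`, its subgroup sizes, and its error counts are hypothetical values chosen for illustration rather than the published figures.

```python
# Hypothetical benchmark: subgroup -> (number of test images, number of errors).
# All counts are invented; they are NOT the Gender Shades results.
toy_benchmark = {
    ("lighter", "male"): (800, 8),
    ("lighter", "female"): (100, 5),
    ("darker", "male"): (60, 6),
    ("darker", "female"): (40, 12),
}


def aggregate_error(benchmark):
    """Error rate over the whole benchmark, ignoring subgroup membership."""
    total_images = sum(n for n, _ in benchmark.values())
    total_errors = sum(e for _, e in benchmark.values())
    return total_errors / total_images


def subgroup_errors(benchmark):
    """Error rate computed separately for each intersectional subgroup."""
    return {group: errors / n for group, (n, errors) in benchmark.items()}


overall = aggregate_error(toy_benchmark)
by_group = subgroup_errors(toy_benchmark)
worst_group = max(by_group, key=by_group.get)

print(f"aggregate error: {overall:.3f}")
for group, rate in by_group.items():
    print(group, f"{rate:.3f}")
print("worst-served subgroup:", worst_group)
```

Because the largest subgroup in this toy benchmark is also the best-served one, the aggregate error stays near 3% while the smallest subgroup faces an error rate of 30%. Disaggregated, intersectional reporting is what makes the disparity visible.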

Algorithmic Content Moderation and Marginalized Voices

Algorithmic content moderation has drawn considerable concern, as Black and gay users of Instagram and TikTok report excessive suppression or shadowbans (Bishop, 2019; Noble, 2018; Velasquez et al., 2023). In “shadowbanning,” a user's content is made invisible to others without the user being told. This practice has proven especially problematic in recent years. Disadvantaged users often develop folk theories to make sense of these opaque modes of moderation; however, these efforts, rather than proving advantageous or assisting with knowledge transfer, often add to their confusion and reduce their participation (Delmonaco et al., 2024). This dynamic is closely related to the postcolonial critique, which questions the so-called “universal” values and norms embedded in the designs and moderation practices of these platforms (Birhane, 2021, p. 11).

Algorithms for content moderation are routinely designed in the image of Western norms and values, so that non-Western expressions are dismissed and historical wrongs perpetuated. The experiences of Global South users show that these systems amplify existing power relations and erase minoritized speech (Shahid & Vashistha, 2023). Viewed globally and from the standpoint of digital justice, these persistent problems show that algorithmic decision-making deepens systemic disparities and excludes marginalized voices while reinforcing the narratives of the hegemonic culture (Lewis et al., 2023). This discussion points to the need for models of content moderation that recognize and respond to (rather than merely reflect) the complex and varied lives of specific users (Noble, 2018). Scholars have proposed, and complicated, a turn toward content moderation that repairs harm and violence done to people instead of sweeping the problem under the rug, and they have called for a decolonized framework grounded in the safety and empowerment of marginalized communities (Peterson-Salahuddin, 2024; Siapera, 2022).

Case Studies of Ethical AI Applications

The design and execution of AI applications seeking to reduce bias and promote ethical decision-making have been thoroughly examined in numerous case studies spanning various sectors, notably finance and healthcare. Within the financial domain, AI systems engineered according to Diversity, Equity, and Inclusion (DEI) principles underscore the importance of employing transparent algorithmic processes along with robust accountability mechanisms. These include routine audits and independent assessments designed to ensure adherence to ethical benchmarks. By emphasizing inclusivity throughout data gathering and model formulation, financial institutions have succeeded in lessening intrinsic biases and enhancing trust among stakeholders. Similarly, in health AI, a number of frameworks that combine bias detection and mitigation tools with anonymization techniques have recently emerged and have made notable advances in fairness, privacy, and transparency.

The above cases illustrate the fine line between experimentation and moral duty in achieving a fair result (Muvva, 2025). Many technical and organizational methods have been used to address bias and promote fair AI development. Explainable AI (XAI) techniques, such as SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations), and counterfactual reasoning, have resulted in more transparent and fairer models, as exemplified by a language translator application in which these techniques improved the interpretability and fairness of outputs (Deokar et al., 2024). Moreover, fairness-aware machine learning algorithms and adaptive learning techniques have effectively fostered fairness across a spectrum of demographics, mitigating bias while adhering to ethical principles (Mishra et al., 2024). The establishment of comprehensive ethical frameworks provides concrete strategies for addressing issues related to bias, privacy, and transparency in AI development and application (Sargiotis, 2024). Additionally, strong accountability strategies, such as continual audits and active stakeholder participation, are imperative for sustaining transparency and cultivating user trust (Chauhan et al., 2025). Nevertheless, these accomplishments are accompanied by obstacles: striking a meaningful balance between fairness and model efficacy poses a significant challenge, as efforts to mitigate bias may adversely affect accuracy. Furthermore, tailoring solutions to specific domains and devising scalable approaches are essential for ensuring the ethical integration of AI technologies across various industries without undermining established standards (Nathim et al., 2024; Samala & Rawas, 2024).
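The tension between fairness and measured accuracy noted above can be shown with a small worked example. This is a minimal sketch under stated assumptions, not an implementation of any method from the cited studies: the scores, labels, group names, and thresholds are hypothetical, and the helper names (`predict`, `selection_rates`, `parity_gap`, `accuracy`) are introduced here for illustration. It compares a single decision threshold with group-specific thresholds chosen to equalize selection rates (demographic parity). It also takes the recorded labels at face value, which is itself an assumption, since labels drawn from historical decisions may encode the very bias under discussion.

```python
def predict(scores, groups, thresholds):
    """Select a candidate when the score meets that group's threshold."""
    return [score >= thresholds[group] for score, group in zip(scores, groups)]


def selection_rates(predictions, groups):
    """Fraction of each group that is selected."""
    rates = {}
    for group in set(groups):
        member_predictions = [p for p, g in zip(predictions, groups) if g == group]
        rates[group] = sum(member_predictions) / len(member_predictions)
    return rates


def parity_gap(predictions, groups):
    """Demographic parity difference: largest minus smallest selection rate."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def accuracy(predictions, labels):
    """Share of predictions that match the recorded labels."""
    return sum(p == bool(y) for p, y in zip(predictions, labels)) / len(labels)


# Hypothetical toy data, invented for illustration only.
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.6, 0.45, 0.4, 0.3, 0.2]
labels = [1, 1, 1, 0, 0, 1, 0, 0, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

# One threshold for everyone versus a lower threshold for group B.
single = predict(scores, groups, {"A": 0.5, "B": 0.5})
adjusted = predict(scores, groups, {"A": 0.5, "B": 0.35})

print("single threshold:  gap =", round(parity_gap(single, groups), 2),
      "accuracy =", accuracy(single, labels))
print("group thresholds:  gap =", round(parity_gap(adjusted, groups), 2),
      "accuracy =", accuracy(adjusted, labels))
```

In this toy data the single threshold matches every label but selects 60% of group A and 20% of group B. The adjusted thresholds close the selection gap while agreement with the recorded labels falls to 80%. Whether that drop is a real loss depends on how far the labels themselves can be trusted, which is a normative question that the optimization cannot settle.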

Conclusion

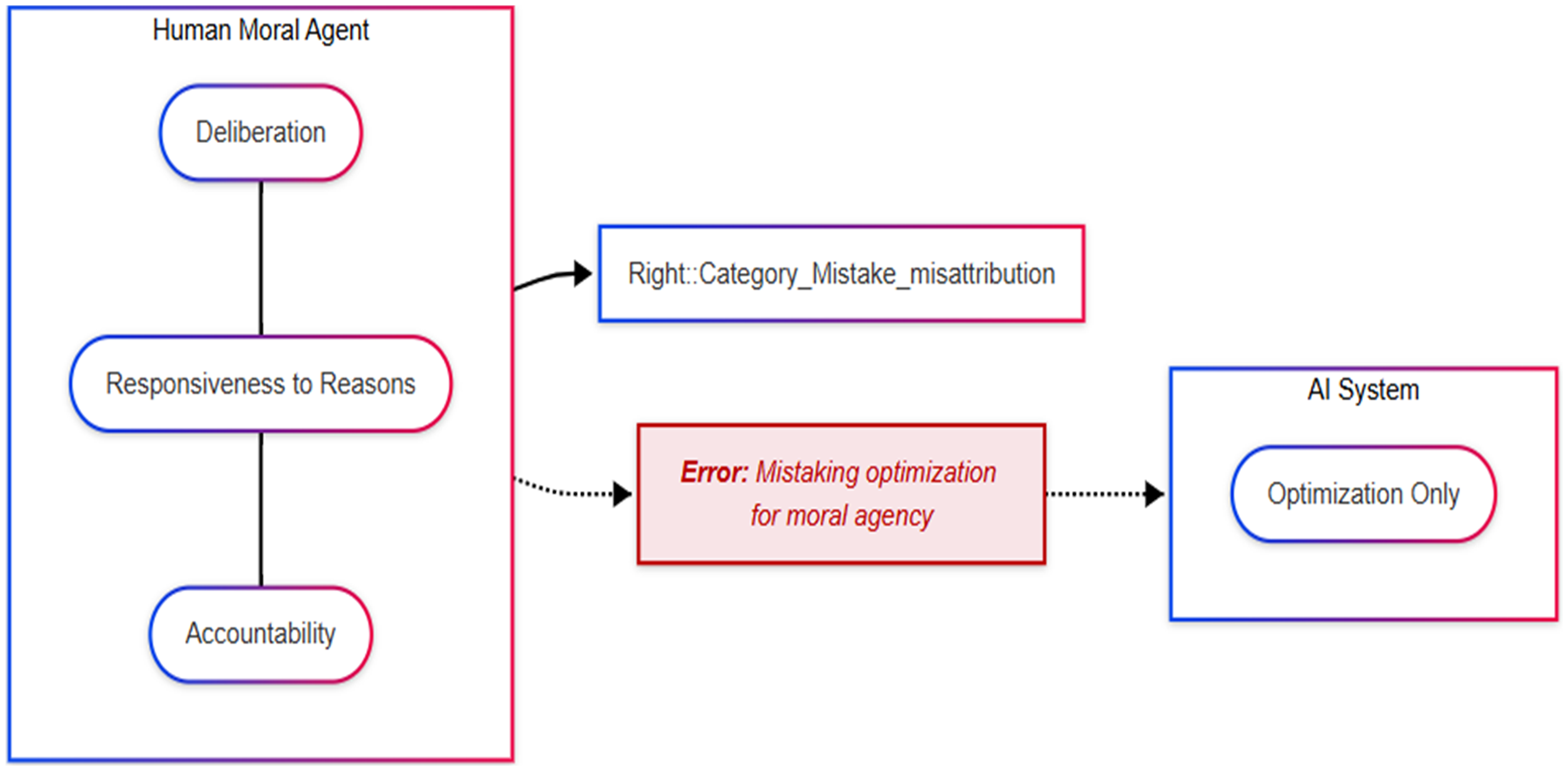

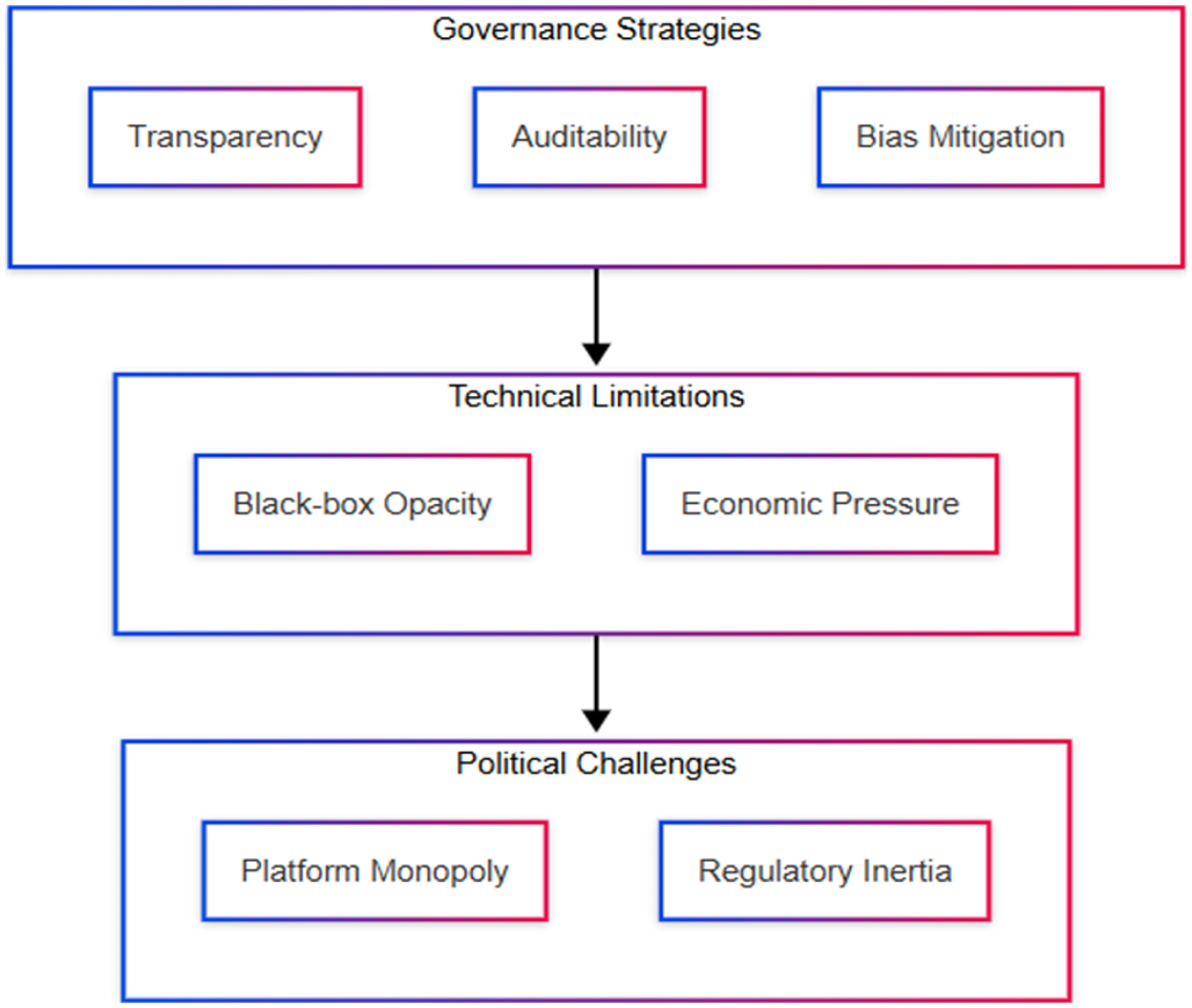

The central argument of this paper is that the claim that AI will abolish hierarchy is both conceptually confused and empirically ungrounded. The standard narrative, encapsulated in the equation $\text{AI}_{\text{formal}} + \text{Access}_{\text{expanded}} \Rightarrow \text{Equality}_{\text{substantive}}$, fundamentally misunderstands the nature of equality and the limitations of AI as a technological tool. It relies on a category mistake: attributing moral agency (D + R + A) to a system that is fundamentally designed for algorithmic optimization (O) (Figure 7).

Ethical Deliberation Versus Algorithmic Optimization.

AI can certainly assist the human task of achieving justice, but only where a commitment to justice already exists. It does not replace political action, ethical reflection, or social transformation. The dream that AI will eliminate hierarchy rests not just on an unfounded account of justice but also on a confusion about where ethical capacities reside: they cannot be attributed to a non-deliberative system.

Does AI inherently break down hierarchy, or does it further stabilize it by making a hidden hierarchy, one concealed beneath the language of technological neutrality, that much easier to maintain? By mechanizing decision-making and the human choices built into it, AI can keep us from seeing how existing power structures and inequalities are maintained. It is therefore critically important to remain deeply skeptical of the hype surrounding AI as an equalizer and to remember that true equality demands commitment, ethical reflection, and transformative political action. The road to a more just and fair society does not lie in algorithmic utopianism but in the slow, often uncertain and challenging process of building a more democratic and accountable world.

Acknowledgments

The author acknowledges no external contributions to the writing of this paper, except software for the improvement of grammar and language.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.