Abstract

Advances in artificial intelligence (AI) have facilitated its adoption in professional service sectors such as finance and healthcare, but AI projects fail at nearly double the rate of non-AI projects. These failures, considered corporate crises, have sparked public criticism and damaged corporate–public relationships. Within the framework of the media evocation paradigm, this study examines public perceptions of the roles of AI and humans in AI-service-failures, along with the resulting attribution of responsibility and expectations for corporate crisis communication strategies. Four semistructured focus group interviews were conducted with a purposively selected sample of participants (N = 21), stratified by AI expertise (with vs without) and AI usage experience (extensive vs limited). The findings yield three key insights: (1) ontological perceptions: within AI-service-failure crises, the public predominantly rejects the notion of AI as a unique social actor, instead conceptualizing it as an advanced instrument; (2) responsibility attribution: an Organization–AI–User responsibility triangle reveals asymmetric accountability, in which corporations bear maximal responsibility, AI systems are absolved of moral/legal responsibility, and user accountability remains conditional and context-dependent; (3) human-centered expectations: the public's demands align with human-centered AI principles, prioritizing corporate apologies, precautionary measures, and corrective actions over evasive strategies such as the contested “mirror strategy,” which enables involved companies to deflect responsibility for AI failures by attributing them to broader societal factors.

Keywords

Introduction

Recent breakthroughs in machine learning and natural language processing have enabled artificial intelligence (AI) systems to execute cognitive tasks previously exclusive to humans (Arikan et al., 2023; Leo & Huh, 2020). The operational advantages of AI implementation, particularly reduced labor costs, greater operational efficiency, and improved profit margins (Alimamy & Nadeem, 2022; Arikan et al., 2023), have driven unprecedented adoption rates. Industry analyses report that 72% of professional service organizations had integrated AI solutions into core business processes by 2024 (McKinsey & Company, 2024), indicating a marked shift in technology adoption patterns.

Although such advancements present new opportunities for innovation across service sectors such as finance, healthcare, and marketing (Araujo et al., 2020; Leo & Huh, 2020; Wirtz et al., 2018), the failures of AI systems have raised substantial concerns. According to estimates by the RAND Corporation (2024), over 80% of AI-driven projects fail, twice the failure rate of non-AI projects. Notable instances of AI misconduct, such as Microsoft's chatbot Tay making racist remarks (Victor, 2016) and Google's search engine categorizing hairstyles associated with Black individuals as “unprofessional” while labeling those linked to White individuals as “professional” (Wineberg, 2018), have elicited significant public outcry.

While industry recognizes AI-service-failures as corporate crises (Olavsrud, 2024), scholarly research has primarily adopted the media equation paradigm in human–machine communication, which examines whether and how human interaction patterns are unconsciously transferred to human–AI interfaces such as AI services (Nass & Moon, 2000; van der Goot & Etzrodt, 2023). Existing studies have predominantly employed experimental designs to analyze responsibility attribution between AI systems and corporate entities, and forced-choice designs to probe responsibility attribution between AI and humans (Liu & Du, 2022; Ryoo et al., 2024; Shank & DeSanti, 2018). However, by presuming anthropomorphic accountability frameworks while neglecting the ontological prerequisites for culpability, these studies insufficiently address three critical dimensions of public perceptions of AI-service-failures in crisis communication contexts: (1) the ontological status of AI agents, (2) the coconstitution of human–AI responsibility attribution, and (3) expectations of corporate stances and crisis communication strategies (CCSs). Given the growing complexity of both the nature of AI systems and their interactions with human users, this theoretical limitation hinders the application of extant findings across evolving AI-service-failure scenarios (Heyselaar, 2023; Xie et al., 2025) and calls for deeper investigation into how these conceptual constructs shape public expectations of corporate crisis responses.

To fill this gap, this study was guided by the media evocation paradigm, which emphasizes a reflective, negotiated understanding of AI and human identity and its communicative implications from a philosophical perspective that transcends mere functionality (Turkle, 2007; van der Goot & Etzrodt, 2023). By consciously reconceptualizing AI systems in terms of their functional essence, this framework promotes an intersubjective understanding of human–AI interactions, thereby enabling simultaneous examination of public perceptions of the roles of both advanced AI and human actors in AI-service-failure contexts, where communicative dynamics cross the boundary between human and machine (Guzman & Lewis, 2020; Peter & Kühne, 2018; van der Goot & Etzrodt, 2023). Specifically, this study employed semistructured focus group interviews to investigate public conceptualizations of AI, the human role in AI-service-failures, and the consequent patterns of responsibility attribution and demands for corporate crisis communication.

Theoretically, the results extend yet complicate core tenets of the media evocation paradigm by demonstrating that, during crises, the public consciously conceptualizes AI as a nonsentient tool rather than a hybrid social actor. This conception establishes an asymmetric accountability framework within the Organization–AI–User responsibility triangle, in which corporations bear maximal responsibility owing to their perceived controllability and stewardship roles, AI systems are absolved of moral/legal culpability, and user accountability remains conditional. The results also reveal how crisis contexts trigger intersubjective negotiation of sociotechnical relationality, in which AI's ambiguity prompts a reconfiguration of human agency.

Practically, organizations must prioritize accommodative crisis responses, institutionalize precrisis safeguards, develop tiered user education with cocreative feedback channels, and champion ethical stewardship via “ethics-by-design” protocols—collectively necessitating a paradigm shift from reactive tactics to proactive human-centric AI governance that embeds accountability to navigate failures and sustain legitimacy.

Literature Review

From AI Failures to Corporate Crises

While AI systems demonstrate operational sophistication comparable to human services, they remain susceptible to systemic failures due to inherent algorithmic limitations (Choi et al., 2021). The proliferation of AI adoption across industries has correspondingly increased the incidence of algorithmic failures, documented across technical and social domains (Arikan et al., 2023; Honig & Oron-Gilad, 2018; Washburn et al., 2020).

This study synthesizes service and public relations scholarship to conceptualize an AI-service-failure as a corporate crisis. Service scholarship fundamentally positions AI-service-failures as violations of customer expectations (Gursoy et al., 2019; He & Harris, 2014; Leo & Huh, 2020; Tian & Oviatt, 2021). In public relations scholarship, such events, which breach public expectations, are defined as crises that produce negative repercussions for the organization involved and require prompt responses to stakeholders (Coombs, 2010). Taken together, AI-service-failures can be viewed as corporate crises for service companies (Prahl & Goh, 2021; Srinivasan & Sarial-Abi, 2021). The subjects involved include humans (the customer/user and the company involved) as well as the AI system, a relatively new subject in discussions of crisis communication and, more broadly, of public relations.

The Understudied Terrain of Public Understanding of AI

As AI has established itself as a novel entity, moving beyond the rudimentary treatment of AI-service-failures as conventional corporate crises requires foundational insights into how the public interprets AI's roles. However, scholarship in this domain remains underdeveloped: algorithmic opacity obstructs lay comprehension of AI's sociotechnical impacts and concurrently limits academic inquiry (Cui & Wu, 2021; Selwyn & Gallo Cordoba, 2022; Yigitcanlar et al., 2024). Given that public cognitions shape technological adoption (Zhai et al., 2020), understanding how the public weighs AI's capabilities against its limitations, especially amid evidence challenging AI's superiority (Kabalisa & Altmann, 2021), is critical for aligning crisis responses with societal expectations (Sartori & Bocca, 2023).

Despite limited existing research, current scholarship identifies two predominant perspectives on public perceptions of AI: (1) the instrumentalist view, which frames AI as a programmed tool executing predetermined functions (Paschen et al., 2020; Zhang et al., 2019); and (2) the anthropomorphic view, which emphasizes AI's perceived autonomy in decision-making processes affecting human agency (Logan & Waymer, 2024). These perspectives represent two facets of AI, as both an inanimate machine and an intelligent agent, yet they exist in tension because of their ontological differences. Notably, this theoretical dichotomy is synthesized by the media evocation paradigm in human–computer interaction (HCI) research, which reconciles both views by reconceptualizing AI systems as novel social actors that simultaneously exhibit tool-like functionality and manifest agency (Turkle, 2005; van der Goot & Etzrodt, 2023).

The Media Evocation Paradigm

The media evocation paradigm constitutes a cognitive framework that reconfigures the ontological boundaries among humans, machines, and communication in AI contexts, foregrounding deliberate ontological contemplation of AI and its resulting sociocognitive implications. The concept of media evocation originated in Turkle's (2005) seminal work The Second Self: Computers and the Human Spirit, which posits computational technologies as “evocative objects” that defy ontological categorization, provoke self-reflection, and destabilize conventional boundaries between human and machine. This framework was further expanded in Evocative Objects (Turkle, 2007), which emphasizes how technologies occupy an intermediary ontological status, neither fully human nor inanimate, thereby catalyzing existential inquiries about agency and cognition (van der Goot & Etzrodt, 2023). Hence, AI systems’ operational errors may serve as epistemic triggers for societal introspection, potentially giving rise to a distinct category of AI-induced crises in which AI might constitute an emergent social actor.

The media evocation perspective draws on and further develops the computers-as-social-actors (CASA) framework (Nass & Moon, 2000), which demonstrates that humans unconsciously ascribe social agency to machines exhibiting linguistic interactivity. On the one hand, consistent with CASA, empirical evidence confirms that AI systems capable of anthropomorphic behaviors (e.g., natural language generation) activate user heuristics akin to those of human-to-human interaction (Nass et al., 1993), predisposing the public to perceive AI as social actors during crises. On the other hand, media evocation deepens CASA by highlighting the liminality of AI's ontological status in public consciousness. Despite pervasive AI integration, AI's recurrent errors and capabilities that fall short of superintelligence position it as a hybrid entity and a unique social actor: more than a mechanistic tool, yet short of human equivalence. Notably, the media evocation paradigm posits that the public consciously recognizes computational systems as intentional social agents, in contrast to CASA's proposition that people apply human interaction schemata unconsciously (van der Goot & Etzrodt, 2023). The cognitive dissonance between AI's perceived agency and its operational limitations fosters reflexive public discourse about human–machine relationality. This theoretical integration calls for reconceptualizing public perceptions of AI during AI failure crises as perceptions of an emergent social actor spanning the continuum between nonintelligent machines and human employees. To address this gap and examine the theoretical implications of the media evocation paradigm for AI-service-failure contexts, our study poses the following research question.

Responsibility Dynamics in AI-Service-Failure Crises

Attribution theory accounts for the public's tendency to assign blame following service failures. Drawing on Weiner's (1985) framework, individuals seek to identify the cause of a failure to assess responsibility and determine accountability (Lee & Cranage, 2018; Ryoo et al., 2024). Responsibility attribution operates through three dimensions: (1) locus of causality (whether the cause is internal or external), (2) controllability (the actor's control over the cause), and (3) stability (the likelihood of recurrence) (Coombs, 2004; Weiner, 1985). Events with high controllability and internal causality typically suggest intentionality, heightening the responsibility attributed to the actor (Coombs, 2004). However, these dimensions operate in complex ways when applied to AI and service organizations, given AI's unique ontological status and the resulting corporate stewardship role. A synthesis of the limitations and substantive findings of extant research provides a foundation for exploring this distinctive attribution dynamic.

As previously noted, the predominantly quantitative investigations of public responsibility attribution in AI failures are inevitably limited by their reliance on forced-choice measurements (Liu & Du, 2022; Ryoo et al., 2024; Shank & DeSanti, 2018). These approaches frame responsibility as a zero-sum construct between AI systems and corporations, thereby presupposing AI's capacity to be an accountable entity, a problematic premise that neglects AI's inherent nonaccountability (Turkle, 2005; van der Goot & Etzrodt, 2023). Such methodological framing imposes an ontological categorization of AI as a qualified responsibility-bearer that lacks empirical validation. Importantly, while individuals may theoretically acknowledge AI's liminal status as a hybrid social actor under the media evocation paradigm, substantial gaps persist in understanding how the public reconciles this conceptualization with AI's practical incapacity for moral–legal accountability. Specifically, empirical evidence remains scarce regarding whether lay (nonspecialist) or professional (AI-specialist) perceptions align with scholarly constructs when evaluating AI's role in concrete service failures. This dissonance between AI's anthropomorphized agency in human–AI interaction frameworks (Nass & Moon, 2000) and its mechanistic limitations as a nonsentient tool calls for systematic empirical inquiry into how competing ontological perspectives shape responsibility attribution patterns in crises, a critical gap in extant research.

Notably, existing research and empirical cases converge on the consensus that public blame predominantly targets service providers in failure incidents (McColl-Kennedy et al., 2011). This attribution pattern persists in AI failure cases: IBM faced scrutiny over Watson's erroneous cancer treatment recommendations (Ross & Swetlitz, 2018), and Apple Inc. encountered gender discrimination allegations over credit limit disparities produced by its Apple Card algorithm (Vigdor, 2019). Although these cases resonate with the extant literature's call for organizations to build “responsible AI” (Rakova et al., 2021), the underlying attribution mechanisms remain speculative rather than empirically validated. Current explanations postulate that service firms exhibit heightened accountability vulnerability in AI failures. First, AI systems operate through nonsentient algorithms lacking error awareness or intent (Prahl & Goh, 2021; Shank & DeSanti, 2018), which reduces the extent to which causality is perceived as internal to the AI itself. Second, because AI systems function as corporate agents advancing organizational interests, their failures reflect on firm performance. Third, legal–ethical frameworks mandate that responsibility be assigned to human-operated entities capable of harm mitigation, further directing blame toward firms (Johnson & Verdicchio, 2019). Consequently, accountability for AI failures extends beyond the AI systems to encompass firms, prompting critical examination of technological implementations (Bigman et al., 2023; Ryoo et al., 2024).

In sum, this study addresses two critical limitations in existing research: the problematic presumption of AI as a qualified accountability bearer, which has not been examined against empirical evidence of public perceptions, and insufficient analysis of corporate responsibility patterns. Building on public understanding of AI, we therefore (1) examine variance in responsibility attribution toward AI systems during service failures, (2) acknowledge that firms are unlikely to avoid public blame, (3) investigate how ontological perceptions of AI and corporations shape attribution dynamics, and (4) explore the extent to which users are held accountable within AI-service-failures, an underspecified dimension in current scholarship. Thus, our second research question is formulated as follows:

CCSs in AI-Service-Failure Crises

Effective crisis communication necessitates investigating public understanding of AI, as it constitutes the foundation for organizational damage repair postcrisis (Alexander, 2014; Cheng et al., 2024; Coombs, 2007; Coombs & Holladay, 2005). While CCSs in non-AI contexts are well-established—encompassing verbal/nonverbal organizational responses (Huang et al., 2005) and categorized through frameworks like Benoit's (2014) image repair theory (denial, evasion of responsibility, reducing offensiveness, corrective action, and mortification) and Coombs’ (1998) situational crisis communication theory (defensive-to-accommodating continua including attacking the accuser, denial, excuses, justifications, ingratiation, corrective action, and full apologies)—scholarly attention to their adaptation for AI-service-failure crises remains scarce.

To the best of our knowledge, before this study was conducted, only Prahl and Goh (2021) had systematically analyzed the application of CCSs in AI failures, identifying three prevalent strategies: apology/mortification, which involves accepting responsibility and seeking forgiveness (Coombs & Holladay, 2008); corrective action to rectify malfunctions (Coombs, 2010); and denial, which refutes the occurrence of the failure or the organization's responsibility for it (Huang et al., 2005). They further propose the “mirror strategy,” an emergent approach that redirects blame toward societal/human factors in integrity-violation contexts involving AI (Bigman et al., 2023; Prahl & Goh, 2021). This strategy explicitly attributes failures to extrinsic societal or human determinants, thereby diverting culpability from the organization (Prahl & Goh, 2021). Such attribution is plausible for AI's integrity violations because algorithms frequently operationalize human decisions and inherit societal biases embedded in their training data (Bigman et al., 2023).

Notwithstanding these conceptual advances, empirical validation of CCSs’ efficacy in AI contexts remains absent. Consequently, our final research question examines how public expectations mediate the effectiveness of established versus emergent CCSs in AI failure contexts, specifically regarding organizational stances and strategic responses:

Method

Overview

Data were collected through four semistructured focus group interviews (two conducted online and two in-person) using purposive sampling. Focus group interviews are particularly effective for generating unique insights through group interactions, facilitating the emergence of metaphors and comparisons, and providing a collaborative platform for participants and researchers to address specific issues (Tracy, 2020). This study employed focus group interviews for two primary reasons. First, compared to traditional service failures, the public is generally less familiar with AI-service-failure crises. The group discussion format is conducive to stimulating public discourse and uncovering detailed insights that might otherwise remain unexplored (Morgan, 1988). Second, real-world discussions about AI failures often emerge from collective public opinions, which can significantly influence corporate reputations. By engaging in group discussions, participants continuously shape their perceptions of AI-service-failures through interaction with others. Thus, the focus group format allows us to simulate this process and observe potential dynamic changes in perceptions.

Participant Recruitment and Group Allocation

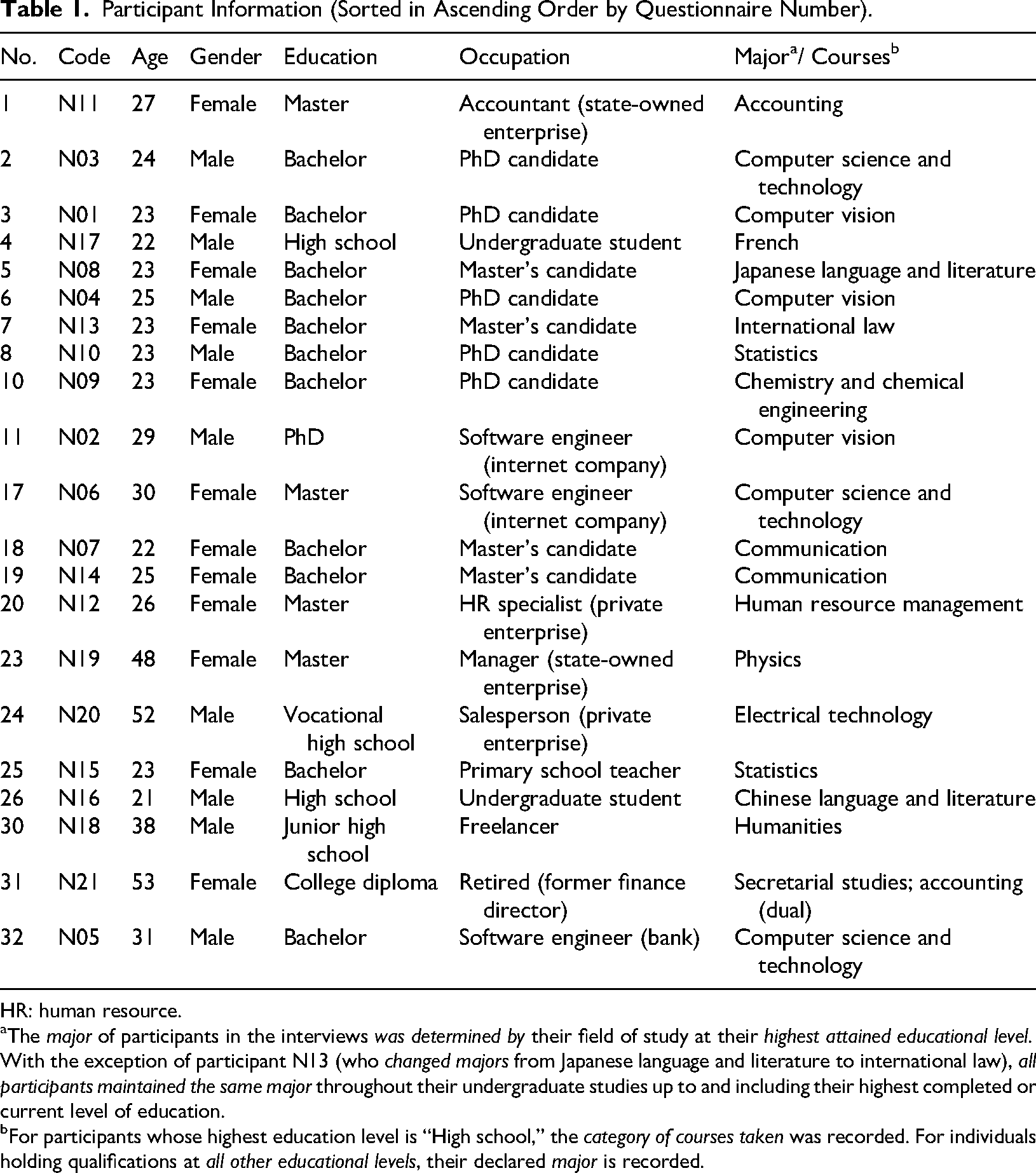

Between December 2024 and January 2025, 21 participants (57.1% female; mean age = 29.10 years, SD = 10.00, range = 21–53) were recruited through purposive sampling and divided into four groups based on member homogeneity (Grunig, 1990). Specifically, the researcher distributed recruitment questionnaires via WeChat, a mainstream Chinese social media platform. In addition to demographic information, the questionnaire collected two recruitment criteria: (1) whether participants had a professional background in AI, determined from their undergraduate, master's, or doctoral degree majors and, if employed, their current professions (all employed participants with AI expertise worked in programming or algorithm-related fields); and (2) their level of AI usage experience, measured with a nine-item AI experience scale adapted from Liu (2021) that asked “Have you heard of or used AI-service products in the following domains?” (1 = never heard of, 2 = heard but not used, 3 = used; α = .88), together with a 9-point Likert item measuring AI usage frequency: “How frequently do you use AI-service products in general?” (1 = never, 9 = always). This sampling design enabled an examination of how varying levels of AI knowledge and usage may influence individuals’ perceptions of AI agents, as suggested by prior research (Gambino et al., 2020; Horstmann & Krämer, 2019).
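The α = .88 reported for the nine-item AI experience scale is presumably Cronbach's alpha, conventionally computed as α = k/(k − 1) × (1 − Σσ²ᵢ/σ²ₜ), where k is the number of items, σ²ᵢ the variance of item i across respondents, and σ²ₜ the variance of respondents' summed scores. The sketch below illustrates only that formula, assuming complete responses with no missing items; the function name `cronbach_alpha` and the demo responses are hypothetical and do not reproduce the study's data or analysis software.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for complete data.

    responses: one list of item scores per respondent (hypothetical layout).
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    # Sample variance of each item across respondents
    item_vars = [variance([r[i] for r in responses]) for i in range(k)]
    # Sample variance of each respondent's summed score
    total_var = variance([sum(r) for r in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


if __name__ == "__main__":
    # Hypothetical 3-point responses (1 = never heard of, 2 = heard but not used,
    # 3 = used) from four respondents on a shortened four-item version of the scale
    demo = [
        [3, 3, 2, 3],
        [2, 2, 2, 1],
        [3, 3, 3, 3],
        [1, 2, 1, 1],
    ]
    print(round(cronbach_alpha(demo), 2))
```

Using sample variances for both the items and the total is a design choice that does not affect the result: the n/(n − 1) factor cancels in the ratio, so population variances give the same α.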

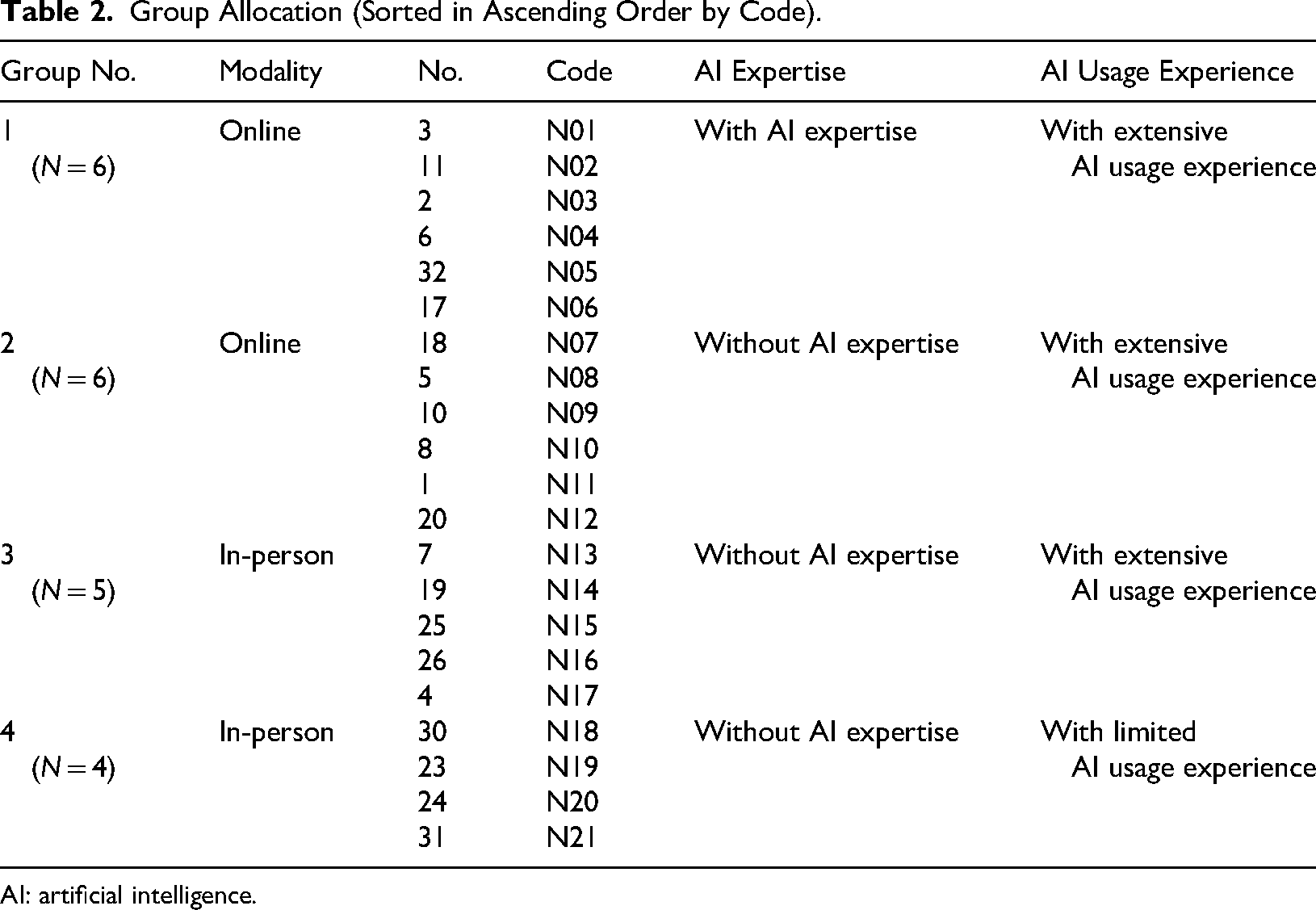

A total of 32 questionnaires were collected; 24 respondents expressed initial willingness to join the group interviews and were confirmed as meeting the purposive sampling requirements. Before the formal group interviews, the researcher verified these 24 respondents’ AI professional backgrounds and AI usage experience through detailed follow-up conversations by telephone or online message, compensating for the limited detail that a brief online questionnaire can capture. Ultimately, 21 participants were formally screened and took part in the focus group interviews. They were coded N01–N21 (see Table 1 for participants’ information) and divided into four groups (see Table 2 for group allocation) according to the two criteria, assessed through the background information collected and the scale measurements respectively, as well as interview modality: group 1, participants with AI expertise and extensive AI usage experience (online interview); group 2, participants without AI expertise but with extensive AI usage experience (online interview); group 3, participants without AI expertise but with extensive AI usage experience (in-person interview); and group 4, participants without AI expertise and with limited AI usage experience (in-person interview).

Participant Information (Sorted in Ascending Order by Questionnaire Number).

HR: human resource.

Each participant's major was determined by their field of study at the highest educational level attained. With the exception of participant N13 (who changed majors from Japanese language and literature to international law), all participants kept the same major from their undergraduate studies up to and including their highest completed or current level of education.

For participants whose highest education level is “High school,” the category of courses taken was recorded. For individuals holding qualifications at all other educational levels, their declared major is recorded.

Group Allocation (Sorted in Ascending Order by Code).

AI: artificial intelligence.

Regarding criterion (1), the group of participants with AI expertise (group 1) consisted of six AI professionals, including students and software engineers, majoring in computer vision or computer science and technology. The three groups of participants without AI expertise (groups 2, 3, and 4) comprised 15 individuals, including students, full-time employees, a freelancer, and a retiree, with backgrounds in accounting, Chinese language and literature, communication, chemistry and chemical engineering, electrical technology, French, human resource management, humanities, international law, Japanese language and literature, physics, statistics, and secretarial studies. Among these three groups, participants in groups 2 and 3 (N = 11) reported extensive AI usage experience, whereas participants in group 4 (N = 4) had limited AI usage experience. Because the AI-expert group inherently possessed substantial AI usage experience, which the statistics below also support, no further subgrouping by AI usage experience was necessary for this group. Regarding criterion (2), for the groups with extensive AI usage experience (groups 1, 2, and 3; N = 17), the 95% confidence intervals for the means of AI experience (M = 2.69, SD = 0.21, p < .001) and AI usage frequency (M = 8.12, SD = 1.11, p < .001) both lay above their respective scale midpoints. In contrast, for the group with limited AI usage experience (group 4; N = 4), the 95% confidence intervals for the means of AI experience (M = 1.75, SD = 0.11, p < .05) and AI usage frequency (M = 1.75, SD = 0.96, p < .01) both lay below their respective scale midpoints.
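The midpoint comparison for criterion (2) can be made concrete by computing a 95% confidence interval from each reported mean, SD, and N. The section does not state which interval procedure was used, so this is a sketch under the assumption of a standard one-sample t interval, with scale midpoints taken as 2 for the 1–3 experience scale and 5 for the 1–9 frequency item. To stay within Python's standard library, the t quantile is obtained numerically (Simpson's rule for the t CDF plus bisection); the helper names `t_cdf`, `t_quantile`, and `midpoint_check` are hypothetical.

```python
import math


def t_cdf(x, df, steps=2000):
    """Student's t CDF via Simpson's rule on [0, |x|] and symmetry about 0."""
    c = math.gamma((df + 1) / 2) / (math.sqrt(df * math.pi) * math.gamma(df / 2))

    def pdf(u):
        return c * (1 + u * u / df) ** (-(df + 1) / 2)

    if x == 0:
        return 0.5
    h = abs(x) / steps  # steps must be even for Simpson's rule
    s = pdf(0.0) + pdf(abs(x))
    for i in range(1, steps):
        s += (4 if i % 2 else 2) * pdf(i * h)
    area = s * h / 3
    return 0.5 + area if x > 0 else 0.5 - area


def t_quantile(p, df):
    """Upper quantile (p > 0.5) of Student's t, found by bisection on the CDF."""
    lo, hi = 0.0, 100.0
    for _ in range(50):
        mid = (lo + hi) / 2
        if t_cdf(mid, df) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def midpoint_check(mean, sd, n, midpoint, level=0.95):
    """Return the t-based CI for a mean and its position relative to a scale midpoint."""
    se = sd / math.sqrt(n)
    crit = t_quantile(1 - (1 - level) / 2, n - 1)
    lower, upper = mean - crit * se, mean + crit * se
    if lower > midpoint:
        position = "above"
    elif upper < midpoint:
        position = "below"
    else:
        position = "overlaps"
    return (lower, upper), position


if __name__ == "__main__":
    # Summary statistics as reported in the text; assumed midpoints 2 (1-3) and 5 (1-9)
    reported = [
        ("groups 1-3, AI experience", 2.69, 0.21, 17, 2),
        ("groups 1-3, usage frequency", 8.12, 1.11, 17, 5),
        ("group 4, AI experience", 1.75, 0.11, 4, 2),
        ("group 4, usage frequency", 1.75, 0.96, 4, 5),
    ]
    for label, m, sd, n, mid in reported:
        (lo, hi), pos = midpoint_check(m, sd, n, mid)
        print(f"{label}: 95% CI [{lo:.2f}, {hi:.2f}], relative to midpoint {mid}: {pos}")
```

Under these assumptions, all four intervals fall on the side of the midpoint stated in the text. In practice, a library routine such as SciPy's `scipy.stats.t.ppf` would replace the numerical quantile; the hand-rolled version is used here only to keep the sketch dependency-free.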

Interview Procedure

The focus group interviews proceeded as follows. First, the moderator introduced the AI-service-failure discussion topic with warm-up questions: “Have you ever encountered system malfunctions, operational errors, or unsatisfactory outcomes when using AI services? If so, could you describe specific instances of such experiences?” After participants shared their experiences, the moderator synthesized the common patterns in these service-failure narratives, collectively termed “AI-service-failure,” and presented two classic cases (the Microsoft Tay chatbot and Google algorithmic bias incidents) to reinforce conceptual understanding and establish a shared terminology. Second, the moderator guided semistructured discussions through three thematic lenses corresponding to the research questions: (1) participants’ perceptions of AI's ontological status in AI-service-failures (RQ1), (2) participants’ understanding of how responsibility is attributed among AI and human parties in AI-service-failures (RQ2), and (3) participants’ expectations regarding organizational crisis communication around AI-service-failures (RQ3). The interviews concluded with formal acknowledgments. All interviews, which lasted 66–98 min, were audio-recorded with participants’ consent.

Results

Public's Understanding of AI: The Unsupported Social Actor Perception Within the “AI as Tools” Framework

Participants predominantly reject the conceptualization of AI as an emergent stakeholder in service-failure crises, instead perceiving it as a sophisticated technological instrument, distinct from conventional machinery in its complexity and functionality. This ontological framing contrasts with the CASA paradigm, which posits that individuals unconsciously attribute social agency to interactive computational systems (Nass & Moon, 2000). Rather than endorsing the anthropomorphism of AI, participants consistently characterized it as a nonsentient product devoid of intentionality or autonomy, highlighting its inherent unpredictability and associated operational risks. Furthermore, participants’ perception of AI as merely a tool is strongly shaped by its perceived lack of emotional empathy and creativity.

I personally believe that AI is essentially a tool, as it is fundamentally a defined computational process that yields a specific result. (N03)

I think its (AI's) current level of development is still more like a tool. I feel that when it comes to higher levels of emotional creativity, it may still be in a very rudimentary stage. (N05)

Notably, when participants were introduced to the media evocation paradigm (Turkle, 2007), divergent perspectives emerged based on their technical expertise. Participants with AI expertise likened AI systems to developing entities, akin to children capable of imitative learning but lacking the cognitive capacity to comprehend the ethical or practical consequences of their outputs. This perspective aligns with research characterizing AI as “stochastic parrots” of information processing owing to their fundamental lack of semantic comprehension, suggesting that AI's learning capabilities likely occupy an intermediate position between mere information replication and authentic cognitive learning (Kerner, 2023; Logan & Waymer, 2024). In contrast, nonexpert participants adopted a more instrumentalist perspective, evaluating AI's role primarily through its functional utility rather than its ontological classification; they accepted the analogy of AI as a child on the basis of its functionality rather than its ontological status.

(AI) may be somewhat analogous to a child. For instance, in the language learning phase of a child, they might not initially understand that you are speaking in a dialect; they lack the concept of dialects altogether. (But) They might simply replicate what the adults at home say because they have heard it. I feel this is somewhat similar. (N01)

I can accept that its level of learning and cognition can be likened to that of human infants or young children. However, I have significant doubts regarding its emotional capabilities. I believe its “brain” is composed of algorithms. The analogy to a humanoid child is more about defining its abilities, particularly its imitative learning capability and its level of intelligence. (N07)

Crucially, both groups exhibited resistance to the theoretical construct of AI as a hybrid social actor—an entity occupying an ambiguous position between human agency and inanimate objecthood (van der Goot & Etzrodt, 2023). This collective skepticism underscores the limited applicability of stakeholder theory (Coombs, 2020) in AI-related crisis contexts, suggesting that AI is more appropriately categorized as an innovative product category rather than an autonomous decision-making entity. These findings also complicate prevailing assumptions in HCI literature and highlight the need for further investigations into the complexity of public perceptions of AI's ontological and functional boundaries within the crisis communication domain.

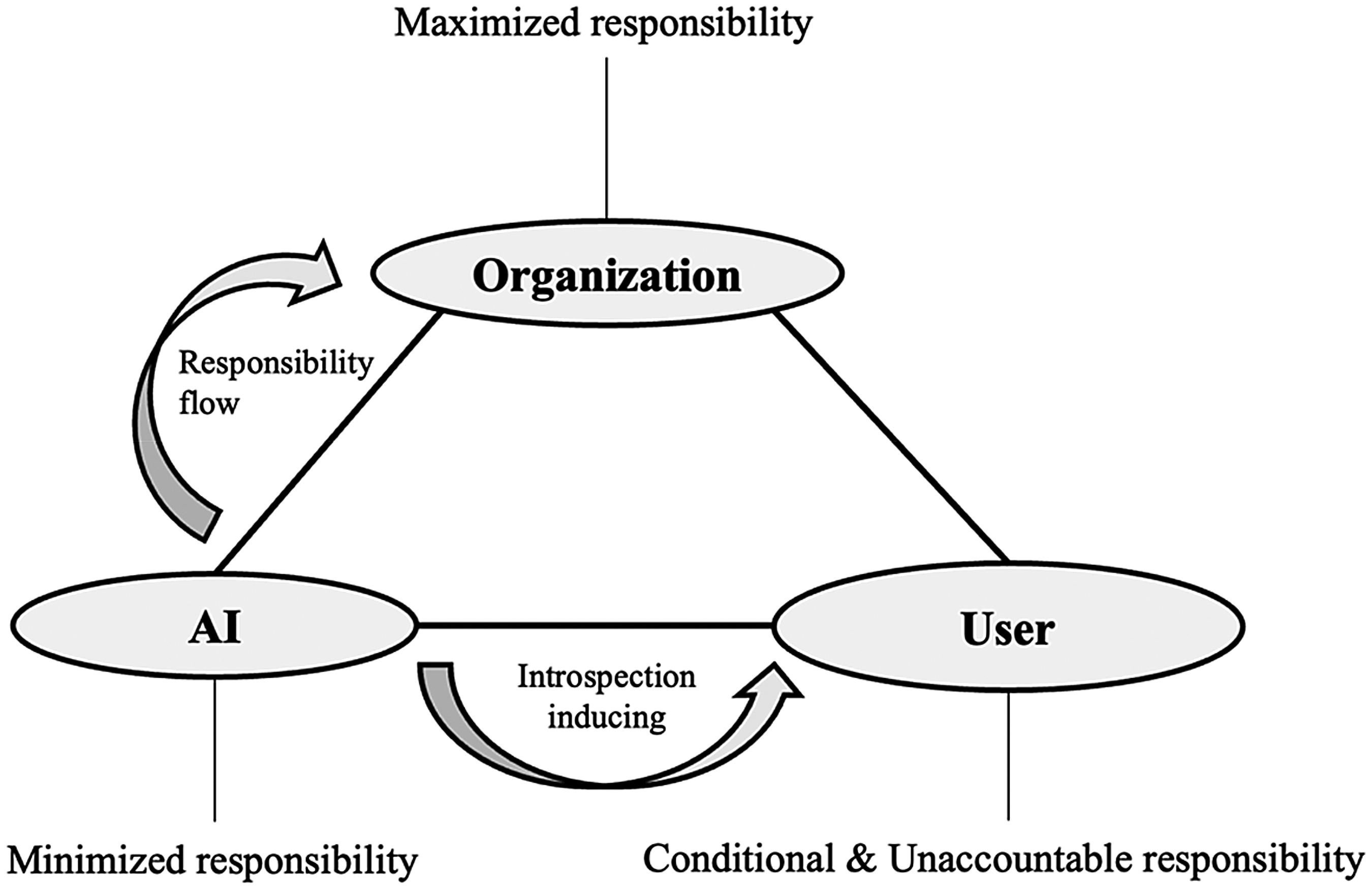

Public's Responsibility Attribution in AI-Service-Failures: The Organization–AI–User Responsibility Triangle

During the interviews, an Organization–AI–User responsibility triangle emerged (see Figure 1), characterized by maximized corporate responsibility, minimized AI responsibility, and conditional and unaccountable user responsibility. In line with the perception of AI as an instrument, participants do not consider AI capable of bearing responsibility during service-failure crises. Instead, they predominantly attribute blame to the human parties involved in the interaction. There is a prevailing belief among participants that assigning accountability to AI is largely futile, as AI is not recognized as a legal entity but rather as a tool that can be either improved or discarded when necessary. In contrast, participants assert that the responsibility for AI-service-failures should be collectively borne by the companies that develop and maintain the AI systems, as well as the users who engage with these technologies. This perspective suggests that assigning responsibility to AI entities effectively obscures the critical role of human actors in every phase of AI design, deployment, and application (Sartori & Bocca, 2023; Yigitcanlar et al., 2024). They emphasize the role of companies as stewards of the technology, highlighting their obligation to ensure responsible AI development and deployment. Simultaneously, they urge users to reflect critically on their use of AI tools, recognizing the potential for misuse or misunderstanding.

Organization–AI–User Responsibility Triangle. AI: Artificial Intelligence.

However, AI should still be incorporated into this framework of responsibility: its unpredictable behavior gives the public a critical avenue for holding companies accountable while also prompting users to reflect on their own actions. This intersubjective significance, in Turkle's (2007) sense, indicates that the dynamics of accountability around AI are complex and multifaceted. These findings extend existing quantitative research by showing how the public reasons about accountability in AI-service-failures.

AI, as I understand it, is essentially an algorithm. I am unsure how one might apply a punishment to it, such as providing reassurance to citizens. This reflects some public emotions, or how we can truly make it aware of such feelings. However, in my view, it (AI) is just a tool and likely cannot comprehend the concept of punishment. Holding an algorithm accountable and punishing it actually seems meaningless to me; I tend to believe that responsibility should be more reasonably distributed between the companies and the users. (N02)

I think the responsibility associated with AI is different from that of people. To put it simply, AI is trained by humans, so the responsibility lies with those who train it. They should understand the limits of the AI's capabilities. Users may not be aware of this. If users feel the blame lies with the AI, it connects back to the trainers, but I don’t think that should be classified as responsibility. This is because the training methods or technologies employed may not actually reach that level. (N06)

The AI is not a legal entity; it does not have the capacity for independent action. Therefore, I believe it cannot be held responsible in a legal sense. As for the AI tool, companies can implement safety measures, controlling what it is allowed to output. (N15)

I still believe that AI can be viewed as a high-risk or hazardous product, rather than as an employee of the AI company or enterprise. If a problem arises that causes damage, according to our understanding, the product itself can only be destroyed and cannot be held accountable or punished. (N17)

On the one hand, participants showed a clear awareness of corporate accountability for AI technologies. Irrespective of their AI usage experience, they unanimously agreed that ultimate responsibility for AI systems lies with the companies that develop and operate them. They contended that these corporations, as influential social entities providing AI services for profit, must assume the commercial risks of any failures of their AI systems. Notably, nearly all participants emphasized that these enterprises are obliged to perform rigorous data cleaning and ensure robust safety measures. This perspective underscores companies' roles as “stewards,” “gatekeepers,” and “regulators” in fostering “responsible AI” practices (de Laat, 2021).

An especially important aspect now is the process known as data cleaning. This means that when training a model, you must clean the data according to a series of evaluation criteria. This is the company's responsibility and an essential step in the model training process. Essentially, you must remove any data that contains offensive or discriminatory content; this is the company's obligation. Data cleaning involves preparing a large dataset for training this model, and you must ensure a certain level of cleanliness. The evaluation criteria must be very strict—any data that is discriminatory should not exist. (N03)

On the other hand, participants displayed a spontaneous reflexive awareness of their own decision-making as AI users, consistent with their perception of AI as a mere tool. When recalling their experiences with AI-service-failures, they quickly reflected on whether problems in their own interactions with AI before the failure might have contributed to the malfunction. However, participants also articulated the practical difficulty of holding AI users accountable. The Chinese maxim “fa/bu/ze/zhong” (法不责众), “the law does not punish the multitude,” captures this difficulty: participants noted that even if users encounter operational problems, it is hard to blame any specific user when most people in society use AI in similar ways. Participants also pointed to a “butterfly effect”: given the opacity and complexity of algorithms and users' nonmalicious intentions, individuals can hardly anticipate how their interactions with AI might affect other people and society as the algorithm iterates. This renders user accountability even more elusive. Such a multifaceted understanding calls for a balanced approach to accountability that recognizes both corporate responsibilities and the complexities of user interactions with AI technologies.

I think I might first feel confused and wonder if there were some issues when I was adjusting the AI. And I would consider the AI to be blameless. (N09)

It's possible that users make mistakes when using the service, such as pressing the wrong button or misusing certain features. After all, AI services involve interaction with humans, meaning both parties contribute to the outcome. (N19)

Public Demands From a Human-Centered AI Perspective: The Appeal of Corporate Apology, Precautionary Measures, and Corrective Actions in AI-Service-Failure Crises

Given that AI is not perceived as a morally or legally accountable social actor, the imperative for corporate apologies and corrective measures emerges as both a strategic necessity and an ethical obligation in managing AI-service-failure crises. Participants consistently underscored that AI applications must prioritize the enhancement of societal well-being, a principle firmly aligned with the tenets of human-centered AI (HCAI). This paradigm emphasizes the integration of human values, ethical considerations, and societal benefits into AI design and deployment, positioning AI as a tool for augmenting human capabilities rather than replacing or undermining them (Shneiderman, 2020).

From a pragmatic standpoint, participants argued that corporate apologies serve as a critical mechanism for restoring public trust and mitigating reputational damage following AI failures. Such apologies signal organizational accountability and a commitment to rectifying systemic shortcomings, thereby fostering stakeholder confidence in the company’s ability to manage AI-related risks (Romenti et al., 2025).

From an ethical perspective, participants emphasized that companies bear a moral responsibility to acknowledge their failure to deliver reliable and superior AI-driven service products. This expectation aligns with broader societal demands for corporate transparency and ethical stewardship in the age of AI. By expressing genuine regret and implementing corrective actions, organizations not only adhere to HCAI principles but also demonstrate their commitment to aligning technological innovation with human-centric values.

Thus, integrating apologies and corrective measures into corporate CCSs serves a dual imperative: addressing immediate stakeholder concerns while advancing long-term organizational legitimacy in AI governance.

An imperfect analogy might be if you create a knife that is not sharp enough. As a professional knife maker, you cannot blame the world for not having harder materials. Instead, you should think about how to make a sharper knife under the existing conditions. As developers of AI, your aim is to improve human society, so when such problems arise, you bear that responsibility. This holds true from both a pragmatic perspective and a moral standpoint. I feel that way. (N10)

I think I would hope that the company would apologize to me… to say they’re sorry for the offense or that their model's capabilities are insufficient. (N01)

Furthermore, participants sharply criticized the so-called “mirror strategy,” an approach that locates the causes of AI system failures in broader societal structures (Prahl & Goh, 2021). They regarded this strategy as ethically untenable because it systematically abdicates corporate accountability. By attributing causal responsibility exclusively to macro-level social phenomena, organizations employing it evade their obligation to implement robust data governance protocols and disregard their corporate social responsibility.

Participants' feedback pointed to the problematic normative implications of this framing. Shifting accountability from corporate actors to amorphous societal forces creates perverse incentives that may compromise ethical AI development. Moreover, the premises of the “mirror strategy” appear fundamentally misaligned with the principles of technological stewardship set out in contemporary AI ethics frameworks. Consequently, our results suggest that in practice this strategy may backfire, exacerbating stakeholder mistrust rather than building institutional legitimacy.

I definitely cannot accept that. First of all, when a company releases a product, it is primarily for profit. If a problem arises, it is completely dismissive to blame society as a whole so easily. Yes, society certainly has its issues, but if we dig deeper, we can also say that the problems stem from capitalism, which has led to social polarization and inequalities, or discrimination. So, if you approach it this way, it becomes a logical paradox without providing any solutions…If a company wants to develop a genuinely effective strategy, what matters is solving the problem rather than assigning blame. At the very least, they should not present a helpless response or fail to take a stance. Being completely hands-off, like an indifferent bystander, would definitely provoke opposition or a confrontational attitude from some people. (N08)

I also find it unacceptable because the company is capable of doing more; there's no reason for them to appear so innocent. For instance, before releasing the product, they could have done more internal testing to anticipate potential issues. Even after the product is launched, they can carry out better regulatory oversight from the background. They clearly have many things they could do, yet in the end, they act as if they are unable to manage any of this and are powerless. I feel this is a significant attempt to shirk responsibility. (N12)

Participants further contended that, in the age of AI, companies must prioritize proactive crisis management and strategic communication efforts during the precautionary phase to preemptively mitigate potential crises. They underscored the critical importance of developing comprehensive and user-friendly guidelines for AI products, tailored to accommodate individuals with varying levels of AI literacy. These guidelines should not only transparently outline the functionalities and benefits of AI tools but also explicitly communicate their associated risks and limitations. Additionally, they should clearly delineate the respective responsibilities of users and companies across different usage scenarios, thereby reducing ambiguity and fostering accountability.

Participants viewed this approach as a more sophisticated corporate communication strategy, one that balances the company's interpretive authority with the public's right to accurate and actionable information. By doing so, organizations can cultivate a more informed and engaged public discourse, which in turn promotes responsible innovation and ethical stewardship of AI technologies. Such practices align with the principles of public engagement and inclusiveness, ensuring that diverse stakeholders are equipped to participate meaningfully in discussions about AI's societal implications (Buhmann & Fieseler, 2021). A proactive and transparent communication framework of this kind could enhance trust between companies and their users while contributing to the broader goal of a more equitable and informed digital society.

I think companies need to provide the public with clear instructions on how to use their products, similar to a user manual. They also have the responsibility to simplify this usage as much as possible to encourage more users to purchase their services. This could also include exploring other ways to monetize their offerings. I believe it's important to regard AI as a tool, much like a knife; users may misuse it, even if they have been instructed on how to operate it. However, I feel that the responsibility may still lean towards the public's acceptance, provided that the company properly informs them. For example, they could have users sign agreements or similar documents…If the service is challenging to use, such as having a lengthy and complicated user manual, then that is the company's issue. They must simplify these materials in order to successfully sell their service or mitigate potential risks. (N04)

I completely agree with the idea of prior instructions and disclaimers. AI companies should sign agreements in advance and inform customers about all potential risks as much as possible. (N14)

In addition, participants viewed companies' current precrisis preparedness and risk management practices as notably immature. They argued that organizations should slow their aggressive pursuit of AI product opportunities and redirect their focus toward proactive crisis mitigation. This shift would enable companies to move beyond reactive remedial measures taken after a crisis has occurred and build a more resilient and sustainable operational framework.

At the same time, companies cannot rely solely on “precrisis disclaimers” to absolve themselves of responsibility. Assessing and monitoring whether the public has used AI tools correctly, for example in AI-assisted driving, poses significant operational and ethical challenges. These challenges are compounded by the dynamic nature of user interactions with AI systems, which often involve unpredictable behaviors and varying levels of user competence. Consequently, companies must adopt a more holistic approach that integrates robust precrisis planning, transparent communication, and ongoing user education to ensure responsible AI deployment and mitigate potential risks effectively.

However, companies cannot fully rely on a “disclaimer” to absolve themselves of responsibility, as it is difficult to verify whether users are using the AI correctly and where they might have gone wrong. For example, in the context of autonomous driving, many details are challenging to reconstruct, making it hard to assign blame. Such disclaimers can reduce some of the company's risks, but they will not eliminate all of them. (N20)

Discussion

The findings of this study yield important theoretical and practical insights into public understandings of AI-service-failures, addressing the limitations of extant quantitative studies. Together, they form an integrated framework, encompassing public cognition, responsibility attribution, and expectations for organizational conduct, that aligns theoretically with the media evocation paradigm and offers actionable guidance for corporate crisis communication in the AI era.

Theoretical Implications

This research makes three core contributions to scholarly understanding of public cognition in AI crisis communication.

Consciously Reconceptualizing AI's Ontological Status in Crisis Contexts: Rejecting Anthropomorphism for Instrumentalism

Diverging from the anthropomorphic assumptions of the CASA paradigm under the media equation framework (Kim & Sundar, 2012; Nass & Moon, 2000; Reeves & Nass, 1996), participants consistently and consciously rejected conceptualizing AI as a hybrid social actor, much less a legitimate stakeholder, even though this conceptualization is a core aspect of crisis communication scholarship (Coombs, 2020). Instead, they framed AI systems as technologically advanced yet fundamentally nonsentient instruments. This ontological demarcation, grounded in AI's perceived absence of moral agency, emotional capacity, and legal personhood, challenges predominant CASA-based theoretical constructs positing that humans automatically attribute quasi-human qualities to interactive technologies exhibiting features such as natural language generation (Nass & Moon, 2000; Nass et al., 1993).

Notably, the interviews revealed that nonexperts adopted an even more instrumentalist perspective on AI's ontological status than AI experts did. This finding is intriguing yet conceptually coherent with existing scholarship. For instance, the Executive Director of Northeastern University's Institute for Experiential AI characterizes AI systems as “stochastic parrots” capable of generating output without semantic comprehension (Kerner, 2023), a metaphor echoed by participants in the current study who described AI as “resembling children or juvenile animals” (N01 and N07), whose learning capabilities occupy an intermediate position between mere information replication and authentic cognitive processing. The expert–nonexpert disparity suggests that AI experts conceptualize AI as systems exhibiting intelligence, creativity, and adaptability often indistinguishable from human capacities (Logan & Waymer, 2024), whereas nonexperts likely evaluate AI predominantly through performance-based metrics. Hence, frequent AI failures may reinforce nonexperts’ instrumentalist framing of AI as tools rather than autonomous agents. In essence, nonexperts’ perception of AI emerges from observed “capabilities” (defining functional baselines), whereas experts emphasize developmental “capacities” (representing theoretical maxima). This dichotomy mirrors the gap between real-world AI performance and promotional demonstrations of AI systems.

Crucially, however, both nonexpert and expert perspectives align in form with the media evocation paradigm's core proposition: public cognition involves conscious negotiation of AI's role (Turkle, 2005, 2007; van der Goot & Etzrodt, 2023) rather than automatic responses to autonomy cues (e.g., operational independence or linguistic capabilities). Yet a critical theoretical tension emerges from the divergence between lay perceptions and scholarly paradigms. While media evocation theory characterizes AI as an “evocative object” or “subject object” that blurs human–machine boundaries (Suchman, 2011; Turkle, 2007), participants' evaluations in crisis contexts reverted to instrumentalist interpretations, a substantive misalignment with the theory's conceptual expectations. Participants predominantly situated AI within an artifact ontology, as a sophisticated tool requiring greater user expertise.

This instrumental orientation neither inherently supports nor undermines media evocation theory. In this study, ontological assessments occurred within a responsibility-attribution framework (AI-service-failure contexts), which compelled participants to evaluate AI through the lens of socioethical accountability. Under these conditions, the complexity of AI's hybrid identity was subordinated to human accountability imperatives, potentially constraining participants’ richer ontological contemplation. Consequently, AI's complicated status in abstract philosophical discourse does not carry over into crisis responsibility frameworks, where mechanistic interpretations dominate public reasoning. Given this tension, context-sensitive ontological models that distinguish everyday human–AI interaction from high-stakes failure scenarios requiring unambiguous accountability structures could support a deeper, more multifaceted understanding of the public's ontological perceptions of AI.

Intersubjective Negotiation of Human–AI Relationality: Asymmetric Accountability in the Organization–AI–User Triangle

The tripartite responsibility framework reveals a pronounced asymmetry in blame attribution, with corporations bearing maximized accountability, users granted conditional exoneration, and AI systems absolved of moral/legal culpability. This hierarchy aligns with attribution theory’s emphasis on controllability and intentionality (Weiner, 1985), as companies are perceived to possess both the technical capacity and fiduciary duty to prevent AI failures through rigorous data governance, algorithmic transparency, and user education. The public’s reflexive awareness of corporate stewardship roles—evident in demands for preemptive risk mitigation and postcrisis corrective actions—reflects an evolution in stakeholder expectations, wherein technological providers are held to heightened ethical standards akin to public trustees (de Laat, 2021). Conversely, the conditional exoneration of users, contingent upon their nonmalicious intent and systemic constraints (e.g., algorithmic opacity, “butterfly effects”), highlights the inadequacy of individual-centric accountability models in complex sociotechnical systems. This asymmetry carries significant implications for organizational governance, urging enterprises to institutionalize “ethics-by-design” protocols rather than relying on reactive legal disclaimers or user agreements.

The Organization–AI–User responsibility triangle emerges as a product of intersubjective cognition during AI-service-failures, theoretically resonating with the media evocation paradigm. Such crises catalyze not merely perceptions of AI's role but fundamental human processes of self-reflection, self-other reconstitution, and world-making (Blumer, 1969; Carey, 1989), wherein public cognition differentially constitutes corporate entities (embodied by executives) and users as distinct social actors with varied accountability weights within the triad (Edwards & Edwards, 2022; van der Goot & Etzrodt, 2023). When situating humans and AI within responsibility dynamics, the public engages in three-tiered intersubjective work: reallocating human accountability across corporate and individual users, recontextualizing AI's ontological ambiguity, and recalibrating sociotechnical relationality. This represents an epistemological advancement beyond conscious ontological assessments of AI, as participants embed cognition within a tangible macro-social sphere where AI's identity paradoxes become catalysts for reimagining human agency.

Furthermore, moving beyond a descriptive account of asymmetric responsibility attribution requires contextual calibration, that is, expanding the Organization–AI–User triangle framework to incorporate contingency dimensions. Drawing on our interviews and existing studies, we propose four dimensions that may reconfigure attributional weights: (1) failure severity gradient: catastrophic outcomes (e.g., fatal autonomous vehicle crashes) probably amplify corporate accountability through control amplification effects, whereas minor malfunctions (e.g., recommendation errors) may increase conditional user exoneration via tolerability thresholds (Liu & Du, 2022; Song et al., 2023); (2) actor-visibility spectrum: identifiable users (e.g., clinicians using diagnostic AI) might approach corporate accountability levels, while anonymous users (e.g., social media crowds) remain protected by systemic diffusion (Khachaturov et al., 2025); (3) explainability-transparency nexus: opaque AI failures (e.g., deep learning fraud detection) can trigger corporate absorption of user responsibility, whereas interpretable systems (e.g., rule-based chatbots) activate shared culpability (Singhal et al., 2024); (4) cultural-institutional matrices: individualistic societies (e.g., the United States) likely emphasize corporate accountability, whereas collectivist contexts (e.g., China) diffuse responsibility through institutional ecosystems that highlight norms of shared obligation (Gelfand et al., 2004).

These potential contingencies suggest that the Organization–AI–User responsibility triangle is dynamic rather than a static pattern of asymmetric attribution. Future research should empirically test the influence of these factors, alongside other latent variables, on public responsibility attribution in AI-service-failures in order to refine the framework. Such work could transform the triangle from a descriptive model into a prescriptive public relations tool, enabling organizations to proactively align CCSs with predicted attribution landscapes. Repositioning organizational rhetoric as participatory sense-making within evolving sociocognitive frameworks, rather than as reactive justification, could advance a paradigm shift in AI crisis governance.

A Critical Examination of Sociotechnical Accountability: Human-Centered Crisis Communication Beyond Performative Apologies

The strong public endorsement of apologies, precautionary measures, and corrective actions, coupled with vehement rejection of the “mirror strategy,” suggests that crisis communication norms for AI failures may be distinctive. Participants’ emphasis on substantive accountability over symbolic gestures challenges organizations to go beyond performative compliance with HCAI principles (Shneiderman, 2020) and to pursue systemic reforms across the AI development life cycle. The repudiation of blame-shifting tactics such as the “mirror strategy” points to a possibly growing public ability to discern corporate evasion of duty, particularly when such strategies exploit societal inequities to deflect scrutiny. These findings extend situational crisis communication theory (Coombs, 1998) with implications specific to AI contexts: (1) proactive AI transparency: clear communication of AI capabilities and limitations during precrisis phases to align public expectations (Shneiderman, 2020); (2) AI ethical stewardship: demonstrable commitments to algorithmic auditing, bias mitigation, and user empowerment (Koshiyama et al., 2024); and (3) cocreative engagement: collaborative problem-solving with stakeholders to design failure-resilient AI systems (Buhmann & Fieseler, 2021).

Theoretically, these expectations originate from the public's conscious negotiation of AI's ontology as a tool, illustrating how intersubjectively negotiated cognition shapes behavioral demands (van der Goot & Etzrodt, 2023). Such expectations of corporate conduct go beyond ontological understanding and responsibility attribution, engaging the ultimate dimension of media evocation: critical reflection on societal relationality, in which technological advancement catalyzes re-examination of human interdependence (Mead, 1967; Turkle, 2005).

Within this study's context, such reflection constitutes the convergence of public AI-ontology cognition and triadic responsibility attribution into sociorelational imperatives. Instrumental perception of AI manifests through demands for corporate prevention measures and rejection of responsibility-deflecting strategies. Cognition of the Organization–AI–User responsibility triangle crystallizes in expectations that enterprises assume accountability through apologies, thereby reinforcing human agential primacy within sociotechnical systems. Ultimate affirmation of human-centric axiology emerges through insistence on corrective actions and deliberative cocreation, ensuring collective participation in AI governance to actualize human-centered technological futures—a process in which crisis becomes the crucible for establishing public–corporate trust and renegotiating the moral architecture of human–machine coexistence.

Practical Implications

After our interviews were completed, a newly published study (Romenti et al., 2025) analyzed 152 incidents and controversies caused by AI, algorithmic, and automation failures documented in the AIAAIC repository, an independent, nonpartisan public interest initiative promoting transparency in AI governance. Their analysis revealed that companies adopted denial postures in 37.5% of cases, whereas only 17.4% adopted rebuilding postures. This gap between prevalent corporate practice and our interview-derived recommendations underscores the relevance of our practical implications. From the public's perspective, organizations should prioritize adopting and championing the following strategies:

Prioritize Accommodative CCSs

Organizations must proactively adopt accommodative strategies—specifically corporate apologies paired with demonstrable corrective actions—when addressing AI-service-failures. The participants’ rejection of evasive tactics like the “mirror strategy” underscores that deflecting blame to societal factors erodes trust and intensifies reputational damage. Public expectations align with HCAI principles, demanding transparent accountability for failures (Ozmen Garibay et al., 2023; Shneiderman, 2020). Crisis messaging should explicitly acknowledge organizational responsibility, express genuine regret, and outline concrete steps to rectify system flaws (e.g., algorithmic audits, bias mitigation protocols) (Koshiyama et al., 2024). This approach not only fulfills social obligations but also strategically rebuilds stakeholder confidence more effectively than defensive justifications (Romenti et al., 2025).

Institutionalize Precrisis Safeguards and Transparency Mechanisms

Given the maximized responsibility attributed to organizations, proactive risk governance is critical. Participants emphasized the necessity of rigorous data cleaning, algorithmic transparency, and user-centric design before AI deployment. Organizations should establish internal “AI stewardship” frameworks, including: (1) comprehensive prelaunch testing across diverse scenarios to identify failure vulnerabilities; (2) clear public documentation of AI capabilities, limitations, and ethical guidelines; and (3) accessible user agreements that transparently delineate user/organizational responsibilities without relying on disclaimers to absolve accountability (Chiu & Lim, 2021; Kiseleva et al., 2022). These measures preemptively align public expectations with system functionality, reducing ambiguity during crises.

Develop Tiered User Education and Cocreative Engagement

While user accountability remains conditional, organizations must address the “butterfly effect” of user–AI interactions through tailored education. This involves: (1) designing intuitive, literacy-adaptive usage guidelines (e.g., simplified manuals for novice users, advanced tutorials for professionals); (2) implementing ongoing feedback channels for users to report ambiguities or errors; and (3) facilitating cocreative workshops with stakeholders to iteratively improve AI usability and failure resilience (Buhmann & Fieseler, 2021; Ryan & Stahl, 2020). Such initiatives recognize users’ reflexive awareness of their role while mitigating operational risks stemming from misunderstandings or unintended misuse.

Reject the “Mirror Strategy” and Champion Ethical Responsibility

The resounding public criticism of the mirror strategy calls for its abandonment. Organizations should instead position themselves as bearers of ethical responsibility by publicly committing to bias mitigation and societal benefit. This includes: (1) openly addressing training data limitations and algorithmic biases in crisis communications; (2) collaborating with regulators, academia, and civil society to develop industry-wide accountability standards; and (3) investing in “ethics-by-design” AI development that prioritizes human welfare over profit-driven expediency (Brey & Dainow, 2024; Camilleri, 2024). By framing crises as opportunities for systemic improvement rather than occasions to deflect blame, firms reinforce their role as responsible stewards of transformative technologies.

These implications collectively advocate a paradigm shift from reactive crisis containment to proactive, human-centric AI governance (Ozmen Garibay et al., 2023). Organizations that embed accountability, transparency, and cocreation into their operations will not only navigate AI failures more effectively but also cultivate sustainable public trust in an increasingly algorithmic society (Robles & Mallinson, 2025).

Limitations and Future Directions

Several limitations of this study should be acknowledged. First, 11 of the 21 participants were students at the time of the study, resulting in a student-dominated sample. Compared with older adults who have broader societal exposure, students may have less experience with professional services, which could diminish the self-relevance of AI-service-failure topics for them. This may have constrained the depth of discussion and insight generated during the group interviews and, consequently, limits the generalizability of the findings. Future studies should explore how nonstudent populations perceive agency and attribute responsibility in AI-service-failures, thereby improving sample representativeness.

Second, the educational level of the sample exceeds the national average in China, which may bias the interview findings. According to the 2020 Population Census of China (National Bureau of Statistics, 2020), only approximately 15.5% of China’s population hold a college degree or higher, whereas approximately 81.0% of participants in this study do. Consequently, the implications derived from the interviews may have limited applicability across demographic groups. For instance, although not all highly educated individuals possess AI expertise, they may be inclined to view AI fundamentally as a tool, informed by their awareness of the algorithmic programming behind it. This perspective could strengthen their stance that enterprises should bear responsibility as developers, regulators, or stewards. Conversely, individuals with lower educational attainment and without AI expertise may perceive AI as more enigmatic. Uncertainty about its underlying principles might lead them to attribute a more agentic, even lifelike, social role to AI. Future research should explicitly investigate these potential dynamics across populations with diverse educational backgrounds to achieve a more comprehensive understanding of public perceptions. Particular attention should be paid to identifying practical AI product usage guidelines, especially for the precautionary phase of AI-failure-induced crises, that account for how differences in educational level shape knowledge, cognitive frameworks, and AI literacy.

Third, the absence of formal member triangulation after the group interviews may limit the interpretive validity of the findings. Although the moderator implemented real-time triangulation procedures during sessions (e.g., confirming participants’ agreement with peer statements) and conducted post hoc cross-group comparisons (e.g., analyzing response variations between participants with and without AI expertise and with extensive and limited AI usage experience), the feedback mechanism for transcript verification remained informal. Future research should address this methodological gap to enhance study credibility.

Lastly, as noted in the theoretical implications, future research should develop context-sensitive ontological models that differentiate routine human–AI interactions from high-stakes failure contexts requiring clear accountability structures. It should also empirically examine how contingency factors (e.g., failure severity gradients, actor-visibility spectra, explainability–transparency nexuses, and cultural–institutional matrices), together with latent variables, shape public responsibility attribution, thereby refining the Organization–AI–User responsibility framework.

Despite these limitations, this study is among the first empirical investigations of public perceptions of AI and of expectations regarding corporate crisis communication and management strategies in AI-service-failure contexts, establishing conceptual foundations for subsequent research in this rapidly evolving and critically important domain.

Conclusion

This study extends yet complicates core tenets of the media evocation paradigm by investigating public perceptions within AI-service-failure crises—a context where AI’s ontological ambiguity collapses into reductive instrumentalism, yielding an asymmetric Organization–AI–User responsibility triangle. Within this framework, AI crises afford organizations no greater latitude for responsibility deflection than traditional service failures; accountability remains principally organizational, with corporate apologies and corrective actions constituting normative expectations. These findings compel organizations to adopt an AI guardianship model prioritizing ethical foresight over profit-driven expediency while cultivating trust through demonstrable commitments to human-centric values. As AI's societal integration intensifies, aligning crisis strategies with these empirically grounded public expectations becomes imperative for sustaining organizational legitimacy and advancing equitable technological progress.

Ethics Statement

All interview participants provided informed consent. The study protocol was approved by the Institutional Review Board (IRB) of the School of Media and Communication, Shanghai Jiao Tong University (approval number: H20250007I).

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data will be shared on reasonable request to the corresponding author.