Abstract

In a rapidly evolving digital landscape, public service media (PSM) face a significant challenge: how to leverage recommendation algorithms to enhance their visibility and attractiveness without sacrificing their core values. This study examines the extent to which the Belgian Radio-Television of the French Community (RTBF) has integrated PSM values into the development of a recommender system for news articles on its platform (rtbf.be). The research highlights a conscientious effort to define and translate PSM values before the algorithm design phase, both inside and outside the organization. However, the study also reveals limitations that hinder the full realization of PSM values within the recommender system. Consequently, these values currently function more as abstract guiding principles and communication tools than tangible technical features. The study emphasizes the challenge of reconciling public service ethos with algorithmic realities and concludes by highlighting the need for further empirical research to bridge this gap in the digital age.

Introduction

Public service media (PSM) are essential for democracies (Holtz-Bacha & Norris, 2001; Neff & Pickard, 2021). They provide trustworthy information, promote cultural variety, and encourage citizens to participate in public life (Curran et al., 2009; Garnham, 1990; Keane, 1991; Puppis & Ali, 2021; Scannell, 1989). However, the digital age has brought new challenges for PSM. Competition from commercial media and social media platforms has increased. To stay relevant and appealing to audiences, PSM need to adapt to new technologies and to the ways in which information is consumed today (Bardoel & d'Haenens, 2008; Burri, 2015; Donders, 2012; Jakubowicz, 2007; Nielsen, 2010).

A promising solution for PSM is to use recommender systems (Álvarez et al., 2020; Fieiras-Ceide et al., 2023). These systems can help PSM better understand what their audiences are interested in, allowing them to curate content that is more relevant and engaging (Hildén, 2022; Møller, 2022a). However, there are ethical concerns about using these algorithmic systems (Luengo & Herrera, 2021; Sørensen, 2020). They could potentially influence how people get informed and participate in public life (Joris et al., 2022; Sørensen, 2011).

PSM face a difficult balancing act (Leurdijk, 2007; Raats, 2023; Van den Bulck & Moe, 2018). They need to adopt recommender systems to stay competitive, but they also want to maintain their unique public service values. This challenge is illustrated by the Belgian Radio-Television of the French Community (RTBF), a PSM trying to develop “public service algorithms.” Despite the scholarly emphasis on the role of public service values in the personalization dynamics reshaping PSM (Hildén, 2022; Møller, 2022a; Sørensen, 2019; Van den Bulck & Moe, 2018), it remains unclear how deeply these values are integrated into the design of recommender systems.

This study addresses that gap by investigating, through in-depth ethnographic research, the extent to which public service values are embedded within RTBF's news recommender system. Drawing on the Science and Technology Studies (STS) framework, specifically the sociology of translation (Akrich et al., 2006; Callon, 1986, 1990; Latour, 2007), and incorporating communicational approaches to organizations (CAO), this research examines how RTBF operationalizes public service values in the design and operation of the news recommender system on its platform, rtbf.be.

The study reveals RTBF's proactive approach in defining public service values prior to the development of the recommender system. This effort involved both internal discussions within RTBF and external discussions with stakeholders. However, organizational and market constraints have prevented these PSM values from being fully incorporated into the technical aspects of the algorithm. As a result, these PSM values currently serve more as abstract guiding principles and as talking points for RTBF when communicating about the recommender system, rather than being directly reflected in the algorithm's code or functionality.

Literature Review

Public service values and PSM

The academic literature on PSM did not really start to develop until the 1980s and the liberalization of the media sector. According to Moe and Syvertsen, before this period “there was little actual research on public service broadcasting […]. Notwithstanding some studies of the history of the original institutions, such as the early history of the BBC, research did not flourish until the late 1970s and 1980s” (2009, pp. 400–401).

In Western Europe, media liberalization meant the end of public monopolies on broadcasting (Bardoel & d'Haenens, 2008; Donders, 2012; Leurdijk, 2007). Now competing in a market, public service broadcasting needed to adjust quickly. This involved not just keeping pace with new policies (Hallin & Mancini, 2004; Kuhn, 1985; McQuail & Siune, 1986) and new technologies, but also understanding and responding to the changing needs and interests of audiences (Jakubowicz, 2007).

This quick adaptation left PSM in a somewhat vulnerable position and paved the way for their digitization (Glowacki & Jaskiernia, 2017). It was also during this period that the term “public service media” became established, replacing the term “public service broadcasting” (Lowe & Bardoel, 2007). Since then, scientific research has sought to document and analyze the transformations of these organizations and to define the specificities of PSM through comparison with their commercial competitors.

The concept of PSM presents a significant challenge within the field of media studies (Harrison & Woods, 2001; Kuhn, 1985; Moe & Syvertsen, 2009). Defining PSM remains a contentious issue, with some scholars arguing that its inherent heterogeneity renders it resistant to a single, universally accepted definition (Bolin, 2004; Søndergaard, 1999). This complexity stems from the vast array of contexts, organizational structures, funding models, control mechanisms, and missions that characterize PSM institutions across the globe. The multiplicity of factors, as noted by Syvertsen (1999), undermines the concept's analytical utility due to its inherent ambiguity.

In contrast to the notion of an elusive definition, some scholars argue that PSM share common characteristics that enable more precise conceptualization (Van den Bulck & Moe, 2018). Two fundamental features are frequently emphasized. First, PSM stand apart from private media because of a rigorous legal and regulatory framework governing their operations. They may encompass specific obligations regarding content creation, funding sources, and governance structures. Second, PSM are defined by a public service mission and the embodiment of core values. Beyond mere content dissemination, they strive to meet the needs and expectations of society by promoting public service values.

Pinpointing a universally agreed-upon set of PSM values remains a key challenge for research. Born and Prosser (2001, p. 671) identified three core principles based on a large-scale survey conducted in the early 2000s: (1) enhancing social, political, and cultural citizenship; (2) universality; and (3) quality of service. Similarly, the United Nations Educational, Scientific, and Cultural Organization (UNESCO) (2001, pp. 11–13) proposed four principles: (1) universality, (2) diversity, (3) independence, and (4) distinctiveness. While “universality” and “diversity” appear as recurring themes across numerous PSM studies (Hildén, 2022; Sørensen, 2019; Van den Bulck & Moe, 2018), a definitive consensus on the complete set of core values has yet to be established.

However, the academic literature provides evidence that these values hold normative power, shaping various facets of an organization's life. They can function as a pull factor, attracting individuals who resonate with the PSM's mission. Additionally, these values serve as guiding principles for content creation and dissemination. Furthermore, public service values can be leveraged externally. PSM can utilize them as justifications within the institutional framework (including regulatory bodies and policymakers), advocating for differential treatment that supports their unique role in society. Interestingly, recent studies suggest that these values also influence how technical innovations are adopted and received within PSM (Hildén, 2022; Murschetz, 2021; Schwarz, 2015; Van den Bulck & Moe, 2018).

News recommender systems in PSM

News recommender systems are now widely deployed in the media landscape (Álvarez et al., 2020; Bodó, 2019). These systems aim to generate recommendations for the user by selecting and ranking news articles from a pool of items according to a predefined set of rules (Möller et al., 2018). They can employ a variety of techniques, including content-based filtering, collaborative filtering, popularity-based filtering, or hybrid approaches (Álvarez et al., 2020; Hildén, 2022; Møller, 2022b). These systems contribute to personalization dynamics in PSM (Hildén, 2022).

News recommender systems hold significant interest for news media organizations. By tailoring content to individual user preferences, these systems enhance user experience, foster engagement, and provide valuable insights into audience behavior (Murschetz, 2021). This knowledge enables media outlets to optimize content promotion (Bodó, 2019) and ultimately expand their audience. Some researchers even argue that news recommender systems could encourage users to diversify their news consumption (Bozdag & van den Hoven, 2015; Helberger et al., 2016).

Research has extensively explored various dimensions of the impact of news recommender systems (Mitova et al., 2022). Numerous studies have examined their influence on news consumption habits (Beam, 2014; Joris et al., 2022; Thurman et al., 2019), their role in democratic access to quality information (Helberger, 2019; Heitz et al., 2022), or user trust in these systems (Shin, 2020). Others have highlighted the tensions arising from the implementation of these systems in terms of journalistic roles and practices (Bucher, 2017; Bastian et al., 2021; Diakopoulos, 2019), including gatekeeping mechanisms (Møller, 2022b; Wallace, 2018).

However, literature reviews by Murschetz (2021) and Mitova et al. (2022) reveal a complex and ambivalent relationship between PSM and personalization dynamics. Personalization through algorithmic recommender systems emerges as a central challenge. PSM organizations face the dilemma of balancing competitive pressures to adopt such systems with the imperative to uphold their core values and missions (Raats, 2023; Schwarz, 2015; Sørensen, 2019). This tension involves both technical and ethical issues, including what is referred to as the “black box” nature of recommender systems (Murschetz, 2021, p. 77).

This dilemma arises from the potential risks recommender systems pose to users. The formation of “filter bubbles” (Pariser, 2011) and “echo chambers” (Sunstein, 2007) is a primary concern linked to individual polarization. While the extent of these negative effects remains debated (Dubois & Grant, 2018; Flaxman et al., 2016; Fletcher et al., 2020; Haim et al., 2018; Ross Arguedas et al., 2022), PSM take this issue seriously. Research by Van den Bulck and Moe (2018) on the Norwegian Broadcasting Corporation (NRK) and the national public service broadcaster for the Flemish Community of Belgium (VRT), by Schwarz (2015) on Swedish Television (SVT), by Sørensen (2020) on the Danish Broadcasting Corporation (DR), and by Hildén (2022) in a comparative analysis indicates that PSM often base their personalization strategies on the assumption of filter bubble risks, shaping their technological choices accordingly. In this context, recommender systems are perceived as threats to the values of diversity and universality upheld by PSM.

Overall, there is a limited body of research specifically examining the link between news recommender system design and public service values. For instance, Bastian et al. (2021) explored the importance of integrating journalistic values into algorithmic recommendation systems for journalists. They highlighted that the significance of these values varied based on professionals’ perceptions of these systems. Hansen and Hartley (2021) also addressed journalistic values in their ethnography. They described how journalistic values such as “timeliness” and “localness” are transformed through the recommender system design.

Most research on recommender systems in PSM tends to invoke public service values based on the perceptions or representations of stakeholders or journalists. But there is a gap in our understanding of how these values actually influence the design of such systems (Møller, 2022b). This is why Sørensen (2020), Hansen and Hartley (2021), Hildén (2022), and Møller (2022b) call for future research in this area. As Sørensen (2020, p. 107) states: “The question, worthy of further research, is whether ‘relevant recommendations’ are fundamentally different in a public service context compared to a commercial VoD?”

Theoretical framework

This study investigates the concept of a “public service algorithm” as promoted by RTBF and its practical implementation. Central to this research is understanding how public service values are identified and integrated into the development of the news recommender system, and to what extent these values are reflected in the system's final design.

Situated at the intersection of Science and Technology Studies (STS) and PSM research, this study employs the theoretical frameworks of the sociology of translation (Akrich et al., 2006) and CAO (Bouillon et al., 2007; Bouillon, 2010). The sociology of translation explores how technologies emerge, the factors influencing their success or failure, and their broader societal implications (Callon, 1990; Latour, 2007). Within this framework, the design, power, and impacts of technologies are viewed as outcomes of complex networks involving humans and non-human actants (Akrich et al., 2006).

A fundamental concept in the sociology of translation is that innovations are the result of ongoing “translation” processes. According to Callon et al. (1999), translation is defined as the set of actions that allow actors to coordinate around a project by advocating their interests, relying on material, discursive, or institutional resources that frame and influence them. During the innovation journey, various actors continuously interpret and adapt their goals and values to fit the evolving context. This dynamic process is primarily managed by “translators”—central figures who drive and shape the innovative efforts. Translators are responsible for engaging and convincing an expanding network of participants to support and contribute to the initiative. These translation processes occur within “translation centers” which are focal points where ideas, values, and objectives are negotiated and refined (Callon et al., 1986). This study applies the sociology of translation to examine how public service values are identified, transformed, and embedded within RTBF's algorithmic design process.

While not a standalone theoretical framework, CAO serves as a methodological approach to analyzing empirical data. CAO research examines social phenomena as discursive constructs by analyzing three dimensions: interactions, technologies, and symbolic elements. In this study, CAO is used to trace the journey of public service values throughout the design process across these three dimensions.

Case study

RTBF serves as the public service broadcaster for Belgium’s French-speaking community. This dynamic broadcasting sector thrives despite the community’s relatively small size. RTBF manages a diverse media portfolio, including nine radio stations (excluding web radio), three television channels, a dedicated website (rtbf.be), and the Auvio digital platform, which offers catch-up TV and free video-on-demand (VoD) services.

As part of its “Vision 2022” plan, a comprehensive strategy aimed at modernizing and adapting to the evolving media landscape (RTBF, 2016, p. 8), RTBF has embarked on a significant investment plan to leverage its data assets since 2016. This initiative coincided with the official launch of the Auvio digital platform, which serves as a central hub for users to seamlessly access, explore, and revisit a broad range of RTBF's content, including exclusive materials (2016, p. 20). Recognizing the potential of data, RTBF initiated a research and development project to optimize the utilization of data collected on digital content consumption patterns (2016, p. 21).

The year 2017 witnessed continued growth in RTBF's commitment to big data technologies. In February, a collaborative effort between RTBF, The Faktory, and the Data Fellas consortium yielded the public release of the initial iteration of the recommendation algorithm developed for Auvio (RTBF, 2017, p. 62). The same year also saw the production of RTBF's data centralization platform and the implementation of an automated metadata extraction system. Notably, 2017 marked the first public declaration of RTBF's intention to create “public service algorithms” (RTBF, 2017, p. 62).

The year 2018 brought significant organizational restructuring under the “Vision 2022” plan. Transitioning from a hierarchical to a circular organizational model, this transformation facilitated the incorporation of the newly established “Data Management Department” within RTBF's structure (RTBF, 2018, p. 116). Furthermore, in September 2018, RTBF partnered with Data Fellas to form the joint-stock company Data Reco, with RTBF holding a 40% ownership stake (RTBF, 2018, p. 116). This collaboration aimed at the development of innovative recommender systems. Subsequently, the Data Management Department shifted its focus toward the in-house development of a new recommender system specifically designed for the Auvio platform.

Under the leadership of the Data Management Department Director, 2019 saw a significant overhaul of RTBF's data governance structure (RTBF, 2020). This restructuring led to the creation and coordination of multiple data-focused entities within RTBF. These entities include the Data Committee, Data Analytics Committee, Algorithms Committee, Metadata Committee, General Data Protection Regulation (GDPR) Committee, and others. Functioning as both decision-making and discussion forums, these committees collaborate to define the requirements and roadmaps for data-driven projects. Additionally, they serve as platforms for establishing and monitoring a set of key performance indicators (KPIs) for data initiatives.

The year 2022 was also a year of significant advancements for RTBF’s data initiatives. A key achievement was the development of a news recommender system (Figure 1) on the rtbf.be website. Additionally, the organization demonstrated its commitment to responsible data practices by establishing a new entity within its structure: the “Editorial Committee for Algorithms.” This committee plays a role in overseeing and guiding the development and implementation of algorithmic systems at RTBF.

Figure 1. The personalized recommendation banner (widget) on the rtbf.be platform.

This brief overview of the initiatives undertaken with regard to data management and algorithms highlights how RTBF serves as an exemplary case of employing personalization dynamics in PSM. Unlike other PSM organizations, RTBF has made the decision to develop its news recommender system from scratch instead of adopting preexisting solutions (Sørensen, 2019, p. 9). The outcome of this process is the establishment of a highly intricate and interconnected technical and organizational framework that revolves around data and algorithms.

Methods

In recent years, ethnographic methods have gained prominence in examining algorithms within organizations owing to their ability to capture holistically the multifaceted nature of these entities (Christin, 2020; Hansen & Hartley, 2021; Jaton, 2019; Kavanagh et al., 2015; Lange et al., 2018). Christin even argues that “ethnographic methods and thick ethnographic descriptions are often the preferred approach for studying enrolments and associations, especially when they relate to science and technology” (2020, p. 905).

To examine the development of the news recommender system, I conducted a 13-month ethnographic study at RTBF. This involved spending one to two days per week immersed in the organization. Acting essentially as the “right-hand man” to the Data Management Director, I gained broad access within RTBF. However, the executive committee meetings, as well as private meetings between the Data Management Director and the CEO, were outside the scope of my observation.

Despite these limitations, my role offered a unique vantage point. I directly observed the internal dynamics of the Data Management Department as they developed and implemented the recommender system. More importantly, my position placed me at the center of a web of activity. Various departments, all connected in some way to the RTBF recommender system project, intersected here. This translated into attending meetings with a wide range of people: service providers, technical and platform support teams, journalists, the team responsible for the GDPR implementation, and even representatives from other PSM.

The research yielded a rich collection of data, including my observation notes, roughly 900 pages of internal documents (presentations, meeting minutes, working documents, technical documents), 400 pages of external documents (annual reports, management contracts, charters), numerous photographs, and screenshots. Additionally, I conducted six in-depth interviews with stakeholders (three from the Data Management Department, and three from the platforms team and the analytics team). The audio recordings from the interviews were all transcribed.

I employed a multi-thematic analysis based on the methodology developed by Ayache and Dumez (2011). First, I aimed to construct a timeline charting the development and implementation of the recommender system, thereby reconstructing the “biography of the algorithm” (Glaser et al., 2021). This involved examining both the temporal (“design,” “implementation,” “postimplementation”) and spatial (“internal” and “external” to the organization) dimensions of the system's life cycle. The second step involved identifying the public service values embedded within the development of the recommender system and tracing their discursive transformations across the various stages composing the algorithm's biography. The third coding stage involved identifying the translation processes at work using the three dimensions of the CAO framework. The final step was a triangulation process, drawing on insights from interviews and the “heteroglossia” highlighted by Seaver (2017).

Findings

Identifying values through multiple translations

RTBF's initial foray into recommendation algorithms involved developing a system for video recommendations on the Auvio platform. This collaborative project with the company Data Reco aimed to establish a novel and extensible solution applicable across PSM. However, unforeseen challenges resulted in its ultimate failure. This setback prompted a strategic shift in 2018. Recognizing the crucial role of recommendation algorithms, RTBF opted to bring development and management in-house. This marked a significant transformation for the data management department, transitioning from a support function to a dedicated production unit focused on building and overseeing the recommender system. To achieve this, a substantial expansion tripled the department's workforce, accompanied by a parallel focus on cultivating expertise in recommender systems, machine learning, and data analysis.

This successful transformation yielded significant benefits. Not only was a robust and effective recommendation algorithm implemented for Auvio, but the newly acquired expertise also paved the way for the development of two recommender systems for rtbf.be. This included a dedicated system for recommending news articles, further enriching the personalized content experience for RTBF's website users.

X: It is important to understand that a recommendation algorithm is primarily intended to highlight RTBF's main asset, which is its content […] There is a strong demand from audiences to have a personalized experience when they connect to our platform.

The development of the recommendation algorithm for rtbf.be involved a thorough and collaborative process. The Director of Data Management, who initiated the project, engaged in extensive discussions and negotiations with the CEO of RTBF to reach a consensus. The project was then presented to the company's Executive Committee (Comex) for approval, which was successfully obtained. The implementation of the system, however, encountered resistance from editorial teams. A compromise was reached: the recommendation banner would initially be placed at the bottom of the rtbf.be homepage, allowing the technology to prove its value.

The project's core principle was to ensure alignment between the algorithm's development and RTBF's values and public service mission. This alignment aimed to achieve the creation of a “public service algorithm.” While RTBF's mission statement, “Inform, Educate, and Entertain,” serves as a general guiding principle for its stakeholders, in-depth discussions with project teams yielded the identification of specific values for guiding the system's development.

The project's initial phase mirrored Callon's (1986) concept of “problematization,” where preparatory meetings served as a forum for defining and framing the core issue. This stage involved formulating the central question: “What values should a public service algorithm embody?” Through this process, project teams identified three key values: diversity, transparency, and quality. Diversity was operationalized as a twofold objective: (a) mitigating the formation of filter bubbles by ensuring exposure to a wider range of content; and (b) fostering exposure to the breadth and richness of RTBF's programming, so that users encounter the full spectrum of content offered. Transparency referred to the algorithm's capacity to provide clear and interpretable explanations for its recommendations. Quality was defined by the algorithm's effectiveness in generating relevant and thematically coherent suggestions.

With these values identified, their trajectory and evolution can now be examined. The three core values established for the recommendation algorithm will undergo modifications as translation operations are conducted in translation centers both within RTBF and externally.

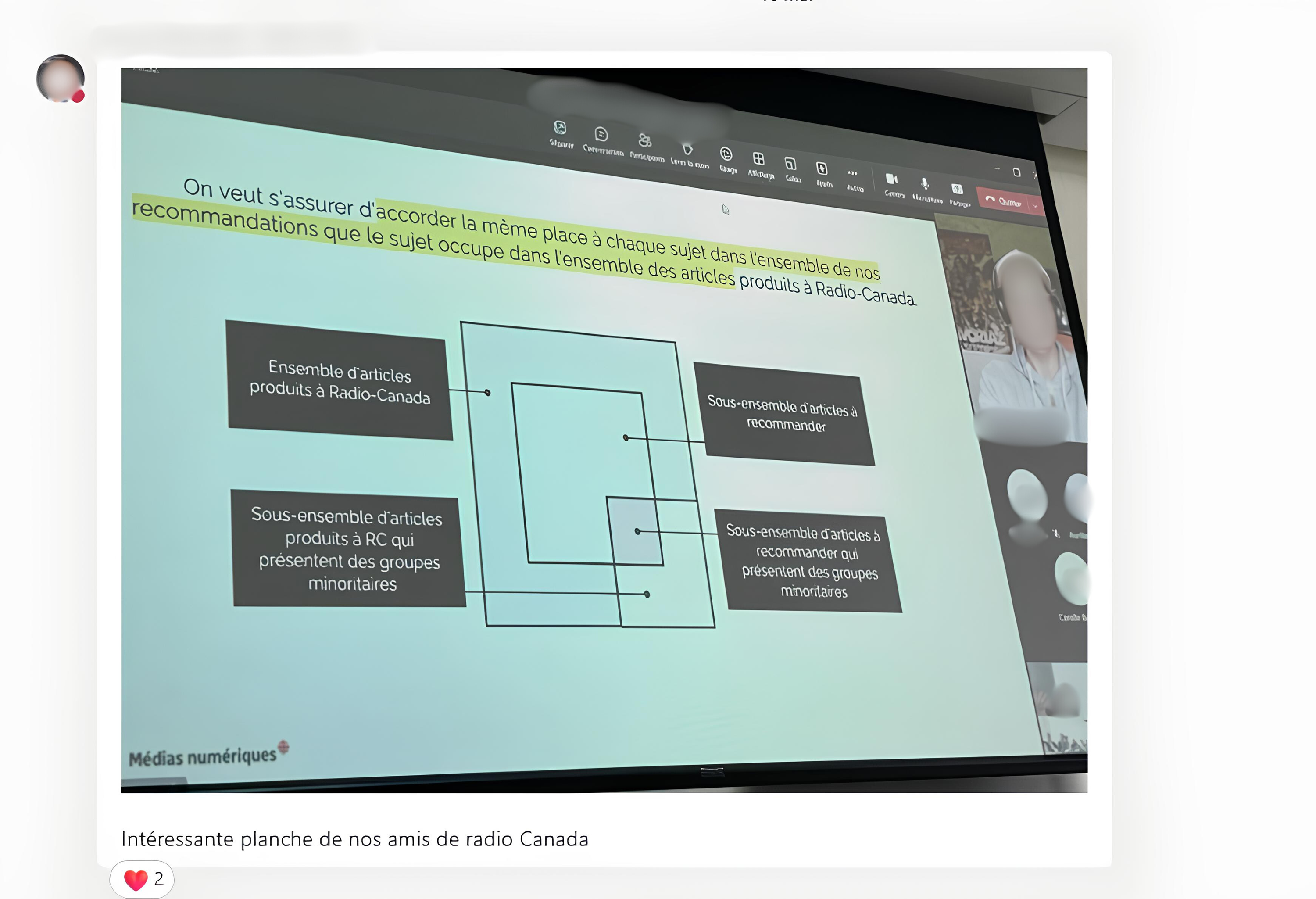

Other PSM, and more broadly the European Broadcasting Union (EBU), will be instrumental in reshaping these values through a collaborative effort informed by two key influences. First, the international institutional framework of PSM encourages personalization (EBU, 2019; see also Jakubowicz, 2007; Murschetz, 2021), but within the context of established public service principles. Second, rather than seeking benchmarks from commercial entities, the RTBF teams draw inspiration from best practices implemented by other PSM organizations (Figure 2).

Figure 2. A team member sharing a presentation on Radio Canada’s approach to personalization for news articles.

In anticipation of the implementation of an overly strict regulatory framework by the Parliament and Government of the French Community during negotiations for the renewal of RTBF's management contract (RTBF, 2019), the project teams also foresaw reactions and decisions from the national institutional framework. RTBF stakeholders were aware that the issue of personalization and algorithmic integration within the organization's strategy was being scrutinized by these institutions. To preempt potential criticisms, the development process emphasized public service values, particularly content diversity.

Similarly, RTBF stakeholders drew upon the recommendations by the Higher Council for the Audiovisual Sector (CSA, 2022, p. 33). The CSA, which conducts an annual evaluation of RTBF's activities, highlights the following specific aspects that should be considered when creating a “public service algorithm”: (a) suggesting a broad and diverse range of content to users, in other words, offering audiences not only what they want to see but also broadening their horizons by presenting them with content they might not otherwise be exposed to; (b) avoiding the creation of “filter bubbles” or “cognitive bubbles”; (c) acting in a complementary manner to human editorialization, without replacing it; and (d) highlighting the functioning of the algorithm through a two-pronged approach to transparency: at the level of the recommendation system by measuring the effectiveness of the diversity of recommendations made, and at the level of each user by explaining why a particular piece of content was recommended.

In general, RTBF is also highly committed to partnerships with university research, and it is not uncommon for stakeholders to draw inspiration from scientific papers (Figure 3).

Figure 3. A team member sharing a scientific article on building ethical algorithms with other team members. His message says: “enjoy the read!”

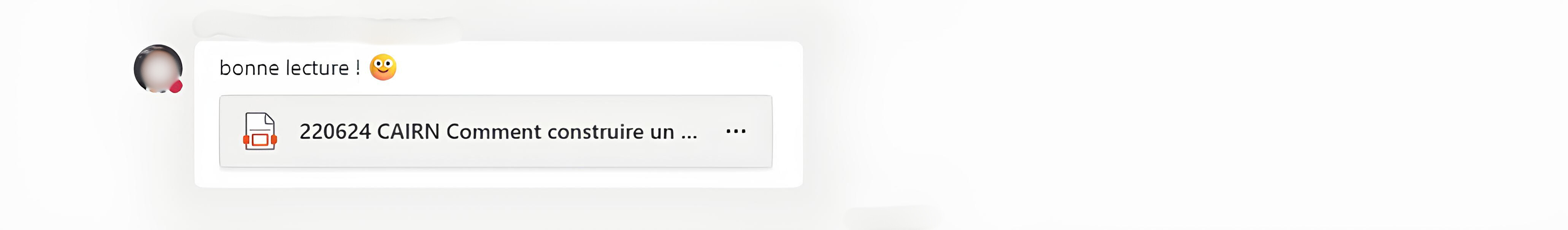

Interestingly, a separate research project, Alg-Opinion, conducted by a team at the University of Louvain (UCLouvain) in collaboration with RTBF, sheds further light on the dynamic nature of these value transformations. This project focused on analyzing information consumption patterns among young social media users. Notably, the researchers developed a graphical representation (Figure 4) that encapsulates the central challenges faced by the prototype of their information recommendation interface (Alveho).

Figure 4. The graph created within the Alg-Opinion project (Claes, 2022).

The data management team's extensive adoption of the graph served as a catalyst for repurposing it to frame and advocate for their project. This process led to the amplification of “user autonomy” as a core value. Subsequently, the concept of discoverability was integrated within a broader notion of positive impacts, encompassing aspects like serendipity, pluralism, and universalism. Furthermore, transparency was reframed as explainability, incorporating elements like explanations, visualizations, and controls. Hence, this graphical representation stabilized the translation processes around the following three core values before the design phase: user autonomy, positive impacts, and explainability.

An examination of the identification and trajectory of values challenges the notion of a predetermined set of values governing algorithmic development. Instead, it highlights the dynamic nature of values, framing them as products of an ongoing process of construction and translation. Values evolve over time through translation operations that take place within translation centers located both within the organization and externally. These centers operate based on encounters with new actants (another PSM, a national or international institution, a graph) who participate in the project, modifying both the contours of the networks involved and the values themselves.

The involvement of these actants shows how interconnected algorithmic development is: seemingly disparate elements (e.g., collaborating institutions, data visualizations) can significantly influence the project's trajectory. The hierarchy among values also shifts over time. Understanding how the relative importance of values (e.g., diversity versus discoverability) fluctuates throughout development is crucial for ensuring that the algorithm aligns with its intended goals. Although the identified values lack explicit definitions and a stable hierarchy, they retain their significance. They coalesce into a flexible framework that guides stakeholders. This framework functions less as a rigid rulebook and more as an “ideal to be achieved.”

Constrained embedding of values into algorithmic design

The identification and evolution of values in algorithmic development manifest as an ongoing process of construction and translation. However, closer scrutiny reveals a gap between these values and their practical implementation. This section examines the limited integration of values within the algorithm's design and operation. It shows that this limited integration results from the interplay between aspirational goals, technical and organizational impediments, and stakeholder dynamics.

Throughout the translation processes, the concept of diversity, initially translated into discoverability and subsequently refined into positive impacts, emerged as a central guiding principle. This multifaceted value underwent a conscious reduction in scope as the engineers focused on two key dimensions during the design phase due to time and technical constraints: discoverability and pluralism. Discoverability refers to the ability of users to stumble upon unexpected yet relevant content, while pluralism highlights the algorithm's capacity to showcase content that may not necessarily be the most popular.

To address the phenomenon of filter bubbles, a research project was initiated to explore methods for mitigating their formation. This project investigated a User-to-Content (U2C) recommendation approach as an alternative to solely relying on Content-to-Content (C2C) techniques. Within this framework, a hybrid recommendation system was developed utilizing two algorithms. The first, the Jacquard algorithm, leverages Pearson correlations. The second, named Cosinus, employs vector calculations within a graph database to establish content relationships. These two algorithms are used together to identify and provide recommendations for news articles on rtbf.be.
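The two similarity measures named above can be illustrated with their textbook definitions. The following is a minimal sketch, not RTBF's implementation, which the fieldwork material does not detail: the function names `pearson` and `cosine` and the plain-list inputs are assumptions made for illustration only.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        # Correlation is undefined for a constant series; return 0.0 by convention here.
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        # A zero vector has no direction; return 0.0 by convention here.
        return 0.0
    return dot / (norm_u * norm_v)
```

Both measures return values between -1 and 1. Pearson correlation centers each series on its mean before comparing, whereas cosine similarity compares the raw direction of the vectors.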

However, this approach necessitated a delicate balance between content discoverability and user personalization. To mitigate the emergence of filter bubbles, a strategy was implemented to incorporate a percentage (ranging from 10% to 20%) of recommendations that deviated from the user's most relevant content as identified by the vector calculations. This strategy is intended to ensure that users are not solely exposed to information that reinforces their existing preferences and to encourage exploration of novel areas of interest.
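One way to picture such a deviation share is to reserve a fixed fraction of recommendation slots for items outside the top of the relevance ranking. The sketch below rests on assumptions not documented in the fieldwork: the function name `mix_recommendations`, the `explore_share` parameter, the random draw from lower-ranked items, and the placement of those items at the end of the list are all hypothetical.

```python
import random


def mix_recommendations(ranked, n_slots, explore_share=0.15, rng=None):
    """Fill n_slots from a relevance-ranked list, reserving a share for less relevant items.

    ranked: item ids sorted from most to least relevant (hypothetical input).
    explore_share: fraction of slots given to items outside the top ranks
                   (the article reports a range of 10% to 20%).
    """
    rng = rng or random.Random()
    n_explore = round(n_slots * explore_share)
    n_top = n_slots - n_explore
    top = ranked[:n_top]
    tail = ranked[n_top:]
    # Assumption: exploratory items are drawn uniformly at random from the rest of the ranking.
    explore = rng.sample(tail, min(n_explore, len(tail)))
    return top + explore
```

With ten slots and a 20% share, eight slots go to the most relevant items and two to items drawn from further down the ranking. How the deviating items were actually selected and positioned at RTBF is not specified in the material.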

The value of autonomy, encompassing user control over algorithmic recommendations and personalization, encountered significant challenges in its operational implementation. Granular user controls proved technically complex and resource intensive. Additionally, concerns arose regarding the potential for excessive user customization to hinder the algorithm's effectiveness in achieving its intended objectives. Consequently, the pursuit of autonomy was temporarily deferred. This decision was driven by the need to balance user control with algorithmic efficiency.

X: “On top of fighting against clickbait media, we’re not going to make things harder for ourselves as well.”

Furthermore, the organization faced the challenge of avoiding additional complexities that could place it at a competitive disadvantage relative to commercial media platforms.

Despite the temporary deferral of user autonomy, the project teams acknowledged its long-term significance. They recognized the need to investigate mechanisms that could empower users while maintaining algorithmic efficiency. One potential approach involved empowering users to adjust specific parameters of the recommendation engine. This could include altering the weight assigned to factors such as user preferences and thematic relevance. Such granular control would enable users to personalize the algorithm's behavior without compromising the system's overall effectiveness. However, these considerations remained in the realm of future exploration during my fieldwork.
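The idea of letting users adjust the weight of different factors can be sketched as a simple weighted blend of two scores. This is a hypothetical illustration of a mechanism that remained unexplored during the fieldwork: the names `blended_score`, `rank_items`, and `preference_weight`, as well as the assumption that each article carries a preference score and a thematic score, are invented for the example.

```python
def blended_score(preference_score, thematic_score, preference_weight=0.5):
    """Combine two scores with a user-adjustable weight between 0 and 1."""
    if not 0.0 <= preference_weight <= 1.0:
        raise ValueError("preference_weight must be between 0 and 1")
    return (preference_weight * preference_score
            + (1.0 - preference_weight) * thematic_score)


def rank_items(items, preference_weight=0.5):
    """Rank item ids by blended score, highest first.

    items: dict mapping an item id to a (preference_score, thematic_score) pair
           (a hypothetical data layout).
    """
    return sorted(
        items,
        key=lambda item_id: blended_score(*items[item_id], preference_weight),
        reverse=True,
    )
```

Under these assumptions, a weight of 1.0 ranks articles purely by the user's past preferences, while a weight of 0.0 ranks them purely by thematic relevance. Intermediate values trade one off against the other.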

Explainability was also reduced to two dimensions: public explainability and algorithmic control. Public explainability refers to the ability to inform users about the algorithm's functioning and the principles underlying its recommendations. Algorithmic control encompasses the ability to monitor and regulate the algorithm's behavior to prevent it from becoming a black box. This dimension addresses concerns about the algorithm's potential opacity, even to its own developers.

The pursuit of public explainability for the algorithm was quickly abandoned during the development phase. Initial discussions explored the possibility of offering explanations alongside recommendations, such as within the recommendation banner itself. However, this user-centric approach was ultimately discarded in favor of maintaining a clean homepage interface. Consequently, efforts to address public explainability were limited to postimplementation communication strategies (see the following section).

Similarly, the issue of algorithmic control was addressed retrospectively. Article 37 of the new RTBF management contract (RTBF, 2023) mandates that “RTBF shall prepare an annual evaluation report on its recommendation algorithm to assess its effectiveness and address potential biases, aligning with a critical media education approach […]. The report shall be submitted in its entirety to the Standing Committee and the CSA services, who will ensure its confidentiality.”

To fulfil this mandate, RTBF needs to develop metrics to “measure the algorithm's impact on content discoverability, including quantified results on the promotion of information, cultural and educational content, and artists from the Federation.” At the conclusion of my fieldwork, the identification and development of these KPIs based on the algorithm's technical characteristics were still ongoing.

Despite the rhetoric surrounding the importance of user autonomy, positive impacts, and explainability, the practical implementation of these values in the algorithm falls short of expectations. The value of positive impacts is narrowly defined as a mere 10–20% deviation from the best vector calculations, while the other two values have been disregarded.

The limited integration of values has ultimately led to a reframing of the justification for the recommendation algorithm project. The focus shifted from demonstrating how the algorithm itself embodies public service values to emphasizing the public interest nature of the content it recommends. By the end of the fieldwork, one stakeholder confidently asserted:

“Our algorithm is a public service algorithm because we are a public service organization, and our content is of public interest. The problem faced by commercial platforms is that they must filter the content they offer because they do not have control over it […] this is the root of the filter bubbles issue. We can recommend any content through the algorithm because it is always aligned with our editorial guidelines and our public service mission.”

Leveraging public service values for differentiation

While the upfront integration of public service values into the algorithm's design and development may be limited, these values can still exert a significant influence postimplementation across four key domains: public communication, institutional legitimation, internal guidance, and symbolic competition within the PSM landscape. This strategic deployment involves a process of iterative reframing in communicating public service values. The message is tailored to the specific audience being addressed, ensuring that core values resonate effectively with diverse stakeholders.

First, RTBF employed a multifaceted approach to communicate its public service values to audiences regarding its algorithmic development. In this context, an explanatory video demystifying the algorithms’ workings was created (Figure 5).

Figure 5. Screenshot of the explanatory video titled “Public Service Algorithm” created by RTBF (https://auvio.rtbf.be/media/inside-algorithme-de-service-public-2950887).

The organization's vision of a “public service algorithm” is elaborated upon through a dedicated webpage and associated articles (e.g., https://www.rtbf.be/article/comment-la-rtbf-fait-elle-decouvrir-ses-contenus-et-ses-valeurs-a-travers-ses-algorithmes-11087831). These resources demonstrate continuity with the values identified in the Alg-Opinion project. Notably, they introduce a new set of principles emphasizing human-centric values: respect, transparency, diversity, connection, and audacity. This shift in focus suggests the influence of the mediation team, responsible for public relations, in prioritizing clear and accessible communication around the algorithm's functionalities and objectives.

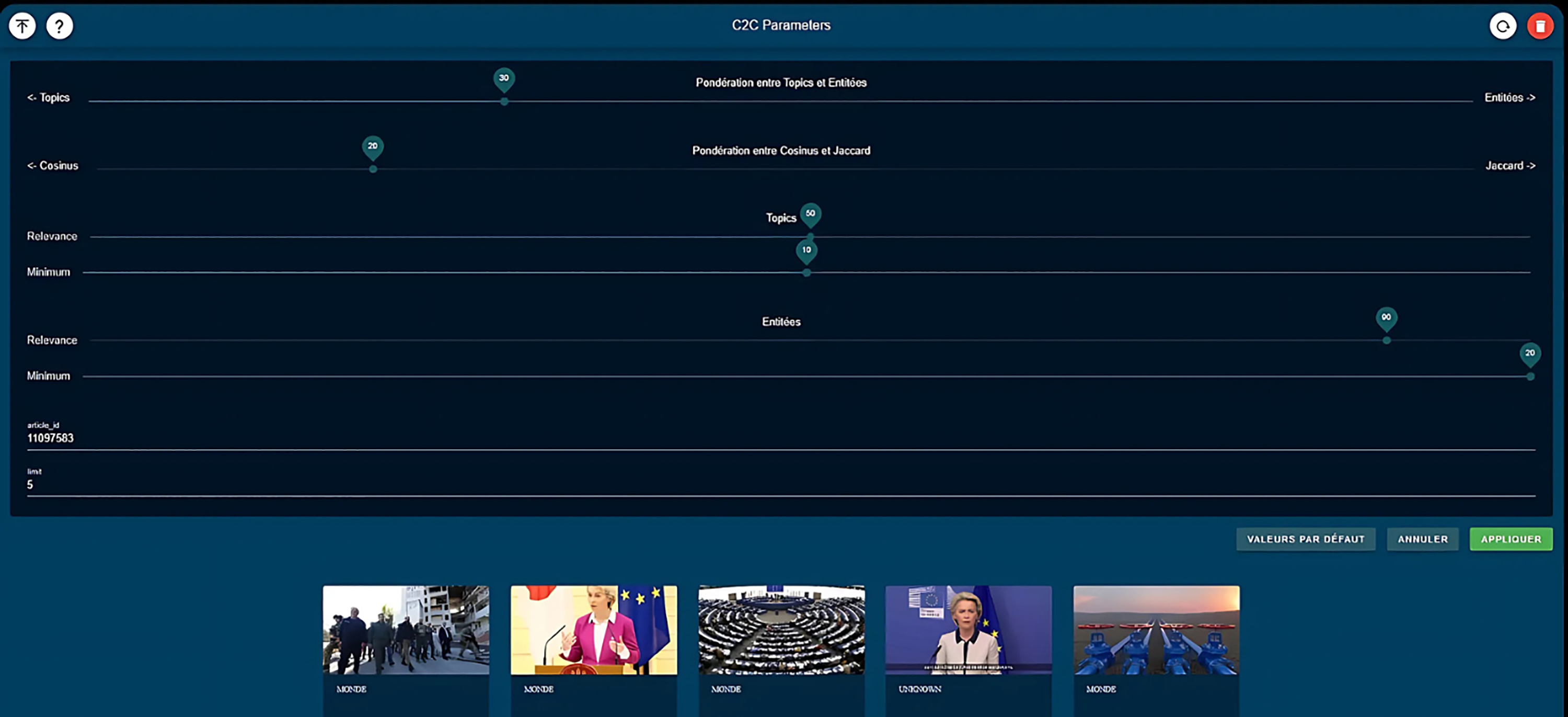

In a complementary effort, RTBF conducted media literacy sessions for visitors within the framework of a media education project (AlgoScope). These sessions aim to empower users with a deeper understanding of how the algorithm works. The AlgoScope tool interface (Figure 6) provides a user-friendly environment for workshop participants to explore and interact with the recommendation system parameters. Notably, the AlgoScope tool originated from user autonomy initiatives developed during the algorithm's development phase. However, these initiatives were ultimately not integrated into the rtbf.be platform.

Figure 6. The interface of the AlgoScope tool allows workshop participants to adjust the parameters of the recommendation system.

Within the RTBF organization itself, the public service dimension served as a tool to reinforce the legitimacy of the personalization processes associated with the development of the recommender system. To effectively communicate the strategy and foster its adoption across the organization, a multi-pronged approach was implemented, as follows: (a) Targeted intranet communication: key messages outlining the strategy were disseminated through targeted posts on the company's intranet. This ensured that all employees were informed about the initiative and its objectives. (b) Internal testing: the AlgoScope tool underwent an internal testing phase, during which it was made available to other teams for evaluation and feedback. By framing these efforts within the context of RTBF's public service mission, the project teams addressed potential concerns among internal stakeholders and gained support for the project. This internal legitimacy was crucial in navigating the complexities of data governance and potential ethical implications.

The public service dimension also played a role in RTBF's interactions with the broader institutional framework. The organization emphasizes its adherence to public service principles to justify its approach, arguing that its use of algorithms aligns with its public service mission. This emphasis on public service values serves to legitimize RTBF's actions in the eyes of policymakers and regulatory bodies.

Interestingly, the public service dimension also manifested in a form of competition among PSM organizations. There was an underlying drive to establish which media outlet was at the forefront of algorithmic innovation while upholding the values of public service. This competition, while not always explicit, fueled the development and adoption of algorithms within the PSM landscape. PSM institutions strived to demonstrate their commitment to technological advancement while maintaining their adherence to public service values. Numerous references to the desire to “do better” than the BBC, which is held up as a model for other actors, along with exchanges in joint projects within the EBU, where actors continuously compare themselves to other PSM, bear witness to this. The significance of this competition was further underscored when, following my fieldwork, the EBU hosted an “algorithmic recommendation” challenge, which was ultimately won by the RTBF teams (Figure 7).

Figure 7. A LinkedIn post by RTBF congratulating the data team for winning the “Algorithmic Recommendation Challenge” (“Our teams have won the prestigious EBU Algorithmic Recommendation Challenge. This award underscores our ambition to develop a high-quality, personalized relationship with our audiences”).

The cumulative evidence presented highlights a transformation in the realization of public service values, marked by a notable shift from an emphasis on technical characteristics to a focus on the communicative and symbolic dimensions of public service values. This shift is exemplified by RTBF's strategic utilization of public service values to effectively communicate, legitimize, and differentiate its algorithmic development endeavors from those of its competitors.

Discussion and conclusion

Understanding public service values as a construct

PSM are undergoing a significant transformation characterized by the increasing adoption of algorithmic personalization processes, often involving recommender systems. Given their unique role in the media landscape, understanding the role of public service values within these algorithmic frameworks is crucial (Fields et al., 2018; Van den Bulck & Moe, 2018).

The case of the RTBF reveals that public service values evolve dynamically throughout the development of recommendation systems, undergoing a series of translations that shape their final form within the algorithm. This contrasts with the traditional approach of abstractly defining values and then applying them to specific cases (Raboy, 1996). By decontextualizing values, previous research has often overlooked their fluid nature and their dependence on specific contexts. This can create a misleading impression of values as static and universally applicable principles in any context.

The study suggests that public service values are not merely applied to algorithmic systems but are actively transformed through the process of translation. Previous studies have shown that, because values are abstract, conflicts of interpretation may arise between different actors and contexts (Hildén, 2022; Møller, 2022b; Sørensen, 2020). However, the mechanisms that lead certain interpretations to dominate and influence system design remain understudied.

Science and Technology Studies (STS), particularly the sociology of translation, offer valuable insights into this dynamic process. By demonstrating the non-deterministic nature of innovation, STS helps to challenge the notion of fixed and predetermined values. While the case of the RTBF is unique, these value translation processes are intrinsic to any innovation project in PSM, as translations are at the core of such endeavors (Callon et al., 1999). Hansen and Hartley (2021) support this perspective using assemblage theory to analyze similar dynamics in the development of another recommender system.

Furthermore, the study shows how certain actants, both human and non-human, exert a more significant influence than others on the translation of values. While most studies focus on journalistic values (Bastian et al., 2021) and highlight the role of journalists (Bodó, 2019; Schapals & Porlezza, 2020), the case of the RTBF reveals that journalists are less involved than one might think and that journalistic values are not prioritized. The emphasis is rather on the values of the PSM as an institution. This is because journalists constitute only a fraction of the constellation of actors involved in developing recommender systems.

Indeed, other actors, such as engineers, designers, or decision-makers (Smets et al., 2022), exert significant influence in these translation processes. This transfer of autonomy from the newsroom to technical profiles leads to tensions between editorial considerations and algorithmic decisions, as highlighted by Bodó (2019), Weber and Kosterich (2017), and Møller (2022a). Values are thus confronted with technical and organizational logics that can profoundly transform them. Consequently, future research might broaden its scope beyond the newsroom, adopting a more holistic view of the organization and considering the multitude of actors involved in personalization processes within PSM.

Focusing on algorithmic design

Despite the growing body of research on PSM and algorithms, there is a lack of empirical studies that explore the specific ways in which values are translated and integrated into news recommender systems. In conclusion to their literature review, Mitova et al. state that “transparency, accountability, and explicability of the employed algorithms have also mostly been discussed theoretically […] However, research has yet to empirically investigate the extent to which these considerations are present in current NRS design […]” (2022, p. 99).

I argue that these limitations largely stem from methodological shortcomings. Much of the research on personalization processes relies heavily on interviews rather than fieldwork. While interviews are valuable for understanding the perspectives of key actors, they fall short in capturing how the public service context influences the development of recommender systems. To truly understand the reality of personalization processes, we must go beyond actors’ representations and step into the “design room,” as described by Hansen and Hartley (2021). This approach also aligns with Jaton's (2019) assertion that an ethnographic perspective in the “laboratory of algorithms” is essential for understanding their design and impact.

The case underscores the significant challenges in integrating values into the design of a news recommender system. Here, these challenges stem from a combination of arbitrary decisions, technical constraints, and organizational limitations. As a result, these values have little influence on the algorithm's design and often fail to translate into concrete functionalities. This observation aligns with the concept of media convergence, further emphasized by Tim Raats and Tom Evens’ (2021) quote from their study on the VRT: “If you can't beat them, be them.”

Given this limited integration, we also observe that the public service nature of the recommender system ultimately reveals itself through the content it recommends. In practice, this means that the recommender system's adherence to public service values is often reflected in the nature and diversity of content it surfaces for users, rather than through explicit mechanisms embedded in the system's design.

This observation aligns with Sørensen's analysis, where he notes that “most interviewees have an editorial understanding of diversity, arguing that diversity is ensured by the global set of items (videos) available in the system” (2019, p. 3). Essentially, Sørensen points out that the understanding of diversity within these systems is often limited to the idea that having a broad and varied collection of content inherently guarantees diversity. However, this approach is influenced by the constraints faced by PSM, such as budget limitations, technical challenges, and operational pressures. These constraints often lead to a reluctance to introduce additional complexities into recommendation systems, for fear of compromising the ability of PSM to provide competitive services.

Public service values beyond technological boundaries

The RTBF case study highlights a compelling aspect of how public service values are strategically utilized for communication and legitimacy. Although these values are not incorporated into the technical aspects of recommender systems, they are repurposed within their symbolic and interactional dimensions to set RTBF apart from its competitors.

This suggests that viewing public service values as a form of capital—across political, media, and other public service domains—provides a valuable perspective. This perspective is consistent with Pierre Bourdieu's (1980) framework of social fields and capital, where capital refers to various resources that individuals or groups leverage to gain influence within specific fields (Bourdieu, 1976). In the PSM context, adherence to public service values can be interpreted as a form of capital that enhances legitimacy, credibility, and trust among audiences, stakeholders, and other media actors.

Thus, the study reveals a complex interplay of “isomorphism” (Powell & DiMaggio, 1991) and differentiation, as further explored by Blassnig et al. (2024). For actors, adopting recommender systems is seen as crucial for maintaining competitiveness in the evolving media landscape. The organization aligns with this need by emulating strategies from other media outlets, reflecting a trend toward isomorphism where similar practices are adopted across the industry to remain relevant and competitive. However, RTBF also distinguishes itself through a deliberate emphasis on its unique values and its concerns about the potential risks associated with these systems. Therefore, although values play a limited role in the design of the recommender system, they continue to serve as a guiding principle and an ideal to be achieved.

In conclusion, while the importance of public service values is widely recognized, a significant performance gap remains in their operationalization within recommender systems. This issue raises a critical question: Can a recommender system be legitimately deemed a public service algorithm? If so, what technical functionalities are necessary to effectively bridge this divide and integrate the ethos of public service into the algorithmic framework?

The study highlights this challenge but is not without its limitations. Primarily, it is based on a single case study without a comparative dimension, which complicates the generalization of its findings to other PSM. Although the process of identifying and translating public service values is likely a common endeavor across PSM, the outcome of this process is heavily influenced by the specific organizational context. Furthermore, the constraints faced by PSM vary according to their unique settings and circumstances (Van den Bulck & Moe, 2018), adding another layer of complexity.

Future research should aim to address this gap by empirically exploring strategies employed by different PSM organizations to integrate public service values into recommender systems. Additionally, exploring the development of robust evaluation metrics that assess the algorithm's impact on user behavior and its alignment with public service objectives would be valuable. Such research could help refine our understanding of how to effectively incorporate public service values into algorithmic design and contribute to the broader goal of ensuring that recommender systems uphold and reflect the principles of public service.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethical statement

All data were collected, stored, and processed in strict adherence to the General Data Protection Regulation (GDPR). Written or verbal informed consent was obtained from all participants prior to data collection during the interviews. All identifying information has been removed from the data to ensure the anonymity of all participants.