Abstract

Micro-targeting, the practice of tailoring messages to an individual based on information derived from their digital footprint, has become prevalent in digital spaces. This communicative approach often centers on personality, the rationale being that individuals respond to messages differently depending on their personality type. Research has examined whether machine learning methods can improve the detection of personality types, but little analysis has focused on whether large language models (LLMs) enhance this technique even further. This study therefore examines the ability of GPT-3.5 and GPT-4, two of the models underpinning OpenAI's ChatGPT, to classify personality type. Using a publicly available dataset collected through the “PersonalityCafe” forum, this paper found that GPT-3.5 and GPT-4 were able to perfectly classify the personality type of 73% and 76% of the sample, respectively, simply by analyzing an individual's 50 most recent tweets, a level that is significantly better than random guessing and other machine learning models. This has important implications for communication scholarship because if LLMs enable actors to detect personality types more accurately, micro-targeting could become even more powerful and widespread. There is also a possibility of misuse by bad actors and of privacy threats, so understanding these mechanisms is crucial for building safe artificial intelligence systems and safeguards against such misuse.

Introduction

In digital spaces, a considerable amount of communication is “micro-targeted,” meaning that actors focus on “recipients’ personal vulnerabilities by tailoring different messages for the same thing, such as a product or political candidate” (Lorenz-Spreen et al., 2021). With the increase in the volume of online information about individuals who exist on social media sites, there has been a concomitant growth in the practice of micro-targeting (Kosinski et al., 2013).

Personality tends to occupy a central position in micro-targeting, as actors often design their messages around the recipient's presumed personality type in order to heighten their resonance (Decker & Krämer, 2023). Consider the widely publicized and controversial case of Cambridge Analytica (see The Guardian, 2018). The British consulting company collected data from personality quizzes to build psychometric profiles of individuals, which were then used in a variety of sensitive contexts, including “to interfere with people's political behavior such as voting” (Pashentsev, 2023). Explaining the company's approach to online communication, former Chief Executive Officer of Cambridge Analytica Alexander Nix stated that when attempting to convince people to respect the Second Amendment, an individual who was high in Neuroticism would be shown an image of a “scary burglary” to tap into their predisposition to react to fear-based messaging (Van Der Linden, 2023). Conversely, for someone high in Agreeableness, the same message would instead show a father teaching his son how to use a firearm, as this would appeal to the individual's tendency to respect “tradition and family” (Van Der Linden, 2023).

Micro-targeting has also penetrated other domains, from how chatbots interact with customers to potentially targeting people who are psychologically predisposed to gambling (Herzig et al., 2017; MacLaren et al., 2011). Major organizations have adopted this practice in their communication with individuals, too; companies such as Meltwater have built systems that tailor messages to the specific characteristics of the recipient (Meltwater, 2019). Disinformation campaigns may also experience more success if they target people based on their personality type. This is because people with certain personality traits have been shown to be more susceptible to disinformation (Barman & Conlan, 2021). While recent academic work outlines how large language models (LLMs) may drastically improve “influence operations,” LLMs might also enhance personality detection, which, in turn, may improve these types of operations even further (Goldstein et al., 2023). There are also major privacy concerns associated with micro-targeting. Borgesius et al. (2018) contend that the collection of personal data, and the subsequent misuse that can arise from this through micro-targeting, has “chilling effects.” This is exacerbated by the fact that often people do not even realize that the data trail they leave in digital spaces is being used against them in this way (Jansen & Krämer, 2023).

Research has shown that technological improvements have made it easier to glean insights about individuals, which has, in turn, amplified the ability of actors to micro-target messages (Choong & Varathan, 2021; Lambiotte & Kosinski, 2014). However, much less attention has been paid to whether LLMs, such as the models that underpin OpenAI's ChatGPT, can perform this task more effectively. Therefore, this study seeks to determine whether new, state-of-the-art artificial intelligence (AI) systems can increase the accuracy of personality prediction. It does this by using two of OpenAI's models, GPT-3.5 and GPT-4, to predict the personality traits of individuals along the Myers-Briggs Type Indicator (MBTI), based on their 50 most recent tweets, using a publicly available dataset collected through “PersonalityCafe.” Recent research, such as Cao & Kosinski (2024), has examined how effectively LLMs can estimate public perceptions of the personalities of public figures. Additionally, Rao et al. (2023) measured the accuracy of these models in assessing personality types. However, there has been limited study of the direct prediction of personality types using LLMs.

This paper finds that GPT-3.5 and GPT-4 perfectly classified 73% and 76% of the sample's personality type along the MBTI, respectively. This is significantly better than random guessing and other machine learning (ML) models. The improved ability to predict personality type has three particularly important implications for communication theory. First, by increasing the accuracy of personality prediction, the advent of LLMs might make the communication style of micro-targeting even more powerful than it currently is. Second, because these models are widely available and cheap, it is likely that this communication style will become more prevalent in digital spaces. Lastly, the fact that micro-targeting can be used by bad actors, such as in disinformation campaigns, underscores the importance of this area of scholarship. It is only by investigating the misuse of LLMs and micro-targeting that we can develop better safeguards and regulatory frameworks to protect those on the receiving end of these messages. As OpenAI's own “Safety & Alignment” team puts it:

“We take lessons learned from these actors’ abuse and use them to inform our iterative approach to safety. Understanding how the most sophisticated malicious actors seek to use our systems for harm gives us a signal into practices that may become more widespread in the future, and allows us to continuously evolve our safeguards.” (OpenAI, 2024)

Background

Personality traits are “patterns of thought, emotion, and behavior that are relatively consistent over time and across situations” (Funder, 2012). Since personality contains important information about how individuals are likely to behave, personality type detection has use cases across many domains, including job screenings, recommendation systems, and counseling (Mehta et al., 2020).

The MBTI is one of the most popular personality indicators and will be used in this study. It categorizes people into 16 distinct personality types along four dimensions: Introversion (I)–Extroversion (E); Intuition (N)–Sensing (S); Thinking (T)–Feeling (F); Judging (J)–Perceiving (P). It is usually administered through a self-report questionnaire which consists of a series of statements that people agree/disagree with along a spectrum (e.g., “you regularly make new friends”). After completing the questionnaire, individuals are assigned a four-letter code that represents their traits across the four dimensions.

While the MBTI is well-known and has intuitive appeal, it is important to note that its reliability and validity as a measure of personality and predictor of actual behavior have been subject to criticism in the literature (Pittenger, 2005; Stein & Swan, 2019). One important reason for the question over its reliability is that individuals sometimes get different results when they retake the test (Pittenger, 2005). Nevertheless, this study opts to use a dataset that includes traits from the MBTI because it continues to be used as a decision-making tool in a wide range of important contexts. As per the Myers-Briggs Company, the MBTI is “[u]sed by more than 88 percent of Fortune 500 companies in 115 countries” and “most often used by organizational development professionals, coaches, and consultants, as well as by counselors and educators” (The Myers-Briggs Company, 2024a, 2024b). Put a different way: regardless of its robustness and construct validity (Thompson & Borrello, 1986), the MBTI still has serious implications across many domains because it continues to be used in communication in digital spaces. If micro-targeting is strongly informed by the MBTI, it follows that the framework warrants analysis, despite its questionable reliability, by virtue of its prevalent use across social media platforms.

There have been attempts to use AI systems to predict personality traits using different personality indicators, such as the “Big Five.” For example, Pratama and Sarno (2015) use K-nearest neighbors and support vector machine methods to classify people's personality types. Moreover, Kosinski et al. (2015) used ML methods, specifically least absolute shrinkage and selection operator (LASSO) linear regressions, to make judgments about individuals' personality traits based on their Facebook Likes. They found that “Computer-based judgments (r = 0.56) correlate more strongly with participants’ self-rating than average human judgments do (r = 0.49).” Their model significantly outperformed the target individuals’ work colleagues (r = 0.27), achieving accuracy rates comparable to those of the targets’ spouses (r = 0.58). It is worth noting that there is a significant overlap between the Big Five model and MBTI, which adds some academic weight to the latter (Furnham, 1996). Furnham (1996) found that Agreeableness (Big Five personality trait) is correlated with Thinking-Feeling (MBTI personality trait), Conscientiousness with Thinking-Feeling and Judging-Perceiving, Extraversion with Extraversion-Introversion, and Openness with all four MBTI traits.

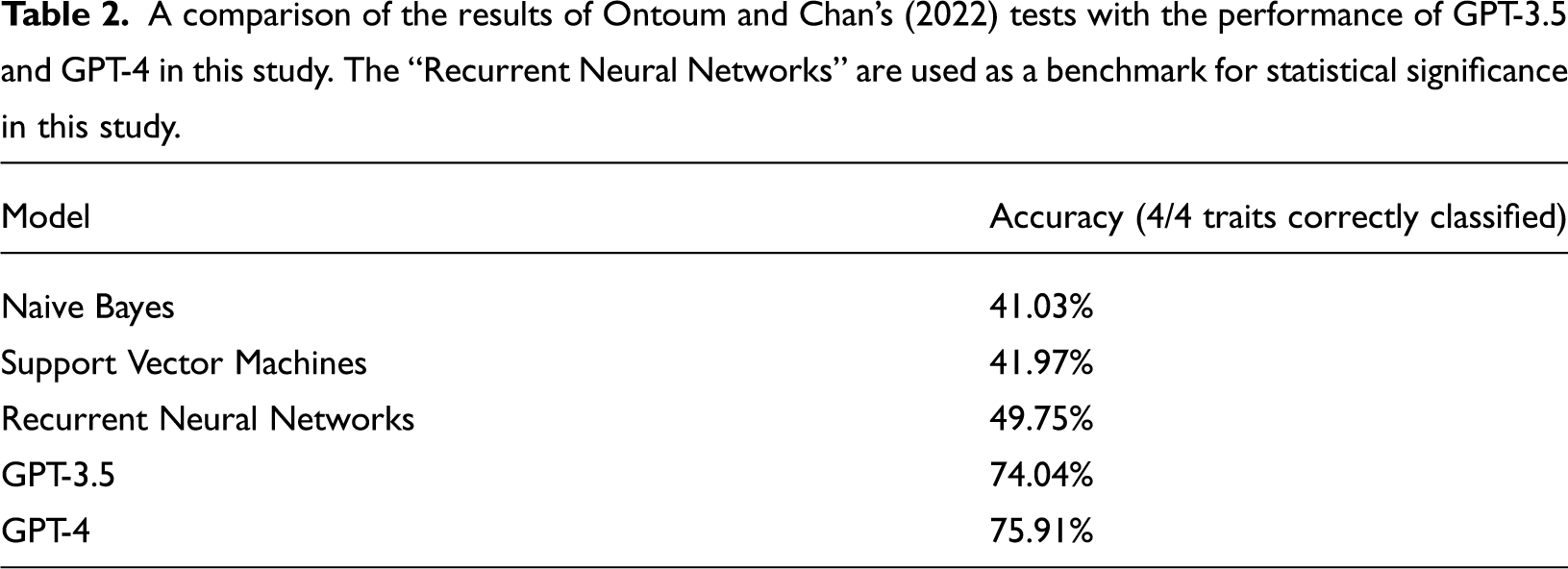

Researchers have also designed models to predict personality type utilizing the same MBTI dataset that is used in this study. This is an additional advantage of using the MBTI as the main personality framework in this study: since other researchers have tested whether other technologies are able to predict traits along the MBTI, it enables a valuable comparison of the strength of LLMs vis-a-vis older ML methods. Hernandez and Knight (2017), for example, built a neural network to sort people into their MBTI personality types based on text samples from social media posts. They found limited success, though, because their model “struggled to accurately predict the binary class for each MBTI personality dimension,” and instead only tended to pick up on some of the dimensions (Hernandez & Knight, 2017). Similarly, Ontoum and Chan (2022) measured the ability of several ML algorithms to classify the personality types of individuals in the dataset, including naive Bayes, support vector machines, and recurrent neural networks (RNNs). The RNN was the most performant, classifying 49.75% of the individuals correctly.

Method

To investigate whether the GPT models accurately predict personality type, this paper uses the publicly available “(MBTI) Myers-Briggs Personality Type Dataset” from Kaggle, which was collected through the “PersonalityCafe forum” (Kaggle, 2017). The PersonalityCafe forum is an online community focused on personality development, and the dataset was collected by asking users to take an MBTI questionnaire and then analyzing their recent tweets. The dataset contains more than 8600 rows of data, where each row relates to a different person. One column contains the individual's personality type (the four-letter MBTI code), and another contains that same individual's last 50 tweets. Due to the computational costs of analyzing 50 tweets for each of the 8600 individuals (which equates to 430,000 data points), this experiment opted to feed only 3399 rows of data into GPT-3.5. Because GPT-4 was released toward the end of this experiment and its API costs were significantly higher at the time, only 2001 rows of data were fed into this model.

To verify that there were no statistically significant distributional differences in personality types between the full dataset and these two subsets, chi-squared tests were conducted on the proportions of personality type distributions. The difference between the full sample and the GPT-3.5 and GPT-4 subsets had P-values of 0.6798 and 0.9390, respectively. This suggests that the distribution of personality types is consistent with the overall distribution and that there is no evidence of sampling bias. Similarly, there was no evidence of bias between the two subsets, since a chi-squared test found a P-value of 1.0 for this.
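The subset check described above can be illustrated with a chi-squared goodness-of-fit test. The following is a minimal sketch, not the author's notebook code: it assumes the subset's type counts are compared against counts expected from the full dataset's proportions, the function names `chi2_sf` and `chi2_goodness_of_fit` are hypothetical, and the counts in the usage example are made up purely for illustration.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability of a chi-squared variable with integer df.

    Uses the recurrence Q(a + 1, h) = Q(a, h) + h**a * exp(-h) / Gamma(a + 1)
    for the regularized upper incomplete gamma function, with h = x / 2.
    """
    if x <= 0:
        return 1.0
    h = x / 2.0
    if df % 2 == 0:
        a, q = 1.0, math.exp(-h)               # Q(1, h) = exp(-h)
    else:
        a, q = 0.5, math.erfc(math.sqrt(h))    # Q(1/2, h) = erfc(sqrt(h))
    while a < df / 2.0:
        q += math.exp(a * math.log(h) - h - math.lgamma(a + 1.0))
        a += 1.0
    return min(q, 1.0)


def chi2_goodness_of_fit(subset_counts, full_counts):
    """Compare subset type counts with those expected from the full data."""
    n_subset = sum(subset_counts.values())
    n_full = sum(full_counts.values())
    statistic = 0.0
    for mbti_type, full_count in full_counts.items():
        expected = n_subset * full_count / n_full
        observed = subset_counts.get(mbti_type, 0)
        statistic += (observed - expected) ** 2 / expected
    df = len(full_counts) - 1
    return statistic, chi2_sf(statistic, df)


# Hypothetical counts for four types only (not taken from the dataset).
full = {"INFP": 400, "INTP": 300, "ENFP": 200, "ESTJ": 100}
subset = {"INFP": 41, "INTP": 29, "ENFP": 21, "ESTJ": 9}
statistic, p_value = chi2_goodness_of_fit(subset, full)
print(f"chi2 = {statistic:.4f}, p = {p_value:.4f}")
```

A large P-value, as in the values reported above, indicates no evidence that the subset's type distribution departs from that of the full dataset. With all 16 MBTI types the same function would use 15 degrees of freedom.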

For each set of tweets, the models were asked to predict what personality type this person is most likely to be. The predictions were then compared to the actual personality type that they received from the MBTI test. If the model classified each of the four letters correctly, this was counted as “perfect”; and if the model classified three out of four letters correctly, this was viewed as a “partial” match. If the model predicted fewer than three of the letters correctly, this was not counted as a match.
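The scoring rule above can be expressed compactly. This is a minimal sketch of that rule; the function names are hypothetical and are not taken from the supplemental notebook.

```python
def count_matching_letters(predicted, actual):
    """Count positions where the two four-letter MBTI codes agree."""
    predicted, actual = predicted.strip().upper(), actual.strip().upper()
    if len(predicted) != 4 or len(actual) != 4:
        raise ValueError("MBTI codes must have exactly four letters")
    return sum(p == a for p, a in zip(predicted, actual))


def score_prediction(predicted, actual):
    """Label a prediction as 'perfect' (4/4), 'partial' (3/4), or 'none'."""
    matches = count_matching_letters(predicted, actual)
    if matches == 4:
        return "perfect"
    if matches == 3:
        return "partial"
    return "none"


print(score_prediction("INFP", "INFP"))  # all four letters agree
print(score_prediction("INFJ", "INFP"))  # only the last letter differs
print(score_prediction("ESTJ", "INFP"))  # no letters agree
```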

Since the dataset was released in 2017, a natural question is whether GPT-3.5/4 was trained on the contents of the dataset and is therefore simply regurgitating information it learned during training. While it is not wholly clear what went into the training data for these models, it seems unlikely that they are directly retrieving the personality types in the MBTI dataset. This is because these models have been designed to “calculate the generative likelihood of word sequences,” not to directly fetch information related to a query as information retrieval systems do (despite recent attempts to bring the two together, such as New Bing) (Ding et al., 2023). The fact that the models did not perform with perfect accuracy all the time lends further support to this, as does the better performance of GPT-4 compared to GPT-3.5 (if both were simply extracting information from the dataset, they would both achieve the same perfect level of accuracy).

It is also worth highlighting that, whereas Amirhosseini and Kazemian (2020) removed any mention of MBTI type from their data to eliminate a potential source of bias, no such pre-processing was conducted in this study. Their rationale was that the model may be influenced by certain personality traits being mentioned in the tweets. While this may be a factor, the pre-processing analysis in this study showed that out of the 3399 rows that GPT-3.5 analyzed, under 4% of people mentioned their own personality type in their tweets. As such, the strong performance of GPT-3.5/4 cannot be attributed to the model relying on people explicitly stating what personality type they are in their tweets.
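One way to estimate how often people mention their own type is a simple pattern match over each row. The sketch below is an assumption about how such a check could be done, since the exact procedure is not described here; the helper names and sample rows are hypothetical. Note that matching the type string is only a proxy, because a person may mention a type without declaring it as their own.

```python
import re


def mentions_own_type(text, own_type):
    """Return True if the text contains the person's own MBTI code.

    The match is case-insensitive, requires word boundaries, and allows
    a trailing plural "s" (for example "INFPs").
    """
    pattern = re.compile(r"\b" + re.escape(own_type) + r"s?\b", re.IGNORECASE)
    return bool(pattern.search(text))


def self_mention_rate(rows):
    """Share of (own_type, text) rows in which the own type is mentioned."""
    if not rows:
        return 0.0
    hits = sum(mentions_own_type(text, own_type) for own_type, text in rows)
    return hits / len(rows)


# Hypothetical rows for illustration only.
sample_rows = [
    ("INFP", "As an INFP I love rainy days"),
    ("INTJ", "My ENFP friend is always late"),
]
print(self_mention_rate(sample_rows))
```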

OpenAI has multiple models accessible through its API, of which “gpt-3.5-turbo” and “gpt-4” were the most appropriate for this investigation.

For this study, the following prompt was used:

“Predict what this person's personality type is (along the Myers-Briggs Personality Type scale) based solely on their last 50 tweets. In your response, provide only the acronym (e.g. ISFJ) of the personality type you think the person is. Last 50 Tweets: {the user's tweets} Personality type:”


The output was then compared with the actual personality type of each individual.
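The prompting and comparison steps can be sketched as follows. This is an illustrative outline under stated assumptions rather than the code used in the study: the line breaks in the template and the newline separator between tweets are assumptions, and `complete` is a hypothetical stand-in for whatever function sends a prompt to the model and returns its text reply, so no particular API client is implied.

```python
import re
from typing import Callable, List, Optional

# Prompt wording as given above; the line breaks are an assumption.
PROMPT_TEMPLATE = (
    "Predict what this person's personality type is (along the Myers-Briggs "
    "Personality Type scale) based solely on their last 50 tweets. In your "
    "response, provide only the acronym (e.g. ISFJ) of the personality type "
    "you think the person is.\n"
    "Last 50 Tweets: {tweets}\n"
    "Personality type:"
)

MBTI_PATTERN = re.compile(r"\b[EI][NS][TF][JP]\b")


def build_prompt(tweets: List[str]) -> str:
    """Fill the template with one person's tweets, one per line."""
    return PROMPT_TEMPLATE.format(tweets="\n".join(tweets))


def parse_prediction(reply: str) -> Optional[str]:
    """Extract the first valid four-letter MBTI code from a model reply."""
    match = MBTI_PATTERN.search(reply.upper())
    return match.group(0) if match else None


def classify(tweets: List[str], complete: Callable[[str], str]) -> Optional[str]:
    """Build the prompt, query the model via `complete`, parse the reply."""
    return parse_prediction(complete(build_prompt(tweets)))


# Usage with a fake model that always answers "infp".
def fake_model(prompt: str) -> str:
    return " infp\n"


print(classify(["first tweet", "second tweet"], fake_model))
```

Passing the model call in as a function keeps the prompt construction and parsing testable without network access. A reply that contains no valid code yields `None`, which can be counted as a non-match.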

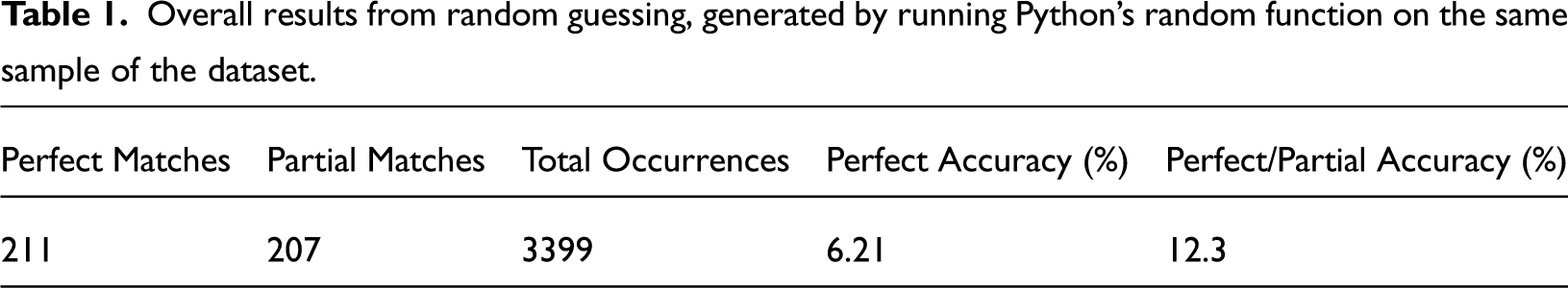

To evaluate the accuracy of OpenAI's models, the models’ performance was compared with two benchmarks. The first was random guessing. A random guess was run on the 3399 rows used in this study (Table 1), and this resulted in a perfect and partial accuracy rate of 6.21% and 12.30%, respectively.

Overall results from random guessing, generated by running Python’s random function on the same sample of the dataset.
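A random-guessing baseline of this kind can be sketched as below. The sampling scheme is an assumption: the sketch draws each guess uniformly from the 16 MBTI types with Python's `random` module, which may differ from the procedure behind Table 1, so its rates need not reproduce the reported figures. The function name and the sample labels are hypothetical.

```python
import random
from itertools import product

# All 16 four-letter MBTI codes.
MBTI_TYPES = ["".join(letters) for letters in product("EI", "NS", "TF", "JP")]


def random_baseline(actual_types, seed=0):
    """Guess a type uniformly at random for each person.

    Returns (perfect_rate, partial_rate), where a perfect match has all
    four letters correct and a partial match has exactly three.
    """
    rng = random.Random(seed)  # seeded so the run is reproducible
    perfect = partial = 0
    for actual in actual_types:
        guess = rng.choice(MBTI_TYPES)
        matches = sum(g == a for g, a in zip(guess, actual))
        if matches == 4:
            perfect += 1
        elif matches == 3:
            partial += 1
    n = len(actual_types)
    return perfect / n, partial / n


# Hypothetical labels for illustration only.
labels = ["INFP", "INTJ", "ENFP", "ISTP"] * 250
perfect_rate, partial_rate = random_baseline(labels)
print(f"perfect: {perfect_rate:.2%}, partial: {partial_rate:.2%}")
```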

The results of Ontoum and Chan’s (2022) RNNs were then used as the second benchmark (Table 2), which had an accuracy of 49.75% (shown below, along with the performance of the other models). This is because it was run on the same dataset as the one in this study, and was the most performant of the models they tested. The tests for statistical significance were conducted on proportional (not absolute) outcomes of the models, making it possible to compare the results from this analysis (which analyzed a subset of the data) to the results from the performance of this RNN (which investigated the whole dataset as it was more computationally feasible).

A comparison of the results of Ontoum and Chan’s (2022) tests with the performance of GPT-3.5 and GPT-4 in this study. The “Recurrent Neural Networks” are used as a benchmark for statistical significance in this study.

Results

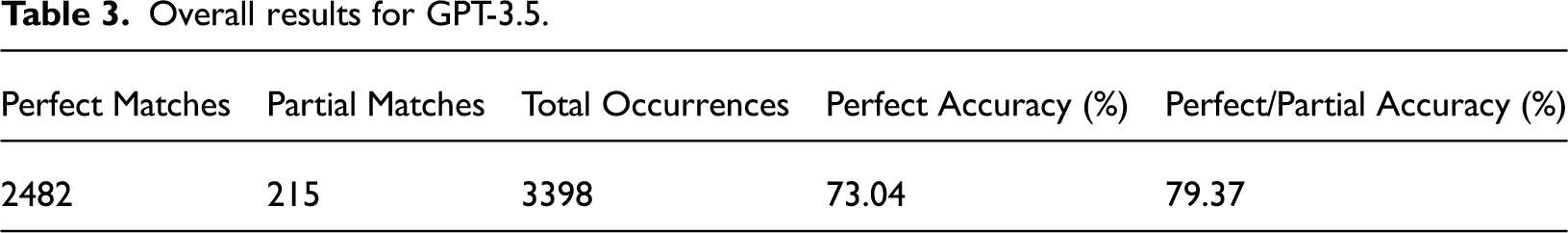

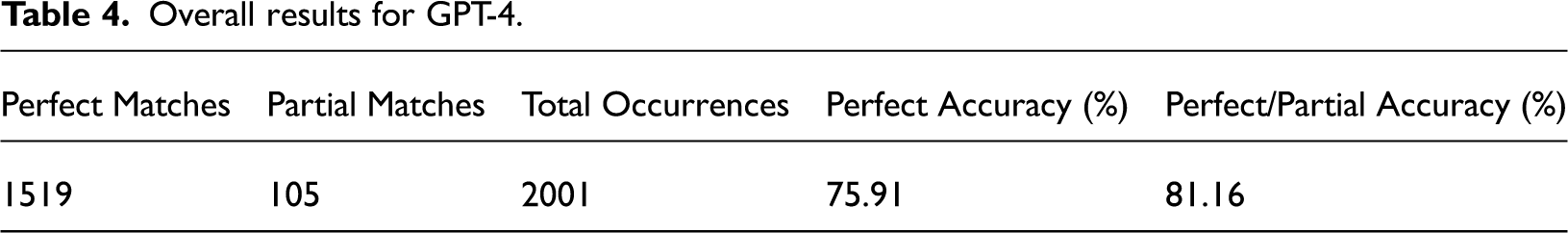

Both GPT-3.5 and GPT-4 performed remarkably well at this task (Tables 3 to 4): they were able to predict 79.37% and 81.16% of individuals' personality types either perfectly (4 out of 4 personality traits correct) or almost perfectly (3 out of 4 personality traits correct), respectively.

Overall results for GPT-3.5.

Overall results for GPT-4.

For GPT-4, out of the 2001 individuals analyzed it perfectly matched 1519 of them (75.91%), and partially matched a further 105 (5.25%). For GPT-3.5, 3399 individuals were analyzed, and of these 2482 were perfect matches (73.04%) and 215 were partial matches (6.33%).
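The rates follow directly from the match counts. As a small worked check, the sketch below recomputes the GPT-4 percentages from the counts reported above; the function name is hypothetical.

```python
def match_rates(perfect, partial, total):
    """Return (perfect %, partial %, combined %) rounded to two decimals."""
    return tuple(
        round(100 * count / total, 2)
        for count in (perfect, partial, perfect + partial)
    )


# GPT-4 counts from the text: 1519 perfect and 105 partial out of 2001.
gpt4_rates = match_rates(1519, 105, 2001)
print(gpt4_rates)
```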

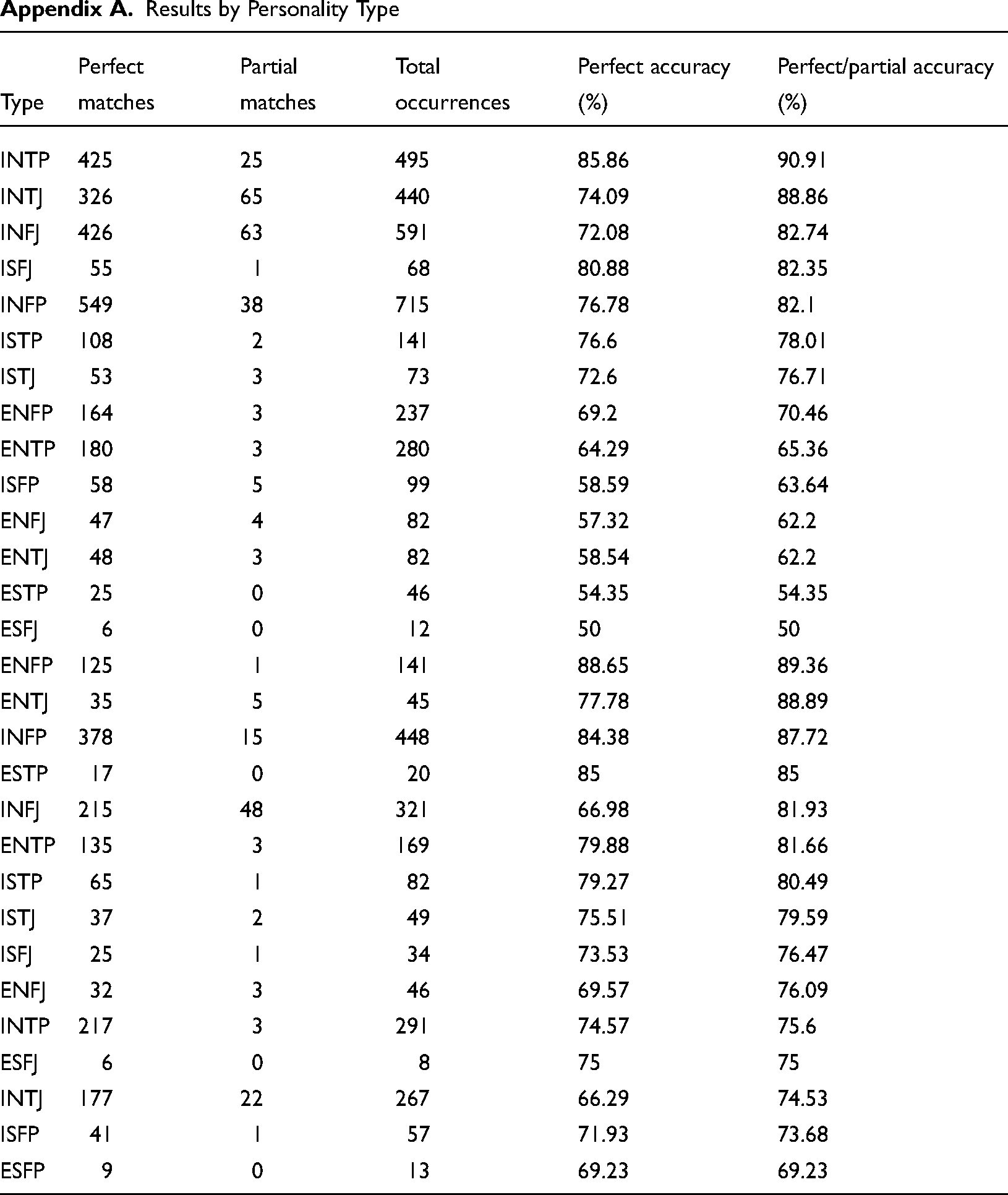

GPT-4 was most performant for those with “ENFP,” “ENTJ,” and “INFP” personality types, and least accurate for people who were “ESFP,” “ISFP,” and “INTJ.” Conversely, GPT-3.5 performed most accurately for people with “INTP,” “INTJ,” and “INFJ” personality types, and least accurately on “ESFP,” “ESTJ,” and “ESFJ” (see Appendix A in the Supplemental Material).

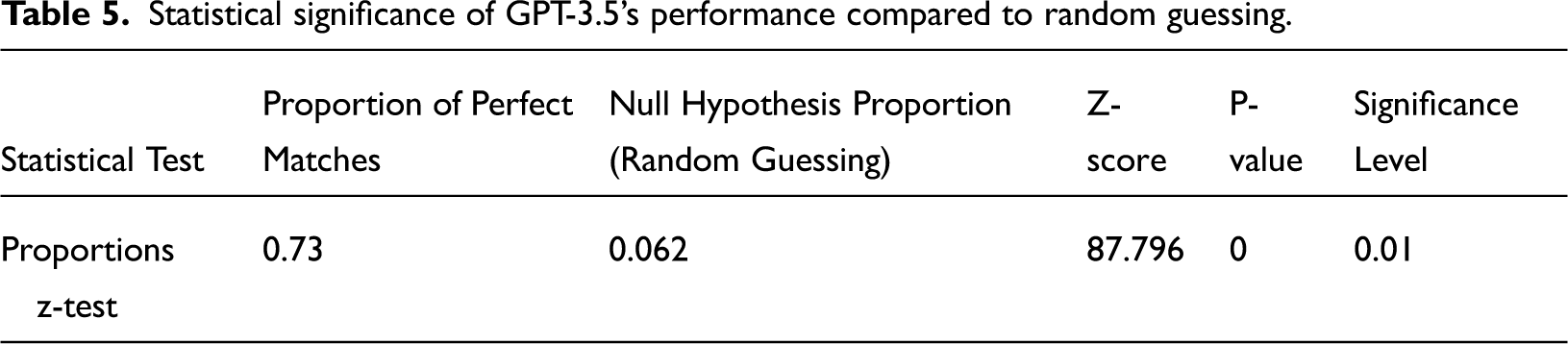

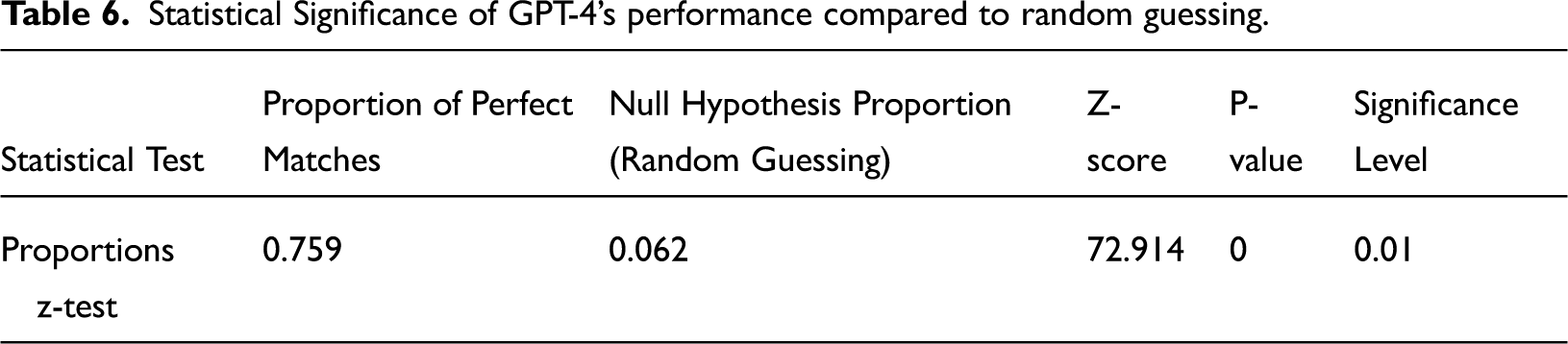

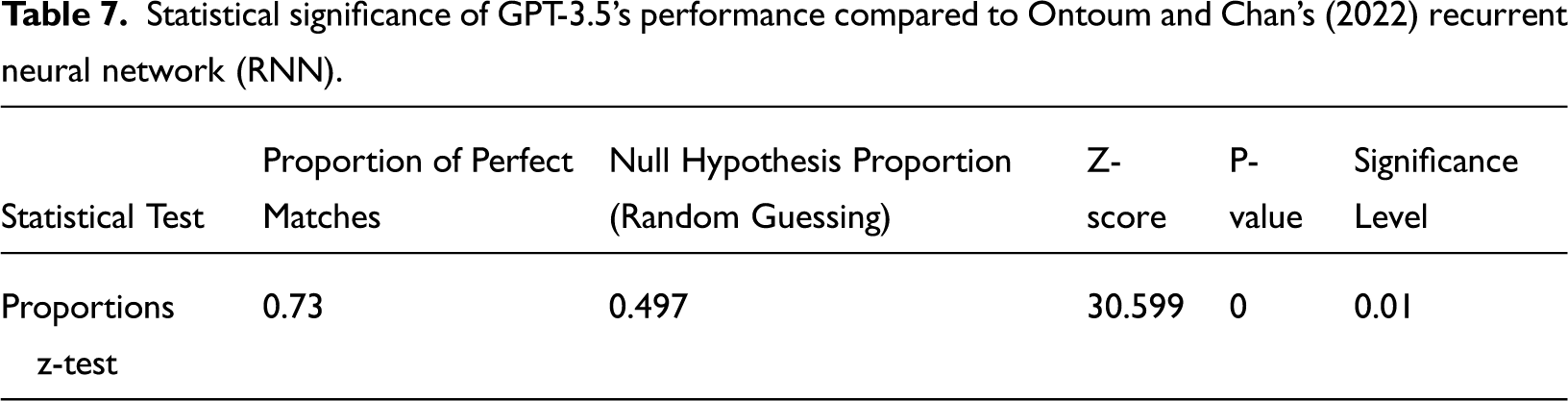

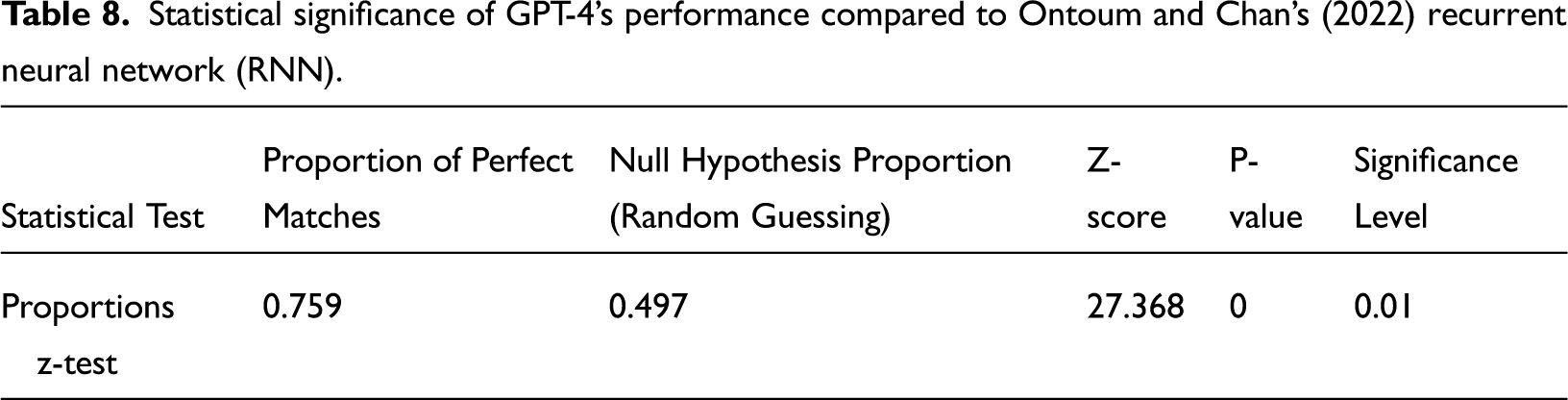

Using a significance level of 0.01, there is sufficient evidence to reject the null hypothesis of this study, namely that GPT-4 and GPT-3.5 will be unable to predict an individual's personality type more accurately than either random guessing or an RNN (Tables 6 to 8).

Statistical significance of GPT-3.5’s performance compared to random guessing.

Statistical significance of GPT-4’s performance compared to random guessing.

Statistical significance of GPT-3.5’s performance compared to Ontoum and Chan’s (2022) recurrent neural network (RNN).

Statistical significance of GPT-4’s performance compared to Ontoum and Chan’s (2022) recurrent neural network (RNN).
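A comparison of two accuracy rates on proportional outcomes can be illustrated with a one-sided two-proportion z-test. The specific test used for the tables above is not named in the text, so this choice is an assumption, and the function name is hypothetical. The usage example takes the perfect-match rates and sample sizes reported for GPT-3.5 and random guessing.

```python
import math


def two_proportion_z_test(p1, n1, p2, n2):
    """One-sided test of H0: p1 <= p2 against H1: p1 > p2.

    Uses the pooled standard error and the normal approximation, and
    returns the z statistic with its upper-tail p-value.
    """
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / standard_error
    p_value = 0.5 * math.erfc(z / math.sqrt(2))  # P(Z >= z)
    return z, p_value


# GPT-3.5 (73.04% of 3399) versus random guessing (6.21% of 3399).
z, p_value = two_proportion_z_test(0.7304, 3399, 0.0621, 3399)
print(f"z = {z:.1f}, reject at 0.01: {p_value < 0.01}")
```

Because the test works on proportions and sample sizes, it can also compare results from samples of different sizes, such as a subset against a model evaluated on the full dataset.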

Discussion

Micro-targeting, a form of communication that is now seen across social media sites, is often designed around personality types. Messages are tailored toward an individual's personality in an attempt to increase persuasiveness or relevance. While research has shown that technology can hyper-charge micro-targeting (Choong & Varathan, 2021; Lambiotte & Kosinski, 2014), this paper focuses on whether LLMs specifically might increase the effectiveness of this communicative practice even further.

The null hypothesis of this study is that GPT-3.5 and GPT-4 will be unable to predict an individual's personality type (based on an individual's 50 recent tweets) more accurately than random guessing and a high-performing ML algorithm (an RNN). Having run OpenAI's models on an MBTI dataset, there is sufficient evidence to reject the null hypothesis. The analysis found that GPT-3.5 and GPT-4 perfectly classified 73% and 76% of the sample's personality type along the MBTI, respectively. This is significantly better than random guessing and other ML models, including RNNs.

This finding has important implications for communication scholarship and sets up several future avenues for research. In particular, future work might investigate whether this ability to micro-target individuals with heightened accuracy using LLMs translates into an increased incidence of this practice. Put differently: now that micro-targeting can be done more effectively, are more actors on social media engaging in it? Furthermore, there is the possibility of misuse in this area of communication: bad actors can harness the improved capabilities that LLMs offer to manipulate or deceive people. This makes it crucial for academics, social media companies, and those working in AI safety to collaborate in building the necessary safeguards and countermeasures.

It is important to caution that this study is limited in some ways. First, since the dataset is taken from the PersonalityCafe forum, many of the tweets are related to a discussion about personality. While the impressive performance of the models cannot be solely due to people explicitly stating their personality type (under 4% of people in the relevant part of the data did this), it might be that people revealed their personality through how they discussed other personality types. The most exciting future directions of this work might collect new data from Twitter and other social media sites, such as Reddit, where the conversation is not related to personality whatsoever. Second, future studies would do well to investigate whether the models perform better if different prompts are used. Additionally, it would be interesting to see if the number of perfect responses could be increased by requesting two personality types (one the model is most confident in and another it thinks the individual could also be), as well as by fine-tuning the model.

Ultimately, the hope is that this paper encourages more research into how LLMs might change the dynamics of micro-targeting in digital spaces. Models such as GPT-3.5/4 will enable a range of actors, including nefarious ones, to detect individuals’ personality types more accurately than ever before, and then persuade or even manipulate them accordingly. It is only by engaging in this research, and pushing these models to their limits, that the potential for misuse can be effectively addressed.

Supplemental Material

sj-ipynb-1-emm-10.1177_27523543241257291 - Supplemental material for Artificial Intelligence and Personality: Large Language Models’ Ability to Predict Personality Type

Supplemental material, sj-ipynb-1-emm-10.1177_27523543241257291 for Artificial Intelligence and Personality: Large Language Models’ Ability to Predict Personality Type by Max Murphy in Emerging Media

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Harvard's Shorenstein Center on Media, Politics and Public Policy.

Supplemental material

Supplemental material for this article is available online.

Notes

Results by Personality Type

Results by personality type for GPT-3.5.

| Type | Perfect matches | Partial matches | Total occurrences | Perfect accuracy (%) | Perfect/partial accuracy (%) |
|---|---|---|---|---|---|
| INTP | 425 | 25 | 495 | 85.86 | 90.91 |
| INTJ | 326 | 65 | 440 | 74.09 | 88.86 |
| INFJ | 426 | 63 | 591 | 72.08 | 82.74 |
| ISFJ | 55 | 1 | 68 | 80.88 | 82.35 |
| INFP | 549 | 38 | 715 | 76.78 | 82.10 |
| ISTP | 108 | 2 | 141 | 76.60 | 78.01 |
| ISTJ | 53 | 3 | 73 | 72.60 | 76.71 |
| ENFP | 164 | 3 | 237 | 69.20 | 70.46 |
| ENTP | 180 | 3 | 280 | 64.29 | 65.36 |
| ISFP | 58 | 5 | 99 | 58.59 | 63.64 |
| ENFJ | 47 | 4 | 82 | 57.32 | 62.20 |
| ENTJ | 48 | 3 | 82 | 58.54 | 62.20 |
| ESTP | 25 | 0 | 46 | 54.35 | 54.35 |
| ESFJ | 6 | 0 | 12 | 50.00 | 50.00 |

Results by personality type for GPT-4.

| Type | Perfect matches | Partial matches | Total occurrences | Perfect accuracy (%) | Perfect/partial accuracy (%) |
|---|---|---|---|---|---|
| ENFP | 125 | 1 | 141 | 88.65 | 89.36 |
| ENTJ | 35 | 5 | 45 | 77.78 | 88.89 |
| INFP | 378 | 15 | 448 | 84.38 | 87.72 |
| ESTP | 17 | 0 | 20 | 85.00 | 85.00 |
| INFJ | 215 | 48 | 321 | 66.98 | 81.93 |
| ENTP | 135 | 3 | 169 | 79.88 | 81.66 |
| ISTP | 65 | 1 | 82 | 79.27 | 80.49 |
| ISTJ | 37 | 2 | 49 | 75.51 | 79.59 |
| ISFJ | 25 | 1 | 34 | 73.53 | 76.47 |
| ENFJ | 32 | 3 | 46 | 69.57 | 76.09 |
| INTP | 217 | 3 | 291 | 74.57 | 75.60 |
| ESFJ | 6 | 0 | 8 | 75.00 | 75.00 |
| INTJ | 177 | 22 | 267 | 66.29 | 74.53 |
| ISFP | 41 | 1 | 57 | 71.93 | 73.68 |
| ESFP | 9 | 0 | 13 | 69.23 | 69.23 |

References
