Abstract

Michael G. Jacobides, Annabelle Gawer, Nikolaus Lang, and David Zuluaga Martínez argue that AI regulation reflects domestic political economy and geopolitics. They urge layered, sector-embedded governance that will allow AI to revitalize economies while checking corporate concentration, aligning suppliers with adopters, and keeping markets contestable.

Technology reshapes industries and redefines competitive dynamics. These days, regulation is no longer an afterthought; it is central to strategic advantage: “the new hot thing in strategy.” 1 Firms that once focused purely on innovation and execution must now contend with an increasingly complex and politicized regulatory environment. Some actors challenge any move to regulate as innovation busting, while others embrace the need for certainty, collaboration, alignment, and checks on excessive power, but grapple with the specifics. 2

This is especially true in the digital and tech domains, where the rise of digital platforms and ecosystems has triggered intense regulatory soul-searching. These business models challenge traditional regulatory categories, blurring the boundaries between firms and industries, redefining market power, and introducing dependencies between sectors. 3 Yet regulatory responses have often been reactive, fragmented, and outdated. In attempting to discipline tech power—with measures like the EU’s Digital Markets Act, US antitrust suits, or data sovereignty efforts—regulators have struggled to keep up with rapidly evolving business models and technologies. Even well-intentioned regulation risks irrelevance and unintended consequences. And the emergence of artificial intelligence (AI), and particularly the meteoric rise of generative AI (GenAI), has added a new urgency and complexity to regulating technology.

As AI rules and regulations continue to proliferate and change, technologists and executives need a compass to guide their expectations, while policymakers need to rapidly learn a great deal so they can devise better boundaries. All of them would benefit from a rigorous understanding of how regulation is shaped by the political economy and geopolitics of AI, and particularly of GenAI, which dominates current policy discourse and action.

The political economy of GenAI comprises the complex interactions between regulators, businesses, and civil society and how those interactions guide regulation. What gets regulated, how, and by whom is determined by a complex negotiation of interests and incentives between various parties: policymakers who are under pressure to “do something” about AI, whether substantive or symbolic; the representatives of powerful tech firms seeking to influence outcomes, waving the banner of “responsible AI” while lobbying for flexible, innovation-friendly rules; traditional incumbents seeking protection or exemptions; and non-business societal actors who generally advocate for stronger protections for consumers, small and medium enterprises (SMEs), freelancers, gig economy workers, and citizens.

The political economy of GenAI is essentially domestic; it concerns the actors that shape regulatory choices within a given jurisdiction. However, these domestic dynamics are part of the broader geopolitical context. At the same time, the regulation of GenAI will be a product of each country’s geopolitical ambitions and vulnerabilities with respect to the technology. AI industry leaders will also use their power to influence regulatory policy, playing governments against each other and exploiting their ambitions.

The State of Play

GenAI has become the focal point of regulatory discourse because it dramatically increases the impact of the AI family of technologies. Given the competitive dynamics of the GenAI industry, regulatory action is particularly urgent. Moreover, legacy AI regulation is not adequate to the challenge of GenAI. We must understand the geopolitics and political economy of GenAI specifically as determining the future trajectory of broader AI regulation.

Why GenAI dominates AI policy discourse

AI’s impact is expanding from focused intelligence to strategic infrastructure. It began as a highly specialized tool that could automate predictions, optimize logistics, and filter content. It was initially applied to specific functions, often by tech firms that already had clean data, agile teams, and modular architectures. Rapid technical advances, cheaper computing, and increasingly sophisticated use of machine learning and other forms of AI to solve problems in a wide range of sectors caused swelling excitement about AI. 4 At the same time, tech firms were designing tools that produced better results by complementing organizational processes and data infrastructure while accommodating regulatory settings.

Generative AI, however, is not just another productivity tool; it is a class of technologies that is changing whole systems. Generative AI has expanded AI’s functional domain as well as the kinds of data it can use. It allows AI to generate qualitatively original and novel content. By mimicking human expression and reasoning, GenAI extends AI’s reach, moving into broader cognitive, professional, and particularly creative domains. It blurs the boundaries between producing and consuming content, between junior employees and automation, between human judgment and machine suggestion, between performance and understanding. 5 And unlike those of prior waves of automation, the effects of GenAI are not isolated; they are diffuse and pervasive and may compound. 6

Far from simply automating narrow tasks, GenAI can reshape workflows, alter hierarchies of skill, and undermine established indicators of expertise. 7 It is invading fields like law, consulting, education, and software, where credibility and craft were once the preserve of trained human professionals. GenAI is both far more capable than earlier AI and far easier to use. The natural language interface democratizes access to GenAI tools and allows users to accomplish a wide range of tasks, including coding, a practice known as vibe coding. GenAI may subtly but entirely redefine the infrastructure of knowledge work (a topic we’re currently investigating in a related project).

But the pace and magnitude of GenAI’s impact on the economy are not just a product of technological potential; they depend just as much on what economic actors choose to do with it. Still, the likely, or at least possible, systemic transformations of GenAI do explain why generative models and their novel regulatory concerns dominate the conversation about AI policy.

Why this moment matters

GenAI regulation is urgent because of both the magnitude of the technology and the unprecedented speed of its development. Despite recent corporate rhetoric, we might need to act prudently and firmly to establish the regulatory contours of this new field. 8 Indeed, government intervention can complement innovation in AI, shaping its trajectory to engender social benefits. 9 It is certainly possible that such intervention could chill innovation, but some actors may also have self-servingly overplayed that risk. 10

As cutting-edge research is increasingly conducted at a handful of corporations, rather than at top universities, these corporate giants are moving away from the customary open standards and transparent communication, raising concerns about AI’s future. As the leaders of Stanford’s Institute for Human-Centered AI recently put it, “we have a fleeting opportunity to shape the trajectory of AI before it shapes us.” 11

One central issue here is that, while GenAI may be considered a general-purpose technology, it is not modular. 12 Managers must coordinate its use within and between firms. And its success depends on how it can be embedded and complemented in practice, which, in turn, depends on how regulation shapes the incentives for collaboration and coordination between firms and what complementary activities and investments those firms undertake. The state may even be able to coordinate and encourage such activities, laying the foundation for innovation ecosystems. 13 Strong uncertainty limits the power of market forces, even, and perhaps especially, in the absence of regulation. Innovation does not necessarily lead to collaboration.

In the case of AI, a fast-moving market with platform characteristics or massive economies of scale in some areas, there is reason to believe that regulators should take early action to establish a competitive perimeter. 14 Even beyond the apparent concentration and power of GenAI or of foundation models themselves, there is a risk that GenAI exacerbates the existing winner-take-most dynamics of digital markets. 15 Large firms with proprietary data and the ability to integrate systems seem set to lock in their advantages while the rest struggle to adapt. Poorly designed regulation could further entrench this dynamic by raising compliance costs, ossifying standards, or allowing incumbents to capture the market.

Well-calibrated regulation, by contrast, can be a strategic equalizer, opening up access to data, clarifying the rights and responsibilities of all participants, and ensuring that innovation does not outpace accountability. It can also reinforce national strategic aims by fostering domestic AI ecosystems, setting defensible norms, and providing leverage in international negotiations.

All too often, however, the current approach falls somewhere in the middle, being too abstract to shape practice and enforcement and too slow to respond to change. We need a more grounded, strategic view of AI regulation, not just to mitigate risks, but to consciously structure the market.

The inadequacy of legacy AI regulation

The evolution of AI, from traditional predictive models to advanced generative systems, has produced complex challenges that the existing regulatory frameworks struggle to address. Early AI applications prompted regulation that focused on data privacy, bias, and transparency. GenAI introduces complex issues related to downstream usage and the ownership of training data. To thoroughly understand the political economy of GenAI, it is first necessary to understand the implications of regulating, or not regulating, these novel areas.

Early AI systems were designed primarily for specific tasks including credit scoring, fraud detection, and medical diagnostics. Their use in these applications raised concerns about data privacy, algorithmic bias, and the transparency of their decisions. Regulators responded with: • • •

However, GenAI, which is capable of creating text, images, and a variety of other content that appears to be human-generated, presents entirely new regulatory challenges that these existing frameworks are ill-equipped to handle. 16

Intellectual property and training data

GenAI models are trained on vast datasets which include copyrighted materials. This practice raises significant concerns about intellectual property. Managers and regulators should consider: • • •

Existing intellectual property laws are not adequate to the complexity of GenAI technologies. The window in which regulation can make a tangible difference is closing fast because, in the future, artificially generated, or synthetic, data is expected to become central to training AI models.

Downstream usage and sector integration

GenAI’s capacity to create content that seems human raises questions about integrating it into various sectors. The technology has significant legal and ethical implications. In healthcare and law, for example, regulators must scrutinize the use of AI-generated content with an eye to accuracy, accountability, and ethics. 19 Regulators should also consider sector-specific rules that address the unique challenges of GenAI in different industries, making sure that companies use it in keeping with the standards of their sector and the broader public interest. 20

Current regulatory approaches are rarely sufficiently granular to address concerns about the use of GenAI in specific sectors. They leave gaps in oversight such that its use is uncertain, and regulators are building a patchwork of solutions that will be difficult to align globally. 21

Regulators must contend not only with the interactions between humans and GenAI systems, but also with the interactions between different AI systems, which will come to define many markets as so-called AI agents become more common. A world in which machines can carry out market transactions without benefit of human participation poses entirely different challenges from one in which machines can mislead, deceive, or exploit humans.

In response to the transition from predictive AI to GenAI, therefore, regulators must reevaluate their frameworks, emphasizing downstream usage and liability as well as data ownership.

The National and Corporate Logic that Shapes AI Regulation

Like other AI technologies, GenAI crosses borders. Yet the policies that govern it are deeply rooted in national legal traditions. As a result, global regulation varies wildly, reflecting differences in domestic political economies as well as the specific national interests, institutional capacities, and geopolitical ambitions of individual countries.

Unequal prospects and divergent approaches

GenAI expands AI’s overall impact, but it also raises the geopolitical stakes. As a driver of economic value, military advantage, and cultural influence, it has become a vital policy concern. Whether and how countries choose to regulate AI is largely a function of national strategies developed or revised in the face of GenAI.

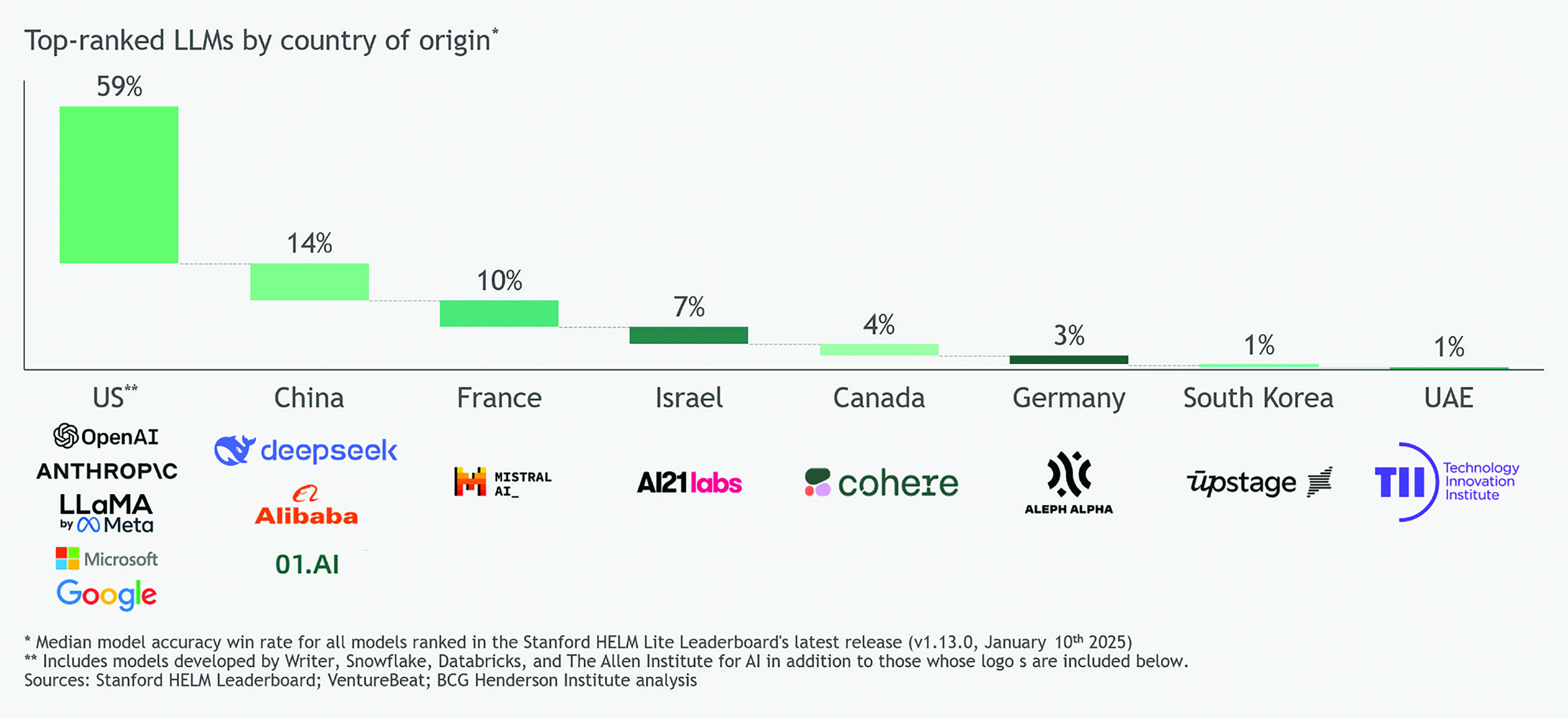

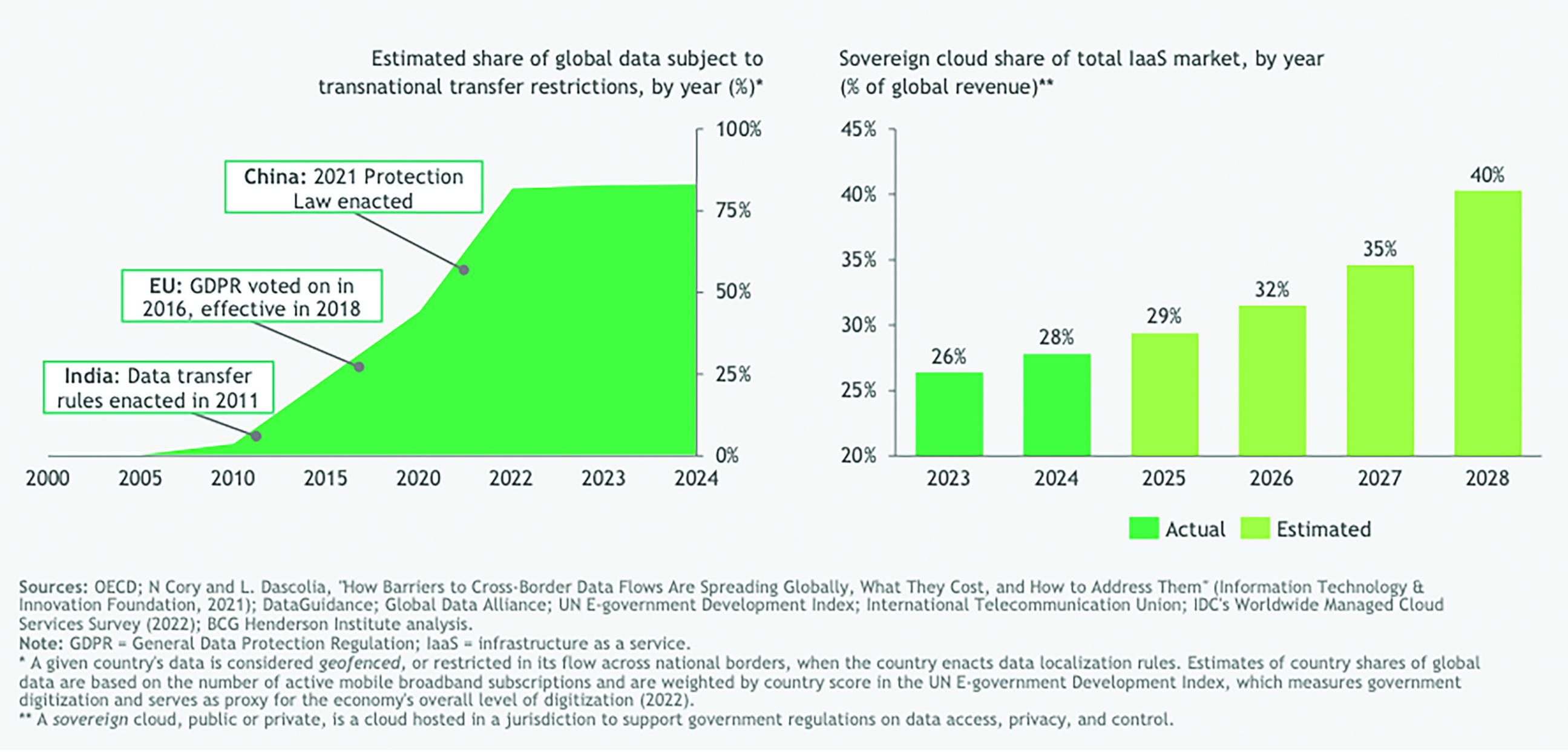

These national strategies are initially shaped by each jurisdiction’s choice of whether to secure a position as a supplier of GenAI or further its adoption on the demand side. While many countries profess an aspiration to become AI-sovereign, able to develop, govern, and use AI on their own terms, for most that goal is unattainable. Developing GenAI is expensive and technically complex. Very few countries are in a position to sustain a geopolitically salient role as its suppliers. For the few that can, regulation is as much about fostering a growing GenAI industry, particularly the development of foundation models, as it is about managing the risks involved in the use of its products. The national strategies of the rest, who have little influence over how the technology is produced, tend to focus more on adopting it safely and effectively.

Just eight countries produced all of the leading GenAI foundation models, as ranked in the Stanford HELM Leaderboard. The US and China jointly account for nearly three-quarters of that number (see figure 1). Regulation in countries that supply GenAI technology is, unsurprisingly, shaped largely by their interest in retaining and expanding their influence. Countries that need only focus on the adoption and application of GenAI tend to take a more defensive and reactive stance.

Figure 1: Leading GenAI Models by Country

Companies trying to become GenAI suppliers face significant barriers. Developing competitive foundation models is costly and technically challenging. Executives must secure a great deal of computing power, in the form of AI data centers, to serve them on a large scale. A recent analysis by the BCG Henderson Institute concluded that there are effectively two GenAI superpowers: the US and China. There are also a handful of lesser powers that might manage to become suppliers, including the EU, Saudi Arabia, the UAE, Japan, and South Korea. 22 This analysis does not rule out the possibility that other countries may emerge as important players. The UK, Canada, and Israel, for example, have strong AI research which could produce breakthrough models with superior capabilities. Indeed, the UK and Canada have produced some of the most influential AI innovations, but they don’t have the capital or the computing power to compete effectively in the global GenAI market (see appendix 1).

In countries that focus on furthering adoption and developing GenAI applications, regulators focus less on accelerating the development of new models or slowing the advance of geopolitical rivals and more on ensuring that the technology is used safely and in keeping with human values. They also work to protect the one strategic technological asset over which they have effective jurisdictional control: data. For most countries, AI sovereignty is practically unattainable. And when it comes to controlling GenAI systems, the stakes are very high. Executives and technologists should therefore expect geopolitical dynamics to reinforce the yearslong trend towards data nationalism and computing-location requirements (see figure 2).

Figure 2: Geofenced Data and Sovereign Cloud Use

The significance of this divide between national strategies that focus on supply and those that focus on demand is exemplified by how the EU’s debate about AI policy has changed. 23 Since the 2024 Draghi report, which called for a renewed focus on competitiveness, European regulators have begun to see that they could empower European companies and not just police a market dominated by foreign players. 24 The geopolitical stakes of the AI race are pushing EU actors into a more offensive stance, contending in the global GenAI supply market. Their new approach may alter the entire trajectory of EU regulation as it is turned toward the development and growth of a robust GenAI industry. 25

Corporate influence

National policymakers and regulators make decisions in the context of global AI strategies. Corporate leaders, on the other hand, see their companies as the primary targets of regulation and their response is far from passive. Indeed, they actively shape policies in keeping with their strategic interests. Corporations, and particularly big tech companies, have data, distribution, and cloud infrastructure advantages that position them to readily incorporate AI. Unlike other actors in the political economy of GenAI, they have an established advantage that could prevent competition and ensure that GenAI models do not become interchangeable. Indeed, tech businesses that supply GenAI are the most powerful non-state actors in this political economy, shaping AI regulation in their own favor.

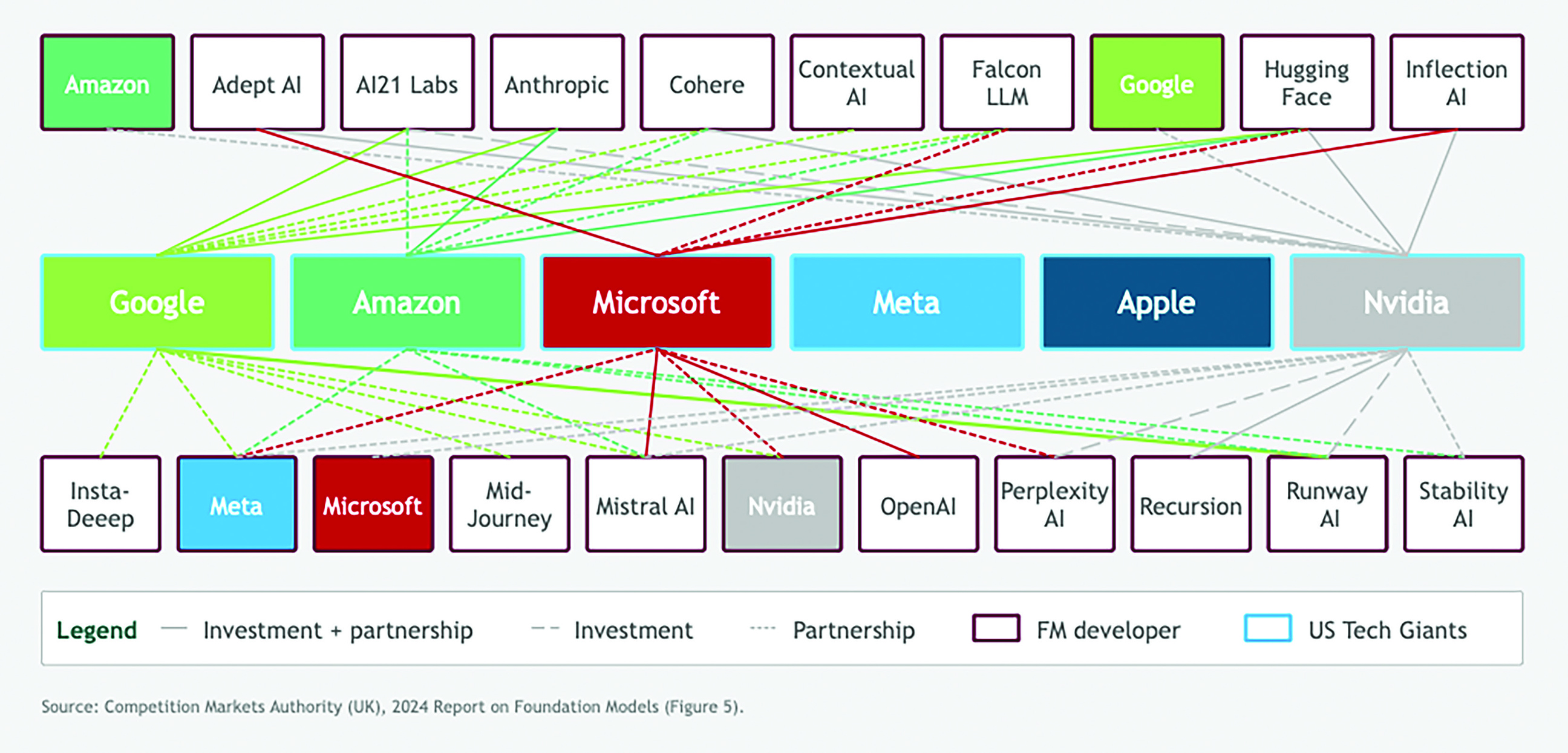

Existing tech players are already moving into position to absorb new entrants and use AI to strengthen their hold on their own customers (see figure 3). In order for vital innovation to continue, regulators must not only ensure safety and fairness, but create an environment in which new entrants and challengers can plausibly compete. The task is problematic, since the incumbents can readily integrate GenAI into all their services, from cloud infrastructure and enterprise software to consumer search and productivity tools. Of course, these firms are not all equally keen to facilitate contestability or equally capable of affecting it, but absent regulatory action, the structural trend is clear enough: as GenAI is embedded in existing systems, the barriers against new competitors grow, even when their core technology is on a par with that of incumbents.

Figure 3: Links Between Tech Giants and AI Labs

The interactions between corporate interests and national strategies are complex, revealing how the geopolitics and political economy of GenAI influence each other. National leaders who want to strengthen domestic GenAI suppliers may have to balance increasing competition against an enduring geopolitical advantage. The release of DeepSeek’s R1 model just a few months after OpenAI’s pioneering o1 model revealed this tension, showing that fast followers can very quickly catch up with pioneers. For the end user, R1 is 90 percent cheaper than o1. This is great news for consumers of the technology, but represents a structural challenge for pioneering GenAI labs which, having spent billions developing novel architectures and engineering techniques, see them replicated by rivals within months and at a lower cost. 26 Representatives of western GenAI labs, which once pushed for minimal or no regulation, are now urging their governments to erect ever-higher barriers against foreign competition (see appendix 2).

And the many businesses and people who rely on or are threatened by GenAI also participate in its political economy, contributing to domestic policy debates. Unlike tech giants, however, users have little coordinated agency. As a result, they have less power to advocate for or shape regulation even though they are vital in allowing the technology to create broader societal and economic value. Policymakers, inundated with pleas, grasping at dwindling resources, and beset by an ever-expanding set of complex problems, are ill-equipped to defend these important but less powerful people who will be affected by AI. And regulatory action is not driven by aspirational agendas, but by history and existing silos, especially when those who are regulated have significantly more technical expertise than those who have the unenviable job of setting the rules.

Current AI regulations reflect both the strategic divergence between countries engaged in geopolitical competition and corporate influence on policy. The result is fragmented global regulation that often overlooks critical aspects of integrating AI and governing data. Global leaders must make a concerted effort to harmonize regulations, promote transparency, and ensure that AI development is aligned with broader societal values and interests. At the same time, we need to better understand how policy affects the industry so that we can shape the impact of AI and manage its broader repercussions.

What Could Go Wrong

AI regulation can shape entire markets, manage complex economic changes, and define legitimacy for a technology that is as promising as it is problematic. Yet current regulatory debates and initiatives do not address how AI is actually changing business and society, in part because they neglect its geopolitics and political economy.

Regulation should center on uses, not technologies

So far, regulators have focused on the technological design of specific GenAI foundation models. This tech-centric approach is a mistake; rapid technological developments can quickly and unexpectedly render such regulations obsolete. Biden-era regulations in the US, for example, attached regulatory scrutiny to specific technological features like the quantity of compute used to train foundation models. 27 Other jurisdictions emulated that approach. Yet as designers brought new methods to GenAI models, notably the test-time compute approach pioneered by OpenAI in 2024, these rules could no longer track AI’s capabilities. For this new reinforcement learning, raw FLOPs, a count of the floating-point operations used in training, abruptly mattered less than high-bandwidth memory. The change also made China’s stockpile of Nvidia A800/H800 chips even more valuable and exposed a loophole in US export controls. To avoid this kind of specific technical irrelevance, regulators must build stable frameworks that focus on what the technology can be used to do.

While policymakers fret about frontier capabilities, alignment with human values, and existential risk, the most immediate challenges right now are about application: how AI is used in various sectors, professions, and public services. The risk lies not just in what AI can do, but in what firms and institutions can do with it. These dangers include the opaque use of AI in hiring, healthcare, finance, and law; the creeping erosion of accountability; and the further consolidation of power so that a handful of players control both the infrastructure and the use of AI. As a recent report from the London Business School, the Institute of Directors, and Evolution Ltd revealed, the problem is that regulators dwell on technology while businesses are already busy integrating AI into their practice. 28

The tech-centric model is also vulnerable to profound information gaps between regulators and the leaders of tech giants who are in the know about the frontier of technology. And it neglects the vital perspectives of corporate and personal users.

The inertia of regulatory silos

In most jurisdictions, regulation follows function: data privacy, employment law, consumer protection. But AI transcends these boundaries. It affects what counts as legitimate expertise, who has opportunities, and how decisions are made and justified. These changes redistribute value and opportunity, introducing new concerns about fairness and risk. Rules that were designed to protect fairness, safety, or competition are now being stretched or bypassed altogether.

We need a regulatory perspective as wide as the policy visions reflected by national AI strategies. These strategies represent intentional geopolitical bets informed by economic, security, and cultural considerations. Rather than disjointedly resolving legal questions one by one, AI regulation should likewise aim to encompass the broader societal and economic implications of the technology.

Managing the full implications of technically complex technologies that are imperfectly understood will require some experimentation with regulatory governance to create a healthy exchange of knowledge between regulators and technologists. Efforts like the UK’s AI Regulation Bill, which calls for the creation of an AI authority that cuts across boundaries, have already demonstrated that establishing these lines is easier said than done. 29

And for all of our excitement about coordinated and thoughtful responses, we will have to acknowledge, as social scientists have done for years, that inertia is likely to prevail with regard to substance and administrative division of labor. 30 If current trends continue, we will see evidence of strategic drift in several forms.

National fragmentation and regulatory arbitrage

Countries will continue to adopt divergent rules that reflect their political traditions and lobbying dynamics. A few jurisdictions, notably the EU, may impose horizontal AI frameworks. Others will default to voluntary approaches, often by sector. This divergence will give global firms opportunities for arbitrage, allowing them to deploy AI wherever regulation is weakest or slowest.

Rules shaped by courts, not legislatures

In the absence of clear statutory rules, intellectual property disputes about GenAI, like The New York Times v OpenAI and the Reddit and GitHub user cases, will become precedents. 31 Critical questions about data rights and economic value will therefore be decided through litigation, often in US courts, rather than through democratic or multilateral processes.

Corporate capture of the policy agenda

Firms with foundation models will continue to shape global governance, setting standards, framing questions technically, and promising to regulate themselves through AI safety pledges and the like. This regulatory capture may not be a matter of corruption, but rather of dependence, with regulators and legislators relying on private firms for expertise, infrastructure, and implementation, much as they did with platform governance.

Slow, uneven integration into sectors

Many governments will struggle to translate general principles into actionable guidance for all sectors. Without strong horizontal coordination or an empowered central agency, regulatory responsibility will fall to an uncoordinated range of bodies. The greater the complexity and societal importance of a sector, such as health, law, or education, the greater the burden of regulations and the harder it will be to use AI effectively. Not coincidentally, these are the very sectors in which AI has the greatest potential to increase social welfare, making slow and uneven progress particularly harmful.

Unintended consequences for small outsiders

If AI regulation is enacted simply to regulate all things AI, without attention to the details of each sector, it will impose an administrative burden that large firms can manage but that devastates small, entrepreneurial firms.

Accelerated ecosystem lock-in

If left unchecked, GenAI is likely to help a handful of firms to establish rigid control of compute, models, deployment frameworks, and distribution channels, in short, the whole AI ecosystem. Their power will shape who benefits from AI and even how the economy is organized, fueling their market power, economic inequality, and an ever more bifurcated model in which a few, highly concentrated firms win and many others struggle or go under. It is therefore essential for regulators and leaders to understand the dynamics of AI-induced disruption. 32

More favoritism and industrial capture than policy

Rather than focusing on effective industrial policies, local powers will try to secure preferential treatment and protection. This effort may impede efficiency for final and intermediate users and, worse still, slow the very advances that regulation should foster. There is mounting concern, for instance, about tech firms persuading governments, including the UK’s, that AI is special and should therefore be exempt from intellectual property obligations, even though existing statutes might apply if effectively enforced. Firms’ requests for special privileges in the form of tax and other incentives, in exchange for the promise of “building AI advantage,” may also be hard for governments to resist. The calculus is a complicated one. Finding a balance between safety and competition, between current benefits and opportunities for future challengers, or between a range of other dimensions is difficult. Meanwhile, the sophistication and resources of the interested parties dwarf those of overstretched public authorities, making it an uneven match.

Looking Ahead: Principles for More Effective AI Regulation

However likely strategic drift may be, it is not destiny. We can and must build a robust regulatory framework for AI. And we must start by recognizing the geopolitics and political economy specific to GenAI. The resulting framework should have the following characteristics.

Layered and modular

Regulation should distinguish between three layers: governance of models, which ensures safety, robustness, and transparency; oversight of deployment in sectors, professions, and services; and integration with systems, including its effects on ecosystems, market structure, and interdependencies.

Each layer may require a distinct governance structure with specific capabilities: deep technical expertise for the technology-oriented model governance, strong links to industry for overseeing deployment, and so on. We expect that regulation of system integration will become more important as agentic uses of AI begin to create semiautonomous marketplaces. For example, regulators will need to contend with the consequences of digital advertising markets shifting away from internet search and towards GenAI that aggregates information, or of AI agents becoming proxy consumers in various digital markets.

Embedded by sector

Many of the risks and opportunities of AI depend not on the design of the fundamental AI models but rather on how they are used in specific domains. Regulation must work with the existing governance of each sector, for example with financial regulators, health oversight bodies, and education ministries, bringing AI literacy to them. GenAI is a general-purpose technology and the boundaries between sectors tend to fade in an economy shaped by expanding systems, but the institutional infrastructure of regulation is still largely at the sector level. Regulators will find this existing infrastructure to be an asset to designing regulations that focus on the specifics of how AI is used in particular parts of the economy and society. AI will be everywhere, and while some coordination between sectors will be welcome, regulators should focus largely on each sector separately.

Geoeconomically aware

Effective regulation must anticipate how AI will change value across borders, industries, and firms. That means governments must align AI regulation with their broader industrial strategy, with competition law, and with digital sovereignty policies, especially those concerning data, cloud, and compute infrastructure. Even fiscal policy may be relevant, since it directly affects the economic case for rapidly automating labor. 33 As well as making rules, states can use public investment and procurement to shape the AI stack itself. Europe’s emerging EuroStack initiative combines open interfaces with the preferences of the demand side, turning data sovereignty into practical portability instead of preparing for the unlikely arrival of autarky. 34

Explicit about data and intellectual property

Policymakers must confront the issue of who owns training data. They must clarify what constitutes fair use of material in the public domain; whether it is necessary to obtain consent or provide compensation when scraping data; how creators and publishers should be compensated (an issue that Australia, Canada, and France are already addressing); and whether, and to what extent, intellectual property rights extend to synthetic data, which trained models generate for use in further model training. 35

Emerging proposals, including the UK’s AI Regulation Bill and the amendment tabled by Baroness Beeban Kidron to the data bill under discussion in May 2025, offer the beginnings of a template, especially in their provisions requiring organizations to keep records of training inputs and intellectual property. Yet across the world’s many jurisdictions, such obligations remain rare and often vague.

Designed to rein in ecosystem power

The UK Competition and Markets Authority’s ecosystem mapping clearly reveals that foundation model providers, including OpenAI, Google DeepMind, and Anthropic, are ever more central to how AI is integrated into services further downstream. But policymakers must pay attention to broader ecosystem effects, especially since foundation models may become like utilities while power moves to some other part of the system. Effective regulation must therefore establish interoperability standards that prevent lock-in; ensure that developer and deployment markets remain contestable; address the leverage generated by adjacent domains, like cloud services combined with AI and productivity software; and consider how AI is changing the power dynamics of specific downstream markets. Unless regulators address these issues, powerful firms may create a self-reinforcing loop in which AI fuels the further concentration of economic and political power.

Recognize that regulation enables as well as constrains

Too many people see regulation only as a set of restrictions. But as a 2025 study conducted by OECD, BCG, and INSEAD shows, governments can also use incentives and facilitation to shape AI adoption.

36

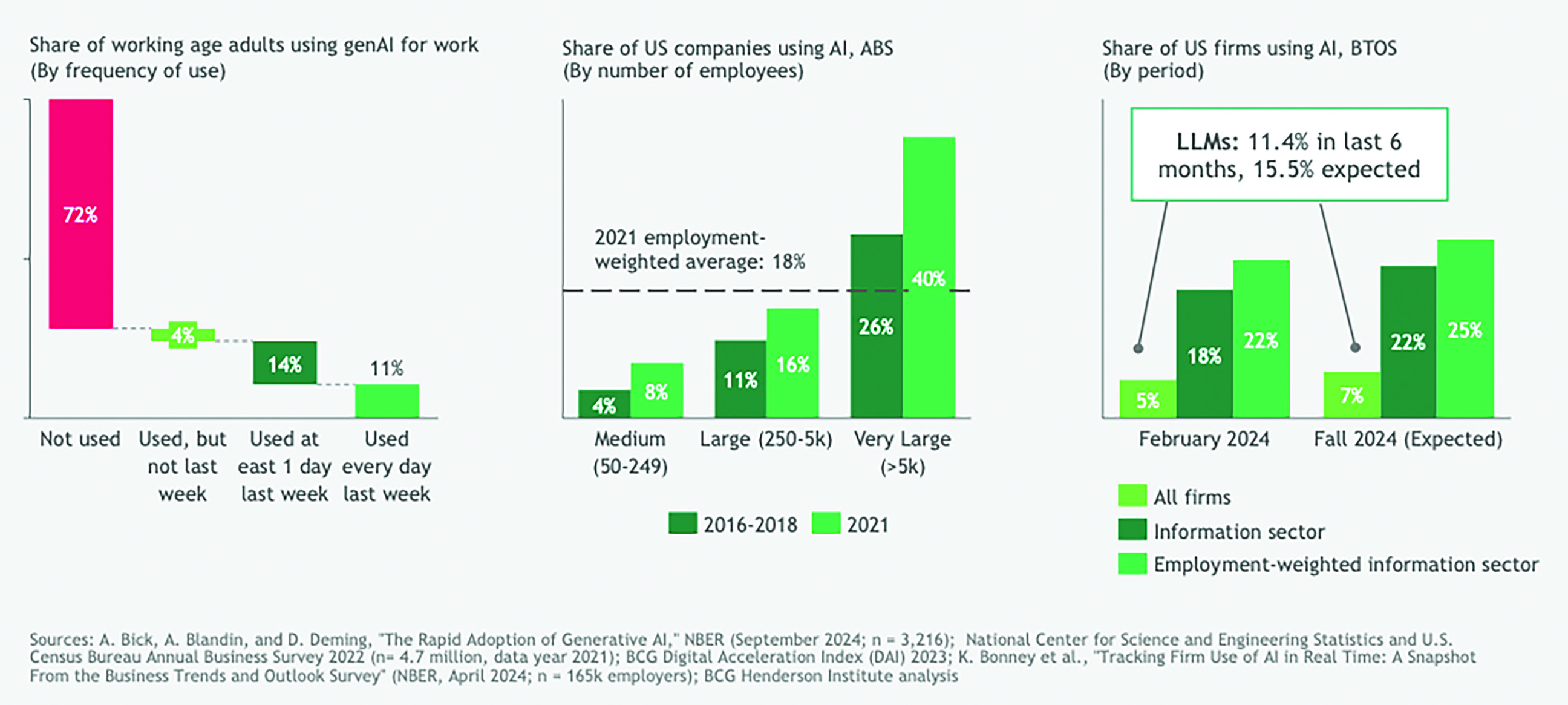

They can support training and education, provide access to high-quality public data, simplify procurement, and advise small and medium enterprises. Enabling regulation—whether through targeted subsidies, investment in infrastructure, or facilitating institutions—can expand the productive diffusion of AI, especially to less digitally mature firms and sectors. Since even the most advanced economies are adopting enterprise AI at a relatively modest rate, these supportive measures are particularly important (see figure 4).

37

We need to view regulation and state intervention as a staircase, not a railing, a pathway rather than a barrier. The window for shaping the trajectory of AI is quickly closing. Enterprise Adoption of AI

The window for shaping the trajectory of AI is quickly closing. The infrastructure is already being built. Business and political leaders are already forming the power structures, both nationally and globally. If regulators continue to muddle through, they will entrench the incumbents, miss the redistributive effects, and leave critical questions to litigation rather than policy. They will also risk focusing too much on technology and the need to be seen to “do something,” adding a layer of bureaucracy with little effect and overlooking the crucial issues of downstream application.

To avoid this outcome, policymakers must stop asking “What does AI do?” and start asking “What kind of economy—and society—do we want? How can we make sure AI brings it about, sector by sector?”

Acknowledgements

We would like to thank BHI’s Etienne Cavin for his contribution to this article and Tom Albrighton for able copyediting. This research was made possible by funding from BCG’s Henderson Institute, Evolution Ltd, and the London Business School.

Appendix 1: AI Approaches Around the Globe

A recent analysis by the BCG Henderson Institute found that the supply-side map of the geopolitics of GenAI is defined by the relative strength of six resources needed to become a supplier of intelligence: capital power, computing power, energy, data, talent, and intellectual property. 38 While ongoing policy changes, particularly in the US, are poised to reshape countries’ relative strength in these resources, an international comparison clearly singles out the primary actors: 39

A number of other countries, including Singapore and India, have adopted national strategies that focus on developing the application layer of GenAI, including use-specific applications built on foundation models. The Singaporean case is instructive in this regard. It strongly emphasizes upskilling, aiming to triple the number of AI practitioners in the country by 2029. It also emphasizes institutional infrastructure to accelerate adoption and GenAI value creation, for example by setting up AI Centers of Excellence to build and research GenAI solutions in partnership with leading corporations, and by serving SMEs and startups.

Appendix 2: Patterns in the Political Economy

Incumbents are trying to reduce their exposure to increasingly unreliable global supply chains. The geopolitics of GenAI, industry interests, national strategies, and the actions of major corporate players are all profoundly shaped by fragmented and interdependent supply chains, particularly for semiconductors, the material underpinning of the entire digital economy. US-based Nvidia, for example, virtually controls the global market for the most advanced GPUs, which are manufactured by the Taiwanese company TSMC using equipment provided by the Dutch company ASML and raw materials sourced from China, Japan, Germany, and the US. The Trump administration’s withdrawal of the AI Diffusion Framework, put in place by the Biden administration, speaks to the tensions between containing geopolitical adversaries and empowering corporations.