Abstract

The city has long captivated feminists as both a site of possibility and a terrain of struggle structured by patriarchal foundations. Contemporary feminist engagements with the smart city extend this tradition of critique, highlighting how current smart urban imaginaries remain male-centric and unsafe for women. Within this discourse emerges the “feminist smart city”: an urban future imagined as efficiently automated, safe, and gender-inclusive all at once, on the condition that women are invited to “voice” their concerns and experiences to co-shape this so-called smart future. Taking Safecity and Safetipin—two feminist crowdsourcing platforms founded in India and now globally influential—as emblematic sites of this vision, I examine the promise and limits of this invitation to “voice.” Analyzing a decade’s worth of materials surrounding the two initiatives, including media coverage, app interfaces, websites, stakeholder reports, and academic papers (co)authored by the platforms’ founders, I show how, despite being cast as empowering, grassroots perspectives are flattened into clusters of data points: gathered only to be separated, amassed only to be isolated, and assembled only to be disassembled and reassembled with other datasets. In such processes, their interoperability is valued over their narrativity. Ultimately, I argue that the feminist investment in a safer, more just smart city has not materialized through this data-driven incorporation of women’s voices into smart urban governance; rather, what ensues is the encroachment of operational and securitized rationality into the very pursuit of gender justice.

Introduction

In her classic and widely influential book, Discrimination by Design: A Feminist Critique of the Man-made Environment, feminist architect Leslie Kane Weisman (1992) critiqued the dominant, male-centric imaginary of the future smart environment. She deconstructed an article on optical data transmission and high-speed computers that appeared in Science Digest, a popular American monthly magazine on general science, for its failure to challenge gender roles. The article, dating back to 1983, imagined a future smart home in 2000 where a family’s everyday tasks were done through the computer. It read: “By the year 2000 the result will be a world profoundly different from the one we find today” (Science Digest, cited in Weisman, 1992: 161). What persisted, however, as Weisman rightly observed, was the gendered division of labor: Mark—the imagined father—still “spen[t] today working from home,” while Tiffany—the imagined mother—continued to use the computerized terminal of her ivy-covered cottage “to do the shopping and update the children’s monthly physicals” (Science Digest, cited in Weisman, 1992: 161). Invoking this high-tech scenario as paradigmatic of a “HE future,” a term borrowed from futurist James Robertson, Weisman lamented: “We should not be fooled by the perfidious visions of a liberated future filled with technological gadgetry. Such visions are nothing more than patriarchal sophistry” (1992: 162). Juxtaposing this HE future with what she characterized as the SHE future, Weisman argued that the future environments imagined by women are ones that could “change, grow, and respond sensitively to human needs” (1992: 171). The contrast between the two futures could not be more pronounced: while the HE future embodied the dream of an automated space, driven by an unrelenting pursuit of technology-enabled space colonization and expansion, the SHE future was “sane, human, and ecological” (Weisman, 1992: 160).
The solution that Weisman hinted at toward the end of the book sounds commonsensical: “[Women] must exercise judgment and make decisions about the nature of the spaces in which they live and work” (Weisman, 1992: 171). Women need to speak their minds and voice their concerns, and society needs to listen.

The injunction that women must “voice” their concerns and perspectives has come to frame much of the scholarly, policy, and practitioner discourse on women’s empowerment, embedding within it an unexamined faith in speech as liberatory (see also Rangan, 2017). As social historian Jane Parpart astutely notes: “The search for empowerment has thus become a search for women’s voices” (Parpart, 2010: 15). Within contemporary feminist engagements with the so-called “smart city,” this critique of the lack of women’s voice—and the attendant proposal to invite women to speak—continues to reverberate (see, for example, Bansal et al., 2022; Chang et al., 2022; Maalsen et al., 2023; Macaya et al., 2022; Nesti, 2019). In these accounts, it goes largely undisputed that the addition of women’s voices will help recalibrate the predominantly male-centric vision of the “smart city” into one that is safer, more inclusive, and more responsive to women’s needs. Beyond the risk of essentializing gender, this strand of critique unproblematically assumes that the liberatory potential of speaking up follows a linear progression from silence to voice (Gill and Ryan Flood, 2008), and consequently leaves unaddressed the question of the conditions under which voices become meaningful (or not). The question of whose voices are omitted and overlooked is undoubtedly important. Equally important, however, is the question of how, when women do speak, their voices come to matter (Couldry, 2010; Dreher, 2010; Le, 2024, 2025).

In this article, I take up the latter question, examining the conditions that mediate women’s speech once it is uttered and circulated within the circuits of data-driven urban governance. In other words, I ask what can—and cannot—be heard when women are invited to voice their concerns to co-construct a more gender-sensitive smart city. To ground this inquiry, I focus on two feminist crowdsourcing platforms based in India—Safecity and Safetipin—as my case studies. Both organizations, founded in 2013 by women’s rights activists in India in the aftermath of the horrific and widely publicized gang rape and murder of a medical student, the so-called Nirbhaya case, have since expanded globally. As of May 7, 2025, Safetipin’s website states that the organization has extended its reach to 16 countries and 75 cities worldwide. It has also partnered with numerous high-profile international organizations, such as UN Women, UNICEF, UN-Habitat, and Cities Alliance, and has been featured favorably in the media (see, for example, Bramley, 2015; Dai, 2018; Dune7, 2020). Similarly, Safecity has won numerous accolades for its advocacy and activism, such as the prestigious UN Sustainable Development Goals Action Award in 2017 (SDG Action Awards, 2017). Both initiatives epitomize a broader trend, perceptively observed by Tanja Dreher, Danielle Hynes, and Poppy de Souza, in which “datafied systems are claimed to offer increased opportunities to have a say, to be heard in decision making and service provision, and to be part of governance structure” (Dreher et al., 2024).

Central to their advocacy for women’s voices are crowdsourcing apps that invite women to voice feelings of unsafety or narrate lived experiences of sexual violence in predefined, sequential steps. These datafied voices—geolocated, timestamped, and aggregated—are subsequently analyzed for patterns. The aspiration is that when enough women voice their concerns about the places in which they feel unsafe or have experienced violence, those places will be rendered legible. Consequently, women can be informed of safer routes to travel, police departments can optimize their patrol routes, and current gender-blind smart city agendas can ostensibly become more gender-sensitive. Both initiatives have indeed been celebrated for their innovation of using “Big Data to create safer public places for girls and women” (Dai, 2018) or of using smart technologies to “save our cities” (Dune7, 2020).

One cannot but be struck by how deeply these feminist proposals echo the problematic logic underpinning so-called predictive policing, which, as many have argued, encodes and reproduces a discriminatory past, disproportionately targets marginalized communities, and legitimizes surveillance under the guise of safety and prevention (Das, 2021; Le, 2022). While this line of critique is important, it does not give us the full picture, not least because it confines the critique of prejudice to the level of data itself (i.e. biased data lead to biased decisions). Consequently, it might give the impression that the remedy lies in technical interventions: improving data collection methods or diversifying datasets (Norori et al., 2021). This article argues instead for the impossibility of meaningful voice in data-driven and automated systems, and contends that this impossibility is best understood not by looking at biased data and their unintended consequences, but by examining how certain biases are inherent features of automated modes of governance and therefore cannot be “de-biased.” More specifically, I argue that the significance of the “datafied voices” offered by interventions such as Safetipin and Safecity should be situated within the structural tendency of automated governance to privilege action over narrative, a tendency that fragments political speech into interoperable signals. This reading is inspired by media scholar Mark Andrejevic’s (2019) notion of “operationalism,” a logic of governance that emerges when automated systems are deployed to orchestrate urban life.

In the sections that follow, I first situate my analysis within the conceptual framework of operationalism before turning to the method and the empirical materials that ground this inquiry. The subsequent findings sections detail the journey through which grassroots voices become inaudible. I begin with a survey of how voices are operationalized and flattened by Safetipin and Safecity. I then trace how grassroots perspectives are visualized on the apps’ interfaces to form clusters of dangerous hotspots, a process through which accounts that do not conform to the locational pattern are potentially erased. The following section moves beyond the treatment of grassroots data/voice within the two platforms themselves and considers their treatment within the broader data ecosystem, in which grassroots voices are endlessly assembled and disassembled. Within such a system, what is celebrated as grassroots voice is flattened into modular inputs for urban management in ways that are indifferent to the meaning they convey, their frictionless interoperability being the only aspect that matters. Ultimately, I contend that women’s voices have not been incorporated to co-shape a more gender-sensitive smart city; what has happened is precisely the opposite: the encroachment of operational and securitized logic into the very pursuit of gender justice.

Conceptual framework: Understanding datafied voices through “operationalism”

The deployment of automated technologies—data-driven analytics, networked communication, ubiquitous computing, and algorithmic decision-making—to govern space and orchestrate urban life has been approached from multiple vantage points. Media scholar Shannon Mattern (2013), for example, critiques the technocratic dream of datafying cities as a form of “instrumental rationality,” wherein governance is premised on the belief that all significant urban processes and activities can be sensed, quantified, and rendered tractable through computational solutions. Geographers, meanwhile, have increasingly explored the politics of automated space through the lens of temporality. Rob Kitchin (2014), for instance, characterizes the smart city as “the real-time city,” highlighting a shift toward urban governance logics that hinge on the real-time, continuous management of physical spaces. Similarly foregrounding the temporal dimension of smartness, Agnieszka Leszczynski (2016) compellingly approaches smart urbanism as a project of “future-ing,” emphasizing how the vision of urban big data aspires, in large part, to securitize, control, and preempt the unfolding of undesirable urban futures.

Concurrently, various scholars have approached the implications of automated urban governance through spatial rather than temporal concepts, mobilizing, for example, the Foucauldian conception of environmental governance (Foucault, 2008) to trace how automated techniques shift the target of governance away from individual subjects toward the modulation of, and intervention into, the broader environment—the “milieu”—as a means to manage the conduct of individuals and populations (Andrejevic, 2019; Das and Kwek, 2024; Gabrys, 2014). One example discussed by Andrejevic et al. (2024) concerns a 2022 incident in New York City, in which an attorney was barred from attending a Rockettes performance with her child after facial recognition technology flagged her as affiliated with a law firm involved in litigation against the venue’s parent company. In this instance, rather than relying on a panoptic gaze that compels individuals to internalize surveillance and self-regulate, automated infrastructures intervene by embedding exclusionary measures directly into the physical environment, denying access to particular targets. While this rich body of scholarship has illuminated how state and corporate actors harness automated systems to govern urban life, less critical attention has been paid to how grassroots initiatives are also being enfolded into these smart projects.

This article shifts the focus onto grassroots initiatives that attempt to recalibrate the smart urban agenda to better reflect and cater to women’s lived experiences—particularly those that crowdsource and datafy women’s voices in an effort to reclaim women’s right to navigate public space. I ask what awaits these voices once they are uttered and circulate further into the circuits of data-driven, automated systems within the smart city. In other words, I attend to the informational infrastructures, and their underlying logics, that absorb, circulate, and condition the legibility of these voices: the data ecosystems within which grassroots voice is made to travel and becomes meaningful, and for whom it becomes so. To this end, I draw on Andrejevic’s (2019) account of operationalism and operationality, which borrows from the work of visual artist Trevor Paglen, who, in turn, took up and sharpened filmmaker Harun Farocki’s notion of “operative images.” For Farocki, who coined the term in 2000 in his audiovisual installation Eye/Machine, operative images are ones that serve a functional purpose rather than representing the real world. They are made “neither to entertain nor to inform . . . These are images that do not represent an object, but rather are part of an operation” (Farocki, 2004: 17). What unsettles Paglen (2014) about these images is their opacity and inscrutability to us, despite their ubiquitous use in the service of capitalism and militarism.

In keeping with the logic of operationality invoked by Farocki and Paglen, Andrejevic speaks of “operationalism,” a governance logic that seeks to operate on data in ways that displace narrative accounts and explanations (2019). Operationalism, for Andrejevic, has an inherent bias toward operation and action: data are collected to correlate with one another and form (actionable) patterns rather than to bring any narrative understanding to the phenomenon at hand. Because automated systems are designed only to isolate a pattern, not to interpret or make sense of it, the “frame” that guides any interpretation of events is disarrayed and dissolved. Philosopher Byung-Chul Han, who writes about the crisis of narration and representation emblematic of the information society, concurs: “Correlations are the most primitive form of knowledge. They do not allow us to understand anything . . . The question ‘why?’ is replaced with a non-conceptual ‘this-is-how-it-is’” (Han, 2023: 50, italics in the original).

This conceptualization of the smart city as an “operational city” offers an avenue for exploring the “fate” of grassroots voices when women are encouraged to share their experiences and perspectives in the name of co-producing a more equitable—or ostensibly “feminist”—smart city. As media scholar Nick Couldry argues, voice presupposes practices of listening, which, in turn, involve a frame that mediates how voiced perspectives are received (Couldry, 2010). Philosopher Judith Butler invokes this irreducible necessity of the frame in guiding how we respond to others through the example of reading and responding ethically to a human face:

If one is to respond ethically to a human face, there must first be a frame for the human, one that can include any number of variations as ready instances. But given how contested the visual representation of the “human” is, it would appear that our capacity to respond to a face as a human face is conditioned and mediated by frames of reference that are variably humanizing and dehumanizing. (Butler, 2009: 29, my italics)

The notion of the frame, first put forth by sociologist Erving Goffman (1974) in Frame Analysis, has been taken up particularly in media studies to describe processes of narration that involve selection, emphasis, and omission. Frame analysis has since become a way to uncover “ideologies” or “agendas” in media messages (Hamborg, 2023; Scheufele, 2006). Butler’s account, however, points to the irreducibility of frames in shaping (mis)recognition. The digital #MeToo movement, for example, in which hundreds of thousands of women took to social media to denounce their experiences of sexual harassment, can be interpreted as “credibility in number” or, alternately, as a modern-day witch-hunt that ruined men and their careers with unverifiable claims (Banet-Weiser, 2021). One might debate whether a given frame is ethical or unethical, humanizing or dehumanizing, but the point is that its presence must remain firmly in view if we are to engage in a meaningful discussion of how it mediates our practices of listening to, and (mis)recognizing, others’ voices. The operational mode of governance, however, seeks to do away with the frame, and this displacement raises profound questions—not, to paraphrase Spivak (1988), about whether the subaltern can speak (for they undoubtedly can with the aid of crowdsourcing apps that gather and datafy their voices)—but about how their voices come to matter and, indeed, whether we can know this at all.

I use the lens of operationalism to draw attention to the multiple frames that are displaced when grassroots voices are incorporated into the governance of smart cities. On the one hand, Safetipin and Safecity promise to inaugurate a new form of “participatory democracy” (Bramley, 2015), positioning their crowdsourcing apps as an offering of voice through which women, girls, and other marginalized groups are empowered to speak to co-shape a safer city (so long as they possess an Internet-connected mobile phone). The promise is evident in Safetipin’s mission statement, “Shaping cities where every woman has a voice,” a mission that is also warmly welcomed by many of its constituents (Safetipin, n.d.-a). As one user puts it in their review of Safetipin on the Google Play Store: “Unique app with a fantastic cause-since downloading I feel that I’m able to contribute making [sic] my city a safer place for all, that my voice is heard for others to see. Highly recommended!!” On the other hand, Safetipin and Safecity’s partnerships with urban stakeholders mean that once grassroots voices are datafied, they are no longer recognized as representations of grassroots perspectives or lived experiences: the referents (to use a semiotic term) from which data originate, and which they are meant to signify, disappear from view as the signifiers, or datafied accounts, are continuously fragmented, disassembled, and reassembled with other data points in the ecosystem. To put it differently, the emphasis on data exchange for smoother, more effective urban governance means that grassroots accounts, upon entering this informational infrastructure, become shifting clusters of data, subject to multiple interpretations and reinterpretations by a diverse set of actors whose values and goals might be radically contradictory.

Methodology

The materials for this article are drawn from a wide range of texts surrounding the two initiatives, Safetipin and Safecity, including media coverage retrieved through the Factiva database and supplemented with Google searches, spanning 11 years of their operation (2013–2024). Using the search term “Safetipin or Safecity and safe or unsafe and place or space and data,” I compiled a corpus of more than 100 news articles that discursively construct how grassroots data are used by the two initiatives to identify safe and unsafe locations and how these data are further incorporated into the urban governance process. A preliminary content analysis of these news articles revealed, unsurprisingly, the recurrent association of darkness with unsafe spaces: dimly lit alleys, deserted streets, and the figure of a lone woman featured prominently across the media coverage. Yet my intention in this article is not merely to examine the discursive construction of the role of grassroots data in shaping a more just smart city, but also to explore how grassroots data are actually made to “matter.” To supplement the analysis of news coverage, I thus conducted a close reading of the two apps, engaging with Safetipin and Safecity’s interfaces, websites, stakeholder reports, and academic papers written by their founders, as well as with the technical processes of crowdsourcing, collecting, aggregating, and visualizing the datasets. I organize the analysis around the journey that grassroots voices are made to travel, from how they are operationalized and visualized to how they are disassembled. By tracing how multiple frames are displaced within this process, I show how grassroots voices become inaudible.

Operationalizing voices: Flattening out narratives

The promise to offer every woman a voice paradoxically relies on a highly standardized process to operationalize, collect, and make sense of voices. Safecity, for instance, employs a standardized survey format to gather testimonies of gendered violence. On its mapping interface, survivors are prompted to answer a series of multiple-choice questions about where, when, and how the incident took place and what they think might have led to it (e.g. their gender, sexuality, level of education, migrant status, ethnicity, caste, and even a broad option such as climate change). Survivors are then invited either to pinpoint an exact location on the map or to enter the approximate area in which the incident occurred. Through this process, voices are transformed not into speech acts but into machine-readable data points.

In the context of Safetipin, having one’s voice heard involves individually conducting an “audit” of one’s surroundings based on a set of predefined criteria. These criteria include elements such as lighting, the ability to walk with ease, how crowded or deserted a space is, the diversity of genders in that space, how open or enclosed it is, how visible one is to inhabitants or shopkeepers, and whether security personnel or police are nearby (Safetipin, n.d.-b). This method of collecting women’s perceptions of space is rooted in the Women’s Safety Audit, first developed in Canada in 1989 by the Metropolitan Toronto Action Committee on Violence Against Women and Children (METRAC) in response to growing concerns about violence against women in public spaces (UN-Habitat, 2008). Kalpana Viswanath, co-founder of Safetipin, who had worked with the India-based women’s NGO Jagori on issues of gendered mobility using Women’s Safety Audits, adapted this concept into a map-based mobile app in 2013.

Both Safetipin and Safecity’s crowdsourcing platforms serve as spaces for voices to materialize; their logics and affordances inevitably shape how women come to give an account of their experiences. With Safecity, the logic of recording violence in clock time and the focus on the cartographic absolute of each incident invite survivors to narrate their experiences as a set of “asynchronous urban events,” even though the lived temporality of gendered violence is “periodical, sporadic, routine” as well as “generational, historical, cyclical and corporeal” (Datta, 2020: 5, 3, 6). As geographer Ayona Datta argues, for young women who live on the urban margins, the violence they experience cannot be quantified in precise units but rather recurs in a slow, cyclical rhythm (Datta, 2020). Equally problematic is the set of criteria used by Safetipin to gather a collective perception of space, such as lighting or the presence of security personnel, which promotes a profoundly disembodied way of narrating spatial experience (Le, 2022). As phenomenological accounts of women’s experiences of space have established, we cannot experience space objectively, since experience is always lived, embodied, and connected to other forms of violence. Recounting her experience of being sexually assaulted while jogging, Sara Ahmed (2017: 23) writes: “Experiences like this: they seem to accumulate over time, gathering like things in a bag, but the bag is your body, so that you feel like you are carrying more and more weight. The past becomes heavy.” This image of the body as a bag that accumulates weight over time offers a compelling metaphor for understanding the embodiment of spatial experience: the same body might perceive and interpret a spatial environment differently depending on what it has experienced in the past.
In other words, what we feel in a particular place is neither immediately transparent nor detached from our bodily experience. It is constantly shaped and reshaped through our corporeal bodies, which are burdened by layers of past experience and which may constantly anticipate violence even when violence is not realized (Vera-Gray, 2017).

I propose that we can think of this operationalization of voice as an act of “flattening out”—one mired in the representational flatness characteristic of the cartographic gaze (Wilmott, 2020), and one that disables a deeper understanding of gendered violence as resulting from historical and antagonistic relations. Specifically, gendered violence—entrenched within an interlocking system of neoliberal capitalism, racism, imperialism, white supremacy, patriarchy, and heteronormativity (Vergès, 2022)—is flattened into only three dimensions, expressed solely in terms of longitude, latitude, and time (see also Jefferson, 2017: 783). Unlike traditional narratives, which unfold through a temporal sequence (a beginning, a middle, and an end) because they are bound by the linear order of syntax (Hayles, 2012), the datafication processes deployed by Safecity and Safetipin parse beginnings, middles, and ends into nonlinear units of data so that they can be contained and organized spatially within tabular files and databases. The next section will show how this flattening of voices into data points allows sense-making techniques such as visualization and statistics to be applied, a process that draws a boundary between voices deemed useful and those dismissed as “noise.”
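To make the flattening described above concrete, the following minimal Python sketch is offered purely as an illustration. The record type `IncidentPoint`, the function `flatten_report`, the category label, and the example report are hypothetical and do not reproduce Safecity’s or Safetipin’s actual data schemas; the sketch assumes only what the text describes, namely that a report is reduced to latitude, longitude, time, and a predefined category.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class IncidentPoint:
    """Hypothetical tabular record: the only dimensions that survive datafication."""
    latitude: float
    longitude: float
    timestamp: datetime
    category: str  # a single predefined multiple-choice option


def flatten_report(report: dict) -> IncidentPoint:
    """Keep only the fields the (hypothetical) schema knows about.

    Any free-text narrative in the report is silently discarded,
    because the record type has no field to hold it.
    """
    return IncidentPoint(
        latitude=float(report["lat"]),
        longitude=float(report["lon"]),
        timestamp=datetime.fromisoformat(report["time"]),
        category=report["category"],
    )


# Invented example report; coordinates and text are illustrative only.
report = {
    "lat": 28.6139,
    "lon": 77.2090,
    "time": "2019-03-14T21:30:00",
    "category": "verbal_harassment",
    "narrative": "It happens on this street every evening, and has for years.",
}
point = flatten_report(report)
print(point)
```

Because the record type has no field for narrative, whatever exceeds the predefined options has nowhere to go: it is the schema itself, rather than any later analytic step, that determines what is kept.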

Visualizing voices: Clustering and erasing

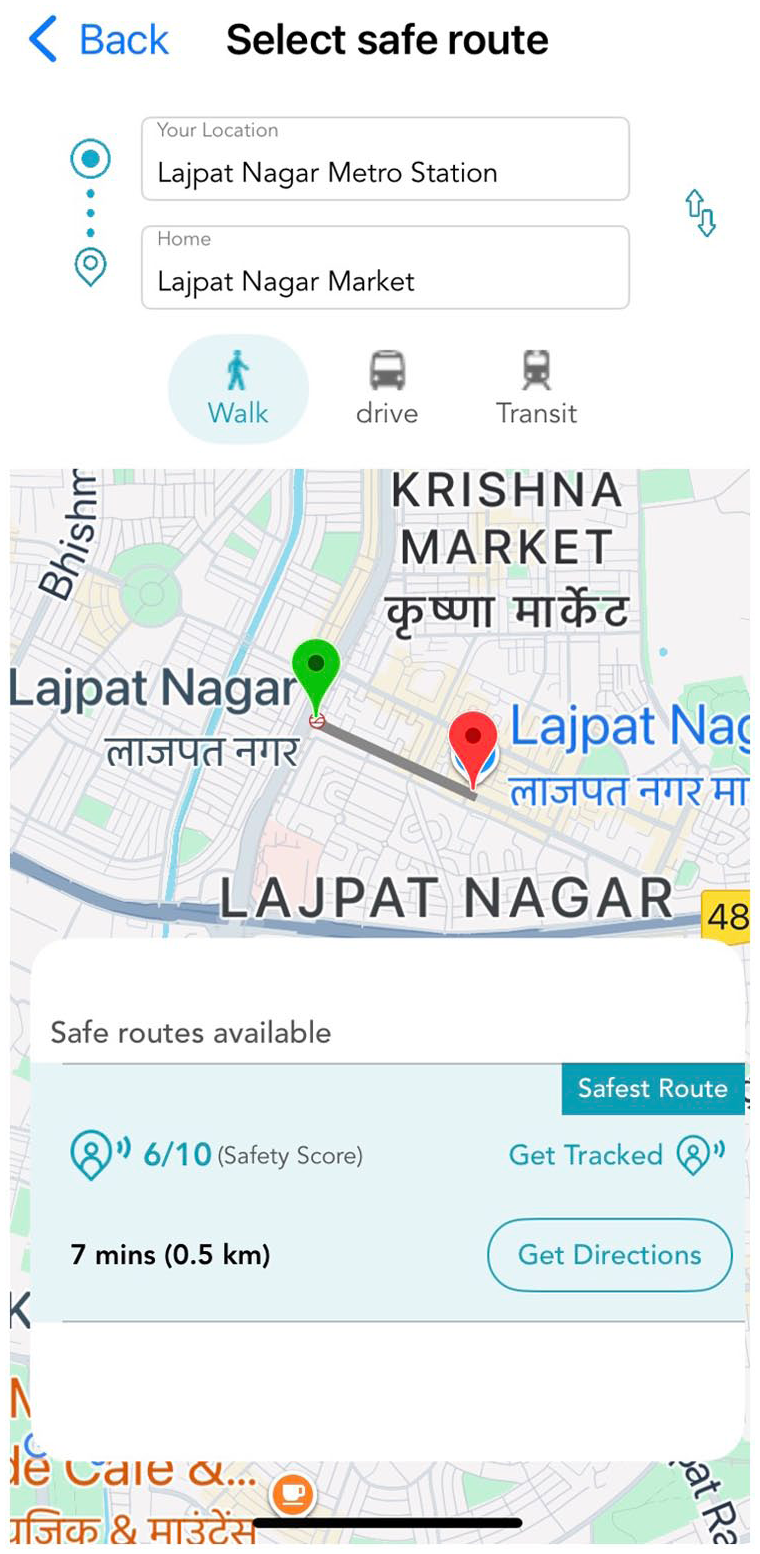

Both Safetipin and Safecity visualize grassroots-contributed data in different forms of maps with a positivist promise that the borders between safety and danger can be neatly drawn. In the dashboard provided to its stakeholders, Safetipin deploys a color-coded system of red, amber, and green, with red signifying unsafe, amber less safe, and green safe. In its user interface, this color-coded schema is replaced by a more minimalistic style, which displays only a safety score when a specific location or route is queried (Figure 1).

Figure 1. Author’s screenshot of the My Safetipin app’s interface showing a safety score of 6/10 when a route between two locations in Delhi, India, is queried.

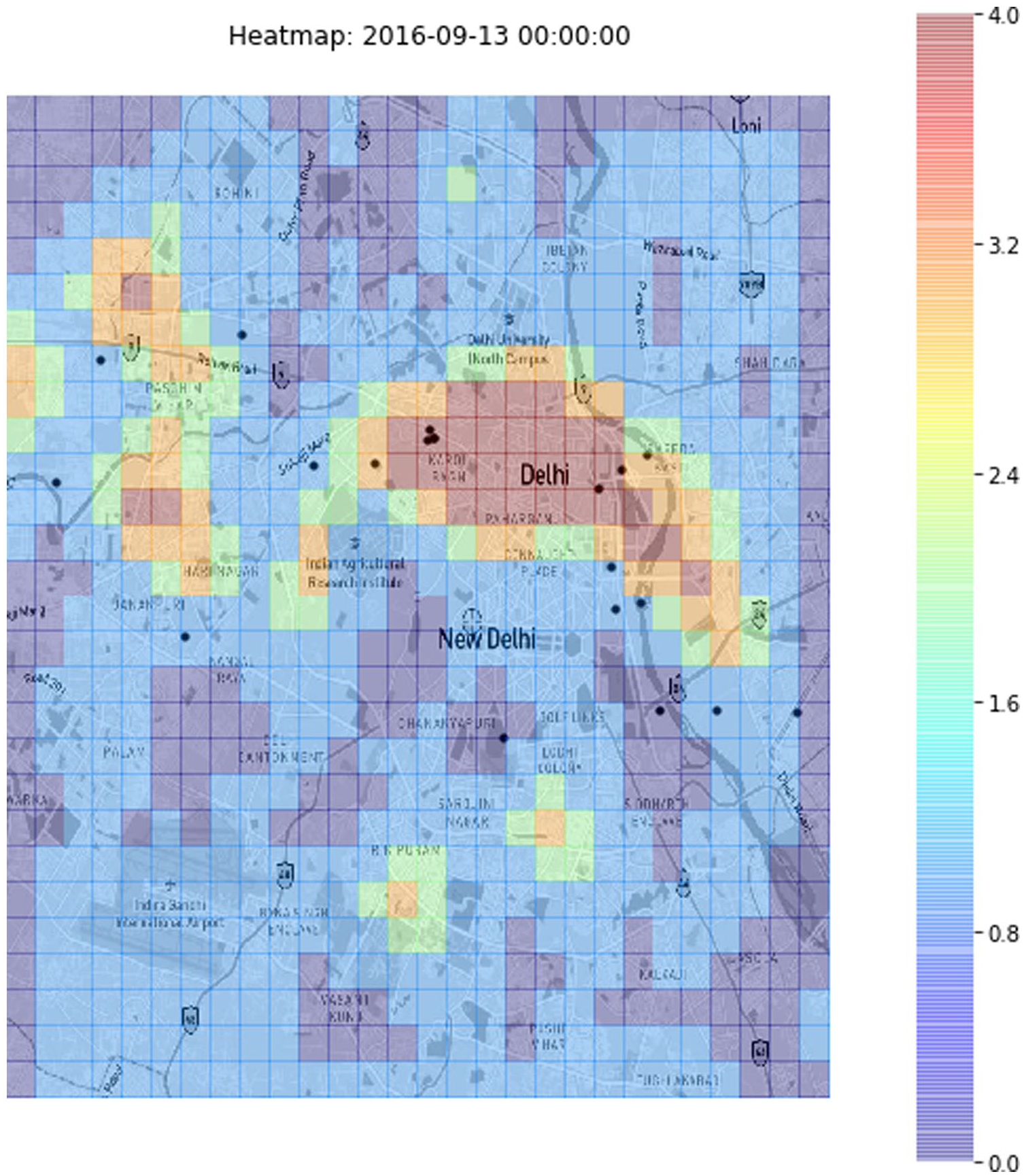

Safecity similarly collaborated with the AI startup Omdena to develop heat maps and use machine learning to predict sexual abuse hotspots in several Indian cities (Omdena, 2022). Although the idea of a global real-time heat map remains a utopian vision for now, and the one-off partnership between Safecity and an AI startup served promotional purposes more than it produced a materialized project, the collaboration is nevertheless indicative of a preoccupation with prediction and preemption as solutions to gendered violence, a fantasy that neatly dovetails with the smart urbanism agenda (Figure 2). In an interview with The Guardian, Safecity’s founder, D’Silva, pushed back against the accusation that a hotspot map might lead to the “demonization” of certain areas, claiming that such map visualizations of safe and unsafe places are in fact “making all neighborhoods safer” (Bramley, 2015). For her, hotspot maps are useful to the extent that they provide a transparent way to identify risky places, helping to optimize the deployment of policing resources or to guide women away from unsafe clusters. In short, they promise a translation of grassroots voices into useful, actionable insights.

Figure 2. Heatmap produced by Safecity’s partner, the AI startup Omdena, visualizing the intensity of gendered violence in Delhi; the redder the cell, the higher the level of risk (Omdena, 2022).

In her research on digital cartographies of feminicide in Latin America, activist scholar Suárez Val conceptualizes maps that visualize the murders of women as “feminist affect amplifiers” (2021: 163). For Suárez Val, the maps have the potential to shift the atmosphere surrounding feminicide from “indifference and denial, to awareness, concern, and action” (2021: 171). Drawing on Butler’s (2016) notion of grievability, Suárez Val argues that data visualizations of feminicide on the maps function as a form of “open grieving”—that is, they are “public displays of sadness, pain and outrage, public tears opposing the ruling feelings of indifference and inaction” toward gendered violence (Suárez Val, 2021: 176). The theory of social change proposed here traces a movement from private pain to public struggle that eventually culminates in collective action, facilitated by the visualization of violence on maps and its affective resonance. Such a theorization of maps as “affect amplifiers,” however, cannot be generalized to all maps of violence. As Martin Engebretsen and Helen Kennedy (2020) remind us, it is important to attend to the specific conditions under which certain map visualizations are generated and how they are thought to benefit society. It is therefore important to examine what kind of action is promoted by the map visualizations of Safetipin and Safecity, and what mode of attentiveness to the world they enact (Amoore, 2009).

It is one thing to claim that mapping enables us to communicate the scale at which violence occurs, which is perhaps the rationale behind Suárez Val’s (2021) claim of the map as “affect amplifier.” It is quite another to assert that maps enable us to take certain actions. If we consider the societal benefits that Safetipin and Safecity attribute to their maps (such as the optimization of policing resources and a safe route prescription function), it becomes evident that these maps are indicative of the fantasy of “command and control” through seeing—what Orit Halpern terms “communicative objectivity,” where visualization is accorded democratic virtue for the transparency it supposedly embodies (Halpern, 2014: 15–16). Consider, for example, the paper “Safer Cities for Women: Global and Local Innovations with Open Data and Civic Technology,” in which Safetipin co-founder Viswanath and her colleagues propose: “Open online visualization does not require any expert skills in cartography or geographical information systems to interact with, making it useful for a wide audience interested in urban safety in the city” (Hawken et al., 2020: 101). The notion that visualization possesses a “universal value”—implying that anyone, regardless of their background, ideology, or political values, can derive the same benefit or understanding from it—is also a recurring theme in Safecity’s rhetoric. As Safecity’s founder, Elsa Marie D’Silva, emphasizes in an interview with the US-based nonprofit World Justice Project: “These stories are documented and visualized on a map so that we can see local-based patterns and trends, and that data is available to anyone to use globally to make their community safer” (World Justice Project, 2023, my emphasis).

Visualization, as these discourses insist, provides a transparent map for action. Yet visualization is not a neutral object that represents space transparently; instead, as Orit Halpern rightly argues, it is an epistemology (2014). In other words, different forms of visualization foreground different relationships and patterns, and hence different modes of knowing and acting in the world (Rettberg, 2020). Displaying gendered violence as spatial clusters or hotspots, I argue, has important consequences for which voices are considered valid, meaningful, and reliable and, by extension, which are muted. Clustering requires enough data to coalesce around a geographic point to form a discernible pattern. As Ashish Basu, the other co-founder of Safetipin, who specializes in the technical side of the initiative, elaborates: “You can’t have a sample size of two people telling you that an area is unsafe. You’re looking for more information” (Rao, 2015). He continues: “The collated statistics are what prompt action; rarely are individual, ostensibly disparate personal accounts enough to influence the status quo” (Rao, 2015). This logic is echoed by D’Silva, founder of Safecity. Together with her co-authors in a paper published in the journal Crime Prevention and Community Safety, she raises “validity concerns” about anonymous accounts submitted to the platform, particularly those that do not conform to the overall locational patterns. As they argue:

Validity is checked using the reliability of patterns. The platform is designed to identify locations in which reported events cluster. As such, accounts in certain quadrants tend to echo one another. If one is quite different than the locational pattern, it raises a validity concern. (Lea et al., 2017: 230)

D’Silva and her co-authors did not elaborate on how they would handle data that raise “validity concerns.” It is likely, however, that such cases are filtered out during the data-cleaning process. The visualization here therefore counts some voices, yet discounts others. Grassroots voices seem to matter only if they conform to the central tendency of a statistical law (the spatial cluster), while those deviating to the extremes are dismissed as noise, doubted, or even erased.

(Dis)assembling voices: Making voices “operational”

Strikingly, grassroots voices are not merely subsumed under a statistical “law” (as noted above, the spatial cluster) but are valued only within the context of pattern formation in relation to other datasets. For instance, in a report celebrating its 10-year journey, Safetipin prides itself on having its data “seamlessly incorporated” into many city data platforms, where they have become part of those cities’ data ecosystems (Safetipin, 2023: 21). The report notes that Safetipin’s crowdsourced data are now accessible to many government departments and urban practitioners. In Durban, South Africa, for example, Safetipin data were incorporated into the city’s dashboard. In Pune, India, its data were integrated into the India Urban Data Exchange Portal (IUDX), an initiative by the Indian Institute of Science to facilitate the sharing of data to “address the complex problems faced by urban India.” In Delhi, the data have become part of the Delhi Government’s GeoSpatial database (Safetipin, 2023). Safecity has done something similar in its collaboration with Omdena to visualize heatmaps and calculate safe routes across major cities in India. There, it combined its crowdsourced data about sexual violence in public spaces with other open-source data, such as infrastructure data about schools, colleges, hospitals, cinemas, and public parks (D’Silva, 2019). Much of the rhetoric of “giving voice” to marginalized groups, it turns out, is actually about asking them to contribute to an interoperable dataset that can be subsumed into (any) mainstream database. To amplify grassroots voices is to shuffle their data through a data ecosystem (see also Bowsher, 2023; Luque-Ayala and Marvin, 2020).

In this context, interoperability trumps narrativity. The emphasis is on how to seamlessly combine datasets and on what can be inferred across different points of data. Detailing its methodology, Safetipin states: “It is possible . . . to display places where the crowd parameter is high, but the safety score is low, so they can deploy police vans to those areas” (Safetipin, n.d.-b). This is close to what political theorist Louise Amoore (2011) terms “the ontology of association,” a type of risk calculus that does not seek “a causal relationship between items of data but works instead on and through the relation itself” (p. 2). According to Amoore, the logic of association calculates uncertainty through a mathematical equation: “if *** and ***, in association with ***, then ***” (2011: 2). In Safetipin’s logic, the risk calculus would read: if *there is a high crowd parameter*, in association with *a low safety score*, then *police deployment* is warranted. The range of variables could be endlessly multiplied. Once the data are integrated into institutional databases, factors such as weather conditions or accident reports might be folded into the calculus. Here, Farocki (2004) and Paglen (2014) offer the notion of the operational image, and Andrejevic (2019) offers an account of operationalism. In keeping with these, it could be argued that datafied voices become operational data insofar as they do not correspond to any narrativizable or meaningful accounts of lived experience. The referents are displaced, if not dissolved altogether. What really matters is how grassroots accounts form interrelationships with other datasets, with the primary focus on insights that can be used to trigger certain actions to regulate urban space.

The way the data are organized also signals that the imperative of malleability and interoperability is at work. For example, Safetipin claims that in addition to visualizations, stakeholders can access its data through comma-separated values (CSV) files (Safetipin, n.d.-b). CSV is a plain-text format that uses commas to separate values and new lines to separate records. The purported benefit of storing data in CSV files is interoperability: the simple tabular format allows the data to be easily shared across, and read by, different systems and applications. Tables recording the timing and type of incidents could, in theory, be readily combined with local governments’ traffic-flow tables to infer high-risk areas and optimize decisions. In this sense, the CSV file is not simply the background on which grassroots data are recorded and organized but a constitutive aspect of the dataset. As Thi Nguyen (2024) astutely observes, data that are formatted to “travel and aggregate” can be exchanged across organizations and settings (p. 98). More importantly, this is not something that can be easily resisted, since interoperability is the inbuilt feature that has led to the contemporary valorization of data. Implied, too, is the notion that assembling and disassembling is a process relatively immune from resistance from the data itself (Kaufmann, 2023). This is because the world is conceived as made up of “atomized” data bits that can be queried and “manipulated through command” (Hayles, 2012: 193).

This cybernetics-inflected worldview is hardly novel. The notion that society, despite its inherent chaos, is ultimately mappable and manageable through principles of information control has long permeated various practices of social and economic management central to the operation of late capitalism (Franklin, 2015). In the post-World War II period, urban planning and design also gradually integrated cybernetics and communication theory into their understanding of the environment (Halpern, 2014). Urban space came to be conceptualized as an “interface.” Earlier notions of the structures that defined urban form gave way to an understanding of the city as an interactive space between individuated bodies and the larger environment, manageable through information flow (Halpern, 2014: 82). This is the trajectory into which contemporary feminist data activism now inserts itself.

At stake here, then, is not so much the loss of meaning when data are taken out of context, a concern raised in danah boyd and Kate Crawford’s (2012) well-known provocations on Big Data. As Nick Seaver (2015) points out, context can itself be operationalized and consequently made interoperable. What is at stake, rather, is the displacement of frames: the interpretive vantage points from which experiences can be comprehended, understood, or misrecognized. Consider the often competing interests of distinct social actors, for instance women and the patriarchal state represented by the police, and their antagonistic historical relations. The imperative of interoperable data ecosystems transforms these into “synthesizable bounded knowledge.” This is a “cybernetic ethos” that seeks to grasp the complexities of the world as fragmented and isolated bits that can be endlessly assembled and reassembled (Grove et al., 2023: 9; see also Halpern, 2014). To borrow from Andrejevic (2019: 84), once inserted into the ecosystem of data, grassroots voices become “non-narrativizable.” The question of whether the subaltern can speak then becomes: “How can we know?”

Total capture and deferred knowledge: The syntax of securitization

The previous sections have centered on the informational infrastructures through which grassroots voices are made to travel and become inaudible. This final section grapples with what I argue to be the key, and troubling, consequence of this mode of including women’s voices within the smart city. Safetipin and Safecity insist on incorporating the experiences of every woman into urban governance processes. While this might appear inclusive, I contend that the aspiration paradoxically feeds the logic of securitization and exclusion. Securitization relies on framing risks as perpetual. This creates an imperative to assess them constantly through data collection in order to form actionable insights, with the ultimate aim of preempting these risks. For instance, to achieve more comprehensive coverage, Safetipin partnered with rideshare companies such as Uber to scale up and automate its data-collection effort. An initial pilot project ran in September 2015 in the Indian cities of Bangalore, Mumbai, Gurgaon, and Noida. The partnership with Uber has since expanded to 50 other cities, including cities in Africa, South America, and Asia (Economic Times, 2015). The desire for endless data capture is evident, again, in the way Safetipin uses gamification techniques to encourage users to submit their evaluations of space. It introduced a reward scheme that incentivizes users based on the number of their contributions. Those who reach the highest level are eligible for discounts from women-led brands that have partnered with the platform. This desire for more data culminated in Safetipin’s 2022 launch of the Quick Audit feature. It allows women to submit only photographs of their surroundings and leave the calculation to its AI algorithms. The feature is designed to eliminate the supposedly painstaking process of having women assess every aspect of the environment, such as lighting and crowds, and evaluate how each contributes to their sense of safety. It attempts to make the “voicing” process less time-consuming and, hence, to encourage more voices.

This ambition to collect every voice, despite being cast as empowering, results in what Sun-Ha Hong (2022) calls a “paranoid epistemology” (p. 41). This is the language of perpetual risks, which insists that endless data collection is the only feasible way to fully preempt those risks. Indeed, traditional narratives irreducibly involve selection: we always omit something and make something else more salient as we recount stories. The logic of databases, by contrast, is one of “inclusivity,” if not total capture (Hayles, 2012: 182). In the imagination of Safetipin and Safecity, cities “become systems with an endless capacity for change, interaction, and intervention, and problems of urban blight, decay, and structural readjustment have no clear definitive endpoint, just as their solutions become constantly extensive” (Halpern, 2014: 121). The recent partnership between Safetipin, IUDX, and the Smart City of Pune, for instance, is indicative of this logic of perpetual, always emergent risks. As part of this partnership, Safetipin combined its crowdsourced grassroots datasets with other open datasets available on the City of Pune’s open data portal. It used them to compute “live” safety scores, drawing on “multiple static and dynamic data” (Viswanath, 2024). The use of terms such as “dynamic” and “live” is telling, for it suggests a temporality of risks that forever resides on the horizon. Lindsay Thomas (2014) identifies a similar logic in her work on disease surveillance systems and disease preparedness in the digital age, which she calls a “security paradigm.” Thomas argues that securitization, or the security paradigm, aspires to render future catastrophes available for “real time” intervention. Yet it is this very attempt to collapse temporality that propels us toward perpetual preparedness, a mode of being that normalizes continual data collection to preempt risks. In crude terms, the attempt to construct a “live” safety score that guides women away from unsafe areas means there will never be enough voices. Instead, voices must be constantly updated and incorporated into calculations.

Characterizing the data practices of Safetipin and Safecity as a form of paranoid epistemology risks being read as trivializing the fear experienced by many women who have downloaded the apps and rely on them when they navigate public spaces. To be clear, I do not deny the possibility that the apps might be useful for certain users. My point, which I share with feminist scholars such as Sharon Marcus (1992), is that the constant fear of violence cannot be understood outside of, and is inseparable from, its representation and the framing of its solution. Safetipin and Safecity promise that collective data collection will build a “feminist” Tripadvisor. Implicit in this promise is the message that gendered violence is relatively unpredictable (without data). Only by equipping women or potential victims, and/or the government, with predictive technologies such as heat maps, safety scores, and patrolling routes, the message goes, might we mitigate the risk and optimize our resources. This is precisely the syntax of securitization. It is worth remembering, however, that most violence against women is perpetrated in the home, often by someone known and trusted (UNODC and UN Women, 2024). Yet emergent risk needs to be produced so that data-driven calculations of safe and unsafe can promise their utility in taming these ostensibly indeterminate futures.

It is useful to pause here and juxtapose the use of grassroots data to preempt gendered violence and securitize urban space with the dominant public health approach to violence prevention. One key pillar of the public health approach is primary prevention, which seeks to address the underlying causes of violence, such as ingrained cultural norms about masculinity, sexuality, and gender roles (Powell and Henry, 2014). Primary prevention aims to transform these norms through strategies such as targeted community programs, school curricula, awareness-raising resources, and media coverage. I cannot do justice to the complexity and diversity of this approach in this article, and the approach is not without criticism (see, for example, Pease, 2014). What I want to highlight here is that primary prevention is underpinned by an endeavor to understand potential perpetrators and their motivations. As the name “public health” suggests, this approach has its roots in the medical field. Medicine attempts to uncover underlying causes in the hope that this understanding might inform the development of treatments, cures, and prevention. The questions that animate primary prevention are thus ones such as: What are the demographic characteristics of the perpetrator? What are their ideologies and beliefs? Why do they hold those beliefs, and how can we change them? Primary prevention, in short, focuses on “explanation.”

By contrast, the model of data-driven violence preemption popularized by Safetipin and Safecity sits within a post-disciplinary context. In this context, interventions do not address a subject but target correlations of various elements or risk factors. This is not a novel observation. More than three decades ago, sociologist Robert Castel (1991: 283) observed that we were living in a “risk society” in which the notion of risk was becoming increasingly detached from that of danger. Castel was writing in the context of psychiatry. He argued that the modality of care for mentally ill people in modern societies had shifted from care to the administration and management of risk. That is, we moved from caring, diagnosing, and curing to assessing, evaluating, and minimizing risk (Castel, 1991). The modern obsession with prevention, Castel wrote in terms that remain insightful today, is “overarched by a grandiose technocratic rationalizing dream of absolute control of the accidental, understood as the irruption of the unpredictable” (1991: 289). What I wish to add to Castel’s formulation of the shift from “dangerousness to risk,” then, concerns the function of grassroots data/voice within a securitization framework. That function is not so much to detect risks accurately in advance as to serve an affective function. As Patricia Clough (2009) argues, under the securitization paradigm, information can be considered as “in-forming, affective or activating, a matter of connecting or attention-getting/giving rather than meaning” (p. 57, my emphasis). Live safety scores and a real-time safe route function are indicative of this affective function of data. So is the vision of a smart safety app that lets women navigate the city and lets the city respond to women’s needs. All of these constantly remind us of the indeterminate risk of being violated.

What ensues when interventions address the correlations of various elements, then, is a shift of resources away from explanation-based policies and toward preemptive, data-driven securitized actions that are actionable yet incontestable. In one context, gendered violence might be correlated with inadequate street lighting; in another, with the design of public walkways. In the future, it might be possible to add other variables such as alcohol consumption or the timing of sporting events. The potential to add further variables is theoretically limitless. As securitization intensifies, the frame for theorizing what indeed causes gendered violence might drop out altogether: we are left with only actionable patterns and correlations. Paradoxically, the feminist investment in a safer, more just smart city has not materialized through this data-driven incorporation of women’s voices into smart urban governance. Rather, what ensues is the encroachment of operational and securitized rationality into the very pursuit of gender justice.

Conclusion

In this article, I have examined the promise and politics of voice as they are embedded within data-driven and automated systems of governance. I traced the process from how grassroots voices are collected, operationalized, and visualized to how they are disassembled, shuffled around, and interpreted by multiple actors and stakeholders. Through the conceptual lens of operationalism, I showed how grassroots voices are valued for their actionable intelligence rather than for their narrativity. One of the key, and troubling, consequences concerns how the imperative of perpetual data collection casts gendered violence. It appears not as the result of specific historical and social structures but as a set of dynamic threats that are always on the horizon. Our understanding of these threats is always preliminary, forever incomplete, and in need of updating with more data points. Two developments should most concern us in the contemporary vision of the feminist smart city. The first is this abandonment of frames for theorizing the structural causes of gendered violence. The second is the diversion of resources away from explanation-based policies.

Acknowledgements

Not applicable.

Ethical considerations

Not applicable.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Australian Government through the following Australian Research Council grants: DP200100189 and CE200100005.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

All data used in this research are publicly available and were obtained from sources in the public domain.