Abstract

Background

Traumatic brain injury (TBI) is common among individuals seeking treatment for substance use disorders in behavioral healthcare settings, but evidence-based TBI screening methods are underutilized. We investigated provider perceptions of the acceptability, feasibility, and appropriateness of TBI screening, and whether these perceptions influenced the relationships between screening intentions and behaviors. Understanding how these implementation outcomes are interrelated can help clarify the temporal sequencing of implementation processes and lead to more precise and cost-effective dissemination and implementation (D&I) strategies.

Method

In Phase 1 of this explanatory sequential mixed methods study, 215 behavioral healthcare providers completed an electronic survey assessing their intentions to screen for TBI using the Ohio State University TBI Identification Method (OSU TBI-ID). After 1 month, a second survey assessed the number of screens conducted and perceptions of the acceptability, feasibility, and appropriateness of the OSU TBI-ID. Binary logistic regressions were used to examine whether acceptability, feasibility, and appropriateness moderated the relationship between screening intentions and behaviors. In Phase 2, 20 providers participated in an interview to contextualize the quantitative results. Qualitative data were analyzed thematically and integrated with the quantitative results.

Results

The mean acceptability, feasibility, and appropriateness scores were 4.12, 4.02, and 3.69, respectively. Acceptability (OR = 0.80, p = .29), feasibility (OR = 0.97, p = .88), and appropriateness (OR = 0.93, p = .65) of TBI screening did not moderate the relationship between intentions and behaviors. Providers endorsed the OSU TBI-ID as easy to use and integrate into practice, relevant to clients, and helpful in guiding referrals and treatment decision-making.

Conclusions

Positive perceptions of an intervention are important but insufficient for shaping the transition from intentions to behavior. This study begins to disentangle interrelationships between early-phase implementation outcomes, which can help guide more precise D&I strategy development to enhance implementation efficiency and effectiveness.

Plain Language Summary

Traumatic brain injury (TBI) is common among adults who seek treatment for substance use disorders in behavioral healthcare settings. TBI can affect a person's ability to fully engage in and benefit from treatment. Although evidence-based TBI screening interventions exist, they have not been widely adopted in behavioral healthcare settings, which can affect treatment decisions and outcomes. We used surveys and interviews to assess providers’ perceptions of the acceptability, feasibility, and appropriateness of using a validated TBI screener in behavioral healthcare settings. We also examined how these perceptions might affect the shift from intending to use the TBI screener to actual use of the screener. Results demonstrated that positive perceptions of the feasibility and appropriateness of the TBI screening intervention led to adoption, but these perceptions did not necessarily affect the transition from intentions to behavior. Additional barriers within the implementation context may be affecting providers’ willingness and capability to screen clients for brain injury. Implementation strategies, such as external facilitation or champions, as well as dissemination strategies such as policy briefs or joint messaging statements about brain injury, are needed to raise awareness of the importance of screening clients for TBI. Future research is needed to address poor adoption rates through testing implementation strategies that target implementation barriers in behavioral health settings. Research is also needed to test which dissemination strategies can improve provider awareness and responsiveness to brain injury in clients.

Introduction

Implementation outcomes are critical for measuring the success of implementation efforts and can be important metrics for determining early indicators of provider- and organizational-level decisions to adopt evidence-based interventions (EBIs) (Proctor et al., 2011, 2023). Proctor et al. (2011) first conceptualized a taxonomy of implementation outcomes and differentiated these from service (i.e., safety, effectiveness) and client outcomes (i.e., satisfaction, functioning). The paper proposed an aspirational research agenda to advance implementation science by calling for research to examine interrelationships between implementation outcomes. Specifically, the paper articulated several key implications for understanding these interrelationships, including that empirically investigating interrelationships can help to shape and clarify their conceptual distinctions and advance our understanding of how these outcomes interact throughout the implementation lifecycle. The paper further called for implementation research to empirically test these interrelationships using validated and efficient implementation outcome measures, which would simultaneously improve the rigor of implementation research and the utility of these measures in guiding implementation decisions.

In 2023, Proctor and colleagues conducted a scoping review of 10 years of implementation outcomes research, which investigated the extent to which their earlier agenda had been achieved (Proctor et al., 2023). Of the 400 articles included in that review, only 5% examined relationships between implementation outcomes, including three articles that examined interrelationships between acceptability and adoption, three that examined interrelationships between feasibility, reach, and adoption, and two that examined interrelationships between sustainability and adoption. In addition, few studies used theory to inform hypothesis testing, thus limiting the generalizability of how implementation occurs across disease conditions, interventions, and contexts (Foy et al., 2011; Kislov et al., 2019).

Theoretical Underpinnings

Drawing on these earlier articles, we sought to empirically investigate interrelationships between three implementation outcomes drawn from Diffusion of Innovations Theory (DOI) (i.e., acceptability, feasibility, and appropriateness) (Greenhalgh et al., 2004; Proctor et al., 2011) and the adoption of traumatic brain injury (TBI) screening in behavioral healthcare settings. DOI theory suggests that individuals’ perceptions regarding the relative advantage, complexity, and compatibility of the EBI can potentially affect whether the EBI becomes adopted (Rogers, 2003). As an implementation outcome, acceptability is derived from DOI's innovation “complexity” and is defined as individuals’ perceptions that the EBI is satisfactory for use (Proctor et al., 2011). Acceptability is a potential early indicator that the EBI will be adopted, and hence an integral pre-implementation phase outcome. Appropriateness is derived from DOI's “compatibility” of the intervention and relates to individuals’ perceptions that the EBI fits with and is relevant to the implementation context. DOI theory posits that individuals’ perceptions of the appropriateness of the EBI could impact whether the EBI is adopted. Feasibility is derived from DOI's intervention “trialability” and is the degree to which an EBI can be effectively delivered within an organization. As intervention-level constructs, the extent to which the EBI is perceived as acceptable, feasible, and appropriate could ultimately affect whether the intervention is used (Mettert et al., 2020; Proctor et al., 2023). Proctor et al. (2011) define adoption as an intention and/or a behavior. Yet, intending to perform a behavior does not always lead to behavioral change, and the transition from intention to behavior may be obstructed by perceptions of the EBI (Sheeran, 2002; Sheeran & Webb, 2016), including whether the intervention is perceived as feasible, appropriate, or acceptable within the treatment setting.

The Public Health Problem and EBI

TBI is a common condition among persons seeking treatment in behavioral healthcare settings. Approximately 50% of individuals seeking treatment in these settings have sustained at least one lifetime exposure to TBI (Davies et al., 2023). As a chronic condition, TBI can lead to cognitive and behavioral impairments that can affect a person over their lifetime (Dams-O’Connor et al., 2023). Sustaining even one TBI can have lasting effects on an individual's cognitive and behavioral functioning. For individuals seeking substance use treatment, these impairments can make it difficult to fully engage in and benefit from treatment if the treatment is not adapted to the needs of these clients (Corrigan, 2021). Universal screening for TBI using validated screening methods is the first step in formulating treatment plans that can improve client success. Furthermore, identifying potential sources of cognitive impairment, including impairment resulting from TBI, is now required by the updated clinical practice guidelines of the American Society of Addiction Medicine (ASAM, 2023). Yet, few providers in behavioral health treatment settings have adopted validated screening methods to identify clients with TBI who may have residual cognitive sequelae (Hyzak et al., 2023).

First developed in 2007, the Ohio State University TBI Identification Method (OSU TBI-ID) is an evidence-based TBI screening method used to determine lifetime exposure to TBI and can be employed across treatment settings (Bogner & Corrigan, 2009; Corrigan & Bogner, 2007). The OSU TBI-ID is validated for administration by providers or through client self-report, and takes approximately 7 minutes to complete (Lequerica et al., 2018). The OSU TBI-ID uses optimal recall methods that probe for potential TBI through various mechanisms or situations (i.e., military service, sports, or repeated blows to the head or neck from domestic violence), first injury, most recent injury, number of prior injuries, and worst injury (i.e., being dazed or confused, or approximate length of loss of consciousness). The OSU TBI-ID has demonstrated greater sensitivity over other brief screeners or single-question approaches in identifying lifetime exposure to TBI in vulnerable populations (Schneider-Cline et al., 2019; Stubbs et al., 2020). Furthermore, although not a diagnostic method, the OSU TBI-ID is a time-effective, cost-effective, and scalable alternative to medical diagnostic procedures (i.e., magnetic resonance imaging, functional magnetic resonance imaging, diffusion tensor imaging, and proteomics) (Epstein et al., 2016; Han et al., 2015, 2016; King et al., 2016; McGlade et al., 2015; Peltz et al., 2020; Sheth et al., 2020), which are often not sensitive in identifying lifetime TBI exposure.

Present Study

We present the third and final sequence of results from our federally funded study, in which we sought to disentangle theory-driven characteristics of the OSU TBI-ID that might affect the relationships between intentions to screen for TBI and TBI screening behavior (i.e., adoption) in behavioral healthcare settings in the USA (Coxe-Hyzak et al., 2022). In our first study, we used the Theory of Planned Behavior to investigate provider-level constructs as proximal indicators (i.e., attitudes, subjective norms, and perceived behavioral control) and mediators (i.e., intentions) of TBI screening behaviors (Hyzak et al., 2023). Of the 215 providers who participated in the study, only 25% adopted TBI screening at least once over the study period, which was largely driven by providers’ motivations to trial the intervention. Results demonstrated that more favorable attitudes toward screening clients for TBI and greater perceived norms around screening predicted greater screening intentions and TBI screening behaviors, while perceived control over screening did not have a significant effect on intentions or behavior. In our second study, we qualitatively assessed barriers to using the OSU TBI-ID in behavioral healthcare settings using the Consolidated Framework for Implementation Research (CFIR 1.0) and systematically mapped these barriers to the Expert Recommendations for Implementing Change strategies (Damschroder et al., 2009; Hyzak et al., 2024; Powell et al., 2015). We found barriers across four CFIR domains, including the Inner-Setting (i.e., leadership engagement, priority, resources, organizational incentives), Outer-Setting (i.e., state-level mandates, incentives), Process (i.e., buy-in), and Individual-Characteristics (i.e., knowledge, self-efficacy).

In the present study, we investigated whether providers’ perceptions of the acceptability, feasibility, and appropriateness of the OSU TBI-ID moderate the relationships between providers’ intentions to screen for TBI and TBI screening behaviors. We hypothesized that greater perceived acceptability, feasibility, and appropriateness of TBI screening using the OSU TBI-ID would strengthen the relationship between TBI screening intent and TBI screening behavior. This study begins to disentangle interrelationships between three implementation outcomes often salient to the pre-implementation phase to better understand how these outcomes affect provider-level decisions to use EBIs. To avoid duplication of results from our previously published studies, we focus this article on the main constructs of interest (acceptability, feasibility, and appropriateness) when reporting our results.

Methods

Study Design and Setting

We used an explanatory sequential mixed methods design (Creswell & Clark, 2010) with quantitative, longitudinal survey data collected at two time points in Phase 1, followed by qualitative interviews in Phase 2. Details on this mixed methods research design and rationale are published elsewhere (Coxe-Hyzak et al., 2022). We used the Journal Article Reporting Standards for Mixed Methods Research for transparent reporting (Levitt et al., 2018; Supplemental File 1). Participants were licensed behavioral healthcare professionals (e.g., social workers, counselors, psychologists) employed in behavioral health treatment settings (e.g., substance use disorder treatment agencies, publicly funded mental health clinics, private mental health counseling organizations). Data were collected between November 2020 and April 2022. The study was approved by the Institutional Review Board at The Ohio State University. All participants provided informed consent prior to study participation.

Phase 1

Recruitment and Data Collection

Participants were recruited through multiple sources, described in detail in our prior work, including from the Star Behavioral Health Providers Program (sample 1), Google searches/referrals (sample 2), continuing education listservs (sample 3), and a professional organization for substance use treatment professionals (sample 4) (Hyzak et al., 2023). At Time 1, we distributed an electronic survey link by email. The link included an educational module publicly available through the authors’ home institution that demonstrates how and why to conduct the OSU TBI-ID. After completion of the module, participants completed a survey asking about their intentions to use the screener over the next month. After one month, participants who completed the first survey were sent a second survey assessing their perceptions about the acceptability, feasibility, and appropriateness of the OSU TBI-ID, and the number of TBI screens conducted. Because we used self-report to capture TBI screening behavior, we limited the time between surveys to one month to reduce recall errors. The retention rate between Time 1 and Time 2 surveys was 74.4%.

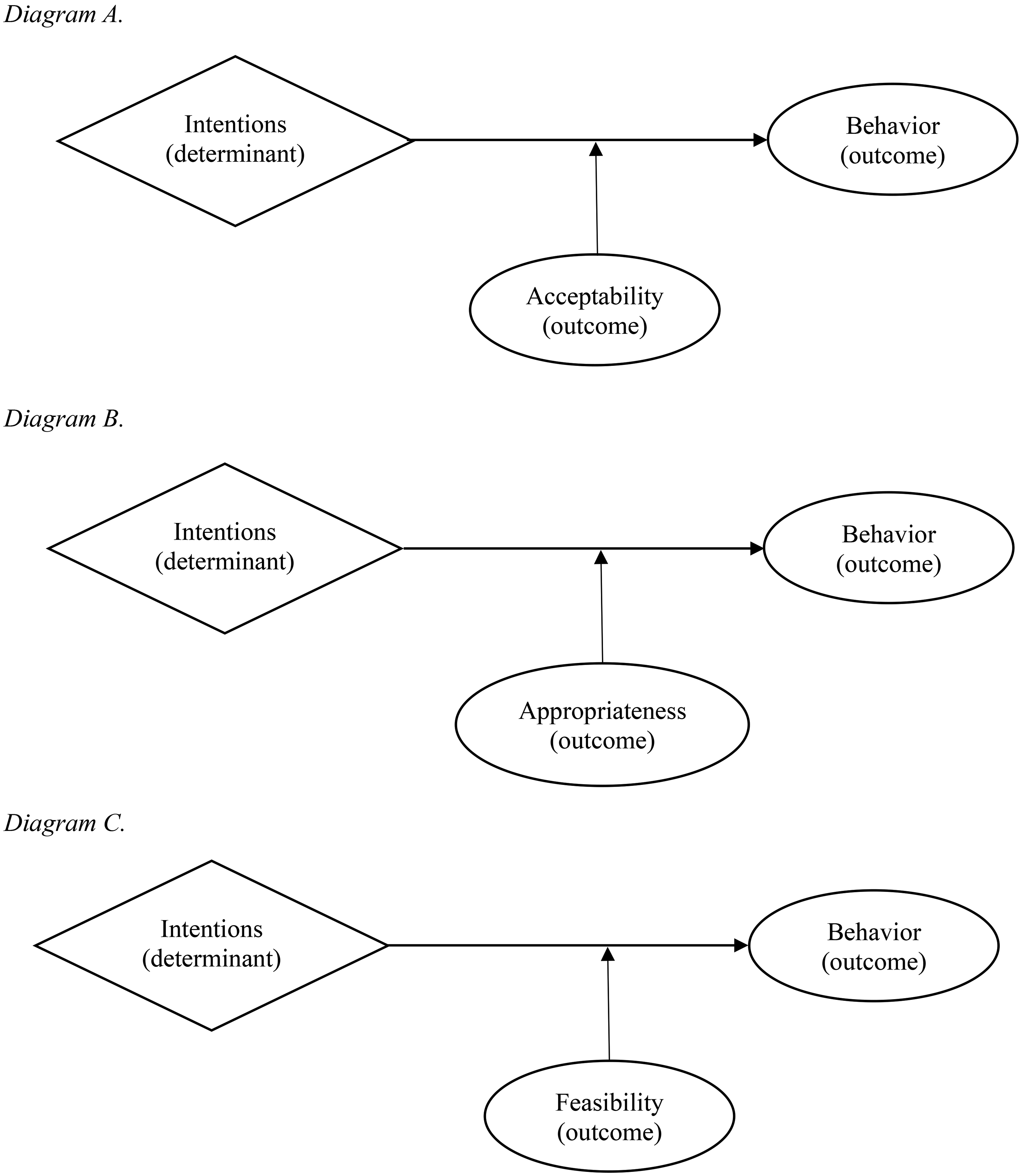

Implementation Outcomes and Causal Pathway Models

Proctor defines adoption as an ‘intention’ and/or a ‘behavior’ (Proctor et al., 2011). In this study, we deconstructed adoption into two conceptually distinct constructs to build our theory-informed causal model, with intentions as the ‘determinant’ and ‘behavior’ as the main outcome. We conceptualized the acceptability, feasibility, and appropriateness outcomes as moderators linking the relationships between intentions and behavior. We constructed three causal model diagrams following “determinant” → “outcome” → “outcome” patterns to test our theory-informed hypotheses (Lewis et al., 2020) (Figure 1).

Figure 1. Hypothesized theory-informed causal pathway diagrams.

Measures

Intentions were measured using three items adapted from an existing measure that asked respondents to report the likelihood, intentions, and chances of screening for TBI over the next month (Glegg et al., 2013). Items were measured on a 7-point Likert scale (1 = strongly disagree; 7 = strongly agree) and averaged for a total score (Hyzak et al., 2023). We measured TBI screening behaviors as the self-reported number of times providers used the OSU TBI-ID. We measured acceptability, feasibility, and appropriateness using three validated measures: the Acceptability of the Intervention Measure (AIM), Feasibility of the Intervention Measure (FIM), and Intervention Appropriateness Measure (IAM) (Weiner et al., 2017). Each measure contains four items measured on a 5-point Likert scale from 1 (completely disagree) to 5 (completely agree). Items are averaged for a total score, where higher scores indicate greater acceptability, feasibility, or appropriateness. For each item anchor, “intervention” was replaced with “OSU TBI-ID.”
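The scoring rule described above (item responses averaged into a single scale score) can be illustrated with a minimal sketch. The function name `scale_score` and the response values are hypothetical and are not study data; the sketch only assumes that each scale score is the simple mean of its items.

```python
from statistics import mean


def scale_score(item_responses, low=1, high=5):
    """Average Likert item responses into one scale score.

    `low` and `high` are the scale anchors (1 to 5 for the AIM, FIM,
    and IAM; 1 to 7 for the intention items).
    """
    if not item_responses:
        raise ValueError("at least one item response is required")
    for response in item_responses:
        if not low <= response <= high:
            raise ValueError(f"response {response} is outside {low} to {high}")
    return mean(item_responses)


# Hypothetical responses from one provider (illustrative only).
aim_items = [4, 4, 5, 4]        # four AIM items on the 5-point scale
intention_items = [3, 4, 2]     # three intention items on the 7-point scale

aim_score = scale_score(aim_items)                      # 4.25
intention_score = scale_score(intention_items, high=7)
```

Higher values of `aim_score` correspond to greater perceived acceptability; the same function would apply to the FIM and IAM items.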

Statistical Analysis

Two hundred fifteen providers participated in Phase 1 of the study. Descriptive statistics characterized our sample and the means, standard deviations, and ranges of each outcome measure. We compared demographic data from our samples using Pearson chi-squared tests for categorical variables or Fisher's exact tests as appropriate. We conducted post-hoc analyses using Bonferroni corrections to determine where statistically significant differences occurred, which guided the selection of covariates in the advanced analyses (Allen, 2017). We compared continuous variables using one-way ANOVA and conducted post-hoc tests using Tukey-Kramer comparisons to account for the unequal sample sizes between the four samples (Haynes, 2013). TBI screening data were coded as binary (1 = screened for TBI; 0 = did not screen for TBI). Statistical significance was set at α = .05. Descriptive data were analyzed using SPSS v.27 (IBM Corp, 2020).
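As a minimal sketch of the binary coding described above, self-reported screen counts can be dichotomized so that any use of the screener counts as adoption. The function names and the counts below are hypothetical and are not study data.

```python
def code_screening(screen_counts):
    """Dichotomize screen counts: 1 = screened at least once, 0 = did not screen."""
    return [1 if count > 0 else 0 for count in screen_counts]


def adoption_rate(binary_codes):
    """Proportion of providers coded 1 (screened at least once)."""
    return sum(binary_codes) / len(binary_codes)


# Hypothetical self-reported counts for eight providers (illustrative only).
counts = [0, 3, 0, 1, 0, 0, 12, 0]
adopted = code_screening(counts)   # [0, 1, 0, 1, 0, 0, 1, 0]
rate = adoption_rate(adopted)      # 0.375
```

Note that this coding discards how often a provider screened; a provider who screened once and one who screened twelve times are both coded 1.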

For our main analyses, we constructed binary logistic regression models using STATA v.15 (StataCorp, 2023). “Intention” served as the continuous predictor variable, “acceptability,” “feasibility,” and “appropriateness” as continuous moderator variables, and “utilization of the OSU TBI-ID” as the binary outcome. We controlled for level of education (masters/doctorate versus associates/bachelors) and private practice setting (1 = yes; 0 = no) due to significant differences found between samples on these variables. Interaction effects between “intentions” × “acceptability,” “intentions” × “feasibility,” and “intentions” × “appropriateness” were used to determine whether each of these constructs moderated the relationships between screening intentions and behavior. Statistical significance was set at p < .05. We checked for potential sources of model violations, and none were detected. The power calculation used for the structural equation model in our previously published paper also applies to the present analyses (Hyzak et al., 2023).
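To make the moderation logic concrete, the sketch below writes out a logistic model with an intentions × moderator interaction and the two covariates named above. It is an illustration under stated assumptions, not the fitted Stata model: the coefficient values are hypothetical log-odds chosen for demonstration, and the function names are not from the study.

```python
import math


def predicted_probability(intentions, moderator, education, private_practice, coef):
    """Predicted probability of screening from a logistic model with an
    intentions x moderator interaction and two binary covariates."""
    logit = (
        coef["intercept"]
        + coef["intentions"] * intentions
        + coef["moderator"] * moderator
        + coef["interaction"] * intentions * moderator
        + coef["education"] * education
        + coef["private_practice"] * private_practice
    )
    return 1.0 / (1.0 + math.exp(-logit))


def intentions_odds_ratio(moderator, coef):
    """Odds ratio for a one-point increase in intentions, evaluated at a
    given moderator score (e.g., an AIM, FIM, or IAM score)."""
    return math.exp(coef["intentions"] + coef["interaction"] * moderator)


# Hypothetical coefficients on the log-odds scale (illustrative only).
coef = {
    "intercept": -3.0,
    "intentions": 0.5,
    "moderator": 0.3,
    "interaction": -0.05,
    "education": 0.2,
    "private_practice": -0.1,
}

or_at_low_moderator = intentions_odds_ratio(2.0, coef)
or_at_high_moderator = intentions_odds_ratio(5.0, coef)
```

In this parameterization, the exponentiated interaction coefficient is the interaction odds ratio: the factor by which the intentions odds ratio changes for each one-point increase in the moderator. An interaction odds ratio near 1 means the intention-behavior relationship is about the same at every level of the moderator, which is what a non-supported moderation hypothesis looks like.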

Phase 2

Interview Guide Development

Results from Phase 1 guided the interview development for Phase 2. In alignment with our mixed methods design, interview questions centered around the primary study constructs of DOI theory and were intended to provide greater contextualization to providers’ perceptions about the acceptability, feasibility, and appropriateness of the OSU TBI-ID (Creswell & Clark, 2010; Fetters et al., 2013). Primary questions specifically asked to what extent the provider believed the OSU TBI-ID was feasible to implement, to what extent the OSU TBI-ID was appropriate to use with their clients, to what extent the OSU TBI-ID was an acceptable intervention to use with clients, and whether they believed clients found it acceptable to be screened for TBI. We also asked why providers chose to screen for TBI or not during the study period. Probing questions were used throughout the interviews to elicit in-depth responses to the phenomena of interest.

Recruitment and Data Collection

Participants who completed both surveys in Phase 1 were eligible to participate in individual interviews. We purposively recruited 20 providers based on survey responses, geographic location, and employment setting to increase the variability of implementation contexts (Fetters et al., 2013). We conducted interviews through videoconference, which lasted about 35 min on average.

Qualitative Data Analysis

Interviews were professionally transcribed verbatim and uploaded to NVivo v.12 (QSR International Pty Ltd, 2020). We organized data under each of the main study constructs from DOI theory to frame the data according to the study aim and to prepare the data for integration in the mixed analysis (Fetters et al., 2013). Two coders independently familiarized themselves with the data by reading each transcript and then coded interviews deductively relative to each construct (Nowell et al., 2017). The coders met to discuss initial coding and settle discrepancies. The coders returned to the data to further condense and collate the codes, refining them into themes (Braun & Clarke, 2006; Nowell et al., 2017). Themes were discussed again, revised, and finalized, with exemplar quotes selected in support of each theme.

Mixed Methods Analysis

Quantitative and qualitative data were analyzed side-by-side to assess for confirmation, expansion, or discordance with the mean AIM, FIM, and IAM scores (Fetters et al., 2013). We drew meta-inferences by interpreting both sets of data together. We present the quantitative and qualitative strands woven together in the text on a construct-by-construct basis, along with a joint display table that visually presents the quantitative and qualitative results (Guetterman et al., 2015). In the joint display, the means and standard deviations from the AIM, FIM, and IAM are presented alongside the qualitative themes, exemplar quotes, and meta-inferences. We present the odds ratios (ORs), 95% confidence intervals, and p-values from the logistic regression models in a separate table for clarity.
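For readers less familiar with how ORs, 95% confidence intervals, and p-values relate to logistic regression output, the following sketch shows the standard Wald calculations from a coefficient and its standard error. It assumes Wald-type intervals and a two-sided normal test, which may differ in detail from what the statistical software reported; the function name and the input values are hypothetical.

```python
import math


def wald_summary(beta, se, z_crit=1.959964):
    """Return (odds ratio, CI lower, CI upper, two-sided p-value) for a
    logistic regression coefficient `beta` with standard error `se`."""
    odds_ratio = math.exp(beta)
    lower = math.exp(beta - z_crit * se)
    upper = math.exp(beta + z_crit * se)
    z = beta / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return odds_ratio, lower, upper, p_value


# Hypothetical coefficient and standard error (illustrative only).
odds_ratio, ci_lower, ci_upper, p_value = wald_summary(beta=-0.22, se=0.20)
```

Because the interval is symmetric on the log-odds scale, the OR is the geometric mean of its confidence limits, and an interval that includes 1 corresponds to a p-value above .05.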

Results

Sample Characteristics

Two hundred fifteen professionals from 25 unique treatment settings across 31 states participated in the study. Participants were predominantly White (81.9% Phase 1; 95% Phase 2), female (85.4% Phase 1; 90% Phase 2), and had earned a masters- or doctoral-level degree (78.3% Phase 1; 85% Phase 2). Participants were licensed as social workers (70.8% Phase 1; 65% Phase 2), clinical counselors (19.5% Phase 1; 40% Phase 2), chemical dependency counselors (13% Phase 1; 10% Phase 2), or psychologists (2.8% Phase 1). In our quantitative sample, 23 different types of organizations were represented, with about half comprising private practices (26.5%) or community-based outpatient programs (25.6%). Additional organizations included hospital-based outpatient services (12.1%), prisons/jails (5.6%), school-based behavioral health (5.1%), and hospital-based inpatient services (4.2%). Additional details on participant characteristics across Phases are published elsewhere (Hyzak et al., 2023). Across the quantitative sample, the mean score on the intentions scale was 3.34 (SD = 1.51), and 25.6% reported that they used the screener at least once over the previous month (Hyzak et al., 2023).

Acceptability

The mean AIM score was 4.12 (SD = 0.63, Mdn = 4.0, range = 2.5–5.0), and qualitative results expanded on the high AIM score (see Table 1). Overall, participants reported that using the OSU TBI-ID to screen for TBI would be highly acceptable to adopt in practice. Specifically, providers discussed that the OSU TBI-ID would be a welcome tool to guide clinical decision-making because it provides a more comprehensive understanding of the client. A participant explained:

“That actually gives me a really good full picture of what the client might be experiencing [if it's] mental health…if it's part of the TBI, and that gives me a really good idea of what I can recommend for resources, long-term counseling, and things like that. But it also gives me a really good picture of what I can pull to try to educate them on what might be going on with them.” (Licensed Social Worker, Crisis Counselor, domestic violence shelter)

Table 1. Mixed Methods Results of the Main Study Constructs.

Providers also described that the OSU TBI-ID would be an acceptable screening method that could be added to their current clinical assessment because of their experiences with adopting other screeners and diagnostic assessments. They explained that adding screeners to assess clients for various conditions is common practice. Providers discussed that adding the OSU TBI-ID would be welcomed, although it would add time to their already lengthy assessment.

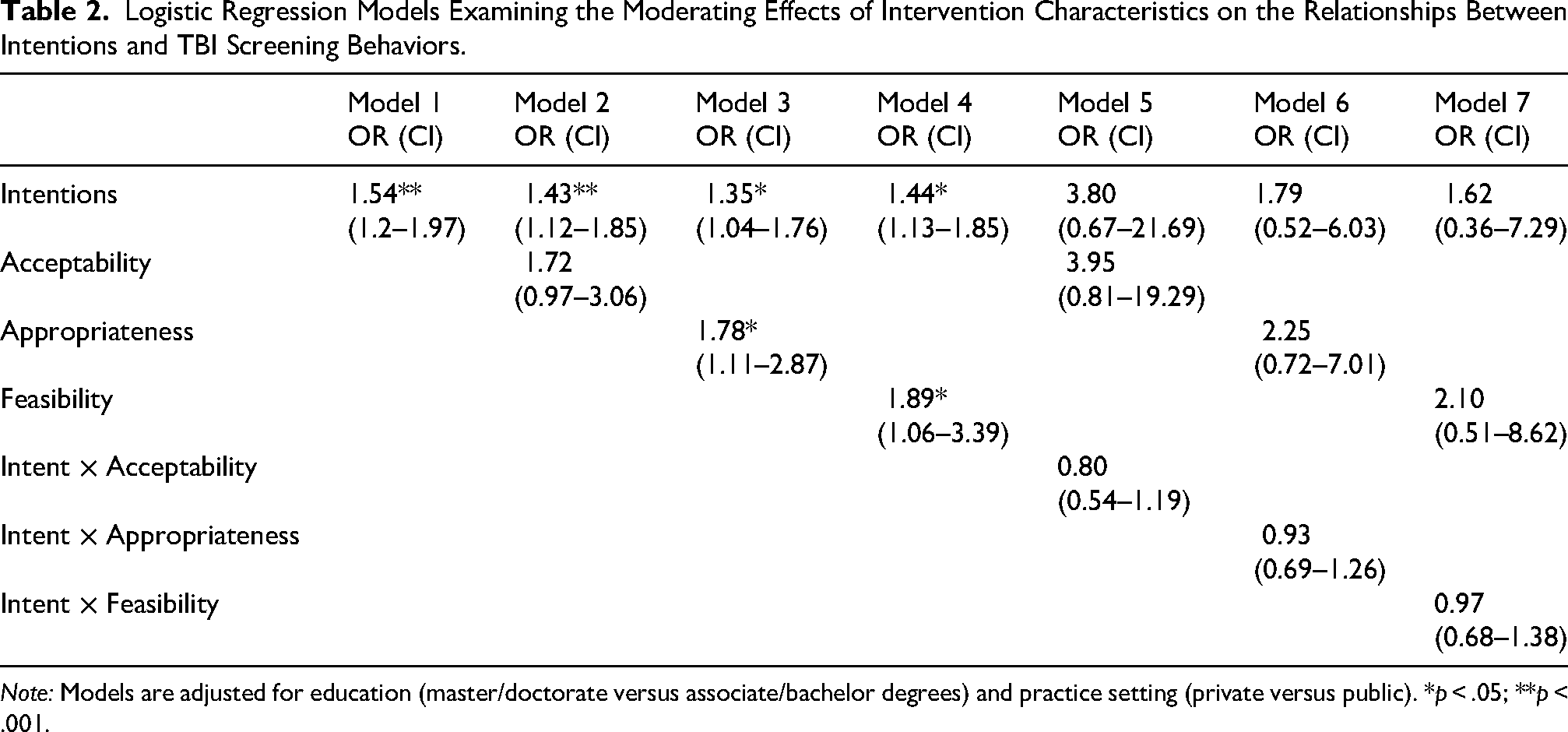

The main hypothesis that the acceptability of using the OSU TBI-ID to screen for TBI would moderate the relationship between intentions and TBI screening behaviors was not supported. In other words, no interaction was found between provider intentions to screen for TBI and their perceptions about the acceptability of the OSU TBI-ID on TBI screening adoption (OR = 0.80, 95% CI = 0.54–1.19, p = .29; see Table 2). When the adjusted model was tested without interaction effects, providers who reported higher acceptability of the OSU TBI-ID demonstrated greater odds of adopting TBI screening; however, this finding also was not significant (OR = 1.72, 95% CI = 0.97–3.06, p = .07).

Table 2. Logistic Regression Models Examining the Moderating Effects of Intervention Characteristics on the Relationships Between Intentions and TBI Screening Behaviors.

Note: Models are adjusted for education (master/doctorate versus associate/bachelor degrees) and practice setting (private versus public). *p < .05; **p < .001.

Feasibility

The mean FIM score was 4.02 (SD = 2.48, Mdn = 4.0, range = 2.5–5.0). Interviews confirmed the score, where participants reported the OSU TBI-ID was feasible to adopt due to its relative simplicity and minimal learning curve. Notably, the step-by-step layout and straightforwardness of the OSU TBI-ID contributed to their perceptions of feasibility. In addition, participants reported that integrating this EBI into current workflows would be a relatively simple process, as long as leadership or higher-level decision-makers approved. A participant elaborated: “I think it would definitely be doable. I don't think it would be a difficult thing to implement given the right backing… Just by talking to the clinical director about it, rather than just me [deciding]” (Licensed Independent Social Worker with Supervision Distinction, Therapist, private practice).

Providers also discussed that this screening method could be readily incorporated into current workflows due to the short length and minimal time needed to implement it. When asked where this screening method would fit into workflows, providers discussed potential fit within the trauma section of the biopsychosocial assessments.

The main hypothesis that the feasibility of screening for TBI would moderate the relationship between intentions and screening behaviors was not supported (OR = 0.97, 95% CI = 0.69–1.26, p = .88). When the model was tested without interaction effects, providers who reported greater perceptions of the feasibility of using the OSU TBI-ID demonstrated significantly greater odds of screening for TBI (OR = 1.89, 95% CI = 1.06–3.39, p = .03).

Appropriateness

The mean IAM score was 3.69 (SD = 0.89, Mdn = 4.0, range = 1.0–5.0). The qualitative results expanded on this score, where participants explained that they believed screening for TBI was appropriate due to the amount of violence and trauma many of their clients experienced, which might result in TBI. Providers explained that many of their clients are survivors of domestic violence, have survived childhood physical abuse, or are combat veterans. A participant explained:

“I think it is very appropriate because a lot of my clients, and probably a lot of my peers and colleagues, don't necessarily know what counts as contributing to a possible TBI. So like for domestic violence survivors, they don't even think of that. It's a possible scenario that would contribute to TBI … Like there's a lot of domestic violence in my clients’ backgrounds, and that could be victimization, that could be perpetration, that could be both, right? And so, I think that domestic violence is like a very underreported area when it comes to TBI or even under asked…” (Licensed Independent Social Worker with Supervision Distinction, Mitigation Specialist, prison setting)

Participants also discussed that the OSU TBI-ID is highly relevant to clinical practice decisions. Participants explained that the OSU TBI-ID would be valuable for gaining insight into the client, guiding referrals to physician specialists, occupational therapy, and physical therapy, as well as guiding treatment plans. One participant explained that adopting TBI screening could bridge gaps in medical and substance use treatment; however, there was insufficient role clarity on who should be conducting the screening.

The main hypothesis that the perceived appropriateness of screening for TBI would moderate the relationship between intentions and behaviors was not supported (OR = 0.93, 95% CI = 0.69–1.26, p = .65). When the model was tested without interaction effects, providers who reported higher appropriateness demonstrated significantly greater odds of screening behavior (OR = 1.78, 95% CI = 1.11–2.87, p = .02).

Discussion

This is the first study to examine interrelationships between the acceptability, feasibility, appropriateness, and adoption of an evidence-based TBI screening intervention for clients seeking behavioral health treatment. Our primary hypothesis that acceptability, feasibility, and appropriateness of screening for TBI would moderate the relationships between intentions and behaviors was not supported. However, results suggest that positive perceptions about the feasibility and appropriateness of TBI screening are important precursors to behavior change. Overall, providers reported favorable perceptions about TBI screening and explained that the OSU TBI-ID was easy to integrate into workflows, relevant to clients and clinical practice, and beneficial for guiding referrals or other treatment decisions. Together, results from this study advance the science of implementation by building the knowledge base on the proximal, causal sequencing of implementation processes, which can guide more precise implementation and dissemination strategy identification and deployment across the implementation lifecycle (Proctor et al., 2011, 2023).

Our main hypothesis that acceptability, feasibility, and appropriateness would moderate the relationships between intentions and TBI screening behaviors was not supported. However, results from our descriptive analyses demonstrated relatively high mean scores on the three measures. This is consistent with findings from other studies that used the AIM, FIM, and IAM to assess perceptions of the intervention in chronic pain medicine, respiratory medicine, maternal and infant mortality, mental health, HIV care, and surgical oncology (Amin et al., 2023; Gmuca et al., 2022; Gresh et al., 2023; Kopelovich et al., 2022; Li et al., 2023; Tarfa et al., 2023). This may suggest that the interventions selected for implementation are already designed to be relevant to the patient population, feasible, and satisfactory for use by the time they are assessed. For instance, teams are not attempting to implement an intervention designed for cancer screening when the goal is to identify brain injury. Similarly, these results, paired with the broader literature, may also suggest a ceiling effect on these measures and little variability across responses, possibly leading to a superficial picture of perceptions about an intervention. Despite the acceptable psychometric properties associated with the FIM, IAM, and AIM (Weiner et al., 2017), the four items on each of these measures are redundant and may not necessarily capture the full picture of how an intervention is deemed feasible, acceptable, or appropriate. The field of implementation science would benefit from additional research on the development and improvement of implementation outcome measures (Mettert et al., 2020).

Nonetheless, acceptability, feasibility, and appropriateness are still important outcomes to consider during intervention design or adaptation to new settings or populations and may still offer important insights into the adoption process. Our results demonstrate that positive perceptions about an intervention, and specifically feasibility and appropriateness, are important precursors to behavior change; however, they may be insufficient in leading to sustained adoption and implementation. As demonstrated in previous work, determinants such as leadership engagement, incentives, self-efficacy, competing priorities, and implementation support are also important predictors that may bridge the transition between intentions and behaviors, and impact sustained change over time. Additional research could focus on investigating how perceptions of acceptability, appropriateness, and feasibility of the intervention delivery may shift over time and as interventions are scaled across settings. Research should also investigate how these other determinants may interact with perceptions and behaviors so that more precise implementation strategies can be designed to initiate, accelerate, and sustain change.

Results suggest that greater intentions and perceptions of the feasibility and appropriateness of the intervention may lead to adoption, but other factors salient to the implementation context may be obfuscating the adoption process. Prior qualitative research has identified several barriers affecting the adoption of TBI screening, including poor leadership engagement, readiness for implementation, incentives, awareness of a TBI screening intervention, and unwillingness to screen clients without knowing what to do if a client has a positive screen (Coxe et al., 2021; Hyzak et al., 2023). Paired together, these studies demonstrate that although providers may want to begin addressing TBI among clients, they will need additional support throughout the implementation process to overcome the numerous barriers hindering adoption and implementation. Future work focused on theoretical implementation research can build on these studies by generating hypotheses that empirically test more nuanced mechanistic models to better understand the adoption process. Applied implementation researchers and practitioners can use these results to develop implementation strategies that capitalize on positive intervention characteristics during training, skill-building, and workflow integration, while also developing strategies that address barriers to intervention adoption, implementation, and sustainment. Specifically, facilitation, which is an interactive process of implementation support, is a potential implementation meta-strategy that could improve the adoption and implementation of TBI screening by acting on modifiable determinants within the implementation context (e.g., self-efficacy, implementation climate), leading to behavior change (Berta et al., 2015; Garner et al., 2020). 
In addition, champions can be leveraged to help identify areas for workflow integration, promote adaptability, and improve perceptions around TBI screening as a practice priority in these settings (Bunce et al., 2020; Hyzak et al., 2024).

While systematic implementation efforts will be necessary for improving implementation, effectiveness, and sustainment, dissemination strategies are also a necessary step in raising awareness about the relevance of TBI to substance use treatment, as well as the existence of TBI screening tools and treatment modifications to improve client outcomes. With recent changes to ASAM criteria, systematic and active dissemination strategies designed in collaboration with clients, family members, and providers will be as important as implementation strategies in producing system-level capacity to equitably adopt and sustain these practice standards (Kwan et al., 2022; Schipper et al., 2016). Bottom-up approaches, such as co-designing dissemination products like policy briefs or joint messaging statements about brain injury alongside these partners, can make messaging more relevant and more likely to capture adopters' interest and attention (Kwan et al., 2022). Top-down approaches that target policy-makers and other stakeholders with influence in altering payment structures to incentivize providers to screen for TBI and adapt treatment will also be necessary for dissemination and sustainment (Ashcraft et al., 2020; Kwan et al., 2022). These policy-level dissemination strategies might include using champions to bridge academics, clients, families, and providers to policy-makers, tailoring policy briefs for staff (i.e., story-telling approach) and legislators (i.e., data-based approach), and using relevant communication channels like printed materials or personal communication to reach policy-makers and state leaders. Other dissemination strategies for improving awareness and shifting attitudes might include leveraging social media platforms or national professional organizations.
Future research should involve community partners in dissemination strategy co-design and deployment, investigate the effectiveness of these dissemination strategies on provider engagement, and examine the extent to which these strategies lead to adoption of standard-of-care practices in behavioral health treatment.

As suggested in these results and in our prior work, implementation strategies that target leadership engagement will be critical to catalyzing change (Hyzak et al., 2023, 2024). Leadership engagement is becoming an increasingly recognized mechanism of change, where leadership-focused implementation strategies like Leadership and Organizational Change for Implementation (Aarons et al., 2024) and Helping Educational Leaders Mobilize Evidence (Locke et al., 2025) have demonstrated promise for improving or buffering the implementation of interventions in substance use treatment settings and schools, respectively. Similar strategies may be adapted and tested for engaging leadership to improve the implementation of TBI screening and treatment in behavioral health treatment settings.

We acknowledge several study limitations. First, it is possible that social desirability bias affected how providers responded to the AIM, FIM, and IAM, resulting in higher overall scores. Second, due to poor survey response in our earliest data collection attempts, likely driven by the COVID-19 pandemic, we had to use several sampling strategies, which resulted in differences between groups on key demographic variables. Although we controlled for this in our statistical models, it is possible that perspectives from different provider types, settings, and education levels may result in different outcomes. Third, while our sample is representative of the largely non-Hispanic White female behavioral health workforce in the U.S., due to small cell sizes, findings may not generalize to professionals outside this demographic. Given efforts to increase workforce diversity to better serve diverse patient populations, it is important for future research to reach a more diverse sample. Fourth, it is possible that providers who did not think brain injury was relevant self-selected out of the study, which could have affected the AIM, FIM, and IAM scores and the qualitative results. Fifth, because TBI screening behaviors were self-reported, it is possible that providers did not accurately report the number of TBI screens conducted. Notably, one month may have been too long a timeframe for accurate recall of the number of screenings conducted. Similarly, we captured TBI screening behaviors at a single point in time, and hence we could not determine how screening rates may have increased or tapered off over time. Future research should use objective, prospective, longitudinal adoption measures, like observation or electronic health records, to reduce bias and to track whether adoption leads to implementation over time.
In addition, although the qualitative data highlight leadership engagement and workflow fit as important determinants of adoption, we did not quantitatively assess organizational-level constructs (e.g., leadership engagement, implementation climate) in this study, which may have affected the transition from intentions to behaviors. Future research should test whether and how these determinants interact with intentions and behaviors, and develop strategies that target modifiable determinants of TBI screening implementation. Specifically, future research can build on this work by testing causal mechanistic models, where leadership engagement and implementation climate serve as mechanistic pathways to adoption. Finally, because the results of our moderation hypothesis were not significant, we chose to focus on mixing data from the mean AIM, FIM, and IAM scores with the qualitative interview results. Future mixed methods research could use qualitative interviews to further explore nuanced relationships between these constructs (e.g., whether perceptions of the feasibility of the screener influenced providers’ decisions to use the screener), which, in turn, could help to elicit additional information about mechanisms in implementation science.

Conclusion

This study is the first to investigate interrelationships between acceptability, feasibility, appropriateness, and adoption of TBI screening in behavioral health treatment settings. Results suggest that favorable opinions about an intervention do not necessarily lead to greater adoption of that intervention. Notably, although we used the validated AIM, FIM, and IAM to measure our main constructs of interest, results demonstrate gaps in implementation outcome measurement, making it difficult to draw firm conclusions about how these implementation outcomes interrelate to influence intervention adoption. Improving implementation research measurement is critically important to address these concerns. Nonetheless, dissemination strategies will be crucial in creating awareness about the existence of and need for interventions to address TBI in substance use and other behavioral health treatment settings.

Supplemental Material

Supplemental material, sj-docx-1-irp-10.1177_26334895261417230 for Examining Interrelationships Between Implementation Outcomes in the Context of Traumatic Brain Injury Screening in Behavioral Health Treatment by Kathryn A Hyzak, Alicia C Bunger, Jennifer A Bogner and Alan K Davis in Implementation Research and Practice

Footnotes

Ethical Approval and Informed Consent Statements

All participants provided informed consent prior to study participation, which included consent to the publication of anonymized results. For the surveys, participants were provided an electronic version of the informed consent document, and those who agreed to participate clicked the “forward arrow” at the bottom of their screen to begin the survey, as approved by our IRB. Verbal informed consent was obtained in the case of qualitative interviews, as approved by our IRB. This research study was reviewed and approved by the Institutional Review Board at the Ohio State University (Study #2021E0734).

Author Contributions

KAH conceptualized and oversaw the entire study. She was responsible for all data collection and analyses, led the interpretation of the findings, and wrote the manuscript. ACB contributed to the study design, informed the data collection procedures, informed the data analyses, and provided substantive edits to the manuscript. JAB informed the quantitative data analysis and provided substantive edits to the manuscript. She is also one of the developers of the Ohio State University Traumatic Brain Injury Identification Method, which is the intervention used in this study. AKD helped inform data collection procedures and quantitative data analysis, and provided substantive edits to the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the National Institute of Neurological Disorders and Stroke of the National Institutes of Health under Award Number F31-NS124263 (Hyzak, PI). The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. This work was also supported by the Alumni Grants for Graduate Research and Scholarship through The Ohio State University and the Ph.D. Seed Grant Program through the College of Social Work at The Ohio State University awarded to Dr. Hyzak. Dr. Bunger is supported by the National Institute on Drug Abuse (NIDA) through grant number R34DA046913. Dr. Bogner's efforts were supported in part by a grant from the National Institute on Disability, Independent Living, and Rehabilitation Research (NIDILRR) to The Ohio State University (Grant #90DP0040). NIDILRR is a Center within the Administration for Community Living (ACL), Department of Health and Human Services (HHS). Dr. Davis is supported by the Johns Hopkins Center for Psychedelic and Consciousness Research, which is funded by Tim Ferriss, Matt Mullenweg, Craig Nerenberg, Blake Mycoskie, and the Steven and Alexandra Cohen Foundation. Dr. Davis is also supported by the Center for Psychedelic Drug Research and Education, funded by anonymous private donors. The contents of this publication do not necessarily represent the policy of any funding organization, and you should not assume endorsement by the Federal Government.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr. Kathryn Hyzak is a board member of the Society for Implementation Research Collaboration (SIRC). Dr. Alicia Bunger is on the Editorial Board of Implementation Research and Practice.

Data Availability Statement

Anonymized data may be provided by the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.

References
