Abstract

Background

The science of intervention adaptation is rapidly expanding, yet there has been limited research evaluating how context affects intervention fidelity and adaptation. The current study sought to address this gap by closely characterizing the delivery of an autism evidence-based practice (EBP), Project ImPACT, within an Early Intervention (EI) system to understand how context shaped both intervention adaptation and providers’ coaching fidelity.

Method

Twenty-one EI providers were trained in Project ImPACT. Following training, providers submitted videos of each of their Project ImPACT sessions, which were scored for Project ImPACT coaching fidelity, Project ImPACT adaptation, and the presence and quantity of supplemental therapeutic content. After each session, EI providers also completed a brief survey about how they delivered Project ImPACT and adaptations they made.

Results

Mixed methods data from 100 sessions demonstrated that how providers reported delivering Project ImPACT was misaligned with the adaptations observed within the same session. Overall, providers’ Project ImPACT fidelity was variable and driven by the integration of other content areas within the confines of relatively short therapy sessions. EI providers adapted Project ImPACT in approximately half of their sessions and spent about 17% of their recorded session time covering other therapeutic content. Spending a greater percentage of session time integrating other content areas was significantly associated with dropping core Project ImPACT coaching activities and having lower Project ImPACT fidelity within that same session.

Conclusion

The current study highlights the critical role of context in shaping providers’ Project ImPACT coaching fidelity. Fidelity outcomes in this study were consistent with other EI implementation trials and raise questions about fidelity benchmarks and normative delivery within community settings. Findings also highlight the need for holistic fidelity tools and training models that support the delivery of core intervention functions in relationship to child-, family-, and system-level factors.

Plain Language Summary

Researchers have been trying to understand how to help providers deliver high-quality interventions in ways that work for families and community service systems. The goal of the current study was to look at how providers adapted their use of one evidence-based practice (EBP), Project ImPACT, to meet the needs of families in a public Early Intervention (EI) system. Twenty-one EI providers were trained in Project ImPACT. After training, EI providers submitted videos of each of their Project ImPACT sessions, which were scored for Project ImPACT coaching fidelity (or how well they used the strategies), Project ImPACT adaptation, and additional content they discussed with families. After each session, EI providers also completed a survey where they reported how they delivered Project ImPACT and any adaptations they made. EI providers added additional content to half of their Project ImPACT sessions and spent about 17% of their session time covering this other content with families. Spending a greater percentage of their session time adding content to Project ImPACT meant that providers needed to remove other Project ImPACT activities and had lower Project ImPACT fidelity within that session. However, this did not prevent them from eventually delivering all Project ImPACT content. The study findings may mean that we need fidelity tools and training models that help providers deliver EBPs in ways that are more responsive to the needs of families and systems.

Introduction

A growing number of research studies are striving to characterize the adaptations made to interventions during implementation (Chambers, 2023; Escoffery et al., 2018; Movsisyan et al., 2019), the reasons they may occur, and the extent to which adaptations are theoretically aligned with the core functions of an intervention (Kirk et al., 2020). This research has robustly shown that most evidence-based practices (EBPs) are adapted to align services with the context in which they are being implemented (Kim et al., 2020; Lau et al., 2017; Yu et al., 2022), and include adaptations made in response to patient-level, organization-level, and system-level factors (e.g., Aarons et al., 2012). By and large, community clinicians perceive that adaptations enhance the fit of EBPs for diverse patient populations (Kim et al., 2020; Lau et al., 2017; Rudrabhatla et al., 2024), and that they promote the feasibility, acceptability, and sustainability of EBPs when delivered within community systems (Chambers et al., 2013).

While EBP adaptation is both common and necessary, maintaining intervention fidelity—at least to some degree—is thought to be essential for an intervention to maintain its effectiveness (e.g., Kirk et al., 2020). Several adaptation models depict “fidelity consistency” as mediating the relationship between an adaptation to an intervention and its impact on treatment outcomes (Kirk et al., 2020; Miller et al., 2020; Wiltsey Stirman et al., 2019). This hypothesis is important yet raises longstanding questions of how best to balance fidelity and flexibility (Sackett et al., 1996), especially knowing that fidelity may at times be at odds with the flexibility necessary to carry out interventions within community settings. This tension is heightened within underrepresented community contexts where more adaptation may be required to align services with resource and workforce constraints, and the needs of minoritized patient populations historically excluded from intervention development processes where external validity is unclear.

Despite its prominent role in theory, the bounds of flexibility in relationship to fidelity are not well understood (Chambers, 2023). On the one hand, several research studies have demonstrated that adapting an intervention by augmenting it (i.e., adding to or extending an intervention) is common, viewed favorably by community clinicians, and positively associated with therapeutic alliance and patient outcomes (Kim et al., 2020; Rudrabhatla et al., 2024). On the other hand, while adapting an intervention by reducing it (e.g., removing or shortening core intervention elements) is often characterized as fidelity-inconsistent (e.g., Wiltsey Stirman et al., 2019), fidelity-inconsistent adaptation may be critical to allow time to address psychosocial stressors and other client goals not accounted for in EBPs (Aschbrenner et al., 2021; Pickard et al., 2023). Reducing adaptations are also associated with augmenting adaptations both within and across EBP sessions (Rudrabhatla et al., 2024), suggesting that the actual process of adapting interventions is fluid, with certain types of adaptation likely resulting in the manifestation of others.

Perhaps the lack of clarity regarding the process and impact of intervention adaptation stems from poorly specified measures of fidelity (i.e., measures of adherence rather than of core function; Edmunds et al., 2022) or from the challenges of measuring fidelity in a fine-grained enough manner to understand how the relative balance of fidelity and flexibility shapes implementation outcomes (Ayele et al., 2023; Chambers, 2023; Geng et al., 2023). Given these limitations, there is a need to expand research from simply categorizing EBP adaptation to understanding why adaptations are made and how they impact implementation outcomes. Models such as the Model for Adaptation Design and Impact (MADI) can be used to theoretically ground this type of research given that they denote the types of adaptations made to interventions while also specifying factors hypothesized to mediate (i.e., fidelity consistency) and moderate the impact of an adaptation on implementation and service outcomes (Kirk et al., 2020). Using models like MADI a priori may help clarify the motivation for certain types of adaptations and the extent to which adaptations are indeed linked to intervention fidelity and other implementation outcomes.

Evaluating adaptation processes and impact using models like MADI is highly relevant to autism services research given the diverse support needs of autistic children and their families and the complex health and educational systems in which they are served (Boyd et al., 2022). For autistic toddlers, public early intervention (EI) systems are an ideal context in which to study EBP adaptation and impact as they are federally mandated to provide therapeutic services to over 400,000 children 0–3 years each year with developmental delays under Part C of the Individuals with Disabilities Education Act (IDEA, 2004). Implementation efforts within public EI systems have focused on training EI providers to deliver parent-mediated intervention (PMI) to the large percentage of children in EI systems with a high likelihood of being autistic (Pellecchia et al., 2023; Rooks-Ellis et al., 2023; Stahmer et al., 2020). PMIs are an evidence-based approach to EI in which caregivers are taught intervention strategies that support their child's learning across home and community activities (Nevill et al., 2018). As is the case with other psychosocial interventions, there has been a proliferation of manualized PMIs over the past two decades with many PMIs now having structured manuals, specific training and certification processes, and clearer fidelity guidelines for community settings (e.g., D’Agostino et al., 2024).

While the manualization of PMIs makes them well-suited for delivery within EI systems, their relatively narrow scope may also mean that they are prone to adaptation, particularly given the diversity of children and families served within EI systems (Early Childhood Technology Assistance Center, 2022), the requirement that single EI providers address multiple areas of family need (e.g., communication, feeding, sleep behavior; IDEA, 2004), and the status of family-centered care as an integral value of service delivery within EI systems (Pickard et al., 2023). The systematic requirement to address multiple goal areas in a manner that centers family preferences may drive EI providers to integrate several therapeutic priority areas simultaneously into manualized PMIs (Pickard et al., 2021). Thus, there are important questions regarding how to deliver manualized PMIs within a context that heavily prioritizes family-centered care and where flexibility to address the multiple support needs of autistic children and their families is at the core of the system.

In addition to adaptations to align manualized PMIs with family-centered EI delivery, there may be other factors that drive adaptations to manualized PMIs in EI systems. These factors include EI providers’ varied disciplinary backgrounds and prior experience delivering EI services, including manualized PMIs, to autistic children and their families (Aranbarri et al., 2021; Pickard et al., 2021). Further, the actual delivery model used within public EI systems varies significantly across states, such that EI session length, session frequency, and session duration vary depending on a provider’s disciplinary background, the child's deemed service needs, and the age at which a child enters services within EI systems (Edmunds et al., 2023). Thus, EI providers may have more time and flexibility to expand manualized PMIs that were originally designed to fit within a short-term outpatient delivery model.

In response to the need to better understand the relationships between intervention adaptation and impact, the current study sought to characterize the delivery and adaptation of an evidence-based PMI, Project ImPACT (Ingersoll & Dvortcsak, 2019), when delivered in an EI system as part of routine implementation practice. Specific aims were to integrate observational, self-report, and qualitative approaches to: (1) describe provider fidelity to Project ImPACT within the EI context; (2) evaluate how EI provider fidelity relates to observed and self-reported adaptations to Project ImPACT; and (3) integrate expansive qualitative coding of observed Project ImPACT sessions to determine how context influences EI providers’ decisions to deliver Project ImPACT and the impact of these decisions on intervention fidelity.

Method

Procedures

Setting

This project was embedded within an ongoing training contract with Georgia's Department of Public Health (DPH). Georgia's DPH oversees the state's Part C EI system, called Babies Can’t Wait. Babies Can’t Wait serves approximately 19,000 children under the age of three each year, approximately 2,500 of whom screen as having an increased likelihood of being autistic. Each year, Project ImPACT training is offered to EI providers as part of ongoing contractual arrangements. If they opt into training, providers can deliver Project ImPACT within routine EI services.

Study Design

Study procedures were approved by the Emory University Institutional Review Board and the Georgia Department of Public Health. State-level EI leadership supported the recruitment of participants by disseminating flyers through provider listservs used across the EI system. Providers were able to contact the study team to express interest in Project ImPACT training participation. Providers were also eligible for research participation if they: (1) were currently employed or contracted by Georgia's EI system; and (2) maintained an active therapy caseload, defined as seeing at least one child 12–34 months of age with social communication delays as measured by a positive screen on the Parent Observation of Social Interaction (POSI; Smith et al., 2013). Providers were required to see eligible children at least 2 h per month. Given that the study occurred as part of routine training initiatives, EI providers were not randomized. All EI providers who enrolled in training and met eligibility criteria were trained in Project ImPACT and able to opt into research procedures (e.g., additional survey, observational, and qualitative measures) to evaluate routine delivery and adaptation.

Participants

Forty-one EI providers enrolled in routine Project ImPACT training. Of the 36 providers who completed training, 26 providers (75%) met inclusion criteria for the current study. The most common reason for not meeting inclusion criteria was not carrying an active therapy caseload due to being in a service coordinator role. Of those trained and eligible, 21 providers participated in the current study. All providers identified as female and reported M = 8.61 years of experience working within EI systems (SD = 6.83). Providers represented a range of disciplines, including special instruction, speech language pathology, occupational therapy, and social work. See Table 1 for provider demographic information.

Table 1. Provider Demographic Information.

Intervention

Project ImPACT is a manualized PMI that supports social communication skills in young children (Ingersoll & Dvortcsak, 2019). Providers delivering Project ImPACT coach caregivers to use a blend of developmental and naturalistic behavioral intervention techniques across daily routines to support their child's social engagement, communication, imitation, and play skills. Project ImPACT can be delivered once each week for one hour over the course of 12‒24 weeks in both in-person and telehealth delivery models. The program begins with collaborative goal setting with caregivers in social communication skill areas targeted by the program. In subsequent sessions, caregivers receive: (1) didactic instruction in intervention strategies; (2) modeling of the intervention techniques; (3) live coaching while practicing with their child; and (4) a practice plan for implementing the targeted strategies with their child in a daily routine or activity.

Intervention Training and Consultation

EI providers first completed an asynchronous 6-h online tutorial that offers an overview of Project ImPACT, followed by a four-part live webinar series conducted via Zoom. The 14-h webinar series includes didactic instruction while allowing for Project ImPACT role play, video review, and implementation planning. Following training, providers participated in group consultation for one hour per week across approximately 12 weeks. Group consultation embedded a combination of didactic instruction, role play to facilitate behavioral rehearsal, video review of provider sessions, joint problem solving, and planning around subsequent sessions. As part of consultation, participating EI providers submitted videos of each of their Project ImPACT sessions, which were scored for Project ImPACT coaching fidelity, Project ImPACT adaptation, and the presence and length of other, non-Project ImPACT therapeutic content. After each session, EI providers were also sent a REDCap survey to report how they delivered and adapted Project ImPACT.

Measures

Sample Characterization

Provider Demographic Information

Prior to training participation, providers reported their age in years, gender identity, race, ethnicity, and educational attainment. They also provided information on their professional discipline, employment status (i.e., contracted or employed provider), years of experience working within EI systems, years of experience working with autistic children, and their current caseload size.

Fidelity and Adaptation Measures

Project ImPACT Coaching Fidelity

Recorded Project ImPACT sessions were behaviorally coded using the Project ImPACT Coaching Fidelity Checklist (Ingersoll & Dvortcsak, 2019). The checklist includes items about: (1) setting up the coaching environment; (2) using coaching strategies (i.e., reviewing practice, introducing the session topic, demonstrating strategies, supporting caregiver practice of strategies, and creating a practice plan); and (3) using collaborative strategies to partner with caregivers. Each of the 21 items was rated on a three-point Likert scale, where 1 indicated that the provider did not use the strategy and 3 indicated that the provider used the strategy fully. Item ratings for each session were summed and divided by the total possible fidelity points to yield a percent rating (0%–100%). Fidelity was coded by two graduate-level clinicians certified in Project ImPACT. Reliability was calculated on 20% of all recorded sessions. The average intraclass correlation (ICC) for individual fidelity items was 0.77.
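The percent fidelity score described above can be sketched in a few lines. This is a minimal illustration rather than the study's scoring procedure: the function name and the example ratings are hypothetical, and it assumes every item is rated from 1 to 3 and counted. How items that did not apply to a given session were handled, or whether ratings were rescaled before summing, is not specified here.

```python
from typing import Sequence

MAX_ITEM_SCORE = 3  # top of the three-point scale described in the text


def fidelity_percent(item_ratings: Sequence[int]) -> float:
    """Return summed item ratings as a percentage of the total possible points.

    Assumption: all supplied items are rated 1-3 and all of them count
    toward the denominator.
    """
    if not item_ratings:
        raise ValueError("at least one rated item is required")
    if any(r < 1 or r > MAX_ITEM_SCORE for r in item_ratings):
        raise ValueError("ratings must fall between 1 and 3")
    total_possible = MAX_ITEM_SCORE * len(item_ratings)
    return 100.0 * sum(item_ratings) / total_possible


# Hypothetical 21-item session (illustrative values, not study data).
example_ratings = [3] * 10 + [2] * 6 + [1] * 5
example_fidelity = fidelity_percent(example_ratings)
print(f"Coaching fidelity: {example_fidelity:.1f}%")
```

Under this simple assumption, a session in which every item is rated 3 scores 100%.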

Project ImPACT Session Adaptations

Each Project ImPACT session was behaviorally coded for the presence of adaptations that were categorized based on the first domain of the MADI (Kirk et al., 2020), which represents the Framework for Reporting Adaptations and Modifications to Evidence-Based Interventions (FRAME; Wiltsey Stirman et al., 2019). Based on these frameworks and prior research (e.g., Lau et al., 2017), adaptations were grouped into those in which Project ImPACT was augmented (i.e., such that core Project ImPACT functions were supplemented or extended) and those in which Project ImPACT was reduced (i.e., in a manner that might attenuate the impact of core Project ImPACT functions). Examples of augmenting adaptations included repeating Project ImPACT topics, lengthening Project ImPACT sessions, and integrating other therapeutic content or approaches. Examples of reducing adaptations included dropping Project ImPACT coaching activities (e.g., dropping coaching, demonstration, and/or practice planning), skipping Project ImPACT topics, and shortening the session. Each adaptation was rated as present or absent by the same trained individual coding Project ImPACT fidelity. Reliability was calculated on 20% of sessions and indicated 81% agreement.
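As an illustration of the reliability figure reported above, simple percent agreement on present/absent codes can be computed as follows. This is a sketch under the assumption that agreement was tallied code by code across double-coded sessions; the function name and the two coders' ratings are hypothetical.

```python
from typing import Sequence


def percent_agreement(coder_a: Sequence[bool], coder_b: Sequence[bool]) -> float:
    """Percentage of present/absent codes on which two coders agree."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must rate the same set of codes")
    if not coder_a:
        raise ValueError("at least one code is required")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)


# Hypothetical present (True) / absent (False) ratings for ten adaptation codes.
coder_a = [True, True, False, False, True, False, True, False, False, True]
coder_b = [True, False, False, False, True, False, True, True, False, True]
agreement = percent_agreement(coder_a, coder_b)
print(f"Agreement: {agreement:.1f}%")
```

Percent agreement does not correct for chance agreement, which is worth keeping in mind when codes are rarely present.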

In addition to behavioral coding, providers were sent a brief survey via REDCap after each Project ImPACT session in which they self-reported adaptations. The adaptations that providers rated (yes/no) were identical to those behaviorally coded and were also characterized into the same augmenting and reducing adaptations.

Other Emergent and Nonemergent Therapeutic Content

Each Project ImPACT session was coded using an extended version of the Emergent Life Events Coding system (Guan et al., 2017). The research team used this tool to code the presence of any discrete psychosocial stressor (i.e., emergent event) within a Project ImPACT session. Examples of emergent events included a caregiver disclosing a recent car accident, housing loss, and/or medical emergency; or a caregiver becoming visibly upset. In addition to noting emergent content, the coding scheme was expanded to code the presence of other non-emergent therapeutic content that represented a departure from traditional Project ImPACT content focused on social communication. This included the integration of content related to sensory differences, toilet training, feeding, and emotion regulation. For each session, the coding team rated: (1) the presence or absence of emergent content (yes/no); (2) the presence or absence of other non-emergent therapeutic content (yes/no); and (3) the percentage of total session time spent on any other therapeutic content, both emergent and non-emergent (calculated as total time spent covering other content divided by total session length). They also qualitatively described any non-Project ImPACT content that was observed within the session. Training in this coding scheme involved the lead researcher and two students coding other content together for the first 10 sessions and using consensus methods for reliability, prior to breaking apart to score independently. Reliability was scored on the presence/absence of both emergent and non-emergent other therapeutic content across 20% of sessions and indicated strong agreement at 84.6%.
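The third rating above is a simple ratio, sketched here for a single session. The function name and the example minutes are hypothetical and are not drawn from the study data.

```python
def percent_other_content(other_minutes: float, session_minutes: float) -> float:
    """Share of a session spent on non-Project ImPACT content, as a percentage.

    Follows the definition in the text: time spent covering other content
    (emergent plus non-emergent) divided by total session length.
    """
    if session_minutes <= 0:
        raise ValueError("session length must be positive")
    if not 0 <= other_minutes <= session_minutes:
        raise ValueError("other-content time must lie within the session")
    return 100.0 * other_minutes / session_minutes


# Hypothetical session: 6 of 40 recorded minutes spent on other content.
example_percent = percent_other_content(other_minutes=6, session_minutes=40)
print(f"Other therapeutic content: {example_percent:.1f}% of session time")
```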

Qualitative Decision Sampling Questions

Immediately following their Project ImPACT session, providers were asked to respond to the open-ended questions: (1) What were your main goals for this session?; (2) Was there anything unanticipated that happened during your session?; and (3) How did you respond to the situation and why? Questions were modeled on previous research seeking to understand the factors that influence providers’ delivery of autism EI (Pickard et al., 2023).

Analytic Plan

Quantitative Analyses

All analyses were conducted in SAS version 9.4 (Cary, NC), and statistical significance was assessed two-sided at the 0.05 threshold. Descriptions for provider- and session-level data for Project ImPACT were calculated using means and standard deviations for continuous variables and frequencies and percentages for categorical variables. Adaptations to Project ImPACT sessions were self-reported by providers, and a subset of sessions was observed for adaptations by a second rater, as a measure of reliability. Agreement between provider self-reported adaptations and rater-observed adaptations was calculated using conditional exact logistic regression for matched pairs, with 95% confidence intervals (CI).
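To illustrate the matched-pairs logic behind this agreement analysis: when a single yes/no adaptation is recorded twice for each session (once by the provider and once by the observer), a conditional model for matched pairs depends only on the discordant sessions. The conditional odds ratio estimate is the ratio of the two discordant counts, and an exact test reduces to a binomial test on those counts. The sketch below is a plain-Python illustration of that idea, not a reproduction of the SAS analysis; the function names and data are hypothetical, and doubling the smaller tail is one common convention for a two-sided exact p-value.

```python
from math import comb
from typing import Sequence, Tuple


def discordant_counts(
    self_report: Sequence[bool], observed: Sequence[bool]
) -> Tuple[int, int]:
    """Count sessions where only the observer, or only the provider, recorded the adaptation."""
    if len(self_report) != len(observed):
        raise ValueError("each session needs both a self-report and an observation")
    observed_only = sum(1 for s, o in zip(self_report, observed) if o and not s)
    reported_only = sum(1 for s, o in zip(self_report, observed) if s and not o)
    return observed_only, reported_only


def exact_two_sided_p(observed_only: int, reported_only: int) -> float:
    """Exact binomial p-value on the discordant pairs (null: both directions equally likely)."""
    n = observed_only + reported_only
    if n == 0:
        return 1.0  # no discordant pairs, so no evidence of disagreement
    k = min(observed_only, reported_only)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical data for one adaptation across 20 sessions (not study data).
self_report = [False] * 9 + [True] * 2 + [True] * 5 + [False] * 4
observed = [True] * 9 + [False] * 2 + [True] * 5 + [False] * 4

b, c = discordant_counts(self_report, observed)
odds_ratio = b / c  # undefined when no session is provider-reported only
p_value = exact_two_sided_p(b, c)
print(f"OR = {odds_ratio:.2f}, exact p = {p_value:.3f}")
```

In this framing, an odds ratio far from 1 signals that disagreements run mostly in one direction, for example adaptations that are observed but not self-reported.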

Other Project ImPACT session metrics, such as fidelity, percentage of session time devoted to other content, and presence of session adaptations, were modeled against various exposures, such as presence of emergent events, using generalized linear mixed models (GLMMs). For each model, exposure(s) were treated as fixed effects, and random effects included intercepts to account for variability in baseline outcomes between providers. Additionally, GLMMs accounted for within-subject correlations across repeated measurements for children nested within providers. Results from the models are reported as least-squares means (LS-means) for continuous outcomes (e.g., fidelity) and odds ratios for binary outcomes (e.g., presence of session adaptations). Finally, multivariable (MV) regression procedures were employed to concurrently evaluate multiple predictors of Project ImPACT session fidelity. For MV modeling, all predictors were retained, irrespective of statistical significance.

Qualitative Analyses

Open-Ended Survey Data

Provider responses to open-ended questions about their session were de-identified and imported into NVIVO for analysis. Qualitative analysis was conducted by three research team members: one with a strong background in qualitative analysis and two additional members who were trained in qualitative analysis and had behaviorally coded sessions. Given that qualitative data were obtained from open-ended survey responses rather than iterative, semi-structured interviewing, directed content analysis was used to categorize responses in a manner that related to the survey questions (Elo & Kyngäs, 2008; Hsieh & Shannon, 2005). The resulting codebook was applied to each of the 78 total responses, with consensus coding used for reliability.

Expanded Observational Coding

Written notes from the expanded emergent event coding system were compiled and imported into NVIVO. Like the qualitative descriptions of specific sessions, these data included text descriptions of the events and other topics that arose within Project ImPACT sessions. The qualitative codebook for these descriptions had been developed alongside the behavioral coding of sessions, which included developing and iteratively refining categories of psychosocial stressors and other therapeutic content that were the focal point of sessions. This codebook was applied by each of the three team members and reliability was formally calculated using NVIVO software.

Mixed Methods

The current study followed recommendations for mixed-methods research that aims to integrate both quantitative and qualitative approaches and outcomes (Palinkas et al., 2011). Within the current study, all data were collected simultaneously, and the approach sought to integrate self-report, observational, and qualitative data across 100 Project ImPACT sessions. Convergence was used to determine whether the quantitative and qualitative methods (i.e., observation and self-report) provided similar findings via formal comparisons. Expansion was used to examine the extent to which one method provided additional insight into the context that drove EI providers to make modifications to Project ImPACT.

Results

Project ImPACT Delivery

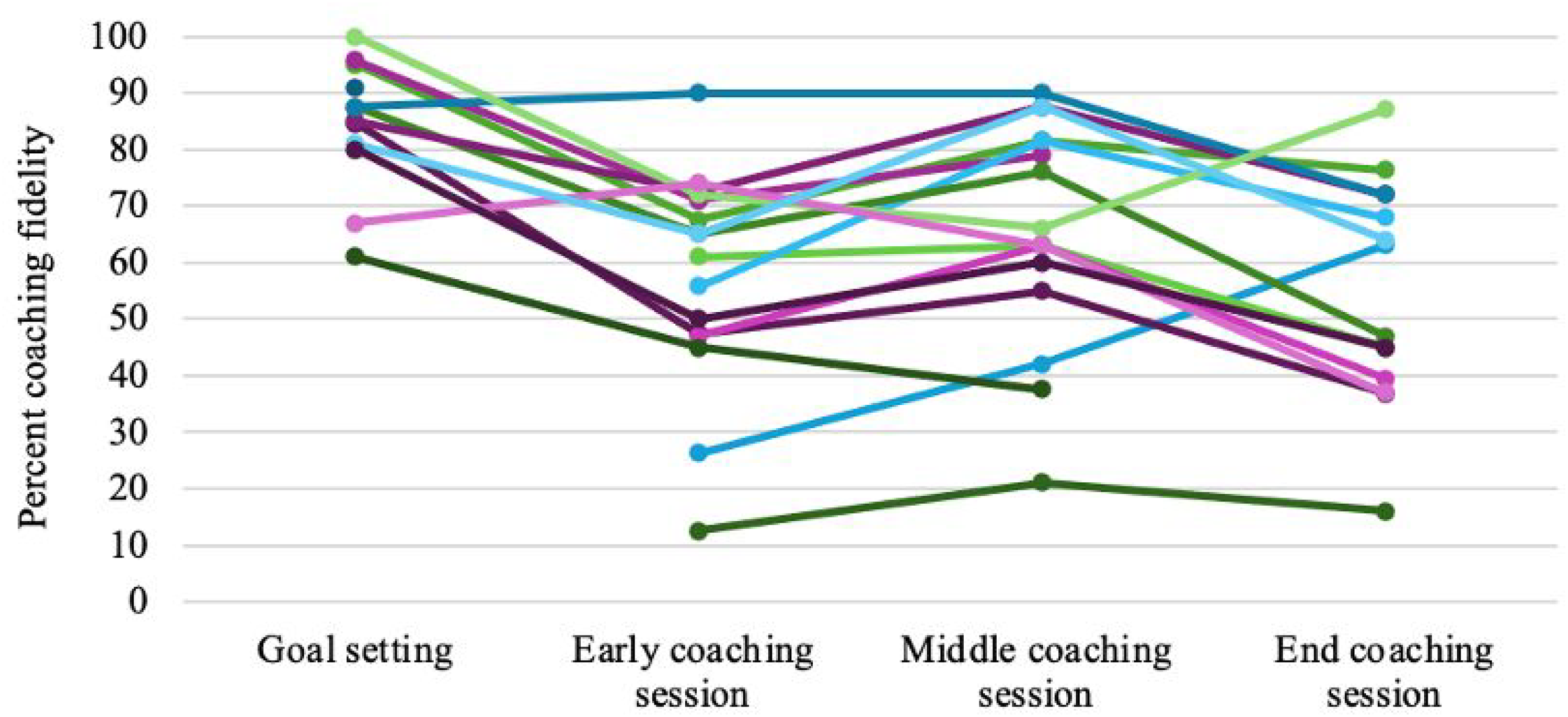

Recordings were available from 100 Project ImPACT sessions. Approximately three-quarters of recorded sessions were delivered over telehealth, with session lengths ranging from 11 to 63 min (M = 35.3 min; SD = 12.00 min) and coaching fidelity ranging from 23.6% to 100% (M = 63.7%; SD = 20.57%). Coaching fidelity was highest in early collaborative goal-setting sessions (M = 84.67%; SD = 13.23%), where formal caregiver coaching is not required, and lowest during the final Project ImPACT coaching sessions focused on helping caregivers teach their child new communication skills (M = 56.15%; SD = 19.16%). See Figure 1 for EI providers’ fidelity while delivering Project ImPACT.

Figure 1. EI Providers’ Coaching Fidelity Across Early, Middle, and Late Project ImPACT Sessions.

As depicted in Table 2, EI providers spent an average of 30.10 recorded minutes covering Project ImPACT content (range 0–63 min; SD = 14.6 min) and 5.2 min covering non-Project ImPACT therapeutic content (range 0–38 min; SD = 8.11 min). This meant spending 17.1% of session time integrating other therapeutic content (range: 0%–100%; SD = 28.71%).
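Note that the 17.1% figure is summarized across sessions (it has its own range and SD), so it need not equal the ratio of the two mean durations (5.2 / 35.3, or about 14.7%). Short sessions with a large share of other content pull the per-session average upward. The sketch below shows the distinction using hypothetical sessions; the function names and values are illustrative assumptions only.

```python
from statistics import mean
from typing import Sequence, Tuple

# Each session is (minutes on other content, total recorded minutes).
Session = Tuple[float, float]


def mean_of_session_percents(sessions: Sequence[Session]) -> float:
    """Average the per-session percentages (each session weighted equally)."""
    return mean(100.0 * other / total for other, total in sessions)


def pooled_percent(sessions: Sequence[Session]) -> float:
    """Total other-content minutes over total minutes (longer sessions weigh more)."""
    total_other = sum(other for other, _ in sessions)
    total_minutes = sum(total for _, total in sessions)
    return 100.0 * total_other / total_minutes


# Hypothetical sessions (not study data).
hypothetical_sessions = [(0.0, 30.0), (10.0, 20.0), (5.0, 50.0)]
per_session_average = mean_of_session_percents(hypothetical_sessions)
pooled = pooled_percent(hypothetical_sessions)
print(f"Mean of per-session percents: {per_session_average:.1f}%")
print(f"Pooled percent: {pooled:.1f}%")
```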

Table 2. Summary of Project ImPACT Recorded Sessions (N = 100).

Self-Reported and Observed Adaptations to Project ImPACT

EI providers self-reported at least one adaptation in 53% of Project ImPACT sessions. Adaptations reported at the highest frequency included repeating Project ImPACT topics (26.2% of reported sessions), covering other therapeutic content not typically included in Project ImPACT (11.5% of sessions), and addressing family stressors (8.2% of sessions). EI providers rarely indicated dropping core coaching activities from their Project ImPACT sessions, apart from dropping practice planning in 11.5% of sessions. Observational coding similarly indicated a high level of adaptation, with at least one adaptation observed in 46% of recorded Project ImPACT sessions. Common observed adaptations included integrating other non-Project ImPACT therapeutic content (31.6% of sessions) as well as not demonstrating a Project ImPACT strategy to a caregiver (18% of recorded sessions) and not collaboratively determining a plan for practicing Project ImPACT strategies (14.8% of sessions).

EI providers’ reports of whether they made specific adaptations to their Project ImPACT session were inconsistently associated with the adaptation being observed in the same session. As depicted in Table 3, for sessions in which provider self-report and video-recorded data were both available, there was a significant likelihood of disagreement in the domains of repeating Project ImPACT sessions, integrating other non-Project ImPACT content, and removing the demonstration of a Project ImPACT technique. Agreement between self-report and observational data occurred in the domains of addressing an emergent event, lengthening the session, shortening the session, removing coaching, and removing the practice plan.

Table 3. Alignment of Self-Reported and Observed Adaptations for a Sub-Sample of 61 Sessions With Both Provider-Report and Observational Data.

Odds of adaptation in session for observed versus provider reported—significant OR indicates discord (i.e., disagreement/unreliable).

Indicates augmenting adaptation.

Indicates reducing adaptation.

Bolded values represent those that are significant at the p < .05 threshold.

The Presence and Impact of Other Therapeutic Content

As noted, each Project ImPACT session was coded for: (1) the presence or absence of emergent content; (2) the presence or absence of non-emergent other therapeutic content; and (3) the percent of session time spent on any non-Project ImPACT therapeutic content, both emergent and non-emergent. Overall, an emergent event was observed in 9.6% of Project ImPACT sessions and was most often related to a child's health (e.g., seizure, hospitalization), housing instability, or other family stressors (e.g., car accident). Other non-emergent therapeutic content was observed much more commonly, in 47.3% of sessions. As depicted in Figure 2, other non-emergent content included EI providers helping caregivers to access other therapeutic services (e.g., occupational therapy, autism evaluations); problem-solving childcare placements; and discussing concerns related to topics not covered explicitly within Project ImPACT, such as sensory regulation, emotion regulation, and toileting. Allied health providers, including occupational therapists and physical therapists, also spent time addressing discipline-specific goals (e.g., fine motor, sensory regulation, and gross motor skills). One provider noted in their session note: “As an Occupational Therapist, I usually integrate sensory treatments into my plan.”

Figure 2. Percent of Sessions That Included Other Non-Emergent Therapeutic Content Areas.

The presence of emergent content was not significantly associated with core Project ImPACT coaching activities being dropped (i.e., reducing adaptations) within the same session (p = .32), nor with Project ImPACT fidelity within the same session (p = .11). Similarly, the presence of other non-emergent therapeutic content was not associated with the number of reducing adaptations (p = .69) or with Project ImPACT fidelity (p = .52) within the same session. However, the percent of session time spent on any other therapeutic content, whether emergent or non-emergent, was associated with significantly greater odds of having one or more reducing adaptations within the same session (OR = 1.24; 95% CI = 1.03, 1.50; p = .03) and was also associated with reduced Project ImPACT coaching fidelity within the same session (p < .001).

Qualitatively, providers described their decisions around Project ImPACT delivery as being largely driven by caregivers’ priorities, with many providers making comments such as, “I don't follow a rigid intervention schedule. I flowed with the family and child's needs.” Other decisions driving providers’ delivery included the length of time a child was being seen within the Part C system. For example, one provider shared: “I adjusted the length of time [of Project ImPACT] based on how long the family would have been in Babies Can’t Wait [Part C]” reflecting the need to expand Project ImPACT due to seeing families for longer than 3 months. Finally, other providers noted that they often integrated discipline-specific goals into their Project ImPACT sessions.

Overall Relationships

As shown in Table 4, a multivariable regression model was used to evaluate the extent to which the total number of observed augmenting adaptations, the total number of observed reducing adaptations, the percent of time spent covering non-Project ImPACT therapeutic content, and the total recorded session length predicted a significant amount of the variance in EI providers’ Project ImPACT coaching fidelity. Within this model, the total number of reducing adaptations and the percent of time spent covering non-Project ImPACT therapeutic content predicted a significant amount of the variance in coaching fidelity within the same session.

Table 4. Multivariable Regression Relationships With Percent Coaching Fidelity in the Same Session.

Percent of time spent covering non-Project ImPACT therapeutic content and total recorded session length were each scaled by 10%.

Bolded values represent those that are significant at the p < .05 threshold.
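For readers who want the model in symbolic form, the multivariable regression described above can be sketched as follows. This is an illustrative reading rather than the authors' exact specification: the symbols are introduced here, the tilde marks the two predictors that the table note describes as scaled by 10% (assumed here to mean coefficients are expressed per 10-unit increment), and any adjustment for sessions nested within providers is not shown because it is not described in this passage.

```latex
\[
\text{Fidelity}_{i} = \beta_0
  + \beta_1\,\text{Aug}_{i}
  + \beta_2\,\text{Red}_{i}
  + \beta_3\,\widetilde{\text{Other}}_{i}
  + \beta_4\,\widetilde{\text{Length}}_{i}
  + \varepsilon_{i}
\]
% Fidelity_i : percent coaching fidelity in session i
% Aug_i      : number of observed augmenting adaptations in session i
% Red_i      : number of observed reducing adaptations in session i
% Other~_i   : percent of session time on non-Project ImPACT content (rescaled; assumption: divided by 10)
% Length~_i  : total recorded session length (rescaled; assumption: divided by 10)
```

Under this reading, the text's finding corresponds to the coefficients on reducing adaptations and on time spent covering other content, $\beta_2$ and $\beta_3$, being significant at the p < .05 threshold.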

Discussion

For decades, researchers have been trying to understand how to help providers deliver EBPs in ways that work for families and community service systems. Although the science of intervention adaptation has rapidly expanded to meet this need (Chambers, 2023; Escoffery et al., 2018; Movsisyan et al., 2019), there has been limited research systematically evaluating how context shapes EBP fidelity and adaptation. The current study sought to address this gap by closely characterizing the routine delivery of Project ImPACT within an EI system. Mixed-methods data demonstrated that providers’ Project ImPACT coaching fidelity was variable and driven by adaptations to integrate other non-Project ImPACT content within the confines of relatively short therapy sessions. How providers reported adapting Project ImPACT was somewhat misaligned with adaptations that were observed within the same session. EI providers adapted Project ImPACT in approximately half of their sessions and spent about 17% of their recorded session time covering other therapeutic content. Spending a greater percentage of session time integrating other content areas was significantly associated with dropping core Project ImPACT coaching activities and having lower Project ImPACT fidelity within that same session.

The results of this study have several implications and related future directions. First, the inconsistent alignment between how EI providers reported adapting Project ImPACT and what was observed within sessions may reflect challenges in self-reporting adaptations in the manner that they are currently conceptualized. Challenges in reporting adaptation appeared particularly salient for adaptations that involved integrating other content and repeating Project ImPACT sessions. The requirement of EI systems to address multiple goal domains may drive EI providers to integrate several therapeutic topics simultaneously. Within this context, integrating other therapeutic content or even dropping some Project ImPACT activities within a single session may be viewed not as an adaptation but rather as the inevitable way in which manualized programs are integrated within the EI context (Youn et al., 2024). Rather than asking providers whether they adapted a session, it may be more useful to ask providers what topics they discussed in their session and the extent to which they used evidence-based coaching strategies (e.g., Lau et al., 2024).

More importantly, the current study highlights the critical role of context in shaping Project ImPACT coaching fidelity and raises questions regarding the conceptualization of fidelity within community settings. On the surface, results suggest that providers’ coaching fidelity was predominantly driven by the extent to which core coaching activities were dropped from their session. Although these findings might suggest the need for longer EI sessions and for providing consultative support to maintain coaching activities, these types of strategies run the risk of being agnostic to the EI service context. In the absence of being able to increase session length due to the structure and constraints of EI systems (Noyes-Grosser et al., 2018), there is a need for guidance on how to deliver manualized programs within the context of briefer or less frequent therapeutic sessions. Rather than attempting to fit entire sessions into 30 min, it will be important that EI providers have explicit decision supports that help them know when and how to effectively split or repeat sessions in a manner that retains the critical balance between Project ImPACT core components and flexible delivery.

It is important to note that while EI providers’ average coaching fidelity was much lower than the 80% threshold put forth within effectiveness research, fidelity outcomes in this study were consistent with other community-based and EI implementation trials (e.g., Pellecchia et al., 2024; Pickard et al., 2024; Stahmer et al., 2020). The fidelity scores within this pilot and others raise questions about normative PMI delivery within community settings. Within contexts where integrating other therapeutic content is the norm, it may not be appropriate to use fidelity tools and benchmarks that have been designed for use within effectiveness trials. This is particularly true given that longer periods of augmentation and integration may inevitably result in dropping core coaching activities in the same session but may not necessarily mean that fidelity is reduced across all sessions.

These findings suggest that reporting fidelity across all EI providers, and even averaging a single provider’s coaching fidelity, may obscure whether core PMI functions, versus a random sample of specific session activities, are delivered. Thus, findings from the current project highlight the need for holistic fidelity tools focused on the extent to which the core functions of manualized programs are delivered rather than the presence and quality of individual session activities. The use of more holistic fidelity tools may allow us to capture the delivery of core intervention functions in relationship to child-, family-, and system-level factors in a way that better reflects best practices in adult learning theory, which speak to the need to adjust the pacing of teaching to any number of contextual factors (Taylor & Hamdy, 2013). It is important to note that the creation of a tool like this is contingent on a much deeper understanding of what intervention functions are necessary to affect caregiver and child outcomes, and how best to practically implement a tool within the context of EI systems.

In addition to holistic fidelity tools, more research is needed on training models that provide guidance to EI providers on PMI delivery that balances integration with core intervention functions. For example, rather than specifying the timeline and content of individual sessions, training may need to more explicitly teach the circumstances to consider when deciding the timing and pacing of delivering core intervention functions in relationship to other content areas. For some providers, this may mean delivering programs in a traditional 12-session outpatient model, but for others it may mean delivering manualized programs over much shorter or longer periods of time to accommodate other goal domains and caregiver preferences. Specific considerations that providers might weigh in choosing the delivery format of manualized PMIs are the timing and intensity of their discipline-specific goals (e.g., fine motor skills), the family's preferences, the extent to which other service providers are available to address distinct goal areas, and the amount of time the provider will be working with the family. These types of models may help to better align manualized programs within EI systems, perhaps resulting in better dissemination, uptake, and use of the programs (D’Agostino et al., 2024).

Emphasizing core functions and decision-making processes in training in this manner may be a departure from both current training models and current fidelity measures, many of which are focused on adherence and specific intervention activities that can be measured at the session level. Although this approach may be more pragmatic and easier to measure when sessions are randomly sampled and intended to be delivered in a highly structured format, it is likely misaligned with community settings and may hinder our ability to train in a delivery model that is more responsive to the session duration, system, family goals, and family learning style. In order to train in core functions and adaptation in the manner that is spelled out in models such as the MADI, it may be critical to build in brief provider-report tools within electronic medical records so that core functions are tracked live and across all sessions rather than only randomly sampled sessions (Kirk et al., 2020).

There are several limitations in this study. Although the study represented fine-grained data from many Project ImPACT sessions, video was not available from every session. It is also possible that sessions were not recorded and shared due to more significant departures from Project ImPACT and that recorded session lengths were much shorter than the true length of Project ImPACT coaching sessions due to not recording check-ins and problem-solving at the start of the session. Thus, it is possible that the amount of adaptation reported in this project is a significant underrepresentation of the total amount that actually occurs across EI sessions. Critically, this study did not link providers’ coaching fidelity to caregiver and child outcomes, including caregivers’ learning and use of Project ImPACT strategies. This is an important next step and area of future research. Finally, this study was not designed to evaluate factors that influenced EI providers’ fidelity to Project ImPACT, such as their experience, discipline, or educational background. It is possible that inconsistent fidelity is driven by other factors outside of attempts to align interventions with the Part C system.

There is mounting interest in increasing access to autism PMIs. Although the EI system has been thought of as an ideal translational setting, provider fidelity in these settings has been variable and not well described. The current study highlights the critical role of context in shaping EI providers’ Project ImPACT coaching fidelity and the need for EBPs and associated training models that are responsive to this context.

Footnotes

Acknowledgments

We would like to acknowledge the significant support of administrators, providers, and families within Georgia's Part C Early Intervention system, Babies Can’t Wait, who provided input into the ideas presented in this project, and who participated in ongoing training initiatives and supported data collection. We would like to especially acknowledge the effort and support of Synita Griswell, MPH, the autism services coordinator within Babies Can’t Wait.

Ethical Approval and Informed Consent

Study procedures were approved by the Emory University IRB and included informed consent for all participants.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the National Institute of Mental Health (NIMH) 1 K23 MH130651-01.

Declarations of Conflicts of Interest

Katherine Pickard is a certified trainer in Project ImPACT and has received compensation for Project ImPACT training and consultation. Any compensation received is donated to the Marcus Autism Center Early Intervention program.