Abstract

Background

Shifting organizational priorities can negatively affect the sustainment of innovations in community settings. In schools, such shifts can present barriers to conducting clinical research when school district priorities no longer align with the aims of a study. This misalignment often arises between the time a study is submitted for funding and the time research activities begin. Participatory research approaches can be used to restore alignment between study processes and school district priorities. The purpose of this study is to describe data from a shared process with district partners that resulted in modifications to the main study's implementation processes and strategies, restoring alignment with evolving school priorities while remaining faithful to the aims of the study.

Method

Data originated from qualitative interviews with 20 district- and school-based personnel in a large urban school district. Qualitative themes were organized into categories based on a social-ecological school implementation framework. Data from team meetings, meetings with school district administrators, and emails served to supplement and verify findings from interview analyses.

Results

Themes included barriers and facilitators at the macro, school, individual, team, and implementation quality levels. Adaptations were made to address barriers, leverage facilitators, and restore alignment with school district priorities. Most adaptations to study processes and implementation strategies focused on re-training school district coaches and school-based staff and providing them with more information. New procedures were created, and resources were re-allocated for the larger study.

Conclusions

Findings are discussed in relation to the literature on implementation in schools. Recommendations for sustaining strong collaboration between researchers and school partners are provided.

Plain Language Summary Title

Preparation for Implementation of Evidence-Based Practices in Urban Schools: A Shared Process with Implementing Partners

School-based implementation research requires a strong partnership between schools and researchers to ensure that the study's processes and implementation strategies align with evolving school district priorities and processes. This article describes a stakeholder engagement process conducted during the preparation phase of implementation. The process re-aligned the study's processes and implementation strategies with the goals and priorities of the school district.

Introduction

Shifting organizational priorities have been found to negatively affect the sustainment of innovations in community settings (Shelton et al., 2018) and can present barriers to conducting clinical research if those priorities become misaligned with a study's processes and implementation strategies. In school-based research, goals can diverge between the time a project is conceived and the start of research activities. Such divergence could diminish the school district's support for the study, weaken implementer buy-in, and make it difficult to recruit school staff and students. Misalignment could ultimately set the stage for poor implementation and sustainment.

Iterative preparation work with district partners at the start of a study can address misalignment between new district priorities and study aims (Beidas et al., 2017; Glasgow et al., 2020). The purpose of this study is to describe findings from a shared process with district partners, conducted to modify the main study's processes and implementation strategies in order to restore alignment with new school priorities while remaining faithful to the aims of the study. The present study contributes to the literature on methods for making study corrections and tailoring implementation strategies (Fernandez et al., 2023; Glasgow et al., 2020; Lewis et al., 2018; Powell et al., 2017).

A Public Health Approach for the Delivery of School Mental Health Interventions

An ever-increasing number of U.S. schools provide mental health services to students at risk for mental health problems using multitiered systems of support (MTSS) frameworks such as Positive Behavioral Interventions and Supports (PBIS; Sugai & Horner, 2009). PBIS is a comprehensive, schoolwide, multitiered service delivery framework based on the public health prevention model (National Research Council and Institute of Medicine, 2009) that emphasizes the “teaching of social behaviors that increase social and academic success at school” (Sugai & Horner, 2009, p. 310) and can be used to support the provision of a continuum of mental health interventions to students. Tier 1 supports focus on preventing behavioral problems, reducing problem severity, and building skills and competencies at the universal level (Sugai & Horner, 2009). The school district where the study took place uses PBIS to implement universal interventions. The framework can also incorporate Tier 2 selective supports (e.g., group therapy) for children at risk for problem behavior and Tier 3 indicated supports (e.g., individual and family therapy) for students with severe behavior problems. Implementation of Tier 2 interventions requires assembling a leadership team to evaluate schoolwide discipline and referrals, oversee interventions, manage resources, and ensure that properly trained professionals (e.g., coaches, counselors) are available for implementation (Hawken et al., 2009).

Community–Academic Partnership

In school-based research, community–academic partnerships can facilitate successful implementation of evidence-based practices (EBPs) by leveraging the contributions of researchers and key school staff (Moullin et al., 2020). This can be accomplished through participatory research approaches (Pellecchia et al., 2018).

In a typical partnership, researchers share information about the EBP, and community partners share information about its fit, feasibility, and appropriateness for the targeted setting (Pellecchia et al., 2018). However, community partners’ levels of influence and involvement may vary depending on the initiative and the maturity of the partnership. With less involvement, their contributions are limited to guiding researchers on the implementation context; with more involvement, they share decision-making authority and collaboratively manage the implementation effort (Pellecchia et al., 2023). At the lower level of involvement, community partner contributions center on coordination and cooperation with researchers; at the higher level, they center on collaboration with researchers (Goodman & Thompson, 2017).

In this study, community partners shared decision-making authority with researchers and collaboratively managed the implementation effort. Consistent with participatory research approaches, we (members of the partnership) strove to maintain mutual trust and respect, acknowledgment of each other's expertise, and shared decision making (Drahota et al., 2016).

District administrators requested revisions to the research project that would be consistent with new district priorities. They participated in modifying study processes and implementation strategies and in disseminating the changes to those involved. We used an Action Research approach (AR; Stringer & Aragon, 2021) to address misalignment between some aspects of the study and the new district priorities. AR is a set of processes that can be applied to the conduct of research in community settings, consisting of self-reflective cycles of planning, observing, critical reflection, and re-planning (Koshy et al., 2011).

Evaluation of School-Based Implementation Barriers and Facilitators

The social-ecological framework (Domitrovich et al., 2008) is a conceptual framework for understanding the influence of contextual factors on implementation quality in schools. The framework was designed to guide implementers after a district or a school has decided to adopt an EBP, before the EBP is implemented or formally integrated into the system (Domitrovich et al., 2008). Implementation quality in this framework is influenced by interdependent factors. At the macro level, community factors influence the quality of implementation within schools. At the school level, the school as an organization influences implementation of the EBP. At the individual level, professional and psychological characteristics of the implementers and their perceptions and attitudes toward the interventions impact quality of implementation. At the team level, characteristics of school staff affect the quality of services. At the middle layers, implementation is influenced by the core elements of the EBP, level of standardization, how the EBP is delivered, and how implementers are supported (Domitrovich et al., 2008).

We used the social-ecological framework to examine barriers and facilitators to implementation because quality implementation is key to ensuring implementation fidelity and sustainment (Fernandez et al., 2023; Stirman et al., 2012). The research team had also used this framework in a prior collaboration with the district, conducting formative work (Eiraldi et al., 2018, 2019) to ensure that the EBPs selected would be appropriate for the context and population (e.g., ensuring that the randomized controlled trials of the EBPs had been conducted in under-resourced schools with students from minoritized backgrounds). The evidence from those studies suggests that the EBPs should be effective when implemented by school personnel under circumstances similar to those in the present study.

Evolving School District's Priorities

Between the time the research team secured the school district's support for the research proposal and the time the study was funded (approximately 15 months), the district implemented changes to student mental health support. These changes can be conceptualized at different levels of the social-ecological framework (Domitrovich et al., 2008). For example, district administrators decided to accelerate the implementation of an MTSS program (i.e., PBIS) for behavioral and emotional difficulties across all district schools (Macro Level). To this end, the district grouped schools according to their level of need and the resources available for using the tiered system of behavioral health supports (Support System). Of key importance for the larger research project was the reorganization of the support provided to schools for implementation. In addition, because implementation had often ceased after previous research projects in the district ended, district administrators wanted district personnel to be present at all training offered by the research team and at all meetings with school-based personnel, to support sustained implementation after study completion.

Purpose of the Main Clinical Trial

The main aim of the larger study was to compare implementation and student outcomes of Tier 2 interventions across two sustainment approaches for school staff implementing EBPs in 12 K-8 schools (Eiraldi et al., 2022). Schools were randomly assigned to condition at the conclusion of the implementation phase. Consultants from the research team (two White, female, master's-level practitioners with over 20 years of experience serving students in the district) provided all training and consultation support during the implementation phase. The original plan was to recruit 12 school district coaches (six in each condition) to support schools during the sustainment phase and to use three EBPs at Tier 2: (a) a group intervention for externalizing behavior problems (Coping Power Program [CPP]; Lochman et al., 2008), (b) a group intervention for anxiety problems (CBT Anxiety Treatment in Schools [CATS]; Khanna et al., 2016), and (c) an individual intervention for externalizing behavior problems (Check-in/Check-out [CICO]; Crone et al., 2010).

Purpose of the Present Study

The purpose of this study was to inform and develop adaptations to study processes and implementation strategies during the preparation phase of the study, in order to align study goals and procedures (Glasgow et al., 2020) with school district priorities that had evolved in the 15 months since the research was proposed. We expected that engaging in a shared process (Jolles et al., 2022) to better understand contextual factors would yield adaptations that left the main aims of the larger study intact while increasing study buy-in and enhancing implementation fidelity. To conduct the study, school partners and researchers needed to ensure that participating schools had Tier 1 supports in place and sufficient resources to implement mental health supports at Tier 2. They also needed to identify barriers and facilitators to the implementation of EBPs and make appropriate adaptations to fit the specific context of participating schools.

Informed by the implementation (Domitrovich et al., 2008; Fernandez et al., 2023; Glasgow et al., 2020) and PBIS literature (Hawken et al., 2009), the research questions were: (A) what are the barriers and facilitators to implementing and sustaining Tier 2 EBPs in the school district? and (B) what adaptations to the EBPs and implementation strategies are needed to increase fit at Tier 2 in participating schools?

Method

Procedures

Procedures for the study were approved by the relevant institutional review board (IRB). Qualitative data were collected from school partners as part of a larger cluster randomized trial of two sustainment conditions (Eiraldi et al., 2022) in a large urban school district in the Northeast United States. In the participating schools, 90% of students were Black/African American, 5% Hispanic/Latino, 4% Multi-Racial/Other, and 1% White. All students were eligible for free or reduced-price meals. The district identified the schools selected for the study as needing additional school climate support. The larger study had three phases spanning three years: Implementation (Year 1), Sustainment 1 (Year 2), and Sustainment 2 (Year 3). Schools entered the study in three waves of four schools each. The present study was conducted during the first year (Implementation phase) with the first wave of schools. During the Implementation phase, research team members conducted all training of EBP implementers, and schools were not randomized to sustainment condition until the end of that phase. Therefore, adjustments could be made to the research protocol without affecting the study aims or hypotheses.

Participants

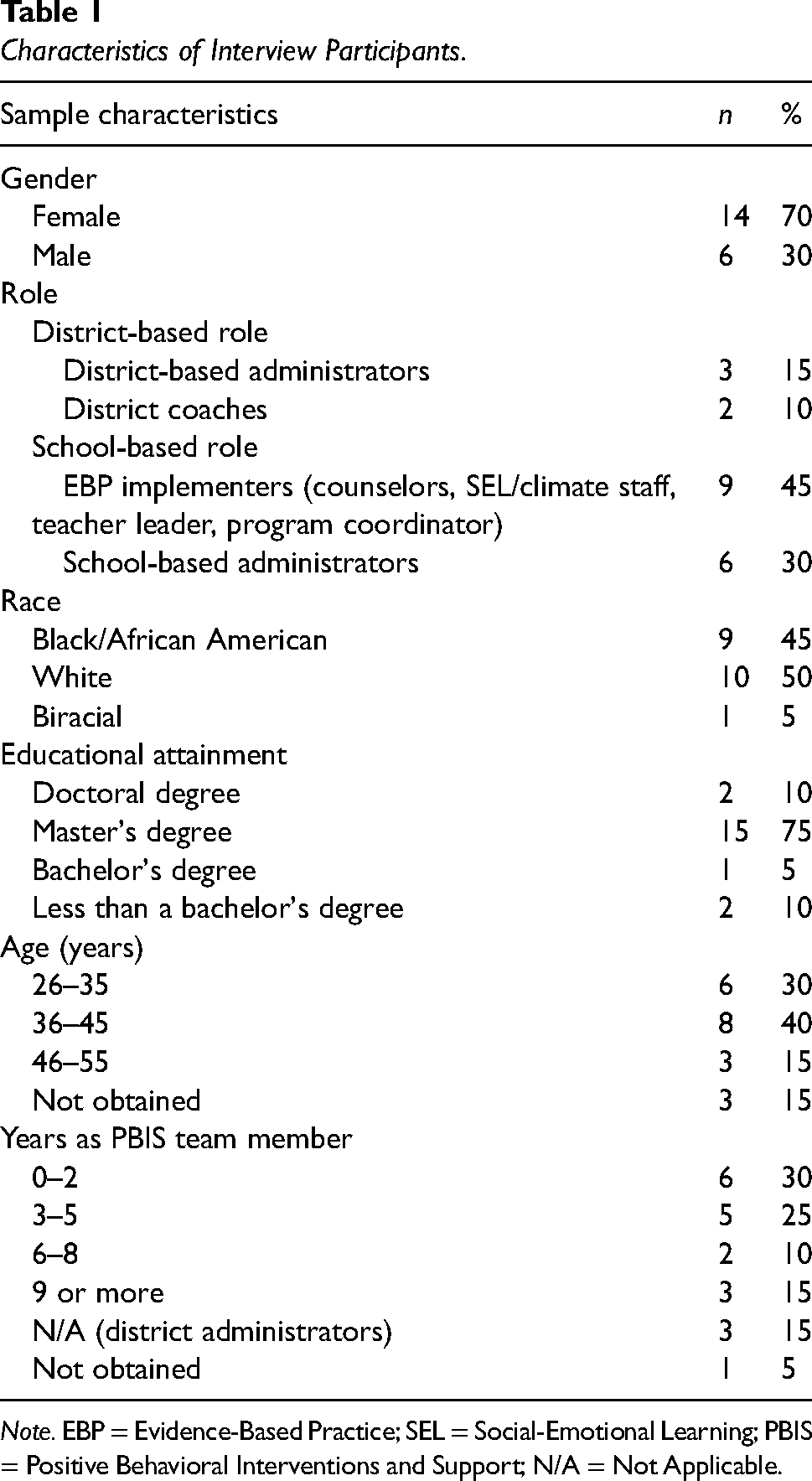

Participants included school district office-based administrators, district coaches (i.e., staff with behavioral health-related backgrounds hired by the district to support the development and implementation of MTSS and Tier 2 and 3 processes), school-based administrators (i.e., principals and assistant principals), and school-based staff implementing EBPs. Interviews were conducted with 20 participants (see Table 1).

Characteristics of Interview Participants.

Note. EBP = Evidence-Based Practice; SEL = Social-Emotional Learning; PBIS = Positive Behavioral Interventions and Supports; N/A = Not Applicable.

Interviews were conducted by phone or via a Health Insurance Portability and Accountability Act (HIPAA)-compliant video platform at the beginning and end of Year 1. During interviews, participants and the interviewer were at home or at work. Although many participants were interviewed in private, some may have participated near other people. School-based participants were purposively sampled based on their role in the school and the intervention, their experience, and their educational attainment. Sampling was guided by “information power” (Malterud et al., 2016). The research team assessed information power by judging the quality and specificity of the data collected relative to the research objectives (Malterud et al., 2016), namely, rich and nuanced descriptions of school context and experiences to inform appropriate adaptations to study processes and implementation strategies. All those invited to participate in interviews accepted, except one coach and one school-based staff member, both of whom were on leave at the time of the interviews.

Members of the research team (i.e., university professors and research staff) and district administrators (i.e., a Deputy Chief of Prevention, Intervention and Trauma, a Director of Prevention and Intervention, and an Assistant Director of MTSS Tiers 2 and 3) used monthly remote meetings, email communication, biweekly school observations and meetings with school administrators or implementers, and qualitative interviews to gather information, self-reflect, and plan and execute changes to the study. Implementing partners guided the research team on the effects of context on implementation and fully collaborated with researchers on the selection of schools, coaches, and EBPs and on training.

Data Collection

We conducted semi-structured interviews at the beginning (pre-implementation) and end (post-implementation) of Year 1. A senior, White, female, PhD-level qualitative researcher on the team drafted interview guides, informed by the Consolidated Framework for Implementation Research (Breimaier et al., 2015), to understand perceptions of the inner (i.e., school) and outer contexts for implementation, existing resources and facilitators that could support the implementation process, and perceptions of the school's role in behavioral and mental health support. These questions also served as “grand tour” questions (Spradley, 1979). The guides also described the EBPs that would be (in pre-implementation interviews) or had been (in post-implementation interviews) implemented, with probes exploring perceptions of feasibility, acceptability, and appropriateness. Interview guides were iteratively refined in collaboration with two members of the team (a senior, White, Latino, PhD-level researcher and a White, female, master's-level researcher), and questions were added to elicit perspectives on the feasibility and acceptability of the sustainment strategies proposed in the study. The Supplementary Materials include an overview of the interview guides.

A White, female research associate with a Master of Science in Education conducted all of the interviews, with training and supervision from PhD-level clinical psychologists on the research team. The interviewer was not involved in delivering the interventions; however, she was part of the team providing the service, which could have influenced the interview data. Because she interviewed virtually, participants may have felt a greater sense of confidentiality, or the format may have made it harder to establish trust and rapport. During interviews, she took notes to guide the sequencing and wording of interview questions, ensure that questions were clearly answered, and guide unplanned follow-up questions for clarity, exploration, or elaboration (DeJonckheere & Vaughn, 2019). Interviews were audio-recorded, transcribed by a transcription company, and de-identified. The transcripts were quality-checked against the audio recordings and used for coding and analysis. Transcripts were not returned to participants for comment or correction. Interviews lasted, on average, 20.25 min (SD = 0.004).

We interviewed 15 participants at pre-implementation and 14 participants at post-implementation (see Table 2). At the school level, 75% of respondents were interviewed at both time points. Two Tier 2 team members interviewed at pre-implementation were principals; following recommendations for an “appropriate sample” (Morse et al., 2002), they were replaced by assistant principals in the post-implementation interviews because the assistant principals were more familiar with the implementation process.

Participant Interviews by Time Point.

Note. CICO = Check-in/Check-out.

Data Analysis

As part of the observation and critical reflection steps of the AR process, we conducted a hybrid inductive–deductive thematic analysis of the data (Fereday & Muir-Cochrane, 2006; Hamilton & Finley, 2019), informed by the social-ecological framework of Domitrovich et al. (2008). One author used NVivo qualitative data analysis software (QSR International Pty Ltd., 2022) to code interview transcripts both inductively and deductively. Some codes reflected the research questions and were developed a priori; other codes, reflecting barriers and facilitators identified by participants, emerged during coding. The codes and coded data served as the observation step of the AR process and were reviewed with the research team for discussion and feedback. Interview data were analyzed using Braun and Clarke's (2006) six phases of thematic analysis, and themes were organized into categories based on the conceptual framework. To supplement and verify findings, we compared interview data with meeting notes and emails, which we also organized around the conceptual framework. Findings from the pre-implementation interviews were compared with post-implementation interview data. This analysis catalyzed the critical reflection step of the AR process (Koshy et al., 2011). The research team reviewed the data together to ensure that findings were well supported. Members of the district staff also reviewed this article; their feedback centered on precise language and careful framing of CICO.

We made adaptations to the study (the re-planning step of the AR process) at two time points: after implementation began, and after analysis of the second round of interviews and review of study-related documents. To develop adaptations, findings were discussed in regular meetings with district administrators, in which partners had collaborative conversations about barriers and how they could be addressed within the constraints of the context. Psychologists with expertise in delivering the interventions with fidelity took part in this process to ensure that adaptations would be unlikely to compromise fidelity.

Results

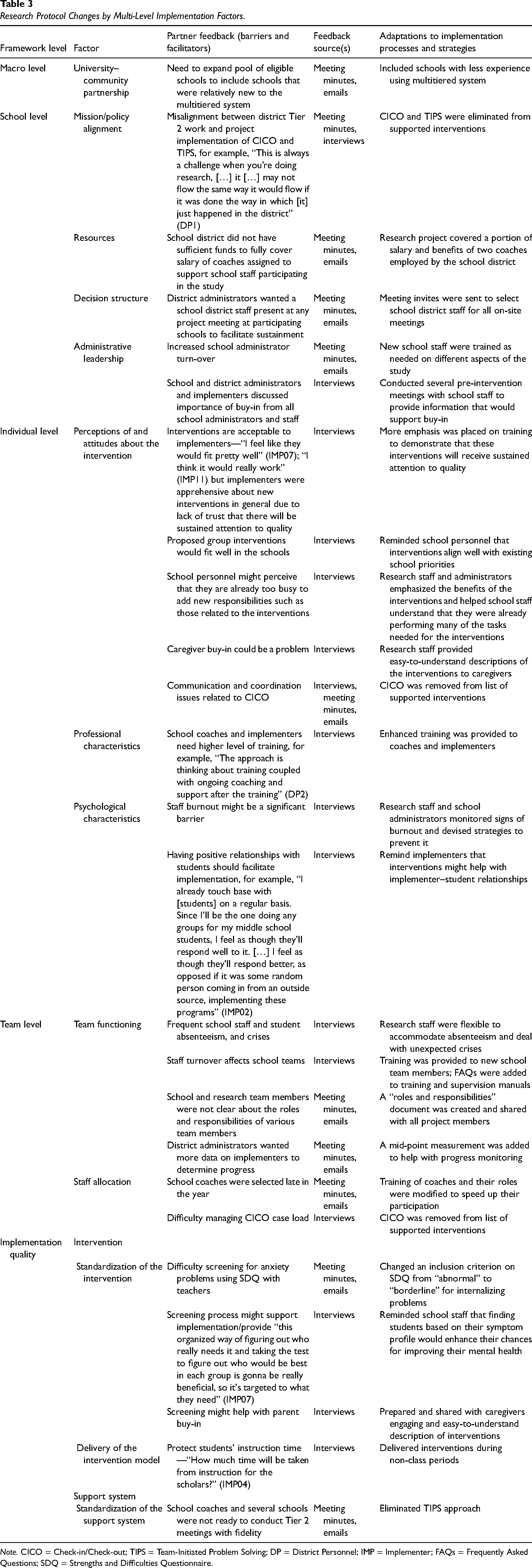

This section presents barriers and facilitators at each level of the multilevel implementation framework. Within each level, we discuss the barriers and facilitators related to each factor (Research Question A) and list the changes made to the study and its procedures in response (Research Question B). Table 3 summarizes these barriers and facilitators and lists the sources of each finding.

Research Protocol Changes by Multi-Level Implementation Factors.

Note. CICO = Check-in/Check-out; TIPS = Team-Initiated Problem Solving; DP = District Personnel; IMP = Implementer; FAQs = Frequently Asked Questions; SDQ = Strengths and Difficulties Questionnaire.

Macro Level

University–Community Partnership

In Year 1, school recruitment focused on schools with experience implementing MTSS at Tier 1 with fidelity. Moving into Year 2, district administrators asked to expand the pool of eligible schools to include those with less experience using MTSS effectively.

School Level

Mission/Study Alignment

In a pre-implementation interview, one district administrator noted that the district had made progress improving Tier 2 interventions before the official launch of the project, and that this progress might conflict with the implementation of CICO (Crone et al., 2010) as part of the study. The administrator explained that the district's real-world processes had moved forward while the research was still launching. The misalignment became evident in the significant barriers to CICO implementation reported in post-implementation interviews. In response, the team removed CICO (Crone et al., 2010) and the related Tier 2 team meeting, which used the Team-Initiated Problem Solving (TIPS; Newton et al., 2012) approach, from the project-supported interventions.

Resources

The school district did not have sufficient funds to fully cover the salaries of coaches assigned to support school staff participating in the study. Project meeting minutes showed several months of hiring struggles and discussions about coaching updates during the first school year of implementation. To mitigate this barrier, the research project covered a portion of the salary and benefits of two district coaches, costs that the district anticipated absorbing after the research project ended.

Decision Structure

According to meeting minutes, district administrators wanted an administrator present at all project meetings at participating schools to facilitate sustainment. Thus, electronic calendar invitations were sent to select district administrators for all on-site meetings.

Administrative Leadership

School administrator turnover was greater than originally anticipated. Half of the school principals left their position by the end of the first year. As a result, new school administrators were trained as needed on different aspects of the study.

Participants also noted that schoolwide buy-in would be important to facilitate implementation. In a pre-implementation interview, a district administrator noted the importance of school administrator buy-in and commitment to supporting implementation through actions such as “giving their counselor release time to have those groups at those times and frequency” (DP2). A few respondents stated that communicating about the program with all staff might support buy-in, so that staff schoolwide understand “the programs and everything that it entails” (Tier Two Team [TTT]13). As a result, the research team conducted several pre-intervention meetings with school staff.

Individual Level

Intervention Perceptions and Attitudes

Almost all pre-implementation interview participants expressed generally positive views of the appropriateness or acceptability of the interventions for their context. District administrators named specific ways in which the program aligned with existing priorities, including addressing mental health, improving the MTSS process, and strengthening Tier 2. Importantly, two participants voiced the need to monitor the quality of implementation over time. In response to these concerns, more emphasis was placed on training to support implementation fidelity.

Many participants also raised minor concerns about scheduling and time in pre- and post-implementation interviews. Barriers included conflicting schedules, balancing other responsibilities, and the need to cover classes because of insufficient staff. Respondents suggested strategies to mitigate the time challenge, such as implementing before or after school (TTT05) or having school administrators intentionally hold time “sacred” for implementers (TTT13). Strategies such as these were discussed in the pre-intervention meetings with staff intended to secure schoolwide buy-in. Research staff and school administrators also emphasized the benefits of the interventions and helped school staff understand that they were already performing many of the tasks the interventions required.

A few participants felt that lack of caregiver buy-in and engagement could be barriers for the project. One participant observed that communication about the project increased caregiver buy-in, noting, “when we talk about it, they like it” (IMP15). To address concerns about caregiver buy-in, research staff made sure that caregivers received clear, comprehensible information about the interventions.

In post-implementation interviews, most participants with roles related to CICO reported implementation challenges related to communication and coordination. For example, two implementers were unsure whether their school actually had a CICO coordinator. One school administrator mentioned an issue with communication with parents and teachers regarding CICO. We did not make changes in direct response to these issues because, as noted above, CICO had been removed from the list of project-supported interventions owing to alignment issues.

Professional Characteristics

School district administrators shared that district coaches and EBP implementers needed consistent training. In response, the project team enhanced the training support provided to district coaches and implementers. Coaches and school staff were given access to virtual training that could be reviewed at any time. In addition, trainings were revised at the end of the first year of implementation to clarify content that had proven confusing.

Psychological Characteristics

When considering other programs that had recently launched in their schools, respondents reported the following as the biggest potential barriers to implementation: staffing issues and burnout, too many programs starting without attention to implementation, and lack of cultural relevance. Importantly, however, two participants said that existing positive relationships with students would support successful implementation. One implementer (IMP02) explained, “I feel as though they’ll respond better, as opposed to if it was some random person coming in from an outside source implementing these programs.” Similarly, another EBP implementer (IMP11) was concerned about group dynamics (e.g., students bullying) if the intervention were delivered by an external adult but seemed less concerned if someone from the school was facilitating. As a result of these findings, researchers and school administrators emphasized the importance of strong relationships, monitored signs of burnout, and devised strategies to prevent it.

Team Level

Team Functioning

In pre-implementation interviews, a few participants stated that team flexibility in training and implementation was necessary, especially given child absences, staff absences, and crises that arise and must be prioritized. As a result, research staff proactively accommodated absences and planned for unexpected crises. For example, research staff offered multiple trainings at times that matched school staff's availability and provided reminders about times of year that typically have lower attendance so that intervention schedules could be planned accordingly. Research staff also urged intervention teams to begin interventions as early as possible so that unexpected schedule changes would not impede implementation.

According to meeting minutes, school participants and members of the research team were not clear about the roles and responsibilities of various team members. In response, the project team developed a clarifying document and shared it with district personnel.

In post-implementation interviews, a few participants noted that implementer turnover could be an implementation challenge, especially for schools that will not receive support from the research team the following year. Training was provided to new school team members and frequently asked questions were added to training and supervision manuals to mitigate barriers related to turnover.

District administrators expressed a desire to receive more data to monitor intervention success and progress. A few interview participants likewise wanted information about implementation quality. In response, the study was adapted to include a mid-point measure to support progress monitoring.

Staff Allocation

Concerns related to staff allocation surfaced in Year 1. According to meeting minutes, school district coaches were identified later in the year than originally planned. In addition, in pre-implementation interviews, two participants raised concerns about staffing consistency and sufficiency. As a result, the training and roles of district coaches were modified to speed up their participation.

Implementation Quality

Standardization of Implementation

According to team meeting minutes and emails, the research team had difficulty screening for student anxiety problems using the teacher-completed Strengths and Difficulties Questionnaire (SDQ; Goodman et al., 2000). The team struggled to identify children eligible for the CATS intervention under the initial inclusion criteria: several potential participants had a history of anxiety-related problems but did not meet the threshold (abnormal range) for inclusion. As a result, the research team secured IRB permission to lower the SDQ inclusion criterion for internalizing problems from the “abnormal” to the “borderline” range.

Although one pre-implementation interview participant reported that screenings seemed like a barrier to implementation, two other participants noted that screenings may support successful implementation. One stated that screenings allow implementation to be appropriately targeted. The research team then emphasized during training that identifying students based on their symptom profiles would increase the likelihood of improving student mental health.

Delivery of the Intervention

In pre-implementation interviews, many participants raised minor concerns about scheduling. While some concerns were general, two participants were concerned about time taken away from instruction. In response, the research team encouraged EBP implementers to deliver interventions during non-instructional periods, such as lunch.

Discussion

The purpose of the study was to collaborate with implementing partners during the preparation phase of the study to better understand barriers and facilitators to implementing Tier 2 EBPs in the school context, and to adapt study processes and implementation strategies to re-align study goals and procedures with district priorities. We hoped that this examination of the context would support adaptations to the larger clinical trial that would both address the barriers and leverage the facilitators (Fernandez et al., 2023). The study is similar to other work employing participatory approaches in changing contexts, including the integration of research collaborations to inform implementation processes (Jolles et al., 2022), processes for identifying barriers and facilitators (Fernandez et al., 2023), and the use of guided discussions to document and tailor implementation and to inform adaptations during EBP implementation (Finley et al., 2018; Glasgow et al., 2020; Lewis et al., 2018; Powell et al., 2017).

The main adaptation to the study at the macro level (Domitrovich et al., 2008) was expanding the inclusion criteria to include schools with less experience implementing the multitiered system effectively, which required dedicating more resources to training. This adaptation enhanced the external validity of the study (Green & Nasser, 2018) because the new school sample better reflected the characteristics of all district schools.

At the school level, the shift to include schools with less MTSS experience made it difficult to implement individualized EBPs, which required a more complex implementation approach than the group-based interventions. This is consistent with research indicating that EBPs that are easy to implement are more likely to be successfully implemented and sustained than more complex EBPs (Greenhalgh et al., 2004). The district was open to building internal capacity for implementation but had limited financial resources for doing so. Financial barriers at the school and district levels have repeatedly been found to impede implementation and sustainment of EBPs (Cammack et al., 2014). District administrators wanted all school leaders to be included in key meetings and communications with district coaches and implementers in order to increase buy-in, to create the conditions for school leaders to continue supporting implementation after the study ended, and to counteract the negative effects of school administrator turnover. The literature supports this request: EBP implementation and sustainment can be facilitated by buy-in and support from school leadership and peers (Langley et al., 2010), and administrator and implementer turnover can be a significant problem for sustained implementation (Mellin & Weist, 2011).

Adaptations to study processes and implementation strategies included discontinuing project support for CICO (Crone et al., 2010) and for the TIPS approach (Newton et al., 2012) during Tier 2 meetings, partially covering salary and benefits for two district coaches, including school administrators in key meetings and communications, conducting extra meetings with school staff, and familiarizing new school administrators with the goals and procedures of the study. The elimination of CICO and TIPS was largely related to a misalignment between the narrower scope of the research project and the wider context of district-wide implementation and scale-up of interventions, although other issues, such as staff allocation and communication and coordination, also played a role.

At the individual level, interviewees emphasized the importance of implementing with quality, the fit of interventions, and caregiver buy-in, as well as the need for comprehensive training and the risk that staff burnout could affect implementation. Interviewees’ perspectives are supported by findings on the need for a high level of training for implementers (Herschell et al., 2010), on addressing implementer perceptions about implementation difficulty and burnout (Lyon et al., 2011), and on devising strategies for increasing buy-in (Haine-Schlagel & Walsh, 2015).

Adaptations at the individual level included increasing the level of training to enhance fidelity, addressing differences in perceptions about implementation, monitoring and addressing signs of implementer burnout, and providing caregivers with succinct, understandable information about the interventions.

At the team level, respondents stated that school teams needed to be prepared to deal with frequent student absenteeism, staff turnover, and staff difficulty managing crises and the caseload of students in Tier 2. Roles and responsibilities of team members were not clear, and interviewees noted that schools needed access to timely data to measure progress. In addition, the district encountered various obstacles to designating district staff to serve as coaches supporting EBP implementers at the school level, which delayed coach training. Here again, the feedback is consistent with the literature. For example, interdisciplinary teams have become the norm rather than the exception in schools (Markle et al., 2014), but team functioning is affected by staff turnover and lack of time for regular meetings (Eiraldi et al., 2015; Markle et al., 2014). Team functioning is also affected by administrative support, consistent resources (Markle et al., 2014), staffing, staff stability and buy-in (Shelton et al., 2018), team use of data (McIntosh et al., 2013), clarity of roles and responsibilities (Markle et al., 2014), and effective mechanisms for frequent and open communication (Nigg et al., 2012).

Adaptations at the team level included emphasizing flexibility in training and implementation to manage absenteeism and turnover, providing timely training to new implementers, clarifying roles and responsibilities, and adding a new measurement point to track progress.

At the implementation quality level, school staff had difficulty using the SDQ to screen for anxiety symptoms, finding time to deliver mental health supports, and running Tier 2 teams. Some interviewees also reported that the screening process could be used to support implementation.

The teacher-report SDQ cut-off score for identifying children at risk for anxiety disorders (Goodman, 2001) was not sufficiently sensitive in the study sample. A minor adjustment, lowering the inclusion threshold for internalizing problems from the abnormal to the borderline range, solved this problem. Several participating schools had difficulty running Tier 2 team meetings using the problem-solving TIPS approach because, as it became apparent, they had neither acquired the basic foundations of the approach (Newton et al., 2009) nor had the personnel to run meetings effectively and coordinate interventions with Tier 2 implementers. TIPS was therefore eliminated from the study.

Recommendations for Sustaining Strong Collaboration with Schools

Implementation research in schools requires close collaboration between researchers and implementing partners, especially to keep goals aligned, because goal misalignment can arise between the time a project is conceived and the start of research activities. To a large extent, the success of the research rests on the strength of the relationship between researchers and implementing partners at the school and district levels. Researchers are advised to establish a community–academic partnership (Drahota et al., 2016) at project conception and to use participatory approaches (Pellecchia et al., 2023) and appropriate implementation frameworks to guide the assessment of barriers and facilitators in the school context. The AR process used in the present study is one example of a participatory approach. We hope that the key barriers and facilitators we identified in the course of this work, and the ways we collaboratively addressed them (summarized in Table 3), will be a resource for others embarking on similar efforts. The evolution of the community–academic partnership can be steered and sustained with the help of partnership frameworks such as the partnership synergy framework (Cramm et al., 2013; Lasker et al., 2001). Partnerships are most likely to be effective when they are based on mutual trust, respect, and acknowledgment of each other's expertise.

Limitations

This work was conducted in a sample of four schools within a single school district, so the findings may not generalize to other locales; it also took place during the ongoing COVID-19 pandemic, which was likely associated with unique barriers. In addition, we could not reliably compare whether issues raised in pre-implementation interviews were still relevant post-implementation, because we did not systematically ask about the presence of each barrier. Participants’ desire for support as part of the research study may have shaped some interview responses about barriers and facilitators. Practical constraints, including time and the need to maintain the key intervention components that support evidence-based outcomes, limited the adaptations that could be made.

Although the sample was small, it had a large amount of “information power” (Malterud et al., 2016) relative to the aims of the study and was therefore sufficient. Our purposive sampling captured variation in experience, educational attainment, and role in implementation. We sampled more than 35% of school-based staff implementers, one administrator from each participating school, and three of the four coaches (the fourth was on leave).

Conclusion

The shared process sought to create a common vision for the implementation and sustainment effort envisioned in the larger study and to increase the likelihood of sustainment (Fernandez et al., 2023). Our use of a participatory research approach (Drahota et al., 2016) in a large urban school district offers insight into how study goals can be re-aligned with evolving organizational priorities within a district. In this study, most of the adaptations to implementation processes and strategies that resulted from this process focused on re-training, providing coaches and implementers with more information on procedures and interventions, and re-allocating resources for the study. We hope this work adds practical information to the literature on methodologies for adapting implementation strategies (Fernandez et al., 2023; Lewis et al., 2018; Powell et al., 2017) and provides some guidance for other school mental health researchers engaging in partnered research.

Supplemental Material

Supplemental material, sj-docx-1-irp-10.1177_26334895241279503 for Preparation for implementation of evidence-based practices in urban schools: A shared process with implementing partners by Ricardo Eiraldi, Rachel Comly, Courtney Benjamin Wolk, Quinn Rabenau-McDonnell, Barry L. McCurdy, Muniya S. Khanna, Abbas F. Jawad, Jayme Banks, Stacina Clark, Kristina M. Popkin, Tara Wilson and Kathryn Henson in Implementation Research and Practice

Footnotes

Acknowledgements

We acknowledge the effort and commitment of busy school and school district staff, which has made this study possible.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this publication was supported by the National Institute of Mental Health of the National Institutes of Health under Award Number R01MH122465 to Ricardo Eiraldi (principal investigator). The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

References
