Abstract

Background

External implementation support (EIS) can aid implementation and scale-up efforts, but less has been reported about the experience of those receiving EIS, such as the feasibility and usability of participating in the support process.

Method

From November 2016 to April 2022, data were collected from support participants across 13 regions in North Carolina and South Carolina implementing the Triple P system of interventions and from the regional support team members who provided EIS to these partners. The experience of participating in EIS was assessed using measures of acceptability, appropriateness, accessibility, quality of delivery, feasibility, likelihood and actual use of support materials received, degree of collaboration, and frequency of contact. Mann–Whitney U tests or Kruskal–Wallis tests were conducted to explore differences in these measures across a variety of regional characteristics and contexts.

Results

Support participants generally found EIS to be accessible, acceptable, appropriate, feasible, and delivered with high quality across different states and regions and over the course of the support relationship. Support was generally provided 1–2 times per month, and collaboration between regional support teams and regional Triple P partners was rated highly. Significant differences in support participants’ experiences were generally limited to ratings of support accessibility, engagement with data collection processes, and number of monthly contacts.

Conclusions

This pattern of findings suggests that EIS as provided by regional support teams is feasible for support participants across a diversity of contexts. Additional research on EIS would help refine the field and illuminate promising practices and mechanisms of change to accelerate successful and sustainable implementation.

Plain Language Summary Title

External Implementation Support is Feasible for Participants

Research shows that external implementation support (EIS) can help successfully implement and scale up interventions. However, less is known about the experience of those receiving EIS, which may provide clues about the range of effectiveness reported from EIS studies. This report examines information from participants across 13 regions in North Carolina and South Carolina and their EIS support team about the feasibility of engaging in the support relationship. Ratings across several measures suggest that EIS is experienced favorably and is feasible for participants across different contexts. Further research into the feasibility and usability of other support models would help refine the field and illuminate insights to accelerate successful and sustainable implementation.

Keywords

Introduction

External implementation support (EIS) and related program-specific activities (e.g., technical assistance, facilitation, knowledge brokering [Albers et al., 2020; Aldridge, Roppolo, Brown, et al., 2023]) are key strategies used by implementation support practitioners (ISPs; Albers et al., 2020) to support and facilitate capacity development among organizations and systems. EIS is often designed to support the development of general capacity alongside program-specific capacity and can therefore be deployed in support of a wide range of programs or innovations. In contrast to internal change agents, such as leaders and implementation teams within an organization, external ISPs operate from outside the organizational environment being changed and are thus able to play a distinct role and bring a unique perspective to implementation and scale-up efforts (Leeman et al., 2017; Meyers et al., 2012; Waltz et al., 2015). Although research has demonstrated the benefits of EIS for achieving programmatic outcomes (National Academies of Sciences, Engineering, and Medicine, 2019), less has been reported about the experiences of receiving EIS, such as the feasibility and usability of participating in the support process. This gap in our understanding may limit the adoption of EIS, the willingness of participants to engage in EIS, and the optimization of EIS for specific needs and contexts.

EIS dynamically integrates a multitude of change mechanisms and practitioner competencies to support improvement at individual, team, organizational, and system levels, often over longer-term engagements (Aldridge, Roppolo, Brown, et al., 2023; Metz et al., 2021). It can be challenging for ISPs to appropriately tailor these practice elements across support interactions, often provided under a range of project and funding conditions. The pattern of change mechanisms, practice activities, and competencies from which ISPs draw may be influenced by features of local contexts, such as specific implementation goals, needs, and preferences; existing motivation and capabilities for change; and leaders’ and partners’ histories, buy-in, and support for change processes. ISPs’ navigation of these complex environments may lead to a variety of experiences for those participating in the support process.

Aldridge, Roppolo, Brown, et al. (2023) proposed 10 core practice components (CPCs; see Table 1) and a conceptual model for how these CPCs, when used in alignment with practice principles and theory, are hypothesized to influence a set of practice outcomes:

the working alliance with local leaders and implementation teams; implementation best practice knowledge, skills, and abilities among local leaders and implementation teams; local system capacity and performance for implementation and scale-up; and the abilities of local leaders and teams to self-regulate effective implementation processes over time and without dependence on EIS.

Core Practice Components (CPCs) and Practice Activities

Note. From Trajectory of External Implementation Support Activities across Two States: A Descriptive Study by Aldridge, Roppolo, Chaplo, et al. (2023), Implementation Research and Practice. CCA = Community Capacity Assessment; IDA = Implementation Drivers Assessment; IOCA = Intermediary Organization Capacity Assessment; PDSA = Plan-Do-Study-Act.

Activities considered to be key to effective application of CPC.

Over more than 5 years of use, the implementation capacity for Triple P (ICTP) practice model was iteratively improved, with the practice activities for each CPC refined based on data and ISPs’ practice experience. Long-term descriptive data on ICTP regional support teams’ application of the practice model within the ICTP projects have recently been published (Aldridge, Roppolo, Chaplo, et al., 2023). The authors emphasized the need to further explore the feasibility of engaging in tailored, long-term EIS from the participant perspective.

Since 2016, the ICTP projects (https://ictp.fpg.unc.edu) of The Impact Center at FPG have adopted this model and used it to provide EIS to regional leaders and implementation team members (support participants) in North Carolina and South Carolina implementing an evidence-based, multilevel, public health approach to parenting and family support (Triple P—Positive Parenting Program; Prinz, 2019). Support participants in North Carolina Triple P are organized into 10 regions for Triple P scale-up. Regions were onboarded to the project as cohorts based on their readiness to engage in the support process (see Aldridge, Roppolo, Chaplo, et al., 2023 for more on the readiness assessment process). Implementation support began in November 2016 for a single region and expanded to all 10 regions by October 2019. All regions received support tailored to their unique contextual needs, capacities, strengths, and barriers. In July 2020, regions were organized into support tiers, receiving either intensive, broadly focused support across multiple areas of implementation performance; brief, narrowly focused support in a single or limited area of implementation performance; or universal support through dissemination of materials, resources, or strategies made widely available to all stakeholders. EIS was provided by regional support teams, typically consisting of two ISPs trained and supported by senior members of the ICTP project team (for more information on professional development and training, see Aldridge, Roppolo, Chaplo, et al., 2023). In South Carolina, ICTP regional support teams partnered with coaches from a South Carolina intermediary organization (Strompolis et al., 2020) to provide EIS for Triple P scale-up, beginning with one region in October 2018 and expanding to three regions by May 2019.
Region selection followed a request-for-interest and readiness assessment process similar to that used in North Carolina, including exploration of willingness and ability to participate in support and the organizational fit of Triple P. In July 2020, two regions transitioned from receiving EIS from ICTP to receiving it from the South Carolina intermediary organization, and the third transitioned in June 2021. Data collection for these regions ceased at the transitions, contributing to durations of support that ranged across regions from 20 to 66 months.

Bowen et al. (2009), in discussing situations where a feasibility study may be indicated, describe two conditions that apply to the ICTP practice model. First, a feasibility study may be indicated when “community partnerships need to be established, increased, or sustained” (p. 453). A core feature of ICTP implementation support has been establishing close partnerships and a working alliance with community-based support participants, and Aldridge, Roppolo, Brown, et al. (2023) identify this task as a foundational element of EIS. Second, a feasibility study may be indicated when “there are few previously published studies or existing data using a specific intervention technique” (p. 453). The ICTP projects offer the first proactive, systematic use of the practice model proposed by Aldridge, Roppolo, Brown, et al. (2023). Moreover, as discussed above, little has been published concerning the perspective of the support participant in EIS. Finally, examining the feasibility of engaging with EIS may be important before justifying a summative evaluation of the associations between EIS CPCs and practice outcomes.

Capitalizing on the extensive data collected through the ICTP projects, this report explores the feasibility and usability of EIS as operationalized within the ICTP projects. It examines the extent to which support participants find that EIS is (1) accessible, (2) delivered with high quality, (3) appropriate for their needs and goals, (4) acceptable in their current context, and (5) a source of feasible strategies. Additionally, the report explores whether support participants were (6) likely to use or (7) actually used resources provided through support in their day-to-day implementation activities. The (8) degree of collaboration and (9) frequency of contact between support participants and ICTP regional support team members were also explored. Administrative data on these characteristics of support were collected from ICTP regional support team members and their support participants from November 2016 to April 2022 and were reviewed and evaluated post hoc.

Method

Participants

Two sets of participants provided data for the present report: (1) support participants in North Carolina and South Carolina and (2) regional support team members providing EIS. Participation in data collection is voluntary for support participants and expected of ICTP regional support team members as part of their usual job responsibilities. Because of staffing changes, data from either group may not come from the same individual(s) over time, but they report on the same team or organization.

Support Participants

Support participants include both leaders and implementation team members working to scale up Triple P implementation. Leaders have decision-making authority and responsibility for demonstrating commitment to scale-up, the co-creation process, selecting and aligning interventions to respond to community needs, and reviewing and recommending solutions for shared implementation barriers and system needs. Implementation team members are frequently Triple P coordinators: staff with responsibility for day-to-day implementation across the region, including practitioner support, data collection, community engagement and outreach, and implementation support for community Triple P provider organizations (North Carolina Triple P Partnership for Strategy and Governance, 2020). The number of support participants providing data varied over time and across sites; between one and three support participants in each region participated in voluntary data collection activities. When more than one support participant provided data, responses were averaged and reported at the team level.

Regional Support Team Members

Regional implementation support team members are ISPs with backgrounds in psychology, social work, public health, prevention science, and/or implementation science. Regional support teams generally consist of two ISPs. All regional support team members are required to participate in data collection activities as part of their regular duties and responsibilities and responses are averaged and reported at the team level. In addition to foundational and ongoing professional development on the use of the practice model (see Appendix C of Aldridge, Roppolo, Brown, et al., 2023), ISPs engage in monthly peer-to-peer practice coaching to enhance the use of support practices that demonstrate commitment to practice model values, principles, and core components and to build ISP confidence and competence to apply effective support practices across diverse contexts.

Procedures

ICTP Support Tracking

To capture details of EIS with support participants, a member of the regional support team completed an online contact log following every support event. Data included the date, format, and estimated dosage of the support. For additional information about the ICTP tracking process, please see Aldridge, Roppolo, Chaplo, et al. (2023). Only one member of the regional support team is expected to complete the support tracker.

North Carolina Monthly Online Surveys

Regular feedback on the feasibility and usability of ICTP implementation support was collected using a Qualtrics survey distributed monthly to support participants. After completing an informed consent process for these data to be used for quality improvement and future research purposes, support participants were asked to rate implementation support on implementation outcomes (Proctor et al., 2011) including quality, feasibility, appropriateness, likelihood of using strategies and materials from EIS, accessibility, and acceptability. Beginning in July 2020, the data collection process was changed from monthly to requests for feedback sent only after significant support events, such as intensive coaching sessions, action planning activities, or use of adult learning strategies.

North Carolina Quarterly Online Surveys

To gather information on the collaborative nature of the support relationship, sustainability of Triple P, the actual use of strategies and materials from EIS, and contextual changes in the region, quarterly surveys were administered to both regional support team members and support participants, who provided informed consent for these data to be used for future research purposes.

South Carolina Monthly and Quarterly Online Surveys

Beginning in 2019, the South Carolina intermediary organization assumed distribution of monthly and quarterly surveys to support participants in South Carolina. They opted to decrease the number of questions to a single item for each implementation outcome to reduce administrative burden and to remove questions about the actual use of implementation strategies provided through ICTP support and the working alliance from the quarterly survey. Survey adaptations did not result in a statistically significant difference between the multiple-item and single-item scores (see Measures below for Wilcoxon signed rank test results).

Measures

Measures of Implementation Support Feasibility

Nine variables were assessed to explore the feasibility of participating in EIS. See Table 2 for example items, survey adaptations, and details of measure performance.

Variables Assessing Implementation Support

Note. Items in bold were adopted in 2020.

EIS Acceptability, Appropriateness, and Feasibility. Three 4-item measures, the Acceptability of Intervention Measure (AIM), Intervention Appropriateness Measure (IAM), and Feasibility of Intervention Measure (FIM; Weiner et al., 2017), were used in monthly online surveys of support participants until July 2020, when each was reduced to a single item in response to feedback regarding the administrative burden of surveys. The retained items were chosen based on the highest variability in past responses and the judgment of the authors and an external evaluation partner, and were edited for clarity. A Wilcoxon signed rank test revealed no statistically significant difference between the multiple-item and single-item scores, suggesting the adapted item performs similarly to the original multi-item measure.
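The multiple-item versus single-item comparison rests on a Wilcoxon signed rank test of paired scores. The Python sketch below computes only the test's rank sums. It assumes, since the report does not say, that each pair is one region's averaged multi-item score before July 2020 and its single-item score afterward; the scores and the function names `average_ranks` and `wilcoxon_signed_rank` are hypothetical. A p-value would normally come from a statistics package, for example SciPy's `scipy.stats.wilcoxon`.

```python
# Hypothetical sketch: Wilcoxon signed rank statistics for paired scores.
# Pairing and data are ASSUMPTIONS for illustration, not the study's data.


def average_ranks(values):
    """Rank values 1..n in ascending order; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def wilcoxon_signed_rank(before, after):
    """Return (W_plus, W_minus, n_nonzero) for paired scores.

    Pairs with a zero difference are dropped before ranking, and the
    absolute differences are ranked with ties averaged.
    """
    diffs = [a - b for b, a in zip(before, after) if a != b]
    ranks = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for d, r in zip(diffs, ranks) if d > 0)
    w_minus = sum(r for d, r in zip(diffs, ranks) if d < 0)
    return w_plus, w_minus, len(diffs)


# Hypothetical per-region scores: averaged 4-item score vs. later single item.
multi_item = [4.75, 4.50, 5.00, 4.25, 4.50]
single_item = [5.0, 4.5, 5.0, 4.0, 5.0]
print(wilcoxon_signed_rank(multi_item, single_item))  # -> (4.5, 1.5, 3)
```

Zero differences, which are common when ratings sit at the top of a 5-point scale, drop out of the test and shrink the effective sample size.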

Quality of EIS. Five items measuring the quality of implementation support delivery were drawn from Dane and Schneider (1998), who conceptualized quality as an aspect of fidelity comprising five components: skill in using a technique or method, effectiveness, enthusiasm, preparedness, and practitioner attitude. As with the variables above, the measure of quality was reduced to a single item in July 2020. A Wilcoxon signed rank test again revealed no statistically significant difference between multiple-item and single-item ratings of quality, suggesting the adapted item performs similarly to the original multi-item measure.

Likelihood of Using Strategies and Materials from EIS. To understand the perceived utility of the strategies and materials provided by ICTP support, a single item was rated by support participants following support events or monthly and scored on a 5-point Likert scale. In July 2020, as part of the overall effort to reduce the administrative burden of surveys, this item was dropped.

Accessibility of EIS. Whether it was easy for support participants to get the implementation support they needed was assessed using a single item, “It was easy to get the support we needed,” asked of regional Triple P partners following support events or monthly and scored on a 5-point Likert scale. In July 2020, to reduce the administrative burden, this item was moved from monthly to quarterly surveys. A Wilcoxon signed rank test revealed no statistically significant difference between accessibility ratings before and after this change in data collection, suggesting that the item performs similarly when administered quarterly.

Actual Use of Strategies and Materials from EIS. The extent to which strategies and materials provided by ICTP support are used by regional Triple P partners was rated by a single item, “How often have you used the strategies or resources provided by the Implementation Support Team?,” scored on a 5-point Likert scale by regional Triple P partners quarterly.

Collaboration and Contact between Regional Triple P Partners and Regional Support Teams. Regional support team members completed two additional measures of the working relationship quarterly: Chilenski and colleagues’ (2016) 7-item Collaboration with External Providers of Implementation Support measure and a 2-item measure of the frequency of contact between regional support team members and regional Triple P partners. The number of contacts was scored as the average number of interactions per month between regional support teams and regional Triple P partners.

Measures of Support Participant Characteristics

Eight characteristics were examined to explore the feasibility of participating in EIS among different regions. See Table 3 for a summary of regional Triple P support participants’ characteristics.

Regional Triple P Support Participant Characteristics

Note. South Carolina Triple P regions were not onboarded to ICTP implementation support in cohorts.

State. North Carolina (n = 10) and South Carolina (n = 3) support participants were compared to examine whether ratings differed by state. Data from support participants within each of the 13 regions were averaged if more than one support participant provided data.

Onboarding Cohort to ICTP Support. The onboarding cohort was also examined as a between-groups factor for North Carolina (n = 10) support participants. Regions were onboarded to the project based on their readiness to engage in the support process, with regions in earlier cohorts determined to be more ready.

Dose of ICTP Support. Regions were organized into four groups according to the average monthly dose of support provided to regional Triple P partners (high dose: 5 or more hours; medium dose: 3–4 h; low dose: 2–3 h; and very low dose: less than 2 h) to explore the impact of dose of ICTP support. The dose was calculated as the total number of hours reported in the online contact logs for each region divided by the number of months of the support relationship. Cut points for binning were chosen to ensure at least three cases per group.

Participation in Data Collection. Regions were organized into three groups according to the average number of surveys completed by regional Triple P partners per year (high participation: more than 9 surveys per year; medium participation: between 5 and 9 surveys per year; low participation: less than 5 surveys per year) to explore the impact of engagement with support. The maximum number of surveys per year is 12: 8 monthly surveys and 4 quarterly surveys. Cut points for binning were chosen to ensure at least three cases per group.
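The dose and participation groupings amount to averaging and then binning. The Python sketch below is hypothetical: the function names and example values are invented. Because the stated dose ranges share 3 h (3–4 h and 2–3 h) and leave 4 to 5 h unassigned, it assumes half-open bins in which 3 h and everything from 4 h up to 5 h count as medium. The participation bins follow the stated thresholds directly.

```python
# Hypothetical sketch of the dose and participation groupings.
from collections import Counter


def monthly_dose(total_hours, months):
    """Average hours of support per month: logged hours / months of support."""
    return total_hours / months


def dose_group(hours_per_month):
    # ASSUMPTION: half-open bins. The text's ranges (3-4 h, 2-3 h) share 3 h
    # and leave 4-5 h unassigned; here 3 <= x < 5 is treated as "medium".
    if hours_per_month >= 5:
        return "high"
    if hours_per_month >= 3:
        return "medium"
    if hours_per_month >= 2:
        return "low"
    return "very low"


def participation_group(surveys_completed, years):
    """Bin average surveys completed per year (at most 12 per year)."""
    per_year = surveys_completed / years
    if per_year > 9:
        return "high"
    if per_year >= 5:  # 5 to 9 surveys per year, inclusive
        return "medium"
    return "low"


def smallest_group(labels):
    """Size of the smallest group; the cut points targeted at least three."""
    return min(Counter(labels).values())


print(dose_group(monthly_dose(150, 50)))  # 3.0 h/month -> medium
print(participation_group(18, 4))         # 4.5 surveys/year -> low
```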

EIS Tiers of Support. Regional data from July 2020 to April 2022 were coded as intensive support or brief support (no regional team was assigned to the universal tier during this period) to explore the ICTP tiered model of support. If a region changed tiers, its data were coded according to the tier in effect at the time.

Historical or Point-in-Time. Data were combined into ten 6-month blocks (July–December 2017, January–June 2018, July–December 2018, etc.) to examine whether there was a point-in-time effect on ratings, such as refinement of the practice model over time or changes to EIS during the COVID-19 pandemic.

Trajectory of Support. Data from regions were binned into 6-month increments (e.g., data from months 1–6, 7–12, 13–18, etc. of support within each region were pooled) to explore changes in variable ratings across the trajectory of the support relationship, such as newer versus more mature relationships. The 6-month increment was chosen to reflect the pattern of CPC use noted by Aldridge, Roppolo, Chaplo, et al. (2023).
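Both time-based groupings reduce to integer arithmetic on months. The Python sketch below is a hypothetical illustration with invented function names. The report does not say how data collected before July 2017 were handled, so `calendar_block` simply rejects dates outside the ten blocks.

```python
# Hypothetical sketch of the two time-based groupings.


def calendar_block(year, month):
    """0-based index of the 6-month calendar block.

    Block 0 = July-December 2017, block 1 = January-June 2018, ...,
    block 9 = January-June 2022 (ten blocks in total).
    """
    half = 0 if month <= 6 else 1  # 0 = January-June, 1 = July-December
    index = (year - 2017) * 2 + half - 1
    if not 0 <= index <= 9:
        raise ValueError("date falls outside the ten 6-month blocks")
    return index


def support_month(start_year, start_month, year, month):
    """1-based month of a region's support relationship (start month = 1)."""
    return (year - start_year) * 12 + (month - start_month) + 1


def trajectory_increment(month_of_support):
    """1-based 6-month increment: months 1-6 -> 1, 7-12 -> 2, 13-18 -> 3, ..."""
    if month_of_support < 1:
        raise ValueError("months of support are counted from 1")
    return (month_of_support - 1) // 6 + 1


# A region supported from November 2016 reaches month 66 in April 2022.
m = support_month(2016, 11, 2022, 4)
print(m, trajectory_increment(m), calendar_block(2022, 4))  # -> 66 11 9
```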

Regional Staffing Turnover. Administrative records were reviewed, and regional support team members indicated months where staffing turnover or vacancies were a hindrance to EIS. Data reported during turnover or ongoing team vacancies were compared with data from months or quarters with no reported staffing challenges.

Analyses

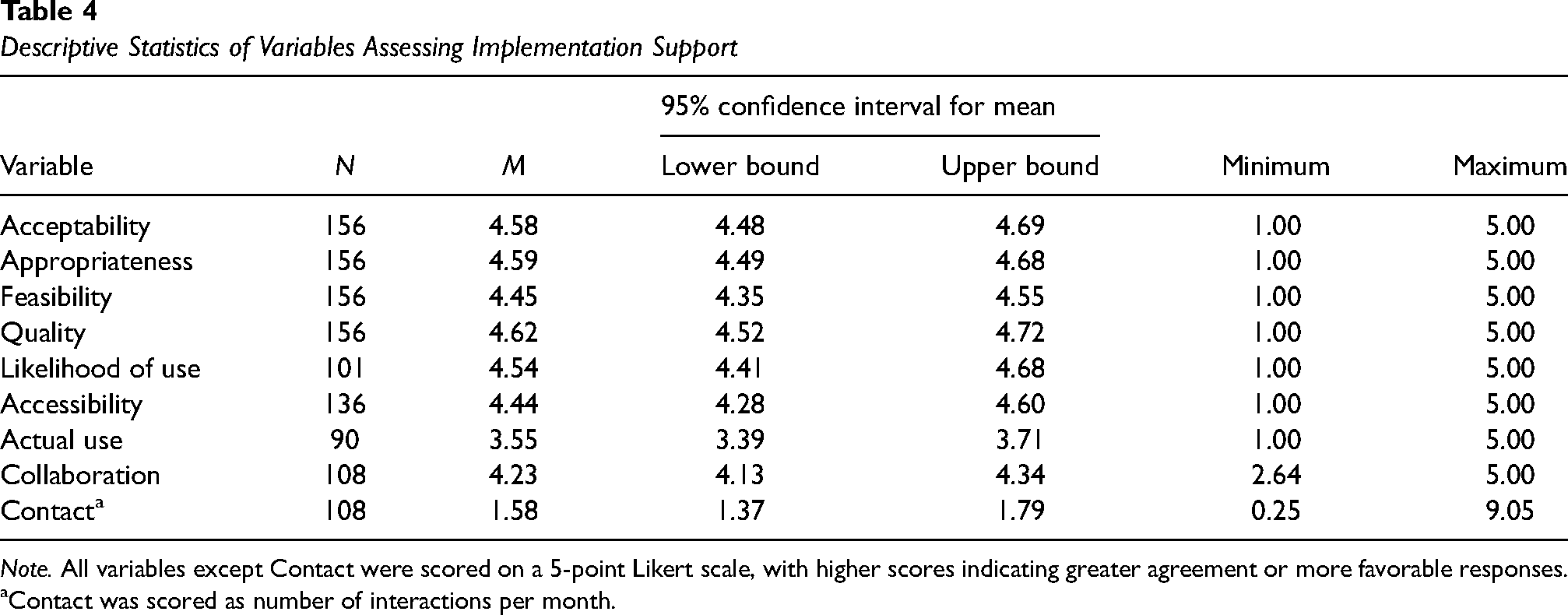

Because the data for all variables were not normally distributed, non-parametric Mann–Whitney U tests or Kruskal–Wallis tests were conducted using IBM SPSS Statistics (Version 28) to explore whether experiences receiving EIS from ICTP regional support team members varied by support participant characteristics. To limit Type I errors, Bonferroni adjustments were used to set more stringent alpha levels for comparisons. Comparison groups with fewer than two cases were excluded from analyses. Table 4 provides descriptive statistics of the variables assessing implementation support.
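The group comparisons rest on rank-based statistics. The Python sketch below is a hypothetical, standard-library-only illustration, not the SPSS procedure used in the analyses. `mann_whitney` returns U for the first sample, a tie-corrected normal-approximation z without continuity correction, and the effect size r = |z|/√N, a common convention assumed here. `kruskal_wallis_h` returns the tie-corrected H statistic, which would be referred to a chi-square distribution with k − 1 degrees of freedom (for example with `scipy.stats.chi2.sf`). `bonferroni_alpha` divides the nominal alpha by the number of comparisons, as in divisors such as .05/5 = .01 reported in the Results.

```python
# Hypothetical, stdlib-only sketch of the rank-based tests; not SPSS output.
import math
from collections import Counter


def average_ranks(values):
    """Rank values 1..n in ascending order; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # mean of ranks i+1..j+1
        i = j + 1
    return ranks


def tie_term(values):
    """Sum of t^3 - t over each group of t tied values."""
    return sum(t ** 3 - t for t in Counter(values).values())


def mann_whitney(x, y):
    """Return (U for x, tie-corrected z, r). Assumes not all values are tied."""
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = list(x) + list(y)
    ranks = average_ranks(pooled)
    u = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term(pooled) / (n * (n - 1)))
    z = (u - n1 * n2 / 2) / math.sqrt(var_u)
    return u, z, abs(z) / math.sqrt(n)  # r = |z| / sqrt(N)


def kruskal_wallis_h(*groups):
    """Tie-corrected Kruskal-Wallis H (compare to chi-square with k - 1 df)."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        total += sum(ranks[start:start + len(g)]) ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    return h / (1 - tie_term(pooled) / (n ** 3 - n))


def bonferroni_alpha(alpha, m):
    """Per-comparison alpha after a Bonferroni adjustment for m tests."""
    return alpha / m


print(kruskal_wallis_h([1, 2, 3], [4, 5, 6], [7, 8, 9]))  # about 7.2
print(bonferroni_alpha(0.05, 5))                          # about 0.01
```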

Descriptive Statistics of Variables Assessing Implementation Support

Note. All variables except Contact were scored on a 5-point Likert scale, with higher scores indicating greater agreement or more favorable responses.

Contact was scored as number of interactions per month.

Results

State

A Mann–Whitney U test revealed no significant differences between North Carolina and South Carolina support participant ratings for any variable, U = 748.5, z = .014, p = .988, r = .0014.

Onboarding Cohort to ICTP Support

Kruskal–Wallis tests comparing onboarding cohorts among North Carolina regions, with a Bonferroni adjusted alpha level of .01 (.05/5), revealed a statistically significant difference in average number of monthly contacts, χ² (4, n = 95) = 20.915, p < .001. Support participants in cohort 1 received significantly more monthly contacts on average (Mdn = 1.5 contacts per month) than those in later cohorts 3 and 4 (both Mdn = 1.0).

Dose of ICTP Support

A Kruskal–Wallis test with a Bonferroni adjusted alpha level of .0125 (.05/4) revealed a significant difference for the average number of monthly contacts, χ² (3, n = 108) = 13.211, p = .004. High-dose regional partners received more monthly contacts than those receiving a medium dose of ICTP support (Mdn = 1.5 vs. 1.125).

Participation in Data Collection

A Kruskal–Wallis test with a Bonferroni adjusted alpha level of .0167 (.05/3) revealed a significant difference for accessibility, χ² (2, n = 136) = 8.348, p = .015, and average number of monthly contacts, χ² (3, n = 108) = 13.211, p = .004. The average rating of accessibility was lower among low-participation partners than among medium-participation partners (Mdn = 4.1 vs. 5.0). Regional support team members also reported fewer monthly contacts for support participants with low data collection participation than for those with medium or high participation (Mdn = 1.0 vs. 1.5 for both).

EIS Tiers of Support

A Mann–Whitney U test comparing variable scores from the intensive and brief support tiers revealed no significant difference in scores for any variable, U = 388.5, z = 1.867, p = .062, r = .256.

Historical or Point-in-Time

A Kruskal–Wallis test with a Bonferroni adjusted alpha level of .005 (.05/10) revealed no statistically significant differences for any variable across time, χ² (9, n = 156) = 10.380, p = .321.

Trajectory of Support

A Kruskal–Wallis test revealed no statistically significant differences for any variable across the support relationship when adjusted by the Bonferroni correction for multiple tests, χ² (10, n = 136) = 15.768, p = .106.

Regional Staffing Turnover

A Mann–Whitney U test revealed a significant difference in ratings of acceptability (U = 1833.0, z = 2.339, p = .019, r = .187), with higher ratings during times of reported staffing changes compared to times of staffing stability (Mdn = 5.0 vs. 4.875).

Discussion

The current report examines the feasibility and usability of engaging in EIS through analysis of over 5 years of data collected by the ICTP projects in North Carolina and South Carolina. Results suggest that support participants found implementation support to be accessible, acceptable, appropriate, feasible, and delivered with high quality. Regional support team members reported strong collaboration with support participants and provided support 1–2 times per month. Altogether, this pattern of findings suggests that the ICTP practice model is being experienced favorably by those receiving support across different states, over time (including during the COVID-19 pandemic), throughout the support relationship, and across tiers of support.

Notable differences in ratings across support participant characteristics warrant further discussion and interpretation. Ratings of EIS accessibility were lower among teams with low (vs. medium) participation in data collection and during periods with staff turnover. Engaging in data collection and accessing EIS may be more difficult when a region is overwhelmed or has too little staffing capacity to fully connect with support. Relatedly, average monthly contacts were lower for regions with low participation in data collection activities, and regions with more monthly contacts received a greater dose of support per month. The average number of monthly contacts was also highest among the earliest support participants, who were from regions determined to be more ready to engage in support; regions determined to be less ready were onboarded later and ultimately participated in fewer monthly contacts. Low participation in data collection activities might reflect factors that make support harder to access (e.g., dedicated staff availability or competing priorities) or lower engagement with support activities among these support participants. That accessibility was rated higher during periods of stability (i.e., no staff turnover) supports this interpretation. Actual use of resources and strategies adopted during support was lower than the perceived likelihood of using them. This may reflect the gap between behavioral intentions and behavioral follow-through in complex community scaling initiatives or a need for stronger behavioral support in the application and coaching of strategies and resources provided during support.

Limitations

These findings must be considered within the same context, and with similar limitations, as those described by Aldridge, Roppolo, Chaplo, et al. (2023) in their report on the trajectory of EIS: these data come from one specific model of EIS used across practice settings in two states deploying a regional, cross-sector scale-up of an evidence-based system of parenting and family supports. The practice model itself evolved through three iterations of CPC operationalization during the period under investigation. There were no comparison groups, randomization, or other controls, so findings should be generalized with caution. Except for regional Triple P partners’ collaboration with regional support teams, all data were self-reported, raising the risk of social desirability bias. Teams, both among support participants and ICTP regional support teams, were not static over time, and changes to team makeup and reporting processes (such as the number of staff members completing the surveys) could bias the data. Triple P partners’ collaboration is also built into the program design: participation in EIS was integrated into support participants’ funding requirements within 2–3 years of the start of data collection. Because data collection on the part of support participants is voluntary, data points are missing across regions and data submission patterns (number of completed surveys, timeliness) vary. Finally, ISPs from the Impact Center at FPG were not the only members of regional support teams during the 5 years of data collected for this report. The backgrounds, experiences, and parallel activities of ISPs from partner organizations may have influenced the perceived feasibility and usability of ICTP support during the times in which they were engaged.

Implications for Implementation Practice

Findings in this report support the feasibility of engaging with this model of EIS for participants in this practice setting, regardless of differences in regional or organizational characteristics. One of the intentions of the ICTP practice model is that ISPs tailor support according to contextual differences across support participants. Understanding the feasibility and usability of participating in EIS may help clarify interpretations of the reported impacts of EIS on implementation and program outcomes. Moreover, examining the feasibility of engaging with EIS might be important before justifying a summative evaluation of the associations between CPCs and practice outcomes in any EIS practice model. Funders and policymakers may also want to consider feasibility and utility before requiring engagement with EIS and while that engagement is under way.

Considerations for Future Research

This report finds support for the feasibility of participating in a single, well-operationalized practice model for EIS in a particular contextual environment. Given the growing number of EIS models, frameworks, and practice profiles in the field of implementation science, further research examining the feasibility and usability of other well-operationalized EIS models would help refine the field and illuminate promising practices and mechanisms of change. Additionally, we could learn more about the experience of ISPs engaged in this work: to what extent is adhering to this model feasible for ISPs? This information would be useful given concerns about burnout and resiliency among ISPs (The Center for Implementation, 2022). Examining the effectiveness of ICTP support is a major next step in our research activities. The ICTP projects have over 5 years of practice outcomes data with which to examine associations between our practice activities and practice outcomes.

Conclusion

Because EIS is often characterized by long-term engagements that require substantial investments from all system partners, it is essential that support participants find the experience feasible and usable. Given the complexity of implementation practice, the ability to co-create and tailor EIS activities across contexts and over time is an important skill for ISPs in ensuring successful support. Although this evaluation report reviews only one EIS practice model in one set of practice settings, findings suggest that EIS, when well operationalized, can be experienced as feasible and useful by support participants.

Supplemental Material

sj-docx-1-irp-10.1177_26334895241253793 - Supplemental material for A feasibility study of external implementation support provided across two states in the U.S.

Supplemental material, sj-docx-1-irp-10.1177_26334895241253793 for A feasibility study of external implementation support provided across two states in the U.S. by Rebecca Roppolo, William Aldridge, Christina DiSalvo, Ariel Everett, Capri Banks and Sherra Lawrence in Implementation Research and Practice

Footnotes

Acknowledgements

The authors would like to acknowledge both current and former members of The Impact Center at FPG team for their contributions to the continual refinement and application of the model of external implementation support used for this study and for their diligent data submissions. We would also like to acknowledge Jennifer Robinette for her formatting and copyediting support. These data were collected under a protocol approved by the Non-Biomedical Institutional Review Board at the University of North Carolina at Chapel Hill, which determined that these data do not constitute human subjects research.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the North Carolina Department of Health and Human Services, Division of Public Health; the North Carolina Department of Health and Human Services, Division of Social Services; and The Duke Endowment (grant numbers 00035954, 00034805, 00036619, 00037333, 00039054, 00040617, 00042356, 00044072, 1945-SP, 2004-SP, 2037-SP, 2081-SP).

References
