Abstract

Background

Understanding the barriers to and facilitators of implementation completion is critical to determining why some implementation efforts fail and others succeed. Such studies provide the foundation for developing further strategies to support implementation completion when scaling up evidence-based practices (EBPs) such as motivational interviewing.

Method

This mixed-methods study used the Exploration, Preparation, Implementation, and Sustainment (EPIS) framework in an iterative analytic design to compare adolescent HIV clinics that demonstrated either high or low implementation completion in the context of a hybrid Type III trial of tailored motivational interviewing. Ten clinics were assigned to one of three completion categories (high, medium, or low) based on staff completion of three implementation strategy components. A comparative analysis of qualitative staff interviews then contrasted the three high-completion clinics with the three low-completion clinics.

Results

Results suggested that several factors distinguished high-completion from low-completion clinics, including optimism, problem-solving around barriers, leadership, and staff stress and turnover.

Conclusions

Implementation strategies targeting these factors can be added to EBP implementation packages to improve implementation success.

Plain Language Summary

While studies have begun to address adherence to intervention techniques, this is one of the first studies to address organizational adherence to implementation strategies. Youth HIV providers from different disciplines completed interviews about critical factors in both the inner and outer context that can support or hinder an organization's adherence to implementation strategies. Compared to less adherent clinics, more adherent clinics reported more optimism, problem-solving, and leadership strengths and less staff stress and turnover. Implementation strategies addressing these factors could be added to implementation packages to improve implementation success.

Keywords

Introduction

Motivational interviewing (MI) is an evidence-based treatment for addressing substance use and other risk behaviors in young people (Miller & Rollnick, 2012; Naar & Suarez, 2021) and has shown efficacy in improving such behaviors in youth with HIV (Chen et al., 2011; Fortenberry et al., 2017; Murphy et al., 2012; Naar et al., 2020; Naar-King et al., 2009, 2010; Outlaw et al., 2010). However, MI competence is not easy to achieve. In a study of MI fidelity among 151 adolescent HIV providers from multiple disciplines, only 7% scored in the intermediate or advanced competency range (MacDonell et al., 2019). A review of 10 studies in health care settings suggested that MI workshops significantly improved MI skills compared to controls; however, workshops alone were not sufficient for trainees to achieve MI competency (Miller et al., 2004; Mitcheson et al., 2009; Moyers et al., 2008, 2009). Basic science findings in communication science, behavioral skills training, and cooperative learning environments have been systematically translated into a tailored MI (TMI) implementation package for youth HIV contexts in the Adolescent Trials Network for HIV/AIDS Interventions (ATN) to strengthen the evidence base for MI implementation strategies (Naar et al., 2021).

The implementation science literature describes adherence to evidence-based practice (EBP) as a critical component of fidelity, including factors such as delivery of specified intervention strategies, dosage of treatment, and frequency of supervision (Aarons et al., 2012). While studies have begun to address adherence to intervention strategies (e.g., Pedersen et al., 2018; Shanbhag et al., 2018), much less attention has been paid to implementers' adherence to implementation strategies (Schoenwald et al., 2013). Saldana (2014) began to fill this gap with the Stages of Implementation Completion (SIC) tool, which yields a proportion score reflecting the percentage of activities completed within each stage. Thus, adherence to implementation strategies may be measured as the proportion of activities completed during the implementation stage.

An understanding of the barriers to and facilitators of implementation completion is critical to determining why some implementation efforts fail and others succeed. Such studies provide the foundation for developing further strategies to support implementation completion and success when scaling up EBPs such as MI. To the best of our knowledge, there is limited information about how perceived barriers and facilitators at pre-implementation relate to subsequent implementation completion. However, a scoping review identified perceived barriers and facilitators to implementing MI (Lim et al., 2019). Its results suggested that MI implementation might be promoted by using enabling technology, focusing on patient-centered care and process improvement, developing a shared vision, and creating an organizational culture of learning and systems thinking. The Exploration, Preparation, Implementation, Sustainment (EPIS) framework (Aarons et al., 2011) provides further detail regarding potential barriers and facilitators of implementation that can be leveraged to understand implementation completion. Across the phases of implementation, EPIS features inner organizational and outer system context variables that reflect the complex context in which interventions are implemented and scaled, as well as bridging factors (between contexts) and characteristics of the EBPs themselves (innovation factors).

Within the ATN, protocol 146 (ATN 146; Naar et al., 2019, 2022) was a Type III hybrid effectiveness-implementation trial testing the effects of TMI implementation strategies on provider competence to deliver MI to address substance use and other risk behaviors, while an embedded study (ATN 153; Idalski Carcone et al., 2019, 2022) used EPIS to understand the implementation context. Pre-implementation interviews were previously coded using inner and outer context factor codes. The present mixed-methods study first assessed implementation completion and then used the EPIS-coded data to understand how perceptions of inner and outer context implementation factors in the pre-implementation phase differed between clinics with a high proportion of implementation completion and clinics with a low proportion.

Method

Idalski Carcone et al. (2019, 2022) previously described the EPIS study (ATN 153), and Naar et al. (2022) described the methods for the hybrid randomized trial of TMI in which it was embedded (ATN 146). In brief, using a stepped-wedge design, clinics were randomized to the implementation phase in five steps (N = 10 clinics, 2 per step). At each step, key stakeholders at the randomized clinics completed the 1-hr EPIS interview before implementation began, and this continued until all 10 clinics had completed interviews. Of the 250 individuals identified as potentially eligible by the clinic leads, 28 declined to participate, 27 were not eligible, and 54 did not complete the interview within the collection window (N = 141 interviews completed). Interviewees self-reported demographic information and professional role (see Table 1).
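To make the stepped-wedge allocation concrete, the following is a minimal sketch of randomizing 10 clinics into five steps of two, so that every clinic crosses into the implementation phase at exactly one step. The helper name `stepped_wedge_schedule`, the clinic labels, the seed, and the use of a simple shuffle are illustrative assumptions; the trial's actual randomization procedure is described in Naar et al. (2022) and may differ.

```python
import random


def stepped_wedge_schedule(clinics, n_steps, per_step, seed=None):
    """Randomly assign clinics to implementation steps (hypothetical helper).

    Each clinic appears in exactly one step; clinics in step k move from the
    pre-implementation phase to the implementation phase at step k.
    """
    if len(clinics) != n_steps * per_step:
        raise ValueError("number of clinics must equal n_steps * per_step")
    rng = random.Random(seed)  # seeded generator so the allocation is reproducible
    order = list(clinics)
    rng.shuffle(order)  # a random permutation decides which step each clinic enters
    return {
        step + 1: order[step * per_step:(step + 1) * per_step]
        for step in range(n_steps)
    }


clinics = [f"clinic_{i:02d}" for i in range(1, 11)]  # hypothetical labels
schedule = stepped_wedge_schedule(clinics, n_steps=5, per_step=2, seed=2019)
for step, members in schedule.items():
    print(f"step {step}: {members}")
```

Because every clinic eventually receives the implementation strategies, the design lets EPIS interviews be collected from each newly randomized pair just before its own crossover.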

Table 1. Participant Demographics by Site and in Total

TMI implementation strategies. All sites received a $3,000 incentive to participate. Implementation began with an in-person, 10-hr workshop for all providers, delivered over 2–3 days at each clinic's choice. Within 1 month, the trainer provided two individual 1-hr telephone coaching sessions. These were followed by quarterly fidelity monitoring assessments using a phone-based standard patient interaction model. Follow-up coaching was mandatory if scores fell in the beginner or novice range and optional if scores fell in the intermediate or advanced range.

Measures

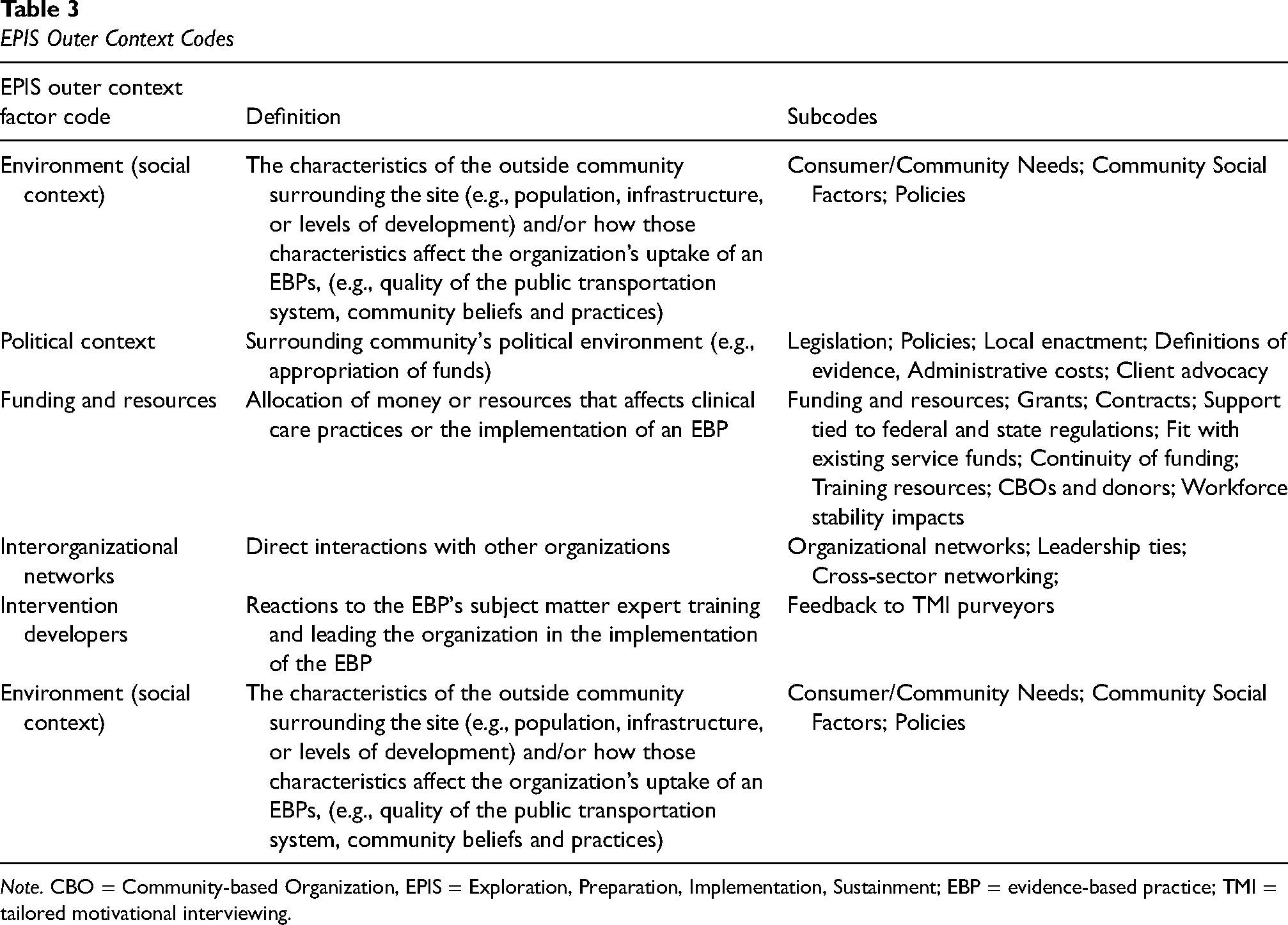

EPIS qualitative interview and coding. The interview was adapted from the EPIS developers (Aarons et al., 2012) and has been previously described (Idalski Carcone et al., 2019, 2022). Trained interviewers conducted telephone interviews using a semi-structured interview guide to elicit anticipated barriers and facilitators of TMI implementation, with a focus on inner organizational factors (which included bridging and innovation factors in the original EPIS framework). Clinic directors were also asked about organizational history with EBPs, internal (organizational) and external (community, state) leadership structures, political context (policies, funding mechanisms), and fiscal considerations. Questions about inner and outer context factors focused on EBPs generally, while questions about innovation factors focused on MI specifically. Staff interviews required 45–60 min to complete; interviews with clinic directors required 60–90 min. Interview data were coded using directed content analysis (Hsieh & Shannon, 2005) based on the EPIS coding framework (Aarons et al., 2011, 2016). These coding methods and initial findings have been previously published (Idalski Carcone et al., 2019, 2022). In brief, the coding framework was iteratively updated throughout the coding process, resulting in a total of 58 constructs organized under the primary EPIS inner context and outer context factors (see Tables 2 and 3). An initial assessment of intercoder reliability on two novel interviews (one clinic director, one key implementer) showed high percentage agreement between coders (97.45%). Intercoder reliability was then assessed on an ongoing basis throughout the coding process by randomly selecting 30% of the interviews for co-coding. Overall intercoder reliability was κ = .53, in the moderate range of the Landis and Koch (1977) benchmarks and acceptable for data sets with a large number of codes; percentage agreement remained strong at 98.2%.
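One likely reason that percentage agreement (98.2%) can be much higher than kappa (κ = .53) is that, with many codes, most coding decisions are "code not applied" by both coders, and kappa discounts this agreement expected by chance. The sketch below illustrates the effect under stated assumptions: each unit is a binary apply/not-apply decision for one code on one segment, and Cohen's kappa for two coders is used. The study does not specify its unit of analysis or kappa variant, and the toy data are invented, not study data.

```python
def percent_agreement(a, b):
    """Share of units on which two coders made the same decision."""
    if len(a) != len(b) or not a:
        raise ValueError("coders must rate the same non-empty set of units")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Cohen's kappa for two coders' binary decisions (1 = code applied, 0 = not)."""
    n = len(a)
    p_o = percent_agreement(a, b)  # observed agreement
    p_a1 = sum(a) / n  # coder A's rate of applying the code
    p_b1 = sum(b) / n  # coder B's rate of applying the code
    # Chance agreement: both apply by chance plus both withhold by chance
    p_e = p_a1 * p_b1 + (1 - p_a1) * (1 - p_b1)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical toy data: 100 decisions, the code is applied rarely.
coder_a = [1, 1, 1, 0] + [0] * 96
coder_b = [1, 1, 0, 1] + [0] * 96
print(percent_agreement(coder_a, coder_b))       # 0.98
print(round(cohens_kappa(coder_a, coder_b), 2))  # 0.66
```

Two coders who disagree on only 2 of 100 decisions still get a kappa well below their raw agreement, because nearly all of their agreement comes from jointly not applying a rare code.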

Table 2. EPIS Inner Context Codes

Note. EPIS = Exploration, Preparation, Implementation, Sustainment; EBP = evidence-based practice; TMI = tailored motivational interviewing; MI = motivational interviewing.

Table 3. EPIS Outer Context Codes

Note. CBO = community-based organization; EPIS = Exploration, Preparation, Implementation, Sustainment; EBP = evidence-based practice; TMI = tailored motivational interviewing.

Proportion of implementation completion. Because the study focused on inner and outer organizational factors rather than individual provider attitudes, we averaged completion scores across all providers at each clinic to guide the classification of clinics for qualitative comparative analysis (see Table 4). Proportion of implementation completion was a composite score built from the clinic-level average of provider scores for each follow-up implementation strategy: percentage of post-workshop coaching sessions attended, percentage of quarterly standard patient interactions completed, and percentage of quarterly fidelity coaching completed when required. We calculated the mean proportion of the three components, giving each component equal weight.
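The composite score can be sketched as follows: compute each provider's rate for every component, average each component across a clinic's providers, and then take the equal-weight mean of the three clinic-level averages. The field names (`coaching_offered`, `spi_completed`, and so on) and the handling of providers who were never required to attend fidelity coaching (excluded from that component, and a component with no contributors skipped) are hypothetical assumptions for illustration, not the study's documented procedure.

```python
from statistics import mean

COMPONENTS = ("coaching", "spi", "fidelity")


def provider_rates(p):
    """Completion rates for one provider (hypothetical field names)."""
    rates = {
        # post-workshop telephone coaching sessions attended / offered
        "coaching": p["coaching_attended"] / p["coaching_offered"],
        # quarterly standard patient interactions completed / scheduled
        "spi": p["spi_completed"] / p["spi_scheduled"],
    }
    # Fidelity coaching counts only when it was required (beginner/novice scores);
    # providers never required to attend are left out of this component (assumption).
    if p["fidelity_required"] > 0:
        rates["fidelity"] = p["fidelity_attended"] / p["fidelity_required"]
    return rates


def clinic_completion(providers):
    """Average each component across providers, then take the equal-weight mean."""
    per_provider = [provider_rates(p) for p in providers]
    component_means = {}
    for name in COMPONENTS:
        values = [r[name] for r in per_provider if name in r]
        if values:  # skip a component no provider contributed to (assumption)
            component_means[name] = mean(values)
    composite = mean(list(component_means.values()))
    return component_means, composite


providers = [  # two hypothetical providers at one clinic
    {"coaching_attended": 2, "coaching_offered": 2, "spi_completed": 3,
     "spi_scheduled": 4, "fidelity_required": 2, "fidelity_attended": 1},
    {"coaching_attended": 1, "coaching_offered": 2, "spi_completed": 2,
     "spi_scheduled": 4, "fidelity_required": 0, "fidelity_attended": 0},
]
components, composite = clinic_completion(providers)
print(components)  # {'coaching': 0.75, 'spi': 0.625, 'fidelity': 0.5}
print(composite)   # 0.625
```

Averaging within each component first keeps a component from being over-weighted simply because more providers contributed to it.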

Table 4. Proportion of Implementation Completion

Analysis Plan

The current study used an interactive mixed-methods approach (Greene, 2007) incorporating elements of a sequential exploratory mixed-methods design (Creswell & Creswell, 2017), with a primary focus on the qualitative data. An interactive mixed-methods approach is an iterative analytic design in which qualitative and quantitative data interact through a sequential process, with each phase of the analysis examining a different type of data (Greene, 2007). For the quantitative analysis, we rank-ordered the clinics from highest to lowest proportion score. We then selected the three clinics with the highest proportions and the three clinics with the lowest proportions for qualitative comparative analysis.
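The rank-and-select step is simple enough to state as a short sketch: sort clinics by composite score in descending order and take the first three and the last three, leaving the middle clinics as the medium group. The helper name `select_extremes` and the scores below are hypothetical (they are not the Table 4 values), and breaking ties by input order is an assumption, since the study does not say how ties were handled.

```python
def select_extremes(scores, k=3):
    """Rank clinics by composite completion and return the top-k and bottom-k.

    Ties keep their input order because sorted() is stable, even with
    reverse=True (an assumption about tie handling).
    """
    if len(scores) < 2 * k:
        raise ValueError("need at least 2 * k clinics so the groups do not overlap")
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return ranked[:k], ranked[-k:]


# Hypothetical composite proportions for 10 clinics labeled A-J.
scores = {"A": 0.82, "B": 0.45, "C": 0.71, "D": 0.55, "E": 0.38,
          "F": 0.66, "G": 0.52, "H": 0.41, "I": 0.58, "J": 0.49}
high, low = select_extremes(scores)
print("high:", high)
print("low:", low)
```

With 10 clinics and k = 3, the four remaining clinics form the medium-completion group, which is not carried into the qualitative comparison.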

Using the pre-coded EPIS data (ATN 153), we applied a comparative phenomenological inquiry framework because the study focused on comparing the lived experiences of providers from the high- and low-completion clinics as they contemplated using MI (Moustakas, 1994; Patton, 2014). To examine patterns between high- and low-completion clinics, the primary author extracted exemplary pre-coded data segments for each EPIS factor and placed them into data matrices. Six analysts then reviewed each matrix independently and submitted comments on similarities and differences. The team met biweekly to review a single combined document. During these meetings, the team discussed the content of the transcripts and reached consensus on the similarities and differences in EPIS factors between high- and low-completion clinics.

Results

Quantitative Analyses

Table 4 summarizes the average completion of each component across providers at each clinic, along with each clinic's composite percentage score. High-completion clinics scored above 60% implementation completion, whereas low-completion clinics scored below 50%.

Qualitative Analysis

The results of the comparative analyses of the EPIS factors coded from the pre-implementation interviews, with corresponding quotes from high- and low-completion clinics, are summarized below. We include only codes with enough coded segments (nodes) to discern patterns of similarities and differences.

Inner Context Factors

Organizational Characteristics

Size includes the number of staff and the leader-to-staff and provider-to-patient ratios at each clinic. Although both groups reported between 16 and 20 staff, the high-completion clinics perceived their teams as larger and more interdisciplinary, with mental health and social services providers serving in key leadership roles, whereas the low-completion clinics described their teams as smaller and led by medical providers. When discussing staffing, the high-completion clinics described the type and diversity of comprehensive services being offered, rather than listing the number and disciplines of staff as the low-completion clinics did.

Staff Knowledge and Skills address provider training/experience, time in the organization, and turnover. Both groups stated that their staff carried heavy workloads, juggled multiple roles and responsibilities, and offered numerous services in and out of the clinics. Although both groups appreciated EBP, low-completion clinics reported a more superficial understanding of MI than the high-completion clinics.

A lot of staff that do work here … do their work not knowing the whole process behind things being evidence-based … as an aside when you interview other staff, they may not even understand the questions and give uninformed answers because they are just busy delivering the work. (Low-completion)

So the biggest things that we learned from the trainings were really how to as clinicians utilize different types of questions to elicit information from patients and to assess their motivation. (High-completion)

The high-completion clinics recognized the training and effort staff had put into becoming proficient with MI, whereas the low-completion clinics seemed to attribute their skills to being “awesome” providers and likened MI to good clinical skills.

So we have large trainings, and everyone participates in the motivational interviewing, role playing, et cetera. (High-completion)

I’m a pediatrician with a specialty in adolescent medicine, and so we tend to use it just as a natural way of interviewing and talking to teenage patients, a motivational interview kind of a style. (Low-completion)

The high-completion clinics appeared to have more MI training and more MI-experienced staff than the low-completion clinics, which may reflect their lower staff turnover. Although both groups discussed staff turnover, the low-completion clinics raised it more frequently. Not surprisingly, both groups attributed turnover to outer context funding issues such as the loss of a grant. The low-completion clinics suggested that, as a result of turnover, their staff had insufficient MI skills, which would limit their ability to implement MI. In contrast, high-completion clinics focused on staff's longevity in the organization and commitment to the client population.

Routine activities include staff's usual duties and responsibilities (clinic level) and the job's emotional burden (individual provider level). Both groups described their work as emotionally demanding and expressed strong emotional bonds, but the low-completion clinics seemed to focus on the burden rather than on the comprehensive services they provided.

…It can be extremely demanding emotionally because you want sometimes more for them than they want for themselves. (Low-completion)

We know these patients. They trust us with information that we’re providing and they know they can call upon us at any time… (High-completion)

Staff from high-completion clinics appeared to have better coping mechanisms than those from low-completion clinics, such as work/life balance, staff support, humor, and greater willingness to take mental health days. Staff from low-completion clinics seemed more burdened by the needs of their patients and expressed some frustration with their patients’ array of needs. Low-completion clinics used terms such as “heartbreaking,” “frustrating,” and “taxing,” while those from high-completion clinics used “tolerant,” “emotionally invested,” and “dedicated.”

I just can’t turn it off…. it can be very taxing. (Low-completion)

…we manage to find things that are funny…. we support each other. (High-completion)

Organizational values include innovativeness, mission and vision, willingness to change, and perceived failure tolerance. Both groups seemed highly engaged, committed, and passionate and agreed that MI was valuable and would fit well within their organizations. The high-completion clinics described an organizational culture that supported autonomy, flexibility, and openness to learning, and they focused more on the unit's investment in MI, whereas the low-completion clinics focused on individual providers' investment. The high-completion clinics seemed to value teamwork and collaboration, and their supervisors were highly supportive of MI practices. The low-completion clinics seemed to operate like a group of like-minded individuals rather than an organized, well-supported team sharing institutional goals and values.

Oh, I think if the worker – you know, the clinic staff member – isn’t buying into its value, then they won’t do it correctly or care about doing it correctly. (Low-completion)

…Everyone is pretty open to different techniques and the different ways to approach people ‘cause obviously all of our clients need different things and not one method will work with every single person. (High-completion)

Absorptive Capacity is the ability to recognize, assimilate, and apply new knowledge and to commit resources to a new effort. When discussing the practical implications of assimilating an EBP into their organizations, both groups brought up time commitments, funding, and scheduling constraints, but they presented these issues differently. Although the high-completion clinics acknowledged their staff's heavy workload, they were open to engaging with MI because they recognized its benefit for patients and providers and expressed willingness and interest in learning new things. The low-completion clinics described time commitments as an added burden with questionable benefits.

I think we’re just at our limits of what we can do … being asked to do it might push us over the edge. (Low-completion)

I think the problem really is workload and have the time to actually do it … but I think the philosophy and buy in to it is already there. (High-completion)

Low-completion clinics seemed more pessimistic about implementing MI and more resistant to asking staff to take the necessary time to implement it than the high-completion clinics. Whereas the high-completion clinics seemed to feel that MI would complement their current activities, the low-completion clinics questioned whether staff would implement MI with fidelity. Additionally, the high-completion clinics showed upfront planning and willingness to accommodate the implementation strategies (i.e., the workshop), but the low-completion clinics seemed more resistant and frequently stated that they did not have the staff capacity to implement the program.

I just don’t know how you can get people to say “Oh yeah, I’ll take that extra thing on just because I think it would be in the best interest of our clinic.” (Low-completion)

I think there is a certain level of enthusiasm of “we want to learn the skills.” (High-completion)

Leadership

Leadership and Autonomy factors capture how the attitudes, decisions, vision, and communications of organizational leaders affect the uptake of EBP. Both groups agreed that leadership buy-in and promotion were important for successful implementation. However, the high-completion clinics indicated that they already had leadership buy-in, provided more specific details about how leadership promoted the EBP, and stressed the importance of leading by doing. The low-completion clinics seemed focused on needing to obtain leadership buy-in and hinted that implementation would happen if and when leadership promoted it.

I think at this point, we have a lot of support from most of the supervisors and the director. (High-completion)

I just think that if the supervisors and managers buy in, that’s probably the key…. We’ll just have to wait and see how that works with the supervisors. (Low-completion)

The low-completion clinics appeared less motivated to participate and more skeptical of being able to incorporate MI into their day-to-day activities than the high-completion clinics. The high-completion clinics seemed to have greater autonomy and greater openness to providing input. In these clinics, autonomy seemed to be associated with an openness toward learning about new EBPs, which did not emerge among the low-completion clinics. The low-completion clinics were mostly led by medical providers, emphasized medical care, and seemed to have a more autocratic, hierarchical structure than the high-completion clinics.

I don’t want to say that it’s a participatory democracy. I mean, sometimes somebody has got to be at the lead and say, “This is what we’re doing.” (Low-completion)

I think that our organization really wants to promote autonomy and kind of self-care with our youth. And so I do think that MI really fits with that model, with trying to empower youth to take care of themselves and to be responsible for their health. (High-completion)

MI Champion. The high-completion clinics were more specific than the low-completion clinics about who was or would be an MI champion. The high-completion clinics readily and consistently identified the champion, whereas the low-completion clinics seemed reluctant to name individuals and preferred to say that everyone was a champion.

Enthusiasm?? I don’t know if that’s the right word. I think it’s more of a – it’s not a oh boy, let’s get to some motivational interviewing. I think, I think that what’s important, it would be targeting or prioritizing which, which clients would benefit most from motivational interviewing. (Low-completion)

Well, for instance even when he presents to the group that there might be this intervention coming, you know, he does it with enthusiasm. (High-completion)

Innovation Factors

General experience with EBP. Both groups had experience with and appreciation for EBP, were willing to follow guidelines, and were often involved in research to develop such guidelines.

The low-completion clinics seemed less aware of what “evidence-based” means than the high-completion clinics. The high-completion clinics seemed more flexible and willing to use EBP in engaging and innovative ways, whereas we observed strict adherence to guidelines among the low-completion clinics.

Our staff are trained. I’m not aware of an evidence-based intervention, but the protocol that comes down from the county is followed. (Low-completion)

…so I think we need to be flexible and people have an opportunity to use their skills and training to accomplish what we’re all trying to achieve. (High-completion)

Fit of tailored MI. Although endorsement of MI varied, both groups perceived MI as beneficial for their patients and useful for promoting behavior change. Despite potential barriers to implementation, both groups seemed to support using MI, but the high-completion clinics seemed more enthusiastic and excited about it. Also, the low-completion clinics talked about MI as something they were already using, whereas the high-completion clinics described it as something novel that could improve their work.

I think is a certain level of enthusiasm of “We want to learn the skill. We want to know the skills that is out there as providers.” (High-completion)

I feel like we use it all the time. But we just probably don’t. (Low-completion)

Although the two groups agreed that MI fits well with younger clients and routine clinic operations, expressions of “fit” varied, ranging from helpfulness to patients and alignment with the organizational culture to musing over how much easier it is to just tell patients what to do. The low-completion clinics were more likely than the high-completion clinics to discuss factors that reduced fit, such as patient language barriers, patients with serious mental health needs, and staff resistance. The low-completion clinics appeared less clear on how MI would fit into their day-to-day operations, while the high-completion clinics saw MI as enhancing or complementing their daily work. Compared with the low-completion clinics, the high-completion clinics appeared to have a deeper understanding of MI, were more willing to apply its core concepts in ways that fit the population, had a sense that MI could be integrated into clinical care, and placed greater emphasis on team building and collaboration in the education and implementation of EBP.

So I would say a language barrier, potentially, could be a second reason why it might not be such a fit, besides the other one that I gave about the resistance from well entrenched employees who already have a style. (Low-completion)

Our organization really wants to promote autonomy and kind of self-care with our youth. And so I do think that MI really fits with that model, with trying to empower youth to take care of themselves and to be responsible for their health. (High-completion)

In terms of the fit of implementation strategies, both groups saw “time” as the biggest barrier. However, staff capacity, the complexity of TMI, and the time required for MI implementation seemed to be of greater concern for the low-completion clinics. The high-completion clinics seemed optimistic about their clinic's ability to implement MI successfully, while the low-completion clinics seemed ambivalent about future success. Comments from the low-completion clinics were more negative, more resistant, and more pessimistic in tone regarding MI implementation than those of the high-completion clinics.

It’s about, about it, in line with other stuff that we’re doing. How can we like create a framework that’s going to be okay and add in, but not necessarily take away from other things. (Low-completion)

That there’s ongoing monitoring and feedback and setting up someone within our group to work with us on an ongoing basis so that it stays in everyone’s mind. And it’s all within our group, so that I guess that would be good to have our own little community helping each other. (High-completion)

Outer Context Factors

Environment includes the allocation of resources that affect clinical practices and/or implementation of EBP, the characteristics of the surrounding community, and how these characteristics affect the uptake of EBP. Both groups had a similar allocation of resources across the services they offered and a similar frequency of routine clinical visits. Although both groups served similar patient populations (e.g., patients with high needs, high stress, and low levels of social support), they differed in their approach to patients and clinical practices. For instance, to improve the delivery of specialized services, several high-completion clinics allocated resources to standardized assessments of patient needs and risk factors at every visit. The low-completion clinics described serving patients with “special concerns” who were not typical clients, seemed to approach clinical care from the providers' perspective, and expressed provider frustration in caring for clients not focused on their own health. The high-completion clinics seemed to follow a more patient-centered approach and were more understanding of and empathetic toward their patients' needs.

Working with HIV involves working with a lot of patients who have mental health, substance abuse problems … stigma … discrimination … its stressful work … a population that doesn’t always focus on their health … it can be very frustrating for care providers to put forth a lot of energy to see patients … ignore or waste the effort of the staff. (Low-completion)

We all know that if someone is not in a stable living environment, housing or they’re concerned about where their next meal is coming from, you know, we definitely try to address the psycho-social and get that stabilized first, before we try to address them taking medicines for their HIV. (High-completion)

Political context. Apart from one clinic that spoke about the lack of political support for its work, the high-completion clinics made few comments on the political context. The low-completion clinics stressed that they followed local, state, and national clinical guidelines and seemed very concerned about monitoring and oversight, as well as about the influence of political appointees who could negatively affect their work.

We are always concerned about funding and policy shifts depending on who is in charge. (Low-completion)

Funding. For both groups, prior MI training was tied to specific funding and tended to be episodic, and the training workshops were brief. The low-completion clinics tied turnover more directly to funding losses than the high-completion clinics, although both groups acknowledged the role of funding in maintaining staff.

So if you haven’t provided every single step of the service as written in the manual, you may not get reimbursed for money that you put out there to pay salaried staff on a project because you forgot to do one particular type of assessment which may not even need to be done, but it’s in that manual that says, “You must do this in order to get complete reimbursement.” (Low-completion)

We received some small amount of funding from the county, but when it really comes to providing services and care, they really do not, you know, they don’t guide us in how to do things. We’re really the leaders in that area. (High-completion)

Discussion

Organizations' completion of implementation strategies is critical to implementation success but is not well understood. This mixed-methods analysis demonstrated differences among clinics in completion of implementation strategies designed to promote MI competence among adolescent HIV providers scaling up TMI. Low- and high-completion clinics similarly perceived MI as a good fit with the population, and both groups acknowledged implementation barriers. However, several factors distinguished high- from low-completion clinics, suggesting that high-completion clinics were more flexible in adapting the EBP to their setting. That is, the high-completion clinics appeared more optimistic about the implementation strategies fitting into their setting, had more innovative ideas about handling implementation barriers, and had a deeper understanding of the EBP and of clients' needs.

Other differentiating EPIS factors may explain this optimistic and flexible mindset, suggesting possible mechanisms of implementation completion and potential intervention strategies. First, while both groups of clinics expressed concerns about outer context funding cuts, low-completion clinics described funding as a primary trigger of turnover and of losing staff who had more experience with the target EBP. This is indicative of a “bridging factor” from the revised EPIS framework that links outer context policies or funding to inner context organizational imperatives (Lengnick-Hall et al., 2021). Although all clinics receive public funding through the Ryan White CARE Act, differences in clinic resources may still affect implementation completion. Second, low-completion clinics seemed more likely to report policy environments that were restrictive and autocratic in how services are delivered and documented, whereas the high-completion clinics appeared more autonomous and had greater flexibility in service delivery. Third, while both groups of clinics reported highly stressful job conditions, high-completion clinics reported more strategies for coping with routine job stress. Fourth, high-completion clinics reported leadership approaches consistent with those that support a positive implementation climate, such as autonomy-supportive leadership, leadership buy-in, and MI champions at multiple levels of management (Aarons et al., 2014).

Implications for Implementation Science and Limitations

These findings have implications for the EPIS framework and for developing new implementation strategies that promote organizations' implementation completion and that can be added to strategies promoting provider competence. In adolescent HIV settings, certain EPIS determinants and mechanisms, specifically in the outer context domain, had lower coding density. These factors may be less relevant to this context, but future studies are needed to confirm this. Alternatively, as in other studies examining EPIS outer context influences, data sources other than staff interviews (e.g., policy analysis, funder interviews) may be necessary to fully explore outer context factors (Aarons et al., 2016; Lui et al., 2021). Notably, bridging factors, such as how policies are translated into contracts and how long contracts last, were raised in relation to staff turnover (Lengnick-Hall et al., 2021). Future studies should query and code more directly for EPIS bridging factors that describe the dynamic interplay between the outer and inner contexts. The clinics also differed clearly in their organizational stance toward new innovations, so it will be helpful to understand organizational profiles and dynamics when beginning to work with organizations to implement evidence-based innovations (Lengnick-Hall et al., 2020). Finally, while previous experience with MI appeared to be more common in high-completion clinics, a previous study demonstrated that objectively coded baseline competency was low across clinics (MacDonell et al., 2019). Nonetheless, high-completion clinics may have had more MI exposure, suggesting that clinics with less exposure may benefit from an introduction to MI before the initial workshop.

One limitation of the current study was its focus on clinics in a research network located primarily in urban settings; the findings may not generalize to other community-based settings or to more rural settings. Future studies could consider a comparative analysis in relation to implementation outcomes such as feasibility, acceptability, or reach (Proctor et al., 2011) and consider additional stages of implementation completion (Saldana, 2014). It is important to note that the current measure of implementation completion focused on the MI training components and did not include other components such as project planning meetings. Also, each component of TMI was given equal weight in the quantitative measure, and future research may clarify whether some components are more important than others for achieving EBP competency (e.g., role-play and feedback vs. coaching). Another possible limitation is the less-than-optimal interrater reliability for initial EPIS coding, likely due to the high number of sub-codes under each primary factor. However, the value of interrater reliability as a metric in qualitative studies is questionable (O’Connor & Joffe, 2020), and the study used rigorous comparative analysis strategies to increase the reliability of its conclusions. Future studies can also consider quantitative predictors of implementation completion, such as baseline MI competency or EPIS quantitative measures (Moullin et al., 2019).

In terms of intervention, the following implementation strategies could be delivered prior to implementation (i.e., in the EPIS Preparation Phase): educate providers about the EBP to ensure a deeper understanding of it; train leaders to be knowledgeable about the EBP and to promote an autonomy-supportive style (Aarons et al., 2017); identify an MI champion at each level of leadership, including middle management (Birken et al., 2018); and strengthen providers' stress management and coping skills (Green et al., 2014). This last issue, providers’ stress management and coping during implementation, is particularly salient for behavioral health providers in the current pandemic (Sklar et al., 2020).

Conclusions

In a national implementation study of TMI in adolescent HIV settings, completion of implementation strategies varied across clinics. This mixed-methods comparative analysis revealed several inner and outer context factors that differentiated high- and low-completion clinics. The leadership and provider factors identified suggest several implementation strategies to promote completion that can be added to strategies to promote competence, including leadership training (e.g., the Leadership and Organizational Change for Implementation intervention; Aarons et al., 2017), provider stress management training (e.g., burnout prevention; Zhang et al., 2020), and developing MI champions at multiple levels of the organizational structure (e.g., opinion leaders; Flodgren et al., 2019). Future research is necessary to replicate these findings outside of academic medical centers.

Author's Note

Karen MacDonell, Center for Translational Behavioral Science, Florida State University, Tallahassee, FL, USA.

Footnotes

Acknowledgements

The authors would like to thank Carolyn Blue, Maurice Bulls, Demetria Cain, Scott Jones, Leah King, Sonia Lee, Sarah Martinez, site PIs, study coordinators, and the passionate adolescent HIV providers.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Eunice Kennedy Shriver National Institute of Child Health and Human Development, Adolescent Medicine Trials Network for HIV/AIDS Interventions (ATN 146, 153) as part of the Scale It Up Program (U19HD089875; PI: Naar). The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. Henna Budhwani was supported by the National Institute of Mental Health (NIMH) under award K01MH116737. Gregory A. Aarons was supported by the US National Institute on Drug Abuse under grant R01DA049891. Additionally, Dr. Aarons serves on the editorial board for Implementation Research and Practice (all decisions on this paper are to be made by another editor).