Abstract

Background

Because substance use and substance use disorders among people with HIV are both prevalent and problematic, improving the integration of substance use treatment within HIV service settings is urgently needed (Azar et al., 2010; Garner et al., 2022; Hartzler et al., 2017; Weaver et al., 2009). Motivational interviewing-based brief interventions (MIBIs) have a strong evidence base for reducing alcohol use in multiple settings and are cost-effective (Jonas et al., 2012; Kaner et al., 2018). HIV service organizations (HSOs) are concerned about their clients’ overall well-being and provide non-medical support services. Although HSOs have not historically addressed substance use, interest in filling this gap is growing. MIBIs may be a good candidate service for HSOs to address this critical need, yet widespread implementation of MIBIs remains limited, which may be partially due to limited information on the potential costs.

The Substance Abuse and Mental Health Services Administration (SAMHSA) has funded Addiction Technology Transfer Centers (ATTCs) to offer free MIBI training to interested organizations. The ATTCs use a gold-standard and widely recognized staff-focused strategy to train individual staff in MIBIs. Although the staff-focused ATTC strategy is viewed as necessary for helping staff learn motivational interviewing, it may be insufficient on its own, especially in settings such as HSOs that have not previously offered similar services. The Substance Abuse Treatment to HIV Care Project was funded to test the Implementation & Sustainment Facilitation (ISF) strategy as an adjunct to the ATTC strategy for improving the implementation of MIBIs in HSOs. The ISF strategy is a team-focused strategy developed to help improve the implementation and sustainment of MIBIs by addressing issues around implementation climate, workflow and quality improvement processes, and organizational support. The ISF strategy significantly improved the consistency and quality of MIBI implementation and decreased clients’ likelihood of using their primary substance at follow-up (Anonymous, 2020). ISF did not affect sustainment of MIBIs. The study also did not find reduced substance use among clients receiving a MIBI from ATTC-only staff. For HSOs that want to add the MIBI, Garner et al. (2020) suggest that the ATTC strategy alone is not sufficient to improve the effectiveness of MIBIs.

In this study, we present novel evidence on the cost and cost-effectiveness of the ISF strategy on both implementation outcomes and client outcomes relevant for federal policymakers. Because SAMHSA pays the ATTCs to promote the widespread dissemination and implementation of services like MIBIs, it would be the likely payer for the ISF strategy as an adjunct to what the ATTCs already provide. In addition to reducing substance use, SAMHSA expects investment in the ATTC strategy to increase the provision of services by HSOs and other organizations, and we provide evidence on whether the addition of ISF is valuable to SAMHSA. Likewise, the Health Resources and Services Administration, which provides substantial funding to HSOs, could also be a potential payer: support for the implementation strategies could flow through its AIDS Education and Training Centers Program.

Beyond the context of our study, few rigorous trials have assessed cost and cost-effectiveness of implementation strategies (Barnett et al., 2020; Eisman et al., 2020; Hoomans & Severens, 2014; Reeves et al., 2019; Roberts et al., 2019). In their review of literature published between 2003 and early 2016, Roberts et al. (2019) found 22 implementation studies that conducted economic evaluations. All 22 studies had poor methodological rigor for the costing element, and the authors noted that justification was rarely provided for the sources and methods of estimating unit costs. This study contributes to the implementation research literature by rigorously estimating the costs of multiple implementation strategies, which helps address the noted methodological quality deficits in other economic evaluations of implementation strategies. We also highlight several methodological considerations for conducting cost-effectiveness analyses of implementation strategies.

Methods

Study design

The full study protocol for this dual-randomized type-2 hybrid trial is available (Garner et al., 2017a, 2017b). Type-2 hybrid trials simultaneously test the effectiveness of an implementation strategy and a clinical intervention. ISF is the novel implementation strategy, and MIBI is the novel clinical service being tested. Thirty-nine HSOs were randomized between the ATTC strategy (n = 19) and the ATTC + ISF strategy (n = 20) (see Figure 1). HSOs were enrolled across three cohorts from January 2015 through August 2017 and were located in the central United States (n = 14), western United States (n = 11), and eastern United States (n = 14). Eligible HSOs had to have at least 100 clients, at least two staff members willing to be prepared to implement a MIBI, and at least one leadership staff member willing to help support the MIBI staff. There were no exclusion criteria.

Dual-randomized type 2 hybrid trial design.

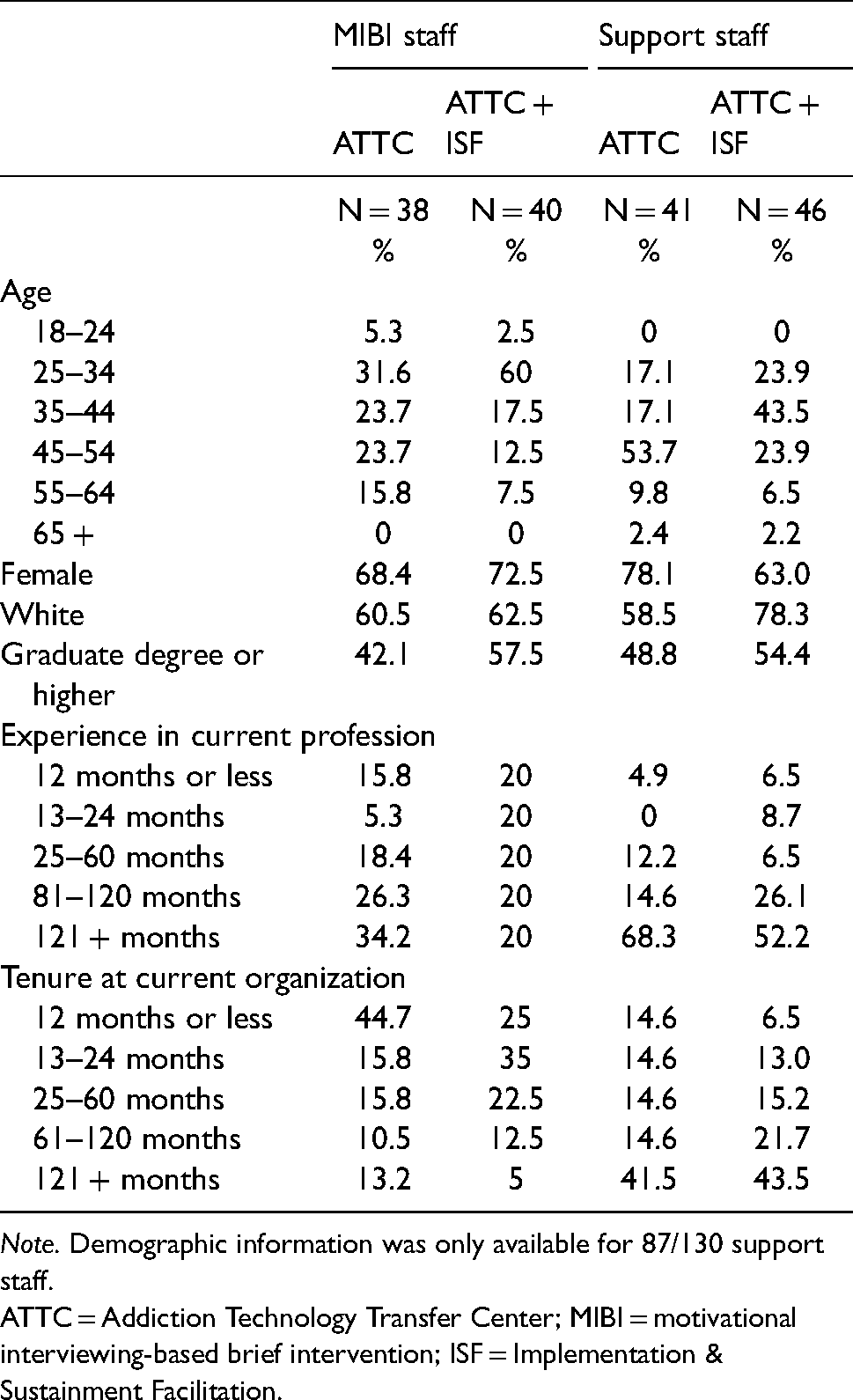

Two staff members from each HSO participated in the ATTC strategy (N = 78), which consisted of five core activities to train and support staff in delivering MIBIs: (1) an online training, (2) an in-person training, (3) individual feedback sessions, (4) MIBI feedback reports, and (5) group consultation meetings. Staff characteristics are presented in Table 1. In ATTC + ISF HSOs, MIBI staff and additional HSO support staff participated in monthly facilitation meetings that helped HSOs prepare to implement the MIBIs and support ongoing implementation. ISF support was provided for 12–18 months and was intended to foster sustained MIBI implementation beyond the period of ISF support. All discrete implementation strategies included in the ATTC and ISF strategies were defined according to the recommendations of Proctor et al. (2011) and Powell et al. (2012) (see Supplemental Appendix Table 1 and Garner et al., 2017b).

Staff characteristics.

Note. Demographic information was only available for 87/130 support staff.

ATTC = Addiction Technology Transfer Center; MIBI = motivational interviewing-based brief intervention; ISF = Implementation & Sustainment Facilitation.

Within each HSO, regardless of ISF status, clients were randomized to either a usual care condition (UC; n = 415) or a UC + MIBI condition (n = 409). UC involved referral to formal addiction treatment, mutual-help services, or both. The MIBI was a brief (e.g., 20–30 min), single intervention to motivate clients to reduce their substance use. Only staff who received the ATTC strategy delivered MIBIs. Client characteristics are presented in Supplemental Appendix Table 2. We do not compare UC clients to UC + MIBI clients within the ATTC-only and ATTC + ISF strategies. This study was approved by the RTI International Institutional Review Board (IRB), and all participating staff and clients provided informed consent.

Cost study

Our micro-costing study is broken into four components, and we follow methods commonly recommended for implementing and reporting economic evaluations (Glick et al., 2007; Husereau et al., 2013; see Supplemental Appendix Table 3). First, we developed a taxonomy of activities for each strategy and intervention. Second, we compiled information on the inputs (i.e., quantity) for each activity and the prices of each input. Third, we multiplied the price for each input by the quantity of inputs to get a cost per activity. Finally, we added up the costs for each activity to estimate the cost per HSO and per staff. We used a payer perspective with a 1-year time horizon. We primarily used activity-based costing. For certain cost inputs that do not vary at the staff level (e.g., time spent by administrative and support staff), we used a top-down approach to spread the costs across staff. Development- and research-related costs were excluded.

For the ATTC strategy, the online training took 5 h to complete. The in-person training content required 16 h per staff member, with two trainers per cohort ($110/h). We extracted the costs of transportation, lodging, meals, and incidentals from invoices. We estimated staff travel time using ticketing information for airline/train travel and Google Maps for individuals who drove. For flight/train travel, we assumed individuals arrived 90 min prior to departure. We imputed the travel-related costs for the trainers using the average travel time and travel costs for the specific cohort. Costs of the training space were obtained from hotel invoices for Cohorts 1 and 2. Cohort 3's training space was provided in-kind, and we used a cost of $152/h. This rate is the average of New York City conference room rates from peerspace.com ($160/h) and spacebase.com ($144/h), obtained from an internet search in December 2019.

Staff recorded one to three practice MIBI sessions, uploaded audio recordings to a website, and received individual feedback sessions. For the first recording, we include the cost of an additional staff member who served as the practice subject. A trained expert ($90/h) rated the recording using the Independent Tape Rater Scale, filled out a printable feedback report, and provided feedback to the staff member during a virtual one-on-one meeting (Martino et al., 2008). The feedback report displayed counts, adherence ratings, and competence ratings for motivational interviewing-consistent and motivational interviewing-inconsistent items. We assumed it took 55 min to complete a rating and 35–60 min to complete a feedback session. We also included $12 per HSO for a conference line and for maintenance for the website used to manage the recordings ($11,328 total, or 70.8 h for a technology specialist at $160/h). Staff also received feedback reports for actual MIBI sessions. A different group of trained raters ($30/h) rated these recordings using the same procedure as in training and provided printable feedback reports. Monthly group consultation meetings were held across 6 months, and we tracked staff attendance. From trainer reports, we assumed that each meeting lasted 45 min.
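The price-times-quantity step of the micro-costing can be illustrated with a minimal Python sketch. The class and function names are hypothetical and introduced only for illustration; the two worked inputs (website maintenance and one practice-session rating) use the rates and quantities stated above.

```python
from dataclasses import dataclass


@dataclass
class CostInput:
    """One resource used by an activity (hypothetical helper type)."""
    name: str
    unit_price: float  # dollars per unit, e.g., per hour
    quantity: float    # number of units, e.g., hours

    @property
    def cost(self) -> float:
        # Cost of one input is its price multiplied by its quantity.
        return self.unit_price * self.quantity


def activity_cost(inputs: list[CostInput]) -> float:
    """Sum price x quantity over every input of an activity."""
    return sum(item.cost for item in inputs)


# Website maintenance: 70.8 h of a technology specialist at $160/h.
website = [CostInput("technology specialist", 160.0, 70.8)]

# One practice-session rating: 55 min of a trained expert at $90/h.
rating = [CostInput("expert rater", 90.0, 55 / 60)]

website_total = activity_cost(website)
rating_total = activity_cost(rating)
print(f"website: ${website_total:,.0f}; one rating: ${rating_total:.2f}")
```

The website total reproduces the $11,328 figure given in the text. Summing such activity costs over all activities would give the per-staff or per-HSO cost.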

The ISF strategy consisted of two core activities to support the ATTC strategy: virtual monthly meetings and an in-person meeting. Non-MIBI support staff also participated in ISF meetings. During these meetings, facilitated by a coach ($90/h), HSO staff reflected on the status of the implementation effort, shared lessons learned, and supported one another. We tracked meeting length and HSO staff attendance at monthly facilitation meetings. For meetings with a missing length, we imputed using the cohort-median time. We allocated the costs of the non-MIBI staff time equally across both MIBI staff at an ATTC + ISF organization. Additionally, we included $12 per HSO for the conference line. The in-person ISF strategy meeting was 4 h long and included imputed costs for the ISF strategy facilitator's travel. We estimated the travel cost for the facilitators by assuming it was a 2.5-day trip ($325/day) and 16 h of travel time (Business Travel News, 2018).

There were no specific activities for UC, and the costs are assumed to be zero. MIBI length was captured with a time-stamped recording. We used within-cohort median imputation for those with missing recording lengths. Through discussion with project and HSO staff, we determined that there were no other discernable activities associated with the MIBI beyond the screening. The screening was embedded within a broader research eligibility instrument, and we did not have accurate time estimates for the screening.

Staff wage estimates were directly obtained for most staff via survey at two time points. We either took the average salary across both surveys or used the reported salary if staff only participated in one survey. If the staff member did not participate in the survey, we imputed their salary using median annual salary estimates from the Bureau of Labor Statistics for one of three categories: Social and Human Service Assistants (Occupation code 21-1093, $33,750) for MIBI staff, Social and Community Service Managers (Occupation code 11-9151, $65,320) for mid-level administrative staff, or Medical and Health Services Managers (Occupation code 11-9111, $99,730) for executive directors (BLS 2018). All salaries were loaded assuming a fringe benefit and indirect rate of 30%, and the hourly rate was obtained by dividing by 2,000 h (Bray et al., 2014; Johnson et al., 2017). We multiplied the hourly salary for that staff member by the hours they spent on each activity. All costs were adjusted to 2018 U.S. dollars using the Consumer Price Index.
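The wage loading can be sketched as follows. This is an illustration under one assumption not spelled out in the text: that "loaded" means multiplying the annual salary by 1.30 before dividing by 2,000 h. The function names are hypothetical.

```python
def loaded_hourly_rate(annual_salary: float,
                       load_rate: float = 0.30,
                       hours_per_year: float = 2000.0) -> float:
    """Hourly rate after adding fringe and indirect costs.

    Assumes the 30% load is applied multiplicatively to salary.
    """
    return annual_salary * (1.0 + load_rate) / hours_per_year


def staff_activity_cost(annual_salary: float, hours: float) -> float:
    """Cost of one staff member's time on one activity."""
    return loaded_hourly_rate(annual_salary) * hours


# Imputed salary for a mid-level administrative staff member
# (Social and Community Service Managers, $65,320).
rate = loaded_hourly_rate(65_320)

# Illustrative only: 16 h is the length of the in-person training.
training_cost = staff_activity_cost(65_320, 16)
print(f"loaded rate: ${rate:.2f}/h; 16 h of time: ${training_cost:.2f}")
```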

Effectiveness outcomes

We assess two implementation outcomes and one client health outcome. Implementation outcome measures include the number of MIBIs delivered (i.e., consistency) and the average quality of MIBIs delivered. MIBI delivery was tracked using project administrative data. Ninety-four percent of MIBIs (384/409) had a time-stamped audio recording, and there were no differences in missing recordings across study conditions. To calculate the MIBI quality score, trained raters scored each recorded MIBI on 10 distinct motivational interviewing-consistent items, using 7-point scales for adherence and competence using the Independent Tape Rater Scale. The product of the adherence and competence ratings (range: 1–49) was then summed across the 10 motivational interviewing-consistent items to determine the MIBI quality score (range: 10–490).
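The scoring rule described above can be written as a short Python sketch. The function name and input layout are hypothetical; only the arithmetic (adherence times competence, summed over 10 items rated on 7-point scales) comes from the text.

```python
def mibi_quality_score(adherence: list[int], competence: list[int]) -> int:
    """Sum of adherence x competence over the 10 MI-consistent items."""
    if len(adherence) != 10 or len(competence) != 10:
        raise ValueError("expected ratings for exactly 10 items")
    for value in adherence + competence:
        if not 1 <= value <= 7:
            raise ValueError("ratings must be on a 1-7 scale")
    # Each item contributes a product between 1 and 49.
    return sum(a * c for a, c in zip(adherence, competence))


lowest = mibi_quality_score([1] * 10, [1] * 10)
highest = mibi_quality_score([7] * 10, [7] * 10)
print(lowest, highest)
```

The two calls confirm the stated score range of 10 to 490.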

For the client health outcome, we aggregate clients’ days of abstinence up to the staff level, following Garner et al. (2018). Clients self-reported days of abstinence in the previous 4 weeks at baseline and follow-up. A client-level assessment of the impact of ATTC + ISF on abstinence may be inappropriate here. Because many of the ATTC and ISF costs occur before the delivery of MIBIs, they are fixed costs. Fixed costs are often excluded from cost-effectiveness analysis. Because the ISF strategy intended to increase the number and quality of MIBIs, excluding relevant fixed costs could produce misleading cost-effectiveness estimates. Aggregating to the staff level better aligns the outcome as the impact of the staff's performance on their clients and the costs as a direct input. We also argue that the sum of days is a better metric for capturing the full impact of the staff's activities relative to average days. Using a pooled average will not capture the total impact if the ATTC + ISF staff deliver more MIBIs.

Following Garner et al. (2018), we also convert aggregate client days of abstinence to quality-adjusted life-years (QALYs) by dividing days of abstinence by 365 and multiplying by a disability weight of 0.373 reported in Salomon et al. (2015) for moderate alcohol use disorder from the cross-national Global Burden of Disease study. We use alcohol-specific disability weights because alcohol was the most reported primary substance in the trial.
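As an illustration of this conversion, the sketch below applies the stated formula (days divided by 365, multiplied by the disability weight). The function name is hypothetical, and the 59.24-day input is the incremental difference reported in the Results, used here only as a worked example.

```python
DAYS_PER_YEAR = 365
WEIGHT_MODERATE_AUD = 0.373  # Salomon et al. (2015), moderate alcohol use disorder


def days_abstinent_to_qalys(days: float,
                            weight: float = WEIGHT_MODERATE_AUD) -> float:
    """Convert aggregate days of abstinence to QALYs."""
    return days / DAYS_PER_YEAR * weight


# Incremental days abstinent reported in the Results (about 0.06 QALYs).
qalys = days_abstinent_to_qalys(59.24)
print(f"{qalys:.4f} QALYs")
```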

Cost-effectiveness analysis

We estimated differences in costs and outcomes between study conditions using multilevel generalized linear models. For the cost outcomes, we used a gamma distribution and log link. For the remaining outcomes, we used a normal distribution and identity link. All models included an indicator for the ATTC + ISF condition, indicators for study cohort, and HSO-level random effects. Models were also weighted using inverse-propensity scores calculated from a logit model with staff characteristics.

We then calculated incremental cost-effectiveness ratios (ICERs), which are the ratio of the difference in costs between the two conditions to the difference in outcomes. The ICER indicates the additional resources that must be invested to obtain one additional unit of the outcome; a strategy is considered cost-effective when its ICER is at or below the decision-maker's willingness to pay. Because there are differences in the scale of the three non-cost outcome variables, we also standardized each outcome and calculated incremental differences and ICERs based on the standardized variables. All analyses were conducted using Stata 16 (StataCorp, 2019).

We also conducted three sensitivity analyses, which test how changes to key variables affect the main results. First, to estimate statistical uncertainty for the non-QALY outcomes, we calculated cost-effectiveness acceptability curves using a probabilistic sensitivity analysis. We used 1,000 bootstrapped samples from the original study data to create an empirical distribution of the expected costs and outcomes. Across the replications, we assessed whether the ATTC + ISF strategy had the highest probability of being optimal for a range of willingness to pay values. To calculate a confidence interval for the QALY outcome, we used Salomon et al.’s (2015) high-range weight (0.508) for the lower limit and their low-range weight (0.248) for the upper limit.
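The general idea behind a bootstrapped cost-effectiveness acceptability curve can be sketched in Python as follows. This is a simplified illustration, not the study's procedure: it resamples staff within each arm, ignores the HSO-level clustering, cohort indicators, and propensity weights used in the actual models, and runs on made-up data. All names and values are hypothetical.

```python
import random
from statistics import mean


def ceac(costs_a, effects_a, costs_b, effects_b, wtp_values,
         n_boot=1000, seed=0):
    """Share of bootstrap replicates in which arm B has a positive
    incremental net benefit over arm A at each willingness to pay."""
    rng = random.Random(seed)
    deltas = []
    for _ in range(n_boot):
        # Resample units with replacement, keeping each unit's
        # cost and effect paired.
        idx_a = [rng.randrange(len(costs_a)) for _ in costs_a]
        idx_b = [rng.randrange(len(costs_b)) for _ in costs_b]
        d_cost = mean(costs_b[i] for i in idx_b) - mean(costs_a[i] for i in idx_a)
        d_eff = mean(effects_b[i] for i in idx_b) - mean(effects_a[i] for i in idx_a)
        deltas.append((d_cost, d_eff))
    # Arm B is preferred in a replicate when wtp * d_eff - d_cost > 0.
    return [sum(wtp * d_eff - d_cost > 0 for d_cost, d_eff in deltas) / n_boot
            for wtp in wtp_values]


# Made-up per-staff costs (dollars) and MIBIs delivered, for illustration.
costs_a, effects_a = [2500, 2800, 3000, 3100, 2900, 2700], [2, 3, 4, 3, 2, 4]
costs_b, effects_b = [5000, 5400, 5600, 5200, 5500, 5300], [6, 7, 8, 7, 6, 8]

wtp_grid = [0, 250, 500, 750, 1000, 1500]
curve = ceac(costs_a, effects_a, costs_b, effects_b, wtp_grid)
for wtp, prob in zip(wtp_grid, curve):
    print(f"WTP ${wtp}: P(cost-effective) = {prob:.2f}")
```

Plotting the probabilities against the willingness-to-pay grid gives the acceptability curve.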

Second, we assess a change in the outcome model for days of abstinence. Our preferred definition does not incorporate baseline substance use for which traditional effectiveness studies often control. Therefore, we tested two additional models that include baseline substance use. First, we re-estimated the model with the average baseline days of abstinence across all clients of a staff member, included as a covariate. Including the sum of days of abstinence at baseline is not preferable because ATTC + ISF staff delivered more MIBIs. The imbalance due to the implementation strategy may condition out some of the treatment effect. Second, we changed the outcome definition itself. We calculated the difference in days of abstinence between baseline and follow-up for each client and then added up the differences for all clients of a staff member (i.e., the change in days of abstinence from baseline to follow-up). In a third sensitivity analysis, we assess how the inclusion or exclusion of certain costs affect the results.

Results

Costs per HSO

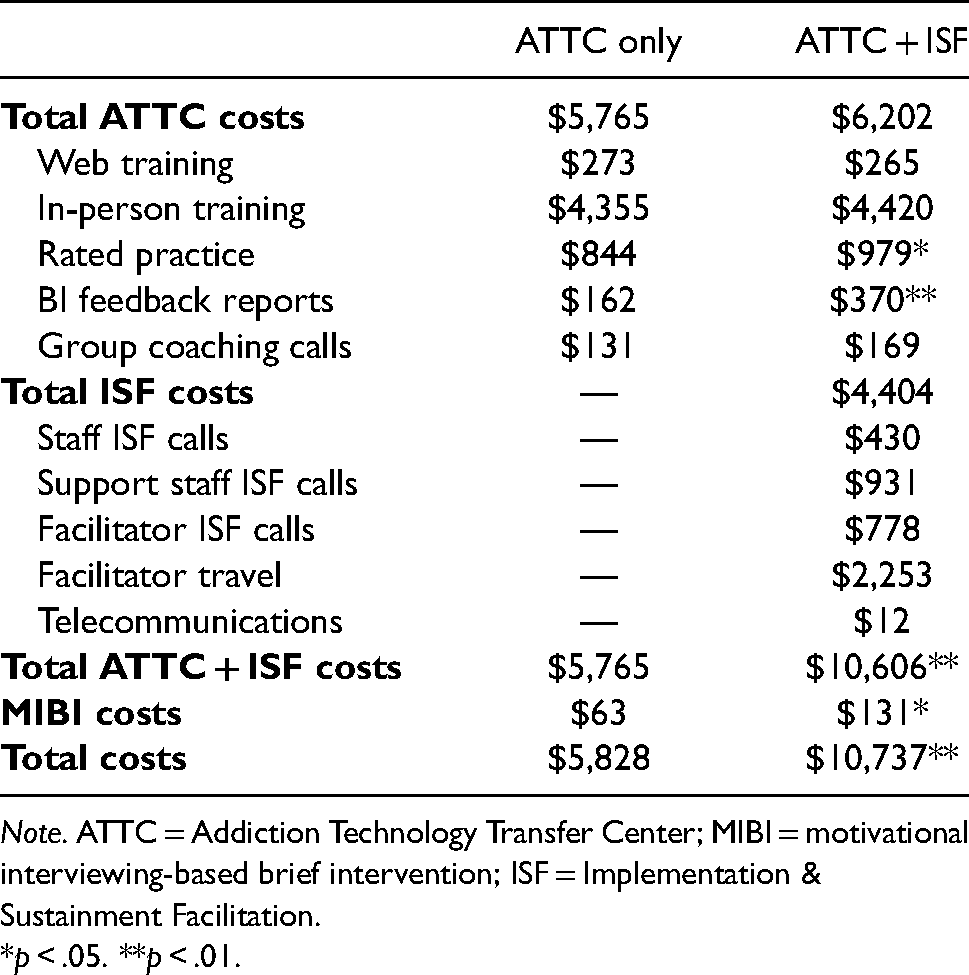

Per-HSO costs for the ATTC-only and ATTC + ISF conditions are shown in the second and third columns of Table 2. Overall, the cost per HSO was $5,828 for the ATTC-only condition and $10,737 for the ATTC + ISF condition. The difference in costs ($4,909) between conditions was statistically significant.

Average cost per HSO.

Note. ATTC = Addiction Technology Transfer Center; MIBI = motivational interviewing-based brief intervention; ISF = Implementation & Sustainment Facilitation.

*p < .05. **p < .01.

The costs for all ATTC-related activities were $437 higher in the ATTC + ISF condition ($6,202) than in the ATTC-only condition ($5,765), but the difference was not significant. Most ATTC strategy costs—76% in the ATTC-only condition and 71% in the ATTC + ISF condition—were driven by the in-person training. There was a statistically significant difference of approximately $135 between the conditions for the preparation-phase rated practice sessions, indicating that the ATTC + ISF staff were more engaged in this facet of the ATTC strategy. Similarly, the MIBI feedback report costs were $208 greater in the ATTC + ISF condition. This increase can also be seen in the cost of the MIBIs themselves, which cost $63 per HSO in the ATTC-only condition and $131 per HSO in the ATTC + ISF condition (a 110% increase). The MIBI delivery costs represent a small fraction of the overall costs.

The average cost per HSO for the ISF strategy alone was $4,404. We present the separate labor and indirect costs for the ISF instead of the specific activities, as there were only two activities. Of the ISF cost components, travel costs for the facilitator were the largest, at $2,253 per HSO, making up 51% of total ISF costs. Labor costs accounted for the remaining 49% of ISF costs, with support staff call costs ($931, or 21%) being the highest sub-category. MIBI staff time accounted for only 10% of the ISF strategy costs.

Base case cost-effectiveness results

Adjusted means and incremental cost-effectiveness ratios at the staff level are presented in Table 3. The incremental cost per staff was $2,457. The incremental effectiveness was 3.73 for the number of MIBIs delivered and 61.45 for the average quality score, resulting in ICERs of $659 and $40, respectively. The ICERs indicate that it would cost $659 to increase the number of MIBIs delivered by one using the ATTC + ISF strategy and $40 to increase the average quality score by one point. For the base case client outcome, we found an incremental increase of 59.24 days abstinent at follow-up and an ICER of $41. The right two columns of Table 3 present the incremental standardized differences for the outcomes and the ICERs based on the standardized differences. ATTC + ISF had similar effects across all three outcomes: the number of MIBIs delivered increased by 0.72 standard deviations, quality increased by 0.66 standard deviations, and days abstinent increased by 0.71 standard deviations. The resulting ICERs were $3,750 for quality and approximately $3,400 for number of MIBIs and days abstinent. In terms of QALYs, the 59.24 days abstinent at follow-up converts to 0.06 QALYs and an ICER of $40,578.
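The reported natural-unit ICERs can be recomputed from the incremental cost and incremental effects above. The sketch below is a simple check of the ratio, with hypothetical names; small differences from published values would reflect rounding of the inputs.

```python
def icer(delta_cost: float, delta_effect: float) -> float:
    """Incremental cost divided by incremental effect."""
    if delta_effect == 0:
        raise ZeroDivisionError("ICER is undefined when the effect difference is zero")
    return delta_cost / delta_effect


INCREMENTAL_COST = 2457.0  # per staff, ATTC + ISF versus ATTC-only

increments = {
    "MIBIs delivered": 3.73,
    "quality score": 61.45,
    "days abstinent": 59.24,
}

icers = {name: icer(INCREMENTAL_COST, diff) for name, diff in increments.items()}
for name, value in icers.items():
    print(f"{name}: ${value:,.0f} per unit")
```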

Adjusted means and ICERs.

Note. ATTC = Addiction Technology Transfer Center; ICER = incremental cost-effectiveness ratio; ISF = Implementation & Sustainment Facilitation. Standard errors in parentheses. # indicates the base case outcome.

*p < .05. **p < .01.

Uncertainty and sensitivity analysis results

To assess precision for the ICERs, we present cost-effectiveness acceptability curves for each outcome in Figures 2–4. Figure 2 shows a high confidence that ATTC + ISF is cost-effective as willingness to pay approaches $1,500 for an additional MIBI and a lower chance that ATTC + ISF is cost-effective with a willingness to pay of less than $500. Figure 3 shows a high confidence that ATTC + ISF is cost-effective at a willingness to pay of around $100 for a one-point quality improvement and that it is unlikely to be cost-effective with a willingness to pay of less than $25. Lastly, per Figure 4, the base case cost-effectiveness acceptability curve (solid black line) shows that ATTC + ISF is highly likely to be cost-effective near a willingness to pay of $90 for a one-day increase in days abstinent at follow-up. The confidence interval for the QALY ICER from days of abstinence is $29,795 to $61,031.

Cost-effectiveness acceptability curve for consistency.

Cost-effectiveness acceptability curve for quality.

Cost-effectiveness acceptability curve for days abstinent at follow-up.

Next, we focus specifically on the sensitivity analysis that changes the outcome model for days abstinent to include baseline substance use. The bottom two rows of Table 3 present incremental differences and ICERs for these alternative models. When controlling for days abstinent at baseline, the incremental difference is slightly smaller at 45.4 days, with an ICER of $54. In Figure 4, the profile of the cost-effectiveness acceptability curve with baseline substance use as a covariate is similar to the base case, and ATTC + ISF is cost-effective near a willingness to pay of $125. In terms of QALYs, we estimated an ICER of $52,949 (95% CI: $38,878, $79,636).

When using the sum of the difference in days abstinent, the incremental difference is approximately one-third of the base case outcome (18.63), and the ICER is approximately three times larger ($132). In Figure 4, the profile is much different for the difference in days—ATTC + ISF is highly likely to be cost-effective near a willingness to pay of $300 and is unlikely to be cost-effective with willingness to pay of less than $100. In terms of QALYs, we estimated an ICER of $129,032 (95% CI: $94,742, $194,068).

Next, we assessed how varying certain cost components affects the results. In online Supplemental Appendix Table 4, we aggregated costs to the organization level and assessed differences across cohorts. We note differences of $1,000 to $2,000 in total costs across cohort-condition averages. As a sensitivity analysis, we excluded travel costs to account for the fact that each strategy could be delivered virtually; travel costs account for more than 45% of the total costs, and travel costs drove variability across cohorts (see online Supplemental Appendix Table 5). The ATTC + ISF strategy costs $2,562 per staff, excluding travel, and the ATTC-only strategy costs $1,265, yielding a difference of $1,297. Revised ICERs that exclude travel costs are $346 for the number of MIBIs delivered, $21 for quality, and $22 per days abstinent or $21,424 per QALY.

Finally, we lacked accurate time estimates for the screening, which was embedded within a broader research eligibility instrument. Based on discussions with MIBI staff and the research team, we anticipate that these screenings could have taken 1–5 min each. A 1 min screen would increase the incremental cost by approximately $4, and a 5 min screen would increase the incremental cost by approximately $19. The ICER for the number of MIBIs delivered would increase from $659 to between $660 and $664, a change of $5 at most, and the ICERs for the other two outcomes are relatively unchanged from their base case values.

Discussion

This study examined the cost and cost-effectiveness of the ISF strategy as an adjunct to the ATTC strategy for implementing MIBIs for substance use in HSOs across the United States. We found that the ATTC strategy costs approximately $2,915 per staff and that the ISF strategy added $2,457 per staff. With our base case scenario ICERs, we found that ATTC + ISF was cost-effective for the number of MIBIs delivered at a willingness to pay of $659, for quality at a willingness to pay of $40, and for days abstinent at a willingness to pay of $41 or $40,578 in QALYs. In sensitivity analyses for the non-QALY outcomes, we found high certainty of cost-effectiveness at approximately twice the ICERs.

There is no recognized or recommended threshold for assessing cost-effectiveness of implementation outcomes. One potential pragmatic threshold that policymakers could reference is the existing reimbursement rate for MIBIs, which is about $50 per MIBI (SAMHSA, 2020). If it costs more than the available reimbursement rate to deliver an additional MIBI, ATTC + ISF may not be worth the investment from a payer or provider perspective. It is unlikely that ATTC + ISF would be deemed cost-effective for consistency, given that the base case ICER is well above $50 and that Figure 2 shows a low probability of cost-effectiveness at that willingness to pay.

The quality ICER does fall below $50, and Figure 3 shows a 71% chance that ATTC + ISF is cost-effective at a willingness to pay of $50. However, a one-unit increase in quality may not be meaningful. If policymakers are interested in increasing quality by a standard deviation, for example, their willingness to pay would need to be much higher for ATTC + ISF to be cost-effective (ICER = $3,750). As shown in Table 3, there is a similar ICER for a standard deviation increase in consistency (ICER = $3,411), suggesting similar cost-effectiveness for the implementation outcomes in standardized units.

For the days abstinent outcome, it is more useful to focus on the QALY ICER for policy relevance. As with the implementation outcomes, there is no consensus on the relevant threshold for QALYs. One approach is to use a range of thresholds ($50,000, $100,000, and $200,000 per QALY); another suggests one to two times the per capita GDP, which would be $62,795 to $125,590 per QALY (Neumann et al., 2014; Marseille et al., 2015; World Bank, 2018). Our base case QALY ICER falls below $50,000, and the upper bound of its confidence interval falls below $100,000, which suggests that ATTC + ISF may be considered cost-effective across several potential thresholds. This conclusion largely holds across the sensitivity analyses that control for baseline substance use.

Our findings have several implications for policymakers. First, the ISF strategy clearly improved both quality and client health (Anonymous, 2020). We also found the ISF strategy increased engagement in ATTC-related activities. We urge SAMHSA and other payers to consider adding the ISF strategy as an adjunct service to the ATTC strategy, as there was strong evidence of cost-effectiveness for two of the study outcomes. HSOs have historically relied on federal funding from the Ryan White HIV/AIDS Program, administered by the Health Resources and Services Administration, to operate (Charles et al., 2015; KFF, 2020). Although core medical services are more likely to be billable to insurers, staff who would provide MIBIs are typically case managers who do not generally provide billable services. MIBIs are a billable service and thus offer HSOs a potential pathway to revenue generation in addition to addressing an unmet need among their population. We did not explore reimbursement and financial sustainability of MIBIs among the HSOs, which is an important area for future research.

Second, we note that the cost-effectiveness case for consistency was inherently limited by this dual-randomized type-2 hybrid trial design. Because the trial simultaneously tested the effectiveness of the implementation strategies and the effectiveness of the clinical intervention, MIBI staff were asked to at least initially prioritize quality over quantity and therefore to deliver only three MIBIs per month. Low recruitment rates will usually lead to a determination of not cost-effective because of the relatively large cost inputs that went into training and supporting the MIBI staff. Although we likely cannot conclude in this trial that ATTC + ISF was cost-effective specifically for consistency, it could be cost-effective with scaled-up recruitment practices or in higher-volume HSOs.

We also note that our models consider each outcome independently. Some frameworks consider the outcomes jointly, which allows policymakers to assess combinations of willingness to pay across the outcomes that are cost-effective (Hoch et al., 2002; Negrin et al., 2006). Following Nystrand et al. (2021), who compare QALY and non-QALY outcomes, we graph the relationship between the consistency and QALY outcomes in Supplemental Appendix Figure 1. Even if policymakers are only willing to pay $50 for an additional MIBI, ATTC + ISF is cost-effective overall if they are willing to pay $37,529 for an additional QALY. Assessing the outcomes together provides an even stronger case that ATTC + ISF is overall cost-effective, even if one outcome independently may not appear so.
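One simple way to read the joint assessment is as a combined net-benefit condition: the strategy breaks even when the value placed on the additional MIBIs plus the value placed on the additional QALYs equals the incremental cost. The Python sketch below solves that condition for the QALY threshold. It is an assumption about the form of the calculation, not a reproduction of the method behind Supplemental Appendix Figure 1, and the function name is hypothetical.

```python
def required_wtp_per_qaly(delta_cost: float, delta_mibis: float,
                          delta_qalys: float, wtp_per_mibi: float) -> float:
    """QALY willingness to pay at which combined net benefit is zero.

    Solves wtp_per_mibi * delta_mibis + wtp_qaly * delta_qalys = delta_cost.
    """
    return (delta_cost - wtp_per_mibi * delta_mibis) / delta_qalys


# Inputs reported in the Results; QALYs from the days-abstinent conversion.
delta_cost = 2457.0
delta_mibis = 3.73
delta_qalys = 59.24 / 365 * 0.373

threshold = required_wtp_per_qaly(delta_cost, delta_mibis, delta_qalys,
                                  wtp_per_mibi=50.0)
print(f"required willingness to pay: ${threshold:,.0f} per QALY")
```

With rounded inputs, the result lands within a fraction of a percent of the $37,529 stated above, which suggests the linear reading is at least a reasonable approximation.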

Third, we noted a large portion of the costs and variability across cohorts was attributable to travel costs. Future implementations should consider virtual training options, which would incur zero travel costs and thus have the potential to significantly reduce costs overall.

We also make several contributions to the implementation and economic evaluation literature. First, we added detail to a growing literature on economic evaluation of implementation strategies. Since the Roberts et al. (2019) review, we identified two studies with an economic evaluation of an implementation strategy for substance use disorder, and the ATTC + ISF costs are similar to or lower than those in both studies. One study (Garner et al., 2018) tested the cost-effectiveness of pay-for-performance as an addition to an implementation-as-usual training strategy for the Adolescent Community Reinforcement Approach. Per-HSO costs were approximately five times higher for pay-for-performance and training in that study than for ATTC + ISF; however, adding pay-for-performance only increased costs by 5%, as opposed to the approximately 80% increase in costs from ISF. Our main cost estimates per HSO were overall higher than those in another study by Herman et al. (2020) but showed a lower relative cost increase. Herman et al. (2020) tested an implementation support system for a program to delay initiation of alcohol and marijuana use in middle school students. They found that the usual approach costs $829 per site and that the implementation strategy costs $5,424 per site, a 554% increase. Excluding travel costs from our estimates would bring the ATTC + ISF costs level with Herman et al.’s (2020) approach.

In terms of cost-effectiveness, pay-for-performance (Garner et al., 2018) yielded increases in consistency similar to those of ATTC + ISF (116% increase, ICER = $333) and larger increases in quality (325% increase, ICER = $453) and days abstinent (325% increase, ICER = $8). For ATTC + ISF, we reported a 114% increase in MIBIs delivered (ICER = $659), a 61% increase in quality (ICER = $40), and a 141% increase in days abstinent (ICER = $41). The ATTC + ISF ICERs are also closer to the pay-for-performance ICERs when travel costs are excluded. Herman et al. (2020) did not report tests of effectiveness or cost-effectiveness.

As a second implication for researchers, we highlight a challenge of how best to balance (if at all) the prioritization of implementation quality and implementation quantity. Based on our experience, we recommend that, when planning a dual-randomized hybrid trial, researchers consider the plausible range of incremental differences in outcomes and costs, as well as whether that range might lead to a cost-effective result. Although implementation costs and outcomes are often unknown in advance, using hypothetical examples could help inform design-related decisions. Supplemental Appendix Table 6 provides a hypothetical example for the current trial, showing a range of ICERs calculated from three different incremental cost differences ($1,500, $3,000, and $4,500) and the incremental number of MIBIs (5–100). This type of exercise shows that an increase of 30 MIBIs would be needed to be cost-effective at an incremental cost of $1,500, and 90 MIBIs would be needed for an incremental cost of $4,500. If researchers do not prospectively think a 30 MIBI increase is plausible, the implementation strategy may need to be augmented to minimize costs.
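The kind of planning exercise described above can be sketched in a few lines of Python. This is a minimal illustration, not a reproduction of Supplemental Appendix Table 6: the three incremental cost levels and the 5–100 MIBI range come from the text, while the step of 5 MIBIs, the function names, and the $50 willingness-to-pay threshold per additional MIBI are assumptions (the threshold is the value mentioned earlier in the discussion and is consistent with the 30- and 90-MIBI break-even points).

```python
from math import ceil

INCREMENTAL_COSTS = (1500, 3000, 4500)  # incremental cost differences named in the text (USD)
INCREMENTAL_MIBIS = range(5, 101, 5)    # 5-100 additional MIBIs; the step of 5 is an assumption
WTP_PER_MIBI = 50                       # assumed willingness to pay per additional MIBI (USD)


def icer(incremental_cost, incremental_effect):
    """Incremental cost-effectiveness ratio: extra cost per extra unit of outcome."""
    if incremental_effect <= 0:
        raise ValueError("incremental effect must be positive")
    return incremental_cost / incremental_effect


def break_even_effect(incremental_cost, wtp):
    """Smallest whole-number effect at which the ICER is at or below the threshold."""
    return ceil(incremental_cost / wtp)


def icer_grid(costs=INCREMENTAL_COSTS, effects=INCREMENTAL_MIBIS):
    """Map each (incremental cost, incremental effect) pair to its ICER."""
    return {(c, e): icer(c, e) for c in costs for e in effects}


if __name__ == "__main__":
    grid = icer_grid()
    for cost in INCREMENTAL_COSTS:
        needed = break_even_effect(cost, WTP_PER_MIBI)
        print(f"incremental cost ${cost:,}: break-even at {needed} additional MIBIs")
        for mibis in (10, 30, 60, 90):
            print(f"  {mibis:>3} MIBIs -> ICER ${grid[(cost, mibis)]:,.2f}")
```

Running such a grid before the trial makes the trade-off explicit: researchers can compare the break-even number of MIBIs at each cost level against what they consider a plausible effect of the implementation strategy.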

As a final implication, we highlight a methodological challenge in defining the client health outcome. There is no clear guidance on how to handle client outcomes when assessing implementation strategies. Conducting a client-level analysis in our context would necessitate excluding a large portion of the costs incurred to train MIBI staff and prepare the HSO for MIBI delivery. Aggregating client outcomes to the staff level can address that concern, but there is no strongly established precedent for doing this, nor for using the sum of the outcome instead of the average. Lastly, we noted that client-level analyses often account for baseline substance use. Controlling for baseline substance use as a covariate produced a result similar to the base case, but using the difference in days between baseline and follow-up produced a larger ICER. Although ATTC + ISF is still likely cost-effective under that specification, the difference is noticeable. We urge other researchers to carefully consider how they operationalize client outcomes in economic evaluations of implementation strategies.

Our study is subject to several limitations. First, as previously discussed, we did not conduct universal screening, and staff sought to implement only three MIBIs per month. Although necessary for the project's effectiveness aim, this artificially low target may have resulted in an artificially high cost per staff because the denominator—number of clients receiving a MIBI—was low, or it may have failed to capture the full implications. Second, we lacked data on the number of clients screened and could not quantify costs to the organization related to screening, nor a cost per positive screen. Third, our estimated costs for the ATTC + ISF strategy may reflect a lower bound due to potential additional meetings held for that condition, which we did not capture in our tracking system. Fourth, some cost elements relied on reports from project staff and participants and are subject to recall bias.

Conclusions

We believe that at least three important conclusions should be drawn from our study. First, our study offers comprehensive, novel evidence on the cost-effectiveness of ATTC + ISF that suggests the ISF strategy can improve the integration of substance use services within HSOs if scaled up to reach more clients. Second, our study adds rigorous cost estimates from a dual-randomized type-2 hybrid trial to a literature with relatively poor methodological quality to date. Third, our study highlights critical challenges to conducting cost-effectiveness analysis as part of hybrid trials, especially hybrid trials simultaneously testing implementation strategies and clinical interventions. We hope that our study and its conclusions benefit future implementation research and practice, and that these benefits are not limited to work focused on our specific niche. Rather, we believe our study and its conclusions may be generalized and relevant to most (if not all) implementation research and practice seeking to include a rigorous economic component.

Supplemental Material

Supplemental material, sj-docx-1-irp-10.1177_26334895221089266 for The implementation & sustainment facilitation (ISF) strategy: Cost and cost-effectiveness results from a 39-site cluster randomized trial integrating substance use services in community-based HIV service organizations by Jesse M. Hinde, Bryan R. Garner, Colleen J. Watson, Rasika Ramanan, Elizabeth L. Ball and Stephen J. Tueller in Implementation Research and Practice

Footnotes

Acknowledgements

This work was supported by the National Institute on Drug Abuse (NIDA; R01DA038146; PI Garner). NIDA had no role in the design of this study and will not have any role during its execution, analyses, interpretation of the data, or decision to submit results. The content is solely the responsibility of the authors and does not necessarily represent the official views of the government. The authors also acknowledge Alexander Cowell, William Dowd, Brendan Wedehase, Mike Bradshaw, Marianne Kluckman, Alyssa Toro, and the rest of the SAT2HIV Project team.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute on Drug Abuse (grant number R01DA038146).

Supplemental material

Supplemental material for this article is available online.

Trial registration

ClinicalTrials.gov: NCT02495402, https://clinicaltrials.gov/ct2/show/NCT02495402, and ClinicalTrials.gov: NCT03120598.

References
