Abstract

Background

Most evidence-based treatments (EBTs) for posttraumatic stress disorder (PTSD) and anxiety disorders include exposure; however, in community settings, the implementation of exposure lags behind that of other EBT components. Clinician-level determinants have consistently been implicated as barriers to exposure implementation, but few organizational determinants have been studied. The current study examines an organization-level determinant (implementation climate) and clinician-level determinants (demographic and background factors) as predictors of attitudes toward exposure and of changes in those attitudes following training.

Method

Clinicians (n = 197) completed a 3-day training followed by 6 months of twice-monthly consultation. Clinicians were trained in cognitive behavioral therapy (CBT) for anxiety, depression, and behavior problems, and in trauma-focused CBT (TF-CBT). Demographic and background information, implementation climate, and attitudes toward exposure were assessed in a pre-training survey; attitudes were reassessed post-consultation. Implementation climate was examined at both the clinician level and the aggregated group level.

Results

Attitudes toward exposure significantly improved from pre-training to post-consultation (t(193) = 9.9, p < .001; d = 0.71). Clinician-level implementation climate scores did not predict more positive attitudes at pre-training (p > .05) but did predict more positive attitudes at post-consultation (β = −2.46; p < .05) and greater changes in those attitudes (β = 2.28; p < .05). Group-level implementation climate scores did not predict attitudes at pre-training, post-consultation, or changes in attitudes (all ps > .05). Higher self-reported frequency of CBT use was associated with more positive attitudes at pre-training (β = −0.81; p < .05), but no clinician demographic or background determinants were associated with attitudes at post-consultation or with changes in attitudes (all ps > .05).

Conclusions

Clinician perceptions of implementation climate predicted greater improvement in attitudes toward exposure following EBT training and consultation. Findings suggest that organizational determinants outside of training may influence changes in clinicians’ attitudes. Training in four EBTs, only two of which include exposure as a component, resulted in positive changes in clinicians’ attitudes toward exposure. This suggests that non-specialty trainings can be effective at changing attitudes, which may facilitate scale-up.

Keywords

About 30% of youth in the United States have an anxiety disorder or posttraumatic stress disorder (PTSD; Merikangas et al., 2010). Exposure therapy (“exposure”), a treatment component of cognitive behavioral therapy (CBT) that involves approaching feared stimuli, is a key ingredient of evidence-based treatments (EBTs) for anxiety and PTSD. Exposure is included in 100% of PTSD treatment protocols (Borntrager et al., 2013) and nearly 90% of anxiety treatment protocols (Higa-McMillan et al., 2016). There is strong evidence for the efficacy of exposure in treating anxiety disorders and PTSD (Dorsey et al., 2017; Higa-McMillan et al., 2016). In treatments for anxiety disorders, the introduction of exposure tasks is associated with acceleration in treatment progress (Peris et al., 2015), larger treatment effect

A primary barrier to the implementation of exposure therapy in community mental health (CMH) settings is a lack of clinician training (Reid et al., 2017a, 2017b; Wolitzky-Taylor et al., 2018). Most clinicians receive limited or no training in exposure and report difficulty finding training opportunities (Reid et al., 2017a, 2017b; Reid et al., 2018; Wolitzky-Taylor et al., 2019). Furthermore, a one-time training may not produce sufficient improvements in attitudes, knowledge, and competency if it is not followed by ongoing consultation (Foa et al., 2020; Frank et al., 2020). A recent meta-analysis found that trainings were effective in changing knowledge, attitudes, self-efficacy, intentions to use, and use of exposure (Trivasse et al., 2020). However, studies included in this meta-analysis trained clinicians in only one EBT containing exposure, and many trainings explicitly focused on changing attitudes toward exposure. While these trainings were effective, they are limited in scope and costly, which may limit the ability to scale up trainings to meet the large demand.

Implementation science frameworks emphasize the need to consider contextual determinants that might contribute to infrequent implementation of EBTs even following extensive training (Damschroder et al., 2009). Studies have shown that attitudes toward EBTs influence uptake and use (Aarons, 2004; Addis & Krasnow, 2000; Becker-Haimes et al., 2019; Beidas et al., 2015). Clinician-level determinants of exposure implementation have been well documented, with negative attitudes consistently emerging as barriers to high-quality delivery (Deacon et al., 2013a, 2013b). Many clinicians believe that exposure will lead to client attrition (Olatunji et al., 2009), will not generalize to the real world (Feeny et al., 2003), will damage emotionally fragile clients (Rosqvist, 2005), and will worsen clients’ symptoms (Deacon et al., 2013a, 2013b). These negative attitudes have pervasive effects on the use of exposure. Specifically, clinicians’ negative attitudes toward exposure are associated with less frequent use (de Jong et al., 2020; Reid et al., 2018; Whiteside et al., 2016), lower proficiency (Harned et al., 2013), and suboptimal delivery (Deacon et al., 2013a, 2013b; Farrell et al., 2016). Therefore, it is important to understand what influences clinicians’ attitudes toward exposure.

Influences on clinician attitudes may include organization- and clinician-level factors, such as organizational climate and clinician demographics. Theoretical models of training emphasize the importance of understanding how pre-training clinician-level determinants influence the training process (McLeod et al., 2018). One study found that higher self-reported CBT use was associated with more positive attitudes toward exposure (Whiteside et al., 2016). Other studies found that female gender (Deacon et al., 2013a, 2013b), higher caseload (Becker-Haimes et al., 2017), and older age (Deacon et al., 2013a, 2013b) predicted more negative attitudes toward exposure. While most studies have been conducted in non-CMH settings (Deacon et al., 2013a, 2013b; Whiteside et al., 2016), the current sample consists of CMH clinicians who resemble other CMH samples in that they are largely White, female, Master's-level clinicians (Amaya-Jackson et al., 2018; Bartlett et al., 2015; Jensen-Doss et al., 2020), which suggests that results from the current study may generalize to other CMH contexts. Further research is necessary to understand how clinician-level determinants influence changes in attitudes during training and consultation.

Implementation science frameworks also emphasize the importance of organization-level determinants of EBT implementation (Damschroder et al., 2009), which may be especially important for difficult-to-implement EBT components like exposure. Implementation climate, the extent to which the use of an innovation is expected, supported, or rewarded within an organization (Klein & Sorra, 1996), has emerged as a potential determinant of EBT use. There is evidence that implementation climate predicts use of an EBT (Williams et al., 2018), implementation effectiveness of an innovation (Jacobs et al., 2015; Sawang & Unsworth, 2011), and attitudes toward an EBT (Powell et al., 2017). Clinicians’ individual perceptions of implementation climate can be treated as a determinant, or they can be aggregated to the group level (Jacobs et al., 2015). Theory conceptualizes implementation climate as an organization-level construct (Jacobs et al., 2014; Weiner et al., 2011). However, it is also theorized that individual perceptions of implementation climate may be more appropriate for an innovation that is a smaller piece of an individual's practice (Jacobs et al., 2014; Weiner et al., 2011), such as exposure, which is only one component of EBTs for anxiety and PTSD. Some previous studies have aggregated implementation climate within organizations (Becker-Haimes et al., 2017; Ehrhart et al., 2016; Jacobs et al., 2014; Williams et al., 2018), and some have used individual perceptions (Dong et al., 2008; Jacobs et al., 2014; Kratz et al., 2019; Osei-Bryson et al., 2008). Weiner et al. (2011) offer analytic recommendations to determine the most appropriate level of analysis by assessing whether clinician-level responses show sufficient agreement to justify aggregation. The current study follows the analytic recommendations of Weiner et al. (2011) for the main analysis.
However, to explore when it is appropriate to use each level of analysis for implementation climate and to offer transparency in the research process, the current study presents, as an exploratory aim, results of implementation climate analyzed at both the individual clinician level and the aggregated group level. The choice of level matters for how implementation climate is conceptualized and analyzed in future work.

Among the few studies that have examined organizational determinants of exposure implementation, most are primarily descriptive, focusing on generating potential predictors of exposure use (e.g., Becker-Haimes et al., 2020; Wolitzky-Taylor et al., 2018; Wolitzky-Taylor et al., 2019). The only study that has quantitatively examined organizational determinants found that group-level implementation climate was marginally associated with exposure use (Becker-Haimes et al., 2017). Despite the need to consider both individual- and organization-level determinants (Becker-Haimes et al., 2019), to our knowledge, no studies have quantitatively examined implementation climate as a determinant of exposure attitudes. An organization with a more positive implementation climate communicates to clinicians that it expects, supports, and rewards the use of exposure; as a result, clinicians may have more knowledge of and practice with exposure, which may lead to more positive attitudes before training. In an organization with a more negative implementation climate, clinicians may be unfamiliar with and hesitant about using exposure, which may lead to more negative attitudes before training. Implementation climate may also predict changes in attitudes over the course of a training initiative. For example, an organization with a more positive implementation climate may provide a supportive environment as clinicians engage in training, receive consultation, and practice delivering exposure, which may lead to greater changes in attitudes.

Given the need to identify factors that influence clinicians’ attitudes toward exposure, we conducted a study with CMH clinicians who participated in a state-funded EBT learning collaborative (LC), which trains clinicians in four EBTs and provides 6 months of consultation. To our knowledge, this is the first study examining changes in attitudes toward exposure following a multiple-EBT training, which is more scalable than a specialty training focused on a single EBT. The current study is one of few to examine changes in attitudes toward exposure following training with consultation, which is a key ingredient for effective training (Foa et al., 2020; Frank et al., 2020). Furthermore, the current study considers both organization- and clinician-level determinants of exposure, which has been called for (Becker-Haimes et al., 2019). This study examined three pre-specified hypotheses involving key determinants of exposure at the organization-level (implementation climate) and at the clinician-level (clinicians’ attitudes and beliefs toward exposure, referred to as “attitudes”). Hypothesis 1: Given previous evidence suggesting the influence of implementation climate on clinician attitudes toward EBTs (e.g., Powell et al., 2017), we expected that higher pre-training implementation climate scores would be associated with more positive attitudes toward exposure at pre-training and greater changes in attitudes after training and consultation. Hypothesis 2: We also sought to replicate and extend previous findings (e.g., Becker-Haimes et al., 2017; Deacon et al., 2013a, 2013b; Whiteside et al., 2016) by examining clinician-level demographic and background determinants of attitudes toward exposure. We expected to replicate previous findings that being older, identifying as female, having a larger caseload, and lower self-reported CBT use would be associated with more negative attitudes toward exposure. 
Hypothesis 3: We follow analytic recommendations (Jacobs et al., 2014) to determine if implementation climate should be aggregated for main analyses. However, as an exploratory aim, we present the results of both aggregated and non-aggregated implementation climate perceptions to contribute to the understanding of implementation climate measurement and analysis.

Method

Procedure

We followed the Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) guidelines to enhance transparency (von Elm et al., 2014). Data were collected from September 2018 to October 2019 as part of a Washington state-funded LC called the CBT+ Initiative (see Dorsey et al., 2016). Three-day trainings were held in five locations. Clinicians were trained in CBT for anxiety, depression, and behavioral problems, and in trauma-focused CBT (TF-CBT; Cohen et al., 2006). Participating organizations were required to have a supervisor who had already completed, or was currently completing, the CBT+ training. Before the training, participants (n = 236) completed an electronic survey that included demographic and background information, the Implementation Climate Scale (ICS), the Therapist Beliefs about Exposure Scale, and measures used for program evaluation. Informed consent was obtained from participants included in the study.

In line with best practices for training (Foa et al., 2020; Frank et al., 2020; Valenstein-Mah et al., 2020), clinicians applied CBT+ techniques and received expert consultation via telephone or video-based group calls twice monthly for 6 months (12 calls total). Each group contained 10–15 clinicians and was led by a CBT+ faculty member or a local stakeholder (see Triplett et al., 2020). Consultants and clinicians used an online system that allowed clinicians to enter de-identified case information (e.g., standardized assessment results, CBT+ model delivered, self-reported fidelity) and allowed consultants to track clinician attendance and progress with cases.

Clinicians completed the second electronic survey after the 6 months of consultation (n = 202; 85.6% completed both surveys). The post-consultation survey included the Therapist Beliefs about Exposure Scale and measures for program evaluation. Clinicians were emailed reminders once a week for 3 weeks and were then considered lost to follow-up. Eight respondents had missing values; visual inspection of covariates did not suggest that they differed systematically from other respondents. The final sample included 194 clinicians (96.0% of post-consultation survey respondents). Certificates of completion were given to clinicians who completed the pre-training and post-consultation surveys, were present for nine of the 12 group calls, presented one case during consultation, and submitted information demonstrating standardized measure use and CBT+ application with clients (judged by consultants using the data entered into the online system). The University of Washington Institutional Review Board determined that study activities were exempt from review.
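The sample-flow percentages and certificate criteria above can be expressed in a short Python sketch. The counts come from the text; the function names are hypothetical, and reading "present for nine of the 12 group calls" as "at least nine" is an assumption.

```python
# Sample flow for the CBT+ evaluation, using the counts reported in the text.
PRE_TRAINING_N = 236   # completed the pre-training survey
BOTH_SURVEYS_N = 202   # also completed the post-consultation survey
FINAL_N = 194          # retained after dropping 8 respondents with missing values
TOTAL_CALLS = 12       # twice-monthly consultation calls over 6 months


def pct(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


def earns_certificate(completed_both_surveys: bool, calls_attended: int,
                      cases_presented: int, submitted_case_data: bool) -> bool:
    """Hypothetical encoding of the certificate-of-completion criteria.

    Assumes "present for nine of the 12 group calls" means at least nine.
    """
    if not 0 <= calls_attended <= TOTAL_CALLS:
        raise ValueError("calls_attended must be between 0 and 12")
    return (completed_both_surveys
            and calls_attended >= 9
            and cases_presented >= 1
            and submitted_case_data)


print(pct(BOTH_SURVEYS_N, PRE_TRAINING_N))  # 85.6, as reported
print(pct(FINAL_N, BOTH_SURVEYS_N))         # 96.0, as reported
```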

Measures

Demographics and background information

Clinicians self-reported demographics and background information including gender identity, race and ethnicity, theoretical orientation, years of experience delivering therapy, number of active cases, and frequency of CBT use. Frequency of CBT use was measured at pre-training with the item, “How frequently have you used cognitive behavioral therapies with your psychotherapy clients?” This item was rated on a 7-point scale: 0 = “Have not provided psychotherapy in the past 5 years,” 1 = “never (0% of clients),” 2 = “rarely (1–5% of clients),” 3 = “occasionally (6–25% of clients),” 4 = “sometimes (26–50% of clients),” 5 = “often (51–75% of clients),” and 6 = “almost always (76% of clients or more).” Responses were recoded to approximate an interval variable with equal spacing between responses (i.e., 1 = 0%–25% of clients [responses 1, 2, 3], 2 = 26%–50% of clients [response 4], 3 = 51%–75% of clients [response 5], and 4 = 76%–100% of clients [response 6]). Clinicians who selected response 0 were excluded (n = 7).
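The recoding rule can be written as a lookup table. A minimal Python sketch, assuming responses are stored as integers 0–6; the function name and example responses are hypothetical.

```python
from typing import Optional

# Original 0-6 responses to the CBT-frequency item mapped to the recoded 1-4 scale.
# Response 0 ("Have not provided psychotherapy in the past 5 years") has no recoded
# value; those clinicians were excluded (n = 7 in the study).
RECODE = {1: 1, 2: 1, 3: 1,   # 0%-25% of clients
          4: 2,               # 26%-50%
          5: 3,               # 51%-75%
          6: 4}               # 76%-100%


def recode_cbt_frequency(response: int) -> Optional[int]:
    """Return the recoded 1-4 value, or None if the respondent should be excluded."""
    if response == 0:
        return None
    if response not in RECODE:
        raise ValueError(f"unexpected response code: {response}")
    return RECODE[response]


# Hypothetical responses for illustration (not study data).
responses = [6, 3, 0, 5, 2, 4]
recoded = [recode_cbt_frequency(r) for r in responses]
kept = [r for r in recoded if r is not None]
print(recoded)  # [4, 1, None, 3, 1, 2]
```

A lookup table keeps the many-to-one collapse of responses 1 through 3 explicit and makes unexpected codes fail loudly instead of being silently recoded.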

Attitudes toward exposure

The Therapist Beliefs about Exposure Scale (TBES) is a 21-item scale assessing participants’ negative attitudes and beliefs toward exposure therapy (“attitudes” in the current study; Deacon et al., 2013a, 2013b). For example, the scale assesses clinicians’ perceptions of the potential for vicarious traumatization, iatrogenic harm, confidentiality breaches, and damage to the clinician–client relationship. Participants indicated agreement with statements on a 5-point Likert scale ranging from 0 = “strongly disagree” to 4 = “strongly agree.” The total score is the sum of all items (range = 0–84). Higher scores indicate stronger negative attitudes toward exposure therapy. In the initial validation sample, the TBES showed high internal consistency (αs = .90–.96) and 6-month test–retest reliability (r = .89; Deacon et al., 2013a, 2013b). Reliability in our sample was good (α = .85).
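TBES scoring and the internal-consistency estimate can be sketched as follows. This assumes complete item-level data (no missing items) and uses the standard Cronbach's alpha formula; the text does not say how alpha was computed, and the function names are hypothetical.

```python
from statistics import pvariance
from typing import List

N_ITEMS = 21
ITEM_MIN, ITEM_MAX = 0, 4  # 0 = "strongly disagree" ... 4 = "strongly agree"


def tbes_total(items: List[int]) -> int:
    """Sum the 21 TBES item responses (range 0-84; higher = more negative attitudes)."""
    if len(items) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} items, got {len(items)}")
    if any(not ITEM_MIN <= x <= ITEM_MAX for x in items):
        raise ValueError("each item must be scored from 0 to 4")
    return sum(items)


def cronbach_alpha(rows: List[List[int]]) -> float:
    """Cronbach's alpha for a respondents-by-items matrix with no missing data.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores)).
    Population variances are used throughout; the n vs. n - 1 choice cancels
    in the ratio as long as it is applied consistently.
    """
    k = len(rows[0])
    item_vars = [pvariance([row[j] for row in rows]) for j in range(k)]
    total_var = pvariance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


print(tbes_total([2] * N_ITEMS))  # 42
```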

Implementation climate

Five of six subscales (15 items) of the ICS (Ehrhart et al., 2014) assessed how much clinicians perceive that their organization expects, supports, and reward

Analytic plan

We calculated descriptive statistics to characterize our sample, including demographics and attitudes toward exposure at pre-training and post-consultation. We used paired t-tests to examine change in attitudes toward exposure from pre-training to post-consultation and present Cohen's d effect sizes.
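A minimal Python sketch of the paired comparison, assuming complete pre/post pairs. The text does not say which variant of Cohen's d was used; this sketch computes d_z (mean difference divided by the SD of the differences), one common choice for paired designs. Names and example scores are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev
from typing import List, Tuple


def paired_t_and_dz(pre: List[float], post: List[float]) -> Tuple[float, int, float]:
    """Paired t statistic, its degrees of freedom, and Cohen's d_z.

    Differences are pre - post, so a drop in TBES scores (fewer negative
    attitudes) yields positive t and d_z.
    """
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [a - b for a, b in zip(pre, post)]
    n = len(diffs)
    sd_diff = stdev(diffs)                 # sample SD of the differences (n - 1)
    t = mean(diffs) / (sd_diff / sqrt(n))
    d_z = mean(diffs) / sd_diff            # equivalently t / sqrt(n)
    return t, n - 1, d_z


# Hypothetical TBES scores for four clinicians (not study data).
t, df, d_z = paired_t_and_dz([40, 38, 35, 42], [35, 36, 33, 36])
print(df)  # 3
```

Because d_z = t / √n, this variant is fully determined by the reported t and sample size; other variants (e.g., dividing by the average of the pre and post SDs) would give somewhat different values.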

We used linear mixed-effects regression models to determine the associations between implementation climate, clinician demographic and background determinants (i.e., age, gender, caseload, theoretical orientation, and self-reported frequency of CBT use), and attitudes toward exposure. Final linear mixed-effects models included random intercepts for organization to account for the nesting of clinicians within organizations. Fixed effects were added for clinician- and organization-level determinants. Clinician-level demographic and background determinants included age, identification as female (vs. all other gender identities), theoretical orientation, active caseload, and self-reported frequency of CBT use. Organization-level determinants included group-level and clinician-level implementation climate. We followed an iterative model-building approach for each outcome variable (pre-training attitudes, post-consultation attitudes, and change in attitudes). For each of the three models, we first included only random effects for organizations to estimate organization and residual variance, as well as the intraclass correlation coefficient (ICC) for each model. Next, clinician- and group-level determinants were entered individually as fixed effects to estimate the variance in attitudes toward exposure attributable to each determinant. Nonsignificant effects (p > .05) were removed for parsimony. Our final models included significant determinants and a random effect for organization. All analyses were conducted using the lme4 package in R.
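In lme4, the variance components for the first (random-intercept-only) step could come from a null model such as `lmer(tbes ~ 1 + (1 | organization))` summarized with `VarCorr()`; the variable names there are hypothetical. The Python sketch below only illustrates the arithmetic of the ICC and the rule of dropping determinants with p > .05; the function names and input values are hypothetical, not study estimates.

```python
from typing import Dict, List


def icc_from_variance_components(tau2: float, sigma2: float) -> float:
    """Proportion of total variance at the organization level: tau2 / (tau2 + sigma2).

    tau2   -- variance of the organization random intercepts
    sigma2 -- residual (within-organization) variance
    """
    if tau2 < 0 or sigma2 < 0 or tau2 + sigma2 == 0:
        raise ValueError("variance components must be non-negative and not both zero")
    return tau2 / (tau2 + sigma2)


def retain_significant(p_values: Dict[str, float], alpha: float = 0.05) -> List[str]:
    """Keep determinants whose single-factor p-value is below alpha; others are dropped."""
    return sorted(name for name, p in p_values.items() if p < alpha)


# Hypothetical inputs for illustration only; these are not study estimates.
print(icc_from_variance_components(tau2=2.0, sigma2=18.0))  # 0.1, i.e., 10%
print(retain_significant({"age": 0.40, "cbt_use": 0.03, "caseload": 0.21}))  # ['cbt_use']
```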

We examined clinician-level and group-level perceptions of implementation climate as determinants of clinicians’ attitudes toward exposure in separate models. For clinician-level implementation climate, we used each clinician's Implementation Climate Scale (ICS) mean score. For group-level implementation climate, we averaged clinician-level ratings within organizations with three or more respondents. Because it is not yet known which level of implementation climate measurement is most appropriate for assessing the impact on clinicians’ attitudes toward exposure, we followed Weiner et al.’s (2011) recommendations and examined the ICC(1,1), ICC(1,k), r*wg(J), and eta-squared of implementation climate ratings before analyzing the impact of either level on attitudes toward exposure (for more information on ICCs, see Shrout & Fleiss, 1979). Our ICCs indicated that most of the variance in implementation climate was at the individual level (ICC(1,1) = 0.085; ICC(1,k) = 0.20). Some studies recommend a cut-off of 0.80 to determine whether data are homogeneous enough to justify aggregation (Lance, 2006); by that standard, an aggregated implementation climate score may not accurately represent our clinicians’ “shared” perceptions. The average r*wg(J) value across groups was 0.59, and values ranged from −0.35 to 0.87, indicating that within-group agreement varied substantially across groups. Eta-squared was 0.42, suggesting that 42% of the variance in implementation climate scores is attributable to group; however, this estimate may be biased upward (Bliese, 1998). These analyses led us to conclude that our data are best represented by clinician-level implementation climate perceptions; however, previous literature has often included aggregated implementation climate scores for organizations with more than three respondents.
Thus, we present the results of implementation climate at both the clinician- and group-level and consider both to be measuring an organization-level construct based on theory underlying the measure (Jacobs et al., 2014; Weiner et al., 2011).
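The aggregation checks described above (following Weiner et al., 2011) can be sketched in Python with the standard library. This is an illustrative sketch, not the authors' code: it assumes ICC(1,1) and ICC(1,k) are estimated from a one-way ANOVA (Shrout & Fleiss, 1979), with an adjusted group size k0 for unequal group sizes, and that r*wg(J) uses a uniform null distribution over the 5-option (0–4) ICS response scale. Function names and the example data are hypothetical.

```python
from statistics import mean, variance
from typing import Dict, List, Tuple


def icc1_icck(groups: Dict[str, List[float]]) -> Tuple[float, float]:
    """One-way ANOVA estimates of ICC(1,1) and ICC(1,k) for unbalanced groups.

    `groups` maps an organization ID to its clinicians' ICS mean scores.
    """
    scores = [x for g in groups.values() for x in g]
    n_total, n_groups = len(scores), len(groups)
    grand = mean(scores)
    means = {org: mean(g) for org, g in groups.items()}
    ss_between = sum(len(g) * (means[org] - grand) ** 2 for org, g in groups.items())
    ss_within = sum((x - means[org]) ** 2 for org, g in groups.items() for x in g)
    ms_between = ss_between / (n_groups - 1)
    ms_within = ss_within / (n_total - n_groups)
    # k0: effective group size when organizations contribute unequal numbers of clinicians
    k0 = (n_total - sum(len(g) ** 2 for g in groups.values()) / n_total) / (n_groups - 1)
    icc1 = (ms_between - ms_within) / (ms_between + (k0 - 1) * ms_within)
    icck = (ms_between - ms_within) / ms_between
    return icc1, icck


def rwg_star_j(items_by_clinician: List[List[int]], n_options: int = 5) -> float:
    """r*wg(J) for one organization (rows = clinicians, columns = ICS items).

    Compares the mean observed item variance (requires at least two clinicians)
    with the uniform-null variance (A^2 - 1) / 12. It can be negative when
    observed disagreement exceeds the null.
    """
    null_var = (n_options ** 2 - 1) / 12
    n_items = len(items_by_clinician[0])
    item_vars = [variance([row[j] for row in items_by_clinician]) for j in range(n_items)]
    return 1 - mean(item_vars) / null_var


def spearman_brown(icc1: float, k: float) -> float:
    """Reliability of a k-respondent group mean given ICC(1,1)."""
    return k * icc1 / (1 + (k - 1) * icc1)


def group_means(groups: Dict[str, List[float]], min_n: int = 3) -> Dict[str, float]:
    """Aggregate to the organization level only where at least `min_n` clinicians responded."""
    return {org: mean(g) for org, g in groups.items() if len(g) >= min_n}


# Hypothetical ICS mean scores for three organizations (not study data).
example = {"org_a": [3, 3, 4], "org_b": [1, 2, 2], "org_c": [2, 3]}
icc1, icck = icc1_icck(example)
print(sorted(group_means(example)))  # ['org_a', 'org_b']; org_c has only two respondents
```

With the reported ICC(1,1) = 0.085 and an average of 194 / 72 ≈ 2.7 clinicians per organization, `spearman_brown(0.085, 194 / 72)` is about 0.20, in line with the reported ICC(1,k); the exact k the authors used is not stated.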

Results

Demographics and descriptives

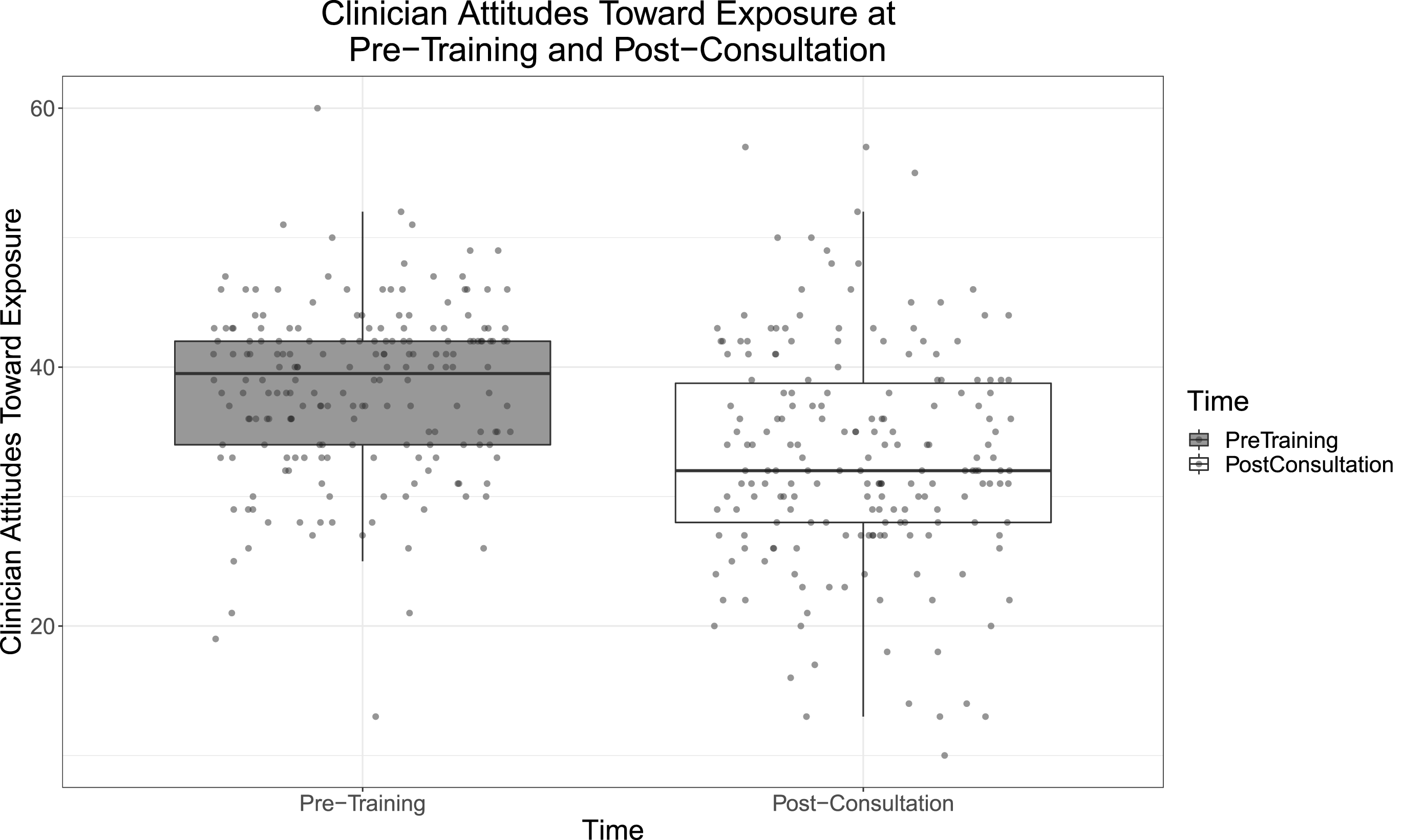

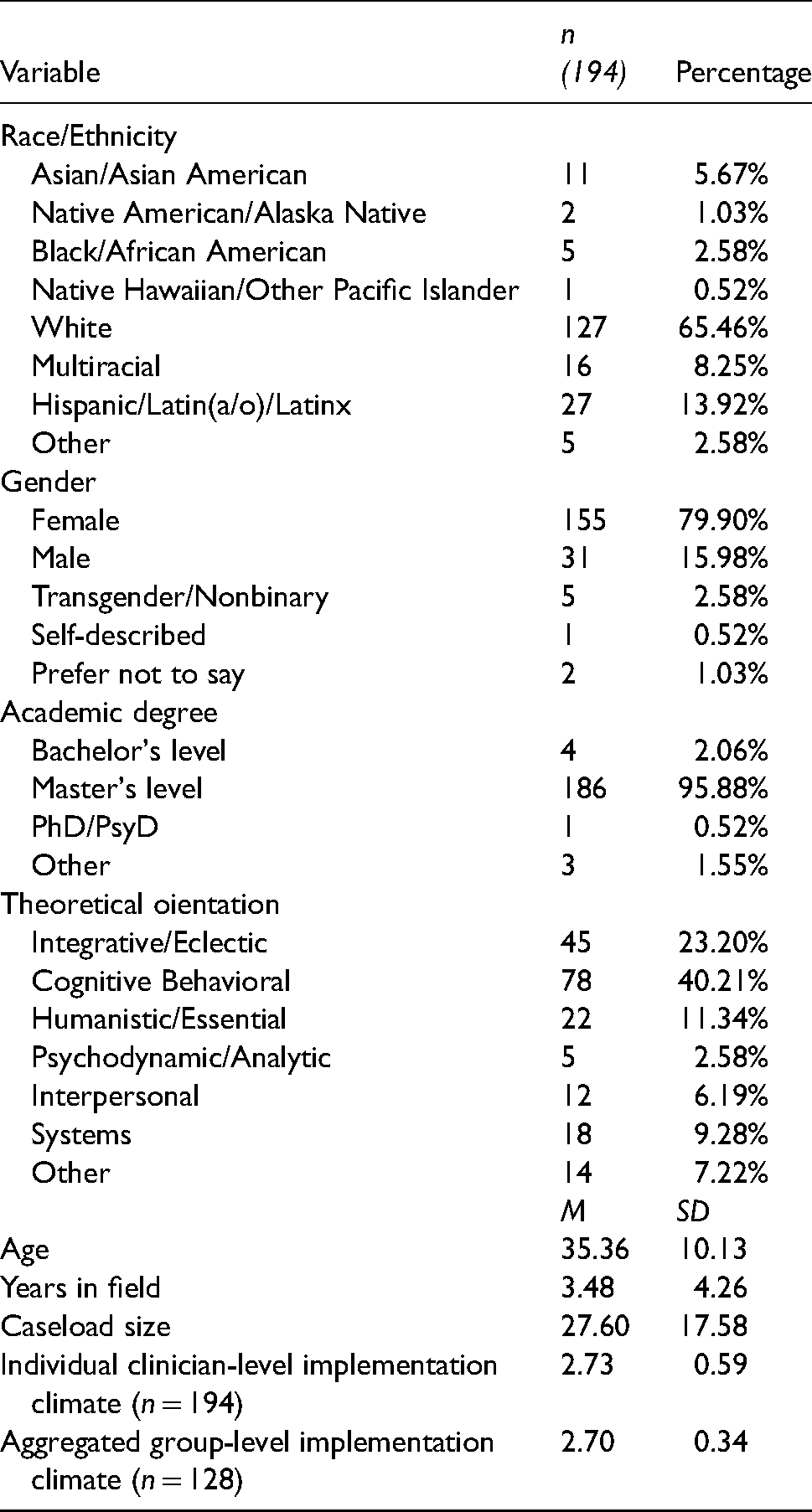

Participants (N = 194) were predominantly female (79.9%, n = 155) and White (65.5%, n = 127). Most had a Master's degree (95.9%, n = 186). The most common primary theoretical orientation was cognitive-behavioral (40.2%; n = 78), followed by integrative or eclectic (23.2%; n = 45). On average, participants were 35.4 (SD = 10.1) years old with 3.5 (SD = 4.3) years of experience providing psychotherapy. The average active caseload was 27.6 clients (SD = 17.6). Clinicians represented 72 organizations. The average clinician-level implementation climate rating was 2.7 (SD = 0.6; range of scale: 0–4), and the average group-level implementation climate for organizations with three or more participants (n = 29, 40.3% of organizations) was also 2.7 (SD = 0.3). The clinician- and group-level implementation climate scores indicate that participants perceived their organizations to expect, support, and reward the use of “evidence-based practices” to a moderate-to-great extent. The average TBES score at pre-training was 38.3 (SD = 6.6; range of scale: 0–84). Organization-level random effects accounted for 2.5% of the total variance in TBES at pre-training. The average TBES score at post-consultation was 33.0 (SD = 8.5). Organization-level random effects accounted for 11.2% of the total variance at post-consultation. In our sample, TBES scores significantly decreased from pre-training to post-consultation, t(193) = 9.9, p < .001; d = 0.71 (Figure 1). Full demographic and descriptive statistics are included in Table 1. Correlations between all individual- and organization-level variables are shown in Table 2.
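Several reported statistics can be cross-checked arithmetically. The sketch assumes d was computed on difference scores (d_z = t / √n), which the text does not state; under that assumption, t(193) = 9.9 implies d ≈ 0.71, matching the reported value. The percentages are recomputed from the reported counts; the function names are hypothetical.

```python
from math import sqrt

N = 194  # final analytic sample


def d_z_from_paired_t(t: float, n: int) -> float:
    """Cohen's d for paired data computed on difference scores: d_z = t / sqrt(n)."""
    return t / sqrt(n)


def pct(count: int, total: int) -> float:
    """Percentage rounded to one decimal, as reported in the text."""
    return round(100 * count / total, 1)


print(round(d_z_from_paired_t(9.9, N), 2))            # 0.71, matching the reported d
print([pct(c, N) for c in (155, 127, 186, 78, 45)])   # [79.9, 65.5, 95.9, 40.2, 23.2]
print(pct(29, 72))                                    # 40.3 (% of organizations aggregated)
```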

Figure 1. Clinician attitudes toward exposure at pre-training and post-consultation. Higher scores indicate stronger negative attitudes toward exposure therapy.

Table 1. Demographic information.

Table 2. Pearson correlation coefficients between individual- and organization-level variables.

*p < .05. **p < .01. ***p < .001.

Note. CBT = cognitive behavioral therapy; ICS = Implementation Climate Scale.

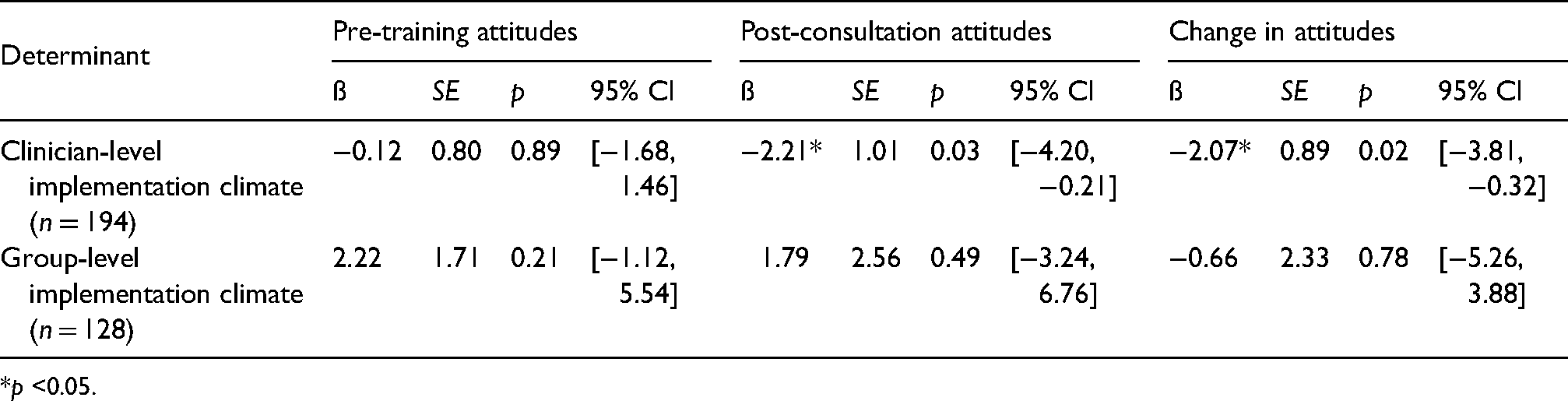

Implementation climate and exposure

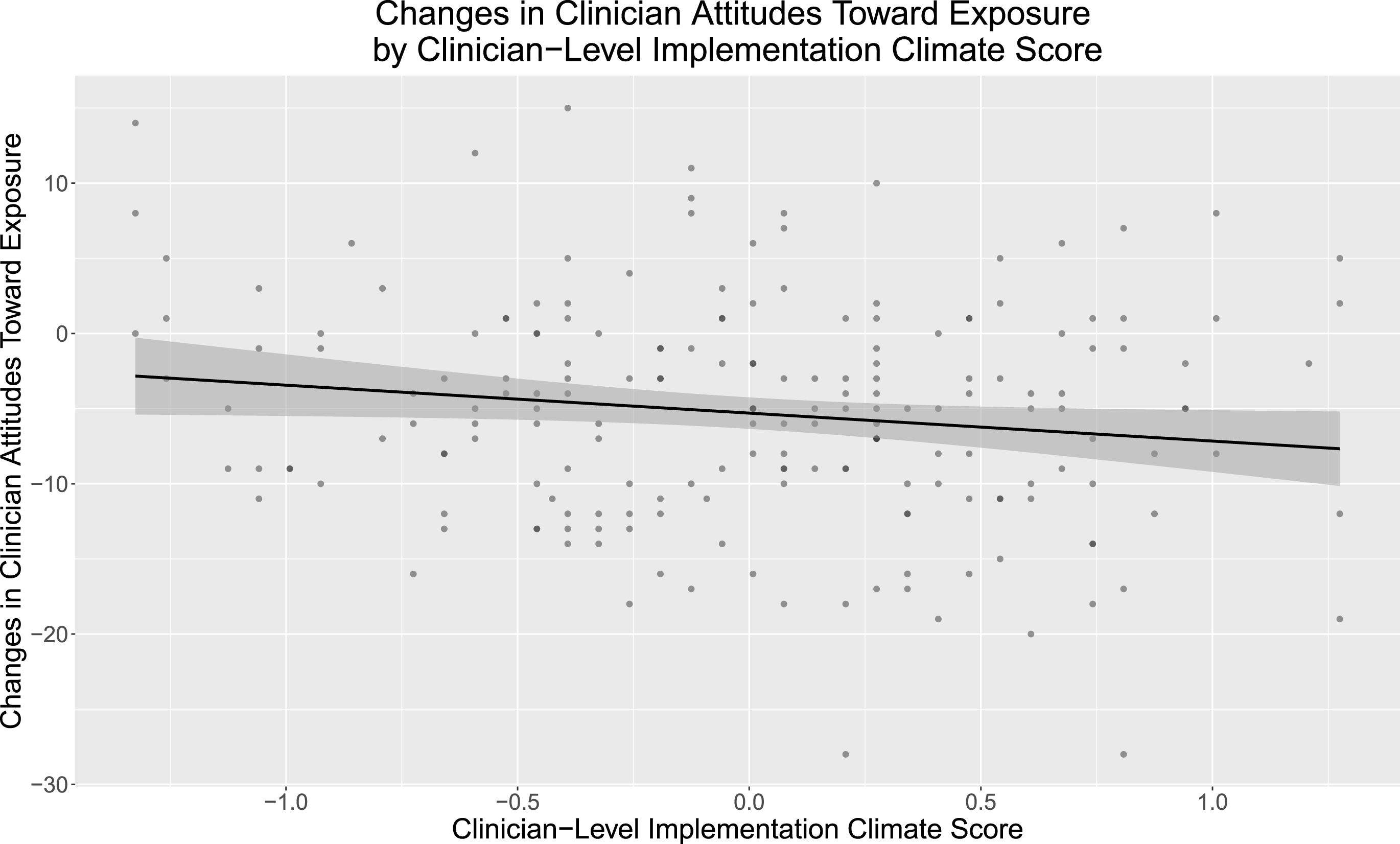

Single-factor model associations between implementation climate and attitudes toward exposure are presented in Table 3. At pre-training, neither clinician- nor group-level implementation climate scores were associated with TBES scores (both ps > .05). At post-consultation, higher clinician-level implementation climate scores were associated with lower TBES scores (i.e., fewer negative attitudes; β = −2.21; p < .05), but group-level implementation climate scores were not associated with TBES scores (p > .05). Following a similar pattern, higher clinician-level implementation climate scores were also associated with greater changes in attitudes toward exposure from pre-training to post-consultation (β = −2.07; p < .05; Figure 2), but group-level implementation climate scores were not (p > .05).

Figure 2. Changes in clinician attitudes toward exposure by clinician-level implementation climate scores. Higher attitudes scores indicate stronger negative attitudes toward exposure therapy.

Table 3. Single-factor models examining associations between implementation climate and clinician attitudes toward exposure.

*p < .05.

Clinician-level determinants and exposure

Single-factor model associations between clinician demographic and background determinants (i.e., age, caseload, gender, theoretical orientation, and self-reported frequency of CBT use) and attitudes are presented in Table 4. At pre-training, higher rates of self-reported CBT use were associated with lower TBES scores (i.e., fewer negative attitudes; β = −1.04; p < .05). At pre-training, no other clinician demographic or background determinants were associated with TBES (all ps > .05). At post-consultation, no clinician demographic or background determinants were associated with TBES (all ps > .05). No clinician demographic or background determinants were associated with changes in TBES scores (all ps > .05).

Table 4. Single-factor models examining associations between clinician determinants and clinician attitudes toward exposure.

*p < .05.

Note. CBT = cognitive behavioral therapy.

Discussion

Efforts to support clinicians delivering EBTs in CMH settings have highlighted the need to address determinants across multiple levels, especially for difficult-to-implement and infrequently implemented treatment components, such as exposure. Results from this study indicate that organizational determinants within a clinician's agency, and outside of the training process, may influence how much clinicians’ attitudes toward exposure change throughout training and consultation. Higher clinician-level pre-training implementation climate scores were not associated with more positive attitudes toward exposure at pre-training but were associated with more positive attitudes at post-consultation. Furthermore, higher clinician-level pre-training implementation climate scores were associated with greater changes in attitudes toward exposure from pre-training to post-consultation. Implementation climate was found to be a potentially helpful factor for improving clinicians’ attitudes toward exposure; however, in our sample, implementation climate only influenced attitudes in the presence of training and consultation. As such, implementation climate appears to have acted as a helpful, but not sufficient, factor. Given that negative attitudes toward exposure have consistently been associated with less frequent (de Jong et al., 2020; Reid et al., 2018; Whiteside et al., 2016) and less competent use of exposure (Deacon et al., 2013a, 2013b; Farrell et al., 2016; Harned et al., 2013), it is important to identify factors that may influence these attitudes over time and in different organizational climates.

The current study provides evidence for an improvement in attitudes toward exposure following a non-specialty training, which offers a less resource-intensive alternative to meet the large demand for exposure training among CMH clinicians (Wolitzky-Taylor et al., 2019). While other studies have had success in changing attitudes toward exposure, prior studies focused on training clinicians in only one EBT containing exposure (Trivasse et al., 2020). Prior to the current study, the only work investigating the effects of a multiple EBT training on intentions to use exposure found that clinicians had the lowest intentions to deliver exposure compared to other CBT components (Wolk et al., 2019). Even in modular treatments designed to be responsive to comorbidities and flexible in implementation (Chorpita et al., 2005; Weisz et al., 2012), exposure was the least used therapeutic component at a 5-year follow-up, which suggests some difficulty or discomfort with the implementation of exposure (Thomassin et al., 2019). In contrast to prior studies, the current study did not explicitly focus on addressing attitudes toward exposure and included training for four EBTs, of which only two include exposure as a treatment component.

We predicted that higher pre-training implementation climate would lead to more positive attitudes toward exposure at pre-training. However, we found that higher pre-training implementation climate did not predict more positive attitudes at pre-training but did predict more positive attitudes at post-consultation and greater changes in attitudes throughout training and consultation. While beyond the scope of the current study, we theorize that there is some interplay between implementation climate and skill development. For example, this pattern of results could reflect that a higher implementation climate does not necessarily shape attitudes before training, but rather it functions (1) to increase clinicians’ motivation to learn and practice EBT skills following the training and during the consultation period and (2) as a supportive environment while clinicians practice new skills in the context of workplace-based supervision and consultation. Both processes could lead to more positive attitudes toward exposure at post-consultation for clinicians who perceive more positive implementation climates in their organization. Future work should investigate the interplay between skill development and implementation climate to understand whether implementation climate functions as a mechanism underlying clinician behavior change.

While item-level analyses were not conducted in the current study, we can speculate about how responses to some TBES items might relate to organizational context. For instance, clinicians in an organization where using exposure and/or other EBPs is the norm may be less likely to worry that exposure will damage the clinician–client relationship. Clinicians whose peers and/or supervisors also use exposure would be able to consult them about the ethics of exposure (Becker-Haimes et al., 2020). Future work should investigate changes in specific attitudes toward exposure and their association with implementation climate.

The current study examined clinician demographic and background factors as predictors of attitudes toward exposure. At pre-training, more positive attitudes toward exposure were predicted only by higher self-reported CBT use, and no clinician-level determinants were associated with attitudes at post-consultation or with changes in attitudes. The CBT-use finding is consistent with another study (Whiteside et al., 2016), but previous findings that gender (Deacon et al., 2013a, 2013b), caseload (Becker-Haimes et al., 2017), and age (Deacon et al., 2013a, 2013b) predicted attitudes toward exposure were not replicated. Most clinicians in Deacon et al. (2013a, 2013b) worked in private practice or hospital settings, and those samples included more doctoral-level and male clinicians than ours. Compared to Becker-Haimes et al. (2017), the current study had less experienced and less racially diverse clinicians. In general, our sample may be more representative of the average CMH clinician population, including largely Master's

To contribute to understanding of when it is appropriate to examine individual versus aggregated perceptions of implementation climate, we report both group-level and individual-level perceptions. The variability in implementation climate scores within organizations indicates that clinicians did not strongly agree in their perceptions, which means that aggregated implementation climate ratings may reflect the actual perceptions of only a few clinicians. Theoretically, it makes sense that clinician-level perceptions were most valid because, in this study, exposure was a small part of an individual's practice (one component in only two of the four EBTs taught; Jacobs et al., 2014). Disagreement within organizations may also reflect the small number of respondents per organization. Because our data were collected as part of a state-funded training that aimed to include as many organizations as possible, each organization could send a maximum of three clinicians and one supervisor to the training. As a result, only 40% of the organizations in our study had enough respondents to be aggregated (66% of the clinician sample), which may have limited our ability to fully compare clinician versus group perceptions. Additionally, emerging literature shows that clinical supervisors play a role in shaping clinicians’ perceptions of their organization (Birken et al., 2018; Meza et al., 2021). Thus, clinicians having different supervisors may have contributed to the variability of climate perceptions within each organization. More work is needed to distinguish which constructs are measured by individual versus group implementation climate perceptions, to ensure high construct validity and appropriate future use. This distinction affects how implementation climate is conceptualized and analyzed across disciplines.
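The decision to aggregate rests on how much of the variance in climate ratings lies between organizations rather than within them. As a minimal illustration, and not the analysis code used in this study, ICC(1) can be estimated from a one-way random-effects ANOVA. The sketch below assumes unbalanced group sizes (using the usual adjusted average group size, k0) and drops organizations with fewer than three respondents, mirroring the aggregation threshold described in the limitations. The function name `icc1`, the `min_size` parameter, and the toy ratings are hypothetical.

```python
from statistics import mean


def icc1(groups, min_size=3):
    """Estimate ICC(1) from a one-way random-effects ANOVA.

    groups: list of lists, one inner list of climate ratings per organization.
    min_size: hypothetical aggregation threshold; smaller groups are dropped.
    """
    kept = [g for g in groups if len(g) >= min_size]
    if len(kept) < 2:
        raise ValueError("need at least two groups that meet min_size")
    sizes = [len(g) for g in kept]
    group_means = [mean(g) for g in kept]
    n_groups, n_total = len(kept), sum(sizes)
    grand_mean = sum(x for g in kept for x in g) / n_total

    # Between-organization and within-organization sums of squares.
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m in zip(sizes, group_means))
    ss_within = sum((x - m) ** 2 for g, m in zip(kept, group_means) for x in g)
    ms_between = ss_between / (n_groups - 1)
    ms_within = ss_within / (n_total - n_groups)

    # Adjusted average group size (k0) for unbalanced designs.
    k0 = (n_total - sum(n * n for n in sizes) / n_total) / (n_groups - 1)
    return (ms_between - ms_within) / (ms_between + (k0 - 1) * ms_within)


# Toy ratings (not study data): perfect agreement within each organization.
# The two-respondent organization [5, 9] falls below min_size and is dropped.
print(icc1([[1, 1, 1], [3, 3, 3], [5, 9]]))  # → 1.0
```

Values near zero (or negative, as with identical organization means) indicate that little variance lies between organizations, which is the kind of pattern that would favor clinician-level rather than aggregated analyses.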

The current study has several strengths. First, to our knowledge, this is the first study to investigate changes in attitudes toward exposure following a multiple-EBT training, which is more scalable than a specialty training. We are also among the first to examine changes in attitudes toward exposure following training with consultation, which is a key ingredient of effective training (Frank et al., 2020). In addition, we examined both organization- and clinician-level determinants of attitudes toward exposure, as has been called for in the literature (Becker-Haimes et al., 2019). Second, this study included a large and representative sample of CMH clinicians from many different organizations in Washington participating in a state-funded training. This sample is similar to those of other CMH training studies (Amaya-Jackson et al., 2018; Bartlett et al., 2015; Jensen-Doss et al., 2020), which suggests that the results may generalize to other CMH contexts. Third, the current study focuses on clinician perceptions of implementation climate following analysis of ICCs, as recommended (Jacobs et al., 2014), but we also report results based on group-level implementation climate perceptions. This allows us to contribute to the discussion around appropriate future measurement and analysis of implementation climate. Lastly, this study assessed attitudes toward a specific EBT component, exposure, which has strong evidence for effectiveness (Higa-McMillan et al., 2019).

This study also has several limitations. First, we did not examine cross-level interactions between organization and individual characteristics; thus, we cannot rule out a complex interplay between determinants across levels (Becker-Haimes et al., 2019). Second, although other studies demonstrate that attitudes affect the delivery of exposure (e.g., de Jong et al., 2020), we had no measure of exposure use after the consultation period ended, so we cannot be confident that improved attitudes led to behavior change. Measurement was also limited by the fact that data were collected as part of an evaluation of a state-funded training. Because this study relies on clinician self-report, the data are subject to self-report biases (Borntrager et al., 2015; Donaldson & Grant-Vallone, 2002; Eccles et al., 2006). Additionally, our sample included many CBT-leaning clinicians, who may differ from clinicians in other CMH contexts. Because the state-funded training initiative aimed to include as many organizations as possible, clinicians represented 72 organizations, but only 29 organizations (40.3%) had the three or more respondents necessary to aggregate implementation climate to the group level. This may have affected the ICC analyses that indicated clinician-level analyses were most appropriate. Next, implementation climate was measured only at pre-training, so we cannot rule out the possibility that climate shifted during the training and that this shift led to attitude changes. However, it is somewhat unlikely that implementation climate shifted substantially over the 6-month study period, especially given that at most four people from each organization could attend the training. Lastly, the implementation climate measure assessed climate for evidence-based practices broadly, while the attitudinal measure assessed attitudes toward exposure specifically. Future research could examine the relation between clinician perceptions of implementation climate for specific EBT components, such as exposure, and attitudes toward those components.

Building on the results of this study, we recommend four directions for further investigation. First, future work could examine the individual items on the TBES, each of which assesses a specific exposure attitude. For example, examining changes in specific attitudes and their relationship with implementation climate could offer insights for targeted research and intervention. Second, few studies have examined whether and how changes in attitudes toward exposure translate to clinical practice (Trivasse et al., 2020). Future work should measure exposure use during and after training. Third, long-term follow-up studies assessing clinicians’ practice after training and consultation are necessary to fully understand the effectiveness of training efforts by evaluating whether practices are sustained (Chu et al., 2015; Thomassin et al., 2019). Fourth, future work should investigate how to improve organizational climate, since it may be important in shaping clinicians’ attitudes (Powell et al., 2017), behaviors (Williams et al., 2018), and the effectiveness of training and consultation. Organizational interventions have shown success in reducing clinician turnover (Glisson et al., 2006), improving EBT adoption and use (Williams et al., 2017), and improving client outcomes (Glisson et al., 2010). Interventions aimed specifically at enhancing implementation climate are currently being evaluated (e.g., Aarons et al., 2015; Aarons et al., 2017).

Conclusions

Training in four EBTs, only two of which include exposure as a component, resulted in positive changes in clinicians’ attitudes toward exposure. These results suggest that non-specialty trainings may offer a less resource-intensive alternative to specialized trainings for addressing clinicians’ attitudes about exposure. Further, CMH clinicians who perceived a more positive implementation climate within their organization had more positive attitudes toward exposure following EBT training and consultation. These clinicians also showed greater improvements in attitudes over the course of EBT training and consultation. These findings suggest that organizational determinants within a clinician's agency, outside of the training and consultation process, may influence the changes in attitudes that occur during training, treatment implementation, and consultation.


Acknowledgments

This publication was made possible in part by funding from the Washington State Department of Social and Health Services, Division of Behavioral Health and Recovery, and by the trainers and community partners of the CBT+ Initiative: Lucy Berliner, Dr. Nathaniel Jungbluth, Amanda Russell, Nikki Sharp, Sean Wright, Dan Fox, Naomi Leong, Melissa Gorsuch-Clark, Amber Ard, Dr. Georganna Sedlar, Angelica De Anda, Jamilyn Ellis, Derrick Conley, Rachel Barrett, Cassandra Ellsworth, Louisa Hall, Laura Merchant, Dr. Minu Ranna-Stewart, Jennifer Evinger, Crystal Hynek, and Claudia Kirkland. Furthermore, as a co-founder and former co-director of the CBT+ Initiative, Lucy Berliner has expertly served as a liaison between government agencies, community stakeholders, and researchers around the country to bring high-quality, accessible mental health treatment to children and families across Washington State, which made this and many other studies possible.

Declaration of conflicting interests

Dr. Dorsey was paid by the Washington State Department of Social and Health Services, Division of Behavioral Health and Recovery to serve as a trainer, consultant, and evaluator for the activities outlined in this study.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Plain language summary

Exposure is highly effective for treating trauma symptoms and anxiety-based disorders, but it is not commonly used in community mental health settings. Clinicians who reported higher expectations, support, and rewards for using evidence-based practices in their agency had more positive attitudes toward exposure after training and consultation. These clinicians also showed greater improvement in attitudes toward exposure over the course of training. Organizational climate may affect how clinicians’ attitudes change during training and therefore should become a focus of training efforts.