Abstract

Background

Intimate partner violence (IPV) is a population health problem affecting millions of women worldwide. Screening for IPV within healthcare settings can identify women who experience IPV and inform counseling, referrals, and interventions to improve their health outcomes. Unfortunately, many screening programs used to detect IPV have only been tested in research contexts featuring externally funded study staff and resources. This systematic review therefore investigated the utility of IPV screening administered by frontline clinical personnel.

Methods

We conducted a systematic literature review focusing on studies of IPV screening programs for women delivered by frontline healthcare staff. We based our data synthesis on two widely used implementation models (Reach, Effectiveness, Adoption, Implementation and Maintenance [RE-AIM] and Proctor's dimensions of implementation effectiveness).

Results

We extracted data from 59 qualifying studies. Based on data extraction guided by the RE-AIM framework, the median reach of the IPV screening programs was high (80%), but Emergency Department (ED) settings were found to have a much lower reach (47%). The median screen positive rate was 11%, which is comparable to the screen-positive rate found in studies using externally funded research staff. Among those screening positive, a median of 32% received a referral to follow-up services. Based on data extraction guided by Proctor's dimension of appropriateness, a lack of available referral services frustrated some efforts to implement IPV screening. Among studies reporting data on maintenance or sustainability of IPV screening programs, only half concluded that IPV screening rates held steady during the maintenance phase. Other domains of the RE-AIM and Proctor frameworks (e.g., implementation fidelity and costs) were reported less frequently.

Conclusions

IPV is a population health issue, and successfully implementing IPV screening programs may be part of the solution. Our review emphasizes the importance of ongoing provider trainings, readily available referral sources, and consistent institutional support in maintaining appropriate IPV screening programs.

Plain language abstract

Intimate partner violence (IPV) is a population health problem affecting millions of women worldwide. IPV screening and response can identify women who experience IPV and can inform interventions to improve their health outcomes. Unfortunately, many of the screening programs used to detect IPV have only been tested in research contexts featuring administration by externally funded study staff. This systematic review of IPV screening programs for women is particularly novel, as previous reviews have not focused on clinical implementation. It provides a better understanding of successful ways of implementing IPV screening and response practices with frontline clinical personnel in the context of routine care. Successfully implementing IPV screening programs may help mitigate the harms resulting from IPV against women. Findings from this review can inform future efforts to improve implementation of IPV screening programs in clinical settings to ensure that the victims of IPV have access to appropriate counseling, resources, and referrals.

Intimate partner violence (IPV) is a global population health problem (World Health Organization, 2013), defined as behavior by a current or former intimate partner that causes physical, sexual, or psychological harm. IPV can include physical aggression, psychological abuse, and sexual coercion (World Health Organization, 2013). Although individuals of all gender identities experience IPV, women are most likely to experience severe IPV along with associated adverse outcomes (World Health Organization, 2013). Nearly a third (30%) of women worldwide have experienced IPV (Devries et al., 2013), which can result in lingering reproductive, cardiovascular, neurological, mental health, and social impacts (Campbell, 2002; Dillon et al., 2013; Diop-Sidibé et al., 2006; Miller & McCaw, 2019), including suicide (Iverson et al., 2013). Women who experience IPV present frequently to health services (Dichter et al., 2018; Musa et al., 2019). Such visits provide opportunities to have conversations with patients about IPV experiences and offer referral and support as needed.

Screening for IPV can identify women who have experienced it, and can lead to interventions to increase safety and improve health (Feltner et al., 2018; Hegarty et al., 2013; McCloskey et al., 2006; Miller et al., 2011). Thus, in some countries, routine screening for IPV is recommended among women presenting to primary, preventative, and prenatal care (Gee et al., 2011; Institute of Medicine, 2011; US Preventive Services Task Force et al., 2018). In addition, women who experience IPV interact with other aspects of the healthcare system where screening may be useful for detecting IPV (e.g., emergency departments, mental health, public health settings, and services embedded within homeless shelters). Programs to identify and address IPV can offer information, resources, referrals, and interventions (O’Campo et al., 2011). Additionally, being able to disclose in a safe environment with a supportive provider may provide validation and relief (Spangaro et al., 2011).

To support the uptake of IPV screening programs, many IPV screening tools have been validated for use among women across healthcare settings (Feltner et al., 2018; Rabin et al., 2009). Commonly used screening tools typically comprise 3–5 items and include the Hurts/Insults/Threatens/Screams (HITS) tool and an extended version of the HITS (Chan et al., 2010; Sherin et al., 1998), the Humiliation/Afraid/Rape/Kick tool (Sohal et al., 2007), and the Partner Violence Screen (Feldhaus et al., 1997). Such screening tools can be self-administered via paper-and-pencil or technology (e.g., tablets), or clinician-administered.

There may be crucial differences, however, in the ways that IPV screening tools perform in well-financed research studies versus the resource-constrained clinical settings where most women receive healthcare. To address this distinction, it is important to consider implementation outcomes of IPV screening and response practices (i.e., the extent to which such tools and practices achieve widespread use outside the context of robust external research funding).

To better understand the ways that IPV screening and response practices can be successfully implemented, we conducted a systematic literature review with outcomes rooted in two widely used implementation frameworks. First, we used the Reach, Effectiveness, Adoption, Implementation and Maintenance (RE-AIM) framework, which attends to clinical effectiveness (i.e., the extent to which IPV screening practices successfully identify women experiencing IPV, such as the proportion of screened women who screen positive) while also providing a robust evaluation of key implementation outcomes (i.e., reach, adoption, implementation fidelity/quality, and maintenance) (Glasgow et al., 1999). Second, we supplemented RE-AIM with other important implementation outcomes highlighted by Proctor et al. (2011), namely acceptability, feasibility, appropriateness, and costs. We included these dimensions in addition to RE-AIM because they reflect important aspects of the implementation process and contribute to clinical decision-making regarding whether, when, and how to implement a clinical innovation (Eisman et al., 2020).

To maintain a focus on implementation, we focused exclusively on studies in which the IPV screening tools were administered by endogenous clinical staff (rather than those hired and paid by the research team). Such studies (e.g., Bullock et al., 2006; Kornfeld et al., 2012) are important for exploring the implementation outcomes described above. By focusing on studies of IPV screening and response implementation by frontline clinical staff, our approach aims to provide useful data for administrators and clinicians committed to improving the detection and treatment of IPV among women in their own clinical settings. As the overwhelming majority of existing work on healthcare-based interventions for IPV has focused exclusively on women patients, we focus our review on this population, noting that individuals of other gender identities may also experience IPV and benefit from healthcare-based screening and response.

Methods

Search process

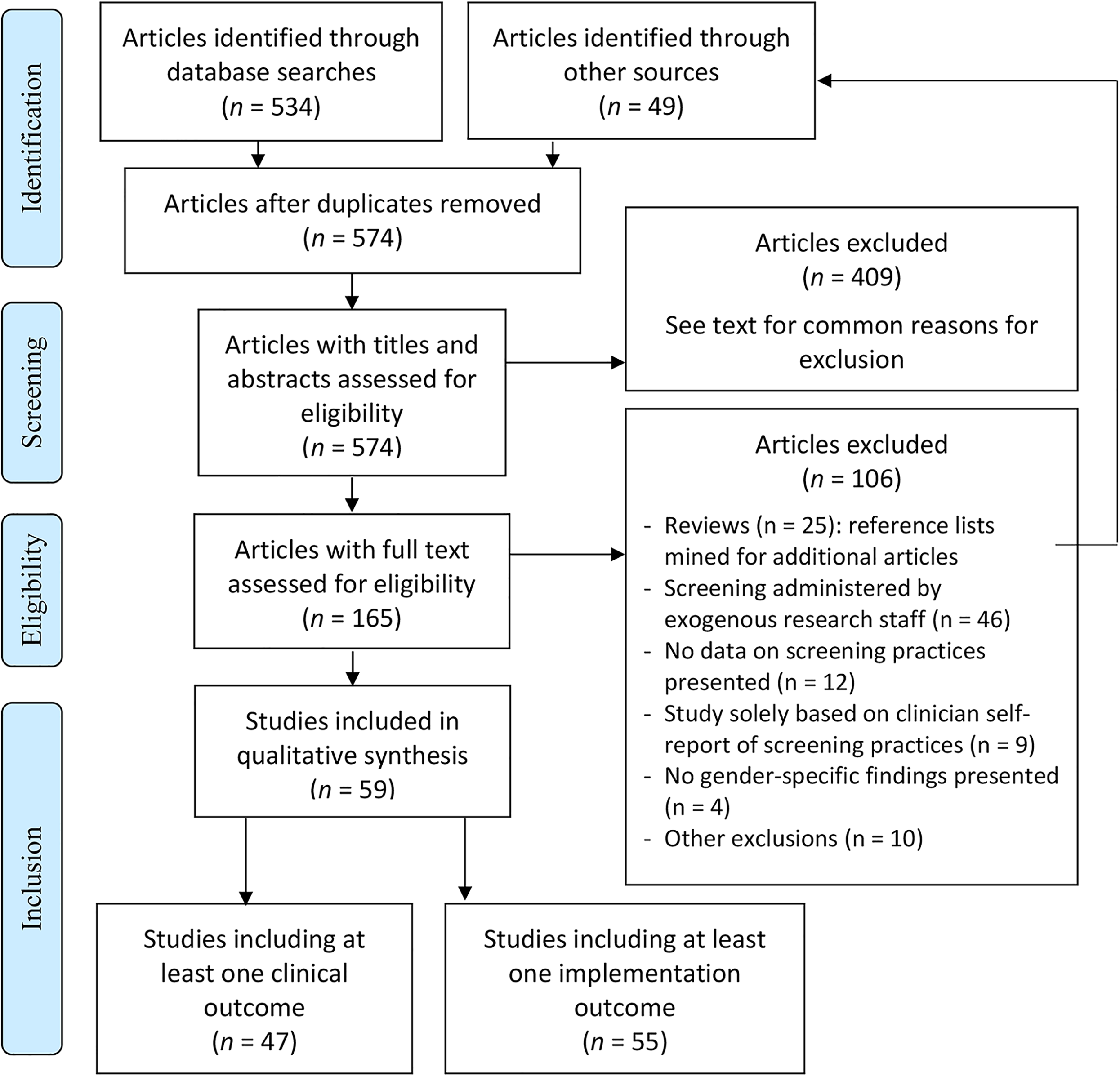

We searched PubMed for English-language manuscripts from the earliest available date through March 2020. Our initial search terms were: (((((intimate partner violence[Title/Abstract]) OR domestic violence[Title/Abstract]) OR domestic abuse[Title/Abstract]) OR spouse abuse[Title/Abstract]) OR battering[Title/Abstract]) AND screen[Title/Abstract]. We used similar search terms in clinicaltrials.gov to identify other articles derived from recent studies of IPV screening not yet captured in PubMed. We supplemented this search with snowball sampling: we mined the reference lists of key articles and included manuscripts (backward citation tracking) and used the Google Scholar citation function to find manuscripts that cited papers we had identified (forward citation tracking). This process, along with the analytic process described below, meets criteria for conducting a systematic review (Grant & Booth, 2009). Details of our search process and results can be found below and in Figure 1.
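As an illustration only, the search string above could be assembled programmatically, which can make it easier to rerun or extend. The sketch below is not the procedure used in this review: the function names, the date bounds, and the `retmax` value are assumptions, and the flattened OR clause is intended to be logically equivalent to the nested parentheses shown above. It builds a request URL for the NCBI E-utilities `esearch` endpoint without sending anything over the network.

```python
from urllib.parse import urlencode

# Free-text IPV terms from the search strategy, each restricted to title/abstract.
IPV_TERMS = [
    "intimate partner violence",
    "domestic violence",
    "domestic abuse",
    "spouse abuse",
    "battering",
]


def build_pubmed_query(terms, required="screen", field="Title/Abstract"):
    """OR the IPV terms together, then AND the result with the screening term."""
    ipv_clause = " OR ".join(f"{term}[{field}]" for term in terms)
    return f"({ipv_clause}) AND {required}[{field}]"


def build_esearch_url(query, min_date="1800/01/01", max_date="2020/03/31", retmax=1000):
    """Assemble an E-utilities esearch URL (no request is made here).

    The date bounds are assumed stand-ins for "earliest available date
    through March 2020"; the review does not report exact dates.
    """
    params = {
        "db": "pubmed",
        "term": query,
        "datetype": "pdat",   # filter on publication date
        "mindate": min_date,
        "maxdate": max_date,
        "retmax": retmax,     # hypothetical cap on returned IDs
        "retmode": "json",
    }
    return "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi?" + urlencode(params)


query = build_pubmed_query(IPV_TERMS)
url = build_esearch_url(query)
```

Keeping the term list separate from the query builder means that a sensitivity search (e.g., adding a synonym) changes one list entry rather than a hand-edited string.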

Figure 1. Preferred reporting items for systematic reviews and meta-analyses (PRISMA) diagram (Moher et al., 2010).

Inclusion and exclusion criteria

To be included, articles had to meet the following criteria:

- Investigated one or more IPV screening measures for women delivered within the healthcare context, delivered by endogenous healthcare clinic staff rather than externally funded research staff. For these purposes the healthcare context was broadly defined to include various types of healthcare settings. “Endogenous clinic staff” were defined as staff who were already employed at the sites implementing the screening, and who administered IPV screening as part of their clinical duties (rather than in a separate role as research staff, such as a research assistant).
- Reported empirical results stemming from IPV screening related to at least one RE-AIM or Proctor dimension (details below).
- Were available in English.

We excluded articles that met any of the following criteria:

- Reported prevalence rates of IPV without tying results to specific screening practices.
- Reported provider self-report of IPV screening practices without any additional data. For example, papers reporting survey results in which clinicians self-reported the frequency with which they screened for IPV were excluded in the absence of other outcome data.
- Reported results in such a way that findings specific to women could not be abstracted (e.g., women's data were presented in aggregate with data from men).
- Reported results from IPV screening delivered by externally funded research staff, or that featured financial incentives for women participants that could not be replicated in routine clinical care. We therefore ruled out studies that used research dollars to hire or fund the screening personnel.
- Review papers that did not contain original data. However, we mined the reference lists of identified reviews to supplement our search process.

Review stages

We used two rounds of review for each identified article: the first was a title/abstract review stage to rule out manuscripts that obviously did not meet the aforementioned inclusion and exclusion criteria. For example, our search uncovered many articles that (by their titles and/or abstracts) were clearly opinion pieces on IPV, or described the impacts of IPV without concrete data on screening practices, and were therefore excluded prior to full-text review.

For the remaining articles, we then conducted a full-text review. The goal for this review stage was to make the ultimate determination for whether an article met the aforementioned inclusion and exclusion criteria, and therefore qualified to be included in the sample.

Reliability

We used double coding at two stages of our review to ensure reliability in the application of our inclusion and exclusion criteria. First, two study coauthors (OA, CJM) independently conducted title/abstract review for a subset of 45 manuscripts identified by the search process described above (8% of identified articles). These included some articles that the primary coder (OA) ruled out based on title/abstract review, and others that the primary coder determined should proceed to full-text review. We resolved discrepancies regarding whether articles should proceed to full-text review by independently reviewing the full article followed by discussion to come to consensus.

Second, three study coauthors (OA, CJM, KI) independently conducted full-text review for a subset of ten manuscripts. These ten manuscripts included some that the primary coder determined had met inclusion criteria, along with others that the primary coder thought should be excluded. Intraclass correlation for all three raters for this subset of manuscripts was 0.94, indicating excellent reliability regarding whether manuscripts met our inclusion and exclusion criteria at the full-text review stage. Furthermore, we used group consensus to ensure reliability of our data extraction process (details below), with all coauthors reviewing draft versions of study results (Tables 1 and 2) and discussing revisions and modifications in a series of team meetings.
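For readers unfamiliar with the statistic, the following sketch shows one way an intraclass correlation for several raters could be computed. The review does not state which form of the intraclass correlation was used, so the choice here of ICC(2,1) (two-way random effects, absolute agreement, single rater) is an assumption, as are the function name and the example ratings, which are hypothetical and not the review's data.

```python
def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    `ratings` is a list of rows, one per manuscript; each row holds one
    score per rater (e.g., 1 = include, 0 = exclude).
    """
    n = len(ratings)        # number of manuscripts (targets)
    k = len(ratings[0])     # number of raters
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Two-way ANOVA sums of squares.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    # Corresponding mean squares.
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical include (1) / exclude (0) decisions: ten manuscripts, three raters,
# with a single disagreement on the fourth manuscript.
example_ratings = [
    [1, 1, 1], [0, 0, 0], [1, 1, 1], [1, 1, 0], [0, 0, 0],
    [1, 1, 1], [0, 0, 0], [0, 0, 0], [1, 1, 1], [0, 0, 0],
]
example_icc = icc_2_1(example_ratings)
```

Other forms (e.g., consistency rather than absolute agreement, or average-rater versions) use the same mean squares with a different final ratio, so the value obtained depends on the form chosen.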

Table 2. Clinical effectiveness outcomes and implementation outcomes from included studies.

Note. ED = Emergency Department; IPV = intimate partner violence. EMR = electronic medical record; NHS = national health service; PCP = primary care physician.

Outcomes from the RE-AIM framework (Glasgow et al., 1999).

Outcomes from Proctor dimensions (Proctor et al., 2011).

Analytic approach

We extracted descriptive data about each included study (e.g., study setting, patient population, specific screening tool used [including whether the tool was modified], and randomization if applicable). We did not extract demographic data (regarding patients or clinicians), as these data were reported inconsistently among our included studies. We extracted results based on implementation outcomes derived from two implementation-oriented frameworks: RE-AIM (Glasgow et al., 1999) and Proctor's dimensions of implementation effectiveness (Proctor et al., 2011; details below). We chose these frameworks based on their widespread use in the implementation literature, and their inclusion of constructs relevant to implementation success in multiple settings (Eisman et al., 2020; Harden et al., 2018). Data extraction was guided by these frameworks regardless of whether the authors of the included studies specifically used or cited them. Questions regarding data extraction were resolved via consensus of the entire study team. First, the RE-AIM framework (Glasgow et al., 1999) includes five dimensions: Reach, Effectiveness, Adoption, Implementation fidelity, and Maintenance. We extracted quantitative and qualitative data related to these dimensions, and operationalized them in the following ways:

- Reach: proportion of women who received IPV screening (among those eligible for screening).
- Effectiveness: clinical implications of, or follow-up to, a positive screen (e.g., IPV disclosure or screen-positive rates, referral rates after a positive screen, or receipt of psychosocial services after a positive screen).
- Adoption: in Glasgow's original formulation, adoption referred to the proportion and representativeness of clinics or worksites that agreed to implement the innovation in question. Very few of our included studies, however, reported site-level representativeness, and so we focused our data extraction for adoption on the proportion of clinics that administered the IPV screening. Because IPV screening is frequently conducted by frontline clinical staff, we also operationalized adoption as the proportion of providers who administered IPV screening.
- Implementation fidelity: extent to which IPV screening was delivered as intended in the study. This could include reports of psychometric performance (e.g., sensitivity, specificity) compared to a gold standard measure of IPV.
- Maintenance: extent to which IPV screening practices remained in place beyond the primary study endpoint.

We also extracted quantitative and qualitative data related to Proctor's dimensions of implementation effectiveness (Proctor et al., 2011). We operationalized these dimensions as follows:

- Acceptability: extent to which clinicians or patients found IPV screening acceptable in their setting. The Proctor model also includes dimensions of Appropriateness and Feasibility: given conceptual similarities between these and Acceptability we combined results for these three dimensions rather than reporting them separately.
- Cost: financial or resource cost of delivering IPV screening programs.

Note that other Proctor dimensions (of Adoption, Fidelity, Penetration/Reach, and Sustainability/Maintenance) are captured under the RE-AIM dimensions listed above.

In aggregating quantitative data, we report the median among included studies rather than mean and standard deviation, as we were concerned that the mean might be biased by outlier studies or nonnormality of data (Hartwig et al., 2020).
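To make the rationale for reporting medians concrete, the sketch below computes reach for a handful of studies and compares the median with the mean. All counts are hypothetical and chosen only to show how a single outlying study pulls the mean; they are not data from the included studies, and the helper name `reach` is an assumption.

```python
from statistics import mean, median


def reach(screened, eligible):
    """Reach for one study: share of eligible women who were screened."""
    if eligible <= 0:
        raise ValueError("eligible must be positive")
    return screened / eligible


# Hypothetical (screened, eligible) counts for five studies.
# The last study is a deliberate low outlier.
studies = [(820, 1000), (450, 500), (160, 200), (75, 100), (12, 400)]
reach_values = [reach(screened, eligible) for screened, eligible in studies]

summary = {
    "median": median(reach_values),  # middle value; insensitive to the outlier
    "mean": mean(reach_values),      # pulled downward by the outlier
}
```

In this made-up example four of the five studies have reach of 75% or higher, and the median stays in that range, whereas the mean is dragged well below it by the single low-reach study.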

Results

Results of search process

See Figure 1 for our Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) diagram (Moher et al., 2010). Briefly, we screened titles/abstracts for 574 articles, and excluded 409 of them based on title/abstract review. The two most common reasons for exclusion at this stage were (1) reports of IPV prevalence without screening data, and (2) opinion or policy pieces without empirical results. Other common reasons for exclusion at this stage included: study or review protocols; psychometric validations of IPV screening tools administered by externally funded research staff; self-reports of providers’ views toward, or use of, IPV screening tools without corroborating data; and qualitative studies of providers’ perceptions of barriers to IPV screening.

We conducted full-text review of 165 qualifying articles (Figure 1). The most common exclusion at the full-text review stage was articles involving administration of IPV screening by externally funded research staff. Thus, we extracted data from 59 articles meeting inclusion criteria.

Characteristics of included studies

Table 1 describes the characteristics of included studies. Of the fifty-nine studies, 42 (71%) were conducted in the United States and 17 (29%) were conducted in other countries. Other countries represented include: United Kingdom (n = 4), Australia (n = 4), Canada (n = 2), Spain (n = 1), New Zealand (n = 1), India (n = 1), Lebanon (n = 1), Turkey (n = 1), Iran (n = 1), and Guinea (n = 1). Twenty-three (39%) used a specific IPV screening tool, while 16 (27%) used a modified tool and 20 (34%) used a custom or unnamed tool. Examples of modified tools include tools that combined two or more validated screening tools into one (e.g., Kornfeld et al., 2012; Parker et al., 1993), tools that combined the questions of a validated screening tool with additional screening questions (e.g., Scribano et al., 2011; Trautman et al., 2007), and tools that only used select questions from a validated screening tool (e.g., Janssen et al., 2002; McColgan et al., 2010). The most common clinic settings included primary care (n = 17, 28%), emergency departments (n = 13, 22%), and maternal and child health programs (n = 25, 42%). Most studies were conducted within hospitals (n = 30, 51%) or community health centers (n = 24, 41%); others involved home visitation programs (n = 4, 7%). Sample sizes varied widely among the studies that reported these data, both in terms of involved providers (range: 1–649) and patients (range: 26–540,300). Most studies (n = 37, 63%) were observational, with some involving quasi-experimental (n = 17, 29%) or randomized (n = 5, 8%) designs. Seven (12%) studies were fully retrospective, often involving administrative data or chart review following implementation of an IPV screening program. Among the studies that reported their data collection period (n = 49, 83%), the median was 12 months; implementation periods (among studies describing a specific implementation effort) ranged from 3 days to 24 months.

Table 1. Characteristics of included studies.

Note. ED = Emergency Department; UC = urgent care center; PC = primary care clinic; OB/GYN = obstetrics/gynecology clinic; CHC = community health center; AAS = abuse assessment screen; WEB = women's experience with battering screen.

Outcomes of IPV screening programs

Table 2 summarizes outcomes, based on RE-AIM (Glasgow et al., 1999) and the Proctor dimensions (Proctor et al., 2011). Reach or penetration, referring to the percent of women eligible for screening who completed an IPV screen, varied widely among the 40 studies that reported it, with a median of 80%. Some studies (n = 8, 14%) reporting pre–post data showed marked improvement in screening rates during the period in which IPV screening programs were implemented (e.g., Mancheno et al., 2020), including reach/penetration of IPV screening above 95% (e.g., Dauber et al., 2019).

The RE-AIM dimension of effectiveness refers to the clinical effectiveness of IPV screening (see Table 2). We conceptualized this as including (a) the percent of women screened who screened positive, (b) the percent of women who screened positive for IPV who were referred to follow-up psychosocial services, and (c) the percent of women who attended follow-up psychosocial services (among those referred). From studies reporting one or more of these findings, the median screen-positive rate for IPV experienced within the past 12 months was 11% (based on n = 33 studies, 56%). The median rate of referral to follow-up psychosocial services among those screening positive was 32% (based on n = 16 studies, 27% of all included studies). Among those referred to such services, a median of 54% of women attended or received those services (based on n = 9 studies, 15%).
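Each of the three effectiveness measures above has a different denominator, which is easy to lose track of when comparing studies. The sketch below spells this out for a single study. The class name, field names, and counts are hypothetical and serve only to illustrate the definitions; they are not drawn from any included study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScreeningCounts:
    """Hypothetical per-study counts along the screening-to-services pathway."""
    screened: int            # women who completed an IPV screen
    screened_positive: int   # of those screened, women who screened positive
    referred: int            # of those positive, women referred to services
    attended: int            # of those referred, women who attended services


def rate(numerator: int, denominator: int) -> Optional[float]:
    """Return a proportion, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def effectiveness(counts: ScreeningCounts) -> dict:
    """Compute measures (a), (b), and (c), each with its own denominator."""
    return {
        # (a) screen-positive rate among women screened
        "screen_positive_rate": rate(counts.screened_positive, counts.screened),
        # (b) referral rate among women who screened positive
        "referral_rate": rate(counts.referred, counts.screened_positive),
        # (c) attendance rate among women who were referred
        "attendance_rate": rate(counts.attended, counts.referred),
    }


example = effectiveness(
    ScreeningCounts(screened=800, screened_positive=88, referred=28, attended=15)
)
```

Because a given study may report only one or two of these counts, returning `None` for an undefined rate keeps missing data distinct from a true rate of zero when values are later summarized across studies.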

Only seven studies reported concrete data on the RE-AIM dimension of adoption (i.e., the percent of providers or clinics delivering IPV screening). Each of these seven studies reported provider-level rather than clinic-level adoption, with adoption ranging from 23% to 100% of clinicians conducting IPV screening. Reports of implementation fidelity (i.e., the RE-AIM dimension of implementation, operationalized here as the extent to which IPV screening programs were conducted as intended in the study) were primarily limited to two types of data. Specifically, some studies reported differential screening rates (e.g., across geographic areas) as evidence of variable fidelity to IPV screening protocols, while others described the psychometric properties of IPV screening compared to a gold standard assessment of IPV.

Fourteen studies reported data on the RE-AIM dimension of maintenance or sustainability of IPV screening programs. Of these, about half demonstrated IPV screening or referral rates that were comparable to those found at the primary study endpoint (e.g., Hamberger et al., 2010; Taft et al., 2015). In three studies, the maintenance of these effects was attributed to institutional policies (e.g., recurring trainings regarding IPV screening, or disciplinary action for clinicians who failed to continue screening for IPV). Others found that IPV screening practices reverted to previous levels during the maintenance phase (e.g., Higgins & Hawkins, 2005; Mezey et al., 2003) based on factors such as high clinician turnover and difficulty coordinating continued IPV services across geographically disparate sites.

Turning to the additional Proctor dimensions, most studies (n = 50; 85%) described the perceived acceptability, appropriateness, or feasibility of IPV screening. While differences in contextual factors (e.g., clinic settings and patient populations) made it difficult to draw firm conclusions across studies, several findings stood out nonetheless. First, emergency departments may be a particularly important yet challenging setting for screening. Given the frequency with which women who experience abuse may present there with IPV-related injuries, there were perspectives supporting the appropriateness of IPV screening in this context. But several issues can complicate the feasibility and acceptability of Emergency Department (ED)-based IPV screening programs, including high patient volume, the frequent presence of children or significant others, limited privacy, and the urgency of the injuries that necessitated the ED visit (Krasnoff & Moscati, 2002; Scribano et al., 2011; Spangaro et al., 2020).

Second, three articles reported patient or provider perspectives regarding the acceptability of tablets or other electronic modalities to conduct IPV screening (Bacchus et al., 2016; Trautman et al., 2007; Warren-Gash et al., 2016). Such administration was generally viewed favorably by providers and patients, though there were pros and cons identified for technology-assisted IPV screening. Some women may be more comfortable disclosing IPV via electronic methods, but others may find such impersonal administration methods off-putting and prefer the face-to-face contact of a caring provider.

Third, seven studies reported that a lack of follow-up services (to which women who screened positive could be connected or referred) undermined efforts to implement IPV screening programs (e.g., Clark et al., 2020; Dauber et al., 2019; Samandari et al., 2016; Trautman et al., 2007). In contrast, in at least one study, robust referral options facilitated clinicians’ ongoing commitment to IPV screening and response practices (Sohal et al., 2020).

Only six studies presented information on financial or resource cost considerations for integrating IPV screening programs into clinical care, most of which did not formally quantify numerical costs. Among the studies that addressed this issue, most (n = 5) emphasized the importance of financial resources and clinical personnel to support full implementation.

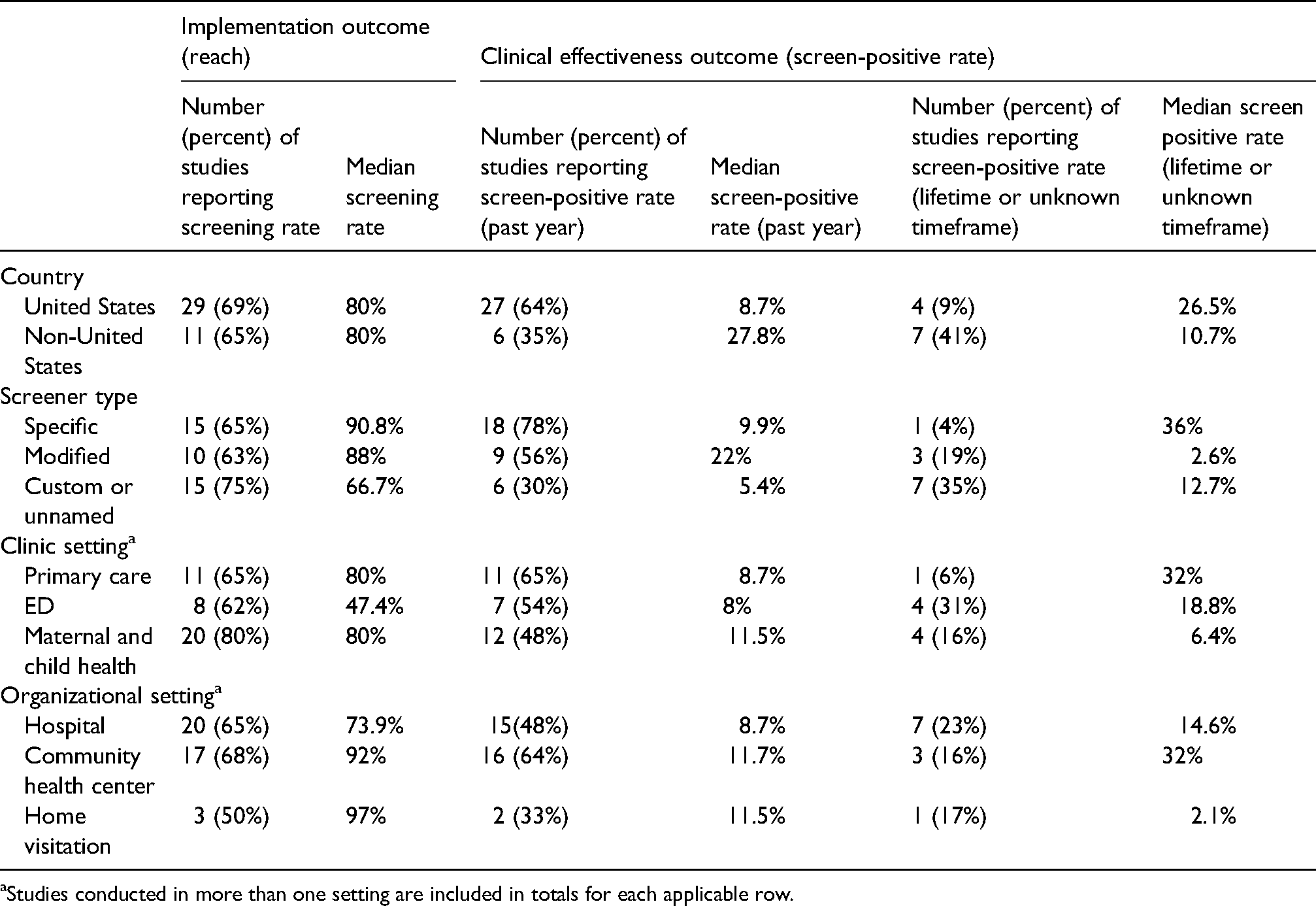

Subgroup analyses

Our sample was too heterogeneous (e.g., in terms of clinic settings) to conduct formal meta-analysis or to support statistical comparisons between studies. Instead, Table 3 summarizes post-hoc subgroup findings for two quantitative outcomes: reach and clinical effectiveness (i.e., the screen-positive rate). We noted a curious relationship between study location and screen-positive rates, with U.S.-based studies reporting higher rates of recent IPV, but lower rates of lifetime IPV. Furthermore, emergency department settings demonstrated lower rates of IPV screening than primary care or maternal/child health settings. It was difficult to discern other reliable patterns regarding differences across settings, given variations in reporting (e.g., some studies calculated the screen-positive rate as the percentage of women reporting IPV in the past year, while others reported lifetime IPV, and yet others did not describe the timeframe in which women had to experience IPV to screen positive).

Table 3. Subgroup analyses.

Studies conducted in more than one setting are included in totals for each applicable row.

Discussion

Major findings in context

IPV is a population health problem (World Health Organization, 2013). IPV screening programs in healthcare settings represent one way to identify individuals who experience IPV, thereby facilitating the provision of support, resources, referrals, and interventions. There is a robust literature on IPV screening programs, but many studies have included features that cannot be replicated in routine clinical care (e.g., externally funded research staff to facilitate IPV screening; financial incentives to encourage patients to complete IPV screening). Thus, in this systematic review we focused on 59 studies of IPV screening conducted by frontline clinical personnel, and collected information on clinical effectiveness and implementation outcomes informed by two widely used implementation frameworks (Glasgow et al., 1999; Proctor et al., 2011). While individuals of any gender identity may experience IPV, our review focused on IPV screening programs for women, as women are most likely to experience frequent and severe IPV, and most research on IPV screening has been conducted on women (World Health Organization, 2013).

Starting with study results rooted in the RE-AIM framework, we found variability in reach of IPV screening programs (i.e., the percent of women eligible for screening who were screened), with a median of 80%. Emergency department settings featured a lower screening rate (closer to 50%). Whereas this setting may present an important opportunity for IPV screening and response (Ahmad et al., 2017; Kothari & Rhodes, 2006), factors like high patient volume, frequent presence of children or significant others, limited privacy, and the severity of injuries may make it difficult to conduct IPV screening in the emergency department (Krasnoff & Moscati, 2002; Scribano et al., 2011; Spangaro et al., 2020). Regarding clinical effectiveness of IPV screening, the median screen-positive rate for past-year IPV among included studies was 11%. This median rate is slightly lower than the screen-positive rates from recent randomized controlled trials in which screening was facilitated by research staff (12%–18%) (Klevens et al., 2012; Koziol-McLain et al., 2010; MacMillan et al., 2009). These findings suggest that IPV screening programs conducted in frontline clinical contexts may obtain broadly similar screen-positive rates when compared to more tightly controlled research contexts.

Among those who screened positive, a median of 32% received a referral to follow-up services; among those who were referred, more than half (a median of 54%) had documentation of receiving those services. It is difficult to discern how these findings compare to controlled trials that were excluded from this review, as such studies tend to focus on IPV and health symptoms as primary outcomes and rarely report on psychosocial service use as a function of screening positive for IPV (e.g., Klevens et al., 2012; Koziol-McLain et al., 2010). For example, MacMillan et al. (2009) reported that 33% of women who were screened for IPV (regardless of screening results) received services with a psychologist or social worker, and 13% received advocacy or counseling for abuse within 6 months of being screened. Nonetheless, the current findings on referrals and associated service use are promising, considering that such services may be required to reduce a patient’s exposure to violence and improve health.

When considering the referral findings, we note that there are likely instances of patient-provider discussions in response to IPV disclosure that focus on validation and other resource provision, but are not necessarily captured in a “referral to services” outcome metric. There may be subtle but important benefits for patients in simply having a safe, empathic person with whom to discuss unhealthy relationship experiences, regardless of whether the patient is interested in follow-up services related to IPV, as reported by some providers, patients, and clinical experts (Dichter et al., 2020; Iverson et al., 2019; Miller & McCaw, 2019). Patients also value self-determination in following through with referrals (Feder et al., 2006), as readiness to change, personal and familial safety considerations, and myriad contextual factors (e.g., availability of referral services; details below) impact such decisions. Qualitative research with patients who have experienced IPV emphasizes the importance of providing support for connecting to services, in addition to providing information about the services (Dichter et al., 2021).

We operationalized the RE-AIM element of adoption as the proportion of providers or clinics delivering IPV screening. This was reported at the provider level in seven studies, with a broad range (23%–100%). Some researchers noted that direct provider feedback (e.g., audit/feedback for providers who were not consistently screening) was important for ensuring adoption of IPV screening programs. This aligns with findings from other research examining the use of specific implementation strategies to facilitate adoption of IPV screening practices (Adjognon et al., 2021). Workload pressures, competing demands, limited time, and a paucity of referral options (described in more detail below) appeared to contribute to providers’ reluctance to adopt IPV screening practices. These factors are consistent with findings from the broader literature examining healthcare providers’ barriers to IPV screening (e.g., Sprague et al., 2012).

The RE-AIM element of fidelity refers to the extent to which IPV screening was conducted as intended. In our sample, this was primarily explored by (a) investigating variability in screening rates as evidence of variable fidelity, or (b) calculating psychometric properties of IPV screening tools in the rare event that a gold standard comparison evaluation of IPV was conducted. Notably, none of our included studies reported on the more person-centered aspects of conducting IPV screening, including the interpersonal nuances of screening and response practices. For example, no studies assessed the extent to which IPV screening was conducted empathically, sensitively, and nonjudgmentally, and to what extent responses and recommendations were individually tailored, even though these factors have been deemed essential by IPV survivors (Chang et al., 2005; Feder et al., 2006; Iverson et al., 2014). While behavioral observations may represent the gold standard assessment method for determining whether care is delivered in this way, we realize that the sensitivity of IPV may make such methods impractical, particularly outside the context of externally funded research. Instead, future studies could employ point-of-care surveys or interviews to assess these critical factors that likely impact the clinical effectiveness of IPV screening practices.

The RE-AIM element of sustainability refers to the extent to which IPV screening programs were used beyond the primary study endpoint. Ongoing institutional support (e.g., provision of periodic staff trainings) was associated with sustainability, while workload pressures and logistical difficulties were associated with reductions in screening over time (a phenomenon referred to as “drift” in the sustainability literature) (Stirman et al., 2019). While ongoing institutional support has been identified as an important approach to overcoming low levels of provider education and comfort in the context of IPV screening, studies have suggested that staff and provider training alone are insufficient to maintain high IPV screening rates (Waalen et al., 2000). Only with a combination of training and additional IPV screening implementation strategies are screening rates sustainable; such additional strategies include but are not limited to: (a) adequate funding and staffing resources (e.g., dedicated clinicians for training and provision of follow-up services), and (b) the use of IPV stakeholders’ learning collaboratives (Adjognon et al., 2021).

Proctor et al. (2011) posited additional implementation outcomes above and beyond the RE-AIM dimensions described above. First, we collected data from our review related to the acceptability, appropriateness, and feasibility of IPV screening. Some studies described programs in which screening was conducted via tablets or other technological mechanisms, with mixed results, consistent with studies that have found both benefits and limitations to electronic versus paper or clinician-administered screening (Ahmad et al., 2009; Chang et al., 2012; Chen et al., 2007). Our findings are consistent with those from more tightly controlled trials of IPV screening methods, which have generally shown that patient self-administered or computerized screenings are as effective as clinician-administered screening in terms of disclosure, comfort, and time spent screening (Ahmad et al., 2009; Chen et al., 2007). More broadly, our results emphasize the importance—in terms of perceived acceptability of IPV screening programs—of having treatment or referral options available for women who disclose IPV. The absence of referral options is associated with reduced clinician enthusiasm for or commitment to screening, due to concerns of not having adequate supports for patients in need (D'Avolio, 2011; Dichter et al., 2015; Sprague et al., 2012). Unfortunately, this may contribute to a negative feedback loop, as reduced IPV screening on the part of providers can create the false impression that IPV is not a major clinical issue, in turn reducing the chances that patients receive help.

Few studies reported concrete data on the Proctor dimension of implementation cost. While IPV screening may at first seem to be a relatively inexpensive clinical process, complexities abound. Ongoing clinician trainings, the filling of crucial staff roles, facilitation (Miller et al., 2020; Ritchie et al., 2020), or technological updates in electronic medical records may represent substantial investments for resource-strapped medical systems—but without these investments, it is unlikely that IPV screening will be consistently adopted and sustained.

Limitations

As with many systematic reviews, we faced challenges in consolidating our results based on differences in how data were collected, conceptualized, or reported across studies (e.g., different time frames for IPV detection). We attempted to address this by using group consensus in extracting our data. Second, based on the information in our included articles, we were unable to consistently extract comprehensive data regarding differential screening rates by provider type (e.g., nurses vs. physicians) or patient demographics (e.g., age, ethnicity). Given that some evidence suggests that provider type may be an important predictor of screening practices, future research should explore it further in implementation-focused trials. Third, over half of the included studies used either a custom or modified IPV screening tool, potentially limiting generalizability. In addition, the variability in screen-positive rates across studies is likely impacted, in part, by differences in the wording of screening questions and types of IPV assessments. While we took steps to ensure reliability at several stages in the review process, we did not double code all articles, raising the possibility that reviewer drift may have impacted our results. We also note that our review contained studies from multiple countries, healthcare systems, and clinic settings. While this allowed us to get a broad picture of IPV screening practices, it also made it difficult to determine the relative impact of these contextual factors on those studies’ results. For example, we found intriguing differences in screening rates between ED and other healthcare settings, but variations in reporting made it difficult to draw firm conclusions regarding possible differences in screen-positive rates between settings.
Furthermore, some of our included studies were conducted in less traditional healthcare contexts, such as perinatal home visits by IPV-trained community health nurses (e.g., Bacchus et al., 2016), and others were in the context of acute trauma care (Weinsheimer et al., 2005); it is plausible these settings may be associated with higher rates of IPV screening (and positive screens) compared to other settings. We also note that our reliance on quantitative (as opposed to qualitative) studies, published in English, means that we may have missed relevant studies published in other languages or featuring different methodologies. In addition, we organized our findings around the RE-AIM and Proctor frameworks. While these frameworks are widely used in implementation research contexts, we acknowledge that including constructs from other relevant frameworks (e.g., the Consolidated Framework for Implementation Research [Damschroder et al., 2009]) would likely have enriched our findings. Finally, our study was limited to research focused on screening for and addressing IPV among women patients, and the extent to which findings would extend to programs for patients who do not identify as women is unknown. The literature on IPV screening and response practices for men and nonbinary patients is relatively sparse, which limits the ability to review it. Expanding the research on the effectiveness and uptake of IPV screening programs for men and nonbinary patients is an area for future research.

Conclusions

This review of IPV screening implementation outcomes and effectiveness is novel in its focus on studies of IPV screening conducted by frontline clinical staff. Our findings emphasize the importance of ongoing commitment to IPV screening (e.g., via recurring staff trainings) if such screening programs are to be sustained in healthcare settings. Implementation outcomes should be further examined in the IPV screening literature, as successful implementation of IPV screening and response practices is critical to ensuring their clinical benefits. For example, future research could incorporate more nuanced evaluation of the contextual factors (e.g., leadership support and organizational culture) that may ultimately determine which clinical settings successfully embed IPV screening in routine care and which do not. It is similarly important to examine the success of specific implementation strategies used to promote IPV screening in specific healthcare contexts, such as the mixed methods research being done to evaluate implementation and clinical outcomes in the largest integrated healthcare system in the United States (Iverson et al., 2020). Emphasis should also be put on better assessing adoption, fidelity, and person-centeredness in IPV screening, as almost all clinicians across healthcare settings will encounter patients who have experienced IPV. Deliberately seizing every opportunity to properly screen for IPV, and to counsel, refer, and follow up with patients who endorse IPV in healthcare settings, is a cornerstone of addressing this public health issue effectively.

Footnotes

Acknowledgements

Drs. Miller and Iverson gratefully acknowledge their fellowships with the Implementation Research Institute (IRI), at the George Warren Brown School of Social Work, Washington University in St. Louis, through an award from the National Institute of Mental Health (5R25MH08091607).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Department of Veterans Affairs, Office of Research and Development, Health Services Research & Development (HSR&D) (grant number SDR 18-150: Iverson & Miller).