Abstract

In recent years, there have been many calls for scholars to innovate in their styles of conceptual work, and in particular to develop process theoretical contributions that consider the dynamic unfolding of phenomena over time. Yet, while there are templates for constructing conceptual contributions structured in the form of variance theories, approaches to developing process models, especially in the absence of formal empirical data, have received less attention. To fill this gap, we build on a review of conceptual articles that develop process theoretical contributions published in two major journals (

In recent years, a number of authors, and indeed journal editors, have urged scholars to reach beyond traditional styles of theoretical writing in organization and management studies (Cornelissen, 2017; Cornelissen & Durand, 2012, 2014; Delbridge & Fiss, 2013; Tsoukas, 2017). By traditional styles of theoretical writing, we refer mainly to ‘variance theorizing’: i.e. the linking together of concepts expressed as dependent, independent, mediating and moderating variables, usually accompanied by formal propositions, and with a focus principally on explaining variance in outcomes. These traditional styles of conceptual work have dominated the management literature ever since the foundation of

Cornelissen (2017) identifies two strong and viable alternatives to the variance-based propositional style of theorizing: the ‘process model’ and the ‘typology’. In this paper, we focus specifically on the ‘process model’ as we believe that it represents an important but relatively poorly understood approach to theorizing. Indeed, while scholars have offered toolboxes for the construction of variance theories and the modelling of propositions (Whetten, 2009), it is much less clear how to go about developing process theoretical contributions that are not explicitly data-driven. In general terms, a ‘process model’ involves a narrative storyline that reveals the mechanisms by which events and activities play out over time (Cornelissen, 2017). Yet there has been considerable onto-epistemological debate about what, theoretically speaking, the term process does or should include (Langley & Tsoukas, 2016; Van de Ven & Poole, 2005). It thus seems likely that there is no single recipe for developing informative process conceptualizations. We therefore ask,

To address this question, we build on a review of process theoretical contributions published in two key journals,

The paper begins with a discussion of the nature of process theory, raising several specific challenges of engaging in process theorizing, notably in the absence of empirical data. After describing our review process, we introduce the four categories of conceptual articles identified, focusing on key exemplars that reflect the essence of these categories, and identifying the specific strategies authors use to develop these contributions. We conclude with an overarching model that integrates the four styles, with suggestions for further developing and enriching process theorizing in organization studies, a mission that we hope authors will further address in the future in the pages of

Specificities and Challenges of Non-Empirical Process Theorizing

The nature of process theorizing

An important task before beginning this review is to specify what we mean by process theorizing. This is not necessarily easy given the range of different conceptualizations in the literature. Langley and Tsoukas (2010) discuss three different but inter-related distinctions that express these diverse forms. A first distinction, developed by organizational theorist Lawrence Mohr (1982), positions ‘process theory’ against ‘variance theory’: variance theory refers to conceptual constructions that relate variables to one another, while process theory refers to conceptual constructions that focus on the way in which phenomena emerge, evolve or terminate over time through activities and events (see also Langley, 1999; Van de Ven, 1992). This is the simplest distinction, and the one we used to introduce this paper above. A process view thus implies paying particular attention to temporality and change over time, something that may be virtually absent from variance theorizing.

A second, related distinction can be made between narrative and logico-scientific modes of knowing (Bruner, 1990; Langley & Tsoukas, 2010), reflecting, on the one hand, understandings of the world from within, based on the richness of lived experience, and, on the other, connections between abstract and generalized representations of the world seen from the outside. The narrative form captures temporality, human emotion, meaning and plot, and can be seen as one significant way to think about and conceptualize processes as constructed through experience (Fachin & Langley, 2017; Rantakari & Vaara, 2017), in a way that may differ from the more abstract, outside-in conceptualizations of processes implied by Mohr (1982).

Finally, a third and more fundamental distinction can be made: that between process and substance ontologies (see also Langley, Smallman, Tsoukas, & Van de Ven, 2013; Langley & Tsoukas, 2016), referring here to radically different conceptions of the nature of the world. Process ontology is grounded in the work of philosophers such as Whitehead, Bergson, James and others (Helin, Hernes, Hjorth, & Holt, 2014; Rescher, 1996), who construe the world as continually becoming and as constituted by ongoing processes. In contrast, a substantive ontology gives priority to entities or things. This has given rise to a further distinction between what has been called ‘weak’ and ‘strong’ process theory (Langley & Tsoukas, 2016). While the first may indeed incorporate the notion of change and evolution over time, it views processes as

Overall, all of the process-oriented perspectives discussed share an orientation towards activity, temporality and flow (Langley et al., 2013), although this may be expressed in different ways. In this paper, we decided not to restrict ourselves to any one of the conceptualizations of process theorizing described above, although we remain sensitive to their distinctive implications and discuss these as we proceed. We should also note that, in practice, not all of our observed examples of process theorizing are entirely ‘pure’. Intuitively, it would appear problematic to bridge different ontological perspectives, or to mix narrative and logico-scientific modes of knowing. Mohr (1982), in fact, urged scholars to avoid combining variance and process theorizing in the same study for fear of creating more confusion than insight. However, it turns out that purity is relatively rare in practice, offering another topic for discussion as we move forward. The nature of processes and process theorizing also raises a number of specific challenges compared with more mainstream variance approaches, as we now discuss.

Challenges of non-empirical process theorizing

Scholars have already pointed out some of the challenges of process thinking, notably in relation to theorizing from

From variables to events, activities and trajectories

The starting point for variance theory (i.e. the ‘what’ of theory) (Whetten, 1989) is the set of concepts that will be linked together to generate causal predictions. These concepts usually present themselves as variables, which may take different values and which are often visually represented in boxes connected by arrows. The ‘what’ or concepts that are the central focus of process theorizing (events, activities, trajectories) are, however, harder to pin down because they often have a fluid temporal character that must nevertheless be articulated clearly enough for rigorous arguments to be developed about how they work together.

The open-endedness of process-based concepts may thus lead some process theorists to prefer discursive rather than diagrammatic forms to represent their theorizing (e.g. Feldman & Pentland, 2003; Tsoukas & Chia, 2002). Process theorists may also try to draw diagrams expressing such temporally constituted concepts, sometimes placing them in boxes linked by arrows (which represent, for example, precedence rather than causality). However, as Feldman (2016) points out, because of their fluid and connecting character, process concepts may actually be better represented by arrows than by boxes. In her chapter in the

From entities to entanglements

A second challenge concerns the way in which process theorizing (especially when a ‘strong’ process view is espoused) tends to eschew a focus on static entities or substances that are clearly separable and isolated from other entities, focusing instead on webs of dynamic relations and entanglements that together constitute organizing processes: a move that Tsoukas (2017) refers to as the passage from disjunctive to conjunctive theorizing. Because language tends to privilege nouns over verbs, this can be a particularly challenging conceptual task, so many process theorists compromise on such a purist view and nevertheless refer to separable entities in their theoretical constructions.

A particularly fruitful angle for process theorizing, however, can be to take what might initially seem to be a conceptual ‘entity’, such as a routine, an institution or an organization, and to ask what dynamic practices, activities and entanglements underpin its stabilization and existence. Thus, Feldman and Pentland (2016) developed a generative theory of routines that describes how routines are continually reconstituted in action through ongoing performances guided by patterns derived from experience as well as by embedded material artefacts. Similarly, Thompson (2011) recounts how the notion of ‘community of practice’ originally referred to the emergent situated learning activities of legitimate peripheral participation that underpin co-located practitioners’ work (Lave & Wenger, 1991). Later, however, through ‘ontological drift’, this notion became frozen or reified to refer to a separable formal structure or entity, described not by the ongoing situated learning activity constituting it (i.e. a process view) but by attributes qualifying it (a substantive or variance-based view). The trick of process theorizing is to remain focused on the multi-faceted doings that underpin apparently stabilized entities rather than on the entities themselves.

From correlation to contingent interaction

Variance theory explanations rely on developing relations between constructs (the ‘how’ of theory) (Whetten, 1989) that are usually expressed in terms of causation or correlation (e.g. the more X, the more Y). Process theoretical explanations, in contrast, rely on a different kind of causality composed of explicit chains of events, activity and interactions that may or may not accomplish things over time. Indeed, as Mohr (1982) points out, the

Process theorists therefore need to identify the event-based contingencies that are likely to redirect pathways over time. This again can make process theorizing a complex intellectual exercise. It is here that data may be helpful, and its absence quite challenging. Data (expressed as recorded events) generate empirical pathways that offer a reference point for explanation. In addition, qualitative or phenomenological data can help the process theorist tap into the reasoning of the actors involved and gain insight into their lived or retrospective experience of process: why and how certain decisions or choices are made at specific moments in time. The theorist working with purely conceptual linkages, rather than with empirical data or actors’ understandings, lacks these reference points. Such theorists often need to imagine, in more abstract terms, the types of temporally embedded interactive contingencies that might drive events and activities in different directions, and to theorize about them. They also need to think about context, and about how the past is likely to inform the present and shape the future as these are enmeshed in one another (George & Jones, 2000; Reinecke & Ansari, 2017).

From outcomes to potentialities

A related feature of process thinking which makes theorizing complex, particularly without data to support it, is the open-endedness of outcomes. In variance theorizing, there is always a clear dependent variable, usually construed as an outcome, which becomes the focus of causal explanations. Process thinking, however, often requires a different kind of conceptualization, as time never stops. Moreover, as ongoing, flowing, emergent and performative accomplishments that may be shaped by contingent interactions, process pathways and outcomes may be multiple. Empirical data can help to pin down outcomes, and may seem to make theory building easier. However, concretely observed outcomes are often only one of a multitude of ‘potentialities’ that coexist in a particular situation at an earlier time period (Lord, Dinh, & Hoffman, 2015; Nayak & Chia, 2011). Empirical data may in fact mask that reality.

Thus, conceptual process theorists ideally need to find ways to capture potentialities, heterogeneity and indeterminism while still making sense of them in some kind of parsimonious way. But how does one do this when the possible contingencies and counterfactuals, each orienting towards an alternate pathway, are (at least in principle) infinite? As we shall see later, in some conceptual contributions, the multiplicity of potentialities inherent to process theorizing can manifest itself as a burgeoning complexity of explicit alternate pathways that a process might take.

From predictive regularities to generative mechanisms

So far, we have discussed some of the challenges of thinking through the various surface components of conceptual contributions, in particular the ‘what’ and the ‘how’ (concepts and relations of whatever form). However, as Whetten (1989) and Sutton and Staw (1995) point out, these elements are not the essence of what constitutes a ‘theory’. It is the explanatory story or narrative (the ‘why?’ of theory) that is most important (see also Van de Ven, 1992). In variance-type theories, the explanatory narrative may be derived from pre-existing grand theories or logical arguments, as well as from examples and empirical regularities. A convincing theoretical contribution is likely to draw on several of these elements to construct a set of predictive regularities, in the form of testable propositions, that hang together as a whole. The explanatory elements underlying process theoretical contributions, however, seem more likely to build on generative mechanisms with perhaps deeper theoretical roots (Cornelissen, 2017).

It is no doubt desirable for process theorists to be familiar with a repertoire of these explanatory tools, each of which has its own logic and can be mobilized to understand multiple mid-range phenomena. Van de Ven and Poole (1995) proposed, for example, four canonical generative mechanisms that they argued underpin most theories of change and development: lifecycle theories (based on genetic determinism); teleological theories (based on learning and adaptation); dialectical theories (based on tension and contradiction); and evolutionary theories (based on competition between elements and the survival of the fittest). Other generic and potentially generative process theoretical tools include structuration theory, symbolic interactionism, actor–network theory, complexity theory and practice theories, all of which Langley and Tsoukas (2016) specifically associate with process theorizing because of their focus on activity, flow and interactions evolving over time. We think that building on these generic process theorizing tools offers rich conceptual handholds and metaphors for expressing processes in specific problem areas, and provides a useful starting point for conceptual contributions, as we discuss further below.

Methodology

As mentioned above, in this paper, we focused our attention on a corpus of theory papers published in

We began our review process by reading the abstracts of every article published in

In a second step, we copied the references, abstracts, tables, figures and propositions (if any) of each article into a separate document, producing a two- to five-page summary of each, which we then collated into a master document to allow easier and quicker reference and comparison between papers. We then carefully read each summary, first to eliminate papers that we may have misclassified as process papers during the initial selection phase, and second to inductively categorize the remaining papers. We carried out this review and analysis first independently, and then jointly. This second analytical step allowed us to reduce our sample of papers from 128 to 82, 26 in

Classification of Process Papers.

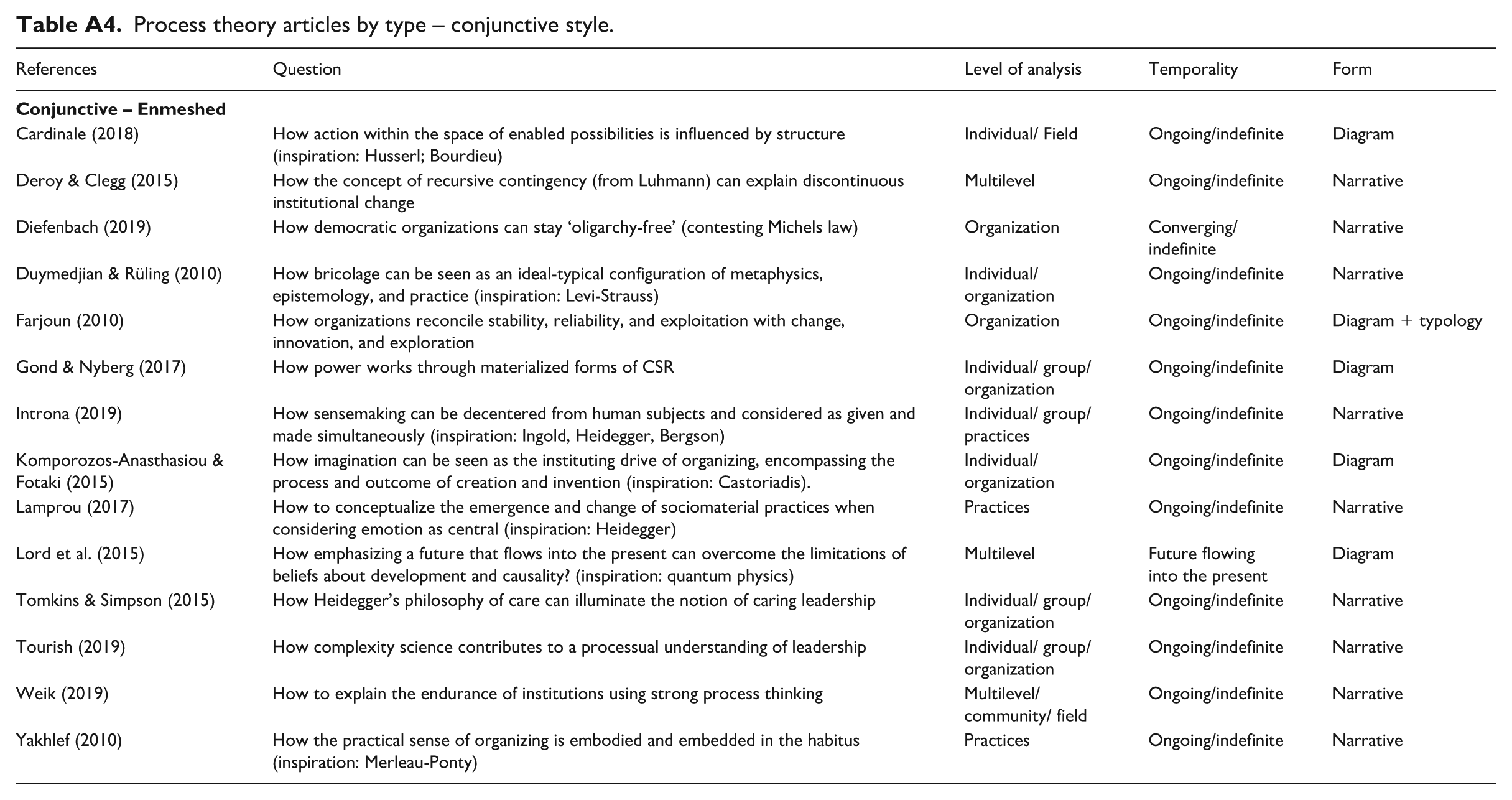

The results of this analysis are presented in the findings section below, where we explore the characteristics and variants of each style in more detail, focusing on selected exemplars. Summaries of all 82 selected papers are tabulated in the Appendix (Tables A1 to A4).

Linear Style of Process Theorizing

The first and simplest theorizing style we observed among the corpus of articles examined follows what we call a ‘linear’ style. Process theories in this category reflect a singular and more or less well-defined ‘sequence of prescribed stages’ (Van de Ven & Poole, 1995, p. 514), each stage occurring one after the other, in a specific order. In these contributions, phenomena are presented as following processes that are linear, cumulative and unidirectional over time, and that may or may not lead to a specified outcome. Although a few authors develop their own stage-based process frameworks from scratch, building their own process-based logic to do so (e.g. Fiol, Pratt, & O’Connor, 2009; Sydow, Schreyögg, & Koch, 2009), many borrow or deduce from prior studies an existing and often well-known staged process model, usually broken down into three to five discrete stages, which they then seek to enrich or complexify in some way (e.g. Cropanzano, Dasborough, & Weiss, 2017; Lippmann & Aldrich, 2016; Meyer, Jancsary, Höllerer, & Boxenbaum, 2018; Ren & Gray, 2009). These borrowed models, which are often taken for granted and about which relatively little information may be provided, serve primarily as a base from which authors can theorize about elements or events affecting process phenomena at each stage, between stages, or both. The dominant aim is to better understand a known process, to identify and further specify the mechanisms underpinning it, or to help better predict process outcomes.

For example, by their own account, the aim of Cropanzano et al.’s (2017) paper is to ‘enrich our understanding of leader–member exchange (LMX) development’ (p. 233). In particular, they argue that ‘affective events’ can have an important impact on the quality of leader–member relationships at each of three preconceived stages of development: role taking, role making and role routinization. During the role-taking stage, for example, an ‘affective event’ could be an incident in which the leader demonstrates some emotion (e.g. joy, anger, fear) in interacting with a member, which would then influence evolving relations between the two (e.g. via emotional contagion). In terms of theorizing style, the authors thus take a preconceived process based in prior literature, and theorize about how a particular type of contingency (in this case, affective events) affects the process (leader–member exchange development) at each stage, and by association its outcomes (the quality of leader–member relationships). The authors offer nine propositions that formalize their predictions for each stage. As can be seen, this kind of conceptual formulation essentially combines process and variance theorizing in the sense of Mohr (1982). Process conceptualizations are evident in the underlying (but unquestioned) assumption of staged evolution in the basic phenomenon of interest, and in the use of certain kinds of ‘events’ as key contingencies. Variance conceptualizations are evident in the formulation of propositions predicting how the presence of different kinds of events affects outcomes at each stage.

While following a similar strategy of building on borrowed models, rather than focusing on contingencies affecting stage-specific phenomena, other studies using a linear theorizing style pay more attention to

Fisher et al. (2016), for example, theorize about the processes whereby entrepreneurs acquire and manage organizational legitimacy over time. They use a four-stage lifecycle model of technology ventures as their starting point: conception, commercialization, growth and stability. The authors then argue that because the audiences providing financial resources to new ventures change over a typical venture’s lifecycle, venture growth depends on meeting the different legitimacy expectations of each of these key audiences at each stage of the venture process. They refer to ‘legitimacy thresholds’ as the required minimum for growth to occur at a given stage, which in turn makes it possible for an entrepreneur to ‘move on’ to the next stage, where they will be required to meet a different legitimacy threshold. In this theorizing style, the authors take a well-known process (a lifecycle model of new venture growth), and a known factor important to this process (legitimacy) and on this basis theorize the conditions (e.g. venture-identity embeddedness) and practices (e.g. legitimacy buffering) needed to allow focal actors (entrepreneurs) to navigate the

While the above examples remain relatively simple, there are multiple ways of enriching an existing linear process model, and some authors combine several of these in the same paper. For example, Perry-Smith and Mannucci (2017) begin their theorizing with a well-known four-stage innovation process (generation, elaboration, championing and implementation), which they refer to as the ‘idea journey’. They then enrich their model in three ways. First, they suggest that ideas at each stage have different needs (e.g. ‘socially derived ingredients that facilitate success’, p. 55). Second, they propose a factor (social networks) that can help address these needs. Third, on this basis, they propose a further contingency (cognitive representation of a network) that facilitates network activation. These three elements (needs, networks and cognition) provide the theoretical apparatus necessary to predict the ease and speed with which an idea will traverse the innovation process, and to explain why ideas may get ‘stuck’ at different stages of the journey, using a series of eight propositions and variants of these.

One of the critiques that Van de Ven (1992) raised about early linear process models found in the literature (e.g. for decision making, innovation or growth) is that they often seem to assume stage-based patterns that oversimplify the variety present in the empirical world, and at the same time stop short of revealing the explanatory mechanisms that might give rise to such patterns. From this perspective, the more these linear models are elaborated and complexified to focus on mechanisms driving transitions among stages, or on probabilistic contingencies allowing progression, acceleration or breakdown over time, the deeper and more convincing these process conceptualizations become. Another step towards enhancing the richness of linear process theorizations involves digging more deeply into the origins of temporal progressions rather than importing them from elsewhere. A few articles adopting the linear style attempt this.

Fiol et al. (2009), for example, quite unusually theorize backwards to deduce the stages of their model of how management might intervene to resolve intractable conflict. They begin from the assumption (drawn from prior work) that identity matters to these processes and use this as a basis for theorizing about what is required (in terms of intergroup identity articulations) to achieve the desired end state of intergroup harmony. They further assume that each stage ‘provides a necessary but not sufficient condition for enduring harmony’ and that ‘successful completion of the prior phase is necessary to carry out each subsequent phase of the model’ (p. 37). On this basis, they successively deduce what conditions are needed to achieve intergroup harmony and what management interventions facilitate those conditions, working backwards until they reach a credible starting point for a four-stage process likely to lead to intergroup harmony – a progression that is novel to their study. They complete their model by adding a moderating trigger (readiness/ripeness for change) that explains the conditions under which the process is likely to unfold.

In another example, Sydow et al. (2009) delve into the phenomenon of organizational path dependence, seeking to understand the conditions and dynamics that lead to ‘organizational rigidities and structural inertia’ (p. 689) which are the main characteristics (and outcomes) of this phenomenon. Working from the premise that past events influence subsequent actions, they break down the path dependence process into three stages (pre-formation, formation and lock-in), each ‘governed by different causal regimes and constituting different settings for organizational action and decision making’ (p. 690). They explicitly identify four self-reinforcing mechanisms that help explain the constitution of an organizational path in which options are selected and then narrowed down until a point of lock-in is reached. This analysis produces a high-level, visually appealing and parsimonious stage theory of path dependence.

A final example shows how metaphor may be a useful tool in process theorizing (Boxenbaum & Rouleau, 2011), and in particular for thinking through the drivers of linear patterns. Specifically, Røvik (2011) proposes the metaphor of virology as a richer and more nuanced way of understanding the uptake and use of management ideas than previous theories. He outlines the six stages of possible spread (or not) of a virus (i.e. management idea), relying on concepts like ‘immunity’ and ‘mutation’ to explain why some ideas never take, others take partially, and others spread like wildfire. While simpler than many of the studies featured above, this study is ingenious and rich in terms of the substance and nuance it brings to a known process.

In sum, with a few notable exceptions, scholars who adopt a linear style of process theorizing generally begin with an existing unitary staged process model, which they then seek to unpack or enrich in some way by adding elements that explain stage-level and cross-level dynamics and/or help predict process outcomes. The more elaborate among these studies will often also add considerable content detail by fleshing out stages or related concepts based on prior studies. For example, Fisher et al. (2016) produce a detailed table that shows different audience types and their associated legitimacy expectations at each stage of the new venture lifecycle process. This table then serves as the basis for their theorizing about legitimacy thresholds, and about how transitions from one set of legitimacy expectations to another can be managed.

In proceeding as they do, most of the authors featured in this category tend to hybridize elements of process and variance theorizing (Mohr, 1982) within the same conceptual structure, often using propositional forms to express the variance elements. However, the process theoretical content of these models can be enriched by considering

Parallel Style of Process Theorizing

The second style we observed followed a format we call ‘parallel’ process theorizing. Process theoretical contributions in this category typically feature two or more linear process trajectories that are inter-related in some way. The aim of these studies is to enrich current understanding of a higher-level process by showing and explaining how sub-processes mutually influence each other, or by specifying and explicating alternate trajectories that a process might follow. We identified two main patterns in this category based on the type of inter-relations observed: co-evolution (e.g. Grodal, Gotsopoulos, & Suarez, 2015; Huang & Knight, 2017; Sillince & Barker, 2012), and bifurcation (e.g. Bourne & Jenkins, 2013; Hammond, Clapp-Smith, & Palanski, 2017; Martí & Scherer, 2016; Shepherd & Williams, 2018).

As a theorizing strategy, co-evolutionary thinking involves looking at two relatively well-known processes or sub-processes that prior research has examined only separately, and considering, as the study’s novel contribution, how one is related to and/or influences the other over time. In other words, the strategy is to consider and theorize about how processes

In a similar fashion, Sillince and Barker (2012) begin by claiming that current theories of institutionalization are unsatisfactory because they tend to consider the symbolic-linguistic and practice-material processes underpinning institutionalization separately. They argue that a more complete understanding of institutionalization processes requires looking at ‘how language and material practices

A second kind of parallel theorizing we call ‘bifurcation’, because it involves considering junctures, or possible ‘forks in the road’, that a process might encounter, and which might explain the parallel pathways or trajectories followed. This is, for example, what Martí and Scherer (2016) do in their theorizing about how theories shape social reality, using contemporary research on the efficiency of new financial tools. They trace ‘typical’ theorizing paths around the notion of performativity, suggesting how current financial innovations promoting efficiency and stability tend to shape the economy in their own image through the narrow kinds of regulation they encourage. They then question the assumptions driving this trajectory and argue that developing theories that include issues of justice could steer social reality onto a different path, one that, in the authors’ view, would lead to more inclusive regulation with a greater potential than current theories to influence social welfare positively.

Hammond et al.’s (2017) study of how leadership identity develops through leaders’ cross-domain experiences adopts a similar strategy. In their model, it is assumed that a cross-domain event or experience triggers a four-stage sensemaking process. However, the trajectory followed is theorized to be contingent on the characteristics of the triggering event (for example, whether it is planned or unplanned, appreciative or challenging), with a domino effect on subsequent sensemaking patterns leading to two possible pathways that the process of leadership identity development might follow. In turn, each pathway produces different learning outcomes which, accumulated over time, come to constitute a leader’s ‘identity’.

The notion of bifurcating parallel pathways may be generalized further by including more than one juncture point, allowing for multiple possible trajectories. When this occurs, the mode of conceptualization tends to shift closer to what Cornelissen (2017) called a ‘typology’ style of theorizing, as the bifurcating contingencies become more prominent in the article’s arguments. We see this, for example, in Werner and Cornelissen’s (2014) conceptualization of how different frame-building and frame-blending practices aimed at instigating institutional change may be mobilized depending on the nature of existing schemas and of the intended changes, a conceptualization constructed as a 2 × 2 matrix. Similarly, Bourne and Jenkins (2013) develop a ‘dynamic perspective of organizational values’ by considering the trajectories that might lead to four different patterns of organizational value tensions, also displayed as a 2 × 2 matrix.

In sum, the parallel style of process theorizing focuses on multiple contingent processes as related in some way, either influencing each other (coevolution) or proliferating into alternate pathways depending on key contingencies (bifurcation). While parallel models share similarities with the linear style, notably in the way authors seek to enrich process models by theorizing about elements likely to shape them, they are also inherently more dynamic than many of the linear models we examined, notably those that tend to embroider on imported stage models using variance-based elements. Theorizing explicitly about alternate trajectories, bifurcations and mutual influence makes them so. The next category outlines yet another way of enriching the process theoretical repertoire.

Recursive Style of Process Theorizing

The third and by far the most common theorizing style we call ‘recursive’. It groups together articles that portray processes as ongoing cycles of adaptation and/or reproduction, very often represented diagrammatically through one or multiple feedback loops. Recursive processes are generally portrayed as ongoing, with an underlying assumption that they continue indefinitely unless interrupted. As argued by Van de Ven and Poole (1995), all process theories are in principle recursive. However, scholars adopting linear or parallel styles of theorizing tend not to focus on this: their conceptualizations are driven by an expected or desired outcome beyond which there is no perceived need to theorize further about ‘what might happen next’. In contrast, a feature of the recursive style is that even in situations where a clear process outcome is specified, there is an underlying assumption that ‘things do not end here’ and that the process continues.

Thus, for example, in DeRue and Ashford’s (2010) theorizing about leadership identity construction in organizations (described in detail below), the desired outcome of the theorized process is specified as ‘clarity and acceptance of leader–follower relationship’. There is however nothing to suggest that ‘clarity and acceptance’ is determined once and for all. On the contrary, the main activities identified as contributing to clarity and acceptance are represented as recursive and ongoing, and possibly weakened or reinforced over time. Similarly, Smith and Lewis’s (2011) dynamic equilibrium model of paradox embeds the assumption that despite periods of stability, tensions or contradictions will inevitably rise again at some point, triggering renewed but never identical cycles. This observation suggests a potentially deeper engagement with process ontology than observed in previous categories. We documented five approaches to recursive theorizing that we label interactive, systemic, cyclical, dialectical and evolutionary.

The most common approach to theorizing within this general ‘recursive’ category is for scholars to take an

A second recursive approach to theorizing involves taking a

Gray and Kish-Gephart’s (2013) study of how social class distinctions and thus inequality are sustained within organizations offers a telling example of how mutually reinforcing processes can lead to rigidity. Their analysis focuses on the micro-level interactions that serve to justify and reinforce existing status hierarchies in day-to-day organizational life. In their theorizing, cross-class encounters create anxiety which actors then seek to minimize by justifying the perceived status difference in different ways, depending on whether they perceive themselves as high, middle or low class. These interactions, which the authors refer to as ‘maintenance class work’, serve to preserve the assumptions and practices associated with class differences. Repeated over time, these interactions lead to the reinforcement of social class distinctions and to the legitimation of behaviours associated with them.

In contrast, Beckert’s (2010) macro-level analysis of how interactions between social networks, institutional rules and cognitive frames constitute an important source of market dynamics offers an example of how things that are outwardly stable can be seen as dynamic through the lens of micro-level social processes. Beckert argues that while the primary function of the different types of structure underpinning a social entity (here markets) is to maintain stability, contradictions between them create spaces for innovation and change. These studies are rich examples of process thinking in which we see how micro-level processes are dynamically intertwined and systemically constitute higher-level phenomena (see also Feldman & Pentland, 2003; M. Thompson, 2011).

A third approach to recursive theorizing involves taking a

The fourth and fifth approaches we observed in this category involved taking a

For example, Di Domenico et al.’s (2009) study of how corporate–social enterprise collaborations can integrate or accommodate the differences between their respective organizational forms aims to enrich social exchange theory by viewing social exchange interactions between corporations and social enterprises from a dialectical perspective. The authors argue that sustained collaboration between these two fundamentally different types of organization requires that the antithetical forces inherent to their relationships be resolved, which occurs in repeated cycles of thesis, antithesis and synthesis, strengthening the partnership over time and potentially giving rise to a new organizational form. Spisak et al.’s (2015) analysis of leadership development over long periods of history adopts a recursive evolutionary theorizing style, where repeated patterns of variation, selection and retention of leadership traits produce a particular type of leadership style adapted to solving coordination problems within a given niche context, which in turn will impact how this niche is likely to evolve and change over time.

As in the previous categories, authors of recursive process models often complexify their basic process conceptualizations in various ways. Sometimes, this may occur by adding ‘variance’ type elements such as contingencies that might help predict the intensity or direction that a process is likely to follow. For example, DeRue and Ashford (2010) enrich their recursive theoretical model of leadership identity emergence by identifying a number of contextual conditions (sharedness of leadership structure schemas; clarity, visibility and credibility of claims and grants, etc.) that might help predict the amplification or not of leader/follower identity formation processes. Likewise, Smith and Lewis (2011) add propositions to their process model that highlight elements that cause latent paradoxes to become salient as well as factors that spur vicious or virtuous cycles.

Integrating embedded loops into their models (thus showing cycles within cycles) is another strategy used by several authors (Dokko, Nigam, & Rosenkopf, 2012; Gond & Nyberg, 2017; Gray & Kish-Gephart, 2013; Mena, Rintamaki, Fleming, & Spicer, 2016). Finally, many authors enrich their models by connecting levels of analysis by showing, for example, how individual-level recursive processes scale up to group- or organizational-level ones (Costas & Grey, 2014; Dionysiou & Tsoukas, 2013) or how organizational-level processes scale up to field-level ones (Gray et al., 2015; Martí & Gond, 2018; Ocasio, Mauskapf, & Steele, 2016), while simultaneously influencing them in mutual recursive relations.

In sum, recursive approaches to process theorizing are clearly relevant and applicable to a large number of process phenomena, in different contexts and at different levels of analysis. In contrast with the linear and parallel theorizing styles, the recursive view tends towards embracing a more processual ontology where phenomena are embedded in social interactions, continually changing and mutually constituting each other across levels and over time. Scholars achieve this by considering feedback loops, examining how lower-level interactions recursively constitute higher-level ones, and by pulling in other well-established process theoretical resources such as social interactionism (DeRue & Ashford, 2010), dialectics (Di Domenico et al., 2009), evolution (Spisak et al., 2015), performativity (Martí & Gond, 2018) and practice theory. The recursive style is somewhat more evenly distributed between the two journal outlets we considered as compared to the other two styles described thus far (12

Conjunctive Style of Process Theorizing

A fourth style of process theorizing observed among the papers in our corpus we label ‘conjunctive’ because these papers seem to reflect Tsoukas’s (2017) recent call for more complex ‘conjunctive theorizing’ that seeks ‘to make connections between diverse elements of human experience through making those analytical distinctions that will enable the joining up of concepts normally used in a compartmentalized manner’. Specifically, the papers in this category appear to deliberately break down pre-established distinctions and dualisms inherent to the mainstream literature such as those between agency and structure (Cardinale, 2018), between change and stability (Farjoun, 2010), between cognition, action and emotion (Weik, 2019), between human and material (Introna, 2019; Lamprou, 2017), between body and mind (Komporozos-Athanasiou & Fotaki, 2015) and between social and bodily knowing (Yakhlef, 2010) in order to formulate explicit strong process theoretical contributions. Scholars use terms such as ‘meshwork’ (Introna, 2019), ‘harmonic arrangements’ composed of ‘subjects, objects, discourses, and practices’ (Weik, 2019, p. 330) and ‘enmeshing’ (Lamprou, 2017) to describe the dynamic experiential interpenetration of phenomena that are very often taken to be separate and distinct in other styles of theorizing.

More often than not, scholars achieve this by drawing on theoretical ideas derived directly from process philosophy, or from process theoretical frameworks originating from domains outside organization studies, to contribute to the understanding of specific organizational issues. For example, Lamprou (2017) and Tomkins and Simpson (2015) draw on Heidegger’s (1962) philosophy of care to discuss socio-material practices and leadership respectively, Yakhlef (2010) draws on Merleau-Ponty’s (1962) phenomenological perspective to consider the corporeality of practice-based learning in organizations, and Lord et al. (2015) draw on ideas from quantum physics to argue for a new approach to the study of time and organizational change.

The conjunctive style of process theorizing has a number of key features that distinguish it from the previous categories. First, in consonance with the intent to transcend dualisms, with a few exceptions, these articles tend not to include many of the key structuring devices we see for other styles: notably box and arrow diagrams (with or without feedback loops); tables (e.g. showing concepts and relationships); and formal propositions. Explicitly, of the fourteen articles in this category, five include conceptually oriented figures (three published in

The discursive style may also reflect to some degree many of the inspirational source texts being drawn on, which are themselves broad, multi-faceted, written in a discursive style and subject to a variety of interpretations (e.g. Heidegger, 1962; Husserl, 1991; Lévi-Strauss, 1966; Luhmann, 1995; Merleau-Ponty, 1962). It is not for nothing that Tomkins and Simpson (2015, p. 1026) write, ‘Engagement with Heidegger’s philosophy is unsettling, though, for as soon as we think we might have grasped or captured something, we have probably missed the Heideggerian point.’ This does not imply, however, that this type of thinking lacks valuable organizational implications, although drawing them out may sometimes be challenging and controversial.

A device that is liberally drawn on in almost all conceptual articles in one form or another is the ‘foil’: the construction of a contrast with one or more previous conceptualizations of a phenomenon that are viewed as inadequate or simplistic, and with respect to which the proposed perspective is constructed as richer or superior. Foils seem, however, to be particularly prevalent in articles adopting a conjunctive style, where they serve as full-fledged structuring devices throughout the paper. A foil may be a single ‘received’ view, as in Cardinale’s (2018) revised theorization of the Giddensian structure–agency dynamic underpinning existing conceptualizations of institutions (achieved by drawing on Husserl and Bourdieu), and Introna’s (2019) challenge to the Weickian notion of sensemaking (achieved by drawing on Heidegger, Bergson and Ingold). Alternatively, authors may enrich their arguments by constructing multiple alternative foils. For example, Weik (2019) develops her own ‘strong’ process theoretical explanation of institutional endurance by drawing on the notion of ‘aisthetics’ (her term) in direct contrast to four existing explanations of institutional endurance in the literature. Similarly, Lamprou (2017) contrasts her Heideggerian approach to the socio-materiality and spatiality of care with three alternative perspectives on socio-materiality.

Of particular note, the ‘foils’ chosen in these papers are generally alternative theoretical frameworks that would normally

While many of these articles build on process theoretical ideas from philosophy, Lord et al. (2015) innovate by drawing on ideas from the natural sciences. Specifically, they apply quantum theory from physics to the consideration of time and organizations, pointing out the insights that may be obtained by reversing the trajectory of time, i.e. by considering the future not as an extrapolation of the present, but as multiple and flowing into the present. They argue that such a perspective can enrich organizational studies considerably by taking into account the multiple potentialities inherent in existing situations. While clearly and strongly processual, this article, in contrast to others in this category, pushes process theorizing towards a more mathematical form, based on more objectively defined notions of events and temporal processes than those discussed above. However, its creative breaking down of the forward-looking temporal assumptions common to most process theorizing led us to place it in this category.

In sum, these contributions take us squarely into the territory of what Langley and Tsoukas (2016) would label ‘strong’ process theorizing. Even more than articles in the other categories, these articles challenge readers to think in ways unfamiliar to management scholars. It is often the connection to process thinkers from outside the field of organization studies that creates both the opportunity and the challenge of achieving this. Moreover, given the intellectual traditions underpinning the two journals tapped, it is perhaps not surprising that ten out of these fourteen articles (see Table 1) appeared in

Discussion and Conclusion

We were motivated to develop this article by the perception that while there have been multiple calls to engage in non-propositional forms of theorizing, and in particular to develop conceptual contributions in the form of process models (Cornelissen, 2017; Delbridge & Fiss, 2013), the literature offers very little in the way of guidelines about how to achieve this. Scholars have written much about how to theorize using process

Contrasts and commonalities across the four styles

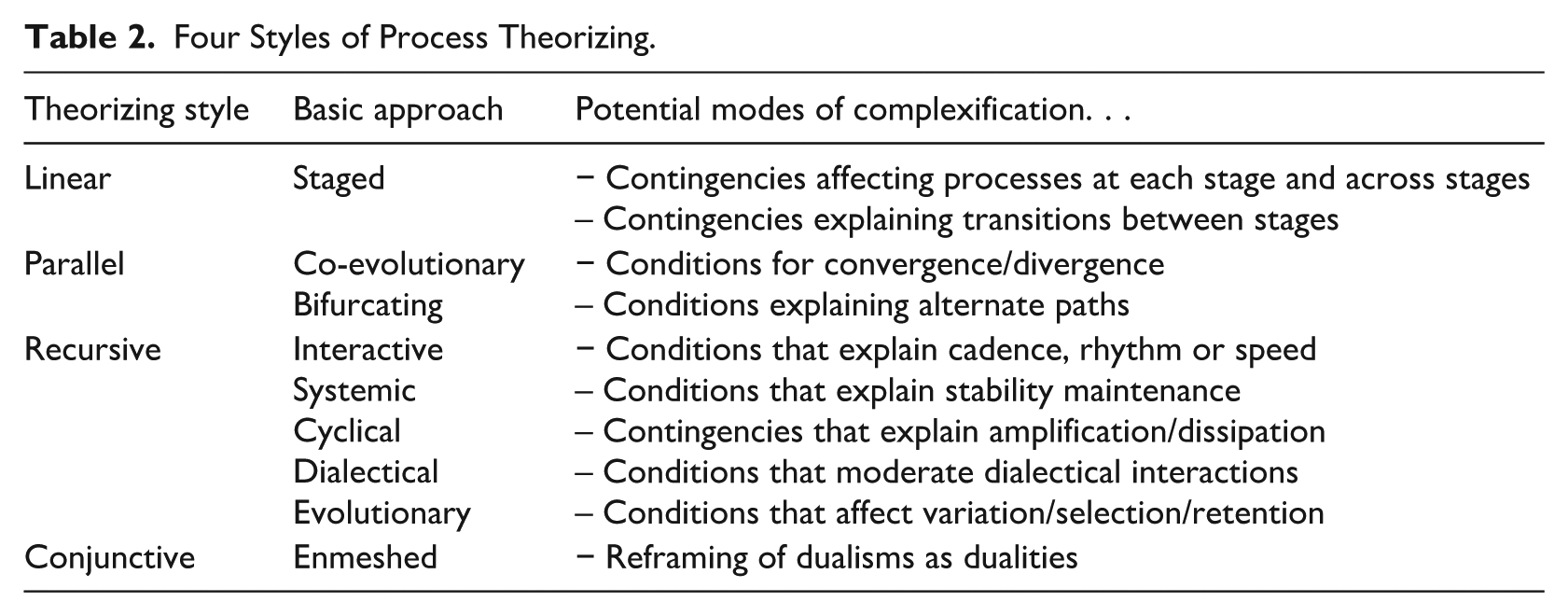

A first observation is that as we move from linear, to parallel, to recursive, to conjunctive styles of process theorizing, our onto-epistemological perspective shifts as well, with a more substantive ontology dominating in the linear and parallel categories, and greater emphasis on processes as fully constitutive of the world in the recursive and conjunctive categories. That said, despite the central orientation of these articles around process, variance reasoning often plays a complementary role, particularly (but not only) in the linear category. This is manifest for example in the propositional inventories scholars develop to explain how various conditions affect processes at each stage and across stages, and influence transitions from one stage to the next. Many process conceptualizations are indeed rendered richer, more complex and potentially more satisfying by an exploration of the contingencies surrounding them. These contingencies may for example be theorized to redirect pathways, disrupt or reproduce stabilized patterns of interaction, and amplify or dissipate recursive patterns. Table 2 summarizes the four styles of process theorizing identified, along with the particular devices that may be used to complexify the conceptualizations proposed.

Table 2. Four Styles of Process Theorizing.

A second cross-cutting feature concerns the way in which, and the degree to which, scholars develop their conceptual models based on pre-existing theoretical or conceptual resources. Papers adopting a linear style often depend on preconceived staged process models that are largely taken for granted. Authors then embroider around these to construct their own contributions. A completely different type of borrowing is evident in the conjunctive style. Here authors often draw on complex theoretical ideas from process philosophy. Rather than attempting to develop those ideas as such, scholars construct their own contributions by showing how these relatively unfamiliar conceptual tools might alter understandings of the organizational phenomena they are considering, as compared with simpler process perspectives. Articles adopting the parallel and recursive styles are situated somewhere in between. Notably, studies adopting the recursive style often build on sociological theories such as symbolic interactionism, dialectics and evolutionary theory, possibly mobilizing them in combination with other theories to explore their focal phenomenon.

A third notable observation concerns the relative role of ‘data’ in so-called ‘non-empirical’ process theorizing. While all the papers included in this study are primarily conceptual in nature (a formal requirement for publication in

The simplest references to empirical ‘data’ are occasional hypothetical situations invoked to support a particular proposition, or perhaps a three- or four-line reference to a prior empirical study. This is common across all the four styles. However, at the other extreme, some authors draw on more extended case vignettes. For example, Introna (2019) (adopting a conjunctive style) builds on Weick’s (1993) classic Mann Gulch disaster case to illustrate his novel view of sensemaking based on process theoretical notions of temporality, materiality and implicitness. Other scholars use background examples throughout their papers to render each step of their conceptual argument more concrete. For example, Cardinale (2018) draws on a running example of Peter, a university graduate, considering his next career move. In between the two extremes, Gray et al. (2015) (adopting a recursive style) use a rich array of empirical vignettes (dealing with contexts as diverse as the foundation of Facebook, female circumcision, Oxbridge dining and juvenile gang member reform, among many others) to bring their relatively complex theory of the micro-foundations of institutions to life. We suggest that such extensive references to concrete situations offer one approach through which scholars may overcome some of the challenges of fluidity in articulating process theoretical ideas we noted at the beginning of the paper.

One might then ask what difference it makes to the theoretical styles developed whether or not studies are explicitly data-driven. Sutton (1997) once made the rather subversive argument that many so-called theory papers (notably those published in

Our discussion so far has looked at the styles individually rather than as a related set. We now integrate them into an overarching framework that illuminates them as a continuum.

Theorizing as a process of disentanglement

As noted above, as we move from linear to parallel to recursive to conjunctive theorizing, we witness a shift from a ‘weak’ to a ‘strong’ process ontology in the studies reviewed. We adopt this weak/strong terminology from previous writings, but also recognize that rather than simply reflecting a difference in ontological emphasis, it may unintentionally evoke the sense that a ‘strong’ process orientation is somehow better than a ‘weak’ one. While there is always a risk in attempting to bridge ontological differences, we believe that drawing on the notion of ‘framing’, as used by Callon (1998) (who himself borrowed it from Goffman), offers a fruitful and perhaps less judgemental way of conceptualizing the differences and relations between the theorizing styles we propose here.

Callon (1998, p. 16) refers to framing as a ‘process of disentanglement’. While he does so in the specific context of market construction, his ideas can, in our view, offer insight into theorizing about process more generally. About framing, Callon writes:

Framing is an operation used to define agents (an individual person or a group of persons) who are clearly distinct and dissociated from one another. It also allows for the definition of objects, goods and merchandise which are perfectly identifiable and can be separated not only from other goods, but also from the actors involved, for example, in their conception, production, circulation and use. It is owing to this framing that the market can exist and that distinct agents and distinct goods can be brought into play. Without this framing the states of the world cannot be described and listed and, consequently, the effects of the different conceivable actions cannot be anticipated. (Callon, 1998, p. 17)

If in the above excerpt one replaces the word ‘market’ with the word ‘process’ and refers only to ‘objects’ (goods and merchandise being objects specific to a market setting), framing becomes a way of thinking about how to disentangle the complex reality that processes constitute. Here, processes are viewed as complex arrangements of multiple individuals and groups of individuals interacting at different levels and at different proximities, among themselves and with various objects, in an ongoing manner over time. Occasionally certain actors leave and new ones come in, disrupting (and possibly changing) the flow of what is going on, making it difficult to know when a process starts and when it ends. Framing, or thinking about what to include and exclude within a frame when theorizing about processes, creates boundaries around these elements or objects, which makes them more manageable and thus easier to study. Of course, the possible ‘objects’ of process theorizing are not just people and their movements but also process-based concepts such as doings, experiences, activities, events and trajectories, and the relations among them. A framing metaphor helps us view theory building as a process of disentangling these elements and their interconnections from each other in order to better see what is going on.

Also of interest in Callon’s notion of framing is that there is no implication that what is outside the frame does not exist. Callon (1998, p. 249) argues, ‘Framing puts the outside world in brackets, as it were, but does not actually abolish all links with it.’ He refers to what is outside a frame as ‘overflows’. This is an interesting idea when applied to our understanding of processes, as it offers an alternative way of thinking about ‘strong’ and ‘weak’ process theorizing. It invites us to ask: What is (and is not) included in a frame? The more elements a theorist includes, the more complex and richer the potential theory. Such complexity, however, often comes at the expense of parsimony and elegance. Thus, at the far end of the scale, where a frame includes everything and where elements are more fully intertwined, enmeshed and joined together (as is the ambition of a strong process perspective), traditional conceptual tools such as boxes and arrows become potentially useless. The only way to make sense of reality framed in this way is to disentangle the elements at play and revert to a less strongly processual view, or to switch to entirely different media of expression, such as narrative, metaphors or poetics (Tsoukas, 2017), which have emerged historically for the specific purpose of capturing complex and entangled emotions, sensations and experiences.

Our effort to depict these contrasts in visual form appears in Figure 1. Metaphorically, as we move from left to right across this figure, we see how frames become more and more inclusive but also how elements become more and more closely interwoven (from linear to parallel to recursive to conjunctive) to the point where they ultimately blend into one another. We explore the implications of this framing metaphor as we consider in conclusion broader heuristics that scholars may use in selecting approaches for process theorizing.

Figure 1. An Integrative Model of Process Theorizing Styles.

Heuristics for process theorizing

Various scholars have proposed what have now become classic process thinking heuristics. These include: thinking of phenomena in terms of gerunds or verbs instead of nouns (Weick, 1979); thinking of something as a sequence of events (Abbott, 2004); or adding the word ‘work’ to a concept usually thought of in static terms (identity, emotion, etc.) (Lawrence & Phillips, 2019). Theorizing backwards, as Fiol et al. (2009) did (see example described above), to imagine how a given outcome ‘came to be’ is yet another way of thinking about phenomena in process terms. Finally, the suggestions we offered in our introduction about ways to address the usual challenges of process theorizing, and in particular process theorizing without data, as well as the categories and complexification modes outlined in Table 2, also provide a good base from which to extrapolate generative questions.

To these we would like to add the notion of framing itself as a useful heuristic for theorizing about process, which becomes clear if one considers how framing is used in film. When filming a scene, cinematographers need to make decisions about focus, depth and time lapse (among other things). It is in the interplay between these three elements that cinematographers gain their creative licence to produce captivating and memorable scenes and stories. We suggest that process theorists can also use these ideas to produce interesting theories about process phenomena.

For example, cinematographers need to decide what subject they will focus on: which actors, objects and/or activities will be included in the ‘frame’ of the shot they will be taking. Similarly, scholars need to delimit which part (or parts) of a process they wish to focus their attention on. Both cinematographers and scholars also need to decide how far to ‘zoom in’ or ‘zoom out’ of their subject of interest (Nicolini, 2009) and what specific angle or perspective to take. Next, cinematographers need to make decisions about depth of field. Will only those elements in the foreground be clear (while all background elements remain blurred or are cut out) or will both background and foreground elements be clear? Scholars might ask themselves what contextual details they need to include and exclude in their process theoretical ‘frame’. In cinematography, depth-of-field decisions often involve trade-offs: while gaining depth can help transform a two-dimensional frame into a three-dimensional one, it can also create distractions, which is not always desirable. Adding more depth or context to a theory certainly enriches it (as it does a scene), but it also complexifies the task of developing it. In addition, it potentially takes away from the element of interest by crowding it out with too much detail. Finally, cinematographers need to make decisions about time and motion. They must decide what time bracket to focus on, and how long a time lapse to let pass between each scene or shot: do they capture movement and action in real time, or do they bracket time in order to slow it down? Slowing motion down can help viewers see things they would never have been able to see otherwise: the runner whose hand crossed the finish line first, the soccer player tripping his opponent. Process theorists also need to make time-related decisions. What time lapse

Again, we suggest that no one decision or choice is necessarily better. All depends on the cinematographer’s and process theorist’s eye, and on what he or she wishes to show or reveal. But choices and trade-offs are inevitable: as the famed photographer Henri Cartier-Bresson put it, ‘Things-as-they-are offer such an abundance of material that a photographer must guard against the temptation of trying to do everything. It is essential to cut from the raw material of life – to cut and cut, but to cut with discrimination’ (Cartier-Bresson, 1952). The word

Footnotes

APPENDIX: Summary of Process Theory Articles Reviewed

Process theory articles by type – conjunctive style.

| References | Question | Level of analysis | Temporality | Form |
|---|---|---|---|---|
| Cardinale (2018) | How action within the space of enabled possibilities is influenced by structure (inspiration: Husserl; Bourdieu) | Individual/field | Ongoing/indefinite | Diagram |
| Deroy & Clegg (2015) | How the concept of recursive contingency (from Luhmann) can explain discontinuous institutional change | Multilevel | Ongoing/indefinite | Narrative |
| Diefenbach (2019) | How democratic organizations can stay ‘oligarchy-free’ (contesting Michels’s law) | Organization | Converging/indefinite | Narrative |
| Duymedjian & Rüling (2010) | How bricolage can be seen as an ideal-typical configuration of metaphysics, epistemology, and practice (inspiration: Lévi-Strauss) | Individual/organization | Ongoing/indefinite | Narrative |
| Farjoun (2010) | How organizations reconcile stability, reliability, and exploitation with change, innovation, and exploration | Organization | Ongoing/indefinite | Diagram + typology |
| Gond & Nyberg (2017) | How power works through materialized forms of CSR | Individual/group/organization | Ongoing/indefinite | Diagram |
| Introna (2019) | How sensemaking can be decentered from human subjects and considered as given and made simultaneously (inspiration: Ingold, Heidegger, Bergson) | Individual/group/practices | Ongoing/indefinite | Narrative |
| Komporozos-Athanasiou & Fotaki (2015) | How imagination can be seen as the instituting drive of organizing, encompassing the process and outcome of creation and invention (inspiration: Castoriadis) | Individual/organization | Ongoing/indefinite | Diagram |
| Lamprou (2017) | How to conceptualize the emergence and change of sociomaterial practices when considering emotion as central (inspiration: Heidegger) | Practices | Ongoing/indefinite | Narrative |
| Lord et al. (2015) | How emphasizing a future that flows into the present can overcome the limitations of beliefs about development and causality (inspiration: quantum physics) | Multilevel | Future flowing into the present | Diagram |
| Tomkins & Simpson (2015) | How Heidegger’s philosophy of care can illuminate the notion of caring leadership | Individual/group/organization | Ongoing/indefinite | Narrative |
| Tourish (2019) | How complexity science contributes to a processual understanding of leadership | Individual/group/organization | Ongoing/indefinite | Narrative |
| Weik (2019) | How to explain the endurance of institutions using strong process thinking | Multilevel/community/field | Ongoing/indefinite | Narrative |
| Yakhlef (2010) | How the practical sense of organizing is embodied and embedded in the habitus (inspiration: Merleau-Ponty) | Practices | Ongoing/indefinite | Narrative |

Acknowledgements

We thank Markus Höllerer and Joep Cornelissen for their advice and encouraging support in developing this paper.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article. The order of authors is alphabetical, reflecting equal contributions.

Both authors contributed equally.