Abstract

Artificial intelligence is a strong focus of interest for global health development. Diagnostic endoscopy is an attractive substrate for artificial intelligence, with real potential to improve patient care by standardising endoscopic diagnosis and by serving as an adjunct to enhanced imaging diagnosis. The ability to amass large datasets to refine algorithms makes the adoption of artificial intelligence into global practice a potential reality. Initial studies in luminal endoscopy used machine learning and were retrospective. Diagnostic performance has improved appreciably with the adoption of deep learning. Research in the upper gastrointestinal tract has focused on the diagnosis of neoplasia, including Barrett’s, squamous cell and gastric neoplasia, for which prospective and real-time artificial intelligence studies have demonstrated a benefit of artificial intelligence–augmented endoscopy. Deep learning applied to small bowel capsule endoscopy also appears to enhance pathology detection and reduce capsule reading time. Prospective evaluation, including the first randomised trial, has been performed in the colon, demonstrating improved polyp and adenoma detection rates; however, these gains appear to relate mainly to small polyps. Artificial intelligence has potential additional roles in improving the quality of endoscopic examinations, in training and in triaging referrals. Further large-scale, multicentre and cross-platform validation studies are required for the robust incorporation of artificial intelligence–augmented diagnostic luminal endoscopy into our routine clinical practice.

Introduction

Artificial intelligence (AI) systems in luminal endoscopy are now on the cusp of wide commercial availability. The development of deep learning (DL) in diagnostic imaging has further expanded the scope of AI in luminal endoscopy and overcomes some of the limitations of machine learning (ML) through its ability to process high-dimensional endoscopic data and to learn trainable features directly from the data, including features not appreciable to humans. One of the most researched DL methods uses convolutional neural networks (CNNs) (Figure 1), which are designed to emulate biological neural networks. 1 Here, we review the existing data for AI in diagnostic endoscopy encompassing upper gastrointestinal, small bowel capsule and colonic examinations. Additional roles of AI outside diagnosis relevant to endoscopy are discussed alongside future requirements for research and global adoption of AI into routine endoscopic practice.

Figure 1. Schematic diagram of a CNN. CNNs differ from traditional fully connected networks in that each neuron in a convolutional layer connects only to a small local region of the previous layer rather than to all of its neurons. In each hidden layer, the input values are multiplied by the weights and a bias is added. The result is then passed through an activation function, here the rectified linear unit (ReLU), which passes positive values unchanged and sets negative values to zero, so the neuron fires only when the weighted sum exceeds zero. The output of this layer becomes the input of the next hidden layer, which applies the same operations. Pooling layers downsample the resulting feature maps, and the final, fully connected layer uses the pooled features to produce a score for each class: a high score assigns the image to that class, a low score does not.
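The operations in Figure 1 can be sketched in a few lines of plain Python. This is a minimal illustration, not any of the cited systems: the 5 × 5 image, the 2 × 2 kernel and all weights are hypothetical toy values, there is a single filter, and nothing is trained. It shows the local connectivity of a convolution, the weighted sum plus bias, ReLU, max pooling and a fully connected output unit with a sigmoid score.

```python
import math

# Toy forward pass of a one-filter CNN. All numbers are hypothetical; nothing is trained.

def conv2d(image, kernel, bias):
    """'Valid' 2D convolution (cross-correlation, as in most DL libraries).
    Each output unit is connected only to a local patch of the input."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for i in range(len(image) - kh + 1):
        row = []
        for j in range(len(image[0]) - kw + 1):
            # Weighted sum of the local patch, plus the bias.
            s = sum(image[i + a][j + b] * kernel[a][b]
                    for a in range(kh) for b in range(kw))
            row.append(s + bias)
        out.append(row)
    return out

def relu(fmap):
    """ReLU: positive values pass unchanged, negative values become 0."""
    return [[max(0.0, v) for v in row] for row in fmap]

def max_pool(fmap, size=2):
    """Non-overlapping max pooling; edge rows/columns that do not fill a window are dropped."""
    return [[max(fmap[i + a][j + b] for a in range(size) for b in range(size))
             for j in range(0, len(fmap[0]) - size + 1, size)]
            for i in range(0, len(fmap) - size + 1, size)]

def dense_sigmoid(features, weights, bias):
    """Fully connected output unit; the sigmoid maps the weighted sum to a score in (0, 1)."""
    z = sum(f * w for f, w in zip(features, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def classify(image, kernel, conv_bias, dense_weights, dense_bias):
    pooled = max_pool(relu(conv2d(image, kernel, conv_bias)))
    flat = [v for row in pooled for v in row]
    return dense_sigmoid(flat, dense_weights, dense_bias)

# Hypothetical 5 x 5 "image" with a bright vertical stripe, and a vertical-edge kernel.
image = [[0, 0, 1, 0, 0]] * 5
kernel = [[-1, 1], [-1, 1]]
score = classify(image, kernel, conv_bias=0.0,
                 dense_weights=[0.5, 0.5, 0.5, 0.5], dense_bias=-1.0)
print(f"class score: {score:.3f}")  # prints: class score: 0.731
```

In a real CNN there are many filters per layer and the kernel and dense weights are learned from labelled images by backpropagation rather than set by hand.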

Upper gastrointestinal endoscopy

AI-augmented endoscopic imaging has been studied across benign and malignant pathologies of the upper gastrointestinal (GI) tract. Most data on AI in the upper GI tract are retrospective,2–4 with two real-time diagnostic evaluations: one for early gastric cancer 5 and one for Barrett’s neoplasia. 6

Helicobacter pylori

AI could potentially improve the diagnostic yield by abating the false positive rate secondary to conventional sampling error. All studies for the diagnosis of

Table 1. Diagnostic test summaries for artificial intelligence–augmented endoscopy of the upper gastrointestinal tract.

P, prospective; R, retrospective; ACA, acetic acid; AI, artificial intelligence; AUC, area under the curve; BLI, blue laser imaging; CAD, computer-assisted diagnosis; DL, deep learning; FICE, Fujinon intelligent chromoendoscopy; HRME, high-resolution microendoscopy; LCI, linked colour imaging; mNBI, magnification narrow band imaging; NBI, narrow band imaging; WLE, white light endoscopy.

Three retrospective CNNs have achieved comparable diagnostic performance, with a sensitivity of 79–87% and specificity of 83.2–87%.7,8 One of these CNNs, trained on images classified by anatomical location, achieved a significantly higher accuracy than endoscopists (by 5.3%). 7

Preliminary analysis of prospectively collected data from cases and controls, using an artificial neural network (ANN) trained with refined feature selection, yielded an accuracy of more than 80% for the detection of intestinal metaplasia, atrophy and the severity of gastric inflammation. 3

Linked colour imaging (LCI) shows encouraging preliminary findings, with an accuracy of more than 93%,4,9 and appears to be superior to blue laser imaging (BLI), with areas under the curve (AUCs) of 0.96 and 0.95, respectively; 4 however, these findings require confirmation in large-scale studies.
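AUCs such as 0.96 and 0.95 summarise a whole ROC curve in one number. One useful reading is that the AUC equals the probability that a randomly chosen diseased image receives a higher model score than a randomly chosen non-diseased image, with ties counting one half. The sketch below computes it that way from two lists of scores; the scores are hypothetical and are not data from the cited LCI or BLI studies.

```python
def auc(pos_scores, neg_scores):
    """AUC as the Mann-Whitney probability P(score_pos > score_neg), ties counted as 0.5.
    Pairwise O(n*m) version, adequate for small illustrative lists."""
    wins = 0.0
    for p in pos_scores:
        for n in neg_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

# Hypothetical model outputs for H. pylori-positive and -negative images.
positive = [0.92, 0.85, 0.40, 0.77]
negative = [0.10, 0.35, 0.55, 0.20]
print(auc(positive, negative))  # -> 0.9375 (15 of 16 pairs ranked correctly)
```

Because an AUC difference of 0.01 corresponds to only a small change in how pairs are ranked, a difference of that size between modalities needs a large test set before it can be called meaningful.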

Barrett’s neoplasia

In this setting, AI is applied to the detection and diagnosis of Barrett’s-associated neoplasia, for which the conventional standard is the Seattle biopsy protocol; the available studies are summarised in Table 1. 10 Retrospective studies demonstrate an overall accuracy for the detection of Barrett’s neoplasia of approximately 83–85%.11–13 Preliminary findings from a recent study evaluating histograms of volumetric laser endomicroscopy (VLE) images suggest that diagnostic performance can be optimised further. 14 An ML model using decision trees (DTs) was built on the mucosal evaluation by three expert endoscopists of videos of nondysplastic Barrett’s and Barrett’s-associated dysplasia examined with acetic acid and i-SCAN (PENTAX). The DT model achieved an overall diagnostic accuracy of 92%, and nonexpert endoscopists improved their accuracy when using the DT algorithm. 15 A real-time diagnostic study using DL demonstrated an overall accuracy of 89.9% in a small test sample of 14 cases [36 images of early adenocarcinoma and 26 of nondysplastic Barrett’s oesophagus (BE)], with only 129 images used for training. 6
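To make the decision-tree approach concrete, the sketch below grows a small CART-style tree by repeatedly choosing the binary feature whose split most reduces Gini impurity. The features (whether an expert scored the mucosal or vascular pattern as irregular) and the six labelled cases are hypothetical; the cited study's actual features and tree are not reproduced here.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0 for a pure node, higher when classes are mixed."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def best_split(rows, labels):
    """Return (feature_index, weighted_gini) for the binary feature giving the purest split."""
    best = None
    n = len(rows)
    for f in range(len(rows[0])):
        yes = [y for x, y in zip(rows, labels) if x[f]]
        no = [y for x, y in zip(rows, labels) if not x[f]]
        weighted = len(yes) / n * gini(yes) + len(no) / n * gini(no)
        if best is None or weighted < best[1]:
            best = (f, weighted)
    return best

def build_tree(rows, labels, depth=0, max_depth=2):
    """Grow a tree as nested tuples (feature, yes_branch, no_branch); leaves are labels."""
    majority = Counter(labels).most_common(1)[0][0]
    if depth == max_depth or len(set(labels)) == 1:
        return majority
    f, _ = best_split(rows, labels)
    yes = [(x, y) for x, y in zip(rows, labels) if x[f]]
    no = [(x, y) for x, y in zip(rows, labels) if not x[f]]
    if not yes or not no:  # the split separates nothing, so stop here
        return majority
    return (f,
            build_tree([x for x, _ in yes], [y for _, y in yes], depth + 1, max_depth),
            build_tree([x for x, _ in no], [y for _, y in no], depth + 1, max_depth))

def predict(tree, x):
    """Follow yes/no branches until a leaf label is reached."""
    while isinstance(tree, tuple):
        f, yes_branch, no_branch = tree
        tree = yes_branch if x[f] else no_branch
    return tree

# Hypothetical expert scores: (irregular mucosal pattern, irregular vascular pattern).
rows = [(1, 1), (1, 0), (0, 1), (0, 0), (1, 1), (0, 0)]
labels = ["dysplasia", "dysplasia", "nondysplastic",
          "nondysplastic", "dysplasia", "nondysplastic"]
tree = build_tree(rows, labels)
print(tree)                   # -> (0, 'dysplasia', 'nondysplastic')
print(predict(tree, (1, 0)))  # -> dysplasia
```

A tree like this is easy for a nonexpert to follow step by step, which is one reason DT models lend themselves to the training use described above.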

Although current data do not yet satisfy the preservation and incorporation of valuable endoscopic innovation (PIVI) thresholds for advanced imaging technologies, namely a per-patient sensitivity of ⩾90% and a negative predictive value of ⩾98%, 16 meeting them appears feasible through further optimisation of algorithms in large-scale studies.
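The two PIVI thresholds are simple functions of per-patient counts, which the sketch below makes explicit. The counts are hypothetical. Unlike sensitivity, the negative predictive value depends on how common neoplasia is in the population tested, so the same algorithm can pass or fail the NPV threshold in different cohorts.

```python
def sensitivity(tp, fn):
    """Proportion of patients with neoplasia whom the test flags."""
    return tp / (tp + fn)

def npv(tn, fn):
    """Proportion of test-negative patients who truly have no neoplasia."""
    return tn / (tn + fn)

def meets_pivi(tp, fn, tn, min_sensitivity=0.90, min_npv=0.98):
    """Check the per-patient sensitivity and NPV thresholds quoted in the text."""
    return sensitivity(tp, fn) >= min_sensitivity and npv(tn, fn) >= min_npv

# Hypothetical per-patient counts (true positives, false negatives, true negatives).
print(meets_pivi(tp=45, fn=5, tn=150))  # sensitivity 0.90, NPV ~0.968 -> False
print(meets_pivi(tp=48, fn=2, tn=150))  # sensitivity 0.96, NPV ~0.987 -> True
```

In the first case the sensitivity threshold is just met, but five missed patients among 155 test negatives keep the NPV below 98%.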

Squamous cell neoplasia

AI applications in this area, driven by a range of imaging modalities and summarised in Table 1, include the diagnosis of squamous cell neoplasia (SCC) and the estimation of depth of invasion, a key factor in deciding the appropriate management strategy.

Diagnosis

White light endoscopy

A retrospective CNN demonstrated excellent diagnostic performance for the detection of early SCC and its differentiation from inflammation. 17 In a preliminary evaluation, CNNs developed on static images detected SCC in 8 of 10 patients when applied to unseen videos. 18

Narrow band imaging

DL using a CNN was piloted on manually generated regions of interest (ROIs) of narrow band imaging (NBI) intrapapillary capillary loops. 19 A subsequent large-scale study demonstrated rapid and accurate analysis, taking 27 s to analyse the test database (1118 images). 20 A multicentre NBI DL study trained a model for real-time diagnosis on 2770 images of dysplasia and early neoplasia and 3703 images of benign lesions. Validation on images and videos, with heatmap generation for neoplasia, showed high performance on static images, with an AUC of 0.989 across four validation datasets. 21

High-resolution microendoscopy and endocytoscopy

A tablet-interfaced high-resolution microendoscopy (HRME) system was designed to facilitate morphometric nuclear analysis, from which neoplasia can be diagnosed with an AUC of 0.93. 22

Preliminary findings of endocytoscopy-based CNNs demonstrated encouraging results with a sensitivity of 92.6% on a test database of 55 patients (

Depth of invasion

Evaluation of a retrospectively trained CNN of SCCs to predict the depth of invasion in 68 patients (

Gastric neoplasia

AI applications for gastric neoplasia include diagnosis, estimation of depth of invasion and delineation of borders, all vital information for treatment planning. AI models driven by various imaging modalities have been evaluated and are summarised in Table 1.

Diagnosis and delineation of neoplastic borders

White light endoscopy

Inception study of CNNs yielded high sensitivity but poor specificity (30.6%). 25 Favourable results for real-time diagnosis have been demonstrated with detection of 64 of the 68 cancerous lesions in 62 patients. 5

A real-time multicentre study developed and validated the DL-based Gastrointestinal Artificial Intelligence Diagnostic System (GRAIDS). A large training set of 1,036,496 images from 84,424 patients was used, and accuracy in the external validation sets ranged from 91.5% to 97.7%, similar to that of expert endoscopists and superior to that of senior doctors/trainees, demonstrating the potential to optimise and standardise practice. 26

Virtual chromoendoscopy (Fujinon intelligent chromoendoscopy)

Initial studies of Fujinon intelligent chromoendoscopy (FICE)-driven AI models showed modest results (Table 1); 27 however, diagnostic accuracy improved with BLI and a larger validation set of 100 early gastric cancers. 28

NBI

Diagnosis with magnification NBI using support vector machine algorithms trained on only 126 images demonstrated excellent diagnostic performance, with a sensitivity of 97% and specificity of 95%; however, performance was limited for delineation of lesion borders. 29 A further study of magnification NBI CNNs trained on a larger dataset of more than 2000 images yielded an overall accuracy of 90.91% on a test dataset of 314 images (170 cancer images). 30

Further CNNs have been trained to differentiate gastric neoplasia from gastritis using NBI, achieving a high negative predictive value (NPV) of 91.7% on a modest test dataset of 258 images, with rapid image evaluation (0.02 s per image). 31

Depth of invasion

Neural networks, as yet unvalidated, have shown potential for predicting depth of invasion (T1a versus T1b) and for differentiation from benign lesions, with an AUC of 0.851. 32 A feed-forward ANN demonstrated an overall accuracy of 64.7%, with per-stage accuracies of 77% (T1), 49% (T2), 51% (T3) and 55% (T4). 33 Using larger training and testing datasets, DL was highly specific (96%) for SM2 or deeper invasive cancers. Specificity is the priority in therapy planning, because overcalling deep invasion could steer patients whose lesions are suitable for endoscopic resection towards unnecessary surgery. 34
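Prioritising specificity amounts to choosing the model's decision cut-off so that few lesions without deep invasion are called deep, at the cost of some sensitivity. The sketch below picks the lowest cut-off on a hypothetical validation set that reaches a target specificity and reports the sensitivity that remains; the scores and the 0.8 and 1.0 targets are illustrative only.

```python
import math

def specificity_at(neg_scores, t):
    """Fraction of lesions without deep invasion scored below the cut-off."""
    return sum(s < t for s in neg_scores) / len(neg_scores)

def sensitivity_at(pos_scores, t):
    """Fraction of deeply invasive lesions scored at or above the cut-off."""
    return sum(s >= t for s in pos_scores) / len(pos_scores)

def cutoff_for_specificity(pos_scores, neg_scores, target):
    """Lowest candidate cut-off whose specificity reaches `target` (<= 1), with its
    sensitivity. Candidates are the observed scores plus +inf (call nothing positive)."""
    for t in sorted(set(pos_scores) | set(neg_scores)) + [math.inf]:
        if specificity_at(neg_scores, t) >= target:
            return t, sensitivity_at(pos_scores, t)

deep = [0.9, 0.8, 0.6, 0.3]          # hypothetical scores, SM2 or deeper
shallow = [0.1, 0.2, 0.4, 0.5, 0.7]  # hypothetical scores, shallower lesions
print(cutoff_for_specificity(deep, shallow, 0.8))  # -> (0.6, 0.75)
print(cutoff_for_specificity(deep, shallow, 1.0))  # -> (0.8, 0.5)
```

Raising the target specificity from 0.8 to 1.0 moves the cut-off up and halves the proportion of deep cancers detected, which is the trade-off accepted when specificity is prioritised.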

Surveillance

Gastric mapping, which uses image retrieval networks to match live images against those from an index endoscopic examination, offers the potential for real-time guidance to retarget areas of concern, and could be extended to colonic surveillance. 35
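Image retrieval of this kind is usually framed as nearest-neighbour search over feature vectors (embeddings). The sketch assumes that a network has already converted each stored index-examination image and each live frame into a vector; the three-dimensional vectors and site labels below are hypothetical, and real embeddings are far longer. Stored images are ranked by cosine similarity to the live frame.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1 means identical direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def retrieve(query, index_bank, k=1):
    """Rank stored (label, embedding) pairs from the index examination by cosine
    similarity to the live-frame embedding and return the top k."""
    ranked = sorted(((label, cosine_similarity(query, emb)) for label, emb in index_bank),
                    key=lambda item: item[1], reverse=True)
    return ranked[:k]

# Hypothetical embeddings of images saved at the index gastroscopy.
index_bank = [
    ("antrum, area of concern", [0.9, 0.1, 0.0]),
    ("gastric body", [0.1, 0.9, 0.2]),
    ("cardia", [0.0, 0.2, 0.9]),
]
live_frame = [0.8, 0.2, 0.1]
print(retrieve(live_frame, index_bank)[0][0])  # -> antrum, area of concern
```

Exhaustive ranking is adequate for a few hundred stored images; larger banks would typically use an approximate nearest-neighbour index instead.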

Small bowel

In addition to pathology detection, applications of AI in the small bowel include image enhancement, three-dimensional (3D) image reconstruction and localisation. AI-augmented capsule reading may automate the reading process and therefore reduce reading time.

Image enhancement

ML algorithms have been developed to reduce artefact interference within frames using wavelet transformation, 36 deblurring algorithms and adaptive learning algorithms.37,38
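Wavelet-based artefact reduction can be illustrated in one dimension: transform the signal, shrink the small detail coefficients that mostly carry noise, and transform back. The sketch below uses a single level of the orthonormal Haar wavelet with soft thresholding on a hypothetical row of pixel intensities; the cited methods work on two-dimensional frames with their own wavelets and thresholds, which are not reproduced here.

```python
import math

SQRT2 = math.sqrt(2.0)

def haar_forward(signal):
    """One level of the orthonormal Haar transform: scaled pairwise sums and differences."""
    if len(signal) % 2:
        raise ValueError("signal length must be even")
    approx = [(signal[i] + signal[i + 1]) / SQRT2 for i in range(0, len(signal), 2)]
    detail = [(signal[i] - signal[i + 1]) / SQRT2 for i in range(0, len(signal), 2)]
    return approx, detail

def haar_inverse(approx, detail):
    """Exact inverse of haar_forward."""
    out = []
    for a, d in zip(approx, detail):
        out.extend([(a + d) / SQRT2, (a - d) / SQRT2])
    return out

def soft_threshold(coeffs, t):
    """Shrink each coefficient towards zero by t; coefficients smaller than t become 0."""
    return [math.copysign(max(abs(c) - t, 0.0), c) for c in coeffs]

def denoise(signal, t):
    approx, detail = haar_forward(signal)
    return haar_inverse(approx, soft_threshold(detail, t))

row = [5.0, 5.2, 3.0, 2.8]  # hypothetical pixel intensities with small fluctuations
print(denoise(row, t=0.5))  # approximately [5.1, 5.1, 2.9, 2.9]
```

With t = 0 the signal is reconstructed exactly; larger thresholds remove more fine detail, so the threshold trades artefact suppression against loss of genuine mucosal texture.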

Localisation and 3D image reconstruction

Algorithms for vision-based simultaneous localisation and mapping (vSLAM) have been developed

Pathology detection

Video capsule endoscopy (VCE) reading is a time-consuming process that requires patience (mean capsule reading time of 30–40 min), with an inherent risk of missing pathology and high inter-reader variability, because up to 50,000–100,000 frames must be viewed. QuickView (Medtronic, Dublin, Ireland) selects the 10% most prominent images for review, with a sensitivity for pathology detection of 94%.40,41 ML algorithms using static image analysis in relatively small datasets have achieved an AUC of 0.89. 42
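The arithmetic behind this kind of frame reduction is simple: keeping 10% of 50,000–100,000 frames still leaves 5000–10,000 for review. The sketch keeps the highest-scoring 10% of frames in their original order. How QuickView actually scores frames is not described here, so the per-frame score is a hypothetical stand-in (for example, the output of a lesion detector).

```python
def select_top_percent(frame_scores, keep_percent=10):
    """Indices of the top `keep_percent`% of frames by score (rounded up),
    returned in temporal order so the reviewer still reads the video in sequence."""
    n = len(frame_scores)
    k = (n * keep_percent + 99) // 100  # integer ceiling, avoids float rounding
    ranked = sorted(range(n), key=lambda i: frame_scores[i], reverse=True)
    return sorted(ranked[:k])

# Hypothetical per-frame scores for a 20-frame clip.
scores = [0.1, 0.9, 0.3, 0.8, 0.2, 0.05, 0.7, 0.4, 0.6, 0.5,
          0.15, 0.25, 0.35, 0.45, 0.55, 0.65, 0.75, 0.85, 0.95, 0.0]
print(select_top_percent(scores))  # -> [1, 18]
```

Any pathology in frames that score below the cut will be missed by the reviewer, which is why the sensitivity of the selection step, not only the time saved, has to be reported.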

DL appears promising in this field. A pretrained AlexNet network applied to static images of erosions and healthy mucosa achieved an accuracy of approximately 95%. 43 A deep convolutional neural network (DCNN) trained on 5360 images of small bowel ulcerations/erosions was tested on 10,440 images, 440 of which contained such pathology. The AUC was 0.958 [95% confidence interval (CI), 0.947–0.968], sensitivity was 88.2% and accuracy was 90.8% using a cut-off probability score of 0.481. The DCNN evaluated the capsule images at a speed of 44.8 images/s. 44
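The reported figures follow from a fixed cut-off on the network's output probability, and the processing time follows from the test-set size and speed. The sketch applies the 0.481 cut-off quoted above to hypothetical probabilities (whether the original study counted a score exactly at the cut-off as positive is an assumption here), computes sensitivity, specificity and accuracy, and converts 44.8 images/s into a processing time for the 10,440-image test set of roughly 233 s, or about 4 min.

```python
def predict(probs, cutoff=0.481):
    """Call a frame positive when its probability is at or above the cut-off (assumed rule)."""
    return [p >= cutoff for p in probs]

def metrics(pred, truth):
    """Return (sensitivity, specificity, accuracy) for boolean predictions and labels."""
    tp = sum(p and t for p, t in zip(pred, truth))
    tn = sum(not p and not t for p, t in zip(pred, truth))
    fp = sum(p and not t for p, t in zip(pred, truth))
    fn = sum(not p and t for p, t in zip(pred, truth))
    return tp / (tp + fn), tn / (tn + fp), (tp + tn) / len(truth)

def processing_seconds(n_images, images_per_second):
    return n_images / images_per_second

probs = [0.90, 0.50, 0.30, 0.20, 0.60]    # hypothetical DCNN outputs
truth = [True, True, True, False, False]  # True = ulceration/erosion present
print(metrics(predict(probs), truth))     # sensitivity 2/3, specificity 1/2, accuracy 0.6
print(round(processing_seconds(10_440, 44.8)))  # -> 233
```

Moving the cut-off trades sensitivity against specificity along the ROC curve; the AUC itself does not depend on where the cut-off is placed.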

Bleeding

The performance of commercially available bleeding detection software such as the Suspected Blood Indicator algorithm (Medtronic) has been suboptimal (sensitivity of 55%, specificity of 58%), necessitating further development.40,45

ML demonstrated improved performance (sensitivity of 89.5%, specificity of 96.8%) using ROI generation and DT-based learning algorithms with the ground truth determined by human-labelled images. 46

DL offers advantages over ML for the detection of bleeding because it learns relevant features directly from the images rather than relying on hand-crafted feature selection. The consistent acquisition of static images and video in capsule endoscopy makes it an attractive substrate for AI.

DCNNs appear to improve the detection of small bowel angioectasia further, with an F1 score of 0.9955 on a test dataset of 10,000 images. 47 Subsequent analysis of 10,488 images (488 with angioectasia) revealed an AUC of 0.998 and an NPV of 99.9%. 48 Comparable results have been reported using a CNN-based semantic segmentation approach. A total of 4166 VCE videos were drawn from a national multicentre database, from which 600 control and 600 angioectasia frames were selected and divided for training and testing. The CNN yielded a sensitivity of 100%, specificity of 96% and NPV of 100%. The total automated video analysis time was 39 min for a capsule of 50,000 frames. 49
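In a semantic segmentation approach the network labels individual pixels, so a rule is needed to turn each pixel map into a frame-level call. The sketch uses one simple rule (flag a frame when at least `min_pixels` pixels exceed a probability threshold); the rule, both thresholds and the toy probability maps are hypothetical and are not the cited study's method. For scale, analysing 50,000 frames in 39 min (2340 s) corresponds to roughly 21 frames/s.

```python
def frame_is_positive(prob_map, pixel_threshold=0.5, min_pixels=20):
    """Flag a frame when enough pixels are segmented as angioectasia.
    prob_map: 2D list of per-pixel probabilities from a segmentation network."""
    lesion_pixels = sum(1 for row in prob_map for p in row if p >= pixel_threshold)
    return lesion_pixels >= min_pixels

def make_map(size, lesion_pixels, p_lesion=0.9, p_background=0.05):
    """Build a toy size x size probability map with `lesion_pixels` high-probability pixels."""
    flat = [p_lesion] * lesion_pixels + [p_background] * (size * size - lesion_pixels)
    return [flat[r * size:(r + 1) * size] for r in range(size)]

print(frame_is_positive(make_map(10, 25)))  # -> True  (25 >= 20)
print(frame_is_positive(make_map(10, 10)))  # -> False (10 < 20)
print(round(50_000 / (39 * 60), 1))         # frames per second -> 21.4
```

A minimum-area rule suppresses isolated false-positive pixels but can miss very small lesions, so `min_pixels` would itself need tuning on validation data.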

Colonoscopy

Colonoscopy with computer-aided detection (CADe) is one of the most attractive applications of AI and the subject of the broadest literature available to date. Additional roles for AI include polyp characterisation and the assessment of colitis for activity and dysplasia. The availability of large datasets enables refined training of algorithms and validation studies. 50

Polyp detection

CADe aims to mitigate the human factors behind missed lesions, such as fatigue, distraction/inattentional blindness, incomplete mucosal inspection during visual scanning and variation in endoscopist skill. DL appears to enhance polyp detection compared with ML, whereas pre-DL data were limited by small-scale studies with few polyps (<30) and artefactual interference. Several studies of real-time CNNs illustrate the potential of AI in this area. A 3D CNN trained on videos (3,017,088 manually annotated frames, 930 polyps) yielded a sensitivity of 84% for protruded lesions and 87% for flat lesions in real time. 51

Further real-time evaluation of a CNN (trained on >8000 images from 2000 patients) demonstrated a diagnostic accuracy of 96% and appeared superior to human polyp detection (45

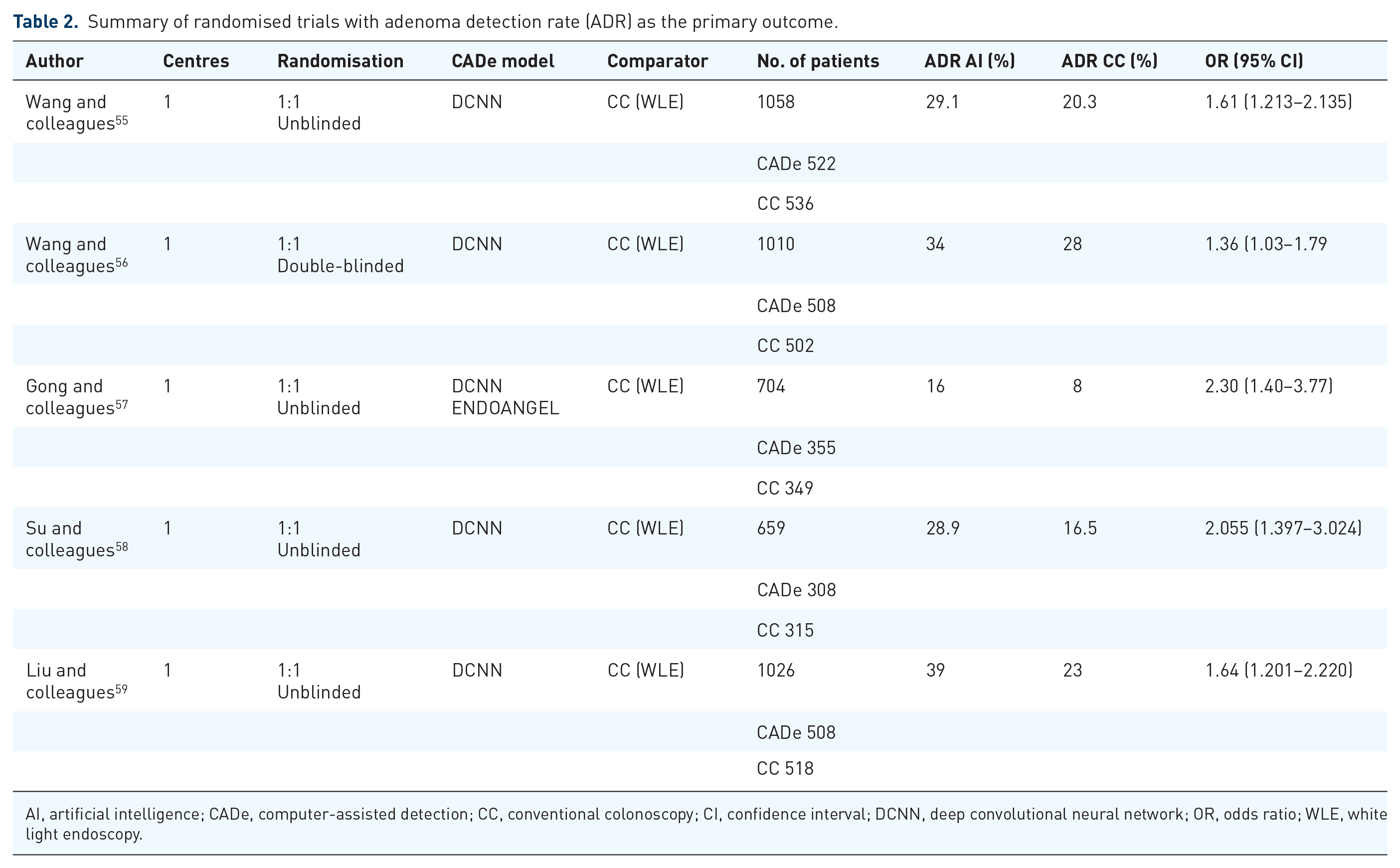

There are five randomised trials of CADe to date with adenoma detection rate (ADR) as the primary outcome (Table 2). The comparator in all randomised trials is conventional colonoscopy (CC) using WLE, and all have demonstrated a significantly higher ADR with CADe.55–59 The first randomised controlled trial (RCT) demonstrated a higher polyp detection rate and ADR using CADe (0.29

Table 2. Summary of randomised trials with adenoma detection rate (ADR) as the primary outcome.

AI, artificial intelligence; CADe, computer-assisted detection; CC, conventional colonoscopy; CI, confidence interval; DCNN, deep convolutional neural network; OR, odds ratio; WLE, white light endoscopy.
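The ADR is the proportion of colonoscopies in which at least one adenoma is detected, and trial effects are often reported as an odds ratio (OR) with a 95% CI, as in Table 2. The sketch computes both from two-arm counts using the standard Wald interval on the log-odds scale; the counts are hypothetical and are not taken from any of the trials.

```python
import math

def adr(patients_with_adenoma, colonoscopies):
    """Adenoma detection rate: colonoscopies with at least one adenoma / all colonoscopies."""
    return patients_with_adenoma / colonoscopies

def odds_ratio_ci(a_events, a_total, b_events, b_total, z=1.96):
    """Odds ratio of arm A versus arm B with a Wald 95% CI computed on the log scale.
    Assumes no zero cells (otherwise a continuity correction would be needed)."""
    a_non, b_non = a_total - a_events, b_total - b_events
    or_ = (a_events * b_non) / (a_non * b_events)
    se = math.sqrt(1 / a_events + 1 / a_non + 1 / b_events + 1 / b_non)
    return or_, math.exp(math.log(or_) - z * se), math.exp(math.log(or_) + z * se)

# Hypothetical trial: 150/500 patients with adenomas in the CADe arm, 100/500 with CC.
print(adr(150, 500), adr(100, 500))  # -> 0.3 0.2
or_, low, high = odds_ratio_ci(150, 500, 100, 500)
print(f"OR {or_:.2f} (95% CI {low:.2f}-{high:.2f})")
```

An absolute ADR gain of 10 percentage points here corresponds to an OR of about 1.7, which is why ORs and absolute differences should be read together when comparing trials.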

Polyp diagnosis

AI-augmented polyp characterisation may help to meet the PIVI criteria for diminutive polyps, namely resect and discard (PIVI-1) and diagnose and leave (PIVI-2). 61 It can also serve as an educational adjunct for developing endoscopists’ skill in polyp diagnosis and can help guide endoscopic therapy through the evaluation of depth of invasion. AI models have been studied across all advanced imaging modalities. WLE-based models, although attractive given the global availability of WLE, have limited performance (accuracy of approximately 70%) even with DL and a reasonable sample size. 62 Further refinement of DL with the ‘deep capsule neural network’ has demonstrated the ability to diagnose and differentiate hyperplastic polyps, adenomas and serrated adenomas, a distinction that has traditionally been difficult for existing AI models. 63

NBI

NBI (Olympus, Tokyo, Japan) is the most extensively studied modality for AI to date. ML of magnification NBI differentiated neoplastic from nonneoplastic lesions with a sensitivity of 85–93%.64,65 Retrospective DL studies surpassed the PIVI-2 criteria with an NPV of 91.5–97%.66,67 Prospective evaluation with real-time magnification NBI also exceeded the PIVI-2 criteria, with an NPV of 93.3%, and predicted the surveillance strategy in exact agreement with histology in 92.7% of cases. 68

Chromoendoscopy

Preliminary models identifying pit patterns are encouraging (accuracy of 98.5%). 69 Image segmentation using wavelet textural approaches also shows potential and awaits large-scale study. 70

Autofluorescence imaging

Preliminary study of autofluorescence imaging (AFI) based on green:red ratios demonstrates an NPV of 96.1% in a small patient population (

Confocal laser endomicroscopy

Initial studies of confocal laser endomicroscopy (CLE) differentiated advanced colorectal cancer with an accuracy of 84.5% 73 and neoplastic from nonneoplastic polyps with an accuracy of 89.6%, 74 and AI has also been used to identify optimal images for clinician review.75,76

Endocytoscopy (EndoBrain)

EndoBrain is one of the first regulatory body–approved AI systems. Initial studies using methylene blue nuclear staining identified neoplastic features with 89.2% accuracy.77,78 Further development for the diagnosis of diminutive polyps (

Assessment of colitis

NBI-EndoBrain has been evaluated for the assessment of mucosal healing in ulcerative colitis (UC). A retrospective analysis of 187 patients (100 used for validation) demonstrated a high specificity of 97% for mucosal inflammation. 84 A retrospective WLE CNN (GoogLeNet) model performed favourably, with an area under the receiver operating characteristic curve (AUROC) of 0.86 for Mayo 0 and 0.98 for Mayo ⩽1, and the highest accuracy for rectal images (AUROC of 0.92). 85 In a large-scale prospective study, a DCNN constructed on 40,758 images from 2012 patients with UC and validated prospectively in 875 patients detected endoscopic remission with 90.1% accuracy and, importantly, histological remission with 92.9% accuracy, with a kappa coefficient of 0.86 for agreement with histology. 86
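Cohen's kappa measures agreement beyond what would be expected by chance: kappa = (p_o − p_e)/(1 − p_e), where p_o is the observed agreement and p_e the agreement expected from each rater's label frequencies. The sketch computes it for hypothetical AI and histology calls on ten patients; these are not the cited study's data.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    n = len(rater_a)
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n  # observed agreement
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal frequency, summed over labels.
    p_e = sum(count_a[k] * count_b[k] for k in set(count_a) | set(count_b)) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)

ai = ["remission"] * 5 + ["active"] * 5         # hypothetical AI calls
histology = ["remission"] * 4 + ["active"] * 6  # hypothetical histology calls
print(round(cohens_kappa(ai, histology), 2))    # -> 0.8
```

Here the raters agree on 9 of 10 patients (p_o = 0.9), but half of that agreement would be expected by chance (p_e = 0.5), so kappa is 0.8 rather than 0.9.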

Quality of endoscopy

Education

The first RCT in AI-augmented gastroscopy revealed significantly fewer blind spots identified with AI-assisted WLE (WISENSE)

ML with DTs built on video substrates of nondysplastic Barrett’s and Barrett’s-associated dysplasia using i-SCAN (PENTAX) in 40 patients demonstrated improved diagnostic accuracy of nonexpert endoscopists when using the DT algorithm. 15

Automated endoscopy reporting

Piloting of CNN-automated procedure labelling such as colonic intubation time, caecal recognition and withdrawal time on video-recorded colonoscopy illustrates high accuracy when compared with manual recording (

Triaging of endoscopy referrals

Triage of GI endoscopy referrals is subject to high variance depending on the route of referral and straight-to-endoscopy qualifiers, which can delay patient pathways. Natural language processing can assist with ‘auto-triaging’ of suspected cancer referrals, providing community clinicians with a vetting tool for referrals for further management, including direct endoscopy referrals. 89 NHS England (UK) recently introduced an AI-supported application, ‘C the signs’, a class 1 device registered with the Medicines and Healthcare products Regulatory Agency (MHRA) and based on National Institute for Health and Care Excellence (NICE) guidance, designed for use in primary care to support clinicians in identifying the investigations and referrals required. 90
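The text does not describe which NLP method these tools use, so as an illustration only, the sketch below trains a multinomial naive Bayes classifier, a classic baseline for text classification, to label referral text as urgent or routine. The four training texts and both labels are hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lower-case alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())

class NaiveBayesTriage:
    """Multinomial naive Bayes with Laplace (add-one) smoothing over referral-text words."""

    def fit(self, texts, labels):
        self.classes = sorted(set(labels))
        self.log_prior = {c: math.log(labels.count(c) / len(labels)) for c in self.classes}
        self.counts = {c: Counter() for c in self.classes}
        for text, label in zip(texts, labels):
            self.counts[label].update(tokenize(text))
        self.vocab = set().union(*self.counts.values())
        self.totals = {c: sum(self.counts[c].values()) for c in self.classes}
        return self

    def log_posterior(self, text, c):
        """Unnormalised log posterior; words never seen in training are ignored."""
        v = len(self.vocab)
        return self.log_prior[c] + sum(
            math.log((self.counts[c][w] + 1) / (self.totals[c] + v))
            for w in tokenize(text) if w in self.vocab)

    def predict(self, text):
        return max(self.classes, key=lambda c: self.log_posterior(text, c))

texts = ["progressive dysphagia and weight loss",
         "iron deficiency anaemia with dysphagia",
         "mild reflux symptoms",
         "intermittent heartburn and reflux"]
labels = ["urgent", "urgent", "routine", "routine"]
model = NaiveBayesTriage().fit(texts, labels)
print(model.predict("new dysphagia with weight loss"))  # -> urgent
print(model.predict("heartburn after meals"))           # -> routine
```

Such a classifier only reflects the words and labels it was trained on, so its usefulness for triage depends entirely on a large, representative and clinically labelled referral corpus.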

Future considerations and directions

Digital reform is inevitable and necessary, and has already arrived in many areas of healthcare. AI-augmented colonoscopy has been acknowledged in the recently updated European Society of Gastrointestinal Endoscopy (ESGE) Advanced Imaging guidance for the diagnosis and detection of colorectal neoplasia. 91 Further multicentre randomised trials are warranted to evaluate both the effect and the validity of AI software across patient populations, and are required to support its global uptake. Current AI software is trained on pristine images; algorithms that can adapt to real-life artefacts such as faecal residue and mucus while still detecting polyps need to be developed. Adopting AI into routine endoscopic practice will bring a new era of responsibility for data storage and protection, which will require further attention. A potential barrier to incorporating AI-augmented endoscopy is cost, including installation of hardware and software, ongoing maintenance and upgrades, and additional computational requirements. In addition, there is a hypothetical risk of behavioural reliance on AI for endoscopic diagnosis; however, AI is an adjunct to diagnosis and can potentially be implemented to improve endoscopic diagnosis through self-learning. Furthermore, endoscopic management is a multifaceted, patient-specific decision process of which AI-augmented endoscopic imaging is only one facet. Future studies focusing on trainees/nonexpert endoscopists, together with quality-adjusted life year (QALY) analyses, will be required to establish the wider benefits and impact of adopting AI-augmented endoscopy into routine clinical practice. It is our duty to patients to use technology to advance care to the maximum benefit, making for an exciting future for endoscopic practice.

Footnotes

Conflict of interest statement

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.