Abstract

Background

Longitudinal cognitive analyses are essential for understanding the mechanisms underlying Alzheimer's disease, especially its social and health behavior determinants. However, cognitive test administration often changes over time, which complicates longitudinal analyses. The China Health and Retirement Longitudinal Study (CHARLS) assessed memory through word recall tests across five study waves from 2011 to 2020. Since 2018, changes in the test stimuli and administration have posed challenges for longitudinal cognitive analyses.

Objective

To address differences in test administration while preserving differences attributable to characteristics such as age and education, and to derive equated scores for use in longitudinal analyses in CHARLS.

Methods

To ensure consistent underlying test ability across waves in the full sample (N = 19,364), we derived a calibration sample (N = 11,148) balancing age, gender, and education. Within this sample, we used weighted equipercentile equating to crosswalk percentile ranks between 2015 and 2018/2020 scores, then applied the algorithm to the full sample.

Results

Mean original delayed word recall was higher in 2018 (4.3 words) and 2020 (5.1 words) versus 2015 (3.2 words). Following equating, scores in 2018 and 2020 aligned better with previous waves (2015, 2018, 2020 immediate means: 4.1, 3.6, 4.0; delayed: 3.2, 2.4, 2.9 words).

Conclusions

Equipercentile equating enables the derivation of comparable scores, facilitating longitudinal analysis when cognitive test administration procedures change over time. We recommend the use of equated scores for longitudinal analyses using CHARLS cognitive data.

Introduction

Cognitive decline and dementia are major public health concerns worldwide 1 ; however, most studies are conducted in high-income countries. It is essential to assess cognitive decline and dementia in low-income and middle-income countries to expand the current evidence base and further our understanding of the determinants of cognitive decline and dementia. 2 Since 2011, the China Health and Retirement Longitudinal Study (CHARLS) has collected data on individual and household characteristics and various aspects of aging-related health measures from a nationally representative sample of middle-aged and older adults in China every two or three years. 3 As of early 2024, CHARLS has released five waves of data between 2011 and 2020 and has become a leading data resource for characterizing cognitive impairment and cognitive aging among middle-aged and older adults in China.

One critical advantage of CHARLS is its longitudinally repeated assessments of cognitive function, which are essential for examining changes in cognitive function with age. However, changes to the content and administration of cognitive tests over time are commonplace in research studies, due to changes in study design, priorities, or logistics. Alterations to cognitive test items over time can complicate analyses of cognitive change by mixing true changes in cognitive function with changes in test scores due to test administration differences. In 2018, CHARLS integrated the Harmonized Cognitive Assessment Protocol (HCAP) into its main household survey. The HCAP is an international research collaboration that aims to measure and understand the risk, prevalence, and consequences of dementia within ongoing longitudinal studies of aging worldwide using comparable data. 4 The integration of the HCAP into the main CHARLS household survey in 2018 significantly expanded the battery of cognitive tests, but also coincided with modifications to existing cognitive tests that had been administered in prior waves.

Because of modifications to the test administration and word lists introduced in 2018, longitudinal differences in test performance do not necessarily reflect intra-individual changes in cognitive function but could instead be due to differences in perceived test difficulty or other test administration characteristics. We aimed to document the modifications made to the CHARLS word recall test and to apply a test-equating approach that adjusts for the resulting non-equivalence of test items. The goal was to generate comparable scores that account for the differences in test content and administration between tests administered in 2011–2015 and those administered in 2018–2020, thereby facilitating longitudinal analysis.

Methods

Data and participants

Data were from CHARLS, an ongoing nationally representative longitudinal survey of persons in China 45 years of age or older and their spouses regardless of age. 3 The national baseline was conducted between June 2011 and March 2012 and included 17,708 respondents. CHARLS respondents are followed approximately every 2–3 years. Respondents have been followed in 2013, 2015, 2018, and 2020. The CHARLS-HCAP was introduced as part of the main household survey in 2018 and was also administered in 2020. Further details of the CHARLS-HCAP can be found elsewhere. 5

Immediate and delayed recall tests: before 2018 and 2018 onward

At each wave since its baseline in 2011, CHARLS has administered immediate and delayed word recall tests, a widely used measure of episodic memory. Respondents are asked to immediately recall ten nouns that are read aloud (immediate recall) and then recall the same words after a five-minute delay (delayed recall). 6

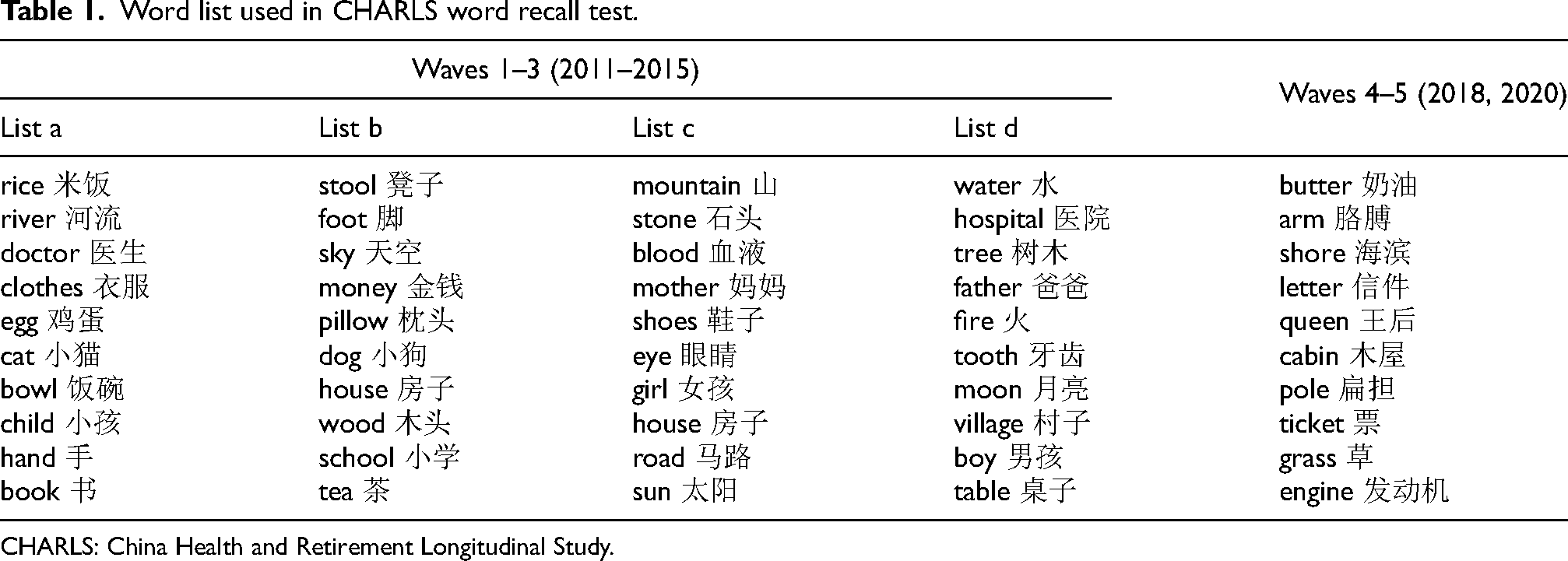

The adoption of the HCAP version of the recall test from 2018 onward brought two major changes to the CHARLS word recall test. First, the specific nouns used in the test were changed. Earlier survey waves included commonly used words in Mandarin, as spoken by Chinese individuals. The ten nouns used in the CHARLS HCAP tests starting from 2018 were translated directly from a Consortium to Establish a Registry for Alzheimer's Disease (CERAD) word list 7 used in the U.S. Health and Retirement Study (HRS) HCAP and were distinct from those used in the 2011–2015 waves (Table 1). Some words used in the 2018 CHARLS HCAP, such as butter and shore, were less familiar to many older Chinese people. Neuropsychological research has suggested that low-frequency words often yield poorer recall performance compared to high-frequency words.8,9 The second change, also starting in the 2018 wave, was to administer three trials of the immediate word recall test instead of one. This change led to higher delayed recall scores in the 2018 and 2020 waves relative to prior survey waves (solid lines in Figure 1). Indeed, delayed recall scores exceeded first-trial immediate recall scores for most respondents in 2018 and 2020 because participants were given three times as much exposure to the word list. Additionally, starting in the 2018 wave, the word lists were both read aloud and presented visually to the participants, whereas the words were only read aloud in the 2011–2015 waves. Moreover, in the 2011–2015 waves, if a person did not remember any words in the immediate word recall test, the interviewer would reread the word list to the respondent, whereas this was not done in the immediate word recall trials in 2018 and 2020. In the analysis, we used the first trial of the immediate word recall tests from waves 2018 and 2020.

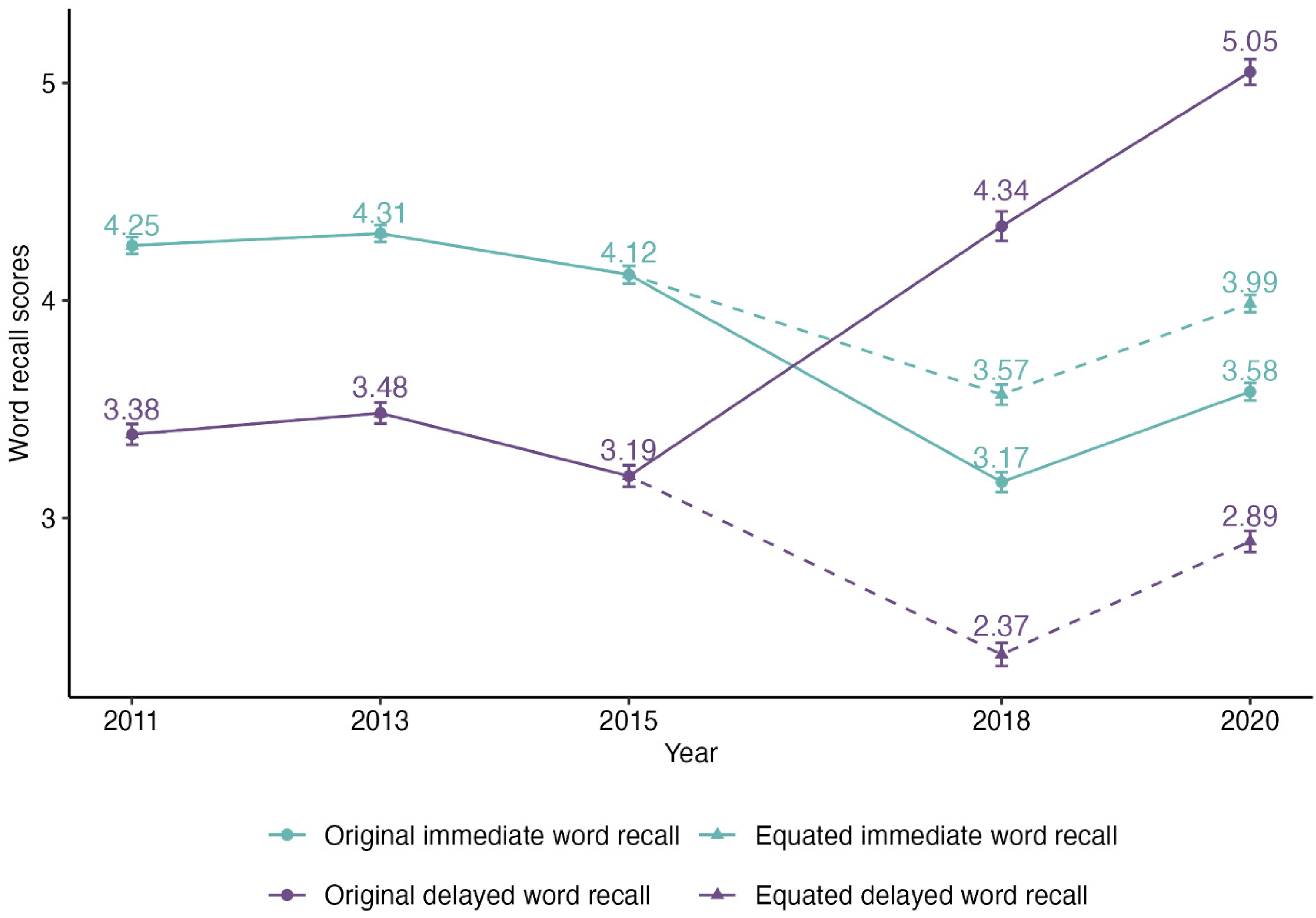

Figure 1. Empirical plot of original (solid lines) and equipercentile equated (dashed lines) immediate and delayed word recall scores in a balanced sample of participants who completed all 5 waves of immediate word recall tests (n = 6830) or delayed word recall tests (n = 6324). Mean ± 2 × SE is shown for each wave.

Table 1. Word list used in the CHARLS word recall test.

CHARLS: China Health and Retirement Longitudinal Study.

Statistical analysis

We calculated and compared the mean scores for immediate and delayed recall among participants in each study wave from 2011 to 2020. To address form differences introduced in 2018, we adapted a weighted version of equipercentile equating to derive versions of the immediate and delayed word recall test scores for the 2018 and 2020 survey waves that are comparable to those of the 2015 wave. To do this, we adopted a two-stage approach.10–12

Given the consistent administration of word recall tests from 2011 to 2015, we selected wave 2015 as the reference wave for equating. We used the first-trial scores from waves 2018 and 2020 as the focal scores for equating. In the first stage, we selected an equating sample to derive an equating algorithm based on characteristics of test score distributions. The goal of this stage was to define a calibration sample of participants in CHARLS 2015, CHARLS 2018, and CHARLS 2020, such that any differences in memory scores between the waves are likely attributable to form differences in the tests and not to demographic differences or real cognitive changes. This goal was achieved by restricting the calibration sample to a group with a common age range and similar education level across CHARLS waves in 2015, 2018, and 2020 and using weighting to further balance these characteristics across the waves. Specifically, the calibration sample was restricted to participants aged between 45 and 90 years who completed word recall tests in 2015, 2018, or 2020 because this age range is present in each wave. Participants in the highest (post-graduate degree, e.g., master's or PhD) or lowest (less than elementary school) education category were excluded due to low numbers and to ensure a more comparable relationship between memory performance and education level. These restrictions helped to preserve differences between people in memory performance attributable to age and education level.

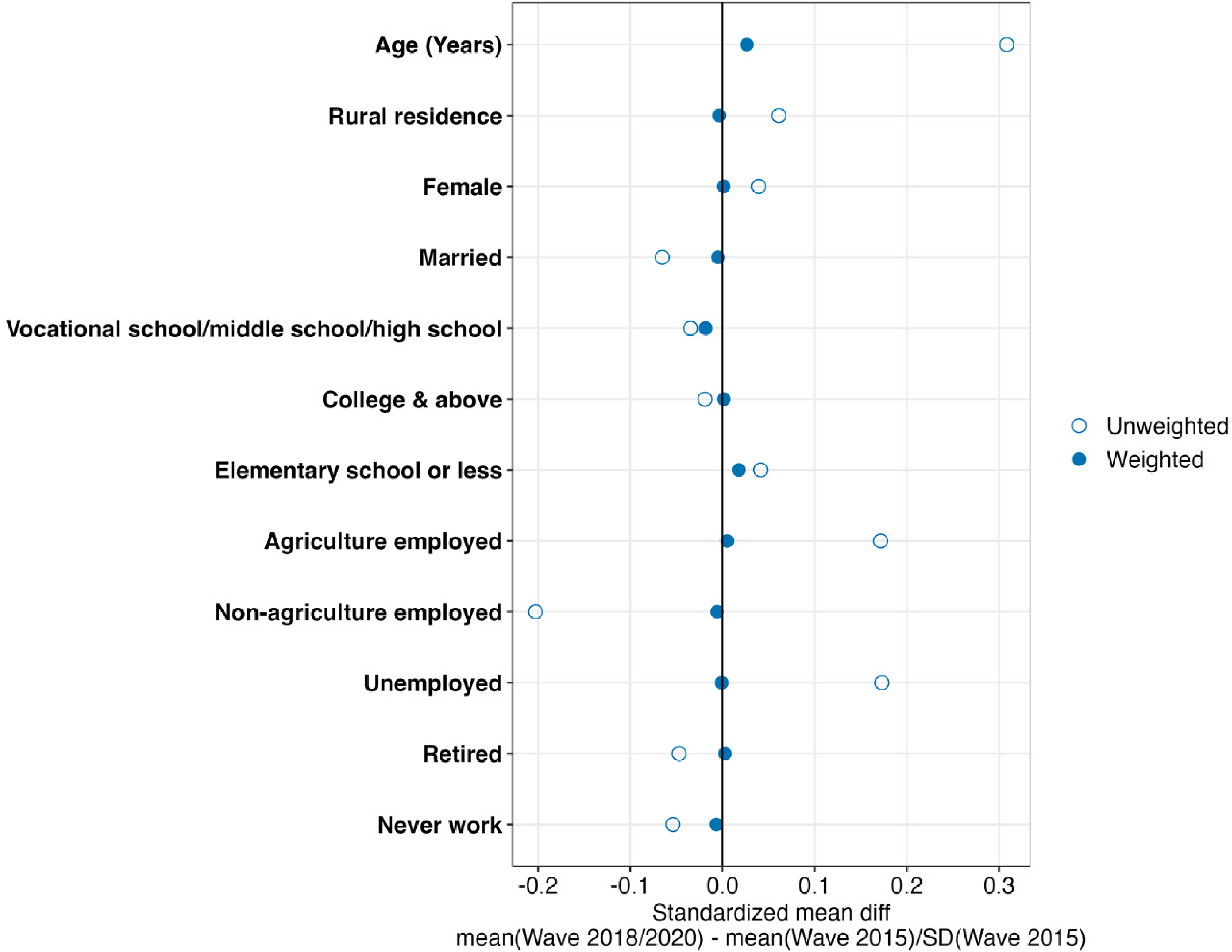

Next, we developed weights to ensure a consistent underlying test ability for completing each of the immediate and delayed word recall tasks in 2015 and in a combined group of 2018 and 2020 (hereafter, 2018/2020). Specifically, we fit a logistic regression model to obtain the probability (propensity score) of completing the test in 2018 or 2020 versus 2015. Time-varying measures included wave-specific age (years), rural residence (yes/no), marital status (yes/no), and working status (agricultural work/non-agricultural employment/unemployed/retired/never worked). Time-invariant measures included gender (women/men) and education level (elementary school or less/middle school level/college). Rural residence was defined using information recorded at the community level mapping to the rural or urban region definition by the National Bureau of Statistics of China. 13 The rest of the covariate measures for modeling the propensity scores were self-reported. We then calculated stabilized inverse odds weights (sIOW) for each respondent in the calibration sample who completed the test in CHARLS 2018 or 2020 by multiplying the inverse of the odds of completing the 2018 or 2020 recall test given the covariates by the unconditional odds of completing the 2018 or 2020 test. After developing sIOW based on the strongest determinants of cognitive test performance (age and education), we examined the covariate balance between the weighted CHARLS 2018 or 2020 participants and the CHARLS 2015 participants in the calibration sample, calculated as the standardized mean difference, (mean[CHARLS 2018 or 2020] – mean[CHARLS 2015])/SD[CHARLS 2015], for each variable. We iteratively adjusted covariates and interaction terms included in the propensity score models until the covariate balance was maximized based on available data. Larger standardized mean differences indicated stronger sources of underlying differences. Absolute differences <0.2 or <0.25 are often deemed to indicate balance (i.e., small differences). 14 We chose the most parsimonious model for weight development based on covariate balance. The model included age, sex, education level, working status, rural residence, and interaction terms of rural residence with sex and with working status.
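As a rough illustration of the weighting step, the sketch below computes stabilized inverse odds weights in Python. It is not the authors' R code. For brevity it assumes the logistic propensity model is replaced by a saturated model over discrete strata (here, hypothetical age-band labels), so that the conditional odds of belonging to the 2018/2020 group within a stratum are simply the ratio of focal to reference counts. The function name and the toy data are illustrative assumptions.

```python
from collections import Counter


def stabilized_inverse_odds_weights(ref_strata, focal_strata):
    """Return one stabilized inverse odds weight per focal respondent.

    ref_strata:   stratum label for each reference (2015) respondent
    focal_strata: stratum label for each focal (2018/2020) respondent
    Assumption: a saturated stratum model stands in for the logistic
    propensity model described in the text.
    """
    n_ref = Counter(ref_strata)
    n_focal = Counter(focal_strata)
    # Unconditional odds of being in the focal group.
    marginal_odds = len(focal_strata) / len(ref_strata)
    weights = []
    for stratum in focal_strata:
        # Inverse of the conditional odds of focal membership in this stratum.
        inverse_conditional_odds = n_ref[stratum] / n_focal[stratum]
        weights.append(inverse_conditional_odds * marginal_odds)
    return weights


# Toy data (hypothetical): the focal group is older than the reference group.
ref = ["45-59"] * 6 + ["60-90"] * 4
focal = ["45-59"] * 3 + ["60-90"] * 9
weights = stabilized_inverse_odds_weights(ref, focal)

# Weighted share of the younger stratum in the focal group.
young_share = sum(w for s, w in zip(focal, weights) if s == "45-59") / sum(weights)
print(round(young_share, 3), round(sum(weights), 3))
```

With a saturated stratum model, the weighted focal sample reproduces the reference stratum shares exactly, and the weights sum to the focal sample size. A logistic model with continuous covariates, as described above, would only approximate this balance, which is why covariate balance is checked afterward.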

In the second stage, we derived an equipercentile equating algorithm in the weighted calibration sample and applied it to the full CHARLS 2018 and 2020 samples to obtain the 2015-equivalent immediate and delayed word recall test scores. The equipercentile equating method accommodates a nonlinear relationship between the score distributions in 2018/2020 and in 2015 because it matches scores on their percentile ranks in the cumulative distribution functions. Given that the underlying abilities of the individuals in the calibration sample who completed the recall tests in 2015 and in 2018/2020 are assumed to be equal (due to restriction to common age and education ranges and to weighting), the weighted percentile ranks of the recall scores obtained from the 2015 and 2018/2020 participants were set to be comparable. We then calculated the equipercentile equivalent of the recall scores for the 2018/2020 respondents on the 2015 survey scale. This calculation was achieved by finding the percentile rank of a particular score in the pooled 2018/2020 sample and then finding the score associated with that percentile rank among CHARLS 2015 participants in the calibration sample. This crosswalk was then applied to the full study sample. We examined the equated scores and the original scores for the three immediate word recall test trials and the delayed word recall test in waves 2018 and 2020 in the full study sample. Data construction and statistical analysis were conducted using R 4.3.1. The equipercentile equating analyses were run using the R package equate. 15 The code is available on GitHub (https://github.com/Yingyan-Wu/charls_memory_equating).
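The percentile-rank matching described above can be sketched in Python as follows. This is a simplified illustration of weighted equipercentile equating for integer scores from 0 to 10, using the conventional mid-percentile rank and linear interpolation within a score interval. It is not the `equate` package's implementation or the authors' code, and the function names and toy scores are assumptions made for the example.

```python
def weighted_cdf(scores, weights, max_score=10):
    """Cumulative weighted proportion F(x) for integer scores 0..max_score."""
    total = sum(weights)
    freq = [0.0] * (max_score + 1)
    for score, weight in zip(scores, weights):
        freq[score] += weight / total
    cdf, running = [], 0.0
    for f in freq:
        running += f
        cdf.append(running)
    return cdf


def percentile_rank(x, cdf):
    """Mid-percentile rank (as a proportion) of integer score x."""
    below = cdf[x - 1] if x > 0 else 0.0
    return below + (cdf[x] - below) / 2


def equate_score(x, focal_cdf, ref_cdf):
    """Reference-scale score with the same percentile rank as focal score x."""
    p = percentile_rank(x, focal_cdf)
    for u, cum in enumerate(ref_cdf):
        if cum > p:  # smallest reference score whose cumulative share exceeds p
            below = ref_cdf[u - 1] if u > 0 else 0.0
            # Interpolate within the interval [u - 0.5, u + 0.5].
            return u - 0.5 + (p - below) / (cum - below)
    return len(ref_cdf) - 0.5  # p reached 1: upper bound of the scale


# Toy data (hypothetical): focal scores are the reference scores shifted up by 2.
ref_scores = [2, 3, 3, 4, 4, 4, 5, 5, 6]
focal_scores = [s + 2 for s in ref_scores]
ref_cdf = weighted_cdf(ref_scores, [1.0] * len(ref_scores))
# In practice the focal weights would be the sIOW; uniform weights are used here.
focal_cdf = weighted_cdf(focal_scores, [1.0] * len(focal_scores))

crosswalk = {x: equate_score(x, focal_cdf, ref_cdf) for x in range(11)}
print(crosswalk[6])  # approximately 4.0: the 2-point shift is removed
```

Because the interpolation works on a continuous percentile scale, equated scores are generally not integers, and scores at the extremes can fall half a point outside the 0 to 10 range.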

Results

Descriptive statistics

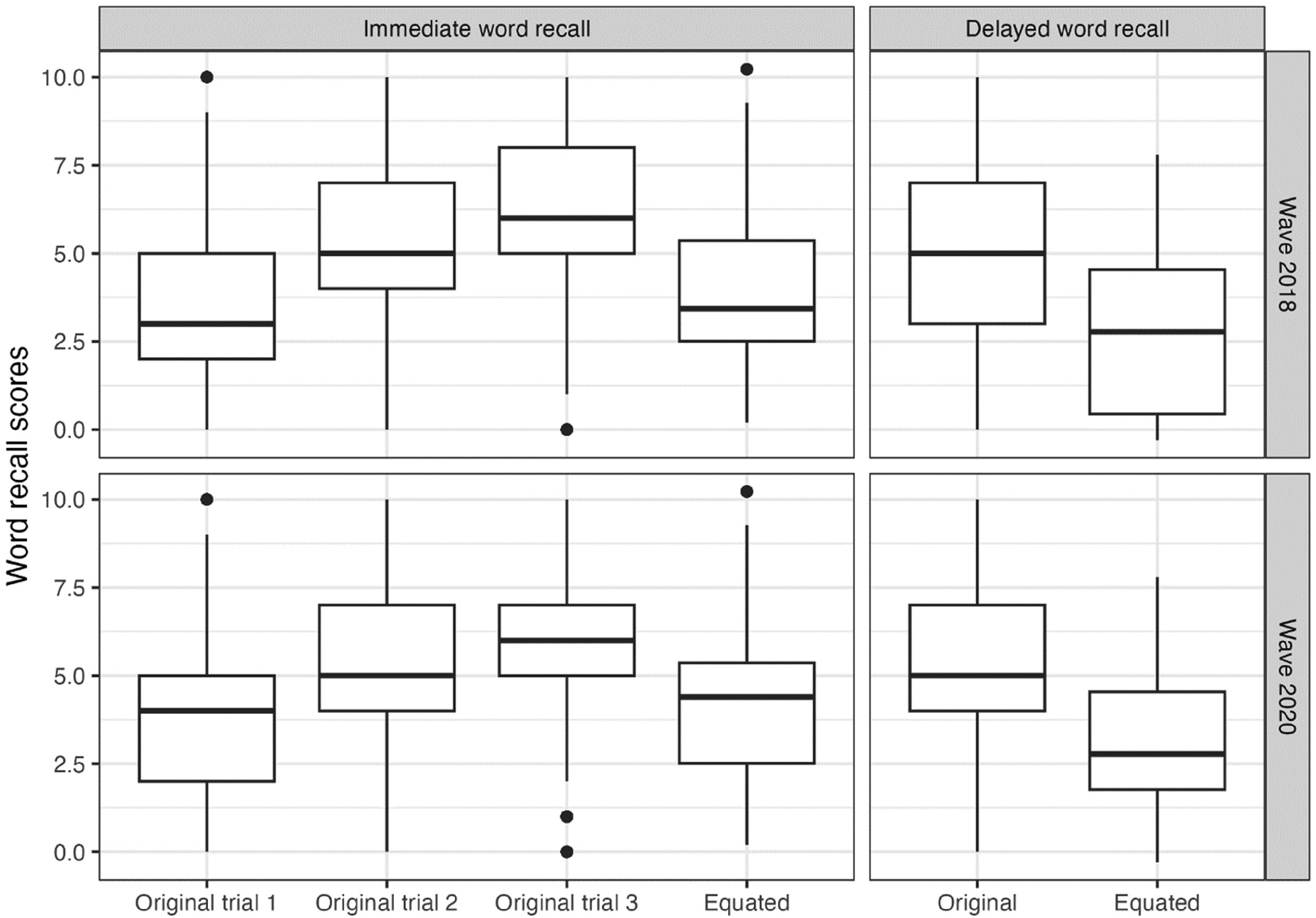

In the full sample, among those N = 14,440 aged 60+ years in 2018, N = 7676 had complete data on trial 1 immediate recall and delayed recall, of whom 52% had delayed recall scores greater than the first of three trials of immediate recall scores. Similarly, in 2020, the proportion of participants having higher delayed recall scores than the first of three trials of immediate recall scores was 65%. For the 2018 and 2020 waves where CHARLS implemented three immediate word recall trials, we examined scores of the three immediate word recall trials in the full sample; the median of the immediate word recall trials increased with each trial, and the delayed word recall test scores were always lower than the third trial of immediate word recall test scores (Figure 2).

Figure 2. Distribution of original and equated immediate and delayed word recall scores in the full sample. In waves 2018 and 2020, immediate word recall tests were given 3 times to the participants before the delayed word recall test.

In a balanced sample of participants who completed all 5 waves of immediate word recall tests or delayed word recall tests, mean scores with standard errors for both immediate (N = 6830) and delayed (N = 6324) recall tests across the five CHARLS waves from 2011 to 2020 are shown in Figure 1. From 2011 to 2015, mean immediate recall was consistently higher than mean delayed recall. However, the pattern changed starting in 2018 with the introduction of the HCAP: there was a marked drop in the mean score of the immediate recall test in 2018 compared to previous waves, coinciding with comparatively higher delayed recall scores (solid lines in Figure 1).

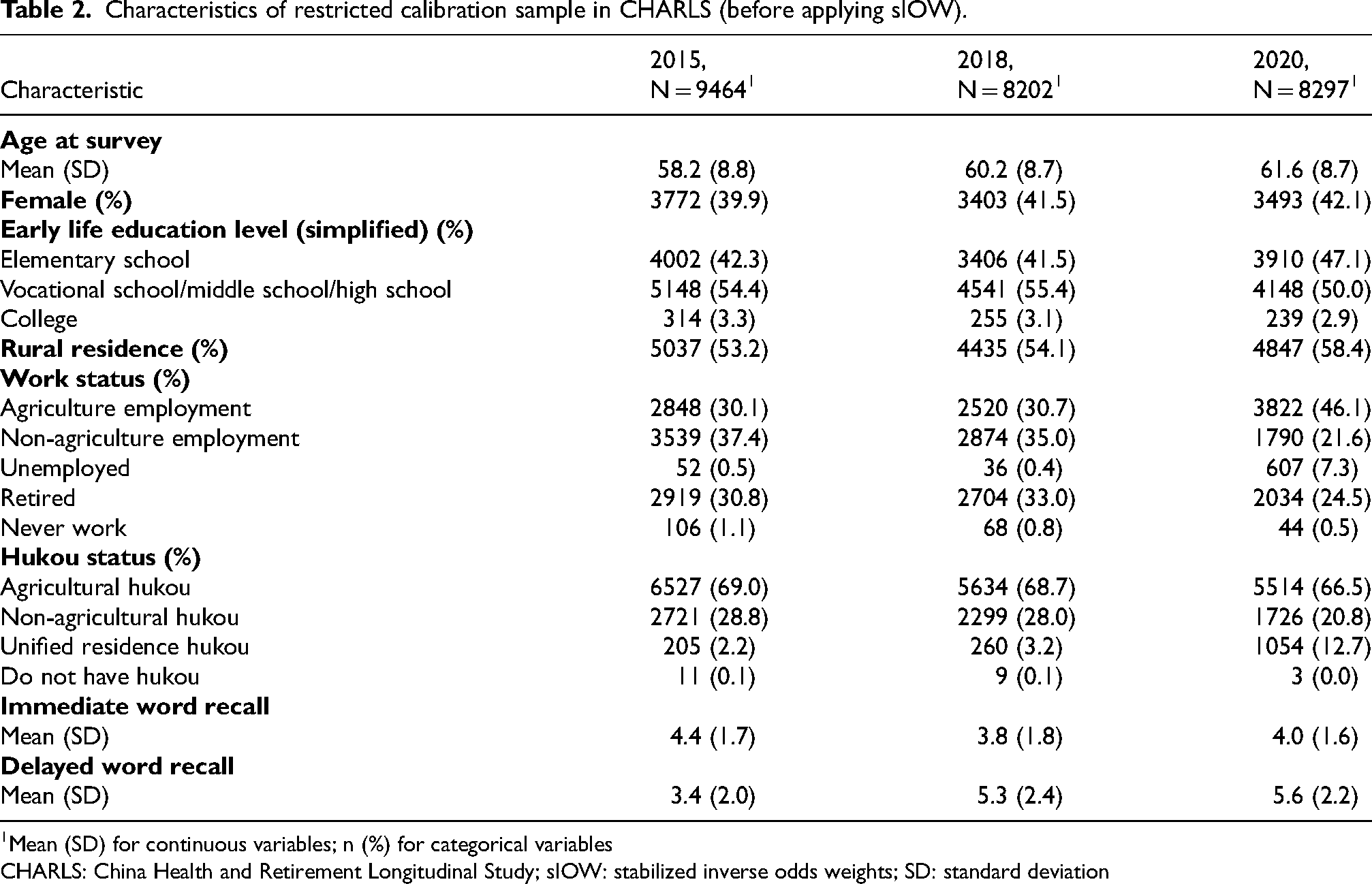

Calibration sample

Restricting the sample to exclude those with less than elementary school education or with a postgraduate degree (master's or PhD) resulted in analytical samples of n = 9464 in 2015, n = 8202 in 2018, and n = 8297 in 2020 (unique participants: n = 11,148). The characteristics of the calibration sample by study wave (2015, 2018, and 2020) are shown in Table 2. After applying weights, covariate balance was achieved, with standardized mean differences <0.1 SD units and close to 0 for all variables, including those not included in the weight-generating models (Figure 3).
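For readers who want to reproduce this kind of balance check, a minimal Python sketch of the standardized mean difference is given below. It follows the definition in the Methods (weighted focal mean minus reference mean, divided by the reference SD). The function names, the toy ages, and the toy weights are hypothetical, and the use of the sample (n - 1) standard deviation is an assumption, since the text does not specify it.

```python
from statistics import mean, stdev


def weighted_mean(values, weights):
    """Weighted arithmetic mean."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


def standardized_mean_difference(focal, ref, focal_weights=None):
    """(weighted mean of focal - mean of reference) / SD of reference."""
    if focal_weights is None:
        focal_weights = [1.0] * len(focal)
    return (weighted_mean(focal, focal_weights) - mean(ref)) / stdev(ref)


# Toy ages (hypothetical): the focal wave is older than the reference wave.
ref_age = [50, 55, 60, 65, 70]
focal_age = [60, 65, 70, 75]
toy_weights = [2.0, 1.0, 0.5, 0.25]  # down-weight the oldest focal respondents

smd_unweighted = standardized_mean_difference(focal_age, ref_age)
smd_weighted = standardized_mean_difference(focal_age, ref_age, toy_weights)
print(round(smd_unweighted, 3), round(smd_weighted, 3))
```

In this toy example the weights shrink the difference but do not bring it under the conventional 0.2 to 0.25 threshold, which is the situation in which the weight model would be revised.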

Figure 3. Covariate balance plot showing the standardized mean difference of important characteristics of testing ability between wave 2015 and wave 2018, and between wave 2015 and wave 2020, before and after applying sIOW to the calibration sample. Circles represent unweighted standardized mean differences and dots represent weighted standardized mean differences.

Table 2. Characteristics of the restricted calibration sample in CHARLS (before applying sIOW).

Mean (SD) for continuous variables; n (%) for categorical variables

CHARLS: China Health and Retirement Longitudinal Study; sIOW: stabilized inverse odds weights; SD: standard deviation

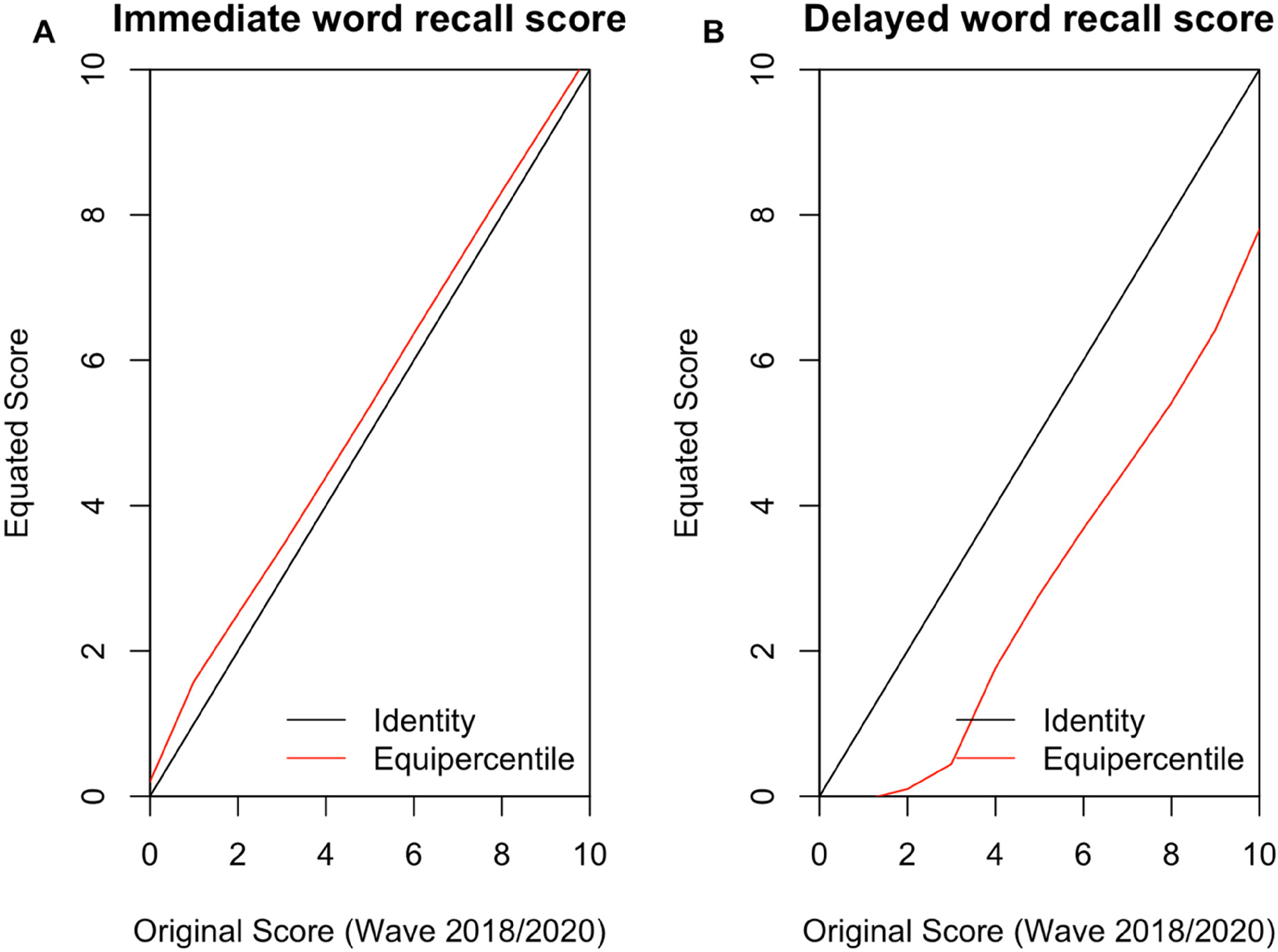

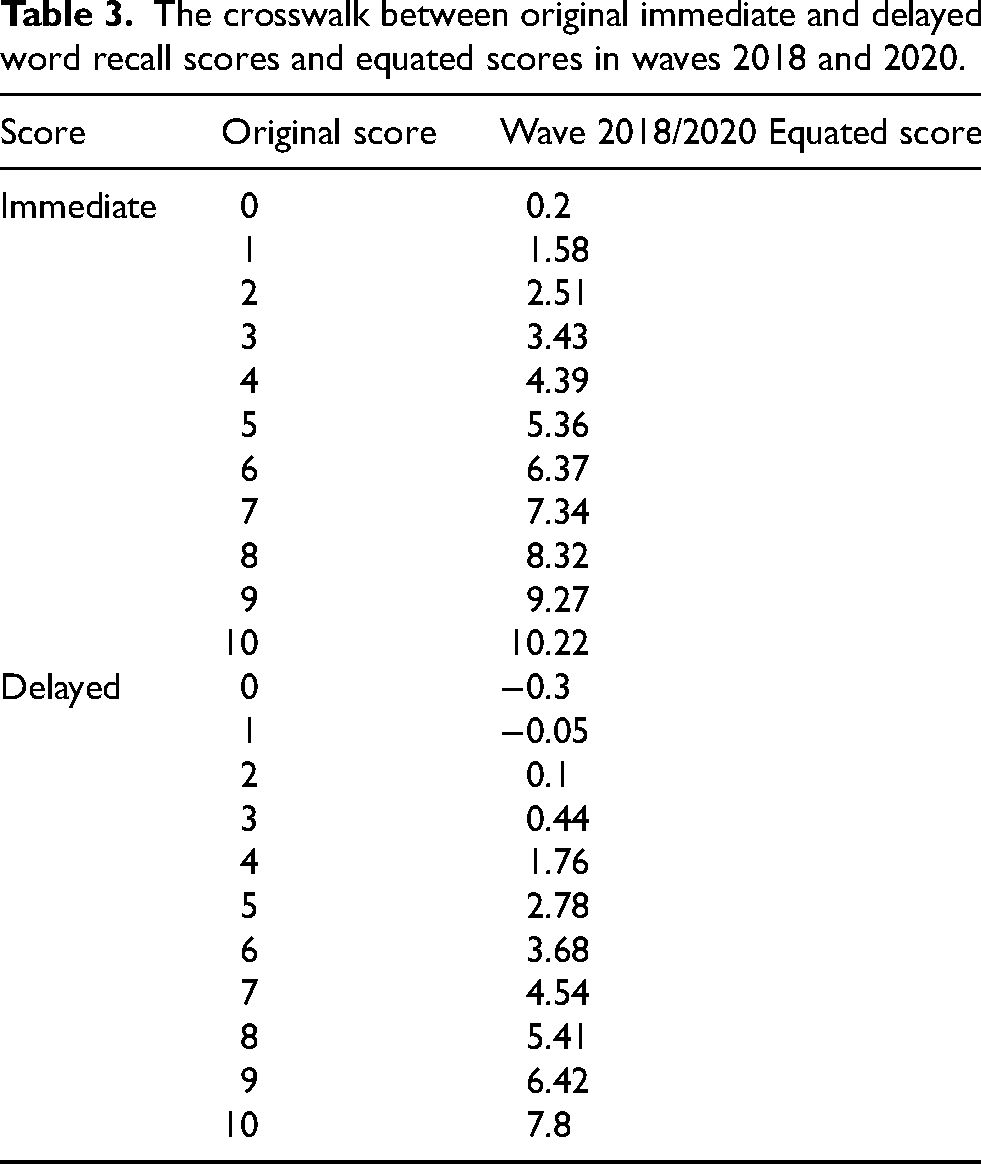

Crosswalk of equated scores and original scores

The crosswalk between equated and original scores for survey waves 2018 and 2020 is shown in Figure 4 and Table 3. To preserve the discrimination ability of the equated score distribution, we did not rescale the equated scores to a 0–10 scale. As shown in Figure 1, in the balanced sample of participants who completed all 5 waves of immediate word recall tests or delayed word recall tests, mean equipercentile equated immediate word recall scores were higher than original scores in both 2018 and 2020 (2018 equated versus original score: 3.57 versus 3.17; 2020 equated versus original score: 3.99 versus 3.58). Mean equated delayed word recall scores were lower than the original scores in both 2018 and 2020 (2018 equated versus original score: 2.37 versus 4.34; 2020 equated versus original score: 2.89 versus 5.05). With equated scores, mean immediate and delayed word recall generally decreased over time. In the full sample, equated immediate word recall scores were generally higher than the equated delayed word recall scores in waves 2018 and 2020 (Figure 2).

Figure 4. The relationship between equated and original immediate and delayed word recall scores from CHARLS waves 2018 and 2020 in the weighted calibration sample.

Table 3. The crosswalk between original immediate and delayed word recall scores and equated scores in waves 2018 and 2020.

Discussion

In this study, we described form differences in test items and test administration that led to spurious longitudinal differences in performance on immediate and delayed word recall tests across waves of CHARLS. We then applied equating methods to remove these form differences. Prior to applying equating methods, immediate word recall scores substantially decreased while delayed word recall scores drastically increased in 2018, compared with previous waves. Alterations to the content and administration of cognitive test items over time in a study are common but can obfuscate longitudinal analysis of change. This study demonstrates the application of equating methods when non-equivalent tests are administered in longitudinal surveys. By applying a test-equating method, we created CHARLS 2015-equivalent word recall scores for the CHARLS 2018 and 2020 wave respondents that could be used to leverage longitudinal CHARLS data to examine changes in cognitive function, particularly memory.

An advantage of using the 2015 wave as the reference wave for equipercentile equating is that it retains practice effects observed across CHARLS study waves 2011, 2013, 2015, 2018, and 2020. Retest effects have been shown to be present for up to 17 years between measurement occasions. 16 Retest effects were probably present in CHARLS due to contextual contributions to practice, 17 despite the use of alternate word lists in CHARLS across the 2011–2015 waves. 10 However, the most pronounced evidence of retest effects typically occurs between the first and second occasions of measurement.17–19 Thus, we believe that the interval from 2011 to 2013 was the most likely period during which to observe a retest effect. Retaining practice effects is crucial for reliable longitudinal analysis of cognitive decline.

It is important to study cognitive decline and dementia using nationally representative cohorts in low-income and middle-income countries, such as CHARLS in China. Most research to date on these topics has been conducted in high-income countries, which account for a smaller portion of the world population. The transportability of the current evidence to low-income and middle-income countries, which account for a larger portion of the world population, is therefore uncertain. It is also important to assess research questions in low-income and middle-income contexts to further our understanding of the determinants of cognitive decline and dementia.

Alterations in tests administered across time are common, particularly in longitudinal studies where changes in test format, difficulty, or administration procedures may occur for reasons such as updates in assessment protocols. These changes can pose challenges when attempting to compare repeated measures over time and may complicate longitudinal analyses aimed at tracking changes in cognitive function within individuals. Our method, weighted equipercentile equating, offers a solution to this issue for CHARLS and other studies. It provides researchers with a tool for conducting longitudinal analyses of cognitive change using cognitive scores that are comparable over time.

Measurement adjustment techniques have been employed to account for shifts in cognitive assessments over time, such as transitions from in-person to telephone administration across study waves 20 and to evaluate the reliability of cognitive tests that measure the same psychological traits across time 21 using methods including equipercentile equating.

Equipercentile-equated scores are unnecessary in cross-sectional research unless different forms were administered to different subgroups of participants in a non-random fashion at the same time. However, caution is warranted when making comparisons to other studies in which the tests were administered differently (e.g., varying scales 12 or item sets 11 ). Most of the major questions in modern cognitive aging research are longitudinal in nature and require consideration of change in cognitive performance because cognitive change is the fundamental feature of cognitive disorders in aging. 22 Reflecting the importance of longitudinal data, most impactful trials and cohorts in cognitive aging research take repeated measures. Particularly for investigators using data they did not personally collect, it is crucial to read study documentation in detail to understand the measures used to assess cognitive performance, including how tests were administered, scored, and coded. Form differences in word lists are a subtle, pernicious feature of many longitudinal studies that arise when not enough attention has been paid to making word lists equivalent. 23 Equating methods have a rich history of being used to resolve problems that arise in longitudinal analyses from form differences.10,11

Several caveats merit attention. To be valid, the equipercentile equating method relies on the assumption that the two test-taking populations have the same underlying ability. In our case, we did not have the same study sample or two randomly assigned groups who were administered two versions of tests simultaneously. To address this challenge, prior to applying the equating algorithm, we restricted the sample and applied sIOW in a calibration sample in which the underlying abilities of the respondents who took the two tests in 2015 and 2018, and in 2015 and 2020, could be assumed to be identical. Our ability to identify a group of respondents who completed the two different tests and had the same underlying memory function depended on the availability of covariates that are predictive of memory, which were limited to age and education. Another limitation, specific to the equipercentile equating method, is that the outcome should be continuously distributed and have enough range to reliably distinguish different percentiles. Because the word recall scores have only 11 categories, ranging from 0 to 10, the equipercentile-equated scores we used are not integers.

In conclusion, our work documented our effort to address the challenges posed by non-equivalent cognitive tests with different administrations over time. We recommend using the equated word recall scores for longitudinal analysis of memory change utilizing word recall tests in CHARLS. Specifically, for the immediate word recall test, we advise using the first trial scores updated with the equated word recall scores from waves 2018 and 2020 in longitudinal analyses of immediate word recall performance. We have made the crosswalk of original and equated word recall scores available, and we have also distributed code to reproduce the equipercentile crosswalk. Harmonization methods such as equipercentile equating methods can be useful to generate comparable word recall test scores to facilitate longitudinal analyses for cognitive change.

Footnotes

Acknowledgments

We would like to express our gratitude to the China Health and Retirement Longitudinal Study (CHARLS) participants for consenting to the use of their data. We also appreciate the efforts of the CHARLS team and Gateway to Global Aging Data team for making the original and harmonized nationally representative data available.

Author contributions

Yingyan Wu (Conceptualization; Data curation; Formal analysis; Methodology; Software; Visualization; Writing – original draft; Writing – review & editing); Yuan S Zhang (Conceptualization; Funding acquisition; Methodology; Writing – review & editing); Lindsay C Kobayashi (Funding acquisition; Methodology; Writing – review & editing); Elizabeth Rose Mayeda (Conceptualization; Funding acquisition; Methodology; Writing – review & editing); Alden L Gross (Conceptualization; Funding acquisition; Methodology; Supervision; Writing – review & editing).

Funding

This work was supported by the National Institute on Aging [grant numbers R01AG070953 to L.C.K. and A.L.G., R01AG063969 and R01AG052132 to E.R.M., and R00AG070274 to Y.S.Z.].

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.