Abstract

Over the last decade, replication research in the psychological sciences has become more visible. One way that replication research can be conducted is to compare the results of the replication study with the original study to look for consistency, that is to say, to evaluate whether the original study is “replicable.” Unfortunately, many popular and readily accessible methods for ascertaining replicability, such as comparing significance levels across studies or eyeballing confidence intervals, are generally ill suited to the task of comparing results across studies. To address this issue, we present the prediction interval as a statistic that is effective for determining whether a replication study is inconsistent with the original study. We review the statistical rationale for prediction intervals, demonstrate hand calculations, and provide a walkthrough using an R package for obtaining prediction intervals for means, d values, and correlations. To aid the effective adoption of prediction intervals, we provide guidance on the correct interpretation of results when using prediction intervals in replication research.

Spurred by what is generally referred to as the replication crisis in psychology, replication research has become more prominent in psychological sciences. During the past decade or so, several large-scale replication initiatives have been conducted to test the replicability of important research findings (e.g., Klein et al., 2014; Open Science Collaboration, 2012, 2015). To put it simply, these initiatives have produced disappointing results with respect to the replicability of psychological research. For some, the widespread increase in attempts to replicate scientific results is a positive step toward improving the credibility of scientific knowledge (Munafò et al., 2017; Vazire, 2018; Vazire et al., 2022).

Replications can be conducted to assess the credibility of the original finding by comparing it with the replication (e.g., Open Science Collaboration, 2012, 2015). The logic of replications can appear deceptively simple: Conduct an original study followed by a replication and then assess if the result of the replication is consistent with the original study. However, comparing results across studies to ascertain their agreement presents researchers with statistical considerations that are not present when testing hypotheses within individual studies. Specifically, what criterion should be used to determine if results are consistent or inconsistent? In other words, what can be used to determine if the study “successfully replicated” or “failed to replicate”?

When considering the replication question, it is important to distinguish between “replication” and “reproducibility.” Unfortunately, these two terms are often used interchangeably. Replication research is generally understood to involve rerunning studies and collecting and analyzing new data (Peng et al., 2006). In contrast, reproducibility generally refers to the ability of a researcher to generate the same results of a study from the same raw data (Goodman et al., 2016; Gundersen, 2021; Patil et al., 2019; Plesser, 2018).

When it comes to replications, some of the popular replication initiatives in psychology have explicitly acknowledged that, “There is no single standard for evaluating replication success” (Open Science Collaboration, 2015, p. 943) and employed multiple criteria to evaluate correspondence across studies (Open Science Collaboration, 2012, 2015). However, in practice, replication success is often determined by examining whether the replication study found a statistically significant result in the same direction as the original study (Anderson & Maxwell, 2016). Some authors have highlighted the limitations of relying on statistical significance to evaluate replication success and have proposed statistical alternatives to examining statistical significance (e.g., Anderson & Maxwell, 2016; Maxwell et al., 2015; Spence & Stanley, 2016). At the same time, some researchers have proposed frameworks for evaluating replication consistency by comparing two studies’ results using confidence intervals (CIs; e.g., LeBel et al., 2019), whereas others have proposed Bayesian alternatives (e.g., Verhagen & Wagenmakers, 2014).

The computation of prediction intervals has been proposed and used as a method to assess the inconsistency of results between an original study and a replication (e.g., Patil et al., 2016; Spence & Stanley, 2016). Although lesser known than other approaches, prediction intervals are useful in that they provide researchers with a statistical method for determining a range of results that might reasonably occur in a replication because of sampling error. In the current article, we outline how prediction intervals can be computed and interpreted in the context of replications. We show how the results of a replication study can be statistically classified as inconsistent with the original study. Practical examples for prediction intervals, formulas, and R code are presented herein.

Replications: Assessing the Role of Sampling Error

A number of methods have been used to determine whether a study has replicated. A common approach to evaluating replications has been to compare the significance of the original study with that of the replication study (cf. LeBel et al., 2019; Maxwell et al., 2015). This approach typically involves assessing whether the p value is less than .05 (or whether the CI excludes zero). Next, the direction of the effect is assessed to determine whether it matches the direction in the original study. If there is consistency in direction and significance, the replication is deemed successful; if there is inconsistency, the replication is deemed a failure. Aside from the well-documented limitations of relying solely on significance testing to interpret results (Kline, 2013), Cumming (2008) illustrated that this approach is flawed: sampling error makes p values fluctuate so considerably across replication attempts that statistical significance is a poor criterion for evaluating replications. Moreover, the difference between significant and nonsignificant p values is not necessarily statistically significant (Gelman & Stern, 2006). That is, a significant result and a nonsignificant result may not differ significantly from one another. Consequently, checking for consistency in significance is, at best, a search for superficial correspondence between studies.
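The Gelman and Stern (2006) point can be made concrete with a small Python sketch. It uses large-sample z tests on two hypothetical estimates, 25 and 10, each with a standard error of 10. The numbers and the helper name `two_sided_p` are ours and are chosen only for illustration.

```python
from statistics import NormalDist


def two_sided_p(estimate, se):
    """Two-sided p value for a large-sample z test of estimate / se."""
    z = abs(estimate) / se
    return 2 * (1 - NormalDist().cdf(z))


# Hypothetical estimates and standard errors for two independent studies.
est_a, se_a = 25.0, 10.0
est_b, se_b = 10.0, 10.0

p_a = two_sided_p(est_a, se_a)  # study A tested on its own
p_b = two_sided_p(est_b, se_b)  # study B tested on its own
# The SE of a difference between independent estimates is sqrt(se_a^2 + se_b^2).
p_diff = two_sided_p(est_a - est_b, (se_a ** 2 + se_b ** 2) ** 0.5)

print(f"study A p = {p_a:.3f}, study B p = {p_b:.3f}, difference p = {p_diff:.3f}")
```

Study A is significant (p ≈ .01) and study B is not (p ≈ .32). Yet the test of their difference gives p ≈ .29, so the two results are not significantly different from each other.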

Another approach for trying to understand the role of sampling error when drawing a substantive conclusion across different studies is the use of meta-analysis (see Borenstein et al., 2021; Schmidt & Hunter, 2014). Meta-analysis is typically used to average a large number of study results, estimate the likely variability because of sampling error, and evaluate moderators in light of this variability. Nonetheless, some researchers have attempted to use meta-analysis with just two studies: an original study and a replication study. In this context, a meta-analytic mean based on just the original study and the replication study is calculated (for an example, see Open Science Collaboration, 2015). This meta-analytic mean is then tested to see whether it differs from zero. Although this approach could potentially be considered an improvement over using p values, it has been discounted because the effect-size estimate from the first study is assumed to be inflated by publication bias (Open Science Collaboration, 2015). Most importantly, a meta-analysis of just two studies is considerably less robust than one based on a large number of studies.
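For readers unfamiliar with the two-study case, here is a minimal sketch of one common choice: a fixed-effect (inverse-variance) pooled mean tested against zero. The estimates, the standard errors, and the function name `fixed_effect_pool` are hypothetical. Other weighting schemes, such as random effects, also exist.

```python
import math
from statistics import NormalDist


def fixed_effect_pool(estimates, ses):
    """Inverse-variance (fixed-effect) pooled estimate, its SE, and a two-sided p value vs. zero."""
    weights = [1 / se ** 2 for se in ses]
    pooled = sum(w * e for w, e in zip(weights, estimates)) / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    p = 2 * (1 - NormalDist().cdf(abs(pooled) / pooled_se))
    return pooled, pooled_se, p


# Hypothetical original (d = 0.60, SE = 0.30) and replication (d = 0.10, SE = 0.20).
pooled, pooled_se, p = fixed_effect_pool([0.60, 0.10], [0.30, 0.20])
print(f"pooled d = {pooled:.3f}, SE = {pooled_se:.3f}, p = {p:.3f}")
```

With only two inputs, the pooled estimate is dominated by the more precise study. Adding or removing a single study can therefore change the conclusion.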

A third approach to understanding the role of sampling error when interpreting a replication is to use CIs (e.g., Gilbert et al., 2016). If interpreted correctly, CIs can offer inferential information that is not available with p values (Belia et al., 2005; Cumming & Finch, 2005). A CI is an interval constructed around a sample statistic by a procedure that captures the population parameter in a specified proportion of an imagined, infinitely large set of repeated studies. For example, imagine a scenario in which 20 studies are conducted to estimate a population mean. For each of the 20 studies, a 95% CI can be constructed, and each of the 20 intervals will likely be centered on a different mean and have a different width. However, 19 of the 20 intervals (i.e., 95%) are expected, on average, to capture the population parameter (i.e., the population mean).

The use of CIs for interpreting replications, however, can be problematic (Cumming et al., 2004; Hoekstra et al., 2014). Specifically, CIs are sometimes incorrectly interpreted as representing a capture percentage for the next study result (see Cumming et al., 2004; “confidence-level misconception”). As noted above, however, CIs are designed to capture population parameters, not subsequent sample statistics from a replication. The capture rate of CIs for subsequent sample statistics departs substantially from 95% and varies greatly across sample-size scenarios (e.g., Spence & Stanley, 2016). Consequently, CIs are not an appropriate tool for trying to understand the role of sampling error when interpreting a replication study.
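The confidence-level misconception can be checked with a quick simulation. This Python sketch makes two simplifications: σ is known, so the CI uses the normal critical value, and each sample mean is drawn directly from its sampling distribution. The function name `ci_rates`, the seed, and the sample-size pairs are illustrative choices, not values from the studies discussed here.

```python
import math
import random

Z95 = 1.959964  # two-sided 95% critical value of the standard normal


def ci_rates(n_orig, n_rep, reps=20000, seed=2024):
    """Simulate (a) how often the original 95% CI covers the population mean and
    (b) how often it captures the replication sample mean.

    Simplifications: sigma is known (z-based CI), and each sample mean is drawn
    directly from its sampling distribution N(mu, sigma^2 / n).
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0
    covers_mu = captures_rep = 0
    for _ in range(reps):
        m_orig = rng.gauss(mu, sigma / math.sqrt(n_orig))
        m_rep = rng.gauss(mu, sigma / math.sqrt(n_rep))
        half_width = Z95 * sigma / math.sqrt(n_orig)
        covers_mu += abs(m_orig - mu) <= half_width
        captures_rep += abs(m_rep - m_orig) <= half_width
    return covers_mu / reps, captures_rep / reps


for n_orig, n_rep in [(50, 50), (20, 200), (200, 20)]:
    cover, capture = ci_rates(n_orig, n_rep)
    print(f"n_orig={n_orig:>3}, n_rep={n_rep:>3}: covers mu {cover:.3f}, "
          f"captures replication mean {capture:.3f}")
```

Under these simplifications, coverage of μ stays near 95% in every case. The capture rate for the replication mean behaves differently:

- With equal sample sizes, it lands near 83%.
- When the replication is ten times larger, it lands near 94%.
- When the replication is ten times smaller, it lands near 45%.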

Thus, a variety of approaches have been used to evaluate replications. We believe that the framework proposed by Patil and colleagues (2019) provides a useful lens for thinking about approaches to evaluating replications. Within this framework, a successful replication occurs when a new data set is collected in a second study and the results of the second data set are consistent with the results of the first data set. Like Patil et al. (2016), we view consistency through the lens of sampling error. The results of a second study can be expected to differ from the results of a first study because of random sampling error. An inconsistency occurs when the results of the second study differ from the results of the first study by more than random sampling error. When this occurs, there is a failure to replicate. In this tutorial, we focus on explaining how to use and interpret prediction intervals when evaluating single replications. Prediction intervals provide a range of results that can be expected, because of random sampling error, for a replication study before it is conducted.

Prediction Intervals

Prediction intervals are a useful statistic for evaluating the results of replication studies. Specifically, prediction intervals provide researchers with a statistical method for determining whether the original study and replication study are inconsistent with each other (Cumming, 2008; Patil et al., 2016; Spence & Stanley, 2016). Prediction intervals assess between-studies consistency by considering the variability in both the original study and replication study caused by sampling error and reconciling this variability into a single statistic. By considering sampling error in both studies, researchers have a yardstick for judging whether the results differ from each other by more than would be expected because of random sampling error. Ideally, a prediction interval is calculated after the original study is conducted but before the replication is conducted. Indeed, a key feature of prediction intervals is that they can be used to provide a frame of reference for interpreting a replication result before the collection of replication data.

Prediction Intervals for Means

In the following sections, we outline how prediction intervals can be computed for means, d values, and correlations. We begin with an illustration of how to compute a prediction interval for means because this is the simplest case. To illustrate how a prediction interval can be computed for means, we use a hypothetical scenario.

Imagine that a student, Jane, is interested in estimating the number of hours undergraduate students typically sleep at her very large university of 100,000 people. In this example, students at the university are the population. Jane randomly samples 50 people

At this point, a key question for Richard is what range of results for his replication study would be consistent with Jane’s original study. Said another way, how much would one reasonably expect a replication sample mean to differ from the original sample mean (7.21) because of random sampling? Specifying this interval (i.e., a prediction interval) before collecting the data for the second sample (i.e., the replication study) will help to interpret the findings across both studies.

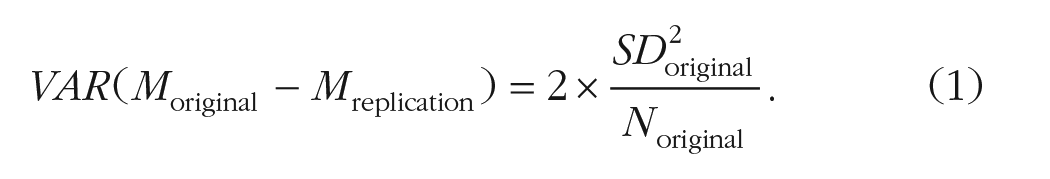

To obtain a prediction interval for the second mean (i.e., the mean in the replication study), it is important to realize that the researchers are mathematically modeling the difference between the two means, that is, the difference between

When the original study and the replication study have the same sample size (

Spence and Stanley (2016) provided a review of this approach and illustrated how the formula could be rearranged to account for different sample sizes in the original and replication studies. Spence and Stanley noted that this formula is simply an application of the well-known rule that the variance of the difference between two independent quantities is the sum of their variances:

In Equation 2, the first term represents the sampling variance of the original study mean, consistent with the central-limit theorem. The second term represents the sampling variance of the replication mean. Although the replication study has not yet been conducted, its sample is assumed to come from the same population as the original sample. Consequently, the variance estimate from the original study is used as an estimate of the population variance in the second term. Calculations, however, use the standard-deviation (i.e., standard-error) version presented in Equation 3:

To calculate a prediction interval, see Equation 4. For the difference between two means, we include the mean that was observed in the original study and a two-tailed critical t value using degrees of freedom
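The equations in this section are not preserved in this copy. Here are the standard forms they describe, in our own notation: \bar{X}_1, s_1, and n_1 for the original study, and n_2 for the planned replication. The degrees of freedom n_1 − 1 are our assumption, because the clause above is cut off.

```latex
% Sampling variance of the difference between the two means,
% with the original SD s_1 standing in for the population SD
\sigma^2_{\bar{X}_2 - \bar{X}_1} \approx \frac{s_1^2}{n_1} + \frac{s_1^2}{n_2}

% Standard error of that difference (reduces to sqrt(2) * s_1 / sqrt(n) when n_1 = n_2 = n)
SE_{\bar{X}_2 - \bar{X}_1} = \sqrt{\frac{s_1^2}{n_1} + \frac{s_1^2}{n_2}}

% 95% prediction interval for the replication mean (df = n_1 - 1 assumed)
PI_{95} = \bar{X}_1 \pm t_{.975,\, n_1 - 1}\, SE_{\bar{X}_2 - \bar{X}_1}
```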

Richard, who is familiar with prediction intervals, has not yet conducted his replication study, but he does know the sample size he plans to use. He plans to collect data from 70 people,

Consequently:

This 95% prediction interval [6.39, 8.03] provides the range of means that can be expected in a replication from the same population because of sampling error alone. With this prediction interval now calculated, Richard proceeds to collect his data.
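The walkthrough in this article uses R. For readers who prefer to see the arithmetic, here is a stdlib-only Python sketch of the mean prediction interval. It rests on three assumptions:

- The critical t uses n − 1 degrees of freedom from the original study.
- The t quantile is computed numerically (Simpson's rule plus bisection) to avoid a SciPy dependency.
- The SD of 2.2 is a hypothetical stand-in, because Jane's SD is not preserved in this copy. We chose it because it yields an interval close to the one reported above, not because it appears in the source.

The function names are ours.

```python
import math


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_c = math.lgamma((df + 1) / 2) - math.lgamma(df / 2) - 0.5 * math.log(df * math.pi)
    return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))


def t_cdf(x, df, steps=2000):
    """CDF of Student's t via Simpson's rule on [0, |x|] plus symmetry about zero."""
    a = abs(x)
    h = a / steps
    total = t_pdf(0.0, df) + t_pdf(a, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return 0.5 + area if x >= 0 else 0.5 - area


def t_quantile(p, df):
    """Upper-half quantile (0.5 < p < 1) of Student's t by bisection on t_cdf."""
    lo, hi = 0.0, 100.0
    for _ in range(60):
        mid = (lo + hi) / 2
        if t_cdf(mid, df) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def mean_prediction_interval(mean, sd, n, rep_n, level=0.95):
    """Prediction interval for a replication mean from the original mean, SD, and n."""
    se_diff = sd * math.sqrt(1 / n + 1 / rep_n)      # SE of the difference between the two means
    t_crit = t_quantile(1 - (1 - level) / 2, n - 1)  # assumed df = n - 1 (original study)
    return mean - t_crit * se_diff, mean + t_crit * se_diff


# Jane's mean and n and Richard's planned n come from the text; the SD of 2.2 is hypothetical.
low, high = mean_prediction_interval(mean=7.21, sd=2.2, n=50, rep_n=70)
print(f"95% PI: [{low:.2f}, {high:.2f}]")
```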

Once Richard collects his data, he calculates a sample mean,

On the other hand, if the 95% prediction interval does capture his sample mean,

Application: Mean Difference Prediction Interval

Prediction intervals are well suited for determining whether a replication result is inconsistent with the original result. However, caution should be exercised before concluding that a result that falls within the interval is consistent with the original study result. We detail the reasons for this with a new example.

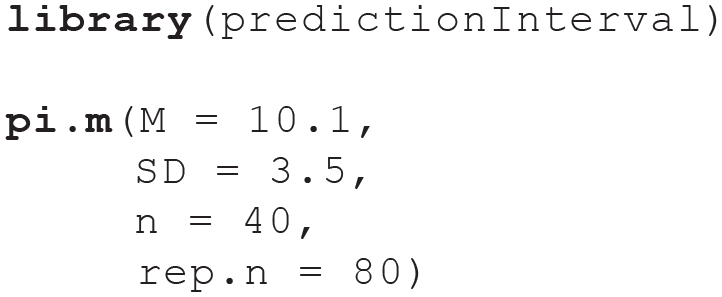

Consider a study that assessed the mean number of alcoholic drinks consumed by engineering-college students during orientation week. The published study does not specify how the sample was collected but indicates that

This code provides a 95% prediction interval of [8.73, 11.47]. If the population parameters (i.e.,

As previously noted, we stress caution when interpreting a replication result that falls inside the prediction interval. Imagine a situation in which a researcher conducts an original study and then creates a 95% prediction interval around that original study mean. Now imagine that the replication mean falls inside the 95% prediction interval. We consider two scenarios that can underlie this situation. We emphasize that an applied researcher would need to be omniscient to know which scenario applies.

In the first scenario, the replication mean is sampled from the same population as the original mean. As a result, the original mean and the replication mean differ only because of sampling error. In this first scenario, interpreting the replication mean falling within the prediction interval as a “replication success” would lead to a correct conclusion, because the same population mean underlies both sample means.

In the second scenario, the replication mean is sampled from a different population (with a different population mean). As a result, the original mean and the replication mean differ for two reasons: (a) the difference in population means and (b) random sampling error. Because the population means differ, this is conceptually a replication failure. Even so, the replication mean can still fall inside the prediction interval constructed around the original study mean. In this second scenario, interpreting the replication mean falling within the prediction interval as a “replication success” would lead to an incorrect conclusion.

Consequently, a replication mean that falls within the prediction interval could reflect either scenario. Therefore, prediction intervals should not be used to “confirm” a successful replication because of the inherent uncertainty created by random sampling.

Because of the way sampling error operates, prediction intervals provide statistical criteria for determining a replication failure but not a replication success. When a replication result falls within a prediction interval, there is no evidence of a statistical difference, but this is not conclusive; additional replications are needed. This asymmetry in conclusions is similar to the logic of null hypothesis significance testing, in which it would be misguided to conclude that there is no effect when a p value is nonsignificant (e.g., Wasserstein et al., 2019). When a replication result falls outside a prediction interval, it indicates only that the replication result is unlikely if the two studies were samples from the same population. Indeed, even when both studies sample the same population, replication results will fall outside a 95% prediction interval 5% of the time because of random sampling error. Consequently, we note that a result falling outside the interval does not constitute “proof of failure.”
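A short simulation can illustrate both scenarios described above. This Python sketch rests on several hypothetical choices:

- normal populations with σ = 1;
- samples of 50 in both studies;
- a population shift of 0.2 SD in Scenario 2;
- the seed and the helper names.

The critical value 2.009575 is the standard two-tailed 95% t value for 49 degrees of freedom. As in the mean prediction interval described earlier, the interval uses the original study's SD for both variance terms.

```python
import math
import random

N = 50               # original and replication sample size (hypothetical)
T_CRIT = 2.009575    # two-tailed 95% t critical value for df = N - 1 = 49


def mean_and_sd(xs):
    """Sample mean and sample SD (n - 1 denominator)."""
    m = math.fsum(xs) / len(xs)
    sd = math.sqrt(math.fsum((x - m) ** 2 for x in xs) / (len(xs) - 1))
    return m, sd


def share_inside_pi(mu_rep, reps=5000, seed=11):
    """Share of replication means inside a 95% PI built from an original study with mu = 0, sigma = 1."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(reps):
        m_orig, sd_orig = mean_and_sd([rng.gauss(0.0, 1.0) for _ in range(N)])
        m_rep, _ = mean_and_sd([rng.gauss(mu_rep, 1.0) for _ in range(N)])
        half_width = T_CRIT * sd_orig * math.sqrt(1 / N + 1 / N)
        inside += abs(m_rep - m_orig) <= half_width
    return inside / reps


same_population = share_inside_pi(0.0)     # Scenario 1: identical population means
shifted_population = share_inside_pi(0.2)  # Scenario 2: replication population mean 0.2 SD higher
print(f"Scenario 1: {same_population:.3f} inside the PI")
print(f"Scenario 2: {shifted_population:.3f} inside the PI")
```

In Scenario 1, roughly 95% of replication means land inside the interval, so about 5% fall outside by chance alone. In Scenario 2, most replication means still land inside even though the populations differ. This is why falling inside the interval is not evidence of success.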

Prediction Intervals for Effect Sizes

In addition to means, prediction intervals can also be computed for standardized mean differences (i.e., d values) and correlations (Spence & Stanley, 2016). Computing prediction intervals for these statistics is slightly different because of asymmetry in the sampling distributions of nonzero effect sizes. To address this nonnormality, a different procedure is used to calculate the prediction interval in these cases; however, the underlying interpretation of the interval remains unchanged.

Standardized mean differences

To illustrate how to use prediction intervals with standardized mean differences (i.e., d values), we focus on an ego-depletion study by Sripada et al. (2014). We do so because this study was the focus of a large-scale replication study by Hagger et al. (2016). The design in the original and replication studies was a one-way experimental design with an experimental ego-depletion condition and a control condition. The dependent variable was reaction-time variability.

The original study reported by Sripada et al. (2014) found an ego-depletion condition

If a replication of Sripada et al. (2014) were conducted, what range of results could be expected because of sampling error? Below, we review two scenarios: Scenario 1, in which the prediction interval captures the replication result, and Scenario 2, in which the prediction interval does not capture the replication result. As we walk through the evaluation of these preexisting data, we stress that prediction intervals are intended to be calculated after the original study but before the collection of data for the replication study.

Prediction interval captures replication

To begin, we examine one of the many replications of Sripada et al. (2014) contained in Hagger et al. (2016), focusing on the study by Hagger, Chatzisarantis, and Zwienenberg. These authors conducted a replication with

Given the intended replication cell sizes, what range of results can be expected in this particular replication because of sampling error alone? To answer this question, a 95% prediction interval can be constructed. This interval indicates where 95% of d values in a replication are expected to fall because of sampling error alone, assuming identical population parameters.

Because nonzero d values have sampling distributions that follow noncentral t distributions, and noncentral t distributions are asymmetrical, we cannot use the same approach we used with means. The asymmetry of the sampling distribution increases as the effect size increases (i.e., the farther d is from zero). As a result, using the technique we used for means would produce increasingly inaccurate estimates as effect sizes increase.
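The growing asymmetry can be seen by simulating d values. This Python sketch assumes two normal groups of 10 with σ = 1 and computes d with the pooled SD. The group size, seed, number of replicates, and helper names are illustrative.

```python
import math
import random


def simulate_d(delta, n_per_group, reps=10000, seed=3):
    """Sample d values for two normal groups (sigma = 1) whose population d is delta."""
    rng = random.Random(seed)
    ds = []
    for _ in range(reps):
        g1 = [rng.gauss(delta, 1.0) for _ in range(n_per_group)]
        g2 = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        m1, m2 = sum(g1) / n_per_group, sum(g2) / n_per_group
        ss = sum((x - m1) ** 2 for x in g1) + sum((x - m2) ** 2 for x in g2)
        pooled_sd = math.sqrt(ss / (2 * n_per_group - 2))
        ds.append((m1 - m2) / pooled_sd)
    return ds


def skewness(xs):
    """Moment-based sample skewness (0 for a symmetric distribution)."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5


skew_by_delta = {delta: skewness(simulate_d(delta, n_per_group=10)) for delta in (0.0, 0.8, 2.0)}
for delta, skew in skew_by_delta.items():
    print(f"population d = {delta}: skewness of sample d = {skew:.2f}")
```

The skewness of the simulated d values is near zero when the population d is zero and clearly positive when it is 2.0. This pattern is what makes a symmetric, mean-style interval a poor fit for nonzero d values.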

To compute prediction intervals for d values, we can follow an approach outlined by Spence and Stanley (2016) that takes the asymmetry of sampling distributions into account. Spence and Stanley applied a technique developed by Zou (2007). Below are the three steps in this approach.

Step 1: obtain original study d value CI

In the original study,

which produces the output:

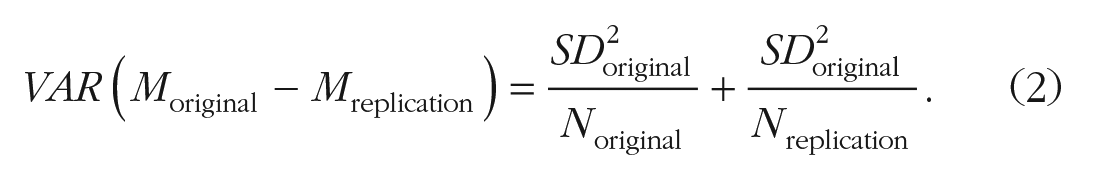

We use the notation below to indicate this original-study CI with several decimals:

Step 2: obtain replication study imaginary d value CI

True to form, a

which produces the output:

We use the notation below to indicate this replication-study CI:

Step 3: obtain the d-value prediction interval

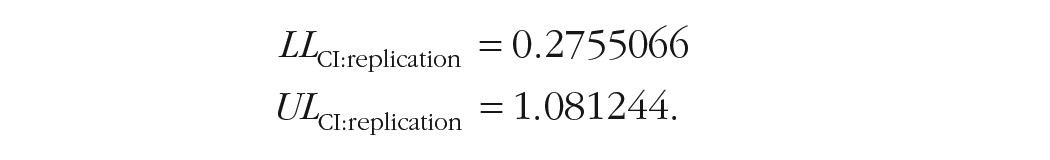

The

In Equations 5 and 6,

Substituting the values obtained in Steps 1 and 2, we can calculate the lower and upper prediction interval limits.
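Equations 5 and 6 are not preserved in this copy. Below is a reconstruction in our own notation, following the Zou (2007) approach as applied by Spence and Stanley (2016). Here [l_1, u_1] is the Step 1 CI for the original d_1, and [l_2, u_2] is the Step 2 CI for the imaginary replication, which has the same d value but the replication cell sizes.

```latex
% Lower limit: how far the parameter may lie below d_1 (original CI),
% combined with how far a replication estimate may fall below its parameter (replication CI)
L = d_1 - \sqrt{(d_1 - l_1)^2 + (u_2 - d_1)^2}

% Upper limit: the mirror-image combination
U = d_1 + \sqrt{(u_1 - d_1)^2 + (d_1 - l_2)^2}
```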

The prediction interval lower limit is

The prediction interval upper limit is

As a result, the 95% prediction interval for Sripada et al. (2014) with the sample size used by Hagger et al. (2016) is [–0.04, 1.39]. If we were Hagger et al., now that the prediction interval has been calculated, we would proceed to collect our data.
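For readers who want to see the three steps end to end without R, here is a stdlib-only Python sketch. The following assumptions are ours:

- Each CI for d is obtained by inverting the noncentral t distribution, computed here by numerical integration and bisection.
- The observed t is d * sqrt(n1 * n2 / (n1 + n2)), with n1 + n2 − 2 degrees of freedom.
- Step 3 uses the Zou-style combination of the two CIs.

The function names are hypothetical, and the numerical settings trade some precision for brevity.

```python
import math


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def nct_cdf(t, df, ncp, steps=1000):
    """P(T <= t) for a noncentral t: average Phi(t * sqrt(u / df) - ncp) over u ~ chi-square(df).

    Simpson's rule on [0, df + 15 * sqrt(2 * df)]; assumes df > 2 (integrand is 0 at u = 0).
    """
    upper = df + 15 * math.sqrt(2 * df)
    h = upper / steps
    log_norm = (df / 2) * math.log(2) + math.lgamma(df / 2)
    total = 0.0
    for i in range(1, steps + 1):
        u = i * h
        weight = 1 if i == steps else (4 if i % 2 else 2)
        chisq_pdf = math.exp((df / 2 - 1) * math.log(u) - u / 2 - log_norm)
        total += weight * norm_cdf(t * math.sqrt(u / df) - ncp) * chisq_pdf
    return total * h / 3


def ncp_limit(t_obs, df, target):
    """Noncentrality parameter at which P(T <= t_obs) equals target (the CDF decreases in ncp)."""
    lo, hi = t_obs - 10.0, t_obs + 10.0
    for _ in range(40):
        mid = (lo + hi) / 2
        if nct_cdf(t_obs, df, mid) > target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def d_ci(d, n1, n2, level=0.95):
    """CI for a two-group d by inverting the noncentral t, then rescaling to the d metric."""
    alpha = 1 - level
    scale = math.sqrt(n1 * n2 / (n1 + n2))  # observed t = d * scale
    df = n1 + n2 - 2
    lo = ncp_limit(d * scale, df, 1 - alpha / 2)
    hi = ncp_limit(d * scale, df, alpha / 2)
    return lo / scale, hi / scale


def d_prediction_interval(d, n1, n2, rep_n1, rep_n2, level=0.95):
    l1, u1 = d_ci(d, n1, n2, level)          # Step 1: original study CI
    l2, u2 = d_ci(d, rep_n1, rep_n2, level)  # Step 2: imaginary replication CI (same d)
    lower = d - math.sqrt((d - l1) ** 2 + (u2 - d) ** 2)  # Step 3: combine the two CIs
    upper = d + math.sqrt((u1 - d) ** 2 + (d - l2) ** 2)
    return lower, upper


print("original CI:", [round(x, 2) for x in d_ci(0.68, 23, 24)])
print("PI, replication cells 46/55:",
      [round(x, 2) for x in d_prediction_interval(0.68, 23, 24, 46, 55)])
```

With d = 0.68 and cells of 23 and 24, the sketch gives intervals close to the [0.09, 1.27] CI. For replication cells of 46 and 55, it gives an interval close to the [–0.04, 1.39] prediction interval reported above, within the rounding of the numerical approximations.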

Following data collection, Hagger et al. (2016) found

This result may be surprising given that the replication study found no statistical difference between conditions. The wide interval is useful in highlighting the lack of information and imprecision contained in small-sample studies. Because of the small sample sizes, the large difference observed between studies is still within what can be expected because of random sampling error.

Again, we stress that when a replication result falls within the prediction interval, one cannot conclude that the replication was a success. One can conclude only that the replication did not fail relative to what is expected because of sampling error. This is because, as outlined in the mean prediction interval section above, there are multiple reasons replication results can fall within the prediction interval. Prediction intervals are useful for indicating a failed replication. When a replication falls within the prediction interval, there is no evidence of a statistical difference; additional replications are still needed.

Obtaining a

For the original study, d = 0.68, N1 = 23, N2 = 24, 95% CI = [0.09, 1.27]. For the replication study, N1 = 46, N2 = 55. The 95% prediction interval is [–0.04, 1.39].

Prediction interval does not capture replication

Consider the second scenario, one in which the replication result is inconsistent with the original study. Recall that in the original Sripada et al. (2014) study that

To calculate the prediction interval, recall that Sripada et al.’s (2014) original study found a

For the original study, d = 0.68, N1 = 23, N2 = 24, 95% CI = [0.09, 1.27]. For the replication study, N1 = 40, N2 = 49. The 95% prediction interval is [–0.05, 1.41].

Hypothetically, Evans et al., having calculated a 95% prediction interval of [–0.05, 1.41], proceeded to data collection. Following data collection, Evans et al. found

Correlation

In this section, we walk through an example of how a prediction interval can be calculated in the context of replications in which correlations (

To begin, we consider the replication conducted at the University of Oregon as the “original” study (effect size reported in Figure 1 and sample size reported in Table 1 of Chartier et al., 2020). Because we argue that prediction intervals should be calculated before the replication is conducted, we proceed as if a replication study has not been conducted and we wish to determine the range of results that may be expected in a replication. To do so, we calculate a prediction interval using the technique presented by Spence and Stanley (2016). Because the steps are the same and we outlined the three-step process in detail for the

The “original” University of Oregon study reported a correlation of

Using the predictionInterval package, we would use the following code, in which r is the original study’s correlation, n is the original study’s sample size, rep.n is the expected sample size of the replication, and prob.level specifies a 95% PI:

This code generates the following output:

This indicates that a correlation between –.21 and .31 can be expected in a replication using a sample size of n = 81. Now we can pretend that Ashland University goes ahead and conducts its replication and finds a correlation of .02 (Figure 1 of Chartier et al., 2020, p. 336). This correlation falls within the prediction interval and can therefore be interpreted as being not inconsistent with the “original” study, which is to say it falls within a range that can be expected because of sampling error, given the sample sizes of the original study and replication study. We note a web interface for the R code is also available: https://replication.shinyapps.io/correlation/.
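Here is a Python sketch of the same three steps for correlations. We assume that the CIs in Steps 1 and 2 come from the Fisher z transformation and that Step 3 combines them in the same way as for d values. The function names are hypothetical. Because the Oregon correlation and sample size are not preserved in this copy, the inputs below (r = .30, n = 100) are made up for illustration.

```python
import math
from statistics import NormalDist


def r_ci(r, n, level=0.95):
    """CI for a correlation via the Fisher z transformation, back-transformed to r."""
    z = math.atanh(r)
    half_width = NormalDist().inv_cdf(1 - (1 - level) / 2) / math.sqrt(n - 3)
    return math.tanh(z - half_width), math.tanh(z + half_width)


def r_prediction_interval(r, n, rep_n, level=0.95):
    l1, u1 = r_ci(r, n, level)      # Step 1: original study CI
    l2, u2 = r_ci(r, rep_n, level)  # Step 2: imaginary replication CI with the same r
    lower = r - math.sqrt((r - l1) ** 2 + (u2 - r) ** 2)  # Step 3: combine the two CIs
    upper = r + math.sqrt((u1 - r) ** 2 + (r - l2) ** 2)
    return lower, upper


# Hypothetical inputs (not the Oregon values).
print([round(x, 3) for x in r_prediction_interval(r=0.30, n=100, rep_n=100)])
print([round(x, 3) for x in r_prediction_interval(r=0.30, n=100, rep_n=1000)])
```

Increasing the planned replication n narrows the interval. However, it can never become narrower than the original study's own CI, which is the limit reached as the replication n grows without bound.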

Discussion

Summary

With the rise of replication research, it is important for researchers to have a way to effectively and objectively assess the results of replication studies in relation to the original study. Commonly used approaches to assessing replications (e.g., p values, CIs) have important limitations. In the current article, we present and review the prediction interval as a method for assessing whether the results of two studies are statistically inconsistent with one another. The criterion for assessing inconsistency is based on the extent to which a difference can be expected because of sampling error. We covered how prediction intervals can be computed for means,

Comparisons with other statistical approaches

Equivalence testing

Researchers familiar with equivalence testing (Lakens, 2017) may wonder how this approach compares with the use of prediction intervals. With prediction intervals, information from an original study is used to create an interval around a sample statistic that will capture a subsequent sample statistic with a specified probability. The width of the interval is based entirely on the sampling error for the distribution of differences for a particular sample statistic. In contrast, with equivalence testing, researchers typically specify an interval around zero that they believe corresponds to a null effect. The bounds of this interval are typically based on the smallest effect size of interest (SESOI; see Lakens et al., 2018). Researchers can determine the SESOI through a wide variety of techniques, ranging from subjective to objective. The equivalence test itself consists of two one-sided t tests used to determine whether the population effect size falls within the interval defined by the researcher. Typically, equivalence testing is used within a single study to reject the presence of effects at least as large as the SESOI.
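To make the contrast concrete, here is a sketch of the two one-sided tests (TOST) logic. It uses large-sample z tests as a stand-in for the two one-sided t tests. The SESOI bounds of ±0.2, the estimates, and the standard error are hypothetical.

```python
from statistics import NormalDist


def tost_z(estimate, se, low, high, alpha=0.05):
    """Two one-sided z tests (a large-sample stand-in for the t-based TOST).

    Equivalence is declared when the estimate is significantly above `low`
    AND significantly below `high`; the TOST p value is the larger of the two.
    """
    p_above_low = 1 - NormalDist().cdf((estimate - low) / se)  # H0: effect <= low
    p_below_high = NormalDist().cdf((estimate - high) / se)    # H0: effect >= high
    p = max(p_above_low, p_below_high)
    return p, p < alpha


# Hypothetical SESOI bounds of -0.2 and 0.2 around zero.
print(tost_z(0.02, 0.05, -0.2, 0.2))
print(tost_z(0.15, 0.05, -0.2, 0.2))
```

The equivalence bounds are fixed by the researcher around zero. A prediction interval, by contrast, is centered on the original estimate, and its width comes only from sampling error in the two studies.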

Meta-analysis

The prediction-interval approach is most appropriate in a two-study scenario, that is, when a prediction interval is constructed after an original study and before a replication and is then used to assess the replication result. When a large number of studies already exist in a research domain, we suggest that the “Did it replicate?” question is less relevant. In this circumstance, we suggest moving from a replication mindset to a knowledge-aggregation mindset, that is, a meta-analytic mindset (see Borenstein et al., 2021; Schmidt & Hunter, 2014). There are different types of meta-analysis, and arguably the most useful of these is the psychometric meta-analysis approach (Schmidt & Hunter, 2014). This approach allows a researcher to generate an estimate of the population-level effect size while simultaneously correcting for study artifacts such as unreliability, range restriction, and scale coarseness, among others. Meta-analysis allows for not only the aggregation of the effect sizes but also the calculation of the variance of effect sizes once the variability because of sampling error and other artifacts has been removed. Researchers can also explore the extent to which variation in study results is moderated by study attributes. We note, however, that if there is publication bias in a research domain, this bias will be encoded in the meta-analysis. Consequently, it is important to conduct additional analyses that explore the potential influence of publication bias on meta-analytic estimates (for a review, see McShane et al., 2016). Indeed, the APA Journal Article Reporting Standards (Appelbaum et al., 2018) require the use of bias-detection techniques. Meta-analysis is extremely effective for knowledge generation when researchers can analyze a large number of studies in which publication bias is not a factor, as in the Many Labs projects.
Our previously stated concerns about meta-analysis in replications pertain only to situations in which meta-analysis is used with a very small number of studies.
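A bare-bones version of the psychometric approach, correcting for sampling error only, fits in a few lines. This Python sketch follows the usual Hunter and Schmidt formulas for:

- the sample-size-weighted mean r;
- the observed variance;
- the expected sampling-error variance, (1 − r̄²)²/(N̄ − 1).

The artifact correction shown is the classic disattenuation of a single correlation, a simplification of full artifact-distribution methods. The study values and reliabilities are hypothetical.

```python
import math


def bare_bones_meta(rs, ns):
    """Bare-bones psychometric meta-analysis of correlations (sampling error only)."""
    total_n = sum(ns)
    r_bar = sum(n * r for r, n in zip(rs, ns)) / total_n                   # N-weighted mean r
    var_obs = sum(n * (r - r_bar) ** 2 for r, n in zip(rs, ns)) / total_n  # N-weighted observed variance
    n_bar = total_n / len(ns)
    var_err = (1 - r_bar ** 2) ** 2 / (n_bar - 1)  # expected sampling-error variance
    var_res = max(0.0, var_obs - var_err)          # variance left after removing it
    return r_bar, var_obs, var_err, var_res


def correct_for_unreliability(r, rxx, ryy):
    """Classic correction for attenuation due to measurement error in X and Y."""
    return r / math.sqrt(rxx * ryy)


# Hypothetical studies: correlations and sample sizes.
r_bar, var_obs, var_err, var_res = bare_bones_meta([0.10, 0.50], [200, 200])
print(f"mean r = {r_bar:.3f}, residual SD = {math.sqrt(var_res):.3f}")
print(f"mean r corrected for reliabilities of .80 and .70: "
      f"{correct_for_unreliability(r_bar, 0.80, 0.70):.3f}")
```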

CIs

Some researchers might be tempted to use CIs to capture the results of other studies. This approach is incorrect because CIs are designed to capture population parameters, not sample statistics from other studies. Indeed, using a 95% CI to capture sample statistics is problematic because the capture rate can depart substantially from 95% and vary considerably across situations (depending on the relative sample sizes of the two studies). For example, Spence and Stanley (2016) conducted simulations that revealed that when sample sizes are unequal, a 95% CI around a

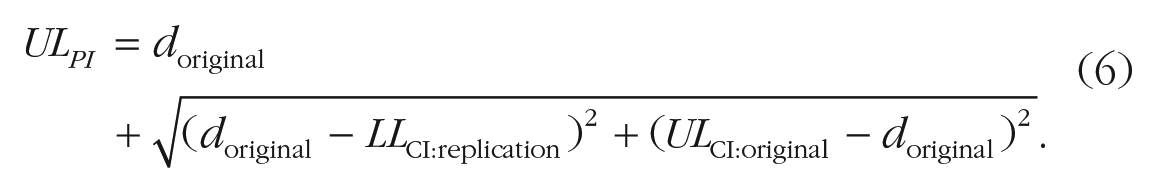

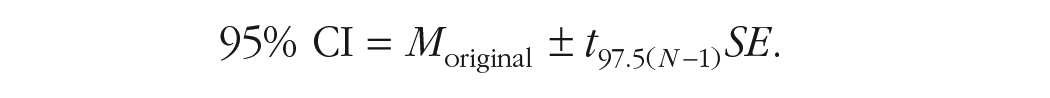
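For means, the dependence on sample sizes can be written in closed form under a large-sample, known-σ simplification. The original 95% CI captures the replication mean with probability 2Φ(1.96/√(1 + n_orig/n_rep)) − 1. A Python sketch (function name hypothetical):

```python
import math
from statistics import NormalDist


def ci_capture_rate(n_orig, n_rep, level=0.95):
    """Probability that the original study's CI for a mean captures the replication mean.

    Large-sample sketch with known, common sigma:
    M_rep - M_orig ~ N(0, sigma^2 * (1/n_orig + 1/n_rep)),
    while the CI half-width is z * sigma / sqrt(n_orig).
    """
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    scaled = z / math.sqrt(1 + n_orig / n_rep)
    return 2 * NormalDist().cdf(scaled) - 1


for n_orig, n_rep in [(20, 200), (50, 50), (100, 50), (200, 20)]:
    print(f"n_orig={n_orig:>3}, n_rep={n_rep:>3}: capture rate {ci_capture_rate(n_orig, n_rep):.3f}")
```

With equal sample sizes, this gives about .834. The rate approaches .95 only when the replication is much larger than the original study, and it falls sharply when the replication is smaller.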

The problems associated with using CIs to capture sample statistics are most easily demonstrated in the context of sample means, although the same issues apply with

Contrast the resulting formula above with the usual way of writing a 95% CI. Notice the only difference is the

In the case of study means (with equal sample sizes), a 95% CI has an error term that is too small to capture sample statistics at a 95% rate. The error term would need to be multiplied by

In this equal-sample-size scenario, it is also possible to determine what the capture rate of a 95% CI would be for sample statistics. Said another way, if we were to view a 95% CI (for means with equal sample sizes) as a prediction interval, what would the prediction interval capture percentage be? To determine this value, we can algebraically convert the CI into a prediction interval. We do this in a scenario in which

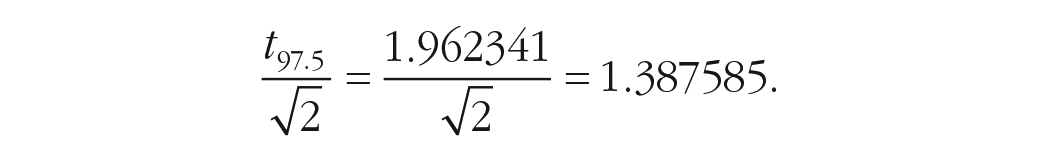

We begin by noting that for a 95% CI, we use a

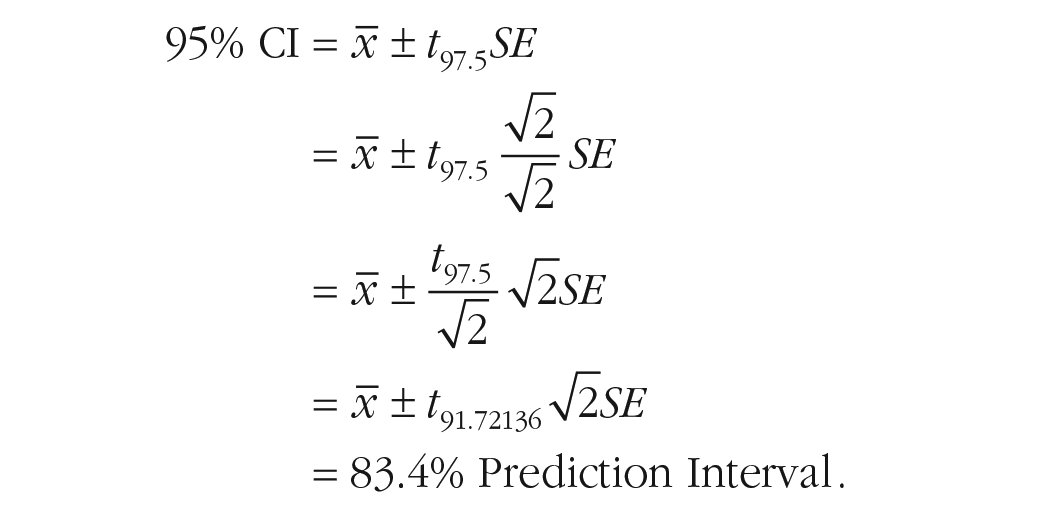

A 95% CI can be converted to a prediction interval by adding a 1.0 multiplier in the form of

We redistribute one

Now that we have the CI in prediction-interval format, we need to investigate the

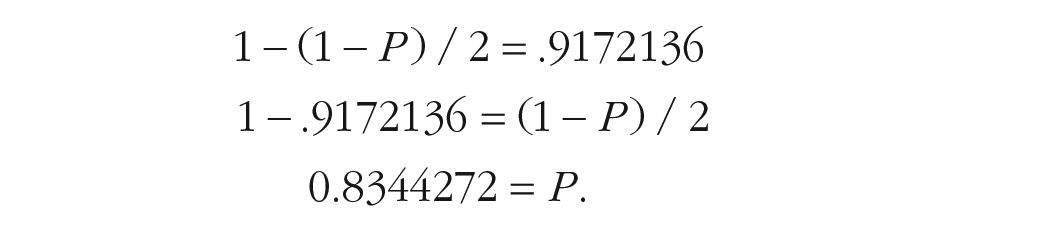

We note that 1.387585 corresponds to the

Therefore:

Moreover, creating an interval using

Consequently,

Thus, a 95% CI functions as an 83.4% prediction interval—when working with means in which the original and replication sample sizes are identical. We stress that the
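The same conversion can be summarized in a few lines. This version uses the normal critical value 1.96 as a large-sample simplification of the t values used above; the text's value of 1.387585 leads to the same 83.4% figure.

```latex
% 95% CI for the original mean (equal n, normal critical value)
\bar{X}_1 \pm 1.96\,\frac{s}{\sqrt{n}}

% The same interval written as a prediction interval with an unknown critical value z^*
\bar{X}_1 \pm z^{*}\sqrt{\frac{s^2}{n} + \frac{s^2}{n}} = \bar{X}_1 \pm z^{*}\sqrt{2}\,\frac{s}{\sqrt{n}}

% Matching the two error terms
z^{*}\sqrt{2} = 1.96 \;\Rightarrow\; z^{*} = \frac{1.96}{\sqrt{2}} \approx 1.386

% Capture rate implied by z^*
2\,\Phi(1.386) - 1 \approx 2(0.917) - 1 \approx 0.834
```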

Interval-width considerations

Prediction intervals are effective because they consider the sampling error associated with both the original and replication studies and reconcile this information into a single statistic. Correspondingly, the width of a prediction interval is directly influenced by the sample sizes of both studies. To the extent that both studies have adequate sample sizes, the respective CIs for each study will be narrow and result in a narrow prediction interval. Researchers will likely find a narrow prediction interval desirable when attempting to assess a replication result.
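How both sample sizes drive the width of a prediction interval for a replication mean can be sketched as follows. The function `mean_prediction_interval` is a hypothetical helper, not part of the predictionInterval package. It assumes both studies sample the same population, treats the original SD as the common SD, and uses a z critical value in place of t, which makes the interval slightly too narrow for small samples. The `level` argument allows levels other than 95%.

```python
from math import sqrt
from statistics import NormalDist


def mean_prediction_interval(m_orig, sd_orig, n_orig, n_rep, level=0.95):
    """Approximate prediction interval for a replication study's sample mean.

    Assumptions (ours): a common population SD estimated by sd_orig and a
    z critical value standing in for t.
    """
    z = NormalDist().inv_cdf(0.5 + level / 2)
    # Sampling error from BOTH the original and the replication study.
    se_diff = sd_orig * sqrt(1 / n_orig + 1 / n_rep)
    return m_orig - z * se_diff, m_orig + z * se_diff


# Hypothetical original study: M = 5.0, SD = 2.0.
for n_orig, n_rep in [(20, 20), (20, 200), (200, 200)]:
    lo, hi = mean_prediction_interval(5.0, 2.0, n_orig, n_rep)
    print(f"n_orig={n_orig:3d} n_rep={n_rep:3d}  PI=({lo:.2f}, {hi:.2f})  width={hi - lo:.2f}")
```

In this hypothetical example, enlarging only the replication narrows the interval, but the narrowest interval requires both studies to be large.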

Prediction intervals can be calculated before or after a replication study is conducted. We believe that prediction intervals have the greatest utility when calculated before data are collected for the replication study, as an aid to planning (and possible preregistration). A researcher can enter an initial planned replication sample size into the prediction-interval calculation and then assess whether the resulting interval is undesirably wide (a personal judgment). If the interval is judged too wide, the researcher can recalculate the prediction interval using a larger replication sample size. In many cases, simply increasing the replication sample size will decrease the width of the prediction interval. In some cases, however, the prediction interval will barely decrease in width when the replication sample size is increased, indicating that the wide interval results from a small sample size in the original study. This situation is problematic because the original study's sample size cannot be increased after the fact.
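The planning exercise described above can be sketched for a correlation. This sketch assumes a Fisher-z approach (transform r, add the sampling variances of both studies, back-transform); we have not verified that it matches the predictionInterval package's implementation in every detail. The function `r_prediction_interval` and the study values are hypothetical.

```python
from math import atanh, sqrt, tanh
from statistics import NormalDist


def r_prediction_interval(r_orig, n_orig, n_rep, level=0.95):
    """Approximate prediction interval for a replication correlation (Fisher-z sketch)."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    z_r = atanh(r_orig)                              # Fisher r-to-z transformation
    se = sqrt(1 / (n_orig - 3) + 1 / (n_rep - 3))    # error from both studies
    return tanh(z_r - z_crit * se), tanh(z_r + z_crit * se)


# Hypothetical planning exercise: original r = .30 from a small study (n = 30).
r_orig, n_orig = 0.30, 30
for n_rep in (30, 60, 120, 240, 480, 960):
    lo, hi = r_prediction_interval(r_orig, n_orig, n_rep)
    print(f"n_rep={n_rep:4d}  PI=({lo:+.2f}, {hi:+.2f})  width={hi - lo:.2f}")

# Approximate limit as the replication sample grows without bound:
# only the original study's sampling error remains.
lo, hi = r_prediction_interval(r_orig, n_orig, 10**9)
print(f"limit          PI=({lo:+.2f}, {hi:+.2f})  width={hi - lo:.2f}")
```

In this hypothetical case the width levels off quickly, because the original study's error term, 1/(n_orig - 3), sets a floor that no replication sample size can remove.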

We believe that when a prediction interval is undesirably wide because of the original study's sample size, this indicates that a replication mindset may not be appropriate. Others might argue that this indicates a problem with prediction intervals; however, we suggest this situation merely communicates the consequences of choosing a small sample size for the original study. Unfortunately, small-sample-size studies are so common in psychology that some believe much of psychological research is statistically unfalsifiable (Morey & Lakens, 2016). We suggest that a prediction interval that is extraordinarily wide only because of the original study's sample size indicates that the findings of the first study may be unfalsifiable. Indeed, a wide interval could indicate that validating an initial study is not worthwhile given the error associated with it. In this situation, it might be most appropriate to discard the conclusions from the original study and conduct a new, well-designed study instead. That is, the new study is not viewed as a replication but, rather, as the first meaningful source of information on the original question. Of course, the original study could still be a useful input to a future meta-analysis but likely has little informational value on its own.

We expect most researchers to construct 95% prediction intervals because of the ubiquitous use of 5% as the Type I error rate in significance testing. We note, however, that prediction intervals can also be constructed at other probability levels. A discussion of the associated issues is beyond the scope of this tutorial; however, we encourage interested readers to see the logic outlined in Maier and Lakens (2022).

Final comments

For readers interested in more on prediction intervals in the context of replications, we recommend the following sources: Anderson and Maxwell (2016), Spence and Stanley (2016), and Patil et al. (2016). With respect to prediction intervals more generally, Cumming (2008) and Cumming and Fidler (2009) discuss prediction intervals as they pertain to means. In the context of multiple regression, Cumming and Calin-Jageman (2016) discussed the placement of prediction intervals around individual criterion (Y) scores. Psychological research has also examined how prediction intervals can help people interpret uncertainty in forecasts (e.g., Savelli & Joslyn, 2013).

We remind readers that prediction intervals can be calculated using the free predictionInterval R package. Prediction intervals are also available through web applications: for means, see https://replication.shinyapps.io/mean/; for correlations, see https://replication.shinyapps.io/correlation/; and for d values, see https://replication.shinyapps.io/dvalue/.

Footnotes

Transparency

Action Editor: Katie Corker

Editor: David A. Sbarra

Author Contributions

Both authors contributed equally.