Abstract

Research shows that questionable research practices (QRPs) are present in undergraduate final-year dissertation projects. One entry-level Open Science practice proposed to mitigate QRPs is “study preregistration,” through which researchers outline their research questions, design, method, and analysis plans before data collection and/or analysis. In this study, we aimed to empirically test the effectiveness of preregistration as a pedagogic tool in undergraduate dissertations using a quasi-experimental design. A total of 89 UK psychology students were recruited, including students who preregistered their empirical quantitative dissertation (


In recent years, psychology has put reproducibility, replicability, and transparency at the forefront of the research agenda (Asendorpf et al., 2013; Munafò et al., 2017; Open Science Collaboration, 2015). Fueled by replication concerns in the general scientific literature, an era of “Open Science” has prompted a plethora of ideas and recommendations to envision a new future for science (Pashler & Wagenmakers, 2012). Study preregistration, open materials, and open data have been proposed to combat “questionable research practices” (QRPs; John et al., 2012) that plague the literature, such as

Much of the recent shift to Open Science practices has been championed by grassroots, collaborative initiatives (e.g., see Button et al., 2020; Pownall, 2020a). In recent years, psychologists have developed initiatives such as the Society for the Improvement of Psychological Science (https://improvingpsych.org), the open-source reporting forum PsychDisclosure (LeBel et al., 2013), and the journal club led by early career researchers, ReproducibiliTea (Orben, 2019), all with the aim of improving the rigor and reproducibility of psychological science. Beyond these, organizations and initiatives are centered around the improvement of psychological science, stressing the importance of rigorous, robust methods (e.g., Crüwell et al., 2019; Munafò et al., 2017; Simmons et al., 2011; Tennant et al., 2016; Wagenmakers et al., 2012). For example, Klein et al. (2018) noted the importance of preparing and sharing research in a way that values transparency and noted how this can be done incrementally to improve research efficiency and credibility. Likewise, Devezer et al. (2020) focused on recommendations to improve methodological problems in science reform, such as the adoption of a formal approach that embeds statistical rigor and nuance into science reform.

Open Science in Undergraduate Training

The recent shifts toward novel and creative ways of promoting uptake of Open Science practices offer the opportunity to reevaluate core aspects of undergraduate training and wider scientific-research practices. For example, there have been some emergent initiatives that have specifically concentrated on how to embed teaching on the “replication crisis” and Open Science practices into undergraduate teaching (e.g., Button et al., 2016; Chopik et al., 2018; Frank & Saxe, 2012; Janz, 2016). There has also been a keen interest in interventions to improve understanding of QRPs in, for example, graduate psychology training (Sacco & Brown, 2019; Sarafoglou et al., 2020). However, the impact that these have on students’ learning and perceptions is yet to be empirically investigated.

The Value of Preregistration

One method of reducing QRPs and enhancing research transparency is study preregistration. Study preregistration comprises a time-stamped, uneditable protocol that transparently outlines a study’s research questions, design, hypotheses, methods, and analysis plan before data collection and/or analysis (Nosek et al., 2018; van’t Veer & Giner-Sorolla, 2016). The process of preregistration encourages researchers to plan the decisions that have traditionally been made after data collection (e.g., exclusion criteria, analysis details) beforehand, using a wide host of platforms such as OSF (https://osf.io/) and AsPredicted (https://aspredicted.org/). Preregistration increases transparency about the authors’ original intentions (LeBel & Peters, 2011) and should, in theory, limit selective reporting of results (Nuzzo, 2015).

Here, we propose that preregistration is one entry-level way of building rigor and robustness into the undergraduate dissertation process (as per Pownall, 2020b). The potential value of preregistration in this context has been noted by educators. For example, the Framework of Open and Reproducible Research Training (FORRT; www.forrt.org) includes preregistration as one of the six pillars of effective reproducibility training, including at the undergraduate level. Others have suggested that “most study programmes should offer easy ways of implementing preregistration in empirical research seminars” (Olson et al., 2019) because of the potential for preregistration to promote “critical reflections of research practices” and improve students’ statistics literacy (Olson et al., 2019). As Pownall (2020b) also argued, the process of embedding preregistration of undergraduate dissertations largely complements current practices in dissertation supervision. Sacco and Brown (2019) noted that preregistration is thus useful when conducting research with undergraduate students with a view to publishing the results (see also Blincoe & Buchert, 2020). In this study, we examine the value of study preregistration in the undergraduate curriculum to assess whether it can improve attitudes toward statistics (e.g., students’ perceived difficulty of statistics, value of statistics, and perceived competence in statistics), attitudes toward QRPs, and students’ perceived understanding of Open Science.

The undergraduate dissertation

In the UK, final-year psychology dissertations consist typically of an independent empirical project that requires students to design a protocol, collect data, and analyze the results. According to the accreditation standards of the British Psychological Society (2019), undergraduate psychology dissertations in the UK require students to “individually demonstrate a range of research skills including planning, considering and resolving ethical issues, analysis and dissemination of findings.” Final-year projects are thus typically self-contained research studies that are constrained by the scope and availability of resources but are supervised closely by an experienced academic. Much pedagogic research has demonstrated that given the level of autonomy that students have over their final-year dissertation, students typically struggle with some of the components of this mandatory part of their degree. For example, it has been reported widely that undergraduate students face anxiety, disengagement, and stress related to their final-year dissertation (e.g., Devonport & Lane, 2006). Indeed, research has shown that undergraduate students often experience difficulty with their dissertation because of pedagogic issues such as debilitating statistics anxiety (e.g., Onwuegbuzie & Wilson, 2003), underconfidence with their writing ability (Greenbank et al., 2008), and challenges navigating supervisory relationships (Day & Bobeva, 2007).

Contemporary research has also indicated that QRPs are prevalent in undergraduate research projects (Krishna & Peter, 2018; Kvetnaya et al., 2019; Sorokowski et al., 2019). For example, Krishna and Peter (2018) assessed the prevalence of QRPs in final-year undergraduate dissertations and found that students typically engage in QRPs related to reporting and analyzing their results. Likewise, Olson et al. (2019) studied the prevalence of QRPs of taught master’s students’ theses and found inconsistency of

The use of QRPs in the undergraduate dissertation likely stems from many different sources: Resource and time constraints mean that many undergraduate experiments are typically underpowered (Button et al., 2016); students perceive that there is a pressure from supervisors to “find” significant results, which are more likely to lead to a publication (Wagge et al., 2019); and in our own experience, students also worry that a “lack of significant” results will adversely affect their grades. QRPs may also stem from a lack of awareness that they are problematic (e.g., Banks et al., 2016). This is related to the pressures put on academics to publish novel, positive results (Franco et al., 2014) because of the “publish or perish” culture that pervades academia (Grimes et al., 2018), which might filter down to their students. Indeed, an undergraduate publication is seen as an advantage when applying for highly competitive places on taught master’s and doctoral training (Button, 2018). If these studies are then selectively published, they contaminate the scientific literature with unreliable results. Understanding undergraduate students’ use and acceptance of QRPs is useful because students’ research behavior reflects the quality of Open Science teaching and adoption of rigorous practices more broadly (Olson et al., 2019). Some emergent research has begun to investigate the research practices of early career researchers (Nicholas et al., 2017), including uptake of Open Science practices (Stürmer et al., 2017).

Consideration of the prevalence of QRPs in the undergraduate dissertation has led to interventions to reduce them. Button et al. (2020), for example, described and evaluated an approach to improving rigor of undergraduate dissertations via a consortium approach to science. This approach also echoes Detweiler-Bedell and Detweiler-Bedell’s (2019) team-based approach to undergraduate research supervision. Creaven et al. (2021) stressed the importance of embedding a concern for rigor, transparency, and openness into the undergraduate dissertation, stressing how the undergraduate dissertation should be thought of as an important learning activity that offers many pedagogical benefits to students. Likewise, Blincoe and Buchert (2020) proposed that preregistration may be a useful pedagogical tool for undergraduate psychology students. Despite some useful and recent conversations that discuss the need to embed an Open Science approach into undergraduate research training (Button et al., 2020; Creaven et al., 2021; Pownall, 2020b), an empirical exploration into how Open Science practices may benefit both students and the Open Science movement has been notably absent from these conversations. Indeed, although much work has considered how to promote uptake of preregistration practices of early career (Zečević et al., 2020) and more established researchers (Kidwell et al., 2016; Munafò et al., 2017), little research has explicitly focused on the utility of preregistration for undergraduate students’ research practices despite recommendations that preregistration could facilitate engagement with the dissertation process (e.g., Nosek et al., 2018), reduce statistics anxiety, and improve students’ experience of their dissertation (Creaven et al., 2021; Pownall, 2020b).

The Present Study

We aimed to investigate empirically the pedagogical effectiveness of preregistration in undergraduate-dissertation provision, that is, how the process of preregistration may be useful at tackling some of the core pedagogical challenges that students face in their dissertation research (including attitudes toward statistics), while also considering how engaging with the process of preregistration can aid understanding of Open Science issues more generally. Our core research questions aimed to evaluate whether preregistration is a useful pedagogic practice to improve students’ attitudes toward statistics (i.e., perceptions of the value and difficulty of statistics and students’ perceived competence in statistics), awareness of QRPs, and perceived understanding of Open Science in this cohort. To achieve this, we employed a 2 (Group: Preregistration vs. Control) × 2 (Time: Time 1, Before Dissertation vs. Time 2, After Dissertation) mixed design with group as the between-participants factor and time as the within-participants factor. We had three confirmatory hypotheses based on a significant two-way interaction between group and time. For all of the hypotheses, we predicted a significant Time × Group interaction such that participants in the preregistration group would show improvements above and beyond those that occur because of time differences (Time 1 vs. Time 2):

Finally, as an exploratory measure with no predetermined hypotheses, we also assessed students’ capability, opportunity, and motivation toward preregistration at Time 1 and qualitative responses regarding the perceived barriers and facilitators of preregistration at Time 2.

Method

Transparency statement

All materials and data are publicly available on OSF (https://osf.io/5qshg/), and our study meets Level 6 of the Peer Community in Registered Reports bias control (https://rr.peercommunityin.org/help/guide_for_authors). In the sections that follow, we report all measures, manipulations, and exclusions. This study was conducted as a Registered Report; the preregistered Stage 1 protocol is available at https://osf.io/9hjbw (date of in-principle acceptance: September 21, 2021).

Design and participants

The study comprised a 2 (Group: Preregistration vs. Control) × 2 (Time: Before Dissertation vs. After Completion) mixed-factors design. To be eligible for inclusion, participants were required to confirm that they were final-year undergraduate students studying psychology at a UK institution and planning an empirical quantitative undergraduate dissertation. Participants must not have already preregistered their proposed undergraduate study at Time 1 and confirmed this at the beginning of the study. These criteria were set to ensure that the study contributes directly to existing pedagogic policy discussions regarding embedding Open Science in the undergraduate dissertation (e.g., course accreditation standards by the British Psychological Society, 2019). To be eligible to participate at Time 2, participants must have completed the Time 1 measures (and have a corresponding participant ID number to match up responses). To be included in the preregistration group at Time 2, participants had to indicate that their preregistration included a “data analysis plan” (see Time 2 measures).

Our planned sample size was based solely on resource and time considerations, including the time window for participant recruitment and available funds for participant compensation (see Lakens, 2021). We initially aimed to recruit 240 final-year undergraduate students. We planned to recruit psychology students and expected an approximately 20% attrition at Time 2 given prior research sampling from online platforms (Palan & Schitter, 2018). We planned to recruit 200 participants to include an experimental group of approximately 100 having initiated a preregistration of their final-year quantitative project and a control group of 100 not initiating a preregistration. Simulation-based power analyses conducted using the

At Time 1, there were initially 354 participants with complete data (i.e., responses with survey progress of 100%). Of these participants, 187 passed the various attention checks (see Method). After removing five direct duplicates (i.e., whereby a participant had clearly completed the study twice or submitted the survey twice), there were 182 participants left to invite back at Time 2. At Time 2, 139 participants initially responded to the survey. Of these participants, 108 both had 100% progress and passed the attention checks (see Procedure). Fifteen participants at Time 2 did not match with participants in Time 1, and there were four participants removed because of duplicates (i.e., identical responses and ID codes), leaving 89 complete participants with Time 1 and Time 2 data left for analysis. Therefore, our final sample comprised 89 participants (age:

Recruitment plan

We purposefully sampled students via Prolific Academic (using custom prescreening) and university participant pools (SONA Systems) and through social media adverts, ensuring they met the inclusion criteria. The inclusion criteria were stated in all recruitment materials, and participants confirmed that they met them on the first page of the study, via checklist boxes. After reading a brief definition of preregistration, participants were asked to confirm at Time 1 and Time 2 whether they had preregistered their undergraduate dissertation. We used

A Sample of Universities Sampled That Offer Preregistration in the Final-Year Curriculum

Procedure

Data were collected online using Qualtrics (https://www.qualtrics.com/uk/) through the various recruitment strategies above. At Time 1 (September–November 2021), participants were enrolled in their final year but had not initiated their dissertation project or their preregistration. This provided a baseline against which to compare responses at Time 2 (after dissertation; May–July 2022).

Participants first provided demographic information (age, gender, ethnicity, institution of study) before confirming that they were in the final year of their bachelor of science undergraduate psychology degree and planned to undertake a quantitative dissertation project in the 2021–2022 year (yes/no). Participants who answered “no” were informed that they did not meet the inclusion criteria for the study. We then collected data on students’ self-reported academic attainment in the mandatory second-year statistics module of their degree and their average grade in the second/penultimate year of their degree. Attainment was scored on a categorical scale in line with UK conventions for awarding academic grades: first-class classification (≥ 70%), 2:1 classification (60%–69%), 2:2 classification (50%–59%), third-class classification (40%–49%), and fail (< 40%). This was to control for potential baseline differences between our two groups.
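The grade bands above can be written out as a small lookup. This is a minimal sketch for illustration only: participants in the study selected a category directly rather than entering a percentage, the helper name `classify_uk_grade` is hypothetical, and the code assumes that a mark of exactly 70% falls in the first-class band.

```python
def classify_uk_grade(percent: float) -> str:
    """Map a percentage mark to a UK degree classification band (hypothetical helper)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Assumption: each band is inclusive at its lower edge (e.g., 70 counts as first class).
    if percent >= 70:
        return "first"
    if percent >= 60:
        return "2:1"
    if percent >= 50:
        return "2:2"
    if percent >= 40:
        return "third"
    return "fail"
```

Coding attainment this way yields an ordered categorical variable that can be compared across the two groups at baseline.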

Participants were then provided with a brief definition of preregistration, adapted from Lindsay et al. (2016):

Preregistering a research project involves creating a record of your study plans before you look at the data. The plan is date-stamped and uneditable. The main purpose of preregistration is to make clear which hypotheses and analyses were decided on before you have accessed your data and which were more exploratory and driven by the data.

Then, to ensure participants had not yet preregistered their project at Time 1, we asked participants whether they planned to preregister their undergraduate dissertation (yes/no/unsure) and whether the undergraduate dissertation had already been preregistered (yes/no). All participants at Time 1 then completed the same measures. The items relating to participants’ plans were not used to categorize participants into groups and instead were used to guide quota sampling.

Measures (Time 1)

SATS-28

To assess whether preregistration improves attitudes toward statistics, students completed the SATS-28. This 28-item scale includes items related to statistics affect (e.g., “I am scared by statistics”), cognitive competence (e.g., “I can learn statistics.”), value (e.g., “Statistics is worthless”), and difficulty (e.g., “Statistics is highly technical”). These items were scored on a Likert scale from 1 (

Acceptance of QRPs

To assess whether preregistration influences attitudes toward QRPs, students rated their views on 15 research decisions (11 of which are QRPs, four of which are neutral/acceptable) on a sliding scale from 1 (

Perceived understanding of Open Science

As per other literature (Krishna & Peter, 2018; Stürmer et al., 2017), to test perceived understanding of Open Science practices and terminology, students indicated their confidence in their ability to understand 12 key terms (e.g., “Replication Crisis,” “

Attention and bot checks

As an attention check (i.e., to ensure that participants were actively paying attention to the survey materials and to prevent spam/bot respondents), we added an item, “Please select strongly disagree to this question,” in the COM-B measure to assure data quality. This was repeated in Time 1 and Time 2. As a second attention check, we used a protocol from the Prolific guidelines and asked participants, “Please enter the word

Exploratory measures

Capability, opportunity, and motivation toward preregistration

In line with Norris and O’Connor (2019), we also applied a behavior-change approach to assess the facilitators and barriers to study preregistration at Time 1 only. The capability, opportunity, motivation, behavior (COM-B) model (Michie et al., 2011) posits that a behavior occurs only if an individual has sufficient capability, opportunity, and motivation to perform it. Capability includes psychological capability (i.e., knowing how to perform the behavior) and physical capability (i.e., being physically able to perform the behavior). Opportunity includes social opportunity (i.e., being around others who are performing the behavior) and physical opportunity (i.e., having the time and resources to perform the behavior). Motivation includes reflective motivation (i.e., plans and beliefs to perform the behavior) and automatic motivation (i.e., desires, impulses, and inhibitions toward the behavior; Michie et al., 2011). The brief measure of COM-B developed by Keyworth et al. (2020) was employed. This measure contains six items; two items address each of the three components of the COM-B on a 5-point Likert scale ranging from 0 (

After dissertation (Time 2)

The same sample of students was asked to complete all of the above measures, except for the COM-B, again at Time 2, which represents a follow-up after their dissertation was completed in approximately May 2022. At Time 1, participants reported whether they planned to preregister their dissertation, and at Time 2, participants first reported whether they did actually preregister (yes/no). Participants’ responses to this question at Time 2 were used to allocate participants to the preregistration versus no-preregistration groups. For example, if participants responded at Time 1 that they planned to preregister but at Time 2 they did not, they were allocated to the no-preregistration control group for the final analyses. At Time 2, we also asked participants who preregistered to self-report the extent to which they followed their preregistration plan (1 =

In addition, participants were also asked four questions to assess whether they had implemented other Open Science practices associated with their dissertation: (a) creating an OSF account or uploading (b) material (open material), (c) code/scripts (open code), and (d) data (open data) to a public archive. This was used descriptively to gain more insight into other contextual factors that are associated with preregistration. Qualitative responses of students’ experiences of the preregistration process, including enablers and barriers, were also collected through three open-ended questions: “Please list all of the advantages you perceive of preregistration,” “Please list all of the disadvantages,” and “Do you see any barriers to preregistration?”

Perceptions of supervisory support

Finally, given the literature that suggests that perceived supervisor support affects students’ experiences of their dissertation research (Roberts & Seaman, 2018) and that supervisor belief affects preregistration behavior (Spitzer & Mueller, 2023), to assess students’ perceptions of their supervisory support at Time 2, we used a 14-item measure of perceptions of supervisor support. This scale includes items such as “I am satisfied with the support I have received from my supervisor” and “My supervisor was knowledgeable about research design/process as related to my project.” One item was “I felt pressure from my supervisor to find significant results in my dissertation” (reverse-scored). These were measured on a scale from 1 (

Risk and mitigations

At Stage 1 of this Registered Report, we acknowledged certain risks associated with our study and aimed to mitigate these with the following measures. The first risk was participant attrition from Time 1 to Time 2, leading to incomplete data across measures. We aimed to mitigate this by accounting for average attrition rates in our planned sample as per other longitudinal studies conducted on Prolific (7%–24%; Palan & Schitter, 2018) and using a varied recruitment approach. At Time 2, participants not recruited via Prolific were entered into a prize draw to incentivize participation. Likewise, recruitment of the preregistration group required a level of buy-in from institutions that embed a preregistration model into their undergraduate-dissertation process. Members of the research team had contacts at the institutions listed in Table 1, which should have mitigated barriers to student access in the preregistration group. We ran a sensitivity power analysis on the complete data and used this to contextualize our discussion and interpretation of the final results. Our final sample size is smaller than planned, largely because of our stringent attention checks and matching of data from Time 1 to Time 2; we discuss this in the Limitations section.
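The shrinkage from recruited to analyzed participants can be tallied step by step. The sketch below simply re-adds the counts reported in the Design and participants section; the function name `apply_exclusions` and the step labels are hypothetical, and the number failing each check is derived by subtraction rather than reported directly.

```python
def apply_exclusions(start: int, exclusions: list[tuple[str, int]]) -> int:
    """Subtract each exclusion step from the starting count and return what remains."""
    remaining = start
    for reason, n_removed in exclusions:
        if n_removed > remaining:
            raise ValueError(f"cannot remove {n_removed} participants at step: {reason}")
        remaining -= n_removed
    return remaining


# Time 1: 354 complete responses, 187 passed attention checks, 5 direct duplicates removed.
time1_invited = apply_exclusions(
    354,
    [("failed attention checks", 354 - 187), ("direct duplicates", 5)],
)

# Time 2: 139 responses, 108 complete and attentive, 15 unmatched to Time 1, 4 duplicates.
final_sample = apply_exclusions(
    139,
    [("incomplete or failed attention checks", 139 - 108),
     ("no matching Time 1 record", 15),
     ("duplicates", 4)],
)

# Share of invited Time 1 participants who ended up in the analyzed sample.
retention = final_sample / time1_invited
```

Under these reported counts, fewer than half of the participants invited back at Time 2 contribute to the final analysis, a steeper loss than the 7%–24% attrition range used for planning.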

Second, at Stage 1, we had also factored in discrepancies in definitions of preregistration practices by providing all students with a student-friendly, accessible definition of preregistration from the literature (Lindsay et al., 2016). This should mean that students were able to readily identify whether they engaged in this specific process above and beyond other processes in the dissertation timeline (e.g., discussing a protocol with their supervisor or writing an ethics application). Asking students to confirm at Time 2 that they had preregistered their study should also have alleviated any problems with students erroneously being allocated to the wrong condition at Time 1.
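The allocation rule can be sketched as a short function. This is an illustrative reading of the procedure, not the authors' code: the name `allocate_group` and its arguments are hypothetical, and the handling of a participant who reports preregistering without a data analysis plan (returned here as `None`) is an assumption, because the text does not say how such cases were treated.

```python
from typing import Optional


def allocate_group(
    planned_at_time1: str,
    preregistered_at_time2: bool,
    has_analysis_plan: bool,
) -> Optional[str]:
    """Assign a participant to a group from their Time 2 self-report (hypothetical helper)."""
    # The Time 1 plan ("yes"/"no"/"unsure") guided quota sampling only,
    # so it is deliberately ignored when allocating groups.
    _ = planned_at_time1
    if not preregistered_at_time2:
        return "control"
    if has_analysis_plan:
        return "preregistration"
    # Assumption: preregistered but without an analysis plan is left unallocated.
    return None
```

For example, a student who planned to preregister at Time 1 but reported at Time 2 that they did not is placed in the control group.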

Finally, our study may have had confounding variables that we aimed to reduce. For example, it is likely that institutions that actively embed preregistration into the dissertation process also teach Open Science practices more generally within their curriculum, which may be a confound when evaluating the effectiveness of study preregistration. We first checked this by establishing whether there were differences in students’ Open Science attitudes and knowledge at Time 1. Second, we mitigated this by investigating the interaction between group and time on all of our outcome variables. Specifically, we expected that despite any differences between groups at Time 1, there would be a significant interaction indicating that engaging with the preregistration process has an additive effect on students’ attitudes, behaviors, and perceptions of Open Science (i.e., it improves scores beyond the improvement that occurs because of differences in time point).
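The logic of the Group × Time interaction can be made concrete with change scores. In a 2 × 2 mixed design, the interaction asks whether the mean Time 1 to Time 2 change differs between groups, and its F statistic equals the square of a pooled-variance independent-samples t statistic computed on those change scores. The sketch below illustrates that equivalence; it is not the analysis pipeline used in the study, the function name is hypothetical, and any scores passed to it in an example are invented.

```python
from math import sqrt
from statistics import mean, variance


def interaction_on_change_scores(prereg_t1, prereg_t2, control_t1, control_t2):
    """Return (contrast, t, df) for the Group x Time interaction via change scores."""
    # Each participant's change from Time 1 to Time 2.
    prereg_change = [after - before for before, after in zip(prereg_t1, prereg_t2)]
    control_change = [after - before for before, after in zip(control_t1, control_t2)]
    n1, n2 = len(prereg_change), len(control_change)

    # Difference in mean change: the "additive" effect beyond the passage of time.
    contrast = mean(prereg_change) - mean(control_change)

    # Pooled-variance t test on change scores; t squared equals the interaction F.
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * variance(prereg_change)
                  + (n2 - 1) * variance(control_change)) / df
    t = contrast / sqrt(pooled_var * (1 / n1 + 1 / n2))
    return contrast, t, df
```

Because the contrast is built from within-person change, a baseline difference between groups at Time 1 does not by itself produce an interaction; only a difference in the amount of change does.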

It could also be possible for ceiling effects to occur in the preregistration group at Time 1, particularly given the aforementioned concern about contextual factors that affect students’ knowledge of Open Science and QRPs. This could mean that differences from Time 1 to Time 2 are “masked” because of high scores at Time 1 for the preregistration group. Although we could not methodologically mitigate this concern, we discussed it in detail following data collection and used this to guide interpretation of our results. Finally, we avoided missing data adversely affecting our statistical power by using a “requested entry” option on Qualtrics, so participants were unable to progress in the survey without first confirming that they were happy that they had answered all the questions they wished to (if some were left unanswered).

Analysis Strategy

Our full analysis strategy, registered at Stage 1, is shown in Table 2.

Research Questions, Accompanying Hypotheses, and a Priori Analysis Plan

Note: ANOVA = analysis of variance; QRPs = questionable research practices; COM-B = capability, opportunity, motivation, behavior model.

Results

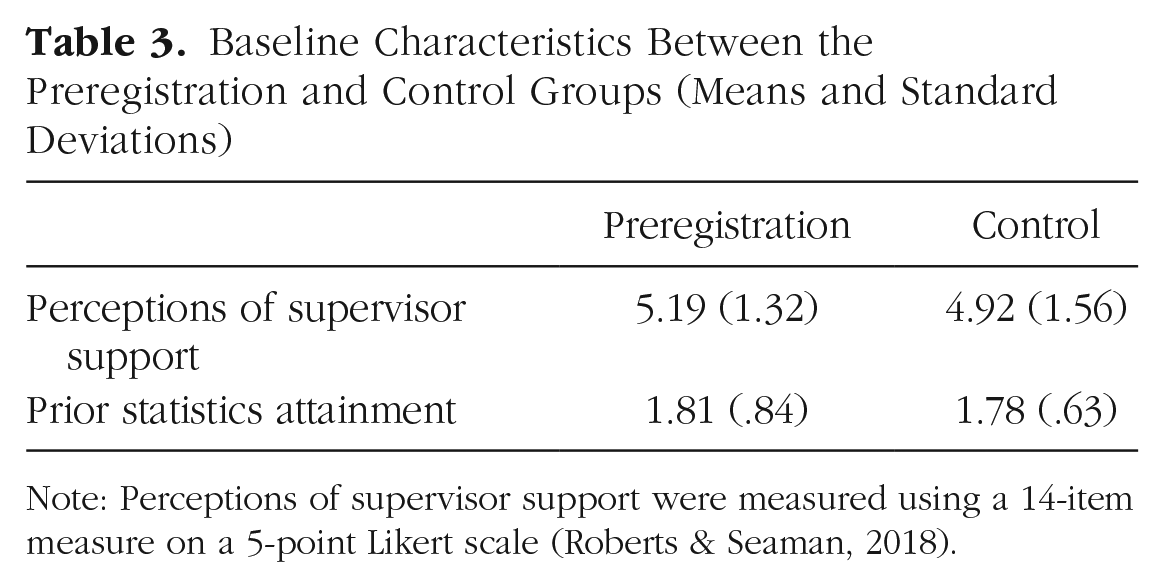

Baseline characteristics of perceived supervisory support and prior statistics attainment at Time 1 did not significantly differ between the preregistration and control groups (see Table 3; both

Baseline Characteristics Between the Preregistration and Control Groups (Means and Standard Deviations)

Note: Perceptions of supervisor support were measured using a 14-item measure on a 5-point Likert scale (Roberts & Seaman, 2018).

A series of 2 (Group: Preregistration vs. Control) × 2 (Time: Time 1 vs. Time 2) mixed analyses of variance were conducted on attitudes toward statistics (SATS-28; Hypothesis 1), attitudes toward QRPs (Hypothesis 2), and perceived understanding of Open Science (Hypothesis 3). For our complete analysis plan, see Table 2. Bonferroni corrections were applied to elucidate pairwise comparisons, and statistical significance was denoted as

As an exploratory analysis, we also conducted a between-participants

Descriptives about preregistration practice

Of the 52 students who preregistered their dissertation, 27 students (51.9%) reported that they somewhat followed the analysis plan set out in the preregistration, and 25 (48.1%) followed the plan exactly. No students reported that they did not follow the analysis plan in the preregistration, and thus all participants were retained in the analyses. Students preregistered most commonly on a university preregistration template (55.8%,

Attitudes toward statistics

We predicted that there would be a main effect of time such that over time, students’ perceptions of statistics would improve (i.e., their scores on this scale would go down) in both groups (for our full analysis plan, see Table 2). We also predicted that there would be a two-way interaction between group and time with the preregistration condition exerting an additive effect on this to show more marked improvement in statistics attitudes. However, contrary to hypotheses, there were no significant main effects or interactions between preregistration groups on the four dimensions of statistics attitudes. Specifically, for statistics affect, there was no significant main effect of group,

Acceptance of QRPs

Contrary to hypotheses, we were unable to detect a significant main effect of time,

Perceived understanding of Open Science

We predicted a Group × Time interaction whereby participants in the preregistration group would improve their perceived understanding from Time 1 to Time 2 compared with the no-preregistration group. There was a significant main effect of time,

Two-way interaction between preregistration group and time on perceived understanding of Open Science.

Exploratory analyses

COM-B

A between-participants

As a final exploratory analysis, we explored whether there were differences in capability, motivation, and opportunity for preregistration between the students who indicated at Time 1 that they initially planned to preregister and then at Time 2 did not (

Qualitative analysis

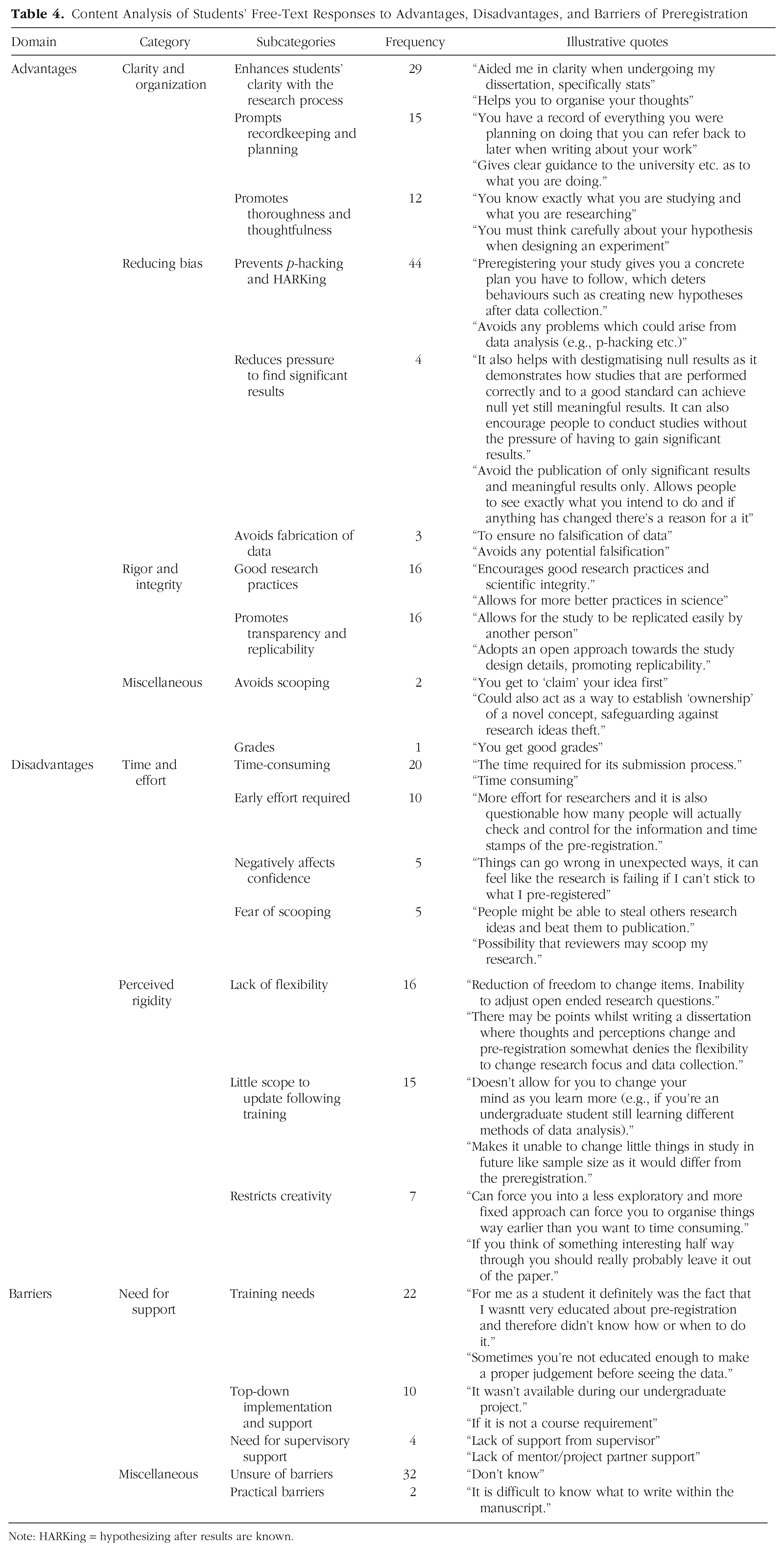

Students’ responses to the open-ended questions at Time 2 were analyzed using qualitative content analysis to identify the advantages, disadvantages, and barriers to preregistration that students perceived. This involved one author reading and coding the free-text responses for their content before discussing with the rest of the core authorship team (C. R. Pennington, E. Norris, and K. Clark). M. Pownall, in consultation with the rest of this research team, then generated categories and subcategories for the data before counting their frequency within the responses. This allowed an exploratory investigation into students’ firsthand accounts of the advantages, disadvantages, and barriers of preregistration.

Table 4 shows the results of this content analysis. Three core categories were found for the perceived advantages of preregistration, each with subcategories. These were perceptions of preregistration as (a) improving clarity and organization, (b) reducing bias, and (c) promoting rigor and integrity. In terms of perceived disadvantages, two core categories were identified: (a) the time and effort required to preregister and (b) the perceived rigidity of preregistration. Finally, the majority of participants did not report any barriers; among those who did, a need for support (including supervisory support and wider top-down support for preregistration) was frequently noted as a barrier to preregistration. Each category also included miscellaneous responses that were not frequent enough to form core categories; these are still presented in Table 4 for completeness.

Content Analysis of Students’ Free-Text Responses to Advantages, Disadvantages, and Barriers of Preregistration

Note: HARKing = hypothesizing after results are known.

Discussion

The aim of this study was to provide the first empirical investigation into the pedagogical impact of study preregistration on undergraduate students in the final-year dissertation. Students who preregistered their dissertations showed an increase in perceived understanding of Open Science terms (e.g., the replication crisis,

Implications

This study has much to contribute to the Open Science movement because it is the first study, to our knowledge, that empirically considers how one entry-level Open Science practice might be useful in tackling some of the challenges that undergraduate students face in their dissertation-research process. Our findings suggest that the process of preregistration can bolster students’ confidence in understanding Open Science concepts more broadly, which suggests that this practice may indeed be a useful entry point into the wider Open Science conversation. However, contrary to our hypotheses, we found no evidence that preregistration affected attitudes toward statistics or acceptance of QRPs. Preregistration may also have benefits beyond those captured by the measures of the present study, and this warrants further research. For example, engagement in the preregistration process may improve outcomes such as students’ trust in the research they are conducting, inspire ambitions to pursue a career in research, and improve research literacy above and beyond attitudes toward statistics. These potential variables are all worthy of investigation in future studies to further interrogate how preregistration and, indeed, Open Science tools more broadly may confer advantages to undergraduate students.

Furthermore, our study has broad implications for Open Science communities. Supporters of Open Science have eloquently and convincingly made the moral and theoretical argument for embedding Open Science within undergraduate teaching and supervision. However, there is a notable lack of empirical, experimental research that gathers data to assess whether students actually benefit from engagement with these practices. To our knowledge, this study is the first to use quasi-experimental methods to begin to investigate this research question. This study thus responds directly to the calls of Pownall et al. (2023) to apply the principles of Open Science (e.g., robust methodologies, preregistration, open data sharing, collaborative science) to pedagogical research about the value of Open Science. As Pownall et al. noted, to date, the majority of evidence available to educators and scholars who wish to make decisions about the incorporation of Open Science into their pedagogy relies on anecdotal and local-level evaluations of practice, which lack control groups and the ability to draw broader conclusions.

Limitations

We must acknowledge certain limitations of the present study. First, our sample size was smaller than we initially planned, largely because of the attrition from Time 1 to Time 2 of the survey and the implementation of rigorous data-quality checks. This meant that instead of being able to detect effect sizes of approximately

Other limitations include the discrepancies in student experiences, particularly when collecting data cross-institutionally. For example, students and supervisors who develop a detailed, rigorous preregistration and engage in the process more with their supervisor might report greater benefits compared with students and supervisors who develop a poor-quality, less detailed preregistration. Indeed, there is emerging literature to suggest that the specificity of preregistrations differs between researchers (Bakker et al., 2020). However, it is beyond the scope of this research to assess each preregistration for quality and rigor. Likewise, adherence to preregistration protocols is another indicator of preregistration value (i.e., if researchers do not strictly adhere to their analysis plan, it may not be useful in reducing QRPs or, in our context, improving statistics attitudes). No participants in our sample indicated that they did not follow their preregistration plan at all in their dissertation, but the extent to which students closely and actively used their preregistration is unknown; this suggests that more research is needed into the implementation of preregistration in a pedagogical context. Practical reasons for this may also be informed by our qualitative data, which report perceived (dis)advantages of preregistration, including time constraints, perceptions of preregistration requiring high effort, and fears of limited flexibility in the analysis. Furthermore, many participants in our sample used “university templates” to preregister their dissertations. Although we asked participants to confirm that they set out an analysis plan in the preregistration, some templates may be more stringent than others, and these in themselves might differentially affect the pedagogical outcomes of their use. Future work could also focus on how preregistration may be useful for different types of dissertations, including qualitative studies and analyses of secondary data.

Conclusion

Taken together, our quantitative and qualitative findings have demonstrated that although study preregistration did not significantly affect students’ attitudes toward statistics or their acceptance of QRPs, students who preregistered reported significantly greater perceived understanding of Open Science from Time 1 to Time 2 compared with students who did not preregister. Furthermore, students who preregistered reported significantly greater capability, opportunity, and motivation to preregister, suggesting that the COM-B model of behavior change might be a useful theoretical approach to understand Open Science uptake. Specifically, this suggests that when there is sufficient opportunity, capability, and motivation to engage with the preregistration process, there may be beneficial downstream consequences for students, including bolstered understanding of Open Science and science reform. Students also reported a range of positive potential benefits of preregistration, including heightened transparency, improved clarity with the dissertation data-analysis process, and reduction of the lure to engage in QRPs (e.g.,

Our findings also contribute to the case that Open Science should be embedded into higher education for improved student scientific literacy and confidence (for a review, see Pownall et al., 2023). In response to the UK House of Commons Science and Technology Committee’s call for evidence of the contributors to research integrity, FORRT argued the importance of the pedagogical consequences of how students are taught, mentored, and supervised (Azevedo et al., 2021). A wide range of resources have recently been developed to support student learning of Open Science, including a student guide (Pennington, 2023), the FORRT project materials (Azevedo et al., 2019), and the Collaborative Replications and Education Project (Wagge et al., 2019).

These efforts aim to strengthen student knowledge of and engagement in research so that students become more savvy consumers of science (Korbmacher et al., 2023). There is now a need for researchers to continue this line of work, critically and empirically investigating how barriers to Open Science can be negated with students (and, indeed, more broadly) to continue embedding high-quality, rigorous, thoughtful research practices into the undergraduate dissertation and beyond.

Footnotes

Acknowledgements

Stage 1 Peer Community in Registered Reports recommendation is available at https://rr.peercommunityin.org/articles/rec?id=48. Stage 2 Peer Community in Registered Reports recommendation is available at ![]() .


Transparency

All authors except M. Pownall, C. R. Pennington, E. Norris, and K. Clark are in randomized order.