Abstract

Determining carpet pile direction is necessary in the manufacture of sample boards and when installing carpet because any shading effects should be homogeneous. Depending on the style of the carpet, determining pile direction can be time-consuming and difficult. Two approaches to automating it are developed and tested in order to improve sample board production rates. Significant research has already been performed on the automatic detection of pile lay orientation in carpet and fiber orientation in nonwovens. These methods are insufficient for the present application because they yield the angle of a line parallel to the carpet pile but not the direction along that line in which the pile points. In this work, labeled carpet samples of varying styles are used to train and validate a convolutional neural network (CNN). These samples are also used to test an electromechanical solution. The CNN is shown to provide 100% accuracy when determining pile lay orientation and 93% accuracy when determining pile direction. The electromechanical method for determining pile direction is 65% accurate when used alone and 90% accurate when combined with prior knowledge of the pile lay orientation. These values fall short of the 99% accuracy of an expert operator detecting pile direction but compare favorably with that of a beginner.

Introduction

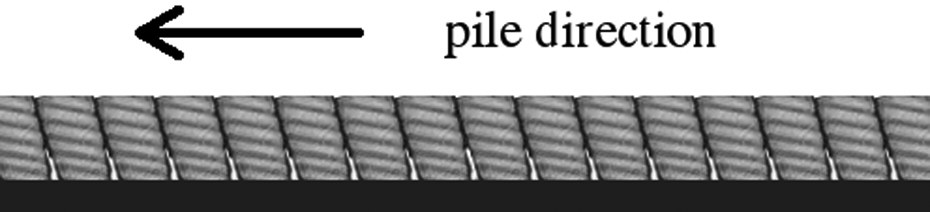

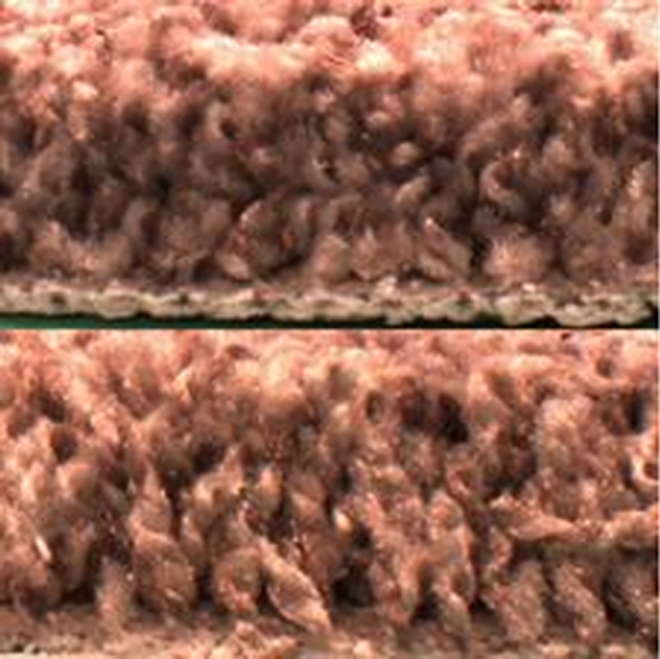

Carpet sample boards are used for retail product displays. Each board comprises dozens of rectangular carpet samples of varying styles glued to an engineered wood product board. Carpet manufacturing techniques often result in a natural pile lay orientation, as depicted in Figure 1. This orientation is sometimes evident as shading when the carpet is viewed from different directions. A common requirement for sample boards is that the pile direction, or nap, of all samples points toward the bottom edge of the board. Detecting the pile direction is therefore necessary in production.

Carpet side view parallel to pile axis.

Creation of a sample starts with cutting strips from a carpet roll. The strips are cut into smaller rectangles: the samples. The final step is to bevel the four sides of each sample. The finished samples are dropped into a container, so at this point in the sample board production process they have random orientations.

Operators are trained to detect the pile direction, a process called napping. They take a sample from the container and nap it to meet the orientation requirement for sample boards. Some operators nap by sight; the side toward which the pile is lying looks more densely packed than the other three sides of a sample. More typically, operators nap by feel; running one’s finger through the pile is most difficult when going against the pile lay. Referring to Figure 1, the carpet would provide greater resistance to pushing from left to right than in other directions. Depending on the carpet style and the operator’s experience level, the napping process can be time-consuming and cause a bottleneck. It is therefore desirable to develop an automatic napper with accuracy similar to that of an operator.

One idea for removing the bottleneck of manual napping is to print a directional mark on the carpet back before the samples are cut. A sample width of 5 cm is often used, so the mark would have to be repeated frequently on the carpet back to ensure that at least one mark is printed on the back of every sample. The mark could be printed either during carpet production or when making sample boards. While carpet is sometimes produced specifically for sample boards, at other times the carpet for the boards is pulled from general inventory. Printing during carpet production would therefore require printing the directional mark on the back of all carpet, whether destined for a sample board or for installation. The sponsoring entity produces hundreds of millions of square yards of carpet per year, and only three-tenths of 1% of it is used for samples. Owing to the operational cost of printing on this unnecessarily vast area of carpet, an automatic napping device is deemed preferable.

Printing at the sample board production line would restrict the process to just the carpet needing a directional mark. This would most reliably be done with an inkjet printer. The factory layout would require that the print nozzle point upward and be mounted on a linear rail. Lint falls from the carpet and collects on equipment, as on the gears and chain in Figure 2, so keeping an upward-pointing nozzle clean would require significant time. Alternatives that avoid both this maintenance burden and the cost and complexity of a linear rail–mounted printer are therefore considered.

Lint on equipment.

The present work investigates two approaches to automatic napping based on the two methods used by operators. A convolutional neural network (CNN) is trained to nap using vision. A custom electromechanical device that acts in a fashion similar to a blade-disc-type tribometer is developed to nap using mechanical resistance. The work also evaluates the accuracy when combining these two approaches.

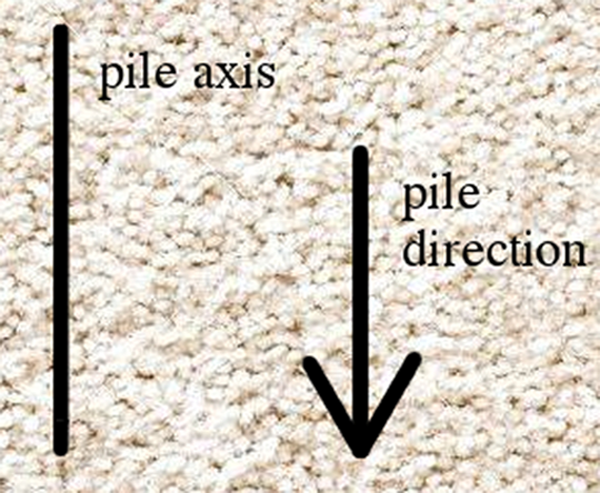

This article assigns different meanings to the terms pile axis and pile direction. The pile axis is defined as a line parallel to the pile lay orientation. The pile direction, then, is a vector that is parallel to the pile axis and points in the same direction as the nap. Figure 3 depicts these terms. As a usage example of these terms, consider that every sample on a board is to have its pile axis perpendicular to the bottom and top edges of the board and pile direction pointing toward the bottom edge of the board.

Carpet pile axis and direction indicators superimposed on carpet face.

Several manual and automatic methods exist for visually detecting the pile axis, or “pile lay orientation,” but the pile axis alone is insufficient for sample board production. Lomas et al. developed a manual approach to determining pile lay orientation that could be helpful for automating the process in that it provides rules, but it measures only orientation, not direction. 1 Various machine vision approaches to determining orientation have been studied. As part of work calculating the orientation distribution function, Pourdeyhimi et al. place thin fabrics on a mirror to obtain gray scale images amenable to their “direct tracking” technique. 2 Besides the fact that it does not compute the direction, this technique is thought to be inapplicable to sample board production because too little light would be reflected back through the carpet. Flow field techniques also exist for determining pile axis orientation; one example is the work of Pourdeyhimi et al. 3 Goniophotometry was used for pile axis detection by Jose et al., among others. Inconsistent results were obtained, and in any case the technique is not applicable to detecting pile direction. 4 Xu uses a trio of image analysis methods to find pile axis orientation and other characteristics of the carpet. 5

This article describes two approaches to automatic napping. They are tested using 1089 labeled carpet samples, and the results are provided herein. A summary concludes the article.

Neural network

Some operators nap using visual cues and so a CNN (GoogLeNet

6

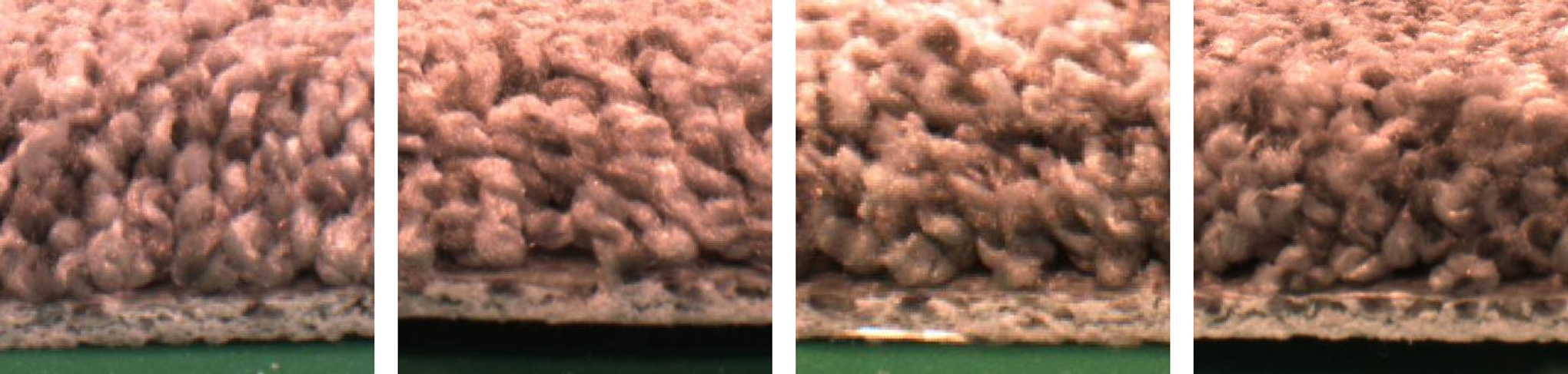

with a reduced number of outputs) is trained for image classification on carpet samples. There are four possible pile directions for a carpet sample. Images of the four sides of a sample of frieze carpet are shown in Figure 4. Here, the pile is pointing toward the camera in the rightmost image. The result is a view with more yarn ends than in the other images. The other sides of the sample, especially the opposite one (in the second picture from the left), show the sides of the yarn more than the ends. The yarn appears more densely packed when the pile is pointing toward the viewer. The experienced eye can discern that the pile direction is toward the rightmost camera. It is a subtle distinction, one that does not lend itself well to a rules-based image classification, therefore convolutional neural networks are an appropriate tool.

“Most tasks that consist of mapping an input vector to an output vector, and that are easy for a person to do rapidly, can be accomplished via deep learning, given sufficiently large models and sufficiently large datasets of labeled training examples.” 7

Four sides of a carpet sample.

In this case, the input vector might be a composite image as shown in Figure 4 and the output a label indicating toward which camera the pile points, so the problem is well suited to deep learning.

To simplify the image classification problem, a second (similarly modified) instance of GoogLeNet is trained to determine the pile axis orientation from an image of the carpet backing. This determination can remove two side images from consideration. For example, with knowledge of the axis orientation, the first and third pictures in Figure 4 are immaterial.

This method mimics the one used by some operators, who describe visual napping as identifying which side has the densest appearance. An alternative visual napping approach could instead retain the first and third pictures in Figure 4, discarding the second and fourth, and train a CNN to identify the pile direction from images parallel to the pile axis. Comparing these two approaches seems worthwhile but is left for future work; this investigation takes its cues from the grandest of all neural networks, the human brain, and follows the method espoused by visual-napping operators.
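The two-stage visual napper can be sketched as a small pipeline. The classifier arguments stand in for the two trained GoogLeNet instances and are hypothetical callables; the side names and the convention that the axis classifier returns "vertical" or "horizontal" in a frame shared with the side cameras are also assumptions. Mirroring between the back and face of the sample does not affect this step, because it swaps left and right but does not change whether the axis is vertical or horizontal.

```python
from typing import Callable, Dict, Tuple

# Hypothetical mapping from the pile axis reported by the first CNN to the two
# side cameras that look along that axis (the sides whose images are kept).
SIDES_ALONG_AXIS: Dict[str, Tuple[str, str]] = {
    "vertical": ("top", "bottom"),
    "horizontal": ("left", "right"),
}


def nap_sample(
    back_image: object,
    side_images: Dict[str, object],
    axis_classifier: Callable[[object], str],
    direction_classifier: Callable[[object, object], int],
) -> str:
    """Return the sample edge toward which the pile is predicted to point."""
    # Stage 1: classify the carpet back as "vertical" or "horizontal".
    axis = axis_classifier(back_image)
    first, second = SIDES_ALONG_AXIS[axis]
    # Stage 2: compare only the two retained side images; the other two are discarded.
    # The classifier returns 0 if the pile points toward the first camera, 1 otherwise.
    choice = direction_classifier(side_images[first], side_images[second])
    return (first, second)[choice]
```

For instance, with stub classifiers that report a vertical axis and return 1, `nap_sample` returns "bottom". Keeping the two stages as separate callables mirrors the text's design: the axis network alone suffices for styles that need only pile axis alignment.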

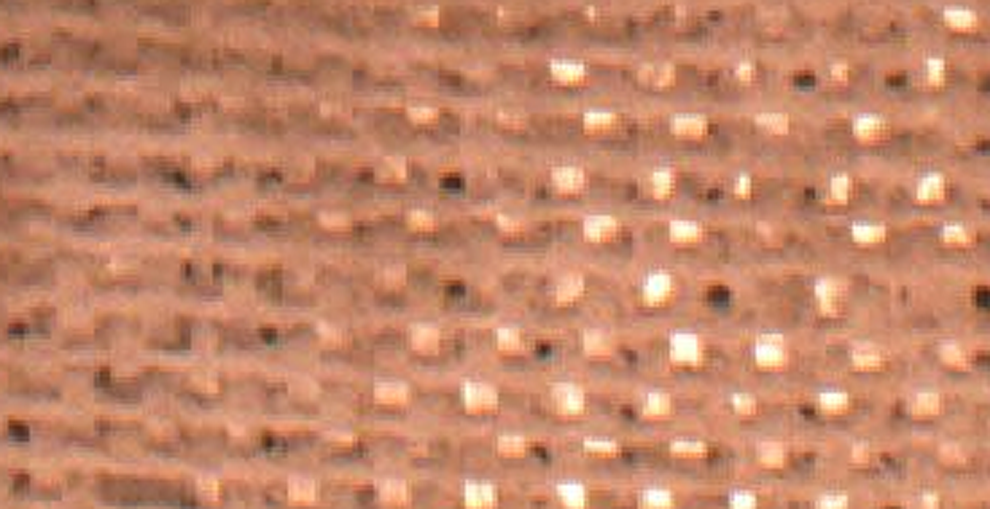

Visually detecting axis orientation from carpet backing is a simple job. In Figure 5, for example, there exists a clear distinction between the vertically oriented, extruded tape warp yarn (parallel to the pile axis) and the horizontally oriented, spun fill yarn. Adding a pile axis classifier simplifies the task for the pile direction CNN.

Example of carpet back.

Used here to provide a proof of concept, GoogLeNet is a relatively large network, 22 layers deep. It “was designed with computational efficiency and practicality in mind, so that inference can be run on individual devices including even those with limited computational resources.” 6 Running on an Nvidia GeForce GTX 1080 GPU, the sequential pair of networks naps a sample an order of magnitude faster than an expert operator.

Electromechanical design

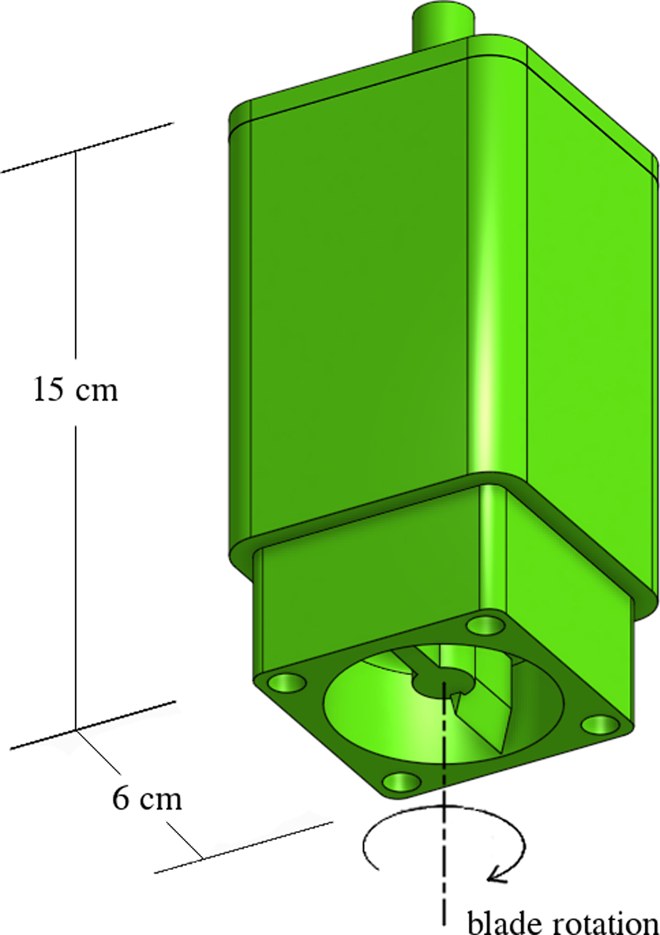

Most operators nap samples by feeling the relative resistance when using their fingers to brush the pile in different directions. This is emulated using a custom device similar in form to a blade-disc-type tribometer. Investigators have used a blade-disc-type tribometer to measure surface roughness, but not fiber direction, in nonwovens. 8 The machine developed for the present work is depicted in Figure 6. The blade, at the bottom of the device, spins about the vertical axis and is brought into contact with a horizontal carpet sample. It is driven by a DC motor fitted with an optical encoder.

Model of electromechanical napper assembly.

Referring to Figure 6, the top protuberance is for mounting the device in the collet or chuck of a mechanical press, so that the axis of rotation is perpendicular to the sample. A prototype, built using a 3-D printer and an Arduino Uno, is shown in a chuck above a carpet sample in Figure 7.

Prototype mounted on press and positioned above carpet sample.

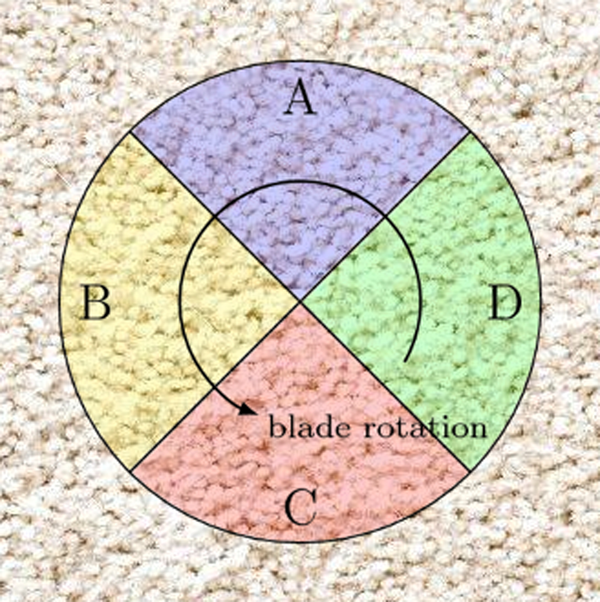

During operation, the motor is energized so that the blade spins like a propeller, and the sensor is brought into contact with the carpet so that the blade rubs across a circular portion of the carpet face. The voltage across a resistor in series with the motor is proportional to the motor current, and the torque of a DC motor is proportional to its current, so this voltage provides an indirect measurement of torque. The Arduino measures the voltage with an analog-to-digital converter and calculates the torque, which it stores along with the corresponding motor shaft position. The torque measurements are averaged for each quadrant, as shown in Figure 8.

Quadrants in which torque measurements are averaged.
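The acquisition and averaging steps can be sketched as follows, written in Python for readability rather than as Arduino firmware. The 10-bit ADC range matches the Arduino Uno; the 5 V reference, the shunt resistance `R_SHUNT`, the torque constant `K_T`, the encoder resolution `COUNTS_PER_REV`, and the assignment of quadrants A through D to consecutive 90° spans of shaft angle are hypothetical values chosen for illustration, since the actual boundaries are those drawn in Figure 8.

```python
from typing import Dict, Iterable, Tuple

ADC_MAX = 1023          # 10-bit ADC full scale, as on the Arduino Uno
V_REF = 5.0             # assumed ADC reference voltage (volts)
R_SHUNT = 0.5           # hypothetical series (shunt) resistance (ohms)
K_T = 0.02              # hypothetical motor torque constant (N*m per ampere)
COUNTS_PER_REV = 360    # hypothetical encoder counts per shaft revolution
QUADRANTS = "ABCD"      # hypothetical: A = 0-90 deg, B = 90-180, C = 180-270, D = 270-360


def adc_to_torque(adc_count: int) -> float:
    voltage = adc_count * V_REF / ADC_MAX   # voltage across the shunt resistor
    current = voltage / R_SHUNT             # Ohm's law: series current equals motor current
    return K_T * current                    # DC motor: torque proportional to current


def quadrant_of(encoder_count: int) -> str:
    angle = (encoder_count % COUNTS_PER_REV) * 360.0 / COUNTS_PER_REV
    return QUADRANTS[int(angle // 90)]


def average_torque_by_quadrant(samples: Iterable[Tuple[int, int]]) -> Dict[str, float]:
    """samples: (encoder_count, adc_count) pairs logged while the blade spins."""
    sums = {q: 0.0 for q in QUADRANTS}
    counts = {q: 0 for q in QUADRANTS}
    for encoder_count, adc_count in samples:
        q = quadrant_of(encoder_count)
        sums[q] += adc_to_torque(adc_count)
        counts[q] += 1
    # Quadrants with no readings are omitted rather than reported as zero.
    return {q: sums[q] / counts[q] for q in QUADRANTS if counts[q]}
```

Converting each reading to torque before averaging is equivalent to averaging raw ADC counts here, because the conversion is linear; the explicit conversion simply keeps the units visible.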

It is assumed that the pile direction is perpendicular to an edge of the sample. Referring to Figure 8, if the pile direction were pointing down as in Figure 3, then (with the counterclockwise blade rotation indicated here) the blade would experience the most resistance from the pile when moving through quadrant D. The highest average motor torque is therefore expected when the blade is in this quadrant. If the pile direction were pointing to the right, quadrant A should yield the highest average motor torque, and so on.
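The decision rule then reduces to choosing the quadrant with the highest average torque. In this sketch, the A → right and D → down entries come from the text; B → up and C → left are inferred by assuming the quadrants are labeled in sequence around the blade's path, and should be checked against Figure 8.

```python
from typing import Dict

# Pile direction implied by each quadrant, for counterclockwise blade rotation.
# A -> right and D -> down are given in the text; B -> up and C -> left are
# assumptions that hold only if the quadrants are labeled sequentially.
QUADRANT_TO_DIRECTION: Dict[str, str] = {"A": "right", "B": "up", "C": "left", "D": "down"}


def nap_by_torque(avg_torque: Dict[str, float]) -> str:
    """Pick the direction whose quadrant shows the highest average motor torque."""
    best_quadrant = max(avg_torque, key=avg_torque.get)
    return QUADRANT_TO_DIRECTION[best_quadrant]
```

For example, average torques of 0.10, 0.20, 0.15, and 0.30 in quadrants A through D yield "down".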

Results

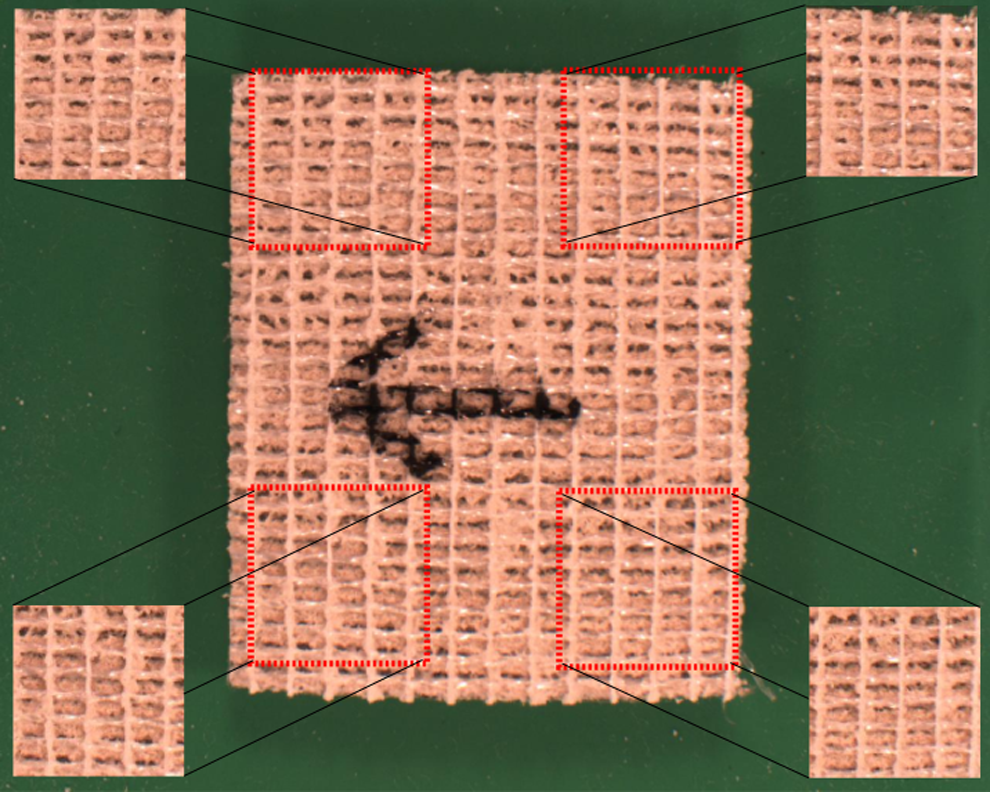

An expert operator labeled the pile direction of 1089 carpet samples of various sizes by drawing an arrow on the back of each (see Figure 9). The samples span 7 different styles: texture, Saxony, frieze, level loop (both architectural and residential), Berber, and patterned. Close inspection revealed that some of these were mislabeled. Using this information to calculate expert napper accuracy yields 99% ± 1% via maximum likelihood estimation. This provides a goal for automatic napping.

Back of labeled carpet sample, four cropped images shown.
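For a set of labeled trials, the maximum likelihood estimate of accuracy is simply the fraction correct, p̂ = k/n. The text does not state how its ± bounds are computed; the sketch below assumes a normal-approximation (Wald) 95% interval, z·sqrt(p̂(1 − p̂)/n), as one common choice.

```python
import math
from typing import Tuple


def accuracy_mle(correct: int, total: int, z: float = 1.96) -> Tuple[float, float]:
    """Return the binomial MLE of accuracy and the half-width of a Wald interval."""
    if total <= 0 or not 0 <= correct <= total:
        raise ValueError("need total > 0 and 0 <= correct <= total")
    p_hat = correct / total                                   # MLE of a binomial proportion
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / total)   # normal approximation
    return p_hat, half_width
```

For example, `accuracy_mle(93, 100)` gives an estimate of 0.93 with a half-width of about 0.05. The Wald half-width collapses to zero when every trial is correct, as with a 100% result, which is one reason score intervals such as Wilson's are often preferred near 0% or 100%.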

Images of the samples were used to train and validate the CNNs. Many of the samples are too small for the electromechanical device, and some are of styles that require only pile axis alignment, so its success rate is determined using just 749 samples.

Convolutional neural networks

Five pictures were taken of each sample: one of the carpet back and four of the sides. These images were used to train the two CNNs, as described below.

Pile axis (classifying images of carpet back)

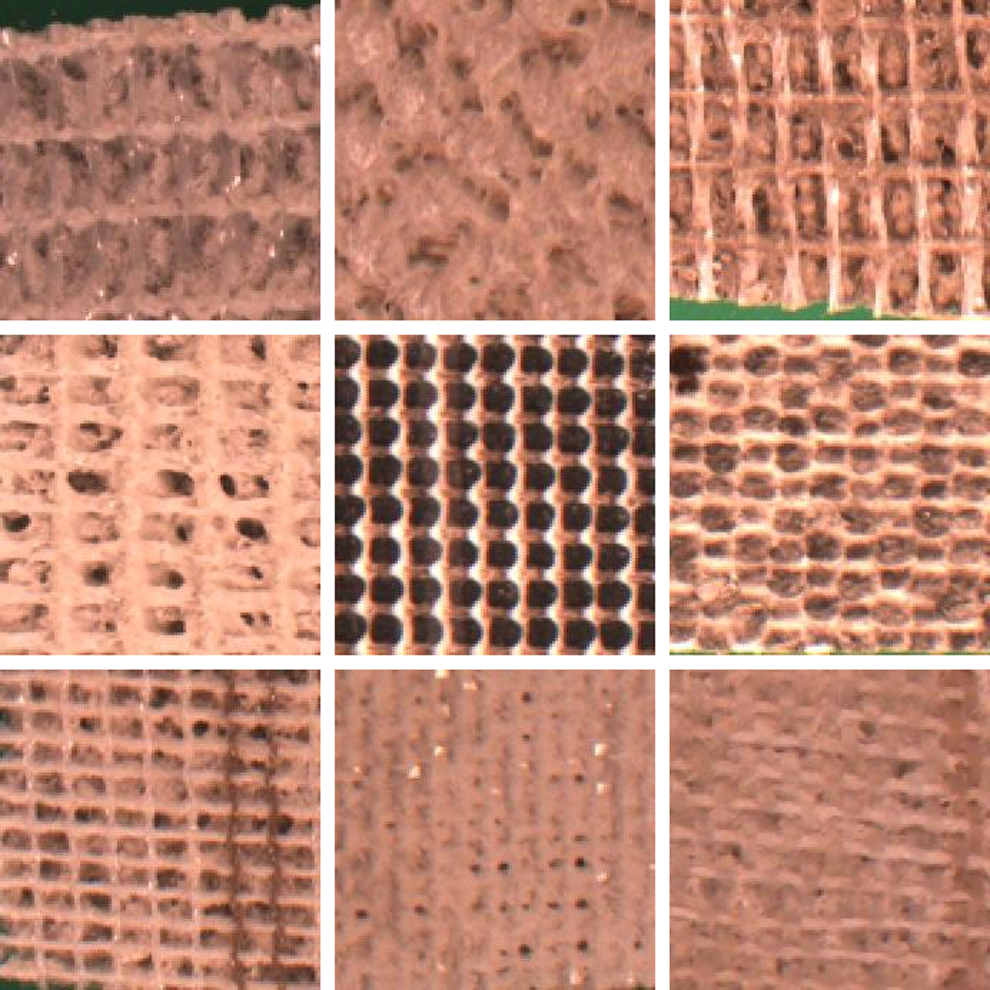

Training and validation for this CNN starts with photographs of the back of 1089 carpet samples. Three of every four (75%) are used for training and the rest for validation. From each photograph, four images measuring 224 × 224 pixels are cropped, as shown in Figure 9, in order to increase the size of the training set. (This is the input image size for GoogLeNet 9 but other sizes are also used in the literature. For example, 227 × 227 and 256 × 256 are common.) The CNN is therefore trained using 3268 images and validated with a set of 1088. It has two possible outputs: pile axis horizontal in the image or pile axis vertical. Despite the variation in carpet back appearance between different styles, see Figure 10, the trained CNN correctly identifies the pile axis orientation in 100% of the validation images.

Back of nine different carpets.
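The split and cropping for the pile axis CNN can be sketched as follows. Assigning every fourth sample to validation is consistent with the reported counts: 1089 samples give 817 training and 272 validation samples, or 3268 and 1088 images at four crops each. Placing the four 224 × 224 crops at the corners of the photograph is an assumption; Figure 9 shows the actual crop locations. Boxes use the (left, upper, right, lower) convention of Pillow's `Image.crop`.

```python
from typing import List, Tuple


def split_three_of_four(n_samples: int) -> Tuple[List[int], List[int]]:
    """Every fourth sample goes to validation; the other three go to training."""
    train = [i for i in range(n_samples) if i % 4 != 3]
    val = [i for i in range(n_samples) if i % 4 == 3]
    return train, val


def corner_crops(width: int, height: int, size: int = 224) -> List[Tuple[int, int, int, int]]:
    """Four size x size crop boxes, one per corner (hypothetical placement)."""
    if width < size or height < size:
        raise ValueError("photograph is smaller than the crop size")
    xs = (0, width - size)
    ys = (0, height - size)
    return [(x, y, x + size, y + size) for y in ys for x in xs]
```

Splitting by sample before cropping keeps all four crops of a given photograph on the same side of the split, so validation images never share a carpet back with training images.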

Pile direction (classifying images of carpet sides)

In Berber and architectural level loop carpets, the pile direction is exceedingly difficult for a person to discern, whereas the pile axis is obvious. Sample boards showcasing these carpet styles therefore require alignment only of the pile axis, not the pile direction. Since the pile axis CNN correctly identifies the pile axis for all carpet types, and since knowing the pile axis is sufficient for Berber and architectural level loop sample boards, these two styles are excluded from the pile direction CNN. The resulting image set represents the remaining 956 carpet samples. Again, the photographs of 75% of the samples are used for training and the remainder for validation. Four side photographs are acquired for each sample, but knowing the pile axis orientation makes two of them superfluous.

Half of the photographs, those having an image plane parallel to the pile axis, are removed from the image set. As with the pile axis CNN, it is desirable to increase the size of the training set, so from every remaining photograph, 10 crops are taken. Each crop has a 2:1 aspect ratio, so that it can be concatenated with a crop from the opposite side of the sample to form a 1:1 aspect ratio image as shown in Figure 11. The concatenation is then scaled to 224 × 224 pixels. This process is repeated with the same two crops, but the concatenation order is reversed to double the number of images. After all cropping and concatenating has been performed, the training set comprises 14,430 images as shown in Figure 11 and the validation set is made up of 4780 such images.

Opposite sides of a carpet sample. Top image: pile direction toward camera; bottom image: pile direction away from camera.

The pile direction CNN has two possible outputs: pile direction toward the camera of the top picture or pile direction toward the camera of the bottom picture. In Figure 11, the pile direction is toward the camera of the top picture. (Again, the carpet appears denser in the top picture than in the bottom because the yarn is viewed end on rather than from the side.)
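Building one labeled pair for the pile direction CNN can be sketched as below, with images represented as plain lists of grayscale pixel rows to keep the example dependency-free. The function names and the nearest-neighbor resize are illustrative choices, not necessarily what was used. The label records which camera the pile points toward, so reversing the concatenation order flips the label and the doubling step produces both output classes from each pair of crops.

```python
from typing import List, Tuple

Image = List[List[int]]  # grayscale image as a list of pixel rows (illustrative)


def stack_vertically(top: Image, bottom: Image) -> Image:
    if len(top[0]) != len(bottom[0]):
        raise ValueError("crops must have the same width")
    return [row[:] for row in top] + [row[:] for row in bottom]


def resize_nearest(img: Image, size: int = 224) -> Image:
    h, w = len(img), len(img[0])
    return [[img[r * h // size][c * w // size] for c in range(size)] for r in range(size)]


def make_pair_examples(toward_crop: Image, away_crop: Image,
                       size: int = 224) -> List[Tuple[Image, str]]:
    """toward_crop comes from the side whose camera the pile points toward."""
    for crop in (toward_crop, away_crop):
        if len(crop[0]) != 2 * len(crop):
            raise ValueError("each crop must be 2:1 (width:height)")
    # Two 2:1 crops stacked vertically form a 1:1 image, then scaled to size x size.
    first = resize_nearest(stack_vertically(toward_crop, away_crop), size)
    second = resize_nearest(stack_vertically(away_crop, toward_crop), size)
    # Output classes: pile toward the camera of the top picture or of the bottom one.
    return [(first, "top"), (second, "bottom")]
```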

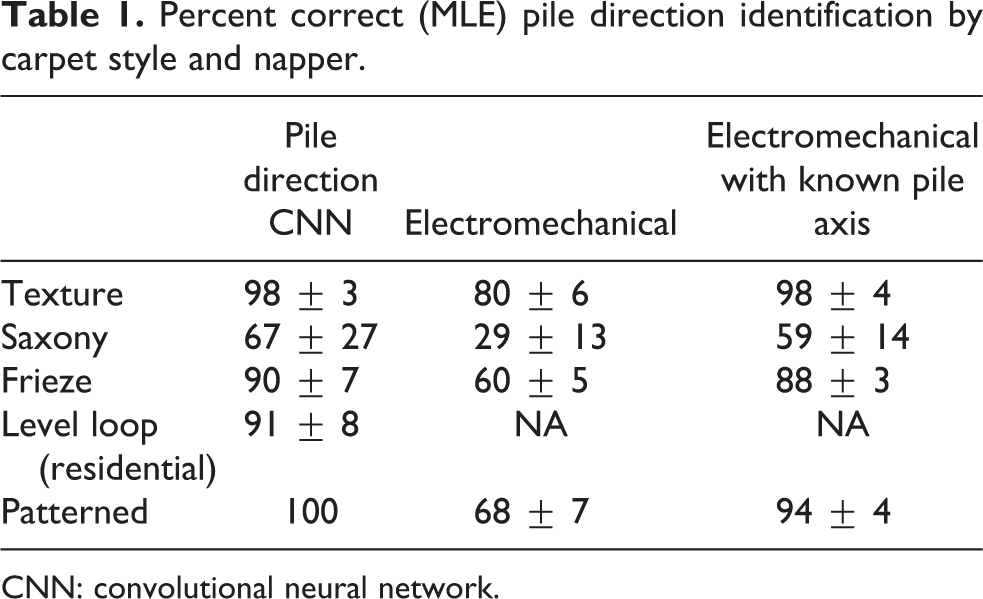

This approach to napping through computer vision takes two steps: one to determine the axis orientation and a second to identify the pile direction. The trained pile direction CNN correctly identifies the carpet pile direction in 93% ± 3% of the validation images, according to maximum likelihood estimation. The results vary by carpet style, so details are given in Table 1.

Percent correct (MLE) pile direction identification by carpet style and napper.

CNN: convolutional neural network.

Electromechanical napper

The machine introduced in the “Electromechanical design” section is brought into contact with carpet samples and the results are recorded for two scenarios: when the pile axis is unknown and when it is known. Due to a systematic error in the electromechanical sensor, possibly owing to axis misalignment from the low-precision fabrication methods used for the prototype, the highest average torque is often found in a quadrant adjacent to the expected one. Knowledge of the pile axis orientation allows these adjacent quadrants to be removed from consideration, thereby improving napping accuracy. In practice, the pile axis CNN could be used to provide pile axis orientation.
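Restricting the comparison to the two quadrants consistent with a known pile axis can be sketched as follows. Only A → right and D → down are given in the text; B → up and C → left are inferred by assuming the quadrants are labeled sequentially around the blade's path. The pile axis is also assumed to be expressed in the same frame as the quadrants.

```python
from typing import Dict, Tuple

# A -> right and D -> down come from the text; B -> up and C -> left are assumed.
QUADRANT_TO_DIRECTION: Dict[str, str] = {"A": "right", "B": "up", "C": "left", "D": "down"}
# Quadrants that remain candidates once the pile axis is known.
AXIS_TO_QUADRANTS: Dict[str, Tuple[str, str]] = {
    "horizontal": ("A", "C"),
    "vertical": ("B", "D"),
}


def nap_with_known_axis(avg_torque: Dict[str, float], pile_axis: str) -> str:
    """Compare only the two quadrants consistent with the known pile axis."""
    candidates = AXIS_TO_QUADRANTS[pile_axis]
    best = max(candidates, key=lambda q: avg_torque[q])
    return QUADRANT_TO_DIRECTION[best]
```

For example, with average torques of 0.10, 0.50, 0.20, and 0.30 in quadrants A through D, picking the overall maximum would select B, whereas `nap_with_known_axis(..., "horizontal")` compares only A and C and returns "left", discarding the adjacent-quadrant error.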

Berber and architectural level loop samples are not tested because knowledge of pile axis suffices for sample boards of these styles. Furthermore, not every sample is large enough to be used with the electromechanical napper. None of the residential level loop samples are large enough. Removing all the aforementioned samples means that testing is done with a subset of 749 samples.

The electromechanical napper correctly identifies the pile direction in 65% ± 3% of the samples when the pile axis is unknown. Providing the napper with the pile axis orientation, thereby eliminating two quadrants from consideration, yields 90% ± 2% accuracy on the samples tested. Performance varies by carpet style, so details are provided in Table 1.

The data show that both the visual and electromechanical napping approaches fail most often with Saxony carpet. This is the densest style, and it thus provides meager clues, either visual or tactile, as to pile direction. As mentioned earlier, mistakes were detected in some of the 1089 samples labeled by an expert napper; Saxony had the highest percentage of incorrect labels. It is a difficult style to nap for machines and people alike.

Conclusion

Two automatic nappers are presented. One utilizes a pair of convolutional neural networks and the other is an electromechanical device.

The CNN approach yields 93% overall accuracy by combining two trained networks. The first determines pile axis orientation using a picture of the carpet backing. The CNN identifies the pile axis for 100% of the samples tested, which means that sample boards requiring only pile axis information (like Berber) are napped with 100% accuracy by the CNN. The second network uses pictures of the two sample sides perpendicular to the pile axis to discern pile direction. Apart from the dense Saxony carpet, the confidence intervals for CNN napping overlap that of an expert for nearly every other style. The electromechanical napper is correct in 65% of the cases where the pile axis is unknown and is 90% accurate when augmented with knowledge of pile axis orientation. It is therefore believed that automating the napping process in sample board manufacture is viable.

If guidance were sought on how to implement automatic napping, two considerations would recommend the CNN. The first is its higher accuracy, noted above. Second, the electromechanical napper must physically contact the carpet and has moving parts that are subject to wear. Where acquiring side images of the carpet is not feasible, a combination of the pile axis CNN and the electromechanical device can provide napping.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.