Abstract

As entrepreneurship education has expanded with the support of governments and policymakers, questions about its effect have arisen, and several studies of its impact have emerged over the years. However, many of these studies have issues related to control groups, students’ self-selection into the education, unclear objectives and vague descriptions of the education, or a lack of contextual considerations in the study design. In addition, although there have been many calls for studies investigating behavioural outcomes over a longer timeframe, few studies have answered them. Because continuous evaluation of graduates would in many instances be costly and time-consuming, not to mention the difficulty of obtaining a sufficient response rate over time, a potential source of evidence is register data, for instance, from public records. In this conceptual paper, I discuss the issues with current evaluation practices in entrepreneurship education and then introduce register data as a way to address long-term evaluation and common methodological issues. I present potential evaluation examples using register data on a particular programme in entrepreneurship. The potential benefits and limitations of using such data are addressed, along with avenues for future research using register data in entrepreneurship education impact research.

Introduction

Entrepreneurship has been shown to be a source of economic development and growth, increasingly supported by policymakers and governments (Gilbert et al., 2004), where the introduction of entrepreneurship education has been a means of action to support and encourage entrepreneurial activities (Pittaway & Cope, 2007). Over the years, the growth in entrepreneurship education has been high (Katz, 2003; Kuratko, 2005; Torrance et al., 2013), and many universities now have entrepreneurship education integrated campus-wide and often through mandatory courses (Hytti, 2018). Therefore, in the growing literature on entrepreneurship education, a recurring question revolves around the design of a well-working practice and the impact of the education (Duval-Couetil, 2013; Fayolle et al., 2006; Longva & Foss, 2018; Nabi et al., 2017). With the support and high expectations from society and policymakers, and with increasing investments in such initiatives, investigating how these initiatives perform has become more important and pressing (O’Connor, 2013). Previous research has therefore extensively tried to address the question of whether entrepreneurship education has a positive effect. Scholars have tried to answer the calls by investigating, among other things, students’ skill development, change in entrepreneurial intention, increase in self-efficacy, change in affection towards entrepreneurship, and general knowledge development (Longva & Foss, 2018; Nabi et al., 2017). While the current studies in the field give important insights into what is possible in entrepreneurship education, investigations into longer-term outcomes are few in number or even absent in some contexts (Longva & Foss, 2018; Nabi et al., 2017).

Most of the impact research on entrepreneurship education applies lower-level impact indicators, focusing on short-term impact and subjective outcome measures (Nabi et al., 2017). As such, research on entrepreneurship education impact often rests on the premise that changes in skills, intention, or affection will lead to entrepreneurial endeavours later, or at least increase the chances of such activities. However, few studies have a design that rigorously shows that entrepreneurship education increases the number or level of involvement in entrepreneurial activities among alumni (Duval-Couetil, 2013; Longva & Foss, 2018; Nabi et al., 2017; Rideout & Gray, 2013). A reason for this might be the cost of studying entrepreneurship alumni over time (Samwel Mwasalwiba, 2010), which would be needed to investigate entrepreneurial activities and performance. While Samwel Mwasalwiba (2010) mentions decade-long databases developed and maintained by faculty, other sources, like public records, could be used to investigate entrepreneurial activity, for instance, the quantity and quality of entrepreneurship over time (see e.g., Guzman & Stern, 2020).

Another issue related to entrepreneurship education evaluation is the consideration of the objectives of the effort in focus; impact research in entrepreneurship education needs to take the education’s goals into account. However, with unclear and vaguely described goals and definitions in entrepreneurship education (O’Connor, 2013), the effect of an education might be impossible to investigate. Moreover, different educational designs influence outcome measures differently (Walter & Dohse, 2012). New study designs should therefore consider context and objectives in impact evaluation – ‘what do we do and why do we do it?’

The purpose of this paper is therefore to advance the view on entrepreneurship education evaluation by investigating potential approaches that deal with some of the current issues in the field’s research. Specifically, I conceptually discuss the use of register data as a future avenue for long-term impact research in entrepreneurship education, including potential restrictions such data have, answering the question: what are the benefits and limitations in using register data when investigating entrepreneurship education impact?

In the next section, I present the current practices of entrepreneurship education evaluation and their known issues. There I discuss the challenges connected to long-term investigations, students’ self-selection to an education, the educational design, and the various objectives and outcomes from entrepreneurship education. I then introduce Kirkpatrick’s (1996) model for evaluating education in the context of entrepreneurship education. This model spans multiple levels of evaluation with clear guidelines for each level, where the two levels reaction and learning focus on the short-term impact, while behaviour and results focus on the longer-term impact. The model is therefore an appropriate foundation for holistically discussing the evaluation of the multifaceted outcomes of entrepreneurship education. In the fourth section, I illustrate how register data from public records can be applied and used in impact research on entrepreneurship education based on Kirkpatrick’s (1996) model. In this part, I use an entrepreneurship program as a case, where the evaluation examples use register data aligned with this programme’s design and context. In the final sections, I discuss and conclude on the potential benefits and limitations of using register data in entrepreneurship education evaluation.

Current State of Impact Research on Entrepreneurship Education

In today’s entrepreneurship education, the educational approach and focus have become more action-oriented, authentic, student-directed and real-world connected (Hägg & Gabrielsson, 2020; Kassean et al., 2015; Neck & Corbett, 2018). The changes in how entrepreneurship is delivered have occurred fast (Hägg & Gabrielsson, 2020) and multiple ways of delivering entrepreneurship have been introduced in higher education (Nabi et al., 2017). The design, contexts, and authentic learning (where students are professional entrepreneurs, not mimicking or simulating entrepreneurs) of entrepreneurship education have been acknowledged as influencing factors on students’ learning in the literature (Aadland & Aaboen, 2020; Nabi et al., 2017; Thomassen et al., 2019). Different approaches to entrepreneurship education create various outcomes; however, the objectives of entrepreneurship education also differ in the field (O’Connor, 2013), justifying the vast differences in how entrepreneurship education is conducted. This variation could also be a reason for the mixed results found in the literature (see e.g., Bae et al., 2014). The evaluation of entrepreneurship education, therefore, needs different designs for different educational efforts, but it is also important to remember that some evaluation practices do not fit in all contexts or give varying results between student groups (Longva & Foss, 2018). This makes the comparison of results difficult without clear insights into the education evaluated and its objective, which also makes the literature on entrepreneurship education impact fragmented (and perhaps ambiguous; see further Fayolle and Gailly (2015) and Nabi et al. (2017)).

Objectives in Entrepreneurship Education Evaluation

Previous literature on entrepreneurship education has focused on start-up and job creation, stimulating entrepreneurial skills, and increasing entrepreneurial spirit, culture and attitude as main categories of objectives (Samwel Mwasalwiba, 2010). In terms of policy, the first would be a clear and wanted outcome to create economic growth (Gilbert et al., 2004), while the second and third might, at least for full programmes in entrepreneurship, need a more nuanced and detailed view on what broader impact they create. In such cases, the usage and value of obtained skills need to be explored and analysed in how they are applied in the alumni’s careers. The same applies to the entrepreneurial spirit, culture, and attitude – how are these affective factors influencing and benefitting the individual alum’s career or entrepreneurial efforts? These questions and problems relate to the connection between some educational outputs (e.g., subjective changes in intentions, skills, or mind-set) and the actual behaviour and impact created by the individual alum. Several calls for such research have appeared in the literature (e.g., Rauch & Hulsink, 2015), and while there are results showing the usage of alumni’s skills in their careers (Alsos et al., 2023) and how these skills influence the selection of jobs (Kucel et al., 2016), the long-term connection between entrepreneurship education’s subjective outcomes and post-graduation activities is still under-researched.

In addition, although more objective outcomes are investigated in long-term evaluations, these are few in number and their methodological rigour has been questioned by several scholars (Duval-Couetil, 2013; Longva & Foss, 2018; Nabi et al., 2017; Rideout & Gray, 2013). For instance, in terms of entrepreneurship education’s objective of creating jobs and new firms, a known issue is that entrepreneurship often occurs later in individuals’ careers (Burton et al., 2016; Marshall & Gigliotti, 2020). Some students might not start their own venture when graduating (or ever) (Dahlstrand & Berggren, 2010; Sørheim et al., 2021), but might apply for a job in an established firm or other start-ups, or pursue alternative careers (Dahlstrand & Berggren, 2010). Thus, those who become entrepreneurs and create jobs might do so later in their careers. This is not unexpected, as many entrepreneurs fail in their efforts, and starting a new venture requires investment and risk handling from the individual that might be undesirable if not necessary (Burton et al., 2016). Hence, expecting entrepreneurship education to produce a very high rate of start-ups among its alumni immediately after graduation is perhaps unrealistic, and short-term evaluation might miss the actual career characteristics of the alumni. For other educational disciplines, like nursing, engineering, or business education, the outcome and objective might be investigated through employability; entrepreneurship education, on the other hand, needs to consider multiple outcome measures and objectives.

Sampling Issues in Entrepreneurship Education Evaluation

Another methodological issue, especially for elective education, is the problem with control groups (Rauch & Hulsink, 2015). Students in elective courses or programs might be entrepreneurs before enrolling in their education; insights into how they behave after their education, without controlling for their entrepreneurial attraction, give a limited understanding of the education’s impact. Experimental designs have been applied and advocated to solve this challenge (Camuffo et al., 2020; Rauch & Hulsink, 2015), but practical as well as ethical issues may arise in such studies. For the studies that have applied an experimental design, the focus has often been on subjective outcomes and less on behavioural measures (Longva & Foss, 2018).

Related to the previous issue is the problem of self-selection (Rauch & Hulsink, 2015). Students that apply for a program or select elective courses or modules might have an interest in entrepreneurship or a goal of becoming an entrepreneur, which often means they have a positive attitude toward entrepreneurship (Karimi et al., 2016). As such, the pre-to-post change in self-reported outcomes might differ between students in elective and mandatory entrepreneurship education due to the students’ initial interest (see e.g., Karimi et al., 2016), and in some instances the education might even decrease the students’ outcome measure (see Oosterbeek et al., 2010). In the latter case, students who have some insights into entrepreneurship might think they have a good skillset but could experience the opposite when engaging in entrepreneurship education. This would, nevertheless, be positive as learning would have occurred (Karimi et al., 2016), and with good designs, the actual competence or skill development could be mapped (see e.g., DeTienne & Chandler, 2004). However, whether the development creates an impact later in the students’ careers is still an under-researched and important question.

Long-Term Impact Evaluation of Entrepreneurship Education

In recent literature reviews on entrepreneurship education evaluations (Loi & Fayolle, 2021; Longva & Foss, 2018; Nabi et al., 2017; Rideout & Gray, 2013), the results show that few studies have investigated the long-term impact of entrepreneurship education on the individual’s behaviour. Fayolle and Gailly (2015) investigate the impact of entrepreneurship education on entrepreneurial intentions and their antecedents in the short term and after six months, finding evidence for a positive influence on attitude and perceived behavioural control after six months. Rauch and Hulsink (2015) also use the theory of planned behaviour in their study, and additionally investigate entrepreneurial behaviour 18 months after the program. They find that entrepreneurship education positively influences students’ attitudes, perceived behavioural control, and intentions to become entrepreneurs, the latter of which is also found to influence entrepreneurial behaviour in the longer term. The individual’s entrepreneurial behaviour is also investigated by Gielnik et al. (2017). They study the impact of a training program on undergraduate students over 32 months, with measurements before and one, 12, and 28 months after the training program. In their study, they focus on the short- and long-term effects of the educational efforts on passion, entrepreneurial self-efficacy, and business creation. The results show the complexity of the effects of entrepreneurship education, and how these interrelate and change over time. However, while their study is longitudinal, they still call for more research using “[p]anel studies with an even longer scope”, as this could give more insights into the development of the individual’s entrepreneurial activities (Gielnik et al., 2017, p. 349). Such calls are also made by Rauch and Hulsink (2015), pointing to the fact that many start their entrepreneurial careers later in life. Thus, being able to look at the individual over a longer period would help in investigating the impact of education.

Evaluation of Education – Kirkpatrick’s Four-Level Model

Evaluating entrepreneurship education is, as previously mentioned, a difficult task, not only due to the inherent difficulty of evaluating education in general but also because entrepreneurship education is vaguely defined and its objectives are often unclear (Samwel Mwasalwiba, 2010; O’Connor, 2013). In addition, managing a long-term investigation of educational impact is complex and inherently difficult (Fayolle & Gailly, 2015), especially with the long-term view needed in entrepreneurship education evaluation (Gielnik et al., 2017). Evaluation of education can, as mentioned, occur on several levels (see e.g., Nabi et al., 2017), and should in some instances occur on multiple levels. One framework previously used in long-term entrepreneurship evaluation is Kirkpatrick’s four-level model (Fayolle & Gailly, 2015; Kirkpatrick, 1996; Kirkpatrick & Kirkpatrick, 2006), first introduced in a series of articles on the different levels in the late 1950s (Kirkpatrick, 1996). This model for evaluating training programmes has been used in many contexts and studies, including entrepreneurship (Fayolle & Gailly, 2015), and it consists of four levels: reaction, learning, behaviour, and results. In the first level, reaction, the participants’ immediate reaction to the program is evaluated. This is described as “measuring the trainees’ feelings”, where there is no focus on the learning of the individual (Kirkpatrick, 1996: 54). The learning of the individual is evaluated in the second level, where a pre-post evaluation is suggested, capturing the immediate influence of the programme; this is the most applied approach in impact research on entrepreneurship education (Nabi et al., 2017). In the third level, the actual behaviour is in focus, where the aim is to capture the change in the individual’s actions due to the program in focus. In the fourth level, the results of the training are in focus, where Kirkpatrick and Kirkpatrick (2006) give examples of increased production, better quality, increased sales and profits, and where the result introduced and evaluated should be based on the reason for the programme. Hence, when evaluating entrepreneurship education, the variables or phenomena in focus should align with the objective(s) of the programme.

When evaluating the long-term impact of entrepreneurship education, levels three and four are relevant starting points when designing studies. However, as Kirkpatrick and Kirkpatrick (2006) also stress, the evaluation of a program should not bypass some of the levels, and both the individual’s reaction and the learning might be relevant to the behaviour and results. Hence, regardless of whether the objectives of an education are clear and the outcome measures are appropriate for assessing its impact, the design of the entrepreneurship education needs to be considered when answering whether the education has an impact. This means we need to understand and consider different dimensions or levels of the education. One dimension is the difference in educational design (Aadland & Aaboen, 2020; Nabi et al., 2017). Using different pedagogical or educational approaches might influence the learning of the students (Hägg & Gabrielsson, 2020), and the amount of exposure to entrepreneurship and the length of the educational effort might also influence outcomes (see e.g., Neergård & La Rocca, 2020). The level of the students and their background also influence knowledge development and learning (Fayolle & Gailly, 2008; Maresch et al., 2016). Technical students might have different levels of subjective changes compared to business students (Maresch et al., 2016), and freshmen might struggle with their own contribution while seniors are more inclined towards entrepreneurship with more educational experience (İlhan Ertuna & Gurel, 2011). Hence, the content, the what of the education, also needs to be considered when investigating the two top levels.

For implementing the evaluation, Kirkpatrick (1996: 57) presents the following guidelines for the two top levels:

‘Behavior

• Use a control group, if feasible.

• Allow enough time for a change in behavior to take place.

• Survey or interview one or more of the following groups: trainees, their bosses, their subordinates, and others who often observe trainees' behavior on the job.

• Choose 100 trainees or an appropriate sampling.

• Repeat the evaluation at appropriate times.

• Consider the cost of evaluation versus the potential benefits.

Results

• Use a control group, if feasible.

• Allow enough time for results to be achieved.

• Measure both before and after training, if feasible.

• Repeat the measurement at appropriate times.

• Consider the cost of evaluation versus the potential benefits.

• Be satisfied with the evidence if absolute proof isn’t possible to attain.’

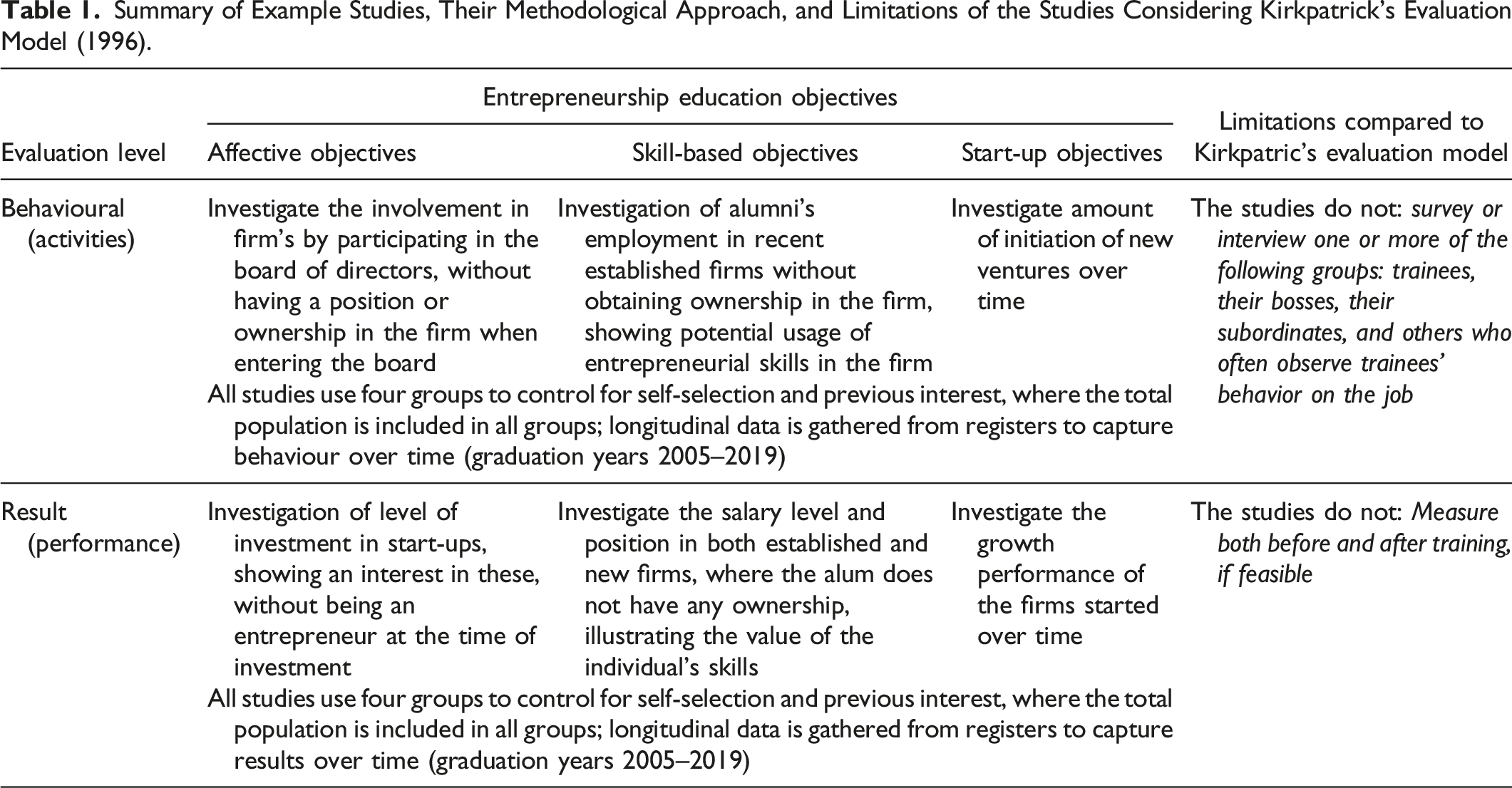

When linking these levels to the general objectives found in entrepreneurship education (Samwel Mwasalwiba, 2010), starting with the behaviour level, affective changes in the individual might be reflected in support of entrepreneurial activities in general. For instance, this could occur through facilitating collaboration with young firms if the alum is employed in an established firm, through acting as an advisor or investor in start-ups without actively being an entrepreneur, or through contributing to, supporting, and maintaining a relationship with their former education (e.g., Hall, 2011). For the skill-based objectives of entrepreneurship education, using these skills after graduating would be evidence of behaviour, independent of the context where they are applied (e.g., in start-ups or established firms, see further Alsos et al., 2023). Lastly, for the objective of start-up activities, starting or contributing to the early stages of a new firm would be clear evidence of relevant behaviour (Gielnik et al., 2017).

In terms of results in Kirkpatrick’s (1996) model, the last level, the discussion becomes more complex for entrepreneurship education. For instance, a change in the individual’s affection towards entrepreneurship might lead to a positive attitude towards entrepreneurship in the general population, more focus on small-firm collaboration among individuals in established firms, or a long-term movement into entrepreneurial careers (Fayolle & Gailly, 2015). For the skill-level objectives, the relevance and value-creation of the individual’s skill usage could be investigated, as the skills obtained from entrepreneurship education programmes have been found applicable in various contexts (Alsos et al., 2023). In terms of start-up activities, one might expect that education in entrepreneurship could give the individual a benefit, leading to better performance or viable activity, for example through growth (Guzman & Stern, 2020).

Investigating Entrepreneurship Education Impact Using Register Data

As presented, Kirkpatrick’s (1996) model frames the evaluation of a programme in a holistic manner, and is thus relevant to the complex outcomes and objectives of entrepreneurship education. The model’s behaviour and results levels focus on longer-term impact, and these levels are therefore discussed in light of entrepreneurship education’s objectives when using register data in the evaluation. Register data is in this paper defined as data maintained by public bureaus, for instance, the official records from the university or data obtained by the government. The data consists of different variables and types, for instance, place of birth and income, where time series variables are updated monthly or annually. The data about the individual and related firms are organised in a panel data structure. To illustrate the use of register data, I use a single educational programme as a case study. In this section, I therefore present the case first, then the rationale and data, before this case is used to illustrate the use of register data in the different levels of evaluations of affective, skill-based and start-up objectives.
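To make the panel data structure concrete, the following is a minimal sketch of how person-year records could be organised, assuming one row per individual per year. The record type, field names, identifiers, and values are all hypothetical and do not reflect the actual variable names or contents of any register.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PersonYear:
    """One row of a person-year panel (all field names are hypothetical)."""
    person_id: str               # pseudonymised identifier
    year: int                    # calendar year of the observation
    income: float                # annual income; unit left unspecified here
    employer_id: Optional[str]   # None when no employer is registered that year


def build_panel(rows):
    """Group rows by person and sort each person's trajectory by year."""
    panel = defaultdict(list)
    for row in rows:
        panel[row.person_id].append(row)
    for trajectory in panel.values():
        trajectory.sort(key=lambda r: r.year)
    return dict(panel)


# Made-up rows, for illustration only.
rows = [
    PersonYear("p1", 2019, 520_000.0, "f7"),
    PersonYear("p1", 2018, 480_000.0, "f3"),
    PersonYear("p2", 2019, 0.0, None),
]
panel = build_panel(rows)
```

Organising the data this way keeps each individual's history in chronological order, which is what the behaviour and results levels need when changes are followed over time.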

Case Presentation

The case is a two-year master’s degree in entrepreneurship, where the students are starting their own ventures as a part of the educational design. The programme is open to all individuals that hold a three-year bachelor’s degree or equivalent, and the students are coming from all backgrounds, for instance, nursing, engineering, economics, and so forth. The experiences and outcomes from their venturing activities are used in the education as important sources for learning, and the program can be labelled a venture creation programme (Lackéus & Williams Middleton, 2015). As the programme is located at a technical university in a tech-oriented region, most of the start-ups founded during the programme are technology-based, and several of the courses in the programme have a tech focus. In addition, the students sit in an incubator to which only the programme’s students have access, and where the students’ experiences consequently are shared in a community of peer professionals. The evaluations described here are therefore of a programme that is student-directed (action-based) and where its design is using authentic technology-based contexts in giving students relevant learning.

The main objective of the programme is to educate business developers, where this includes business developers in established, young and self-started firms. Hence, students need skills that make them capable of creating value in various employment positions, affective capabilities that make them interested in working with entrepreneurial endeavours (meaning business development in an entrepreneurial manner), and the ability and behaviour needed to create their own start-up. Therefore, evaluating the programme’s impact needs to include an investigation of affective, skill-based, and start-up outcomes, and to match this with the educational approach and contextual influences.

Rationale and Data Collection

The program has an admission process that consists of applying for enrolment through the university’s admissions office. The application includes a motivational letter, CV, and transcript of grades, and based on these documents, it is decided which students are invited for an interview with the programme’s faculty. After the academic staff has conducted the interview, the candidates are thoroughly assessed and discussed among the faculty, before a group of applicants is nominated for admission to the study program. In recent years, for the programme’s 35 places, there have been approximately 350 students who have applied, of which around 130 have been invited for an interview, and where most of the remaining have not submitted a letter of motivation.

In order to control for the admission process and motivation of the students, as well as compare against students from higher education in general, the evaluations have been designed to examine four groups of former students: (a) alumni who have completed entrepreneurship education (the program in focus), (b) alumni who applied for the program and participated in interviews, but were not offered a place, (c) alumni who applied but were not invited for an interview, and (d) alumni who have not applied for the program but have a master’s degree from the same university. The evaluations, therefore, include multiple groups that are related and have similar characteristics.
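The four-group design can be sketched as a simple classification rule. This is an illustrative sketch only: the record type and field names are hypothetical, and the treatment of applicants who were offered a place but did not complete the programme is an assumption, since the group definitions above do not cover that case.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UniversityAlum:
    """Admission history of one master's graduate (hypothetical fields)."""
    person_id: str
    applied: bool               # applied to the entrepreneurship programme
    interviewed: bool           # participated in an admission interview
    offered_place: bool         # was offered a place in the programme
    completed_programme: bool   # graduated from the programme


def comparison_group(alum: UniversityAlum) -> Optional[str]:
    """Map an alum to group 'a'-'d'; return None for cases left undefined."""
    if alum.completed_programme:
        return "a"  # completed the programme in focus
    if alum.offered_place:
        # Assumption: offered a place but did not complete; the source does
        # not assign this case to any group, so it is excluded here.
        return None
    if alum.applied and alum.interviewed:
        return "b"  # interviewed but not offered a place
    if alum.applied:
        return "c"  # applied but not invited for an interview
    return "d"      # did not apply; master's degree from the same university
```

Groups (b) and (c) share the applicants' initial interest in the programme, which is what lets the design address self-selection, while group (d) provides the comparison with higher education in general.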

Because there are few students in the individual cohorts (on average 23 students in each cohort in the programme; from the class of 2013, the number of enrolled students increased by 50 percent), it will also be important to gain insight into a larger number of alumni from the program to do good analyses, in accordance with Kirkpatrick’s (1996) implementation guidelines. The evaluations therefore include analyses on alumni in the four groups over the years 2005 (the first graduating class from the programme) until the graduating cohort in 2019. The graduation year 2019 is chosen because 2020 is the last year with the most up-to-date data from the empirical sources and it is desirable to study cohorts that have at least one year’s work experience registered in the data. The sample sizes among the different groups are: (a) 321 alumni, (b) 310 alumni, (c) 1293 alumni, and (d) 38,406 alumni.

To get reliable information about the individuals and their activities, register data is collected from the university, Statistics Norway (national statistical institute responsible for official statistics), and the Norwegian Research Council. This includes data on income, employment, firm ownership, personal status, firm data, address, and demographic variables, as a few examples. More than one thousand different variables are available through Statistics Norway, giving opportunities to investigate multiple relations and developments. However, it should be noted that obtaining such data might require a lot of resources as the data might not be easily accessible. In this case, an immense application process is required, both at the university’s ethics committee and the official bureaus, and the process could potentially last years, depending on the project. This is due to the complexity and privacy regulations, which could hinder access entirely in other situations and contexts.

Meeting Affective Objectives

The first general objective of entrepreneurship education is to increase entrepreneurial spirit, culture, and attitude (Samwel Mwasalwiba, 2010). Through participation in education, the individual gets a sense of belonging in a network of like-minded people, and the individual builds relationships that can be active long after graduation and in relation to the educational institution (Hall, 2011). Having a degree from a given education can also help influence the attractiveness of the individual in the labour market (Hall, 2011). Although entrepreneurship education may result in the individual starting his or her own business in or after the education, the competence of the individual is also attractive to both newly established and established companies (Alsos et al., 2023). As such, alumni from a specific education could benefit from their insights and social network or the attractiveness of their educational background in their careers. Thus, contributing to the community through investments, being on the board of directors, or working in entrepreneurial firms might be a proxy for entrepreneurial spirit, culture, and attitude.

To investigate this objective both behaviourally and in terms of results, multiple viewpoints should be included in an evaluation. The register data can, for instance, be used to explore whether graduating from entrepreneurship education can affect the individual’s choice (opportunities) of jobs, as well as investigate whether the network established in such education affects where the individual works. Working in start-ups (not as a founder) or taking a board role in other alumni’s start-up companies would be clear behavioural activities relevant to the affective objective, which register data could give insights into.

Furthermore, by studying whether alumni from entrepreneurship education work in the same start-up (meaning alumni recruit alumni), contribute to other alumni through investments in the latter’s start-up companies, and whether the companies that are founded to a greater extent are co-located (by postal code), more result-oriented insights can be extracted from the register data. This data could show regional development over time if firms stemming from the education cluster in a specific region.
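Two of the indicators mentioned above, alumni working in the same firm and firms being co-located by postal code, could be computed roughly as follows. This is a sketch under assumed inputs: the function names, the (person, firm) pair format, and the pairwise co-location measure are illustrative choices, not measures prescribed by the source.

```python
from collections import defaultdict
from itertools import combinations


def shared_employers(employment, alumni_ids):
    """Return {firm_id: sorted alumni ids} for firms employing 2+ alumni.

    employment: iterable of (person_id, firm_id) pairs for a single year.
    alumni_ids: set of person ids belonging to the programme's alumni.
    """
    by_firm = defaultdict(set)
    for person_id, firm_id in employment:
        if person_id in alumni_ids:
            by_firm[firm_id].add(person_id)
    return {firm: sorted(people) for firm, people in by_firm.items()
            if len(people) >= 2}


def colocation_share(firm_postcodes):
    """Share of firm pairs registered at the same postal code.

    firm_postcodes: dict mapping firm_id -> postal code (as a string).
    Returns 0.0 when fewer than two firms are given.
    """
    pairs = list(combinations(sorted(firm_postcodes), 2))
    if not pairs:
        return 0.0
    same = sum(1 for a, b in pairs if firm_postcodes[a] == firm_postcodes[b])
    return same / len(pairs)
```

Since the question is whether alumni firms are co-located "to a greater extent", the same share would need to be computed for firms founded by the comparison groups before any conclusion is drawn.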

Meeting Skill-Based Objectives

For the skill-based objective, it has already been shown that students from entrepreneurship education can have various positions and use their skills in new and established firms (Alsos et al., 2023). Kucel et al. (2016) also conclude that entrepreneurship education contributes to skills that help the students find a good job match. Their conclusion builds on the assumption that these students are more prepared for working in a changing environment, and further, that these skills also contribute to higher productivity in such environments. As such, the students with entrepreneurial education obtain better jobs (Kucel et al., 2016). However, how the former students that utilise these skills develop their careers is to a lesser extent explored, although the calls have been many (e.g., Pittaway & Cope, 2007). Moreover, having experience as an entrepreneur from the program could give, for instance, special management skills that could also be of value in an established firm (Alsos et al., 2023).

The behavioural effects on the skill objective can be investigated by examining the individual’s position and the employing firm: whether the firm is newly founded or established, and whether the position is at the management level or similar. The register data give insights into these details and show how individuals are positioned in working life and how this evolves, giving indications of their skill base and skill usage. Such an evaluation of the programme therefore provides insights into the education’s influence on future entrepreneurial efforts in start-ups, indicating whether a more student-directed approach gives students the skills and experience that could make them pursue other start-up initiatives later in their careers.

When investigating the results of the education at the skill-based level, the former students’ positions and firm characteristics, as well as their salary level, can provide indications of how alumni’s skills or profiles are utilised among firms. As found in the work by Kucel et al. (2016), a better job match could give better performance through the efficiency of the alum, which could be reflected in a higher salary or position. By investigating the register data, the value of the competence obtained from the education could therefore be illustrated. This insight could give an indication of the quality of the students from the programme, but also of where the alumni work, showing the variation in the students’ potential jobs and where their competence is valued.

Meeting Start-up Objectives

The last objective of entrepreneurship education is start-up initiatives. As already mentioned, not all students start working in their own ventures upon graduation (Dahlstrand & Berggren, 2010), and the entrepreneurial career might start later in the individuals’ lives (Burton et al., 2016; Marshall & Gigliotti, 2020). While research has investigated the long-term behaviour of students in terms of entrepreneurial activities and business creation (Gielnik et al., 2017; Rauch & Hulsink, 2015), the time frame of these is rather short.

To investigate the behavioural impact of the programme on start-up activities, the starting of new ventures is of interest, especially over time. For instance, it would be of interest whether the students become serial entrepreneurs, meaning that they start multiple sequential ventures over time. The location of the ventures and the industry in which they are initiated would also be of interest, as these could give insights into how students’ educational backgrounds and contexts influence the venturing activity. Hence, by using register data, it is possible to gain insights into these numbers and show the behavioural impact of the education.
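The behavioural measures described above could, under simple assumptions, be computed as in the following Python sketch. It flags individuals who founded ventures in at least two different years (one possible operationalisation of serial entrepreneurship, chosen here for illustration) and computes the number of years from graduation to the first founding. All identifiers, field names, and values are hypothetical.

```python
from collections import defaultdict

# Hypothetical founding records: (person_id, firm_id, founding_year).
foundings = [
    ("a1", "f1", 2012),
    ("a1", "f4", 2017),
    ("a2", "f2", 2013),
    ("a3", "f3", 2014),
    ("a3", "f5", 2014),
]

# Hypothetical graduation years per person.
graduation_year = {"a1": 2010, "a2": 2013, "a3": 2011}


def founding_years(records):
    """Map each person to the set of years in which they founded a firm."""
    years = defaultdict(set)
    for person, _firm, year in records:
        years[person].add(year)
    return years


def serial_entrepreneurs(records):
    """Assumed definition: founded ventures in at least two different years."""
    return {p for p, ys in founding_years(records).items() if len(ys) >= 2}


def years_to_first_venture(records, graduation):
    """Years between graduation and the first founding, per person."""
    return {
        person: min(ys) - graduation[person]
        for person, ys in founding_years(records).items()
        if person in graduation
    }


serial = serial_entrepreneurs(foundings)
time_to_entry = years_to_first_venture(foundings, graduation_year)
```

Under this assumed definition, a person who founds two firms in the same year is not counted as a serial entrepreneur; a different threshold or a definition based on firm exits could be equally defensible.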

While the number of new ventures is easy to investigate, the quality of the firms is also of interest, although this could require other analytical approaches. When investigating the result level of the start-up objective, performance indicators for the individual’s firm could give valuable insight into the impact of the education, especially for firms founded by former students. Specifically, performance measured through the start-up’s growth could give result insights, for instance by using register data to explore capital invested in the individual’s start-ups, financial value creation from the firm’s statements (for growth investigation), and information about government grants and assets as a measure of how well these firms acquire such funds. As such, it is possible to use register data to explore whether the education gives the students a learning experience that has an impact on their entrepreneurial performance as alumni, thereby illustrating a result-level evaluation of the start-up objective.
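One common way to summarise firm growth from annual financial statements is the compound annual growth rate. The sketch below is only an illustration of that calculation; the choice of revenue as the growth indicator and the figures used are assumptions, not values from any register.

```python
def compound_annual_growth_rate(first_value, last_value, years):
    """Return the constant yearly growth rate linking first_value to last_value."""
    if first_value <= 0 or years <= 0:
        raise ValueError("first_value and years must be positive")
    return (last_value / first_value) ** (1 / years) - 1


# Hypothetical revenue per year for one alumni-founded firm.
revenue_by_year = {2015: 1_000_000, 2016: 1_500_000, 2017: 4_000_000}

first_year = min(revenue_by_year)
last_year = max(revenue_by_year)
growth = compound_annual_growth_rate(
    revenue_by_year[first_year],
    revenue_by_year[last_year],
    last_year - first_year,
)
```

The measure uses only the first and last observations, so it ignores volatility in between; it would typically be one indicator among several, alongside invested capital and grants.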

The Benefits and Limitations of Register Data in Impact Research on Entrepreneurship Education

The evaluation examples in the previous section are intended as one step toward a more thorough impact evaluation of entrepreneurship education. As entrepreneurship education increases in numbers and in support from policymakers, being able to investigate the impact of such education is important (O’Connor, 2013). However, given the challenges of self-selection bias, control groups (Rauch & Hulsink, 2015), longitudinal career characteristics, and long ‘time-to-entrepreneurship’ for alumni (Marshall & Gigliotti, 2020), along with vaguely described objectives in entrepreneurship education and in its evaluation (O’Connor, 2013), studies of entrepreneurship education impact need to be designed such that these issues are minimised. The use of register data aims at meeting some of these challenges.

Summary of Example Studies, Their Methodological Approach, and Limitations of the Studies Considering Kirkpatrick’s Evaluation Model (1996).

Register Data and Methodological Issues of Entrepreneurship Education Evaluation

Register data is a good source for handling all three methodological issues identified in the previous sections, given entrepreneurship education programmes like the one described above. The longitudinal data is key to addressing the time-to-entrepreneurship problem and the fact that entrepreneurship in start-ups could occur in phases of an individual’s career (Burton et al., 2016). By using the data collected from official records on the four groups in the case, the results are rigorous and will give insights into the individuals’ actual activities over time. Researchers can therefore investigate different activities and outcomes connected to the individual, giving rich insights into former students’ career paths.

Moreover, by also collecting information about relevant control groups, the issue regarding self-selection is addressed, although this design is not a true experiment with complete randomisation of treatment. The different groups included help in diminishing several methodological problems. First, students’ interest in entrepreneurship is controlled for through two groups: those who have the most motivation and understand that they need to submit a letter of motivation, and those who have some interest in entrepreneurship education and only apply. Second, those who submit their letter of motivation but are not enrolled in the programme are still of high quality and are difficult to separate from those who are enrolled. These two groups can therefore be regarded as equal for most of the groups’ members and can furthermore be used in an experimental design, although there is no complete randomness in the admission process. However, creating full randomness in the application process might cause unintended and unwanted outcomes, in addition to being potentially unethical. Third, the last group is also included as a control group to investigate whether having a master’s degree from a technical university influences the alumni more than the education in focus. As such, the design controls for treatment versus no treatment, as well as for high interest, some interest, and higher education, handling the self-selection and control group problem found in current research (Rauch & Hulsink, 2015).
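To make the group comparison concrete, the following Python sketch computes the share of individuals with a given outcome (here, having founded a firm) in each of the four groups and the difference between the enrolled group and the group that submitted a motivation letter but was not enrolled. The group labels are shorthand introduced for this illustration, and the records are invented placeholders, not findings.

```python
from collections import defaultdict

# Shorthand labels (assumed for this sketch) for the four groups in the case.
ENROLLED = "enrolled"
LETTER_NOT_ENROLLED = "motivation_letter_not_enrolled"
APPLIED_ONLY = "applied_only"
OTHER_MASTERS = "other_technical_masters"

# Hypothetical individual records: (group, founded_a_firm).
individuals = [
    (ENROLLED, True), (ENROLLED, True), (ENROLLED, False), (ENROLLED, False),
    (LETTER_NOT_ENROLLED, True), (LETTER_NOT_ENROLLED, False),
    (LETTER_NOT_ENROLLED, False), (LETTER_NOT_ENROLLED, False),
    (APPLIED_ONLY, False), (APPLIED_ONLY, False),
    (OTHER_MASTERS, True), (OTHER_MASTERS, False),
    (OTHER_MASTERS, False), (OTHER_MASTERS, False),
]


def outcome_rate_by_group(records):
    """Return the share of individuals with the outcome in each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


rates = outcome_rate_by_group(individuals)

# Difference between the treated group and its closest comparison group.
difference = rates[ENROLLED] - rates[LETTER_NOT_ENROLLED]
```

A raw difference in shares is only a starting point; because admission is not randomised, an actual analysis would likely add covariates and a significance test before interpreting the difference as an effect.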

Thus, in terms of methodology, the studies described here align well with Kirkpatrick’s (1996) evaluation guidelines regarding time to behaviour and results, in addition to the inclusion of control group(s).

Register Data and Objectives in Entrepreneurship Education Evaluation

In terms of objectives, the previous sections show that register data can contribute to evaluating entrepreneurship education. However, strictly investigating one outcome measure might not give insights into the full impact of the education. Students could be influenced differently by the same offerings (Longva & Foss, 2018), and as such, evaluations should investigate several measures to understand entrepreneurship education’s impact. As an example, the objective of improving students’ affective characteristics could also influence the creation of jobs and start-ups, although indirectly through investments, board participation or other support activities, for instance through supporting their former education (Antal et al., 2014). Hence, looking at job creation alone might not give a thorough understanding of the impact of the education. At the same time, investigating affective changes might not give insights into start-up creation, and skill changes say nothing about the quality of potential start-ups or whether these skills are utilised in entrepreneurial activities post-graduation.

Therefore, by designing evaluation studies with different objectives in mind, in addition to considering the context and educational design, the programme’s impact can be evaluated more rigorously. The programme used as an example in this paper aims at creating business developers with special experience within technology-based business development. Alumni from this programme might therefore work in established firms or in start-ups created by themselves or others, but might also contribute to business development by participating in boards, investing, or advising other entrepreneurs. Hence, solely looking at one of these outcomes will not give a full impression of the programme’s impact, and I therefore recommend investigating multiple objectives with multiple outcome measures. As such, the different objectives of entrepreneurship education need different forms of evaluation at the levels of Kirkpatrick’s (1996) model, and to capture the potential outcomes at various levels, different ideas, approaches, and designs are needed.

Limitations of Register Data in Entrepreneurship Education Evaluation

Regarding limitations, when looking at Kirkpatrick’s (1996) implementation guidelines, a pre-evaluation of results is difficult, if not impossible, for some of the objectives in entrepreneurship education, as most individuals in this context have never worked or been entrepreneurial through starting new ventures. As such, expecting students to have high results prior to the education might be too ambitious, although it is not out of the question. The affective objectives in particular could show a change in results, for instance in investments in start-ups (equity crowdfunding, as a possible example). Thus, there might be situations where students’ result indicators change from before to after an education, but for the skill-based and start-up objectives, assuming students are in their early to mid-twenties, this might be less likely. However, for situations where the students are more likely to have had entrepreneurial activities prior to the education in focus, the register data could also be gathered and analysed for the period before the educational efforts. The complexity of the analysis would increase in such situations, depending on the age of the students and their prior careers.

For the behavioural evaluation level in Kirkpatrick’s (1996) model, a study using register data will lack information from the individual in focus and from their colleagues, partners, and similar. While the previous issue with pre-post result analysis could be handled, and in some instances is irrelevant, this second issue is more problematic, at least when evaluating the individual, as is the case in this paper’s examples and in Kirkpatrick’s (1996) model. While register data give rigorous results and insights into the individual over time, a critique of using register data alone is that they might be limited in explaining beyond the empirical level. Without interviews or surveys with the individual, their colleagues, partners, and similar, it is difficult to understand the individual’s actions and what is causing them. To understand the outcomes’ connection to the education, the use of mixed methods and follow-up studies is recommended. Building theoretical contributions from the empirical data alone might be difficult, for instance, on an alum’s employment in other alumni’s start-ups, but combining these data with qualitative investigations might help us understand the phenomenon. Using register data alone shows us the magnitude and difference between groups, indicating an impact on various outcome measures; understanding the magnitude and difference requires us to re-engage with our former students. Hence, if we would like to build theory when investigating entrepreneurship education at the individual level, register data might give us insights into the what and how; the why might need additional data (Whetten, 1989).

Conclusion

This paper has, by showcasing the use of register data in evaluating entrepreneurship education, illustrated the possibilities and limitations of such data sources. By using register data, it is possible to evaluate different objectives in entrepreneurship education and cope with the common limitations found in the literature, especially if the programme has an admission process like the case study presented here. Utilising the admission process, if there is a high number of applicants, can reduce the problem with self-selection and interest in entrepreneurship by comparing alumni from the programme with those not enrolled. Register data can also give insights into long-term developments of entrepreneurship education alumni, which is needed as entrepreneurship takes time and could occur later in the alumni’s careers. Impact evaluation through register data could therefore move beyond short-term impact and show the actual behaviour and results of entrepreneurship education, which is lacking in previous research (Nabi et al., 2017). However, register data can simultaneously indicate lower-level impact, for instance through affective measures. Thus, register data can indicate the impact of entrepreneurship education on the common objectives found in these educational offerings – affective, skill-based and start-up objectives – at both the behavioural and the result level of Kirkpatrick’s (1996) model. As such, available register data can serve as a source for evaluating entrepreneurship education, giving programme managers or other stakeholders an alternative to surveys and other data collection that might require resources in development and continuous maintenance. It must be said, though, that collecting and analysing register data could be a substantial undertaking, both in the access applications, as previously mentioned, and in the potential complexity of working with such (potentially) multidimensional datasets. Therefore, having deep insights into the programme and its context is necessary to ensure that available register data can serve as a source of impact evaluation.

However, while register data can give valuable, reliable, and rich information on the activities of alumni from entrepreneurship education, potentially showing examples of outcomes at both the behavioural and the result level for multiple objectives, this paper identifies three limitations in using register data. First, while the objective is of utmost importance when evaluating, focusing on one outcome measure for one objective, as an extreme, gives limited insights into the impact. Focusing only on start-up creation might give a skewed insight into entrepreneurial activity. Hence, using multiple outcome measures is needed to explore the multiple opportunities and outcomes entrepreneurship education might provide.

Second, an important limitation of using register data is the lack of insights from colleagues, managers, peers or similar on the individual’s behaviour, and even the individuals themselves, which is an important part of Kirkpatrick’s (1996) third level of evaluation. While this level focuses on change in behaviour in a pre-post situation, often in a firm with expected changes due to a training programme, using the individual and close contacts in linking the learning to behaviour could be of high value and importance for understanding and developing the programme.

Third, and building on the former, although register data is a good source to investigate the individual’s historical activities – exploring variables and their relation – understanding and explaining the events could prove difficult. That is, why the individual behaves as the data show could be impossible to explain. Using register data as empirical proof could be meaningful and important in many instances, but building theoretical insights into the former students’ results from the education – their learning and development – could meet resistance unless additional data is included. For such studies, using a mixed-methods approach could be beneficial, for example by including interviews or surveys, answering both what entrepreneurship education could lead to and why this is the case.

While this paper gives some suggestions and identifies benefits and limitations when using register data, it is important to mention that the case study used here is a specific entrepreneurship education programme in Norway. While many programmes are similar, their objectives, context, students, and design might differ slightly, which could require their own or different ways of designing evaluations. As such, while that is a limitation of this work, it is also an example of this paper’s conclusion that entrepreneurship education programmes with different objectives often need their own evaluation methods.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.