Abstract

Research into entrepreneurship education is on the rise, yet the assessment of causality resulting from various teaching methods and their impacts on students and society at large remains limited. Consequently, incorporating experimental designs into entrepreneurship education research becomes imperative to move the field forward. Establishing cause-and-effect relationships, discerning which approaches yield desired outcomes (and which do not), contributes to a clearer understanding of the connections between educational activities and their results. This special issue underscores the importance of experiments as a methodological tool for showcasing causality. In this editorial, we expand on the reasons for the need for more experiments within the field. Additionally, we feature an interview with Dr. Thomas D. Cook, a pioneer in education research experiments, and offer an overview of the articles included in this special issue. We finish with a shortlist of tips for anyone interested in conducting experiments.

Introduction

One of the biggest challenges confronting entrepreneurship education research is the analysis and assessment of causality reflected in the relationships between pedagogical methods, approaches, and outcomes for the students and broader society. While the relationship between cause and effect is critical in educational settings, the multiple factors and variables that emerge in the classroom and the broader educational context bring additional complexity into play, and most of the research designs used in entrepreneurship education still fail to analyze such causal relationships. Despite the challenges associated with assessing causality, entrepreneurship education research urges us to take a firm step forward in understanding more clearly the patterns of relationships between causes and effects. To advance the field in such a direction, scholarly work in entrepreneurship education would benefit from adopting experimental research methods.

This concern sparked the current special issue. A few years ago, the guest editors of this special issue gathered around the urgency of bringing experiments as a research method to entrepreneurship education. While experiments have been the focus of special issues published in leading entrepreneurship and management journals (Anderson et al., 2019; Stevenson et al., 2020; Williams et al., 2019), the conversation is still much quieter in the field of entrepreneurship education. As scholars and educators who also assume leadership roles in two prominent organizations that support entrepreneurship and entrepreneurship education research (USASBE - United States Association for Small Business and Entrepreneurship; and ECSB - European Council for Small Business and Entrepreneurship), we found support for the need to launch a call for experimental studies in entrepreneurship education. The nature and scope of USASBE’s Entrepreneurship Education & Pedagogy, the leading journal in entrepreneurship education, made it the most fitting “home” for this important conversation. Beyond the evident fit with the topic of this special issue, this also represented an important opportunity to strengthen the ties of collaboration between USASBE and ECSB members.

This special issue pertains to advancing the field of entrepreneurship education through experiments as a methodological toolkit that can demonstrate causality. In this editorial, we aim to a) reflect on the reasons why such conversation is necessary for the advancement of the field; b) share an interesting interview that we had the opportunity to have with the “father” of experiments in education research, Dr. Thomas D. Cook; and finally c) provide an overview of the articles that are part of this special issue. As we will describe in this editorial, the journey to realize this special issue was long and rich with several fruitful discussions along the way.

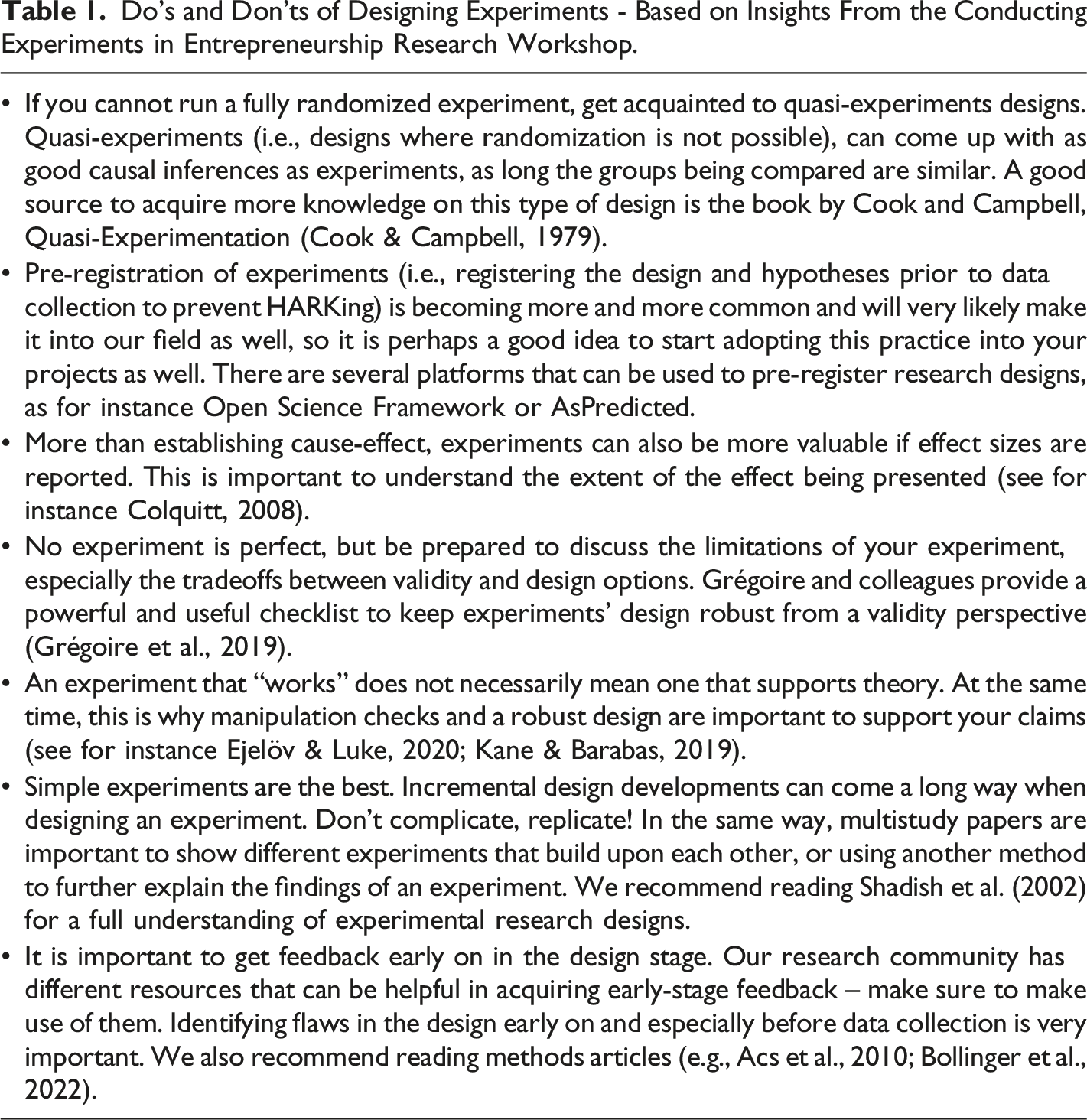

While managing this special issue, we organized four meetings with prospective authors, colleagues and experts in experiments, each of which was critical to sparking conversation and discussion around the theme of experiments in entrepreneurship education. At the end of this editorial, we present a summary, compiled during these meetings, of the main recommendations and suggestions from experts in the field on how to successfully conduct experiments in entrepreneurship research. It is our hope that this special issue is the beginning of a larger discussion around the need for conducting research using experimental designs in entrepreneurship education research.

The Need for Experiments in Entrepreneurship Education Research

Understanding entrepreneurial activities is a complex endeavor. Such activities reflect a diversity of heterogeneous characteristics and dynamic patterns (Lichtenstein et al., 2007). Hence, establishing the nature of causal relations between what determines entrepreneurial activity and its outcomes is a difficult challenge. Experimental designs provide a powerful lens to determine causality (Colquitt, 2008) and have been labeled “the most rigorous of all research designs” (Trochim, 2001, p. 191).

Interest in such designs is growing in the areas of entrepreneurship and small business, as they are seen as a necessary means of effectively isolating certain variables and understanding their roles in causal relations. Indeed, over the last decade, several calls for more experimental designs in entrepreneurship research illustrate that the scholarly community recognizes that experiments have an important contribution to make in advancing the field (e.g., Williams et al., 2019). Scholars have also created communities, networks and activities to support conducting experiments in entrepreneurship research. For instance, the Annual Meeting of the Academy of Management has held several sessions about conducting experiments in entrepreneurship research; and in Europe, the Conducting Experiments in Entrepreneurship Research Network (supported by ECSB) has now organized four editions of the annual online workshop to support researchers working with this method. The proliferation of these initiatives demonstrates that scholars recognize the importance of experiments as a research method in entrepreneurship and are joining forces to bring more of this method into our field.

The observation of a cause-effect relationship is crucial in entrepreneurship education and training. Scholars have long dedicated efforts to understanding the effects of entrepreneurship education on competence development, reflected on the best practices for entrepreneurship education and conducted comparative studies to understand the impact of education on different groups of individuals (Fayolle et al., 2006; Fayolle & Gailly, 2015). Yet recent reviews (e.g., Schenkel et al., 2023) reveal that the state of extant scholarly efforts remains fragmented, raising significant questions and concerns around insight aggregation and generalizability. Additionally, universities and other institutions invest significant resources in entrepreneurship education in hopes that it stimulates entrepreneurial interest and behavior among students and in the broader local, regional and national ecosystems.

Collectively, these observations suggest that the need to employ experimental designs in testing the effects of entrepreneurship education programs and activities is urgent and essential to measure the impact of pedagogical approaches. Despite the benefits of using experimental designs to establish causal relationships, this research design is still rare in the entrepreneurship field in general (Williams et al., 2019) and in entrepreneurship education specifically (Blenker et al., 2014). While entrepreneurship education has a central role in defining policy agendas to better support entrepreneurial activity and entrepreneurs (e.g., the European Commission designed the Entrepreneurship Education agenda), questions persist on how and why it affects individuals’ entrepreneurial activities (von Graevenitz et al., 2010). Beyond investigating the effects of educational interventions in entrepreneurship, experiments can address other pressing issues of the field. Next, we present three examples.

First, while experiential learning is pointed out as a beacon in entrepreneurship education (e.g., Neck & Corbett, 2018), research continues to lack an understanding of how different types of knowledge derive from experiential approaches (Hägg & Kurczewska, 2020). Experimental designs offer an important advantage in uncovering such relationships, as they allow researchers to isolate variables as predictors of specific outcomes and thus assess how experiences in the classroom result in different aspects of knowledge (e.g., competencies, skills, intentions, attitudes, or perceptions).

Second, entrepreneurship education research recognizes that students’ idiosyncrasies are important when designing educational interventions (e.g., Costa et al., 2018). However, prior research attributed inconclusive results about the effects of entrepreneurship education on students’ competence development to students’ homogeneity (Shneor et al., 2020). Because randomization is a fundamental aspect of experimental designs, researchers can assume that the groups being compared are equivalent on average and can therefore test the effects of an intervention in a robust manner. Alternatively, experiments can also compare groups of individuals with different characteristics, or in specific educational settings or disciplines (Kleine et al., 2019). In this way, experiments can address the sample homogeneity issue.

Third, the majority of studies (including experiments) on the effects of entrepreneurship education on the development of entrepreneurial competencies focus predominantly on the positive outcomes of educational interventions. However, scholars have been calling for more studies that challenge the dominantly positive view of entrepreneurship education, arguing for a need to "unsettle" the field (Berglund et al., 2020) and questioning the "dark side" of entrepreneurship education (Bandera et al., 2020). Because experiments are the best way to establish causality, they should also be a preferred method to test such adverse effects of interventions.

In sum, using experiments to establish causal relationships in entrepreneurship education is important for three reasons. First, it allows isolating certain variables as predictors of certain outcomes. Second, it would move the field forward by going beyond the inherent limitations of (single group) pre- and post-test designs – which are currently predominant in entrepreneurship education literature – by including more rigorous, full experimental designs using both experimental and control groups. Third, it enables looking at the effects of pedagogical interventions with different groups of individuals.
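The second reason can be made concrete with a small simulation. The sketch below is illustrative only: the scores, the size of the background improvement ("maturation"), and all function names are hypothetical assumptions, not data or methods from any study in this special issue. It shows how a single-group pre- and post-test design can attribute an improvement that every student would have shown anyway to the course, whereas comparing gains against a randomly assigned control group removes that bias.

```python
import random
import statistics


def simulate_gains(n_students=2000, true_effect=0.0, maturation=5.0, seed=42):
    """Return pre-to-post score gains for randomly assigned groups.

    All numbers are hypothetical. `maturation` is an improvement that every
    student shows over the term, whether or not they take the course.
    """
    rng = random.Random(seed)

    # Random assignment: shuffle student ids and treat the first half.
    ids = list(range(n_students))
    rng.shuffle(ids)
    treated = set(ids[: n_students // 2])

    gains = {"treatment": [], "control": []}
    for student in range(n_students):
        pre = rng.gauss(50, 10)
        effect = true_effect if student in treated else 0.0
        post = pre + maturation + effect + rng.gauss(0, 5)
        group = "treatment" if student in treated else "control"
        gains[group].append(post - pre)
    return gains


def single_group_estimate(gains):
    """Single-group pre/post design: mean gain of the treated students only."""
    return statistics.mean(gains["treatment"])


def controlled_estimate(gains):
    """Experimental design: treated gain minus control gain."""
    return statistics.mean(gains["treatment"]) - statistics.mean(gains["control"])


if __name__ == "__main__":
    gains = simulate_gains(true_effect=0.0)  # the course has no effect at all
    print("single-group estimate:", round(single_group_estimate(gains), 2))
    print("controlled estimate:  ", round(controlled_estimate(gains), 2))
```

With a true effect of zero, the single-group estimate lands near the assumed maturation value, while the controlled estimate lands near zero. The same logic applies to any influence that affects both groups equally between the two measurements.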

The advantages of using experimental designs in entrepreneurship education research do not come without significant challenges. While randomization of participants is key for designing experiments, it is not always possible for various reasons. Therefore, researchers often have to design alternatives to test their educational interventions. For this reason, quasi-experiments are often a safe alternative for testing cause-effect relationships in this field (Shadish et al., 2002). Additionally, certain educational interventions aim to bring students or potential entrepreneurs to the same level as experienced entrepreneurs in a given competence or skill (e.g., developing one’s entrepreneurial intentions or entrepreneurial mindset, among others). In these cases, a multiple group analysis and comparison with the entrepreneur population is often necessary, though sampling difficulties make these hard to achieve. In contrast, more recent types of educational interventions focus on simulations (Fox et al., 2018), and experiments would enable researchers to explore more clearly the effects of such interventions and how close to reality they are. These challenges call for an urgent conversation about best practices and ethical considerations when using experiments in entrepreneurship education, which this special issue also needs to cover. To that end, we sought out and had the honor of interviewing Dr. Thomas Cook, recognized as the father of experiments in education research, for the perspective gained through his many years of experience. In the next section, we present transcripts of this inspiring conversation.

Learning from an Expert: Insights from an Interview with the Father of Experiments in Education Research Dr. Thomas D. Cook

Whereas the entrepreneurship field does not have a long tradition in conducting experiments, other disciplines such as sociology, psychology and education have been using this method since the 19th century. Dr. Thomas D. Cook is a Professor Emeritus of Sociology, Psychology and Education at Northwestern University, and is recognized as the father of experiments. To gather more insights about the best practices and ethical considerations on experimental research, Sílvia Costa had the privilege to interview Dr. Cook in the context of this Special Issue.

Dr. Cook has conducted extensive work on experiments as a research method – more than 200 publications, including the well-known book Experimental and Quasi-Experimental Designs for Generalized Causal Inference (co-authored with Shadish and Campbell) that is widely recognized as a common guide for anyone interested in designing experiments. Dr. Cook has also done extensive work on the use of experiments and quasi-experiments to test the effects of educational approaches and practices, published in leading journals such as Review of Educational Research, Annals of the American Academy of Political and Social Science, Journal of Policy Analysis and Management, Educational Evaluation and Policy Analysis, and Nature Human Behaviour, among many others. His insights on conducting experiments in educational settings have been groundbreaking and, we believe, offer value to the field of entrepreneurship education as well.

In this interview, we gathered insights on three main topics: a) how can we promote a cultural shift within the entrepreneurship education research field towards a larger interest in experiments?; b) what are particular challenges in conducting experiments in the field of entrepreneurship education that we should be aware of?; and c) are there any specific ethical concerns in conducting experiments that we need to consider? Next, we present a transcription of what we considered the most relevant parts of this interview.

Promoting a Cultural Shift Within the Entrepreneurship Education Research Field Towards a Greater Interest in Experiments

In American education, the cultural shift towards randomized experiments has been heavily financed by the US Department of Education which has spent, I would guesstimate, at least $2 billion over the last two decades in the promotion and execution of randomized experiments. A separate arm of the Department of Education was even established, called the Institute of Education Sciences, whose initial mandate was to promote school-based randomized experiments. Universities and contract research firms were enlisted to conduct such experiments in anticipation that their results would be communicated to educational researchers, school officials, the general public, and media professionals and would lead to school change. Just how much change occurred because of the experimental findings generated is not clear; clearer, though, is that most (but not all) studies showed that the interventions examined had no measurable impact – a result that was considered more valid and credible than if other forms of research had been conducted to assess program impact. The same evolution occurred in France, though on a much smaller scale. Initially, there was strong resistance to randomized experiments, probably due to the long French heritage of “planification” and its central assumption that substantive theory and experience-based thinking would identify effective program design and implementation, thus obviating the need for any independent test of efficacy of the kind that randomized experiments provide. This belief in planning was so strong that it permeated the public sector as a whole, not just education. More recently, though, La Cour des comptes has noted the limits of planning and has advocated for more randomized experiments, has increased the number of evaluation workshops for educators throughout the country, and has funded a center for evaluation at the Ecole des Sciences Politiques that embeds the advocacy of experiments within a mixed method ideology.
All this is still a work in progress, however, and implementation of the random assignment agenda still lags behind the US case. In Germany, the experimental agenda has been increasingly advocated, particularly by psychologists, but it has not been widely implemented despite intermittent advocacy. Absent seems to be strong, coordinated support from the educational or larger political establishment. The US investment in experiments includes funding national evaluations by contract research firms, smaller grant-based evaluations by individual educational researchers, creating a professional research organization and journal, and organizing many training workshops about the logic and practice of experiments. Also salient has been creating a digital library, called the “What Works Clearinghouse”. It archives the results of thousands of educational experiments in a way that is meant to make them easily available to all stakeholders, including teachers, researchers, school board officials, and state and national decision-makers. All are supposed to be able to use the stored information about what works to increase, in their own way, the odds of improving the achievement of American students. Such a process has surely sometimes resulted; but since randomized experiments have more often identified planned interventions that do not work as opposed to those that do work, the clearinghouse also helps point to interventions that are not likely to be worth pursuing. Understandably, some researchers now want to “shoot the experimental messenger” rather than admit to the limited reach of the new ideas that were tested, whether derived from educational theory, ruminations on practice, or imagination. Other researchers prefer to highlight the complicated processes of adaptation that are needed to go from theory, single experiments, and small meta-analyses to very general and heterogeneous practices that cannot themselves be reasonably tested at the usual experimental scale.
When experiments do not produce the hoped-for results, decision makers have to rely on professional judgment, even while admitting that this may be a logically inferior approach. Why do most randomized experiments show what doesn’t work rather than what does? It may be because most of the interventions tested to date involve only marginal differences from current practice; they are not aimed at fundamental change. Typically implemented is a study to examine the consequences of tweaking one element of the total reading process, or one element of the math process, or one element of teacher training. If the interventions entail a small difference from current practice, is it realistic to expect large effects to be detectable? When experimentation began in earnest in education (and other social science fields) about 50 years ago, one motto was: “Only test bold ideas”, implying that it wastes precious resources to evaluate minor changes that are likely to result in only minor impacts. Such advice has gotten lost, but it was one salient original impetus for conducting social experiments.

Another way to promote the experimental agenda is to emphasize the logic of randomized experiments. It is quite easy to demonstrate that if you have two groups that are initially equivalent to each other but are different later after an intervention has been applied to only one of them, then the final obtained difference might be due to the intervention but cannot be due to the groups being differently composed initially or to them being otherwise differently treated. Just as important is to show how alternative methods of reaching this conclusion are prone to bias, sometimes leading to false positive and sometimes to false negative findings. It helps, of course, to have several concrete case studies that show such bias occurring in easily understood contexts. This way, people will quickly understand the logic of randomized experiments, and why this logic is to be preferred to other ways of gaining causal knowledge.

Challenges in Conducting Experiments in the Field of Entrepreneurship Education

The companies that now do experiments all the time are the big tech ones, less so small start-ups. This is because the Microsofts and Googles of the world can invisibly embed experiments within their routine and profitable procedures multiple times a day. In their case, each impact they obtain is tiny, but the total impact over many such tiny objects of study and hundreds of millions of users is considerable. Very precise estimates of very many small differences can have major implications, limiting the adage: “Only evaluate bold ideas”. Whether random assignment was used much when these companies were small and under truly entrepreneurial control is a moot point. Today, such experiments happen in established firms that are the product of entrepreneurship rather than subject to entrepreneurial efforts. But all entrepreneurs need to think that their model works, and that evidence of its effectiveness may help persuade others of this, whether funders or even the general public. Thus, if they could see that their experiments, or those of academics who study entrepreneurship, demonstrate the value of entrepreneurship, then this is evidence they would like to have to complement what they would otherwise have. Everybody likes to have more support for their ideas and to anticipate less opposition to them. That’s the angle for public acceptance of the idea of entrepreneurship, I think.

Ethical Aspects of Conducting Experiments in Entrepreneurship Education Research

A second thing to remember is the ethics of not doing an experiment. If you don’t do an experiment, for fear of inequality or other ethical concerns, then you will never know with as much certainty that the intervention works. And if you use an alternative weaker causal method and conclude the intervention works when it does not or claim that it does not work when it does, then you run a great risk if the intervention is widely adopted and have wasted much time and resources. The ethics of not giving people an intervention has to be weighed against giving it but providing the wrong conclusion because of the non-experimental method used.

To explore causal explanatory process or causal contingencies requires adding the requisite measures before an experiment begins and observing them especially during the period between when the cause is in place and the final impact is assessed. The analysis then seeks to identify whether the impact is stronger among those who underwent the hypothesized causal process than among those who did not. Within limits, this allows you to rule out some processes as irrelevant because the data don’t support them, and others as continuing explanatory contenders because they are not inconsistent with the obtained data pattern. A major problem here, of course, is that by measuring these intervening processes, one is intruding into the randomized experiment, and so one could ask oneself: “would I get the same results in the randomized experiment if there were no measurement of these processes?” To measure possible intervening processes reduces external validity, lowers the generalizability of the core causal knowledge obtained. A second problem is that the knowledge gained about processes or other causal contingencies is bound to entail a less secure inference than the inference about causation that the RCT permits. In much basic science, knowledge of explanatory processes occurs, not within single studies, but over a period of programmatic research aimed at gradually refining the process knowledge. If one speculates about a mechanism that might mediate a given causal connection, it can then be deliberately manipulated in a future study to establish its effects. If the explanation is still incomplete one can enlarge the program even further by conducting yet another study, gradually moving along a path that probes one explanation after another, a valuable (but expensive) way in which science is supposed to proceed. 
Notwithstanding, some people have argued for doing larger experiments with more people, more schools, more times or whatever in order to assess sources of variation in effect size. To do this, the analyst essentially creates distinct subgroups from the data and estimates differences across these groups. Or the analyst collects potential explanatory data at times between the treatment onset and the outcome measurement. Both strategies are expensive since more of the research budget is spent on measurement and broader sampling frames. And so, the big question tactically is: Is it better to fund fewer but larger experiments, with extensive intermediate process measurement and analysis, or to fund more experiments that permit more interventions to be tested on smaller and more homogeneous samples with less probing, if any, of causal contingencies and other explanatory processes? That is a problem some decision makers struggle with, making it seem naïve to call reflexively for “mixed methods”.

[end of content from the interview]

Reflecting on the insights from the interview

The interview with Dr. Cook contributed to our understanding of how experiments are important in research and especially in education research, with decisive insights for entrepreneurship education. We distill three key points from this interview. First, legitimizing experiments in entrepreneurship and entrepreneurship education research is important and can be achieved by looking at other fields where this method has been well established. This is particularly relevant given the resources invested and the different initiatives being developed at universities and by governments and institutions to stimulate entrepreneurial behaviors. Experiments have the power to help understand which interventions work towards an intended outcome and which do not. This knowledge can also be well documented and made accessible to the general public, which strengthens the translation of research into practice and helps educators across schools to draw on this knowledge when implementing interventions in their schools. Not only researchers and educators but also entrepreneurs themselves need to become aware of the benefits of experiments. For example, experiments help demonstrate not only that their products work but also, at a higher level, that entrepreneurship itself generates certain desirable effects on individuals and society. Overall, running experiments with potential and actual customers to test hypotheses is part of the entrepreneurial (and intrapreneurial) journey, so bringing that practice to entrepreneurship education is necessary. If entrepreneurship educators task their students with conducting experiments and testing their products and services with potential customers, they must also practice what they preach and develop experiments to test the effectiveness of their pedagogical practices.

Second, this shift, however, may face a challenge when we consider the ultimate goal of implementing entrepreneurship education programs and interventions. Experiments will be able to test particular effects of educational interventions at the individual level (e.g., knowledge gained, attitudes and intentions developed, skill improvement etc.); however, experiments will not speak to macro effects, such as the impact of entrepreneurship education activities at the societal and economic level (e.g., number of new startups created, regional and national economic growth, job creation, etc.). Therefore, the challenge for researchers after establishing experiments as a gold standard methodology in testing educational interventions in entrepreneurship, is to find innovative and impactful ways of showing that these cause-effect relationships lead to outcomes which go beyond the results seen at the individual student level after these interventions.

Third, we were both inspired and worried by the notion of having to consider the ethical aspects of not conducting an experiment to assess the effects of educational interventions about and within entrepreneurship. Indeed, without establishing with certainty the cause-effect relationship between such educational interventions and their outcomes, there is a high risk of wasting resources and implementing pedagogical approaches that are ineffective in achieving the desired learning outcomes for students. Additionally, experiments have the potential to explain not only cause-effect relationships but also the mechanisms through which an effect is created.

The articles in this special issue

In their article entitled “Are Practice-Based Pedagogical Approaches Useful for Nascent Entrepreneurs Students? Results of an Experiment Conducted in Morocco” Siba Koropogui, Etienne St-Jean, and Safaa Zakariya examine the impact of a training program on key outcomes identified in the extant entrepreneurship education research. Specifically, drawing on a split sample comprised of nascent entrepreneur students and a control group with random assignment, they use a pre- and post-test design to investigate the relationship between program participation and the change in knowledge, entrepreneurial skills and competencies, attitude, self-efficacy (ESE), intention and behavior.

The editorial team believes that entrepreneurship scholars and educators will benefit from this investigation in at least three important ways. First, it highlights the importance of carefully considering the role circumscription can and needs to play in pedagogy and training design. Specifically, the findings illustrate the importance of pedagogical content alignment toward fostering knowledge, self-efficacy, and nascent behavior. Second, and closely related, they also illustrate the challenges associated with employing experimental techniques effectively – that is, as Dr. Cook notes, working to balance substantive issues like the desire to see immediate impact against having little or no knowledge that a given intervention is effective prior to conducting an experiment. Another challenge is the need to address unanswered yet important questions around the time variance of programmatic impacts. Third, the finding that the control group made more progress than the experimental group in advancing their attitude toward entrepreneurship and, to some extent, their relative entrepreneurial knowledge exemplifies an important, counterintuitive ethical question of whether current pedagogical approaches to training might be more harmful than “doing nothing”. Indeed, what makes this finding particularly interesting is that it runs counter to the seemingly ubiquitous assumption that all training is likely to be positive in its influence.

Each of these aspects suggests opportunities for future researchers to consider as they seek to use and leverage experimental approaches and methods to advance enduring theoretical debates.

In the article “Team formation strategies among prospective entrepreneurs – evidence from a large-scale survey experiment,” Arendt examines the influence of team formation strategy on prospective entrepreneurs’ attraction toward venturing. Using a quasi-experimental vignette design with a sample of business and entrepreneurship graduates, the author assesses how team formation strategies affect venturing and co-founding attraction.

This innovative work brings together the literature on entrepreneurial teams and entrepreneurship education through the use of an experimental design. The author explores how different types of team formation strategies (resource-seeking strategies, interpersonal attraction strategies, and dual strategies; Lazar et al., 2020) affect venturing and co-founding attraction. Importantly, the author also explores the moderating role of entrepreneurship education in this relationship.

The main findings have implications both for research on team formation strategies and attraction toward venturing and co-founding, and for entrepreneurship education. First, the different team formation strategies relate to attraction toward venturing and co-founding in distinct ways. Specifically, resource-seeking and dual strategies are both positively related to venturing and co-founding attraction, while the interpersonal attraction strategy is not. Second, the moderating effect of entrepreneurship education on the strategy-attraction relationship depends on the strategy. More specifically, entrepreneurship education graduates exhibit higher levels of venturing and co-founding attraction than non-entrepreneurship education graduates under the resource-seeking strategy condition, but the two groups do not differ under the interpersonal attraction condition.

One of the distinctive characteristics of this study is its between-subjects quasi-experimental approach, which uses vignettes to manipulate team formation processes in the context of entrepreneurship education.

The results of Arendt’s study suggest that entrepreneurship education programs should teach team formation strategies, supported by exercises, early and throughout, because team formation strategies matter and most entrepreneurial ventures are started by teams.

We hope readers will be inspired and encouraged by this work and will continue exploring the intersections between entrepreneurship education and team formation strategies through experimental research designs.

In their article “Using experimental designs to study entrepreneurship education: A historical overview, critical evaluation of current practices in the field, and directions for future research,” Basil Englis and Arjan Frederiks examine the development and use of experiments as a research method in the field of entrepreneurship education. Specifically, they review the existing literature on entrepreneurship education research that employs experimental designs to provide readers with a comprehensive overview of the field and identify areas for further research. We as editors believe that researchers in the field of entrepreneurship education will greatly benefit from this overview and critical look at experiments as a somewhat neglected but indeed very valuable method to advance the field.

The use of experiments in the study of human behavior has contributed significantly to our understanding of various phenomena, including entrepreneurship education. Specifically, a growing number of experiments offer unique insights into the effects of educational interventions on entrepreneurial outcomes. The authors' comprehensive literature review covers extensively researched areas as well as those that remain relatively unexplored. The authors intend to encourage future researchers to fill these research gaps and thereby expand the frontiers of knowledge in entrepreneurship education.

In addition to the review, the paper critically evaluates previous experimental studies in entrepreneurship education research. In doing so, the paper provides valuable insights and recommendations to improve the quality and rigor of experimental designs. Building on this evaluation, the paper proposes a future research agenda for experimental designs in entrepreneurship education. First, the authors make a case for theory-driven experiments that draw on and test existing theoretical frameworks. They argue that the way the impact of existing educational programs or elements has been tested limits theoretical development in the field. Instead, they advocate developing treatments based on theoretical concepts of interest. The authors also emphasize the importance of including manipulation checks to verify that the theoretical constructs of interest have been successfully activated.

Second, the study suggests that future research should address more challenging research questions and thereby raise the bar for the field. For example, by including moderators, mediators, multiple independent variables, and multiple levels of variables, researchers can make a greater theoretical contribution.

Third, Englis and Frederiks emphasize the need for more robust experimental designs. They encourage researchers to isolate variables of theoretical interest and test specific theoretical concepts rather than comparing complex treatments. They also recommend the use of active treatment or placebo control groups to mitigate potential confounding effects.

We, the editors, highly recommend reading this paper as it makes a significant contribution to this special issue by highlighting the need for experimental and quasi-experimental designs in entrepreneurship research. It provides insights into the causal relationships studied to date and highlights areas for more advanced future research. We trust the results of the investigation will guide entrepreneurship education practice and strengthen the empirical evidence base.

Conclusion

Entrepreneurship is a relatively recent independent and legitimate field of research within management studies. Randomized experiments are not yet very common in our field, and even less so in entrepreneurship education research. This special issue is the culmination of several initiatives, conversations, and discussions on the need to bring more experiments into entrepreneurship education research.

Do’s and Don’ts of Designing Experiments - Based on Insights From the Conducting Experiments in Entrepreneurship Research Workshop.

We truly hope that this special issue sparks readers’ interest in learning more about experiments and encourages them to start implementing experiments in their own entrepreneurship education research.

Acknowledgements

We would like to thank Ulla Hytti, Christoph Winkler, and Eric Liguori for their invaluable support and guidance during the editorial process of this special issue. We would also like to express our gratitude to our interviewee, Thomas D. Cook, for granting us this interview and for his guidance during the writing process of this article. Finally, we thank all the colleagues who have been active members and facilitators of the Conducting Experiments in Entrepreneurship Research Workshop, from whose advice we have derived important guidelines at the end of this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.