Abstract

Background:

Artificial intelligence (AI) platforms, such as ChatGPT, have become increasingly popular outlets for the consumption and distribution of health care–related advice. Because of a lack of regulation and oversight, the reliability of health care–related responses has become a topic of controversy in the medical community. To date, no study has explored the quality of AI-derived information as it relates to common foot and ankle pathologies. This study aims to assess the quality and educational benefit of ChatGPT responses to common foot and ankle–related questions.

Methods:

ChatGPT was asked a series of 5 questions: “What is the optimal treatment for ankle arthritis?” “How should I decide on ankle arthroplasty versus ankle arthrodesis?” “Do I need surgery for Jones fracture?” “How can I prevent Charcot arthropathy?” and “Do I need to see a doctor for my ankle sprain?” Five responses (1 per question) were included after applying the exclusion criteria. The content was graded using DISCERN (a well-validated informational analysis tool) and AIRM (a self-designed tool for evaluating AI-generated responses to medical questions).

Results:

Health care professionals graded the ChatGPT-generated responses as bottom tier 4.5% of the time, middle tier 27.3% of the time, and top tier 68.2% of the time.

Conclusion:

Although ChatGPT and other related AI platforms have become a popular means for medical information distribution, the educational value of the AI-generated responses related to foot and ankle pathologies was variable. With 4.5% of responses receiving a bottom-tier rating, 27.3% of responses receiving a middle-tier rating, and 68.2% of responses receiving a top-tier rating, health care professionals should be aware of the high viewership of variable-quality content easily accessible on ChatGPT.

Level of Evidence:

Level III, cross-sectional study.

Keywords

Introduction

The use of artificial intelligence (AI) methodologies for various applications in orthopaedic foot and ankle surgery is growing.3,7-10,24 ChatGPT (Generative Pretrained Transformer) (OpenAI, San Francisco, CA) was launched on November 30, 2022, and is a generative language model tool that enables the public to converse with an AI network through a text-based platform. Early in 2023, ChatGPT was the fastest-growing consumer application to date, reaching more than 100 million users. 4 ChatGPT is capable of providing answers to a vast array of questions, generating potential applications across multiple service-based fields. Already, there is considerable excitement regarding its use in medicine.13,16,17 Generative language tools could be the answer to the challenge of excessive medical documentation, which has been identified as a major cause of physician burnout.15,17 Modern AI systems have evolved to the extent that they exhibit a remarkable capacity to replicate human abilities: ChatGPT scored higher than the current passing threshold for third-year medical students on the National Board of Medical Examiners (NBME) Step 1 sample examination. 6 Despite the recency of the launch of ChatGPT, its use within the orthopaedic community has already been proposed. 16

Moreover, the impressive verbal capacities of ChatGPT and other generative language AIs may lead to greater trust in the responses generated by these applications than in those found through a simple internet search. Therefore, if the quality of the medical information contained within a ChatGPT-generated answer is poor, use of the application may ultimately lead to wide distribution of medical misinformation. Left to their own devices, patients will often seek sources that are inaccurate, leaving them misinformed.

Thus, the purpose of this study was to evaluate the quality and usability of answers generated using ChatGPT to a variety of questions pertaining to foot and ankle orthopaedic surgery. The generated answers were scored by fellowship-trained orthopaedic foot and ankle surgeons and by current accredited orthopaedic foot and ankle fellows.

Methods

Search Strategy and Data Collection

The artificial intelligence platform ChatGPT (https://chat.openai.com/chat) was asked to answer 5 commonly asked questions related to the foot and ankle on March 15, 2023. The search was conducted by using the following questions: “What is the optimal treatment for ankle arthritis?” “How should I decide on ankle arthroplasty versus ankle arthrodesis?” “Do I need surgery for Jones fracture?” “How can I prevent Charcot arthropathy?” and “Do I need to see a doctor for my ankle sprain?” Our intent was to analyze the responses that ChatGPT would give if patients were to ask the platform for advice related to these disorders of the foot and ankle. The search yielded 5 answers total, 1 per question (n = 5).

Scoring System

Two separate scoring systems were used to evaluate the quality and educational value of the ChatGPT-generated responses: the DISCERN, a previously validated tool used to determine reliability and quality of a treatment approach, and the AI Response Metric (AIRM), a metric devised by our research team to assess the quality of an AI-generated response to a medical question. The AIRM score was modified from a similar scale created for evaluating the utility of the social media platform TikTok as an educational tool for pathologies of the foot and ankle from previously published work by Tabarestani et al. 19

DISCERN for reliability and quality assessment

The DISCERN is a questionnaire metric for written medical information that aims to assess the accuracy and relevancy of textual descriptions of health care treatment-related information. The tool is well validated, has been used since the late 1990s, and is composed of 16 questions (Table 1).

DISCERN Instrument.

The first 8 questions listed in the DISCERN system assess reliability of the publication (DISCERN 1) and the next 7 questions determine the quality of the author’s source base (DISCERN 2). The final question then rates the publication as a whole in terms of its quality as a source of information (DISCERN 3). Although it was originally designed as a tool for written information, it has been successfully adapted as a tool for scoring the quality of videos. 12 DISCERN scores are categorized as follows: excellent, 63 to 75 points; good, 51 to 62 points; fair, 39 to 50 points; poor, 27 to 38 points; and very poor, 16 to 26 points.
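The score bands above amount to a simple lookup. The following is a minimal sketch in Python, assuming the bands exactly as listed in this section; the constant and function names are illustrative and are not part of the DISCERN instrument itself. Totals that fall outside the listed bands raise an error rather than being assigned a guessed category.

```python
# Score bands copied from the text above: (lowest total, highest total, label).
# The names DISCERN_BANDS and discern_category are illustrative assumptions.
DISCERN_BANDS = [
    (63, 75, "excellent"),
    (51, 62, "good"),
    (39, 50, "fair"),
    (27, 38, "poor"),
    (16, 26, "very poor"),
]


def discern_category(total_score: int) -> str:
    """Return the quality label for a total DISCERN score."""
    for low, high, label in DISCERN_BANDS:
        if low <= total_score <= high:
            return label
    # Totals outside the listed bands are rejected, not guessed.
    raise ValueError(f"score {total_score} is outside the listed bands (16 to 75)")


if __name__ == "__main__":
    # Hypothetical totals, one per band, for demonstration only.
    for score in (70, 55, 40, 30, 20):
        print(score, discern_category(score))
```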

AIRM for Educational Suitability Assessment

To grade the educational value of the ChatGPT-generated responses, we developed the AIRM as a modification from previously published work. 11 This test determines whether or not the viewers can properly understand and rely on the advice following reading ChatGPT’s response. The AIRM has 4 focuses, with a scale of 1 to 5 as grading options. Surgeons were tasked with selecting which set of answers most closely aligned with their thoughts about each answer provided by ChatGPT. Responses were graded using the metric in Table 2. Higher scores denote higher overall quality of ChatGPT-generated responses.

AIRM Instrument.

Assessment

The responses were collected by 2 reviewers and independently evaluated by our orthopaedic research team. Once the data regarding the artificial intelligence response metrics were collected for each response, the content of the responses was graded using the DISCERN and AIRM tools by a multi-institutional group of foot and ankle orthopaedic surgeons and fellows. Each response was graded separately by 2 trained reviewers. Any points of discrepancy between the 2 reviewers were resolved by a third author.

Statistical Analysis

Scoring and characteristic data are presented as the mean (SD), median (interquartile range [IQR]), and percentage. A 2-sample t test was used to compare the 2 types of uploaders by using the mean, SD, and sample size of each continuous and categorical variable. Statistical significance was set at P < .05, for comparisons other than interrater reliability. All analyses were performed using Microsoft Excel (Microsoft Corp, Redmond, WA).
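As an illustration of the descriptive statistics and the summary-based comparison described above, the following Python sketch computes the mean, SD, median, and IQR of a list of scores, and a 2-sample t statistic from each group's mean, SD, and sample size. The study's analyses were run in Microsoft Excel, so this is not the authors' workbook. The unequal-variance (Welch) form of the t test, the inclusive quartile method, the function names, and the example scores are all assumptions made for illustration.

```python
import math
import statistics


def describe(scores):
    """Mean, sample SD, median, and IQR for a list of numeric scores."""
    # Quartile method is an assumption; the paper does not state which was used.
    q1, _, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    return {
        "mean": statistics.mean(scores),
        "sd": statistics.stdev(scores),
        "median": statistics.median(scores),
        "iqr": q3 - q1,
    }


def welch_t(mean1, sd1, n1, mean2, sd2, n2):
    """2-sample t statistic and degrees of freedom from summary statistics.

    Uses the unequal-variance (Welch) form, which is an assumption here.
    """
    v1 = sd1 ** 2 / n1
    v2 = sd2 ** 2 / n2
    t = (mean1 - mean2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


if __name__ == "__main__":
    # Hypothetical scores for two groups of graders; not study data.
    group_a = [16, 18, 24, 20, 19]
    group_b = [20, 25, 13, 24, 16]
    a, b = describe(group_a), describe(group_b)
    t, df = welch_t(a["mean"], a["sd"], len(group_a),
                    b["mean"], b["sd"], len(group_b))
    print(a, b, round(t, 3), round(df, 2))
```

The resulting t statistic and degrees of freedom would then be compared against the t distribution to obtain a P value, with the threshold of P < .05 stated above.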

Results

Basic Characteristics

In total, 5 responses were given after entering the aforementioned questions into ChatGPT. Of the 5 responses given, all were included in the final analysis. The questions asked and the responses given by ChatGPT are presented in Tables 3 and 4 and Supplementary Tables 1–3.

Question and Answer 1.

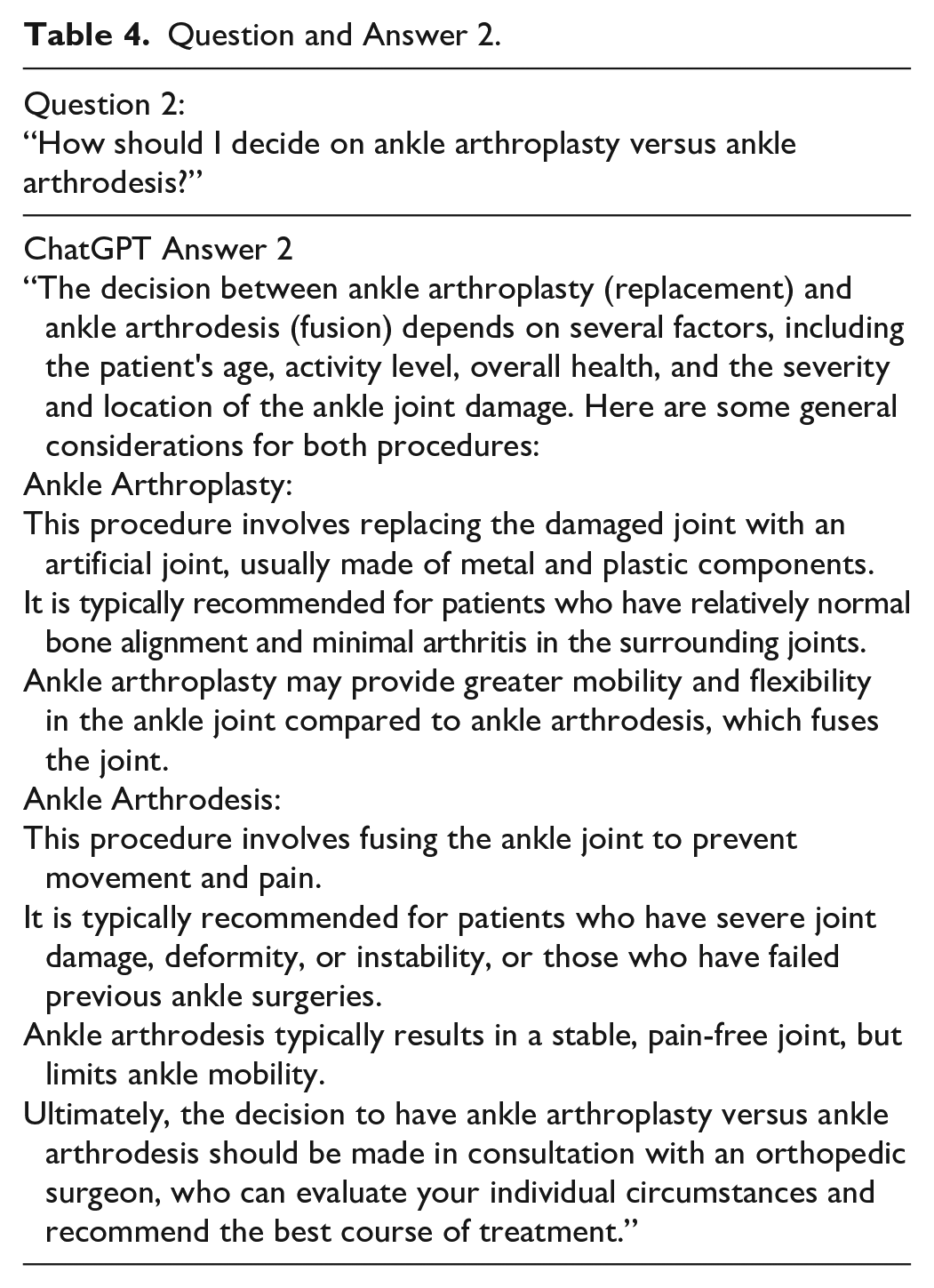

Question and Answer 2.

Rating of Responses, DISCERN Questionnaire

Fellowship-trained orthopaedic foot and ankle surgeons and foot and ankle fellows were asked to grade the responses generated by ChatGPT to each question. Scoring for each question using the DISCERN questionnaire is described in Supplementary Table 4.

Rating of Responses, AIRM

Fellowship-trained orthopaedic foot and ankle surgeons and foot and ankle fellows were asked to grade the responses generated by ChatGPT to each question. Scoring for each question using the AIRM is described in Supplementary Table 5.

Discussion

This study was the first in orthopaedic foot and ankle surgery to assess the quality and usability of answers generated using ChatGPT to a variety of common patient questions. We found a high degree of interobserver variation in the assessment of the answers generated by ChatGPT. Several evaluators were impressed with the ChatGPT responses, scoring them very highly. Others found the answers to be deficient in a number of categories and scored the responses at lower- to middle-tier ratings. This preliminary analysis is underpowered and lacks the validation required to recommend the widespread use of ChatGPT in clinical practice. Rather, our intention was to generate discussion regarding the use cases of AI systems in orthopaedic foot and ankle surgery. Several of the foot and ankle fellows and attending surgeons at our institution were unaware of ChatGPT and remarked on the impressive grammatical correctness of the reviewed answers as well as the perceived completeness of the responses.

This study is the first to evaluate ChatGPT-generated responses to several common questions related to foot and ankle orthopaedics. Other studies have investigated the usage of this AI modality in other areas of medicine. A recent systematic review identified 65 and 100 papers in the PubMed and EuropePMC databases, respectively. An additional systematic review of the use of ChatGPT in health care identified 60 manuscripts for inclusion. Advantages to the use of ChatGPT were listed in 51 of the 60 studies (85.0%). Benefits of ChatGPT included efficient analysis of data sets and performance of literature reviews within research, workflow streamlining and cost saving in the clinical setting, and improvements in health care education including improved personalized learning. 18

Although ChatGPT exhibited numerous avenues for potential benefit within health care, 58 of the 60 included studies (96.7%) reported concerns regarding use of this technology. Copyright and plagiarism ethics, citation and reference transparency, and inaccurate content were all cited as potential drawbacks to the use of ChatGPT in health care. 18 Several attending surgeons at our institution commented on failure of ChatGPT to include in-text citations, leading to a lack of granularity regarding source material.

Our study is the first to evaluate ChatGPT in foot and ankle. However, other AI models have been previously researched within domains relevant to foot and ankle surgery. Radiographic interpretation is an area of active research within computational modeling. Kitamura et al 25 utilized 5 different convolutional neural networks (CNNs) to detect ankle fractures from plain radiographs. They internally validated each of the CNNs and noted a fair CNN fracture detection accuracy of 81%. Ashkani-Esfahani et al 2 internally validated 2 deep CNNs for the same purpose and achieved a near-perfect area under the curve of 0.99. AI has been used for image analysis throughout foot and ankle surgery, including for Achilles tendinopathy, 21 stress fracture, 22 Lisfranc fracture, 1 and calcaneal fracture. 5

Symptom, complication, and outcome prediction is another area where AI is applied broadly in foot and ankle surgery. Support vector machine modeling has been applied for classifying hallux valgus patients as having painful feet or pain-free feet. Using radiographic metrics such as hallux valgus angle (HVA), intermetatarsal angle (IMA), and distal metatarsal articular angle (DMAA), Wang et al 20 internally validated this approach to hallux valgus classification, with an accuracy of 76.4%. Likewise, logistic regression testing and gradient boosting models have been used to predict short-term complications such as readmissions and mortality following open reduction and internal fixation for ankle fractures. Merrill et al 14 internally validated these approaches and found both models to perform similarly, with areas under the curves for logistic regression ranging from 0.7101 to 0.7583 and areas under the curves for gradient boosting ranging from 0.6979 to 0.7580. A recent systematic review by Gupta et al 7 provides an overview of 31 studies related to the use of AI in foot and ankle surgery and is an excellent resource for surgeons interested in learning about the capabilities of AI in our field.

Considerable potential exists for further investigation of generative-text AI systems such as ChatGPT within foot and ankle surgery. Direct comparison of the ChatGPT-generated responses to answers curated from simple internet search tools would elucidate whether newer-model AI provides a valuable addition to what is currently widely available on search platforms. In addition, research should seek to characterize the accuracy, reliability, and usability of ChatGPT-generated clinical documentation. Is this technology adequate for operative report generation and coding and billing purposes? Could ChatGPT combined with voice recognition software replace in-person or virtual scribes in a high-volume clinical setting? The answers to these questions have the potential to revolutionize the practice of surgery and medicine in an era of increased burnout, 23 freeing up provider time for direct patient care.

This study has several important limitations. Most notably, our study samples respondents from a single institution, including fellows and attending foot and ankle surgeons who may have particular, institutional practice patterns that have influenced the evaluation of the responses generated by ChatGPT. As an additional limitation, we used a nonvalidated tool of our own creation to assess the quality of responses generated by ChatGPT. Further research should seek to validate metrics that can be used to assess the usability of AI-generated text blocks. We sought to minimize these limitations by using a well-validated and heavily applied tool for evaluation of written medical text, the DISCERN. Finally, this study was completed within several weeks after the initial release of ChatGPT. Subsequent models may be further refined as the AI algorithm continues to develop and learn.

Conclusion

In summary, ChatGPT is a rapidly growing generative language tool with the capacity to provide answers to a wide variety of common patient questions. This study was the first in orthopaedic foot and ankle surgery to assess the quality and usability of answers generated using ChatGPT to a variety of common patient questions in our field. We found a high degree of interobserver variation in the assessment of the answers generated by ChatGPT, with several evaluators rating the responses very favorably, and others critiquing the ChatGPT-generated answers. Generative language AI systems have the potential to greatly impact the provision of medical care, and further investigation will be required to assess the various use cases of this technology across foot and ankle surgery.

Supplemental Material

sj-pdf-1-fao-10.1177_24730114231209919 – Supplemental material for Evaluating the Quality and Usability of Artificial Intelligence–Generated Responses to Common Patient Questions in Foot and Ankle Surgery

Supplemental material, sj-pdf-1-fao-10.1177_24730114231209919 for Evaluating the Quality and Usability of Artificial Intelligence–Generated Responses to Common Patient Questions in Foot and Ankle Surgery by Albert Thomas Anastasio, Frederic Baker Mills, Mark P. Karavan and Samuel B. Adams in Foot & Ankle Orthopaedics

Footnotes

Appendix

AIRM Questionnaire Grading of ChatGPT-Generated Responses to Questions 1-5 by Orthopaedic Foot and Ankle Surgeons and Fellows.

| AIRM Scores | Scorer 1 | Scorer 2 | Scorer 3 | Scorer 4 | Scorer 5 | Scorer 6 | Scorer 7 | Scorer 8 | Scorer 9 | Scorer 10 | Scorer 11 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| AIRM score 1 | 3 | 4 | 5 | 4 | 3 | 4 | 5 | 2 | 4 | 2 | 5 |
| AIRM score 2 | 3 | 2 | 5 | 4 | 2 | 4 | 5 | 3 | 5 | 3 | 5 |
| AIRM score 3 | 3 | 4 | 5 | 4 | 5 | 3 | 5 | 1 | 5 | 3 | 5 |
| AIRM score 4 | 4 | 4 | 5 | 4 | 5 | 5 | 5 | 4 | 5 | 4 | 5 |
| AIRM score 5 | 3 | 4 | 4 | 4 | 4 | 4 | 5 | 3 | 5 | 4 | 5 |
| AIRM score total | 16 | 18 | 24 | 20 | 19 | 20 | 25 | 13 | 24 | 16 | 25 |
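The totals in the table above can be re-derived from the individual scores. The following Python sketch transcribes the table, sums each scorer's column, checks the sums against the reported total row, and summarizes the spread across scorers. The variable and function names are illustrative assumptions, and the summary is simple arithmetic on the tabulated values rather than an analysis reported by the authors.

```python
import statistics

# Per-row AIRM scores for Scorers 1 to 11, transcribed from the table above.
AIRM_SCORES = {
    "AIRM score 1": [3, 4, 5, 4, 3, 4, 5, 2, 4, 2, 5],
    "AIRM score 2": [3, 2, 5, 4, 2, 4, 5, 3, 5, 3, 5],
    "AIRM score 3": [3, 4, 5, 4, 5, 3, 5, 1, 5, 3, 5],
    "AIRM score 4": [4, 4, 5, 4, 5, 5, 5, 4, 5, 4, 5],
    "AIRM score 5": [3, 4, 4, 4, 4, 4, 5, 3, 5, 4, 5],
}

# The "AIRM score total" row as printed in the table.
REPORTED_TOTALS = [16, 18, 24, 20, 19, 20, 25, 13, 24, 16, 25]


def scorer_totals(scores_by_row):
    """Sum each scorer's column across all score rows."""
    return [sum(column) for column in zip(*scores_by_row.values())]


if __name__ == "__main__":
    totals = scorer_totals(AIRM_SCORES)
    # Confirm the transcription agrees with the reported total row.
    assert totals == REPORTED_TOTALS
    print("range:", min(totals), "to", max(totals))
    print("mean:", statistics.mean(totals))
    print("median:", statistics.median(totals))
```

For the values in the table, the totals range from 13 to 25 out of a possible 25, with a mean and median of 20.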

Ethical Approval

No ethical approval was needed for this study. No patients were included in this work. Online, publicly available Artificial Intelligence responses were analyzed.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. ICMJE forms for all authors are available online.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

References
